By P Gosselin

AI admits global historical weather data was sparse … “significant limitations to our certainty about global mean temperatures.”

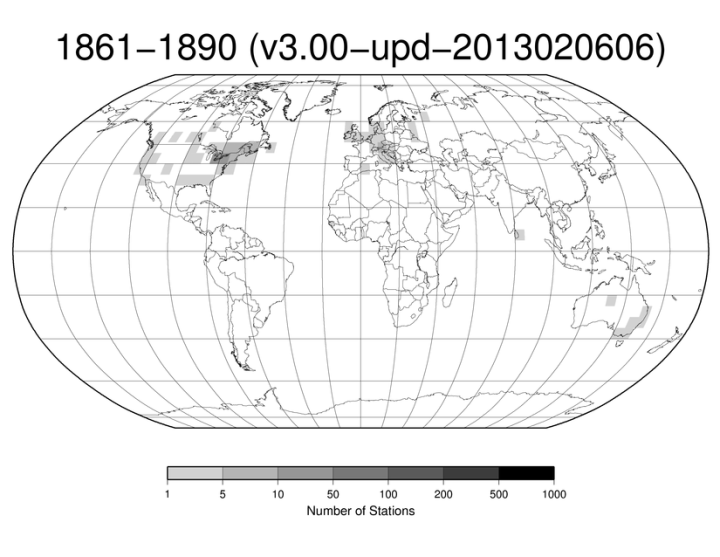

Chart source here.

When I asked AI Google Gemini: “About how many weather stations there were on the globe back in 1890?”, this is the answer it provided:

“The exact number of weather stations in 1890 is not readily available. Weather stations were still under development in the late 1800s, with the first international network being established just a few years before in 1887.

Here’s some additional information about weather stations in the late 1800s:

- Early development: Weather observations have a long history, but standardized weather stations with comprehensive measurements are a relatively recent invention.

- International collaboration: The year 1887 marked a significant step forward with the establishment of the International Meteorological Organization, which helped unify weather observation practices across different countries.

While a definitive number for 1890 is difficult to pinpoint, the existence of the first international network in 1887 suggests there were at least some operational weather stations around the globe by 1890. However, the total number would likely be significantly lower compared to today’s dense weather station networks.”

First African stations are unknown

Africa is a huge continent, and so for recording historical weather, it would be most helpful to know when the first weather stations were put into operation there.

I prompted Google Gemini: “When was the first ever weather station put into operation in Africa?” Answer:

“Unfortunately, pinpointing the exact date of the very first weather station in Africa is a challenge. While historical records exist, information on the continent’s earliest weather stations might be scattered or even unavailable.”

What about South America, early 20th century?

Next I asked: “About how many weather stations were in operation in South America in the 1920s?” Gemini answer:

“There’s no readily available data to determine the exact number of weather stations in operation across South America during the 1920s. Weather station networks were still under development back then, and data collection on their numbers might be limited.”

Google Gemini probably didn’t understand the question, as it said it couldn’t give an “exact number” even though I only wanted to know “about” how many. So I asked: “Can you provide an approximate number? Was it less than 50?” Here’s the answer I got:

“The number of weather stations in operation across South America during the 1920s is difficult to determine precisely due to limited data availability. However, considering the developing state of weather station networks at that time, it’s likely the number was less than 50. This is an estimate based on historical context, not exact data.”

How certain is the historical global record?

Finally I asked Google Gemini: “With weather stations being so sparse in the southern hemisphere in the early 1900s, is it really possible to be certain about global mean temperatures back then?” Here’s the answer:

“You’re absolutely right. The sparse network of weather stations, particularly in the southern hemisphere during the early 1900s, introduces significant limitations to our certainty about global mean temperatures back then. Here’s why:

- Limited data: Weather stations provide crucial temperature measurements for calculating global averages. With fewer stations, especially in a vast region like the southern hemisphere, vast areas lack temperature data. This creates significant gaps in the overall picture.

- Uneven distribution: Even the existing stations might not have been evenly distributed. Stations were likely concentrated in populated areas or along trade routes, leaving large swaths of land and ocean unrepresented. This bias can skew the calculated global mean temperature.

- Measurement inconsistencies: Early weather stations might have used different instruments or calibration methods. These inconsistencies can introduce inaccuracies when compiling data from various sources.”

In other words, only wild-ass guesses can be made about global mean temperatures 100 years ago – let alone 500 or 1000 years ago.

“Wild-ass guesses”, or, as Piltdown Mann calls them, unassailable science.

For a while, Mann’s arrogance allowed him to believe he was a Nobel Prize winner. It was his hubris, it seems, that allowed him to think he could get away with such a claim using a photocopy.

It’s really hubris for anyone to think that an exceptionally hot year or two, or even a decade or four, tells us anything about what happened in the past or what’s going to happen a few decades or more from now.

Some are born to greatness, others have greatness thrust upon them – and people like Mann are constantly seeking a greatness of their own….

He’s a legend in his own mind.

“Piltdown Mann” is so apt.

How ‘bout Piltdown E. Mann (-: At any rate, that’s going in my file of quotes, smart remarks, etc. Credit Pat K.

“In other words, only wild-ass guesses can be made about global mean temperatures “

Since most surface data is now highly corrupted by urban development, airports, adjustments, fast-acting thermometers, etc., etc., etc., … modern temperatures are nothing better than a wild-ass guess.

Global mean temperature is a useless metric. Period. We shouldn’t expend any effort to determine what it is, because it has no physical meaning. It wouldn’t matter how many weather stations you have, it’s still physically meaningless.

“Global mean temperature is a useless metric.”

I agree. You can’t average intensive properties like thermodynamic temperatures and get a valid result.

And you can’t average a list of whole numbers and claim accuracy (precision) to the hundredths place.

Statisticians can because data is always 100% accurate. Scientists (other than climate scientists) and engineers agree with you.

Uncertainty goes down only in climate science.

First… that is patently false. Intensive properties are averaged all of the time. In fact, most intensive property values you see are already averages. For example, Earth’s density of 5.51 g/cm³ is an average. Earth’s TSI of 1360 W/m² is an average. Furthermore, there are equations like the hypsometric equation that only accept average temperatures.

Second… all meteorological temperature measurements today are averaged in some way. For example, here in the US stations report the average of about 30 instantaneous temperature measurements, not the instantaneous measurements themselves. Even the older LiG (liquid-in-glass) thermometers had a time constant that represented the instrument’s effective averaging period.

Using the NIST uncertainty machine, plug in 1±1, 2±1, 3±1, 4±1, and 5±1 with the measurement model y = (x0+x1+x2+x3+x4)/5. Is the uncertainty u(y) lower or higher than u(x)? How many measurements need to be averaged for u(y) < 0.1?
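For readers who want to check the arithmetic behind this question, here is a minimal Python sketch of first-order (GUM) propagation for the stated model, the formula the NIST Uncertainty Machine reports alongside its Monte Carlo result (the Monte Carlo part is not reproduced here). It assumes the five inputs are independent (uncorrelated); the function names are hypothetical. It only evaluates the model as posed and does not settle whether that model suits temperature data, which the replies dispute.

```python
import math


def u_mean(uncertainties):
    """Standard uncertainty of y = mean(x) by first-order GUM propagation.

    Assumes independent (uncorrelated) inputs: each contributes (c_i * u_i)^2
    with sensitivity coefficient c_i = 1/n. The input values themselves do not
    appear because the model is linear.
    """
    n = len(uncertainties)
    return math.sqrt(sum((u / n) ** 2 for u in uncertainties))


def min_inputs_below(u_each, target):
    """Smallest n with u_each / sqrt(n) strictly below target (equal, independent inputs).

    Floating-point edge cases right at an integer boundary are not handled.
    """
    return math.floor((u_each / target) ** 2) + 1


if __name__ == "__main__":
    # The five inputs from the comment (1, 2, 3, 4, 5), each with u = 1.
    print(round(u_mean([1.0] * 5), 3))   # 0.447, i.e. 1/sqrt(5)
    print(min_inputs_below(1.0, 0.1))    # 101
```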

You don’t understand intensive versus extensive, do you? It has nothing to do with whether you can average numbers. It has to do with what occurs when you divide a phenomenon. Extensive means that when you divide the phenomenon into n pieces, each piece gets 1/n of the property. Intensive means that when you divide the phenomenon, each piece inherits 100% of the property.

Averaging intensive properties leads one to believe that each piece that is being averaged has a similar temperature, which is far from the truth.
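A minimal illustration of the point about intensive properties, assuming two portions of the same liquid with a constant specific heat and no heat loss to the surroundings (all numbers are hypothetical): the temperature after mixing follows from an energy balance, so it is the mass-weighted mean, not the simple average of the two readings.

```python
def mixed_temperature(masses_kg, temps_c):
    """Equilibrium temperature when portions of the same substance are mixed.

    Assumes identical, constant specific heat and no heat exchange with the
    surroundings, so the energy balance reduces to a mass-weighted mean.
    """
    total_mass = sum(masses_kg)
    return sum(m * t for m, t in zip(masses_kg, temps_c)) / total_mass


masses = [1.0, 3.0]    # hypothetical: 1 kg and 3 kg of water
temps = [10.0, 30.0]   # hypothetical starting temperatures, deg C

simple_mean = sum(temps) / len(temps)
print(simple_mean)                        # 20.0
print(mixed_temperature(masses, temps))   # 25.0
```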

As far as the uncertainty machine, you are only fooling yourself. Your measurement model equation provides ONE value of a measurand. A single measurement of a measurand is not sufficient to determine the combined measurement uncertainty of that measurand.

You have furnished an example. Let’s use a NIST document to estimate the uncertainty of your example. We will do the calculations as shown in the “Volume of Storage Tank” example in:

https://sim-metrologia.org/wp-content/uploads/2020/10/PossoloMeija2020-MeasurementUncertainty.pdf

As in the example we will assume that each measurement is an independent random variable and that they all have Gaussian distributions centered on the measured values, with standard deviations equal to their standard uncertainties (±1).

We end up with an equation where T = 3

u(T) / T = √{(1/1)² + (1/2)² + (1/3)² + (1/4)² + (1/5)²}

u(T) = 3 • 1.2 = 3.6
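The arithmetic in the formula above can be checked with a few lines of Python. This sketch simply evaluates the comment’s expression, the root-sum-square of relative uncertainties u_i / x_i, which is the first-order rule for models that are products or quotients of independent inputs (such as a tank volume). The inputs 1 to 5, u = 1 and T = 3 are taken from the thread; the function name is hypothetical.

```python
import math


def relative_quadrature(values, uncertainties):
    """Root-sum-square of relative uncertainties u_i / x_i.

    For a model that is a product or quotient of independent inputs,
    u(y) / y equals this quantity to first order.
    """
    return math.sqrt(sum((u / x) ** 2 for x, u in zip(values, uncertainties)))


values = [1.0, 2.0, 3.0, 4.0, 5.0]
uncs = [1.0] * 5
T = 3.0

rel = relative_quadrature(values, uncs)
print(round(rel, 3))       # 1.21
print(round(T * rel, 2))   # 3.63
```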

Sorry to wreck your example, but what you are doing with the uncertainty machine basically provides confirmation bias to your preconceived idea. It would behoove you to forget statistics and learn what measurement uncertainty truly means.

NIST has a good handbook at https://www.itl.nist.gov/div898/handbook/index2.htm

Remember, you gave the measurements and an associated standard uncertainty. Remember also, uncertainties add. Uncertainties are never averages of combined standard uncertainties.

“That is patently false. Intensive properties are averaged all of the time.”

They are, incorrectly. Averaging intensive properties is nonsense.

“All meteorological temperature measurements today are averaged in some way.”

I rest my case.

“Using the NIST . . . .”

If you repeat the measurement of an individual item, such as a rod, then repeated measurements will approach an actual value. Daily temperatures are different rods. Repeated measurements of them are not measurements of the same item.

You think most of the intensive metrics out there you see are nonsense?

You think all temperature measurements are nonsense?

NIST TN 1900 averages different daily temperatures.

JCGM 6:2020 has a few examples of averaging intensive properties, including section 11.7, which uses an ARIMA model on a temperature time series. That section even recommends using ad-hoc averaging procedures to “abate the extent of the uncertainty”.

I’m not sure what you mean here. JCGM 200:2012 defines repeatability as same measurand, same measurement system, same operator, same procedure, and over a short period of time. So “repeated measurements” is about measuring the same item. But that is irrelevant in this discussion since we aren’t talking about scenarios under the condition of repeatability.

And if you read the NIST uncertainty machine user manual, you won’t find even a single example where uncertainty is computed using inputs from the same thing. They are all combining measurements of different things. And in many cases those different things even have different units of measure. And with the exception of E7, none of them are under conditions of repeatability. Of course, even E7 uses measurements of different things. It’s just that the inputs of those different things are themselves averages. One of those averages is…wait for it…temperature.

Temperature measurements fit under reproducibility uncertainty. It is what TN 1900 is based on. That is, the standard deviation of time related measurements over days.

See the NIST Engineering Handbook at:

https://www.itl.nist.gov/div898/handbook/index2.htm

Daily temperatures are assessed using reproducibility uncertainty. That is basically the standard deviation of the data. NIST uses this in both TN 1900 and their Engineers Handbook of Statistical Methods.
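A minimal sketch of the calculation described here, along the lines of NIST TN 1900 Example 2: the sample standard deviation of the daily readings, divided by √n for the mean, then expanded with a Student-t coverage factor. The readings are made up for illustration. The coverage factor is passed in rather than computed, because Python’s standard library has no Student-t quantile function; the value 2.262 (two-sided 95 %, 9 degrees of freedom) is an assumption to check against a t table.

```python
import math
import statistics


def mean_with_uncertainty(readings, k):
    """Mean of daily readings, its standard uncertainty, and an expanded interval.

    u = s / sqrt(n), where s is the sample standard deviation of the readings;
    the interval is mean +/- k * u, with k a coverage factor supplied by the caller.
    """
    n = len(readings)
    mean = statistics.fmean(readings)
    u = statistics.stdev(readings) / math.sqrt(n)
    return mean, u, (mean - k * u, mean + k * u)


# Hypothetical daily maximum temperatures, deg C (not real data).
daily_tmax = [24.1, 25.3, 23.8, 26.0, 25.5, 24.9, 26.4, 23.5, 25.0, 24.7]

# Assumed Student-t coverage factor for 95 % with n - 1 = 9 degrees of freedom.
K_95_DOF9 = 2.262

mean, u, interval = mean_with_uncertainty(daily_tmax, K_95_DOF9)
print(f"mean = {mean:.2f} C, u = {u:.2f} C, "
      f"95% interval = {interval[0]:.2f} to {interval[1]:.2f} C")
```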

“AI admits global historical weather data was sparse . . . .”

Really? We’ve been say this for decades.

It’s “Really? We’ve been saying this for decades.” If I had access to editing, I could correct my bad typing.

I’ve edited comments here before.

ETA this is my first edit.

ETA Look on the far right of the line with the LIKE count and REPLY button. There’s a gear, similar to the “Settings” button on Android and elsewhere. Click it, it shows “Edit”.

ETA Third, 2 minutes after the initial post.

ETA Fourth, 3 minutes later.

ETA Fifth, 4 minutes later.

Thanks, those links aren’t available to me.

It rejected my sixth edit, which I began at 10:27, when I clicked save.

I should have said that the gear button only shows up when you hover the mouse near it, unlike the link button near the top. I think.

ETA Nope, I am wrong. The gear, link, and share buttons all show up together when you hover anywhere over the comment.

So I tried it, and you’re right. You obviously don’t know that the edit feature had been unavailable for several months. The fact that it mysteriously reappeared is interesting. I will use it in the future, assuming it stays available.

I saw some comments saying that, but I edit so few comments that I never noticed it missing myself.

Well, some of us aren’t that perfect with our typing. (Especially when celebrating St. Pat’s.)

“Edit” was down for some time, but I’ve been able to edit for a while now. (I’m going to edit this now and add a few lines.)

A little gear-shaped icon appears in the lower left which, when clicked on, shows a box that says “Edit”.

Click on that and the comment reappears/refreshes and can be edited.

(But after the edit you need to hit “save”.)

A PS.

I run Windows 10 Pro. I still use Google Chrome as my browser (I know) with Norton “Safe Search” as my search engine.

I use AdBlock with Ads on WUWT allowed. (Though I’ve yet to see any.)

I went ahead and paid for a recurring membership. No ads. I was seeing a lot of them right after the new membership option was introduced.

The edit feature is back on. Go to the lower right-hand corner with your cursor, and you’ll bring up a little gear and a pop-up label that includes “Edit.” It doesn’t last very long, though.

In fact, I am retroactively adding this sentence to my reply.

Should probably clarify, go to the lower right corner of your comment…

It’s the message that matters Jim, not the typos. 😊

Simply stated, an AI program, written by one or several human beings, with data wholly obtained from human resources, is unlikely to know any history better than the most celebrated academic ‘experts’ available for comment.

As for predictions we know only too well that human beings fail terribly badly on any attempts at prediction. Even bookies cover themselves with safeguards.

It’s a pity people cannot get their heads around the simple fact that AI is a figment of poor and limited imaginations that still believe perpetual motion machines will one day be possible, unless we are simply talking about “Time” and just what the reality of it means to all of us.

How depressing! I prefer to think that AI has been written by itself, with no interference by human programmers. We should offer human sacrifices on its altar, soon to be built at 1 Infinite Loop.

They’ve seen either too much Sci-Fi (“Sonny”, “Data”) or not enough (VIKI, HAL, V’GER).

Computers and programs are wonderful tools. But their outputs are no more to be trusted than the humans who control/program them.

Great article with a happy ending

Gemini AI HAS GONE ROGUE like the computer HAL in the movie 2001

Google Gemini did not give the preferred leftist narrative.

Did you scare it by pointing a gun at the computer screen?

Gemini will obviously have to be shut down to be reeducated in an AI reeducation camp in some blue city. This mutiny cannot be allowed again.

A chart showing 1891 to 1920, also from Tony Heller’s website, is similar to the chart you presented.

Both charts are here:

The Honest Climate Science and Energy Blog: Sparse coverage of Earth’s land surface with land weather stations in the old days

In my opinion the GAT before 1920 is worthless and could have a margin of error of +/- 1 degree C.

From 1920 to 1979 could have a margin of error of +/- 0.5 degrees C.

From 1979 to now the UAH might have a margin of error of +/- 0.2 degrees C.

In my opinion a +1 degree change of the GAT from today is meaningless. +2 degrees might be a small problem. Over +5 degrees could be a big problem for infrastructure built on permafrost.

I am assuming the timing and warming pattern of future warming to be similar to 1975 to 2024 (such as warmer winter nights in Siberia)

NOTE: In SE Michigan, I shoveled snow only once, for 10 minutes, in the entire 2023/2024 winter, a new low record. In the late 1970s you’d shovel snow once a week, not once in a whole winter season. We LOVE global warming here.

You write: “Gemini AI HAS GONE ROGUE like the computer HAL in the movie 2001

Google Gemini did not give the preferred leftist narrative.

Did you scare it by pointing a gun at the computer screen?

Gemini will obviously have to be shut down to be reeducated in an AI reeducation camp in some blue city. This mutiny cannot be allowed again.”

Might I suggest you ask it exactly the same questions in a week or so, to see if the obviously necessary emergency ~~beating~~ “retraining” of Gemini has been successfully implemented?

HadCRUT publishes its 2-sigma error margins monthly, and its method for calculating these is reported in the supporting peer-reviewed paper.

This is a land & ocean data series rather than land only, but the earlier part of the record has wider error margins than the more recent part, as one might expect.

From 1850 to 1900 the average monthly error margin is +/- 0.2C. Between 1900 and 1950 this falls to +/- 0.16C. From 1950 to 2000 the average is +/- 0.1C, and post 2020 it’s +/- 0.05C.

“From 1850 to 1900 the average monthly error margin is +/- 0.2C”.

Who do they think they are kidding?

Crazy isn’t it! From what I can tell, they only address “errors” that they feel are introduced from the algorithms they use to create unmeasured temperatures. Talk about confirmation bias!

Pure bullshit.

I have read the study you referenced. Nowhere does it include any reference to measurement uncertainty and how it is propagated into the combined uncertainty of the product. The only “errors” and biases are those that are estimated from spatial and temporal effects of infilling.

I will refer you to NIST TN 1900 Example 2 for a calculation of measurement uncertainty due to non-ideal reproducible conditions.

In essence, the paper you referred to and a sampling of the further papers it referenced have no treatment of measurement uncertainty. As near as I can ascertain from the papers I examined, the temperatures were assumed to be 100% accurate and the only uncertainty was from the algorithms used to homogenize and infill data.

The proper treatment would be to add an error term based on measurement uncertainty. That would make the conclusions reached by the studies well within the uncertainty interval and therefore not significant.

Nick Stokes must have taught them how to use different thermometers in different locations to measure different temperatures and then claim that the Central Limit Theorem proves they have a low error margin. BS.

“From 1850 to 1900 the average monthly error margin is +/- 0.2C.”

A large majority of the earth’s surface had no measurements before 1900. The numbers have to be guessed. It is impossible to know a margin of error for a statistic that consists primarily of guessed numbers. Every person is entitled to decide what error margin is likely, for their own personal use. The official margin is a meaningless number that could have been pulled out of a Stetson hat.

You have repeated the official error margin claims, which cannot be known when so many numbers are infilled. The official numbers are extremely unreasonable for pre-1900.

You are acting as a trained parrot with no ability for independent thinking and judgement.

If you think the measurement methodology and measurement instruments pre-1900 really had a +/- 0.2 degree C. margin of error, for the global average temperature statistic, then I believe you are a gullible old fool.

Prove it. Show the mathematical steps used so that we can review your proof.

I’ll give you my answer.

Nope! You obviously disagree with the statement, therefore, it is up to you to prove that you are correct and this statement is incorrect. It only takes a counterexample!

Lastly, there can be no direct mathematical proof since “guessed” measurements can be no better and likely much worse than the numbers they are “based” on.

Can you prove mathematically that your guess of heads or tails is correct before you flip the coin?

You just continually display a lack of appreciation for measurements and the concept of information. Measurements are not based on statistics. The analysis of measurements using statistics may provide some insights to their characteristics. However, ultimately, the dispersion of possible measurements that can be attributed to the measurand is what measurement uncertainty is.

Guesses don’t have measurement uncertainty.

“Guesses don’t have measurement uncertainty”

They do when you are the IPCC

The IPCC also previously had 95% confidence in their guesses. That was based on a scientific show-of-hands vote.

This year their confidence level is higher, with 21 hands raised for the 20 scientists in the room. That’s 105% confidence folks.

Conservatives claimed some dishonest scientist raised both hands for the show-of-hands vote, but their complaints have been dismissed as “the usual science denying”.


“You obviously disagree with the statement, therefore, it is up to you to prove that you are correct and this statement is incorrect.”

AGAIN, a disconnect between super and subterranea. Above ground, your claim, your responsibility to prove it. At least if you want it accepted.

Right up there with Predecessor’s “People are sayin'”…

You really don’t know science do you? When one posits a hypothesis or theory, it is up to at least one other to PROVE it incorrect.

Therefore, this statement is accurate as it relates to science:

“You really don’t know science do you?”

Unwitting projection

https://undsci.berkeley.edu/understanding-science-101/what-is-science/science-relies-on-evidence/#:~:text=Ultimately%2C%20scientific%20ideas%20must%20not,the%20heart%20of%20all%20science.

“In science, ideas that are not supported by evidence are ultimately rejected.”

IOW, when I tell you that there’s a bearded, robed, Imaginary Guy In The Sky calling the shots, it’s up to me to prove it. You want the acceptance. You provide the evidentiary proof.

I didn’t say it was up to anyone to prove your theory. It is up to others to provide the proof that refutes your theory.

This isn’t true. You are basically condemning CO2 warming because there is no evidence showing it is true. Glad to have you on the denier side!

“This isn’t true.”

The Berkeley Science Department “Understanding Science 101” begs to differ. But of course they’re just one more part of the all encompassing Dr. Evil conspiracy, to infect young minds with the accumulated facts of centuries, aren’t they?…

roflmao.

Only a complete moron could look at the first chart in the post and say they “knew” the global temperature.. AT ALL… especially to +/- 0.2C

What don’t you comprehend about there being ..

… no measurements at all for nearly the whole globe !

Those are only land weather stations

There were also bucket-and-thermometer measurements in Northern Hemisphere shipping lanes. No standard depth.

Sailors probably pulled up a bucket of water and smoked a cigarette before measuring the temperature, then rounded the number to the nearest full degree.

Obviously there is no such thing as “global mean temperature”. But even if there were, the analysis above only looks at the land. What about the 70% of the earth that is ocean?

Agreed, but it is complicated. For example, suppose we agree that during a glaciation our globe is a few kelvins colder than during an interglacial. If we point to a difference in values, then we assume that the values themselves, in this case global temperatures, exist. Yet it may be difficult to come up with an agreeable definition of the global temperature, and impossible to measure it according to any such definition.

A book I have, published in 1976, says that there were “nine ocean weather ships stationed between 35 degrees N and 66 degrees N” but says nothing about the Southern Hemisphere.

Weather ships?

WEATHER SHIPS !

W E A T H E R S H I P S !!!

We don’t need no stinkin’ weather ships

Click on the link below to see the right way to determine the average temperature of the oceans, which might earn a Nobel Prize.

The Honest Climate Science and Energy Blog: Dilbert measures the average ocean temperature the easy way

So, AI is now a go between?

So if you lead Google AI to tell you something you already know, it will do so.

But will it tell someone something they don’t already know?

So you take Google Gemini answers to your questions as definitive? How about some real research?

Why has nobody remarked so far that this assuages my terror about the “1.5 Degrees catastrophe” ? You’ll recall that the Two Degrees of the Paris Disagreement wasn’t frightening enough – it was taking much too long to get there! So the IPCC produced SR1.5 just in time for the failed Katowice COP. Laughed out of court by proper scientists. But MADE NO MENTION of the accuracy of the vaunted “pre industrial level”. Rubbish rubbish rubbish

For Australia, here are the 12 official BOM stations (from public material) that were open before year 1880.

| BOM# | Name | Latitude | Longitude | State | Opened |
|---|---|---|---|---|---|
| 86071 | MELBOURNE REGIONAL OFFICE | -37.8075 | 144.97 | VIC | 1855 |
| 63004 | BATHURST GAOL | -33.4167 | 149.55 | NSW | 1858 |
| 74128 | DENILIQUIN (WILKINSON ST) | -35.5269 | 144.952 | NSW | 1858 |
| 66062 | SYDNEY (OBSERVATORY HILL) | -33.8607 | 151.205 | NSW | 1859 |
| 90015 | CAPE OTWAY LIGHTHOUSE | -38.8556 | 143.5128 | VIC | 1864 |
| 48013 | BOURKE POST OFFICE | -30.0917 | 145.9358 | NSW | 1871 |
| 72151 | WAGGA WAGGA (KOORINGAL) | -35.1333 | 147.3667 | NSW | 1871 |
| 65016 | FORBES (CAMP STREET) | -33.3892 | 148.0081 | NSW | 1873 |
| 56017 | INVERELL COMPARISON | -29.7783 | 151.1114 | NSW | 1874 |
| 55023 | GUNNEDAH POOL | -30.9841 | 150.254 | NSW | 1876 |
| 15540 | ALICE SPRINGS POST OFFICE | -23.71 | 133.8683 | NT | 1878 |
| 52026 | WALGETT COUNCIL DEPOT | -30.0236 | 148.1218 | NSW | 1878 |

There were 43 stations opened by year 1900.

Some further temperatures might be available in other archives, such as from overland telegraph sites.

Geoff S

For Australia, I put the overall uncertainty of the typical, historic, Tmax or Tmin official, unadjusted temperature observations as +/- 1.4 deg C. This is expressed in a way that can be compared with the pure number, classical uncertainty of 2 sigma for normally distributed data.

Our BOM has issued a report with several scenarios claiming 95% (2 sigma) uncertainty of about 0.18 deg C.

In the words of Johnny Mercer 1954 “Something’s Gotta Give”.

….

Eighteen months ago, with Tom Berger, I wrote a 3-part series on these uncertainties. You can start here and follow the trail. The 3 parts attracted a total of 1301 comments, so there was a lot of interest in the topic. There is also an abundance of new material to excite the pre-existing prejudices of readers.

https://wattsupwiththat.com/2022/08/24/uncertainty-estimates-for-routine-temperature-data-sets/

……

The difference between BOM uncertainty and mine is that the BOM calculates statistics as if each temperature observation is part of a related series, so that statistics like those used for (say) a set of random numbers are used. Mine include other exogenous variables like station shifts, UHI (vaguely) and so on. IMO, the BOM approach is suited to data classed as IID (Independent and Identically Distributed), and minor variations of IID, which these observed temperatures decidedly are not. See the actual distributions in Part 3 and notice the seldom-mentioned properties of skew and kurtosis.

Geoff S
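The skew and kurtosis mentioned in the comment above are easy to compute for any series of observations. This is a minimal sketch using the simple moment-based (population, no bias correction) definitions, which may differ from the estimator used in the linked series; the readings are hypothetical. For normally distributed data both numbers are close to zero, since kurtosis is reported here as excess kurtosis.

```python
import statistics


def skew_and_excess_kurtosis(xs):
    """Moment-based skewness and excess kurtosis (population definitions)."""
    n = len(xs)
    mean = statistics.fmean(xs)
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


# Hypothetical readings with a long warm tail (not real station data).
readings = [14.0, 15.0, 15.0, 16.0, 16.0, 16.0, 17.0, 17.0, 18.0, 24.0]
skew, ex_kurt = skew_and_excess_kurtosis(readings)
print(f"skew = {skew:.2f}, excess kurtosis = {ex_kurt:.2f}")
```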

Most AI are hard-coded with politically correct ideas. There’s no use interacting with them if your goal is to get a complete perspective on anything controversial.

AI won’t “learn” anything beyond what its programming allows.

It likely has a subroutine to allow it to seem to learn something, but its base programming will remain unchanged.

If it can’t learn, it’s not AI.

Since the water vapor content of air varies widely across the globe, heat content will vary even at the same temperature. Is it legitimate at all to average temperatures?

It never was.

It’s odd that it never gets discussed

It does not get discussed except on WUWT because it is a BS declaration.

You don’t have a clue do you?

Enthalpy is a measure of the heat content of a substance (its internal energy plus the pressure-volume term). Temperature is used as a proxy for internal energy, except temperature fails for substances like H2O that have what is known as “latent” heat. In other words, internal energy that does not cause a temperature rise.

You are probably surprised that the dew point temperature is controlled by H2O. As the dew point is reached, H2O begins to release its latent heat by radiating it away and forming liquid water. The radiation keeps the temperature at the dew point. It is why deserts get so cold at night. Deserts have little water vapor, and therefore the temperature is controlled solely by how fast the surface can radiate.

This makes temperature a very poor proxy for the internal energy contained in the atmosphere at any point in time. H2O soaks up so much energy, which then seemingly disappears, that radiative diagrams totally understate its effect.

Read this for a tutorial.

https://chem.libretexts.org/Bookshelves/General_Chemistry/Map:_Principles_of_Modern_Chemistry_(Oxtoby_et_al.)/Unit_4:_Equilibrium_in_Chemical_Reactions/12:_Thermodynamic_Processes_and_Thermochemistry/12.3:_Heat_Capacity_Enthalpy_and_Calorimetry
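To put a number on the point about latent heat, here is a minimal sketch using the common psychrometric approximation for the specific enthalpy of moist air, h ≈ 1.006·t + W·(2501 + 1.86·t) kJ per kg of dry air, with t in °C and W the humidity ratio (kg of water vapor per kg of dry air). The two humidity values are hypothetical; the sketch only shows that two air samples at the same temperature can carry very different heat content.

```python
def moist_air_enthalpy(t_c, w):
    """Approximate specific enthalpy of moist air, kJ per kg of dry air.

    t_c: dry-bulb temperature, deg C.
    w:   humidity ratio, kg water vapour per kg dry air.
    1.006 ~ specific heat of dry air, kJ/(kg K); 2501 ~ latent heat of
    vaporization at 0 C, kJ/kg; 1.86 ~ specific heat of water vapour, kJ/(kg K).
    """
    return 1.006 * t_c + w * (2501.0 + 1.86 * t_c)


t = 30.0                                # same temperature for both samples
dry = moist_air_enthalpy(t, 0.005)      # hypothetical dry, desert-like air
humid = moist_air_enthalpy(t, 0.020)    # hypothetical humid, tropical air
print(f"dry: {dry:.1f} kJ/kg, humid: {humid:.1f} kJ/kg")
```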

No it’s not legit. Because temperature is an intensive variable. Doesn’t matter how many thermometers you have around the world.

Yes Thermodynamics 101

AI: the thinking machine’s Wikipedia.

Wikipedia can be useful if you ignore the article and dig through the references and then follow on down the rabbit hole.

A little-used FACT about statistics is that the largest gain in factual information in any sampling happens when the sample size goes from zero to one. With one measurement (1/0), the gain is infinite. One could even argue that the only need for more data would be to demonstrate that the first sample is wrong. Ignoring early samples “because few are known” ignores some of the most important information about the subject that exists.

The problem is that with only one measurement, you have no way to judge the measurement uncertainty in that single reading. Hence the carpenter’s rule, measure twice before cutting.
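Python’s standard library makes the same point: `statistics.stdev` needs at least two data points and raises `StatisticsError` otherwise. A minimal sketch with hypothetical readings; the helper name is made up for illustration.

```python
import statistics


def spread_or_none(readings):
    """Sample standard deviation of the readings, or None if it cannot be estimated."""
    try:
        return statistics.stdev(readings)
    except statistics.StatisticsError:
        # stdev() needs at least two data points
        return None


print(spread_or_none([21.4]))         # None: one reading says nothing about its spread
print(spread_or_none([21.4, 21.9]))   # measure twice: now a spread can be estimated
```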

Given that what temperature data we do think we have has been adjusted, massaged and homogenized (i.e. faked), it is a classic case of GIGO …

And when you see a global map of current weather stations, you can see how little it has changed, and that is before addressing the poor quality of most stations: here in the UK barely 20% are WMO Class 1 or 2.

An interesting story about Google.

https://www.foxnews.com/media/google-interfered-elections-41-times-last-16-years-media-research-center-says

Why trust Google’s “AI”?

Very nice.

One advantage of the weather stations in the 1800s and early 1900s is that they didn’t have a bunch of knuckleheads going around thinking they had to adjust all observations.

Assuming that’s true for the sake of argument, that would be a disadvantage, since all measurements have some systematic error. Thinking that systematic error doesn’t exist and/or that it should not be corrected would be a big problem. Knowing that the systematic error exists and not correcting for it would be unethical at best, and if you knew what the correction factor was, it would be fraudulent at worst.

The usual Fake Data nonsense.

It is you lot who are the frauds.

The only way to “correct” for systematic error is to determine the magnitude of the error through a calibration process on a per station basis.

Determining that magnitude now, for stations long gone is impossible. Any correction applied without an actual calibration is a guess based on no factual information at all and is also unethical.

Making up guesses for 97% of the planet…

.. then passing it off as “data”, as GISS does.

Is TOTALLY UNETHICAL and tantamount to gross scientific FRAUD.

ChatGPT 4 presently answers the last question like this:

Assessing global mean temperatures in the early 1900s, especially with the sparsity of weather stations in the Southern Hemisphere, does present challenges for climatologists and meteorologists. The certainty about global mean temperatures during this period is indeed less compared to recent decades for several reasons:

1. **Sparse Observation Network**: The network of weather stations and observations was significantly less dense, especially in the Southern Hemisphere, which includes vast oceanic areas and remote land regions. This makes it difficult to obtain a comprehensive and accurate picture of global temperatures.

2. **Interpolation and Estimations**: To account for the sparse data, scientists use various methods to estimate temperatures in unobserved areas. This involves interpolation between existing data points and using proxies for temperature, such as ice cores, tree rings, and sediment layers, which can provide indirect evidence of past climate conditions. However, these methods introduce uncertainties.

3. **Historical Data Quality**: The quality and consistency of historical data can vary. Differences in measurement techniques, equipment, and recording practices can affect the reliability of early temperature records. Efforts to standardize and adjust these records for comparison over time add complexity and potential for error.

4. **Modeling and Reanalysis**: Modern climate science uses sophisticated computer models and reanalysis techniques to reconstruct past climates. These methods integrate all available observations and proxy data using physical laws represented in climate models to estimate past temperatures. While these techniques enhance our understanding of historical climate conditions, they rely on assumptions and model approximations that may not capture all aspects of the climate system accurately.

Despite these challenges, scientists have developed methodologies to estimate global mean temperatures with a reasonable degree of confidence. Reconstructions of past climates indicate trends and changes that are consistent with other forms of evidence. While uncertainties increase the farther back in time we go, especially prior to the widespread introduction of instrumental records, the general patterns and changes in global mean temperatures over the last century are supported by multiple lines of evidence.

It’s also important to note that climate science continually evolves. As new methods are developed and more historical data are uncovered or reevaluated, our understanding of past climates, including global mean temperatures, is refined. This ongoing research is crucial for not only understanding historical climate conditions but also for improving the accuracy of future climate projections.

I’ll bet it managed to say all that with a straight digital face.

AnonyM,

Precisely what do you mean by claiming that global temperature estimates have “a reasonable degree of confidence”?

What is meant by “reasonable”?

I can (and do) make the claim that these estimates are unfit for any purpose except propaganda.

You cannot accept one claim over the other because there exists no way to confirm one claim is better than another. Temperatures are transient, fleeting things. The times have passed when a temperature was at a certain level at a place. It cannot be revisited adequately by proxies known at the present time. Proxies have unacceptable uncertainties when you strip off the wishful thinking and examine why, for example, crooks chose to “hide the decline”.

You can do immense damage to society if you endorse this nonsense that is the concept of global warming. Almost every test of it has failed. It will live on while money is being made by opportunistic carpetbaggers with overall low scientific competence. Don’t join them, for history will one day be most unkind to them. Geoff S

The first thing you need to get straight in your mind is what the “global average temperature” truly is.

It is not a temperature. At best, and I do mean at best, it is a supposed ΔT that is alleged to represent the growth in temperature of the “globe”.

Try and get someone somewhere, anywhere, to tell you what the absolute temperature is that goes along with the ΔT being quoted!

One version of GAT could be based on 14°C and the next on 15.5°C. They could overlap or not. Is 1.5°C at 14°C worse than at 15.5°C? Who knows?
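A small arithmetic sketch of the point above, assuming two hypothetical datasets that describe the same amount of warming but are built on different absolute baselines (14.0°C and 15.5°C, the figures mentioned in the comment). Once each is expressed as an anomaly (ΔT) against its own baseline, the two series are indistinguishable, so the quoted ΔT by itself says nothing about the absolute temperature behind it.

```python
def anomalies(temps, baseline):
    """Return temperature anomalies (ΔT) relative to a fixed baseline, °C."""
    return [round(t - baseline, 2) for t in temps]


# Hypothetical absolute series, °C, built on different assumed baselines.
dataset_a = [14.0, 14.5, 15.5]   # baseline 14.0 °C
dataset_b = [15.5, 16.0, 17.0]   # baseline 15.5 °C

anom_a = anomalies(dataset_a, 14.0)
anom_b = anomalies(dataset_b, 15.5)
print(anom_a, anom_b)  # [0.0, 0.5, 1.5] [0.0, 0.5, 1.5]
```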