Guest Essay by Kip Hansen — 17 December 2022

The Central Limit Theorem is particularly good and valuable when you have many measurements that give slightly different results. Say, for instance, you wanted to know very precisely the length of a particular stainless-steel rod. You measure it and get 502 mm. You expected 500 mm. So you measure it again: 498 mm. And again and again: 499, 501. You check the conditions: temperature the same each time? You get a better, more precise ruler. Measure again: 499.5, and again 500.2, and again 499.9. One hundred times you measure. You can’t seem to get exactly the same result. Now you can use the Central Limit Theorem (hereafter CLT) to good result. Throw your 108 measurements into a distribution chart or CLT calculator and you’ll see your central value very darned close to 500 mm and you’ll have an idea of the variation in measurements.
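For readers who would rather see the rod example in code than in a "CLT calculator", here is a minimal sketch. It does not use real measurements: the true length, the size of the reading noise and the assumption that the noise is Gaussian are all made up for illustration.

```python
import random
import statistics

random.seed(42)  # fixed seed so the sketch is repeatable

TRUE_LENGTH_MM = 500.0   # assumed "true" length of the rod (illustrative)
READING_NOISE_MM = 1.0   # assumed spread of a single reading (illustrative)

# 108 simulated readings of the same rod under the same conditions
readings = [random.gauss(TRUE_LENGTH_MM, READING_NOISE_MM) for _ in range(108)]

central_value = statistics.mean(readings)   # the "central value"
spread = statistics.stdev(readings)         # the variation in the readings

print(f"central value: {central_value:.2f} mm")
print(f"standard deviation of readings: {spread:.2f} mm")
```

The two printed numbers correspond to the "central value" and the "idea of the variation" mentioned above. They say nothing about whether the ruler itself is reading true.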

While the Law of Large Numbers is based on repeating the same experiment, or measurement, many times, thus could be depended on in this exact instance, the CLT only requires a largish population (overall data set) and the taking of the means of many samples of that data set.

It would take another post (possibly a book) to explain all the benefits and limitations of the Central Limit Theorem (CLT), but I will use a few examples to introduce that topic.

Example 1:

You take 100 measurements of the diameter of ball bearings produced by a machine on the same day. You can calculate the mean and can estimate a variance in the data. But you want a better idea, so you realize that you have 100 measurements from each Friday for the past year: 50 data sets of 100 measurements, which if sampled would give you fifty samples out of 306 possible daily samples, from the total of 30,600 measurements you would have if you had 100 measurements for every work day (six days a week, 51 weeks).

The Central Limit Theorem is about probability. It will tell you what the most likely (probable) mean diameter is of all your ball bearings produced on that machine. But, if you are presented with only the mean and the SD, and not the full distribution, it will tell you very little about how many ball bearings are within specification and thus have value to the company. The CLT cannot tell you how many or what percentage of the ball bearings would have been within the specifications (if measured when produced) and how many outside spec (and thus useless). Oh, the Standard Deviation will not tell you either: it is not a measurement or quantity, it is a creature of probability.
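To make that concrete, here is a small sketch with two entirely made-up batches of 100 bearings. The nominal diameter, the tolerance and every diameter in the lists are hypothetical, chosen only so that both batches share the same mean and the same (population) standard deviation.

```python
import statistics

NOMINAL_MM = 10.00    # hypothetical nominal diameter
TOLERANCE_MM = 0.05   # hypothetical spec: nominal +/- 0.05 mm

# Two made-up batches of 100 bearings each
batch_a = [9.96] * 50 + [10.04] * 50
batch_b = [9.90] * 8 + [10.00] * 84 + [10.10] * 8

def summarize(diameters):
    """Return (mean, population SD, count of bearings within spec)."""
    mean = statistics.mean(diameters)
    sd = statistics.pstdev(diameters)
    in_spec = sum(abs(d - NOMINAL_MM) <= TOLERANCE_MM for d in diameters)
    return round(mean, 4), round(sd, 4), in_spec

summary_a = summarize(batch_a)
summary_b = summarize(batch_b)
print("batch A (mean, SD, in spec):", summary_a)
print("batch B (mean, SD, in spec):", summary_b)
```

Both batches report a mean of 10.0 mm and an SD of 0.04 mm, yet all 100 bearings in batch A are within spec while only 84 in batch B are. Only the full distribution shows the difference.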

Example 2:

The Khan Academy gives a fine example of the limitations of the Central Limit Theorem (albeit, not intentionally) in the following example (watch the YouTube video if you like, about ten minutes):

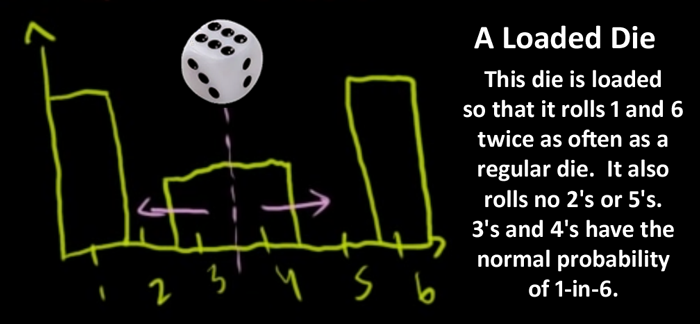

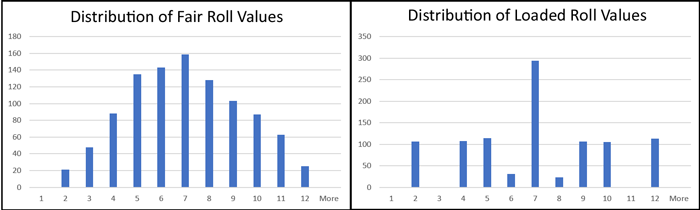

The image is the distribution diagram for our oddly loaded die (one of a pair of dice). It is loaded to come up 1 or 6, or 3 or 4, but never 2 or 5. But it is twice as likely to come up 1 or 6 as 3 or 4. The image shows a diagram of expected distribution of the results of many rolls with the ratios of two 1s, one 3, one 4, and two 6s. Taking the means of random samples of this distribution out of 1000 rolls (technically, “the sampling distribution for the sample mean”), say samples of twenty rolls repeatedly, will eventually lead to a “normal distribution” with a fairly clearly visible (calculable) mean and SD.

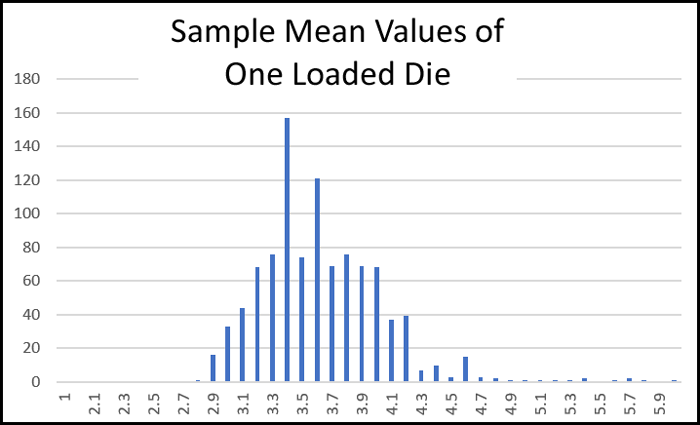

Here, relying on the Central Limit Theorem, we return a mean of ≈3.5 (with some standard deviation). (We take “the mean of this sampling distribution” – the mean of means, an average of averages.)

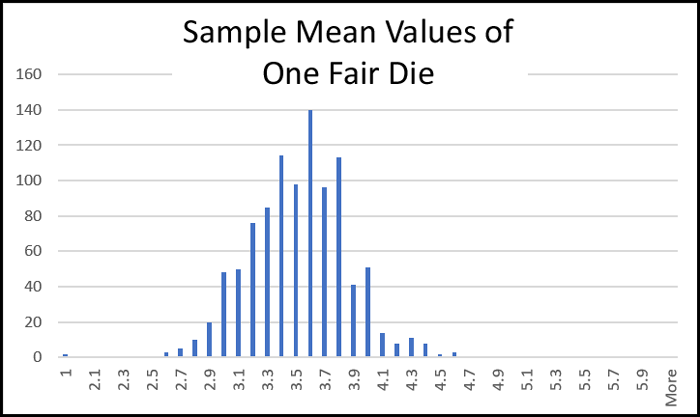

Now, if we take a fair die (one not loaded) and do the same thing, we will get the same mean of 3.5 (with some standard deviation).

Note: These distributions of frequencies of the sampled means are from 1000 random rolls (in Excel, using fx=RANDBETWEEN(1,6) – that for the loaded die was modified as required) and sampled every 25 rolls. Had we sampled a data set of 10,000 random rolls, the central limit would narrow and the mean of the sampled means, 3.5, would become more distinct.
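The same experiment can be sketched in Python rather than Excel. This is not the spreadsheet used for the charts: it assumes that "sampled every 25 rolls" means averaging each consecutive block of 25 rolls, and it models the loaded die as a draw from the face list 1, 1, 3, 4, 6, 6.

```python
import random
import statistics

random.seed(1)  # fixed seed so the sketch is repeatable

FAIR_FACES = [1, 2, 3, 4, 5, 6]
LOADED_FACES = [1, 1, 3, 4, 6, 6]  # 1 and 6 twice as likely as 3 and 4; never 2 or 5

def sample_means(faces, n_rolls=1000, sample_size=25):
    """Roll n_rolls times, then average each consecutive block of sample_size rolls."""
    rolls = [random.choice(faces) for _ in range(n_rolls)]
    return [
        statistics.mean(rolls[i:i + sample_size])
        for i in range(0, n_rolls, sample_size)
    ]

for name, faces in [("fair", FAIR_FACES), ("loaded", LOADED_FACES)]:
    means = sample_means(faces)
    print(
        name,
        "| number of samples:", len(means),
        "| mean of sample means:", round(statistics.mean(means), 2),
        "| SD of sample means:", round(statistics.stdev(means), 2),
    )
```

A histogram of `means` for either die is what the charts in this section show: a hump centered near 3.5.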

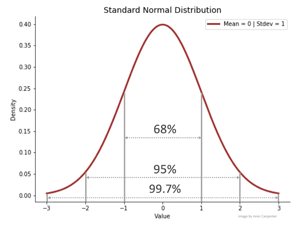

The Central Limit Theorem works exactly as claimed. If one collects enough samples (randomly selected data) from a population (or dataset…) and finds the means of those samples, the means will tend towards a standard or normal distribution – as we see in the charts above – the values of the means tend towards the (in this case known) true mean. In man-on-the-street language, the means are clumping in the center around the value of the mean at 3.5, making the characteristic “hump” of a Normal Distribution. Remember, this resulting mean is really the “mean of the sampled means”.

So, our fair die and our loaded die both produce approximate normal distributions when testing a 1000 random roll data set and sampling means. The distribution of the mean would improve – get closer to the known mean – if we had ten or one hundred times more of the random rolls and equally larger number of samples. Both the fair and loaded die have the same mean (though slightly different variance or deviation). I say “known mean” because we can, in this case, know the mean by straightforward calculation: we have all the data points of the population and know the mean of the real-world distribution of the dice themselves.
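That straightforward calculation can be written out exactly. The sketch below uses Python's `fractions` module and the face probabilities described earlier (1 and 6 at 1/3 each, 3 and 4 at 1/6 each for the loaded die). The dictionary and function names are just illustrative.

```python
from fractions import Fraction
import math

FAIR = {face: Fraction(1, 6) for face in range(1, 7)}
LOADED = {1: Fraction(1, 3), 3: Fraction(1, 6), 4: Fraction(1, 6), 6: Fraction(1, 3)}

def mean_and_variance(dist):
    """Exact mean and variance of a die given as {face: probability}."""
    mean = sum(face * p for face, p in dist.items())
    variance = sum(p * (face - mean) ** 2 for face, p in dist.items())
    return mean, variance

for name, dist in [("fair", FAIR), ("loaded", LOADED)]:
    mean, variance = mean_and_variance(dist)
    print(name, "| mean =", mean, "| variance =", variance,
          "| SD ~", round(math.sqrt(variance), 3))
```

Both dice come out with a mean of exactly 7/2. The variance is 35/12 for the fair die and 17/4 for the loaded one.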

In this setting, this is a true but almost totally useless result. Any high school math nerd could have just looked at the dice, maybe made a few rolls with each, and told you the same: the range of values is 1 through 6; the width of the range is 5; the mean of the range is 2.5 + 1 = 3.5. There is nothing more to discover by using the Central Limit Theorem against a data base of 1000 rolls of the one die – though it will also tell you the approximate Standard Deviation – which is also almost entirely useless.

Why do I say useless? Because context is important. Dice are used for games involving chance (well, more properly, probability) in which it is assumed that the sides of the dice that land facing up do so randomly. Further, each roll of a die or pair of dice is totally independent of any previous rolls.

Impermissible Values

As with all averages of every type, the means are just numbers. They may or may not have physically sensible meanings.

One simple example is that a single die will never ever come up at the mean value of 3.5. The mean is correct but is not a possible (permissible) value for the roll of one die – never in a million rolls.

Our loaded die can only roll: 1, 3, 4 or 6. Our fair die can only roll 1, 2, 3, 4, 5 or 6. There just is no 3.5.

This is so basic and so universal that many will object to it as nonsense. But there are many physical metrics that have impermissible values. The classic and tired old cliché is the average number of children being 2.4. And we all know why, there are no “.4” children in any family – children come in whole numbers only.

However, if for some reason you want or need an approximate, statistically-derived mean for your intended purpose, then using the principles of the CLT is your ticket. Remember, to get a true mean of a set of values, one must add all the values together and divide by the number of values.

The Central Limit Theorem method does not reduce uncertainty:

There is a common pretense (def: “Something imagined or pretended”) used often in science today, which treats a data set (all the measurements) as a sample, then takes samples of the sample, uses a CLT calculator, and calls the result a truer mean than the mean of the actual measurements. Not only “truer”, but more precise. However, while the CLT value achieved may have a small standard deviation, that fact is not the same as more accuracy of the measurements or less uncertainty regarding what the actual mean of the data set would be. If the data set is made up of uncertain measurements, then the true mean will be uncertain to the same degree.

Distribution of Values May be More Important

The mean provided by the Central Limit Theorem would be of no use whatever when considering the use of this loaded die in gambling. Why? … because the gambler wants to know how many times in a dozen die-rolls he can expect to get a “6”, or if rolling a pair of loaded dice, maybe a “7” or “11”. How much of an edge over the other gamblers does he gain if he introduces the loaded dice into the game when it’s his roll?

(BTW: I was once a semi-professional stage magician, and I assure you, introducing a pair of loaded dice is easy on stage or in a street game with all its distractions but nearly impossible in a casino.)

Let’s see this in frequency distributions of rolls of our dice, rolling just one die, fair and loaded (1000 simulated random rolls in Excel):

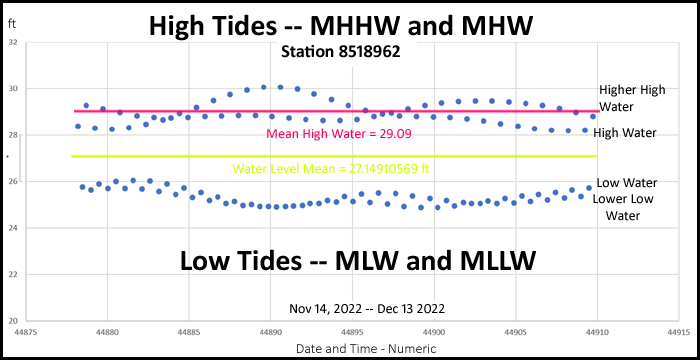

And if we are using a pair of fair or loaded dice (many games use two dice):

On the left, fair dice return more sevens than any other value. You can see this is tending towards the mean (of two dice) as expected. Two 1’s or two 6’s are rare for fair dice … as there is only a single unique combination each for the combined values of 2 and 12. Lots of ways to get a 7.

Our loaded dice return even more 7’s. In fact, over twice as many 7’s as any other number, almost 1-in-3 rolls. Also, the loaded dice have a much better chance of rolling 2 or 12, about four times better than with fair dice (4 chances in 36 against 1 in 36). The loaded dice don’t ever return 3 or 11.
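For anyone who wants the exact odds rather than counts from 1000 simulated rolls, the distribution of the total of two dice can be enumerated directly. This is a sketch under the loading described earlier (1 and 6 at 1/3 each, 3 and 4 at 1/6 each), assuming both dice in the pair are loaded the same way. The function name is illustrative.

```python
from fractions import Fraction
from itertools import product

FAIR = {face: Fraction(1, 6) for face in range(1, 7)}
LOADED = {1: Fraction(1, 3), 3: Fraction(1, 6), 4: Fraction(1, 6), 6: Fraction(1, 3)}

def pair_sum_distribution(die):
    """Exact probability of each total when two identical, independent dice are rolled."""
    totals = {}
    for (a, pa), (b, pb) in product(die.items(), repeat=2):
        totals[a + b] = totals.get(a + b, Fraction(0)) + pa * pb
    return dict(sorted(totals.items()))

for name, die in [("fair", FAIR), ("loaded", LOADED)]:
    dist = pair_sum_distribution(die)
    print(name, {total: str(p) for total, p in dist.items()})
```

Under these assumptions a 7 has probability 5/18 (about 28%) with the loaded pair against 1/6 with the fair pair, a 2 or a 12 each have probability 1/9 against 1/36, and totals of 3 and 11 never occur with the loaded pair. Counts from any finite run of simulated rolls will scatter around these values.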

Now here we see that if we depended on the statistical (CLT) central value of the means of rolls to prove the dice were fair (which, remember, is 3.5 for both fair and loaded dice) we have made a fatal error. The house (the casino itself) expects the distribution on the left from a pair of fair dice and thus sets the rules to give the house a small percentage in its favor.

The gambler needs the actual distribution probability of the values of the rolls to make betting decisions.

If there are any dicing gamblers reading, please explain to non-gamblers in comments what an advantage this would be.

Finding and Using Means Isn’t Always What You Want

This insistence on using means produced approximately using the Central Limit Theorem (and its returned Standard Deviations) can create non-physical and useless results when misapplied. The CLT means could have misled us into believing that the loaded dice were fair, as they share a common mean with fair dice. But the CLT is a tool of probability and not a pragmatic tool that we can use to predict values of measurements in the real world. The CLT does not predict or provide values – it only provides estimated means and estimated deviations from that mean and these are just numbers.

Our Khan Academy teacher, almost in the hushed tones of a description of an extra-normal phenomenon, points out that taking random same-sized samples from a data set (population of collected measurements, for instance) will also produce a Normal Distribution of the sampled sums! The triviality of this fact should be apparent – if the “sums divided by the [same] number of components” (the means of the samples) are normally distributed then the sums of the samples must also be normally distributed (basic algebra).

In the Real World

Whether considering gambling with dice – loaded and fair – or evaluating the usability of ball bearings from the machinery we are evaluating – we may well find the estimated means and deviations obtained by applying the CLT are not always what we need and might even mislead us.

If we need to know which, and how many, of our ball bearings will fit the bearing races of a tractor manufacturing customer, we will need some analysis system and quality assurance tool closer to reality.

If our gambler is going to bet his money on the throw of a pair of specially-prepared loaded dice, he needs the full potential distribution, not of the means, but the probability distribution of the throws.

Averages or Means: One number to rule them all

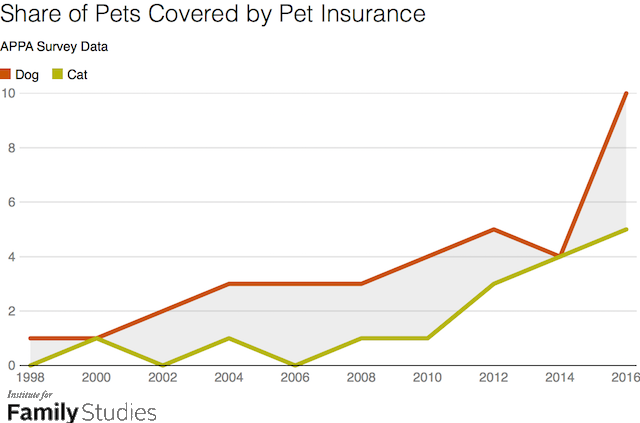

Averages seem to be the sweetheart of data analysts of all stripes. Oddly enough, even when they have a complete data set like daily high tides for the year, which they could just look at visually, they want to find the mean.

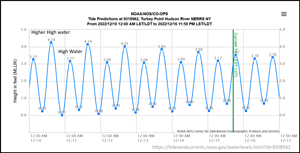

The mean water level, which happens to be 27.15 ft (rounded) does not tell us much. The Mean High Water tells us more, but not nearly as much as the simple graph of the data points. For those unfamiliar with astronomic tides, most tides are on a ≈13 hour cycle, with a Higher High Tide (MHHW) and a less-high High Tide (MHW). That explains what seems to be two traces above.

Note: the data points are actually a time series of a small part of a cycle; we are pulling out the set of the two higher points and the two lower points in a graph like this. One can see the usefulness of the different plots above, each visually revealing information that the other does not.

When launching my sailboat at a boat ramp near the station, the graph of actual high tide’s data points shows me that I need to catch the higher of the two high tides (Higher High Water), which sometimes gives me more than an extra two feet of water (over the mean) under the keel. If I used the mean and attempted to launch on the lower of the two high tides (High Water), I could find myself with a whole foot less water than I expected and if I had arrived with the boat expecting to pull it out with the boat trailer at the wrong point of the tide cycle, I could find five feet less water than at the MHHW. Far easier to put the boat in or take it out at the highest of the tides.

With this view of the tides for a month, we can see that each of the two higher tides themselves have a little harmonic cycle, up and down.

Here we have the distribution of values of the high tides. Doesn’t tell us very much – almost nothing about the tides that is numerically useful – unless of course, one only wants the means, which would be just as easily eye-ball guessed from the charts above or this chart — we would get a vaguely useful “around 29 feet.”

In this case, we have all the data points for the high tides at this station for the month, and could just calculate the mean directly and exactly (within the limits of the measurements) if we needed that – which I doubt would be the case. But at least we would have a true precise mean (plus the measurement uncertainty, of course) but I think we would find that in many practical senses, it is useless – in practice, we need the whole cycle and its values and its timing.

Why One Number?

Finding means (averages) gives a one-number result. Which is oh-so-much easier to look at and easier to understand than all that messy, confusing data!

In a previous post on a related topic, one commenter suggested we could use the CLT to find “the 2021 average maximum daily temperature at some fixed spot.” When asked why one would want to do so, the commenter replied “To tell if it is warmer regarding max temps than say 2020 or 1920, obviously.” [I particularly liked the ‘obviously’.] Now, any physicists reading here? Why does the requested single number, “2021 average maximum daily temperature”, not tell us much of anything that resembles “if it is warmer regarding max temps than say 2020 or 1920”? If we also had a similar single number for the “1920 average maximum daily temperature” at the same fixed spot, we would only know if our number for 2021 was higher or lower than the number for 1920. We would not know if “it was warmer” (in regards to anything).

At the most basic level, the “average maximum daily temperature” is not a measurement of temperature or warmness at all, but rather, as the same commenter admitted, is “just a number”.

If that isn’t clear to you (and, admittedly, the relationship between temperature and “warmness” and “heat content of the air” can be tricky), you’ll have to wait for a future essay on the topic.

It might be possible to tell if there is some temperature gradient at the fixed place using a fuller temperature record for that place…but comparing one single number with another single number does not do that.

And that is the major limitation of the Central Limit Theorem

The CLT is terrific at producing an approximate mean value of some population of data/measurements without having to directly calculate it from a full set of measurements. It gives one a SINGLE NUMBER from a messy collection of hundreds, thousands, millions of data points. It allows one to pretend that the single number (and its variation, as SDs) faithfully represents the whole data set/population-of-measurements. However, that is not true – it only gives the approximate mean, which is an average, and because it is an average (an estimated mean) it carries all of the limitations and disadvantages of all other types of averages.

The CLT is a model, a method, that will produce a Mean Value from ANY large enough set of numbers – the numbers do not need to be about anything real, they can be entirely random with no validity about anything. The CLT method pops out the estimated mean, closer and closer to a single value whenever more and more samples from the larger population are supplied it. Even when dealing with scientific measurements, the CLT will discover a mean (that looks very precise when “the uncertainty of the mean” is attached) just as easily from sloppy measurements, from fraudulent measurements, from copy-and-pasted findings, from “just-plain-made-up” findings, from “I generated my finding using a random number generator” findings and from findings with so much uncertainty as to hardly be called measurements at all.
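Here is a minimal sketch of that point, using numbers that are about nothing at all: uniform random values between 0 and 100. The helper name `mean_and_sem` is made up for this illustration, and "standard error" here means the usual sample SD divided by the square root of the sample size.

```python
import math
import random
import statistics

random.seed(7)  # fixed seed so the sketch is repeatable

def mean_and_sem(values):
    """Return the sample mean and its standard error (sample SD / sqrt(n))."""
    return statistics.mean(values), statistics.stdev(values) / math.sqrt(len(values))

for n in (100, 10_000, 100_000):
    made_up = [random.uniform(0, 100) for _ in range(n)]  # not measurements of anything
    mean, sem = mean_and_sem(made_up)
    print(f"n={n:>7,}  mean={mean:.3f}  standard error={sem:.4f}")
```

The standard error shrinks as more made-up numbers are supplied, and the mean looks ever more precise, whatever the numbers are or are not about.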

Bottom Lines:

1. The CLT is useful if one has a large data set (many data points) and wishes, for some reason, to find an approximate mean of the data set. Using the principles of the Central Limit Theorem (finding the means of multiple samples from the data set, making a distribution diagram and, with enough samples, finding the mean of the means), one arrives at the approximate mean and gets an idea of the variance in the data.

2. Since the result will be a mean, an average, and an approximate mean at that, then all the caveats and cautions that apply to the use of averages apply to the result.

3. The mean found through use of the CLT cannot and will not be less uncertain than the uncertainty of the actual mean of original uncertain measurements themselves. However, it is almost universally claimed that “the uncertainty of the mean” (really the SD or some such) thus found is many times smaller than the uncertainty of the actual mean of the original measurements (or data points) of the data set.

This claim is so generally accepted and firmly held as a Statisticians’ Article of Faith that many commenting below will deride the idea of its falseness and present voluminous “proofs” from their statistical manuals to show that such methods do reduce uncertainty.

4. When doing science and evaluating data sets, the urge to seek a “single number” to represent the large, messy, complex and complicated data sets is irresistible to many – and can lead to serious misunderstandings and even comical errors.

5. It is almost always better to do much more nuanced evaluation of a data set than simply finding and substituting a single number — such as a mean and then pretending that that single number can stand in for the real data.

# # # # #

Author’s Comment:

One Number to Rule Them All as a principal, go-to-first approach in science has been disastrous for reliability and trustworthiness of scientific research.

Substituting statistically-derived single numbers for actual data, even when the data itself is available and easily accessible, has been and is an endemic malpractice of today’s science.

I blame the ease of “computation without prior thought” – we all too often are looking for The Easy Way. We throw data sets at our computers filled with analysis models and statistical software which are often barely understood and way, way too often without real thought as to the caveats, limitations and consequences of varying methodologies.

I am not the first or only one to recognize this – maybe one of the last – but the poor practices continue and doubting the validity of these practices draws criticism and attacks.

I could be wrong now, but I don’t think so! (h/t Randy Newman)

# # # # #

Kip,

Your patience to write this essay is appreciated. No doubt, as you forecast, statisticians will make comments.

As I wrote to your earlier post, a central concept for statistics is to sample a population, so you can work with sub sets of the population. One seldom sees confirmation that one population is being sampled. A single population might be identified as one without significant influence of other variables affecting it.

Physicians use thermometers to get numbers for human body temperatures. Their population is the human population, here regarded as one population. The measured temperature is not influenced by the various designs of engineers.

Meteorologists use thermometers to get numbers for global temperature estimation. The result depends on engineered design.

Humans are part of the human population.

Thermometers in screens are individual devices whose properties vary so widely that they fail to be classed as a population when their numbers are grouped. They are not candidates for central limit or large numbers laws.

Physicians do not insert thermometers in patient A to measure the temperature of patient B.

My dislike for many aspirations of statistics in climate research is because of the improper ways that real uncertainty is made to look smaller than it is in practice. Bad outcomes are then permitted by appeals to the authority of statisticians. Geoff S

Geoff ==> Yeah, for averages to be real and useful (and the CLT produces approximate averages), the data set must meet the condition that “objects in sets to be averaged must be homogeneous and not so heterogeneous as to be incommensurable.”

Or, as I say above, not so vague and uncertain to barely be considered real measurements.

Physicians may not use a thermometer in one patient to determine the temperature of another, but the pathology labs do use the results gathered over time to determine the “normal” or reference range for those patients who utilised that particular laboratory which may differ from laboratory to laboratory for a wide range of reasons, both in-house procedures and external factors.

The variations are never discussed though. One of my jobs during COVID was to screen students coming to school. The variation was tremendous. Many were below the 98.6 and some significantly above on every check. The standard deviation was pretty large.

nonsense, variations are always discussed

Look! A comma!!

Perhaps he is recovering from his commaitis. However, his concurrent perioditis strain of punctuationitis and capitalizationitis are not showing any improvement.

That’s a classic example of spurious precision, having been converted from 37 degrees C.

And the 37 degrees C was originally the mid-point of a range, either 36 to 38 or 35 to 39 – too long since I read it, and didn’t pay much attention to an interesting piece of medical trivia.

cocky ==> I wrote something about body temperature in this essay.

I did a quick Google and found this:

Normal Body Temperature: Babies, Kids, Adults (healthline.com)

My own body temperature usually runs 96.8°F (my homeostasis function is dyslexic). If my temperature reads “normal” I’m courting a fever.

kalsel3294 ==> Physicians, and my father was one, use “normals” as a broad range to determine if one individual’s metric is way out of the normally expected range.

Many blood test metrics have normal ranges, and this is a good thing.

What none of these have is a single number to which all need conform. Even with the ranges, it is well recognized that many patients can have a number far out of range without having medical condition or needing treatment of any sort — Natural Variability.

And an important point is that in the practical application of such measurements, a precision of a tenth of a unit is meaningless when the range is several units.

Thank you for reminding us that most calculations involving a large data set produce “just a number”. I measure your rod with my tape measure, graduated in 1/16in, and get 19 9/16 inches which I like to write as 19.5625 inches because it’s much more accurate!

Exactly! The measurement is really only good to +/- half of 1/16″ (you know it’s not 19 1/2″ nor 19 5/8″ and can ‘eyeball’ a bit of precision tighter than that) which would be about +/- .03″ but the 19.5625 quoted above gives a false impression that the measurements are good to 10,000th of an inch.

One thing though, in climate science they can know the precision of the thermometers and tide gauges, and then fret about some increasing trend away from the mean – but never considering that the trend is minuscule compared to the daily variation.

“The world is increasing in temp 1.5°C (or 3 or 5, etc) per century and therefore there will be mass extinctions and so on ”

And yet the biosphere tolerates 50°C swings in a year quite readily and generally seems to do better the warmer it is.

It’s like climate science can’t see the tree for the forest! Can’t see the real effects and benefits on actual living things because some global average is increasing – and like you point out, a false sense of precision is giving them an even more false trust in their doomsday predictions.

In particular when the “GAST” is an average of a COMBINATION of average daytime HIGHS and average nighttime LOWS, and most of the “increase” in the “average” temperature is an increase in the overnight LOW temperatures, NOT the daytime HIGH temperatures.

So exactly what species is going to be threatened with extinction or be forced to migrate to a new habitat because it doesn’t get quite as cool at night?!

But the “one number” statistical malfeasance allows such misperceptions to propagate. “The Earth has a FEVER!” Utter nonsense.

Maybe I’m misunderstanding something, but it doesn’t seem to me the author understands what the CLT is. We have phrases like “…by finding the mean of the means, the CLT will point to the approximate mean, and give an idea of the variance in the data”.

You don’t use the CLT to find a mean of means, and it doesn’t point to an approximate mean. The sample mean is the approximate mean, you don’t need the CLT to tell you what it is. What the CLT says is that as sample size increases the sampling distribution tends towards a normal distribution.

You don’t have a clue do you? You sample a population with a given sample size and a large number of samples. You find the mean of each sample and write it down. All of the means from each of the samples forms a “sample mean distribution”. The mean of the “sample means distribution” is the ESTIMATED MEAN. The standard deviation of the “sample means distribution” is the Standard Error or SEM.

Why do you think Dr. Possolo expanded the standard deviation of the temperature sample in TN1900? It is basically because of the limited number of samples (that is, only one sample of size 22).

The sample size (number of elements in each sample) is important to insure you have IID samples. The size also determines how alike the deviation is in each sample which in turn makes the SEM more accurate. The number of samples determines the accuracy of the shape of the distribution.

I’ve tried to explain this to you many times, and I know you will never listen. But the problem is you, and Kip, keep confusing a description of what the CLT means, with the method of using it. You do not usually take a large number of samples in order to discover what the sample distribution is. You use the CLT to tell you what sort of distribution your single sample came from.

Taking multiple samples to get a better mean is not using the CLT to estimate the mean, it’s simply taking a much bigger sample, which will have its own smaller SEM than the individual samples.

“Why do you think Dr. Possolo expanded the standard deviation of the temperature sample in TN1900? ”

You keep asking me that, and then ignore my answer. He expands the uncertainty range to get a 95% confidence interval. It’s what you always do when you have a standard error or deviation or whatever. You multiply it by a coverage factor to get the required confidence interval. The size of the sample is irrelevant to this, apart from using a Student distribution rather than normal one.

he doesnt understand CLT and neither does gorman

The clown car has arrived, mosh, bellcurveman, bg-whatever can’t be far behind.

Mosher ==> I use CLT to represent the process of using the principle.

Holy schist! THREE capitalist letters!

Bell ==> I use CLT to represent the process of using the principle.

I’m not sure what you mean by that. My point is that you don’t seem to understand what the process is. You seem to be suggesting in the essay that the process is finding the mean of multiple means, and my point is that is not how you use the CLT.

I’m not objecting to you using simple examples rather than going into the proof of the theorem. I’m objecting to the use of strawman arguments to attack statisticians and scientists for doing things they don’t do.

Bell ==> The process is clearly explained and demonstrated in an app at onlinestatbook.com (for example).

Or, as explained here: “the sampling distribution of sample mean…This will have the same mean as your original distribution”

You may well have used different words to explain the process, but it is what it is.

Neither is explaining “the process”. They are just demonstrating what the CLT looks like. I’m still trying to understand what you think the process is, and how it is used by statisticians and metrologists.

To me the sort of processes which make use of the CLT are when you take a single sample of reasonable size from a population, and then use the assumption of normality to test the significance of a hypothesis.

You seem to think that the use of the CLT process is to take thousands of samples of a given size and take the average of the average to get a less uncertain average.

How do you think anyone ever confirmed the theorem? Before assuming a normal distribution it’s a good idea to do a little legwork before applying the CLT willy-nilly.

It’s a theorem. You don’t need to confirm it. It’s proven.

Of course, it’s reassuring that you can run simulations to show that it’s correct.

Likewise, having spent years as a metrology tech (meter calibration, not weather monitoring) it continues to bug me greatly that people claim accuracy greater than the calibrated accuracy of an instrument simply by averaging values. Additionally, how many instruments you use does not matter if you are thinking in that direction. My experience in instrument calibration certainly showed me that the instrument uncertainty is essentially never normally distributed within the calibration specification window.

Gary W==> Yes. There is a continuing issue of confusing sample measurement distribution statistics – Mean, Standard Deviation, Skew, Kurtosis – which describe the variability of the sample data and the Measurement Uncertainty which describes the measurement instrument’s capability. The CLT deals with how sample size relates to variability (I.e. variance/standard deviation) in the sample and the population from which the sample is drawn. It has nothing to do with the measurement uncertainty.

The uncertainty of a mean of N samples is calculated as the instrument MU divided by the square root of N. Thus if the MU for a caliper is 0.01 mm then the MU of an average of 25 repeated measurements is 0.002 mm. This formula is derived from addition in quadrature of the MU’s of the individual measurements times a sensitivity coefficient. This is based on the fact that MU’s contribution to error in the measured results is random within the stated limits and thus multiple measurements will result in canceling a portion of the error.

I would note that in most all real world measurement processes the variability in a series of measurements as described by the Standard Deviation (e.g. 2-Sigma limits) is substantially larger than the MU of the average. If it is not, one should obtain a better instrument. I would further note that these comments apply only when dealing with well defined and controlled sampling and measurement methods. The error or uncertainty or any other characteristics claimed for data sets that are derived from different instruments, measurement procedures, sample selection and other highly variable conditions are indefensible. And that goes for the applicability of the CLT as well.

“The uncertainty of a mean of N samples is calculated as the instrument MU divided by the square root of N. Thus if the MU for a caliper is 0.01 mm then the MU of an average of 25 repeated measurements is 0.002 mm.”

People should read this twice. Let me add that the “repeated measurements” also means of the same thing. You can’t measure 25 different things, find an average, then claim you know the measurement of each thing to 0.002.

nope still wrong

You are not as smart as you think you are. If you were, you would realize that your down-votes indicate that people are not accepting your pronouncements. If you want to make your time investment worthwhile, explain exactly why you disagree with Jim and others. Your arrogant, drive-by ‘edicts’ are not impressing this group. Most are well educated, and are your intellectual peers. They provide logical arguments and often direct quotes from people who are actually experts, not someone who wants others to think he is some kind of expert.

Back in the 80s I played with temperature measurement and control while employed by a manufacturer of technological measuring equipment. Their temperature measurement equipment made ten measurements in a second or so, threw out any of those ten, 3 sigma or more from the mean, then presented the average of what remained as the temperature. I had to control a process at +/-0.1°F with a temperature dependent outcome variation. We seemed to be able to control the process, so the measurement system must have worked very closely to reality.

Steve ==> Of course, you are measuring basically “the same thing” many many times in a system with very little variation. The Law of Large Numbers thus is appropriately applied (especially when you throw away measurements “that you don’t like”, 3 sigma or more from the mean). I assume that the controlling equipment used that running mean to adjust the temperature in real time.

Quite simply, the system had to work….

“(especially when you throw away measurements “that you don’t like” (3 sigma more of the mean))”

I’m guessing that the outlier rejection criterion was based more on previous data evaluation and sound engineering judgment than on “like”. That’s why they got the good results…

YES. There are no “series” of “repeated measurements” of the temperature, anywhere. There is only ONE measurement of temperature at a given moment at a given location (if there are any).

So we’ll never get greater precision by computing an average of such measurements.

AGW is Not Science said: “There is only ONE measurement of temperature at a given moment at a given location (if there are any).”

The ASOS user manual says all temperature observations are reported as 1-minute averages.

So, is there any climate scientist you can refer us to who actually uses that data to find an integrated value for an average, or do they all still use Tmax and Tmin?

Recording a 1 minute average is useless unless someone uses it to determine something.

Tmax and Tmin are themselves averages. That’s the point.

They are a one minute average, so what. What period of time does a MMTS average its readings over? How about an LIG? Do you think those are instantaneous readings? Ever hear of hysteresis?

Recall that this is the guy who thinks it is possible to determine and then remove all the “biases” in historical data.

The so what is that according to you and Kip that makes all temperature observations using ASOS and other similar modern electronic equipment useless and meaningless. Does it even make sense to argue about the uncertainty of a value you don’t think is useful and meaningful? Playing devil’s advocate here…wouldn’t the best strategy be to focus on that? Think about it. Since the law of propagation of uncertainty requires computing the partial derivative of an intensive value, that means its result would have to be useless and meaningless as well. Again…assuming it truly is invalid to perform arithmetic operations on intensive properties. I’m just trying to help you form a more consistent argument.

My goodness, have you not read the multitude of posts denigrating using temperature as a proxy for heat? Tell us what the enthalpy difference is between a desert and marshland both at 70 degrees. Temperature is not a good proxy because of latent heat of H2O like it or not.

Are temps adjusted for height above sea level, i.e., the lapse rate? What is the difference in a temperature measured here in Topeka versus one in Miami, Florida at sea level due to the lapse rate?

Deflection and diversion. Enthalpy has nothing to do with this subthread. The fact remains that Tmin and Tmax are actually averages and you still think an average of an intensive property is useless and meaningless. If you want to argue that Tmin and Tmax are useless and meaningless, then u(Tmin) and u(Tmax) would have to be useless and meaningless as well, since the law of propagation of uncertainty requires doing arithmetic with Tmin and Tmax. Never mind that you have stated many times that averages aren’t measurands, which would have to mean that you don’t think either Tmin or Tmax is a measurand. And if you don’t think they are measurands then you probably don’t think u(Tmin) and u(Tmax) even exist.

So you have now proceeded to cancel those with whom you disagree. Good Luck. If you can’t beat them, cancel them. Why don’t you just admit that you have never had an upper level class, done research or designed anything needing to have true measurements. If you had you would appreciate the issues.

Zeke Hausfather is a serial abuser of the law of large numbers.

https://www.climateforesight.eu/interview/zeke-hausfather-every-tenth-of-a-degree-counts/

Well, let’s try a slightly different angle on CLT and uncertainty. Let’s assume you have made a large number of observations of a temperature value – perhaps of the water in a large tank. Your thermometer has one degree temperature marks. Furthermore, let’s assume the temperature does not change during your observation time and all temperature values you record are the same. (This is not unusual in the real world.) What does CLT tell you about the mean and uncertainty of your observed data? You certainly cannot use Standard Deviation of those observations to claim an uncertainty of ZERO for the tank’s water temperature. The uncertainty must always be equal to or greater than the measurement instrument’s calibration accuracy. Standard Deviation is not a substitute for instrument calibration accuracy.

Gary ==> “The uncertainty must always be equal to or greater than the measurement instrument’s calibration accuracy. Standard Deviation is not a substitute for instrument calibration accuracy.”

And it really is that simple — except for “stats and numbers” guys.

Sorry guys, if you follow the GUM or NIST with respect to Measurement Uncertainty you will find that any results derived through mathematical combination of multiple measurements follows this general formula.

uY = √[((δY/δx1)u1)^2 + … + ((δY/δxn)un)^2]

In this formula the δY/δx terms are the partial derivatives of the combining formula with respect to each measurement. These are referred to as “sensitivity coefficients”. The u terms are the uncertainties associated with the measurement. This formula is applicable to any result that involves calculation from more than one measurement. They can be different properties such as voltage times current to measure power.

For an average of multiple measurements the sensitivity coefficients are all 1/n and the u’s are all the same. Thus the combining formula for the MU of an average reduces to u/√n.

uY = √(n·(u/n)^2) = √(n·u^2/n^2) = u/√n

So averaging multiple replicate measurements does indeed reduce the uncertainty of the result. If it did not, why would anyone want to bother with making repeated measurements? The argument that you can’t reduce MU through averaging assumes that every measurement is off by the same amount in the same direction. That is the definition of a systematic error, not measurement uncertainty.
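For readers who want to check the arithmetic, here is a minimal sketch of the root-sum-of-squares formula quoted above. The function names are made up for illustration, and the sketch assumes the individual errors are independent and purely random, with no shared systematic component, which is exactly the assumption being argued over in this thread.

```python
import math

def combined_standard_uncertainty(sensitivities, uncertainties):
    """Root-sum-of-squares of (sensitivity coefficient * standard uncertainty)."""
    return math.sqrt(sum((c * u) ** 2 for c, u in zip(sensitivities, uncertainties)))

def uncertainty_of_mean(u, n):
    """Special case: the average of n independent readings, each with uncertainty u.

    Every sensitivity coefficient is 1/n, so the result reduces to u / sqrt(n).
    """
    return combined_standard_uncertainty([1.0 / n] * n, [u] * n)

# The caliper example from earlier in the thread: MU of 0.01 mm, 25 repeated readings.
u_mean = uncertainty_of_mean(0.01, 25)
print(round(u_mean, 6))  # 0.002
```

If the readings shared a common offset, the terms would not be independent and this reduction would not apply to that offset.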

“So averaging multiple replicate measurements does indeed reduce the uncertainty of the result. If it did not why would anyone want to bother with making repeated measurements? “

Interestingly, that is a bogus question. There are situations where averaging multiple measurements can be useful. For one, in some instances it can reduce noise. It’s been a few years but my recollection of the use of the NIST document you mention above is that it assumes the process measured is more accurately known than the instrument measuring it. Averaging repeated measurements provides an estimate of the instrument error from a known standard. Of course, that is generally useful only in instrument calibration labs.

Rick ==> Spoken like a true statistician. However, I am not talking “sensitivity coefficients” — I am talking just plain vanilla uncertainty of the original measurements.

Total uncertainty of uncertain measurements is ADDITIVE — and averaging is division.

Arithmetic — not statistics.

See here.

If you can diagram — similar to the diagram I supply — a proof of your statistical formula, I’d like to see it here.

Use the same example — single digit +/- some equal amount.

Kip: What you have diagrammed is not the uncertainty of the result, but rather a “sensitivity analysis” which simply asks what is the highest and lowest possible result within the uncertainty. But there is near zero probability that both measurements would deviate by the full uncertainty value in the same direction. Adding or subtracting results in a sensitivity coefficient of 1 for each value and thus the MU of the result is the square root of 2 = 1.414 if the MU is +/- 1.
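The two positions in this exchange can be put side by side with a small sketch. It compares the worst-case (additive) range with the quadrature result for two readings of +/- 1 each, then runs a simulation. The simulation assumes each error is uniformly distributed across its +/- 1 band and independent of the other; that distribution is an assumption chosen for illustration, not something stated in the thread.

```python
import math
import random

def worst_case(uncertainties):
    """Additive bound: every error at its limit, all in the same direction."""
    return sum(abs(u) for u in uncertainties)

def quadrature(uncertainties):
    """Root-sum-of-squares combination."""
    return math.sqrt(sum(u * u for u in uncertainties))

u = [1.0, 1.0]
print(worst_case(u))            # 2.0
print(round(quadrature(u), 3))  # 1.414

# Assumed error model: independent, uniform on [-1, +1] for each reading.
rng = random.Random(0)
trials = 100_000
sums = [rng.uniform(-1, 1) + rng.uniform(-1, 1) for _ in range(trials)]
frac_beyond = sum(abs(s) > quadrature(u) for s in sums) / trials
print(f"fraction of summed errors beyond the quadrature value: {frac_beyond:.3f}")
print(f"largest summed error seen: {max(abs(s) for s in sums):.3f}")
```

Under this assumed model the summed error never exceeds the additive bound, while a minority of trials land outside the quadrature value. Which number deserves the name "uncertainty" is the disagreement itself.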

Gary W: For all intents and purposes the variability in a series of repeated measurements is noise. That is why making multiple measurements and averaging produces a result with less uncertainty. It’s also why in the lab we do multiple replicate measurements and report the mean as well as the standard deviation and the Uncertainty of the mean. The SD and MU are not measures of the same thing. Often the SD is an order of magnitude greater than the MU. This is of particular importance when the measurement involves destruction of random samples such as steel coupons sampled from coil to determine tensile strength. The SD represents real variability between samples while the MU is a statement of confidence in the reported result.

Rick C ==> I diagram the real world uncertainty — not a probability. The diagram is a real world everyday measurement problem. Probability does not reduce uncertainty. The uncertainty is just there and doesn’t disappear because some of the range is “less probable” — which may be true, but it is still uncertain — which is why they call it uncertainty.

If you can diagram your solution to the simplest of everyday problems of averaging known measurement uncertainties and show that the “statistical approach” is a better understanding, have at it.

“The uncertainty is just there and doesn’t disappear because some of the range is “less probable” — which may be true, but it is still uncertain — which is why they call it uncertainty.”

But uncertainty, in the real world, is based on probabilities. Uncertainty intervals are defined by a probability range, e.g. the 95% confidence interval. Requiring 100% confidence in the range just makes the concept meaningless.

Bell ==> The uncertainty of rounded recorded temperatures is not a probability — it is a certainty — we know for certain what the range of the real world absolute measurement uncertainty is.

Uncertainty is not a probability when we know.

It’s odd to be requiring certainty about uncertainty. You want a meaninglessly large uncertainty interval, just so you can be certain it’s covered all possibilities, no matter how improbable.

Wrong—the probability distribution of a combined uncertainty is typically unknown.

If that were true, talk of an uncertainty interval is a meaningless sham. What use is it to know a result with an uncertainty of ±2cm if all that means is the value could be inside the interval or outside it, and you don’t know how likely it is to be inside? Why go through all the calculations if the result is just a bit of hand waving?

What is the probability distribution of a combined uncertainty spec for a digital voltmeter?

karlomonte: As a former manager of an accredited calibration laboratory, I can tell you that the answer to your question should be contained in the calibration certificate provided with the instrument. The certificate may even provide the calibration data and the uncertainty budget. Various components of the MU might be from normal, triangular or rectangular distributions. Each component has a “standard uncertainty” which is analogous to the standard deviation of a normal distribution. These standard uncertainties are combined as the square root of the sum of the squares (quadrature). This combined standard uncertainty is multiplied by a coverage factor (typically designated as ‘k’) equal to 2 for the 95% confidence MU or 3 for 99% confidence. This process is thoroughly described in technical detail with examples in the GUM, which is the global standard that must be followed by all organizations providing calibration services. If you want to see an exhaustive treatment of the subject obtain a NIST calibration certificate for a primary standard reference.
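As a rough illustration of the budget process just described, the sketch below converts each component to a standard uncertainty, combines them in quadrature, and applies a coverage factor. The component names and values are invented for the example and do not come from any real certificate. The divisors are the usual ones for the assumed distribution shapes: the square root of 3 for a rectangular half-width and the square root of 6 for a triangular one.

```python
import math

# Divisors that turn a stated half-width into a standard uncertainty,
# depending on the distribution assumed for that component.
DIVISORS = {
    "normal": 1.0,                # value is already a standard uncertainty (1 sigma)
    "rectangular": math.sqrt(3),
    "triangular": math.sqrt(6),
}

def standard_uncertainty(half_width, distribution):
    return half_width / DIVISORS[distribution]

def combine_budget(budget, k=2.0):
    """Return (combined standard uncertainty, expanded uncertainty k * combined)."""
    combined = math.sqrt(
        sum(standard_uncertainty(h, d) ** 2 for _name, h, d in budget)
    )
    return combined, k * combined

# Hypothetical budget for a voltmeter reading, in millivolts (made-up numbers).
budget = [
    ("reference standard", 0.20, "normal"),
    ("display resolution", 0.50, "rectangular"),
    ("temperature drift", 0.30, "triangular"),
]

combined, expanded = combine_budget(budget, k=2.0)
print(f"combined standard uncertainty: {combined:.3f} mV")
print(f"expanded uncertainty (k=2):    {expanded:.3f} mV")
```

The quadrature step assumes the components are uncorrelated; a real budget would have to justify that for each pair of components.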

You can challenge it all you want, but it is the process rigorously derived through international cooperation of standards bureaus and publishers such as ISO and NIST, and enforced by accreditation bodies such as ANSI, NVLAP, APLAC and ILAC.

Every independent laboratory, calibration laboratory and scientific instrument manufacturer is supposed to follow ISO 17025, which details calibration requirements including reporting of instrument MU in compliance with the GUM (ISO Guide to the Expression of Uncertainty in Measurement).

Now, ask me if the bulk of information being used by the climate change hysteria industry deals with MU correctly.

Hahahaha… NO.

Rick, you are of course quite correct, although in my ISO 17025 training I don’t recall having to report distributions, but only the work to calculate the combined and expanded uncertainties.

Typically the DVM manufacturer provides error band specs from which a combined uncertainty has to be inferred, specific to how it is being used. But the error bands have no statistics attached to them, not even the direction.

As the usual suspects here have finally revealed, they really don’t care about MU so it is quite pointless to argue these ideas with them.

Karlo: Yes, manufacturer’s specifications typically are very abbreviated. In almost all cases you have to ask for an actual calibration certificate to get a proper MU statement. Many manufacturers charge extra for a CalCert. I’ve had cases where the charge for a calibration certificate was more than the price of the instrument.

There are also cases where calibration and determination of MU are not feasible using the normal process. The GUM and ISO 17025 allow for other methods to estimate MU based on things like experience and interlaboratory comparison studies.

If the uncertainty interval is +/-2cm, why do you say the value could be inside OR outside the interval? Does not the +/- value define the interval where the true value must be (barring a faulty instrument or faulty measurement, either of which is, it seems to me, a whole different thing).

How does that apply to averaging temperatures from different times in the day and from different locations with different devices?

I can measure temperature at one location at one time with an uncertainty of 0.1 C. The average global temperature might be something like 15C with a standard deviation of +/- 10C. That would indicate that ~ 95% of the measurements fall within a range of -5 to +35C. Given such a wide range in raw data, I’m not sure anything meaningful can be derived from the average. The measurement uncertainty of the individual measurements is trivial by comparison. Where global atmospheric temperature is concerned, there are dozens of reasons to doubt the validity of any claim. Fundamental problems include:

Sampling is not random.

Much of the earth is not sampled at all.

Frequency of measurements is inadequate to capture an integrated mean over a specified time period.

Instruments and measuring procedure are not standardized.

The “Global Mean Temperature” is not clearly defined.

A great deal of the data contained in various databases has been adjusted or infilled based on comparison to other locations and thus are not “independent” measurements.

All these issues violate sound scientific measurement practices and thus invalidate any claim of accuracy.

I do agree with everything you have stated here. The only thing I will add is that temperature trends should be done using time series analysis and not simple regression of very, very, sketchy data.

The only rationalization I can think of is that too many folks working on the Global Average Temperature are mathematicians that have no concept of how measurements are done in the real physical world. They have no appreciation for the problems you and Kip have mentioned.

If the same thing is measured by the same instrument and the thing being measured has a single, unique value.

If the samples have a bi-modal distribution, a more precise estimate of the mean of the samples may be useless if what one needs is an estimate of each of the mode’s values.

If a time series with a trend loses the sequence information, then it looks like a large variance that grows with time. The point is that one can’t always depend on more samples providing more precision to a mean. One has to show some intelligence in handling the data.

In the example, the measurements are recorded to the nearest 1°F. Unstated but necessarily true is that the ‘actual’ value is somewhere in the inclusive interval +/-0.5°F around that reading.

If the measurements are all the same to some °F, then the water might actually be, for example, 0.5°F lower. Does using the calculations defined by the CLT get one closer to that actual temperature, with less uncertainty?

This, you state, is systematic error, but is that correct? It is within the basic uncertainty of the measurement. Can you get closer to the true temperature through any statistical or probability calculation?
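The tank question can be explored with a small simulation. This is only a sketch under stated assumptions: a perfectly calibrated thermometer read to the nearest whole degree, a made-up true temperature of 70.4°F, and zero-mean Gaussian reading noise. None of those numbers come from the thread.

```python
import random
import statistics

def simulate(true_temp, noise_sd, n_readings, seed=42):
    """Return (mean, SD) of readings rounded to the nearest whole degree."""
    rng = random.Random(seed)
    readings = [round(true_temp + rng.gauss(0.0, noise_sd)) for _ in range(n_readings)]
    return statistics.mean(readings), statistics.pstdev(readings)

TRUE_TEMP = 70.4  # hypothetical tank temperature in °F, unknown to the observer

# Case 1: noise far smaller than the 1°F graduation, so every reading is identical.
quiet_mean, quiet_sd = simulate(TRUE_TEMP, noise_sd=0.01, n_readings=10_000)
# Case 2: noise comparable to the graduation, so readings scatter across marks.
noisy_mean, noisy_sd = simulate(TRUE_TEMP, noise_sd=0.5, n_readings=10_000)

print(f"quiet: mean={quiet_mean:.3f} SD={quiet_sd:.3f} error={quiet_mean - TRUE_TEMP:+.3f}")
# quiet: mean=70.000 SD=0.000 error=-0.400
print(f"noisy: mean={noisy_mean:.3f} SD={noisy_sd:.3f} error={noisy_mean - TRUE_TEMP:+.3f}")
```

In the quiet case the standard deviation is zero while the mean is still 0.4°F off, so no amount of averaging helps. In the noisy case the average lands much closer to the assumed true value, but only because the simulated noise is unbiased and the simulated instrument has no calibration error.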

The only thing you can reduce by multiple measurements of the same thing is random error. Those are errors whereby a minus error offsets a plus error. Technically, one should graph the errors to see if they have a Gaussian distribution.

The problem one has with uncertainty is that each measurement, even those with error, has an uncertainty. So, one doesn’t really know if the errors cancel each other out. In other words, uncertainty builds. It is one reason for an expanded standard uncertainty.

Andy: I said that if an instrument is always off by the same amount in the same direction that is systematic error and is not subject to the CLT. This might occur for example in an old style thermometer where the glass tube is attached to a scaled metal plate. If the plate should slip down relative to the tube all readings will be off by the same amount.

Systematic error exists to some degree in almost all measurements but often it is identified in calibration and applying a correction then eliminates it. But an unrecognized systematic error is not accounted for in MU statements for the simple reason that it is unrecognized.

You are mixing instrument resolution (the smallest readable difference) with uncertainty and systematic error. Resolution is always one component of uncertainty – taken as 1/2 the smallest division. Other components must also be included such as the uncertainty of calibration references.

Rounding to the nearest marking introduces a half-interval plus/minus. However, there is also an uncertainty introduced from the inability to determine 0.49 from 0.50. Consequently, some measurements are rounded up that should have been rounded down, and vice versa, some are rounded down that should have been rounded up. I suspect this is why the NWS specified a +/- 1 degree.

And how many weather “thermometer readings” fit this “repeated measurements” description? None.

Gary W ==> Thank you for the report from “the guy who actually does things” — which is opposed to the guy who studied stats in uni.

Additionally, something that is being measured has to have a unique value that doesn’t change with time (stationarity). Furthermore, if what is being measured has a large variance, increasing the precision of the mean has little practical value because it spreads out the probability bounds to the extent that the extra ‘significant’ figures have little utility.

Clyde,

That is an excellent point. From my engine rebuilding days as a youth, this is an important fact in high compression engines. One must measure the cylinder bores multiple times at different locations and be sure that the inside micrometer is reading the maximum measurement each time. One must be sure that the high spots and low spots can be sufficiently covered by the compression rings or blowby will occur. Measurement uncertainty abounds.

It always slays me when people think that uncertainty when measuring different things will cancel. I liken it to saving old brake rotors in a pile, and when working on one car, you go measure a whole bunch of the saved ones along with the one you are considering, to arrive at an accurate measurement. The real world just doesn’t work that way. You have to measure the SAME thing multiple times.

The example in the opening paragraph is an example of determining the accuracy of the measuring device. The mean length of the rod can only be expressed as 500 mm +/- 2 mm. Using the old spring style kitchen scales is a good example of getting a different weight each time the same item is weighed.

Example 1 is a different situation with that used to measure the ball bearings already having a known accuracy, it is measuring the variation in the production process.

Except, each measurement also has uncertainty that is contributed by the measuring device. As you say the same instrument can give different readings each time you measure the same thing. That is part of the quality process, understanding when the measuring device is showing variation and when the manufacturing process itself is introducing variation.

kalsel3294 ==> Welcome to the conversation, don’t recognize your commenter-handle (but that may be my oldster memory). This is your second comment here to this essay.

Measuring the stainless steel rod, in the real world, is a guy attempting to get a good measurement of his SS rod expecting it to be 500 mm. Correctly, he must decide to report 500 mm +/- 2 mm as you suggest. Hopefully, that is good enough for him and his superiors or customers. For the Mars Rover Project, a total failure though.

Example 1, ball bearings, is NOT measuring “ball bearings already having a known accuracy”….that is what the measuring is meant to accomplish. Sorry if this was not clear.

“The Central Limit Theorem is particularly good and valuable especially when have many measurements that have slightly different results.”

Totally confused, as many here, about what the CLT is. From the Wiki link:

“In probability theory, the central limit theorem (CLT) establishes that, in many situations, when independent random variables are summed up, their properly normalized sum tends toward a normal distribution even if the original variables themselves are not normally distributed.”

It’s important, but the surprising result is convergence to a normal distribution, not convergence to the mean. The latter is the result of the Law of Large Numbers, and it applies under the same conditions as CLT; in fact it is a corollary of it.
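The distinction drawn here, between the mean settling down and the shape becoming normal, can be seen in a short simulation. The sketch below is an illustration under assumptions: it draws from an exponential distribution (strongly skewed, true mean 1.0), and the sample sizes and seed are arbitrary choices.

```python
import random
import statistics

def skewness(values):
    """Third standardized moment; close to 0 for a symmetric distribution."""
    m = statistics.fmean(values)
    s = statistics.pstdev(values)
    return sum(((v - m) / s) ** 3 for v in values) / len(values)

rng = random.Random(1)
results = {}
for n in (1, 5, 50):
    # 5,000 sample means, each computed from n independent exponential draws.
    means = [
        sum(rng.expovariate(1.0) for _ in range(n)) / n
        for _ in range(5_000)
    ]
    results[n] = (statistics.fmean(means), skewness(means))
    print(f"n={n:>2}: mean of sample means={results[n][0]:.3f}, "
          f"skewness={results[n][1]:.3f}")
```

The centre of the sample means stays near 1.0 for every n, while the skewness shrinks toward zero as n grows. The first behaviour is about the mean; the second is the convergence in shape that the CLT describes.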

sed 's/[Cc]entral [Ll]imit [Tt]heorem/Law of Large Numbers/g;s/[Cc][Ll][Tt]/LLN/g' "$infile" > "$outfile"

Simples 🙂

Hmm, that joke seems to have gone down like a lead balloon 🙁

old cocky ==> Gee, you’d have to explain it to me — I had no idea it was a joke — didn’t mean a thing to me….

You had to be there…

And to add the most important aspect of the CLT: it allows comparison between two different datasets to assess the probability they come from a single population.

In other words it is a key element of statistical testing and inference.

I would suggest people go and read Statistics and data analysis in geology by John C. Davis.

Then try geostatistics and particularly the concept of a random function and the difference between kriging and simulation and why both are important to understanding uncertainty.

ThinkingScientist ==> don’t get me started on the idiocy of kriging temperatures……

If kriging isn’t your thing then how do you propose forming a scalar (or vector) field from a set of data points?

What are your thoughts on NIST using kriging in TN 1900?

Same old, same old. Try to stay on the subject at hand instead of deflecting to something else. I don’t remember seeing kriging mentioned anywhere in the GUM when discussing measurement uncertainty.

Dude, do the mass fractions measured in sediment change on a second by second continuous basis like temperature?

I never mentioned kriging temps. I am talking about understanding of underlying principles.

Like using a thermometer on the east coast of Greenland plus one in Nova Scotia and one on Ellesmere Island to report the ‘average’ temperature of Greenland?

“It’s important, but the surprising result is convergence to a normal distribution,”

On this point I agree. As I state in more detail further on, it is my conjecture that this convergence to the normal distribution has led a generation of climate scientists to falsely believe that temperature records have predictive power.

It is the same problem as predicting the stock market from the dow. When the dow is going up it is a safe bet the market will be up tomorrow. Until it isn’t.

Temperature is a time series at its base. What climate science has tried to do is grab onto some measurements that were never designed to be able to be used for trending over a long term. I have developed myself too many graphs showing Tmax stagnant and Tmin growing to believe that land temperatures are going to burn us up. Others have posted the same.

Climate science needs to start explaining how the increase in Tmin is going to affect the earth. I have seen several studies from the agriculture community where growing degree days have increased thereby allowing longer maturing varieties that have better yields. Warmer nights mean less heating. On and on.

Part of the reason some are denying the accuracy of what is being done is having to admit that past temperature data is not fit for use. Man, that’s a big apple cart to upset.

HVAC folks have moved on to using integration of the latest minute based temperatures that are available for more accurate computing of heating/cooling degree days. You would think climate science would be doing the same since we now have decades of this kind of data available.

Certain trees, such as apples and cherries, do have minimum cold hour requirements.

“I have developed myself too many graphs showing Tmax stagnant and Tmin growing to believe that land temperatures are going to burn us up. Others have posted the same.”

Here’s my graph based on BEST maximum and minimum land data.

Min temperatures have gone up more since the 19th century, but since 1975 max temperatures seem to be warming faster.

Who am I to disagree, but I certainly can’t detect any warming in the winter nights here?

From:

https://www.probabilisticworld.com/law-large-numbers/

The term ‘probabilistic process’ is defined as the probability of something occurring in a repeated experiment. The flipping of a coin is a probabilistic process. That is, what percentage of the time does heads or tails occur. Rolling a die is a probabilistic process when you are examining how often each number occurs. So fundamentally the LLN deals with probabilities and frequencies in a process that can be repeated. It is important that the subject remain the same. For example, if I roll 10 dice 1000 times and plot the results, it could happen that I will have different frequencies for the numbers 1 – 6 because of differences in the dice.

Can this weak LLN be applied to measurements? It can under certain conditions. Basically, you must measure the same thing multiple times with the same device. What occurs when this happens? What is the distribution of the measurements? What happens is that there will be more of the measurements in the center and fewer and fewer as you move away from the center. In other words a normal or Gaussian distribution. The center becomes the statistical mean and is called the “true value”. Why is this? Because small errors in making readings are more likely than large errors. As a result, a Gaussian distribution will likely occur where there are equal values both below and above the mean which offset each other and the mean is the point where everything cancels. The above is a description of the weak LLN.

The strong LLN deals with the average of random variables. The strong law says the average of sample means will converge to the accepted value. However, in both cases these laws must meet what is known as Independent and Identical Distributions (IID).

What does identically distributed mean? Each sample must have the same distribution as the population. For example, you have a herd of horses and you want to find the average height. But, the herd is made up of Clydesdales, Thoroughbreds, Welsh Ponies, and Miniatures. You wouldn’t go out and measure only Welsh Ponies and Miniatures in some samples and only Clydesdales in other samples. In other words your samples would not have identical distributions as the population.

Remember, X1, X2, X3, … are really the means of each of various samples taken from the entire population. They are NOT the data points in a population. This is an extremely important concept. Many folks think the sample means and the statistics derived from the distribution of sample means accurately describe the statistical parameters of the population. They do not! If sampling is done correctly, the sample mean can give a direct and fairly accurate estimate for the population mean. However, the standard deviation of the sample means IS NOT a direct estimation of the population standard deviation. Remember, the distribution of the sample means is a DERIVED value from sampling the population. It will not resemble the variance of the population. It must be multiplied by the square root of the size of the samples taken (not the number of samples).
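The relationship described above, that the standard deviation of the sample means must be multiplied by the square root of the sample size to recover the population standard deviation, can be checked numerically. The population here is made up (uniform on 0 to 10), samples are drawn with replacement, and the sizes are arbitrary; treat it as a sketch, not a prescription.

```python
import random
import statistics

rng = random.Random(7)

# Hypothetical population: 100,000 values, uniform on [0, 10).
population = [rng.uniform(0.0, 10.0) for _ in range(100_000)]
pop_sd = statistics.pstdev(population)

sample_size = 25      # observations in each sample
num_samples = 4_000   # how many samples are drawn

sample_means = [
    statistics.fmean(rng.choices(population, k=sample_size))
    for _ in range(num_samples)
]

sd_of_means = statistics.stdev(sample_means)
estimated_pop_sd = sd_of_means * sample_size ** 0.5

print(f"population SD:                  {pop_sd:.3f}")
print(f"SD of the sample means:         {sd_of_means:.3f}")
print(f"SD of means * sqrt(sample size): {estimated_pop_sd:.3f}")
```

The scaling uses the size of each sample (25 here), not the number of samples (4,000). Drawing more samples only makes the estimate of the spread of the means steadier.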

Open a new tab and copy and paste this

http://www.ltcconline.net/greenl/java/Statistics/clt/cltsimulation.html

Click user and draw the worst population distribution you can, then select different sample sizes to see what the sample means distribution looks like.

Then do the same and go to this site.

https://onlinestatbook.com/stat_sim/sampling_dist/index.html

And when a large error does occur, it is likely to be a problem of transposing digits or an electrical noise spike. These then end up being discarded as outliers.

Closely followed by the Nitpick Nick Shuffle…

Nick ==> It is the use in practice that concerns me — and in practice, CLT is used to excuse confusing the convergence to a normal distribution with its centralized mean and to add some sort of meaning to its SDs. — this result is NOT surprising at all. That is the magical thinking — CLT methodology requires the result and gets the result from any sort of large collection of mere numbers.

Since it does this with any sort of collection of numbers — it must be used very carefully and not be confused as transferring any significance to the data set.

The key word here is, “sum.” The CLT is about the distribution of the sum of many independent random variables. But note that word, “independent.” If the random variables are correlated, the CLT doesn’t apply.

If the CLT does apply, the distribution of the sum will tend toward a normal (Gaussian) distribution, the mean will be the sum of the means, and the variance will be the sum of the variances.
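
A quick simulation can illustrate that statement for the independent case. This is a sketch under stated assumptions: the terms are independent uniform(0, 1) draws (mean 1/2, variance 1/12), and the number of terms, the number of trials, and the name `simulate_sums` are chosen only for illustration.

```python
import random
import statistics

def simulate_sums(num_terms, num_trials, seed=0):
    """Each trial sums num_terms independent uniform(0, 1) draws."""
    rng = random.Random(seed)
    return [
        sum(rng.random() for _ in range(num_terms))
        for _ in range(num_trials)
    ]

num_terms = 30
sums = simulate_sums(num_terms, num_trials=20_000)

# For uniform(0, 1): mean = 1/2 and variance = 1/12 per term.
expected_mean = num_terms * 0.5   # sum of the means
expected_var = num_terms / 12     # sum of the variances

observed_mean = statistics.fmean(sums)
observed_var = statistics.pvariance(sums)

# For a normal distribution about 68% of values lie within one SD.
one_sd = expected_var ** 0.5
share_within_one_sd = sum(
    1 for s in sums if abs(s - expected_mean) <= one_sd
) / len(sums)

print(f"mean     : expected {expected_mean:.2f}, observed {observed_mean:.2f}")
print(f"variance : expected {expected_var:.2f}, observed {observed_var:.2f}")
print(f"share within one SD: {share_within_one_sd:.3f}")
```

Nothing here tests what happens when the terms are correlated; the sketch only covers the independent case.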

The big “limitation” is in the independence. Lots of things are not independent or, more likely, cannot be known to be independent.

Note also that even when the CLT doesn’t apply the mean of the sum is still the sum of the means. The mean of the sum of a number of random variables is always the sum of the means. This is NOT the law of large numbers.

You can work through any example of just two random variables and see that the mean of the sum is always the sum of the means. For example, take a random variable that’s 0 half the time and 1 half the time. The mean is 1/2. Suppose we have a second random variable that’s perfectly correlated with the first one. It’s 0 or 2: 0 when the first variable is 0, and 2 when the first variable is 1. The second variable’s mean is 1 (0 half the time and 2 half the time). The sum of the means is 1.5, and the sum of the two random variables is either 0 or 3, each half the time, also yielding a mean of 1.5.

Now let the second random variable be perfectly negatively correlated, so that it’s 2 when the first variable is 0, and 0 when the first variable is 1. Now the sum is 1 half the time and 2 half the time, and the mean is still 1.5.
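
The two cases above can be enumerated exactly. This is a small sketch using exact fractions; the helper names `mean` and `mean_of_sum` are made up for illustration.

```python
from fractions import Fraction

def mean(outcomes):
    """Mean of a list of (value, probability) pairs."""
    return sum(value * prob for value, prob in outcomes)

def mean_of_sum(joint):
    """Mean of x + y over a list of (x, y, probability) triples."""
    return sum((x + y) * prob for x, y, prob in joint)

half = Fraction(1, 2)

# Marginal distributions from the example above.
first = [(0, half), (1, half)]    # mean 1/2
second = [(0, half), (2, half)]   # mean 1

# Joint outcomes for the two correlation cases.
positively_correlated = [(0, 0, half), (1, 2, half)]
negatively_correlated = [(0, 2, half), (1, 0, half)]

sum_of_means = mean(first) + mean(second)

print(sum_of_means)                        # 3/2
print(mean_of_sum(positively_correlated))  # 3/2
print(mean_of_sum(negatively_correlated))  # 3/2
```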

One final note. A theorem about the sum of random variables teaches us nothing about the quality of the individual variables that have been summed. You may call that a “limitation” if you like.

Regarding this CLT description, what would a “properly normalized sum” be of thousands of different temperature measurements made in thousands of different places by thousands of different thermometers of varying accuracy?

A lot of this implies making a reading with one instrument. How do you accommodate readings with different instruments? And never reading the same thing with these different instruments.

I’m asking if the statistics have to be run differently, if say, I read 1000 items and use 4 different instruments to get my data.

Real world operating practices vs desktop theoretically perfect situations Alexy?

Alexy ==> It is important to know that we are dealing with statistical animals here. Statistics has its place — but in practice is more often substituted for thinking.

The Central Limit Theorem is an observable phenomenon dealing with large sets of numbers (any numbers).

The Law of Large Numbers (LLN) (two versions) mostly deals with lots of measurements of the same thing (see Jim Gorman’s comment above).

In your example, I wouldn’t try to use the LLN but could consider all of the measurements as a single data set (“My Measurements of my item”), apply the principles of CLT, get a distribution of the means, find the mean of those sample means, and settle for that. You will have an approximate mean of all the measurements and some variance.

Don’t confuse your finding as the real mean or what statisticians call “the true mean”.

We should ignore statistics for a moment and consider the sources of instrument error.

1 – sixty students measure something with the same ruler

2 – one student measures the same thing with sixty different rulers

3 – one machinist measures the same thing with a micrometer

The rulers have different thicknesses. That changes the parallax error.

There are predictable human errors. If the thing being measured is precisely one inch thick, the average of the sixty student measurements will probably be one inch. If the thing is 1.01 inches thick, the average of the sixty student measurements will probably still be one inch.

If I’m setting up four production lines and have four properly calibrated test instruments of the same type, any variability will be due to the production lines, not the test instruments.

On the other hand, if I’m taking field strength measurements for an AM radio transmitter, I have to realise that I won’t get the same measurement even later on the same day.

So, the answer to your question depends on what you’re measuring and why you’re measuring and what you intend to do with the results.

Commie ==> Thank heavens for persons who realize that “context matters”.

In many cases, the “context” that is missing is “…in the real world.”

“The mean found through use of the CLT cannot and will not be less uncertain than the uncertainty of the actual mean of original uncertain measurements themselves.”

I’m not quite sure what this means.

However, the standard error of the sample mean really is smaller than

(1) the standard deviation of the sample; and

(2) the measurement error.
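
Point (1) can be checked with the usual formula, standard error = sample SD / sqrt(n). The sketch below borrows the seven rod readings quoted in the essay purely as illustrative numbers, and `standard_error` is a made-up helper name. It does not address point (2) or any systematic error in the instrument.

```python
import math
import statistics

def standard_error(values):
    """Sample standard deviation divided by sqrt(n)."""
    return statistics.stdev(values) / math.sqrt(len(values))

# Rod readings in mm quoted in the essay, used only as example numbers.
readings_mm = [502, 498, 499, 501, 499.5, 500.2, 499.9]

sample_sd = statistics.stdev(readings_mm)
se_of_mean = standard_error(readings_mm)

print(f"sample mean    : {statistics.fmean(readings_mm):.2f} mm")
print(f"sample SD      : {sample_sd:.2f} mm")
print(f"standard error : {se_of_mean:.2f} mm")
```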

It is generally not advisable to contest mathematics with words.

None of Kip’s homespun statistical theories are presented with any mathematically precise and rigorous descriptions and proofs using techniques described in text books and peer-reviewed journals. Understandable, given that, as far as it appears, he has no formal training in statistical theory and falls back on being just a “science journalist” (or more correctly “science blogger”). What is not understandable is his level of dogmatism that he is unassailably correct despite the above lack of mathematical rigour. What is also hard to understand is why WUWT is hosting this statistical “snake oil” without at least some subject-matter expert review. I have been a reviewer for applied statistics and application-specific journals and published myself many times in this area over 45 years and I can tell you that these essays would not even make it to the review stage without the prerequisite mathematically explicit descriptions and proofs.

A rigorous mathematical proof can only be properly evaluated by a rigorous mathematician, on average 99 times out of 100.

Case in point. Does the Law of Large Numbers apply to future temperatures? For LLN we need a constant mean and variance, such as a coin. Many overlook this requirement and assume LLN applies everywhere.

Thus our question can be answered both yes and no.

No, because we know from paleo temperature data that mean temperature and variance are not constant.

Yes, because if one looks at the entire paleo record one can calculate a mean and variance that for all intents and purposes will not change over a span of a few thousand years.

Which one of these is true?

steve_showme

It is likely that WUWT staff will welcome your written essay on the subject. They accepted three of mine in September. Why not go for it? Geoff S

Geoff. Thanks for that idea. I generally like reading WUWT on subject areas I have no professional expertise in, like energy economics, policy, battery technology, climatology etc and news articles with comments such as those posted by Eric Worrall. I enjoy reading those and follow-up links to articles and getting informed. I just do not get the point of these statistical theory “lectures” by Kip especially when they are presenting false assertions as far as “false” can be gleaned from the maths-free homespun “theory” presented. The links given are web articles and not text books or peer-reviewed journal articles and these web links tend to be maths free as well.

I like to think I can make a better contribution in the peer review literature, even if it’s less sensational, which applied statistics usually is unless it’s this type of “ALERT: the experts have had this wrong all along!” article.

I believe I have helped push back against the alarmist narrative (“Blue Planet”, Greta’s “ecosystems are collapsing…” etc) that Antarctic krill populations have catastrophically declined over the past 4 decades.

10.9734/arrb/2021/v36i1230460

https://environments.aq/publications/antarctic-krill-and-its-fishery-current-status-and-challenges/

and called out poorly designed studies from a statistical power standpoint

10.9734/cjast/2022/v41i333946

I can open all but your first “pushback against the alarmist narrative”. Any ideas?

Notably silly clicking. Even for here. I asked for help opening the only link I was having trouble with. Apparently there are some click-first (based on the poster), don’t-ask-questions-later posters lurking…

Bib ==> the numbered IP addresses don’t work for me either.

Thanks for your attention. Just one of those things….

Use Google Scholar for the DOIs and for the DOI 10.9734/arrb/2021/v36i1230460 use the first version GS links to.

Thanks steve. If your evaluative critiques are correct, then this would certainly be the best way to out claims made with improper evaluative techniques. I read WUWT articles per the Seinfeld Kramer effect*, but prefer superterranea for actual science and technology advancement…

*“He is a loathsome, offensive brute. Yet I can’t look away.”

Sorry dude, you have not shown that any of the claims are “false”.

The main driver here is the fact that there are temperature values in “anomalies” that far, far exceed the resolution of readings and records. Prior to 1980, temperatures were read and recorded as integers. Showing variations with 2 or 3 decimal places just doesn’t compute with those of us who have dealt with measurements in industry where this would not be allowed, either ethically or legally. Trying to use statistics to justify this is both incorrect and inept.

Resolution in measurements conveys a fixed amount of information. Adding more information in the form of extra significant digits is writing fiction, no matter how you cut it. It is what Significant Digits were designed to control and what error bars are supposed to show.

If you have a way to increase resolution of measurements through mathematics and statistics most of us would be more than pleased for you to post the mathematics behind it. Most of us would enjoy purchasing less expensive measuring equipment and instead use a computer to add the necessary resolution.

A couple of caveats you must deal with though. First, temperature is not a discrete phenomenon. It is an analog continuous function. It is a time series with very, very sparse and generally highly uncertain samples.

And the variance isn’t always noise. Often it is just high-frequency variations associated with turbulence and wind gusts, associated with the passage of air masses of different properties.

Also, one can, as I have, see fairly consistent large temperature differences for different but not widely separated locations. Temperatures, for climate purposes, are reported as a single value derived from a single location, or homogenized from a number of different locations, that may well be very different from any of the measured temperatures or any average of those measured temperatures. It is rather like an extreme version of reported urban/rural differences.

Also, it would be very difficult to write a technical response to this article since, without the precise mathematical expressions and exposition of these in the text, it’s hard to be sure exactly what Kip is proposing and what statistical methodology he is relying on. That’s if I were even motivated to wade through it all, which I am not since I have better things to do in my semi-retirement like gardening, sport (mostly watching actually talented sports-people), consulting and publishing peer reviewed papers.

If you need the necessary documents, there are several.

NIST has several Technical Notes on uncertainty in measurements as does NIH.

Dr. John R. Taylor’s book, An Introduction to Error Analysis is a good starting point.

JCGM 100:2008, Evaluation of measurement data — Guide to the expression of uncertainty in measurement is perhaps the “bible” most of us start with. You will want to read Annexes B, C, and D for a good basis in physical measurements.

Perhaps reference to the first article of this series would bring a little clarity.

https://wattsupwiththat.com/2022/12/09/plus-or-minus-isnt-a-question/

It seems peculiar to me that Kip did not provide a link at the beginning of this article.

steve-showme,

C’mon now, why not give it a go. If you are worried about audience comprehension, some WUWT readers are educated rather well. Not me, I just did the advanced option of both Pure and Applied Mathematics III at uni as part of an ordinary Science degree. Others are much wiser.

If it encourages you, I can suggest a topic. It is about measurement and interpretation of sea surface temperatures, SST. Here are the basics from Kip’s previous post here.

…………

It is unclear what the purpose of uncertainty estimation is. To illustrate this, I use the example of measurement of sea surface temperatures by Argo floats. Here is a link:

https://www.sciencedirect.com/science/article/pii/S0078323422000975

It claims that “The ARGO float can measure temperature in the range from –2.5°C to 35°C with an accuracy of 0.001°C.”

I have contacted bodies like the National Standards Laboratories of several countries to ask what the best performance of their controlled temperature water baths is. The UK reply is typical:

National Physical Laboratory | Hampton Road | Teddington, Middlesex | UK | TW11 0LW

Dear Geoffrey,

“NPL has a water bath in which the temperature is controlled to ~0.001 °C, and our measurement capability for calibrations in the bath in the range up to 100 °C is 0.005 °C.”