By Greg Chapman

“The world has less than a decade to change course to avoid irreversible ecological catastrophe, the UN warned today.” The Guardian Nov 28 2007

“It’s tough to make predictions, especially about the future.” Yogi Berra

Introduction

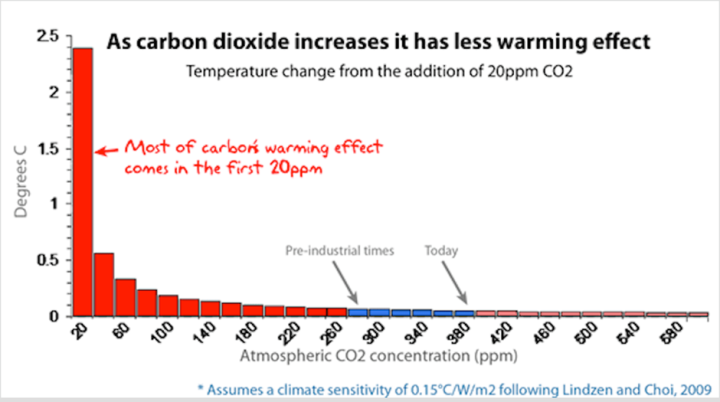

Global extinction due to global warming has been predicted more times than climate activist Leo DiCaprio has traveled by private jet. But where do these predictions come from? If you thought they were simply calculated from the well-known relationship between CO2 concentration and the absorption of infrared radiation, you would expect only about a 0.5 °C increase from pre-industrial temperatures for a doubling of CO2. That is because the relationship is logarithmic: each additional increment of CO2 has a smaller effect than the last.
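To make the logarithmic point concrete, here is a minimal Python sketch. It assumes the widely used simplified fit for CO2 radiative forcing, ΔF = 5.35 ln(C/C₀) W/m², with a 280 ppm pre-industrial baseline. Both the coefficient and the baseline are assumptions of this illustration, and the sketch computes forcing only, not temperature.

```python
import math

# Simplified fit for CO2 radiative forcing in W/m^2 (assumed for illustration):
# delta_F = 5.35 * ln(C / C0), with C0 the reference concentration in ppm.
PREINDUSTRIAL_PPM = 280.0


def co2_forcing(c_ppm: float, c0_ppm: float = PREINDUSTRIAL_PPM) -> float:
    """Radiative forcing (W/m^2) of concentration c_ppm relative to c0_ppm."""
    return 5.35 * math.log(c_ppm / c0_ppm)


# Every doubling adds the same forcing, whatever the starting level...
first_doubling = co2_forcing(560.0) - co2_forcing(280.0)
second_doubling = co2_forcing(1120.0) - co2_forcing(560.0)

# ...so equal ppm increments add progressively less.
first_100_ppm = co2_forcing(380.0) - co2_forcing(280.0)
next_100_ppm = co2_forcing(480.0) - co2_forcing(380.0)

print(f"280 -> 560 ppm:  {first_doubling:.2f} W/m^2")
print(f"560 -> 1120 ppm: {second_doubling:.2f} W/m^2")
print(f"280 -> 380 ppm:  {first_100_ppm:.2f} W/m^2")
print(f"380 -> 480 ppm:  {next_100_ppm:.2f} W/m^2")
```

Turning forcing into a temperature change needs a further sensitivity assumption, and that is where the feedbacks come in.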

The runaway 3-6 °C (and higher) temperature increases predicted by the models depend on coupled feedbacks from many other factors. These include water vapour (the most important greenhouse gas), albedo (the proportion of energy reflected from the surface – e.g. more or less ice or cloud means more or less reflection) and aerosols, to mention just a few, which theoretically may amplify the small incremental heating effect of CO2. Because these interrelationships are too complex to calculate directly, the only way to make predictions is with climate models.
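Feedback "amplification" is often explained with a textbook gain formula, ΔT = ΔT₀ / (1 − f). Here ΔT₀ is the no-feedback warming and f is the net feedback factor. The sketch below assumes that simplification and borrows the article's 0.5 °C as ΔT₀ purely for illustration. It shows how steeply the result climbs as f approaches 1.

```python
def amplified_warming(no_feedback_warming: float, feedback_factor: float) -> float:
    """Equilibrium warming under the simple gain model dT = dT0 / (1 - f).

    f < 0 damps the response, 0 < f < 1 amplifies it, and f >= 1 has no
    stable solution in this simplified model.
    """
    if feedback_factor >= 1.0:
        raise ValueError("feedback_factor must be below 1 for a stable response")
    return no_feedback_warming / (1.0 - feedback_factor)


NO_FEEDBACK_WARMING_C = 0.5  # the article's figure for a CO2 doubling, used as an assumption

for f in (-0.5, 0.0, 0.5, 0.8, 0.9):
    print(f"f = {f:+.1f}: {amplified_warming(NO_FEEDBACK_WARMING_C, f):.2f} deg C")
```

In this toy, moving f from 0.8 to 0.9 doubles the answer. That is one reason small uncertainties in the feedback assumptions matter so much.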

The purpose of this article is to explain to the non-expert how climate models work, rather than to focus on the issues underlying the actual climate science, since the models are the primary ‘evidence’ used by those claiming a climate crisis. The first problem, of course, is that no model forecast is evidence of anything. It’s just a forecast, so it’s important to understand how the forecasts are made, the assumptions behind them and their reliability.

How do Climate Models Work?

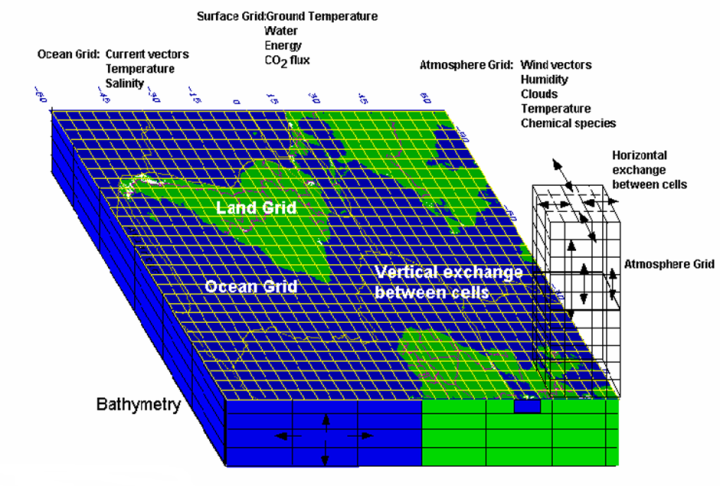

To represent the Earth in a computer model, a grid of cells is constructed from the bottom of the ocean to the top of the atmosphere. Within each cell, the component properties, such as temperature, pressure, solids, liquids and vapour, are treated as uniform.

The size of the cells varies between models and within models. Ideally, cells should be as small as possible, because properties vary continuously in the real world, but the resolution is constrained by computing power. Typically, a cell covers around 100 × 100 km (10,000 km²), even though the atmosphere can vary considerably over such distances. Each physical property within the cell must therefore be averaged to a single value. This introduces an unavoidable error into the models even before they start to run.
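Here is a minimal sketch of why averaging within a cell is not harmless. Any process that depends nonlinearly on a property gives a different answer when computed from the cell average than when averaged over the real variation. In this sketch the Stefan-Boltzmann law (emission ∝ T⁴) stands in for such a process, and the four sub-grid temperatures are hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

# Hypothetical surface temperatures (K) of four equal-area patches inside one
# 100 x 100 km cell, e.g. snowy upland, valley floor, town and sunlit plain.
subgrid_temps_k = [265.0, 280.0, 295.0, 310.0]

# What the model sees: one averaged temperature for the whole cell.
cell_mean_temp = sum(subgrid_temps_k) / len(subgrid_temps_k)
emission_from_mean = SIGMA * cell_mean_temp ** 4

# What the patches actually emit, averaged over the cell.
mean_emission = sum(SIGMA * t ** 4 for t in subgrid_temps_k) / len(subgrid_temps_k)

print(f"cell-mean temperature: {cell_mean_temp:.1f} K")
print(f"emission computed from mean T: {emission_from_mean:.1f} W/m^2")
print(f"true mean emission:            {mean_emission:.1f} W/m^2")
print(f"difference: {mean_emission - emission_from_mean:.1f} W/m^2")
```

The size of the gap depends on how much the patches differ. The toy only shows that averaging a nonlinear quantity is not neutral; it does not measure the error in any particular model.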

The number of cells varies between models, but is typically of the order of 2 million.

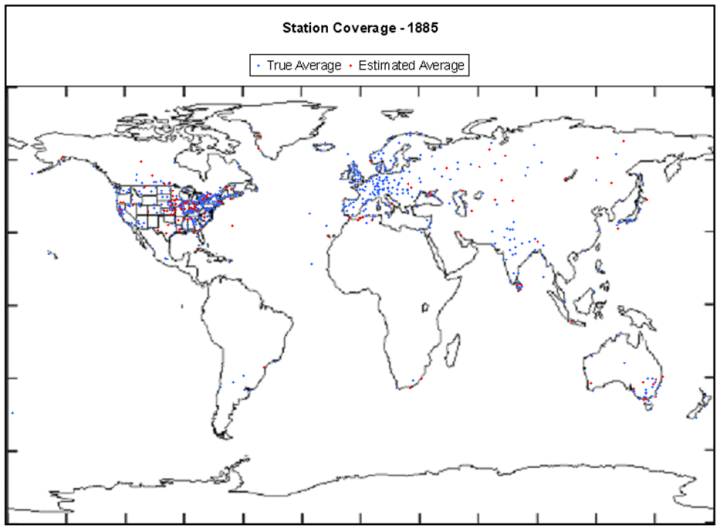

Once the grid has been constructed, the component properties of each of these cells must be determined. There aren’t, of course, 2 million data stations in the atmosphere and ocean. The current number of data points is around 10,000 (ground weather stations, balloons and ocean buoys), plus satellite data since 1978, but historically the coverage is poor. As a result, when initialising a climate model starting 150 years ago, there is almost no data available for most of the land surface, the poles and the oceans, and nothing above the surface or in the ocean depths. This is a major concern.
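The cell count and data coverage figures can be checked with back-of-envelope arithmetic. The 100 × 100 km cell size and the ~10,000 observation points come from the text. The 40 vertical levels are an assumption chosen for illustration. The coverage figure is generous, because it assumes every observation falls in a different cell.

```python
import math

EARTH_RADIUS_KM = 6371.0
surface_area_km2 = 4 * math.pi * EARTH_RADIUS_KM ** 2  # about 5.1e8 km^2

cell_area_km2 = 100 * 100      # horizontal cell size, from the text
columns = surface_area_km2 / cell_area_km2
levels = 40                    # ASSUMED number of vertical levels (illustrative only)
cells = columns * levels

stations = 10_000              # approximate current observation points, from the text
coverage = stations / cells    # upper bound: one observation per distinct cell

print(f"grid columns: {columns:,.0f}")
print(f"total cells:  {cells:,.0f}")
print(f"fraction of cells with an observation (at most): {coverage:.2%}")
```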

Once initialised, the model steps forward through a series of timesteps. At each step, for each cell, the properties of the adjacent cells are compared. If an adjacent cell is at a higher pressure, fluid flows from that cell into its neighbour. If it is at a higher temperature, it warms its neighbour (whilst cooling itself). This might cause ice to melt or water to evaporate, and evaporation in turn has a cooling effect. If polar ice melts, less energy is reflected, which causes further heating. Depending on their type, aerosols in the cell can cause heating or cooling and can increase or decrease precipitation.

Increased precipitation can increase plant growth, as does increased CO2. This changes the albedo of the surface as well as the humidity. Higher temperatures cause greater evaporation from the oceans, which cools the oceans and increases cloud cover. Climate models can’t resolve clouds because the grid resolution is too low, and whether clouds increase or reduce surface temperature depends on the type of cloud.

It’s complicated! Of course, this all happens in three dimensions and in every cell, so a great deal of feedback must be calculated at each timestep.
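The neighbour-to-neighbour exchange described above can be sketched for a single row of cells and a single property (temperature). The scheme is deliberately simplified and hypothetical: one dimension, one property and a made-up exchange coefficient `k`. Real models handle many coupled properties in three dimensions.

```python
def exchange_step(temps, k=0.1):
    """Advance a row of cell temperatures by one explicit timestep.

    Each pair of neighbours exchanges k times their temperature difference:
    the warmer cell loses exactly what the cooler one gains, so the total is
    conserved. The two end cells are treated as insulated. This simple
    explicit scheme stays stable only for k <= 0.5.
    """
    new = list(temps)
    for i in range(len(temps) - 1):
        flux = k * (temps[i] - temps[i + 1])  # positive when cell i is warmer
        new[i] -= flux
        new[i + 1] += flux
    return new


# Hypothetical starting state: one warm cell among cooler ones (deg C).
row = [30.0, 10.0, 10.0, 10.0, 10.0]
state = row
for step in range(1, 201):
    state = exchange_step(state)
    if step in (1, 10, 200):
        print(step, [round(t, 2) for t in state])
```

Even this toy shows the warm cell cooling and its neighbours warming until the row evens out. A real model repeats this kind of update for every property, in every direction, in every cell, at every timestep.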

The timesteps can be as short as half an hour. Remember that the terminator, the line separating day from night, travels across the Earth’s surface at about 1,700 km/h at the equator. Even half-hourly timesteps therefore introduce further error into the calculation, but again, computing power is a constraint.
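Here is a quick check of the terminator figure. It assumes an equatorial radius of 6,378 km and a 24-hour solar day, and uses the text's 100 km cells and half-hour timesteps.

```python
import math

EQUATORIAL_RADIUS_KM = 6378.0   # assumed value
SOLAR_DAY_HOURS = 24.0
TIMESTEP_HOURS = 0.5            # half-hour timestep, from the text
CELL_WIDTH_KM = 100.0           # typical cell width, from the text

equator_km = 2 * math.pi * EQUATORIAL_RADIUS_KM
terminator_speed_kmh = equator_km / SOLAR_DAY_HOURS
distance_per_step_km = terminator_speed_kmh * TIMESTEP_HOURS
cells_per_step = distance_per_step_km / CELL_WIDTH_KM

print(f"terminator speed at the equator: {terminator_speed_kmh:,.0f} km/h")
print(f"distance moved per timestep: {distance_per_step_km:,.0f} km")
print(f"cells crossed per timestep: {cells_per_step:.1f}")
```

Between one timestep and the next, the boundary between day and night jumps roughly eight cells at the equator.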

While the changes in temperature and pressure between cells are calculated according to the laws of thermodynamics and fluid mechanics, many other changes aren’t calculated at all. They rely on parameterisation. For example, albedo varies from icecaps to Amazon jungle to Sahara desert to oceans to cloud cover, and all the reflectivity types in between. These properties are simply assigned, and their impacts on other properties are determined from lookup tables, not calculated. Parameterisation is also used for the impacts of clouds and aerosols on temperature and precipitation. Any important factor that occurs at a subgrid scale, such as storms and ocean eddy currents, must also be parameterised, with an averaged impact applied to the whole grid cell. The effects of these factors are based on observations, but parameterisation is a far more qualitative than quantitative process. Modelers themselves often describe it as an art, and it introduces further error. These effects, and how they are coupled to other factors, are extremely difficult to measure directly and are poorly understood.
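Here is a minimal sketch of the lookup-table idea for albedo. Each surface type is assigned a reflectivity, and a cell's value is the area-weighted mix of its surface types. The albedo numbers are rounded, commonly quoted ballpark figures used only as assumptions. They are not taken from any particular model, and the surface categories are hypothetical.

```python
# Rounded, ballpark albedo values (fraction of sunlight reflected), assumed
# purely for illustration; real models use their own, more detailed tables.
ALBEDO_TABLE = {
    "fresh_snow_or_ice": 0.80,
    "desert": 0.35,
    "grassland": 0.20,
    "rainforest": 0.13,
    "open_ocean": 0.06,
}


def cell_albedo(surface_fractions):
    """Area-weighted albedo of one grid cell from its surface-type fractions."""
    total = sum(surface_fractions.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"surface fractions sum to {total}, expected 1")
    # Look up each surface type and weight it by the share of the cell it covers.
    return sum(ALBEDO_TABLE[kind] * share for kind, share in surface_fractions.items())


# Hypothetical coastal cell: half ocean, the rest desert and grassland.
coastal_cell = {"open_ocean": 0.5, "desert": 0.3, "grassland": 0.2}
print(f"coastal cell albedo: {cell_albedo(coastal_cell):.3f}")
```

The modeler chooses every value in the table, and also the assumption that patches simply average. Neither is calculated by the model, which is the point being made about parameterisation.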

Within the atmosphere in particular, there can be sharp boundary layers that cause the models to crash. These sharp variations have to be smoothed.
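One simple way to smooth a sharp jump is a 1-2-1 filter. It replaces each interior value with a weighted average of itself and its two neighbours. This only illustrates the idea of smoothing. The filter choice and the temperature profile are assumptions, not what any specific climate model does.

```python
def smooth_121(profile, passes=1):
    """Smooth a 1D profile with a 1-2-1 filter; end values are held fixed."""
    values = list(profile)
    if len(values) < 3:
        return values  # nothing interior to smooth
    for _ in range(passes):
        values = (
            [values[0]]
            + [0.25 * values[i - 1] + 0.5 * values[i] + 0.25 * values[i + 1]
               for i in range(1, len(values) - 1)]
            + [values[-1]]
        )
    return values


# Hypothetical sharp inversion: temperature jumps 10 degrees between two levels.
inversion = [10.0, 10.0, 10.0, 20.0, 20.0, 20.0]
print("before:  ", inversion)
print("1 pass:  ", smooth_121(inversion))
print("3 passes:", [round(v, 2) for v in smooth_121(inversion, passes=3)])
```

One pass halves the largest step here (from 10 to 5 degrees), but it also blurs the boundary over more levels. That is the trade-off: the model stays stable, but the sharp feature it was meant to represent is softened.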

Energy transfers between atmosphere and ocean are also problematic. The most energetic heat transfers occur at subgrid scales, so they must be averaged over much larger areas.

Cloud formation depends on processes at the millimeter scale, which are simply impossible to model. Clouds can warm as well as cool. Any warming increases evaporation (which cools the surface), resulting in an increase in cloud particulates. Aerosols also affect cloud formation at a micro level. All these effects must be averaged in the models.

Every timestep applies the grid approximations again and introduces further errors. With half-hour timesteps over 150 years, that’s over 2.6 million timesteps! Unfortunately, these errors aren’t self-correcting. Instead, this numerical dispersion accumulates over the model run. There is, however, a technique that climate modelers use to overcome it, which I describe shortly.
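The timestep count is simple arithmetic (150 years × 365.25 days × 48 half-hours). The second part of the sketch is a toy, not a climate model. It applies forward Euler integration to a frictionless oscillator, whose exact solution keeps x² + v² constant. Each forward Euler step multiplies x² + v² by exactly 1 + dt², so a tiny per-step error compounds. Real models use more careful numerical schemes, and the step size and step counts here are assumptions chosen to make the effect visible. Strictly, this shows an amplitude error rather than the phase error numerical analysts call dispersion, but the compounding is the same idea.

```python
HALF_HOURS_PER_DAY = 48
DAYS_PER_YEAR = 365.25
YEARS = 150

n_model_steps = int(YEARS * DAYS_PER_YEAR * HALF_HOURS_PER_DAY)
print(f"half-hour timesteps in {YEARS} years: {n_model_steps:,}")


def euler_oscillator_energy(dt: float, n_steps: int) -> float:
    """Integrate x'' = -x with forward Euler and return x^2 + v^2 at the end.

    The exact solution keeps x^2 + v^2 = 1 forever; forward Euler multiplies
    it by (1 + dt^2) on every step, so the error compounds.
    """
    x, v = 1.0, 0.0
    for _ in range(n_steps):
        x, v = x + dt * v, v - dt * x
    return x * x + v * v


for n in (1, 100, 10_000):
    print(f"after {n:>6} steps: x^2 + v^2 = {euler_oscillator_energy(0.01, n):.4f}")
```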

Model Initialisation

After the construction of any type of computer model, there is an initialisation process in which the model is checked to see whether the starting values in each of the cells are physically consistent with one another. For example, suppose you are modelling a bridge to see whether the design will withstand high winds and earthquakes. Before imposing any external forces other than gravity, you make sure that the model structure meets all the expected stresses and strains of a static structure. After all, if the initial conditions of your model are incorrect, how can you rely on it to predict what will happen when external forces are imposed on the model?

Fortunately, for most computer models, the properties of the components are quite well known and the initial condition is static, the only external force being gravity. If your bridge doesn’t stay up on initialisation, there is something seriously wrong with either your model or design!

With climate models, we have two problems with initialisation. Firstly, as previously mentioned, we have very little data for time zero, whenever we choose that to be. Secondly, at time zero the model is not in a static steady state, as is the case for pretty much every other computer model that has been developed. At time zero, there could be a blizzard in Siberia, a typhoon in Japan, monsoon rains in Mumbai and a heatwave in southern Australia, not to mention the odd volcanic eruption, all of which could be gone in a day or so.

There is never a steady state point in time for the climate, so it’s impossible to validate climate models on initialisation.

The best climate modelers can hope for is that their bright shiny new model doesn’t crash in the first few timesteps.

The climate system is chaotic, which essentially means any model will be a poor predictor of the future – you can’t even build a model of a lottery ball machine (a much simpler and smaller interacting system) and use it to predict the outcome of the next draw.
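The sensitivity that makes chaotic systems unpredictable can be seen with one line of arithmetic. The sketch below uses the logistic map with r = 4, a textbook chaotic system standing in (as an assumption) for something far more complex. It runs the map twice, from starting values that differ by one part in ten billion.

```python
def logistic_trajectory(x0, r=4.0, n_steps=60):
    """Iterate the logistic map x -> r * x * (1 - x), returning every value."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


run_a = logistic_trajectory(0.2)
run_b = logistic_trajectory(0.2 + 1e-10)  # a one-in-ten-billion nudge

for step in (0, 10, 20, 30, 40, 50, 60):
    gap = abs(run_a[step] - run_b[step])
    print(f"step {step:2d}: run A = {run_a[step]:.6f}, gap = {gap:.2e}")
```

Within a few dozen steps the two runs bear no relation to each other, even though the rule is simple and fully deterministic.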

So, if climate models are populated with little more than educated guesses instead of actual observational data at time zero, and errors accumulate with every timestep, how do climate modelers address this problem?

History matching

If the system that’s being computer modelled has been in operation for some time, you can use that data to tune the model and then start the forecast before that period finishes to see how well it matches before making predictions. Unlike other computer modelers, climate modelers call this ‘hindcasting’ because it doesn’t sound like they are manipulating the model parameters to fit the data.

The theory is that you can tune the model during hindcasting, within parameter uncertainties, to overcome all its deficiencies. Those deficiencies are many: large grid sizes, patchy data of dubious quality in the early years, and poorly understood physical phenomena driving the climate that have had to be parameterised.

While it’s true that you can tune the model to get a reasonable match with at least some components of history, the match isn’t unique.

When computer models were first being used last century, the famous mathematician John von Neumann said:

“with four parameters I can fit an elephant, with five I can make him wiggle his trunk”

In climate models there are hundreds of parameters that can be tuned to match history. What this means is there is an almost infinite number of ways to achieve a match. Yes, many of these are non-physical and are discarded, but there is no unique solution as the uncertainty on many of the parameters is large and as long as you tune within the uncertainty limits, innumerable matches can still be found.
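Here is a minimal sketch of why a history match need not be unique. It uses a toy model in which warming is simply a sensitivity (`lam`) times the net forcing, and all the numbers are hypothetical. A stronger aerosol cooling combined with a higher sensitivity reproduces the same historical warming as a weaker aerosol cooling with a lower sensitivity. Yet the two tunings diverge once the scenario changes.

```python
# Hypothetical forcings in W/m^2 for a toy model: warming = lam * net forcing.
GHG_HIST, AEROSOL_HIST = 2.0, -1.0       # historical (matching) period
GHG_FUTURE, AEROSOL_FUTURE = 4.0, -0.5   # hypothetical future with fewer aerosols
OBSERVED_WARMING_C = 1.0                 # hypothetical observed historical warming


def tuned_sensitivity(aerosol_scale):
    """Pick lam so the toy model reproduces the observed warming exactly."""
    return OBSERVED_WARMING_C / (GHG_HIST + aerosol_scale * AEROSOL_HIST)


def hindcast_and_forecast(aerosol_scale):
    """Return (historical, future) warming for a given aerosol-strength tuning."""
    lam = tuned_sensitivity(aerosol_scale)
    hindcast = lam * (GHG_HIST + aerosol_scale * AEROSOL_HIST)
    forecast = lam * (GHG_FUTURE + aerosol_scale * AEROSOL_FUTURE)
    return hindcast, forecast


for scale in (0.5, 0.75, 1.0):
    hindcast, forecast = hindcast_and_forecast(scale)
    print(f"aerosol scale {scale:.2f}: lam = {tuned_sensitivity(scale):.2f}, "
          f"hindcast = {hindcast:.2f} C, forecast = {forecast:.2f} C")
```

Every row matches history perfectly, yet the forecasts range from 2.5 to 3.5 in this toy. With hundreds of parameters instead of two, the number of such trade-offs multiplies.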

An additional flaw in the history matching process is the length of some of the natural cycles. For example, ocean circulation takes place over hundreds of years, and we don’t even have 100 years of data with which to match it.

In addition, it’s difficult to history match all climate variables. Global average surface temperature is the primary objective of the history matching process, but other data are poorly matched. These include tropospheric temperatures, regional temperatures and precipitation, and diurnal minimums and maximums.

Even so, can the history matching of the primary variable, average global surface temperature, constrain the accumulating errors that inevitably occur with each model timestep?

Forecasting

Consider a shotgun. When the trigger is pulled, the pellets from the cartridge travel down the barrel, but there is also lateral movement of the pellets. The purpose of the shotgun barrel is to dampen the lateral movements and to narrow the spread when the pellets leave the barrel. It’s well known that shotguns have limited accuracy over long distances and there will be a shot pattern that grows with distance. The history match period for a climate model is like the barrel of the shotgun. So what happens when the model moves from matching to forecasting mode?

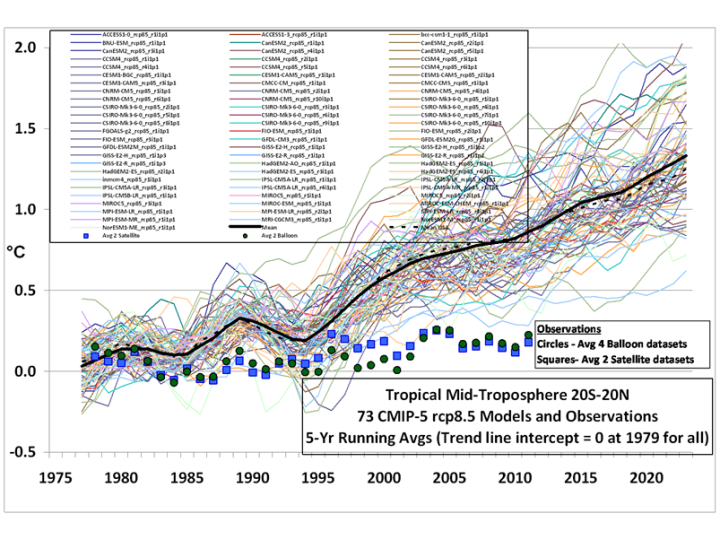

Like the shotgun pellets leaving the barrel, numerical dispersion takes over in the forecasting phase. Each of the 73 models in Figure 5 has been history matched, but outside the constraints of the matching period, they quickly diverge.

Now at most only one of these models can be correct, but more likely none of them is. If this were a real scientific process, the Intergovernmental Panel on Climate Change (IPCC) would reject the hottest two thirds of the models and focus further study on the models closest to the observations. But they don’t do that, for a number of reasons.

Firstly, if they rejected most of the models, there would be outrage in the climate science community, especially from the rejected teams, due to their subsequent loss of funding. More importantly, the so-called 97% consensus would instantly evaporate.

Secondly, once the hottest models were rejected, the forecast for 2100 would be an increase of about 1.5 °C (due predominantly to natural warming). There would be no panic, and the gravy train would end.

So how should the IPCC reconcile this wide range of forecasts?

Imagine you wanted to know the value of bitcoin 10 years from now so you can make an investment decision today. You could consult an economist, but we all know how useless their predictions are. So instead, you consult an astrologer, but you worry whether you should bet all your money on a single prediction. Just to be safe, you consult 100 astrologers, but they give you a very wide range of predictions. Well, what should you do now? You could do what the IPCC does, and just average all the predictions.

You can’t improve the accuracy of garbage by averaging it.
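The statistical point can be sketched in a few lines. Averaging many forecasts cancels errors that are independent and random, but it does nothing to an error they all share. The truth, the shared bias and the noise level below are hypothetical, and the seed simply makes the run repeatable.

```python
import random
import statistics

random.seed(1)

TRUTH = 1.0          # hypothetical true outcome
SHARED_BIAS = 0.8    # hypothetical error common to every forecaster
NOISE_SD = 1.0       # hypothetical spread of each forecaster's independent error
N_FORECASTS = 100

forecasts = [TRUTH + SHARED_BIAS + random.gauss(0.0, NOISE_SD) for _ in range(N_FORECASTS)]

typical_individual_error = statistics.fmean([abs(f - TRUTH) for f in forecasts])
ensemble_mean_error = statistics.fmean(forecasts) - TRUTH

print(f"typical individual error: {typical_individual_error:.2f}")
print(f"error of the average:     {ensemble_mean_error:.2f}  (shared bias was {SHARED_BIAS})")
```

With 100 forecasts, the independent noise in the average shrinks to roughly a tenth of its original size. The shared bias of 0.8 passes straight through.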

An Alternative Approach

Climate modelers claim that a history match isn’t possible without including CO2 forcing. This may be true using the approach described here, with its many approximations. That approach tunes the model to a single benchmark (surface temperature) and ignores deviations from others (such as tropospheric temperature). But analytic (as opposed to numerical) models have achieved matches without CO2 forcing. These models are based purely on historical climate cycles. They identify the harmonics using signal analysis, a mathematical technique that decomposes the record into long- and short-term natural cycles of different periods and amplitudes, without considering changes in CO2 concentration.
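As a rough illustration of what "identifying the harmonics" means, the sketch below applies a discrete Fourier transform (NumPy's `numpy.fft.rfft`) to a synthetic annual series. The series is built from a 60-year and a 20-year cycle plus noise, and the transform recovers the two periods. The series is invented for the example. A plain periodogram is only one of several signal-analysis techniques, and it is not necessarily the method used by the harmonic model discussed here.

```python
import numpy as np

# Synthetic (invented) annual anomaly series: 180 years containing a 60-year
# and a 20-year cycle plus random noise. Not real temperature data.
N_YEARS = 180
t = np.arange(N_YEARS)
rng = np.random.default_rng(0)
series = (0.20 * np.sin(2 * np.pi * t / 60)
          + 0.10 * np.sin(2 * np.pi * t / 20)
          + 0.05 * rng.standard_normal(N_YEARS))

# Amplitude spectrum of the de-meaned series; frequencies in cycles per year.
spectrum = np.abs(np.fft.rfft(series - series.mean()))
freqs = np.fft.rfftfreq(N_YEARS, d=1.0)

# Skip the zero-frequency bin, then take the two strongest peaks.
strongest = np.argsort(spectrum[1:])[-2:] + 1
dominant_periods = sorted(float(1.0 / freqs[i]) for i in strongest)
print("dominant periods (years):", [round(p, 1) for p in dominant_periods])
```

The longest period such an analysis can resolve is limited by the record length. Here the lowest non-zero frequency is 1/180 cycles per year, which is the same constraint the article raises about ocean cycles lasting hundreds of years. Fitting harmonics to the past also says nothing, by itself, about whether they will continue.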

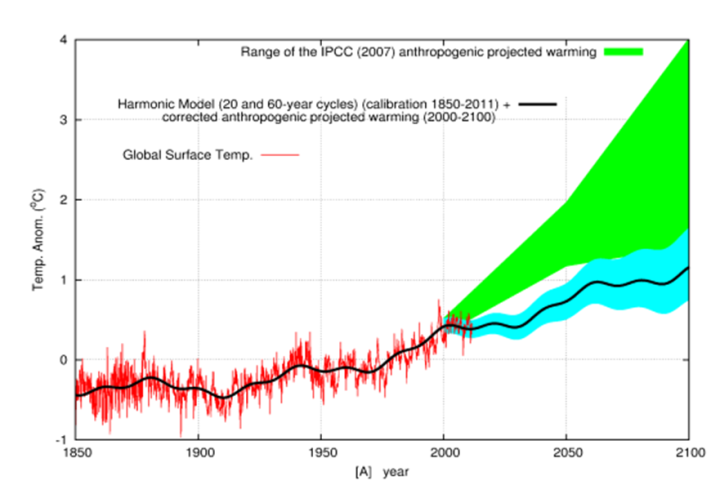

In Figure 6, a comparison is made between the IPCC predictions and the prediction from one analytic harmonic model that doesn’t depend on CO2 warming. The harmonic analysis achieves a match to history and provides a much more conservative prediction that, unlike the IPCC models, correctly forecasts the current pause in temperature increase. The purpose of this example isn’t to claim that this model is more accurate (it’s just another model). Rather, it is to dispel the myth that history can’t be explained without anthropogenic CO2 forcing, and to show that the changes in temperature can be explained with natural variation as the predominant driver.

In summary:

- Climate models can’t be validated on initialisation due to lack of data and a chaotic initial state.

- Model resolutions are too low to represent many climate factors.

- Many of the forcing factors are parameterised as they can’t be calculated by the models.

- Uncertainties in the parameterisation process mean that there is no unique solution to the history matching.

- Numerical dispersion beyond the history matching phase results in a large divergence in the models.

- The IPCC refuses to discard models that don’t match the observed data in the prediction phase – which is almost all of them.

The question now is: do you have the confidence to invest trillions of dollars and reduce the standard of living of billions of people to stop the global warming predicted by climate models, or should we just adapt to natural changes as we always have?

Greg Chapman is a former (non-climate) computer modeler.


Whilst climate models are tuned to surface temperatures, they predict a tropospheric hotspot that doesn’t exist. This on its own should invalidate the models.

I’ve always wondered what goes through the heads of the jet-setting alarmunists as they whirl from conference to conference. Kerry, Gore, and all politicians are not a mystery, they are just control freaks and don’t believe in anything except power over the masses.

But celebrities? I doubt very many of them have any aspirations for political office. I know their sense of reality is warped by all the parts they play making them think they understand how the world works. But none of that has anything to do with spouting nonsense about CO2 at stops in between spewing CO2. There must be some cognitive dissonance somewhere in their noggins. I can only conclude they are just pandering to what their PR flacks told them to pander to, and that they like seeing their name and face in the press, and know that if they don’t spout the nonsense, their adoring public will turn against them; no publicity is bad publicity, as the saying goes. A silent celebrity is no longer a celebrity, and that is their worst fear.

I think that the glitterati are driven by popularism and the desire to artificially project their IQs beyond room temperature. Cognitive dissonance is not a concept that their brains are capable of holding.

deg C, not deg F

The left has a do-good view of themselves. They think they are the only ones who can save the world. However, because they have never actually accomplished anything in their lives, they have never failed and don’t know caution. It would be better if they worked as missionaries caring for the sick, but unfortunately fate has placed them in positions of power. It’s the equivalent of giving a kid a box of matches and putting them in a room full of explosives. It scares the daylights out of me knowing the damage they can cause through ignorance.

I’ve always wondered what goes through the heads of the jet-setting alarmunists

They are going to a party!

The CLAP party:

C = Climate

L = Liars

A = Annual

P = Party

Have fun

Pick up girls

Get drunk

Go back home and claim you were saving the planet!

Caught in the CLAPTRAP

My prior comment was censored yet again today

This automatic moderation is very annoying.

Here’s what I tried to say:

They are going to a party where they can have fun and when they go home they can claim they were trying to save the planet.

C = Climate

L = Liars

A = Annual

P = Party

Doesn’t appear to have been censored. Perhaps you’re impatient?

The “moderated” comment here showed up many hours after being posted. But a majority of my comments on the Ukraine / Zelensky article never showed up at all. I am not inventing this moderation problem — it has been real for me.

It’s a part of human nature that we want to feel important, to feel appreciated.

These celebrities know that nothing they do matters in the long run.

As a result they search for a cause that they can latch onto. It is the cause that gives meaning to their lives.

By giving money, by giving speeches, they can convince themselves that they are making a difference, and that thanks to their efforts, they will be remembered as people who cared, people who made a difference.

This also explains why they are so impervious to logic and actual data. If they were to admit that AGW isn’t a problem, and that their efforts aren’t helping anyone, then they would have to admit to themselves that they don’t matter. Their egos just can’t handle it.

Excellent comment, MarkW.

But Mark, do they “…know that nothing they do matters in the long run” or do they believe they are making a difference for the planet? I think you are right that some, like Dicrapio, are part of the flim flam, while others are too ignorant to know anything beyond grab a sign, repeat a mantra, and look good for the camera.

I believe that most celebrities are gifted at memorizing lines and playing dress up. Beyond that they are not really sure which way is east nor understanding how to go about figuring it out.

Like Emma Thompson?

At least she helped to spread manure…

Thanks for the responses, all plausible, all realistic, but I remain unsatisfied at my understanding of celebrity mindset. I had a bit part in a 9th grade Spanish play, and was thoroughly disgusted at the audience watching us. I guess adoring it is just beyond me.

Two words may help you understand the Celebrity mindset.

Introvert – A person who is confident in themselves and doesn’t require others to tell them they have done a good job. Think Spock from Star Trek.

Extrovert – Somebody who is somewhat insecure in their view of themselves and enjoys the compliments of others because they counter the insecurity that they feel.

Most people have a bit of extrovert in their personality, but there are those like me who are so much introvert that they feel a little embarrassed by compliments from others. Then there are others who are so insecure that they will do anything for the support of others.

There is a problem in the concept.

Most extroverts thoroughly believe that if they force an introvert to appear in front of a crowd, that introvert will love the recognition and become an extrovert.

It remains one of the worst things that can happen to an introvert.

I’m neither timid nor subservient to peers and often not to bosses.

I passionately abhor speaking in front of crowds.

Growing up, I learned that extroverts who praise obsequiously or oleaginously fully intend to speak nasty insults when talking to others.

Especially when one notices that narcissists and egotists absolutely love speaking to crowds. For many, they are so focused on speaking to large crowds that it is dangerous to be between them and a microphone or TV camera.

Which brings up the celebrities and wannabe glitterati who are all too eager to speak their ignorance about climate, e.g., Dicaprio and Michael manniacal.

People who are both extroverted and introverted are very normal with enough self insight to know their bounds and when to speak or not speak. Unfortunately, many are willing to repeat bad news.

I could never become an extrovert. My life is in my head and it’s my favorite place to be. Some people may enjoy partying, whereas I enjoy adding to my knowledge away from the crowd. I work or do whatever is required in public but at the end of the day, I enjoy going home for my quiet time. My goal isn’t to impress others though it happens when I use my talents to assist somebody else. I know that others probably wouldn’t enjoy what I do so I would never force it on another. I don’t have a problem saying no to something that is a bad idea. I don’t wish to harm others but I am not going to be pushed around.

Such is the life of an introvert.

Yes, despicable. It is the ignoble art of monetising Clickbaits.

As for celebrities, climatista DiCaprio never finished high school but he can lecture all of us. I consider him a very good actor but that doesn’t qualify him for anything else.

“There must be some cognitive dissonance somewhere in their noggins.”

Human beings have a strong desire to conform to what they perceive as the majority view of a tribe or society. This is a survival instinct.

The Tribe/Group has the knowledge to survive this life and they pass this knowledge on to the young, giving the young a better chance to survive. So Group-think is important in this way and humans are prone to engage in group-think for this reason.

When it comes to a complicated subject like the Earth’s climate, most people don’t have the disposition or inclination to look too deeply into the subject, so they accept general public opinion as true, even though the general public is no more informed than they are. In this case, group-think is detrimental to the human condition.

So there are a lot of things that could contribute to this kind of thinking.

I guess I’m lucky in this sense. I’m a non-conformist, so group-think is anathema to me. Something I reject out of hand, because it may be mindless group-think.

I know I’ve been an independent thinker for a long time; a childhood friend tried to get me to join the cub scouts, one of his arguments being that I could learn knot tying and other things. My response was that I could learn those on my own, and he had no other reasons. Whether it would have been a good idea for other reasons, I do not know. Maybe this is part of what confounds me so much about celebrities.

This is a good explanation for the term “morals”. Morals are those traditions and beliefs that contribute to the survival of a group. Morals can vary all over the place, from the Aztecs to the Quakers. The morals of Hollywood celebrities are those that contribute to the survival of that group. Those morals don’t generally fit with the morals of most of the people I know.

This could be your theme song, Tim –

Billy Thorpe and The Aztecs –

“Most People I Know”

YEP!

no model forecast is evidence of anything. It’s just a forecast,

In scientific terms, it’s an hypothesis. No hypothesis in the scientific method is worth a hoot until confirmed by actual observations, measurements or whatever, which confirm its predictions. The learned politicians at IPCC need to wait until year 2100 before they go looting taxpayers and prohibiting anything. Patience, dweebs!

That sounds right.

But the climate alarmists no longer follow any rules of science. In the modern lingo you would call them ‘disrupters’, where they just go their own way and break conventions when it suits.

A later disrupter that acts in a similar way is cryptocurrency. Doesn’t make any sense, but what the hell, it’s only about perceptions.

You’re right, insufficientlysensitive. The idea that any particular computer model predicts the climate is no more than a hypothesis. If the IPCC and the “climate scientists” used the scientific method properly, any model whose predictions were found to be wrong, i.e. further from the actual measured values than the error bounds which were defined as part of the hypothesis, would have to be either modified and re-run, or its results dropped altogether. But this does not happen, as is proven by Figure 5 in the article. The models are never properly validated by comparing their predictions for the future with the values measured when that future has arrived.

This is all bound up with the IPCC pulling wool over eyes, by referring to its pronouncements as “projections.” As far as I can see, what they are doing is just calculating some average from the (totally unvalidated) predictions of a number of different models. But if individual models have not had their predictions validated against measurements, any average of their results is completely meaningless.

“further from the actual measured values than the error bounds which were defined as part of the hypothesis,”

The problem is that the modelers don’t accept that there are any *error* bounds to their models. Their only boundary is the model blowing up. Their assumption has always been that “all uncertainty cancels” thus their models are 100% accurate and don’t have to comport with actual observations.

The biggest problem with computer models is getting them to agree with real-world observations without constant “adjustments.”

epicycles

What defines “real world observations”? Not the fraudulent Hockey Stick temperature charts.

The written, historical temperature records tell a completely different story from the story the bogus Hockey Stick charts tell.

The written record shows there is nothing to worry about from CO2. It shows CO2 is not the control knob of the Earth’s atmosphere.

The “hotter and hotter and hotter” Hockey Stick charts are blatant lies created to scare people into doing really stupid, destructive things.

Not a problem at all

Run the IPCC models for TCS with RCP 4.5, rather than ECS and RCP 8.5

The result is a future global warming trend prediction similar to actual warming from 1975 to 2022.

Going through the summary:

Climate models can’t be validated on initialisation due to lack of data and a chaotic initial state.

As with CFD and similar modelling, there is a reason why they “wind back”. As is sometimes complained of with GCMs and CFD, model runs are not very responsive to details of initial conditions. Many of the processes are diffusive, and so what mainly matters is getting conserved quantities right. Chaos and things that are unphysical get ironed out, and the model comes into equilibrium with the forcings.
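The commenter's point about diffusive, damped processes "forgetting" their starting state can be illustrated with the simplest possible energy-balance toy, C dT/dt = F − λT, stepped forward from very different initial temperatures. The forcing, feedback and heat-capacity values are hypothetical. A damped linear toy like this does not by itself show that a full, chaotic GCM behaves the same way.

```python
def spin_up(initial_temp, forcing=3.0, feedback=1.5, heat_capacity=8.0,
            dt=0.1, n_steps=2000):
    """Step C dT/dt = F - lambda * T forward with forward Euler.

    All parameter values are hypothetical and in arbitrary consistent units;
    the equilibrium is T = F / lambda regardless of the starting value.
    """
    temp = initial_temp
    for _ in range(n_steps):
        temp += dt * (forcing - feedback * temp) / heat_capacity
    return temp


for start in (-5.0, 0.0, 10.0):
    print(f"start {start:+5.1f} -> after spin-up {spin_up(start):.4f}")
print(f"equilibrium F / lambda = {3.0 / 1.5:.4f}")
```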

Model resolutions are too low to represent many climate factors.

No, weather factors. Again it comes down to conserved quantities. It mainly matters that you get their magnitude and mobility coefficients right.

Many of the forcing factors are parameterised as they can’t be calculated by the models.

Forcing factors aren’t calculated. They are the “given”. They come from scenarios.

Uncertainties in the parameterisation process mean that there is no unique solution to the history matching.

The article is wrong on the history matching. Modelers don’t try to match to the history. Tuning is used to firm up values for parameters that are otherwise not well known, eg some cloud properties. For that you generally fit to some aspect of the solution that will be affected, say SST, and over a fixed period, say 30 years.

Numerical dispersion beyond the history matching phase results in a large divergence in the models.

If the models really did try to match history, they would do a better job of it. The key thing to remember is that models are models. That is, they have as best can be managed the same properties as the Earth, but they won’t follow the same time sequence of events, any more than would an alternative real Earth, if we could create one. That is why it would be foolish to try to match history. The model isn’t supposed to. It is supposed to respond in the same climate way (long-term) to forcing, but not to behave in a synchronised way to short term.

The IPCC refuses to discard models that don’t match the observed data in the prediction phase – which is almost all of them.

Doesn’t sound like a constructive recommendation. But it’s wrong for the same reason. Models aren’t supposed to track Earth in the prediction phase. ENSO is just one example. Models perform proper ENSO oscillations, but there is nothing to synchronise them to those on Earth. So they will deviate for that reason at least, but still respond like Earth on a climate time scale.

A “climate time scale” – please define your terms? If it is thirty years, well, the “consensus forecast” was off the rails in 1990, and is even more so thirty years later.

Wait until 2100? Very convenient to have a “forecast” that can’t be debunked except by our hypothetical* grandchildren.

* Hypothetical, as if you alarmists have your way, there will only be a few million humans left on the Earth by then, as the rest are moldering in the ground from famine, freezing, and plague. Very small chance that my grandchildren will exist – nor yours, as you will have outlived your usefulness.

“climate time scale” is one where the long term effects of forcing dominate over the short-term effects of other effects like ENSO and volcanoes. There is no unique number, but 30 years would be a reasonable figure.

Forecasts from 30 years ago, despite the early stage of modelling knowledge, have worked out very well.

Only five times the actual temperature change is “very well.” Only in Stokes-World…

Which particular version of Monckton misleading are you referring to?

The IPCC FAR (1990) predicted a warming of 0.2°C/decade of surface temperatures with Scenario B, which is what unfolded. And that warming is very close to measurements.

Nick, the observed warming being near the FAR prediction could also have occurred for the wrong reasons. AFAIK there was a paper (Mauritsen et al.) describing the tuning of the MPI-M CMIP6 model. They got (mostly from cloud properties) an ECS of about 7 K per doubling of CO2 (!) and tuned their model to the observed GMST, and this reduced the ECS to about 3 K. The ECS was NOT a (physical) model result in the end but a result of tuning. There are also some examples of models which got too high a sensitivity, and the too-strong warming was adjusted with an (also too high) negative aerosol forcing. I’m not quite sure those issues enhance the validity of present climate models. If I remember correctly, the modellers know the issues and are the most radical critics of their own creations.

best Frank
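Frank’s compensation point can be shown with a toy calculation. This Python sketch treats warming as simply proportional to forcing, ΔT = ECS × F / F2x, which ignores ocean heat uptake and so is only an equilibrium-style caricature. F2x ≈ 3.7 W/m^2 is the commonly quoted forcing for doubled CO2; the historical GHG forcing, the warming to be matched and the future forcing are hypothetical round numbers, not values from Mauritsen et al. or any CMIP model.

```python
F_2X = 3.7  # approximate forcing from doubled CO2, W/m^2


def warming(sensitivity, forcing):
    """Equilibrium-style warming (°C) for a sensitivity given in °C per CO2 doubling."""
    return sensitivity * forcing / F_2X


def aerosol_needed(sensitivity, ghg_forcing, target_warming):
    """Aerosol forcing (W/m^2) that makes the toy model hit target_warming."""
    return target_warming * F_2X / sensitivity - ghg_forcing


# Hypothetical inputs, for illustration only:
HIST_GHG = 2.5      # historical GHG forcing, W/m^2
HIST_WARMING = 1.0  # historical warming the model is tuned to match, °C
FUTURE_GHG = 4.0    # future GHG forcing, with aerosols assumed removed, W/m^2

for s in (3.0, 4.5):
    aer = aerosol_needed(s, HIST_GHG, HIST_WARMING)
    hindcast = warming(s, HIST_GHG + aer)
    future = warming(s, FUTURE_GHG)
    print(f"ECS {s}: aerosol {aer:+.2f} W/m^2, hindcast {hindcast:.2f} °C, future {future:.2f} °C")
```

Both sensitivities reproduce the same hindcast once the aerosol forcing is chosen to compensate, yet they diverge by more than 1.5 °C once aerosols are removed: in this toy, matching history alone does not pin down the sensitivity.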

UAH warming since 1979 is .13 degrees C/decade. So 0.2°C/decade is a 50% error. Carry on.
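The size of the “error” depends on which trend is used as the baseline. A trivial Python check, taking the two trends quoted (0.2 °C/decade predicted, 0.13 °C/decade from UAH) at face value and setting aside whether a lower-troposphere trend is the right comparison for a surface prediction:

```python
def percent_difference(predicted, observed):
    """Return the gap (predicted - observed) as a percentage of observed and of predicted."""
    diff = predicted - observed
    return 100 * diff / observed, 100 * diff / predicted


rel_obs, rel_pred = percent_difference(0.2, 0.13)
print(f"{rel_obs:.0f}% of the observed trend, {rel_pred:.0f}% of the predicted trend")
```

Measured against the observed trend the gap is about 54%; measured against the prediction it is about 35%.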

UAH is not measuring surface temperature.

UAH measures temperature anomalies in the lower atmosphere. The lower troposphere is where “climate change” temperatures are alleged to be most pronounced. UAH provides the most comprehensive temperature survey without urban heat island effects.

Not sure that UAH avoids UHI altogether. There will be some kind of vertical temperature gradient above a UHI location. Just not sure how high up that gradient remains measurable.

“‘climate time scale’ is one where the long term effects of forcing dominate over the short-term effects of other effects like ENSO and volcanoes.”

So how do you “model” a super volcano that could happen tomorrow???

You can’t. That is an example of the kind of effect that makes it impossible for models to track the short to medium term behaviour of the Earth. But the effects of the volcano fade; the effects of GHGs in the atmosphere persist, and govern the longer term behaviour, which is what GCMs usefully predict.

“But the effects of the volcano fade; the effects of GHGs in the atmosphere persist”

Nope, not a super volcano, the effects of which could create a mini ice age and would throw your precious models out the window.

And not all GHGs persist; methane, for one, doesn’t. This pledge to reduce methane emissions by 30% by 2030 is simply BS. We have already reached an equilibrium.

What makes someone like you come back here time and again to just be wrong and laughed at? Is this a hobby?

“have worked out very well.”

Once they adjust the data to fit !

Against reality.. NOPE, a complete FAILURE

You can’t justify 30 years that way. You and I both know there are many oscillations that exceed that period and that their interactions are very poorly understood. The uncertainty involved is additive with every step taken. The models are junk.

Read Dr. Mototaka Nakamura’s book about climate models. Try and rebut his sad tale of how inaccurate the models are for forecasting.

“Complex climate models, as predictive tools for many variables and scales, cannot be meaningfully calibrated because they are simulating a never before experienced state of the system… It is therefore inappropriate to apply any of the currently available generic techniques which utilise observations to calibrate or weight models to produce forecast probabilities for the real world”

Stainforth et al Phil. Trans. R. Soc. A (2007) 365, 2145-2161

One of the authors was Myles Allen, later an IPCC lead author.

I will tackle one of your claims:

“Many of the forcing factors are parameterised as they can’t be calculated by the models.

Forcing factors aren’t calculated. They are the “given”. They come from scenarios.”

Wijngaarden and Happer have shown how forcing factors can be calculated and that their theoretical basis is in very good agreement with observations. It is however a computationally intensive task that would render climate models intractable. The assumptions are about trends in GHG concentrations that are included in black box mode as forcings because of the computational difficulty. Some of those assumptions about trends in GHG concentrations are of course themselves controversial, and the modelling of residence times, reactions in the atmosphere etc. for given inputs is also rudimentary.

There is also the fundamental point made by Wijngaarden and Happer that you can only correctly calculate the forcings if you have adequate data on the actual conditions in the atmospheric column, and that methodology that assumes that forcings are additive (e.g. by adding in water vapour and cloud as averaged effects) is wrong.
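The non-additivity point can be illustrated with the Beer-Lambert law for a single spectral band, where the absorbed fraction is 1 - e^(-τ). This Python sketch uses two hypothetical optical depths for CO2 and water vapour sharing one band; it is a toy, not the line-by-line calculation Wijngaarden and Happer perform.

```python
import math


def absorbed_fraction(optical_depth):
    """Fraction of one band's radiation absorbed along a path (Beer-Lambert: 1 - e^-tau)."""
    return 1.0 - math.exp(-optical_depth)


TAU_CO2 = 1.5  # hypothetical optical depth of CO2 in a shared band
TAU_H2O = 1.0  # hypothetical optical depth of water vapour in the same band

# Adding the two effects as if each acted alone:
separate = absorbed_fraction(TAU_CO2) + absorbed_fraction(TAU_H2O)
# Treating both absorbers together in the same column:
combined = absorbed_fraction(TAU_CO2 + TAU_H2O)
print(f"sum of separate effects: {separate:.3f}, combined effect: {combined:.3f}")
```

The sum of the separate effects exceeds 1, i.e. more than all the radiation in the band, which is impossible; the combined absorption is smaller because the two absorbers compete for the same photons.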

I took the author to mean by forcing factor the actual inputs that make the climate change (eg GHG concentrations). These are prescribed by scenario.

You are talking about global radiative forcing, which is calculated by some sort of model, often back-calculated from the GCM runs. People do that, but GCMs make no use of them. They of course model the radiation and, cell by cell, its interaction with the GHG concentration. The general integration of the N-S equations, with energy, combines that, but they don’t need a separate totaling process.

Every so called climate model includes an assumption of how CO2 concentration will increase over time – the “Representative Concentration Pathways.” All the subsequent calculations are built around the assumption that the change in CO2 concentration will result in increased temperatures based on an invalidated hypothesis. It is thus not surprising that the models predict exactly what they are designed to predict. It is not surprising that if you run the models with CO2 concentration held constant, they predict no significant future warming.

However, they also cannot account for climate changes such as the warm periods and little ice age that occurred prior to industrialization and use of fossil fuels. In fact, the correlation between CO2 concentration and historical temperature over the millennia is very poor and thus one must conclude that there must certainly be other natural factors that drive climate change. Models that do not include these factors – whatever they might be – are simply garbage. Let me know when someone comes up with a climate model that correctly reproduces the Roman and Medieval warm periods and the little ice age. We know this is an important issue because the climate alarmists have been denying that the climate changed at all before 1850 or so.

“Every so called climate model includes an assumption of how CO2 concentration will increase over time “

Complete nonsense. No model includes such an assumption. It will accept an explicit scenario as input; that is what the RCPs are. The GHG concentrations are input data. You can make CO2 increase, decrease, whatever you think it might do.
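The distinction between a model and its scenario input can be shown with a zero-dimensional energy balance model, a far simpler relative of a GCM. In this Python sketch the CO2 path is just an argument passed in; the forcing uses the common simplified expression 5.35 ln(C/C0) W/m^2, and the feedback parameter and heat capacity are hypothetical round numbers.

```python
import math

LAMBDA = 1.2          # hypothetical feedback parameter, W m^-2 K^-1
HEAT_CAPACITY = 8.0   # hypothetical mixed-layer heat capacity, W yr m^-2 K^-1
CO2_BASE = 280.0      # pre-industrial CO2 concentration, ppm


def co2_forcing(ppm):
    """Simplified logarithmic CO2 forcing, 5.35 ln(C/C0), in W/m^2."""
    return 5.35 * math.log(ppm / CO2_BASE)


def run_scenario(concentrations, dt=1.0):
    """Step a one-box energy balance model through a CO2 path (one value per year)."""
    temp = 0.0
    history = []
    for ppm in concentrations:
        flux_imbalance = co2_forcing(ppm) - LAMBDA * temp   # W/m^2 not yet radiated away
        temp += dt * flux_imbalance / HEAT_CAPACITY          # forward-Euler update
        history.append(temp)
    return history


years = range(200)
constant = [CO2_BASE for _ in years]
doubling = [CO2_BASE * 2 ** min(y / 70, 1.0) for y in years]  # doubles over 70 years, then held

for name, path in (("constant", constant), ("doubling", doubling)):
    print(f"{name}: warming after 200 years = {run_scenario(path)[-1]:.2f} °C")
```

The same code runs whatever path it is given, flat, rising or falling. The equilibrium warming for a doubling here is 5.35 ln 2 / λ, about 3.1 °C, which is set by the assumed λ rather than derived. The concentration path is not hard-coded, but every run needs one supplied.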

“It will accept an explicit scenario as input;”

So it is assuming how CO2 concentration is increasing.. OK !!

Input data is part of the modelling process.. didn’t you know that !

Alright, what happens if we take such a model and use a historical CO2 data set as the RCP. How well will the model predict historical temperatures? Do you think that it will get any of well-known historical temperature trends correct?

Every model run contains an RCP assumption. You’re being a pedant to pretend you don’t know what he was saying.

“often back-calculated from the GCM runs”

Back calculating from erroneous, assumption driven un-validated models.

You HAVE to be joking !!

You might try to address the points I made rather than attempting to pretend I said something else.

The WH calculations were applied to several radically different atmospheric column conditions, including polar, tropical desert, temperate, etc., producing different results that matched corresponding satellite measurements, looking at the IR spectrum at a sub wavenumber resolution, and at far greater altitude resolution than used in GCMs. Their overall global average forcing calculation merely serves to lay down a marker that says that most models run too hot. It is sufficient to demonstrate that the majority of models are wrong, and their more detailed calculations using the correct physics on different atmospheric columns produce the right results for radiative balances, whereas GCM methodology does not, except by happenstance when the parameterization coincides with the proper answer as it will from time to time under the Brouwer Fixed Point Theorem.

You do not address the poor understanding of atmospheric chemistry that acts as a severe limitation on model capabilities in projecting future concentrations and dispersions of GHGs, which are far from uniform.

You make much of the idea that so long as parameterization produces the right average answers and bulk properties there is perhaps some use in GCMs. The problem is they don’t. Getting the radiative balance wrong is fundamental: how the energy is spread across the atmosphere and shared with the oceans is secondary. But with the wrong radiative balance the tweaking of the actual climate model is bound to be wrong to compensate.

Global radiative forcing. LOL! Have you ever had an interest in gliders? You can rise at 600fpm to altitudes of 10,000 ft or more in a thermal. Some of those thermals are less than a square mile. Can you imagine the amount of heat being moved upwards? There is no way a GCM can model the radiative/conduction/convection in areas that small. The uncertainty involved is tremendous.

Your attempt to minimize the errors and uncertainties just isn’t going to work. We aren’t dealing with wind tunnels here! The atmosphere is infinitely larger and has far more diverse parameters.

I’ve never seen so much evasion, and so little actual logic and data in such a short response, before.

Nick has topped himself.

Yeah, Nick’s assertion that explicitly choosing a specific RCP is NOT equivalent to assuming how CO2 concentration varies over time has to be a new low.

Of course, if you choose an RCP, you make that assumption. But it isn’t built in to the model.

QED.

If you can’t dazzle them with brilliance, baffle them with bovine fertilizer.

Maybe Nick the Stroker should get some kind of award?

If the above were correct, the models would do a better job of predicting the climate, be closer together, show improvement as the parameters get refined, and get the actual physical temperature more accurately. This is not the case. The models continue to diverge from the measured data and from each other. If the actual temperature for a location or average for the entire world is consistently high by 5°C, I don’t think the physics are correct and the increase predicted by adding more CO2 to the model is not reliable.

Nick

Figure 5 above seriously calls into question the validity of your comments.

Regards

Fig 5 represents cherry picked circumstances – tropical mid troposphere. It has never passed peer review. And it ends in 2013.

It is amazing how whether or not data is relevant corresponds perfectly with whether or not the data supports the global crisis narrative.

Nick,

Thank you for your experienced comments.

What method of validation would you suggest to allow acceptance of results of these models and their CMIP differences? The mismatch between observed and estimated temperatures remains a concern.

Geoff S

Geoff,

There is a brief article on the subject here. The first thing is to check that they solve the equations correctly. They are often related to weather forecasting programs: did they get that right? You can also check various regional signature effects to see if they are coming out right. Obviously things like the Hadley cell.

The best way to test prediction observationally is to try to match results after correcting (after the fact) for the effects (like ENSO and volcanoes) which would have caused deviation.

On the signature approach, here is an example of SST, intended to show the various well-known currents around the world. Remember, these are obtained from first principles, so it is meaningful to test if they are getting the patterns right. The individual eddies of course will not correspond in time to reality on Earth.

Ta Nick,

On models, we had exquisitely good results from modelling the properties of discrete magnetite bodies from Tennant Creek, with inputs being the surface grid measurements of the perturbation of the natural field. We know they were exquisite, because in half a dozen cases we mined the discrete body for Au, Cu, Bi and so could check our derived depth to top, X, Y, Z axis lengths, attitude and direction, as well as more difficult problems like the various forms of magnetism: induced, remanent, etc. So I am not here to knock the concept of models.

You note that “The best way to test prediction observationally is to try to match results after correcting (after the fact) for the effects (like ENSO and volcanoes) which would have caused deviation.” It is because I have not seen good results from this approach that I asked you the validation question. Little is really known about the quantitative climate effects of volcanoes (which need to include submerged ones), and with cloud, even the sign is disputed.

Is there any concept of a “point of no return” when despite best efforts, the results are not good enough to be useful?

Geoff

Geoff,

Basically, the problem about predicting the future is that you then have to wait to see what happens. But you can get inklings, and the correcting for effects approach lets you get better inklings earlier. How much earlier? Yes.

“…you then have to wait to see what happens”

Looking at the chart above, it is obvious the models are running way too hot compared to observations. So why aren’t the models adjusted accordingly? But I think we all know the answer to that, and it has nothing to do with science.

Nick,

I mentioned the exquisite success of modelling magnetism in that remote spot, Tennant Creek, because it found new mines and generated new wealth for all. These were the values of sales, calculated to 2015, all in 2015 dollars and commodity prices:

JUNO: $3.27 million

IVANHOE: $1.38 million

ORLANDO: $1.30 million

WARREGO: $10.9 million

GECKO: $1.7 million

ARGO: $0.2 million

Each of these mines – Orlando possibly excepted – was discovered because of the success of the magnetic modelling.

Success, to be meaningful, has to be able to be expressed in dollar terms, that being one driver of the concept of, and the reasons for, money.

Nick, do you foresee a day when these GCMs and other related climate models will reach maturity, in the sense of being able to show a dollar value?

If not, what is the main impediment to estimation of their value?

Geoff S

You just basically admitted that the models do NOT work properly by themselves. Corrections after the fact are needed.

Nick,

Thanks for the reference to Validation of climate models by Simon Tett of the UK Met Office.

I read this years ago and wondered why such a simple, data-free (almost) explanation of the bleeding obvious was needed.

Got anything pertinent?

Geoff S

“Models aren’t supposed to track Earth in the prediction phase”

ROFLMAO.

We know they don’t track reality in any of their predictions.

That is what we have been saying !!

Word salad without a single admission of faulty models. Dr. Pat Frank hit the nail on the head with his uncertainty analysis. Notice how many times uncertainty is mentioned in this essay. Think how much uncertainty there is in a 2+ million cell model.

Is it any wonder the models disperse tremendously? Their forecasting is faulty any way you look at it.

“That is why it would be foolish to try to match history.”

If they can’t match history then how can they match the future? The models *should* be able to pretty well match history from even a million years ago if all the “short term” stuff fades away over time. If they can’t do that then why should anyone expect them to be able to match the future?

“Statements about future climate relate to a never before experienced state of the system; thus it is impossible to either calibrate the model for the forecast regime of interest or confirm the usefulness of the forecasting process.”

Stainforth et al Phil. Trans. R. Soc. A (2007) 365, 2145-2161. One of the authors was Myles Allen later an IPCC lead author.

Thank you for this reference. I need some time to read and digest it. But this leapt off the page:

“Finally, model inadequacy captures the fact that we know a priori, there is no combination of parametrizations, parameter values and ICs which would accurately mimic all relevant aspects of the climate system. We know that, if nothing else, computational constraints prevent our models from any claim of near isomorphism with reality, whatever that phrase might mean.”

I think this is actually saying that the models can’t match reality simply because of computational constraints. I’ve never heard a climate alarmist actually admit this. If true then the models are actually describing a different Earth, not the one we live on!

Exactly. These models aren’t replicas or even near replicas of Earth or its systems. When you just assume what cloud albedo is without factoring in the processes that create those clouds, for example, it’s all circular reasoning with a toy model. You can get any conclusion you want, and even if you initialized the model perfectly you’d get garbage results, because swaths of the model are parameterized, not dynamic.

They may not try to “match” every detail of high-frequency weather events, but they do “discard as unrealistic” all “Model + Parameterisation” combinations that are too many sigmas away from overall medium- to long-term statistics, or “patterns”.

The screenshot attached to this post is from a NCAR/UCAR website that summarised just how well (/ badly, e.g. the “ENSO” line …) the CMIP6 climate modelling teams managed to “match” the various model (+ parameterisation) outputs to the “history” of various large-scale (in both time and space) “patterns”.

Direct URL : https://webext.cgd.ucar.edu/Multi-Case/CMAT/CMATv1_CMIP6/index.html

NB : The webpage is dated 22/1/2020. There may well be more up-to-date “summaries” for the CMIP6 / AR6 cycle elsewhere that I have not come across (yet).

Nick,

Assuming 2x CO2 as an ‘input’, is it possible to run a CFD-based GCM to the point where the Earth’s average surface temperature has reached equilibrium? If so, what exactly are the modeled changes to the Earth’s 1) average surface temperature, 2) net atmospheric long-wave forcing and 3) albedo. If not, how does the IPCC come up with its estimates, e.g., for ECS? Seems to me that estimates for these would be a minimum requirement before any steps could be justified to mitigate ‘carbon’ emissions.

Looking forward to your response.

Frank

Yes, it is possible. Here is an example, a 2018 paper that does exactly that. It is titled

“Equilibrium Climate Sensitivity Obtained From Multimillennial Runs of Two GFDL Climate Models”

Nick, thanks for the reference. From the ‘Global Changes’ section of the paper:

‘ESM2M simulates T of 3.34 K and N of −0.07 W m−2 by year 4300…CM3 simulates T of 4.84 K and N of −0.09 W m−2 by year 4700… For ESM2M, T reaches 3.34 K by year 2500, after which it remains almost constant for the remaining 2000 years. In CM3, T steadily increases until year 4700. Therefore, we include the full 4,800 years in our CM3 LE analysis. The reason why warming continues after N = 0 W m−2 is uncertain.’

So, two models, big difference in ECS, and big difference in how they get there. And since we’re looking at temperatures a couple of thousand years from now, I’m not sure I’d want to bet the energy ranch on this.

Also, assuming one of these models is correct, can you please point out what it projects for changes in net atmospheric long-wave forcing and albedo, i.e., points 2 & 3, above. Thanks again.

“And since we’re looking at temperatures a couple of thousand years from now, I’m not sure I’d want to bet the energy ranch on this.”

You asked for an analysis that ran for 2000 years. It doubled CO₂ at the start, but then held it constant for that time. If we do nothing, it won’t stay constant.

You’ll need to read the paper to find out about LW and albedo.

‘You asked for an analysis that ran for 2000 years.’

Actually, I asked for a model run that captured the ECS for 2xCO2. I think if folks knew that they’d have to hang around for 2,000+ years to see the projected impact, they’d be less likely to buy the alarmist narrative.

‘You’ll need to read the paper to find out about LW and albedo.’

I’ll take a look, but my assumption is that they’re not explicitly noted in the paper. Any thoughts as to why they wouldn’t be up front about that?

“if folks knew that they’d have to hang around for 2,000+ years to see the projected impact”

You asked for an analysis that would reach equilibrium. Yes, you have to wait for that. But you’ll take only a few decades to reach halfway. And it won’t stop at doubling.
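The point that the approach is fast at first and very slow near equilibrium can be illustrated with a two-timescale step response, a common simplification of how a mixed layer and the deep ocean respond to an abrupt CO2 doubling that is then held constant. In this Python sketch the ECS, the split between the two modes and both timescales are hypothetical values, not fitted to the GFDL runs in the paper cited.

```python
import math

ECS = 3.0           # hypothetical equilibrium warming for doubled CO2, °C
FAST_SHARE = 0.6    # hypothetical fraction of the response on the fast timescale
TAU_FAST = 5.0      # hypothetical fast (mixed-layer) timescale, years
TAU_SLOW = 500.0    # hypothetical slow (deep-ocean) timescale, years


def step_response(years):
    """Warming after an abrupt, held CO2 doubling, as a sum of two exponential modes."""
    fast = FAST_SHARE * (1 - math.exp(-years / TAU_FAST))
    slow = (1 - FAST_SHARE) * (1 - math.exp(-years / TAU_SLOW))
    return ECS * (fast + slow)


def years_to_reach(fraction):
    """First whole year at which the response reaches the given fraction of ECS."""
    year = 0
    while step_response(year) < fraction * ECS:
        year += 1
    return year


print("50% of ECS after", years_to_reach(0.5), "years")
print("95% of ECS after", years_to_reach(0.95), "years")
```

With these made-up numbers, half the equilibrium warming arrives within about a decade, while reaching 95% takes over a thousand years, because the last part is governed entirely by the slow mode.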

Nick,

I think Figure 2 in the paper gives me what I need. For the ESM2M model, LW and SW forcing (using clear sky results to give benefit of the doubt to the authors) increase by about 6.0 and 3.0 W/m^2, respectively, for a total net forcing increase of 9.0 W/m^2. For the CM3 model, LW and SW forcing increase by about 9.0 and 3.0 W/m^2, respectively, for a total net forcing increase of 12.0 W/m^2. But ECS for ESM2M and CM3 are 3.34 and 4.84 K, respectively, which implies (using the S-B equation) increases in surface LW radiation of 18.4 and 26.0 W/m^2 for these models at equilibrium.

Do you suppose the authors are aware that at equilibrium the change in the amount of LW energy leaving the surface has to equal the net change in SW in / LW out at TOA?

It’s been a while since I took undergrad fluid mechanics, but I do recall that any plausible ‘solution’ to a problem had to at least satisfy its boundary conditions.

“which implies (using the S-B equation)”

How are you using the S-B equation here? That would be radiation from a black solid body to space.

Nick,

The S-B equation is applicable to the Earth’s surface (solid and liquid), but not to its atmosphere. The increase in LW radiative flux from the surface is calculated by adding the ECS estimates from either model, e.g., 3.34K for ESM2M, to the Earth’s pre-warming surface temperature of ~288K and noting the change.

If you have a moment, I’d kindly ask you to look at Figure 2 (panels A and C) in the GFDL paper you referenced, above, since I want to be sure I’m interpreting the clear sky flux components correctly.

Specifically, looking at results for ESM2M (panel C, violet), it looks like outgoing LW flux at TOA decreases by slightly less than 6.0 W/m^2, which would obviously be consistent with increasing surface temperature. For panel A, which is the SW component for this model, it looks like SW flux is increasing by about 3.0 W/m^2, but am not sure if this means the Earth is reflecting more SW (higher albedo) or absorbing more SW (lower albedo). I had assumed, above, that it was a case of the latter (ice melt?), meaning the total ‘forcing’ on surface temperature was about 9.0 W/m^2.

In any event, that 9.0 W/m^2 is still far short of the increased surface LW flux of 18.4 W/m^2 arising from a modeled surface temperature increase of 3.34K, so was hoping you might be able to shed some light as to where the additional forcing of ~ 9.0 W/m^2 arises from.

Absent that, I’m thinking the approximate 50%+ shortfall in forcing required to explain the increase in surface temperature in both models might be consistent with other analyses indicating that the models ‘run hot’ by about 2x versus observed warming.

Thanks.
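The surface-emission figures in this exchange can be reproduced with the Stefan-Boltzmann law, ΔF = εσ[(T0 + ΔT)^4 - T0^4]. This Python sketch assumes, as the comment does, a blackbody surface (ε = 1) starting at 288 K; the real global-mean surface emissivity is slightly below 1, and applying σT^4 to a mean temperature is not the same as averaging it over a non-uniform surface.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
T_SURFACE = 288.0       # pre-warming global mean surface temperature assumed in the comment, K


def surface_lw_increase(delta_t, t0=T_SURFACE, emissivity=1.0):
    """Increase in surface emission (W/m^2) when the surface warms by delta_t kelvin."""
    return emissivity * SIGMA * ((t0 + delta_t) ** 4 - t0 ** 4)


for model, ecs in (("ESM2M", 3.34), ("CM3", 4.84)):
    print(f"{model}: +{surface_lw_increase(ecs):.1f} W/m^2")
```

Under these assumptions the ESM2M case reproduces the 18.4 W/m^2 quoted, while the CM3 case comes out nearer 26.9 W/m^2 than 26.0 W/m^2.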

All minutiae aside, I think the only issue that matters here remains a simple one. Are these constructs valid guides to widespread, disruptive political and economic policy?

I think the only answer to that is an emphatic NO.

Every time I open a comment thread on WUWT I do a text search for “Stokes” because I know your comments and discussions arising from them will be incredibly informative.

You’ve put very clearly and succinctly a concept I’ve struggled to articulate: climate models aren’t supposed to recreate exactly earth, they are supposed to…. create another earth that behaves like our earth does. Models produce realistic hurricanes that behave like real hurricanes do, but they don’t reproduce exactly the hurricanes we have on earth at the exact time we experienced them. That’s one reason why the “if models are so good why is weather prediction poor” argument is so wrong.

‘[I]ncredibly informative.’

Well, certainly prolific. Since you’re following his every comment, maybe you can point out Nick’s estimates for how the 1) average surface temperature, 2) net atmospheric long-wave forcing and 3) albedo change at equilibrium for his alternative ‘Earth that behaves like our Earth does’ for a doubling of CO2.

Don’t get me wrong, Nick is a very smart guy, but he’s always giving the impression that the GCMs have numerically ‘solved’ the Navier-Stokes equations for the Earth’s mass and energy flows to the extent that our ‘elites’ are justified in outlawing how the Earth’s population sources about 80% of its energy. They haven’t.

So climate models could be described as –

“other-worldly”?

I can agree with that.

(i.e. having no relevance to our actual world)

The only thing informative is educated and versed people schooling Nick in response to his blather. It’s educating for sure, but also sad, because I can only conclude that a personality disorder or extreme immaturity is involved in why he posts such nonsense which is easily refuted.

Yet again, Nick says exactly the opposite of what is true and is instantly owned by the community.

Nick writes

This is an assumption, not a fact, and a shaky one at that. The general assumption is that the Earth can’t have climate change due to persisting local climate change, but history and archaeology tell us otherwise. Greenland was habitable for many decades, and that is persistent local climate change the models can’t reproduce.

Nope. The actual timesteps of the model (ie finer grained than even “weather”) matters because it determines how much energy is retained by the earth. The amount of energy the earth retains determines climate change itself and especially ECS.

Forcing factors as represented by cloud parameterisation are calculated from what we’ve seen and fitted. The same goes for many parameterisations.

Ouch. If the clouds were based in physics then they wouldn’t need setting but this is borderline lying because Nick knows the values for the parameters are actually set to cause the model to align with some quantity, typically the TOA radiative imbalance that is “expected”.

The key thing to realise is that models are fitted expressions of climate expectations.

And your explanation of why badly behaving models aren’t thrown out is wrong for a different reason. Model output is claimed to be a proxy for climate, and once you make that claim about a model then it must be included for the same reasons you can’t throw out proxy samples that don’t reflect historical temperature either. Otherwise you just end up creating hockey sticks and every statistician on the planet sees it as plain as day.

The Yogi Berra quote sounds like something he’d say but it’s apocryphal.

“I never said most of the things I said.” – Yogi Berra

Regardless of who said them, they are ingenious.

Gotta love Yogi! 🙂

It supposedly goes back to Niels Bohr, but that might be apocryphal as well.

Yogi never said that

I read all his books

He’s my favorite philosopher.

The author missed the biggest dirty secret of them all:

Climate computer games start with a desired conclusion.

How they reach that conclusion is a moot point.

The consensus climate prediction was decided BEFORE the models were programmed. They must support the 1979 Charney Report wild guess of ECS, if the programmers want to keep their jobs. This rule of thumb does not apply in Russia, where programmers are NOT forced to agree with the Charney Report for their INM model.

INM CM5 is the only CMIP6 model that does NOT produce a tropical troposphere hotspot. That suggests it is the only one observationally ‘correct’. It produces an ECS of 1.8, in line with EBM ECS about 1.7; again observationally ‘correct’. So what basics set it apart?

As this post suggests, all the other models should simply be discarded. But that would vastly disrupt the multibillion dollar per year climate modeling ‘industry’.

+100!

I built some computer models back in the 1970s for water and sewer systems. We used the output of the sewer model to move setpoints to regulate sewer overflows during rainfall.

But never, no never, did we allow model results to compromise the safe operation of the sewer system.

Just sayin’.

The question now is, do you have the confidence to invest trillions of dollars and reduce standards of living for billions of people, to stop climate model predicted global warming or should we just adapt to the natural changes as we always have?

__________________________________

Perhaps the goal isn’t to predict future climate, but reducing the standards of living for billions of people is. That of course is ridiculous, but that’s what the Duck Test says.

“reducing the standards of living for billions of people is” FIFY 🙂

For once the climate catastrophists are correct, it is worse than we thought, except we are talking about the dismal state of their models. They are the only profession where the models get less accurate with use instead of improving. Even economics does better.

Thanks Greg for your excellent and informative contribution.

Often wondered why climate modellers don’t correct their models based on current data. An obvious explanation is that to do so would mean that they would have to relook at assumptions underpinning their model structure.

Two basic areas of contestable assumption might be, firstly, their assumption that world average temperature at the start of the industrial revolution was as low as they assume (perhaps it was higher?).

Secondly, the assumption that the EGE due to higher CO2 levels actually retains enough heat to raise average temperatures. (It hasn’t demonstrated that in Central Australia over the last 65 years of reliable, uncontaminated data. Ref: raw BOM data, Giles WS.)

Of course to discard a model based on poor predictive outcome would be problematical as it would be an admission that the science was getting it wrong.

WHERE are the climate modelling scientists in all of this??

Inevitably someone will break ranks, then what?

Read this book.

“Confessions of a Climate Scientist”, by Mototaka Nakamura. It is available on Amazon for no cost.


We cannot predict the future, and only manage to infer the past.

Measuring the temperature of the water and the temperature of air several feet above the land and then combining them into some sort of “average” has to be the biggest case of apples and oranges in science … and utter nonsense …

Correct me if I am wrong, but my understanding is that a model must converge in order to be useful. That means that the model must tend towards the same result even if you change the initial conditions. That is absolutely not the case with climate models, as was demonstrated by model runs with starting temperatures changed by less than a trillionth of a degree (way way below any possible measurement) giving results differing by several degrees (ie. by several trillion times the change in initial conditions).

https://news.ucar.edu/123108/40-earths-ncars-large-ensemble-reveals-staggering-climate-variability

The climate models as currently structured can never make meaningful predictions.

Yes I do have some modelling experience, not climate but of fluid flow.

“Correct me if I am wrong”

You are wrong. As people sometimes delight in saying, the system is chaotic. But it also incorporates conservation laws, which still apply. You should know this from your fluids experience, where the same holds. Beyond a certain Reynolds number, which is where most practical problems lie, you get the same problem of impossibly sensitive dependence on initial conditions. This is turbulent flow.

But the conservation laws hold, and govern the movement of bulk fluid. So you solve essentially the same equations for the mean flow. The main difference is in the diffusive coefficients, such as viscosity and thermal conductivity, which are replaced by effective (turbulent) values. On this is built the great and glorious (and useful) industry of Computational Fluid Dynamics.
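The distinction drawn here, between individual trajectories and the bulk or average behaviour, can be illustrated with a toy chaotic system. This minimal sketch assumes the Lorenz-63 equations with the standard parameters (σ = 10, ρ = 28, β = 8/3), fourth-order Runge-Kutta with dt = 0.01, and two arbitrarily chosen starting points. The two runs follow completely different paths, yet their long-run averages of z come out close to each other. Whether the same holds for a full climate model is exactly what this thread is arguing about; the sketch only shows the difference between a trajectory and its statistics.

```python
# Long-run statistics of the Lorenz-63 toy model from two different starts.
# Illustration only: this is not a climate model.


def lorenz(x, y, z, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz-63 equations (standard parameters)."""
    return sigma * (y - x), x * (rho - z) - y, x * y - beta * z


def rk4_step(x, y, z, dt):
    """Advance (x, y, z) by one classical fourth-order Runge-Kutta step."""
    a1, b1, c1 = lorenz(x, y, z)
    a2, b2, c2 = lorenz(x + 0.5 * dt * a1, y + 0.5 * dt * b1, z + 0.5 * dt * c1)
    a3, b3, c3 = lorenz(x + 0.5 * dt * a2, y + 0.5 * dt * b2, z + 0.5 * dt * c2)
    a4, b4, c4 = lorenz(x + dt * a3, y + dt * b3, z + dt * c3)
    return (
        x + dt / 6.0 * (a1 + 2.0 * a2 + 2.0 * a3 + a4),
        y + dt / 6.0 * (b1 + 2.0 * b2 + 2.0 * b3 + b4),
        z + dt / 6.0 * (c1 + 2.0 * c2 + 2.0 * c3 + c4),
    )


def mean_z(start, dt=0.01, spin_up=2_000, steps=200_000):
    """Time-average of z after discarding an initial spin-up period."""
    x, y, z = start
    for _ in range(spin_up):
        x, y, z = rk4_step(x, y, z, dt)
    total = 0.0
    for _ in range(steps):
        x, y, z = rk4_step(x, y, z, dt)
        total += z
    return total / steps


if __name__ == "__main__":
    for start in ((1.0, 1.0, 1.0), (-8.0, 7.0, 27.0)):
        print(f"start {start}: long-run mean z = {mean_z(start):.2f}")
```

The averaging window (2000 time units here) is an assumption; shorter windows give noisier means, which is the toy-model analogue of separating weather noise from a forced signal.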

Mike said…

“The climate models as currently structured can never make meaningful predictions.”

He is totally and absolutely correct.

The projections from climate models are baseless and totally meaningless, because they have been proven WRONG against reality.

The past data that they hindcast to is manifestly adjusted, and not worth a tinker’s curse as scientific data.

The methodology of the climate models relies on unproven assumptions and ignores many real-life atmospheric processes.

Large chunks of the mess are “parameterised” with pure guesswork values that have no physical, scientific or measured data to support them.

They are like a scatter gun that misses the side of the barn!

Reading tea leaves would be just as reliable, and at least you get a cup of tea before reading them.

If the climate models are so wonderful, why is there such huge divergence between their predictions?

If they all predicted +3.0 degrees C +/- 1.5 degrees C, you’d get suspicious!

Hand waving

Computational Fluid Dynamics applies very well to a wing in a wind tunnel. However, it CANNOT be used to predict the future states of a chaotic, impossibly complex system like Climate.

It was a long time ago, and I relied on others with more advanced knowledge of fluid dynamics than mine, but if I remember correctly, I think you must be referring to the transition to turbulent flow, not turbulent flow itself. For our modelling of fluid flow, which was inside pipes rather than unrestricted (i.e. much easier), we gave no results where a transition to or from turbulent flow was involved, but gave useful results if all the flow was turbulent. Certainly our end users were happy with that, and they had a lot of real money riding on it.

Exactly!

We had to learn this in thermodynamics classes, since steam flow and water flow were important in the boilers and turbines of a power plant.

No, I’m referring to fully turbulent flow, which is what you get in most applications.

It’s been decades since my undergrad Fluids courses, but isn’t the real difficulty in the state transition between laminar and turbulent flow?

Transition to turbulence is an interesting and difficult problem of theoretical interest. But most practical flows have no real laminar region; they are fully turbulent. No transition. Some of the corresponding difficulty arises at rigid boundaries, where you could say the flow next to the wall is laminar, but that isn’t very helpful.
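For pipe flow like that discussed in this exchange, the usual yardstick is the Reynolds number, Re = ρvD/μ. The sketch below uses the common textbook rule of thumb for smooth circular pipes (laminar below about 2300, turbulent above about 4000, transitional in between) and approximate properties of water at about 20 °C. The thresholds are assumptions that shift with pipe roughness and inlet conditions, and the function names are illustrative only.

```python
def reynolds_number(density, velocity, diameter, viscosity):
    """Re = rho * v * D / mu for flow in a circular pipe (dimensionless)."""
    return density * velocity * diameter / viscosity


def pipe_regime(re, laminar_limit=2300.0, turbulent_limit=4000.0):
    """Classify pipe flow using common rule-of-thumb thresholds (assumed)."""
    if re < laminar_limit:
        return "laminar"
    if re > turbulent_limit:
        return "turbulent"
    return "transitional"


if __name__ == "__main__":
    # Water at about 20 C: density ~998 kg/m^3, dynamic viscosity ~1.0e-3 Pa s.
    cases = {
        "10 cm pipe at 1 m/s": (998.0, 1.0, 0.10, 1.0e-3),
        "1 cm tube at 1 cm/s": (998.0, 0.01, 0.01, 1.0e-3),
    }
    for label, args in cases.items():
        re = reynolds_number(*args)
        print(f"{label}: Re = {re:,.0f} -> {pipe_regime(re)}")
```

An ordinary engineering flow such as water at 1 m/s in a 10 cm pipe comes out at roughly Re ≈ 100,000, far into the turbulent range, which fits the point that most practical flows have no real laminar region away from the wall.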

Yeah, boundary layers are closer to “still air” than laminar flow.

Fluid dynamics has come a long way since my undergrad days, and computing power has come even further. That must have been a fascinating area to work in.

Part of the problem is that they only hold based on time. The author used a shotgun analogy. Those familiar with shotguns have probably patterned theirs but still experience “holes” that birds fly through. What they may not know is that the shot string also spreads out in time: it can be 5 yards long, depending on the time since it left the barrel.

Thank you for your explanation in your article, Greg.

This is an excellent post; one wishes that actual open debate and discussion would become fashionable again.

When the models are as wrong as we clearly see in figure 5, I would be ashamed to even put my name to 99% of them.

What a fictitious, blatantly corrupt era we live in.

The face of climate alarmism is best shown by this Sky News interview yesterday.

This shows the demonic level of brainwashing that has taken place in the minds of the affected millennials. This young lady has no idea how misguided she is. The worst part is that the Sky News interviewer does not challenge the position or validity of the Just Stop Oil spokesperson. He simply keeps banging on about her methods failing to convey the message.

The clip is just extraordinary! Indigo is utterly devoid of reason and common sense. She’s like a robot. So intense, it’s like a… religious cult. And very shouty and aggressive. Incapable of listening to another point of view. Is she a product of our education system? Rains in Pakistan, drought in Somalia. So what? She is 28, too young to have seen it all before like I have.

Totally beyond reason. She is a babbling fool, utterly naive. And no Just Stop Oil are NOT asking nicely.

The anchor Mark Austin showed incredible restraint. Indigo the ranty, sarcastic, patronising little girl. The more TV gives these idiots airtime the better, so everyone can see how mad they are. Loved the little text box that popped up part way through about mental health in the UK – ironic!

I’ve heard that the British people are starting to get fed up and lose confidence in the police because Britain’s law enforcement have been unable to stop the Just Stop Oil and other protesters from daily disruptions with their protests. There are not enough police and too many gantries over the M25 and roadways throughout London to prevent more disruptions from protests. Unless you keep them locked up after arrest, they will continue.

The only way this is likely to end is when a concerted effort is undertaken to discredit the CAGW narrative with the science that pokes holes in its credibility. Unfortunately, the masses are not being told that the climate models are wrong (among other things).

I can see the increasing risk of violence and maybe death on the horizon. This is what happens when mass hysteria and group think rule the roost.

What is so really incredible about this whole (climate) thing is that anybody even needed to, or did, write an essay like this.

(Think about it, it could hardly be any worse because *that* is The Secret that this totally junk science is trying to hide)

Temperatures in Africa are estimated:

WMO: “Because the data with respect to in-situ surface air temperature across Africa is sparse, a one year regional assessment for Africa could not be based on any of the three standard global surface air temperature data sets from NOAA-NCDC, NASA-GISS or HadCRUT4. Instead, the combination of the Global Historical Climatology Network and the Climate Anomaly Monitoring System (CAMS GHCN) by NOAA’s Earth System Research Laboratory was used to estimate surface air temperature patterns.”

Climate change works in mysterious ways:

https://www.weforum.org/agenda/2018/05/why-the-world-s-fastest-growing-populations-are-in-the-middle-east-and-africa/

Unless CO2 emissions are reduced, there’s a 50% chance that the 1.5 degrees Celsius limit will be broken in nine years’ time. https://www.bbc.co.uk/news/science-environment-63591796. Based, of course, on computer models.

I believe the arbitrary +1.5 degree C limit has already been temporarily broken (or nearly broken) twice, in April 1998 and February 2016, at the peak monthly temperatures of two large El Niño heat releases.

If not for bald-faced lies the politically driven leftist agenda would have been dead 80 years ago.

Thanks very much for this, Greg. I suspect this is classified as “inconvenient information” to be de-platformed by all the ‘Alarmunist Party’ (AP) faithful. I’ve been yearning for this sort of detail for years. It seems the AP thoroughly infests the whole of the media these days.

Meanwhile, a couple of things. 1) Temperature is not a good metric to use in any predictive result, as it inadequately describes the overall ENTHALPY situation at a global level. The gas laws tell us that, at constant pressure, volume is proportional to temperature; so you can have a substance at a single temperature but with a variable enthalpy, depending on its prevailing phase.

2) The above occurs during water evaporation, where, in the oceans, solar energy is absorbed and converted to latent heat at CONSTANT temperature, resulting in a very large increase in volume. I suspect that this gets ignored by these models, deliberately or otherwise, which would explain much.
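The point about latent heat can be put into rough numbers. The sketch below assumes a latent heat of vaporisation of about 2.45 MJ/kg (a typical value near 20 °C) and a specific heat of dry air of about 1005 J/(kg K); both are approximate constants, and the function names are illustrative only. It computes the energy absorbed when a small mass of water evaporates at constant temperature, and the temperature rise that same energy would produce if it went into warming a kilogram of air instead.

```python
CP_DRY_AIR = 1005.0  # J kg^-1 K^-1, approximate specific heat of dry air
LV_WATER = 2.45e6    # J kg^-1, approximate latent heat of vaporisation near 20 C


def latent_heat_absorbed(mass_evaporated_kg):
    """Energy taken up when water evaporates at constant temperature (J)."""
    return mass_evaporated_kg * LV_WATER


def equivalent_air_warming(energy_j, air_mass_kg=1.0):
    """Temperature rise the same energy would cause in dry air (K)."""
    return energy_j / (air_mass_kg * CP_DRY_AIR)


if __name__ == "__main__":
    energy = latent_heat_absorbed(0.010)  # evaporate 10 g of water
    print(f"latent heat absorbed: {energy:.0f} J")
    print(f"same energy would warm 1 kg of air by {equivalent_air_warming(energy):.1f} K")
```

With these assumed constants, evaporating 10 g of water takes up about 24.5 kJ, roughly what it would take to warm 1 kg of air by about 24 K, while the thermometer reading does not change at all.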

Can you explain to me IF or where the above gets fitted into these models? (It looks to me here that we are dealing with two different Mindsets.)

My regards

Alasdair Fairbairn

The models use conservation-of-energy principles for each time step across the model. Physicists have developed these models, so I’m reasonably certain that this has been correctly implemented, but because the time steps are finite, each step is a discrete jump.
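A full climate model is far too large to show here, but the idea of enforcing an energy budget over finite time steps can be sketched with a zero-dimensional energy balance model, a much simpler tool than a GCM. In this sketch the planet is a single slab whose temperature changes each step by (absorbed solar minus emitted infrared) × Δt / C. The albedo, effective emissivity, heat capacity and one-day step are assumed illustrative values, not numbers taken from any particular model, and the function names are hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR = 1361.0           # W m^-2, approximate total solar irradiance
ALBEDO = 0.30            # assumed planetary albedo
EMISSIVITY = 0.61        # assumed effective emissivity (tuned to give ~288 K)
HEAT_CAPACITY = 4.19e8   # J m^-2 K^-1, roughly a 100 m ocean mixed layer (assumed)


def net_flux(temp_k):
    """Absorbed solar minus emitted infrared, in W m^-2."""
    absorbed = (1.0 - ALBEDO) * SOLAR / 4.0
    emitted = EMISSIVITY * SIGMA * temp_k ** 4
    return absorbed - emitted


def step(temp_k, dt_s):
    """Forward-Euler step: the energy gained over dt changes the stored heat."""
    return temp_k + net_flux(temp_k) * dt_s / HEAT_CAPACITY


def run(temp_k=280.0, years=50, dt_s=86_400.0):
    """Integrate from temp_k for the given number of years with step dt_s."""
    n_steps = int(years * 365 * 86_400 / dt_s)
    for _ in range(n_steps):
        temp_k = step(temp_k, dt_s)
    return temp_k


def equilibrium_temperature():
    """Temperature at which absorbed and emitted fluxes balance exactly."""
    return ((1.0 - ALBEDO) * SOLAR / (4.0 * EMISSIVITY * SIGMA)) ** 0.25


if __name__ == "__main__":
    print(f"equilibrium: {equilibrium_temperature():.2f} K")
    for years in (1, 5, 10, 25, 50):
        print(f"after {years:2d} years from 280 K: {run(years=years):.2f} K")
```

Each call to `step` is one discrete jump: the energy gained over the whole day is applied at once. With these assumed parameters the slab relaxes towards an equilibrium near 288 K with an e-folding time of roughly four years.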

I agree that using temperature as a proxy for global energy, which is what we should be measuring, is an issue, but that’s much harder to measure.

It shouldn’t be that much harder to measure today. Most measurement stations record humidity and pressure along with temperature, and you don’t need much else. The issue is that you don’t get the same length of record; you can typically only go back to the 1980s.
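As a rough illustration of this point, moist enthalpy can be estimated from the three readings most stations report. The sketch assumes the Bolton (1980) Magnus-type formula for saturation vapour pressure, the standard conversion from vapour pressure to specific humidity, and the approximation h ≈ cp·T + Lv·q with constant cp and Lv. The function names are hypothetical and the constants are approximate.

```python
import math

CP_DRY_AIR = 1005.0  # J kg^-1 K^-1, approximate specific heat of dry air
LV_WATER = 2.45e6    # J kg^-1, approximate latent heat of vaporisation near 20 C


def saturation_vapour_pressure_hpa(temp_c):
    """Bolton (1980) Magnus-type approximation, roughly valid -30 C to +35 C."""
    return 6.112 * math.exp(17.67 * temp_c / (temp_c + 243.5))


def specific_humidity(temp_c, rel_humidity_pct, pressure_hpa):
    """Specific humidity (kg water vapour per kg moist air) from station readings."""
    e = rel_humidity_pct / 100.0 * saturation_vapour_pressure_hpa(temp_c)
    return 0.622 * e / (pressure_hpa - 0.378 * e)


def moist_enthalpy(temp_c, rel_humidity_pct, pressure_hpa):
    """Approximate moist enthalpy h = cp*T + Lv*q in J/kg, with T in kelvin."""
    q = specific_humidity(temp_c, rel_humidity_pct, pressure_hpa)
    return CP_DRY_AIR * (temp_c + 273.15) + LV_WATER * q


if __name__ == "__main__":
    for rh in (20.0, 80.0):
        h = moist_enthalpy(30.0, rh, 1013.25)
        print(f"30 C, {rh:.0f}% RH: h = {h / 1000:.1f} kJ/kg")
```

Under these assumptions, two stations both reading 30 °C, one at 20% and one at 80% relative humidity, differ in moist enthalpy by roughly 39 kJ/kg, which is why a thermometer reading on its own says little about energy content.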

The real problem is “tradition” and the fear of the climate alarmists that their hoax will be revealed by using enthalpy instead of temperature.

Who created the model in Figure 6, the author?

Where can I find out more about it?

I like, and always have liked, the idea that everything in heaven and earth is cyclical.

Footnote 6

Yes.

I’d classify myself as a climate “cyclist” (not a ‘denier’).

Fascinating to watch DiCaprio et al. lecture us about climate change; his superyacht must use way more energy than my modest house and economical cars. What’s good for the goose isn’t good for the gander.

“analytic harmonic model”

A fancy name for an analog computer. When I was at college, digital computers were in their infancy. Analog computers were used to solve electronic circuit design problems, and they worked well.

I’ve often wondered why they were not used more in climate research.

Or slide rules

Better yet, an abacus

I’m guessing you don’t know what an analog computer is, and why in some circumstances they can generate more accurate results than digital computers can.

Analog computers are designed to handle continuous waveforms with harmonics and interactions. They do not require “numerical” solutions to find a value for trigonometric functions with integrals or derivatives. A model can be built and modified for specific purposes. It is not a general purpose computer, it is designed and built for a unique set of circumstances.
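To make concrete what an analog computer does, the sketch below emulates, digitally, the classic patch for x'' = -ω²x: a summing amplifier with a coefficient potentiometer feeds two integrators wired in a loop, and the output traces a cosine. On real hardware the integrators work continuously; here each one has to be stepped with a small finite dt (semi-implicit Euler, an assumption of this sketch), which is exactly the kind of "numerical" approximation the comment says analog machines avoid. The function and parameter names are hypothetical.

```python
import math


def analog_oscillator_patch(omega=1.0, dt=1e-4, t_end=2 * math.pi):
    """Digitally emulate two integrators in a loop solving x'' = -omega**2 * x.

    Integrator 1 turns acceleration into velocity, integrator 2 turns velocity
    into position, and a coefficient potentiometer feeds -omega**2 * x back.
    """
    x, v = 1.0, 0.0  # initial conditions set on the integrators
    for _ in range(round(t_end / dt)):
        a = -omega ** 2 * x  # summing amplifier + potentiometer
        v += a * dt          # integrator 1 (semi-implicit Euler step)
        x += v * dt          # integrator 2
    return x, v


if __name__ == "__main__":
    for t_end in (math.pi / 2, math.pi, 2 * math.pi):
        x, _ = analog_oscillator_patch(t_end=t_end)
        print(f"t = {t_end:.4f}: x = {x:+.5f} (cos gives {math.cos(t_end):+.5f})")
```

The smaller dt is made, the closer the digital emulation gets to the continuous behaviour of the real integrators, at the cost of more steps.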

How many handheld calculators do you think were used to develop the Saturn V rocket that took humans to the moon? How many computers did Feynman, Einstein, Bohr, Planck, or Maxwell have? Your derisive comment shows just how much some of your comments are worth.

If you’ve never designed one or used one, you have NO knowledge of just what one can do.