December 12th, 2019 by Roy W. Spencer, Ph. D.

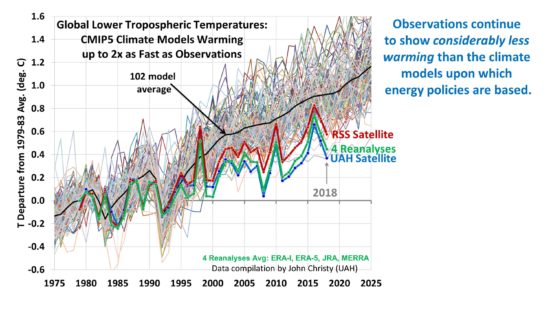

I keep getting asked about our charts comparing the CMIP5 models to observations, old versions of which are still circulating, so it could be I have not been proactive enough at providing updates to those. Since I presented some charts at the Heartland conference in D.C. in July summarizing the latest results we had as of that time, I thought I would reproduce those here.

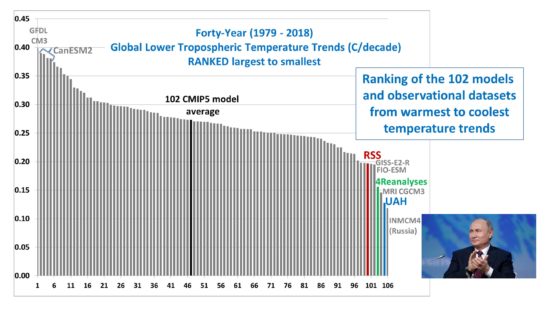

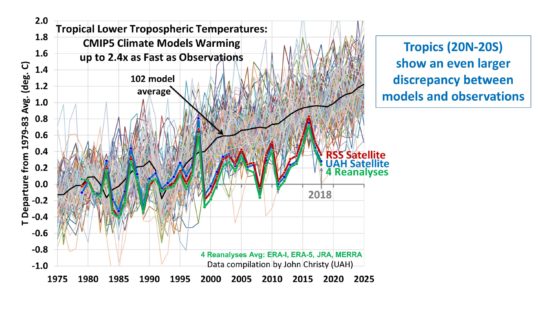

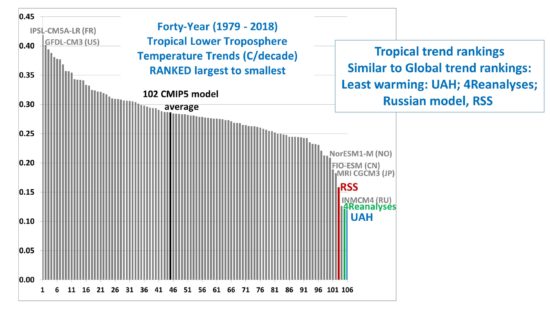

The following comparisons are for the lower tropospheric (LT) temperature product, with separate results for global and tropical (20N-20S). I also provide trend ranking “bar plots” so you can get a better idea of how the warming trends all quantitatively compare to one another (and since it is the trends that, arguably, matter the most when discussing “global warming”).

From what I understand, the new CMIP6 models are exhibiting even more warming than the CMIP5 models, so it sounds like when we have sufficient model comparisons to produce CMIP6 plots, the discrepancies seen below will be increasing.

Global Comparisons

First is the plot of global LT anomaly time series, where I have averaged 4 reanalysis datasets together, but kept the RSS and UAH versions of the satellite-only datasets separate. (Click on images to get full-resolution versions).

The ranking of the trends in that figure shows that only the Russian model has a lower trend than UAH, with the average of the 4 reanalysis datasets not far behind. I categorically deny any Russian involvement in the resulting agreement between the UAH trend and the Russian model trend, no matter what dossier might come to light.

Tropical Comparisons

Next are the tropical (20N-20S) comparisons, where we now see closer agreement between the UAH and RSS satellite-only datasets, as well as the reanalyses.

I still believe that the primary cause of the discrepancies between models and observations is that the feedbacks in the models are too strongly positive. The biggest problem most likely resides in how the models handle moist convection and precipitation efficiency, which in turn affects how upper tropospheric cloud amounts and water vapor respond to warming. This is related to Richard Lindzen’s “Infrared Iris” effect, which has not been widely accepted by the mainstream climate research community.

Another possibility, which Will Happer and others have been exploring, is that the radiative forcing from CO2 is not as strong as is assumed in the models.

Finally, one should keep in mind that individual climate models still have their warming rates adjusted in a rather ad hoc fashion through their assumed history of anthropogenic aerosol forcing, which is very uncertain and potentially large OR small.

“Another possibility, which Will Happer and others have been exploring, is that the radiative forcing from CO2 is not as strong as is assumed in the models.”…

I’m a layman and I have always wondered how levels of a trace gas in the atmosphere could “drive” warming. The mainstream climate science (AGW) community says the physics are well established and there is no doubt.

Happer seems to say the physics is not well established.

The models, based on CO2 levels, seem to be utter failures.

So, Happer is correct, right?

Yes, he is. The ECS range of 1.5 to 4.5 degrees C stems from the 1979 Charney report, which combined Manabe’s primitively modeled 2.0 degrees with Hansen’s 4.0 degrees and added an arbitrary 0.5 degree margin of error.

But actual observations show that the range is much lower, probably around 0.1 to 2.10 degrees. The central figure of 1.1 degree is the lab result, without any feedbacks as might be expected in the real, complex climate system. IMO, net negative feedbacks are more likely than positive, as per the 0.7 degree found by Lindzen and Chao, as revised, based upon satellite data, rather than GIGO models.

The value of 4.0ºC was due to Ramanathan et al. (1979)

The report cites Ramanathan, but the second of two computer models used was Hansen’s:

J. Hansen, Goddard Institute for Space Studies, NASA

S. Manabe, R. T. Wetherald, and K. Bryan, Geophysical Fluid Dynamics Laboratory, NOAA

They cite Ramanathan for the value of 4.0ºC; the results of the Hansen model gave lower values.

Page 13:

4.1 THREE-DIMENSIONAL GENERAL CIRCULATION MODELS

We proceed now to a discussion of the three-dimensional model simulations on which our conclusions are primarily based. Some of the existing general circulation models have been used to predict the climate for doubled or quadrupled CO2 concentration. The results of several such predictions were available to us: three by S. Manabe and his colleagues at the NOAA Geophysical Fluid Dynamics Laboratory (hereafter identified as M1, M2, and M3) and two by J. Hansen and his colleagues at the NASA Goddard Institute for Space Studies (hereafter identified as H1 and H2). Some results obtained with the British Meteorological Office model (Mitchell, 1979) were also made available to us but will not be described here because both the sea-surface temperature and the sea-ice distribution were prescribed in this model, thus placing strong constraints on the surface ΔT, whereas it is just the surface ΔT that we wish to estimate.

I have a pdf of a deck by Hansen:

“Climate Threat to the Planet: Implications for Energy Policy and Intergenerational Justice”

Jim Hansen • December 17, 2008 • Bjerknes Lecture, American Geophysical Union • San Francisco, California

on p8

Empirical Climate Sensitivity

3 ± 0.5C for 2XCO2

I do not have a link, but it might be here at WUWT

Walter,

Hansen’s first model, used by Charney, derived 4.0 K per doubling, vs. Manabe’s of 2.0.

Your citation is based upon IPCC’s then central figure. They’ve since raised it above 3.0 K.

There it is, 1979 again, just like the sea ice charts. Also the peak of the ice age scare after a substantial period of cooling, since erased from the record.

The 1.1 degree result is not the zero feedback sensitivity, but is based on the current steady state sensitivity applied to all incident solar energy after all factors that can be considered feedback like have already manifested their effect on the final averages. This value corresponds to an emissions sensitivity of about 1.62 W/m^2 of surface emissions per W/m^2 of forcing. The zero feedback result is 1 W/m^2 of surface emissions per W/m^2 of forcing as would be the case for an ideal black body. This corresponds to about 0.2 C per W/m^2 or about 0.75C per W/m^2.
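For readers who want to see where a zero-feedback figure of roughly 0.2 C per W/m^2 can come from, here is a minimal sketch that differentiates the Stefan-Boltzmann law for an ideal black body. The two temperatures used (288 K for the surface, 255 K for the effective emission temperature) are assumptions chosen for illustration and are not taken from the comment above.

```python
# Zero-feedback (black body) sensitivity from the Stefan-Boltzmann law.
# For P = sigma * T**4, dP/dT = 4 * sigma * T**3, so dT/dP = 1 / (4 * sigma * T**3).

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def zero_feedback_sensitivity(temp_k: float) -> float:
    """Return the temperature change in K per W/m^2 of extra black body emission."""
    return 1.0 / (4.0 * SIGMA * temp_k ** 3)


# Assumed reference temperatures (illustrative, not from the source).
surface_sensitivity = zero_feedback_sensitivity(288.0)
emission_level_sensitivity = zero_feedback_sensitivity(255.0)

print(f"at 288 K: {surface_sensitivity:.3f} K per W/m^2")
print(f"at 255 K: {emission_level_sensitivity:.3f} K per W/m^2")
```

Under these assumptions the result falls between about 0.18 and 0.27 K per W/m^2, depending on which temperature is taken as the reference.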

Thinking in terms of an incremental temperature per W/m^2 is where the fundamental error lies as this disconnects the next Joule from the average Joule making an absurdly high incremental sensitivity seem plausible despite the limitations of COE which requires the next Joule to have the same effect as the average Joule. COE may apply to the 1.1 C per W/m^2, but beyond that it breaks.

The increase from 1 W/m^2 of surface emissions per W/m^2 of forcing up to 1.62 is the net result of all feedback like effects, positive, negative known and unknown. The idea that this increases all the way up to 4.4 W/m^2 per W/m^2 of forcing illustrates a level of ignorance about basic conservation laws that’s stunning. The fact that the feedback power of 3.4 W/m^2 exceeds the forcing power of 1 W/m^2 indicates that the system is already in a runaway state and the Earth is clearly not.

The concept of long term feedbacks that have yet to manifest is absurd junk invented only to lend plausibility for an ECS high enough to cause concern, yet that’s otherwise trivially falsified by non controversial conservation laws. If you do the math and eliminate the reflectivity from all of the ice and snow on the planet across 12 months and distribute the unreflected energy across the planet, it’s still not near enough to replace 4.4 W/m^2 of emissions per W/m^2 of ‘original’ forcing. Keep in mind that the planet is 2/3 covered by clouds, which means that the reduced reflectivity from 2/3 of the melted ice and snow has no incremental effect!

John Tillman,

“The central figure of 1.1 degree is the lab result, without any feedbacks as might be expected in the real, complex climate system.”

The 1.1C is NOT a lab result and moreover it is overtly incorrect. The 1.1C derivation contains no stratospheric cooling in it (only stratospheric warming along with tropospheric warming), which is the main/fundamental signature of GHE induced surface warming. This by itself is falsification of every sensitivity result in the literature, including Roy Spencer’s, Monckton’s, Lindzen’s, etc.

The 1.1C is NOT giving you a theoretical measure of intrinsic surface warming via the GHE from 2xCO2, thus neither is anything directly amplified or attenuated off of it, positive or negative feedback, high or low sensitivity.

It looks like RW writes that 1.1 C degree is not correct but he/she fails to write what is correct. It is too easy to say that something is not correct without showing the errors or the numbers assumed to be correct.

What is your comment about this quote? In the AR4, the IPCC writes: “The diagnosis of global radiative feedbacks allows better understanding of the spread of equilibrium climate sensitivity estimates among current GCMs. In the idealized situation that the climate response to a doubling of atmospheric CO2 consisted of a uniform temperature change only, with no feedbacks operating (but allowing for the enhanced radiative cooling resulting from the temperature increase), the global warming from GCMs would be around 1.2 °C.”

It is a fact that the LW radiation absorption happens 98% in the troposphere. Cooling or warming in the stratosphere has a minor impact.


If I had to gander at the behavior of feedbacks using biological systems as a model, there would be a central, and mathematically determined equilibrium tropospheric temperature for a given condition. The feedbacks would work to bring the energy balance to that calculated mean. Temperatures above that mean will be brought down, and temperatures below it will be brought up. With water vapor, there appears to be a dual role, working as a positive feedback when temps are below the mean, but working as a negative feedback when temps go above the mean. I personally don’t think CO2 has much of anything to do with it.

IMO, the problem with climate science and climate models is that the information needed to accurately calculate that mean temp for a given condition is simply not known and without it, you cannot determine the correct behaviors of feedbacks. The reason for this is that practically all of the information for those conditions is natural in origin and thus outside of the agenda of the anthropogenic climate cabal, which is to prove man is killing the planet and thus giving justification for transforming society in a way that gives them power and riches at everyone else’s expense. I really don’t blame the so called scientists, they are just doing what they have to do to get grants to fund their careers. The real culprit, as all of you already know, resides in the political sphere.

It just may be that the reason the Russian Model is the closest to accurate is that they’ve given up on taking over the world via political means, therefore their model better considers reality.

@John … and even some of the biggest CAGW proponent names agree … the models are broken, in large part because ECS forcing they’ve fed to the models are overstated (GIGO), and as such model predictions are overestimating warming – by a factor of as much as two times or more.

“We conclude that model overestimation of tropospheric warming in the early twenty-first century is partly due to systematic deficiencies in some of the post-2000 external forcings used in the model simulations.”

“Causes of differences in model and satellite tropospheric warming rates …

Santer et al 2017”

http://centaur.reading.ac.uk/71264/1/Santer_etal_NatGeosci_MainText_25may17.pdf

The GIGO models must ignore or “parameterize” such important water feedbacks as convective and evaporative cooling and clouds.

It will be a long time, if ever, before GCMs can model clouds. Grids are currently typically about 100 x 100 x 10 km, while clouds are on the scale of tens to hundreds of meters. Going to the required 10 x 10 x 10 meter resolution would require on the order of 100 billion times more computing power.
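The 100 billion figure can be checked with a few lines of arithmetic. This is only a sketch of the cell-count ratio between the two grids quoted above; it assumes cost scales with the number of grid cells and ignores any change in time step, so the names and the scaling assumption are illustrative.

```python
# Ratio of grid cells when refining from ~100 x 100 x 10 km cells
# to 10 x 10 x 10 m cells (all lengths in meters).

coarse_cell_m = (100_000, 100_000, 10_000)
fine_cell_m = (10, 10, 10)


def refinement_factor(coarse, fine):
    """Return how many fine cells fit inside one coarse cell."""
    factor = 1
    for coarse_len, fine_len in zip(coarse, fine):
        factor *= coarse_len // fine_len
    return factor


cells_factor = refinement_factor(coarse_cell_m, fine_cell_m)
orders_of_magnitude = len(str(cells_factor)) - 1  # valid here because the factor is a power of ten

print(cells_factor, orders_of_magnitude)
```

The ratio is 10^4 x 10^4 x 10^3 = 10^11, which is the "100 billion" (11 orders of magnitude) quoted in the comment.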

But even then, the models would still be inadequate.

Dr Brown of Duke had a great rant on slashdot about this very subject. In it he stated we are at least 30 orders of magnitude in computing power away from being able to start modeling clouds.

30 orders of magnitude! Put 30 zeros behind the computing power of the current model farms. LOL. Even quantum computing might not be able to model clouds. We might have to cluster a bunch.

But hey man, the science is settled. LMAO

That puts my estimate of 11 orders of magnitude in the shade.

But, yes of course going down to millimetric scale would be better.

Climate is essentially uncomputable. To imagine it is constitutes huge hubris, but it’s too lucrative for reality to impinge on GCM practitioners, ie computer gamers.

The ancient Assyrians and Babylonians kept extensive records of climate, weather, crops, etc. They had no geometric model of the solar system, or any of the mathematical models we know as “physics”. They did, however, have statistical models. Their records were kept on clay tablets (instant mimeograph via wet clay), and we actually have quite a few recovered from Nineveh and other sites.

The statistical models were accurate enough to substantially assist farmers, a la Farmers Almanac. It would be helpful if the Climate researchers would quit pretending they have any kind of useful physics model of climate, and emulate the Assyrians. The solar researchers recognize that the processes that drive solar cycles are mostly mysterious, and go full statistical, and do pretty well. There are lots of different cycles of widely varying periods from 11 years to 100s of thousands.

Predicting the sun is like listening to music with complex harmonics. The climate on earth and mars seems similar, with their harmonies echoing the leading voice of the sun.

At present, climate science is in its infancy, and not likely to mature soon. Consider just the problems of the changing sun, the uncertain heat sink effect of the oceans, the mix of positive and negative feedbacks related to clouds, the uncertain magnitude of heat delivery from lower to upper atmosphere via thunderstorms, and add in the various lag times for these, and then claim to have a useful model of CO2 acting as the dominant driver of climate change. Absurd.

One scientific fact we know, based on evidence, is that we are in a bi-stable cold climate era these last few million years….flipping in and out of serious ice ages multiple times.

You said ” The models, based on CO2 levels, seem to be utter failures.”

What more do you need?

The null hypothesis is wrong and it gets worse from there.

https://arxiv.org/PS_cache/arxiv/pdf/0707/0707.1161v4.pdf

Almost everyone agrees that the CO2 absorption bands are saturated. The question is how much additional warming is provided by “pressure broadening”. This is the phenomenon that is debatable. Here is the view of one physics professor.

“I’ve simply never understood what people mean when they assert that there is some sort of pressure broadening contribution to the expected GHE due to increasing CO_2. No, there is not. There is an effect due to increased concentration and a reduced mean free path of IR photons, but this effect is known to be extremely weak as it is long since saturated.” … RGB

https://diggingintheclay.wordpress.com/2014/04/27/robinson-and-catling-model-closely-matches-data-for-titans-atmosphere/#comment-6072

It appears that Dr. Happer and Dr. Brown are in agreement. If “pressure broadening” is an overall air pressure effect instead of a CO2 species effect, then the changes over the past century are trivial. They would have already existed back when CO2 was 280 ppm. That would mean it was also saturated. This would mean CO2 cannot provide even the 1.1 C of warming often claimed. Feedback then becomes irrelevant.

Richard

“but this effect is known to be extremely weak as it is long since saturated.” … RGB

How could it be anything less than saturated at any given time?

What would cause a state of low saturation?

Regards

If the term “saturated” means that the energy distribution within the CO2 molecule is a result of thermal equilibrium (through the process of energy equipartition), with which I agree, then the CO2 molecules act as ANY ideal gas in a mixture of ideal gases. Thus, changes in the properties of the mixture reflect only the changes in the proportions of the gases involved. I think the Connollys’ research shows precisely that the gas mixture in the atmosphere can be considered as an ideal gas…

The meaning of saturated in the usage I quoted means all the available surface IR in the bands that CO2 can absorb is already being absorbed. You can’t absorb more than 100% of the IR which was where we were at around 200 ppm.

What pressure broadening was supposed to do was make available more IR just outside the normal CO2 bands. The quote by Dr. Brown tells us those bands were also available at 280 ppm and would also be saturated.

This would be easy to measure in the lab. Spectrophotometry is a mature branch of chemistry and physics. If CO2 absorbs outside its normal bands, we should be able to see it. If there are no data, then this is unlikely to actually occur.

I have seen a poster produced by Wijngaarden and Happer (WH) which has promised a more detailed treatment of the issue of calculating radiative transfer in earth’s atmosphere, but I have not yet seen the full work. There is a recent contribution by these two authors which is also not the full work.

Here is an issue they raise in a nutshell. Radiative transfer codes, like MODTRAN, make use of detailed measurements of absorption lines of “greenhouse” gases, but the full calculation requires modeling the Doppler and pressure broadening of these lines. WH maintain these models have a small but significant discrepancy with measurements made in the atmosphere. The issue appears to be that the mathematical form used to model broadening is too wide, and that post-treatment of this model with apodization (that is, a filter that cuts the broadened wings off early) does a better job of reconciling calculations with measurements.

This is not an issue of feedback, but an issue of basic radiative calculation which indirectly affects feedback. It does not invalidate models per se, but it does call into question their accuracy at the level required to make exacting temperature forecasts into the future — i.e. it calls into question their fitness for purpose.


Anyone who says that the physics of global warming is simple, has self identified as a fool.

“I’m a layman and I have always wondered how levels of a trace gas in the atmosphere could “drive” warming. ”

except it’s not a “trace gas” and it doesn’t “drive” warming. it reduces the cooling to space.

Half right.

http://www.atmo.arizona.edu/students/courselinks/fall16/atmo336/lectures/sec1/composition_fall16.html

David

All of Mosher’s ‘drive by’ remarks could be considered to be “Half write.” 🙂

It’s important to read what is written before replying. He did not say “could cause a little warming” (maybe/maybe not detectable). He said how could it DRIVE warming, i.e. be the control knob so many are trying to pretend it is, even to the point of attempting to explain it as being the cause of the last deglaciation.

Actually, SM is wrong on both counts. CO2 is indubitably a trace gas in Earth’s atmosphere, and it doesn’t reduce cooling to space, but rather raises emission height, at least hypothetically.

Please make that “effective emission height”.

Raising the effective radiative level in the troposphere has the effect of reducing radiation to space. But the satellites are unequivocal. They see radiation to space in CO2 bands at the tropopause and above, NOT from the troposphere. Above the tropopause temperature increases with altitude, and radiative energy to space increases to the fourth power of increased temperature. CERES finds no reduction in LW radiation to space. Mr. Mosher believes the CERES sensors are inadequate, perhaps because there is a four watt discrepancy the good CERES folks have “balanced and filled” under Mr. Trenberth’s tutelage by burying most of it in the ocean. Without this CERES would show far more radiation to space, perhaps explaining the dramatic cooling of the stratosphere.

@Steven … I’m usually inclined to support you – but you’re picking nits here.

If you reduce cooling to space it pretty much directly drives increased warming.

I understand your point – but I think both claims are correct.

.04 % of the atmosphere…is that right?

Yep

40,000 ppm 40,000/1,000,000 = 4% 4/100

4,000 ppm 4,000/1,000,000 = 0.4% 4/1000

40 ppm 400/1,000,000 = 0.04% 4/10,000

Your last one is written wrong 🙂

Dang, I gotta fire that Zero

My Hero Zero must have been off on patrol

To be clear,

400ppm = 400/1,000,000 = 0.0004 = 0.04%
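Since the conversion tripped up more than one commenter, here is a small sketch of the ppm-to-percent arithmetic. The function name is made up for illustration.

```python
# Convert a concentration in parts per million to a percentage.
# percent = ppm / 1,000,000 * 100 = ppm / 10,000


def ppm_to_percent(ppm: float) -> float:
    """Return the percentage equivalent of a parts-per-million value."""
    return ppm / 10_000


for ppm in (40_000, 4_000, 400):
    print(f"{ppm} ppm = {ppm_to_percent(ppm)}%")
```

This reproduces the corrected table: 40,000 ppm is 4%, 4,000 ppm is 0.4%, and 400 ppm is 0.04%.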

Quibble much? I guess you don’t ‘drive’ your car, you just press pedals and turn the wheel in front of you. Some distinctions are important. The difference between carbon and carbon dioxide is huge. The difference between a scientific skeptic and a science denier is huge. Yet whenever I bring up how the two are used interchangeably, someone gets exasperated and says “Oh, you know what that means. Stop quibbling over semantics!”

The difference between a climate crisis skeptic and a climate science denier is not semantics at all. The former is a real human being with a scientifically supported viewpoint. The latter is a slanderous phrase associated with a completely fictitious character, used to demonize an opponent so you do not have to face the inevitable agony of defeat at the hands of their superior knowledge and understanding!

On the other hand, the difference between ‘reduces the cooling to space’ and ‘drives warming’ is essentially zero anywhere outside a physics laboratory. It really is just semantics and you do know what ‘layman’ meant.

This may seem like much ado about nothing but it is getting so we can’t talk to each other because there is no consistency in what words mean. Our language is being destroyed for fleeting political gains and propaganda, and I am tired of it. It is impossible to discuss anything at all, if all words are subject to either quibbling or conflating, depending on which interpretation is more politically advantageous at the moment.

+42^42

Of course it’s a trace gas, in a tiny atmospheric concentration, yet still essential for most life. Even water vapor, 100 times more plentiful than CO2 in the moist tropics, is still a trace gas.

“it reduces the cooling to space.”

That’s the conjecture. Others have postulated that the cooling of the earth increases as CO2 radiates so effectively.

So... have the stratosphere temperatures dropped?

Obviously, the assertion about “trace gas” is not the accepted position.

https://en.wikipedia.org/wiki/Trace_gas

Trace gases are those gases in the atmosphere other than nitrogen (78.1%), oxygen (20.9%), and argon (0.934%) which, in combination, make up 99.934% of the gases in the atmosphere (not including water vapor).

https://www.climate-change-guide.com/trace-gas-definition.html

When discussing climate change, trace gas refers to any of the less common gases found in the Earth’s atmosphere.

As for “reduces cooling to space”, yes, this seems to be a common popular explanation, but I have yet to comprehend the mechanism by which a trace gas could do anything of the sort.

Steven Mosher, “ [CO2] reduces the cooling to space.”

Not correct. The rate of cooling (radiative transfer) to space remains identical. The radiative transfer just happens at a bit higher altitude in the atmosphere. A constant lapse rate makes the surface warmer.

And then there’s the negative feedback from water vapor acting as temperature regulator in the system. The 70% salt water ocean thing pretty much does the rest.

-thermal inertia.

-poleward heat transfer via current flow

-the more radiatively active the system becomes (DWIR), the more wind driven advection and convection of water vapor to provide convective transport of latent heat through the troposphere to be released as sensible heat.

– the salt is essential to system, supercooled heavy brine rapidly sinks under forming polar ice helping to drive global thermo-haline circulation system to return cold polar water to tropics near the benthic depths.

@Patrick Guinness Frank

It works both ways. An increase in radiative forcing has warmed the surface. A constant lapse rate would have necessarily raised the ERL.

Also, the moist adiabatic lapse rate – and moisture affects the height of the ERL – is anything but constant. It changes from place to place, season to season. How do we know the global average has remained unchanged over the industrial era?

I was just citing the standard explanation for Steve’s benefit, Snape.

The unknowns you express are typically ignored by AGW proponents. Certainty reigns there.

On behalf of Snape:

@sycomputing

Thanks!

Snape, see my “Propagation of Error and the Reliability of Global Air Temperature Projections” here.

Regarding AGW no one knows what they’re talking about. Literally.

The paper shows that climate models are predictively useless. None of the air temperature projections have any physical meaning.

The IPCC is clueless. So is Al Gore. And the real tragedy: so is NASA.

In GHE theory, more CO2 doesn’t reduce the cooling to space. It raises the emission height.

Steve wouldn’t understand what you are talking about; you are wasting your time. Nick Stokes would, as I have seen he has messed around with this. It is actually the “effective emission height”. It isn’t real, as the emissions are from all heights, but it is the height that gives equality to the blackbody calculation of the Stefan-Boltzmann law (its current value would be around 5 km).
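A rough back-of-envelope check on that figure of about 5 km can be sketched as follows. All of the inputs here (solar constant, albedo, mean surface temperature, and a constant lapse rate) are textbook-style assumptions chosen for illustration, not values taken from this thread.

```python
# Effective emission height: the altitude at which air temperature equals the
# black body temperature that balances absorbed sunlight.

SIGMA = 5.670374419e-8       # Stefan-Boltzmann constant, W m^-2 K^-4
SOLAR_CONSTANT = 1361.0      # W/m^2 (assumed)
ALBEDO = 0.30                # planetary albedo (assumed)
SURFACE_TEMP_K = 288.0       # global mean surface temperature (assumed)
LAPSE_RATE_K_PER_KM = 6.5    # mean tropospheric lapse rate (assumed constant)


def effective_emission_temp(solar_constant: float, albedo: float) -> float:
    """Black body temperature that radiates the globally averaged absorbed sunlight."""
    absorbed = solar_constant * (1.0 - albedo) / 4.0
    return (absorbed / SIGMA) ** 0.25


def effective_emission_height_km(surface_k: float, emission_k: float, lapse: float) -> float:
    """Height at which a constant lapse rate cools the air to the emission temperature."""
    return (surface_k - emission_k) / lapse


t_eff = effective_emission_temp(SOLAR_CONSTANT, ALBEDO)
height_km = effective_emission_height_km(SURFACE_TEMP_K, t_eff, LAPSE_RATE_K_PER_KM)

print(f"effective emission temperature: {t_eff:.1f} K")
print(f"effective emission height: {height_km:.1f} km")
```

With these assumed inputs the emission temperature comes out near 255 K and the height a little over 5 km. The result is sensitive to the lapse rate chosen, which is the point Snape raises above.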

It isn’t a popular or supported view these days because it leads to a decreasing forcing for CO2 as it increases so no AR8.5. They much prefer the model forcing version because that creates the shape of graph they want.

I meant to write “effective”, but hit “Post” before proofing.

It’s an important qualifier.

@Patrick Guinness Frank

Sorry for posting down here, For some reason there was no “Reply” option under your comment. This keeps happening…. my end I’m sure. Nothing wrong with the blog.

Anyway, yeah, I see this is not an idea you were strongly advocating. I am an AGW advocate, but the “higher ERL” description of the greenhouse effect bugs me. It turns the lapse rate into a forcing.

By the way, SM is not only familiar, but a proponent, citing the likes of Pierrehumbert. Who am I to argue?

“For some reason there was no “Reply” option under your comment.”

That’s by design. In this case you should reply to yourself, which then places your reply in line of the thread in order of posting.

See above.

Thanks, Sy.

Snape, I’ve replied to you above.

Work to become knowledgeable, Snape. It’s the only way forward.

I am not convinced that something that is 0.041% of the atmosphere can alter the entire climate. There is over 20 times as much argon in the atmosphere as CO2, and yet I am to believe that something that is much less than one half of one percent is a driver of climate. Uh-huh.

“For a molecule to absorb IR, the vibrations or rotations within a molecule must cause a net change in the dipole moment of the molecule. The alternating electrical field of the radiation . . . interacts with fluctuations in the dipole moment of the molecule. If the frequency of the radiation matches the vibrational frequency of the molecule then radiation will be absorbed, causing a change in the amplitude of molecular vibration.” — https://teaching.shu.ac.uk/hwb/chemistry/tutorials/molspec/irspec1.htm

Atmospheric gas molecules such as nitrogen and oxygen do not have a dipole moment and therefore do not interact with LWIR from Earth. Argon and other monatomic gases also do not have a dipole moment and therefore also do not react with LWIR.

Water (vapor), CO2 and methane do have molecular dipole moments and therefore are appropriately called “greenhouse” gases.

Absent a dipole moment, gas concentration is meaningless as regards IR absorption.

… yet meaningful in so many other ways.

Perhaps regarding IR absorption so highly in the context of this greater meaning is what is meaningless.

Explain how that dipole moment enables the “slowing” of anything.

Robert Kernodle, you replied to me “Explain how that dipole moment enables the “slowing” of anything.”

Cute . . . nice try . . . but first, please point exactly where, in any post I made in this thread, I referred to a dipole moment as “slowing” anything.

Any molecule that is a good absorber of LWIR is also a good emitter of LWIR. The exchange is near instantaneous. In > Out.

What makes water dominate atmospheric heat transport? Phase change from liquid to gas (absorbing heat energy), followed by convection from the surface to the upper troposphere (mass transport), followed by phase change from gas to liquid (condensation liberating heat energy) in the upper atmosphere, followed by radiation to space from the upper atmosphere.

At normal atmospheric temperatures and pressures CO2 undergoes no phase change, rendering it a small bit player in heat transport.

CO2’s major environmental role in the energy transport cycle is as a chemical feed stock for photosynthesis, where it plays a vital role (along with water) in the major chemical energy storage process in the world. The amount of solar energy stored in chemical bonds every day is mind boggling. It makes CO2’s interaction with LWIR pale in comparison.

Atmospheric CO2 molecules that absorb LWIR and momentarily store it via molecular vibrational energy do not always release such energy via re-radiation at LWIR . . . a good portion of that energy can be transferred out via kinetic energy exchange (molecule-molecule collisions) with non-excited molecules in the atmosphere, such as nitrogen and oxygen, that are effectively at lower “temperature” (actually at lower energy states). The process is known as “thermalization.”

To elaborate, condensation/crystallization in the upper atmosphere liberates energy as radiation. The clouds thus formed obscure/reflect solar energy, changing the albedo of the earth, a variable that the climate models do not deal with with any confidence. This is just too complex a problem and they admit it.

As the graphs of GCMs vs OBSERVED TEMPERATURES indicate and as John Christy has stated, the GCMs have demonstrated NO predictive ability. They are wrong.

As to the amount of solar energy stored in chemical bonds, there are 3.87 kilocalories of energy per gram of simple sugar (glucose, cellulose). One watt=0.860421 kilocalories/Hr.

Multiply this by the number of kilotons of biomass produced every day. Staggering.
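To make that multiplication concrete, here is a sketch using only the two conversion factors quoted above (3.87 kcal per gram, and 0.860421 kcal per watt-hour). The daily biomass tonnage is not given in the comment, so the calculation is shown per kiloton; the metric kiloton (10^9 grams) is an assumption.

```python
# Chemical energy stored in biomass, using the conversion factors from the comment.

KCAL_PER_GRAM = 3.87             # energy content of simple sugar, from the comment
KCAL_PER_WATT_HOUR = 0.860421    # 1 W sustained for 1 hour, from the comment
GRAMS_PER_KILOTON = 1e9          # metric kiloton (assumed)


def stored_energy_gwh(kilotons: float) -> float:
    """Return the energy stored in the given mass of biomass, in gigawatt-hours."""
    kcal = kilotons * GRAMS_PER_KILOTON * KCAL_PER_GRAM
    watt_hours = kcal / KCAL_PER_WATT_HOUR
    return watt_hours / 1e9


energy_per_kiloton = stored_energy_gwh(1.0)
print(f"{energy_per_kiloton:.2f} GWh per kiloton")
```

Under these assumptions each kiloton of sugar-equivalent biomass stores about 4.5 GWh; the daily total then depends on whatever production figure one plugs in.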

“a good portion of that energy can be transferred out via kinetic energy exchange”

What portion? Has it been quantified?

I have always wondered how the radiative effect of water vapor and CO2 could dominate the warming.

As a thought experiment imagine a thermometer. It is surrounded by 99.9+ percent of non-radiative gases and about 0.1 percent of radiation absorbing gases. Let’s assume the temperature of the thermometer goes up one degree. What does the temperature of the radiative gases have to be to accomplish this?

It seems to me that there must be a lot of kinetic energy transfer going on to the other gases.

Any molecule that is a good absorber of LWIR is also a good emitter of LWIR. The exchange is near instantaneous. In > Out.

Not true: the emission lifetime for CO2 is on the order of milliseconds, whereas near the surface the average time between collisions is about a tenth of a nanosecond. Consequently most energy ends up in kinetic energy transfer to neighboring molecules.
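To put those two timescales side by side, here is a minimal sketch that divides one by the other. It assumes exactly 1 ms for the radiative lifetime and 0.1 ns between collisions, which are round-number readings of the figures in the comment, and it does not model how likely any single collision is to deactivate the molecule.

```python
RADIATIVE_LIFETIME_S = 1.0e-3        # "order of milliseconds" (assumed 1 ms)
TIME_BETWEEN_COLLISIONS_S = 1.0e-10  # "about a tenth of a nanosecond"

def collisions_per_radiative_lifetime(lifetime_s: float, interval_s: float) -> float:
    """Number of collisions an excited molecule experiences before it would radiate."""
    return lifetime_s / interval_s

n_collisions = collisions_per_radiative_lifetime(
    RADIATIVE_LIFETIME_S, TIME_BETWEEN_COLLISIONS_S
)
print(f"about {n_collisions:.0e} collisions per radiative lifetime")
```

With these inputs the excited molecule undergoes on the order of ten million collisions in the time it would need to emit spontaneously.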

“consequently most energy ends up in kinetic energy transfer to neighboring molecules”

This thermalization process runs both ways: CO2* + N2 ↔ CO2 + N2⁺. The rates are equal. Net energy exchange = 0

Please see this article for a full treatment:

https://wattsupwiththat.com/2010/08/05/co2-heats-the-atmosphere-a-counter-view/

Richard. –>

So you agree that CO2 absorbs IR, then converts it to kinetic energy to ALL the other gases. Question, how does positive feedback of CO2 to water vapor get so strong?

Richard G.,

I had previously read the “full treatment” at the WUWT link that you provided, and again reviewed it in preparation for this response.

In summary, the article is fatally flawed in applying the theoretical concept of “local thermodynamic equilibrium (LTE)”—useful perhaps for a gedanken experiment—to the Earth’s real atmosphere. It is fairly easy to see that LTE cannot apply, statistically, across any significant number of arbitrary-size control volumes drawn in the Earth’s atmosphere.

If LTE dominated Earth’s atmosphere it would be (a) impossible for convection to occur, (b) impossible for a temperature gradient to develop in the atmosphere, and (c) impossible for there to be day vs. night atmospheric surface temperature differences caused by the presence/absence of insolation.

The fatal flaw in the LTE argument is that there NEVER is equilibrium, to any statistically significant degree, in any realistic-sized control volume one may draw within the Earth’s atmosphere . . . during the day, solar radiation is creating a net downward flux of energy from the control volume’s top surface to its bottom surface (with respect to gravity), and during the night Earth’s radiation is creating a net upward flux of LWIR from the control volume’s lower surface to its upper surface.

More specifically the statement “Because the number of excited molecules in a small volume in LTE must stay constant , follows that both processes emission/absorption must balance” totally ignores the fact that radiation is always crossing the control volume surfaces in one direction or the other.

Given that, statistically, there is ALWAYS some amount of radiation absorption within a given-size control volume containing a mixture of LWIR-absorbing gases, there can NEVER be LTE because radiation is ALWAYS crossing the control volume’s boundaries and thereby ADDING energy, on a statistically significant basis.

Richard G. December 15, 2019 at 2:36 pm

“consequently most energy ends up in kinetic energy transfer to neighboring molecules”

This thermalization process runs both ways: CO2* + N2 ↔ CO2 + N2⁺. The rates are equal. Net energy exchange = 0

The rates are not equal: because the concentration of N2 is much higher than that of CO2, the chance of the reverse reaction occurring is extremely small. The N2⁺ is much more likely to collide with an N2 or O2 molecule in a kinetic energy exchange.

Please see this article for a full treatment:

https://wattsupwiththat.com/2010/08/05/co2-heats-the-atmosphere-a-counter-view/

Yes, it was wrong then, as I pointed out at the time.

Gordon, thank you for making a point I frequently make.

“The fatal flaw in the LTE argument is that there NEVER is equilibrium, to any statistically significant degree, in any realistic-sized control volume one may draw within the Earth’s atmosphere . . . during the day, solar radiation is creating a net downward flux of energy from the control volume’s top surface to its bottom surface (with respect to gravity), and during the night Earth’s radiation is creating a net upward flux of LWIR from the control volume’s lower surface to its upper surface.”

I agree there NEVER is equilibrium. There is however a ‘dynamic equilibrium’ or a range of natural variability as the climate system chases its equilibrium tail. Nevertheless, CO2* + N2 ↔ CO2 + N2⁺ is a valid thermodynamic expression (and I referenced the “treatment” as a good explanation of the interactions of gases, not to advocate for LTE). It runs both ways depending on the energy gradient of each collision interaction. If the GCMs understood this complexity they would not be such failures. I don’t pretend to understand this complexity either.

If there never is a state of equilibrium in the climate system how can there be an ‘equilibrium constant’? Global average temperature is a statistical fiction. What is important is the LOCAL enthalpy of the system. This is dominated by the water vapor fraction which is highly variable. Even Tmax/Tmin does not do it justice if not referenced to relative humidity.

The ‘greenhouse effect’ is a misleading misnomer. Greenhouses work by mechanically blocking convective heat loss, not by the actions of ‘greenhouse gases’. The functionally equivalent mechanical element in the atmosphere is the tropopause, where convection stops. (Greenhouse operators enrich the CO2 levels for its fertilizer effect, not for a thermal effect.)

The biosphere is the big unknowable wild card in the climate equation. It is opportunistic and craves carbon. Climate change happens at the biosphere level. Satellites show the Sahel is greening as CO2 becomes more abundant.

The known and experimentally proven benefits of enhanced CO2 are real. The dire risks of enhanced CO2 are conjectural and wrong as demonstrated by the GCM predictive failures.

“That is my opinion and if you don’t like it, I have others.”- Groucho Marx.

Nevertheless, CO2* + N2 ↔ CO2 + N2⁺ is a valid thermodynamic expression (and I referenced the “treatment” as a good explanation of the interactions of gases, not to advocate for LTE). It runs both ways depending on the energy gradient of each collision interaction.

This is not a valid description of the process.

CO2* + N2 → CO2 + N2⁺

N2⁺ + M → N2 + M

is a more reasonable description, which allows for the fact that the subsequent collision will be with M (N2, O2, Ar etc.), not with CO2.
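The point about the collision partner M can be illustrated with a small sketch. It assumes that the chance of a given species being the next collision partner is simply proportional to its mole fraction in dry air, which ignores differences in molecular size and speed; the mole fractions are rounded textbook values and the function name is hypothetical.

```python
# Rounded dry-air mole fractions (assumed values for illustration).
DRY_AIR_MOLE_FRACTIONS = {"N2": 0.7808, "O2": 0.2095, "Ar": 0.0093, "CO2": 0.0004}

def partner_probabilities(mole_fractions: dict) -> dict:
    """Probability that the next collision partner is each species,
    assuming probability is proportional to mole fraction only."""
    total = sum(mole_fractions.values())
    return {species: x / total for species, x in mole_fractions.items()}

probs = partner_probabilities(DRY_AIR_MOLE_FRACTIONS)
p_co2 = probs["CO2"]        # chance the energized N2 next meets a CO2 molecule
p_other = 1.0 - p_co2       # chance it meets N2, O2 or Ar instead

for species, p in probs.items():
    print(f"{species:>3}: {p:.4%}")
```

Under this simplification only a few collisions in ten thousand involve CO2 at all, which is why the reverse step is treated as rare in the scheme above.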

Tom Vonk may be a physicist but he doesn’t understand reaction kinetics.

Phil –> I think you’re missing the point a little bit.

The question was: if a gas (CO2) is 0.04 % of the atmosphere and it absorbs some radiation energy, how does it get rid of the energy? Do collisions with other molecules transfer this energy? If so, which molecules are most likely to absorb the energy of the collision? It only makes sense that the larger percents (N2 or O2) of the atmosphere would be the most likely to have a collision. And convection should be a large part of heating.

Jim Gorman December 17, 2019 at 9:31 am

Phil –> I think you’re missing the point a little bit.

The question was if a gas (CO2) is 0.04 % of the atmosphere and it absorbs some radiation energy how does it get rid of the energy. Do collisions with other molecules transfer this energy? If so, which molecules are most likely to absorb the energy of the collision. It only makes sense that the larger percents (N2 or O2) of the atmosphere would be the most likely to have a collision.

Not missing the point at all. I explained how the CO2 gets rid of the energy: in the lower atmosphere it predominantly loses it by collision with neighboring molecules (N2, O2, Ar, in that order). These collisions occur with high frequency, about 10 per nanosecond. The same molecule of CO2 can continue absorbing IR as long as it exists. Since the molecule that collisionally deactivated the initial excited CO2 molecule continues to collide with other molecules, it will share that energy with them over millions of collisions during the average radiative lifetime of a vibrationally excited CO2 molecule.

Q: What contribution does thermalization make to the surface temperature in a corn field when photosynthesis has removed virtually all the CO2 from the local atmosphere air parcel during peak growth/ peak insolation?

A: Virtually 0?

Q: What effect does evapotranspiration have on the surface temperature in a corn field during peak growth/peak insolation? (Stomata stay open when trying to scavenge CO2, increasing evapotranspiration.)

A: Cooling.

Q: Are these effects quantified and incorporated in the GCM model calculations?

Climate effects happen at the microclimate level.

Phil: If you have 100 photons and 100 CO2 molecules, thermalization should convert 100% of the photons to kinetic energy. Saturation?

What happens when there are 120 CO2 molecules with 100 photons? The extra 20 CO2 should remain in their ground state and make more sugar?

Richard G. December 18, 2019 at 10:06 am

Phil: If you have 100 photons and 100 CO2 molecules, thermalization should convert 100% of the photons to kinetic energy. Saturation?

If those photons are of a wavelength that excites the rovibrational state of the CO2 and the molecules are immersed in the lower troposphere, then yes, the photon energy will be thermalized. There is no saturation, since the CO2 molecules will rapidly decay to their ground vibrational state, where they will be able to absorb more photons; this process will take place in microseconds.

What happens when there are 120 CO2 molecules with 100 photons? The extra 20 CO2 should remain in their ground state and make more sugar?

The additional CO2 molecules will remain in their ground state where they can absorb future photons.

Richard G. December 18, 2019 at 9:25 am

Q: What contribution does thermalization make to the surface temperature in a corn field when photosynthesis has removed virtually all the CO2 from the local atmosphere air parcel during peak growth/ peak insolation?

That doesn’t happen. In typical measurements in a corn field (CO2 level ~370 ppm), the CO2 level rises to ~500 ppm at night and drops to ~300 ppm during the day.

Q: What effect does evapotranspiration have on the surface temperature in a corn field during peak growth/peak insolation? (Stomata stay open when trying to scavenge CO2, increasing evapotranspiration.)

Stomata respond to water rather than CO2; also, corn is a C4 plant. In a C4 plant the CO2 accumulates and is transported to the bundle sheath cells, where photosynthesis occurs even when the stomata are closed. That’s why C4 plants lose less water than C3 plants.

A recent James Delingpole podcast with Will Happer:

https://www.youtube.com/watch?v=gLzcz_l391w

“Top physicist, expert on high-energy lasers, and anthropogenic climate change skeptic Will Happer tells James about the global warming scam.”

Dr. Happer is the world’s leading expert on atmospheric optics, so his opinion is well founded in experience. He’s responsible for the adaptive optics which allow ground-based telescopes to compensate for distortion of light in air.

Either the physics is wrong or there is some larger variability that is not being accounted for. Time will tell though.

Physics, in general, seems well established, but some people in positions of respect or authority seem to have a poor understanding of some of the critical basics that somehow fell through the cracks when they got their PhDs.

Dave, I don’t know what you do for a living, how old you are or your outdoor experiences. Our atmosphere, I believe, acts like a swamp cooler. Even on a very cold day with clear skies and no or very, very low wind speed the sun will warm your face (SW radiation at work). What happens to the warmth on your face when the wind picks up or a cloud blocks the sun? Why can’t CO2 radiant forcing overcome the wind or lack of sunshine? Do you know what’s worse than being cold and hungry? Being wet, cold and hungry.

According to NASA, “Climate models that include solar irradiance changes can’t reproduce the observed temperature trend over the past century or more without including a rise in greenhouse gases.”

https://climate.nasa.gov/causes/

Doesn’t this assume the climate models correctly describe the interaction of all relevant factors, use past climate data (which is itself assumed to be correct) to tune those interactions and then use that very same data to draw conclusions regarding the role of CO2 in the climate?

Is it me or is this circular reasoning?

It’s not you.

I’ve looked at it as the Sherlock Holmes fallacy: “Watson, there are five possible causes and we’ve ruled out the first four, so it must be the fifth!”

What NASA is saying is that if you take a climate model which includes solar irradiance but does not have any change in greenhouse gases, you will get a temperature trend that is not increasing. You have to include greenhouse gases to get a rising temperature trend.

However, Roy Spencer is demonstrating that almost all climate models either (A) have too much feedback OR else (B) carbon dioxide, in particular, does not have the forcing effect attributed to it.

Thus in A, the forcing from CO2 is correct, but the assumption that increased CO2 means more water vapour and hence increased forcing is not. In B, the assumed forcing due to CO2 is correct, but there is little or no feedback as a result of the atmosphere containing more water vapour.

There is possibly no way to determine which is correct. However, the amount of water vapour in the atmosphere is large, up to 100 times the amount of CO2 (4% max versus 410 ppm = 0.041%). I would suggest that, just as the CO2 absorption bands are so nearly saturated that additional CO2 is nearly incapable of raising the temperature, water vapour is so saturated in the atmosphere that observed changes in its concentration cannot change the temperature, either up or down, within the normal limits of observed temperature.
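The “up to 100 times” figure can be checked with a short unit conversion. This sketch uses only the two numbers in the comment (a 4% maximum for water vapour and 410 ppm for CO2); the variable names are illustrative.

```python
WATER_VAPOUR_MAX_PERCENT = 4.0   # upper bound quoted in the comment
CO2_PPM = 410.0                  # CO2 concentration quoted in the comment

# 1 percent = 10,000 parts per million
co2_percent = CO2_PPM / 1.0e4
ratio = WATER_VAPOUR_MAX_PERCENT / co2_percent

print(f"CO2 = {co2_percent:.3f}%  ratio water vapour : CO2 = {ratio:.1f}")
```

The ratio applies only at the stated maximum humidity; at lower water vapour content it shrinks in proportion.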

I would also suggest that modellers have been assuming too great a figure for the sensitivity – temperature change due to increase in CO2 concentration, due to working with data from the 1975 to 1998 period, and this parameter being sacrosanct, all subsequent estimates are too great. Which is what you state. This period was possibly a period when many of the various atmospheric and oceanic oscillations combined to produce an abnormally rapid temperature rise with the change in CO2. Now, even though the CO2 increase is slightly greater, the oscillations do not coincide and so the temperature is not rising so fast – perhaps even slowing?

or (C) increased water vapor causes NEGATIVE feedback. I don’t see why that’s not possible.

Eustace Cranch, ask yourself this, or answer the question yourself: why do crop pickers in Yuma, AZ wear two layers of clothing from head to toe to pick crops in temperatures close to 100 °F?

“due to working with data from the 1975 to 1998 period”

When something else was dominating temp change…if they had used the previous 20 years…they would have gotten the opposite result

cherry picking at its worst

Lat –

Cue the Aerosols.

That is not necessarily wrong.

The clean air acts around the world that went into effect in the 1970s did much to remove heavy particulates and SOx and NOx compounds from the atmosphere. The warming experienced in the decade after that is likely the result of a lower albedo from cleaner air.

NH industrial air pollution i.e. particulate matter, ozone (O3), nitrogen dioxide (NO2), sulphur dioxide (SO2) are relatively localised and anyway don’t cross the Equator due to the Coriolis effect (as I understand it).

Yet a similar T hiatus ~1940 – ~1980 occurred in the SH where there was very little industrial air pollution.

http://www.woodfortrees.org/plot/crutem4vnh/from:1900/mean:12/offset:-0.15/plot/crutem4vsh/from:1900/mean:12

There is some circular reasoning, but you aren’t the one doing it.

Just as well the climate isn’t chaotic or the factors that need to be taken into account for the models to tune on may not even be discernible.

Hang on a second…

Kevin Balch, the NASA website you linked to essentially ignores many other possible warming influences. These include secondary solar effects and ocean effects. It also ignores ozone changes, cloud changes, ENSO changes and just plain random variations.

Yeah, I think a big thing here is the change in behavior of the jet stream in response to the solar cycle. When the solar cycle wanes, the tropical ozone layer cools and it allows the jet stream to dip low – which also allows large blobs of warm tropical air into the arctic and antarctic. The meridional flow seems like a pump that quickly pulls energy to the poles, where there is nothing stopping it from being lost to space or dumped into phase change for ice.

When the solar cycle waxes again – the tropical ozone heats up, the jetstream keeps a zonal flow and the speed of heat moving from the tropics to the poles is slowed down. This allows the tropics to keep most of their heat – with warming of the arctic only coming from ocean currents.

When looking at the instrumental record of sunspot counts – the 1920s and 1930s had huge spikes in sunspot count. From the beginning of the 20th century to the late 50s the sunspot counts ramped up with each cycle. From 1964 to 1978 a very low sunspot cycle happened – followed by much more vigorous cycles in the 80s and 90s. We are at a low now and this next cycle might be very low as well. We see very obvious meridional flow of the jetstream now – and have been seeing it for a few years.

I think the real driver though – is the difference decade over decade – that flips these modes of jetstream behavior into meridional mode more often than zonal mode.

Well, Canadian news organizations were reporting these “loopy” changes in the jetstream occurring as the result of too much CO2 in the atmosphere. Gosh, they had their science reporter dancing around a huge computer generated mockup with lots of bright colours and flowing lines while he assured us it was so, so he must be correct. /sarc

That science reporter did not talk about solar cycles.

But, you know, the science is settled and all.

The comparison made differently

https://tambonthongchai.com/2018/08/31/cmip5forcings/

Interesting reading. I was especially struck by this passage …

If we are truly convinced that we understand and have a complete census of forcings, then this is a discrepancy that requires some explanation.

Good point.

Well put.

Thank you Kevin.

That paper says that CO2 controls 20% of the GH warming. According to GISS Model E.

Other models say CO2 controls anywhere from about 15-40% of the warming.

Lack of agreement among all the models discredits every model.

All one can truly say is that CO2 is the control knob governing Earth’s temperature, as simulated within the given model.

The real physical Earth? Anyone’s guess.

Kevin Kilty:

No, we do NOT have a complete census of forcings.

The IPCC Diagram of Radiative Forcings correctly shows a negative forcing for increased SO2 aerosol emissions, but does not show the positive forcing that occurs when they are removed from the atmosphere, such as because of Clean Air Act reductions, or when volcanic SO2 aerosols settle out of the atmosphere.

(Actually, the diagram DOES show the forcing, but its forcing is attributed to CO2, which is why models using CO2 can never be correct).

The Russian Model, which closely matches actual temperature, obviously does not use CO2, but instead uses SO2. This is obvious from its match of the large peaks of 1996-97, 2010, and 2015-2016, which were due to large reductions in Global SO2 aerosol emissions and consequent warming due to the cleansed air. There is simply no way for these random events to appear in a model for any other reason.

“This is obvious from its match of the large peaks of 1996-97, 2010, and 2015-2016, which were due to large reductions in Global SO2 aerosol emissions and consequent warming due to the cleansed air. There is simply no way for these random events to appear in a model for any other reason.”

Don’t forget El Niño during those years.

Tom Abbott:

No, Tom, I have not forgotten the El Ninos. They are what I was referring to.

ALL El Ninos are caused by reductions in the amount of atmospheric SO2 aerosol emissions, of either volcanic or Anthropogenic origin.

(and all La Ninas are caused by increased levels of SO2 aerosols in the atmosphere, primarily from VEI4 or larger volcanic eruptions).

My peeve about these graphs is the curve fitting of the early decades that gives the impression of skill. No models predicted the temporary cooling in the 1980s and early 1990s produced by volcanic eruptions, yet all model runs now show those dips in the right place, because the curve has been fit to the observations. This gives the impression that the models had skill in the 80s and 90s, but they didn’t. They were way too warm then as well.

The only relevant part of the graph is the last 10-15 years, when the models were very bad and getting dramatically worse. That is what people and policy makers need to see. I fear that CMIP6 will come out with an updated curve-fit that will once again prompt activists and the media to trumpet the skill of the models, just like they did with the CMIP5 when it was new.

What a scam!

Yeah – it’s pretty easy to be totally accurate when you keep moving the starting point of your model runs to the current year.

The other irritating thing about these graphs is that there is always a ‘model average’ line, as though that meant something. With a single model, you could run it with random noise added to its various inputs, and expect that averaging the outputs would get you to a better estimate. But trying to average outputs from different models where the variations between them are definitely not random, gives a result with no better credibility than any of the inputs.
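The distinction drawn above, between averaging away random noise and averaging over systematic differences, can be shown with a toy simulation. Everything here is hypothetical: the “true” value, the noise level and the per-model biases are invented for illustration and are not taken from any CMIP run.

```python
import random
import statistics

TRUE_VALUE = 0.0            # hypothetical "true" answer, arbitrary units
rng = random.Random(42)     # fixed seed so the sketch is repeatable

def mean_of_noisy_runs(bias: float, noise_sd: float, n_runs: int) -> float:
    """Average n_runs outputs of a model with a fixed bias plus random noise."""
    runs = [TRUE_VALUE + bias + rng.gauss(0.0, noise_sd) for _ in range(n_runs)]
    return statistics.fmean(runs)

# Case 1: a single unbiased model run many times with random input noise.
single_model_mean = mean_of_noisy_runs(bias=0.0, noise_sd=1.0, n_runs=10_000)

# Case 2: several models, each with its own systematic (non-random) bias.
MODEL_BIASES = [0.5, 0.8, 1.0, 1.2, 1.5]   # invented values, all on one side
per_model_means = [mean_of_noisy_runs(b, 1.0, 10_000) for b in MODEL_BIASES]
multi_model_mean = statistics.fmean(per_model_means)

print(f"single model, averaged runs: {single_model_mean:+.3f}")
print(f"multi-model average:         {multi_model_mean:+.3f}")
```

In the first case the average converges on the true value because the errors cancel; in the second it converges on the mean of the biases, so more averaging does not bring it closer to the truth.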

When a model is higher by a fraction of a degree, then the model is “accurate”.

When the global temp anomaly is higher by a fraction of a degree, then reality is “alarming”.

That fraction is NOT alarmingly inaccurate for the model, but it IS alarming for reality.

reality

not something they are familiar with

“Climate scientist” 1 to “climate scientist” 2:

“Get more bananas, those CHIMPS need to get out our latest version. Their food may grow on trees, but grants don’t, ya know….”

“I still believe that the primary cause of the discrepancies between models and observations is that the feedbacks in the models are too strongly positive.”

Would it perhaps be naive of me to suggest that, as the saying goes, this is a feature not a bug?!

Are these really fair comparisons? Climate models are designed for propaganda generation while hindcasting. Using the models in a way for which they were not designed is an abuse of science and typical for WUWT.

LOL!!

Why measure temperature when all the models disagree with each other? Actual temperature is irrelevant, as there is nothing to compare it against: anyone suggesting a specific model is correct fails to understand that the system is too complex/chaotic to model. Any period of alignment between a model and measured temperature would be a coincidence, as a forward model cannot know of events like volcanoes, cosmic ray flux, or interactions between ocean currents and the chaotic jet stream in advance. Even more frivolous is to suggest that the average of many wrong models is in some way correct. Imagining the earth system as a giant computer (like in The Hitchhiker’s Guide to the Galaxy) where the real thing only runs once means any sort of pseudo Monte-Carlo array is pointless.

Missing the point of climate models is a common denier flaw. I am sure some climate scientists believe that models can predict climate but I do not think most of them are that stupid.

Hence all the caterwauling about tipping points and the terrible future the earth will have if we don’t do something about the increasing temperature. They are predicting future climate and it isn’t only some of them.

The ‘point’ of the climate models is to tell Western Civilization to go to hell in such a way that it is enthusiastic about the trip.

The models are a numerical representation of a bad theory that was seized upon by post-modern socialists to create a social environment in which they can be the ruling elite. That is the point of the climate models.

Good points, James!

The Russian models tantalisingly (for us laypersons) referred to in the article are INM-CM4 and INM-CM5.

It or they are frequently mentioned here, but not usually linked to, presumably because the commentator assumes his/her audience is totally familiar with them. However I had to Google for it and fortunately Patrick Michaels has fairly recently commented, referencing a paywalled paper, on the relative success or relevance of the numerous models:

https://www.cato.org/publications/commentary/are-climate-models-overpredicting-global-warming

and provides a link to INM-CM5, which is open access.

https://www.earth-syst-dynam.net/9/1235/2018/

-“Simulation of observed climate changes in 1850–2014 with climate model INM-CM5”-

Evgeny Volodin and Andrey Gritsun

Institute for Numerical Mathematics, INM RAS, Gubkina 8, Moscow 119333, Russia

I make no apology for quoting from some of P Michaels’ article because it seems apposite to the post:

..”Weather forecasters know that some models work better than others in specific situations, and they tend to rely on the versions that work best, depending upon the forecast problem. When the issue is a potential big snow along the eastern seaboard, forecasters usually lean upon the model from the European Center for Medium-Range Weather Forecasting (the “Euro” model). When diagnosing shifts in jet stream patterns a week or 10 days ahead, they may place more weight on the American Global Forecast System model.

But the United Nations’ Intergovernmental Panel on Climate Change simply averages up the 29 major climate models to come up with the forecast for warming in the 21st century, a practice rarely done in operational weather forecasting. As dryly noted by Eyring and others “there is now evidence that giving equal weight to each available model projection is suboptimal.”

…Indeed. The authors of the new paper show that the aggregate models are making huge errors in three of the places on earth that are critical to our understanding of climate. ….

…But one of the models actually works. According to University of Alabama’s John Christy and his colleagues, only the Russian model, designated INM-CM4, gets things right. So why not weight heavily on the model that is working? Perhaps because it has less global warming in it than all the other U.N. models?

Its successor, INM-CM5, is so good that it is the only one that diagnoses the “pause” in warming from 2002 to 2014. That the “pause” was real is obvious in the global surface temperature record that the IPCC relies upon most heavily, from the Climate Research Unit at the University of East Anglia.

It’s high time that the scientific community come clean about longstanding climate shenanigans. Averaging up a large number of models that don’t work well is guaranteed to produce an unreliable forecast. Using ones that get things right, like the two Russian models, is accepted best-practice in weather forecasting. With regard to forecasting methodology, new research at least moves climate science closer to the 20th century.”…

(Reference to the “20th Cent” is not a typo by me – a tongue in cheek on the author’s part?)

@Mike … Ron Clutz has done some very good write-ups on the Russian INMCM4 and INMCM5 models … that give insight into some of the reasons these models may be better at predicting real world measured temps.

https://rclutz.wordpress.com/2015/03/24/temperatures-according-to-climate-models/

https://rclutz.wordpress.com/2017/10/02/climate-model-upgraded-inmcm5-under-the-hood/

https://rclutz.wordpress.com/2018/10/22/2018-update-best-climate-model-inmcm5/

Ron Clutz offers an observation on the differences in the INMCM4 model:

“There appear to be 3 features of INMCM4 that differentiate it from the others.

1. INMCM4 has the lowest CO2 forcing response at 4.1K for 4XCO2. That is 37% lower than multi-model mean.

2. INMCM4 has by far the highest climate system inertia: Deep ocean heat capacity in INMCM4 is 317 W yr m^-2 K^-1, 200% of the mean (which excluded INMCM4 because it was such an outlier)

3. INMCM4 exactly matches observed atmospheric H2O content in lower troposphere (215 hPa), and is biased low above that. Most others are biased high.

So the model that most closely reproduces the temperature history has high inertia from ocean heat capacities, low forcing from CO2 and less water for feedback.”

https://rclutz.wordpress.com/2015/03/24/temperatures-according-to-climate-models/
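The two percentages in the quoted list imply multi-model means that the quote itself does not state. The sketch below back-calculates them from the quoted INMCM4 figures; the resulting means are inferences from that arithmetic, not values taken from the linked write-up.

```python
# Figures quoted for INMCM4 in the list above.
INMCM4_RESPONSE_K = 4.1             # response for 4xCO2, in kelvin
FRACTION_BELOW_MEAN = 0.37          # "37% lower than multi-model mean"
INMCM4_OCEAN_HEAT_CAPACITY = 317.0  # W yr m^-2 K^-1
RATIO_TO_MEAN = 2.0                 # "200% of the mean"

# If INMCM4 = mean * (1 - 0.37), then mean = INMCM4 / 0.63.
implied_mean_response_k = INMCM4_RESPONSE_K / (1.0 - FRACTION_BELOW_MEAN)

# If INMCM4 = 2 * mean, then mean = INMCM4 / 2.
implied_mean_heat_capacity = INMCM4_OCEAN_HEAT_CAPACITY / RATIO_TO_MEAN

print(f"implied multi-model mean response: {implied_mean_response_k:.2f} K")
print(f"implied mean deep ocean heat capacity: {implied_mean_heat_capacity:.1f} W yr m^-2 K^-1")
```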

His 2017 and 2018 updates review the improvements in the INMCM5 model.

A Scott

Thanks for the links. I’m glad to see that someone has looked into how the Russian model differs from the others. Unfortunately, it seems that the mainstream modelers haven’t put the information to good use. Something else that might be instructive is to look at those that have performed the worst (e.g. CanESM2 and GFDL-CM3) and similarly see how they differ from the typical model.

Good suggestion, Clyde, and hopefully, surely, some post grad somewhere is doing just that.

Alternatively perhaps Steven Mosher would like to comment on the apparently very significant differences between models when compared to actual observation.

Thanks for re-posting Dr. Spencer’s update on WUWT. It is very informative, and shows clearly that the IPCC needs to just admit defeat in their ability to model climate, even over a period as short as 38 years.

In particular, the curves of actual GLTT data measurements (the RSS satellite data, UAH satellite data, and the average of four reanalysis datasets) show that:

(a) the trend of GLTT over time is best characterized as LINEAR from 1980 to 2018, despite the massive increases in mankind’s annual CO2 emissions that have occurred EXPONENTIALLY over that same time period . . . thereby providing strong evidence that AGW is just not happening, or alternatively essentially reached its asymptotic limit prior to 1980.

(b) the average linear rate of GLTT increase is CONSTANT at 0.13 C per decade.

One thing more, if we look at the same data for the tropics only (20N-20S), the average linear rate is much less, about 0.09 C per decade. This would seem to support Dr. Spencer’s belief that models-versus-observations discrepancies reside in how the models handle moist convection and precipitation efficiency, which in turn affects how upper tropospheric cloud amounts and water vapor respond to warming (related to Richard Lindzen’s “Infrared Iris” effect, as noted). One would think the tropics would have much greater “moist convection and precipitation efficiency” effects moderating lower tropospheric temperatures than that occurring at higher latitudes around the planet.
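For readers who want to see how a per-decade linear trend like the ones cited above is obtained, here is a minimal ordinary-least-squares sketch. The two series are synthetic and noise-free, built to have slopes of exactly 0.13 and 0.09 C per decade; they stand in for the real satellite and reanalysis data, which are not reproduced here.

```python
def trend_per_decade(years, anomalies):
    """Ordinary least-squares slope of anomalies vs. years, in units per decade."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(anomalies) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, anomalies))
    sxx = sum((x - mean_x) ** 2 for x in years)
    return 10.0 * sxy / sxx

years = list(range(1980, 2019))  # 1980 through 2018 inclusive

# Synthetic stand-ins for the global and tropical (20N-20S) series.
global_series = [0.013 * (y - 1980) for y in years]
tropical_series = [0.009 * (y - 1980) for y in years]

global_trend = trend_per_decade(years, global_series)
tropical_trend = trend_per_decade(years, tropical_series)

# Total change implied by a constant trend over the 38-year span.
span_decades = (years[-1] - years[0]) / 10.0
total_global_change = global_trend * span_decades

print(f"global: {global_trend:.2f} C/decade, tropics: {tropical_trend:.2f} C/decade")
print(f"implied global change 1980-2018: {total_global_change:.2f} C")
```

A constant 0.13 C per decade over 38 years works out to roughly half a degree of total change; real data would add scatter around the fitted line without changing how the slope is computed.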

And yet when you look at historical warming phases they precede the purported rise in CO2 by centuries or at the very least can’t be pinned on homo erectus. Well not unless you think cooking fires and cooking up bronze are what it’s all about:

https://www.nationalgeographic.com/news/2014/2/140226-wales-borth-bronze-age-forest-legend/

Sometimes you have to see the trees instead of the tree rings unless you think the water is bubbling up out of the mantle or coming in from outer space. The downside of using tea bags I suppose whereby one loses the art of reading tea leaves and feeding them into computers for a sign from the Gods: https://www.ncdc.noaa.gov/news/what-are-proxy-data

Perhaps I could invite Griff over for an instant coffee and he could explain the art to me in depth.

Why should anyone have the confidence they have about the temperatures before World War 2? Before then most of the world did not have any temperature data, and the places that did have it appear to have been adjusted to almost bizarre lengths so that they do not resemble the contemporary news stories about heat waves and cold snaps in different regions. This also implies that the surface temperature data is not corrupted by the urban heat island effect, but we know based on the proportion of poorly sited temperature stations that it MUST have an impact. The simple heuristic they use to adjust for this seems too ad hoc and not tuned on a station by station basis.

For instance – when rural areas surrounding a city all have a temperature that is similar, but the city has temperatures that are 10C warmer, the adjustment is to increase the temperatures of the rural areas to blend the result. This does not accurately represent the true ambient air temperature without local human effects.

Then there are the adjustments that, when compared to contemporary news sources, are laughable. In Australia there was a massive heat wave in the late 19th century, with temperatures hotter than anything in the rest of the modern record. There are news stories about people dying from the heat. When you look at the adjusted data, it becomes no hotter than a moderate Australian summer. The 51C readings become 46C readings. If adjustments to data are doing that, anything that results from such chicanery cannot be inferred to be representative of reality.

It should be concerning that the models seem tuned to the curve – but I guess since the models were used to make the adjustments – it’s not a surprise.

100%

Roy,

You wrote, “I categorically deny any Russian involvement in the resulting agreement between the UAH trend and the Russian model trend, no matter what dossier might come to light.”

That’s sufficient to get a FISA warrant on your tail. Let’s call it the Trans-Alabama Tornado. Conspiracy to Collude with Climate-Destroying and Climate-Denying Entities. I see some subpoenas coming right now.

Kurt

And anyone, currently, or in the past, who has knowingly eaten calamari, frequented restaurants that serve calamari, or expressed an interest in tasting calamari, may be charged with providing comfort and support to the squid pro bono movement.

The pop-up ad that comes up when I open WUWT is so aggressive that I have to close the page and re-open it in order to get in.

The first time this happened I thought that activists had hacked in and were driving away traffic; now I realize that it’s the advertisers driving away web traffic.

Under the rules of the scientific method, the CAGW hypothesis is, for all intents and purposes, already a disconfirmed hypothesis because CAGW’s hypothetical mean projections have exceeded empirical observations by 2 or more standard deviations for over 2 decades.

All empirical data show ECS is most likely between 0.6~1.2C, which is roughly 1/5th~1/3rd less than CAGW’s hypothetical projections.

When the AMO and PDO soon enter their respective 30-year cool cycles, the already huge disparity will likely exceed 3 standard deviations, and the CAGW hypothesis will be laughable, and tossed on the trash heap of failed ideas.
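To make the "number of standard deviations" comparison concrete, here is a rough sketch of one way such a gap could be measured: take the spread of the individual model trends as the standard deviation and ask how far the observed trend sits below the model mean. The function name `sigma_gap` and every number below are made-up placeholders, not values from the charts, and this is only one possible reading of the claim (one could instead use the uncertainty of the observations).

```python
import statistics


def sigma_gap(model_trends, observed_trend):
    """How many ensemble standard deviations the model mean exceeds the observation."""
    ensemble_mean = statistics.mean(model_trends)
    ensemble_sd = statistics.stdev(model_trends)  # sample standard deviation
    return (ensemble_mean - observed_trend) / ensemble_sd


# Placeholder trends in C per decade (hypothetical, for illustration only).
hypothetical_model_trends = [0.18, 0.22, 0.25, 0.27, 0.30, 0.33]
hypothetical_observed_trend = 0.13

gap = sigma_gap(hypothetical_model_trends, hypothetical_observed_trend)
print(f"model mean exceeds observation by {gap:.1f} standard deviations")
```

A result above 2 would correspond to the "2 or more standard deviations" threshold mentioned above, but the answer depends heavily on which models, which observational dataset, and which period are fed in.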

Do hope you’re right but it’s policy, not science, that needs to accept failure.

The CACA conjecture was born falsified.

The postwar cooling, despite steady increase in plant food in the air, showed it false. Callendar argued for beneficial AGW in the 1930s, but lived long enough to suffer the bitterly cold winters from the ’40s to his death in the early ’60s. Worse was to come in the late ’60s to ’70s, before the 1977 PDO flip gave us better weather.

“ I categorically deny any Russian involvement ”

It has been reported that Vladimir Putin and Roy Spencer have never been seen together nor at the same exact time in different places.

If this is true the UN should investigate.

{– – winking smiley face – – Poe’s Law}

The models are basically nothing but a monotonic rise with some noise thrown in as a red scarf trick. If you look at the graphs, the only feature they get about right is the dip around the time of the Mount Pinatubo eruption in 1991. They point to that and say: look, we are modelling climate, it even matched the volcanic effects.

But no.

Firstly, look at the magnitude. Roughly twice the measured effect in the tropics and about 50% too much in the global mean. That means the model is twice as sensitive as it should be to the radiative forcing in the tropics, where the vast majority of the Earth’s energy input enters the system. Get that wrong and your model will warm faster than it should and you will be scurrying around looking for “missing heat”.

The scaling of observed aerosol effects, atmospheric optical depth (AOD), is one of the biggest frig factors in the whole game. In 1992, using “basic physics” modelling of El Chichon data, it was scaled as 30W/m^2; by 2005 it was “tweaked” to 20W/m^2. Measurement and “basic physics” were dropped for arbitrarily frigging the numbers to get the desired result.

Having under-estimated the true strength of volcanic forcing they could pump up other factors ( positive feedbacks ) and still get a dip in the right place. The added bonus is that the same feedbacks will apply to other “forcings”, like CO2.

And bingo, you get an exaggerated warming that you can shout about for about 20 years before it becomes more and more apparent that you are warming far too quickly. By that time you hope to have pushed the political agenda far enough to have entrenched the idea that the oceans are going to boil like they did on Venus!!

So Dr. Spencer is correct, but it all starts from frigging the volcanic forcing via AOD scaling.

https://judithcurry.com/2015/02/06/on-determination-of-tropical-feedbacks/

So, to sum up the last 67 comments the answer is that we don’t know what causes warming or cooling in the atmosphere. OK. Got it.

The system is incompletely or insufficiently characterized and unwieldy. We can neither forecast nor predict its state outside of a limited frame of reference, other than within the known bounds of a semi-stable system and processes.

The posted chart shows that whenever there is an El Nino, the actual temperatures approach the midrange of the model ensemble. This is very deceiving because the models do not model El Ninos. If they did, the error of the models would be much more apparent.

“This is very deceiving because the models do not model El Ninos.”

They leave a lot of things out of those climate models, don’t they? That would be the same climate models they use to promote the spending of TRILLIONS of taxpayer dollars on foolishness like the Green New Deal.

I think they need to refine their Climate models a little more before taxpayers start forking over the cash.

Dr. Spencer, I’m glad to see you tying the model issues to who benefits…that being Putin.

BOMBSHELL: Dr. Hill Exposes Russia’s Propaganda Campaign to Kill US Fracking Industry

https://co2islife.wordpress.com/2019/11/21/bombshell-dr-hill-exposes-russias-propaganda-campaign-to-kill-us-fracking-industry/