Guest opinion: Dr. Tim Ball

Two recent events triggered the idea for this article. On the surface, they appear unconnected, but that is an indirect result of the original goal and methods of global warming science. We learned from Australian Dr. Jennifer Marohasy of another manipulation of the temperature record in an article titled “Data mangling: BoM’s Changes to Darwin’s Climate History are Not Logical.” The second involved the claim of final, conclusive evidence of Anthropogenic Global Warming (AGW). The original article appeared in the journal Nature Climate Change. That it appeared in this journal raises flags for me. The publishers of the journal Nature created the journal. That journal published as much as it could to promote the deceptive science used for the untested AGW hypothesis. However, they were limited by the rules and procedures required for academic research and publications. This wouldn’t be a problem if the issue of global warming were purely about science, but it never was. It was a political use of science for a political agenda from the start. The original article came from a group led by Ben Santer, a person with a long history of involvement in the AGW deception.

An article titled “Evidence that humans are responsible for global warming hits ‘gold standard’ certainty level” provides insight and includes Santer’s comment to Reuters: “The narrative out there that scientists don’t know the cause of climate change is wrong. We do.” It is a continuation of his work to promote the deception. He based his comment on the idea that we know the cause of climate change because of the work of the Intergovernmental Panel on Climate Change (IPCC). They only looked at human causes, and it is impossible to determine those if you don’t know and understand natural climate change and its causes. If we did know and understand, then forecasts would always be correct. If we do know and understand, then Santer and all the other researchers and millions of dollars are no longer necessary.

So why does Santer make such a claim? For the same reason they took every action in the AGW deception: to promote a stampede created by the urgency to adopt the political agenda. It is classic “the sky is falling” alarmism. Santer’s work follows on the recent ‘emergency’ report of the IPCC presented at COP 24 in Poland that we have 12 years left.

One of the earliest examples of this production of inaccurate science to amplify urgency was about the residency time of CO2 in the atmosphere. In response to the claims for urgent action of the IPCC, several researchers pointed out that the levels and increase in levels were insufficient to warrant urgent action. In other words, don’t rush to judgement. The IPCC response was to claim that even if production stopped the problem would persist for decades because of CO2’s 100-year residency time. A graph produced by Lawrence Solomon appeared showing that the actual time was 4 to 6 years (Figure 1).

Figure 1

This pattern of underscoring urgency permeates the entire history of the AGW deception.

Sir Walter Scott said, “What a tangled web we weave when first we practice to deceive.” Another great author expanded on that idea but from a different perspective. Mark Twain said, “If you tell the truth you don’t have to remember.” In a strange way, they contradict or at least explain how the deception spread, persisted, and achieved its damaging goal. The web becomes so tangled and the connection between tangles so complicated that people never see what is happening. This is particularly true if the deception is about an arcane topic unfamiliar to a majority of the people.

All these observations apply to the biggest deception in history, the claim that human production of CO2 is causing global warming. The objective is unknown to most people even today, and that is a measure of the success. The real objective was to prove overpopulation combined with industrial development was exhausting resources at an unsustainable rate. As Maurice Strong explained, the problem for the planet is the industrialized nations, and isn’t it our responsibility to get rid of them? The hypothesis this generated was that CO2, the byproduct of burning fossil fuel, was causing global warming and destroying the Earth. They had to protect the charge against CO2 at all cost, and that is where the tangled web begins.

At the start, the IPCC and agencies supporting them had control over the two important variables, the temperature and the CO2. Phil Jones expressed the degree of control over temperature in response to Warwick Hughes’ request for which stations he used and how they were adjusted in his graph. He received the following reply on 21 February 2005.

“We have 25 or so years invested in the work. Why should I make the data available to you, when your aim is to try and find something wrong with it.”

Control over the global temperature data continued until the first satellite data appeared in 1978. Despite the limitations, it provided more complete coverage; the claim is 97 to 98%. This compares with the approximately 15% coverage of the surface data.

Regardless of the coverage, the surface data had to approximate the satellite data as Figure 2 shows.

Figure 2

This only prevented changing the most recent 41 years of the record, but it didn’t prevent altering the historical record. Dr. Marohasy’s article is just one more illustration of the pattern. Tony Heller produced the most complete analysis of the adjustments made. Those making the changes claim, as they have done again in Marohasy’s challenge, that they are necessary to correct for instrument errors, site and situation changes such as for an Urban Heat Island Effect (UHIE). The problem is that the changes are always in one direction, namely, lowering the historic levels. This alters the gradient of the temperature change by increasing the amount and rate of warming. One of the first examples of such adjustments occurred with the Auckland, New Zealand record (Figure 3). Notice the overlap in the most recent decades.

Figure 3

The IPCC took control of the CO2 record from the start, and it continues. They use the Mauna Loa record and data from other sites using similar instruments and techniques as the basis for their claims. Charles Keeling, one of the earliest proponents of AGW, was recognized and hired by Roger Revelle at the Scripps Institute. Yes, that is the same Revelle Al Gore glorifies in his movie An Inconvenient Truth. Keeling established a CO2 monitoring station that is the standard for the IPCC. The problem is Mauna Loa is an oceanic crust volcano; that is, the lava is less viscous and more gaseous than that of continental crust volcanoes like Mt Etna. A documentary titled Future Frontiers: Mission Galapagos reminded me of studies done at Mt Etna years ago that showed high levels of CO2 emerging from the ground for hundreds of kilometers around the crater. The documentary is the usual “people are destroying the planet” sensationalist BBC rubbish. However, at one point they dive in the waters around the tip of a massive volcanic island and are amazed to see CO2 visibly bubbling up all across the ocean floor.

Charles Keeling patented his instruments and techniques. His son Ralph continues the work at the Scripps Institute and is a member of the IPCC. His most recent appearance in the media involved an alarmist paper with a major error – an overestimate of 60%. Figure 4 shows him with the master of PR for the IPCC narrative, Naomi Oreskes.

Figure 4

Figure 5 shows the current Mauna Loa plot of CO2 levels. It shows a steady increase from 1958 with the supposed seasonal variation.

Figure 5

This increase is steady over 41 years, which is remarkable when you look at the longer record. For example, the Antarctic ice core record (Figure 6) shows remarkable variability.

Figure 6

The ice core record is made up of data from bubbles that take a minimum of 70 years to be enclosed. Then a 70-year smoothing average is applied. The combination removes most of the variability, and that eliminates any chance of understanding and predetermines the outcome.
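The effect of a long smoothing window on variability can be sketched numerically. The following is only an illustration under stated assumptions: the annual series is synthetic (a hypothetical 290 ppm baseline with random scatter, not ice core data), and the function names are invented for this example. It shows how a 70-year running mean shrinks the spread of a noisy series.

```python
import random
import statistics


def moving_average(values, window):
    """Return the running mean of `values` over `window` consecutive points."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    running = sum(values[:window])
    out = [running / window]
    for i in range(window, len(values)):
        # Slide the window forward by one point.
        running += values[i] - values[i - window]
        out.append(running / window)
    return out


def smoothing_demo(seed=0, years=2000, window=70):
    """Compare the spread of a synthetic annual series before and after smoothing."""
    rng = random.Random(seed)
    # Hypothetical data: 290 ppm baseline plus random year-to-year scatter.
    raw = [290.0 + rng.gauss(0.0, 10.0) for _ in range(years)]
    smooth = moving_average(raw, window)
    return statistics.pstdev(raw), statistics.pstdev(smooth)


if __name__ == "__main__":
    raw_sd, smooth_sd = smoothing_demo()
    print(f"spread before smoothing: {raw_sd:.2f} ppm")
    print(f"spread after 70-year mean: {smooth_sd:.2f} ppm")
```

The sketch says nothing about what the real record contains; it only shows that whatever short-term variation existed would be largely invisible after this kind of averaging.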

Figure 7 shows the degree of smoothing. It represents a comparison of 2000 years of CO2 measures using two different measuring techniques. You can see the difference in variability but also in total atmospheric levels of approximately 260 ppm to 320 ppm.

Figure 7

However, we also have a more recent record that shows similar differences in variation and totals (Figure 8). It allows you to see the IPCC smoothed the record to control the CO2 record. The dotted line shows the Antarctic ice core record and how Mauna Loa was created to continue the smooth but inaccurate record. Zbigniew Jaworowski, an atmospheric chemist and ice core specialist, explained what was wrong with CO2 measures from ice cores. He set it all out in an article titled, “CO2: The Greatest Scientific Scandal of Our Time.” Of course, they attacked him, yet the UN thought enough of his qualifications and abilities to appoint him head of the Chernobyl nuclear reactor disaster investigation.

Superimposed is the graph of over 90,000 actual atmospheric measures of CO2 that began in 1812. Publication of the level of oxygen in the atmosphere triggered collection of the CO2 data. Science wanted to identify the percentage of all the gases in the atmosphere. They were not interested in global warming or any other function of those gases – they just wanted to obtain accurate data, something the IPCC never did.

Figure 8

People knew about these records decades ago. The record was introduced into the scientific community by railway engineer Guy Callendar in coordination with familiar names, as Ernst-Georg Beck noted:

“Modern greenhouse hypothesis is based on the work of G.S. Callendar and C.D. Keeling, following S. Arrhenius, as latterly popularized by the IPCC.”

Callendar deliberately selected a unique set of the data to claim the average level was 270 ppm and changed the slope of the curve from an increase to a decrease (Figure 9). Jaworowski circled the data Callendar selected, but I added the trend lines for all the data (red) and Callendar’s selection (blue).

Figure 9

Tom Wigley, Director of the Climatic Research Unit (CRU) and one of the fathers of AGW, introduced the record to the climate community in a 1983 Climatic Change article titled, “The Pre-Industrial Carbon Dioxide Level.” He also claimed the record showed a pre-industrial CO2 level of 270 ppm. Look at the data!

The IPCC and its proponents established through cherry-picking and manipulation the pre-industrial CO2 level. They continue control of the atmospheric level through control of the Mauna Loa record, and they control the data on annual human production. Here is their explanation.

The IPCC has set up the Task Force on Inventories (TFI) to run the National Greenhouse Gas Inventory Programme (NGGIP) to produce this methodological advice. Parties to the UNFCCC have agreed to use the IPCC Guidelines in reporting to the convention.

How does the IPCC produce its inventory Guidelines? Utilising IPCC procedures, nominated experts from around the world draft the reports that are then extensively reviewed twice before approval by the IPCC. This process ensures that the widest possible range of views are incorporated into the documents.

In other words, they make the final decision about which data they would use for their reports and as input to their computer models.

This all worked for a long time. However, as with all deceptions, even the most tangled web unravels. They continue to increase the atmospheric level of CO2 and then confirm it to the world by controlling the Mauna Loa annual level. However, they lost control of the recent temperature record with the advent of satellite data. They couldn’t lower the CO2 data because it would expose their entire scam, so they are on a treadmill of perpetuating whatever is left of their deception and manipulation. All that was left included artificial lowering of the historical record, changing the name from global warming to climate change, and producing increasingly threatening narratives like the 12 years left and Santer’s certainty of doom.

NOTE: In my opinion, I do not give the work of Ernst-Georg Beck in Figure 8 any credence for accuracy, because the chemical procedure is prone to error and the locations of the data measurements (mostly in cities at ground level) have highly variable CO2 levels. Note how highly variable the data is. – Anthony

The tangled web that is getting complicated to perpetuate is explaining away the cooling we are experiencing as a result of ‘climate change’ due to warming. A TV meteorologist explained it simply on the weather the other night: because the temperature gradient between the poles and equator is being reduced by major warming at the poles, the jet stream is becoming weaker and dipping further south, allowing more of this polar vortex cold air to sink south, which is why February was just so cold.

Except I thought the TV meteorologist had just said the poles were that much warmer now, which is what was causing the jet stream to dip south. So what is all that cold air up north doing there ready to sink south if they just said the poles were warming? They can’t even keep their story straight. It would seem obvious to me that a new cooling cycle is starting for perhaps 30-35 years, like the 30-35 year warming cycle we have had since the early 1980s.

Perhaps warming is from minus 50C to minus 45C. Still cold.

That is exactly it. The warming on this planet has occurred at the poles, in their respective winters. It is easily explained by the SSTs and atmospheric moisture in the regions normally much drier during winters. When the SSTs drop, the high latitude enthalpy will also. The strike against CO2 causing sea ice loss is that the polar summer temperatures have been completely normal, if not a bit below.

I think the problem of measuring the effect of CO2 in the atmosphere is that theory is based on dry air mathematics and gives little or no credence to the effects of water vapor, the chief constituent of the “greenhouse effect”. If CO2 condensed into liquid, froze, and evaporated again at temperatures experienced on this rock it might just have a real grip on climate, just like water in all its terrestrial apparitions. But, given the trace amounts present it would never compete with the copious water present in the average of the global atmosphere.

Yes, the variability at ground level is the reality eliminated by the Mauna Loa measures. Thank you for proving my point.

I challenge anyone to go and look at the remarkable precision in data and analysis by Beck.

Here are my comments direct to you about Beck’s work.

I spoke with his colleagues and warned him about the attacks. Nobody was more meticulous in his analysis of the data and the results. Certainly better than the people who attacked him, like all the CO2 proponents including Wigley and those at the IPCC.

Here is what I wrote in my obituary about him

“I was saddened to hear that Ernst Georg Beck died after a battle with cancer. I was flattered when he asked me to review one of his early papers on the historic pattern of atmospheric CO2 and its relationship to global warming. I was struck by the precision, detail and perceptiveness of his work and urged its publication. I also warned him about the personal attacks and unscientific challenges he could expect. On 6 November 2009 he wrote to me, “In Germany the situation is comparable to the times of medieval inquisition.” Fortunately, he was not deterred. His friend Edgar Gartner explained Ernst’s contribution in his obituary. “Due to his immense specialized knowledge and his methodical severity Ernst very promptly noticed numerous inconsistencies in the statements of the Intergovernmental Panel on Climate Change (IPCC). He considered the warming of the earth’s atmosphere as a result of a rise of the carbon dioxide content of the air of approximately 0.03 to 0.04 percent as impossible. And he doubted that the curve of the CO2 increase noted on the Hawaii volcano Mauna Loa since 1957/58 could be extrapolated linearly back to the 19th century.” (This is a translation from the German)”

http://ef-magazin.de/2010/09/22/2559-climategate-ernst-georg-beck-ist-nicht-mehr

I completely disagree with your assessment of Beck and his work. I also remind you that Jaworowski vetted his work and he knew better than anyone about CO2 and atmospheric gases.

Hello Tim,

I know he was a friend and a colleague, but just look at the data, the variability of it exceeds the note you have of 1 to 3% accuracy! It doesn’t matter how careful you are, when the data swings with such extremes, it doesn’t represent the atmospheric average well. It’s very noisy. You can be the most careful scientist in the world and still end up with a noisy data set.

Trying to compare Beck’s noisy data to modern data only invites criticism. I have to get out in front of it.

I agree rather more with Tim. I consider the individual analysis results to be very good. The individual results record what the CO2 was at that place, at that time. Nothing more and nothing less. Low level CO2 concentrations are far more variable than we are led to believe for a “well mixed gas”.

For example, Harvard University has a monitoring station in a nearby pine forest. The station is at 10 meters height. Concentrations vary by time of day, seasonally, and annually. Concentration even varies according to the weather. (Think about it, of course it will.)

None of this means that the Harvard data is bad, or even noisy. It just means they are looking at a highly variable system.

Anthony:

I have some comments stuck in moderation. (again)

Locally, CO2 can vary by about 60 percent over the course of a few days.

It is anything but a well mixed gas at low altitude.

How does the variability in the first few hundred metres impact upon the theory.

After all the surface temperatures are taken at about 2 metres and this is where CO2 is not well mixed.

What does OCO-2 data say about this?

Measurements of atmospheric CO2 via gas chromatography can be accurate; but GC is only about as old as the Hawaii CO2 data. Earlier data were by chemical analysis, which required gas collection, possible storage, and chemical processing. These present opportunities for CO2 loss or gain by contamination. This in addition to the wide variability of atmospheric CO2 over location and time.

I agree with Anthony.

There is nothing wrong with the Beck reanalysis. It is just that any local sample is highly variable because CO2 is not a well mixed gas at low altitude.

An October 1955 paper by Giles Slocum criticizes the arguments made by Callendar.

http://www.pensee-unique.fr/001_mwr-083-10-0225.pdf

“which required gas collection, possible storage, ”

This was done for Antarctic measurements of CO2 levels and after Keeling’s revision and calibration, the underlying trend was very close to that of ML.

I’m convinced that the general trend is given by d[CO2]/dt = c T with actual measurement providing only a little noise (T being SST SH, maybe north, and the old RSS after 1990 or maybe the satellite data used to calibrate SST).

Anthony,

I also agree with Tim. I think your criticism of the Beck data is far too harsh.

I believe the Beck measurements were taken at different times and locations; of course there will be a large variation. Quite likely if modern measurements were taken at random times and many locations they would look just as noisy. The measurements we use today (Mauna Loa) are probably highly smoothed to take out random variations. If the Beck measurements were similarly smoothed they would look very different and probably similar to modern measurements, apart from the actual values and trends.

Although noisy, the Beck values show a clear falling trend. Are you saying this was random? As there are many measurements I would have thought that would be unlikely. This falling trend actually does make it roughly consistent with modern values. If there were no falling trend, then that would be a problem, because it would not be consistent with modern values.

If you think the Beck values can be ignored because they are wrong then, please, provide proof. Simply pointing out that the data is noisy is no proof at all.

Best regards,

Chris

The same misguided rationale could be applied to the temperature, which varies enormously from region to region and from year to year. Averaging, spatial or temporal, always reduces variability and that reduction has no bearing upon the accuracy of measurements.

What you quoted, 1sky1, does not say anything about the accuracy of the individual measurements. It says that extreme data swings do not lend themselves to representing averages well. The key word is “averages.”

The same can be said about temperature. No matter how accurate individual temperature readings are, there are just too few of them and too much variation over both time and distance to produce a worldwide temperature average with any real degree of accuracy. Saying that the average temperature of the Earth has yet to be reliably determined is not the same as saying that individual temperature measurements are not accurate.

By the same token, a few measurements of CO2, even if those measurements are extremely accurate, do not give you sufficient data to produce an accurate atmospheric average of CO2 across the planet. It doesn’t matter how accurate individual measurements are if there are too few of them and the data show extreme swings.

You miss the point. What I quoted was clearly preceded by a statement erroneously linking variability with accuracy, namely:

In fact, accuracy and data variability are intrinsically separate concepts, no matter whether individual measurements or averages, spatial or temporal, are involved. “Extreme data swings” per se don’t inhibit accurate determination of averages. The notion that such swings, ipso facto, make the data averages “very noisy” betrays an amateurish conflation of noise with high-frequency signal variations. And the error due spatial undersampling, which you stress, is a different matter altogether.
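The distinction drawn above between variability and accuracy can be illustrated with a small simulation. This is a sketch under stated assumptions: both data sets are synthetic, the "true" value of 300 ppm and the bias and scatter figures are invented, and it deliberately ignores the separate problem of spatial undersampling.

```python
import math
import random
import statistics


def simulate(true_value, bias, scatter, n, seed):
    """Generate n synthetic readings with a fixed bias and random scatter."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, scatter) for _ in range(n)]


def summarize(samples):
    """Return (mean, sample standard deviation, standard error of the mean)."""
    mean = statistics.fmean(samples)
    sd = statistics.stdev(samples)
    return mean, sd, sd / math.sqrt(len(samples))


if __name__ == "__main__":
    # Widely swinging but unbiased readings (hypothetical numbers).
    swinging = simulate(300.0, bias=0.0, scatter=50.0, n=10000, seed=1)
    # Tightly clustered readings with a systematic offset (hypothetical numbers).
    offset = simulate(300.0, bias=20.0, scatter=1.0, n=10000, seed=2)
    for name, data in (("swinging", swinging), ("offset", offset)):
        mean, sd, sem = summarize(data)
        print(f"{name}: mean={mean:.1f} sd={sd:.1f} sem={sem:.2f}")
```

In this toy case the widely swinging series still averages close to the true value, while the quiet series is consistently wrong, which is the sense in which the two concepts are separate.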

Mauna Loa was created to continue the smooth but inaccurate record.

They’re all in on it.

http://3.bp.blogspot.com/-GRv_gtjeb6Y/T5Oso3blh4I/AAAAAAAAAh4/YXtvephg7zU/s1600/Global+CO2+new+2.jpg

That’s not at issue in this discussion.

I thought you were being sarcastic, insinuating there was a conspiracy because there is agreement among measurements at different sites. You weren’t being sarcastic?

I thought the CO2 levels were increasing because temperatures were going up … that’s the correlation I’m reading.

Rising ocean temperatures outgassing CO2 are probably the main culprit.

I somewhat agree with Anthony’s comment. The early CO2 data is noisy and certainly reflects poor site selection or just the difficulty of making measurements in pristine locations. The scientists making the measurements at this time were, like Dr. Ball said, trying to measure atmospheric composition. They likely did not concern themselves too much with CO2, because it was and still is a trace gas.

However, their chemical measurement techniques were excellent and amazingly accurate and precise given the state of technology. If they had known about the future climate debate, they would have done a better job of sampling to make more “global” representative measurements.

There really is no way to reconstruct something more useful from that old data. And Beck’s data selection is highly arbitrary.

As is Callendar’s.

No, Callendar’s selection is very much “selective”

Not arbitrary in any way.

Yes, well, it’s noisy and I guess arbitrary doesn’t have the right connotation. I think you are probably right on further thought.

Similarly, CO2 measured within ice cores is selectively dependent on the measurement techniques used. It hasn’t really been subjected to proper analytical validation. A handful of labs basically adjusted and readjusted their measurement methods until they obtained the results they thought were correct.

It is not surprising that the Beck data is variable: it is not from a consistent location and conditions. Comparison to a fixed, purpose-built recording station with the expectation that both should show the same thing is not credible. It is odd that Tim Ball knew all this yet chose not to give one peep about it in the above article.

So much for the ‘well-mixed’ meme.

Actually, the OCO2 satellite does seem to show a well-mixed spread of CO2 over the globe. ±3.75% or so. [OCO3 will be launched in April, BTW]

https://wattsupwiththat.com/2015/10/04/finally-visualized-oco2-satellite-data-showing-global-carbon-dioxide-concentrations/#comment-1617531

(Don’t judge the concentrations by the map’s color coding; it was rendered to look alarming. Look at the legend below the maps and do your own math to compute the mixing.)
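The "do your own math" step can be written out as follows. This is a sketch of one plausible way to do it, taking the spread as half the legend range divided by the midpoint. The legend values used in the example are hypothetical placeholders chosen to give a figure of that size; they are not read off the linked map.

```python
def relative_spread_percent(legend_min_ppm, legend_max_ppm):
    """Express a legend range as plus/minus a percentage of its midpoint."""
    if legend_max_ppm < legend_min_ppm:
        raise ValueError("legend_max_ppm must not be below legend_min_ppm")
    midpoint = (legend_min_ppm + legend_max_ppm) / 2.0
    half_range = (legend_max_ppm - legend_min_ppm) / 2.0
    return 100.0 * half_range / midpoint


if __name__ == "__main__":
    # Hypothetical legend limits, for illustration only.
    print(relative_spread_percent(385.0, 415.0))
```

Substituting the actual minimum and maximum from the legend gives the spread for the real map.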

Yes, CO2 is “well mixed”. But if you do a reading in your living room or in a barn full of cows you will find a huge difference from “well mixed” values on the scale seen from a satellite.

This is just the problem with many of Beck’s measurements. There are issues with the volcanic nature of MLO but it is high, in the middle of the ocean and swept by strong winds with no influence of other human activity.

QA measures are taken to eliminate readings on days when the wind is not coming from the ocean. I think both Ralph and Charles Keeling are doing a thorough job of getting the best data available. I don’t believe they have their thumb on the scales.

Is Mauna Loa considered a representative atmospheric sampling location w/o significant influence from Asia CO2 producers which may be up-wind?

Also, with the aerodynamics of air-flow across a flat surface (ocean) and over a volcanic peak, are they actually sampling air at altitude or is the air a mixture from lower altitudes being forced over the peak. The venturi effect of Mt. Washington comes to mind. Having piloted a small plane across the mountains of MT, it’s pretty obvious what the wind does when you try to maintain altitude.

China is far enough “up wind” for it to be mixed. Also, I think if you look at the OCO2 animations, the weather patterns do not blow Chinese CO2 in the direction of MLO.

CO2 is well mixed only at high altitude.

At low altitude, say below 1000 metres it is anything but well mixed as shown by the Beck reanalysis data.

It is not a magic gas. Of course there will be significant local variations if you are near a local source. If it is well mixed above 1km that is enough to treat it as well mixed for IR forcing calculations.

It is now too late to remeasure those locations.

However, can it be demonstrated that near industrial sources CO2 can vary considerably from locations distant from sources??

I would really like to know if the Mauna Loa measurements are correct???? Why are they controlled by certain people??

@Greg

That depends where the dominant radiative GHE is.

If, say, below 200 m CO2 is often at 600 ppm, we are already seeing more than one doubling over pre-industrial levels.
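The doubling arithmetic in that comment can be checked directly. This is a minimal sketch, assuming the 270 ppm pre-industrial figure quoted in the article as the baseline; the function name is invented for the example.

```python
import math


def doublings(current_ppm, baseline_ppm):
    """Number of doublings of concentration relative to a baseline."""
    if current_ppm <= 0 or baseline_ppm <= 0:
        raise ValueError("concentrations must be positive")
    return math.log2(current_ppm / baseline_ppm)


if __name__ == "__main__":
    # 600 ppm near the surface versus the 270 ppm figure quoted in the article.
    print(round(doublings(600.0, 270.0), 2))
```

With those inputs the result is a little over one doubling, which matches the comment; a different baseline would shift the number slightly.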

Richard. Humans only occupy about 2% of the surface. I don’t think local variations near the surface make any significant change to the calculated radiative “forcing”.

Greg,

What is odd, is that the variability in the Beck figures should also be recognised in the ice core samples. That is the main example here of selective use of data with large sampling errors. You are pitting skilled analytical chemists analysing local air samples in the Beck set, with others doing ice core work where the samples have gone through several severe additional ice processes, well documented. If you accept that ice core results are nearly as precise as Mauna Loa, then you have to place the Beck results in between. Geoff

The ice core analyses are probably more accurate than even a skilled chemist in 1900. What they represent is another question.

There is clearly a heavy averaging process in ice bubbles which take decades to close off from the exterior and the location ensures well mixed samples. Keeling started the whole project to create a long term, consistent CO2 record. I guess until someone thinks they are doing fraudulent data manipulation, they get to keep the gig.

Geoff,

Good question! Although several commenters have mentioned the variability of ‘local’ CO2 measurements, I did not notice any specific reference to the daily (diel) cycle of atmospheric CO2 levels below the atmospheric boundary layer (i.e. generally below about 1000m, but quite variable). Every single day, the local CO2 level may change by 100 ppm or more, which could explain, at least in part, the variations recorded by Beck. So, his measurements may be precise while being unrepresentative. A good example of typical daily variations can be seen here:

https://meteo.lcd.lu/papers/co2_patterns/co2_patterns.html

Referring to Figure 12, see how the CO2 level is lowest during the daytime (photosynthesis in action) and then it increases every night due to respiration. A key observation is that the minimum value is (a) about the same level each day and (b) is comparable to the level of CO2 in the ‘free atmosphere’, as measured at Mauna Loa, for example. It appears, therefore, that photosynthesis is able to remove essentially all the locally released CO2 (of whatever source) each day. (Of course, annual CO2 growth of about 2 ppm globally will not be evident at a daily scale.) Supporting evidence for this link between local daily minima and global levels can be found in this PhD thesis:

https://ueaeprints.uea.ac.uk/42961/

Figure 3.3 (page 79) shows very well that the variation in the daily minima reflects the annual/seasonal variation as seen at Mauna Loa. The annual growth in atmospheric CO2 is also evident on this plot.
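The daily-minimum idea described above can be sketched as a small data-processing step. This is only an illustration: the readings in the example are made-up values, not taken from either linked source, and the function name is invented here.

```python
from datetime import datetime


def daily_minima(readings):
    """Return {date: lowest ppm that day} from (datetime, ppm) pairs."""
    minima = {}
    for timestamp, ppm in readings:
        day = timestamp.date()
        if day not in minima or ppm < minima[day]:
            minima[day] = ppm
    return minima


if __name__ == "__main__":
    # Hypothetical near-surface readings: high at night, low in the afternoon.
    sample = [
        (datetime(2019, 6, 1, 3), 480.0),
        (datetime(2019, 6, 1, 15), 405.0),
        (datetime(2019, 6, 2, 3), 510.0),
        (datetime(2019, 6, 2, 15), 407.0),
    ]
    for day, ppm in sorted(daily_minima(sample).items()):
        print(day, ppm)
```

Comparing a series of such minima with a background record is the kind of check the thesis figure is described as making.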

So, to get back to your question. The dominant cause of the daily variation is the photosynthesis/respiration cycle of local vegetation. This is strongly supported by reference to the 13C/12C changes. As noted above, this may be at least part of the reason for the variability in Beck’s data. I have not seen any daily atmospheric CO2 data for the Antarctic, but we know from the monthly South Pole data that the seasonal variation is very small indeed so it would appear that the impact of vegetation in the Antarctic is insignificant (not too surprising!) and hence no such variations are seen in the ice core data.

I took a look into the historical data one time. I was amazed at the accuracy and precision they were able to achieve. The standard methodology was to use “lime water”, a saturated solution of calcium hydroxide. Air is bubbled through the solution and the CO2 is quantitatively precipitated out as CaCO3. The precipitate is collected, washed, dried, and weighed.

As a follow-on, Ca(OH)2 was replaced by Ba(OH)2, which gives even better results.

This analytical technique is known as gravimetric analysis, which even today is the gold standard for accuracy and precision.
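The arithmetic behind the lime-water method can be sketched as follows. This is an illustration under stated assumptions, not a reconstruction of any historical procedure: it assumes complete 1:1 conversion of CO2 to CaCO3 (Ca(OH)2 + CO2 -> CaCO3 + H2O), treats the air as an ideal gas, and uses invented sample figures.

```python
M_CACO3 = 100.09      # molar mass of CaCO3, g/mol
GAS_CONSTANT = 0.082057  # L*atm/(mol*K)


def co2_ppm_from_precipitate(caco3_mass_g, air_volume_l,
                             temp_k=273.15, pressure_atm=1.0):
    """Estimate CO2 (ppm by volume) from the weighed CaCO3 precipitate.

    Assumes every mole of CO2 in the sampled air ends up as one mole of CaCO3.
    """
    if caco3_mass_g < 0 or air_volume_l <= 0:
        raise ValueError("mass must be non-negative and volume positive")
    mol_co2 = caco3_mass_g / M_CACO3
    # Ideal gas law gives the moles of air that were bubbled through.
    mol_air = pressure_atm * air_volume_l / (GAS_CONSTANT * temp_k)
    return 1e6 * mol_co2 / mol_air


if __name__ == "__main__":
    # Hypothetical sample: 0.134 g of precipitate from 100 L of air at 0 C, 1 atm.
    print(round(co2_ppm_from_precipitate(0.134, 100.0)))
```

The small mass involved (roughly a tenth of a gram per 100 L of air in this example) gives a sense of how careful the weighing had to be.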

It is very much worth noting that all kinds of specialized glassware and other apparatus were developed to aid in the accuracy of the measurements. The solutions were often ingenious. Often, it was desired to flush out the equipment with CO2-free air to eliminate contamination. How to do that? Simple: bubble air through a solution of NaOH, the CO2 is absorbed as Na2CO3, and you have contaminant-free air. True, the chemists of the day did not have the technology we have today. Therefore, they had to think, a skill which is in notably short supply today.

Another thing that became apparent was the effort they would put forth to get good data. Many sites were established all over the Alps to get pristine data uncontaminated by urban areas. One researcher set up a station on the west coast of Ireland to get air fresh off a 2000-mile run across the Atlantic Ocean. Clearly, with all this effort to get a good sample, they were not about to get sloppy with the lab work.

The chemists of the day most certainly knew what they were doing, and I consider their results to be an outstanding part of the historical record, not to be treated lightly.

Yes, too bad they didn’t go to the top of Mauna Loa or any number of other sites.

I agree with your assessment of the quality of the scientists and their results. But an accurate and precise measurement of a bad sample produces a bad result. Had they been addressing the question we are today, they could do as well as the expensive modern equipment that we have now, though not as quickly or frequently.

“too bad they didn’t go to the top of Mauna Loa”

Numerous researchers went way up in the Alps. If there is a real difference between a high altitude continental site and a high altitude oceanic site, then goodbye to “Well Mixed”.

The chemists of the day were keenly aware of sampling issues.

But this does bring up another issue. If there really is a real difference between the high Alps and Mauna Loa, then what makes Mauna Loa the gold standard and the Alps just random noise?

This becomes the old analytical question:

Are we really measuring what we think we are measuring?

It’s not the altitude, it’s getting away from combustion emission sources. The Alps should be good in most cases.

It is nothing to do with combustion sources.

The variability is due to nature particularly due to respiration of vegetation and trees and the like.

Of course, anything that consumes or emits CO2 will impact the measurement, including plants, animals, fire, geological sources, etc. and time of day also matters, yes. We put out close to a mg of CO2 in each breath, for example. Our metabolism is higher than that of plants, but if we’re in the midst of big trees in the middle of a forest then we don’t impact that level at all. If that forest is on fire, then CO2 will approach very high levels indeed.

I’ll just answer with the abstract:

More than 90,000 accurate chemical analyses of CO2 in air since 1812 are summarised. The historic chemical data reveal that changes in CO2 track changes in temperature, and therefore climate in contrast to the simple, monotonically increasing CO2 trend depicted in the post-1990 literature on climate-change. Since 1812, the CO2 concentration in northern hemispheric air has fluctuated exhibiting three high level maxima around 1825, 1857 and 1942 the latter showing more than 400 ppm. Between 1857 and 1958, the Pettenkofer process was the standard analytical method for determining atmospheric carbon dioxide levels, and usually achieved an accuracy better than 3%. These determinations were made by several scientists of Nobel Prize level distinction.

Source

Do you know about the follow-up by Francis Massen?

Accurate Estimation of CO(2) Background Level from Near Ground Measurements at Non-Mixed Environments

Did you get this reversed?

If we stop all human CO2 production globally there would be a 200ppm drop by this time next year.

Yes, I said 200ppm. See, there’s no “latency” or “half life” to CO2, it is heavy and drops to the surface where it is immediately consumed. It is snagged by raindrops, dew, ground moisture, puddles, rivers, lakes and oceans.

The yearly 2ppm increase is laughable (as is the methodology of having values of “407.96ppm”… is the equipment incapable of accurately counting molecules to begin with?)

CO2 concentrations in atmosphere are controlled far more by plate tectonics than by human production and the natural environmental decay is a greater source still.

Why did C14 produced from nuclear bomb testing decay so slowly then?

Because plants prefer C12.

https://www.google.com/search?client=ms-android-samsung&hl=de-AT&authuser=0&biw=360&bih=288&ei=g1N8XL7_FbSWjgblmq-QDg&q=plants+prefer+c12+over+c14&oq=plants+prefer+c12+over+c14&gs_l=mobile-gws-wiz-serp.

As you should already know, R Shearer.

Regards –

No, they don’t prefer it by that much. He said there is “no latency” or “half life,” as in NONE.

Even the ugliest at the bar get taken home sometimes, otherwise there wouldn’t be so many ugly people (insert the name of your least favorite climate activist here – possibly one in a picture in this posting).

Point being, that carbon dating wouldn’t work if C14 weren’t taken up by plants.

Hmmm a new meaning of carbon dating, eh?

Pick up a gal with a carbon fetish at the local dive bar.

Yes, some don’t mind the gals with an extra large nucleus.

“it is heavy and drops to the surface”

Oh no, not again. Try to get informed about gas diffusion. Even in still air gases mix by diffusion; they do not “sink” into layers according to “heaviness”.

Yes, I was going to mention that too.

Once mixed there is too much entropy to overcome for “heavy” gases to separate on their own. I think people get confused because of instances where CO2 might be released from a volcano into a lake and pools at the surface and only very slowly dissipates. Or is created in a sewer or well by bacterial action and seemingly stays there forever. Such mixing is favored by entropy driven enthalpy.

The error is also often repeated about Freons.

With freons there is a difference.

If the fridge is leaking gas, it leaks into a room. It is therefore very much more difficult for freons to become well mixed and gain altitude.

If the concentration of CO2 in the atmosphere is solely due to diffusion and the surface concentration of CO2 has gone up, then you would expect some of that increased concentration to diffuse upwards in the atmosphere. This *should* have seen the tree line on mountain ranges moving up in altitude and tree growth on the mountainsides increasing.

Yet I have not seen any study indicating this is the case.

I always thought that the rate of diffusion was not just based on concentrations but also on the weight of the molecule involved. Heavier molecules don’t move as fast and therefore cover less distance over time. As CO2 is added to the surface concentration from the oceans, volcanoes, and whatever, it may not “sink” but it doesn’t rise up (i.e. diffuse) nearly as fast as other things in the atmosphere.

In addition, force = mass x acceleration. The force working on CO2 because of gravity is much higher because of its molecular weight. How does this play out in keeping CO2 from diffusing up into the atmosphere?

You are right. Hydrogen, for example, diffuses much more rapidly than carbon dioxide, as its molecules move about 4.7 times faster.

Wind is the major cause of mixing in the atmosphere. I mentioned diffusion because even in a closed box gases will mix in about 24h. CO2 will only sink to the bottom initially.
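The 4.7 figure is what kinetic theory gives for the ratio of mean molecular speeds at equal temperature, which scales as the square root of the inverse molar-mass ratio (Graham's law). A quick check, assuming rounded molar masses of 2.016 g/mol for H2 and 44.01 g/mol for CO2 (these values and the function name are illustrative, not from the comment):

```python
import math

# Molar masses in g/mol (rounded standard values, assumed for illustration)
M_H2 = 2.016
M_CO2 = 44.01

def speed_ratio(m_light: float, m_heavy: float) -> float:
    """Ratio of mean molecular speeds at equal temperature: sqrt(m_heavy / m_light)."""
    return math.sqrt(m_heavy / m_light)

ratio = speed_ratio(M_H2, M_CO2)
print(f"H2 molecules move about {ratio:.1f} times faster than CO2 molecules")
```

This only compares molecular speeds; as noted in the thread, bulk mixing in the real atmosphere is dominated by wind and turbulence rather than molecular diffusion.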

No, the natural experiment invalidates that first sentence.

In 1929-1931, global human CO2 production declined rapidly by 30%, CO2 increase continued at the unchanged indolent rate, and temperature kept rising through 1941. During WWII and the post war reconstruction, CO2 stabilized and temperature declined enough to raise alarms about the Coming Ice Age (Time, Newsweek, and Science News).

Luckily, it doesn’t make any difference since CO2 is not in control of climate, and we are not in control of CO2.

This is an awesome bit of data.

Here is yet another problem with trying to figure out what correlates with global temp across time.

Long story short: Mann et al used principal component analysis to figure out proxies that gave a temp record across time rising and falling. This was then correlated with CO2. So, he supposedly was able to isolate the degree that the change – specifically, increase – in CO2 was associated with change – again, an increase – in global temps.

A problem is that the CO2 measure is invariant. It rises monotonically. This defies a very under-recognized aspect of any covariance (with a small C) analysis: you need to have rises and falls in both of the measures you are attempting to correlate. Mathematically, the regression, or correlation, will run. You have to look for this by eye, not by regression diagnostics. It is an assumption of the entire correlational endeavor.

In Figure 7 of MBH98 the CO2 measure they apparently used is illustrated. It does not vary: there are not both higher points and lower points across time, so it can play no role in predicting global temps.

Being able to explore any time point where CO2 went down would give the ability to see if CO2 covaries with temp: to see if rising CO2 correlates with rising temps, and vice versa.

Is there much point in sceptics banging on endlessly that “it’s not CO2”, since few, if any, of the AGW advocates listen to what the sceptics are putting forward? Even the BBC, supposedly the bastion of impartiality, has banned sceptic views in their discussions.

We sceptics think we know that CO2 is not the cause of the present or many past climate changes, or that CO2 is not driving the current global warming, if such an event is taking place, but without a credible alternative we will never get the AGWs to listen.

There are various aspects of the solar input attracting a lot of interest and attention, but a convincing case is still elusive.

Science should also be looking into other factors acting individually or in concert that might be causes but are not sufficiently researched to be fully discounted or accepted as plausible.

For some two or three years I have been drawing attention to the best correlation available; it is not necessarily the cause, but the strong association shown may be a pointer to it.

Yes, that’s true, but skeptics need not supply an alternative model if that “best available” correlation breaks down definitively over the next decade. While it would accelerate acceptance to have a predictive alternative theory, the CO2 hypothesis cannot be sustained if a natural cooling period has begun but fossil fuel use continues unabated, right?

True, correlations come and go; e.g. solar cycle length and the aa geomagnetic index have failed in the last few decades, so it should not be unexpected that the magnetic dipole correlation may also fail in the near future.

The operating principle should be “factors acting individually or in concert”, most likely in concert, i.e. if natural variability is cyclical, as it appears to be, then it could be that final output is a cross-modulation from two or more periodically variable factors.

aa geomagnetic index link is http://www.mitosyfraudes.org/images-4/SW-3.gif

IMHO, the earth’s temperature rise over the last 3 to 4 decades is natural variation due to solar cycles. Nothing more. Of course the natural rise in temperature (ie climate change) has been ‘juiced’ by cooling the past and warming the present. Confirmation bias has had a heavy hand in temperature dataset revisions, making it appear that there’s a strong correlation between CO2 and temperature. There is, but it’s much, much weaker than what is being modeled by the CAGW believers.

Good point. The hype about CO2 is actually hilarious to any competent scientist. It is also tragic.

we will never get the AGWs to listen.

Well, we are not singing the song they want to hear!

The tangled web is the myriad of incorrect assumptions, misrepresentations and hyperbolic conjecture that passes for ‘settled climate science’. Science this broken is unsustainable and destined to collapse.

“The IPCC response was to claim that even if production stopped the problem would persist for decades because of CO2’s 100-year residency time. A graph produced by Lawrence Solomon appeared showing that the actual time was 4 to 6 years (Figure 1).”

A lot of totally discredited stuff in this essay, including Beck. But this one of ‘residency time’ is an old faithful. Why in quotes? As Willis and others patiently explain, there are two different things being described. One is the residence time of individual CO2 molecules, as indicated by the persistencce of C14, for example. That is 4-6 years. But what the IPCC is talking about is the persistence of an amount of CO2 added to the atmosphere, sometimes called the e-folding time. That is just a different quantity, and it is deceptive to lump them in one bar chart.

To see the difference, note that the average residence time of molecules in our bodies is about 6 weeks. That doesn’t have anything to do with our life expectancy.

Hi Nick.

The 4-6 years is also an “e-folding time”: exponential decay. It shows a dilution of the airborne excess of C14 based CO2 into the larger terrestrial and oceanic reservoirs. The imbalance is being redressed.

I don’t see why this is different from an airborne excess of CO2 being absorbed by those same reservoirs.

This is a supposed distinction that I have never seen adequately explained. Can you elaborate?

Greg,

“Can you elaborate?”

Yes. It frequently happens that there is some mixing process which exchanges atoms or molecules, but has a built-in tendency to leave mass in place. In the body example, water comes and goes, but what goes has to be replaced. In the atmosphere mixing, there are two major exchange processes. One is photosynthesis, which takes out about 15% each year. But almost all carbon that is reduced is then oxidised within a few years, so this does not generally change total mass. The other is seasonal sea-air exchange, also over 10%/year. In winter cool water dissolves more CO2, but in the summer it warms and gives it back. But as with photosynthesis, they aren’t the same molecules. It preserves mass in the air, but mixes, say, C14 into the sea.

A simpler analogy

A tank with 10 liters of water. It has a drain with outflow rate = 10 liters/hr and a faucet with inflow rate = 9.9 liters/hr. The residence time of water in tank:

10 liters / 10 liters/hr = 1 hr

If you add 1 liter to the tank, the water level will rise to 11 liters. How long will it take to get back to 10-liter level?

Net outflow = 10 liters/hr – 9.9 liters/hr = 0.1 liter/hr

1 liter / 0.1 liter/hr = 10 hrs
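The two calculations in the tank analogy can be written out side by side. This is a minimal sketch that simply reproduces the arithmetic above, holding both flow rates constant as the analogy does; the function names are hypothetical:

```python
def residence_time(volume_l: float, outflow_l_per_hr: float) -> float:
    """Average time a parcel of water spends in the tank (volume / throughput)."""
    return volume_l / outflow_l_per_hr

def drain_time(excess_l: float, outflow_l_per_hr: float, inflow_l_per_hr: float) -> float:
    """Time to remove an added excess at a constant net outflow."""
    return excess_l / (outflow_l_per_hr - inflow_l_per_hr)

# Numbers taken from the analogy: 10 L tank, 10 L/hr out, 9.9 L/hr in, 1 L added
tank_residence = residence_time(10.0, 10.0)
tank_drain = drain_time(1.0, 10.0, 9.9)
print(f"residence time: {tank_residence:.1f} hr")
print(f"time to drain the extra liter: {tank_drain:.1f} hr")
```

The residence time depends on the gross outflow, while the time to remove an added amount depends on the net outflow, which is why the two numbers differ by a factor of ten here.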

“But what the IPCC is talking about is the persistence of an amount of CO2 added to the atmosphere, sometimes called the e-folding time. ”

The whole argument about residence time and adjustment time is fallacious. If the sources and sinks were constant or were a net positive, then the adjustment time would be infinite into the future and the CO2 levels would NEVER go back down even if we stopped all emissions. Saying that they are a constant net decrease and that they would decrease if man wasn’t involved is saying that the 400,000-year-old ice core record is false because it shows a balance more or less.

https://www.youtube.com/watch?v=rohF6K2avtY

Murray Salby has shown that the Bern model of the IPCC is nonsensical. It doesn’t follow the conservation law. There are 3 drains of CO2 from the atmosphere: the oceans, the land and vegetation. The collective removal of all the CO2 is dictated by the fastest removal drain of the atmospheric CO2 NOT the removal time of the addition of all 3 of the drains. The actual removal of CO2 from the atmosphere is a function directly of the amount of CO2 in the atmosphere which is itself a function of temperature. The adjustment time reduces to the residence time. Salby has shown that the Bern model exaggerates the amount of net CO2 in the air by 5x by 2100. If the adjustment time was longer than the residence time, then if residence time was reduced to zero by some infinitely fast sink, then the adjustment time would still be a net positive time period. You can’t have a real positive adjustment time of something that doesn’t even accumulate in the first place.

It is chemistry.

Analytics.

CO2 + cold + water =HCO3

HCO3 + heat = water + CO2

So

There is always correlation between ambient T and CO2 concentration

The natural carbon cycle is about 10% each year with the land-based biosphere. So your input is about the same as the output. Now you dump some fossil carbon in and then stop. Your calculation gives infinite time to recover.

false analogy Dr Strangelove.

When you increase atmospheric CO2, ocean uptake increases. It’s not infinite time to recover. Read Henry’s law

Uptake of CO2 by the oceans is a function of temperature and pH of the water,

i.e. the colder the water the higher the uptake.

Assuming the pH stays constant over a long time, we have,

simplified:

CO2 (g) + 2H2O + cold = > HCO3- + H3O+

In a time where water temperature rises, due to increased irradiation , we get simplified:

HCO3- + heat = > CO2 (g) + OH-

So, the correlation between warmth and CO2 is and will always be there. In fact, if it were not so, we would probably not be here today. But it is not causal. More CO2 in the atmosphere does not cause more heat. Humans adding a bit more CO2 to the atmosphere is not going to change the climate, not even one tiny little bit….

wouldn’t adding CO2 to the atmosphere…be like drinking a glass of water?

…and the e-folding time how long until you piss it out?

kinda. But I think body analogies are dubious since there are many fast acting negative feedbacks in our biological systems to ensure that certain, critical parameters stay within closely controlled limits.

This is fundamentally different from a relaxation to equilibrium situation.

Greg, Top point. Thank you. Geoff

“One is the residence time of individual CO2 molecules, as indicated by the persistencce [sic] of C14, for example. That is 4-6 years. But what the IPCC is talking about is the persistence of an amount of CO2 added to the atmosphere, sometimes called the e-folding time.”

Nick, I do not understand why the half life of CO2 in air is a function of the total quantity present. Is not the whole equal to the sum of its parts? Start with a quantity of CO2 in the atmosphere, call it X, from sources such as plant respiration, volcanos, ocean degassing, rotting plants and so forth, coupled with removal mechanisms such as absorption in the oceans, which yields the equilibrium value, X. Then double it to 2X by burning lots of fossil fuels quicker than the removal mechanisms can keep up with. If we then stop using fossil fuels completely, will not the amount of CO2 decline with a half life of ~6 years or so, back to where it was from plant respiration, ocean absorption, etc.? What is causing the decay constant to change from ~6 years to ~100 years simply by introducing a new source for a time then turning it off?

The time constant is not changing; the argument is that it is not the same process.

It is the excess above the supposed natural equilibrium which should decay exponentially. Your 2X back down to X. However, since there is a notable amount of new CO2 (previously stored in the ground away from the air/land/ocean reservoirs) there will be a new equilibrium when the excess has been redistributed to those reservoirs, air being the smallest by far.

I think that 17y exponential from C14 data gives the correct estimation of settling to the new equilibrium.

I would like a clear explanation if anyone thinks there is a different process at work, because “individual CO2 molecules” does not seem to qualify as a scientific argument.

“What is causing the decay constant to change from ~6 years to ~100 years”

The decay constant doesn’t change. They are time constants of different processes. The first is mixing, and for that you don’t need a gradient of total CO2. You don’t need to shift a mass of CO2 to anywhere. It’s just environments in contact (sea, air, bio) which exchange. But to move a total mass of CO2, such as we have injected into the air, it has to go somewhere, driven by a concentration or partial pressure gradient. That takes longer.

But did not the C14 injected from bomb tests over a several year period have the same mixing, concentration and partial pressure issues as the C12/13 from fossil fuel use? I believe the ~6 year number came from the bomb data. Still not clear to me why the C12/13 from fossil fuels should be different.

C14 CO2 is still CO2; it does not get its own partial pressure. It’s like CO2 with a tracer. There are small differences in biological preferences between isotopes, but in terms of gas laws and partial pressure it’s all CO2.

Does the Orbiting Carbon Observatory OCO-2 contribute useful data?

Maybe I have a wrong understanding of e-folding time. Is it not the time for a pulse to decay to 1/e (base of natural logarithm)? So it would be the time to decay to 1/2.718 = 37%

Whereas the time for atomic bomb 14C to leave the atmosphere almost completely was about 4-6 years if I recall. So that implies several e-folding times, or an e-folding time of maybe around a year.

Yrs   Remaining fraction
1     0.37
2     0.14
3     0.05
4     0.02
5     0.01
6     0.002
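The table is just exp(-t/tau) evaluated at whole years. A minimal sketch that regenerates it, assuming a pure single-exponential decay with an e-folding time of 1 year (the function name is hypothetical):

```python
import math

def remaining_fraction(t_years: float, tau_years: float) -> float:
    """Fraction of an initial pulse left after t_years of pure exponential decay."""
    return math.exp(-t_years / tau_years)

tau = 1.0  # e-folding time in years, as assumed in the table
table = [(t, remaining_fraction(t, tau)) for t in range(1, 7)]
for t, frac in table:
    print(f"{t}  {frac:.3f}")
```

Changing `tau` rescales the time axis without changing the shape, so the same fractions are reached at 1, 2, 3... multiples of whatever e-folding time is assumed.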

Similarly only about half of human emissions end up increasing CO2 concentration in the atmosphere. Without a rigorous analysis this seems consistent with that 14C-derived 1-yr e-folding time and a series of n pulses.

Help me see where I am going astray.

No, it took several decades not 6 years.

https://climategrog.wordpress.com/c14_norway_dbl_exp_cosine_64-78/

Not sure where 4-6 years comes from. I got more like 17y.

See the paper I found below. All kinds of measurements show that the real residence time of atmospheric CO2 is about 5 years.

Macha

Ok, if your 17-yr value holds, then multiply the years in my table by about 3. I think it still implies that if we stop driving atmospheric CO2 away from equilibrium, we would absorb 95% of the excess CO2 in about 9 years. (Not hundreds of years). How is that wrong?

No, you need to multiply your table by 17! It will take 3*17 = 51 y to reduce to about 5% of the starting value. Did you look at that graph?!

Ok fine, Greg, go with e-folding time of 17 years. That means we would drop (410-280)*(1-0.37) = 82ppm to 328ppm in 17 years. 350.org would be in trouble, no?
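The 328 ppm figure, and the 51-year figure from the previous comment, can both be checked with one function. This is a sketch under the assumptions used in the exchange above: emissions stop entirely, only the excess over a 280 ppm baseline decays, and the decay is a single exponential with a 17-year e-folding time. None of these assumptions is established here, and the function name is hypothetical:

```python
import math

def co2_after(years: float, start_ppm: float, baseline_ppm: float, tau_years: float) -> float:
    """CO2 level if only the excess over the baseline decays exponentially."""
    excess = start_ppm - baseline_ppm
    return baseline_ppm + excess * math.exp(-years / tau_years)

level_17 = co2_after(17, 410, 280, 17)  # one e-folding time
level_51 = co2_after(51, 410, 280, 17)  # three e-folding times
print(f"after 17 years: {level_17:.0f} ppm")
print(f"after 51 years: {level_51:.0f} ppm")
```

After one e-folding time about 37% of the 130 ppm excess remains, and after three about 5% remains, so the two comments are consistent with each other once the same time constant is used.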

Alarmists want us to believe that once we emit, CO2 will haunt us for millennia.

If the hypothesis is that we stop all CO2 emissions overnight we’d all be in trouble. Most of the world’s population would be dead before we got to measure the first 5ppm of reduction.

No, it’s not my point to say we will follow AOC on the Green Leap Forward. It’s to point out that if all of us skeptics are wrong, and ECS is 4.5, we will have plenty of time to transition to nuclear power at even a higher percentage than France has long achieved. Then within a reasonable length of time, the alleged catastrophe will reverse naturally.

In the meantime we don’t need to do anything or if we want a precautionary action, then start building molten salt reactor nukes.

Over to you, kent…

Also called the time constant, which is the time it takes to reach 63% of equilibrium. Relative to the climate, this would be the amount of time it takes to emit all of the energy stored by the planet (capacitor) at the current average rate emitted by the planet (voltage). This ends up less than 100% of the heat (charge) because the rate is decreasing as the planet cools (capacitor discharges). If the time constant is actually constant, then the fraction of equilibrium achieved after one time constant is 1-1/e.

The planet is a little trickier since while the temperature is linear to stored energy, the rate of cooling (discharging) is proportional to the temperature raised to the fourth power, thus the time constant is not constant, but proportional to 1/T^3.
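The 1/T^3 dependence follows from linearizing the Stefan-Boltzmann law around an equilibrium temperature. A sketch, assuming an idealized single-layer body with heat capacity C per unit area, emissivity \varepsilon, constant absorbed power P_in, and a small perturbation \delta T around T_0 (these symbols are introduced here for illustration and are not from the comment):

```latex
\begin{aligned}
C\,\frac{dT}{dt} &= P_{\text{in}} - \varepsilon\sigma T^{4},
  \qquad P_{\text{in}} = \varepsilon\sigma T_0^{4} \ \text{at equilibrium}, \\
C\,\frac{d(\delta T)}{dt} &\approx -4\,\varepsilon\sigma T_0^{3}\,\delta T, \\
\tau &= \frac{C}{4\,\varepsilon\sigma T_0^{3}} \;\propto\; \frac{1}{T_0^{3}}.
\end{aligned}
```

For small perturbations the decay is still exponential, but the time constant shrinks as the reference temperature rises.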

Rich,

“Is it not the time for a pulse to decay to 1/e (base of natural logarithm)?”

Yes. And I don’t think that is a particularly useful term, since the time constant relates to diffusion, which isn’t exactly exponential. But the issue is not the kind of time pattern of the decay, but what is supposed to be decaying. In one case it is individual atoms, in the other case, total mass.

You can see the difference in the mechanisms of sea diffusion. The 90 or so Gtons of CO2 that are absorbed seasonally each year need only penetrate a few tens of metres. In that space they mix, and from it an equal amount is emitted in the summer. But added total CO2 has to find a permanent home, and so has to diffuse over hundreds of metres, because unlike the seasonal oscillation, it increases total CO2 in the water, and so must be transported downwards by diffusion. That takes a lot of time.

Nick,

Only the top few hundred meters of the oceans vary in temperature as the oceans adapt in response to seasonally variable solar forcing, or any other change for that matter. If we needed to wait for the entire ocean to respond to seasonal variability, we wouldn’t even notice the seasons.

Increased absorption of CO2 by the ocean in response to changes in partial pressure will occur very rapidly. Besides, if the ocean is warming, it will be releasing CO2, not absorbing it.

Nick,

It seems to me that the surface waters where CO2 is absorbed are in the polar regions where the CO2-enriched cold water then sinks to the deep ocean. CO2 is sequestered to the deep not by diffusion but via the thermohaline circulation. The water that sinks at the polar regions is replaced by relatively CO2-poor water flowing from the tropics to be cooled again making it absorb more CO2. The oceans outgas in the tropics where CO2-rich, cold water wells up and gets warmed in the tropical sun.

Rich,

“It seems to me that the surface waters where CO2 is absorbed are in the polar regions”

That is a minor part of the total seasonal absorption, although it is significant as it is actually transported somewhere. The vast majority is just from seasonal change in cool to temperate waters. It isn’t talked about a lot because CO2 isn’t transported anywhere much; it is just absorbed when the solubility is high (winter) and returned in summer. But it is a big contributor to the brevity of residence time of individual molecules.

Yes, Nick, I agree that it is a minor part of the total seasonal exchange, but it is the critical factor. You tell me where I’m wrong. Here’s my thinking: the total seasonal exchange when in a neither warming nor cooling world at equilibrium will be net zero. Any time a patch of water is warming up, it’s also outgassing CO2. Any time it is cooling off, it is also absorbing CO2. The areas that are merely exchanging seasonally are cycling through roughly equal periods of warming and cooling.

Compared to the areas that are downwelling, the areas that are merely exchanging seasonally are much bigger of course. Like the number of homeless who sleep on the train are a small number compared to the commuters who are getting off and on.

In a slightly-warming world, the net seasonal exchange should be slightly net to the atmosphere (all other things being equal), because there will be slightly more patches of water that are warming than that are cooling at any given time, as well as integrated over the whole year. But if the partial pressure of CO2 in the atmosphere is forced higher by human emissions (or any other reason), then the system is perturbed toward more absorption. And of course it’s complicated by the fact that higher atmospheric CO2 stimulates a bigger biological response counteracting the outgassing. Negative feedback!

Here is my key point. Currently only about half of the CO2 that humans emit is remaining in the atmosphere to drive up CO2 concentration. A lot of it is going into the biosphere– trees, plankton, etc., and some is going into the deep ocean through the thermohaline circulation. If we dramatically reduce emissions of CO2 from fossil fuels, those processes will continue to act. Will they not? If not, please explain how that can be.

Yes, these sinks will decrease logarithmically as the driving force (such as the partial pressure of CO2 in atmosphere > partial pressure of CO2 in the ocean) gradually dissipates. However, if ECS is at the alarmist end of the IPCC range, rapidly switching to nuclear power over a decade or two would be an achievable and (relatively) affordable, less-disruptive resolution of the problem, compared to the Green New Deal and other marginally less-ridiculous IPCC ideas.

The only reason that AOC and the IPCC are trying to force us onto the crazy train to oblivion is that they claim that the CO2 we emit will “pollute” the atmosphere for centuries, combined with ECS estimates that cannot begin to be supported by empirical evidence of warming. What arguments can you make for why they are correct about the residence time of CO2?

If the truth is that only a little less CO2 will be sequestered after an abrupt reduction in human emissions than is currently being sequestered, then the reality is that we eat away at the CO2 concentration relatively quickly. No justification for the destruction of western civilization. Combine that with the real ECS value of no greater than 1.5 and it should be clear that radical change is totally unnecessary and harmful to human welfare.

In the long run, we’re going to run out of economically-extractable fossil fuels. We need to have a lot of nuclear power in place by then. So for that reason I would certainly support investing in the safest possible nuclear technologies and in rolling some out sooner than later, even if it is not the least-cost way to generate energy at the moment.

“Yes, Nick, I agree that it is a minor part of the total seasonal exchange, but it is the critical factor. ”

How minor is it when you have just ruled out diffusion as being too slow to sequester much CO2 out of the surface layers? Clearly there is a big “missing” sink if half our emissions are disappearing each year.

The “average” difference in air/ocean partial pressure is about 7 micro-atm but can be many times more in the polar regions. Another case of climatologists not realising you can not “average” intensive properties like temperature and pressure.

Greg, I don’t think it’s minor at all. It’s more like comparing a billionaire’s income to government spending.

The reason I’d say diffusion doesn’t help segregate CO2 to the deep ocean is that the concentration of CO2 above the thermocline should be lower than the concentration below, right? (CO2 concentration is a function of temperature, where the surface waters are generally warm and relatively CO2-poor, deep water is typically around 3C, and relatively CO2-rich). Diffusion causes a species to move from high concentration to lower concentration. So to the extent that there is diffusion, we should expect it to flow from the deep ocean to the surface waters, I think. I’d guess that plankton tend to reduce CO2 in the surface layer as well, making it even more likely that diffusion would be from the deep to the surface rather than vice versa.

At least that’s my non-expert way of thinking about it. I wouldn’t be surprised to find out that I’m way over-simplifying it.

To show some actual data to back up the comments by Greg and Rich, here are a couple of examples of the variability of CO2 concentration in surface waters compared to atmospheric CO2 variations:

https://pmel.noaa.gov/co2/story/GAKOA

The huge drop every spring is, I assume, photosynthesis activity by phytoplankton. And just to illustrate that CO2 in surface waters can also (greatly) exceed atmospheric levels, see:

https://www.pmel.noaa.gov/co2/story/Twanoh

Thanks for making this point. It seemed like an odd misunderstanding for the author to have.

“…the claim that human production of CO2 is causing global warming. “

Really should be:

“… the claim that human production of CO2 is causing catastrophic global warming. ”

Climate sensitivity to increasing CO2 is the only relevant scientific question today.

To be clear: sensitivity is the only relevant “climate science” question.

There are of course an unlimited number of relevant science questions waiting to be addressed by scientists, and usually answering just one creates a cascading chain of many more questions.

Yes that statement would seem to be one that could legitimately be regarded as ( anthropogenic ) global warming denial.

Yes, the IPCC’s ECS is the root of all evil. The IPCC claims an ECS of 0.8C +/- 0.4C per W/m^2 while estimates from the skeptics are generally close to or below the IPCC’s lower limit. You can even show that because the IPCC’s lower limit of 0.4C per W/m^2 requires increasing surface emissions by 2.17 W/m^2 from 288K to 288.4K, it’s already more than the maximum possible equivalent emissions sensitivity of 2 W/m^2 of surface emissions per W/m^2 of forcing.
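The 2.17 W/m^2 figure quoted above is the change in black-body emission between 288 K and 288.4 K. A quick check of that arithmetic only, assuming an ideal black-body surface (emissivity 1) and the CODATA value of the Stefan-Boltzmann constant; the function name is hypothetical:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def blackbody_emission(temp_k: float) -> float:
    """Emitted flux in W/m^2 for an ideal black body at temp_k kelvin."""
    return SIGMA * temp_k ** 4

delta = blackbody_emission(288.4) - blackbody_emission(288.0)
print(f"emission increase from 288 K to 288.4 K: {delta:.2f} W/m^2")
```

This confirms the number used in the comment; it says nothing by itself about whether the sensitivity argument built on it is correct.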

The primary criterion for establishing the size of the ECS in AR1 was that it be large enough to justify the creation of the IPCC and UNFCCC. They then used the Hansen/Schlesinger broken incremental feedback analysis as the theoretical basis for amplifying the Planck sensitivity up to their presumed ECS. This incorrectly decoupled the effect of the next Joule from all others, enabling it to have an arbitrarily large effect. The result is the longest lived broken science of the modern age.

The IPCC can never admit this, as it would preclude their reason to exist. How was this conflict of interest ever allowed to arise?

I am truly thankful for all of the scientific minds that are able to see through this CAGW charade and counter it with fact based rebuttals.

I have not obtained university credentials that would give my opinion credence but I have read many, many different scientific studies from all sides of this debate and have arrived at the conclusion that CO2’s effect (and specifically man-made CO2) on the earth’s temperature is de minimis.

From my readings, my personal belief is that solar cycles are the thermostat of the earth and they change the earth’s temperature in multidecade/multicentury cycles. The temperature variations are buffered by the oceans, which are the earth’s heatsink (and CO2 repository) … the oceans do a fantastic job of moderating the earth’s temperature but they also cause CO2 levels to rise (due to outgassing CO2) when the oceans have warmed. Conversely, the oceans ingas CO2 as the oceans cool.

If the predictions are correct that we have entered a period of very low solar activity, the earth will start cooling and CO2 levels could possibly start declining due to the ocean ingassing CO2. We are already close to the point where ‘adjustments’ to the temperature record are approaching the entirety of claimed heating of the earth; if the planet cools a bit and these climate statisticians (they’re not scientists; scientists don’t manipulate raw data to prove their thesis) further manipulate the temperature record, we will quickly reach a crossover point where adjustments to the historical temperature record exceed the claimed rise in the earth’s temperature.

I simply look at Australia’s BoM adjustment in data from Acorn1 to Acorn2 as clear proof of how confirmation bias has destroyed the world’s temperature records. That’s why scientists don’t make adjustments to raw data … because their personal biases will influence the adjustments and they end up achieving the exact result that matches their thesis.

Anyway, all of you skeptical scientists keep up the good work; I suspect the entire CAGW theory will soon fall apart. And remember, Galileo was convicted of heresy and sentenced to house arrest for the remainder of his life due to believing that the sun, not the earth, was the center of the universe.

Tim …Love all your stuff….but….

“We learned from Australian (Dr,)….. Jennifer Marohasy ?

“Because it is in this journal (it)? raises flags for me”

“‘emergency’ report of the IPCC presented at COP 24 in Poland that we have 12 years left.” ?

The last line is wrong because we actually have 16 years, 18 months,39 days,29 hours and 76 minutes….!

Since the Antarctic record shows “remarkable” change from about 175 to 275 ppmv over about 2000 years, I don’t see the implied contradiction with MLO values. There is about two orders of magnitude difference in the timescales there. That would make anything more than 2.75 ppmv change at MLO indeed “remarkable” variability.

Sadly, it really does not appear that Dr Tim Ball is an honest broker. He is just as dug-in and selective in what he wishes to show as those on the other side whom he is out to criticise.

I liked his appearance in The Great Global Warming Swindle but my appreciation stops there.

The half-life of C14 is 5,700 years, so fossil fuel carbon is too old to contribute CO2 with C14 to the atmosphere. Therefore, if the anthropogenic CO2 contribution is significant, should there not be a measurable change in the C12/C14 ratio? This assumes C14 is generated at a constant rate from nitrogen by solar radiation, the basic assumption of carbon dating.
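As a rough illustration of why fossil carbon is treated as C14-free, here is a sketch of the decay arithmetic using the 5,700-year half-life quoted above. The ages passed in are hypothetical round numbers chosen only to show the scale, not measurements.

```python
HALF_LIFE_YEARS = 5700.0  # C14 half-life as quoted in the comment above


def c14_fraction_remaining(age_years: float) -> float:
    """Fraction of the original C14 left after age_years of decay."""
    return 0.5 ** (age_years / HALF_LIFE_YEARS)


# Hypothetical ages, chosen only to illustrate the scale of the effect.
for age in (5_700, 57_000, 1_000_000):
    print(f"{age:>9} years: {c14_fraction_remaining(age):.3e}")
```

Ten half-lives already leave less than a thousandth of the original C14, so carbon that is millions of years old adds CO2 with essentially none.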

Any inputs?

Cheers

Mike

Look into C13/C12 ratio 😉

C14 production is actually somewhat variable. Nevertheless, there are all kinds of studies on the so-called human “fingerprint” mostly on C12/C13 isotope ratios. Go here if you want to see cherry picking at its finest. https://www.skepticalscience.com/human-fingerprint-in-global-warming.html

Thanks Greg/ R Shearer

Cheers

Mike

Maybe the reason for all the recent frantic alarmist reports (World ends in 12 years, etc.) is that temps are expected to cool enough in the next 10-15 years that it will be obvious CO2 driving high global temps is a false theory. But, if enough CO2 sources can be taken offline in that time, the alarmist scientists can say “See?? We were right!!! CO2 production is reduced and the world is cooling!!!” Alarmist politicians around the world can then say “We must maintain control of the economy so none of that nasty CO2 gets out again! Now continue commuting on your bicycle in the dead of winter and put on another sweater! Oh, and don’t complain!”

This whole thing is making my head hurt. I think I need to lay down.

Creating urgency is basic salesmanship 101. But yes, by now they have realised that it is not going to work out the way they have been claiming and they want to try to get their agenda sealed into the system before it becomes too obvious for anyone to miss that they are way off the mark and there is no “emergency”.

Take a look at how a major corporation is benefitting from the deception:

https://www.ey.com/ca/en/services/specialty-services/climate-change-and-sustainability-services/climate-change-and-sustainability-services

Some are suggesting that this company is responsible for bankrupting many cities.

How so?

I don’t commute on my bicycle when it’s too cold, windy, wet, icy or snowy. If it were a working day I would drive as it’s snowing, the streets are covered with snow, and it’s -13C (about 22C below normal).

Yes, it is a theory that I entertain as well. If they succeed in pushing through policies reducing CO2 emissions, even marginally, (or through fake statistics on Chinese fossil fuel consumption), it is a certainty that any cooling will be credited to their policies being successful, rather than that the theory of CO2 as the master control knob has been debunked. They would have to gloss over CO2 concentration not dropping if emission data is faked, but most likely there will be adjustments to handle that.

Our best hope is that fossil fuel emissions rise faster than ever, atmospheric CO2 increases unambiguously, and temperatures plunge beyond the range of any possible adjustments. But even in that scenario, we will just hear new fables about how we have destabilized the system and we’re experiencing the wild swings of dangerous climate disruption.

Never forget that Climate Change ™ is not a falsifiable theory.

“But, if enough CO2 sources can be taken offline in that time, the alarmist scientists can say “See?? We were right!!! CO2 production is reduced and the world is cooling!!!” Alarmist politicians around the world can then say “We must maintain control of the economy so none of that nasty CO2 gets out again!”

I think that is exactly their aim, George. The Alarmists are going to spin the weather situation to suit their narrative. Just like they claim cold weather is caused by CAGW. Whatever the weather does, they will claim it fits in with the CAGW hypothesis.

James Hansen and Gavin Schmidt already have this base covered. Even if it cools they say, it doesn’t negate CAGW. Nothing negates CAGW!

https://wattsupwiththat.com/2018/01/24/nasa-james-hansen-gavin-schmidt-paper-10-more-years-of-global-warming-pause-maybe/

The long-wave doesn’t just pass through a sliver of atmosphere at a fixed level above the surface. It passes through the entire atmosphere. What Beck shows, as I discovered with experiment, is that the variability throughout the atmosphere, but especially in the area Geiger studied in Climate Near the Ground, is very wide. We did extensive measures of varying gas levels at all altitudes from the absolute surface to 200 feet and found enormous variability. Is CO2 a magical gas that is only affected at specific heights and under specific atmospheric conditions? If nothing else, Beck demonstrated the fallacy of that assumption. In addition, I should have added the OCO2 discovery of the myth about CO2 being a well-mixed gas throughout the atmosphere. No, it doesn’t form into layers, but equally, it does not mix evenly.

If you measured the effect at the top of the atmosphere (TOA) on the levels of long-wave as affected solely by CO2, the OCO2 results suggest it would show remarkable variance of percentages and distribution. Of course, this would be difficult because of the wavelength overlap of the different gases within the spectrum. Why do they have such difficulty getting accurate measures of the volume, variance, and distribution of water vapor in the atmosphere?

“In addition, I should have added the OCO2 discovery of the myth about CO2 being a well-mixed gas throughout the atmosphere. No, it doesn’t form into layers, but equally, it does not mix evenly.”

Then how do you explain the following.

More conspiracy?

Mixed enough there to even detect the NH seasonal cycle when the “not mixed evenly” CO2 makes it down to Antarctica.

There is no need to use Hawaii as the source. There is an atmospheric monitoring station at the tip of Cape Point staffed by volunteer scientists throughout the year. It is automated for logging and calibration.

If the numbers there match the Hawaiian numbers, there is no legitimacy to the complaints about the location.

There are actually over 160 stations all over the world measuring and reporting. I don’t know why people keep saying that there is only one station, on Mauna Loa.

https://gaw.kishou.go.jp/search

It’s simply the longest.

This paper is worth a read; it covers the carbon isotope thread:

Tom V. Segalstad, “Carbon cycle modelling and the residence time of natural and anthropogenic atmospheric CO2: On the construction of the ‘Greenhouse Effect Global Warming’ dogma.” Mineralogical-Geological Museum, University of Oslo, Sars’ Gate 1, N-0562 Oslo.

I have not found that paper yet, do you have a link?

http://www.co2web.info/Segalstad_CO2-Science_090805.pdf

Pretty close to the 17y I found for 14CO2. So the 4-6 given by Nick does not seem to be the right figure for 14C.

“All these observations apply to the biggest deception in history, the claim that human production of CO2 is causing global warming. ”

Just to remind folks, almost every serious “skeptic” that we all know and love (Curry, Lindzen, Lewis, and even Mr. Watts, I would expect, etc.) completely disagrees with this. Some “skeptics” give the whole (legitimate) movement a black eye. Much in the same way it was RealClimate (in the early days) that turned me into an AGW skeptic, these types of articles are having the opposite effect. We all have to at least be intellectually honest or the whole enterprise loses and we are no better than the folks we accuse on the ‘other side’.

Agreed, I find Dr Ball “no better than the folks we accuse on the ‘other side’.”

I wouldn’t subscribe to the theory that CO2 was around current levels in the early 40s just based on probably unreliable measurements, although I would not rule it out either. That doesn’t mean that we cannot see cooling in the face of increasing CO2 over the next decade. And it doesn’t mean that temperature and CO2 could not have been moving in concert but actually mostly independent of each other.

The question is what is ECS? If the pause was natural variability counteracting GHE, how do we know that prior warming was not natural change of a similar magnitude with the GHE modestly enhancing the natural warming? (Rather than the unsupportable claim that virtually all warming since 1950 is due to greenhouse effect of anthropogenic CO2). This question is why the pause must be adjusted away at all costs.

Who is claiming “virtually all warming since 1950 is due to greenhouse effect of anthropogenic CO2”?

I thought the IPCC claim was “majority”: that means >50%, not “virtually all”, though that is how many people seem to hear that term.

If the observed temperature change is only 50% due to GHE of anthropogenic CO2, then what transient climate sensitivity would be calculated? What is the implication for equilibrium climate sensitivity?
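One way to frame the question: in the common energy-balance approximation, transient sensitivity is estimated as TCR = F_2x × ΔT / ΔF, so it scales linearly with the share of the warming attributed to the forcing. The sketch below assumes that approximation; the F_2x value of 3.7 W/m^2 is the commonly quoted forcing for doubled CO2, and the temperature and forcing inputs are hypothetical placeholders, not observations.

```python
F_2X = 3.7  # W/m^2, commonly quoted forcing for a doubling of CO2 (assumption)


def transient_sensitivity(delta_t: float, delta_f: float,
                          attributed_fraction: float = 1.0) -> float:
    """Energy-balance TCR estimate in C per doubling of CO2.

    delta_t: observed warming (C); delta_f: forcing change (W/m^2);
    attributed_fraction: share of delta_t attributed to that forcing.
    """
    return F_2X * attributed_fraction * delta_t / delta_f


# Hypothetical placeholder inputs, for illustration only.
full = transient_sensitivity(delta_t=0.6, delta_f=1.5)
half = transient_sensitivity(delta_t=0.6, delta_f=1.5, attributed_fraction=0.5)
print(f"100% attributed: {full:.2f} C, 50% attributed: {half:.2f} C")
```

Whatever inputs are used, attributing only half of the warming halves the transient estimate. An equilibrium estimate built on the same energy-balance form (with ocean heat uptake subtracted from the forcing) would scale by the same factor.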

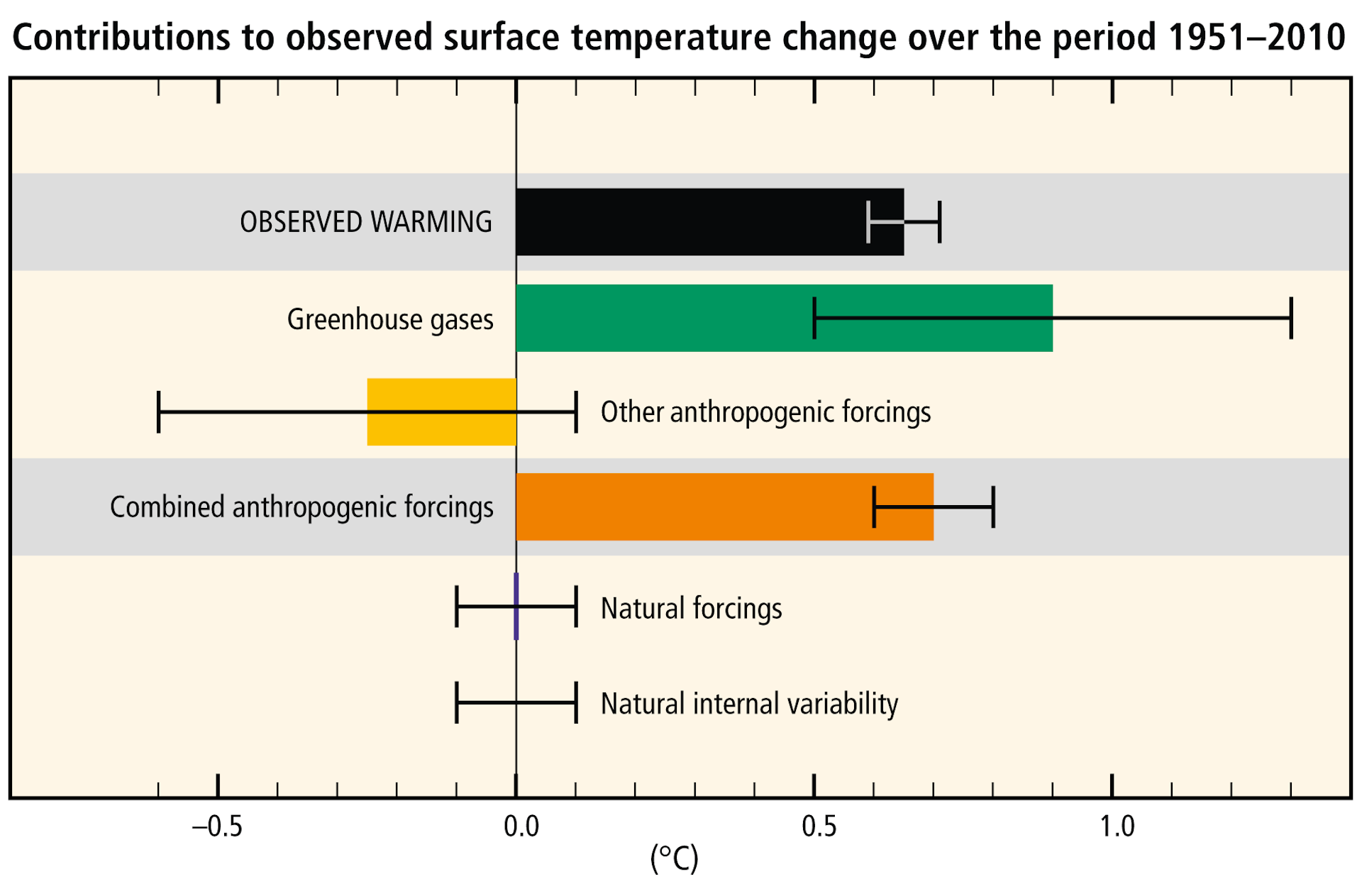

That would be a logical inference from the text of the summary of the fourth report (2007) but their fifth report adds to the incoherence, for instance figure SPM.3 shows anthropogenic forcing contributing 100% of the supposed post-1950 warming:

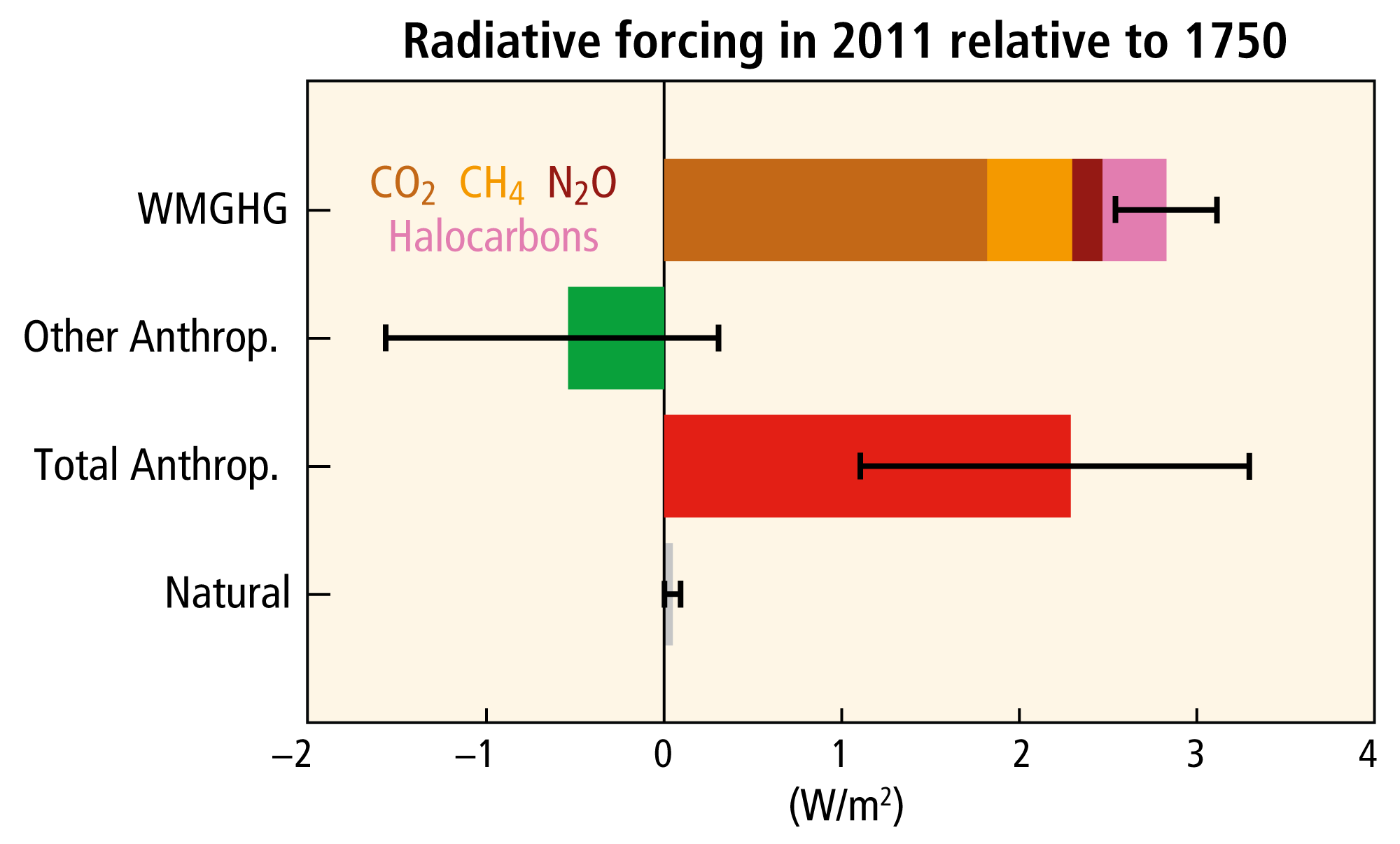

Another diagram from the same report (Figure 1.4, Radiative forcing of climate change during the industrial era (1750-2011)) has, by implication, all the supposed warming since 1750 due to humans:

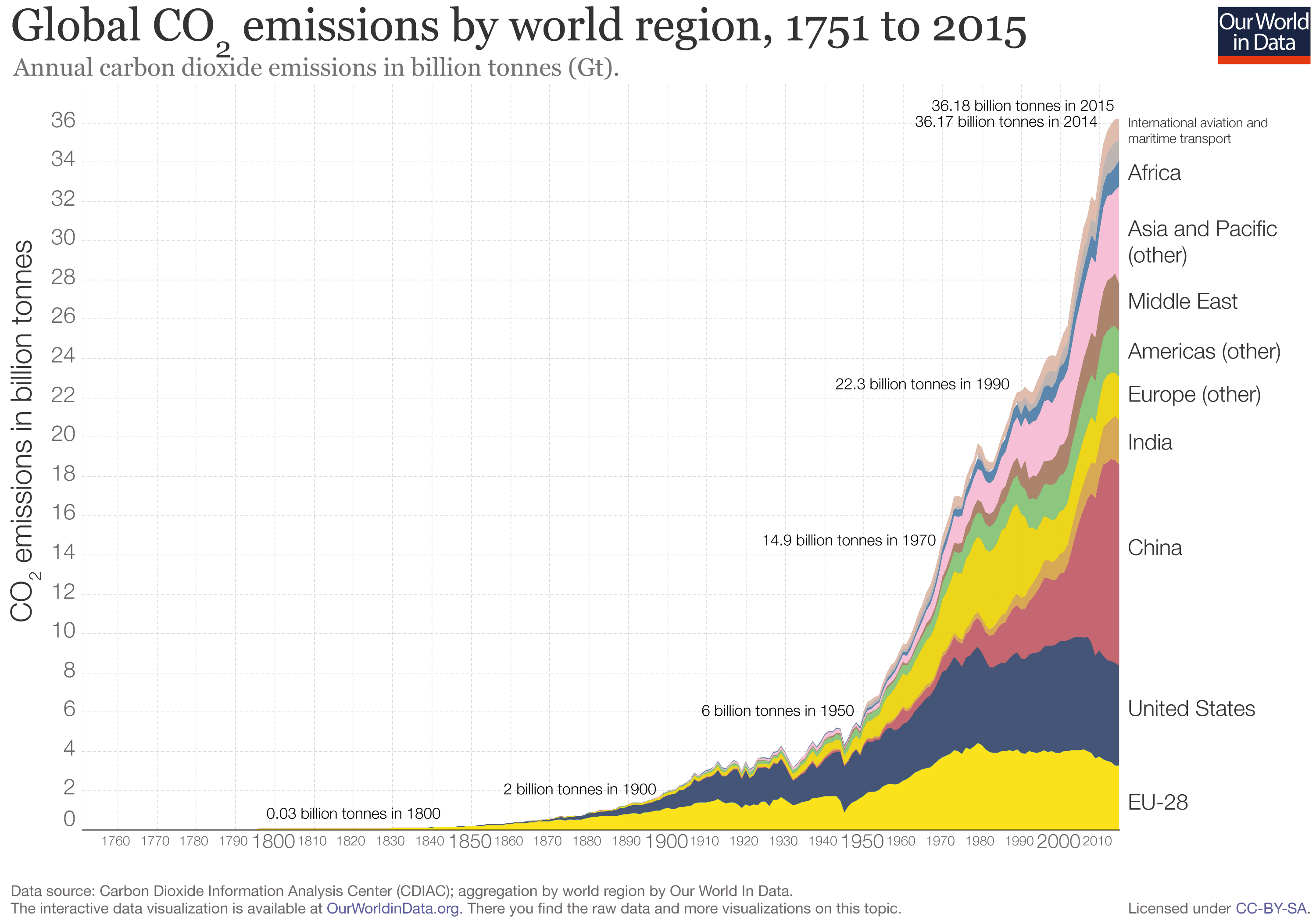

That’s despite the fact that human CO2 emissions before 1945 were relatively insignificant:

Statements like the one referred to above (“biggest deception …” etc.) are unfortunate.