Guest Post by Werner Brozek, Comment Included From David Hoffer, Edited by Just The Facts:

In the above graphic, the green line is the slope since May 1993 without consideration of error bars. When error bars are included, the slope could be as low as zero (or very slightly below), as indicated by the blue line. It could also be an equal amount above the green line, as indicated by the purple line.

The numbers that were used to generate the above graphic are from Nick Stokes’ Temperature Trend Viewer site.

For RSS, the numbers are as follows:

Temperature Anomaly trend

May 1993 to Feb 2016

Rate: 0.871°C/Century;

CI from -0.022 to 1.764 °C/Century;

t-statistic 1.912;

Temp range 0.118°C to 0.316°C

In other words, over the 22 years and 10 months since May 1993, there is only a very small chance that the slope is negative.
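As a rough cross-check of the quoted numbers, the sketch below assumes the CI is symmetric about the trend and spans ±1.96 standard errors. That is my assumption about how the interval was built, not a description of Nick Stokes’ code. Under it, dividing the rate by the inferred standard error reproduces the quoted t-statistics to within rounding, and a normal approximation puts the tail probability below zero at roughly 3% for both RSS and UAH.

```python
import math

Z_95 = 1.96  # assumption: the 95% CI spans +/- 1.96 standard errors

def t_from_ci(rate, lower, upper, z=Z_95):
    """Infer the standard error from a symmetric CI and return rate / standard error."""
    half_width = (upper - lower) / 2
    standard_error = half_width / z
    return rate / standard_error

def one_sided_p_negative(t):
    """Normal-approximation probability of a slope below zero, given its t-statistic."""
    return 0.5 * math.erfc(t / math.sqrt(2))

# Trends and CIs quoted in this post, in °C/century
rss_t = t_from_ci(0.871, -0.022, 1.764)
uah_t = t_from_ci(0.911, -0.009, 1.830)

for name, t in (("RSS", rss_t), ("UAH", uah_t)):
    print(f"{name}: t = {t:.3f}, P(slope < 0) = {one_sided_p_negative(t):.3f}")
```

The small mismatch in the third decimal for UAH comes from the rate and CI being rounded before they were published.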

For UAH6.0beta5, the numbers are as follows:

Temperature Anomaly trend

Jan 1993 to Feb 2016

Rate: 0.911°C/Century;

CI from -0.009 to 1.830 °C/Century;

t-statistic 1.941;

Temp range -0.001°C to 0.210°C

In other words, over the 23 years and 2 months since January 1993, there is only a very small chance that the slope is negative.
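The “Temp range” lines appear to be the fitted trend evaluated at the start and end of each period (an assumption on my part). If so, the width of the range should be close to the rate multiplied by the length of the period, and a quick check bears that out for both sets:

```python
def trend_rise(rate_per_century, years, months):
    """Rise (°C) of a linear trend of rate_per_century over a period of years + months."""
    span_years = years + months / 12
    return rate_per_century * span_years / 100

rss_rise = trend_rise(0.871, 22, 10)  # quoted range: 0.316 - 0.118 = 0.198
uah_rise = trend_rise(0.911, 23, 2)   # quoted range: 0.210 - (-0.001) = 0.211
print(f"RSS: {rss_rise:.3f} °C, UAH: {uah_rise:.3f} °C")
```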

As mentioned in my January post, going back from February 2016, there is now no period worth mentioning over which the slope is negative on any of the five data sets I am analyzing.

As a result, my former Section 1 will not be shown for the foreseeable future.

My last post drew an excellent comment from David Hoffer that I would like to share, both to give it wider exposure and to hear your thoughts:

davidmhoffer

March 2, 2016 at 10:11 am

1. The “Pause” hasn’t disappeared. It now just has a beginning and an end. But it is right there in the data where it always was, and it doesn’t cease to exist merely because we can’t calculate one starting from the present and working backwards.

2. The “Pause” was never significant in terms of showing that CO2 doesn’t heat up the earth. It only became significant because the warmist community (Jones, Santer, etc.) said that natural variability was too small to cancel the warming of CO2 for more than a period of 10 years…er 15…er 17, and made a big deal out of it.

So regardless of the “Pause” having ended or not, what we have is conclusive evidence that the models either:

a) grossly underestimated natural variability or

b) grossly overestimated CO2 sensitivity or

c) both

In all three scenarios above, natural variability dominates in terms of any risk associated with a changing global temperature. That’s what we should be studying first and foremost. Once we understand it, then we can determine how much CO2 changes natural variability. Trying to determine CO2 sensitivity without first understanding the natural variability baseline that it runs on top of is a fool’s errand. Unfortunately, fools seem determined and well funded, and so they continue to try and do just that.

The world has been warming for 400 years, almost all of it due to natural variability. It will continue to warm (I expect) and most of the warming will be due to natural variability, which we just learned from these last 20 years of data is a lot bigger deal than CO2.

(End of David’s post)

In the sections below, we will present you with the latest facts. The information will be presented in two sections and an appendix. The first section will show for how long there has been no statistically significant warming on several data sets. The second section will show how 2016 so far compares with 2015 and the warmest years and months on record so far. For three of the data sets, 2015 also happens to be the warmest year. The appendix will illustrate sections 1 and 2 in a different way. Graphs and a table will be used to illustrate the data.

Section 1

For this analysis, data was retrieved from Nick Stokes’ Trendviewer available on his website. This analysis indicates for how long there has not been statistically significant warming according to Nick’s criteria. Data go to their latest update for each set. In every case, note that the lower error bar is negative, so a slope of 0 cannot be ruled out from the month indicated.

On several different data sets, there has been no statistically significant warming for between 11 and 23 years according to Nick’s criteria. Cl stands for the confidence limits at the 95% level.

The details for several sets are below.

For UAH6.0: Since January 1993: Cl from -0.009 to 1.830

This is 23 years and 2 months.

For RSS: Since May 1993: Cl from -0.022 to 1.764

This is 22 years and 10 months.

For Hadcrut4.4: Since October 2001: Cl from -0.016 to 1.812 (Goes to January)

This is 14 years and 4 months.

For Hadsst3: Since May 1996: Cl from -0.002 to 2.089

This is 19 years and 10 months.

For GISS: Since March 2005: Cl from -0.004 to 3.688

This is exactly 11 years.
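The “years and months” figures above can be reproduced by counting months inclusively from the start month to the last month of data (February 2016, or January 2016 for Hadcrut4.4). The function name below is just for illustration:

```python
def span(start_year, start_month, end_year, end_month):
    """Inclusive count of months from start to end, returned as (years, months)."""
    total = (end_year - start_year) * 12 + (end_month - start_month) + 1
    return divmod(total, 12)

periods = {
    "UAH6.0": (1993, 1, 2016, 2),
    "RSS": (1993, 5, 2016, 2),
    "Hadcrut4.4": (2001, 10, 2016, 1),  # data go to January 2016
    "Hadsst3": (1996, 5, 2016, 2),
    "GISS": (2005, 3, 2016, 2),
}

for name, period in periods.items():
    years, months = span(*period)
    print(f"{name}: {years} years and {months} months")
```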

Section 2

This section shows data about 2016 and other information in the form of a table. The table shows the five data sources along the top and at the bottom, so they should be visible at all times. The sources are UAH, RSS, Hadcrut4, Hadsst3, and GISS.

Down the column are the following:

1. 15ra: This is the final ranking for 2015 on each data set.

2. 15a: Here I give the average anomaly for 2015.

3. year: This indicates the warmest year on record so far for that particular data set. Note that the satellite data sets have 1998 as the warmest year and the others have 2015 as the warmest year.

4. ano: This is the average of the monthly anomalies of the warmest year just above.

5. mon: This is the month where that particular data set showed the highest anomaly. The months are identified by the first three letters of the month and the last two numbers of the year. The 2016 records are not included here.

6. ano: This is the anomaly of the month just above.

7. sig: This is the first month for which warming is not statistically significant according to Nick’s criteria. The first three letters of the month are followed by the last two numbers of the year.

8. sy/m: This is the number of years and months for row 7.

9. Jan: This is the January 2016 anomaly for that particular data set.

10. Feb: This is the February 2016 anomaly for that particular data set.

11. ave: This is the average anomaly of all months to date taken by adding all numbers and dividing by the number of months.

12. rnk: This is the rank that each particular data set would have for 2016 without regard to error bars and assuming no changes. Think of it as an update 10 minutes into a game.

| Source | UAH | RSS | Had4 | Sst3 | GISS |
|---|---|---|---|---|---|
| 1.15ra | 3rd | 3rd | 1st | 1st | 1st |
| 2.15a | 0.263 | 0.358 | 0.745 | 0.592 | 0.86 |
| 3.year | 1998 | 1998 | 2015 | 2015 | 2015 |
| 4.ano | 0.484 | 0.550 | 0.745 | 0.592 | 0.86 |
| 5.mon | Apr98 | Apr98 | Dec15 | Sep15 | Dec15 |
| 6.ano | 0.743 | 0.857 | 1.009 | 0.725 | 1.10 |
| 7.sig | Jan93 | May93 | Oct01 | May96 | Mar05 |
| 8.sy/m | 23/2 | 22/10 | 14/4 | 19/10 | 11/0 |
| 9.Jan | 0.542 | 0.663 | 0.899 | 0.732 | 1.14 |
| 10.Feb | 0.834 | 0.974 | 1.057 | 0.604 | 1.35 |
| 11.ave | 0.688 | 0.819 | 0.978 | 0.668 | 1.25 |
| 12.rnk | 1st | 1st | 1st | 1st | 1st |
| Source | UAH | RSS | Had4 | Sst3 | GISS |
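Rows 11 and 12 follow from the other rows: the year-to-date average is the sum of the monthly anomalies divided by the number of months, and a set ranks 1st so far if that average beats the average of its warmest year (row 4). A minimal check using the table’s own numbers (the dictionary layout is just for illustration):

```python
# Rows 9 (Jan), 10 (Feb), 11 (ave) and 4 (warmest-year average) from the table above
table = {
    "UAH": {"jan": 0.542, "feb": 0.834, "ave": 0.688, "record": 0.484},
    "RSS": {"jan": 0.663, "feb": 0.974, "ave": 0.819, "record": 0.550},
    "Had4": {"jan": 0.899, "feb": 1.057, "ave": 0.978, "record": 0.745},
    "Sst3": {"jan": 0.732, "feb": 0.604, "ave": 0.668, "record": 0.592},
    "GISS": {"jan": 1.14, "feb": 1.35, "ave": 1.25, "record": 0.86},
}

averages = {}
for name, row in table.items():
    months = [row["jan"], row["feb"]]
    averages[name] = sum(months) / len(months)  # add all months, divide by their count
    status = "1st so far" if averages[name] > row["record"] else "not 1st"
    print(f"{name}: ave {averages[name]:.4f} vs record {row['record']} -> {status}")
```

The computed averages agree with row 11 to within the rounding of the published values (GISS is given to two decimals).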

If you wish to verify all of the latest anomalies, go to the following:

For UAH, version 6.0beta5 was used. Note that WFT uses version 5.6. So to verify the length of the pause on version 6.0, you need to use Nick’s program.

http://vortex.nsstc.uah.edu/data/msu/v6.0beta/tlt/tltglhmam_6.0beta5.txt

For RSS, see: ftp://ftp.ssmi.com/msu/monthly_time_series/rss_monthly_msu_amsu_channel_tlt_anomalies_land_and_ocean_v03_3.txt

For Hadcrut4, see: http://www.metoffice.gov.uk/hadobs/hadcrut4/data/current/time_series/HadCRUT.4.4.0.0.monthly_ns_avg.txt

For Hadsst3, see: http://www.cru.uea.ac.uk/cru/data/temperature/HadSST3-gl.dat

For GISS, see:

http://data.giss.nasa.gov/gistemp/tabledata_v3/GLB.Ts+dSST.txt

To see all points since January 2015 in the form of a graph, see the WFT graph below. Note that UAH version 5.6 is shown. WFT does not show version 6.0 yet. Also note that Hadcrut4.3 is shown and not Hadcrut4.4, which is why many months are missing for Hadcrut.

As you can see, all lines have been offset so they all start at the same place in January 2015. This makes it easy to compare January 2015 with the latest anomaly.

Appendix

In this part, we are summarizing data for each set separately.

UAH6.0beta5

For UAH: There is no statistically significant warming since January 1993: Cl from -0.009 to 1.830. (This is using version 6.0 according to Nick’s program.)

The UAH average anomaly so far for 2016 is 0.688. This would set a record if it stayed this way. 1998 was the warmest at 0.484. The highest ever monthly anomaly was in April of 1998 when it reached 0.743. This is prior to 2016. The average anomaly in 2015 was 0.263 and it was ranked 3rd.

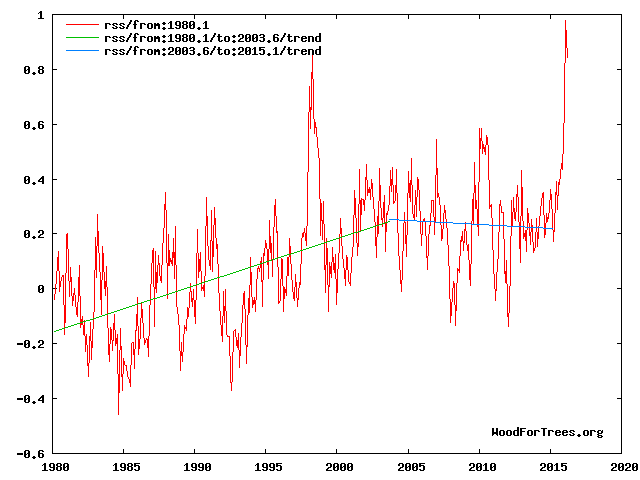

RSS

For RSS: There is no statistically significant warming since May 1993: Cl from -0.022 to 1.764.

The RSS average anomaly so far for 2016 is 0.819. This would set a record if it stayed this way. 1998 was the warmest at 0.550. The highest ever monthly anomaly was in April of 1998 when it reached 0.857. This is prior to 2016. The average anomaly in 2015 was 0.358 and it was ranked 3rd.

Hadcrut4.4

For Hadcrut4: There is no statistically significant warming since October 2001: Cl from -0.016 to 1.812. (Goes to January)

The Hadcrut4 average anomaly so far for 2016 is 0.978. This would set a record if it stayed this way. The highest ever monthly anomaly was in December of 2015 when it reached 1.009. This is prior to 2016. The average anomaly in 2015 was 0.745 and this set a new record.

Hadsst3

For Hadsst3: There is no statistically significant warming since May 1996: Cl from -0.002 to 2.089.

The Hadsst3 average anomaly so far for 2016 is 0.668. This would set a record if it stayed this way. The highest ever monthly anomaly was in September of 2015 when it reached 0.725. This is prior to 2016. The average anomaly in 2015 was 0.592 and this set a new record.

GISS

For GISS: There is no statistically significant warming since March 2005: Cl from -0.004 to 3.688.

The GISS average anomaly so far for 2016 is 1.25. This would set a record if it stayed this way. The highest ever monthly anomaly was in December of 2015 when it reached 1.10. This is prior to 2016. The average anomaly in 2015 was 0.86 and it set a new record.

Conclusion

Warming does not become catastrophic just because we cannot go back from February 2016 and find a negative slope. This is especially true since it was a very strong El Nino and not CO2 that was mainly responsible for the negative slope disappearing for now.

A better illustration of the current situation is shown below.

See Figs. 1, 3, 4 and 8 at

http://climatesense-norpag.blogspot.com/2016/03/the-imminent-collapse-of-cagw-delusion.html

The millennial temperature peak is seen at about 2003. This corresponds to the solar activity peak at about 1991 (Fig. 8). The previous temperature peak was at about 990 (Fig. 4).

The El Nino peak is a temporary aberration from the cooling trend (blue line), which will continue with various ups and downs until about 2650 (Fig. 4).

Has anyone noticed how desperate some are to isolate the selection of a pause in warming in the data from AGW theory?

They attack the claimed cherry picking while forgetting that it is the very theory they are pushing that makes statistical pauses in warming of 10 years or more relevant.

CO2 in the atmosphere is causing warming of a greater order than natural variability, they say. So when CO2 goes up and up, and there is a longer than 10-year pause in increasing temperatures, they attempt to isolate the statistics from the theory and claim cherry picking.

The start date for ice levels is the biggest cherry pick in the field. But apparently it’s OK to do that.

Plus, temperature is following Hansen’s original Scenario C (draconian cuts).

Now he’s back again claiming waterworld is on the horizon. The guy has a few screws loose.

It appears from your comment that you don’t understand Scenario C or other aspects of Hansen’s paper.

You’re in good company, Steve McIntyre made a similar error until I corrected him several years ago.

[Link, please? -mod]

Why does everyone put so much into charts, graphs, trend lines and on and on? I’m no scientist, but I have been a business owner for 40 years. Critical thinking skills and logic would seem appropriate. Nick wants me to believe that the 33 or so molecules in 85000 parts of our atmosphere are causing runaway heating. As silly as that seems to me, he then wants me to believe that the ONE molecule that is human caused is the main driver of this runaway climate change. Since we obviously can’t eliminate all of this one added human molecule anytime soon, what percentage does anyone think we can eliminate? And then I’m expected to believe this tiny percent change, at a tremendous cost in money and possibly lives in developing countries, is worth it. Hubris falls short in defining this belief. Why don’t we invest in better preparing for what nature throws at us whether it’s hot or cold and stop goofing around trying to control it with some trumped up idea that co2 is poison.

Also, that the Pause ‘insofar as it existed’ – upwards of two decades – is not significant if it doesn’t precede cooling, as opposed to demonstrating the lack of warming in the face of increasing CO2 predicted by models. Not to mention the absence of all the consequences that are supposed to result.

Concur; once the EPA decided this low-level gas was a pollutant, I knew Big Brother was here. If you want to claim it’s anthropogenic, why not look at water vapor, SOx, NOx, etc.?

John C: The way I had it put to me goes something like this: You have a v large swimming pool into which you have dumped 99,962 white ping-pong balls (which keep the water temperature fairly stable and very comfortable). You then add 38 blue balls which, as well as keeping the water healthy, have the (claimed) ability to raise the temperature by a very, very small amount – that cannot be verified with a thermometer (or by dipping a toe in the water!). You then add two more blue balls, which, although being man-made have a similar tendency to warming (it is believed). Now, do you think it would be safe to enter the water, or will it be too hot?

That’s my point. Illogical at best. Outright propaganda that too many believe. I suppose the mass of the co2 molecule can factor in, but even that comparison falls short of making me believe that the tiny amount my tailpipe emits and the electricity for my house and business are doing anything. Sorry AGW promoters, I need a bigger problem to stay awake at night worrying about. I truly believe that after pollution control greatly improved the air we breathe, as it needed to, they needed another problem to attack business and capitalism. So they found co2. A naturally occurring gas that is great for greening of the planet, and they called it a controlled substance and poison. And this came from educated pinheads. Sad state we find ourselves in today.

John C,

Why? Because…physics. Increasing the CO2 concentration in the Earth’s atmosphere will cause the temperature of the atmosphere to rise. Anyone who doubts that is simply mistaken, no matter how fervently they believe otherwise. The question is how much rise and what are the consequences. You mentioned costs; what are the costs if the majority of the science community is right about the effect of CO2 at projected concentrations?

Harry Passfield, if your analogy is meant to represent the current conditions in the Earth’s atmosphere, the number of blue balls should be continuously increasing. Also, how small is very, very? The atmospheric temperature change necessary to have a deleterious effect on the climate is many times smaller than that necessary to make a comfortable swimming pool too hot for safety.

Slipstick: Is it meant to represent the current conditions, etc? Of course not. It’s a load of balls. Oh, hang on… (And there’s always one.)

Slipstick: Let’s ignore the FACTS presented to you. Let’s also ignore the sun, water vapor, ocean cycles and all the other things we don’t understand. The agreed FACT is only one molecule in 33 is human caused. This is in 85,000 molecules of air. Let’s assume, and you seem to like assumptions as opposed to facts, that we can magically stop adding that one molecule. What effect do you think it would have? Not to mention that all the efforts the greens and government propose and are making will produce very little reduction overall. Waste of time, resources, and dangerous. Answer my question. Why don’t we put our efforts into coping with climate instead of trying to control it? Logic doesn’t seem to apply, only emotion and agenda. The agenda is the part I haven’t figured out yet. It’s so crazy there must be something I’m missing.

And if all you have are platitudes and Algore propaganda, don’t waste my time.

Slipstick:

‘Anyone who doubts that is simply mistaken, no matter how fervently they believe otherwise.’

Wow! You seem pretty sure about your opinion. In fact it sounds like you fervently believe it.

A rise in the equilibrium temperature of a gaseous system with an increasing proportion of CO2 exposed to infrared energy is not an opinion, it’s physics, and yes, I fervently believe in physics.

“You have a v large swimming pool”

The swimming pool is a good analogy. Imagine adding 400 ppm of ink. Then you can’t see the bottom. In the IR range, in the air, CO2 is ink. And radiant heat needs a clear view to emerge. Otherwise less efficient modes of heat transfer are used.

Nick Stokes: “The swimming pool is a good analogy. Imagine adding 400 ppm of ink. Then you can’t see the bottom.”

Totally wrong.

Stop making stuff up.

Wasn’t it Angstrom’s assistant who showed that all the IR that CO2 can absorb was already being absorbed? Adding more CO2 makes no difference unless the sun outputs more IR.

“Totally wrong.”

Your evidence?

According to Beer’s law, the total absorption of light (or IR) by a solute depends on the amount of it in the light path. If you dilute it so that the column is deeper (but same cross-section) the total absorbed is the same.

400 ppmv of ink in a 2.5m deep pool is equivalent to adding a 1mm layer and stirring. And 1 mm of ink is quite opaque.
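Nick’s dilution argument follows from the Beer–Lambert law, under which the transmitted fraction depends only on the product of concentration and path length. The sketch below uses a hypothetical absorptivity `K` and ignores scattering and wavelength dependence; it only shows that a 1 mm layer of ink and the same ink stirred through a 2.5 m column transmit the same fraction of light, whatever value of `K` is chosen.

```python
import math

def transmittance(absorptivity, concentration, path_mm):
    """Beer–Lambert law (natural-log form): transmitted fraction T = exp(-k * c * L)."""
    return math.exp(-absorptivity * concentration * path_mm)

K = 2.0  # hypothetical absorptivity of pure ink, per mm; any positive value gives the same comparison

layer = transmittance(K, 1.0, 1.0)          # a 1 mm layer of undiluted ink
stirred = transmittance(K, 400e-6, 2500.0)  # the same ink stirred into a 2.5 m column at 400 ppmv
print(f"1 mm layer: {layer:.4f}, stirred pool: {stirred:.4f}")
```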

“And radiant heat needs a clear view to emerge. Otherwise less efficient modes of heat transfer are used.”

Less efficient? The troposphere is dominated by convection; a water driven heat pump with a staggeringly large throughput running on idle most of the time. Diffusion confusion yet again. You cannot use fag packet fizzicz to calculate net radiative transfer in isolation, much less attempt it in only two dimensions assuming magic partial mirrors mounted on some toy story spherical Rubik’s cube.

All forms of energy diffusion are integrated all of the time in all directions. The usual cartoon and confusion of colour temperature with actual energy transfer are sheer stupidity and the very root of this entire nonsense.

“Less efficient?”

Yes. Upward infrared through a transparent atmosphere is efficient enough to emit all absorbed solar radiation at the snowball Earth temperature of 255K. The fact that we are at about 288K shows that all operating mechanisms including convection are far less efficient, and require a much larger driving temperature difference.
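The 255K figure is the usual effective radiating temperature from the Stefan–Boltzmann law: absorbed sunlight, averaged over the whole sphere, is set equal to σT⁴. The sketch assumes a solar constant of 1361 W/m² and an albedo of 0.30, typical textbook values that are not given in this thread.

```python
SIGMA = 5.670374419e-8  # Stefan–Boltzmann constant, W m^-2 K^-4

def effective_temperature(solar_constant, albedo):
    """Temperature (K) at which a sphere radiates away all the sunlight it absorbs."""
    absorbed = solar_constant * (1 - albedo) / 4  # divide by 4: disc intercept spread over the sphere
    return (absorbed / SIGMA) ** 0.25

t_eff = effective_temperature(1361.0, 0.30)  # assumed solar constant and albedo
print(f"Effective temperature: {t_eff:.1f} K")
```

With these inputs the result is close to 255K, about 33K below the 288K surface average quoted above.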

Nick, Harry et al.

You don’t need ink or swimming pools.

Milk is 87% water. If you add a teaspoon of milk to a glass of clear water (about half a litre) you will not be able to see the bottom of the glass. Much of the visible light will have been reflected – yet the reflective constituents (fats and casein) only represent about 0.06% (600 ppm) of the liquid.
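The 600 ppm figure can be roughly reproduced, assuming a teaspoon holds about 5 mL and that fat plus casein make up about 6% of milk (typical values, not stated in the comment). The variable names are just for illustration:

```python
teaspoon_ml = 5.0       # assumption: one teaspoon of milk
water_ml = 500.0        # "about half a litre" of clear water
fat_plus_casein = 0.06  # assumption: fraction of milk that is fat plus casein

milk_fraction = teaspoon_ml / (teaspoon_ml + water_ml)
scatterer_ppm = milk_fraction * fat_plus_casein * 1e6
print(f"Fat and casein in the glass: about {scatterer_ppm:.0f} ppm")
```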

Try it.

John C. April 7, 2016 at 10:36 am

Slipstick: Let’s ignore the FACTS presented to you. Let’s also ignore the sun, water vapor, ocean cycles and all the other things we don’t understand. The agreed FACT is only one molecule in 33 is human caused.

That is not a FACT of any sort.

Nick,

Your ink analogy is faulty.

In the first place, the “pool” with a million balls is already inky from 30,000 H2O molecules. Second, we’re not adding 400 extra CO2 molecules, but only 115. How much inkier will 115 extra molecules out of 30,285 make the pool? To say nothing of the other GHGs at even lower concentrations.

One extra CO2 molecule per 10,000 might have a measurable effect in parts of the atmosphere with low H2O concentrations, but in most places H2O totally swamps the radiative effect of CO2, above the level required for life. Adding more CO2 generally has a negligible effect, as in fact has been observed over the past century or more of rising CO2.

The effect is so slight that Callendar, proponent of (beneficial) man-made GW during the 1930s, considered his hypothesis shown false by the 1960s, more frigid despite much more CO2 than in the ’30s. And he was right.

Under yet more CO2, the ’70s were still cold. Then the PDO flipped and the planet warmed slightly from the late ’70s to late ’90s. Since then, GASTA has stayed flat or declined. The long-awaited super El Nino has finally occurred, producing a probably temporary, ever so slight uptrend since the super El Nino of 1997/8, but in all likelihood we’re headed back down in coming decades, thanks to the PDO and AMO oceanic oscillations.

Nick Stokes says:

400 ppmv of ink in a 2.5m deep pool is equivalent to adding a 1mm layer and stirring. And 1 mm of ink is quite opaque.

Fuzzy thinking. You’re just making things up and asserting them as fact. The depth of the pool is not your argument, and it also disregards the area of the pool. What matters is 400 ppm.

When I was a kid my aunt used to add what was called “bluing” to her clothes washer. It was supposed to make ‘whites whiter’.

The bluing was in a bottle, and it was as dense and dark as any India ink. She would pour a few tablespoons into the water, and my cousins and I would watch amazed as the bluing disappeared. It did not visibly change the transparency of the water at all.

Every argument made about the dangers of more CO2 amounts to evidence-free hand waving. Those arguments have remained unchanged for decades. But since hand waving is all you’ve got, that’s what you use.

If you were an honest skeptic, you would have more options.

Gloateus Maximus April 8, 2016 at 9:56 am

Nick,

Your ink analogy is faulty.

In the first place, the “pool” with a million balls is already inky from 30,000 H2O molecules. Second, we’re not adding 400 extra CO2 molecules, but only 115. How much inkier will 115 extra molecules out of 30,285 make the pool? To say nothing of the other GHGs at even lower concentrations.

Wrong, in the 15 micron band H2O does not significantly absorb compared with CO2:

http://i302.photobucket.com/albums/nn107/Sprintstar400/H2OCO2.gif

dbstealey April 8, 2016 at 10:38 am

Nick Stokes says:

400 ppmv of ink in a 2.5m deep pool is equivalent to adding a 1mm layer and stirring. And 1 mm of ink is quite opaque.

Fuzzy thinking. You’re just making things up and asserting them as fact. The depth of the pool has nothing to do with your argument, which also disregards the area of the pool. The only thing that matters is 400 ppm.

Yes, your thinking is indeed fuzzy, stealey. The depth of the pool is indeed critical. Look up Beer’s law: absorbance is proportional to concentration × path length.

When I was a kid my aunt used to add what was called “bluing” to her clothes washer. It was supposed to make ‘whites whiter’.

The bluing was in a bottle, and it was as dense and dark as any India ink. She would pour a few tablespoons into the water, and my cousins and I would watch amazed as the bluing disappeared. It did not visibly change the transparency of the water at all.

Perhaps you should have asked your aunt how laundry blue works. The dye, say Prussian Blue, is absorbed onto the clothes and therefore removed from solution, what you’ve done is to dye the clothes blue (v slightly). The slight blue color added to the clothes counteracts the dingy yellow color of the old clothes and makes them appear white.

Nick Stokes is right here. Depth has everything to do with it and area has nothing to do with it.

As far as depth is concerned, you need to take the ratios of the depths and the 400 ppm to million ppm. 400/1 000 000 has the same ratio as 1 mm/2500 mm. So if the pool were 25 m or 25 000 mm deep, you would need 10 times as much ink, or 10 mm of ink.

As for area, that does not matter. If you had a 2.5 m long straw or a 2.5 m pool the size of a city, it would still take enough ink to cover the top with 1 mm.
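Werner’s ratio argument reduces to a one-line formula: the thickness of an equivalent pure-ink layer is the concentration times the depth, and the pool’s area never enters it. The function name is hypothetical, for illustration only.

```python
def equivalent_layer_mm(concentration_ppm, depth_mm):
    """Thickness (mm) of a pure-ink layer holding the same ink as a stirred column of depth_mm."""
    return concentration_ppm * 1e-6 * depth_mm

# Area does not appear: a 2.5 m straw and a 2.5 m city-sized pool give the same layer.
for depth_m in (2.5, 25.0):
    layer = equivalent_layer_mm(400, depth_m * 1000)
    print(f"{depth_m} m deep at 400 ppm -> {layer:.1f} mm of ink")
```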

(On the best science site, we cannot let slip ups go unchallenged. ☺ Agreed?)

Werner,

I’ll agree that the 400 ppm is the relevant metric. Neither depth nor area has anything to do with Nick’s claim of making the water opaque, because that wasn’t his argument.

It doesn’t matter if the pool is 2.5 cm deep, or 2.5 metres, or 2.5 miles deep. Or wide. The 400 ppm (or as you say, the one molecule in 10,000) is what matters.

Also, from personal observation I don’t accept Nick’s belief that one molecule of ink in 10,000 of water will make the water opaque.

(On the best science site, we cannot let slip ups go unchallenged. Agreed? ☺)

Actually, Nick said that 4 molecules in 10,000 will make it opaque. Now turning to CO2, what was not directly addressed was the fact that nature already had 2.8 molecules of CO2 in 10,000 in 1750. Does man’s additional 1.2 molecules of CO2 in 10,000 make a further difference? And most would agree that, due to the logarithmic effect, this further addition by man makes very little difference to temperature.
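Neither comment gives a formula for the logarithmic effect. One widely cited form is the simplified CO2 forcing expression ΔF = 5.35 ln(C/C0) W/m² from Myhre et al. (1998); the sketch below uses it only to show the shape of the curve, converting 2.8 and 4.0 molecules per 10,000 to 280 and 400 ppm. It gives radiative forcing, not temperature, and the 520 ppm case is a hypothetical further increase of the same size.

```python
import math

def co2_forcing(c_ppm, c0_ppm):
    """Simplified CO2 radiative forcing in W/m^2: 5.35 * ln(C / C0)."""
    return 5.35 * math.log(c_ppm / c0_ppm)

first = co2_forcing(400, 280)           # 2.8 -> 4.0 molecules per 10,000
next_increment = co2_forcing(520, 400)  # hypothetical further 120 ppm
print(f"280 -> 400 ppm: {first:.2f} W/m^2; 400 -> 520 ppm: {next_increment:.2f} W/m^2")
```

Each equal step in concentration adds less forcing than the one before, which is what “logarithmic” means here; how much temperature change follows is a separate question about sensitivity.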

“Upward infrared through a transparent atmosphere is efficient enough to emit all absorbed solar radiation at the snowball Earth temperature of 255K. The fact that we are at about 288K shows that all operating mechanisms including convection are far less efficient, and require a much larger driving temperature difference.”

Absolute rubbish, we are not talking about an idealised transparent atmosphere and you’re still thinking in terms of isolated transfer mechanisms. Take a look at the temperature gradient through the entire atmosphere. Aggregate diffusion is heavily skewed by convection, which is in large part why earth has an enormous stratospheric temperature inversion (and Venus does not). The tropospheric lapse rate is linear due entirely to convection within reducing density. The assumption from colour temperatures that CO2’s effective radiative altitude for 2-dimensional SB calculation purposes is below the tropopause is not only wrong, it’s ludicrous. It confuses energy flux with temperature. Mixed gases with condensing components do not behave like black bodies or even grey bodies. Point a pyrometer anywhere you like, it cannot tell you anything about net aggregate energy transfer. Doing the physics properly requires integration of all forms of transfer in three dimensions at the micro scale, particularly the latent heat component inherent to cloud evolution. Back of a fag packet shell games in isolation are not even wrong.

A recent study showed an increase in atmospheric CO2 of 22 ppm during the period 2000-2010 produced an increase in radiative forcing of 0.2 W/m^2. That’s close to the predicted value, and evidence that added CO2 produces warming.

http://www.iflscience.com/environment/scientists-find-direct-evidence-atmospheric-co2-heats-earth-s-crust

http://www.nature.com/nature/journal/vaop/ncurrent/full/nature14240.html

The first link does not work and they want money to see the second. However the dates could not be worse to prove a point! 2000 had a La Nina and 2010 had an El Nino.

Werner, both links work on my machine. The bottom link is to an abstract where you can find the information I provided. Finally, the study shows an increase in radiative forcing and does not measure surface temperature.

“Why don’t we invest in better preparing for what nature throws at us whether it’s hot or cold and stop goofing around trying to control it with some trumped up idea that co2 is poison.”

Such a relevant, intelligent, logical question. And you know what….I cannot think of ONE logical, intelligent, relevant reason except one (but the idea itself is insane mind you)…if you wanted to control the world’s money for any reason…play with it, make buildings out of it, redistribute it, undermine your enemies….economy, people…you’d have to attack at the very root of prosperity. Which for the Western world has been the increasing ability to move freely about, and live in relative comfort, relatively inexpensively. Fossil fuels. Movers and shakers can only move and shake because of them.

Having so many powerful industrial giants sucks away vast amounts of power (money) from ever being focused in one centralized place (one world government). You gotta stop the diffusion….close down access from a lot to a few. But it would be easy to see that such an agenda was mindbogglingly dangerous and that those who believe in it were absolutely bonkers…that is… if they went after powerful and necessary business men and women and investors outright. So what would be a very clever way of bringing them down without looking like bat crap crazy, jealous, power hungry hyenas? Attack using a “scientific hypothesis” about all those evil, nasty emissions that can only be attributed to one source: fossil fuels….break down their profit margins with taxes, ruin their reputations with accusations and make the whole world believe that they are KILLING THE PLANET, and they couldn’t give a rat’s behind about it either.

Invite all the little, pathetic, powerless people of the world to become superheroes for Earth….first, tell them how marginalized they really are….teach them that there’s 97% of them and only 3% of the big, bad enemy….stoke class warfare…and movements like Occupy Everything….pretend you are supporting their progress while squeezing them in subtle ways to make their suffering even more acute. You gotta keep them down so you can point out how down they are all the time! Make them angry. And scared. And feed them propaganda 24/7 from every angle. And hope with all you have in you that the climate doesn’t do what it most likely and naturally will….reach a certain point and start to cool off……BEFORE your plan is successful. After all, it would look stupid if the world starts to cool off like it always has and you haven’t implemented any of your “world saving strategies” before it does! In fact….the closer it gets to that actually happening…the more shrill and panicked and terrifying you might have to become in order to push it all past the tipping point.

And the best part is….between the advances of technology and the age old fact that some people are so stupid, so gullible, soooooo incredibly weak in the face of even basic suggestions… you wouldn’t even have to form an old fashioned physical conspiracy! No meetings….no secret handshakes….no overhead..no heads on spikes. Nothing. Just hit the public over and over and over again in their emotional soft spots….home….family….hopes….dreams….their religion….their futures….their darkest fears…..death…..destruction….insecurity….loss.

You don’t need to crest a hill with an overwhelmingly large army arrayed in shining battle gear anymore to bring your foes to their knees! Silly! Just make them feel like something that bad and that awful is coming for them if they don’t do something.

(Hint…if you spend money on preparations and adaptations for future natural disasters…they’ll realize doing so is much easier, productive, and visibly reassuring. It would give people hope and comfort and peace….and you can’t do a d@mned thing to push an agenda on hopeful, comfortable, peaceful people!)

Yes thanks

It will be interesting to see how the Satellites and NOAA/GISS deal with the sudden drop in Sea Temps.

Will they ignore it, adjust it out or what?

See

http://notrickszone.com/2016/04/06/global-sea-surface-temperatures-have-fallen-sharply-cooled-surprisingly-negative-global-temperature-anomaly-by-end-of-2016/#sthash.vdno3ipL.dpbs

Or ignore it until they can adjust it, or simply claim that it all proves their point anyway.

Re: “effect”

The phrase “CO2 has an effect” describes something very small (if indeed it exists at all), but it has great potential to mislead. This hyper-technical phrase evokes in the average reader’s mind the false implication that CO2 has a controlling effect. In tort law, when a potential, very small cause is OVERWHELMED by another, controlling cause, the controlling cause is called a supervening cause. Here, the effect of natural drivers is the supervening cause of climate change.

To mention human CO2 emissions is unhelpful at best, damaging to the truth about causation at worst.

If an effect is obliterated by another cause, here natural climate drivers, it is accurate and wise (we are in a WAR for science realism where word-twisting by the likes of St0kes can easily fool the uninformed) to leave the conjecture about human CO2 emissions’ potential “effect” completely aside.

************************************************************

Remember Major Burns on M.A.S.H.? If he were made a general, he would lose the war, getting bogged down in bickering over hyper-technical nit-picking: winning wars takes strategy as well as technical expertise. Wisdom must guide knowledge.

*****************************************************

The STOP in warming, so far as meaningful measurement goes, IS. It has no “end” at this point.

*****************************************

ANOTHER GREAT ARTICLE, MR. BROZEK — THANK YOU!

You are welcome! But do not ignore the giants who directly or indirectly contributed, namely David Hoffer and Nick Stokes.

Maybe I’ve missed some posts but the last I read the ‘pause’ was somewhere around 18 years. What caused the sudden jump to 23? What did I miss?

Looks like you missed THIS post, Mr. G.:

2016 - 1993 = 23

We are talking about two different things. See an earlier post of mine that clearly describes the differences here:

http://wattsupwiththat.com/2014/12/02/on-the-difference-between-lord-moncktons-18-years-for-rss-and-dr-mckitricks-26-years-now-includes-october-data/

In short, the 18 years had a slight negative slope. The present 23 years has a positive slope, but it is not statistically significant enough for climate scientists to be sure we really do have warming over the 23 years.

As an analogy, suppose we have a political poll that says one candidate has 40% and the other has 38%. But then they say the margin of error is 3%, 19 times out of 20. So with the margin of error considered, we really cannot be sure which candidate is ahead.

Ok that makes sense now. I knew there was something fairly obvious I was missing. Thanks for clarifying.

“it is not statistically significant enough for climate scientists to be sure”

But the 23-year trend observed was actually 0.92°C/Century. And while with different weather it just might have been as low as zero, it might equally have been as high as 1.84°C/Century. This does not really have the attributes of a pause.

True, but Phil Jones and the rest of the climate science community, rightly or wrongly, use that yardstick for certain conclusions. Is that not correct?

“use that yardstick for certain conclusions”

People use the existence of statistical significance (SS, at 95%) as a yardstick. They don’t use the non-existence of SS. Lack of SS just means you don’t have enough data to be sure.

The trend of RSS since Aug 2010 had a lower CI of -0.852. Not SS (relative to 0). But the trend itself was 5.29°C/Century. That isn’t a pause. Actually, there is an interesting paradox here. Measured from 8/2010 to January, the lower CI was -0.751, higher than the value to March. But we’ve had two very hot months since, and we’re less certain of a positive trend? The reason is that the sudden rise increased the estimate of variability more than it increased the trend.

Thank you! That explains a puzzle I had with respect to Hadcrut4. I wrote:

For Hadcrut4.4: Since October 2001: CI from -0.016 to 1.812 (Goes to January)

But with a high February anomaly, it got extended back to August as follows.

Temperature Anomaly trend

Aug 2001 to Feb 2016

Rate: 1.007°C/Century;

CI from -0.003 to 2.018;

t-statistic 1.954;

Temp range 0.443°C to 0.589°C

The Pause numbers for 18 years (or 18 years, X months) are based on the definition of a regression line with a slope less than 0.0

The 23 year numbers use the definition of a regression line with a slope not statistically significantly different from 0.0

The issue of statistical significance is very important, as it takes into account, in some fashion, the natural variability of the data.
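The two definitions can be checked directly against the Trend Viewer numbers quoted in this post. A minimal sketch, assuming each quoted CI is the rate plus or minus 1.96 standard errors (the quoted t-statistics are consistent with that, but the viewer's exact method is not confirmed here): it recovers the standard error and t-statistic from the CI, then applies the "slope below zero" test and the "CI excludes zero" test.

```python
from dataclasses import dataclass

Z95 = 1.96  # assumed two-sided 95% normal quantile used for the CI


@dataclass
class Trend:
    name: str
    rate: float     # °C/century
    ci_low: float   # lower 95% bound, °C/century
    ci_high: float  # upper 95% bound, °C/century

    def std_error(self) -> float:
        # Half-width of the CI divided by the 95% quantile
        return (self.ci_high - self.ci_low) / 2 / Z95

    def t_stat(self) -> float:
        return self.rate / self.std_error()

    def is_pause(self) -> bool:
        # "18-year" definition: the fitted slope itself is below zero
        return self.rate < 0.0

    def is_significant(self) -> bool:
        # "23-year" definition: the CI does not include zero
        return self.ci_low > 0.0 or self.ci_high < 0.0


trends = [
    Trend("RSS May 1993 to Feb 2016", 0.871, -0.022, 1.764),
    Trend("UAH6.0beta5 Jan 1993 to Feb 2016", 0.911, -0.009, 1.830),
    Trend("Hadcrut4.4 Aug 2001 to Feb 2016", 1.007, -0.003, 2.018),
]

for tr in trends:
    print(f"{tr.name}: t = {tr.t_stat():.3f}, "
          f"negative slope: {tr.is_pause()}, significant: {tr.is_significant()}")
```

All three slopes are positive, yet none is significant, which is exactly the gap between the two definitions.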

The error bars on RSS are actually worse than that.

They are so big that it’s really pointless to compare satellite temperatures with land temperatures

or to compare satellite temperatures with GCMs.

As to the pause..

when can we see the goofy approach of starting with today and going back in time to measure a pause?

That could take quite a while. See Nick Stokes’ excellent comment here on this point:

http://wattsupwiththat.com/2016/04/04/march-was-3rd-warmest-month-in-satellite-record/#comment-2182271

When the La Nina takes effect of course. Then you will be able to read on wuwt that the pause has lengthened.

Slipstick, you just did the propaganda response. Make a statement without any facts. You didn’t explain how this one human-caused molecule in 85000 was going to have the effect you believe. And what percent reduction do you think will stop what you believe? You might need to figure out an economical method to remove as much co2 as possible to save the planet. Of course this will needlessly kill off many plants and cause many human and animal deaths. But you may be OK with that.

John C,

Before I can respond, to what does 85000 refer?

Molecules of what we call air in a specific volume. 400 parts per 1,000,000 is a FACT we all agree on. Do the math with some rounding of course. Of the approximately 34 molecules in 85,000 parts, only one is attributed to humans. There is some difference in mass but not enough to really make a difference in basic physics. The re-radiation of absorbed heat is well known. I am only concerned with quantity because I’m applying critical thinking. I can’t see how what is claimed is possible. If co2 were at a much higher level, and I mean much higher, you may have a point. The fact that so little of co2 is human caused and our ability to reduce it and maintain a livable planet, defies logic without some very big changes.

John C.,

You’re misunderstanding two things. First, a single CO2 molecule can interact repeatedly with photons, redistributing their energy without ever using up the CO2. Second, concentration is only part of the equation; the other part is the sheer scale of the atmospheric air column. Here’s an analogy I wrote a long time ago to try and illustrate:

Assume a square glass jar that is 10 grains on a side. That’s 100 grains in a single layer. Now imagine the jar is 100 grains tall. 10,000 grains in all. Make 9,996 white and just 4 red, same ratio as your example above. Suppose the jar is about 10 cm tall. Now, instantly make all the white grains invisible. What would you see?

Well, you’d see a 10 cm tall jar that is mostly empty, with a fleck of red here and there. You could easily draw a vertical line from the bottom of the jar to the top without hitting any of those red flecks. In fact, you could draw a lot of them.

Now, stack thousands of those jars on top of each other in a tower 14 kilometers high. You’ll need a stack of 140 thousand jars. Now try drawing a line from bottom to top without hitting a red grain. You can’t. In fact, not only that, you can’t even do it without hitting thousands of red grains.

I’m a confirmed skeptic, but radiative physics is a bit more complex than simply drawing conclusions from concentration ratios.
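The jar-tower arithmetic can be made concrete. A minimal sketch, assuming 4 red grains in each 10,000-grain jar (400 ppm), a vertical line that passes through a single column of grains, and each grain being red independently with probability 4/10,000 (a simplification: the analogy fixes exactly 4 red grains per jar). The miss probability is kept as a base-10 logarithm because the probability itself underflows a float.

```python
import math

GRAINS_PER_SIDE = 10     # square cross-section, 10 x 10 grains
GRAINS_TALL = 100        # grains stacked vertically in one jar
RED_PER_JAR = 4          # 4 in 10,000 = 400 ppm
JAR_HEIGHT_M = 0.10      # each jar is about 10 cm tall
TOWER_HEIGHT_M = 14_000  # 14 km tower of jars

grains_per_jar = GRAINS_PER_SIDE ** 2 * GRAINS_TALL
p_red = RED_PER_JAR / grains_per_jar
jars = round(TOWER_HEIGHT_M / JAR_HEIGHT_M)

# A vertical line through one column of grains crosses GRAINS_TALL grains per jar.
grains_on_line = jars * GRAINS_TALL
expected_red_hits = grains_on_line * p_red

# Chance of crossing no red grain at all, assuming independent placement;
# computed as log10 because the raw probability underflows to 0.0.
log10_p_miss_all = grains_on_line * math.log10(1 - p_red)

print(f"{grains_per_jar} grains per jar, {jars} jars in the tower")
print(f"expected red grains on one vertical line: {expected_red_hits:.0f}")
print(f"chance of missing every red grain: about 10^{log10_p_miss_all:.0f}")
```

In one jar a line expects to hit a red grain only 4% of the time; over 140,000 jars the expected count runs into the thousands, which is the point of the analogy.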

John C: I hadn’t refreshed when I posted below. I figured it out …3%? Guess I was close….

Only one is attributed to humans? Using your scale, the current concentration is 34/85000 and since ~1960 the concentration has risen from ~26 to 34. Are you attributing that increase to something other than human activity? If so, what?

Perhaps it’s the ratio of CO2 that is man-made? (As opposed to natural CO2 in the atmosphere) Just guessing here.

There have been many posts on this and I am certainly not going to get into it in this post. As well, there is strong disagreement. Here is my understanding:

Out of 100 CO2 molecules that enter the atmosphere each year, 3 are due to man and 97 due to natural sources. However these 3 due to man added up over the last 250 years so that of the present 400 parts per million, 120 parts per million is the cumulative total due to man. So we caused a 40% increase in CO2. But so what? The important thing is that this has not contributed to CATASTROPHIC warming, nor will it in the future.
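The numbers traded above (34 in 85,000, "only one" human molecule, 120 of 400 ppm, a 40% increase) can be reconciled with a few lines of arithmetic. A minimal sketch, assuming the 280 ppm baseline implied by the 400 − 120 in the comment above; it contrasts the 3% annual-flow share applied to today's stock with the cumulative human share of the stock.

```python
PPM = 1_000_000
SAMPLE = 85_000  # John C.'s illustrative count of air molecules


def co2_molecules(ppm, sample=SAMPLE):
    """CO2 molecules expected among `sample` air molecules at a given ppm."""
    return ppm * sample / PPM


now = co2_molecules(400)
baseline = co2_molecules(400 - 120)  # assumed ~280 ppm baseline, per the comment
human_stock = co2_molecules(120)     # cumulative human share of today's stock
flow_reading = 0.03 * now            # 3% annual-flow share applied to the stock
rise = 120 / (400 - 120)             # increase relative to the baseline

print(f"CO2 per {SAMPLE:,} molecules: {now:.1f} now, {baseline:.1f} at baseline")
print(f"3% of today's count: {flow_reading:.1f}; cumulative human share: {human_stock:.1f}")
print(f"rise over baseline: {rise:.0%}")
```

On these assumptions the "one molecule" figure comes from applying the yearly flow share to the stock, while the cumulative human share is about ten of the 34, and 120/280 is roughly the quoted 40%.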

davidmhoffer: Thank you for the analogy with the glass jars (14km high).

I’m a confirmed skeptic, too (does it show?), but our warmist politicians (who make the carbon laws) are themselves “drawing conclusions from concentration ratios”.

I guess the thing we need to take notice of is that little bit in “ppmbv” (I’m assuming your glass jars are the “bv” bit).

I take issue with this phrase only.

The truth is we are not sure what caused the LIA and therefore we don’t know what is causing the post-LIA warming. The most popular theory is that the LIA was caused by low solar activity helped by unusual volcanic activity, especially at the beginning (13th century) and at the end (1815).

We do know that on a millennial scale the planet is cooling due to lower obliquity (axial tilt) and low summer insolation in the Northern Hemisphere due to unfavorable precession.

The most reasonable explanation is that LIA was an anomalous cold period caused by unusual conditions and the planet has naturally and gradually warmed to the level that corresponds to its present orbital configuration and perhaps a bit more due to a rebound effect and helped by the increase in GHGs.

The most reasonable expectation is that after this warming period the world should resume its progressive cooling. The more we warm, the stronger the opposing cooling forces get. Paleoclimatology shows that GHGs are not a strong driver of temperatures by themselves, since the second half of the Holocene showed progressive cooling despite increasing GHG concentrations.

Most people assume that in the absence of warming forcings global average temperatures should remain more or less level, and that in the presence of indefinitely increasing levels of CO2 in the atmosphere temperatures should indefinitely increase. Both assumptions are wrong. Global temperatures work like a roller coaster: once the highest point was reached in the present interglacial the only way is down, even if the fall is made of ups and downs. As high levels of CO2 did not prevent the planet from entering glacial conditions previously, and they did not prevent the cooling in the 1945-1975 period, we should not expect them to prevent a global cooling in the near future.

In conclusion you should not expect that it will continue to warm. It might continue to warm for some more time or not, but a peak warmth should be reached at some point and then global cooling should resume. Let’s hope that peak warmth was not 2015. I hope we continue getting more record warm years in the future, because it beats the alternative.

Earth has been in a long-term cooling trend for 3000 years, ie since the end of the Minoan Warm Period. The East Antarctic Ice Sheet quit retreating at that time, for instance.

Peak warmth of the Minoan WP was a little less than the Holocene Optimum, but lasted less time. The peaks of the Roman, Medieval and so far Modern WPs have each been lower than for the preceding WP. The trend is down and not our friend.

We may however be in another of the super interglacials which, based upon the orbital eccentricity cycle, occur at roughly 400K year intervals, in which case “catastrophic” warming could occur naturally over the next 20,000 years or so, ie partial melting of the Southern Dome of the Greenland Ice Sheet.

Earth has been in a long-term cooling trend for 5200 years, when the Neoglacial subperiod of the Holocene started. See Thompson, Lonnie G., et al. “Abrupt tropical climate change: Past and present.” Proceedings of the National Academy of Sciences 103.28 (2006): 10536-10543.

There are no super interglacials occurring at roughly 400K year intervals. MIS19 took place 800K years ago and was about 12,000 years long, slightly more than the Holocene so far. The astronomical signature of MIS19 is almost identical to the Holocene: the closest match of all interglacials in the past million years.

Yes, you could argue that the cooling trend started at the end of the HCO c. 5 Ka, but the Minoan was as warm, briefly, as the HCO.

Dunno what data you rely upon, but the fact is that the Southern Dome of the GIS melted completely or almost so during the interglacials of c. 800 and 400 Ka. During the warmer and longer than now Eemian, it partially melted.

I can’t link to studies on the length of MISes 19 and 11, since they’re paywalled, but both interglacials were warmer and longer than the Eemian, up to 30,000 years in duration (or more, depending upon how you count).

I don’t know what you are talking about. Antarctica has been frozen for millions of years. The Antarctic ice cores do not extend further into the past because the bottom melts away due to geothermal heating, or the bottom layers get messed up by horizontal shearing. They are now looking for places that could have older ice, up to 1.5 million years, because they accumulate less ice, not more.

https://www.sciencedaily.com/releases/2013/11/131105081228.htm

MIS 11 is longer than MIS 1 (Holocene), probably because the precession peak (Northern summer insolation) and the obliquity peak are separated by a little less than 10,000 years so the rise in insolation from precession compensates the fall in insolation from obliquity. But in MIS 1 the peaks are coincident, so the next peak in precession is almost 20,000 years apart with plenty of time for obliquity to fall almost to the bottom of its cycle without significant northern summer insolation from precession.

MIS 19 800k years ago was not longer than MIS 1. Nor was it warmer. Probably about the same or slightly cooler judging from deuterium levels.

The following figure is from Pol, K., et al. “New MIS 19 EPICA Dome C high resolution deuterium data: Hints for a problematic preservation of climate variability at sub-millennial scale in the “oldest ice”.” Earth and Planetary Science Letters 298 (2010): 95-103.

http://i1039.photobucket.com/albums/a475/Knownuthing/Figure%209_zpsl52xhrtm.png

MIS 1 is in red and MIS 19 in black. From highest deuterium level to the end of the plateau phase MIS 19 was about 11,000 years, and MIS 1 has already extended to 10,500 years. The accelerated cooling starts at about half way down in the obliquity cycle despite rising summer insolation from precession.

There is no basis to say that the lows in the eccentricity 400k years cycle produce longer interglacials, quite the contrary, the lower the eccentricity, the lower the northern insolation from precession and the lower the forcing to warm during precession peaks. If you check the 65°N summer insolation curves you can quickly see that the highest values are reached during eccentricity highs like the one that took place at MIS 15 200k years ago.

MIS 11 and MIS 1 are really very different astronomically so we should not expect them to behave similarly in terms of temperatures or duration. This figure shows that they can be aligned by precession or by obliquity, but not by both since the peaks are displaced. MIS 1 in red and MIS 11 in black.

http://i1039.photobucket.com/albums/a475/Knownuthing/MIS11Tzedakis_zps4fubj4yy.png

GIS means Greenland Ice Sheet. I should have spelled it out.

Here is an old link on the melting of the Southern Dome of the GIS:

http://www.livescience.com/7331-ancient-greenland-green.html

Data from Antarctica might differ, but it now appears that the Southern Dome melted twice, once during MIS 19 and again during MIS 11. As I mentioned, it partially melted during MIS 5, ie the Eemian Interglacial.

I think you got it wrong because you didn’t actually read the paper, Gloateus. The paper you are referring to is this one:

http://www.ncbi.nlm.nih.gov/pmc/articles/PMC2694912/

And about the dating of the material found in the ice cores they say:

All four dating methods suggest that the Dye 3 silty ice and its forest community predate the Last Interglacial (LIG, ~130-116Ka) (Fig 2), which contrasts with the results of recent models suggesting that Dye 3 was ice-free during this period (27, 28). Indeed, all four dating methods give overlapping dates for the silty ice between 450Ka and 800Ka (Fig. 2), exceeding the current record of long-term DNA survival from Siberian permafrost of 300-400Ka (9). However, due to the many assumptions and uncertainties connected with the interpretation of the age estimates (7), we cannot rule out the possibility of a LIG age for the Dye 3 basal ice.

In plain words they think it is older than 450k years and younger than 800k years. That rules out MIS 11 that took place 425k years ago, so it should be MIS 13, MIS 15, MIS 17 or MIS 19. However not all is lost, because since the dating methods are so uncertain the biological material could actually be from the Eemian, so any interglacial is a candidate.

There is simply no support for any theory about periodic super interglacials. The Holocene is just like any interglacial, about to end in one or two millennia at most.

Javier,

I read that paper and subsequent ones which found material from 800 Ka.

The Holocene might well last another 50,000 years. Or not. But the fact is that super interglacials have happened and could again.

Even if the organic material from Greenland is only 400,000 years old, it shows that the Southern Dome melted then, in a very long interglacial.

Please see comment by Bill Illis:

https://wattsupwiththat.com/2014/06/25/study-the-greenland-ice-sheet-collapsed-400000-years-ago/

Why can’t we quantify how much global warming is due to step inputs from El Nino? Presumably each La Nina doesn’t fully undo each El Nino. Any remaining trend may then be attributed to third world co2, since northern hemisphere co2 consumption by farm crops exceeds northern hemisphere production of co2.

Of course each La Nina should “fully undo” each El Nino. IN THE LONG TERM.

Otherwise GMT would be set in stone on a rising trend from millennia ago.

ENSO redistributes heat in the climate system (~93% of which resides in the oceans) into the atmosphere. It all comes from the Sun ultimately.

Without an internal source of heat from the ocean bed, PDO/ENSO should cancel.

That it no longer does is due to the GHE of CO2 – up 40% due to anthro emissions.

CO2 is a tiny portion (400 ppm) of total GHG, a very distant second to H2O (perhaps 30,000 ppm on a global average basis).

Over the past 150 years, the naturally warming Earth has benefited from having about one more CO2 molecule per 10,000 dry air molecules, ie up from around three to four. Two more such molecules would be even better for plants and other living things.

Toneb. Co2 produces downwelling infrared which only penetrates one micrometre, so it cannot warm the ocean, nor concentrate its force in such a localised part of the ocean. There is good satellite imagery which points at an ocean bed contribution. Also there is a 60 year cycle which would seem to rule out co2. There may be proxy evidence for El Nino going back centuries, further reducing the role of co2 in El Nino. If co2 plays a role in El Nino, then what role does it play in La Nina?

The amateurish practice of fitting linear regression lines to woefully short stretches of record and then computing “the confidence intervals” based upon unverified models (e.g. “red noise”) of global temperature variability yields highly arbitrary estimates of physically meaningless “trend.” If an unequivocal indication of actual low-frequency variability is desired, a well-designed low-pass filter with a cutoff near one cycle per decade has to be employed. The results of such filtering are exact.

“a well-designed low-pass filter with a cutoff near one cycle per decade has to be employed. The results of such filtering are exact.”

Linear regression is a filter. It is a Welch filter on the differences. Or a differencing of Welch filtered.

The slope of linear regression (which is the metric used in this article) is a very crude BAND-pass filter and does NOT completely display the low-frequency content of the data series, as would a well-designed low-pass filter.
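Nick Stokes' statement that linear regression is "a Welch filter on the differences" is an exact identity for equally spaced data: the OLS slope equals a weighted mean of the successive differences, with parabolic weights proportional to k(n − k), largest mid-record and smallest at the ends. A minimal sketch that checks this numerically on a hypothetical random series; it says nothing either way about the low-pass filtering alternative.

```python
import random


def ols_slope(y):
    """Ordinary least-squares slope of y against time index 0..n-1."""
    n = len(y)
    t_mean = (n - 1) / 2
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    sxy = sum((t - t_mean) * (v - y_mean) for t, v in enumerate(y))
    return sxy / sxx


def welch_weights(n):
    """Parabolic (Welch-shaped) weights k(n-k) on the n-1 differences, normalised to sum to 1."""
    raw = [k * (n - k) for k in range(1, n)]
    total = sum(raw)
    return [w / total for w in raw]


def slope_from_differences(y):
    """Welch-weighted mean of the first differences y[k] - y[k-1]."""
    w = welch_weights(len(y))
    diffs = [y[k] - y[k - 1] for k in range(1, len(y))]
    return sum(wk * dk for wk, dk in zip(w, diffs))


# Hypothetical monthly series: small trend plus white noise
random.seed(0)
y = [0.001 * t + random.gauss(0, 0.2) for t in range(120)]
print(ols_slope(y), slope_from_differences(y))  # the two agree to rounding error
```

Because the weights vanish at the ends, a single hot month at the end of a record moves the slope only through the one difference leading into it.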

J Martin, the oceans could also presumably be assumed to have a nice layer of water vapour hanging over them, which would swamp any effect CO2 is supposed to have

Apparently Brozek has used a test with low statistical power. The standard regression analysis shows statistical significance at the 99.9999% confidence level (p=1.21e-7). The slope of the regression line is 0.0881°C/decade, with a 95% confidence interval of 0.056-0.120°C/decade. Data source for UAH version 6 beta 5: http://vortex.nsstc.uah.edu/data/msu/v6.0beta/tlt/tltglhmam_6.0beta5.txt

The statistical significance of “standard regression analysis” is predicated upon entirely independent trials of linear relationship (i.e., “white noise” plus trend), instead of serially autocorrelated data, such as found in a geophysical setting.

Brozek’s conclusions do not take into account serial autocorrelation. If that is an issue, the evidence for that needs to be presented here. A previous study demonstrates the major global temperature trends are positive, even when controlled for serial autocorrelation.

jpaullanier,

In other words, satellite measurements are very accurate. That contradicts the alarmist talking point that satellite data is NFG.

“Brozek’s conclusions do not take into account serial autocorrelation.”

They certainly do. AR(1). There is a discussion here. Autocorrelation has a small effect on the trend, but greatly increases uncertainty.
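Why autocorrelation barely moves the trend but widens the CI can be sketched with one common AR(1) correction: the effective-sample-size adjustment n_eff = n(1 − r)/(1 + r), of the kind used by Santer et al., where r is the lag-1 autocorrelation of the regression residuals. This is an assumption for illustration; the exact correction in Nick's Trend Viewer is not spelled out here. The series below is hypothetical synthetic data (23 years of monthly values with AR(1) noise, phi = 0.6), not any real dataset.

```python
import math
import random


def ols_trend(y):
    """OLS slope, residuals and naive (white-noise) standard error of the slope."""
    n = len(y)
    t_mean = (n - 1) / 2
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    slope = sum((t - t_mean) * (v - y_mean) for t, v in enumerate(y)) / sxx
    intercept = y_mean - slope * t_mean
    resid = [v - (intercept + slope * t) for t, v in enumerate(y)]
    se = math.sqrt(sum(e * e for e in resid) / (n - 2) / sxx)
    return slope, resid, se


def lag1_autocorr(e):
    """Lag-1 autocorrelation of zero-mean residuals."""
    num = sum(e[i] * e[i - 1] for i in range(1, len(e)))
    return num / sum(x * x for x in e)


def ar1_adjusted_se(se, n, r):
    """Inflate the OLS standard error using n_eff = n(1 - r)/(1 + r)."""
    n_eff = n * (1 - r) / (1 + r)
    if n_eff <= 2:
        raise ValueError("too few effective samples")
    return se * math.sqrt((n - 2) / (n_eff - 2))


# Hypothetical monthly series: 0.001 °C/month (1.2 °C/century) plus AR(1) noise
random.seed(1)
noise, y = 0.0, []
for month in range(276):  # 23 years of months
    noise = 0.6 * noise + random.gauss(0, 0.1)
    y.append(0.001 * month + noise)

slope, resid, se = ols_trend(y)
r = lag1_autocorr(resid)
se_adj = ar1_adjusted_se(se, len(y), r)
print(f"slope = {slope * 1200:.2f} °C/century, lag-1 r = {r:.2f}")
print(f"95% half-width: naive {1.96 * se * 1200:.2f}, AR(1)-adjusted {1.96 * se_adj * 1200:.2f}")
```

The slope estimate is the same either way; only its uncertainty changes, roughly by a factor of sqrt((1 + r)/(1 − r)) for long series.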

Nick, I performed a Durbin-Watson test for autocorrelation on the regression I mentioned. The Durbin-Watson statistic is 2.186. At the 1% level of significance, UL=1.637. So there is no reason to suspect autocorrelation. This is consistent with what was found earlier for major global temperature trends.

“Global temperature series have positive trends that are statistically significant even when controlling for the possibility of strong serial correlation.”

http://journals.ametsoc.org/doi/full/10.1175/1520-0442%282002%29015%3C0117%3ATAOSRT%3E2.0.CO%3B2

JPL,

“So there is no reason to suspect autocorrelation. This is consistent with what was found earlier for major global temperature trends.”

The paper you cite is using annual data. Then there is not much autocorrelation. But if you use monthly data, there is much more, and you must allow for it. Here is a post at Climate Audit where Hu McCulloch correctly criticises Steig et al for not allowing for autocorrelation with Antarctic monthly data. Steig published a corrigendum.

Of course, the extra uncertainty of monthly data with autocorrelation is compensated by the greater number of data points.

“In other words, satellite measurements are very accurate. That contradicts the alarmist talking point that satellite data is NFG.”

No they are not... no more accurate than a GCM for temperature, as they employ complex algorithms to extract the temperature, with parameterisations included.

Versions 1 to 4 of RSS and versions 1 to 6 of UAH show that.

And of your “darling” dataset (or was it, until v4.0?), RSS’s chief Carl Mears says….

“A similar, but stronger case can be made using surface temperature datasets, which I consider to be more reliable than satellite datasets (they certainly agree with each other better than the various satellite datasets do!)”

Toneb, supreme expert at conflating apples with oranges, says that satellite data is…

…no more accurate than a GCM for temp…

So now a computer model output is considered “data”, equivalent to satellite data?

Admit it, you’re just winging it; a know-nothing using the alarmist tactic of ‘Say Anything’.

jpaullanier:

Durbin-Watson is a notoriously weak metric for detecting serial autocorrelation, which doesn’t conform to the AR(1) model used by Brozek in accounting for it. And the corresponding power density shows a very strong spectral peak at multi-decadal periods, a feature totally at odds with your presumed uncorrelated white noise.

dbstealey April 8, 2016 at 10:51 am

Toneb, supreme expert at conflating apples with oranges, says that satellite data is…

…no more accurate than a GCM for temp…

So now a computer model output is considered “data”, equivalent to satellite data?

The satellite data is fine: it’s the angular distribution of a certain frequency range of microwave radiation due to emission by O2 (although some other sources, such as ice, have to be eliminated). This radiation has to be modeled to convert it into a temperature, and so far there have been difficulties in doing this accurately (hence the multiple versions of the software). In fact, dealing with the radiation from near the surface has proved too difficult, and the TLT product appears to be in the process of being abandoned.

1sky1:

I’m confused about where AR(1) is used. I don’t see where Brozek says he uses it, and I don’t see in the Temperature Trend Viewer site where it is used, either. Maybe I have missed something. It seems to me that Brozek needs to address this in his article. I am also wondering, if AR(1) is employed by Nick, does this allow a correction for the p-value for correlation?

GCMs (models) vs Reality:

http://i2.wp.com/poorrichardsnews.com/wp-content/uploads/2016/03/climate.jpg

jpaullanier:

Although Brozek doesn’t mention it explicitly, AR(1) variability is the (unwarranted) default assumption in “climate science.” From his comment, I suspect Nick Stokes resorts to it.

“I don’t see in the Temperature Trend Viewer site where it is used”

It’s used in the calculation of CI’s (which Werner quotes), in the significance shading, and in the plot of t-values. The original post is here. There is discussion here, here, and here.

Nick, I provided the Durbin-Watson statistic for the monthly series I used. It provides no reason to suspect autocorrelation. If there is any further calculation that needs to be performed, that must be documented in a study published in a peer reviewed scientific journal so that anyone can check it.

“Nick, I provided the Durbin-Watson statistic for the monthly series I used.”

Well, I don’t think it is right. The statistic should be about 2*(1-r) where r is the sample autocorrelation, which is positive. And r for monthly residuals is typically about 0.6 or so. See my acfs here. As for published literature, there is plenty. Here is Santer et al, where they use a Quenouille method even for seasonal (see Table 3). Here is Foster and Rahmstorf, where they contend that even AR(1) isn’t enough.

Nick, I used the standard method for calculating the Durbin-Watson statistic. You may check it yourself. We’ll just have to leave it at that.

By the way, thanks for your comments and the sources you provided, Nick. From your acfs it does look like I have made a mistake, although I don’t see it! So thanks for taking the time to point that out. I need to learn more about autocorrelation anyway, since it applies to temperature anomalies. I’ll be studying that.

Now I see my mistake. I swapped the sum of squared differences of residuals and the sum of squared residuals. The correct value for the Durbin-Watson statistic from this method is 0.464. Using ACF, the value is 0.514. Both are less than dL, so autocorrelation is significant at both the 0.05 and 0.01 significance levels. My apologies, and thanks again, Nick.
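The Durbin-Watson statistic puts the sum of squared successive differences of the residuals in the numerator and the sum of squared residuals in the denominator; exchanging them gives the reciprocal. Expanding the numerator shows why d is close to 2(1 − r): d = 2 − 2r − (e₀² + eₙ₋₁²)/Σe², so the end-point term shrinks as the series grows. Inverting that approximation, the corrected 0.464 corresponds to r ≈ 0.77. A minimal sketch on hypothetical AR(1) "residuals" (phi = 0.6), not the UAH data.

```python
import random


def durbin_watson(e):
    """d = sum of squared successive differences / sum of squared residuals."""
    num = sum((e[i] - e[i - 1]) ** 2 for i in range(1, len(e)))
    den = sum(x * x for x in e)
    return num / den


def lag1_autocorr(e):
    """Lag-1 autocorrelation, assuming the residuals have (near) zero mean."""
    num = sum(e[i] * e[i - 1] for i in range(1, len(e)))
    return num / sum(x * x for x in e)


# Hypothetical AR(1) residuals with phi = 0.6 (approximately zero-mean)
random.seed(2)
e, x = [], 0.0
for _ in range(5000):
    x = 0.6 * x + random.gauss(0, 1)
    e.append(x)

d = durbin_watson(e)
r = lag1_autocorr(e)
swapped = 1 / d  # what you get with numerator and denominator exchanged
print(f"d = {d:.3f}, 2(1 - r) = {2 * (1 - r):.3f}, swapped ratio = {swapped:.3f}")
```

With positively autocorrelated residuals d falls well below 2, while the swapped ratio lands above 1, which can look like no autocorrelation at all.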

Davidmhoffer: Actually I do understand. All the analogies we all can come up with still can’t explain how only 3% of the co2 we are responsible for can be so catastrophic to climate. There is only so much energy this little molecule can absorb and radiate. When the sun doesn’t shine, it cools very rapidly. Unless there is a lot of water vapor….curious. I, using my critical thinking for questions, would be much more worried about the 97% I have no control over. This doesn’t address the fact that we will not, in any practical way or in any reasonable time, be able to eliminate more than a small portion of what we are responsible for. This ignores the fact that co2 has been much higher in the past and yet here we are arguing about tiny amounts. Seems to me that there are likely other very important drivers of climate such as the sun, oceans, and possibly cosmic rays affecting cloud cover. The fixation on co2 seems a waste of time and very political.

John C. April 7, 2016 at 4:42 pm

Davidmhoffer: Actually I do understand. All the analogies we all can come up with still can’t explain how only 3% of the co2 we are responsible for can be so catastrophic to climate.

As was explained upthread, we’re responsible for a 40% increase over background levels to date. 3% year over year adds up.

When the sun doesn’t shine, it cools very rapidly. Unless there is a lot of water vapor

And would cool even more if there were no CO2. Plus, you have to keep in mind that water vapour concentrations decline with temperature, which in turn declines with altitude. So even at the equator, over the ocean, where water vapour sits at 40,000 ppm, once you get to a certain altitude, water vapour drops off to nearly zero. CO2 on the other hand remains relatively constant to the top of the troposphere. So total effect of CO2 is outsized compared to concentration versus water vapour.

The balance of your argument I would agree with. But start with the proper physics, so that the balance of your argument has more credibility.

Can you prove humans caused all the increase? Based on your data, once co2 is in the atmosphere it never leaves. How did it come down from the much higher levels in the past? Is it hiding with the warming along with the 20 or so year pause in temperature? Which by the way doesn’t support the additive theory you want me to accept. I see ocean cycles along with sun cycles as much more likely to be the driver of climate. We shall soon see as cycle 24 ramps down. By the way, cycle 24 has been very weak compared to 22 and 23. We may all wish there was much more co2 in the air if cycle 25 is also weak or even less active. All this focus on co2 is just a smoke screen. I can’t say the purpose but something isn’t logical.

Based on your data, once co2 is in the atmosphere it never leaves.

That is absolute nonsense. I never said any such thing. But I see you’re uninterested in learning anything.

You may wish to read:

http://wattsupwiththat.com/2010/09/24/engelbeen-on-why-he-thinks-the-co2-increase-is-man-made-part-4/

Davidm: On my interest in learning…I have read about all the beliefs you have in the boogieman co2. I just come to different conclusions. And reading the posts all the way down from here, it seems I’m not alone. So unless you have information to better support your position, don’t even try to imply I lack interest in learning. I just chose to apply this knowledge with logic and observation. Like I said, I have been a business owner for forty years. I grew up during the sky-is-falling, imminent ice age. Didn’t buy that one either. You don’t address the sun, ocean cycles, cloud cover and cosmic radiation. You seem to be fixed on co2 even though its effect as a driver of climate diminishes above 400 PPM. My main point is the amount humans are adding is small. Very small. A total ban on co2 emissions across the globe would have little impact on temperature. Just look back to when co2 was 300 PPM and unless you subscribe to ALGORE fake movies and overly adjusted data, there is no there there. But I digress.

John C. April 8, 2016 at 9:15 am

I have read about all the beliefs you have in the boogieman co2.

You’ve no idea what my beliefs are. Your statement is insulting.

You’ve drawn a lot of conclusions that I agree with, but they are based on a very poor understanding of the facts. When I point out things that you could learn in order to make your own articulation of the issue stronger, you change the subject and yammer on about different issues.

David, speaking of proper physics, davidmhoffer said:

I read this comment a couple of days ago and didn’t continue reading the thread beyond it. The analogy annoyed me and has been bothering me ever since.

There are a couple of things that are quite wrong and if I understand your mind experiment correctly, then your analogy is way off track.

Parts per million (PPM) in climate science is taken to mean PPM by volume (PPMV). It is a ratio of the relative volumes of gases, which for ideal gases is the same as the ratio of the numbers of molecules.

Changing the temperature and pressure will not affect the mixing ratio, only the volume of the mixture, which is why PPMV is a useful and handy metric.

The absolute amount of stuff in a given volume (the number of molecules and their total mass) will change, but not the relative ratio of the mix.

To be clear, to prepare a PPMV mixture, you simply choose any VOLUME of a gas (Such as CO2 at any T or P, it doesn’t matter!) and you add it to a million equivalent volumes of air!

The volume of a gas (Such as CO2) includes the molecules and the empty space they move in. What you fail to demonstrate is the very tiny size of molecules and the massive amount of empty space. Even at standard temperatures and pressures (STP) your 10cm cube is 99.9% empty space.

All the “grains” (molecules or parts) would occupy just 0.0726% of the volume in the real world. But the volume is far smaller in the experiment because the number of parts (grains) is limited to 10,000 in your cube.

In the real case there would be about 2.5×10^19 parts/grains/molecules per cm³ (roughly 2.5×10^22 in your 10cm cube), and of that, CO2 would occupy just one hundred millionth of the volume.
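For a sense of scale, here is a rough ideal-gas sketch of the numbers in this comment. It assumes sea-level pressure (101,325 Pa), 15 °C, a CO2 mixing ratio of 400 ppmv and, purely for illustration, a hypothetical hard-sphere molecular radius of 0.18 nm; none of these values come from the comment itself.

```python
# Ideal-gas scale estimate for the 10 cm cube in the analogy.
# Assumed conditions (not from the comment): 101,325 Pa, 15 °C, 400 ppmv CO2.
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def number_density(pressure_pa: float, temp_k: float) -> float:
    """Molecules per cubic metre from the ideal gas law n = P / (k_B * T)."""
    return pressure_pa / (K_B * temp_k)

n = number_density(101_325.0, 288.15)   # molecules per m^3
per_cm3 = n * 1e-6                      # 1 cm^3 = 1e-6 m^3
in_cube = n * 0.10 ** 3                 # a 10 cm cube holds 1e-3 m^3 (one litre)
co2_in_cube = in_cube * 400e-6          # ppmv used as a mole fraction (ideal gas)

# Hypothetical hard-sphere radius, for illustration only.
RADIUS_M = 0.18e-9
occupied_fraction = n * (4.0 / 3.0) * math.pi * RADIUS_M ** 3

print(f"molecules per cm^3:          {per_cm3:.3g}")
print(f"molecules in the 10 cm cube: {in_cube:.3g}")
print(f"CO2 molecules in the cube:   {co2_in_cube:.3g}")
print(f"volume occupied by spheres:  {occupied_fraction:.2%}")
```

Under these assumptions it gives about 2.5×10^19 molecules per cm³, about 2.5×10^22 in the 10 cm cube, and well under 0.1% of the volume occupied by the molecules themselves.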

The grains analogy in a 10cm cube makes no sense in a rational world. Even to get 10,000 molecules this closely packed or their movements constrained to orbits this tight would require a tiny volume at impossible pressures.

Maybe I’m wrong about what you mean by grains/parts/volume and I will stand to be corrected, if so. However those are probably the least of the problems with the analogy!

You then stack 14 km of “cubed” jars to represent the atmosphere, but the problem with that is that the density of the real atmosphere decreases with altitude.

At just 5km, about half the mass of the atmosphere has gone. The volume has doubled and those grains have a lot more empty space to be alone in!

Forget 14km: at 11km, only about 25% of the total mass remains (the PPMV is unchanged but the volume is now huge and the number of molecules is low).
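The falling density with altitude can be sketched with the isothermal barometric approximation, in which the fraction of the atmosphere's mass lying above height z is exp(-z/H). The scale height H = 8 km is an assumed round value; the real atmosphere is not isothermal, so treat the output as approximate.

```python
# Fraction of atmospheric mass above a given altitude, using the
# isothermal approximation p(z) = p0 * exp(-z / H). With constant gravity,
# p(z)/p0 equals the fraction of the column mass that lies above z.
import math

SCALE_HEIGHT_KM = 8.0  # assumed scale height; the real value varies with temperature

def mass_fraction_above(altitude_km: float, scale_height_km: float = SCALE_HEIGHT_KM) -> float:
    """Pressure ratio p(z)/p0, i.e. the share of the atmosphere's mass above z."""
    return math.exp(-altitude_km / scale_height_km)

def half_mass_altitude(scale_height_km: float = SCALE_HEIGHT_KM) -> float:
    """Altitude below which half of the atmosphere's mass lies: H * ln 2."""
    return scale_height_km * math.log(2.0)

for z in (0.0, 5.0, 11.0, 14.0):
    print(f"{z:5.1f} km: {mass_fraction_above(z):.0%} of the mass lies above")
print(f"half-mass altitude ≈ {half_mass_altitude():.1f} km")
```

With these assumptions about 54% of the mass lies above 5 km and about 25% above 11 km, and the half-mass altitude comes out near 5.5 km.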

To be honest, the effect is actually worse in reality because CO2 is heavier than air and the PPMV does actually change, leading to even less CO2 at altitude.

Oddly, your model succeeds in inverting reality! It represents the exact opposite of what actually happens.

Starting with a cube of sample “stuff” that could only really exist in an impossibly compressed and unimaginably tiny space, you then extrapolated. The result exposes how massively opposite to reality it actually is. There is much more empty space, the parts are actually vanishingly small, and when you add on 14 km of additional atmosphere, an analogy that started badly only gets worse as it is sucked into the emptiness of its own vacuity* 😉

*Sorry couldn’t resist the vacuum pun!

I’m sorry that you have totally and completely missed the point of the analogy.

Not to mention that actual measurements of radiated spectrum escaping from the atmosphere back up my analogy 100%. If it is wrong, it is wrong for other reasons than you propose and certainly is not an inversion of reality as you claim. It is consistent with observational data.

Searching for a pause through noisy data is not a very reliable form of linear trend analysis. If this were my investigation, I would have to say very little about what I had found. It is like when my children would bring me a picture of something they had created with a crayon. Whatever they said it was, I would agree, but I had not a clue as to what it really was. Your graph seems to be cut from the same cloth. You say it is one thing. I haven’t a clue as to what it really is.

The middle line is the slope when ignoring error bars. The region between the flat line and the steep line represents a 95% probability that the real slope is between these two lines. There is a 2.5% chance the real slope is above the highest line and also a 2.5% chance the real slope is actually negative. And that 2.5% is good enough for climate scientists to say the warming is NOT statistically significant.
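The figures quoted in the post are internally consistent with a 95% interval of rate ± 1.96 standard errors. The sketch below recovers the standard error from the rate and t-statistic, rebuilds the interval, and computes the normal-approximation tail area below zero, which is what the "2.5% chance" wording refers to. The ±1.96 convention is an inference from the quoted numbers, not something the source states, and the sketch ignores any small-sample correction.

```python
# Recover the standard error and 95% interval implied by a trend and its
# t-statistic, assuming CI = rate ± 1.96 * SE (normal approximation).
import math

def normal_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def trend_summary(rate: float, t_stat: float, z: float = 1.96):
    """Return (SE, lower bound, upper bound, tail area below a zero slope)."""
    se = rate / t_stat                   # t = rate / SE, so SE = rate / t
    lower, upper = rate - z * se, rate + z * se
    p_negative = normal_cdf(-t_stat)     # normal mass below zero slope
    return se, lower, upper, p_negative

# Figures quoted in the post, in °C/century.
for name, rate, t in (("RSS", 0.871, 1.912), ("UAH6.0beta5", 0.911, 1.941)):
    se, lo, hi, p = trend_summary(rate, t)
    print(f"{name}: SE={se:.3f}, 95% CI {lo:.3f} to {hi:.3f}, tail below 0 ≈ {p:.1%}")
```

For RSS this reproduces the quoted interval (-0.022 to 1.764) to rounding, with a tail area below zero of roughly 2.8%; for UAH6.0beta5 it gives about -0.009 to 1.831 and roughly 2.6%.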

Actually, the opposite. The usual standard for significance is the 0.05 level, and at that level the results you present show that warming is significant.

Phil, only if the degrees of freedom allow that statement. Not that I argue against warming. I have no problem saying the long term slope is a warming slope. You and I likely differ on the cause. However, in this case, my issue with this post is this: Taking such a short segment of that slope and trying to say something significant is fraught with all kinds of statistical limits. The author appears weak in dealing with noisy data and uses an inappropriate statistical method on the short string of data used.

Which opens the door to this discussion: if you have less than graduate-level work in statistical analysis, you run the risk of leading your followers down a primrose path. Statistical analysis, applied carefully and within the limits of your raw data and research design, has kept many harebrained ideas away from the general public, ideas that, if released, could do great harm. The author of this current post stands squarely on that primrose path and thus his work is easily dismissed by experienced researchers with sound statistical analysis knowledge.

A year ago, when Nick Stokes had a value of 22 years for RSS, Dr. Ross McKitrick came up with 26 years.

Hopefully Nick will address all of the above points.

Pamela Gray April 8, 2016 at 6:31 am

Phil, only if the degrees of freedom allow that statement. Not that I argue against warming. I have no problem saying the long term slope is a warming slope. You and I likely differ on the cause. However, in this case, my issue with this post is this: Taking such a short segment of that slope and trying to say something significant is fraught with all kinds of statistical limits. The author appears weak in dealing with noisy data and uses an inappropriate statistical method on the short string of data used.

No argument with that, but the statement made regarding warming requires a one-tailed test; doing a two-tailed test incorporates the probability of warming faster than mean + 2σ into the probability of the slope being less than 0, which makes no sense.
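The one-tailed versus two-tailed distinction can be made concrete with the same normal approximation (large sample, autocorrelation ignored; both are assumptions). The critical value at the 0.05 level is about 1.645 for a one-tailed test and about 1.96 for a two-tailed test, so t-statistics of 1.912 and 1.941 fall between the two.

```python
# One-tailed vs two-tailed p-values for the quoted t-statistics,
# using the normal approximation (large sample; autocorrelation ignored).
import math

def normal_sf(x: float) -> float:
    """Upper-tail probability P(Z > x) for a standard normal Z."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def p_values(t_stat: float):
    """Return (one-tailed p for H1: slope > 0, two-tailed p for H1: slope != 0)."""
    one_tailed = normal_sf(t_stat)
    two_tailed = 2.0 * normal_sf(abs(t_stat))
    return one_tailed, two_tailed

ALPHA = 0.05
for name, t in (("RSS", 1.912), ("UAH6.0beta5", 1.941)):
    one, two = p_values(t)
    print(f"{name}: one-tailed p={one:.3f} ({'<' if one < ALPHA else '>='} {ALPHA}), "
          f"two-tailed p={two:.3f} ({'<' if two < ALPHA else '>='} {ALPHA})")
```

Both trends come out significant at 0.05 one-tailed (p ≈ 0.028 and 0.026) and narrowly not significant two-tailed (p ≈ 0.056 and 0.052), which is the whole of the disagreement in numerical form.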

I use linear trends in educational matters (but carefully, as student performance is noisy). The use of linear trends when data is noisy, as it is with temperature, is a good topic to discuss. Might we begin here?

http://nimbus.cos.uidaho.edu/abatz/PDF/sap1717draft37appA.pdf
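On the degrees-of-freedom point: monthly anomalies are autocorrelated, so ordinary least squares understates a trend's standard error. One common adjustment, swapped in here for illustration and not necessarily what Nick Stokes' viewer does, replaces the sample size n with an effective size n_eff = n(1 - r1)/(1 + r1), where r1 is the lag-1 autocorrelation of the residuals. The series below is synthetic AR(1) noise around a hypothetical trend, not real temperature data.

```python
# OLS trend on a noisy monthly series, with the slope's standard error
# inflated for lag-1 autocorrelation via n_eff = n * (1 - r1) / (1 + r1).
# The data are synthetic; nothing here is a real temperature record.
import math
import random

def ols_trend(y):
    """Return (slope, intercept, residuals, sxx) for y regressed on t = 0..n-1."""
    n = len(y)
    t_mean = (n - 1) / 2.0
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    sxy = sum((t - t_mean) * (v - y_mean) for t, v in enumerate(y))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    resid = [v - (intercept + slope * t) for t, v in enumerate(y)]
    return slope, intercept, resid, sxx

def lag1_autocorr(x):
    """Sample lag-1 autocorrelation coefficient."""
    m = sum(x) / len(x)
    num = sum((x[i] - m) * (x[i + 1] - m) for i in range(len(x) - 1))
    den = sum((v - m) ** 2 for v in x)
    return num / den

def trend_with_ar1(y):
    """Return (slope, naive SE, AR(1)-adjusted SE, r1, n_eff)."""
    slope, _, resid, sxx = ols_trend(y)
    n = len(y)
    r1 = lag1_autocorr(resid)
    n_eff = n * (1 - r1) / (1 + r1)
    if n_eff <= 2:
        raise ValueError("too few effective samples for a trend estimate")
    s2 = sum(e * e for e in resid) / (n - 2)             # naive residual variance
    se_naive = math.sqrt(s2 / sxx)
    se_adj = se_naive * math.sqrt((n - 2) / (n_eff - 2))  # use n_eff - 2 dof instead
    return slope, se_naive, se_adj, r1, n_eff

random.seed(42)
n_months = 274                                  # May 1993 to Feb 2016 inclusive
phi, sigma, true_slope = 0.6, 0.1, 0.0007       # hypothetical noise and trend
noise, y = 0.0, []
for t in range(n_months):
    noise = phi * noise + random.gauss(0.0, sigma)
    y.append(true_slope * t + noise)

slope, se_naive, se_adj, r1, n_eff = trend_with_ar1(y)
print(f"slope={slope:.5f}/month  naive SE={se_naive:.5f}  AR(1) SE={se_adj:.5f}")
print(f"r1={r1:.2f}  n={n_months}  n_eff={n_eff:.0f}")
```

With these settings r1 comes out well above zero, n_eff is a fraction of the 274 months, and the adjusted standard error is correspondingly larger than the naive one. The adjustment bites hardest on short, strongly autocorrelated segments.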

John C is onto something. First, there’s the log effect of adding more CO2:


As we see, most of the warming took place in the first few dozen ppm. At current concentrations of ≈400 ppm, any warming from more CO2 is simply too minuscule to measure.

Next, long before industrial CO2 emissions began, in the geological past, that trace gas was 15 – 20 times higher than now:

http://2.bp.blogspot.com/_cHhMa7ARDDg/SoxiDu0taDI/AAAAAAAABFI/Z2yuZCWtzvc/s1600/Geocarb%2BIII-Mine-03.jpg

That much more CO2 did not cause any runaway global warming — or any global warming for that matter. The only verifiable correlation shows that ∆CO2 is caused by ∆temperature.

Radiative physics argues that at current concentrations, a rise in CO2 will have some small effect on global T. But then we’re back to the first chart above. Extrapolate from 400 ppm to 800 ppm. Doubling atmospheric CO2 would still not cause any measurable rise in global T. And that is what empirical observations support. But after almost twenty years of a steady rise in CO2, global T has been in stasis. That falsifies the CO2=cAGW conjecture.
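The "log effect" and the 400 to 800 ppm extrapolation can be illustrated with the widely used simplified forcing expression ΔF = 5.35 ln(C/C0) W/m² (Myhre et al., 1998). It is a fit over roughly present-day concentrations, so it says nothing reliable about the first few dozen ppm, and it yields radiative forcing, not temperature; turning forcing into warming needs a climate sensitivity, which is the very quantity in dispute here.

```python
# Simplified logarithmic CO2 forcing, dF = 5.35 * ln(C / C0) in W/m^2.
# Only meaningful over a limited range of concentrations around today's.
import math

ALPHA_W_M2 = 5.35  # coefficient of the simplified fit

def co2_forcing(c_ppm: float, c0_ppm: float) -> float:
    """Radiative forcing (W/m^2) for a change from c0_ppm to c_ppm."""
    return ALPHA_W_M2 * math.log(c_ppm / c0_ppm)

steps = [200, 300, 400, 500, 600, 700, 800]
for lo_c, hi_c in zip(steps, steps[1:]):
    print(f"{lo_c}->{hi_c} ppm: {co2_forcing(hi_c, lo_c):.2f} W/m^2")
print(f"400->800 ppm (one doubling): {co2_forcing(800, 400):.2f} W/m^2")
```

Each successive 100 ppm step adds less forcing (about 1.54 W/m² for 300 to 400 ppm, about 0.71 W/m² for 700 to 800 ppm), while every doubling, whether 280 to 560 or 400 to 800, adds the same ≈3.71 W/m².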

And as John C points out, human CO2 emissions have been quite negligible. It is true that those emissions have brought about a rise in that trace gas. But there is no credible evidence showing that more CO2 has caused any global harm. Thus, we can state that the rise in CO2 has been “harmless”. There are peer reviewed papers that agree, and which state that the CO2=AGW predictions have been wrong by more than an order of magnitude.

And on the other hand, there is ample evidence showing that the added CO2 has been very beneficial. Agricultural productivity has measurably risen:

Plants can easily tell the difference between 300 ppm and 400 ppm. But humans cannot; it is only by using very sensitive instruments that we even know about the rise. And to put John C’s numbers another way: over the past century, CO2 has risen by just one part in 10,000. Based on the informed skepticism here, it seems preposterous that going from a natural 9,999 molecules, to one more molecule in 10,000 is the proximate cause of the predicted global warming — which anyway has failed to happen.

As Prof Richard Feynman pointed out: if your theory is contradicted by observations, then your theory is wrong. “That’s all there is to it,” explained the Prof.

If CO2 has caused some minuscule warming, it has been entirely harmless, and beneficial to the biosphere. The IPCC has been so far off base that it’s obvious they are wrong. The real world is not cooperating with their version of political science.

dbstealey;

You can’t claim that CO2 is logarithmic AND that what amounts to a 40% increase (+1 in 10k) in concentration is insignificant. You can have one or the other, but not both.

Sensitivity, feedbacks, and net impacts are separate and distinct matters, and on those, observations suggest the IPCC is dead wrong.
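The "+1 in 10k" and "40% increase" descriptions in this exchange refer to the same change, measured against different baselines. Using the 280 ppm and 400 ppm figures cited elsewhere in the thread, a short sketch of the conversions:

```python
# One change in CO2, expressed as a share of all air molecules and as a
# relative change in the CO2 concentration itself.
def describe_change(c0_ppm: float, c1_ppm: float) -> dict:
    delta = c1_ppm - c0_ppm
    return {
        "delta_ppm": delta,
        "parts_per_10k": delta / 100.0,                 # 100 ppm = 1 part in 10,000
        "percent_of_air": delta / 1e4,                  # 10,000 ppm = 1 %
        "relative_increase_pct": 100.0 * delta / c0_ppm,
    }

print(describe_change(280.0, 400.0))
```

This gives +120 ppm, i.e. 1.2 parts in 10,000 (0.012% of all air molecules), and a relative increase of about 43% in CO2 itself. Which baseline matters depends on whether the effect being discussed scales with CO2's share of the air or with the ratio C/C0.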

davidmhoffer says:

You can’t claim that CO2 is logarithmic AND that what amounts to a 40% increase (+1 in 10k) in concentration is insignificant.

Of course I can. And as I explained, it is significant to plants. The insignificant part refers to forcing global T to measurably rise. That has been endlessly predicted, but never conclusively observed.

Davidmhoffer,

“dbstealey;

You can’t claim that CO2 is logarithmic AND that what amounts to a 40% increase (+1 in 10k) in concentration is insignificant. You can have one or the other, but not both.”

Where did he claim that? What he actually said was:

“At current concentrations of ≈400 ppm, any warming from more CO2 is simply too minuscule to measure.”

In other words, the 40% concentration increase in the total amount of CO2 (from 280 ppm in 1880 to 400 ppm today = 120 ppm) can be viewed as a “significant increase in the amount of CO2,” but because of its logarithmic nature, it will take roughly an additional 170 ppm over today’s 400 (to about 570 ppm) to get the same degree of warming that we got from the prior 120! That makes each increase in CO2 less significant as far as its ability to increase temperatures goes. Its logarithmic nature is what allows one to “have both,” so to speak.

So how is he wrong? 120 ppm = 0.8C increase. Not dividing logarithmically (too lazy): 0.8 / 120 = 0.007 degrees increase per 1 ppm. Too minuscule to measure. And 0.8 / 170 ≈ 0.005 C per each additional ppm!
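Under a strictly logarithmic model, equal warming comes from equal ratios of concentration rather than equal increments, and the marginal warming per ppm falls as 1/C. The sketch calibrates such a model with the comment's own figure of 0.8 °C for 280 to 400 ppm, which is taken as given and assumes, for the sake of the arithmetic only, that all of that warming is due to CO2.

```python
# If warming scales as k * ln(C / C0), calibrate k from the comment's figure
# (0.8 °C for 280 -> 400 ppm, taken as given and attributed entirely to CO2
# purely for this arithmetic) and see what the same model implies afterwards.
import math

C_PRE, C_NOW, WARMING_SO_FAR = 280.0, 400.0, 0.8   # values quoted in the comment

k = WARMING_SO_FAR / math.log(C_NOW / C_PRE)        # °C per unit of ln(C)

def warming(c_from: float, c_to: float) -> float:
    """Warming in °C between two concentrations under the calibrated log model."""
    return k * math.log(c_to / c_from)

def marginal_per_ppm(c_ppm: float) -> float:
    """Derivative d(warming)/dC = k / C, in °C per ppm."""
    return k / c_ppm

# Concentration that repeats the same warming: same ratio, not same increment.
c_repeat = C_NOW * (C_NOW / C_PRE)
print(f"ppm needed to repeat +{WARMING_SO_FAR} °C: {c_repeat:.0f} "
      f"(+{c_repeat - C_NOW:.0f} ppm)")
print(f"marginal warming at 280 ppm: {marginal_per_ppm(280.0):.4f} °C/ppm")
print(f"marginal warming at 400 ppm: {marginal_per_ppm(400.0):.4f} °C/ppm")
```

It returns about 571 ppm (+171 ppm) to repeat the 0.8 °C, and a marginal warming of about 0.0080 °C/ppm at 280 ppm versus about 0.0056 °C/ppm at 400 ppm.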

Aphan April 8, 2016 at 11:21 am

Where did he claim that?

He said, and I quote:

over the past century, CO2 has risen by just one part in 10,000. Based on the informed skepticism here, it seems preposterous that going from a natural 9,999 molecules, to one more molecule in 10,000 is the proximate cause of the predicted global warming

You can’t make the “one in 10,000” argument and the logarithmic argument at the same time. CO2’s effects (be they big or small) can’t be expressed both ways. One quantifies a logarithmic relationship and the other quantifies a linear relationship. It can’t be both at the same time.

CO2’s effects (be they big or small) are logarithmic.

“You can’t make the “one in 10,000” argument and the logarithmic argument at the same time. CO2’s effects (be they big or small) can’t be expressed both ways. One quantifies a logarithmic relationship and the other quantifies a linear relationship. It can’t be both at the same time.”

What are you smoking? He didn’t express the EFFECT of CO2 in two ways! The “one in 10,000” statement is a numerical fact. It indicates a mathematical increase in number of CO2 molecules in the atmosphere. The logarithmic statement indicates the EFFECT that those molecules have on TEMPERATURE.

You might need to read up on something because what I’m saying is accurate and extremely basic scientific fact!

Aphan April 8, 2016 at 5:56 pm

The “one in 10,000” statement is a numerical fact. It indicates a mathematical increase in number of CO2 molecules in the atmosphere.