UPDATE: I’ve added graphs of the difference between the observations and the models at the end of the post, under the heading of DIFFERENCE.

####

We’ve shown in numerous posts how poorly climate models simulate observed changes in temperature and precipitation. The models prepared for the upcoming Intergovernmental Panel on Climate Change (IPCC) 5th Assessment Report (AR5) can’t simulate observed trends in:

1. satellite-era sea surface temperatures globally or on ocean-basin bases,

2. global satellite-era precipitation,

3. global, hemispheric and regional land surface air temperatures, and

In this post, we’ll compare the multi-model ensemble mean of the CMIP5-archived models, which were prepared for the IPCC’s upcoming AR5, and the new GISS Land-Ocean Temperature Index (LOTI) data. As you’ll recall, GISS recently switched sea surface temperature datasets for their LOTI product.

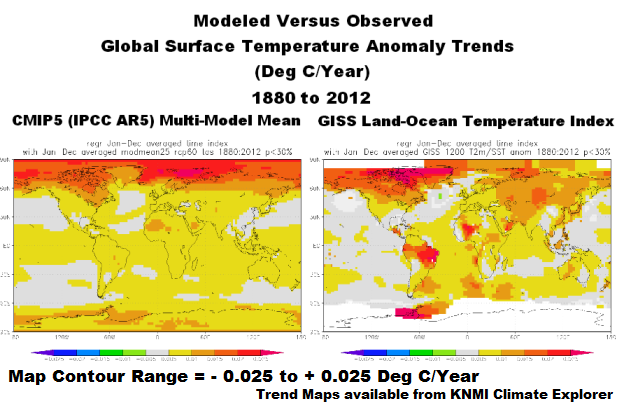

We have not presented trend maps in earlier comparisons of observed and modeled global land plus sea surface temperature anomalies, so, for the sake of discussion, we’ll provide them with this post. A comparison is shown in Figure 1 for the period of 1880 to 2012.

Figure 1

The maps in Figure 1 show the modeled and observed linear trends for the full term of the GISS data, from 1880 to 2012. The CMIP5-archived simulations indicate stronger-than-observed polar-amplified warming at high latitudes in the Northern Hemisphere. The models also show a more uniform warming of the tropical Pacific, while the observations show little warming. There are a number of other regional modeling problems.

Presenting the trends over the full term of the GISS data actually tends to make the models look as though they perform reasonably well. But when we break the observations and model outputs into the 4 periods shown in Figure 2, the models do not fare as well. In fact, the trend maps will help to show how poorly the models simulate observed temperature trends during the early cooling period (1880 to 1917), the early warming period (1917 to 1944), the mid-20th century flat temperature period (1944 to 1976) and the late warming period (1976 to 2012).

Figure 2

To head off complaints by global warming enthusiasts, the IPCC acknowledges those warming and cooling (flat temperature) periods in their 4th Assessment Report. As a reference, see Chapter 3 Observations: Surface and Atmospheric Climate Change of the IPCC’s AR4. Under the heading of “3.2.2.5 Consistency between Land and Ocean Surface Temperature Changes”, the IPCC states with respect to the surface temperature variations over the period of 1901 to 2005 (page 235):

Clearly, the changes are not linear and can also be characterized as level prior to about 1915, a warming to about 1945, leveling out or even a slight decrease until the 1970s, and a fairly linear upward trend since then (Figure 3.6 and FAQ 3.1).

You’ll notice that when the data are extended back to 1880, they show a cooling trend before 1917.

A NOTE ABOUT THE USE OF THE MODEL MEAN

The following discussion is a reprint from the post Blog Memo to John Hockenberry Regarding PBS Report “Climate of Doubt”.

The model mean provides the best representation of the manmade greenhouse gas-driven scenario—not the individual model runs, which contain noise created by the models. For this, I’ll provide two references:

The first is a comment made by Gavin Schmidt (climatologist and climate modeler at the NASA Goddard Institute for Space Studies—GISS). He is one of the contributors to the website RealClimate. The following quotes are from the thread of the RealClimate post Decadal predictions. At comment 49, dated 30 Sep 2009 at 6:18 AM, a blogger posed this question:

If a single simulation is not a good predictor of reality how can the average of many simulations, each of which is a poor predictor of reality, be a better predictor, or indeed claim to have any residual of reality?

Gavin Schmidt replied with a general discussion of models:

Any single realisation can be thought of as being made up of two components – a forced signal and a random realisation of the internal variability (‘noise’). By definition the random component will [be] uncorrelated across different realisations and when you average together many examples you get the forced component (i.e. the ensemble mean).

To paraphrase Gavin Schmidt, we’re not interested in the random component (noise) inherent in the individual simulations; we’re interested in the forced component, which represents the modeler’s best guess of the effects of manmade greenhouse gases on the variable being simulated.
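Schmidt’s argument can be illustrated with synthetic numbers. The sketch below is not CMIP5 output. It assumes a made-up forced signal (a linear ramp) and adds independent, normally distributed noise to each hypothetical run, which is the idealized “uncorrelated across realisations” case he describes. Averaging the runs shrinks the leftover noise by roughly the square root of the number of runs.

```python
import numpy as np


def ensemble_mean(runs):
    """Average an (n_runs, n_years) array across runs (axis 0)."""
    return np.asarray(runs, dtype=float).mean(axis=0)


rng = np.random.default_rng(seed=0)
years = np.arange(1880, 2013)

# Hypothetical forced signal: a smooth ramp (illustrative, not CMIP5 output).
forced = 0.005 * (years - years[0])

# Each hypothetical run = forced signal + independent noise (sd 0.15 deg C),
# so the noise is uncorrelated across runs by construction.
n_runs = 50
runs = forced + rng.normal(0.0, 0.15, size=(n_runs, years.size))

mean = ensemble_mean(runs)
rms_single = np.sqrt(np.mean((runs[0] - forced) ** 2))
rms_mean = np.sqrt(np.mean((mean - forced) ** 2))

# Expect roughly 0.15 for one run and roughly 0.15 / sqrt(50) for the mean.
print(f"noise left in one run:         {rms_single:.3f} deg C")
print(f"noise left in the {n_runs}-run mean: {rms_mean:.3f} deg C")
```

Real internal variability is autocorrelated (ENSO, for example), so with only a handful of runs the ensemble mean still carries some residual noise.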

The quote by Gavin Schmidt is supported by a similar statement from the National Center for Atmospheric Research (NCAR). I’ve quoted the following in numerous blog posts and in my recently published ebook. Sometime over the past few months, NCAR elected to remove that educational webpage from its website. Luckily the Wayback Machine has a copy. NCAR wrote on that FAQ webpage that had been part of an introductory discussion about climate models (my boldface):

Averaging over a multi-member ensemble of model climate runs gives a measure of the average model response to the forcings imposed on the model. Unless you are interested in a particular ensemble member where the initial conditions make a difference in your work, averaging of several ensemble members will give you best representation of a scenario.

In summary, we are definitely not interested in the models’ internally created noise, and we are not interested in the results of individual responses of ensemble members to initial conditions. So, in the graphs, we exclude the visual noise of the individual ensemble members and present only the model mean, because the model mean is the best representation of how the models are programmed and tuned to respond to manmade greenhouse gases.

In other words, IF (big if) global surface temperatures were warmed by manmade greenhouse gases, the model mean presents how those surface temperatures would have warmed.

Let’s start with the time period when the models perform best, the recent warming period. And we’ll work our way back in time.

NOTES: For the trend maps in Figures 4, 6, 8 and 10, I’ve used a different range for the contour levels than those used in Figure 1. The contour range is now -0.05 to +0.05 deg C/year to accommodate the higher short-term trends. And for the temperature anomaly comparisons, I used the base period of 1961-1990 for anomalies. Those are the base years used by the IPCC in their model-data comparisons in Figure 10.1 from the Second Order Draft of AR5.
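A minimal sketch of the two conventions in the note above, assuming annual values: each series (observations and model mean alike) is shifted by its own 1961-1990 mean so both share a zero line, and trends in deg C/year are converted to the deg C/decade used in the time-series graphs. Monthly data would normally subtract a separate base-period mean for each calendar month. The function names are hypothetical.

```python
import numpy as np


def anomalies(years, values, base_start=1961, base_end=1990):
    """Return values minus their mean over the base period (inclusive)."""
    years = np.asarray(years)
    values = np.asarray(values, dtype=float)
    in_base = (years >= base_start) & (years <= base_end)
    if not in_base.any():
        raise ValueError("no values fall inside the base period")
    return values - values[in_base].mean()


def per_year_to_per_decade(trend_per_year):
    """Convert a trend from deg C/year to deg C/decade."""
    return 10.0 * trend_per_year


# The +/-0.05 deg C/year contour limits expressed per decade:
print(per_year_to_per_decade(0.05))  # prints 0.5
```

So the map contour range of -0.05 to +0.05 deg C/year corresponds to -0.5 to +0.5 deg C/decade.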

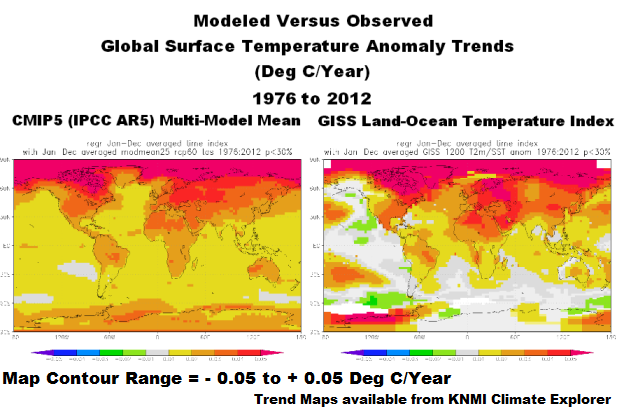

RECENT WARMING PERIOD – 1976 TO 2012

Figure 3 compares the observed and modeled linear trends in global land plus sea surface temperature anomalies for the period of 1976 to 2012. The models have overestimated the warming by about 28%. The divergence between the models and the data in recent years is evident. It’s no wonder James Hansen, now retired from GISS, used to hope for another super El Niño.
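The post calls these “linear trends” without naming the fitting method. Assuming they are ordinary least-squares fits to annual anomalies, a trend in deg C/decade and the percentage by which one trend exceeds another could be computed as below. The two series are hypothetical straight lines chosen so the arithmetic is easy to follow; they are not the GISS or CMIP5 values.

```python
import numpy as np


def trend_per_decade(years, values):
    """Ordinary least-squares slope, converted from per-year to per-decade."""
    slope_per_year, _intercept = np.polyfit(years, values, 1)
    return 10.0 * slope_per_year


def percent_overestimate(model_trend, obs_trend):
    """Percent by which the model trend exceeds the observed trend."""
    return 100.0 * (model_trend / obs_trend - 1.0)


# Hypothetical straight-line series for 1976-2012 (illustration only).
years = np.arange(1976, 2013)
obs = 0.015 * (years - 1976)      # 0.15 deg C/decade
model = 0.0192 * (years - 1976)   # 0.192 deg C/decade

obs_trend = trend_per_decade(years, obs)
model_trend = trend_per_decade(years, model)
# About 28% for these made-up series.
print(f"model exceeds observations by {percent_overestimate(model_trend, obs_trend):.0f}%")
```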

Figure 3

Figure 4 compares the modeled and observed surface temperature trend maps for 1976 to 2012. The models show warming for all of the East Pacific, while the data indicate little warming, or even cooling, there. For the western and central longitudes of the Pacific, the models fail to show the ENSO-related warming of the Kuroshio-Oyashio Extension (KOE) east of Japan and the warming in the South Pacific Convergence Zone (SPCZ) east of Australia. The models also underestimate the warming in the mid-to-high latitudes of the North Atlantic. The modeled land surface temperature trends also show very limited skill on regional bases, but that’s not surprising since the models simulate the sea surface temperature trends so poorly.

Figure 4

MID-20th CENTURY FLAT TEMPERATURE PERIOD – 1944 TO 1976

If we were to look only at the linear trends in the time-series graph, Figure 5, the modeled trend is not too far from the observed trend: the two differ by only 0.026 deg C/decade.

Figure 5

However, as discussed in the post Polar Amplification: Observations versus IPCC Climate Models, the climate models fail to simulate the polar-amplified cooling that existed during this period. See Figure 6. (Yes, polar-amplified cooling exists in the Arctic during cooling periods, too.) The climate models failed to simulate the cooling at high latitudes of the North Pacific and in the mid-to-high latitudes of the North Atlantic. The climate models also failed to simulate the warming of sea surface temperatures in the Southern Hemisphere, and they missed the warming of Antarctica.

Figure 6

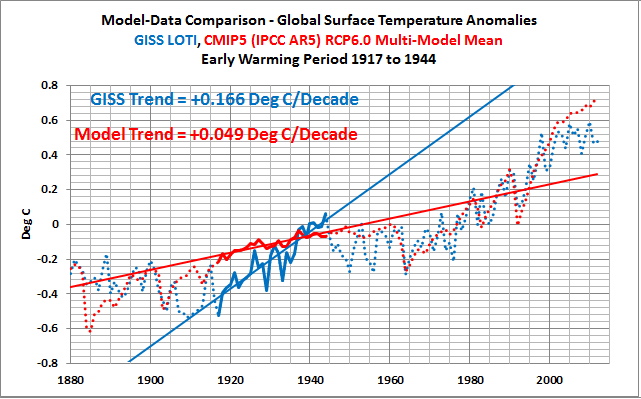

EARLY WARMING PERIOD – 1917 TO 1944

Atrocious, horrible and horrendous are words that could be used to describe the performance of the CMIP5-archived climate models during the early warming period of 1917 to 1944. See Figure 7. According to the models, if greenhouse gases were responsible for global warming, global surface temperatures should only have warmed at a rate of about +0.049 deg C/decade. BUT according to the new and improved GISS Land-Ocean Temperature Index (LOTI) data, global surface temperatures warmed at a rate that was approximately 3.4 times faster, about +0.166 deg C/decade. That difference presents a number of problems for the hypothesis of human-induced, greenhouse gas-driven global warming, which we’ll discuss later in this post.
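The “approximately 3.4 times” figure follows directly from the two trends quoted above. This small check uses only those two numbers; expressed the other way round, the modeled trend is roughly 30% of the observed one.

```python
# Trends quoted in the text for 1917-1944, in deg C/decade.
model_trend = 0.049   # CMIP5 multi-model mean
obs_trend = 0.166     # GISS LOTI

ratio = obs_trend / model_trend
share = model_trend / obs_trend
print(f"observed/modeled ratio: {ratio:.2f}")            # 3.39
print(f"modeled share of observed trend: {share:.0%}")   # 30%
```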

Figure 7

The maps of modeled and observed trends in Figure 8 show that the models failed to simulate the general warming of sea surface temperatures from 1917 to 1944, and that they failed to capture the polar-amplified warming at high latitudes of the Northern Hemisphere. As discussed in the post about polar amplification that was linked earlier, the observed warming rates at high latitudes of the Northern Hemisphere were comparable during the early and late warming periods (See the graph here).

Figure 8

Note: Areas in white in the GISS map have no data or don’t have sufficient data to perform the trend analyses; I believe the KNMI Climate Explorer uses a threshold of 50%.
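The note above implies a per-grid-cell rule: fit a trend only where enough of the record exists, and leave the cell blank otherwise. The sketch below is a hypothetical reimplementation of that rule, not KNMI Climate Explorer code; the 50% threshold is the author’s stated belief, and the function name `masked_trend` is made up for illustration.

```python
import numpy as np


def masked_trend(years, series, min_fraction=0.5):
    """OLS slope (per year) of one grid cell's series, or NaN if data are too sparse.

    min_fraction=0.5 mirrors the 50% threshold the author believes KNMI uses;
    it is an assumption here, not a documented KNMI parameter.
    """
    years = np.asarray(years, dtype=float)
    series = np.asarray(series, dtype=float)
    valid = ~np.isnan(series)
    if valid.sum() < min_fraction * series.size or valid.sum() < 2:
        return np.nan  # this cell would be drawn white on the map
    slope, _intercept = np.polyfit(years[valid], series[valid], 1)
    return slope
```

For a 1917-1944 cell (28 years), 14 or more valid values would yield a trend; fewer would leave the cell white.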

EARLY COOLING PERIOD – 1880 TO 1917

If the data back this far in time is to be believed (that’s for you to decide), surface temperatures cooled, Figure 9, but the model simulations show they should have warmed slightly.

Figure 9

The observations-based map in Figure 10 shows land and sea surface temperature trends seeming to be out of sync during this period: sea surface temperatures show cooling while land surface temperatures show warming in many areas.

Figure 10

If GISS had kept HadISST as its sea surface temperature dataset for this period, the disparity between land and ocean trends would not have been as great. That is, the HadISST data GISS formerly used do not show as much cooling during this period.

A MAJOR FLAW IN THE HYPOTHESIS ON HUMAN-INDUCED GLOBAL WARMING

The observed warming rate during the early warming period is comparable to the trend during the recent warming period. See Figure 11.

Figure 11

But according to the models (Figure 12), if global temperatures were warmed by greenhouse gases, global surface temperatures during the recent warming period should have warmed more than 4 times faster than during the early warming period. Put another way, the early warming period should have warmed at only about 22% of the rate of the late warming period. Yet the observed warming rates are comparable.
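The two ways of stating the modeled ratio in the paragraph above are reciprocals of one another. Writing $T_{\text{early}}$ and $T_{\text{late}}$ for the modeled linear trends of the early and late warming periods:

$$
\frac{T_{\text{early}}}{T_{\text{late}}} \approx 0.22
\quad\Longleftrightarrow\quad
\frac{T_{\text{late}}}{T_{\text{early}}} \approx \frac{1}{0.22} \approx 4.5
$$

So “22% of the rate” and “more than 4 times faster” describe the same modeled ratio.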

Figure 12

That’s one of the inconsistencies with the hypothesis that anthropogenic forcings are the dominant cause of the warming of global surface temperatures over the 20th Century. The failure of the models to hindcast the early rise in global surface temperatures illustrates that global surface temperatures are capable of warming without the natural and anthropogenic forcings used as inputs to the climate models.

Another way to look at it: the data also indicate that the much higher anthropogenic forcings during the later warming period, compared to the early warming period, had little to no impact on the rate at which observed temperatures warmed. In other words, the climate models do not support the hypothesis of anthropogenic forcing-driven global warming; they contradict it.

THE OTHER MAJOR FLAW IN THE HYPOTHESIS ON HUMAN-INDUCED GLOBAL WARMING

Ocean heat content data since 1955 and the satellite-era sea surface temperatures indicate the oceans warmed naturally. Refer to the illustrated essay “The Manmade Global Warming Challenge” (42mb).

CLOSING

Climate models cannot simulate the observed rate of warming of global land air plus sea surface temperatures during the early warming period (1917-1944), which warmed at about the same rate as the recent warming period (1976-2012). The fact that the models simulate the warming better during the recent warming period does not indicate that manmade greenhouse gases were responsible for the warming—it only indicates the models were tuned to perform better during the recent warming period.

The public should have little confidence in climate models, yet we are bombarded by the mainstream media almost daily with climate model-based conjecture and weather-related fairytales. Climate models have shown little to no ability to reproduce observed rates of warming and cooling of global temperatures over the term of the GISS Land Ocean Temperature Index. The IPCC clearly overstates its confidence in model simulations of the climate variable most commonly used to present the supposition of human-induced global warming (i.e., surface temperature). After several decades of development, models continue to show no skill at establishing that global warming is a response to increasing greenhouse gases. No skill whatsoever.

SOURCE

The GISS LOTI data and the outputs of the CMIP5-archived models are available through the KNMI Climate Explorer.

UPDATE 1

DIFFERENCE

The difference between the GISS land-ocean temperature index data and the CMIP5 multi-model mean (Data Minus Model) is shown in Figure Supplement 1. The recent divergence of the models from observations has not yet reached the maximum differences near the beginning of the data, though it may in a few more years.

Figure Supplement 1

The differences do, of course, depend on the base years used for anomalies. As noted in the post, I’ve used the base years of 1961-1990 to be consistent with the base period used by the IPCC in their model-data comparison in Figure 10.1 from the Second Order Draft of AR5. Using the base years of 1880 to 2012 (the entire term of the GISS data) does not help the models much. Refer to Figure Supplement 2. The recent divergence is still considerable compared to the differences in the past.
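A sketch of the Data Minus Model calculation, assuming both series are annual and aligned year by year. It also makes one property of the base-period choice explicit: re-baselining both series subtracts a single constant from the difference series, so changing the base years moves the whole difference curve up or down without changing its shape. The function names and the synthetic inputs are hypothetical stand-ins, not the GISS or CMIP5 data.

```python
import numpy as np


def rebaseline(years, values, start, end):
    """Express values as anomalies from their mean over start..end (inclusive)."""
    years = np.asarray(years)
    values = np.asarray(values, dtype=float)
    base = (years >= start) & (years <= end)
    return values - values[base].mean()


def data_minus_model(years, obs, model, start=1961, end=1990):
    """Observed minus modeled anomalies, both on the same base period."""
    return rebaseline(years, obs, start, end) - rebaseline(years, model, start, end)


# Synthetic stand-ins for the observations and model mean (illustration only).
rng = np.random.default_rng(seed=1)
years = np.arange(1880, 2013)
obs = 0.007 * (years - 1880) + rng.normal(0.0, 0.1, years.size)
model = 0.008 * (years - 1880)

diff_1961_1990 = data_minus_model(years, obs, model)
diff_full = data_minus_model(years, obs, model, start=1880, end=2012)

# The two difference series differ only by a constant offset.
offset = diff_1961_1990 - diff_full
print(f"offset range: {offset.max() - offset.min():.2e}")
print(f"largest |difference| (1961-1990 base): {np.abs(diff_1961_1990).max():.3f}")
```

Because only the zero line moves, the base period changes how large the early and recent differences look relative to zero, not how far apart they are from each other.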

Figure Supplement 2

Thanks Bob, it is always good to see your posts with clean data analysis. It shows that the models do not perform well even against the heavily adjusted GISS data.

The evolution of the GISS data, which we see adjusted and adapted year after year so that it “accidentally” fits the model trends more and more, is another story.

http://www.real-science.com/giss-temperature-trend-complete-garbage

http://wattsupwiththat.com/2011/02/15/controversial-nasa-temperature-graphic-morphs-into-garbled-mess/

http://www.real-science.com/cooling-nuuk

http://notalotofpeopleknowthat.wordpress.com/2012/03/11/ghcn-temperature-adjustments-affect-40-of-the-arctic/

http://notalotofpeopleknowthat.wordpress.com/2012/01/25/how-giss-has-totally-corrupted-reykjaviks-temperatures/

http://uddebatt.wordpress.com/2011/01/23/how-the-world-temperature-%E2%80%9Crecord%E2%80%9D-was-manipulated-trough-dropping-of-stations/

and many more

Bob Tisdale:

Thank you for this clear and cogent assessment.

All that needs to be known about the models is stated by your conclusion which says

The fact that the models simulate the warming better during the recent warming period does not indicate that manmade greenhouse gases were responsible for the warming—it only indicates the models were tuned to perform better during the recent warming period.

Richard

Thanks Bob for another great analysis.

Bob, I’ve always wondered why you don’t publish? I know there are barriers to publishing, but your work seems solid and would be that much sturdier after being scrubbed by your opponents.

A busy read, good busy indeed. I still have a problem with global data dating back to 1880, given that the only truly reliable global data are the satellite data, which started in 1979.

Brad says: “Bob, I’ve always wondered why you don’t publish?”

I assume you mean publish in peer-reviewed journals. First, my audience is not the scientific community; it’s the public. If the climate scientists haven’t bothered to check sea surface temperature and ocean heat content data to determine if the data indicate the oceans warmed naturally, that’s their problem. If I can get the public to understand the significance of the natural warming of the global oceans, then the scientific community will have to respond. One of my goals this year is to get op-eds published in online versions of periodicals.

Second (to borrow a comment I made at another blog recently), I’m not paid for the service I provide, other than an occasional book sale and donation. I’m not contractually obligated to have papers published in peer-reviewed publications, as government-financed researchers are. The phrase “publish or perish” does not apply to me. If someone would like to provide me with a grant worth a couple hundred thousand dollars and require that I submit my work to peer-reviewed journals, then I’d write articles for peer-reviewed journals.

Or you could look at it another way: I write op-eds in which I present data.

I also occasionally provide a service that you won’t find in peer-reviewed publications. I provide step-by-step instructions so that the average person who’s interested can download the data and confirm for themselves that I’ve presented the data correctly. For example, I provided those instructions in my essay “The Manmade Global Warming Challenge”, which I also linked earlier, starting on page 154:

http://bobtisdale.files.wordpress.com/2013/01/the-manmade-global-warming-challenge.pdf

Regards

Another crap post about things that can’t be measured with any confidence…yeah, I am talking about temperature data. I don’t even want to spend time on models with inputs no one knows.

The failure of the models to hindcast the early rise in global surface temperatures illustrates that global surface temperatures are capable of warming without the natural and anthropogenic forcings used as inputs to the climate models.

============

Climate science ignores the statistics of natural processes. Consider the statistics of water. In the air, water clumps to form clouds. Why is this? Statistically it should try to spread out randomly until the skies are an even shade of grey. Instead it strongly clumps together to leave cloudy patches with blue skies in between. This is quite unexpected behavior.

This clumping behavior of clouds does not behave like random noise, and the swings in global temperature do not resemble random noise. That is ignored in the attempt to average models together to eliminate noise. Instead, these natural systems show the dynamics of scale-independent fractals. These fractals do not have the statistical behavior of “classical statistics” – the statistics of the roulette wheel, the dice and the coin toss. The statistics of nature do not follow the same rules.

This is something hydrologists have known for years but climate scientists have ignored, which is why the predictions and models of Climate Science have gone off the rails. Climate scientists have been so busy studying CO2 that they have ignored the science of water.

Typo: “latter” should be “later” in:

“latter warming period compared to the early warming period”

Roger Knights says: “Typo: ‘latter’ should be ‘later'”

Corrected. Thanks for pointing it out.

AlexS, I understand your sentiment. We all know about GISS pseudo-data. However, keep in mind that people are publicly pushing that pseudo-data as meaningful as well as the models. It really helps to have the ammunition Bob provides to counter their BS.

Thanks, Bob.

I have a problem with the start and end points in Figures 3, 5, 7, and 9: They are generally chosen to be near extremes, that is to start at a low point and end at a high point for warmings and to start at a high point and end at a low point for coolings. Such cherry picking is skewing the ‘trends’.
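The endpoint objection is testable: nudge the start and end years a few years either way and see how much the trend moves. The sketch below uses a synthetic series and a ±3-year shift, both assumptions for illustration, and the function names are hypothetical; run on the GISS data, the same check would show how sensitive each period’s trend is to the chosen endpoints.

```python
import numpy as np


def trend_per_decade(years, values):
    """Ordinary least-squares slope in units per decade."""
    slope, _intercept = np.polyfit(years, values, 1)
    return 10.0 * slope


def endpoint_sensitivity(years, values, start, end, shift=3):
    """Trends for every start/end pair within +/- shift years of the chosen ones."""
    years = np.asarray(years)
    values = np.asarray(values, dtype=float)
    results = {}
    for ds in range(-shift, shift + 1):
        for de in range(-shift, shift + 1):
            s, e = start + ds, end + de
            window = (years >= s) & (years <= e)
            if window.sum() >= 3:
                results[(s, e)] = trend_per_decade(years[window], values[window])
    return results


# Synthetic noisy series (illustration only, not GISS data).
rng = np.random.default_rng(seed=2)
years = np.arange(1880, 2013)
values = 0.006 * (years - 1880) + rng.normal(0.0, 0.1, years.size)

trends = endpoint_sensitivity(years, values, 1917, 1944)
print(f"1917-1944 trend range over shifted endpoints: "
      f"{min(trends.values()):.3f} to {max(trends.values()):.3f} per decade")
```

A narrow range would suggest the chosen endpoints matter little; a wide one would support the concern.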

There are numerous different models.

If the models correctly modelled the laws of physics, since these laws are uniform, each model would yield the same result. The fact that they do not yield the same result establishes as fact that either the models do not properly capture and model the laws of physics, or modelling a chaotic system produces no meaningful prediction. There can be no other explanation.

The reality of the situation is that each model does nothing more than run out the assumptions and prejudices/dogma of the person who programmes the model. They are not fact based; they are not physics based. It is therefore no surprise that they can neither correctly hindcast (even though fudge factors are applied to try and tune them to past temperature data) nor, still less, produce a worthwhile prediction of future trends.

Climate science will not take a step forward until such time as models are ditched. The commentator who posted “If a single simulation is not a good predictor of reality how can the average of many simulations, each of which is a poor predictor of reality, be a better predictor, or indeed claim to have any residual of reality?” is correct. The average of a sow’s ear does not give rise to a silk purse. The average of cr*p remains cr*p.

The holy grail in climate science is to seek to know all that there is to know about natural variation, what it comprises, how each natural component forces the climate, its upper and lower bounds, and what, if any, interaction each natural component has with the others.

Until we fully understand natural variation, we will never know what effect CO2 or manmade aerosols may have on the climate, nor the climate sensitivity to such manmade emissions. Until we have that knowledge and understanding, it is simply guesswork, since without it we cannot separate the signal of climate sensitivity from the noise of natural variation.

At least it looks like, over the course of the next 10 to 20 years, the sun will give us some further insight into its effect on climate. I know that Leif considers that it has little effect. It looks like the correctness of that view will be tested, and that will at least enable us to rule in or rule out the effects of that big shiny thing in the sky in whose rays we bask.

Reminds me of the comment, “But the models are all in very good agreement with each other.” Response, “The trouble is, the models are not in very good agreement with reality.”

The red trace in your Figure 2 doesn’t actually look that bad for a model, although, since these fellows collect and manipulate the data and have the benefit of “correcting” their models every few years, I suppose the red line should be a reasonable fit. The fact that the red line really diverges in the recent data reinforces this notion. It will surely be bent downwards in the coming year or so.

lsvalgaard,

Cherry picking…oh, OK. I kind of thought the same (a tiny bit) in Figure 11. To get the early rise in T to be similar to the late rise in T, Bob had to take the late rise in T out into 2012. I would have called the late rise completed in 1998-2000. That would have made the late rise a bit steeper than the early rise and maybe a little less comparable. I would have added a 21st century flat T timeline from 2000-2012.

But you miss the forest for the trees. To me, that was Figure 12. No matter which points you take in that figure to compare the models’ early and late rises, the AGW crowd (which I assume you are not part of) has no cherry picking to complain about. So that is the coup de grâce that maybe you would like to address.

Thanks Bob for a good read….as always.

Richard Verney says @9:38 AM: “The holy grail in climate science is to seek to know all that there is to know about natural variation, …” Suggested change: “The holy grail in climate science should be, but isn’t, to seek to know all that there is to know about natural variation, …”

What you have done is apply Regionality to Time: grouping everything together creates a mathematical artifact supporting the CAGW models. Temporal regionality shows what geographic regionality shows: events are non-global in time as they are in space.

Procedural Certainty or Accuracy: the IPCC models are reasonable at showing where we are, given hindcasting to post-1990 and only 20 years of prediction (actually less, right?). Representational Certainty or Accuracy: not so good, though how good they are at showing what is really coming at us will be known in just a few years, when it will be irrelevant.

Bob:

lsvalgaard says:

April 20, 2013 at 9:10 am: “…I have a problem with the start and end points in Figures 3, 5, 7, and 9….” These appear to be the same ones you use in Figure 2. That graph is followed by this IPCC quote:

“…Clearly, the changes are not linear and can also be characterized as level prior to about 1915, a warming to about 1945, leveling out or even a slight decrease until the 1970s, and a fairly linear upward trend since then (Figure 3.6 and FAQ 3.1)….”

My question is this: does the IPCC Figure 3.6 (or the FAQ) use the same end points for its purposes as you use in your Figure 2 (and 3, etc.)? If the time periods designated by the IPCC are explicit, those are what you should use in your comparisons.

Thanks for all the good data and analysis you bring to our attention.

Regarding the modern warming period. You are comparing model runs with TWO DIFFERENT SETS OF PARAMETERS and then comparing as if it were one model to real observations.

Comparisons of a tuned model against the same observations used to tune it should be disregarded as results. The modelers tune their favorite dials until a match is made to known observations, using those observations for their fine tuning. That’s called calibrating your instruments. The other model is set without further tuning and without the advantage of known observations. So it isn’t that the model’s match is good at the beginning and then craps out at the end. The only period worth looking at is the one “set to one set of parameters without the benefit of observation data to help with tuning”. Highlight that period and we can say the entire model (the one without the benefit of tuning and AFTER calibration has ended) is trash. Once again, the tuned period should not be considered part of the resultant data. It isn’t resultant data. It is simply the calibration period. The real experiment starts after the calibration period ends.

Addendum: I think the climate modelers include the calibration period in their results graphs without labeling the knee, to lead some to think the model is a pretty damned good one. I think it is a sleight-of-hand trick and unprofessional.

Leif, in other types of stimulus response graphs, such as brainstem research, amplitude from peak to trough is one of the measures used to determine the health of the brain pathway. That is an instance of cherry picking that actually provides very useful and sometimes even vital information.

Go Home says:

April 20, 2013 at 10:59 am

Cherry picking…oh, OK. I kind of thought the same (a tiny bit) in Figure 11. TO get the early rise in T to be similar to the late rise in T, Bob had to take the late rise in T out into 2012.

I know what Bob is trying to do, and for that he has to make decisions. I was commenting that I personally would not have used extreme points as start/end [as such is problematic in my book], but clearly Bob’s bar is lower than mine.

Pamela Gray says:

April 20, 2013 at 11:51 am

That is an instance of cherry picking that actually provides very useful and sometimes even vital information.

Then it would not reasonably be called ‘cherry picking’.

As JimF says:

“Does the IPCC Figure 3.6 (or the FAQ) use the same end points their purposes as you use in your Figure 2 (and 3, etc.)? If those time periods designated by the IPCC are explicit, that is what you should use in your comparisons.” The IPCC can hardly be accused of cherry picking to support Bob’s analysis.

lsvalgaard,

Since your bar is proclaimed to be higher than Bob’s, what periods would you use to pick 4 time frames over the same period to allow similar comparisons of models vs temps? And what would be your reasons for each?