Guest Post by Willis Eschenbach

Over in the X-Twitterverse, I see that Roger Hallam (@RogerHallamCS21) is doing his very best to scare people. Here’s his xtweet:

If ever there was one datapoint which proved that humanity is inevitably moving into a period of revolutionary social disruption, it is the top right hand point of this chart.

The global sea level temperature for the first 5 months of 2024 is literally off the chart. The super exponential hypothesis is alive and kicking.

Meaning, in everyday language, things are going to get so bad so quickly political regimes are going to collapse like dominoes.

As I keep saying – the key question of our time is this: WHAT COMES NEXT? – Fascism or Radical Democracy?

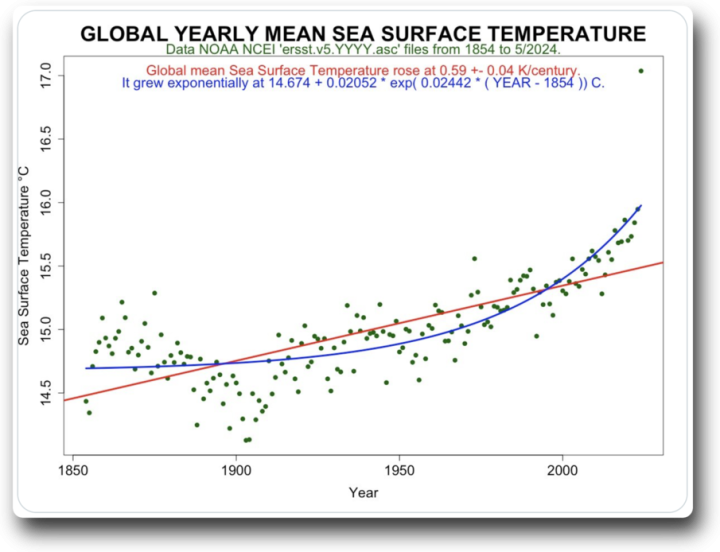

Figure 1. Roger Coppock’s graph referred to in Roger Hallam’s tweet.

So what’s not to like about this chart?

Well, first off, every dot in the chart represents a full year of data … except for the dot at the top right, which only has 5 months of data, from January to May. If memory serves, this is known as “comparing apples to orange peels” or something similar. And in any case, under any name … it’s not done.

Next, they’ve thrown away about 90% of the data by averaging it into years. Why not use monthly data, since we have it?

Next, the idea that a few months of warmer-than-usual sea surface temperatures “proves that humanity is inevitably moving into a period of revolutionary social disruption” is a joke. It assumes that we’ve never seen rapid sea surface temperature increases before.

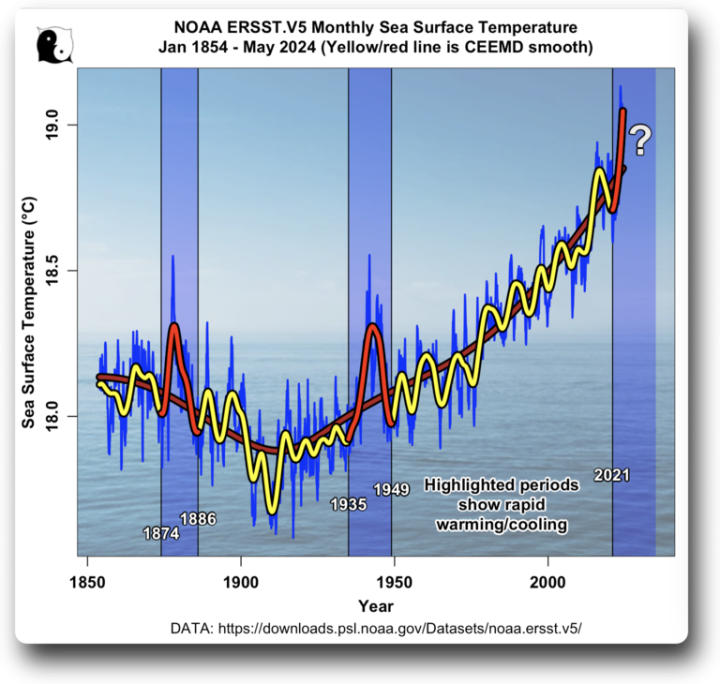

So, what would a real graph of the ERSST data look like? To answer that, as is my habit, I went and got the underlying data and graphed it up. Here’s the result.

Figure 2. Graph of the full ERSST.V5 monthly sea surface temperature (SST) dataset. Periods with red line and blue background are times of rapid warming or cooling.

Now, there are several interesting things about this dataset. First, there were two times in the past, around 1878 and around 1942, when we saw similar large jumps in the sea surface temperature. I’ve highlighted those two anomalies, along with the current warming, in red. Curiously, neither of them led to, what was it … “a period of revolutionary social disruption“. In fact, were it not for thermometers, nobody would even have known they occurred.

I mean, when’s the last time you woke up and thought “Wow, it certainly feels like the global ocean surface temperature is a quarter of a degree C warmer than a couple of months ago!”?

So what caused the jumps in 1878 and 1942? And more than that, in both cases why did the temperature return quite quickly to the status quo ante?

As we used to say during the many seasons I spent commercial fishing, “More unsolved mysteries of the sea.”

Next, although the CO2 levels were rising during the half-century from 1860 to 1910, sea surface temperatures were dropping over that time… go figure. Another unsolved mystery of the sea …

Next, there’s a relatively strong cycle with a period of about 9.1 years in the data … too short to be sunspot-related. Why nine+ years? Dang those mysteries!

Finally, we come to the question mark in the upper right corner in Figure 1—what will tomorrow bring? My first guess would be that it would do what it did in the past, go up and come down again. However, to get a better sense of where it’s going, Figure 2 shows a closer look at the recent part of the same data shown in Figure 1, with the same yellow/red smoothed lines as in Figure 1.

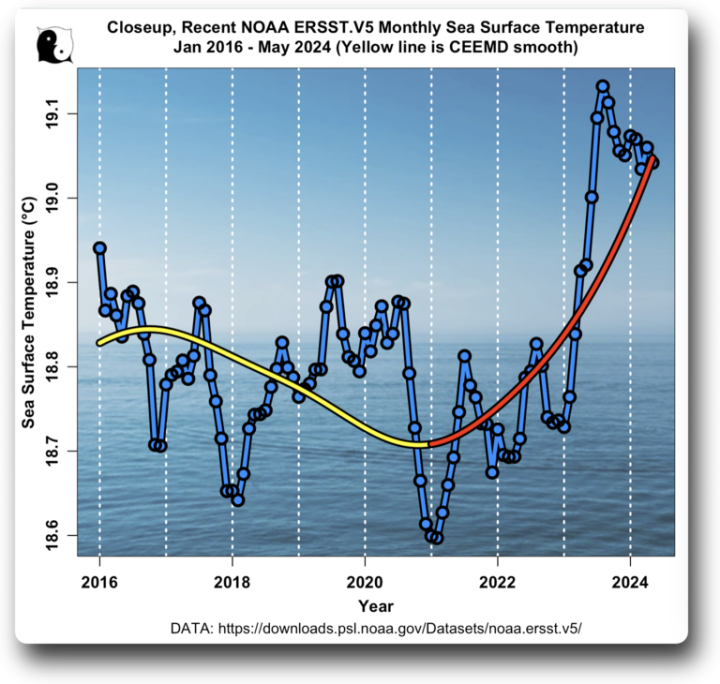

Figure 2. Same data as in Figure 1, but only showing the recent sea surface temperature since 2016. Yellow/red lines are the same CEEMD smooth of the data as shown in Figure 1

And this reveals a curious fact … sea surface temperatures are not skyrocketing as Hallam and Coppock claim. Quite the opposite—the temperature peaked in August of last year, 2023, and has generally dropped in the nine months since then.

And finally, we can see why in the Hallam / Coppock graph the average of the first five months of the 2024 temperature data is so much higher than the average of the full twelve months of 2023 data, despite the fact that sea surface temperatures have been dropping for nine months.

TL;DR Version: We may indeed be “moving into a period of revolutionary social disruption”, but it’s not because of one very misleading dot on a graph of global sea surface temperatures …

My very best wishes to all, now I gotta go mow the lawn.

w.

Yeah, you’ve heard it before: When you comment, please quote the exact words you’re discussing. I can defend my words, but I can’t defend your interpretation of my words.

And if you want to show I’m wrong, here are instructions on How To Show Willis Is Wrong.

ATTN: Willis

RE: Climate Science Fraud

Please use Google to obtain the essay “Climate Change Reexamined” by Joel M. Kauffman.

The essay is 26 pages and can be downloaded for free.

Shown in Fig. 7 is the IR absorption spectrum of a sample of Philadelphia city air from 400 to 4,000 wavenumbers. A wavenumber is the number of IR light waves per centimeter. The wavenumber scale is linear in energy and spans an order of magnitude in energy.

Integration of the spectrum determined that water absorbed 92% of the IR light and CO2 only 8%. Unfortunately, Kauffman did not measure the concentration of CO2 in the city air. Since the air sample was city air, it is likely that the concentration of CO2 was somewhat higher than that of a remote or rural location.

In 1999 the concentration of CO2 at the MLO in Hawaii was 367 ppm by volume. This is about 0.721 grams of CO2 per cubic meter of air. At 28 deg. C and 76% RH, the concentration of water was 29,549 ppm by volume. This is 23.7 grams of water per cubic meter of air. At 28 deg. C, a cubic meter of air has a mass of about 1.17 kg.

Presently at the MLO, the concentration of CO2 in dry air was 427 ppm by volume for March. This is 0.839 grams of CO2 per cubic meter of air. In 1920, the concentration of CO2 in air was about 300 ppm by volume. This is 0.589 grams of CO2 per cubic meter of air. After a century, the amount of CO2 released into the atmosphere from all sources is only 0.250 grams per cubic meter of air. The small amount of CO2 in the air can only cause a small amount of heating of such a large amount of air.
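The grams-per-cubic-meter figures in this comment can be reproduced with a short calculation. What follows is a minimal sketch, assuming an ideal gas with a molar volume of 22.4 L/mol (0 °C, 1 atm) and a CO2 molar mass of 44.0 g/mol. Neither value is stated in the comment; they are assumptions chosen because they match its numbers. The function name is hypothetical.

```python
# Assumed constants (not stated in the comment; chosen because they
# reproduce its figures).
MOLAR_VOLUME_L = 22.4     # litres per mole of ideal gas at 0 deg C, 1 atm
CO2_MOLAR_MASS_G = 44.0   # grams per mole of CO2


def co2_grams_per_m3(ppm_by_volume: float) -> float:
    """Convert a CO2 volume mixing ratio in ppm to grams per cubic meter of air."""
    moles_air_per_m3 = 1000.0 / MOLAR_VOLUME_L            # 1 m^3 = 1000 L
    moles_co2_per_m3 = moles_air_per_m3 * ppm_by_volume * 1e-6
    return moles_co2_per_m3 * CO2_MOLAR_MASS_G


# The three concentrations quoted in the comment.
for label, ppm in [("1920", 300.0), ("1999", 367.0), ("present", 427.0)]:
    print(label, ppm, "ppm ->", co2_grams_per_m3(ppm), "g/m^3")
```

Under these assumptions the results land within rounding of the 0.589, 0.721 and 0.839 g/m^3 quoted. At the 28 deg. C used for the water vapor figure, the molar volume is larger, so the same mixing ratios would correspond to roughly 9% less CO2 per cubic meter.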

Based on Kauffman’s essay and the above analysis, I have concluded that the UN, the IPCC, the UNFCCC, and a coterie of unscrupulous scientists have been perpetrating the greatest scientific fraud in recent human history. The scientists perpetrate this fraud to obtain “easy money” from governments to support their research on “global warming and climate change”. The UN’s objective is the transfer of large amounts of funds from fines on the rich countries (i.e. the big polluters of the environment) to all the poor countries to help them cope with global warming and climate change.

If the people could see the IR absorption spectrum in Fig. 7, they would conclude that water is the major greenhouse gas by far and CO2 is the minor greenhouse gas, and we don’t have to worry about CO2 emissions.

Is there a way the spectrum in Fig. 7 can be displayed at WUWT for everyone to see? Do you know how to do this?

PS: I am a retired organic chemist with a B.Sc.(Hon), Ph.D.

SSTs are not warmed by CO2 though. LW can’t penetrate water, and the ‘skin effect’ (SAGE experiments, Tangaroa) shows a minute warming for 100 watts of power.

Warming SSTs come from increased solar, due most likely to reduced cloud cover from reduced SO2.

Hello Willis,

Roger Hallam’s temperature range goes from 14.5°C to 15.8°C. Yours from 17.7°C to 19°C.

Any Explanation?

Yes. Coppock is not using area weighting. That means that high latitude cells count for much more than they should and brings the average down.

Use anomalies.

Anomalies shouldn’t compensate for lack of area weighting.

They do. It comes back to their homogeneity. High latitude cells are small and cold. Because there are many of them, they bring down the average, here by about 2C.
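The effect described here, many small cold cells dragging down an unweighted mean, can be illustrated with a toy calculation. This is only a sketch on a synthetic temperature field (warm at the equator, cold at the poles); the field, the 2-degree band spacing, and the function names are assumptions made for illustration and are not the ERSST data or Coppock's method. On a regular lat/lon grid the area of a cell is proportional to the cosine of its latitude, so that is used as the weight.

```python
import math


def latitude_bands(step_deg=2.0):
    """Centre latitudes of equal-width bands from pole to pole."""
    count = int(180.0 / step_deg)
    return [-90.0 + step_deg / 2.0 + i * step_deg for i in range(count)]


def synthetic_temperature(lat_deg):
    """Hypothetical zonal-mean temperature in deg C: 28 at the equator, -2 at the poles."""
    return 30.0 * math.cos(math.radians(lat_deg)) ** 2 - 2.0


def unweighted_mean(lats):
    """Every band counts equally, as if all grid cells had the same area."""
    temps = [synthetic_temperature(lat) for lat in lats]
    return sum(temps) / len(temps)


def area_weighted_mean(lats):
    """Each band is weighted by cos(latitude), proportional to its area."""
    weights = [math.cos(math.radians(lat)) for lat in lats]
    temps = [synthetic_temperature(lat) for lat in lats]
    return sum(w * t for w, t in zip(weights, temps)) / sum(weights)


lats = latitude_bands()
print("unweighted:   ", unweighted_mean(lats))
print("area weighted:", area_weighted_mean(lats))
```

With this made-up field the unweighted mean comes out several degrees colder than the area-weighted one. The size of the gap depends entirely on the assumed field, so it should not be read as an estimate of the roughly 2C difference mentioned in the comment.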

But high latitude cells do not have any particular type of anomaly. The cold climatology has been subtracted out. So the small cells do not bring any particular bias.

Area weighting is basically a joke. It’s still based on the idea that a “TRUE VALUE” of temperature can be found by averaging the stated values of measurements given as “stated value +/- uncertainty”. It all comes back to the meme that all measurement uncertainty is random only, Gaussian, and cancels so it can be ignored.

Weighting measurements that have uncertainty intervals still gives you an uncertain value when averaged. When you compare those weighted measurements that have uncertainty, the uncertainty of the result GROWS, it doesn’t decrease.

The blindingly obvious question which has just occurred to me after all this time (I can be a slow learner) is “why use area weighting anyway?”

The earth is a sphere (alright, an oblate spheroid), so it is eminently feasible to use equal areas and avoid the additional complexity of area weighting.

Granted, that leads to sampling errors due to missing data, but it is what it is.

Area weighting gives you a best approx to a spatial integral.

It is feasible (and best) to use equal areas. I do it (eg here). But it isn’t required to do a proper spatial integral (Riemann style).

So why isn’t the equal area approach used more widely?

It doesn’t matter. You can’t integrate an intensive property. The color of a gallon of pink paint will be the same as the color of a pint of pink paint. An integral of the color over the two containers will give you nothing useful. It’s the same for temperature.

You can integrate enthalpy, but nobody seems to do that despite the data being available.

“You can integrate enthalpy, but nobody seems to do that despite the data being available.”

Yep. Ask yourself why climate science simply refuses to do so.

Temperature is an intensive property. Exactly what do you think you are gaining by integrating an intensive property?

Temperature, like color, is a property of a “thing”. It doesn’t depend on the amount of that “thing”. A gallon of pink paint has the same color as a pint of the same pink paint. The temperature of that gallon of pink paint is the same as temperature of the pink paint in the pint if they are side by side. “Integrating” over the gallon and the pint won’t change the property of the color one iota.

What you are *really* doing is trying to justify the meme in climate science that all measurement uncertainty is random, Gaussian, and cancels. By “averaging” enough temperatures you can cancel all the measurement uncertainty and get a “true value” for the temperature in an area.

Tim Gorman says:

“Temperature, like color, is a property of a “thing”. It doesn’t depend on the amount of that “thing”. A gallon of pink paint has the same color as a pint of the same pink paint. The temperature of that gallon of pink paint is the same as temperature of the pink paint in the pint if they are side by side. “Integrating” over the gallon and the pint won’t change the property of the color one iota.”

Well … kinda.

Suppose we have one liter of water at 290K and three liters of water at 310K. Obviously, we can’t just average them.

BUT … we can do a weighted average

(290K * 1 liter + 310K * 3 liters) / 4 liters = 305K

which is the temperature we’d get if we mixed all the water together.

w.
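The weighted average in this comment can be written as a small function. This is a minimal sketch, assuming every parcel is the same liquid with the same density and specific heat, so that volume stands in for heat capacity; the function name is hypothetical.

```python
def mixed_temperature(parcels):
    """Volume-weighted mean temperature of parcels given as (kelvin, litres) pairs.

    Assumes identical density and specific heat for every parcel, so the
    result is the temperature after perfect mixing with no heat loss.
    """
    total_volume = sum(volume for _, volume in parcels)
    weighted_sum = sum(temp * volume for temp, volume in parcels)
    return weighted_sum / total_volume


# One liter at 290K and three liters at 310K, as in the comment.
print(mixed_temperature([(290.0, 1.0), (310.0, 3.0)]))  # 305.0
```

The weights here are an extensive quantity (the amount of water), which is what makes the result physically meaningful as a mixing temperature.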

That works for mixtures where the masses are known.

With atmospheric temperatures, averaging without knowing the humidity (mass of a volume) at each location introduces lots of uncertainty.

This is one more item of measurement uncertainty for a monthly temperature average. You can’t assume a constant humidity throughout.

Weight is a function of mass. Mass is an extensive property.

Temperature and color are not.

1 liter of water has the same color as 3 liters of water.

Mixing two different masses of water involves the extensive property of mass. You can’t pick a 1 milliliter parcel of water from the 1 liter bucket and say you can average its temperature with the 999 milliliters left and get something different for the average temperature. They will both have the same temperature.

You can get a different value for the average mass of the two parcels of water.

Area weighting is useful if you are trying to compare total masses. Mass is an extensive property. Temperature is not an extensive property. It is an intensive property. You may as well try averaging colors in an area in order to compare it to the average color of a different area by weighting the size of each individual area. Color and temperature are a property of something that doesn’t depend on the amount of the something.

Climate science supporters don’t even understand the difference between extensive and intensive properties.

It basically all boils down to the meme of measurement uncertainty is random, Gaussian, and cancels. If you can combine enough multiple temperatures into an average you can somehow cancel out all the measurement uncertainty and come up with a “true value” of the temperature in an area. Then you can average those averages to get the “true value” of the temperature over a wider area.

You are ignoring the uncertainty of the random variables used to calculate an anomaly.

Tell everyone here what happens to the variances of the monthly average and the baseline monthly average when the means of those random variables are subtracted.

No, think about it. All the anomalising has done is to subtract a site-specific offset from each site’s observations.

Unweighted anomalies will give a smaller difference than unweighted absolute values, but potentially have a larger relative difference.

((273.15 + 19.0) – (273.15 + 15.8))/(273.15 + 19.0) = 3.2 / 292.15 ≈ 0.011

What would the equivalent relative anomaly calculation be?

Do you expect an unweighted anomaly average would be too warm or too cool?

It should be 0 during the baseline period. Outside that period, it depends on the changes in the smaller and larger areas relative to each other.

What I was trying to get at is that the relative differences of unweighted anomalies may be higher than the relative difference of unweighted absolute temperatures.

OC,

here is the issue in more detail. A global average is a sample average. A lat/lon grid is bad sampling. It has a much higher density of sampling near the poles.

For bad sampling to have a bad effect, you need an alignment of the data with the sampling deficiency. To take an example from opinion polling, if you have a sample with too many men, you will get a bad result if men and women are apt to differ on the subject. But if you have too many blood group A citizens, it probably won’t matter, since that probably does not align with the opinion.

There are four cases here

Bad sampling+abs T gives bad result, because the dense areas are cold.

Good sampling, which could include repair by area weighting, gives a much better result.

Bad sampling with anomaly, where the aligning property (cold climate) has been subtracted out, gives a not bad result, since there is no major alignment.

Good sampling plus anomaly is best of all, and is what all serious global T providers use.

I think we largely agree on the use of equal areas or area weighting to correct some of the deficiencies of using this particular sample of convenience.

In this particular case, though, it is quite likely that the use of unweighted temperature anomalies merely masks the poor sampling methodology. A stratified sample would give some indication as to whether there is a latitudinal difference. Lumping anomalies together loses information.

While the area weighted anomalies are less worser, there is still quite a bit of information lost regarding the effect of the sample selection.

To my mind, the main problem of using equal area or area weighted absolute temperatures is the effect of station changes (site move, instrument change, site closure, new site).

If the sites used remained unchanged, the absolute temperature deltas and anomaly deltas would be identical.

Do you know if there have been any decent studies conducted using multiple random subsets of the station data to quantify the effects of sampling differences?

Old Cocky,

Weighting anomalies is closing the barn door after the animals have already escaped. Anomalies are an attempt to glean more information from measurements than the measurements actually contain. This doesn’t even discuss the uncertainty in measurement values.

Anomalies are a contrived mathematical process to artificially expand perceived information that simply is not known. For much of the temperature record the precision is based on integer values. Averaging to obtain multiple additional decimal places for calculating anomalies is not allowed in any other scientific endeavor. Climate science has latched onto the customary practice of using an extra decimal or two for interim calculations TO PREVENT ROUNDING ERRORS as also allowing those decimal places to be carried on into the final answer. Every lab PROFESSOR I ever had would have failed me for keeping those decimals that were not available in the original measurement.

This doesn’t even address the fact that temperature measurements at best have a measurement uncertainty that makes these extra decimal places unknowable. Every data point in every “sample” has uncertainty that is simply thrown away at every stage where additional averaging is done. I would have thought that the simple Example 2 in TN 1900 would have shown to everyone that the uncertainty of monthly averages is very significant. Yet CAGW and GCM modeling folks continue to shove that right down the rat hole out of sight.

You may as well sample the color of the sky at various locations in each area in order to get a “true value” of the color of the sky.

Face it, you are trying to justify that you can find the “true value” of the intensive value of temperature in an area by averaging the intensive value known as “temperature” – using the meme that all measurement uncertainty is random, Gaussian, and cancels.

This has nothing to with sampling – it *all* has to do with trying to find a “true value” which you can never know because of measurement uncertainty.

The sampling you are talking about is nothing more than a job guarantee for mathematicians.

First, you have countered in the past that infilling ΔT’s out to 1200 km has studies that show anomalies are homogenous in that radius.

Second, what is being investigated is ΔT, not absolute temperatures.

Third, averaging intensive properties is not proper science.

Last, pick one station in a given area that encompasses 1200 km. Make sure it is well calibrated and maintained often. Determine the anomaly at that station and use it as the ΔT for that area. No reason to deal with density of stations or weighting of any kind. Either the GLOBAL temperature, that is, all over the earth, is changing or it is not changing over the whole globe. Pick one. Show proof.

Here are two links to articles that are appropriate. One is a study about homogenizing stations in South Africa. What struck me was that the error terms belie any anomaly values. The other one is from WUWT written by Dr. Tim Ball, ten years ago. Funny how the same things are being discussed. Models wrong, uncertainties in temperature, averaging temperatures from climate zones that have different variances, and ignoring uncertainties from infilling.

Climate | Free Full-Text | Evaluation of Infilling Methods for Time Series of Daily Temperature Data: Case Study of Limpopo Province, South Africa (mdpi.com)

Where Was Climate Research Before Computer Models? – Watts Up With That?

Ten years and billions if not trillions of dollars in research and still no firm answers about anything. From what I see, we are still dealing with trying to match new temperature stations on land and in the ocean to old temperature stations in order to determine a “global temperature” of dubious value.

First, you have to show me that the density of stations increases as you move toward the poles. I might believe that in the NH, there are many more stations in the mid-latitudes than elsewhere.

Second, I have no problem with using equal areas or area weighting.

Third, what I do have a problem with is not properly determining the measurement error in either case. That simply cannot be ignored when attempting to discern anomalies to three decimal places.

Temperature anomalies are not specified as percentages of the base temperature in climate science. They are compared to each other.

The change from a 0.1 anomaly to a 0.2 anomaly is a 100% change. Sounds like a *big* change, doesn’t it? But if you look at the absolute temp, say 15.1 to 15.2, it’s only a 0.7% change. It gets even smaller if you use the Kelvin temps.
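The percentages in this comment are easy to check. The snippet below is a small sketch using the same example values (a 0.1 to 0.2 anomaly and 15.1 to 15.2 degrees C); the helper name is hypothetical, and the Kelvin conversion uses the standard 273.15 offset.

```python
def percent_change(old, new):
    """Change from old to new, expressed as a percentage of old."""
    return (new - old) / old * 100.0


anomaly_pct = percent_change(0.1, 0.2)                     # anomaly values
celsius_pct = percent_change(15.1, 15.2)                   # same step, deg C
kelvin_pct = percent_change(15.1 + 273.15, 15.2 + 273.15)  # same step, K

print("anomaly:", anomaly_pct, "%")
print("celsius:", celsius_pct, "%")
print("kelvin: ", kelvin_pct, "%")
```

The same 0.1 degree step is about 100% of the anomaly, under 1% of the Celsius value, and a few hundredths of a percent of the Kelvin value, which shows how strongly a percentage depends on where the zero point sits.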

You have to use Kelvin or Rankine for absolute temperatures.

I agree, but the percentage comparing anomalies (small values) is not the same as the percentage comparing absolute temps. Comparing anomalies is only useful for scaring people.

That’s why I was wondering how the relative differences of the unweighted anomalies vs area weighted anomalies compared to the unweighted absolute temps vs area weighted absolute temps up above.

With temperature anomalies, the zero point is the baseline temperature for that site rather than the melting point of (pure or salt) H2O, or absolute zero.

Anyone who has ever peed in a swimming pool should realize the futility of trying to measure the average temperature of the planet’s oceans. For that matter, the average temp of your swimming pool would be devilishly hard to measure within 0.1 degrees.

Certainly modern science has no way to measure ocean temperature.

Your swimming pool is the same as the ocean, the temperature of each parcel of water is constantly changing. In order to calculate a real average all measurements of each parcel would have to be measured at *exactly* the same time and that average will be totally different the next instant. Would the average at both instants of time be exactly the same? Hmmmm…… To 0.1 or .01 degree? Hmmm…

In ancient times, deities were invented to explain the inexplicable. Even today, Pele is invoked over Hawaiian eruptions such as Kilauea’s.

What hubris thinking humans can control the weather (and thus climate change) when we can not even regulate a room in a house to better than +/- 3F.

What insanity claiming just one thing controls the entire ecosphere of the planet. So CO2 is the modern deity we are being forced to worship. The socio-historical correlation is quite stark.

Gaia is laughing at us.

Willis, you say:

“Next, there’s a relatively strong cycle with a period of about 9.1 years in the data … too short to be sunspot-related. Why nine+ years? Dang those mysteries!”

To me, the period of 9.1 years looks like a system harmonic, i.e. a resonant frequency within the ocean system. It is interesting that it is roughly twice as long as the resonant ENSO frequency, which is also clearly visible in your figure 2.

If this 9.1 year period is indeed a resonant frequency of the ocean system, then it says nothing about the frequency of the forcing mechanism, and about whether or not the solar cycle is a forcing mechanism for sea-surface temperatures.

Temperatures don’t resonate.

Sure they do. Temperatures are often the amplitude of a periodic phenomenon. You need to broaden your ideas into really long wavelengths of periodic functions.

Read Part IV of Planck’s Theory of Heat Radiation, A SYSTEM OF OSCILLATORS IN A STATIONARY FIELD OF RADIATION

Max Planck. The Theory of Heat Radiation by Max Planck (English Edition) – Unraveling the Mysteries of Heat Radiation: Max Planck’s Groundbreaking Theory in English (p. 176). Prabhat Prakashan. Kindle Edition.

Another question. I thought the boiling point for water was 100 degrees centigrade. Has that changed? After all, we keep hearing about oceans boiling and such. And, we are just talking about an overall warming of 1 degree, right? Is that supposed to be alarming?

It is affected by atmospheric pressure and water purity.

If the dot at the top right of Figure 1 represents January through May only, it’s not surprising that it’s much higher than annual averages for previous years.

There is much more sea surface area in the Southern Hemisphere than in the Northern Hemisphere, and January through March is summer in the Southern Hemisphere and winter in the Northern Hemisphere, so that a January-May average includes a large amount of relatively warm Southern Hemisphere water, and relatively little cold Northern Hemisphere water.
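The point about comparing a January-May average with full-year averages can be shown with a toy series. This is a sketch only: the monthly values come from a hypothetical seasonal cycle that peaks in March with a made-up amplitude and no trend at all, and are not the ERSST data.

```python
import math


def synthetic_monthly_sst(base=18.0, amplitude=0.2, peak_month=3):
    """Twelve hypothetical monthly means (deg C) with a seasonal cycle and no trend."""
    return [
        base + amplitude * math.cos(2.0 * math.pi * (month - peak_month) / 12.0)
        for month in range(1, 13)
    ]


months = synthetic_monthly_sst()
annual_mean = sum(months) / 12.0        # full-year average
jan_may_mean = sum(months[:5]) / 5.0    # first five months only

print("annual mean: ", annual_mean)
print("Jan-May mean:", jan_may_mean)
print("difference:  ", jan_may_mean - annual_mean)
```

Even with no warming whatsoever, the five-month average sits above the twelve-month average simply because it samples the warm part of the assumed cycle, so a partial-year dot plotted next to full-year dots is not a like-for-like comparison.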

The exponential “curve-fit” (blue line in Figure 1) is particularly misleading, since it flattens out the obviously decreasing trend between circa 1860 and 1910. The problem with an exponential curve is that it explodes when extrapolated into the future, although that may serve an alarmist’s agenda.

A more realistic approach would be a linear fit to the data between 1910 and the present. This would have a higher slope than 0.59 C/century, but would not predict a future runaway, which is highly unlikely physically.

How much heat did the Tonga eruption release?

Water vapor in the stratosphere is the claimed villain.

Excellent synopsis and summation, Willis!

There is also the little matter of discarding obvious outliers, especially outliers far out of sync with historical temperature.

Discovering that the extreme outlier is not an equal measurement to other annual averages is prestidigitation flim flam.

Hallam is beclowning himself in his desperation.

Nice expose of the data as always Willis.

No mystery. 9.1y is a lunar cycle, not a solar one. It is the period corresponding to the frequency mean of 9.3y and 8.85y. Nicola Scafetta (2010?) rather elegantly showed this was physically real and of lunar origin by comparing frequency analysis of the Earth’s distance and the E-M barycentre distance from the sun. The earth’s orbit has the 9.1y component but the earth-moon pair does not.

The frequency is found in the SST of many ocean basins:

That’s an observation. A hypothetical mechanism would be that tides are essentially horizontal movement, though we measure it as height. Tides are affected by the moon and magnitudes vary with intensity of the tidal raising forces ( a cubic fn of distance ).

Horizontal movement of water means heat transport. Variations in heat transport in and out of tropics affects the global heat engine.
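The "frequency mean" of the 9.3y and 8.85y periods mentioned in this comment is the period whose frequency is the average of the two frequencies. A minimal sketch of that arithmetic follows; the function name is hypothetical.

```python
def frequency_mean_period(period_a, period_b):
    """Period corresponding to the mean of the two frequencies 1/period_a and 1/period_b."""
    mean_frequency = (1.0 / period_a + 1.0 / period_b) / 2.0
    return 1.0 / mean_frequency


print(round(frequency_mean_period(9.3, 8.85), 2))  # 9.07
```

This is the harmonic mean of the two periods, and it rounds to the roughly 9.1 years under discussion.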

Thanks, Greg. While that all sounds good, I just went and got a century’s worth of earth-sun distances from the JPL ephemerides. There’s no visible 9.1-year cycle in the data.

I also couldn’t find any 9.1 year cycle in the Nino34 data …

I’d be very, very careful with any of Scafetta’s claims.

w.

Thanks for the reply Willis. I’m duly skeptical of Scafetta, like anyone else.

I think this is the paper https://arxiv.org/pdf/1005.4639 , see fig 8. It was not distance but speed rel to Sol centre (not SS barycentre) he used, my mistake, but I expect it should show in both. It looks legit but I did not try to replicate.

Yes, you will see in my graph the 9.1y component is not as strong in Nino34 as it is in N. Atl and N. Pacific, but it is there. Different spectral techniques vary in the ability to separate peaks. It would be interesting to revisit this. I think I did that analysis in 2013.

Since you are finding a strong 9.1y too, it would be interesting to see whether this is of lunar origin. It may explain your mystery.

FWIW I just grabbed 200y of daily “r-dot” values from JPL. I ran 365d triple running mean and did quick z-chirp frequency analysis.

I wouldn’t have much confidence in those smaller bumps but they do seem to match quite closely to the values I cited earlier. If I use a different windowing fn. with less ringing, it fails to resolve the two and I get one peak centred on 8.95y. So it seems there is something there. Though these are down in the weeds, in terms of changing the Earth’s radial speed, the tide raising forces clearly are significant.

I have no experience of Scafetta’s “maximum entropy” method (sounds dangerous!) so I can’t attempt to replicate that.

Neither the exponential nor the linear model even get the right DIRECTION for the first 50y of the dataset. So they have zero explanatory or predictive value.

Arbitrary fn fitting is meaningless and unscientific.