By Andy May

Christian Freuer has translated this post to German here.

The climate sensitivity to CO2 and other greenhouse gases (GHGs) is arguably the most important number in the climate change debate. AR6[1] claims the sensitivity, which they call “ECS” or the equilibrium climate sensitivity, is three degrees per doubling of CO2, or 3°C/2xCO2 (“/2xCO2” simply means per doubling of the atmospheric CO2 concentration). They claim the very likely (5% to 95%) range of possible values is from 2 to 5°C/2xCO2 and the likely (66%) range has narrowed to 2.5 to 4°C. Since the publication of the 1979 Charney Report,[2] the range of possible ECS values has normally been about 1.5 to 4.5°C, a total range of 3°C. How has it now narrowed to 2.5 to 4°C, a full likely uncertainty range of only 1.5°C? It is generally accepted that the direct warming effect of CO2 and other greenhouse gases is small, only about one degree per doubling of CO2,[3] so the debate is all about the feedbacks, especially cloud feedback to the greenhouse gas warming.[4]

The real-world effect on climate of changing the atmospheric concentration of CO2 and other GHGs, whether natural or emitted by humans, has never been measured, only modeled. ECS is defined as the ultimate warming due to an instantaneous doubling of the atmospheric CO2 concentration. The ultimate climate response to that doubling will not occur for hundreds or thousands of years, and everything else affecting the climate, like cloudiness and insolation, will not stay static for that long, so it is an artificial quantity that cannot be measured. Importantly, the IPCC estimate of ECS probably cannot be falsified through real-world measurements, which means it is not a proper scientific hypothesis. Even with a climate model it is difficult. Sherwood, et al. write:

“To calculate the ECS in a fully coupled climate model requires very long integrations (>1,000 years).”[5]

A more relevant measure of climate sensitivity is the transient climate response, or TCR, which is also calculated by the AR6/CMIP6 climate models. This is the climate response to a steady increase in CO2 concentration of about 1% per year, to the point where CO2 doubles, roughly 70 years.[6] Thus, it is more realistic and, given the short time frame, possibly measurable in the real world. Sherwood, et al.,[5] a source relied upon in AR6 (Chapter 7 mentions the Sherwood paper 43 times), define a term called “effective climate sensitivity” that is the climate response to an instantaneous doubling of CO2, or more specifically half of the climate response to an instantaneous quadrupling of CO2. By calling the quantity “effective” rather than “equilibrium” they cut the time frame to 150 years.
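The “roughly 70 years” in the TCR definition follows from compound growth at 1% per year. A minimal sketch of that arithmetic (the function name is only illustrative):

```python
import math


def doubling_time_years(annual_growth_rate):
    """Years needed for a quantity growing at a fixed compound rate to double."""
    return math.log(2.0) / math.log(1.0 + annual_growth_rate)


# TCR scenario: CO2 concentration rising 1% per year, compounded.
tcr_doubling_years = doubling_time_years(0.01)
print(f"Doubling time at 1% per year: {tcr_doubling_years:.1f} years")
```

The result is just under 70 years, which is where the TCR time frame comes from.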

AR6 constrains its estimates of TCR and ECS using four lines of evidence: process (mostly feedbacks) understanding, climate model simulations, historical observations, and paleoclimatic observations, plus a fifth category they call a synthesis of evidence. In explaining this new ECS evaluation they write:

“All four lines of evidence rely, to some extent, on climate models, and interpreting the evidence often benefits from model diversity and spread in modelled climate sensitivity. … unlike in previous assessments, climate models are not considered a line of evidence in their own right in the IPCC Sixth Assessment Report.” AR6, page 1024.

As explained above, ECS is not measurable since it is derived from an unreal model scenario. In AR6 ECS and TCR are referred to as “idealized quantities … that can be inferred from [observations] or estimated directly using climate [model] simulations.”[7] Thus, all attempts to estimate them in nature require some sort of model to transform the measurements to the modeled ECS or TCR scenario given in AR6.

Nic Lewis and Judith Curry try to simplify the conversion from observations of temperature and CO2 by carefully selecting periods of time when natural forces are as comparable as possible. However, they only consider volcanism and major ocean oscillations, like ENSO and the Atlantic Multidecadal Oscillation (AMO). We have more to say about this idea in Part 4.

The world was cooler in the 19th century and the Little Ice Age was just ending. For this reason, there were fewer El Niños then than now. El Niños occur due to excess heat buildup in the Pacific Ocean that must be expelled to the atmosphere. They warm Earth’s surface for a few years, but longer term they act as a cooling agent.[8] The number and strength of strong El Niños increase as warm periods end and the world grows cooler, as happened at the end of the Medieval Warm Period when the Earth dipped into the Little Ice Age from ~1050 AD to ~1400 AD. Once the world became colder, their strength and number declined, as observed until the late 20th century.[9]

While there is considerable debate on the subject, it is likely that solar variability also plays a role in climate change and the Sun was less active in the 19th century than during the Modern Solar Maximum from ~1935 to ~2005.[10] While the IPCC believes that solar variability and other natural factors, except for volcanoes over short periods, play no role in climate change over the past 270 years,[11] Javier Vinós and Ronan Connolly, et al.[12] have presented considerable evidence that this is not the case. Thus, the calculations Lewis and Curry make to convert their measurements to the modeled quantities of ECS and TCR might be contaminated by natural factors that they did not take into account. Even so, their calculations of ECS and TCR are considerably below the IPCC likely lower limits of 2.5°C and 1.4°C, respectively. Tables of estimates of ECS and TCR will be presented in part 3.

Besides TCR and ECS, there is the classical climate sensitivity quantity, which we simply call “climate sensitivity to CO2.” It is totally evidence based and determined from observations. The classical quantity is best defined as the surface air temperature sensitivity (SATS) to an increase in CO2.[13] The units used for SATS are degrees C per watt per square meter of forcing. By assuming all the forcing is due to CO2, the value can be converted into °C/2xCO2. The further conversion of this value to ECS or TCR requires making assumptions about the time required for Earth’s surface (mainly the oceans) to come into equilibrium with the change in forcing, inclusive of any feedbacks to the CO2-caused warming. In the tables shown in part 3, we list many of these observation-based estimates of climate sensitivity. Some of them, including Lewis and Curry, use simple models to translate the measurements into pseudo ECS and TCR. When this is done, typically the same assumptions made by the IPCC are used for the conversion model. Other estimates of the classical climate sensitivity just present the measurements, but they assume a forcing for the CO2 changes observed.
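The conversion from SATS (°C per W/m2) to °C/2xCO2 is a single multiplication by the forcing attributed to a CO2 doubling. The sketch below assumes a forcing of 3.7 W/m2 per doubling, a commonly quoted figure that does not come from this post, and applies it to the 0.1 to 0.5°C/W/m2 classical range quoted later in the post. Both the constant and the function name are illustrative assumptions.

```python
# ASSUMPTION: forcing per CO2 doubling in W/m2. A commonly quoted value,
# not a number taken from this post. Change it to test other choices.
F_2XCO2_W_M2 = 3.7


def sats_to_per_doubling(sats_c_per_w_m2, forcing_per_doubling=F_2XCO2_W_M2):
    """Convert a sensitivity in degC per W/m2 into degC per CO2 doubling."""
    return sats_c_per_w_m2 * forcing_per_doubling


# Classical observation-based range quoted in the post: 0.1 to 0.5 degC per W/m2.
low_per_doubling = sats_to_per_doubling(0.1)
high_per_doubling = sats_to_per_doubling(0.5)
print(f"{low_per_doubling:.2f} to {high_per_doubling:.2f} degC/2xCO2")
```

This is only the unit conversion. It says nothing about the further step to ECS or TCR, which depends on the equilibration assumptions described above.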

Atmospheric CO2 measured at Mauna Loa[14] is increasing at about 2 ppm (0.5%) per year, which is not far off the 1% per year used to define TCR. Since the preindustrial era, or the Little Ice Age, CO2 has increased about 50% (one-half of a doubling), thus we are in a time when TCR is relevant.
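A 50% rise is half of a doubling in concentration terms. If the response is taken to scale with the logarithm of concentration, which is an assumption of this sketch rather than something stated in this paragraph, the same rise counts as somewhat more than half a doubling:

```python
import math


def doublings(concentration_ratio):
    """Number of CO2 doublings represented by a concentration ratio (log base 2)."""
    return math.log2(concentration_ratio)


# A 50% increase over the preindustrial concentration is a ratio of 1.5.
fraction_of_doubling = doublings(1.5)
print(f"A 50% increase is {fraction_of_doubling:.3f} of a doubling on a log scale")
```

Either way of counting puts the present roughly midway through the TCR scenario.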

As mentioned above, AR6 does not use models to directly compute ECS and TCR as they did in the past. Instead, they use five lines of evidence to constrain the final ECS and TCR model calculations.[15] This process is laid out in considerable detail in Sherwood, et al.[16] and in AR6 section 7.5. Sherwood’s analysis tries to show that all values used in the current calculation of ECS are narrowly constrained except for the cloud feedback to surface warming, and in particular, the feedback due to lower-level clouds. It is important to understand that all the methods that AR6 uses to constrain their estimates of ECS and TCR rely, to some extent, on climate models. We show the cloud feedback relationship to ECS in part 2.

The statistical analysis methods that Sherwood, et al. use to integrate various estimates of climate sensitivity into one range are subjective and seriously flawed, as shown by Nic Lewis.[17] Lewis corrected their errors and lowered their estimate of climate sensitivity from 3.23 to 2.16K, about 33%. Nic Lewis points out that “Climate sensitivity has been estimated from various types of evidence, but none of these has narrowly constrained its value.”

AR6 isolates the processes that they think contribute to warming and constrains them with observations. This is a reasonable approach if the full range of possible processes affecting warming is considered. The authors of Connolly, et al.[18] believe that AR6 has not properly considered the potential influence of solar variability. Connolly, et al. demonstrate that insolation and other solar variability may be much more important than assumed by the IPCC. As shown in figure 1, the AR6 estimate of natural warming (volcanism and solar variability) is zero, or slightly negative.

Global surface warming from 1971 through 2018 is about 0.85°C, according to HadCRUT4.[19] According to AR6[20] this corresponds to a top of the atmosphere (TOA) energy imbalance of +0.57 W/m2 (+0.2% of the incoming ~340 W/m2 from the Sun) for the same period. For Earth’s surface to warm, it must retain more thermal energy than it emits to space. When this surface energy imbalance, which is measured in Watts per square meter of surface (W/m2), causes warming, it is positive by convention. Some of the excess energy warms the surface, and the warmer surface and lower atmosphere emit more energy to space, resulting in the positive 0.57 W/m2 increase in emissions at the TOA.

Thus, if we were to assume all the surface warming is due to increasing CO2 and other greenhouse gases (GHGs), the surface air temperature sensitivity (SATS) to GHGs is about 1.5°C/W/m2 (0.85°C divided by 0.57 W/m2), if nothing else changes. This is an extraordinarily large number. The classical values, based on observations,[21] are typically between 0.1°C/W/m2 and 0.5°C/W/m2. This suggests that not all recent warming is due to GHGs.
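The implied sensitivity is simply the ratio of the two figures quoted above, 0.85°C of warming and a +0.57 W/m2 imbalance. A short check of that ratio against the top of the classical range (variable names are illustrative):

```python
# Figures quoted in the post for 1971 through 2018.
surface_warming_c = 0.85       # HadCRUT4 global surface warming, degC
toa_imbalance_w_m2 = 0.57      # AR6 top-of-atmosphere energy imbalance, W/m2
classical_upper_sats = 0.5     # top of the classical range, degC per W/m2

implied_sats = surface_warming_c / toa_imbalance_w_m2
ratio_to_classical_upper = implied_sats / classical_upper_sats

print(f"Implied SATS: {implied_sats:.2f} degC per W/m2")
print(f"That is {ratio_to_classical_upper:.1f} times the top of the classical range")
```

On these numbers the implied value is about three times the upper end of the observation-based range.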

The oceans are not really a factor in the short term, since IR (Infrared Radiation) emitted by CO2 and other GHGs cannot penetrate far below the ocean surface, as sunlight does. Most incident IR is absorbed in the first millimeter of the ocean and re-emitted or evaporated away shortly after. IR does warm the sea surface and some of this heat will go into the deeper ocean through conduction and turbulent mixing, but IR is not as transmissible to the deep ocean as visible light, especially blue light.[22]

If the AR6 estimate of the radiative imbalance is correct, the 48-year period from 1971 to 2018 had an average imbalance of 0.01 W/m2 per year. This is tiny and far below what we can measure today. The accuracy of our satellite measurements of Earth’s incoming and outgoing radiation is no better than ±2 W/m2.[23] Besides the contribution of GHGs, there are other natural factors, such as changes in cloud cover and type, that can play a large role in either increasing or decreasing the radiative imbalance at Earth’s surface.
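The sizes being compared here are easy to check from the figures already quoted: a +0.57 W/m2 imbalance over 48 years, about 340 W/m2 incoming, and a satellite accuracy of ±2 W/m2. A minimal sketch:

```python
# Figures quoted in the post.
toa_imbalance_w_m2 = 0.57        # AR6 imbalance for 1971-2018
incoming_solar_w_m2 = 340.0      # approximate incoming solar radiation
satellite_accuracy_w_m2 = 2.0    # quoted accuracy of satellite measurements
years = 2018 - 1971 + 1          # inclusive count: 48 years

imbalance_per_year = toa_imbalance_w_m2 / years
imbalance_share_of_incoming = toa_imbalance_w_m2 / incoming_solar_w_m2
accuracy_to_imbalance_ratio = satellite_accuracy_w_m2 / toa_imbalance_w_m2

print(f"Average per year: {imbalance_per_year:.3f} W/m2")
print(f"Share of incoming: {imbalance_share_of_incoming:.2%}")
print(f"Accuracy / imbalance: {accuracy_to_imbalance_ratio:.1f}x")
```

The quoted measurement accuracy is about three and a half times the whole 48-year imbalance, which is the point the paragraph makes.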

In part 2 of this series, we will examine the largest uncertainty in the AR6 ECS estimate, cloud feedback. In part 3 of the series, we will compare the AR6 ECS and TCR estimates to observation-based estimates. Some of the observation-based estimates are considered by AR6, and some are not. We will see that many observation-based estimates of climate sensitivity are considerably lower than the AR6 likely lower bound of 2.5°C.

Finally, in part 4 we examine how Lewis and Curry[24] convert their selected observations into a value that can be compared to the totally model-based value called “ECS.” It is unusual to convert measurements to model values; usually it is done the other way around. Is their conversion valid? What assumptions do they make? The Lewis and Curry ECS is significantly lower than the AR6 likely lower bound. How do we interpret that difference?

The bibliography can be downloaded here.

- (IPCC, 2021) or AR6. ↑

- Charney, J., Arakawa, A., Baker, D., Bolin, B., Dickinson, R., Goody, R., . . . Wunsch, C. (1979). Carbon Dioxide and Climate: A Scientific Assessment. National Research Council. Washington DC: National Academies Press. doi:https://doi.org/10.17226/12181 ↑

- (Charney, et al., 1979, p. 8) ↑

- Dessler, A. E. (2013). Observations of Climate Feedbacks over 2000-10 and Comparisons to Climate Models. J of Climate, 333-342. ↑

- Sherwood, S. C., Webb, M. J., Annan, J. D., Armour, K. C., Forster, P. M., Hargreaves, J. C., . . . Knutti, R. (2020, July 22). An Assessment of Earth’s Climate Sensitivity Using Multiple Lines of Evidence. Reviews of Geophysics, 58. ↑

- AR6, p 992 ↑

- AR6, p 992 ↑

- Vinós, J. (2022). Climate of the Past, Present and Future, A Scientific Debate. Spain: Critical Science Press. Pages 53-54. Link. ↑

- (Moy, Seltzer, & Rodbell, 2002) ↑

- Vinós, J. (2022). Climate of the Past, Present and Future, A Scientific Debate. Spain: Critical Science Press. Page 192 and Connolly et al., R. (2021). How much has the Sun influenced Northern Hemisphere temperature trends? Research in Astronomy and Astrophysics, 21(6). Link. ↑

- AR6, page 961. ↑

- Connolly et al., R. (2021). How much has the Sun influenced Northern Hemisphere temperature trends? Research in Astronomy and Astrophysics, 21(6). ↑

- Newell, R., & Dopplick, T. (1979). Questions Concerning the Possible Influence of Anthropogenic CO2 on Atmospheric Temperature. J. Applied Meteorology, 18, 822-825 and (Idso S. , 1998). ↑

- Global Monitoring Laboratory – Carbon Cycle Greenhouse Gases (noaa.gov) ↑

- AR6, page 993 ↑

- Sherwood, S. C., Webb, M. J., Annan, J. D., Armour, K. C., Forster, P. M., Hargreaves, J. C., . . . Knutti, R. (2020, July 22). An Assessment of Earth’s Climate Sensitivity Using Multiple Lines of Evidence. Reviews of Geophysics, 58. doi:https://doi.org/10.1029/2019RG000678 ↑

- Lewis, N. (2022). Objectively combining climate sensitivity evidence. Climate Dynamics. ↑

- Connolly et al., R. (2021). How much has the Sun influenced Northern Hemisphere temperature trends? Research in Astronomy and Astrophysics, 21(6). ↑

- (Met Office Hadley Centre, 2017) ↑

- AR6 p 937 ↑

- Newell, R., & Dopplick, T. (1979). Questions Concerning the Possible Influence of Anthropogenic CO2 on Atmospheric Temperature. J. Applied Meteorology, 18, 822-825. and Idso, S. (1998). CO2-induced global warming: a skeptic’s view of potential climate change. Climate Research, 10(1), 69-82. ↑

- Homewood, P. (2015, May 28). Yes, The Ocean Has Warmed; No, It’s Not Global Warming. Retrieved from Not a Lot of People Know That. Also see Britannica here. ↑

- Loeb, N. G., Doelling, D., Wang, H., Su, W., Nguyen, C., Corbett, J., & Liang, L. (2018). Clouds and the Earth’s Radiant Energy System (CERES) Energy Balanced and Filled (EBAF) Top-of-Atmosphere (TOA) Edition-4.0 Data Product. Journal of Climate, 31(2). ↑

- (Lewis & Curry, The impact of recent forcing and ocean heat uptake data on estimates of climate sensitivity, 2018)

Just what the doctor ordered!

I’ve been trying to come up with a reasonable estimate of climate sensitivity, if any, based upon observable changes from 1850 to present, and projected on to 2100.

The temperature response to more CO2 is logarithmic. Thus, if Earth’s average T has indeed warmed by one degree C since 1850, then near term CS cannot possibly be 3.0 degrees C. Some of the putative warming from the end of the LIA has to be natural, but assume it’s all man-made. Due to its logarithmic nature, the supposed one degree C since AD 1850, with now 417 rather than 287 ppm of CO2, must be most of the warming to be expected, 1850 to 2100, with 574 ppm.

Warming above 2.0 degrees C is hence not physical. Even this is dubious, given 1.1 to 1.2 degree C without feedbacks.
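The arithmetic behind this can be sketched as follows. It takes the commenter’s own assumptions at face value: a purely logarithmic response, all of the one degree C attributed to CO2, and no lag between forcing and warming. The variable names are illustrative.

```python
import math

# Figures from the comment above.
co2_1850_ppm = 287.0
co2_now_ppm = 417.0
co2_doubled_ppm = 2.0 * co2_1850_ppm   # 574 ppm
observed_warming_c = 1.0               # assumed to be entirely CO2-driven

# Share of a full doubling's logarithmic effect already realized.
fraction_of_doubling = (
    math.log(co2_now_ppm / co2_1850_ppm)
    / math.log(co2_doubled_ppm / co2_1850_ppm)
)

# Warming at 574 ppm if the same degC-per-log-unit rate continues (no lag).
implied_warming_at_doubling_c = observed_warming_c / fraction_of_doubling

print(f"Fraction of a doubling so far: {fraction_of_doubling:.3f}")
print(f"Implied warming at 574 ppm: {implied_warming_at_doubling_c:.2f} degC")
```

Under those assumptions the result stays below 2.0 degrees C, consistent with the comment. Allowing for a lag in the ocean response would raise it.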

“The climate sensitivity to CO2 and other greenhouse gases (GHGs) is arguably the most important number in the climate change debate.”

________________________________________________

No! “Is a warmer world a problem?” is arguably the most important question in the climate change debate.

1. More rain is not a problem.

2. Warmer weather is not a problem.

3. More arable land is not a problem.

4. Longer growing seasons are not a problem.

5. CO2 greening of the earth is not a problem.

6. There isn’t any Climate Crisis.

No, probably the most important question in the debate is not about climate at all. It’s whether countries can at the same time:

— convert power generation to wind and solar

— convert transport to EVs

— convert heating to electricity via heat pumps.

Or, in short, whether they can double or triple demand for electricity while moving its generation to wind and solar.

And a secondary question of almost equal importance is, if you think it can be done at all, what will it cost?

The UK, the US, Australia and NZ appear to be bent on trying to do this. Failure is inevitable. The only issue is what form it takes.

Michel, I don’t think your “most important question” needs much debate. The answer to all three of your sub-questions is No, at least without enormous technological leaps, which obviously can’t be predicted. You’re right that failure of the whole project is inevitable, but then the next question to ask has to be “why are they carrying on doing it?” That takes us into the realm of political philosophy.

Anyway, I agree with Steve Case that a warmer world isn’t a problem. As far as I am aware, no objective cost-benefit case for or against a warmer world has yet been made. That sounds like a very sensible thing to do; but the UK government, for one, has gone out of its way to prevent any objective cost-benefit analysis being done.

“why are they carrying on doing it?”

Good question, which I’ve cogitated on for a while. The only reason I can fathom is that there is a class of society, that want to control the world. To do so requires total command of energy.

However, at the moment, it is fossil fuels that are the dominant source, they can’t compete directly with that (they don’t have the finances), so they are creating their own “industry”.

If they succeed, in completely disposing of the use of FFs, there will obviously be a massacre of the world population.

Interestingly, Stanley Johnson, father of the infamous Boris, was actually seen on GBNews to say, he would like to see the UK population reduced to around 15M, better still 10. He even advocated, that air travel be for the wealthy only.

So, the meek shall not inherit the earth.

“there is a class of society, that want to control the world”

Not sure about that- well, some of their leaders may feel that way but I think the followers are simply brain damaged.

Yes, agree, they must think, that their masters will take them along for the ride. But will be rudely disabused, when they find themselves in the same cart as the plebs.

“a warmer world isn’t a problem”

so true, so true! Here in damp, cold New England, I’ve been waiting for 6 months for it to warm up!

And Old England 😊

And that failure could result in more wars as nations like Russia and China attempt to expand their power against the weakened West. We should start counting the NPV of those future conflicts.

Steve, read Bastiat’s original parable below, he had more to say than most people assume…and realize that CC spending is a modern economic theorist’s dream, where the broken window has trickle down effects throughout the economy (in their view).

All they really care about is that enough people “believe” that it allows them to spin more money faster. They really don’t care what you personally think or that you might be correct. It doesn’t matter if they waste money in their view…someone gets it….

https://en.wikipedia.org/wiki/Parable_of_the_broken_window

6.a. There has been no deterioration in any climate metric over the past 100+ years. This includes hurricanes, floods, droughts, tornadoes, strong storms, wildfires & etc. Additionally, there has been no SLR acceleration. Anytime “10% To The Big Guy” Joe or his handlers says climate emergency people must scream out the facts for all to hear.

IPCC greenhouse conjecture will remain irrelevant outside of political discourse until such a time as the lower boundary active radiating surface boundary is adequately described. The active radiative emission surface should be defined as the combined clear sky and cloudy sky surfaces visible to a spaceborne observer. Radiative equilibrium process mechanistic descriptions can only start at this lower boundary.

Curiously, the effective planetary emission temperature of a hypothetical no atmosphere system would be approximately 278K. This is easily computed using well known astrophysical concepts based on earth-sun distance and solar luminosity.
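The 278K figure can be reproduced from the Stefan-Boltzmann law for a sphere that absorbs all incoming sunlight (zero albedo). The sketch assumes a solar constant of 1361 W/m2 at Earth’s distance, a standard value that is not given in the comment. The function name is illustrative.

```python
# Stefan-Boltzmann constant in W m^-2 K^-4.
STEFAN_BOLTZMANN = 5.670374419e-8

# ASSUMPTION: solar constant at Earth's mean distance from the Sun, in W/m2.
SOLAR_CONSTANT_W_M2 = 1361.0


def emission_temperature_k(solar_constant=SOLAR_CONSTANT_W_M2, albedo=0.0):
    """Equilibrium emission temperature of a uniformly radiating sphere.

    Absorbed sunlight is spread over four times the intercepting disc area,
    hence the division by 4.
    """
    absorbed_w_m2 = solar_constant * (1.0 - albedo) / 4.0
    return (absorbed_w_m2 / STEFAN_BOLTZMANN) ** 0.25


no_atmosphere_temp_k = emission_temperature_k()
print(f"Zero-albedo emission temperature: {no_atmosphere_temp_k:.1f} K")
```

The result depends on the zero-albedo choice. Any reflected sunlight lowers the computed temperature.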

With the addition of atmosphere, the effective planetary surface boundary radiative emission temperature remains the same. However, the existence of the condensing fluid dynamic atmospheric mass makes possible the non-radiative fluxes which define the climates we enjoy today.

The sum total effect of atmosphere vs no atmosphere from a thermodynamic perspective is 288K-278K = 10K potential maximum at sea-level. Globally averaged is about 8K.

The mechanism is to lift the average effective radiating surface roughly 2km by creating a turbulent boundary layer within the lower reaches of atmosphere. Obviously global heat distribution is improved as well, by allowing lateral fluid dynamic heat transport within the free troposphere.

Fluid mass flows across the terrestrial surface create aerodynamic eddies which ‘force’ turbulent mixing. This turbulent flux dominates the atmosphere-surface heat dynamics. It is constantly transporting heat to the optimal effective radiating surface.

Curiously, the power of non-radiative flux matches almost exactly the reflected sunlight by albedo.

The boundary layer terminates at the condensation height which creates a capping inversion by tangible heat release in phase change. Just enough to create a stable cloud deck.

Non-radiative fluid dynamic heat transport completely dominates below the cloud deck, in the boundary layer. The depth of the boundary layer is fixed by the triple point phase temperature of H2O, being 273K.

Indirect ‘forcing’ by trace gas is immediately compensated by enhanced turbulent flux to condense at 273K. This temperature imposes a fixed limit on the system. More virtual IR radiative forcing, more heat transported in turbulent flux to condense.

It is a fascinating system; and very well designed.

Another interesting observation, as seen on nullschoolearth app, is that above 5km the air mass moves in the same direction as Earth’s rotation (vorticity height). Below this, and above 20km, the equator reverses direction.

This is presumably frictional gravitational drag(?) while the poles stay the same (like a beach sea rip) the mass has to find balanced escape.

Reportedly the (thin) air mass above 50km moves faster than Earth’s rotation, in the same direction. How?

Good observations, but I am not equipped to comment on such matters.

The lack of understanding the boundary layer and its effect on energy transport is the big problem with climate science. Unfortunately, Andy makes the same mistake here:

This is incorrect as it doesn’t consider boundary layer effects. The real number is zero. As long as skeptics continue to use the same pseudo-science as alarmists, no progress will be made.

I have no idea where Andy is going with this 4 part series but starting off on the wrong foot guarantees the final result will be wrong.

Or at least unmeasurable from zero. An addition of ppm to the atmospheric mass has negligible direct impact on surface temperature. The fact that the “greenhouse effect” has gained so much credibility shows how poorly the vast majority of people understand energy. The whole concept of GHE is junk science.

Wow, your definition of GHE must be different from the rest of the world.

What’s your definition, DMac? Does it involve a positive amount of power developed from a colder object to a warmer one?

The “rest of the world” has apparently not given adequate consideration of the boundary conditions with which they are defining their greenhouse.

Many challenges will not be resolved until such a time as the condensed matter suspended in atmosphere is understood to form a significant part of the active planetary surface.

It is no more important, and no less important, than the condensed matter which forms the ground under our feet, and the vast oceanic reservoirs of liquid water. It’s all the same.

Your Nobel Prize is in the mail, Richard.

The facts are already covered in papers that have been around for years. All you need to do is dig in a little to understand why.

1) Miskolczi 2007, 2010, 2014 used NOAA data to show there’s been no change in the overall greenhouse effect. This is also backed up by Gero/Turner 2011.

2) Gray 2012 lays out why increases in CO2 will drive increases in evaporation and enhanced convection.

3) Hug 1993 points out that 99.94% of all the energy absorption from CO2 occurs in the first 10 meters which is very low in the boundary layer.

4) Several sources provide the fact that CO2 shares absorbed energy with the rest of the atmosphere rather than reemitting the energy 99.9999% of the time.

My own contribution is to put all this together to show that the warming effect from increased CO2 absorption is countered by the cooling from increased evaporation. In addition, boundary layer effects do not allow increased DWIR to cause any warming based on the 2LOT. Finally, high altitude reductions in water vapor will counter the increase in energy released by more efficient condensation.

This is all based on basic physics. I’m simply the messenger.

Great post Andy, look for parts 2 and ? to follow and then comment. I like to see quality posts that support / build WUWT reputation as one of the few “honest” climate blogs.

Andy May is correct that all of IPCC’s methods of deriving the equilibrium temperature response to doubled CO2 (ECS) after including feedback response are dependent upon diagnosis of feedback strength from the outputs of general-circulation models, which do not themselves implement feedback formulism directly, but attempt (and fail) to solve the Navier-Stokes equations in computational fluid dynamics.

The problem lies in the method of diagnosis. Official climatology imagines that there is no feedback response to the 260 K emission temperature, effectively adding it to, and miscounting it as though it were part of, the actually minuscule feedback response to direct warming by natural and anthropogenic greenhouse gases.

After correcting climatology’s error of physics, which arose when climatologists borrowed feedback formulism from control theory in engineering physics without understanding what they had borrowed, the interval of absolute feedback strengths implicit in the [2, 5] K ECS prediction is only 0.03 Watts per square meter per Kelvin of reference temperature. Thus, each 0.01 W/m^2 added to estimated feedback strength increases ECS by 1 K.

However, there is no way that our measurements of the climate are sufficiently well resolved to allow us to derive feedback strength to anything like the required precision. It is for this reason that it has proven impossible to constrain ECS despite decades of research.

All of IPCC’s methods of deriving ECS, because they are based on faulty feedback-strength diagnoses, produce predictions that are no better than guesswork.

It is necessary instead to use observationally-based methods that do not depend on knowledge of feedback strength. Monte Carlo simulation based on mainstream observational data suggests ECS of 1.3 [0.9, 2.0] K, which is far too little to worry about.

Were computing power to increase 100,000-fold, then the effects of high and low clouds, at least, if not other unknown relevant terms, such as evaporative cooling, could actually be modeled, rather than “parameterized”, ie picking a number the programmer likes.

I’m not sure if we really know the correct atmospheric CO2 content at the end of the LIA.

To model climate and/or ECS on the basis of an unknown number is suspect dice playing.

In already industrialized cities, it was higher of course, but the “canonical” 287 ppm in AD 1850 might be close to reality.

If today’s annual average be 417 ppm, then that’s about a 45% CO2 increase in 173 years, but a tiny fraction of all GHGs.

180 Years of Atmospheric CO2 Measurement By Chemical Methods

The view of the Intergovernmental Panel on Climate Change (IPCC)—a United Nations body that is responsible for advising governments on climate change—follows closely the views of three influential scientists, Svante Arrhenius, G.S. Callendar, and Charles Keeling, on the importance of CO2 as the major driver of climate change.

The linchpin in the IPCC argument is the assumption that prior to the industrial revolution, the level of atmospheric CO2 was in an equilibrium state of about 280 parts per million (ppm), around which little or no variation occurred. This presumption of constancy and equilibrium is based upon a selective review of the older literature on atmospheric CO2 content by Callendar, and later Keeling, of the University of California at San Diego, which essentially discounted all data not conforming to their preconceived notions of historical CO2 levels. (See Figure 1.)

The truth is that between 1800 and 1961, more than 380 technical papers were published on air gas analysis containing data on atmospheric CO2 concentrations. Callendar [16, 20, 24], Keeling, and the IPCC did not provide a thorough evaluation of these papers and the standard chemical methods that they employed. Rather, they discredited these techniques and data, rejecting most as faulty or highly inaccurate [20, 22, 23, 25-27]. (See Table 1.) (21st Century Science & Technology, Spring 2008, p. 42)

Publication of Ernst-Georg Beck’s Atmospheric CO2 Time series from 1826-1960

On Christmas Day 2008, I received an e-mail from the German researcher Ernst-Georg Beck.

He asked me to analyze an atmospheric CO2 time-series from 1820 to 1960. A CO2 time-series from 1820 was something special. Next day I went to work, analyzed the time-series, wrote a short note and e-mailed back a comment. It was all done in an hour. I wrote that there was nothing special about this time-series. The CO2 variations coincided with North Atlantic water surface temperature from 1900 to 1960 (Yndestad et al. 2008) [1].

Accurate estimation of CO2 background level from near ground measurements at non-mixed environments

Atmospheric CO2 background levels are sampled and processed according to the standards of the NOAA (National Oceanic and Atmospheric Administration) Earth System Research Laboratory mostly at marine environments to minimize the local influence of vegetation, ground or anthropogenic sources. Continental measurements usually show large diurnal and seasonal variations, which makes it difficult to estimate well mixed CO2 levels.

Historical CO2 measurements are usually derived from proxies, with ice cores being the favorite. Those done by chemical methods prior to 1960 are often rejected as being inadequate due to poor siting, timing or method. The CO2 versus wind speed plot represents a simple but valuable tool for validating modern and historic continental data. It is shown that either a visual or a mathematical fit can give data that are close to the regional CO2 background, even if the average local mixing ratio is much different.

Measurements taken in some coal-fired European cities in the mid-19th century found results in the 400s ppm, but most of Earth had much lower levels then. Not just ice core data, but stomata find below 300 ppm at the end of the LIA.

The CO2 Record in Plant Fossils

A standardized way of counting stomata– called the stomatal index ( SI [%] )– has been found to be a good way to estimate the CO2 content of the atmosphere when the plant was alive. The SI-CO2 relationship varies according to plant species, habitat altitude, and other factors.

Stomata from the Eemian highstand show about 330 ppm, which exceeds readings from the Holocene Climatic Optimum, as you’d expect. That puts a pretty hard ceiling on naturally occurring CO2 levels during all but the longest, warmest interglacials.

Also interesting:

CO2 concentration – Available data and – critical analysis

In fact, CO2 concentrations constantly vary, from one place to another and from one time to another, just as temperatures do. To claim that the data collected at a small number of observatories (one hundred of them!), and then processed and expurgated in the ways we have described, are representative of the global value is an absurdity. This restricted view comes from a consensus of experts, and has never been validated. The different measurement methods give different results, which is not at all surprising given the variability of the phenomenon. Reference to cores extracted from the ice is an absurdity: these ice cores are representative of the CO2 concentration at the place of extraction (and over a very long period, as well!), and can tell us nothing about concentrations elsewhere.

There is a consensus within a certain community to present as ‘scientific’ the results obtained by the methods it recommends, even though these methods have never been validated and evidently suffer from major methodological defects.

Fair enough Krishna, but you should know that the CO2 levels in ice cores have been homogenized and depleted by the open system to the surface as snow is compressed to firn then ice at 50-80m depth. At depth liquid water interacts with gas bubbles and at great depth ice pressure forces the gas into solids, so resultant measurements from cores at depth are not representative of the original air composition. Jaworowski (2004) stated, “the basis of most of the IPCC conclusions on anthropogenic causes and on projections of climatic change is the assumption of low levels of CO2 in the global pre-industrial atmosphere. This assumption, based on (recent) glaciological studies, is false. Therefore, IPCC GHG projections should not be used for national and global economic planning.”

The Historical Data the IPCC Ignored

If we look at data from scientists of the 19th century, whose measurement methods were accurate enough, we find CO2 levels as high as 445, as shown below.

The presumption that modern scientists have more integrity than prior ones is of course ludicrous.

Totally agree Krishna, after all 90,000 historical measurements by the older chemical method up to 1960, should not be automatically discounted because they don’t agree with subsequent measurements by followers of the orthodoxy. The IPCC are cherry-picking the data they use, to suit their political agenda- this is not proper science.

Six easy pieces of the water cycle that schools should teach students, but are stuck on CO2.

1) Water vapor is the predominant IR absorbing gas.

2) Clouds are the primary reflector of sunlight back into outer space.

3) Clouds are the shutters that control the planet’s average temperature.

4) Rainfall and sea surface temperature are the primary water vapor producing agencies.

5) As wet surfaces get warmer, there is 7% more water vapor per degree in the air in contact with that surface, which ultimately produces more clouds, more rainfall, more wetness in general, which cools the surface somewhere else tomorrow back down by some opposing amount.

6) CO2 at present concentration is nearly a nothing-burger of which 2x minorly changes the altitude at which clouds form.

It is not as if the Little Ice Age was pleasant weather, so an increase from that should be regarded as a benefit.

And as the global circulation models do not explain the LIA or Medieval Warm Period, their use is pure faith in the model, despite evidence.

A fine review. Thank you, Andy May. Looking forward to the remaining parts.

The “forcing + feedback” framing is the basis for all this work, for both the models and for the observation-based methods. I realize that ECS, TCR, and other formulations of a climate response to GHGs are baked into the mainstream debate. I have been posting comments here at WUWT suggesting different ways of framing the issue. Assessing the soundness of attribution of reported warming to increases in non-condensing GHGs is the core aim.

Here is an example of another way to look at attribution.

“Global surface warming from 1971 through 2018 is about 0.85°C, according to HadCRUT4.[19]” Attribution of at least some of this trend to incremental GHGs sounds plausible at first.

But on the other hand, that trend is about 0.018C per year. During the same period of 48 years, the surface temperature on a whole-planet basis has warmed and cooled on an annual cycle of about 3.8C. It is natural.

[See Javier Vinós in his book Climate of the Past, Present and Future: A Scientific Debate, 2nd ed., Figure 10.2. https://judithcurry.com/wp-content/uploads/2022/09/Vinos-CPPF2022.pdf Also here: https://climatereanalyzer.org/clim/t2_daily/ ]

So what?

The 0.018C reported warming per year is 1 part in about 211 of the obviously natural annual cycle as the climate system (land + ocean + atmosphere) gains and loses enough energy to produce this well-recognized temperature response. The slow trend does not physically exist separately from these cycles.

So it seems unsound to me, especially considering the dynamic operation of the atmosphere, to suppose that the minor static radiative absorption and emission effect of rising concentrations of GHGs can be isolated for reliable attribution of such a tiny fraction of the observed response (to energy flows) on a whole-planet basis. How, exactly, does that one part stand out from the 210 to be so different?
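The ratio quoted in this comment is simple arithmetic on the figures given. A minimal sketch, taking the 0.85°C, 48-year, and 3.8°C values as stated (the variable names are only for illustration):

```python
# Figures quoted in the comment (taken as given, not independently checked).
warming_c = 0.85        # reported warming 1971-2018, deg C
years = 48              # length of the period, years
annual_cycle_c = 3.8    # whole-planet annual temperature swing, deg C

trend_per_year = warming_c / years           # deg C per year
trend_rounded = round(trend_per_year, 3)     # the comment rounds this to 0.018

# How many times larger the annual cycle is than one year of trend.
ratio_rounded = annual_cycle_c / trend_rounded
ratio_unrounded = annual_cycle_c / trend_per_year

print(f"trend: {trend_per_year:.4f} C/yr (rounded: {trend_rounded})")
print(f"annual cycle / rounded trend:   {ratio_rounded:.0f}")
print(f"annual cycle / unrounded trend: {ratio_unrounded:.0f}")
```

Without rounding the trend first, the ratio comes out nearer 215 than 211; either way the point of the comparison is unchanged.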

It seems I messed up the links. Sorry about that.

https://judithcurry.com/wp-content/uploads/2022/09/Vinos-CPPF2022.pdf

https://climatereanalyzer.org/clim/t2_daily/

Agreed. Although my main concern in this series is that a non-scientific and untestable value like ECS has somehow taken over the conversation.

Yes, understood, and your analysis of it is greatly appreciated.

Ja. You can also stop right there and check my report.

https://breadonthewater.co.za/2022/12/15/an-evaluation-of-the-greenhouse-effect-by-carbon-dioxide/

Figure 1 from AR6 is merely ‘the hockey stick’ in elaborate disguise.

While Figure SPM.1 includes both “proxy” and “observations” lines on the same graph it seems to be too closely associated with the PAGES2k dataset, especially as it starts from “Year 1”.

A better candidate for the IPCC attempting to more directly “replicate” the Mann et al 1999 curves that were so prominent in the TAR back in 2001 is “Box TS.2 Figure 2 (panel b)”, which can be found on page 46 of the AR6 WG-I report (screenshot attached below).

“Ninety percent of incident IR is absorbed in the first meter of the ocean and re-emitted or evaporated away shortly after.”

I think this should be “millimeter”, or even “10 micrometers”, not “meter”. See “infrared” in the chart:

(From Nick Stokes’ blog Moyhu)

Incidentally, the water vapour concentration immediately above the ocean surface is so high that much of the IR doesn’t even reach the ocean surface.

Moyhu link is here.

Mike,

The IR spectrum is very large. Lots of IR is stopped at the surface, but not all.

Solar Radiation & Photosynthetically Active Radiation – Environmental Measurement Systems (fondriest.com)

Mike,

You are correct. The IR is absorbed in the first millimeter or so. I confused the light with the depth the heat reaches. I will fix the text. See here:

The Response of the Ocean Thermal Skin Layer to Variations in Incident Infrared Radiation – Wong – 2018 – Journal of Geophysical Research: Oceans – Wiley Online Library

Hi Andy,

This is a little off topic, but I was hoping to pick your brain.

Forcing efficacy, as I understand it, is a measure of how long energy photons that are associated with a given forcing remain circulating in the earth’s system.

While there is a lot of discussion about the various system feedbacks, these may not actually be the key to understanding ECS etc.

Mike Jonas’s comment above is correct. Energy reemitted by CO2 is generally returned from the ocean to the atmosphere as latent heat and then relatively quickly transported to space.

It doesn’t stay in the earth’s system very long at all when compared to solar energy that, as you say, can remain circulating in the oceans for many years.

For this reason I believe that CO2 forcing may have a significantly lower efficacy than Solar forcing.

Is it possible that this explains the strong connection between solar forcing and temperature for many years as well as the lack of temperature response to increased CO2 in recent years?

The IPCC assumes CO2 and solar have similar efficacy; their Energy Balance Models are based on this and form the basis of their ECS estimates.

I had a paper published on this a few years ago. It disappeared without trace.

I was hoping you might let me know your thoughts.

A very interesting idea! I see your point; most sunlight is absorbed much deeper in the ocean than GHG emitted IR. Most emissions from Earth come from the atmosphere, which is why we periodically have El Niños; they are needed to move excess energy from deeper in the ocean to the atmosphere. It follows that Sun deposited energy has a longer lifetime than GHG emissions. I like the idea; it had not occurred to me before though.

When solar activity increases, it is not the increase that makes the difference, it is the time. The Modern Solar Maximum lasted from 1935 to 2005, the longest such period in 600 years.

Hi Andy,

Thanks for your response.

I don’t know if the modern ocean models handle incoming LW radiation differently than the ones in 2008.

The Technical Guide to MOM 4.0 (GFDL Ocean Group Technical Report 5, 2008) divides solar penetration into the water column into three exponentials. Quote from MOM Guide 8.3.2:

“The first exponential is for wavelengths >750nm (i.e., IIR) and assumes a single attenuation of 0.267 meter…”

Importantly, they treat LW reemitted by CO2 in the same way.

The climate models used the MOM 4.0 ocean models back then, and I’m assuming there has been no significant change since.

If they do, then they assume the energy from back radiation is absorbed on average 267mm below the ocean surface and is, therefore, thoroughly mixed in the ocean.
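To see why a single attenuation length of 0.267 meter corresponds to an average absorption depth of about 267mm, here is a minimal sketch. It assumes the quoted "attenuation" is the e-folding depth of a simple exponential decay, which is a reading of the quote rather than something checked against the MOM code; the function name is illustrative.

```python
import math

E_FOLD_M = 0.267  # attenuation length quoted from the MOM guide, in meters

def fraction_absorbed(depth_m: float, e_fold_m: float = E_FOLD_M) -> float:
    """Fraction of the surface flux absorbed above depth_m for exponential decay."""
    return 1.0 - math.exp(-depth_m / e_fold_m)

# Mean absorption depth: integral of z * p(z) dz with p(z) = exp(-z/L) / L.
# Approximated with a midpoint sum out to 20 e-folding depths; the analytic
# result for an exponential profile is the e-folding depth L itself.
dz = 1e-4
n_steps = int(20 * E_FOLD_M / dz)
mean_depth = 0.0
for i in range(n_steps):
    z = (i + 0.5) * dz
    mean_depth += z * math.exp(-z / E_FOLD_M) / E_FOLD_M * dz

print(f"absorbed in top 1 mm:  {fraction_absorbed(0.001):.3%}")
print(f"absorbed in top 1 m:   {fraction_absorbed(1.0):.1%}")
print(f"mean absorption depth: {mean_depth * 1000:.0f} mm")
```

Under this assumption well under 1% of the flux is absorbed in the top millimeter, which is the contrast with the skin-layer absorption discussed earlier in the thread.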

The model grid squares are too big to pick up the evaporation effect on reemitted CO2 radiation at the surface and for this reason produce similar efficacy for CO2 and solar forcing.

I put together a crude Energy Balance Model 10 years ago that included the assumption that CO2 forcing had a quarter the efficacy of solar forcing. The resulting centennial temperature series fitted well and included the 1960s cooling, the pause from 2000 and all other main features.

The whole GHE is based on energy residence time in the system. CO2 delays the release of energy to space and therefore warms the planet. Solar would have a much larger effect for this reason alone. The models don’t pick up on this factor for the reasons described above.

Any further comments appreciated.

Hi Bob,

Thanks for the reference. I downloaded it and will look at it, at first glance it looks very good. I don’t have any more comments right now, but maybe later after I’ve read the report.

Thanks.

Very nice Andy. What would be helpful is a final takeaway paragraph, something like “they say this; we can’t see how it could be higher than this.” I know you have said everything in the article, but nothing could be more powerful than a few concise words flat out showing the difference.

Maybe in next installment.

Parts 3 and 4 will get you there, I think.

One kilogram of CO2 requires 846 J of energy to rise 1 K at 300 K. If I double the amount of CO2 and keep the amount of energy the same (the sun’s input is invariant) I get 0.5 K rise.

The emissivity of CO2 is almost zero at the temperature and pressure in atmosphere. There is no sensitivity to it.

Emissivity and pressure (movement of mass) are so underrated. Additional manmade CO2 gas emissions affecting global temperatures have to be minuscule.

https://reality348.wordpress.com/2021/10/26/new-book-the-movement-of-the-atmosphere/

CO2 forcing – it works just like this

The notion that there is any debate over greenhouse gasses with regard to Earth’s climate shows how little science is actually involved in this religious “debate”. The lack of scientific understanding and debate over nonsense is pathetic.

Open ocean surface cannot sustain a temperature over 30C. Ocean surface water cannot exist below -1.7C. Two temperature limits that stabilise Earth’s climate and control the weather patterns in response to the ever shifting solar intensity. The attached comparison for western Pacific shows how silly the climate models really are. They have temperature in 1980 2C cooler than actual and a steady warming trend to be perpetually over 30C by 2080 – a physical impossibility on this Earth with its present atmospheric mass. The reality is little to no trend in temperature over the same region because it is always close to the 30C limit.

There is no relationship between local absorbed radiation energy and local temperature change. Why should there be any relationship between the two on a global scale!

How did the energy that is being extracted with wind turbines get into the wind? How did the energy in fossil fuel get into the fossils? How much energy are trees locking up?

The largest part of ECS is based on specific detail, that makes the difference between some 0.5K and about 3K. The radiative forcing (and feedback) is neither derived from a delta in “back radiation”, nor emissions TOA. Instead it is about the delta in emissions top of the troposphere PLUS the delta in “back radiation” from above, that is the stratosphere. This “logic” is transparently applied for instance in Ramanathan et al 1987.

*Climate‐Chemical Interactions and Effects of Changing Atmospheric Trace Gases (nasa.gov)

What I have not found so far is the reasoning behind it. Does anyone know where this originated, and if there is a resource explaining the rationale?

Syukuro Manabe was awarded the 2021 Nobel prize in physics for his atmospheric modelling that gave credibility to this rubbish about GHE.

The fact that the award was offered and that he accepted shows how corrupt the whole science community has become.

If you want to find someone to blame then start with Manabe. If he was honourable he would have declined the prize because he knows that clouds are not understood or modelled in any useful way.

Did it originate with Manabe? If yes, which paper?

The idea dates back a long way but Manabe was credited with modelling the atmosphere and giving a definitive answer to the impact of CO2.

Manabe developed the first ocean-atmosphere model and reported on it in J of Atmospheric Sciences in 1969 with Kirk Bryan. Two preceding papers in 1967 and 1964 are also important. Title: “Climate Calculations with a Combined Ocean-Atmosphere Model.”

Rick, in 1967, after 31 Figures and 11 Tables, part of Manabe and Wetherald’s 6 conclusions was “4) Doubling the existing CO2 content of the atmosphere has the effect of increasing the surface temperature by about 2.3C for the atmosphere with the realistic distribution of relative humidity…”, plus “6) The effects of cloudiness, surface albedo, and ozone distribution on the equilibrium temperature were also presented.”

In 1967, Texas Instruments invented the electronic calculator. Manabe didn’t have one. Sixty-five years later, we use his algorithms in supercomputers plus the results of immensely costly research programs and satellites…and don’t get a better answer.

Maybe you could have a read:

https://geosci.uchicago.edu/~archer/warming_papers/manabe.1967.rad_conv_eq.pdf

Why would I want to waste time on nonsense? The concept does not deserve intelligent inquiry.

One thing I learnt about modelling a long time ago is that if your model does not replicate observation then it is not useful beyond the point of discarding its premise.

The whole horrible saga started with Manabe. It should have been binned before it got any attention.

When there is a climate model that limits open ocean surface temperature to 30C through convective cloud persistence they may have something worthy of attention.

Any climate model that shows a warming trend in the tropical western Pacific is useless.

RADIATIVE FORCING BY CO2 OBSERVED AT TOP OF ATMOSPHERE FROM 2002-2019

https://arxiv.org/pdf/1911.10605.pdf

“The IPCC Fifth Assessment Report predicted 0.508±0.102 Wm−2 RF resulting from this CO2 increase, 42% more forcing than actually observed. The lack of quantitative long-term global OLR studies may be permitting inaccuracies to persist in general circulation model forecasts of the effects of rising CO2 or other greenhouse gasses.”
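The quote gives the AR5 prediction and the percentage gap, but not the observed value itself. A minimal sketch of the implied number, assuming "42% more forcing than actually observed" means predicted = 1.42 × observed (the variable names are illustrative):

```python
predicted_rf = 0.508    # W/m^2, AR5 prediction as quoted
predicted_err = 0.102   # W/m^2, quoted uncertainty on the prediction
excess = 0.42           # "42% more forcing than actually observed"

# Assumption: predicted = (1 + excess) * observed.
implied_observed = predicted_rf / (1.0 + excess)

print(f"implied observed RF: {implied_observed:.3f} W/m^2")
print(f"predicted range:     {predicted_rf - predicted_err:.3f} to "
      f"{predicted_rf + predicted_err:.3f} W/m^2")
```

On this reading the implied observed value sits below the lower end of the quoted prediction range, though the quote does not give the uncertainty on the observation.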

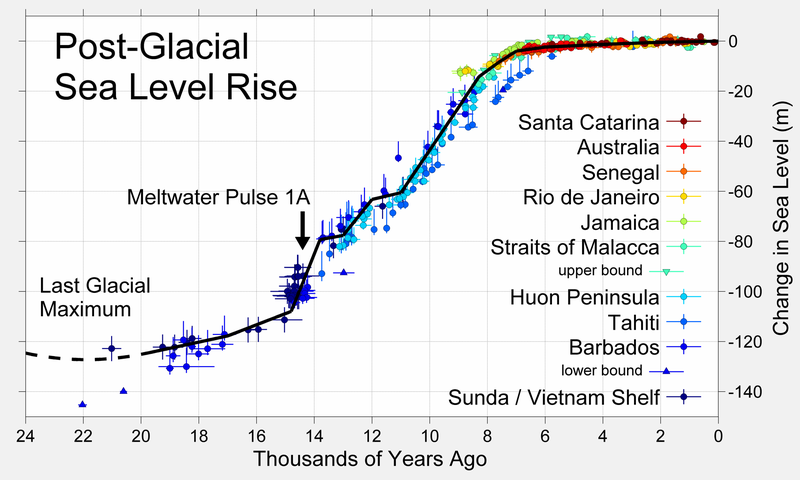

The rise in sea level has been approximately linear for the last 7,000 years. If we assume that sea level is primarily driven by melting ice and thermosteric expansion, then sea level rise can be used as a proxy for long-term warming. If natural warming initiated ~11,000 BP has continued (there is no reason obvious to me why it shouldn’t) then there should be an obvious increase in the slope with anthropogenic warming. There isn’t! To speculate that natural variability stopped with the beginning of the Industrial Revolution, one is obligated to provide a reason for it to do so. I have not read of any such reason.

Andy, nowhere does anyone I know of in this debate ever acknowledge that the Le Châtelier Principle is unquestionably a central operating agent in the dynamics of the climate system, including what ECS will be and the status of a kaleidoscope of other factors through its interactions between atmosphere, biosphere, oceans and earth surface.

How does it work? The Principle states that, given any perturbing change to one (or more) of the interacting components of the system (composition, including chemical, biological, and states of matter; temperature; pressure; fluid kinetic regimes; etc.), the other components react to resist this perturbing change, thereby ending up with a change much reduced from what was expected (“The Physics” perhaps?).

The net feedbacks are incontrovertibly negative, essentially by definition.

A couple of easy to see examples before I scare readers away: what happens when you heat the atmosphere by whatever agent? The atmosphere expands, which is a counter cooling reaction. Moreover plants accelerate photosynthesis and grow, the reaction being endothermic, which means cooling! The warmth of the sun causes evaporative cooling, particularly over the oceans and a series of ever stronger cooling events generate themselves with persistent heating, well described in Willis Eschenbach’s many fine articles on the earth’s temperature governor.

Similarly, reactions by the minions of the system to the addition of CO2 result in accelerated plant and plankton growth, increased solution of the gas into the ocean where it is sequestered as carbonates in shells and even inorganic precipitation as limestone…

Am I wrong in thinking that physicists generally are unaware of the Le Châtelier Principle? Let us suppose that climate scientists had done “The Physics” right when their anomaly forecast for the early 2000’s proved to be 300% too warm using their models. Well, that would mean that chemists should supply them with an LCP coefficient of 0.33 for their models.

The temperature effectiveness of added CO2 diminishes logarithmically and, beyond the current level of 420ppmv, is almost saturated. So, further CO2 emissions can only cause minor additional Global warming. The effect of CO2 on temperature can be estimated as follows:
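The diminishing effect can be illustrated with a short sketch. It assumes only that the temperature effect scales with the logarithm of concentration, and expresses each step as a fraction of one doubling; it is not the commenter's own estimate, and the 100 ppmv step size is an arbitrary choice.

```python
import math

def doublings(c_from_ppmv: float, c_to_ppmv: float) -> float:
    """Number of CO2 doublings between two concentrations (log base 2 of the ratio)."""
    return math.log2(c_to_ppmv / c_from_ppmv)

start = 420.0   # current level quoted in the comment, ppmv
step = 100.0    # illustrative increment, ppmv (an assumption)

levels = [start + i * step for i in range(5)]   # 420, 520, ..., 820
increments = [doublings(a, b) for a, b in zip(levels, levels[1:])]

for a, b, d in zip(levels, levels[1:], increments):
    print(f"{a:.0f} -> {b:.0f} ppmv: {d:.3f} of a doubling")
```

Each successive 100 ppmv adds a smaller fraction of a doubling, though the steps shrink gradually rather than dropping to zero.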

Climate models that assume massive positive temperature feedback leading to alarmist conclusions of excessive temperature rise are in error.

Methane and Nitrous Oxide both react rapidly in the atmosphere and this inherent chemistry means that they dissipate rapidly. So, they can only maintain very low residual levels, even with further Manmade CH4 and N2O emissions.

The total warming effect of CH4 and N2O amounts to ~+0.53°C. This effect has persisted since the atmosphere became rich enough in Oxygen to purge the majority of these minor Greenhouse gasses. The Manmade contribution to increased temperature can be assessed as follows:

The Manmade contributions to extra CH4 and N2O have wholly trivial temperature effects and cannot justify any policy actions to control such emissions. This is especially important as any actions would mean major losses of agricultural productivity: not using artificial nitrogen fertilisers would lead to famine for millions / billions of the human population.

Further Global Warming arising from the use of Fossil fuels by Mankind is a Non-Problem.

https://edmhdotme.wordpress.com/an-historical-context-for-man-made-climate-concerns/

https://edmhdotme.wordpress.com/the-minor-greenhouse-gasses-co2-ch4-n2o/

I wonder.

I think the GH gas effect works in that it delays heat going out to space.

Not so?

What I wonder about is that this apparently works differently on the NH and the SH.

Does anyone here maybe have an explanation for this?

Wood for Trees: Interactive Graphs

Any idea? Anyone?

carbon dioxide was not the dominant factor for the warming (climategate.nl)

The “official” explanation is that most of the land is in the NH, and land warms and cools faster than water.

The reality is more complicated. The NH land has an impact on variability. But the main difference is due to differences in the efficiency of meridional transport of energy from the tropics to the poles. More here:

(PDF) Climate of the Past, Present and Future. A scientific debate, 2nd ed. (researchgate.net)

I’m starting to conclude that nothing is more complicated than the Earth’s climate other than the Universe in its totality! So when I hear anyone say “the science is settled” I feel sorry for that ignorant person.

“The climate sensitivity to CO2 and other greenhouse gases (GHGs) is arguably the most important number in the climate change debate.”

Maybe you really meant to say “in IPCC fantasy climate models”, because in the real world, the climate doesn’t follow CO2. CO2 actually follows the ocean temperature, just as the atmospheric temperature follows the ocean temperature, so CO2 is unimportant to the climate and the climate debate other than its very measurable photosynthetic greening role.

Andy, this obviously means the ECS of CO2 is exceedingly close to zero, if not zero.

As ECS~0, it is obviously not the most important number in the climate change debate.

Andy, you write “The world was cooler in the 19th century and the Little Ice Age was just ending. For this reason, there were fewer El Niños then than now”

This might not be correct. According to the MEI index going back to 1871, the trend is in long-term decline for both ENSO phases.

The figure is here.

From this article at NTZ.

That plot is the number of months in each state, which is not what I was talking about. See Javier’s discussion on p. 54 of his book, where you will find the attached plot; major El Niños are in red. The book can be downloaded here:

(PDF) Climate of the Past, Present and Future. A scientific debate, 2nd ed. (researchgate.net)