Reposted from NOT A LOT OF PEOPLE KNOW THAT

AUGUST 15, 2021

By Paul Homewood

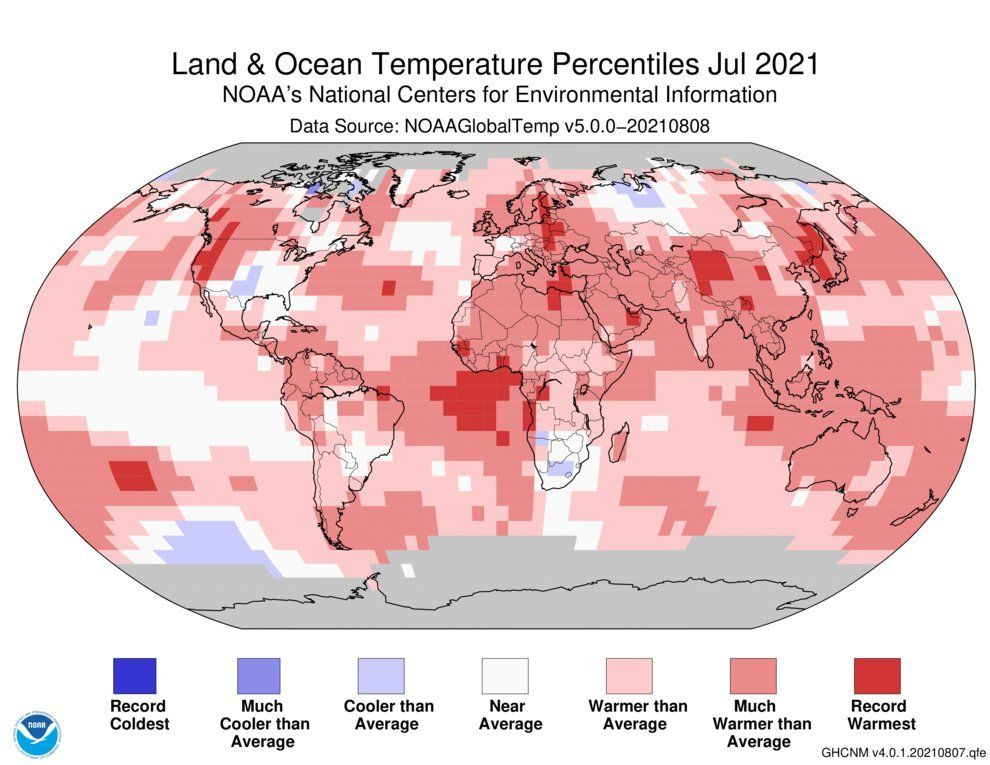

You will recall this story from 1st August, regarding the coldest weather in decades which has been affecting Brazil for much of July. Strangely the fraudsters at NOAA have decided that last month was warmer than average!

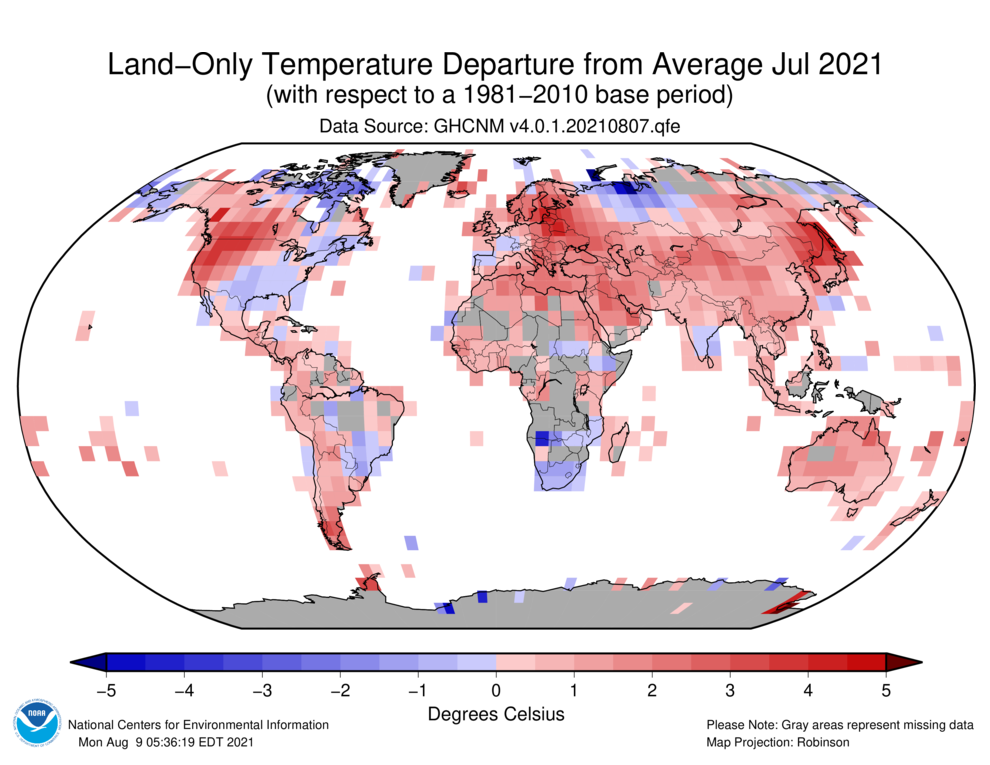

Despite having no data for much of the country, and most of the data they do have shows it has been a cold month:

And they call it science!

Outrageous! Their noses must be meters long from telling all these porkies!

While the measured data they do have is scant regarding area of coverage, that which they do have is indicating mildly warmer in some areas and mildly cooler in others (+/- 1-2C) the problem is, when they replace the missing data with that from “Near By” grid sources, they always opt to adjust the un-measured grid cell to match the warmer cell, then homogenization forces an artificial warming trend onto the cooler regions

Zachary like the BoM in Australia … real data that does not match the agenda is dumped and replaced with ‘homogenised’ data from the hot red centre of the country.

Oh no, I am sure that John Phillips and bdgwx have a perfectly reasonable explanation for why this is totally, totally not fraud.

With the geriatric now running the nursing home they probably figure they can get away with anything.

So tragic.

Meanwhile Creepy Dementia Joe and Cackling Karmela are hiding, and Circle-Back PSnarki is on vacation.

It might just be me, but I would have evacuated the embassy before evacuating the military.

There you go, trying to make sense again.

It certainly seems the Biden and his advisors gave little thought to the logistics of leaving. And, Vietnam wasn’t all that long ago! Maybe the mental state of Biden should be considered when looking for someone to blame for the atrocities that will almost certainly follow.

Several Democrats are already trying to blame Trump.

They have short memories. Initially Trump proposed withdrawing from Afghanistan in an organised way, but he then took the advice of his generals and halted the withdrawal when advised that the Afghan army could or would not stand alone.

Of course. What else can the Democrats do?

I saw an opinion poll this morning about Biden’s Afghanistan disaster, and he was getting bad ratings even from the Democrats. The Independents and Republicans were giving Biden “F’s”.

Biden is not going to escape blame for this one.

Crash and burn time, even the leftists can see it now.

It’s easy to make those strategic insights after the event.

In the UK you’d be called “Captain Hindsight”.

Yeah, I suppose he makes the same mistake going to the toilet.

You mean he fills his britches before he heads for the john? I can just see the guy shuffling around the halls of the WH …

perhaps the reason for his now famous …

“mybutt’sbeenwiped”

Precisely.

Bwuahahaha, everyone knew this would happen. You grow up in a lead paint house?

Blimey. Didn’t you guys recognise the sarcasm?

My point was that such self-evident cock-up is identical to our UK PM’s performance.

His excuse is to call the person who had warned him, “Captain Hindsight“.

I considered it, but I didn’t appreciate the insult.

My apologies. I did not realise that the”Captain Hindsight” reference was not common knowledge in the USA.

Seriously, I do apologise for the misunderstanding.

It is a common part of the political discussion in the UK.

Here is one of many references I could point to as examples.

https://www.politics.co.uk/cartoon/2021/05/26/and-they-call-me-captain-hindsight/

🙂 Thank you. I should have known better.

BS! The incompetence and lies are obvious. Even Ray Charles could see it.

Closing up the Bagram airbase before they were setup for a proper evacuation is the most obvious idiotic thing they did. Anyone with two working brain cells should be able to see that!

There can be no covering this up. It’s too big! And here are a couple of the idiots below Biden lying their asses off to Congress about how they had a plan and how well it was all going not long ago. It is quite simply criminal.

https://youtu.be/9bpS8Zcic-U

Joe’s social distancing and telecommuting as Commander in Chief at the Camp David Command Center, as seen here:

As others online have pointed out, there are serious problems with this photo: 1) The Moscow time is incorrect – it shows a 3 hour variance with London, yet, currently, there is only a 2 hour variance. And 2) the little black squares (monitors) on each side of the main screen are dark/off. They would have to be lit up, with “Mic On” displayed in bold letters, for Black-Eyed Biden to actually be able to speak to anyone.

But, you know, the adults are back in charge! Or something.

Well, as you can clearly see, ALL of his supporters are present. Meaning that he didn’t NEED to have his mike turned on. It’s reminiscent of his pre-election ‘rally’s’!

“serious problems with this picture” are you serious? The cartoon image stretched bigger than the width of the monitor didn’t catch your eye? It’s just a gag, everybody knows Biden wouldn’t waste time watching cartoons – it would eat up too much of his nap time.

climate refugees no doubt

Sociopolitical climate change is a first-order forcing of catastrophic anthropogenic immigration reform.

Meanwhile Harvard professors proclaims that people who wave the flag are faux patriots and responsible for the fall of Kabul.

https://www.foxnews.com/politics/harvard-professor-says-flag-waving-kristi-noem-unserious-kabul-crisis-is-what-happens-when-us-not-serious

Alternate universe.

https://pbs.twimg.com/media/E820GeqXoAUupq_?format=jpg&name=medium

And it’s academics and democrat apparatchik with no real world experience and a military leadership more worried about being woke than fighting wars or even now protecting American citizens that are the culprits. Biden is no more than a sock puppet.

I mean you appoint a lawyer with zero military or strategic experience as your National Security Advisor, what do you expect?

Video unavailable. Prb’ly censored.

Definitely, as the truth seems to be an ever greater threat.

From the following article: “Normally we would never post videos of what appears to be two people either getting seriously injured or dying. But the situation in Afghanistan is so horrible that the repercussions of the Biden regime’s actions must be documented.”

https://conservativeplaylist.com/2021/08/16/disturbing-video-shows-desperate-afghanis-clinging-to-u-s-c-17-before-plummeting-hundreds-of-feet/

Deplorable & shameful repeat of US evacuation of Saigon in 1975.

Yes and you may recall that it was caused by the same Democrats who REFUSED to let the military handle the battle! Once it was clear that Congresses way wasn’t working, the evacuations began! Hmm. Sounds kind of familiar, doesn’t it?

“Yes and you may recall that it was caused by the same Democrats who REFUSED to let the military handle the battle!”

The loss of South Vietnam was definitely caused by the same Democrats, and Biden was one of them.

Biden has been on the wrong side of three foreign policy debacles: South Vietnam, the Rise of the Islamic Terror Army in the aftermath of the Iraq war, and now Afghanistan.

Like former Defense Secretary Gates said, Joe Biden has been wrong on just about every foreign policy and national security issue for the last 40 years.

Nothing has changed. Biden is still wrong. So how many lives will Biden ruin this time with his delusional thinking?

You actually think that old senile fool that walks off into the bushes and has great difficulty even reading notes and completing a full sentence is making the decisions?

I think he made this one. All indications are that he ignored all the advice he was given, and Joe Biden has a history of having no regard for Afghanistan. He once said “Fxxx That” back in 2010, when asked if the U.S. was obligated to protect the Afghan people.

This Afghanistan debacle is all on Joe.

The head towel-head of the Taliban has an active Twit account, while the account of 45th President of the US has been canceled.

Anything wrong with this picture?

You called him a towel head instead of an austere religious scholar? Just guessin

/me nods

YouTube removed the video for violating community guidelines

Community or communist?

Same thing.

Visit the Whote House Channel on Utube – no comments allowed and it gets thumbsdown 10 to 1.

ytube only to view from aus AND sign in required

no way do I have acct with that mob

Political science?

Nowadays, it is an oxymoron.

It’s the scientific method. Postulate, hypothesis, experiment, oops.

I like your version of the scientific method. When I was young it started with observe before hypothesise. Nowadays when most researchers never leave the computer screen the scientific method is : identify funding opportunities, apply for grant while making sure to include all key trigger words, develop model using other people’s data however unfit for purpose or how unfamiliar you are with the datasets weakness, generate model output misleadingly referred to as data, adjust parameters until model output meets funding agencies mandate, initiate promotional sequence with media alarmist partners, send manuscript to journal with highest media presence that your status allows, participate in sham pal review process, accept awards/accolades from people receiving funding from the same or similar agencies, repeat until early retirement.

True to form, the Caterwaulers will blame this on the Code Red Climate Catastrophe. Because Carbon Magic.

Yesterday in Nature-Climate Change: Increased CO2 increases passivity in common people but enhances aggressivity of terrorists, experts say.

Corruption in high places. They know they can get away with it.

We call it corruption.

They call it doing their jobs.

They are trying to frighten us by threatening the demise of our coffee.

They tried to do that to beer a few years ago. They claimed, using RCP8.5 fantasy of course, that hops and barely production were threatened. The beer crafters association set them straight and publicly called it out as nonsense showing hops and barely production would only increase under CC.

And yet barely production was barley affected.

Thanks zig

It does seem that barely anybody on this blog can spell barley

Quick! The YADAs are needed PDQ!

Nothing new, just more lies from NOAA.

We who are lucky enough to inhabit wealthy western democracies now live in a world where what is said has more value and power than what is true. This is done deliberately so that criminal politicians, fraudsters and undemocratic nations can steal what our parents and grand parents built with their bare hands and heroic effort. All of our rights and freedoms will be the first things we lose unless we fight back.

I live in west-central Argentina and I remember when the cold air went through on its way to SW Brazil, it was unusually cold for weeks. The track of the cold air can be traced by news of crop failures, which would be a Reality Check.

What can you say when supposed “scientific” bodies cannot be trusted? Pathetic.

But what we can expect, I guess, when temperatures are “divined” to be different from what the thermometer said based on what another said kilometers away, because that one gave an answer more suitable for pushing “climate change” propaganda.

Well the thing is, this wasn’t a period of extreme cold weather, but a one off ‘freak frost’

‘freak frosts reported on July 20, when temperatures dropped to freezing levels in minutes…’.

Frosts are not unusual in July in the Bazil coffee region… the sudden onset and drop to minus 1.2 was unusual.

so the average monthly temperatures for Brazil wouldn’t have been severely impacted by this one off event, would they? And what about Argentina: unusually warm in July in the North!

Give up the lies. See Ron Long’s comment above.

Thanks, Scissor. We might hear from John Tillman, who lives in central Chile, about the cold air going through?

Same amount of warmig wouldt be, for you only, a catastrophic climate overheating.

You are soooooo clueless.

Not clueless. Predictable, dishonest, disingenuous, dishonest, monotonous, dishonest, propagandistic, dishonest. Did I mention dishonest?

Amazing how one week of hot weather is proof of global warming, but one week of cold weather is just a freak frost that means nothing.

To Griff!

I laughed out loud when I saw Griff’s reply.

It’s all weather, Griff.

griff,

On another thread you said one-off rain events were an indication of global warming. Rain events are not unusual at any time of year almost all over the globe.

So which is it? Are one-off events climate indicators or are they not climate indicators?

Or does it just depend on whether it fits your agenda at the time?

One off events are truly Climate Indicators whenever Griffie-Poo says they are. The Griff has spoken

Nor is hot weather unusual in Greece and Turkey in July. You dismiss unusually cold weather in southern hemisphere Winter (27 years since last event), but rant about hot weather in northern hemisphere Summer. You lack objectivity. You see what you want to see to confirm your belief system.

The entire “climate change” narrative is founded on confirmation bias … not to mention equivocation, appeal to authority, appeal to credulity, appeal to pity, appeal to population, straw men, red herrings, shifting the goal post and cherry picking … with a shocking amount of hypocrisy.

OH, the hypocrisy of it all!

https://riotimesonline.com/brazil-news/nosubscription/expectation-of-intense-snowfall-and-record-cold-fills-hotels-in-the-mountains-of-brazils-southern-state-of-santa-catarina/

Must publish it, Griff, in a peer-reviewed journal!

You just stated thet there are “one off ‘freak frosts’ “, but you always classify any heat spell as a necessary result from “climate change”.

An asymmetry in nature: hotter, is climate change; colder, is “one off freak frost”.

The sky is the limit: next, you can claim a Nobel prize!

In the real world, Joao; if anything, the exact opposite would be true in a geologically cooling world. The recent warming is an anomaly and it’s cooling we should be preparing for.

Yes, I know. I “grew up” during the “climate cooling” psychosis, I was university student (finished course in 1973). I had very good professors of biology and geology. We often discussed the hype and reduced it to its real size, and understood the more important conclusion: we were in an interglacial and cooling to the next glaciation; but it would not happen tomorrow!

Also, already in high school we got penalties in our marks if we confused weather and climate. Unfortunately, there are nowadays full professors at the university that talk in their lessons and even when arguing PhD theses, of “the climate of the year when you made your observations…”. Sad…

You identified the sarcasm: actually, that asymmetry occurs, but griff is seeing it upside-down: climate change is the cooling tendency, warmer spells or “pauses” are the “one off freaks”…

Their language actually gets it right (talking about anomalies), but for the wrong reason. That’s why I say that “climate change” is equivocation (appeal to ambiguity), a logical fallacy. We’re led to believe that humans are causing this anomaly, whereas the periodic warm periods are the anomalies. This is why their 30 year climate reference is silly, in the face of temperature change in geological time.

I agree with that. Especially with your last statement, where you identify the time frame. The 30 year period is useful in historical time; for old geographers it was a kind of geographic measure: the earth was dividen by a kind of longitude and by “climates”, i.e., belts of latitude with similar conditions, and this “constancy” was ascertainable in the scope of (long) human life or the writen or otherwise transmitted knowledge from previous generations. When our time frame ranges to geological time, the 30 year period is ridiculous.

Climate science: the realm of “pauses” and “one off freaks”!

Griff makes a good point.

Climate change is irrelevant when compared with the weather.

The magnitude and speed of weather changes are far greater and the impact on crops is greater too.

Wise words!

Only one “one off freak day” with hail and a one year crop is lost!

Hail, late frost, heat,… a “one off freak day” is enough to anihilate one year’s work (and in certain cases, of two years).

The griffter gets all depressed this time of year…only weeks before the Arctic begins to start accumulating ice – the Big Freeze returns.

If anything there makes sense at all griff you hid it well.

Never mind! Please tell us, in which time period would you prefer to live your life?

[__] Benign low CO2 1675-1750

[__] “Dangerous” CO2 1950-2025

The first map shows temperature anomalies relative to the 20th Century July average; the second shows them relative to the warmer 1981-2010 July average. That’s why the colours on the maps don’t match up. Why NOAA do this I don’t know.

The author says the second map shows that Brazil had “a cold month” in July. However, you can see that large areas of north and east Brazil were warmer than the 1981-2020 average. Of the 33 or so grid squares I count in or touching on Brazil, 14 show warmer than average temperatures, 12 show cooler than average (including the coffee region mentioned) and 7 have insufficient coverage. Overall it is not surprising that this would make much of Brazil slightly warmer than average in July, based on a 20th century anomaly base.

UAH use a warmer-still 1990-2020 anomaly base and they determined that Brazil (or the lower troposphere above it at any rate) was pretty close to the 1990-2020 July average this year. The only exception is right over the coffee region. Again, that’s pretty consistent with the NOAA data. It’s confusing, but when the different base periods are taken into account the mystery kind of vanishes.

And why they use the outdated base periods ?

Using different measuring sticks is par for the course. If you confuse things enough with inconsistent units, you can say whatever you want and no one can show you are wrong.

I forgot the sarc tag… 😀

I am only arguing that climate science isn’t the only field this happens in. Your “sarc” was understood by me anyway.

The answer is literally always 42 all you have to do is invent new units for every result.

The first map shows land and sea, with no baseline

The second land only

#Obfuscation

Try going to the source ….

From:

https://www.ncdc.noaa.gov/monitoring-references/faq/anomalies.php

How is the average global temperature anomaly time-series calculated?

The global time series is produced from the Smith and Reynolds blended land and ocean data set (Smith et al., 2008). This data set consists of monthly average temperature anomalies on a 5° x 5° grid across land and ocean surfaces. These grid boxes are then averaged to provide an average global temperature anomaly. An area-weighted scheme is used to reflect the reality that the boxes are smaller near the poles and larger near the equator. Global-average anomalies are calculated on a monthly and annual time scale. Average temperature anomalies are also available for land and ocean surfaces separately, and the Northern and Southern Hemispheres separately. The global and hemispheric anomalies are provided with respect to the period 1901-2000, the 20th century average.

Why do some of the products use different reference periods?

The global maps show temperature anomalies relative to the 1981–2010 base period. This period is used in order to comply with a recommended World Meteorological Organization (WMO) Policy, which suggests using the latest decade for the 30-year average. For the global-scale averages (global land and ocean, land-only, ocean-only, and hemispheric time series), the reference period is adjusted to the 20th Century average for conceptual simplicity (the period is more familiar to more people, and establishes a longer-term average). The adjustment does not change the shape of the time series or affect the trends within it.

Justifing adjustments again, if I was a noaa or met office employee I would be doing the same ,considering there on board with the lie of global warming.

I asked you yesterday to prove your statement “I am a retired UKMO meteorologist who knows the science and is not motivated by ideology.”

If you use the above statement to qualify your posts then you need to prove it otherwise your posts are hollow , its also ( as stated yesterday) people like you only post when the manipulation of temperature data is brought to light , the top commandment in the climate church the lie of climate change its deep root

Temperature.

Averaging averages which have first been interpolated from artfully selected, already biased data is utterly meaningless. Averaging discrete information is already a fools game, like averaging phone numbers or street addresses. Then adjusting this concocted pseudo data down, in the past, whenever present temperatures don’t correspond with modeled projections is simply fraud and if not fraud, it’s delusional.

These so called “temperature anomalies are nothing of the sort. They’re 100% fantasy pseudo-data.

“Averaging discrete information is already a fools game, like averaging phone numbers or street addresses”

“We have had this argument before. Uncertainty *GROWS* when you combine unrelated measurements, it doesn’t disappear.”

“The uncertainty *grows* when you add numbers. Dividing by a constant doesn’t affect that uncertainty. ”

Yes we keep having this argument, because you are unable to accept you may be wrong yet are unable or unwilling to provide any type of evidence to show why I’m wrong. I’ve showen the very books you use to argue your point show the opposite, I’ve poited out the absurdities your claim would lead to, and I’ve given empirical evidence that demonstrates you are wrong. Did you see my example of estimating π using just 2 discrete values, and how the estimate becomes more certain as sample size increases?

Let’s try another example using your point about the area under a sine wave. Calculus says that the area under a sine wave between 0 and π is 2, and dividing by π gives us approximately 0.637, which is almost what you claimed. If I’m right it and averages means something then it should be possible to get an estimate for this value by taking random samples. I also claim that as sample size increases the uncertainty of the estimate will decrease. I also claim that adding uncertainty will not have much of an impact on the estimate especially as the sample size increases.

My understanding of your point is that the average will be no different than averaging telephone numbers, that the uncertainty will increase as sample size increases, and that the average will tell you “absolutly nothing”. Is that what you would expect?

Do you want to do the experiment?

I’ve given you the evidence. It’s right there in Taylor’s book. All you have to do is read it. A constant has no uncertainty. It doesn’t matter if it is a multiplier or divisor, it doesn’t contribute anything to uncertainty. It doesn’t increase it or decrease it.

You only have ONE sample – *PERIOD*. What you are doing is trying to say you can sample different things and combine them as if they are samples of the same thing. When you measure a temperature you get one try at it. What you measure then disappears into the past, never to be seen again. You can’t sample it multiple times in order to create a probability distribution.

So you don’t have an increasing sample size. You have a multiplicity of sample sizes of ONE.

It is obvious that you don’t understand integrals. You are measuring the area under the curve. That area is is *NOT* two between 0 and pi. Think about it. How can a sine wave of amplitude 10 have an area of two while a sine wave of amplitude 20 has the same area under the curve? The area under the curve is calculated by multiplying the height of the curve times the width of the curve being measured. With calculus this becomes the integral of Asin(x). You can’t just eliminate the amplitude “A”. The width becomes dx and the height at any point is Asin(x). That is why the area under the curve is .636(A).

“Did you see my example of estimating π using just 2 discrete values, and how the estimate becomes more certain as sample size increases?”

I don’t think that is what you said. You implied you could model a continuous function using just two values. That’s an impossiblity. It’s why you got called out about learning how digital signal processing works.

“My understanding of your point is that the average will be no different than averaging telephone numbers, that the uncertainty will increase”

Telephone numbers are CONSTANTS. They do not represent data points consisting of samples of a continuous or even discrete variable. In other words none of the samples have any uncertainty. They are constants. Averaging them would be like averaging integers over a specified interval on the number line. Those integers are not measurements of anything, they are constants with no uncertainty. Such an average really tells you nothing about the physical world.

Independent, random measurements of different things each time a measurement is made *WILL* see the uncertainty of their sum increase as the number of different things being measured grows. Again, consult Taylor on this.

“I’ve given you the evidence. It’s right there in Taylor’s book. All you have to do is read it.”

No you haven’t. You keep pointing me to Taylor and then ignoring what he says, including the parts where he directly points out why you can divide uncertainty. I want you to point me to where Taylor says – a) uncertainty grows as sample size grows, and b) dividing a measure by a constant does not reduce the uncertainty.

“What you are doing is trying to say you can sample different things and combine them as if they are samples of the same thing.”

Yes I am. That’s because they are samples of the same thing, that same thing being the population mean. You keep failing to undersatnd that when you calulate an average you are not trying to estimate one individual measurement,you are trying to estimate the mean of something. In the case of temperature it might be the mean temperature over an area, or the mean temperature over a period of time, or a combination of both. I don’t care what one specific measurement is, excpet that it is a sample of the mean. That doesn’t mean that if I am interested in a specific value at a specific time I cannot go back to the sampe, but if I want to now what the mean is that is what I am trying to estimate.

This is in principle no different to what Taylor does in the section where he shows how you can take multiple measurements of the length of a piece of metal to get a more accurate measurement. He specifically says to measure it using different instruments and to measure different parts. Why does he say that if you don’t expect different measurements to give different results? What is the final measurement of? It cannot be any one part of the metal because then you would only need to measure at that place. The final average measurement is trying to measure the average length of the sheet.

“It is obvious that you don’t understand integrals.You are measuring the area under the curve. That area is is *NOT* two between 0 and pi.”

Maybe I wasn’t clear, but when I said the area under a sine wave, I was talking about the standard sine wave, not a multiple of phase shifted one. The area under the curve from 0 to pi is 2. It’s a simple application of the definite integral. And of course if you multipley the sine by a constant the area under the curve will also be multipled by the same constant.

I’m ot sure why you are being rude here, when I’m actually agreeing with you. But t does abvoid you answering any of the questions about how you can estimate the average of the sine wave by sampling.

“You implied you could model a continuous function using just two values.”

I don’t think I’ve said that, but you do keep changing the discussison. What I’ve been talking about is the way uncertainty of a mean changes with sample size. You keep changing this to the mean of temperature over a day being aproximated by the mean of the max and min values. I don’t think you can model the daily temperature cycle from just two values, just that it’s the simplest way of estimating the daily mean if you only have those two values. There might be a lot more to be said about how to best estimate the daily mean given two values, but I think it’s a distraction from what we were discussing, how sample size changes the precision of a global or monthly mean.

Here are the results of my experiments to see how well a sampling of random points on the positive part of a sine wave approximate the average of the sine wave.

All I am doing here is generating N random numbers with a uniform probability distribution between 0 and pi, and then averaging the sine of each point. There is little uncertainty in each value. For each sample size I’ll show the first 10 sample means alongside the error compared with the known value of 2 / pi.

First for N = 10

With a small sample size the results are varied. A few are fairly close but others are way out.

Increase to N=100

All are within 0.1 of the expected value.

Let’s really ramp things

N = 10000

All are within 0.01, some ares pot on to three decimal places.

My main takeaways from this part are that averages of “different” things can still give meaningful estimates, and that averages become more precise the larger the sample size.

Part two.

So what if we add some uncertainty to the values, and what if the values are more discrete? Will the uncertainty in the mean increase as sample size increases?

I now repeat the exercise but this time round the samples to 1 dp, so each value is sine(x)±0.05. As the values only go from 0 to 1, that only leaves 11 possible values for each sample, so it is pretty discrete.

N = 10

N=100

N=10000

Even using discrete values, with an uncertainty of 0.05, using a large enough sample size gives results much better than the uncertainty of any individual measurement. And of course what does not happen is the uncertainty increases as sample size increases.

Note:

Although the rounded figures do give values that are close to the expected value, the rounding does introduce a systematic error. The average of all rounded values will give us the average of an approximation of the sine wave, but it happens to be a reasonable approximation. Using a much larger sample size (N=1000000) all the results come back as 0.638 to 3dp, out by 0.001. The mean is precise but not completely true.

Bwaahaaa! You tried to imply you could use two measurements to establish a sine wave, not multiple sampled points, a minimum and maximum temp – i.e. a mid-range value would emulate a sine wave!

The base uncertainty of almost every surface temperature measurement device in use today has an uncertainty of +/- 0.5C if not higher! That is *far* greater than the uncertainty you are attributing to your sample data!

Averages of different things can *NOT* tell you anything. It hides at least the maximums and minimums which are ABSOLUTELY NECESSARY to understand the climate at even one location let alone globally!

The *mean* of the data set can be calculated more and more accurately with more samples IF YOU ARE MEASURING THE SAME THING! Why is this so hard to understand? If you calculate the average of two boards found in a ditch on two different highways, i.e. totally different things, what does the average tell you? You still won’t know what length each board is by looking at the average!

“You tried to imply you could use two measurements to establish a sine wave”

No I haven’t. You’re the one who keeps wanting to talk about the daily mean values. All I’ve been trying to do is establish that the uncertainty in a mean decreases as sample size increases. Daily mean temperatures are a distraction from that you keep bringing up. You were the one who wants to treat the daily temperature cycle as a sign wave and derive the mean daytime average by multiplying the max by 0.63.

“…an uncertainty of +/- 0.5C if not higher! That is *far* greater than the uncertainty you are attributing to your sample data!”

The exact value doesn’t matter, the 1dp is with regard to a value that moves between 0 and 1.

“Averages of different things can *NOT* tell you anything.”

“The *mean* of the data set can be calculated more and more accurately with more samples IF YOU ARE MEASURING THE SAME THING!”

You really need to explain what you mean by “different things” verses the “same thing”. If the sine wave moves from 0 to 1 and back is it the same thing or different things? If the temperature changes from day to day or across the globe is it the same or different things? If I measure a sheet of metal at different points with different instruments am I measuring the same thing or different things? If I measure a stack of paper and use that to calculate the thickness of a single sheet of paper, am I measuring the same or different things? If I measure the number of days between babies being born in a hospital am I measuring the same or different things?

“You still won’t know what length each board is by looking at the average!”

No, of course you don’t. That is why an average is not a list of thousands of different values, it’s a summary of them. Not knowing what every value that makes up an average is, does not mean the average tells you nothing. Pointing to examples of averages that are not very useful does not mean that all averages are useless.

“If you want the “average* of the daytime temps then you integrate the temperature curve from 0 to 180deg. That average *is* 0.63 x Tmax. You do the same for nighttime, integrate nighttime temps from 180deg to 360deg. That average is 0.63 times the Tmin.”

And I’ll try to explain again why this is wrong, even assuming the daily temperature profile is a sine wave.

First your argumet about distinguishing day and night temperatures doesn’t make sense unless you think the mid points of the daily temperature cycle are at dawn and dusk. This would also mean the minimum is at midnight and the maximum at noon. This seems unlikely to me.

Secondly, your argument that you can derive the average of day and night time temps by multiplying the max and min by 0.64 is correct if, and only if, the mid point is zero. e.g. if the max is +5℃ and the minimum is -5℃. Obviously whether any particular day meets this requirement will depend on what temperature scale you are using, In the very likely event that the mid point is not zero, e.g. temperatures go from 5℃ to 15℃, multiplying ax and min by 0.64 will not give you any sort of average.

The correct formula for “daytime” average would be (max – mean) * 0.64 + mean. Where mean is (max + min) / 2 i.e the mid point.

But even if you correct the maths, I’ve still no idea what you are trying to achieve here. The mean for the whole day will still be the average of daytime temperatures plus the average of nighttime temperatures divided by 2, which will be equal to the mean obtained by (max + min) / 2.

I told you several times before about cooling degree-days and heating degree days. These are integrals of the temperature curve above and below specific set points (e.g. 65F). These values give a *much* better picture of the climate at a specific location than a mid-range value.

Dawn and dusk are arbitrary points on the daily time line. Adjust them as you want. It is still the integral of the temperature curve that defines climate, not the mid-range value.

The point is (that you seem to be actively trying to convince yourself that somehow, some way the uncertainty of measuring two different things can have the uncertainty of their additive/subtractive sum somehow divided by two so the uncertainty can be lessened instead of growing. Thus leading to the conclusion that if you just have enough samples from different things you can eliminate the uncertainty associated with adding/subtracting them by dividing by the number of samples. The law of large numbers *only* work to lessen uncertainty when you are measuring the same thing, not different things. Maximum temp and minimum temp are TWO DIFFERENT THINGS. Each has an uncertainty and when you add the two together the uncertainty grows by at least sqrt(2) if not a direct addition. You don’t divide that uncertainty by 2 because you have two samples!

Stop trying to convince yourself that mid-range values hold some magic meaning. They don’t. They aren’t even a good representation of the climate at even one location because you lose the data telling you the min and max temps which is a much better representation of the climate at a location.

*IF* day and night are equal in length then .63 * Tmax gives a much better representation of the daytime climate than a mid-range value. Same for night – .63 * Tmin gives you a much better representation of the nighttime climate. If day/night intervals are not the same then it just complicates the calculation but it doesn’t invalidate it. It remains a much better representation of climate at a location than a mid-range value. And it doesn’t lose data, you can still find Tmax and Tmin which you can *not* do with a mid-range value.

We have had the ability to collect 1-minute temperature data for at least two decades, if not longer. That would allow a much better representation of the actual temperature curve and would allow a numerical integration of the curve at least. There is no doubt that it would complicate the models and the modelers tasks but that should not be used as an excuse for not moving to a better representation of climate from the models. My guess is that the reason this isn’t being done is because it would also show just how badly the models are at actually predicting future temps and climate!

“I told you several times before about cooling degree-days and heating degree days.”

Yes, and this has nothing to do with multiply the max temperature by 0.637. In the case of a CDD the magic line isn’t the mid point of the sine wave. Consider what happens if the temperature never goes above the magic number, or stays above it all night. If you assume the day follows a sine wave you still need to know both the max and min to estimate the CDD.

But if you can assume the day follows the sine wave, then the mean derived by finding the mid-point between max and min will also be just as good an estimate of the true mean.

“The point is (that you seem to be actively trying to convince yourself that somehow, some way the uncertainty of measuring two different things can have the uncertainty of their additive/subtractive sum somehow divided by two so the uncertainty can be lessened instead of growing.”

You keep confusing these different means. I’m talking about the mean of global anomalies or the means of monthly or annual anomalies, not using two samples, but thousands. This has nothing to do with how accurate dividing max + min by 2 is to a true daily mean. A sample size of 2 is not much better than of 1, and these aren’t random samples, they are specifically the 2 extremes. Taking the mean of the two is not about reducing uncertainty, it’s about having a value that represents both of the extremes.

“The law of large numbers *only* work to lessen uncertainty when you are measuring the same thing, not different things.”

Rather than trying to convince me, you should try to convince the authors of every text book on statistics, who all say it does.

“*IF* day and night are equal in length then .63 * Tmax gives a much better representation of the daytime climate than a mid-range value.”

A mid-range daily mean is not meant to be representing the daytime temperatures, it’s representing the entire 24 hour period – day and night. If you only want a representation of the daytime, why not just use the max value, without this meaningless scaling?

.

“We have had the ability to collect 1-minute temperature data for at least two decades, if not longer.”

Which is great as long as you don’t want to compare them with temperatures from the last century. But I’m still not sure what you want done with these minute samples. You keep insisting that any sample is just a sample of one, that if you take an average of different things you increase the uncertainty. Each minute sample is measuring a different thing, and averages tell you absolutely nothing. So if you do average the 1440 samples, what does it give you except the daily mean temperature, which you insist is meaningless.

“Yes, and this has nothing to do with multiply the max temperature by 0.637.”

Do you understand what you are saying? The average of a sine wave *is* .637 * Amplitude. It is the area under the sine wave from 0deg-180deg. What do you think cooling degree-days and heating degree-days *are*?

Cooling and heating degree-days were developed for use by engineers trying to size HVAC systems. If the temperature never goes above the set point then that tells the engineer that air conditioning isn’t a real need at that location. I.e. the cooling degree-day value is ZERO. Same for heating degree-day values.

“But if you can assume the day follows the sine wave, then the mean derived by finding the mid-point between max and min will also be just as good an estimate of the true mean”

Not for evaluating climate! Two different locations can have the same mid-range value while having vastly different climates! It is the average, i.e. the cooling degree-day value, that tells you what the daytime climate is. Same for nighttime. The mid-range value is useless – as is the GAT!

“I’m talking about the mean of global anomalies or the means of monthly or annual anomalies, not using two samples, but thousands.”

Anomalies derived from mid-range values are just as useless as the mid-range values the anomalies are calculated from. And, once again, you have thousands of samples THAT AREN’T MEASURING THE SAME THING! The uncertainty grows with root-mean-square and is *NOT* divided by the number of samples! Why is that so hard to understand? Taylor explains it magnificiently!

“Rather than trying to convince me, you should try to convince the authors of every text book on statistics, who all say it does.”

Uncertainty is *NOT* a probability distribution and is, therefore, not subject to statistical analysis. If you read the statistics books they speak to a data set that is a random, *dependent” data set – i.e. measurements of the same thing. This is subject to statistical analysis. The uncertainty of random, independent measurements is simply not the same thing.

“A mid-range daily mean is not meant to be representing the daytime temperatures, it’s representing the entire 24 hour period – day and night.”

Then how does it tell you anything about climate? Two vastly different locations can have the same mid-range value. How do you differentiate the difference in the climate at each location? The mid-range value certainly won’t tell you!

“Which is great as long as you don’t want to compare them with temperatures from the last century.”

Why is that necessary? Why not just track the values over the past twenty years? The past is the past. You can’t change it. Knowing it won’t actually tell you what is happening *now*. I would much rather have a more accurate picture of what has happened over the past twenty years than an inaccurate picture of how the past compares to today!

“Do you understand what you are saying?”

I think so, I finding it increasingly difficult to understand what you are saying. There a multiple concepts here, and you seem to be obsessed by solving them all with this 0.637 multiplication.

Let’s start with concept 2. If you know the minimum temperature of the day was 5°C and the maximum was 15°C, what do you think it would mean to say that the average day time temperature was around 9.6°C and the average nighttime temperature was 3.2°C? What would be the difference if you measured the max and min in Fahrenheit or Kelvin?

“Two different locations can have the same mid-range value while having vastly different climates!”

Yes, as can two locations having the same CDD. But if I’m not interested in specific climates but just in the question is the world getting warmer then can be quite useful. And you know what’s especially useful? Just because you have a global average mean temperature, doesn’t mean you have to ignore all other data. You can still look at the data for specific areas, you can still look at changes to min and max temperatures, you can still look at rainfall and sunshine.

“And, once again, you have thousands of samples THAT AREN’T MEASURING THE SAME THING!”

Still waiting for a definition of “the same thing” verses “different things”. And still waiting for any evidence that the mathematics of averaging changes between the two.

“The uncertainty grows with root-mean-square and is *NOT* divided by the number of samples!”

I’ve shown empirical evidence that this is not true. I’m still waiting for your evidence that it is true.

“If you read the statistics books they speak to a data set that is a random, *dependent” data set – i.e. measurements of the same thing.”

Point me to one of these statistics books that says you can only average “the same thing”.

“The uncertainty of random, independent measurements is simply not the same thing.”

As I keep trying to tell you, the uncertainty in the mean of different things is mostly due to the sampling. The uncertainty in the measurements is usually of little importance, but the formula is the same, divide the standard deviation by the square root of the sample size.

“Then how does it tell you anything about climate?”

And you still don’t seem to grasp that just because something doesn’t tell you everything, it does not mean it tells you nothing. I still find it incredible that you cannot fathom how the mean temperature might tell you something about the climate, whether we are talking about the climate in a specific place or specific time or globally.

If I know the mean temperature of a place in December is -5°C, and the mean temperature in July is 20°C, can you not deduce something about the climate of the place during those two months?

Climate is determined by the daytime heating and the nighttime cooling. It is *NOT* determined by the mid-range temperature. It truly is that simple. Two different locations with vastly different climates can have the same mid-range value, the mid-range value tells you nothing about the climate at each location.

The daytime temp is pretty much a sine wave. So is the nighttime temp. Depending on things like wind, humidity, and clouds each may not be a *perfect* sine wave but they are pretty close. They are certainly not triangle waves or square waves or any other commonly understood types of waves. The daytime temps and nighttime temps are close enough to sine waves that .63 * Tmax or .63 * Tmin will get you pretty close to the *average* daytime or nighttime heating/cooling as measured using temperature as a proxy.

“ what do you think it would mean to say that the average day time temperature was around 9.6°C and the average nighttime temperature was 3.2°C? What would be the difference if you measured the max and min in Fahrenheit or Kelvin?”

What do you think the mid-range value would tell you? If the daytime/nighttime temps are close to a sine wave then I can tell you the Tmax and Tmin values. You can’t do that with a mid-range value or at least I know of no way to do so.

9.6C is about 50F. Divide by .63 and you get a Tmax value of about 79F. A nice daytime temp. 3.2C is about 40F, or a Tmin of about 65F. A nice nighttime min temp. About what we are seeing here in Kansas right now. It’s a nice climate to be in (except for the humidity).

Do that from a mid-range value.

“Yes, as can two locations having the same CDD”

You continue to demonstrate that you don’t understand what an integral is. How can two sine waves of different amplitudes have the same area under the curve? As you pointed out earlier the integral of sin(x) evaluated from 0 to pi equals 2. But the function we need to evaluate is Asin(x), not just sin(x). So the integral becomes 2A. So how can two locations have the same area under the curve unless A_1 and A_2 are the same? Since the average value is .63 * A how can the average value of two locations be the same unless they have the same value for A?

” But if I’m not interested in specific climates but just in the question is the world getting warmer then can be quite useful.”

What do you mean by “is the world getting warmer”? Every alarmist says it means that maximum temps are going up which is what causes the mid-range value to go up. But it can just as easily mean that the minimum temps are going up. Do you *really* care if minimum temps are going up? How many alarmists are going to say that droughts are increasing because minimum temps are going up? Who would listen to them? Higher minimum temps have all kinds of benefits such as longer growing seasons, higher plant growth at night, more food for humans and livestock, fewer homeless people in San Francisco and Seattle expiring on the street from hypothermia, etc. What *bad* impacts do *you* see from higher minimum temps?

How do you distinguish what is actually happening from the use of mid-range values? How do you tell exactly what is warming? Cooling/heating degree-days *will* tell you, mid-range values will not.

“I’ve shown empirical evidence that this is not true. I’m still waiting for your evidence that it is true.”

No, you haven’t given us any evidence. You would have us believe that you can take two independent, random boards whose length has been measured by two different device with possibly different uncertainties, lay them end to end and have the uncertainty of overall length go DOWN by a factor of two!

Such a belief flies in the face of rational thinking. Look at Taylor on page 57: “When measured quantities ae added or subtracted, the uncertainties add; when measured quantities are multiplied or divided, the frational uncertaies add. In this and the next section, I discuss how, uncer certain conditions, the uncertainties calculated by useing these rules may be unessarily large. Specifically, you will see that if the original uncertainties ae INDEPENDENT and RANDOM (caps are mine, tpg), a more realistic(and smaller) esitimate of the final uncertainty is given by similar rules i which the uncertainties (or fractional uncertainties) are DDED IN QUADRATURE (caps are mine, tpg) (a procedure defined shortly).

You want us to believe that you can take 1000 measurements of 1000 independent, random boards, each measurement with its own uncertainty (e.g. +/- 1″), lay them end to end and that the final length will have an uncertainty of 1/1000″. Simply unfreaking believable.

Remember., this is what you are doing when you calculate an average, you are laying boards end-to-end to get a final result and then dividing by the number of boards. That is no different than laying temperatures end-to-end to get a final result that is then divided by the number of temperatures you use. In both cases that average tells you nothing about the boards, you may have a bunch of short ones (e.g. nighttime minimum temps) and a bunch of long ones (e.g. daytime maximum temps). The average is meaningless and useless for trying to describe anything about the boards! Just as it is for trying to describe something like a “global average temperature).

“As I keep trying to tell you, the uncertainty in the mean of different things is mostly due to the sampling.”

Uncertainty of the calculation of the means is *NOT* the same thing as the uncertainty associated with the combination of the data. You *can* calculate the mean ever more accurately but it is only meaningful if you that data is associated with the SAME THING. Those measurements are then many times considered to be a probability distribution associated with the same thing. When you have DIFFERENT THINGS, no probability distribution is associated with the data, therefore calculating the mean more and more accurately is meaningless once you go past the uncertainty interval which is the root-sum-square of the uncertainties of the independent, random multiplicity of the things you have stuck into the data set. You can *NOT* decrease that uncertainty by adding more data, that just increases the uncertainty of the final result! Just like laying random, independent boards end-to-end. The more boards you add the more uncertain the final length becomes!

“And you still don’t seem to grasp that just because something doesn’t tell you everything, it does not mean it tells you nothing”

Mid-range temperatures tell you nothing about the climate. You *still* haven’t show how you can determine minimum and maximum temps from a mid-range value. If you can’t do that then you know nothing of the climate associated with that mid-range value! In other words, it means nothing. And if the data set you are using is composed of values that mean nothing then the end result means nothing either!

“The uncertainty in the measurements is usually of little importance”

Have you *ever* framed a house? Have you *ever* had to order I-beams for a bridge construction? Have you *ever* designed an audio amplifier for commercial use using passive parts? Have you *ever* turned the crankshaft journals in a racing engine on a lathe? I have.

The uncertainties in the measurements of all of these is of HIGH IMPORTANCE if you are going to do a professional job. These are just a sample, the number of situations in the real world where the uncertainty of measurements is of high importance is legion!

You are the typical mathematician or statistician who’s work product has never actually carried some real world liability for you if it isn’t done properly. You would *never* make it as a professional engineer!

“If I know the mean temperature of a place in December is -5°C, and the mean temperature in July is 20°C, can you not deduce something about the climate of the place during those two months?”

You can deduce a seasonal influence, that’s about all. If the mean temperature in July is 20C what is the maximum temp associated with that? What is the *mean* maximum temperature? If you don’t know those then how do you judge what is happening to the July climate? If next year the mean temperature in July is 21C how do you know what caused the increase? Did max temps go up? Did min temps go up? Was it a combination of both? If you can’t answer these then how do you judge anything about the local climate?

“The daytime temps and nighttime temps are close enough to sine waves that .63 * Tmax or .63 * Tmin will get you pretty close to the *average* daytime or nighttime heating/cooling as measured using temperature as a proxy.”

You keep saying I don’t understand calculus, but when you first made this claim, I showed you the integral, explained why you were wrong, and suggested what you might be grasping at. Yet you still keep repeating this meaningless claim that 0.63*TMax is close to the daytime average, etc. Explain why I’m wrong, or show your workings.

For a start consider a day that has minimum of 10°C and maximum 30°C. You say that the average daytime temperature is 0.637 * 30 ≈ 19.1°C, and the average nighttime temperature is 0.637 * 10 ≈ 6.4°C.

So how do you define “average” so that the average daytime temperature is less than the coolest part of the daytime (assuming daytime starts and ends at 20°C) and even more impressively how the average nighttime temperature is less than the minimum temperature for the whole day.

And if you don’t mean average day or night time temperatures but CDD and HDD then explain how the colder the minimum temperature gets the lower the HDD, or how you can have a negative CDD or HDD.

“9.6C is about 50F. Divide by .63 and you get a Tmax value of about 79F. A nice daytime temp. 3.2C is about 40F, or a Tmin of about 65F. A nice nighttime min temp.”

Now go and have a good think about what you’ve just said. In my example max was 15°C, which according to you meant average daytime was 9.6°C. You then convert that to 50°F and reverse engineer the daytime average of 50°F to get a maximum temperature of 79°F. But 79°F ≈ 26°C, quite different to the actual maximum of 15°C ≈ 59°F.

Similarly switching the scale has managed to change a minimum of 5°C, in to a minimum of 65°F ≈ 18°C, warmer than the original maximum.

Does nay of this make you consider that there might just possibly be a flaw in your multiply max and min values by 0.637 to get the average?

“You keep saying I don’t understand calculus, but when you first made this claim, I showed you the integral, explained why you were wrong, and suggested what you might be grasping at. Yet you still keep repeating this meaningless claim that 0.63*TMax is close to the daytime average, etc. Explain why I’m wrong, or show your workings.”

You didn’t do *any* of that. You just showed that you don’t know what an integral actually is. You can’t come up with the same area under two sine curve unless the amplitude of each curve is the same!

“Explain why I’m wrong, or show your workings.”

I’ve explained it over and over and over and over and over till I’m blue in the face. CLIMATE IS THE ENTIREITY OF THE TEMPERATURE CURVE. Climate is not defined by a mid-range value. Two different locations can have the same mid-range value while having different temperature curves and different climates. Trying to define climate using mid-range values is just a joke on the uninformed.

The average value of the daytime sine wave defines the entire daytime sine wave. From it I can calculate the maximum temp. Same for the nighttime sine wave. I can tell you if maximum temps are going up/down, I can tell you if nighttime temps are going up/down, or if it is a combination. I can tell you immediately what is happening to the climate at a location. *YOU* can’t do that with your mid-range values therefore you can’t tell what is happening to the climate. Since mid-range values contain no information on the climate then combining a bunch of mid-range values to form another average won’t tell you anything about the climate either!

“So how do you define “average” so that the average daytime temperature is less than the coolest part of the daytime (assuming daytime starts and ends at 20°C) and even more impressively how the average nighttime temperature is less than the minimum temperature for the whole day.”

You *really* don’t understand integrals at all, do you? The integral of sin(x) from pi to 0 is a -2. Divide by pi to get the average value and you get a -.63. So the average nighttime value is (-.63) * Nmax. Since you are multiplying by a decimal then how does the average nighttime temp wind up being lower than Nmax?

And how did you come up with the daytime start and end points are 20C when you said that the minimum daytime temp was 10C? An average of 19C is certainly between 10C and 30C! Slow down and check your work!

“And if you don’t mean average day or night time temperatures but CDD and HDD then explain how the colder the minimum temperature gets the lower the HDD, or how you can have a negative CDD or HDD.”

Wow! You haven’t studied up on anything I’ve given you, have you? CDD and HDD ARE NOT AVERAGE VALUES. They are the area under the curve defined by the set points you pick! You don’t divide by anything to get an average. You just get the integral value – the area under the curve.

A sine wave with an average of 9.6 will have a maximum of 15C. That’s about 60F. You are right. I shouldn’t have changed scales. But it doesn’t alter my point at all! I can calculate the max temp from the average value. *YOU* can’t do that with a mid-range value. Same for the nighttime temps.

And that is *still* the whole point. Mid-range values are useless for describing climate. They contain no information about climate.

“You *really* don’t understand integrals at all, do you? The integral of sin(x) from pi to 0 is a -2.”

You still don’t get that you are integrating a sine centered on zero and that therefore results will be different for a temperature profile that is not centered on zero, that is nearly all of them.

“So the average nighttime value is (-.63) * Nmax.”

What, now you want to multiply by a negative number? What is Nmax?

“And how did you come up with the daytime start and end points are 20C when you said that the minimum daytime temp was 10C? ”

10°C is the minimum for the day, not for daytime. You know, like the TMin.

“But it doesn’t alter my point at all! I can calculate the max temp from the average value.”

But you get completely different values if you do this with Fahrenheit, Celsius or Kelvin.

“And that is *still* the whole point. Mid-range values are useless for describing climate.”

You keep doing this, changing the subject. In this comment I’m not interested in whether an average daytime value would be better than a mean. I’m simply trying to help you understand why you cannot multiply Tmax by 0.637 to get a daytime average.

“You still don’t get that you are integrating a sine centered on zero and that therefore results will be different for a temperature profile that is not centered on zero, that is nearly all of them.”

You’ve just hit on one of the major problems with climate models today even though you probably don’t understand it.

What does (Tmax-Tmin)/2 trend toward in the limit? It tends toward ZERO. As the daytime and nighttime temperature excursions get closer together the mid-range tends toward zero instead of the absolute temperature. And that is true no matter what scale you use, celsius, fahrenheit, or kelvin. The mid-range temperature value has an in-built bias that can’t be eliminated.

So why do so many climate scientists, mathematicians, and statisticians remain so adamant that it properly represents the climate anywhere, let alone the global climate?

“What, now you want to multiply by a negative number? What is Nmax?”

What is the integral of sin(x) from pi to zero? Do I need to work it out for you? Nmax is my shorthand for the maximum nighttime temperature excursion. Same as Tmin.

The integral of sin(x) = -cos(x). Evaluated from pi to 0 you get -cos(0) – (-cos(pi)). -cos(0) = -1. Cos(pi) = -1. So you get -(-(-1)) for the second term or -1. -1-1 = -2.

Did you *really* take calculus in school?

“But you get completely different values if you do this with Fahrenheit, Celsius or Kelvin.”

I also get different measurements when I use the different scales. You won’t get the same error that you see when converting between scales. And nothing will change the fact that mid-range values trend toward zero as temperature excursions trend toward zero – an in-built bias from using mid-range values. Think a 24 hour blizzard in Siberia where the temperature might only change 1C from daytime to nighttime. The absolute temp might be -20C to -21C. You wind up with about a 20C bias in the mid-range value. How do you overcome that?

“You keep doing this, changing the subject. In this comment I’m not interested in whether an average daytime value would be better than a mean. I’m simply trying to help you understand why you cannot multiply Tmax by 0.637 to get a daytime average.”

Of course you can! The only reason you can’t would be if the temperature curve does not approach a sine wave. Daytime temps are mostly controlled by the sun. The angle of incidence from the sun to the earth is a sine wave so the temperature naturally tends to follow that same sine wave. You get the largest contribution to temperature when the sun is overhead and the sin(90) = 1. At sunrise and sunset the angle of incidence approaches 0 and the contribution of the sun to surface heat is sin(0) = 0. Of course the surface temp lags the actual sun position because it takes time for the heat input to actually result in a temperature rise.

I’ve attached a graph of our past weeks temperatures (or at least I’ve tried. I don’t know why it isn’t showing up. I’ll do it again in a separate message) If that temperature envelope doesn’t look like a sine wave (distorted perhaps but still some kind of a sine wave) then I don’t know what it looks like. If you want to quibble about the actual value of the integral then have at it. It might be 0.5 or 0.7 or something else. But it is *still* better than the mid-range value for representing the actual climate!

“What does (Tmax-Tmin)/2 trend toward in the limit? It tends toward ZERO. As the daytime and nighttime temperature excursions get closer together the mid-range tends toward zero instead of the absolute temperature.”

It’s rather pointless going over your comments error by error again, but here you introduce a completely new one.

The (Tmax-Tmin)/2 tends to zero as Tmin tends to Tmax, correct. Only problem is the equation for Tmean is (Tmax + Tmin) / 2. That equation tends to Tmax (or Tmin) as Tmin tends towards Tmax.

You’re right, my bad. The mid-range value is *still* useless in describing anything to do with climate. It *loses* data. You cannot reproduce the temperature curve from the mid-range data. If you can’t do that, then it is of no use.

BTW, you never addressed the fact that my graph shows that the temperature curve approaches a sine wave. Which begs the question of why climate scientists refuse to move to a metric that actually describes the climate.

Is it just for the funding and the ability to use it to scare people?

“BTW, you never addressed the fact that my graph shows that the temperature curve approaches a sine wave.”

I’m not denying that a sine wave might be a reasonable model for a daily cycle. The point is irregardless of the shape of the wave you cannot ignore the displacement from zero, so you cannot simply multiply the max by 0.637 to get an average.

But if a sine wave is a good fit for a daily cycle, it also means that the mean derived from the average of max and min is a reasonable approximation of the actual daily mean.

“I’m not denying that a sine wave might be a reasonable model for a daily cycle.”

Then why did you say:

“Says the person who wants to model every day by a perfect sine wave.”

“The point is irregardless of the shape of the wave you cannot ignore the displacement from zero, so you cannot simply multiply the max by 0.637 to get an average.”

As I told you before, if there is a DC component then subtract it out, calculate the average, and add the DC component back in.

Again, the mid-range value is *NOT* the average value of a sine wave.

“But if a sine wave is a good fit for a daily cycle, it also means that the mean derived from the average of max and min is a reasonable approximation of the actual daily mean.”

No, it isn’t! The amount of daytime heating is the integral of the temperature profile during the day. It is *NOT* the mid-range value between Tmax and Tmin. The mid-range value is *NOT* an average value. It is not a mean. It is a mid-range value. You keep mixing up terms. Is that on purpose?

“As I told you before, if there is a DC component then subtract it out, calculate the average, and add the DC component back in. ”

I’m not sure what you mean by DC component, these are temperatures not electricity. But assuming you mean the mean daily temperature, what you’re describing is exactly what I’ve been trying to tell you for ages. You cannot just multiply the max temperature by 0.637 to get the “daytime” average, you have to subtract the mean multiply by 0.637 and add the mean back, hence 0.637(max – mean) + mean.

“Again, the mid-range value is *NOT* the average value of a sine wave.”

Again, maybe you need to define what you mean by the average value of a sine wave. I’m talking about the sine wave over a whole cycle or series of cycles. Isn’t the DC component the average value of a sine wave?

“No, it isn’t! The amount of daytime heating is the integral of the temperature profile during the day. It is *NOT* the mid-range value between Tmax and Tmin.”

Yes, the amount of day time heating is not the mid-range value between Tmax and Tmin. That’s because it’s not the average daily temperature.

“The mid-range value is *NOT* an average value. It is not a mean. It is a mid-range value. You keep mixing up terms. Is that on purpose?”

Yes, it’s on purpose. I’m purposely trying to stick to accepted meanings and you keep changing definitions. By “average value” or mean temperature I mean the average of all temperatures during the day, and by day I mean a 24 hour period. If temperatures follow a sine wave during the day, the average value will be mid-range value between min and max, because sine waves are symmetrical about their mid point.

If you don’t think the mid-range value is equal to the average value, explain what is? If you mean it isn’t the average value during the daytime, however you define that, you are correct. But that doesn’t mean it isn’t the average value over the whole day.

“The only reason you can’t would be if the temperature curve does not approach a sine wave.”

Yet again, it’s not the shape of the sine wave that’s the problem, it’s the translation.

“If you want to quibble about the actual value of the integral then have at it.”

I’ve said before, and maybe you didn’t notice, that as far as I can tell, what your integral should be is 0.637*(TMAX – TMEAN) + TMEAN. That’s rather more than a quibble.

here is the graph.

Where’s 0°F on your graph? What does an average daytime temperature of 85*0.637 ≈ 54°F look like on your graph?

If you think about these questions you might begin to see your problem.

“Where’s 0°F on your graph? What does an average daytime temperature of 85*0.637 ≈ 54°F look like on your graph?”

It doesn’t matter where zero is. The graph shows that the daily temperature curve. It *is* close to a sine wave albeit with some distortion.

If it has a DC component you don’t like then subtract it out!

*I* don’t have a problem at all. I’ve analyzed waveforms my entire life, pure and distorted.

You are looking for any excuse you can find to show that a mid-range temperature (an average!) has some meaning. And that an average of mid-range temperatures has some actual meaning in the real world. And none of your excuses have any bearing on the issue at all. You can’t even tell the difference between dependent and independent measurements, i.e. multiple measurements of the same thing and a set of measurements of different things!

*That* is where the problem lies. You have a hammer and see everything as a nail, refusing to admit that screws exist. They are just another nail to hammer in!

“It doesn’t matter where zero is.”

It does if you are trying to find the area under the curve.

“If it has a DC component you don’t like then subtract it out! .”

Careful, you might be on the brink of figuring it out.

“You are looking for any excuse you can find to show that a mid-range temperature (an average!) has some meaning”

Yet again, this particular discussion has nothing to do with the virtues or otherwise of the standard daily temperature mean. It’s entirely about trying to get you to understand that you cannot get the “daytime average temperature” by multiplying the max by 0.637. Accepting this doesn’t in anyway effect the usefulness or otherwise of TMean. If anything it would make your argument stronger to derive the correct daytime average.

Part 2

“So how can two locations have the same area under the curve unless A_1 and A_2 are the same?”

You are talking about CDDs here, they are not the area under the sine wave, they are the area under the positive part of the sine wave minus the magic number. And of course, the daily temperature cycle is not Asin(x), its Asin(x) + m, where m is the mean temperature.

The most obvious way two different maximums can both have the same CDDs is if neither reach the magic number, then the CDDs are both zero. But for days when there is cooling, being able to play with both the amplitude and the displacement can easily result in similar CDD values for different max and min values.

“You are talking about CDDs here, they are not the area under the sine wave, they are the area under the positive part of the sine wave minus the magic number.”

Minus what magic number? And they *are* the area under the curve – that *IS* the definition of an integral.

Actually the CDD is the Asin(x) – Asin(0). Zero is the baseline, not the mid-range value. A temp of +10C is based on a baseline of 0C, not some mid-range value. The area of the curve to be evaluated is based on the set points. That’s no different than evaluating sin(x) from 20deg to 100deg. You still get the area under the part of the curve that is between 20deg and 100deg.

This is why Kelvin should be the scale used for all climate related stuff, not celsius or fahrenheit. You get rid of the arbitrary 0 baseline.

“The most obvious way two different maximums can both have the same CDDs is if neither reach the magic number, then the CDDs are both zero.”

Oh, I get it. The magic number is the set point! Remember what degree-days are used for. If the set point is 65F and the daytime temps never reach that value then what use is air conditioning? Remember, degree-days are *not* an average. They are a direct value obtained from an integral. They are not divided by time interval to get an average. I only used degree-days as an example of a better way to evaluate climate. That’s what HVAC engineers use to evaluate climate. They don’t use “average” temperature. I trust actual engineers whose personal liability depends on evaluating climate in the real world far more than a climate scientist, mathematician, or statistician whose connection to the real world is tenuous at best and has no personal liability at stake.

“But for days when there is cooling, being able to play with both the amplitude and the displacement can easily result in similar CDD values for different max and min values.”

Really? And exactly how would this happen if the temperature curves are not the same? What displacement are you talking about? The only way I can think of for this to happen with different temperature curves is if the curves are not approaching a sine wave. Where does this happen and how often does it happen?

“Minus what magic number?”

By Magic number simply meant whatever value you are using as the base line.

“And they *are* the area under the curve – that *IS* the definition of an integral.”

I take it you didn’t read the rest of my sentence where I said “they are the area under the positive part of the sine wave minus the magic number.”.

“Actually the CDD is the Asin(x) – Asin(0).”

What fresh nonsense is this? sin(0) = 0, for all values of 0.

“Zero is the baseline, not the mid-range value”

Come again? You’re using 0°C as a baseline for CDDs? How cold do you want your buildings?

“Remember, degree-days are *not* an average.”

They’re an average of temperatures, over the baseline, minus the baseline per day.

“They are not divided by time interval to get an average.”

I’m assuming that they would actually be calculated from readings taking at set times, every hour, half hour or minute. The shorter the interval the closer the average will get to the integral (that’s the definition of an integral), but I can’t see how you could do an actual integral as you have no way of knowing the true function, and any sampling method will be more accurate than simply assuming it is a sine wave.

“They are not divided by time interval to get an average.”

Oh yes they are. If you have 24 hourly readings you have to divide the total degrees above the baseline by 24 to get the value in degree days. If not you would have Cooling Degree Hours. Even if you take the integral you are still dividing the sum by the number of time intervals, it’s just that the number tends to infinity. (You also have to divide it by whatever scale you are using, so if for example you are modelling a sine wave from 0 to 2pi to represent the day, you have to divide the area under the curve by 2pi.)

“ I trust actual engineers whose personal liability depends on evaluating climate in the real world far more than a climate scientist, mathematician, or statistician whose connection to the real world is tenuous at best and has no personal liability at stake.”

Says the person who wants to model every day by a perfect sine wave.

“They’re an average of temperatures, over the baseline, minus the baseline per day.”

An integral is not an average.Do your dimensional analysis.

\int_{a}^{b} \! sin(x)\,\mathrm{d}x.Where a=0 and b=pi.

sin(x)dx is an area. sin(x) is a height and dx the width of a rectangle. The integral from 0 to pi sums all the areas under the curve sin(x).

To get an average you would have to divide by pi, the total interval over which the integral is done.

If this isn’t clear enough then consider the integral of a velocity curve. The integral doesn’t give you an average velocity. Velocity is distance/time. When you multiply by dt you wind up with distance, not average velocity.

Perhaps the sin(x) is confusing you. x is not time, it is radians or an angle, e.g. theta.

The integral is actually Asin(theta)dtheta. There isn’t any time involved. A is the amplitude of the sine wave.

“Says the person who wants to model every day by a perfect sine wave.”