Guest Post by Willis Eschenbach

I got to thinking about the lack of progress in estimating the “equilibrium climate sensitivity”, known as ECS. The ECS measures how much the temperature changes when the top-of-atmosphere forcing changes, as measured roughly a thousand years later, once all the changes have equilibrated. The ECS is measured in degrees C per doubling of CO2 (°C / 2xCO2).

Knutti et al. 2017 offers us an interesting look at the range of historical answers to this question. From the abstract:

Equilibrium climate sensitivity characterizes the Earth’s long-term global temperature response to increased atmospheric CO2 concentration. It has reached almost iconic status as the single number that describes how severe climate change will be. The consensus on the ‘likely’ range for climate sensitivity of 1.5 °C to 4.5 °C today is the same as given by Jule Charney in 1979, but now it is based on quantitative evidence from across the climate system and throughout climate history.

This “climate sensitivity”, often represented by the Greek letter lambda (λ), is claimed to be a constant that relates changes in downwelling radiation (called “forcing”) to changes in global surface temperature. The relationship is claimed to be:

Change in temperature is equal to climate sensitivity times the change in downwelling radiation.

Or written in that curious language called “math” it is

∆T = λ ∆F Equation 1 (and only)

where T is surface temperature, F is downwelling radiative forcing, λ is climate sensitivity, and ∆ means “change in”
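As a minimal sketch of how Equation 1 gets used, assuming the 3.7 W/m2 forcing per doubling of CO2 that appears later in this post, a sensitivity quoted in °C per doubling can be converted to λ in °C per W/m2 and back. The function and variable names are hypothetical, chosen only for illustration.

```python
# Illustrative sketch of Equation 1; all names here are hypothetical.
FORCING_PER_DOUBLING = 3.7  # W/m2 per doubling of CO2 (figure used later in the post)

def lambda_from_ecs(ecs_per_doubling):
    """Convert a sensitivity in degC per 2xCO2 to lambda in degC per W/m2."""
    return ecs_per_doubling / FORCING_PER_DOUBLING

def delta_t(lam, delta_f):
    """Equation 1: change in temperature = lambda times change in forcing."""
    return lam * delta_f

# The ends and middle of the 1.5 to 4.5 degC range quoted above
for ecs in (1.5, 3.0, 4.5):
    lam = lambda_from_ecs(ecs)
    print(f"ECS {ecs} degC/2xCO2 -> lambda {lam:.3f} degC per W/m2, "
          f"dT for 3.7 W/m2 = {delta_t(lam, FORCING_PER_DOUBLING):.2f} degC")
```

Under the canonical equation, λ is treated as a single fixed number, which is exactly the assumption questioned in the rest of this post.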

I call this the “canonical equation” of modern climate science. I discuss the derivation of this equation here. And according to that canonical equation, depending on the value of the climate sensitivity, a doubling of CO2 could make either a large or small change in surface temperature. Which is why the sensitivity is “iconic”.

Now, I describe myself as a climate heretic, rather than a skeptic. A heretic is someone who does not believe orthodox doctrine. Me, I question that underlying equation. I do not think that even over the long term the change in temperature is equal to a constant times the change in downwelling radiation.

My simplest objection to this idea is that evidence shows that the climate sensitivity is not a constant. Instead, it is a function inter alia of the surface temperature. I will return to this idea in a bit. First, let me quote a bit more from the Knutti paper on historical estimates of climate sensitivity:

The climate system response to changes in the Earth’s radiative balance depends fundamentally on the timescale considered. The initial transient response over several decades is characterized by the transient climate response (TCR), defined as the global mean surface warming at the time of doubling of CO2 in an idealized 1% yr–1 CO2 increase experiment, but is more generally quantifying warming in response to a changing forcing prior to the deep ocean being in equilibrium with the forcing …

By contrast [to Transient Climate Response TCR], the equilibrium climate sensitivity (ECS) is defined as the warming response to doubling CO2 in the atmosphere relative to pre-industrial climate, after the climate reached its new equilibrium, taking into account changes in water vapour, lapse rate, clouds and surface albedo.

It takes thousands of years for the ocean to reach a new equilibrium. By that time, long-term Earth system feedbacks — such as changes in ice sheets and vegetation, and the feedbacks between climate and biogeochemical cycles — will further affect climate, but such feedbacks are not included in ECS because they are fixed in these model simulations.

Despite not directly predicting actual warming, ECS has become an almost iconic number to quantify the seriousness of anthropogenic warming. This is a consequence of its historical legacy, the simplicity of its definition, its apparently convenient relation to radiative forcing, and because many impacts to first order scale with global mean surface temperature.

The estimated range of ECS has not changed much despite massive research efforts. The IPCC assessed that it is ‘likely’ to be in the range of 1.5 °C to 4.5 °C (Figs 2 and 3), which is the same range given by Charney in 1979. The question is legitimate: have we made no progress on estimating climate sensitivity?

Here’s what the results show. There has been no advance, no increase in accuracy, no reduced uncertainty, in ECS estimates over the forty years since Charney in 1979. Let’s take a look at the actual estimates.

The Knutti paper divides the results up based on the type of underlying data upon which they were determined, viz: “Theory & Reviews”, “Observations”, “Paleoclimate”, “Constrained by Climatology”, and “GCMs” (global climate models). Some of the 145 estimates only contained a range, like say 1.5 to 4.5. In that case, for the purposes of Figure 1 I’ve taken the mean of the range as the point value of their estimate.

Next, I looked at the 124 estimates which included a range for the data. Some of these are 95% confidence intervals; some are reported as one standard deviation; others are a raw range of a group of results. I have converted all of these to a common standard, the 95% confidence interval. Figure 2 shows the maxima and the minima of these ranges. I have highlighted the results from the five IPCC Assessment Reports, as well as the Charney estimate.
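The two conversions described above can be sketched as follows. This is a sketch under assumptions: the post does not say how the one-standard-deviation ranges were converted, so a normal distribution (95% interval = mean ± 1.96 standard deviations) is assumed here, and the function names are hypothetical.

```python
# Hypothetical helpers; the normal-distribution conversion is an assumption,
# not a method stated in the post.
Z_95 = 1.96  # standard normal multiplier for a two-sided 95% interval

def range_midpoint(low, high):
    """Point value for an estimate that only reports a range."""
    return (low + high) / 2.0

def one_sigma_to_ci95(mean, sigma):
    """Convert a mean and one standard deviation to a 95% confidence interval."""
    return (mean - Z_95 * sigma, mean + Z_95 * sigma)

print(range_midpoint(1.5, 4.5))
print(one_sigma_to_ci95(3.0, 0.5))
```

A raw range from a group of results has no single agreed conversion to a 95% interval, so that case is left out of the sketch.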

The Charney / IPCC estimates for the range of the ECS values are constant from 1979 to 1995, at 1.5°C to 4.5°C for a doubling of CO2. In the Third Assessment Report (TAR) in 2001 the range got smaller, and in the Fourth Assessment Report (AR4) in 2007 the range got smaller still.

But in the most recent Fifth Assessment Report (AR5), we’re back to the original ECS range where we started, at 1.5 to 4.5°C / 2xCO2.

In fact, far from the uncertainty decreasing over time, the tops of the uncertainty ranges have been increasing over time (red/black line). And at the same time, the bottoms of the uncertainty ranges have been decreasing over time (yellow/black line). So things are getting worse. As you can see, over time the range of the uncertainty of the ECS estimates has steadily increased.

Looking At The Shorter Term Changes.

Pondering all of this, I got to thinking about a related matter. The charts above show equilibrium climate sensitivity (ECS), the response to a CO2 increase after a thousand years or so. There is also the “transient climate response” (TCR) mentioned above. Here’s the definition of the TCR, from the IPCC:

Transient Climate Response (TCR)

TCR is defined as the average global temperature change that would occur if the atmospheric CO2 concentration were increased at 1% per year (compounded) until CO2 doubles at year 70. The TCR is measured in simulations as the average global temperature in a 20-year window centered at year 70 (i.e. years 60 to 80).

The transient climate response (TCR) tends to be about 70% of the equilibrium climate sensitivity (ECS).
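The "doubles at year 70" part of that definition is just compound growth, and it can be checked in a few lines. The variable names are for illustration only.

```python
import math

GROWTH = 1.01  # 1% per year, compounded

# Years needed for the concentration to double at 1% per year
years_to_double = math.log(2) / math.log(GROWTH)

# Concentration ratio actually reached after 70 years
ratio_at_70 = GROWTH ** 70

print(f"years to double: {years_to_double:.2f}")
print(f"ratio at year 70: {ratio_at_70:.4f}")
```

So the doubling lands just before year 70, which is why the 20-year averaging window is centered there.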

However, this time I wanted to look at an even shorter-term measure, the “immediate climate response” (ICR). The ICR is what happens immediately when radiation is increased. Bear in mind that the effect of radiation is immediate—as soon as the radiation is absorbed, the temperature of whatever absorbed the radiation goes up.

Now, a while back Ramanathan proposed a way to actually measure the strength of the atmospheric greenhouse effect. He pointed out that if you take the upwelling surface longwave radiation, and you subtract upwelling longwave radiation measured at the top of the atmosphere (TOA), the difference between the two is the amount of upwelling surface longwave that is being absorbed by the greenhouse gases (GHGs) in the atmosphere. It is this net absorbed radiation which is then radiated back down towards the planetary surface. Figure 3 shows the average strength of the atmospheric greenhouse effect.

The main forces influencing the variation in downwelling radiation are clouds and water vapor. We know this because the non-condensing greenhouse gases (CO2, methane, etc) are generally well-mixed. Clouds are responsible for about 38 W/m2 of the downwelling LW radiation, CO2 is responsible for on the order of another twenty or thirty W/m2 or so, and the other ~ hundred watts/m2 or so are from water vapor.
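Ramanathan's measure and the rough breakdown above can be sketched like this. The function name and the two example inputs are hypothetical placeholders rather than values from this post. The contributions are the round figures quoted in the paragraph above, with the midpoint of "twenty or thirty" taken for CO2.

```python
def greenhouse_effect(surface_upwelling_lw, toa_upwelling_lw):
    """Ramanathan's measure: surface upwelling LW minus TOA upwelling LW, in W/m2."""
    return surface_upwelling_lw - toa_upwelling_lw

# Rough contributions quoted in the text (W/m2); CO2 is the midpoint of 20 to 30
contributions = {"clouds": 38.0, "co2": 25.0, "water_vapor": 100.0}
total = sum(contributions.values())
print(f"sum of quoted contributions: {total:.0f} W/m2")

# Placeholder inputs for illustration only, not values taken from the post
example = greenhouse_effect(398.0, 240.0)
print(f"example greenhouse effect: {example:.0f} W/m2")
```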

So to return to the question of immediate climate response … how much does the monthly average surface temperature change when there are changes in the monthly average downwelling longwave radiation shown in Figure 3? This is the immediate climate response (ICR) I mentioned above. Figure 4 below shows how much the temperature changes immediately with respect to changes in downwelling GHG radiation (also called “GHG forcing”).
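One way such a per-gridcell number could be estimated is as the slope of monthly temperature against monthly downwelling radiation, scaled to 3.7 W/m2. This is a sketch under assumptions: the post does not state the fitting method, so ordinary least squares is assumed, and the monthly values below are made up for a single hypothetical gridcell.

```python
# Hypothetical sketch: ordinary least-squares slope, scaled to a CO2 doubling.
FORCING_PER_DOUBLING = 3.7  # W/m2

def ols_slope(x, y):
    """Least-squares slope of y against x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    var = sum((xi - mean_x) ** 2 for xi in x)
    return cov / var

# Made-up monthly values for one gridcell (not real data)
downwelling = [150.0, 152.0, 155.0, 158.0, 160.0, 157.0]   # W/m2
temperature = [14.00, 14.10, 14.25, 14.40, 14.50, 14.35]   # degC

slope = ols_slope(downwelling, temperature)   # degC per W/m2
icr = slope * FORCING_PER_DOUBLING            # degC per 3.7 W/m2
print(f"slope = {slope:.3f} degC per W/m2, ICR = {icr:.3f} degC per 3.7 W/m2")
```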

There are some interesting things to be found in Figure 4. First, as you might imagine, the ocean warms much less on average than the land when downwelling radiation increases. However, it was not for the reason I first assumed. I figured that the reason was the difference in thermal mass between the ocean and the land. However, if you look at the tropical areas you’ll see that the changes on land are very much like those in the ocean.

Instead of thermal mass, the difference in land and sea appears to be related to land and sea snow and ice. These are generally the green-colored areas in Figure 4 above. When ice melts either on land or sea, much less sunlight is reflected back to space from the surface. This positive feedback increases the thermal response to increased forcing.

Next, you can see evidence for the long-discussed claim that if CO2 increases, there will be more warming near the poles than in the tropics. The colder areas of the planet warm the most from an increase in downwelling LW radiation. On the other hand, the tropics barely warm at all with increasing downwelling radiation.

Seeing the cold areas warming more than the warm areas led me to graph the temperature increase per additional 3.7 W/m2 versus the average temperature in each gridcell, as seen in Figure 5 below.

The yellow/black line is the amount that we’d expect the temperature to rise (using the Stefan-Boltzmann equation) if the downwelling radiation goes up by 3.7W/m2 and there is no feedback. This graph reveals some very interesting things.
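A no-feedback line of this kind can be sketched from the Stefan-Boltzmann equation. This assumes the surface radiates as a blackbody (emissivity 1) and that 3.7 W/m2 is small enough to linearize; the post does not spell out either detail, and the function name is hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/m2/K^4

def no_feedback_warming(temp_c, delta_f=3.7):
    """Expected warming (degC) for delta_f W/m2 at a starting temperature temp_c.

    Linearizes F = sigma * T^4, so dT = dF / (4 * sigma * T^3).
    Assumes emissivity 1 (an assumption, not stated in the post).
    """
    temp_k = temp_c + 273.15
    return delta_f / (4.0 * SIGMA * temp_k ** 3)

for t in (-30, 0, 15, 30):
    print(f"{t:>4} degC: {no_feedback_warming(t):.2f} degC per 3.7 W/m2")
```

Even with no feedback at all, the expected response is larger at cold temperatures than at warm ones, so this baseline is itself not a constant.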

First, at the cold end, things warm faster than expected. As mentioned above, I would suggest that at least in part this is the result of the positive albedo feedback from the melting of land and sea ice.

There is support for this interpretation when we note that the right-hand part of Figure 5 that is above freezing is very different from the left-hand part that is below freezing. Above freezing, the temperature rise per additional radiation is much smaller than below freezing.

It is also almost entirely below the theoretical response. The average immediate climate response (ICR) of all of the unfrozen parts of the planet is a warming of only 0.2°C per 3.7 W/m2.

Discussion

We’re left with a question: why is it that forty years after the Charney report, there has been no progress in reducing the uncertainty in the estimate of the equilibrium climate sensitivity?

I hold that the reason is that the canonical equation is not an accurate representation of reality … and it’s hard to get the right answer when you’re asking the wrong question.

From above, here’s the canonical equation once again:

This “climate sensitivity”, often represented by the Greek letter lambda (λ), is claimed to be a constant that relates changes in downwelling radiation (called “forcing”) to changes in global surface temperature. The relationship is claimed to be:

Change in temperature is equal to climate sensitivity times the change in downwelling radiation.

Or written in that curious language called “math” it is

∆T = λ ∆F Equation 1 (and only)

where T is surface temperature, F is downwelling radiative forcing, λ is climate sensitivity, and ∆ means “change in”

I hold that the error in that equation is the idea that lambda, the climate sensitivity, is a constant. Nor is there any a priori reason to assume it is constant.

Finally, it is worth noting that in areas above freezing, the immediate change in temperature per doubling of CO2 is far below the amount expected from just the known Stefan-Boltzmann relationship between radiation and temperature (yellow/black line in Figure 5). And in the areas below freezing, it is well above the amount expected.

And this means that just as the areas below freezing are showing clear and strong positive feedback, the areas above freezing are showing clear and strong negative feedback.

Best Christmas/Hannukah/Kwanzaa/Whateverfloatsyourboat wishes to all,

w.

AS USUAL, I ask that when you comment you quote the exact words you are discussing, so we can all be certain what you are referring to.

The Equation, ∆T = λ ∆F, is ‘derived’ all right! If it were actually measured the science would be settled. We do know the speed of light as it *HAS* been measured (and there is no controversy). Since no one has a paper published that shows an actual repeatable value for ‘F’, the scam goes on…

Regarding its derivation, both dH/dt and dT/dt are zero in the steady state, thus C only affects the rate at which a steady state will be achieved and not what that steady state will be. So while temperature is linear to stored Joules, emissions increase as T^4 and in the steady state, only emissions matter, in other words, it’s just a radiant balance. The derivation would be more correct if the surface wasn’t also radiating energy away.

Temp is linear with thermal energy if you are talking about the same medium (not mixing all media together) and there is no change of state and no change of mass in and out of the “bit” you are looking at. Not one of those ifs is actually the case.

Mixed medium doesn’t really matter to the linearity. Since T is linear to stored energy and superposition applies to Joules, a proportional average of the heat capacity of its components is as linear as the heat capacity of any single component. In addition, if the constituents vary in time, but have discernible averages integrated over time, the linearity legitimately applies to the averages.

Superposition is a powerful tool to both simplify and conceptualize complexity. To the climate system this just means that 2 Joules can do twice the work of 1 Joule which makes perfect sense since Joules are the units of work and it takes work to both change and maintain the state (temperature). Changing the state requires work proportional to the temperature change, while maintaining the state requires work proportional to the absolute temperature raised to the 4’th power. Once a steady state has been achieved, only the work required to maintain it is required. The IPCC’s ECS seems based on the relationships between the temperature and the energy required to change it while ignoring the energy required to maintain it which is exactly the opposite to what should be done.

Babsy,

The equation ∆T = λ ∆F is used to define λ. It is that simple. You change ∆F and wait several thousand years until the system settles down again and measure ∆T. Divide one by the other and you have λ.

Now the equilibrium climate sensitivity is clearly not a constant and no-one suggests that it is.

For a blackbody the radiated power goes as the 4th power of the temperature and so the equilibrium climate sensitivity depends on the inverse cube of the temperature. Furthermore many people think the climate is at least bistable (ice-ages and interglacials) and so the value of λ will depend on the starting point.
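For reference, the blackbody case can be worked through directly, assuming an ideal emitter with emissivity 1, where P is radiated power per unit area and σ is the Stefan-Boltzmann constant:

```latex
P = \sigma T^{4}
\quad\Rightarrow\quad
\frac{dP}{dT} = 4\sigma T^{3}
\quad\Rightarrow\quad
\lambda = \frac{dT}{dP} = \frac{1}{4\sigma T^{3}}
```

So for a blackbody, λ scales as T^-3: a colder body warms more per added W/m2 than a warmer one.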

The bi-stable nature is an illusion of ice affected albedo and is more like a hysteresis effect. While this is often modeled as a ‘feedback’ it’s not and is actually a real change in forcing whose surface emissions sensitivity per W/m^2 arising from more or less reflection is the same as for any other W/m^2. This is only a significant influence when there’s a lot of surface ice to melt, or grow. Currently, we are close to minimum ice, so there’s not much headroom left in the warm direction and most of any influence that remains is in the cold direction.

The way that the IPCC defines forcing fails to distinguish between an increase in solar input (actual forcing) and a decrease in emissions at TOA caused by increased atmospheric absorption. They are not equivalent since all of the increase in solar forcing contributes to warming the surface and its radiant balance with the atmosphere, while a decrease in emissions resulting from an increase in GHG concentrations means that the atmosphere is absorbing more, only about half of which ends up contributing to the radiant balance at the surface while the other half contributes to the radiant balance at TOA.

It’s the difference between adding new energy to the system and recirculating existing energy within the system. Only by considering recirculated energy the same as new energy does the implicit violation of COE they depend on to claim an absurdly high ECS become plausibly deniable.

Why wait several thousand years?

Excerpts from article:

Well lordy, lordy, lordy, …. of course there hasn’t been any advances, any increase in accuracy or any reduced uncertainty in/of ECS estimates.

Why in the world would one change the value of their “estimate” when they don’t know what the actual “value” is or should be …… or if there really is a “value”? Whatever “value” they claim is the correct “value” because no one can disprove it. “HA”, ….. forty years and still holding.

Personally, I say forget the ECS of atmospheric CO2 and concentrate on the ECS of atmospheric H2O vapor. Via H2O vapor they won’t have to wait another “thousand years” to equilibrate the “effect”.

Yup. Not only is there no reason to believe that “ECS” is constant, neither is there reason to believe that the users can define it in a plausible manner.

It is a product of the models and the modelers’ imagination, not an observable and measurable number in the real world. A better way to describe it is in plainer English: “If we do w in our model then the number x changes by amount y in time period z.”

It then becomes more obvious that the Emperor is dressed in fig leaves.

Let me solve the equation:

λ =0

The Earth climate is a complex system. The idea that such a system, that has been relatively stable for millions of years, can be strongly influenced by one single factor creating a feedback loop is highly suspect – such systems are rarely stable for any period of time. Hence the feedback must be very, very, small, and the system must be inclined toward stability. The only thing that makes sense is for λ =0, or as close to zero as is possible. It may be that the number cycles around zero – at some temperatures it is slightly positive, at others slightly negative.

Or 0.85 tops, may well be 0. As I keep posting…..

Such an equation is based on the assumption that the system it describes is linear time-invariant. The Earth’s climate, especially over century and millennium time scales, is neither linear, nor time-invariant. The equation is complete unmitigated hogwash.

commieBob,

The equation is unmitigated hogwash, not because the climate system doesn’t have an identifiable linear response, but because what they’ve identified as being a linear relationship between W/m^2 and temperature is not even approximately linear to the actual response.

The AVERAGE relationship between the BB emissions corresponding to the surface temperature and the emissions at TOA above that point on the surface is testably linear where the steady state ratio between them is about 1.62 independent of the surface temperature or the emissions at TOA. The inverse of this verifiably constant ratio is the equivalent emissivity of a gray body whose temperature is that of the surface and whose emissions are that of the planet. The equivalent emissivity is 0.62 and when the expected behavior of this equivalent gray body is plotted in green against 3 decades of monthly measurements of the surface temperature vs. the emissions at TOA for each 2.5 degree slice of latitude from pole to pole plotted as small red dots, the correlation is undeniable. The long term averages for each slice are the larger green and blue dots where the correlation is unambiguous.

http://www.palisad.com/co2/tp/fig1.png

When plotted to the same scale, the IPCC nominal sensitivity (the short blue line) is represented by a slope that passes through the origin, rather than being a slope tangent to the actual response at the average temperature. This is represented by the short green line, which coincidentally? is the same as the slope of an ideal BB corresponding to the so called ‘zero feedback’ response shown in black.

Since in the steady state, the emissions at TOA are equal to the incident energy at TOA, this ratio is exactly equivalent to the incremental effect of the next W/m^2 of solar power, where 1.62 W/m^2 per W/m^2 of forcing corresponds to an ECS of about 1.1C based on doubling CO2 being equivalent to 3.7 W/m^2 of solar forcing keeping CO2 concentrations constant.
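The arithmetic behind that "about 1.1C" figure can be reconstructed as a rough sketch. The comment does not give its steps, so this assumes an average surface temperature of 288 K, a surface that radiates as a blackbody, and a linearized Stefan-Boltzmann response. The variable names are hypothetical.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W/m2/K^4
GAIN = 1.62              # W/m2 of surface emissions per W/m2 of forcing (from the comment)
FORCING = 3.7            # W/m2 per doubling of CO2 (from the comment)
T_SURFACE = 288.0        # K, assumed average surface temperature (not from the comment)

# Extra surface emissions implied by one doubling
surface_flux_increase = GAIN * FORCING

# Temperature rise needed for a blackbody at T_SURFACE to emit that much more
warming = surface_flux_increase / (4.0 * SIGMA * T_SURFACE ** 3)

print(f"surface flux increase: {surface_flux_increase:.2f} W/m2")
print(f"implied warming: {warming:.2f} degC per doubling")
```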

I’ll make another testable prediction for you. If you were to plot the same data coming from the output of any GCM attempting to mimic the historical data against the actual data in the same form as the plot I’ve shown above, the fact that there are serious errors in the model will become glaringly obvious.

There cannot be any delay in the equilibrium process without breaching the Gas Laws. If the surface temperature changes then the volume of the atmosphere changes instantly via conduction and that change is instantly neutralised by adjustments in convection.

The radiative theory of gases is in breach of the Gas Laws by virtue of the fact that during any delayed process of achieving equilibrium the atmosphere will not be behaving in accordance with the Gas Laws.

Actually, the absorption and emissions of photons by GHG molecules is independent of the Gas Laws which only apply to the kinetic motion of gas molecules and not to the photons absorbed and re-emitted by GHG’s which are governed by the laws of Quantum Mechanics and Electromagnetics.

An error arises by assuming that a photon of energy absorbed by a GHG is quickly ‘thermalized’ into the kinetic energy of molecules in motion. It’s not and the data is unambiguously clear about this. When we examine the emitted spectrum at TOA over clear skies, the attenuation in absorption bands is only about 50%, yet the probability that a photon emitted by the surface in those bands will be absorbed is near 100%. The only possible source of these absorption band photons are the re-emissions by GHG molecules since O2 and N2 are completely transparent to these wavelengths.

The failure arises by considering that vibrational energy translated into rotational energy is a one way path, while in fact, it is symmetrically bidirectional and thus there’s no net conversion.

That may be so but if the temperature does change then equilibrium must occur instantly otherwise there is a breach of the Gas Laws.

Stephen,

Nothing happens instantaneously since there’s always a time constant involved; moreover, an exact equilibrium can only be approached and never achieved. Nonetheless, relative to the kinetic behavior of the atmosphere, the time constant is only on the order of hours in response to surface temperature changes.

Note that none of this violates the ideal Gas Laws, since the atmosphere is not an ideal gas.

The response is instant at point of contact with the ground but does need a short time to flow through a complete convective overturning cycle.

The atmosphere is near enough to an ideal gas for the Gas Laws to apply for all practical purposes.

Stephen — this discussion is basically theological — how many angels can dance on the head of a pin? — not scientific. But I’m pretty sure that the fine print for the ideal gas law (pv=nrt, right?) includes the magic phrase “in thermal equilibrium”. A CO2 molecule absorbing or emitting IR is not, I believe, in thermal equilibrium.

My understanding is that in most cases the energy exchanged by gases such as water vapor, CO2 etc via radiation is too energetic to be seamlessly converted to kinetic energy at Earthly temperatures. (That’s addressed in the Book of Quantum Mechanics which — like The Book of Revelation is beyond the understanding of most mortals. Certainly me. And probably you.). The scriptures^h^h^h … textbooks equate heat/temperature to kinetic energy. So the IR energy related energy (which is thought to be stored in molecule rotation/vibration, not molecular motion) is latent heat, not sensible heat. And therefore it is exempt from the gas laws.

But if it’s latent and not sensible, how is it going to warm the planet and exterminate humanity? Beyond my pay grade.

Don,

It turns out that the energy of a 10u photon is about the same order of magnitude as the kinetic energy of a gas molecule at its nominal speed. It’s not that the photons are too energetic, but that in general, the energy manifested by photons is orthogonal to the energy manifesting translational motion which is the only energy that the Ideal Gas Law is concerned with.
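The order-of-magnitude comparison can be checked with a short sketch. It assumes a temperature of 288 K and takes the mean translational kinetic energy (3/2)kT as the "nominal" value; both choices are assumptions, and the function names are hypothetical.

```python
H = 6.62607015e-34    # Planck constant, J s
C = 2.99792458e8      # speed of light, m/s
K_B = 1.380649e-23    # Boltzmann constant, J/K

def photon_energy(wavelength_m):
    """Energy of a single photon, E = h * c / wavelength."""
    return H * C / wavelength_m

def mean_kinetic_energy(temp_k):
    """Mean translational kinetic energy of a gas molecule, (3/2) * k * T."""
    return 1.5 * K_B * temp_k

e_photon = photon_energy(10e-6)        # 10 micron photon
e_kinetic = mean_kinetic_energy(288.0) # assumed 288 K
ratio = e_photon / e_kinetic

print(f"photon: {e_photon:.3e} J, kinetic: {e_kinetic:.3e} J, ratio: {ratio:.2f}")
```

The photon carries a few times the mean kinetic energy, so the two are within one order of magnitude of each other.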

Trenberth’s arbitrary conflation of the energy transported by photons with the energy transported by matter is a source of significant confusion.

co2isnotevil, at ground level (1 Atm pressure, typical temperatures), the mean time for a CO2 molecule to give up the energy it absorbed from a 15 μm photon is:

● a couple of nanoseconds, for energy transfer by collision with another air molecule

● about 1 second, for energy loss by emission of another photon

In other words, when CO2 molecules absorb 15 μm photons, > 99.9999998% of the time they lose that energy by collision with another air molecule, rather than by emitting a photon. (At higher altitudes the ratio is somewhat reduced, of course.)
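That percentage follows from treating the two loss paths as competing processes with the mean times listed above. In this sketch "a couple of nanoseconds" is taken as exactly 2 ns, which is an assumption, and the variable names are hypothetical.

```python
# Mean times for the two ways an excited CO2 molecule can lose its energy
T_COLLISION = 2e-9   # seconds; "a couple of nanoseconds" taken as 2 ns (assumption)
T_EMISSION = 1.0     # seconds; "about 1 second"

# Competing processes: each path's share is proportional to its rate (1 / mean time)
rate_collision = 1.0 / T_COLLISION
rate_emission = 1.0 / T_EMISSION

frac_collision = rate_collision / (rate_collision + rate_emission)
frac_emission = rate_emission / (rate_collision + rate_emission)

print(f"lost by collision: {frac_collision * 100:.7f}%")
print(f"lost by emission:  {frac_emission:.1e}")
```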

When people learn that, they often make the mistake of assuming that means the CO2 in the atmosphere emits almost no radiation. That’s incorrect, because the CO2 not only gives up energy to other air molecules by collisions, it also absorbs energy from other molecules by collisions.

When a CO2 molecule gives up kinetic energy (heat) by collision with another air molecule, the energy is not lost. The other air molecule gains exactly the same amount of energy. N2, O2, Ar, CO2, etc. molecules are continually exchanging energy by collisions with one another.

So, regardless of how much LW IR radiation the CO2 in the atmosphere absorbs, it remains at the same temperature as the rest of the atmosphere. The absorption of radiation by CO2 in the atmosphere doesn’t directly affect the amount of radiation emitted by the CO2. Rather, absorbing radiation simply raises the temperature of the atmosphere. The temperature of the atmosphere (and the CO2 partial pressure) governs the amount of radiation emitted by the CO2.

You’re correct that essentially all of the 15 μm radiation from the surface is absorbed by that atmosphere (mainly the CO2 in it). You’re also correct that when a satellite looks down at the Earth, it sees a substantial amount of outbound 15 μm LW IR. But that IR is not coming from the ground, it is coming from the CO2 in the atmosphere.

The average altitude from which those photons are emitted is called the “emission height.” It varies with wavelength. At the center of the 15 μm CO2 absorption band, the emission height is much higher than it is at the fringes.

Additional CO2 in the atmosphere raises the emission height. It is commonly claimed that doing so lowers the temperature of the CO2 at the emission height, according to the tropospheric lapse rate. That’s used to explain global warming, because lowering the temperature at the emission height would reduce emissions by CO2, “insulating” the Earth from some of the energy that it would otherwise have lost.

However, it turns out that at the center of the 15 μm absorption/emission band, the emission height is already at or near the tropopause, where the lapse rate is zero. So it’s only due to wavelengths at the fringes of the 15 μm absorption band that increased CO2 concentration has any warming effect at the Earth’s surface.

Dave,

If as you say, collisions convert all of the state energy into translational motion upon a collision within nanoseconds, what’s the origin of the significant photon flux at TOA in strong absorption bands? It can’t be the GHG molecules, as the state energy from all the available photons emitted by the surface has already been converted into translational energy and quickly shared by subsequent collisions, so there’s not enough energy available in subsequent collisions to re-energize GHG molecules. To do so requires converting just about all, or more of the translational kinetic energy into state energy resulting in reducing the velocity of the colliding molecules to near zero (absolute zero in terms of the temperature), which clearly isn’t happening. Something similar happens with laser cooling, but this can only occur under highly controlled conditions. The bottom line is that atmospheric collisions are not energetic enough to either consume or provide state energy for GHG molecules, but it is enough of an impact to significantly increase the probability of de-energization by the emission of a photon.

What happens upon a collision, is that the GHG molecule may be de-energized by the emission of a photon. This isn’t a spontaneous emission, but an induced emission, where that emitted photon is quickly absorbed by another GHG molecule. Another process that can induce the emission of a photon is the absorption of a second photon, in which case the mean time before a spontaneous emission decreases to near zero. What you’re saying does have a finite probability of occurring, it’s just so low as to be insignificant, although to be fair, Joules are Joules, so in principle, the 2 mechanisms could have the same net effect.

Why would all state energy be converted into translational motion upon any collision? A collision with a ground state molecule of the same type has a finite probability to exchange state, but the velocities of the molecules are otherwise unaffected. Note that most absorption physics is based on there being only one type of molecule in the mix. Another problem is trying to support the magnitude of the flux in absorption bands at TOA if it can only come from spontaneous emissions since even in the stratosphere, collisions will likely occur before an actual spontaneous emission.

The only actual ‘thermalization’ occurs when an energized GHG molecule condenses upon or is absorbed by the liquid and solid water in clouds. We can even see this in the spectrum at TOA where there’s slightly more than a 50% reduction in the energy in the wavelength bands most affected by water vapor absorption.

CO2:

As Dave says, N2 & O2 collisions with CO2 maintain it at a steady velocity distribution, the Maxwell-Boltzmann distribution, which actually allows a significant kinetic energy spread. IR emission from CO2 would be more probable when it resides near the upper energy side of that distribution. If all that CO2 energy distribution is too small for 10 micron emission to occur, then CO2 absorption of 10u IR would slowly raise the overall energy level of all molecules at that level, until CO2 does have enough energy to emit. And then, as I comment below, it only emits at a rate proportional to its temperature.

Don,

Yes, collisions of CO2 molecules with N2/O2 redistribute their translational kinetic energy upon collisions. The issue I’m pointing out is that whether or not a GHG molecule is energized has no bearing on the translational kinetic energy available to share with other molecules upon a collision. State energy can only be shared with other GHG molecules and only in whole quanta. The classic case is the transfer of state from an energized molecule to a ground state molecule upon collision. It seems that equipartition of energy has been overly generalized to degrees of freedom that aren’t actually free, but constrained by quantum mechanical considerations.

Have you noticed the similarities between the distribution of kinetic energy in molecules in motion corresponding to a temperature and the distribution of photon energies in an ideal Planck spectrum corresponding to a temperature?

CO2 and Don

There is one thing missing from the explanations above which are generally on the mark. It is that the average path length of an average photon of IR radiation is 1.8 times the depth of the atmosphere.

The implication is that some of the IR is not absorbed at all, and some is absorbed once. Much less is absorbed twice and so on. The atmosphere is quite “holey” so don’t over-state the absorption/re-radiation thing.

If there were no IR rising from the surface the CO2 and other GHG’s would still emit photons because as they cooled by doing so they would be heated by collisions with other molecules.

Lastly, there is no functional difference between insulation wrapped around a baking oven and the atmosphere wrapped around the Earth other than the energy put into the oven is not passed through the walls.

Adding more energy input increases the internal temperature until equilibrium is attained, just as adding more insulation does. The net effect is the same. The discussion above about a new equilibrium based on more solar input vs. more GHG was on the mark. The TOA output always balances the total energy input save when the oceans are absorbing energy, which long term can be ignored as an average energy content.

The idea that there is a TOA “imbalance” is laughable when the value claimed is one tenth of the uncertainty. The uncertainty is 50 Watts/m^2 and the value claimed is 3-5. Good luck with that on your student project. Gets an F.

Crispin in Waterloo but really in Goleta December 28, 2019 at 10:32 am

Cite? I ask because my bible, Geiger’s “The Climate Near The Ground”, gives the following percentages for the origin of downwelling LW radiation at the surface:

So I’d doubt greatly that overall the mean free path for a LW photon is actually greater than the thickness of the atmosphere.

Now, to be complete, I need to note that there is an “atmospheric window” allowing about 40W/m2 of LW of a particular frequency band to escape directly to space.

But for the rest of the frequencies, they’re absorbed down low and reabsorbed on their way further up from the surface.

w.

When a CO2 molecule gives up energy kinetically it spreads that energy over all the molecules of the local atmosphere in the form of kinetic energy. It is not reserved to only accelerating other CO2 molecules or to re-exciting other CO2 molecules. Therefore all the other atmospheric molecules are warmed. When a CO2 molecule is re-excited by collision it REMOVES heat from the atmosphere generally. Therefore there is NO net heating of the atmosphere from the IR photon that was originally emitted from the Earth.

The same with the theory of downwelling IR. If there is downwelling IR it has never been used to heat the atmosphere previously.

The originally emitted IR is captured by CO2 within a few tens of meters. Of the captured energy only about a billionth or so is able to be re-emitted because of the time constants of collision to re-emission as determined by the Einstein A coefficient and the time to collision in the atmosphere. A billionth! The lower Earth Atmosphere is a quenching atmosphere for an excited CO2 molecule, it practically NEVER re-emits. We see a huge amount of IR in the 15 micron band missing from the TOA spectra.
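For readers who want to see where a figure like "a billionth" could come from, here is a minimal sketch of the competition between spontaneous emission and collisions. The function name and both rate values are assumptions chosen only to land on the order of magnitude quoted above; they are not measurements, and the sketch assumes every collision quenches the excited state and ignores re-excitation by collisions.

```python
def emission_fraction(einstein_a_per_s, collision_rate_per_s):
    """Fraction of excited molecules that emit a photon before a collision.

    Simplified two-channel competition: spontaneous emission (rate A)
    versus collisional de-excitation (assumed here to equal the full
    collision rate, which overstates quenching).
    """
    return einstein_a_per_s / (einstein_a_per_s + collision_rate_per_s)


# Placeholder inputs, NOT taken from the comment or from a database:
ASSUMED_EINSTEIN_A = 1.0        # spontaneous emission rate, 1/s (assumption)
ASSUMED_COLLISION_RATE = 1.0e9  # collisions per second near the surface (assumption)

fraction = emission_fraction(ASSUMED_EINSTEIN_A, ASSUMED_COLLISION_RATE)
print(f"fraction emitted before a collision: {fraction:.2e}")
```

This only covers the fate of one excitation. It says nothing about how often collisions re-excite CO2 molecules, which is the point raised in the replies.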

I have been told that the method for calculating downwelling IR is to take the IR emitted from the Earth and then subtract the missing IR at TOA to calculate the amount of downwelling IR at the surface. (or to other CO2 molecules.)

If in fact there is downwelling IR, it simply cannot be measured directly. AERI instruments on the North Slope of Alaska and in Oklahoma are specifically designed to DIRECTLY measure this radiation. They in fact measure downwelling radiation in other frequency ranges but in the 15 micron band they do not measure it. What they do is calculate a small amount by comparing the actual measurement with a simulated spectrum. It is so tiny as to be unobtainable by direct measurement. Jon Gero originally claimed that it was only seen in the far wings. Only after a repeat study, in which Daniel Feldman compared a simulated spectrum with the actual spectra, was any difference discerned. It certainly did not account for the missing TOA energy.

butch123: Your first paragraph comments are correct.

However, the greenhouse heating generated by CO2 occurs because the RATE IR is emitted to space by those CO2 molecules is much lower than the rate the same IR would be emitted from the surface, directly to space. The reason is the surface–high atmosphere temperature difference.
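A rough way to see the size of that rate difference is to compare the Planck spectral radiance at 15 microns for a warm surface and a cold emitting layer. The two temperatures below (288 K and 220 K) are illustrative assumptions, not figures from this thread, and the sketch treats both emitters as ideal blackbodies at that wavelength.

```python
import math

PLANCK_H = 6.62607015e-34   # Planck constant, J*s
LIGHT_C = 2.99792458e8      # speed of light, m/s
BOLTZMANN_K = 1.380649e-23  # Boltzmann constant, J/K


def planck_radiance(wavelength_m, temp_k):
    """Blackbody spectral radiance B(lambda, T) in W / (m^2 * sr * m)."""
    prefactor = 2.0 * PLANCK_H * LIGHT_C**2 / wavelength_m**5
    exponent = PLANCK_H * LIGHT_C / (wavelength_m * BOLTZMANN_K * temp_k)
    return prefactor / math.expm1(exponent)


WAVELENGTH = 15e-6      # 15 micron CO2 band centre
SURFACE_TEMP = 288.0    # K, assumed surface temperature
HIGH_ATM_TEMP = 220.0   # K, assumed temperature of the high emitting layer

ratio = planck_radiance(WAVELENGTH, HIGH_ATM_TEMP) / planck_radiance(WAVELENGTH, SURFACE_TEMP)
print(f"cold layer emits about {ratio:.0%} of the surface rate at 15 microns")
```

With these assumed temperatures the colder layer emits roughly a third as strongly at 15 microns as the surface would.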

YES, YES, YES !!

Dave, your comments make sense.

The atmosphere is heated by CO2 absorption. I fail to see how that increases the temperature of the surface, except when the surface is colder than the atmosphere. Any down-welling radiation to a warmer surface must be immediately re-radiated, i.e. reflected back upwards.

Have you felt the warming effect of CO2-initiated down-radiation on a cold cloudless night? Me neither. However we both have felt the warming effect of clouds on a cold night. Water condensation in clouds produces latent heat release that is the real reason that the Earth has a liveable climate. CO2 is beneficial to crops and has a negligible effect on the surface temperature. Am I wrong? Anyone?

“Any down-welling radiation to a warmer surface must be immediately re-radiated, i.e. reflected back upwards.”

No, but the NET radiation flux from the surface must be greater than the down-welling radiation (though not in the same frequency bands).

tty – December 27, 2019 at 5:08 am

Why not …… the same frequency bands? To wit:

tty is correct. The Earth’s surface does not become more reflective when it warms, and whether the surface absorbs or reflects incoming radiation does not depend on whether it is warmer or colder than the air above it.

You do, indeed, experience the warming effect of downwelling radiation from CO2 in the atmosphere, regardless of whether or not the air emitting it is cooler than your body temperature. Your skin just can’t separate the warming effect of that LW IR from the warming and cooling effects of convective/conductive and evaporative processes. However, the down-welling LW IR can be measured with instruments.

That’s what Feldman et al 2015 did: they measured the downwelling LW IR, during clear-sky conditions, using Atmospheric Emitted Radiance Interferometers. They took measurements over a ten year period, during which average atmospheric CO2 level increased about 22 ppmv.

They reported that a 22 ppmv increase (+5.953%, starting from 369.55 ppmv in 2000) in atmospheric CO2 level resulted in 0.2 ±0.06 W/m² increase in downwelling LW IR from CO2. (5.953% is about 1/12 of a doubling.)

(1/log2(1.05953)) × 0.2 W/m² = 2.40 W/m² (central value)

(1/log2(1.05953)) × (0.2 – 0.06 W/m²) = 1.68 W/m² (low end)

(1/log2(1.05953)) × (0.2 + 0.06 W/m²) = 3.12 W/m² (high end)

(note correction of a small typo in my first comment on this article, which affected the last digit)

So, Feldman’s results indicate that CO2 forcing is 2.40 ±0.72 W/m² per doubling, which is consistent with Happer’s result, and inconsistent with the commonly claimed figure of 3.7 ±0.4 W/m² per doubling.
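The scaling above can be checked in a few lines. This is only a sketch of the arithmetic in this comment, assuming forcing varies with the logarithm of CO2 concentration; the function and variable names are mine.

```python
import math


def forcing_per_doubling(delta_forcing_wm2, co2_start_ppmv, co2_rise_ppmv):
    """Scale an observed forcing change up to a full doubling of CO2,
    assuming forcing is proportional to log2 of the concentration ratio."""
    ratio = (co2_start_ppmv + co2_rise_ppmv) / co2_start_ppmv
    doublings_observed = math.log2(ratio)  # about 1/12 of a doubling here
    return delta_forcing_wm2 / doublings_observed


CO2_START = 369.55  # ppmv in 2000, as quoted above
CO2_RISE = 22.0     # ppmv increase over the measurement period, as quoted above

central = forcing_per_doubling(0.2, CO2_START, CO2_RISE)
low_end = forcing_per_doubling(0.2 - 0.06, CO2_START, CO2_RISE)
high_end = forcing_per_doubling(0.2 + 0.06, CO2_START, CO2_RISE)

print(f"central {central:.2f}, low {low_end:.2f}, high {high_end:.2f} W/m² per doubling")
```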

“The atmosphere is heated by CO2 absorption. I fail to see how that increases the temperature of the surface”

The mean height to say 90% absorption drops from say 7 meters to 5 meters with the higher CO2 concentration. The net change to convection is negligible, but presumably happens to maintain the lapse rate.

The surface increase in DWR heats the surface more, producing more moist warm air convection, which is a cooling function.

In moist conditions, no temperature change happens on the surface. In dryer conditions, such as at the poles, warming should occur, which is moderated by T^4 radiative cooling.

It is very hard for me to see any measurable greenhouse effect. It is a dynamic, turbulent system, with surface temperature governed by air density, and cloud/ozone controlled insolation. How does HITRAN compute that? It doesn’t.

Clouds alone produced 10X the radiative forcing during the “brightening” before the turn of the century. Whatever caused that is my subject of interest. We are living inside this experiment.

There must be some reflection of IR by the clouds to produce back radiation. However, John, if your theory was correct the oceans would boil over, because release of latent heat upon condensation is the only way that the 75 W/m^2 of latent heat produced by evaporation from ocean water can be got rid of by the water molecules in the air over the oceans. If even 50% of that came back down to the surface as back radiation, the oceans couldn’t evaporate it fast enough to keep from boiling over. The oceans emit only ~13 W/m^2 of direct LWIR on average, so evaporation dwarfs any other heat release process from the oceans. The sun shines directly on the surface more than 8 hours per day in most of the US and huge parts of the ocean get much more sunlight. This sunlight radiation has to escape back out into the upper atmosphere from the oceans somehow, and that process is evaporation. Since even a desert in summertime is very cold on a cloudless night, there can’t be enough back radiation by CO2 to worry about. There may be some latent heat release to the surface upon condensation but in general, hot air rises.

Dave Burton – December 27, 2019 at 3:34 pm

That was nice, Dave, but I don’t recall responding to any such “reflective” claim by tty.

Well now, Dave B, iffen that’s what you believe, …… then it is you who has the problem, not me. Alan Tomalty will explain it to you in the following quote, to wit:

Shur nuff, Dave B, ……. and my common sense results INDICATES that you are partially addicted to the AGW Kool Aide.

Alan Tomalty – December 28, 2019 at 12:31 am

Alan T, very, very, very few people are willing to discuss “desert climates” when it involves “downwelling” radiation from atmospheric CO2. They all should try spending a “summer night” in a desert without a coat or blanket to keep warm.

Another example of why atmospheric CO2 is a literal failure as a global warming, …. uh, human body warming “radiant” or “conductive” in-home atmospheric gas.

“DUH”, in-home heating systems, both forced air and radiant heating are highly ineffective at keeping one’s body “warm” if the humidity (H2O vapor) is extremely low.

So, iffen you feel chilled, chilly or cold when your thermostat reads 75 F or so, then put a pot of boiling water on the stove or turn on your “humidifier”.

How do we start with a broadband TSI estimate to separate energy contributions in the absorption bands versus vibrational contributions in other millimeter wave bands?

Stephen, I think that the question of equilibrium here is one of equilibrium with the deep ocean (not within the atmosphere-surface system). Equilibrium with the deep ocean may take hundreds or thousands of years due to slow mass transport. Transient temperature gradients will persist for a very long time. In fact it seems to me that equilibrium can never actually occur due to chaotic, constantly changing factors that oppose each other. Equilibrium in this case assumes that every factor, including CO2 remains completely unchanged for many centuries. How impossible is that? Indeed the ridiculous assumption is that the earth was absolutely static until we came along and burned some fossil fuels. It’s a kind of anti-hubris, that assumes everything we do has catastrophic impacts and there is nothing we can do that is beneficial.

Rich,

The temperature of the deep ocean is dictated by the pressure, temperature, density profile of water. As long as there’s cold water at the poles, the thermohaline circulation ensures that even the deep ocean at the equator will be close to 0C independent of the planet’s average temperature. Gravity works very quickly and quite well to stratify hot and cold in the oceans.

Only the top few 100 meters of the oceans has anything to do with the steady state surface temperature. Assuming that the entire mass of the oceans needs to adapt to surface temperature changes is incorrect. The thermocline may shift up or down as a result of changing surface temperatures, but this only has a net effect on the energy content of the oceans for the small sliver of water at bottom edge of the thermocline and the water between the top of the thermocline and the surface.

Sure, there will always be “colder” surface water at the poles than at the equator, and I understand that cold water at depth is flowing from the poles and almost as cold near the equator as elsewhere, but I don’t see any justification to claim that the water sinking to the deep ocean is necessarily constantly at the freezing point of salt water, (and therefore a constant temperature over time) both in winter and in summer, or that the salinity level controlling that freezing point must be constant, or that geothermal heat flux is uniform across the seafloor and constant over time or that the currents flow at precisely the same rate or that there are no other factors that vary over time ultimately affecting the heat content of upwelling ocean currents.

It’s my understanding that some currents flow at such slow rates that it takes centuries for a volume of sinking water to reach the point of upwelling. Can you persuade me that something fixes the temperature of the polar water that sinks to depth such that there is a fixed temperature profile longitudinally from pole to equator along the seabed that is unaffected by the seasons? I grant you that the deep currents are likely in laminar flow with little turbulent mixing. If there is seasonal variability and there are currents that take hundreds of years (variable seasonal cycles) to complete a circuit, then there must be variation in the heat content of upwelling water that affects atmospheric temperatures and relative humidity. Moreover, if those variations are not perfectly cyclical, there must be long-term variation that is very complex. Do we observe variation in the temperature of upwelling currents? If you are correct, we should not.

The water with CO2 is heavier and sinks to the bottom of the oceans, where it flows to the south pole and is upwelled by the winds that circulate around the south pole.

Ralph is that an attempt at sarcasm? You couldn’t get it much more backward, so I assume you were trying.

Rich,

It’s a hydrological process where evaporation of tropical oceans exceeds tropical rainfall while rainfall across the rest of the planet exceeds local evaporation. The excess water falling on the oceans near the poles replaces the excess evaporation in the tropics by pushing up from the bottom owing to the intrinsic thermal stratification by gravity that keeps denser cold water below warmer surface waters. In addition, the transfer of water from the tropics to the poles is also a significant, if not the primary, mechanism for moving energy from the equator to the poles.

While we don’t often consider water to be a thermal insulator, at a sufficient thickness it can insulate hot from cold and that this sets the thickness of the thermocline which keeps the deep water cold, even in the tropics.

When you calculate the heat flow through the thermocline based on the thermal conductivity of water, it seems to exactly offset the average heat flow coming from the planet below, both on the order of about 1 W/m^2. This makes the NET energy flux through the thermocline approximately zero meaning that the NET heat being added to the deep ocean comes from the Earth’s interior which is completely independent of the temperature of the virtual surface of Earth in direct equilibrium with the Sun.

I mean ‘hydraulic’ not hydrological although water is the incompressible fluid involved.

Stephen Wilde wrote, “There cannot be any delay in the equilibrium process without breaching the Gas Laws.”

No. The fact that it takes time for things to warm up, and takes a long time for large things to warm up, when they are absorbing energy, does not breach any laws. Neither does the fact that it takes time for things to melt, or evaporate, when they are absorbing energy.

The LW IR energy that is absorbed by the atmosphere’s GHGs (and thus warms the atmosphere) comes from the surface, which does not respond instantly to changes in the conditions that affect its temperature.

I do, however, suspect that the large ECS-to-TCR ratios built into most CMIP GCMs (rightmost column here) are very far from the mark.

Dave,

Yes, there should actually be no difference between the ECS and TCR. The planet is adapting to changing CO2 concentrations about as fast as those concentrations are changing. The most missing effect that could be claimed is the equivalent of about half of the total effect from the CO2 emitted during the last 12 months.

The idea of warming yet to come is based on the amplification seen entering and leaving ice ages. They fail to realize that on the warming side, this only occurs when there’s significant average ice and snow coverage to melt, which isn’t the case today since we are close to minimum possible ice. We also know that both Antarctic and Greenland glaciers have already survived sustained warmer global average temperatures than we have today for thousands of years at a time. Even when the planet gets warmer, winters are still cold and dark.

co2isnotevil – December 26, 2019 at 4:59 pm

I think you got that “arse backward”, …… didn’t you?

OK.

… just as slowly as those concentrations are changing …

I am puzzled that so many people do not understand Henry’s Law [no pun intended / my own name is also Henry…]

here is my take on it

http://breadonthewater.co.za/2019/12/15/greta-thunberg-the-savior-of-the-world/

let me know what you think?

Henry’s law is a useful approximation for the absorption of a gas by a liquid. At low concentrations in the liquid phase and for nearly ideal gas behavior, the fugacity of the gas can be assumed to be equal to its partial pressure, and the solubility of the gas is nearly proportional to its partial pressure in the gas phase (at constant temperature). Thus we use H=Py/x as an approximation for the rigorous definition, which I have to look up to get correctly, and can’t type in this blurb. This applies for a nearly ideal gas phase, which our atmosphere is. It also applies for low concentration of the gas in the liquid phase and no reactions. Therefore, Henry’s law is not used for the solubility of ammonia in water. It is a reasonable approximation for CO2 since the reaction of CO2 with water does not proceed very far near room temperature. It is a very good approximation for the solubility of gasses like nitrogen, oxygen, argon, methane, helium, etc. in most fluids.
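As a small illustration of the approximation H = Py/x, the sketch below solves it for the dissolved mole fraction x. The Henry's constant used is an assumed round number of the right order for CO2 in water near room temperature on a mole-fraction basis, not a value from this comment, so treat the output as illustrative only.

```python
def dissolved_mole_fraction(total_pressure_atm, gas_mole_fraction, henry_constant_atm):
    """Rearranged Henry's law, x = P * y / H.

    Valid only in the dilute, near-ideal, non-reacting limit described above.
    """
    return total_pressure_atm * gas_mole_fraction / henry_constant_atm


PRESSURE_ATM = 1.0            # total pressure (assumption)
CO2_MOLE_FRACTION = 400e-6    # about 400 ppmv in air (assumption)
ASSUMED_HENRY_ATM = 1600.0    # illustrative Henry's constant for CO2 in water (assumption)

x_co2 = dissolved_mole_fraction(PRESSURE_ATM, CO2_MOLE_FRACTION, ASSUMED_HENRY_ATM)
print(f"dissolved CO2 mole fraction: {x_co2:.2e}")
```

Because the relation is linear at constant temperature, doubling the partial pressure doubles x. The temperature dependence enters through H, which this sketch holds fixed.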

Loren Wilson, the effect of Henry’s Law is the only sensible explanation for the plotted CO2 ppm on the Keeling Curve Graph, ….. both the biyearly (seasonal) and yearly quantities.

Except that if you compare the 5 ppm seasonal variability to the 2 ppm linear trend and consider the 5 ppm the response to changing average temperatures, the 2 ppm linear trend would need to correlate to a temperature trend of about 1C per year. Henry’s Law can’t reconcile this. Yes, it has a small effect, but other factors have even larger effects.

You’re doing the same thing alarmists do by focusing on one finite, but relatively small contributing mechanism to the exclusion of everything else.

co2isnotevil – December 29, 2019 at 10:40 am

Don’t be accusing me of what you are guilty of because it is obvious to me that you “can’t see the forest for all the trees that are blocking your view”.

co2is, ….. the following “max/min” monthly CO2 ppm data was extracted directly from NOAA’s Mauna Loa Record for the years 1979 thru 2019, …. to wit:

year mth “Max” _ yearly increase ____ mth “Min” ppm

1979 _ 6 _ 339.20 …. + ….. El Niño ___ 9 … 333.93

1980 _ 5 _ 341.47 …. +2.27 _________ 10 … 336.05

1981 _ 5 _ 343.01 …. +1.54 __________ 9 … 336.92

1982 _ 5 _ 344.67 …. +1.66 El Niño __ 9 … 338.32 El Chichón

1983 _ 5 _ 345.96 …. +1.29 _________ 9 … 340.17

1984 _ 5 _ 347.55 …. +1.59 __________ 9 … 341.35

1985 _ 5 _ 348.92 …. +1.37 _________ 10 … 343.08

1986 _ 5 _ 350.53 …. +1.61 _________ 10 … 344.47

1987 _ 5 _ 352.14 …. +1.61 __________ 9 … 346.52

1988 _ 5 _ 354.18 …. +2.04 __________ 9 … 349.03

1989 _ 5 _ 355.89 …. +1.71 La Nina __ 9 … 350.02

1990 _ 5 _ 357.29 …. +1.40 __________ 9 … 351.28

1991 _ 5 _ 359.09 …. +1.80 __________ 9 … 352.30

1992 _ 5 _ 359.55 …. +0.46 El Niño __ 9 … 352.93 Pinatubo

1993 _ 5 _ 360.19 …. +0.64 __________ 9 … 354.10

1994 _ 5 _ 361.68 …. +1.49 __________ 9 … 355.63

1995 _ 5 _ 363.77 …. +2.09 _________ 10 … 357.97

1996 _ 5 _ 365.16 …. +1.39 _________ 10 … 359.54

1997 _ 5 _ 366.69 …. +1.53 __________ 9 … 360.31

1998 _ 5 _ 369.49 …. +2.80 El Niño __ 9 … 364.01

1999 _ 4 _ 370.96 …. +1.47 La Nina ___ 9 … 364.94

2000 _ 4 _ 371.82 …. +0.86 La Nina ___ 9 … 366.91

2001 _ 5 _ 373.82 …. +2.00 __________ 9 … 368.16

2002 _ 5 _ 375.65 …. +1.83 _________ 10 … 370.51

2003 _ 5 _ 378.50 …. +2.85 _________ 10 … 373.10

2004 _ 5 _ 380.63 …. +2.13 __________ 9 … 374.11

2005 _ 5 _ 382.47 …. +1.84 __________ 9 … 376.66

2006 _ 5 _ 384.98 …. +2.51 __________ 9 … 378.92

2007 _ 5 _ 386.58 …. +1.60 __________ 9 … 380.90

2008 _ 5 _ 388.50 …. +1.92 La Nina _ 10 … 382.99

2009 _ 5 _ 390.19 …. +1.65 _________ 10 … 384.39

2010 _ 5 _ 393.04 …. +2.85 El Niño __ 9 … 386.83

2011 _ 5 _ 394.21 …. +1.17 La Nina _ 10 … 388.96

2012 _ 5 _ 396.78 …. +2.58 _________ 10 … 391.01

2013 _ 5 _ 399.76 …. +2.98 __________ 9 … 393.51

2014 _ 5 _ 401.88 …. +2.12 __________ 9 … 395.35

2015 _ 5 _ 403.94 …. +2.06 __________ 9 … 397.63

2016 _ 5 _ 407.70 …. +3.76 El Niño __ 9 … 401.03

2017 _ 5 _ 409.65 …. +1.95 __________ 9 … 403.38

2018 _ 5 _ 411.24 …. +1.59 __________ 9 … 405.51

2019 _ 5 _ 414.66 …. +3.42 __________ 9 … 408.50

La Nina – El Nino index: https://ggweather.com/enso/oni.htm

https://wattsupwiththat.com/2019/12/28/two-more-degrees-by-2100/#comment-2880462

And the above MLR data doesn’t lie. And if you subtract the September (9) “min” CO2 ppm from the May (5) “max” CO2 ppm of the same year, …… 99% of the time you will get an Average 6 ppm summertime decrease in CO2 …. regardless of what us humans or the plant world does or has been doing.

And. Co2is, if you subtract the September (9) “min” CO2 ppm of the previous year (say 1982) ….. from the May (5) “max” CO2 ppm of the next year (1983), …… 99% of the time you will get an Average 8 ppm wintertime increase in CO2 …. regardless of what us humans or the plant world does or has been doing.

So, CO2 has been down an average 6 ppm in summer, and up an average 8 ppm (6 + 2 ppm) in winter, …… steadily and consistently for the past 61 years.
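Anyone who wants to repeat those two subtractions can do it in a few lines. The sketch below uses only the 2015 to 2019 rows copied from the table above, so its averages describe that five-year subset and not the whole record; the variable names are mine.

```python
# (year, May "max" ppm, September "min" ppm), copied from the table above
records = [
    (2015, 403.94, 397.63),
    (2016, 407.70, 401.03),
    (2017, 409.65, 403.38),
    (2018, 411.24, 405.51),
    (2019, 414.66, 408.50),
]

# Summertime decrease: May max minus September min of the same year
summer_drops = [may_max - sep_min for _, may_max, sep_min in records]

# Wintertime increase: next year's May max minus this year's September min
winter_rises = [
    next_row[1] - prev_row[2]
    for prev_row, next_row in zip(records, records[1:])
]

mean_summer_drop = sum(summer_drops) / len(summer_drops)
mean_winter_rise = sum(winter_rises) / len(winter_rises)

print(f"mean summer decrease: {mean_summer_drop:.2f} ppm")
print(f"mean winter increase: {mean_winter_rise:.2f} ppm")
```

Extending `records` with the remaining rows of the table would give the averages for the full 1979 to 2019 period.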

Which means that the bi-yearly (seasonal) cycling of average 6 ppm CO2 is a direct result of the ingassing/outgassing of CO2 …… as a direct result of seasonal temperature changes of the ocean waters in the different hemispheres.

And that the yearly average 2 ppm increase in average CO2 ppm is a direct result of the ocean water re-warming from the cold of the LIA. As the ocean waters warm, they outgas more CO2 than they ingas, thus the 2 ppm average increase. And of course, El Ninos, La Ninas and volcanic eruptions leave their “signature” in the MLO CO2 record as noted above.

Sam,

“Don’t be accusing me of what you are guilty of …”

I’m doing no such thing. I never said that ocean temperatures have no bearing on CO2 levels, just that there are other things that have a larger influence, most importantly, CO2 emissions and biology where you seem to be ignoring both.

Don’t be disturbed by falsification tests of your hypothesis. This is how science works. Ignoring falsification is what alarmists do. Testing hypotheses is what I do and when I test yours, it breaks. Your test of the Mauna Loa data may not fail, but it’s not the only test. In fact, there are few, if any, falsified hypotheses that don’t also have a test that would seem to confirm them and in fact, it was often the apparently confirming test that led to the incorrect hypothesis in the first place.

Your explanation doesn’t work for why a 6 ppm difference arises from a global 3C temperature swing, yet 2 ppm arises from a yearly temperature trend of a small fraction of a degree per year. If the 6 ppm seasonal swing arose from a 3C temperature swing, it would take an ‘LIA recovery’ warming trend of 1C per year to manifest a 2 ppm linear trend. In other words, it would take only 3 years to warm the planet by an amount equal to the difference between winter and summer.

Science is all about testing hypotheses, so let’s apply some arithmetic for a more robust test. For a yearly trend of .02C per year (0.2C per decade) to cause a 2 ppm yearly increase, the effect is 1 ppm of CO2 per .01C. If the 3 C yearly global temperature swing had the same effect, it should have caused a CO2 swing of 3/ .01 = 300 ppm! Time constants can’t explain this discrepancy, as both seasonal change and the LIA recovery are subject to the same time constants and the same rates of change, although relative to the solubility of CO2 in water, the time constant is too short to have any real effect.
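The same consistency check, written out as a sketch. It uses only the numbers quoted in these comments (6 ppm and 3 C for the seasonal swing, 2 ppm and 0.02 C per year for the trend) and assumes, as the test does, that a single ppm-per-degree sensitivity applies to both; the names are mine.

```python
SEASONAL_SWING_PPM = 6.0   # seasonal CO2 swing
SEASONAL_SWING_C = 3.0     # seasonal global temperature swing
TREND_PPM_PER_YEAR = 2.0   # yearly CO2 increase
TREND_C_PER_YEAR = 0.02    # yearly warming trend (0.2 C per decade)

# Direction 1: calibrate on the seasonal cycle, then ask what warming
# trend would be needed to produce 2 ppm per year.
seasonal_sensitivity = SEASONAL_SWING_PPM / SEASONAL_SWING_C      # ppm per C
required_trend = TREND_PPM_PER_YEAR / seasonal_sensitivity        # C per year

# Direction 2: calibrate on the trend, then ask what seasonal CO2 swing
# a 3 C temperature swing would produce.
trend_sensitivity = TREND_PPM_PER_YEAR / TREND_C_PER_YEAR         # ppm per C
implied_seasonal_swing = trend_sensitivity * SEASONAL_SWING_C     # ppm

print(f"required warming trend: {required_trend:.1f} C per year")
print(f"implied seasonal swing: {implied_seasonal_swing:.0f} ppm")
```

The two calibrations differ by a factor of 50 in ppm per degree, which is the discrepancy being pointed out.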

Of course, the temperature change from year to year isn’t monotonic and some years are colder than the one before, yet CO2 continues to increase monotonically. Would you like to try and explain this?

co2isnotevil – December 30, 2019 at 9:44 am

co2isnotevil, …… GETTA CLUE, ….. I earned an AB Degree in Biological Science, with emphasis on Botany, Bacteriology and other aspects of the “natural world” that we live in.

“DUH”, there is absolutely, positively NO greater influence on atmospheric CO2 ppm quantities than the GREATEST “sink” ever of CO2, which is the ocean waters, and primarily the ocean waters residing in the Southern Hemisphere, to wit:

“ The Northern Hemisphere is 60% land and 40% water. The Southern Hemisphere is 20% land and 80% water”.

OH GOOD GRIEF, co2isnotevil, ….. of course my hypothesis “breaks” when you apply your “junk science” fictional quantities for “testing”. I and my hypothesis don’t give a damn about your estimated/guesstimated average near-surface air temperature of 3C.

GETTA CLUE. co2is, …. it is the “changing” of the seasonal temperature of the ocean surface waters that determines the rate of ingassing/outgassing CO2.

“If the, ..if the, ..if the, ……” If you say so, co2isnotevil, ….. but why in hell did you make such an asinine claim if you knew it was FUBAR?

co2isnotevil, ….. you really shouldn’t be asking dumb arsed questions and expecting intelligent answers.

Which temperature change(s) are you referring to in your above?

Local, regional or global average near-surface atmospheric temperature increases/decreases?

Or maybe local, regional or global average soil temperature increases/decreases?

Or local, regional or global SEASONAL average sea surface water temperature increases/decreases?

Or local, regional or global average Urban Heat Island temperature increases/decreases?

And last but not least, …. monotonic nature of the local, regional or global average yearly increase in ocean surface water temperature, ….. the result of the slow “warming up” following the demise of the LIA.

Referring to the comment about CO2 being “well mixed”, I offer the following thoughts.

The earth emits over 95% of the annual emission of CO2. Humans are responsible for less than 5%. It is emitted at the surface, and it is reabsorbed at the surface. We know about the heat islands around cities, said to be due to high concentrations of CO2.

Thanks to gravity, the atmosphere is densest at the surface, and less dense with altitude. How can CO2 be well mixed with the rest of the atmosphere if the bulk of it is coming from and going back to the surface, and it is densest at the surface?

Well mixed is a relative matter.

Certainly CO2 is well mixed compared to water vapor and clouds.

John, nobody I know of is claiming that the surface CO2 is well mixed.

However, it is indeed well mixed in the bulk atmosphere compared to the major greenhouse gas, which is water vapor, which is what folks are referring to.

w.

Re whether or not CO2 is a well mixed gas in the atm, I’m confused.

Carbon Dioxide Not a Well Mixed Gas and Can’t Cause Global Warming

By: John O’Sullivan

http://bit.ly/2kiynGC

“…Acceptance of the “well-mixed gas” concept is a key requirement for those who choose to believe in the so-called greenhouse gas effect. A rising group of skeptic scientists have put the “well-mixed gas” hypothesis under the microscope and shown it contradicts not only satellite data but also measurements obtained in standard laboratory experiments.

Canadian climate scientist, Dr Tim Ball is a veteran critic of the “junk science” of the International Panel on Climate Change (IPCC) and no stranger to controversy.

Ball is prominent among the “Slayers” group of skeptics and has been forthright in denouncing the IPCC claims; “I think a major false assumption is that CO2 is evenly distributed regardless of its function.“

“…CO2: The Heavy Gas that Heats then Cools Faster!

The same principle is applied to heat transfer, the Specific Heat (SH) of air is 1.0 and the SH of CO2 is 0.8 (heats and cools faster). Combining these properties allows for thermal mixing. Heavy CO2 warms faster and rises, as in a hot air balloon. It then rapidly cools and falls.”

“…The cornerstone of the IPCC claims since 1988 is that “trapped” CO2 adds heat because it is a direct consequence of another dubious and unscientific mechanism they call “back radiation.” In no law of science will you have read of the term “back radiation.” It is a speculative and unphysical concept and is the biggest lie woven into the falsity of what is widely known as the greenhouse gas effect.

Professor Nasif Nahle, a recent addition to the Slayers team, has proven that application of standard gas equations reveal that, if it were real, any “trapping” effect of the IPCC’s “back radiation” could last not a moment longer than a miniscule five milliseconds – that’s quicker than the blink of an eye to all you non-scientists…”

Please help and explain the two opposing claims about CO2. As I said above, I’m confused.

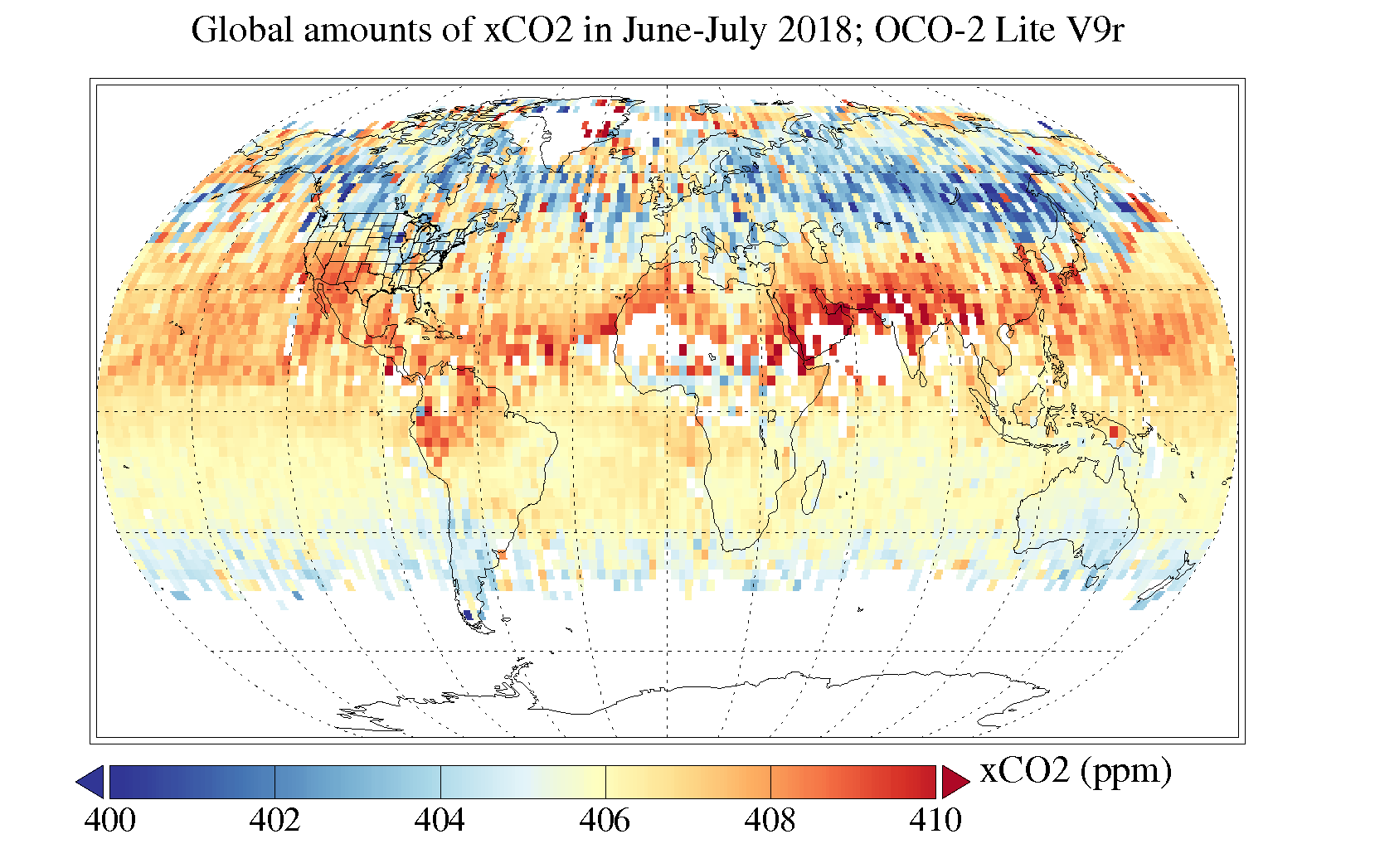

KcTaz, you ask:

This is where you gotta decide who you gonna believe—actual measurements from the OCO satellite I showed above … or someone’s claim in some paper somewhere who is waffling on about density and specific heat without any actual measurements.

Your choice.

Let me note, if it helps, that CO2 is generated at the surface. As a result, at the surface there are places with CO2 levels five or ten times the background level.

But that’s in a thin layer at the surface, and above that it gets well mixed by the action of the air.

I discuss some of these issues in detail here.

w.

By way of clarification, the way KcTaz copied & pasted that material, some readers might think he was attributing it to Dr. Tim Ball. I think the only part of it actually by Dr. Ball was this lone sentence: “I think a major false assumption is that CO2 is evenly distributed regardless of its function.”

The rest of it, including the nonsense claiming that “back radiation” doesn’t exist, was written by Mr. John O’Sullivan.

I’ll be blunt: O’Sullivan’s organization, “Principia-Scientific International” (PSI), and their web site, are evil. It’s a disinformation site, run by crackpots (often called “slayers,” after their ridiculous book). Their role in the climate debate is to help climate activists discredit climate skepticism.

This despicable smear of Roy Spencer, Judith Curry, Anthony Watts, and Fred Singer is perfectly in character for them. Lies are the stock-in-trade of PSI.

Some of the material on the PSI site is good, but THAT material is always copied (and I think often plagiarized) from OTHER sites. The material which originates from PSI’s own member authors is predictably nonsense.

Unfortunately, that mix of truth and fiction makes their web site even more deceptive, because it makes the fiction harder to recognize.

“Falsehood is never so false as when it is very nearly true.”

– G.K. Chesterton

Their leader, CEO John O’Sullivan, is a notorious fraudster. Three other longtime PSI authors are Pierre Latour, Joe Postma & Joe Olson. (They used to have a 5th prolific author, one Douglas Cotton, but he had a falling out with the others.)

I call O’Sullivan “the bad John O’Sullivan,” to distinguish him from “the good John O’Sullivan,” of National Review. The bad John O’Sullivan has been known to impersonate the good John O’Sullivan, on occasion.

Joe Olson is a “9-11 truther” who accused President George W. Bush of staging the 9-11-2001 terrorist attack, and destroying the World Trade Center with “micro-nukes.” (Really, I’m not making this up. Hmmm… well, the recording of his 9-11 nutter conference presentation entitled “Unequivocal 9/11 Nukes,” which was linked from this page, is gone from YouTube, but, not to worry, I saved a copy; email me if you want it.)

In 2015 O’Sullivan & Pierre Latour blatantly lied on the PSI web site, and also in emails, about Dr. Fred Singer’s views. The web site article was entitled, “Singer Concurs with Latour: CO2 Doesn’t Cause Global Warming.”

After several people objected, O’Sullivan doubled down in an email, writing, “Fred Singer has now come over to PSI’s view that CO2 can only cool. I suggest you contact him.”

So I did. I forwarded that to Prof. Singer, who was then 90 years old, and asked him:

Prof. Singer replied succinctly:

That’s what I expected, of course. I forwarded it to O’Sullivan & the other slayers, plus some of the people who had been trying to persuade PSI to remove the dishonest article, including Dr. Singer’s friend, Lord Christopher Monckton. But O’Sullivan STILL refused to remove the dishonest article from the PSI web site.

Prof. Singer elaborated in a subsequent email:

“Jo” is Jo Nova. She weighed in, and appealed to O’Sullivan (and cc’d the other three), to do the right thing:

PSI’s Joe Postma weighed in, defending O’Sullivan’s and Latour’s dishonesty! He wrote:

Eventually, after Lord Monckton threatened legal action, PSI finally did edit and tone down the article, making it less flagrantly false. It now says “Singer Converges on ZERO Climate Carbon Forcing.”

It is still misleading — as is just about everything from PSI.

Joe Postma

Shows that the “flat earth model” of the IPCC is too simple. Their real models are built into the GCMs which don’t fit the real data.

https://climateofsophistry.com/2019/10/19/the-thing-without-the-thing/

https://climateofsophistry.com/2019/09/05/real-climate-physics-vs-fake-political-physics/

https://principia-scientific.org/webcast-no-radiative-greenhouse-effect/

I find Postma’s stuff straightforward.

philf, Postma’s “stuff” is 100% crackpot nonsense.

I tried to watch one of those videos, the one he entitled “There is no Radiative Greenhouse Effect – Main Presentation”. It’s 70 minutes long on YouTube (where he has disabled comments, for obvious reasons).

The more I watched the worse it got. I made it through 17½ minutes of bovine dung before I couldn’t endure the stench any more.

But I took notes…

At 1:19 he showed several examples of very simple diagrams used to illustrate the principles of so-called “greenhouse warming,” and said, “You’ll find these sort of diagrams everywhere, exclusively.”

That’s untrue. You mostly find diagrams like this:

The diagrams he showed are very simplified.

2:00 he said, “[these diagrams are] the consensus and the only derivation of where the greenhouse effect comes from.”

Wrong. Those aren’t “the derivation” of the so-called greenhouse effect. They are just simplified illustrations of it.

2:10 “There’s one, basic, fundamental problem with this diagram… What’s the problem? The Earth is flat.”

It’s an approximation, not an error — and it’s a very close approximation, too.

It is not a problem that the Earth and atmosphere are shown as flat in some highly simplified illustrations, since the atmosphere is just a very thin layer relative to the diameter of the Earth. The Earth’s diameter is nearly 8,000 miles. The height of the tropopause averages just 1/10 of 1% of that.

If the diagram represents ten miles of horizontal distance, then to correctly show the curvature, the center should be elevated by about 16’8″ (the rise of an arc above its chord is roughly c²/8R, with c = 10 miles and R ≈ 3,959 miles). That’s 16.67/52,800 = 0.032% above the horizontal.

If the scale is one mile to the inch (so that the horizontal line is ten inches long on your screen) then the true curvature would require the center of the 10″ line to be raised by about three thousandths of an inch.

(Also, the more commonly seen Trenberth diagram does not show the Earth as flat; in fact, it greatly exaggerates the curvature of the Earth’s surface — which makes no difference at all, w/r/t the concepts involved.)

5:30 “One error is that Earth’s effective temperature is being used as the solar input.”

No, it isn’t. The solar input is measured, by satellites. It is between 1360 and 1370 W/m² if the Sun is directly overhead, and 1/4 of that averaged over the surface of the Earth.
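The factor of 1/4 is just geometry: the Earth intercepts sunlight over a disc of area πR², but its surface area is 4πR². Here is a minimal sketch of that averaging, assuming a round figure of 1361 W/m² for the solar constant (my illustrative pick from within the range above) and a mean Earth radius of 6,371 km:

```python
import math

SOLAR_CONSTANT = 1361.0   # W/m^2; assumed round value inside the 1360-1370 range
EARTH_RADIUS_M = 6.371e6  # m; mean radius (it cancels out of the ratio)


def global_mean_insolation(solar_constant: float, radius_m: float) -> float:
    """Average top-of-atmosphere solar flux over the whole sphere, in W/m^2."""
    intercepted_power = solar_constant * math.pi * radius_m**2  # disc facing the Sun
    sphere_area = 4.0 * math.pi * radius_m**2                   # day side plus night side
    return intercepted_power / sphere_area


mean_flux = global_mean_insolation(SOLAR_CONSTANT, EARTH_RADIUS_M)
print(f"Global-mean insolation: {mean_flux:.1f} W/m^2")
```

The radius drops out, so the result is one quarter of the solar constant whatever radius you plug in.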

Postma might be confused by the fact that the energy fluxes to and from the Earth are, on average, nearly identical. We know that fact because if they were not nearly identical then the Earth would be either heating or cooling rapidly.

6:08 “The solar input to the Earth does not occur over the same surface area as Earth’s radiative output.”

Can he really be that confused about his topic, that he doesn’t realize the figures in the diagrams are averages, over the entire Earth, including the dark side?

Roy Spencer did an excellent job of explaining Postma’s confusion, here:

https://wattsupwiththat.com/2019/06/04/on-the-flat-earth-rants-of-joe-postma/

6:23 “By equating fluxes, you dilute the solar input over the entire surface area of the Earth.”

“Dilute?” It’s called averaging.

7:08 “This derivation says that sunshine is unable to melt ice, and it says that sunshine isn’t responsible for summer…”

That’s total nonsense. He apparently thinks that calculating an average is equivalent to declaring that everything is at the average.

7:18 “[This derivation says that]… the greenhouse effect is responsible for melting ice, and for creating summer…”

Also complete nonsense. It says nothing of the kind.

7:24 “Now the real absorbed solar constant is actually really hot, it’s 121°C, raw. If you take off 30% absorption for albedo then it’s 88°C.”

That’s gibberish. The solar constant is an irradiance flux, not a temperature, and there’s no such thing as an “absorbed solar constant.”

The numbers he made up are meaningless nonsense, too.

10:24 “…so you have two sources that are at -18°C…”

The two sources he’s talking about are solar radiation, and LW IR back-radiation from radiatively active “greenhouse gases” in the atmosphere. They are not “at -18°C” or any other temperature. They are just radiation.

Radiation has energy density, and spectral characteristics, and it can even be polarized. But it does not have a temperature.

10:32 “…lets add two ice cubes together, do they make a higher temperature? …that’s fake thermodynamics.”

That’s complete gibberish. Ice cubes are not radiation.

If you pour energy into something from two sources, it will heat faster than if you cut off one of the two sources. If you turn on two burners on your electric stove, your kitchen will warm faster, and top out at a higher temperature, than if you turn on only one burner. Of course.
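To put rough numbers on that, here is a minimal sketch. It assumes an ideal blackbody that loses energy only by radiating, so that its equilibrium temperature follows from the Stefan-Boltzmann law. The 240 W/m² figures are hypothetical inputs chosen for illustration, not measurements.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def equilibrium_temperature(absorbed_fluxes):
    """Temperature (K) at which an ideal blackbody re-radiates all it absorbs."""
    total_flux = sum(absorbed_fluxes)  # absorbed energy fluxes simply add
    return (total_flux / SIGMA) ** 0.25


one_source = equilibrium_temperature([240.0])          # hypothetical single source
two_sources = equilibrium_temperature([240.0, 240.0])  # a second, equal source added
print(f"One source:  {one_source:.1f} K")
print(f"Two sources: {two_sources:.1f} K")
```

Note that it is the fluxes that add, not the temperatures: doubling the absorbed flux raises the equilibrium temperature by a factor of 2^(1/4), about 19%, rather than doubling it.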

10:50 (waves pointer at a box labeled “energy balance at the Earth’s surface”) and says: “this part here, basically what they’re doing is adding two temperatures together and saying that this is going to create a new temperature.”

Apparently he doesn’t know the difference between energy and temperature. Unbelievable!

On the screen you can see that he has written, “Temperatures/fluxes never add in thermodynamics!” and he also says that at 12:00. Clearly, he thinks temperature and energy flux (power) are the same thing.

It astonishes me that he apparently managed to get two scientific degrees from the U. of Calgary, without learning such basic concepts.

12:12 “The only thing temperatures and fluxes do in thermodynamics is subtract, in which case you get heat.”

That’s nonsense. Energy fluxes sum, they don’t “subtract.” In an adiabatic system, temperatures tend toward an average, they don’t “subtract,” either.

And he obviously doesn’t know what heat is.

16:41 “… ‘if there are temperature differences, the heat energy flows from the hotter region to the colder region.’ Alright, so this establishes that the cooler atmosphere cannot send any heat to the surface.”

Nonsense. All it establishes is that if the surface is warmer than the atmosphere, the NET flow of heat will be from the surface to the atmosphere.

However, BOTH radiate (cooling in the process), and EACH absorbs radiation from the other (warming in the process). The reason the net flow of heat is from the surface to the atmosphere is simply that the transfer of energy from warmer to cooler is faster than the transfer of energy in the opposite direction, because warmer things radiate faster than cooler things.

17:21 “Radiant flux from the cooler atmosphere cannot transfer as heat to the warmer surface.”

Nonsense. (The word “as” seems to be spurious.) Radiation from the atmosphere absolutely is absorbed by the surface, warming it in the process.

All the 2nd Law requires is that the NET heat flow is from warmer surface to cooler air.

If you heat the air, it’ll increase the rate at which the air radiates and heats the surface, thus reducing the NET rate of flow of heat from surface to air, thus making the surface warmer than it otherwise would have been.
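A toy calculation shows the bookkeeping. This is only a sketch under stated assumptions: I treat the surface and the air as two ideal blackbodies facing each other (emissivity 1, no convection or evaporation), and the temperatures are illustrative values, not measurements. The real atmosphere is not a blackbody slab, so only the direction of the effect carries over.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def blackbody_flux(temp_k: float) -> float:
    """Radiant flux (W/m^2) emitted by an ideal blackbody at temp_k kelvin."""
    return SIGMA * temp_k**4


def net_surface_loss(surface_k: float, air_k: float) -> float:
    """Net radiative heat flow from surface to air: upward minus downward flux."""
    upward = blackbody_flux(surface_k)  # emitted by the surface
    downward = blackbody_flux(air_k)    # emitted by the air and absorbed by the surface
    return upward - downward


cool_air = net_surface_loss(288.0, 255.0)  # hypothetical surface and air temperatures
warm_air = net_surface_loss(288.0, 260.0)  # same surface, air 5 K warmer
print(f"Net loss with cooler air: {cool_air:.1f} W/m^2")
print(f"Net loss with warmer air: {warm_air:.1f} W/m^2")
```

In both cases the net flow is from the warmer surface to the cooler air, as the 2nd Law requires. Warming the air shrinks that net loss, so a surface receiving the same sunshine ends up warmer than it otherwise would have been.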

And there I stopped. I hit the limit of the amount of ridiculous nonsense I’m willing to wade through in one day. I’m a patient man, but my patience is not limitless.

I don’t think that it’s the case that the UHI effect is said to be due to high concentrations of CO2. It’s due to changes in surface albedo from land use, structures with high heat capacity that absorb solar energy and then re-radiate at night, as well as the fact that all sources of energy used in an urban environment will dissipate as heat into the environment there.

John, I will grant you that urban areas have a higher concentration of CO2 than do rural areas. However, I would need to see data showing that urban CO2 concentration causes the UHI.

UHI

Nothing to do with CO2 bubbles around urban centres

Concrete, steel, glass, roads…comparatively few trees

Step inside a garden/park in a major city on a warm day and the temperature is significantly lower.

CO2 is about 50% denser than air, with a molar mass of 44 g/mol versus roughly 29 g/mol for dry air, so it does sink. On the other hand, H2O as a gas is about 18 g/mol.

So, one gas sinks, the other rises until it undergoes another phase change.

Turbulence does some mixing but the molecular weights generally determines location.

Not true, Keith. In fact, while a bolus of CO2 will sink to the ground and even run downhill, it quickly is broken up and mixed upwards by turbulence and wind.

We don’t have to guess about this. We can actually measure the vertical profile of CO2 from satellite observations. There’s an interesting journal article here with more than you’d ever want to know about it.

TL;DR version? Your claim that “Turbulence does some mixing but the molecular weights generally determines location” is incorrect.

w.

Interesting paper, thanks for the link. Figure 6 was surprising to me, though. It shows that from about mid-July through early October of every year, the concentration of CO2 below 10 km altitude (for the 50 to 60 degree N latitude band) is lower than that above 10 km – significantly lower. The rest of the year, the situation is reversed. In fact, it appears that for most of the year, CO2 concentration in the 16 to 18 km altitude range is always about 8 ppm less than it is in the 8 to 10 km range. That has to have some kind of effect, but it makes my head hurt trying to figure out what it might be.

John S,

UHI can be caused by many processes. I have never considered CO2 as being other than trivial. What mechanism do you imagine for CO2? Geoff S

NASA/JPL’s OCO-2 mission was launched on July 2, 2014 with much fanfare from the press and Climate Change porn pushers.

NASA and the climate change science-pornography industry of course were expecting the actual data from this orbiting platform to confirm their ideas about the near-exclusive human sources, thus pinning the blame on humanity for the rise in the Evil Molecule concentration both in its gaseous form in our atmosphere and in partial pressure suspension in our oceans, lakes and rivers. It’s a gas we know as essential for all plant and animal life by providing the base-level carbon substrate. The Evil Molecule exists of course as a trace gas, which since the end of the LIA has been rising, with some portion coming from humans burning long-sequestered stores of energy. Our burning for energy is, in their words (paraphrasing here), “polluting our atmosphere, threatening catastrophic warming and wreaking ecological destruction on the planet and an existential threat to civilization.”