Author: Thomas K. Bjorklund, University of Houston, Dept. of Earth and Atmospheric Sciences, Science & Research

[Notice: This November 2019 post is now updated with multiple changes on 5/3/2020 to address many of the issues noted since the original posting]

Key Points

- From 1850 to the present, the noise-corrected, average warming of the surface of the earth is less than 0.07 degrees C per decade, possibly as low as 0.038 degrees C per decade.

- The rate of warming of the surface of the earth does not correlate with the rate of increase of fossil fuel emissions of CO2 into the atmosphere.

- Recent increases in surface temperatures reflect 40 years of increasing intensities of the El Nino Southern Oscillation climate pattern.

Abstract

This study investigates relationships between surface temperatures from 1850 to the present and reported long-range temperature predictions of global warming. A crucial component of this analysis is the calculation of an estimate of the warming curve of the surface of the earth. The calculation removes errors in temperature measurements and fluctuations due to short-duration weather events from the recorded data. The results show the average rate of warming of the surface of the earth for the past 170 years is less than 0.07 degrees C per decade, possibly as low as 0.038 degrees C per decade. The rate of warming of the surface of the earth does not correlate with the rate of increase of CO2 in the atmosphere. The perceived threat of excessive future global temperatures may stem from misinterpretation of 40 years of increasing intensities of the El Nino Southern Oscillation (ENSO) climate pattern in the eastern Pacific Ocean. ENSO activity culminated in 2016 with the highest surface temperature anomaly ever recorded. The rate of warming of the earth’s surface has declined 45 percent since 2006.

Introduction

The results of this study suggest the present movement to curtail global warming may be premature. The highest warming currents ever recorded in the Pacific Ocean and technologically advanced methods of collecting ocean temperature data from earth-orbiting satellites both began, coincidentally, in the late 1970s. This study describes how the newly acquired high-resolution temperature data and Pacific Ocean transient warming events may have combined to produce long-range temperature predictions that are too high.

HadCRUT4 Monthly Temperature Anomalies

The HadCRUT.4.6.0.0 monthly medians of the global time series of temperature anomalies, Column 2, 1850/01 to 2019/08 (Morice, C. P., et al. 2012), were used for this report together with later monthly data to 2020/02. Only since 1979 have high-resolution satellites provided simultaneously observed data on properties of the land, ocean and atmosphere (Palmer, P.I., 2018). NOAA-6 was launched in December 1979 and NOAA-7 was launched in 1981. Both were equipped with microwave radiometry devices (Microwave Sounding Unit-MSU) to precisely monitor sea-surface temperature anomalies over the eastern Pacific Ocean and the areas of ENSO activity (Spencer, et al., 1990). These satellites were among the first to use this technology.

The initial analyses of the high-resolution satellite data yielded a remarkable result. Spencer, et al. (1990), concluded the following: “The period of analysis (1979–84) reveals that Northern and Southern hemispheric tropospheric temperature anomalies (from the six-year mean) are positively correlated on multi-seasonal time scales but negatively correlated on shorter time scales. The 1983 ENSO dominates the record, with early 1983 zonally averaged tropical temperatures up to 0.6 degrees C warmer than the average of the remaining years. These natural variations are much larger than that expected of greenhouse enhancements and so it is likely that a considerably longer period of satellite record must accumulate for any longer-term trends to be revealed”.

Karl, et al. (2015) claim that the past 18 years of stable global temperatures are an artifact of biased ocean buoy-based data. Karl, et al. state that a “bias correction involved calculating the average difference between collocated buoy and ship SSTs. The average difference globally was −0.12°C, a correction that is applied to the buoy SSTs at every grid cell in ERSST version 4.” This analysis is not consistent with the interpretation of the past 18-year pause in global warming. The discussion below of the first derivative of a temperature anomaly trendline shows that the rate of increase of relatively stable and nearly noise-free temperatures peaked in 2006 and has declined since then.

The following is a summary of conclusions by Karl, et al. (2015) (called K15 below) by McKitrick (2015): “All the underlying data (NMAT, ship, buoy, etc.) have inherent problems and many teams have struggled with how to work with them over the years. The HadNMAT2 data are sparse and incomplete. K15 take the position that forcing the ship data to line up with this dataset makes them more reliable. This is not a position other teams have adopted, including the group that developed the HadNMAT2 data itself. It is very odd that a cooling adjustment to SST records in 1998-2000 should have such a big effect on the global trend, namely wiping out a hiatus that is seen in so many other data sets, especially since other teams have not found reason to make such an adjustment. The outlier results in the K15 data might mean everyone else is missing something, or it might simply mean that the new K15 adjustments are invalid.”

Mears and Wentz (2016) discuss adjustments to satellite data and their new dataset, which “shows substantially increased global-scale warming relative to the previous version of the dataset, particularly after 1998. The new dataset shows more warming than most other middle tropospheric data records constructed from the same set of satellites.” The discussion below shows the warming curve of the earth has been decreasing in rate of increase of slope since July 1988; that is, the curve is concave downward. Based on this observation alone, their new dataset should not show “substantially increased global-scale warming.”

Analysis of Temperature Anomalies-Case 1

All temperature measurements used in this study are calculated temperature anomalies and not absolute temperatures. A temperature anomaly is the difference of the absolute measured temperature from a baseline average temperature; in this case, the average annual mean temperature from 1961 to 1990. This conversion process is intended to minimize the effects on temperatures related to the location of the measurement station (e.g., in a valley or on a mountain top) and result in better recognition of regional temperature trends.
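The anomaly conversion described above can be sketched in a few lines. This is a minimal illustration, not the HadCRUT4 processing chain: the function name `to_anomalies` and the sample station values are hypothetical, and only the 1961 to 1990 baseline comes from the text.

```python
from statistics import mean


def to_anomalies(years, temps, base_start=1961, base_end=1990):
    """Convert absolute temperatures to anomalies relative to a baseline period.

    The baseline is the mean of all observations whose year falls inside
    [base_start, base_end]; each anomaly is the observation minus that mean.
    """
    baseline_values = [t for y, t in zip(years, temps) if base_start <= y <= base_end]
    if not baseline_values:
        raise ValueError("no observations fall inside the baseline period")
    baseline = mean(baseline_values)
    return [t - baseline for t in temps]


# Hypothetical annual means (degrees C) for a single station; not real data.
years = [1960, 1961, 1975, 1990, 2000]
temps = [13.9, 14.0, 14.1, 14.2, 14.6]
anomalies = to_anomalies(years, temps)
```

Because each station is compared only with its own baseline, a valley station and a mountain-top station with very different absolute temperatures can still be averaged on a common scale.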

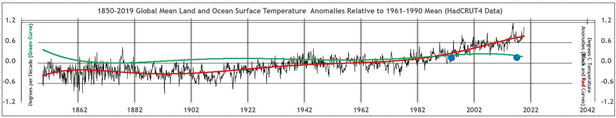

In Figure 1, the black curve is a plot of monthly mean surface temperature anomalies. The jagged character of the black temperature anomaly curve is data noise (inaccuracies in measurements and random, short-term weather events). The red curve is an Excel sixth-degree polynomial best fit trendline of the temperature anomalies. The curve-fitting process removes high-frequency noise. The green curve, a first derivative of the trendline, is the single most important curve derived from the global monthly mean temperature anomalies. The curve is a time series of the month-to-month differences in mean surface temperatures in units of degrees Celsius change per month. These very small numbers are multiplied by 120 to convert the units to degrees per decade (left vertical axis of the graph). Degrees per decade is a measure of the rate at which the earth’s surface is cooling or warming; it is sometimes referred to as the warming (or cooling) curve of the surface of the earth. The green curve temperature values are close to the values of noise-free troposphere temperature estimates determined at the University of Alabama in Huntsville for single points (Christy, J. R. May 8, 2019). The green curve has not previously been reported and adds a new perspective to analyses of long-term temperature trends.
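For readers who want to reproduce the red and green curves outside Excel, the following is a rough sketch under stated assumptions. It uses NumPy's `Polynomial.fit` for the sixth-degree trendline and takes the analytic derivative of the fitted polynomial rather than month-to-month differences; the function name `warming_rate_per_decade` and the synthetic input series are illustrative only and are not the HadCRUT4 data.

```python
import numpy as np
from numpy.polynomial import Polynomial

MONTHS_PER_DECADE = 120


def warming_rate_per_decade(months, anomalies, degree=6):
    """Fit a polynomial trendline and return (trend, rate).

    trend: fitted anomaly at each month (the "red curve").
    rate:  derivative of the trendline, converted from degrees C per month
           to degrees C per decade (the "green curve").
    """
    months = np.asarray(months, dtype=float)
    anomalies = np.asarray(anomalies, dtype=float)
    # Polynomial.fit rescales the x-axis internally, which keeps a
    # sixth-degree least-squares fit numerically well behaved.
    trend_poly = Polynomial.fit(months, anomalies, degree)
    trend = trend_poly(months)
    rate = trend_poly.deriv()(months) * MONTHS_PER_DECADE
    return trend, rate


# Synthetic stand-in for a monthly anomaly series (assumption, not real data):
# a slowly accelerating signal plus random month-to-month noise.
rng = np.random.default_rng(0)
months = np.arange(2042)
signal = -0.3 + 2e-4 * months + 1e-7 * months**2
noisy = signal + rng.normal(0.0, 0.15, size=months.size)

trend, rate = warming_rate_per_decade(months, noisy)
print(f"mean rate: {rate.mean():.3f} degrees C per decade")
```

A polynomial trendline is sensitive near the ends of the record, so the first and last few years of the derivative deserve more caution than the middle of the series.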

In a recent talk, John Christy, director of the Earth System Science Center at the University of Alabama in Huntsville, reported estimates of noise-free warming of the troposphere in 1994 and 2017 of 0.09 and 0.095 degrees C per decade, respectively (Christy, J. R., May 8, 2019). These values were estimated from global energy balance studies of the troposphere by Christy and McNider using 15 years of newly acquired global satellite data in 1994 (Christy, J. R., and R. T. McNider, 1994) and a repeat of the 1994 study in 2017 with nearly 40 years of satellite data (Christy, J. R., 2017). From this work, using two points derived from the 1994 data and the 2017 data, they concluded the earth warming in the troposphere for the last 40 years was approximately a straight line that sloped 0.095 degrees per decade. They call this curve the “tropospheric transient climate response”, that is, “how much temperature actually changes due to extra greenhouse gas forcing.”

The green curve in Figures 1 and 3 could be called the earth surface transient climate response after Christy, although some longer wave-length noise from 40 years of intense ENSO activity remains in the data. The 2017 average value for the green curve is 0.154: this value is 0.059 degrees per decade higher than the UAH estimate for the troposphere. The latest value in February 2020 for the green curve is 0.117 degrees C per decade. The average degrees C per decade value of earth warming based on the green curve over 2,032 months since 1850 is 0.068 degrees C per decade. The average from 1850 through 1979, the beginning of the most recent ENSO, is 0.038 degrees C per decade, a value too small to measure.

A warming rate of 0.038 degrees C per decade would need to significantly increase or decrease to support a prediction of a long-term change in the earth’s surface temperature. If the earth’s surface temperature increased continuously starting today at a rate of 0.038 degrees C per decade, in 100 years the increase in the earth’s temperature would be only 0.4 degrees C, which is not indicative of a global warming threat to humankind.

The 0.038 degrees C per decade estimate is likely beyond the accuracy of the temperature measurements. Recent statistical analyses conclude that 95% uncertainties of global annual mean surface temperatures range from 0.05 degrees C to 0.15 degrees C over the past 140 years; that is, 95 measurements out of 100 are expected to be within the range of uncertainty estimates (Lenssen, N. J. L., et al. 2019). Very little measurable warming of the surface of the earth occurred from 1850 to 1979.

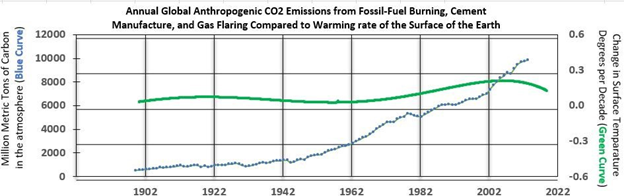

In Figure 2, the green curve is the warming curve; that is, a time series of the rate of change of the temperature of the surface of the earth in degrees per decade. The blue curve is a time series of the concentration of fossil fuel emissions of CO2 in units of million metric tons of carbon in the atmosphere. The green curve is generally level from 1900 to 1979 and then rises slightly due to lower frequency noise remaining in the temperature anomalies from 40 years of ENSO activity. The warming curve declined since early 2000 to the present. The concentration of CO2 increased steadily from 1943 to 2019. There is no correlation between a rising CO2 concentration in the atmosphere and a relatively stable, low rate of warming of the surface of the earth from 1943 to 2019.
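A claim about correlation between two time series can be checked numerically with a correlation coefficient. The sketch below shows one common choice, the Pearson coefficient; the helper `pearson_r` and the two short series are hypothetical placeholders, not the warming-rate or CO2 data plotted in Figure 2.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / sqrt(sxx * syy)


# Placeholder series (assumption): substitute the warming-rate values and
# the CO2 values sampled at the same dates.
hypothetical_rate = [0.04, 0.05, 0.03, 0.06, 0.05]
hypothetical_co2 = [1.0, 2.0, 3.0, 4.0, 5.0]
r = pearson_r(hypothetical_rate, hypothetical_co2)
```

A value of r near zero indicates no linear relationship, while values near +1 or -1 indicate a strong one; the coefficient says nothing about nonlinear or lagged relationships.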

In Figure 3, the December 1979 temperature spike (Point A) is associated with a weak El Nino event. During the following 39 years, several strong to very strong El Nino events (single temperature spikes in the curve) are recorded; the last one, in February 2016, produced the highest mean global monthly temperature anomaly ever recorded, 1.111 degrees C (Golden Gate Weather Services, 2019). Since then, monthly global temperature anomalies declined about 11 percent to 0.990 degrees C in February 2020 as the ENSO decreased in intensity.

Points A, B and C mark very significant changes in the shape of the green warming curve (left vertical axis).

- The green curve values increased each month from 0.088 degrees C per decade in December 1979 (Point A) to 0.136 degrees C per decade in July 1988 (Point B); this is about a 55 percent increase in rate of warming in nearly 9 years. The warming curve is concave upward. Point A marks a weak El Nino and the beginning of increasing ENSO intensities.

- From July 1988 to September 2006, the rate of warming increased from 0.136 degrees C per decade to 0.211 degrees per decade (Point C); this is a 55 percent increase in 18 years but about one-half the total rate of the previous 9 years because of a decrease in the rate of increase each month. The July 1988 point on the x-axis is an inflection point at which the warming curve becomes concave downward.

- September 2006 (Point C) marks a very strong El Nino and the peak of the nearly 40-year ENSO transient warming trend, imparting a lazy S shape to the green curve. The rate of warming has declined every month since peaking at 0.211 degrees per decade in September 2006 to 0.117 in February 2020; this is nearly a 45 percent decrease in 14 years. When the green curve reaches a value of zero on the left vertical axis, the absolute temperature of the surface of the earth will begin to decline, and the derivative of the red curve will be negative. The earth will be cooling. That point could be reached within the next decade.
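The percentage changes between Points A, B, C and February 2020 follow from simple arithmetic on the rounded rates quoted in the list above. A minimal sketch (the helper name `percent_change` is illustrative):

```python
def percent_change(start, end):
    """Percentage change from start to end, relative to start."""
    if start == 0:
        raise ValueError("percentage change from zero is undefined")
    return (end - start) / abs(start) * 100.0


# Warming rates in degrees C per decade, as quoted in the text.
rate_a = 0.088     # December 1979 (Point A)
rate_b = 0.136     # July 1988 (Point B)
rate_c = 0.211     # September 2006 (Point C)
rate_2020 = 0.117  # February 2020

a_to_b = percent_change(rate_a, rate_b)
b_to_c = percent_change(rate_b, rate_c)
c_to_2020 = percent_change(rate_c, rate_2020)

for label, value in [("A to B", a_to_b), ("B to C", b_to_c), ("C to 2020", c_to_2020)]:
    print(f"{label}: {value:+.1f} percent")
```

Because the inputs are rounded to three decimals, the results can differ by a point or so from percentages computed on the unrounded curve values.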

The premise of this analysis is that the rate of increase of surface temperatures over most of the past 40 years reflects the effects of the largest ENSO ever recorded, a transient climate event. Since September 2006 (Figure 3), the rate of increase in surface temperatures has slowly decreased as the intensity of the ENSO has decreased. The derivative of the red temperature trendline, that is, the green curve, does not remove all transient noise during the past 40 years of ENSO activity. The curve shows a slight increase and decrease in rates of change of temperatures during that period. Nevertheless, the continuous slowing of the rates of increase in surface temperatures since September 2006 is highly significant and should be accounted for in long-term earth temperature forecasts.

Analysis of Temperature Anomalies-Case 2

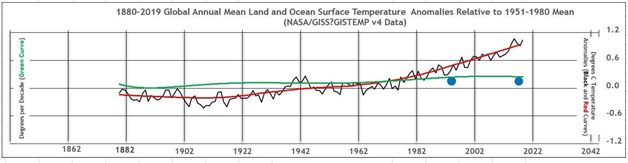

Scientists at NASA’s Goddard Institute for Space Studies (GISS) updated their Surface Temperature Analysis (GISTEMP v4) on January 14, 2020 (https://www.giss.nasa.gov/). To help validate the methodology of the HadCRUT4 earth temperature data analysis described above, a similar analysis was carried out using NASA earth temperature data and NASA’s derivation of the temperature trendline.

Figure 4 is comparable to Figure 1 but derived from a different data set. The NASA land-ocean temperature anomaly data extend from 1880 to the present with a 30-year base period from 1951-1980. The solid black line in Figure 4 is the global annual mean temperature, and the solid red line (trendline through the temperature data) is the five-year Lowess Smooth. Lowess Smooth (https://www.statisticshowto.datasciencecentral.com/lowess-smoothing/) creates a smooth line through a scatter plot to determine a trend, as does the Excel sixth-degree polynomial best fit method.

The director of the Earth System Science Center at the University of Alabama in Huntsville reported estimates of noise-free warming of the troposphere in 1994 and 2017 of 0.09 and 0.095 degrees C per decade, respectively, located by the solid blue circles on Figure 1 (Christy, J. R., May 8, 2019). The rates of warming of the earth surface estimated from the derivatives of the red temperature trendline shown on Figure 1 and Figure 4 are 0.078 (average of 170 years of data) and 0.068 (average of 138 years of data) degrees C per decade, respectively. These temperature estimates are probably too high because not all noise from several decades of strong ENSO activity has been removed from the raw temperature data. Before the beginning of the current ENSO in 1979, the average rate of warming from 1850 through 1979 estimated from the derivatives of the red temperature trendline is 0.038 degrees C per decade, possibly the best estimate of the long-term rate of warming of the earth.

Truth and Consequences

The “hockey stick graph”, which had been cited by the media frequently as evidence for out-of-control global warming over the past 20 years, is not supported by the current temperature record (Mann, M., Bradley, R. and Hughes, M. 1998). The graph is no longer seen in the print media.

None of 102 climate models of the mid-troposphere mean temperature comes close enough to predicting future temperatures to warrant drastic changes in environmental policies. The models start in the 1970s at the beginning of a time period that culminated in the strongest ENSO ever recorded and by 2015, less than 40 years, the average predicted temperature of all the models is nearly 2.4 times greater than the observed global tropospheric temperature anomaly in 2015 (Christy, J. R. May 8, 2019). The true story of global climate change has yet to be written.

The peak surface warming during the ENSO was 0.211 degrees C per decade in September 2006. The highest global mean surface temperature anomaly ever recorded was 1.111 degrees C in February 2016. These occurrences are possibly related to the increased quality and density of ocean temperature data from the two earth-orbiting MSU satellites described previously rather than indicative of a significant long-term increase in the warming of the earth. Earlier large-intensity ENSO events may not have been recognized due to the absence of advanced satellite coverage over oceans.

The use of a temperature trendline to remove high-frequency noise did not eliminate the transient effects of the longer wavelength components of ENSO warming over the past 40 years, so estimates of the rate of warming for that period in this study still include background noise from the ENSO. A noise-free signal for the past 40 years probably lies closer to 0.038 degrees C per decade, the average rate of warming from 1850 to the beginning of the ENSO in 1979, than to the average rate from 1979 to the present, 0.168 degrees C per decade. The higher number includes uncorrected residual ENSO effects.

Foster and Rahmstorf (2011) used average annual temperatures from five data sets to estimate average earth warming rates from 1979 to 2010. Noise removed from the raw mean annual temperature data is attributed to ENSO activities, volcanic eruptions and solar variations. The result is said to be a noise-adjusted temperature anomaly curve. The average warming rate of the five data sets over 32 years is 0.16 degrees C per decade compared to 0.17 degrees C per decade determined by this study from 384 monthly points derived from the derivative of the temperature trendline. Foster and Rahmstorf (2011) assume the warming trend is linear based on one averaged estimate, and their data cover only 32 years. Thirty years is generally considered to be a minimum period to define one point on a trend. This 32-year time period includes the highest intensity ENSO ever recorded and is not long enough to define a trend. The warming curve in this study is curvilinear over nearly 170 years (green curve on Figures 1 and 3) and is defined by 2,032 monthly points derived from the temperature trendline derivative.

From 1979 to 2010, the rate of warming ranges from 0.08 to 0.20 degrees C per decade. That trend is not linear.

Conclusions

The perceived threat of excessive future temperatures may stem from an underestimation of the unusually large effects of the recent ENSO on natural global temperature increases. Nearly 40 years of natural, transient warming from the largest ENSO ever recorded may have been misinterpreted to include significant warming due to anthropogenic activities. All warming estimates are theoretical and too small to measure. These facts are indisputable evidence that global warming of the planet is not a future threat to humankind.

The scientific goal must be to narrow the range of uncertainty of predictions with better data and better models before prematurely embarking on massive infrastructure projects. A rational environmental protection program and a vibrant economy can co-exist. The challenge is to allow scientists the time and freedom to work without interference from special interests. We have the time to get the science of climate change right. This is not the time to embark on grandiose projects to save humankind, when no credible threat to humankind has yet been identified.

Acknowledgments and Data

All the raw data used in this study can be downloaded from the HadCRUT4 and NOAA websites.

- http://www.metoffice.gov.uk/hadobs/hadcrut4/data/current/series_format.html
- https://research.noaa.gov/article/ArtMID/587/ArticleID/2461/Carbon-dioxide-levels-hit-record-peak-in-May

References

- Boden, T.A., Marland, G., and Andres, R.J. (2017). National CO2 Emissions from Fossil-Fuel Burning, Cement Manufacture, and Gas Flaring: 1751-2014, Carbon Dioxide Information Analysis Center, Oak Ridge National Laboratory, U.S. Department of Energy, doi:10.3334/CDIAC/00001_V2017.

- Christy, J. R., and R. T. McNider, 1994: Satellite greenhouse signal. Nature, 367, 325.

- Christy, J. R., 2017: Lower and mid-tropospheric temperature. [in State of the Climate 2016]. Bull. Amer. Meteor. Soc., 98, 16, doi:10.1175/2017BAMSStateoftheClimate.1.

- Christy, J. R., May 8, 2019. The Tropical Skies Falsifying Climate Alarm. Press Release, Global Warming Policy Foundation. https://www.thegwpf.org/content/uploads/2019/05/JohnChristy-Parliament.pdf

- Foster, G. and Rahmstorf, S., 2011. Environ. Res. Lett. 6, 044022.

- Goddard Institute for Space Studies. https://www.giss.nasa.gov/

- Golden Gate Weather Services, Apr-May-Jun 2019. El Niño and La Niña Years and Intensities. https://ggweather.com/enso/oni.htm

- HadCrut4dataset. http://www.metoffice.gov.uk/hadobs/hadcrut4/data/current/series_format.html

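- Karl, T. R., Arguez, A., Huang, B., Lawrimore, J. H., McMahon, J. R., Menne, M. J., et al. (2015). Possible artifacts of data biases in the recent global surface warming hiatus. Science, Vol. 348, no. 6242, pp. 1469-1472.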

- Lenssen, N. J. L., Schmidt, G. A., Hansen, J. E., Menne, M. J., Persin, A., Ruedy, R., et al. (2019). Improvements in the GISTEMP Uncertainty Model. Journal of Geophysical Research: Atmospheres, 124, 6307–6326. https://doi.org/10.1029/2018JD029522

- Lowess Smooth. https://www.statisticshowto.datasciencecentral.com/lowess-smoothing/

- Mann, M., Bradley, R. and Hughes, M. (1998). Global-scale temperature patterns and climate forcing over the past six centuries. Nature, Volume 392, Issue 6678, pp. 779-787.

- McKitrick, R. (2015). A First Look at ‘Possible artifacts of data biases in the recent global surface warming hiatus’ by Karl et al., Science 4 June 2015. Department of Economics, University of Guelph. http://www.rossmckitrick.com/uploads/4/8/0/8/4808045/mckitrick_comms_on_karl2015_r1.pdf

- Mears, C. and Wentz, F. (2016). Sensitivity of satellite-derived tropospheric temperature trends to the diurnal cycle adjustment. J. Climate. doi:10.1175/JCLI-D-15-0744.1. http://journals.ametsoc.org/doi/abs/10.1175/JCLI-D-15-0744.1?af=R

- Morice, C. P., Kennedy, J. J., Rayner, N. A., Jones, P. D., (2012). Quantifying uncertainties in global and regional temperature change using an ensemble of observational estimates: The HadCRUT4 dataset. Journal of Geophysical Research, 117, D08101, doi:10.1029/2011JD017187.

- NOAA Research News, June 4, 2019. https://research.noaa.gov/article/ArtMID/587/ArticleID/2461/Carbon-dioxide-levels-hit-record-peak-in-May

- Palmer, P. I. (2018). The role of satellite observations in understanding the impact of El Nino on the carbon cycle: current capabilities and future opportunities. Phil. Trans. R. Soc. B 373: 20170407. https://royalsocietypublishing.org/doi/10.1098/rstb.2017.0407.

- Perkins, R. (2018). https://www.caltech.edu/about/news/new-climate-model-be-built-ground-84636

- Science 26 June 2015. Vol. 348 no. 6242 pp. 1469-1472. http://www.sciencemag.org/content/348/6242/1469.full

- Spencer, R. W., Christy, J. R. and Grody, N. C. (1990). Global Atmospheric Temperature Monitoring with Satellite Microwave Measurements: Method and Results 1979–84. Journal of Climate, Vol. 3, No. 10 (October) pp. 1111-1128. Published by American Meteorological Society.

Similar material on the WUWT website is copyright © 2006-2019, by Anthony Watts, and may not be stored or archived separately, rebroadcast, or republished without written permission. For permission, contact Watts. All rights reserved worldwide.

Similar material on the medium.com website may need permission to be republished.

All I can say at the moment is…”The warming can’t come fast enough! I am freezing my *ss off and I live in Texas. Good Gawd I feel sorry for you northerners.”

Sun will decide what happens next and when, but it does appear that there is still a bit of warming in front of us, mainly due to oceans’ stored energy oscillating along absorption/release cycles.

According to a number of solar scientists, the sun is heading for a prolonged minimum. Working out the effect of a deep solar minimum on global cooling isn’t an easy task. A couple of years ago I did an exercise analysing the Maunder minimum type effect on the N. Hemisphere’s climate trends.

The ‘conclusion’ was that a ‘short’ solar minimum effect would be negligible while a 50 year Maunder type minimum would result in up to 0.75 degree C temperature fall, or about 20 years of cooling in excess of 0.5 degree C.

The analysis is based on the past ‘reality’, which suggest there are long term natural variability cycles, not necessarily directly related to the deep solar minima.

The intensity of cooling that may occur depends where the Maunder type minimum falls in relation to the multi-decadal & multi-centenary cycles.

In this exercise I looked at the possibility that the MM start coincides with any of the three future cycles: SC25 or SC26 or SC27.

In this link I show a graphic representation of my analysis

http://www.vukcevic.co.uk/NH-GM.htm

From the above, accounting for the Atlantic’s multi-decadal and global multi-centenary trends, initial cooling was a slow process while the subsequent warming appears to have been more rapid.

Many years ago I stated that the climate effects of solar variability would be greatly modulated by the combined effect of separate cycles in all the ocean basins sometimes supplementing and sometimes offsetting the solar effect on jet stream tracks (which affects global cloudiness).

A cooling of 0.75 C average would be “peanuts” compared to the temperature day/night/seasonal swings. I would think more important would be some kind of NH/SH cyclical cooling over land caused by jet stream swings. The average air temp, as by satellite, would not show this since the ocean is ~70% of the data and with the equatorial belt, even more. Average world air temp data could vary little while the USA and Northern Europe freezes to an ice age.

Hi BFL

Yes, I agree, even individual years annual temperature at most of the N.H’s locations vary as much and often more. My analysis is based on the estimated drop of about 1C degree in the CET (Central England Temperature) during the Maunder minimum.

Imo, the downside to a cooling trend/period is not limited just to a change in temperature. The weather this spring across much of the NH was lousy for farmers. Next spring should be similar or worse for getting crops in the ground. The question is will early winter/delayed spring become a pattern in the years ahead, perhaps for several decades. The weather over the last several months has been very similar to last year, so far. Now to see if the storms start coming in off of the Pacific around late Dec/early January to ease the fire danger.

No such thing as bad weather, just the wrong clothing. 😇 Snowing here.

clothing doesn’t help when you have to drive in it. Not one bit.

Unless you are driving an EV and have to walk when your battery dies

Oh come on! It’s not even winter and you’re in the middle of a catastrophic global-warming crisis!

This is consistent with my hypothesis that a more active sun reduces global cloudiness by making jet stream tracks more zonal and causes them to shift poleward so that more energy enters the oceans and El Ninos become more dominant relative to La Ninas.

That sounds very plausible. During the next decades, with a less active sun, there should be more La Ninas then and much less temperature increase (or even a decrease). Unfortunately, the policy makers will not be patient enough to wait for that to happen. Luck is with the dumb ….

Does anyone take any notice of these findings?

Not yet but the evidence is accumulating so they must eventually.

Shows what you can do with headlines. It could just as easily be “ Recent Global warming .154 degrees per decade, Up from historic .038”…..which puts a totally different spin on the article.

Why that’s a 400% increase!!!

If your temperature readings are only accurate to 0.1*C, then your warming/cooling calculations are only significant to 0.1*C per decade. I strongly doubt that readings from multiple sources are accurate to better than 1*C on a global scale. Extrapolation of air temperature over vast ocean grids doesn’t cut it. Why is this point so conveniently ignored?

Historically, temperature readings were only recorded to the nearest degree.

They also only recorded the daily high and low.

Anyone who believes you can get an accurate daily average temperature from just a high and low temperature has never been outside.

And what are the cumulative totals of all the uncertainties involved in this assertion?

The problem with error propagation in these series is that taking 27,353 temperature samples from stations all over the earth is not the same as measuring the length of a meter stick 27,353 times. However, the same Law of Large Numbers, and Central Limit Theorem, and standard deviation and uncertainty in the mean calculations can be made, but they mean very different things.

This is hardly ever made clear in discussions on these topics, but it works like this:

If I measure a meter stick with a meter-and-a-half-stick, marked in 1 mm graduations, the measurement error in my observations will be ±0.5mm. Let’s say I take 100 measurements, and my standard deviation is 1.2 mm. I employ the LLN and divide the standard deviation by the square root of 100 to get ±0.12mm. The measurement error pretty much falls out, because it gets added in quadrature and then divided by the number of measurements.

sqrt(100*0.5^2)/100 = ±0.05mm.

I’m not sure how to handle the two kinds of uncertainties here; are they added? If so, the final calculation would be something like 1000.2 ±0.2mm.

If you have 27,353 temperature measurements from all over Earth, they can be averaged, and the LLN be applied, but it has a completely different meaning. In the case of measuring the meter stick 100 times, our measurement increased in precision from 0.5mm to 0.2mm. The average measurement didn’t get more accurate, the mean was more precise, meaning closer to the “true” value.

If our 27,353 measurements have a standard deviation of 7.8 and the calculated mean is 14.3C, then the uncertainty in the mean is

7.8/sqrt(27353) = 7.8/165.4 = ±0.05C

In this case, though, the uncertainty in the mean is not a measure of the precision of the mean in relation to the “true” value, but instead is a prediction. It says that if the entire temperature collection process was repeated with all new values, the mean calculated from those measurements would have a probability of 67% of being within that ±0.05C of the first calculation of the mean.

It’s the same equations used in both cases, but the answer has a completely different meaning, because of the different kind of measurements being taken each time.
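The arithmetic in the comment above can be written out as a short sketch. The helper names are hypothetical, and the quadrature combination at the end is only one common convention for independent uncertainties, offered as an assumption rather than as the answer to the commenter's open question.

```python
from math import sqrt


def standard_error(std_dev, n):
    """Uncertainty in the mean of n independent observations."""
    return std_dev / sqrt(n)


def averaged_reading_error(reading_error, n):
    """n independent reading errors added in quadrature, then divided by n."""
    return sqrt(n * reading_error**2) / n


# Meter-stick example: 100 measurements, standard deviation 1.2 mm,
# reading error of 0.5 mm per measurement.
stick_sem = standard_error(1.2, 100)
stick_reading = averaged_reading_error(0.5, 100)

# Assumption: independent uncertainties combined in quadrature.
stick_combined = sqrt(stick_sem**2 + stick_reading**2)

# Temperature example: 27,353 samples with a standard deviation of 7.8.
temp_sem = standard_error(7.8, 27353)

print(f"stick: {stick_sem:.2f} mm and {stick_reading:.2f} mm, combined {stick_combined:.2f} mm")
print(f"temperature mean: +/-{temp_sem:.2f} C")
```

Both formulas assume the individual observations are independent of one another, which is the condition the next comment questions.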

James,

the LLN with its 1/sqrt(n)-argument for the composite error works if the n observations are i.i.d. (independent identically distributed). For non-i.i.d. observations we would have in the case of Gaussian noise a covariance matrix with “lots of” non-zero off-diagonal elements. Thus, computing the error (=standard deviation) of the average of n correlated observations leaves us with a finite non-vanishing error.

The same is true with any experiment: if we measure the gravitational constant of Newton’s law of gravity, say, in London, Paris, New York, Tokyo and Buenos Aires, we may treat these 5 measurements as independent, with no off-diagonal correlations. However, if we repeat our experiment in 10000 other places, including places like Baltimore and Kyoto, we cannot ignore the correlations in those measurements: the temperature and other physical variables which might have an impact on our measurement might be highly correlated for New York and Baltimore, and likewise for Tokyo and Kyoto. Therefore the correlation matrix for the aggregation of our measurement results will have non-diagonal elements, leading to a natural lower bound to the error of the combined value for the gravitational constant.

Problem is: how do we estimate possible covariance structures for all our measurement points?
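A minimal sketch of that lower bound, under a strong simplifying assumption that is not in the thread: every pair of observations shares the same correlation `rho`. With a common variance sigma^2, the variance of the mean is then sigma^2 * (1 + (n - 1) * rho) / n, which tends to sigma^2 * rho rather than zero. The value of `rho` below is hypothetical.

```python
import math

def error_of_mean(sigma: float, n: int, rho: float) -> float:
    """Standard deviation of the mean of n observations that share a
    common variance sigma**2 and a common pairwise correlation rho."""
    variance = sigma ** 2 * (1.0 + (n - 1) * rho) / n
    return math.sqrt(variance)

sigma = 7.8  # standard deviation quoted earlier in the thread
rho = 0.1    # hypothetical pairwise correlation, for illustration only

# Limit of the error as n grows without bound
floor = sigma * math.sqrt(rho)

for n in (5, 100, 27353):
    iid = error_of_mean(sigma, n, 0.0)
    correlated = error_of_mean(sigma, n, rho)
    print(f"n={n}: i.i.d. {iid:.3f}, correlated {correlated:.3f}")

print(f"floor for rho={rho}: {floor:.3f}")
```

With `rho = 0` the function reduces to the familiar sigma/sqrt(n). Real station data would need an estimated covariance matrix rather than a single shared correlation, which is exactly the open problem raised above.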

Measure with a cubit (approximate length of a forearm), mark it with chalk, cut it with a razor blade.

I took notice that some people think that the average temp of the whole planet, land and sea, was known at every point in time back to 1850 to within a hundredth of a degree.

Hard to get past that observation right off the bat.

How seriously can/should one take anything based on such obvious ridiculousness?

Oh, look, real science.

Is there a hyperlink to the official publication? I didn’t see one.

This is the official publication. Online review.

You’re going to get hammered about Bjorklund being a fossil fuel supporter no matter what he says.

Real scientists will focus on the discussion of the technical work. Anyone criticising the writer is not a real scientist. Real scientists seek out “what is right”. Everyone else seeks out “who is right”.

“The rate of warming of the surface of the earth does not correlate with the rate of increase of fossil fuel emissions of CO2 into the atmosphere.”

A correlation between temperature change and CO2 emissions may or may not happen – it’s irrelevant. What matters is the correlation between temperature change and forcing change. Ideally this “forcing” would be a sum of all the known forcings. Insert here the usual but necessary caveats: that we only have accurate CO2 measurements since 1958, that aerosol forcing is uncertain, etc.

And yes, there is pretty good correlation between a year’s temperature anomaly and its forcing anomaly. It depends on the exact datasets you use but it’s easily above 0.7. See figures 2 and 3 in this article:

https://judithcurry.com/2016/10/26/taminos-adjusted-temperature-records-and-the-tcr/

You may argue that this correlation is spurious, that it’s caused by something else, etc. You cannot argue it does not exist.

Also, the article’s figure 2 is supposed to show the lack of correlation between emissions and temperatures, but I have no idea where the “emissions” data comes from. (The text below says emissions, but the axis clearly shows concentrations).

Figure 2 shows an increase of 400 ppm since 1900, which is about four times the actual increase in CO2 concentrations since then. Even if one talked about CO2 emissions, these are roughly two times bigger than the increase in concentrations (it takes about 2 ppm of emissions to increase concentrations by 1 ppm), so it’s not clear how the chart gets to 400 ppm.

Figure 2 would also be wrong if it charted CO2 forcing, because it compares a *rate* of increase (in temperature’s case) with an absolute increase (for emissions, or concentrations, or whatever the other line on the chart represents). You can compare rates of increase, you can compare absolute increases, and more – but you have to be consistent and do it for both variables.
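The consistency point can be sketched as follows: correlate levels with levels, or rates with rates, but apply the same transformation to both variables. Both series below are hypothetical numbers invented for illustration, not forcing or temperature data, and the helper names are not from the article.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def first_differences(values):
    """Change from one step to the next, i.e. a rate per time step."""
    return [b - a for a, b in zip(values, values[1:])]

# Hypothetical series, one value per time step
forcing = [0.0, 0.1, 0.3, 0.6, 1.0, 1.5]
temperature = [0.00, 0.05, 0.12, 0.28, 0.45, 0.70]

# Absolute values against absolute values
levels_r = pearson(forcing, temperature)

# Rates against rates: both series are differenced the same way
rates_r = pearson(first_differences(forcing), first_differences(temperature))

print(f"levels: r = {levels_r:.2f}, rates: r = {rates_r:.2f}")
```

Mixing the two, for example correlating `first_differences(temperature)` against `forcing` itself, is the inconsistency described above.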

The increase in CO2 whether in absolute terms or in concentration clearly has no relation to the rate of temperature change so Fig 2 is useful.

There is no denying that the rate of temperature rise has decreased of late yet CO2 emissions continue to accelerate.

As the effects of the last El Nino fade away the ‘pause’ is returning.

However, the caption to Figure 2 states:

“The blue dotted curve showing total parts per million CO2 emissions from fossil fuels in the atmosphere …”

No, I believe that the curve is for CO2 concentration in the atmosphere, from all sources. And it definitely isn’t just “… from fossil fuels …”

I agree with Retired_Engineer_Jim.

It cannot be CO2 concentration in the atmosphere.

Look at where it starts out…way below 100ppm.

That (the blue dots in figure 2) has got to be the most poorly labelled and described graph I have ever seen in a serious work.

On the graph, it is labelled as if it is concentration of CO2 in the atmosphere, but in the description it seems to describe the dots as representing how much CO2 is being emitted from human sources at each point in time.

But it is a terrible description, mangled grammar and very badly stated.

See here:

“and the total reported million metric tons of carbon are converted to parts per million CO2 for the graph. ”

Total reported million metric tons?

I think it is meant to say total reported AMOUNT, converted to PPM of CO2, which presumably is a comparison of emitted CO2 from human sources to the total mass of the atmosphere.

The graph itself seems to say something very different from the text description below the graph.

Global CO2 Emissions from Fossil-Fuel Burning, Cement Manufacture, and Gas Flaring: 1751-2014

March 3, 2017

Source: Tom Boden and Bob Andres, Carbon Dioxide Information Analysis Center, Oak Ridge National Laboratory, Oak Ridge, Tennessee 37831-6290, USA

Gregg Marland, Research Institute for Environment, Energy and Economics, Appalachian State University, Boone, North Carolina 28608-2131, USA

Mt C divided by 24.4 equals ppm CO2

Thanks for the comment. If you think I am still wrong, let me know.

The author states: Global CO2 Emissions are from Fossil-Fuel Burning, Cement Manufacture, and Gas Flaring: March 3, 2017. Source: Tom Boden and Bob Andres. Carbon Dioxide Information Analysis Center Oak Ridge National Laboratory Oak Ridge, Tennessee 37831-6290

https://cdiac.ess-dive.lbl.gov/ftp/ndp030/global.1751_2014.ems

Mt C are converted to ppm CO2 by dividing by 24.4.

Tom
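For readers checking the units, here is a minimal sketch that places the divisor stated above next to a mass-based conversion. The figure of roughly 2.13 Gt C per 1 ppm of atmospheric CO2 is a commonly cited value and is an assumption here; it does not appear in the article or the comment. The input is a hypothetical round number, not a value read from the CDIAC file.

```python
THREAD_DIVISOR = 24.4  # divisor stated in the comment above (Mt C -> "ppm CO2")
GT_C_PER_PPM = 2.13    # assumption: commonly cited mass of carbon per 1 ppm CO2

def ppm_with_thread_divisor(mt_c: float) -> float:
    """Conversion as described in the thread: Mt C divided by 24.4."""
    return mt_c / THREAD_DIVISOR

def ppm_with_mass_factor(mt_c: float) -> float:
    """Conversion via mass of carbon: Mt C -> Gt C -> ppm CO2."""
    return (mt_c / 1000.0) / GT_C_PER_PPM

annual_mt_c = 10_000.0  # hypothetical annual emission in Mt C

thread_ppm = ppm_with_thread_divisor(annual_mt_c)
mass_ppm = ppm_with_mass_factor(annual_mt_c)

print(f"thread divisor: {thread_ppm:.1f}")
print(f"mass factor:    {mass_ppm:.2f}")
print(f"ratio:          {thread_ppm / mass_ppm:.1f}")
```

Under these assumptions the two conversions differ by a constant factor of about 87, which may account for part of the disagreement over what the blue dots represent.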

In Figure 2 of the above article, the blue curve y axis is clearly labeled as “Parts per million CO2 in the atmosphere” and the whole graph is clearly labeled as “Comparison of Atmospheric CO2 Concentration and Warming Rate of Surface Temperature of the Earth”.

Something is definitely wrong with the blue curve in this graph because it begins around year 1900 with an asserted atmospheric CO2 concentration of about 30 ppm and there is NO scientific data to support this. Alternatively, if the blue curve and entire graph were mislabeled and the blue curve was to represent only the anthropogenic amount of CO2 in the Earth’s atmosphere (as is asserted in the body text underlying the Figure 2 graph), then this too is not credible because the value around year 2012 exceeds 400 ppm. That is, the entire amount of atmospheric CO2 in 2012 was attributed to burning fossil fuels, with no natural contributions, which is also NOT supported by any scientific data.

Very sloppy for what otherwise appears to be a solid, credible article.

I agree with you Gordon.

Just what those blue dots represent is as clear as mud.

It cannot be what it says within the box, and what it says below the box makes no sense, and is so poorly written that it is impossible to discern what the author is meaning to assert.

Is it supposed to be cumulative emissions, or is each dot meant to represent annual emissions of CO2?

“I have no idea where the “emissions” data comes from”

As referenced under Figure 2, the data comes from the Carbon Dioxide Information Analysis Center

Just seems like another pile of doubt-mongering from, yes, a Petroleum Geologist in the pocket of “major oil and gas companies”. https://www.uh.edu/nsm/earth-atmospheric/people/faculty/tom-bjorklund/

MODERATOR WARNING!

(No more ad hominem/funding fallacies comments accepted, you MUST post a constructive critical comment against the article, or you will get snipped) SUNMOD

Loydo is such a hypocrite, using technology derived from petroleum to attack petroleum.

Just some friendly info, hypocrisy is not a virtue regardless of how you wield it.

Start writing your comments on the cave wall where you should be living.

The usual ad hominem smear from Loydo.

So sorry you don’t like the conclusion of the article.

The post is junk, the method is junk and the conclusion is junk. Concocting a reducing rate with an Excel sixth-degree polynomial best fit? Then using that to cast doubt, and here is the rub to prevent “changes in environmental policies”.

I know, I know, the type of industry he is employed by is a complete coincidence. So-called sceptics.

That is your OPINION, you have not posted any refutations against the paper.

You have nothing………

Oh look, the conspiracy theory from the troll … so let’s go with our own conspiracy that Loydo is a paid troll. Same argument really, as you clearly have links to environment groups.

I sometimes suspect he’s actually a skeptic trying to make doomsters look stupid.

If so, he is succeeding very well.

Lloydo,

I was fortunate to have lived in an era when the main, everyday scientific advances were from industrial rather than academic research. It is childish to declare that one of these is tainted for some reason. Geoff S

Geoff, speaking as an analytical chemist, do you believe it’s at all possible that the, “95% uncertainties of global annual mean surface temperatures range between 0.05 degrees C to 0.15 degrees C over the past 140 years,” when the lower limit of resolution of the thermometers was ±0.25 C?

It seems to me they’re magicking data out of thin air.

As done in the rest of AGW so-called science.

I agree completely Pat.

No way anyone knows average temp within anything close to that amount of uncertainty.

Of the whole planet no less?

It is preposterous.

Pat,

Answer is “No.”

Here is a relevant email from the Australian BOM –

11 April 2019

Dear Mr Sherrington,

Thank you for your correspondence dated 1 April 2019 and apologies for delays in responding.

Dr Rea has asked me to respond to your query on his behalf, as he is away from the office at this time.

The answer to your question regarding uncertainty is not trivial. As such, our response needs to consider the context of “values of X dissected into components like adjustment uncertainty, representative error, or values used in area-averaged mapping” to address your question.

Measurement uncertainty is the outcome of the application of a measurement model to a specific problem or process. The mathematical model then defines the expected range within which the measured quantity is expected to fall, at a defined level of confidence. The value derived from this process is dependent on the information being sought from the measurement data. The Bureau is drafting a report that describes the models for temperature measurement, the scope of application and the contributing sources and magnitudes to the estimates of uncertainty. This report will be available in due course.

While the report is in development, the most relevant figure we can supply to meet your request for a “T +/- X degrees C” is our specified inspection threshold. This is not an estimate of the uncertainty of the “full uncertainty numbers for historic temperature measurements for all stations in the ACORN_SAT group”. The inspection threshold is the value used during verification of sensor performance in the field to determine if there is an issue with the measurement chain, be it the sensor or the measurement electronics. The inspection involves comparison of the fielded sensor against a transfer standard, in the screen and in thermal contact with the fielded sensor. If the difference in the temperature measured by the two instruments is greater than +/- 0.3°C, then the sensor is replaced. The test is conducted both as an “on arrival” and “on departure/replacement” test.

In 2016, an analysis of these records was presented at the WMO TECO16 meeting in Madrid. This presentation demonstrated that for comparisons from 1990 to 2013 at all sites, the bias was 0.02 +/- 0.01°C and that 5.6% of the before tests and 3.7% of the after tests registered inspection differences greater than +/- 0.3°C. The same analysis on only the ACORN-SAT sites demonstrated that only 2.1% of the inspection differences were greater than +/- 0.3°C. The results provide confidence that the temperatures measured at ACORN-SAT sites in the field are conservatively within +/- 0.3°C. However, it needs to be stressed that this value is not the uncertainty of the ACORN-SAT network’s temperature measurements in the field.

Pending further analysis, it is expected that the uncertainty of a single observation at a single location will be less than the inspection threshold provided in this letter. It is important to note that the inspection threshold and the pending (single instrument, single measurement) field uncertainty are not the same as the uncertainty for temperature products created from network averages of measurements spread out over a wide area and covering a long-time series. Such statistical measurement products fall under the science of homogenisation.

Regarding historical temperature measurements, you might be aware that in 1992 the International Organization for Standardization (ISO) released their Guide to the Expression of Uncertainty in Measurement (GUM). This document provided a rigorous, uniform and internationally consistent approach to the assessment of uncertainty in any measurement. After its release, the Bureau adopted the approach recommended in the GUM for calibration uncertainty of its surface measurements. Alignment of uncertainty estimates before the 1990s with the GUM requires the evaluation of primary source material. It will, therefore, take time to provide you with compatible “T +/- X degrees C” for older records.

Finally, as mentioned in Dr Rea’s earlier correspondence to you, dated 28 November 2018, we are continuing to prepare a number of publications relevant to this topic, all of which will be released in due course.

Yours sincerely,

Dr Boris Kelly-Gerreyn

Manager, Data Requirements and Quality

Regardless of the resolution of the thermometers, what they wrote down was rounded to the nearest degree.

Which makes the resolution of the records themselves 1.0C.

Assuming the station attendant took the time to accurately read the thermometer.

People forget that reading old mercury/alcohol thermometers was not as easy as it is today, where you just copy down the numbers on the display.

Among other problems, unless your mark I eyeball was exactly at the same level as the top of the mercury column, you would add an error to your reading.

And don’t get me started regarding the problems with rounding the readings to the nearest degree.

Geoff, Boris Kelly-Gerreyn’s answer is 100% baffle-gab.

He didn’t answer your question at all.

If they’re not using aspirated-shield sensors to calibrate their field sensors, they’ve got nothing. And Boris didn’t mention aspirated standards at all.

I was going to make a similar sarcastic comment, Loydo. (You forgot the tag by the way.)

The data is hard to refute.

Translation: I can’t refute the science, so I’ll attack the scientist.

Typical Loydo.

Secondly, his link doesn’t support his claim that Dr. Bjorklund is in the pocket of petro-chemical interests.

I’d call on Loydo to apologize, but she isn’t man enough.

As opposed to alarmists being in the pockets of governments, with infinitely bigger……pockets than any oil company? Thought so.

Just seems like another wash of unicorn tears from, yes, a reality-denier hiding in the fetal position under his desk in his mom’s basement.

I see no doubt mongering here. It is a direct refutation and falsification of global warming/climate change, not a misdirection.

Loydoedoe — He’s in the pay of BIG OIL!!! He’s colluding w/BIG OIL!!! He is, he is!!!

Loydo is just another alarmist shill. https://en.wikipedia.org/wiki/Useful_idiot

Loydo

Do you have any facts or logic to offer to dispute the claims?

Of course he doesn’t. If he did he would have used those, instead of attacking the messenger because he can’t refute the message.

(Loydo, and others who are proven trolls never do this:

LINK

If everyone can do this, Moderators can go on vacation……) SUNMOD

SUNMOD

Thanks for the link to the interesting article. While I might quibble over some minor points, overall, I thought he did a good job in covering how people avoid honest debate. I particularly liked the statement:

MODERATOR WARNING!

(No more ad hominem/funding fallacies comments accepted, you MUST post a constructive critical comment against the article, or you will get snipped) SUNMOD

Fair enough. “a constructive critical comment”.

However, there are thousands of comments on this site that come nowhere near to meeting that requirement, tens of thousands. Would you like me to start pointing them out?

Yes, I disagree with a lot of what gets posted here, but the majority of my comments *are* accompanied by links to research papers, quotes, graphs, etc. The credibility of those sources is routinely derided with unsupported ad hominem.

I expressed my opinion, which was to question Bjorklund’s credibility:

1. Not his area of research

2. A consultant to “major oil and gas companies”.

3. His argument seems to rest on a plunging decadal temperature increase which is highly questionable.

Surely casting doubt is acceptable around here.

I think you are holding me to a higher standard.

(This is my only reply to you here, further comments from you over my moderation will be deleted)

(I do not have the time to moderate every comment, there are so many spam comments I have to view, then to look in the trash bin and then read in comment threads. Your comment was too far off the ideal comment standard for this blog, that I had to warn you to stop the attack on the PERSON, to instead attack what he WRITES, here is that forum policy:

“Trolls, flame-bait, personal attacks, thread-jacking, sockpuppetry, name-calling such as “denialist,” “denier,” and other detritus that add nothing to further the discussion may get deleted…”. This is what YOU wrote that is normally considered an attack on the person with a useless fallacy, since you didn’t address his research at all, the research you make clear you don’t like:

There will be no more attacks on the PERSON, it doesn’t help you and irritates others here who are tired of your funding/education/authority fallacies.) SUNMOD

Loydo

If someone has an apparent conflict of interest, then it warrants a more rigorous examination of their claims. That responsibility falls on the shoulders of the reader(s) who feel that the author has reason to be biased. However, ultimately, the claims made should be evaluated based on the legitimacy of the facts and the logic used to reach a conclusion. Anything less, and you are becoming the very thing you are complaining about — a biased participant.

MM, where to start. Loydo, your “senior scientist” at the IPCC is a former Exxon executive, fact check all you can. The previous ‘senior scientist’ at the IPCC was a senior executive at India Oil and a railway engineer. I would suggest that a geologist is FAR more qualified at climate than a railwayman. Your Death Cult God AlBoar is tied at the lips with Occidental, again fact check yourself. He is also tied at the market rigging game with his mates at Goldman Sachs, GIM.

bye

Well said Sunmod.

Loydo is a troll flying past throwing manure.

I have seen nothing that he has written that adds anything to the debate.

I would say that the geologists that I know and have met are very much down to earth. Pun intended. They have studied the earth and the climate and most have concluded that CO2 is a very minor player in the earth’s atmosphere and temperature.

They all know that the planet has been much colder and warmer than present.

Mainly because the aerosol fudge factor was defined to provide that correlation.

It is explained in the text, it is the accepted emissions data from Boden et al. with the units transformed from gigatons into ppm. As you remember, we have emitted about double of what the levels have increased in the atmosphere.

Javier

You said, “we have emitted about double of what the levels have increased in the atmosphere.” Which is a small fraction of the total CO2 involved in the Carbon Cycle!

To say nothing about how much if any has come out of or gone into solution in the oceans, no?

I am also rather dubious that the value of total human emissions or additions to the air is known with any accuracy or precision.

Concrete manufacturing, for example releases CO2, but much of this is absorbed back into the concrete over time, eventually amounting to all that was released.

And what about land use changes, forests removed, wood used in construction and manufacturing, etc?

I doubt anyone can do better than make some WAG on these numbers. Likewise between things like coal fires, nat gas flaring, oil field fires, lack of precise data on mined coal or pumped oil for many places and times, how accurately is CO2 from fossil fuels known?

Some of this may be nit picking and the amounts insignificant compared to what is known, but with no discussion of these and other sources, how should anyone know that?

Nicholas

That is really my point. It may just be coincidence that the annual increase in CO2 is approximately half of human emissions. One would expect that as the Earth warms, CO2 will come out of solution in the oceans and lakes. Also, as I wrote in my first article for WUWT, we don’t even have a good handle on the total anthropogenic contributions. We have a lower bound with fossil fuels and concrete, but the other sources are poorly characterized.

http://wattsupwiththat.com/2015/05/05/anthropogenic-global-warming-and-its-causes/

How is the contribution to atmospheric CO2 from the El Nino warming measured, or more accurately, segregated from the CO2 measurements?

if there were an “increase” of 400 ppm, that would mean we started with 0 ppm CO2, which is obviously impossible

Alberto, thanks for citing my article at Judith’s. There is a clear link between the warming and the increase in the anthropogenic forcing (in this case excluding volcanic and solar forcing, because the GMST used also excludes the impact of these agents, plus ENSO, which is natural variability).

It’s a pity that so many “sceptics” don’t want to see this. The much more interesting question is: what is the impact of this forcing (namely the forcing due to a doubling of CO2)? The result of my article gives 1.35 °C for TCR. This is remarkably below the model mean estimate of 1.85 for the CMIP5 models. The upcoming CMIP6 will enhance this value, as one speculates. The most interesting question is why the models are running hot, not whether they respond to the forcing in any way, which of course they do.

Does Greta know about this?

Clearly not. Maybe someone could induce her to read some science?

Can the Gretin read, or comprehend?

How DARE you!

“The rate of warming of the surface of the earth does not correlate with the rate of increase of fossil fuel emissions of CO2 into the atmosphere”

See also

https://tambonthongchai.com/2018/12/14/climateaction/

https://tambonthongchai.com/2018/12/19/co2responsiveness/

https://tambonthongchai.com/2018/09/08/climate-change-theory-vs-data/

https://tambonthongchai.com/2019/09/21/boondoggle/

https://tambonthongchai.com/2019/11/08/remainingcarbonbudget/

https://tambonthongchai.com/2018/05/06/tcre/

It was very clear from day 1 that the Karl, et al’s (K15) Pause Buster SST adjustments were bogus, and merely a desperate attempt by climate fraudsters to erase the inconvenient Pause, probably on order from the WH OSTP (Mr. Holdren) with the upcoming Paris COP. But to my understanding, after Tom Karl retired, the newest NCDC ERSST adjustment backed-out most of that K15 SST data fraud because it was an outright embarrassment for NCDC.

The Figure 2, as pointed out by Alberto above, looks very wrong. And with Fig 2 very wrong, the whole thing looks very bad.

So bad in fact, I’d have to guess this presentation is either an attempt to make skeptics look bad, or some kind of fake presentation to discredit some group who might use it.

Wishful thinking. The conclusions and basic data are sound even if one can nit pick details.

I don’t think you can dismiss Fig 2 by describing it as “nit-picking”, Stephen. It hit this layman between the eyes as an error immediately. The axis shows CO2 concentrations apparently increasing from 0 to 400 (414 with the outlying dot) since the start of last century.

So either the graph is wrong or the scale on the axis is wrong. Careless either way and in this perfervid (!) climate a gift to the “opposition”!

Agreed. The shape of the curve matches emissions not concentrations so the left labels should be changed. Possibly divided by 100. The growth of concentrations is pretty smooth (Keeling curve) but it is also not the result of fossil fuel emissions as the anthropogenic contribution annually is only about 3% of the flux.

Read the Figure 2 description newminster!

“The blue dotted curve showing total parts per million CO2 emissions from fossil fuels in the atmosphere is modified from Boden, T. A., et al. (2017); the time frame shows only emissions since 1900, and the total reported million metric tons of carbon are converted to parts per million CO2 for the graph.”

It is very poorly worded, and the description is inadequate even apart from the poor diction.

I think it is meant to represent total cumulative emissions from human sources only.

Instead of ” total reported million metric tons of carbon” it ought to say (if I am interpreting it correctly) total emissions of CO2.

Why mention million metric tons, if it just means total amount (which is reported in millions of metric tons, although it is billions these days), and why say carbon if it means CO2?

It is very unclear.

What is the point of taking a lot of trouble to do such an essay and not describe what is being represented in a way which is clear and concise?

Bad descriptions and imprecise language make what is being said hard to discern.

The purpose is to communicate, and we have ways to do that effectively.

Among the ways is to use clear language and concise descriptions.

There is nothing sound about adjusting the high quality buoy data so that it better matches the much lower quality ship borne sensor data.

Is it so difficult to see that figure 2 shows our emissions transformed into ppm units?

Not the usual way to present this data, but it is not wrong.

It is always more difficult to see what you don’t want to see.

Whole industries have been created out of not seeing what is obvious if you take the time and effort to “look then think, then look again, then think again”.

It is much easier to say it “doesn’t look right, because it challenges my preconceived notions, so it must be wrong”. And then tag it as a “fake presentation”.

Analysis done by “how it makes me feel” 101.

Correct. Whenever I see something that doesn’t look right, my first thought is, what am I not understanding, because I know that the problem most likely is with me.

That happened with Figure two, and a close reading of the paragraph below clearly explains it as Javier did.

I do not interpret the criticisms to mean that people do not WANT to see what is meant.

How about just taking such criticisms literally, IOW why not assume the person asking for a better explanation actually wants a better and more clear explanation?

It does not say it is cumulative emissions, although that seems to be what is meant.

It says carbon, but the source listed refers to CO2, which is not the same as carbon.

Did the author only count the part of CO2 which is carbon, or did he just say carbon when he meant CO2?

Why do that?

Why talk about millions of metric tons when what is meant is simply the amount of emissions, which may be given in units of millions of metric tons, but also may be given in kg, or in billions of regular tons, or in pounds, or whatever?

When vague and sloppy language is used, what is said becomes a matter of interpretation, and we can see just within a dozen or two comments how much of a problem that can be.

I for one do not read and comment to waste time or nit pick, but to obtain and disseminate accurate information, and to refute or clarify bad info.

It is probably not helpful to dismiss people with questions with a blanket assertion that they do not really want to know.

You are thinking of WANT as a conscious decision. It is a subconscious reflex that is NOT a decision. It is a subconscious act that filters your understanding what the data shows.

If you don’t like the presentation DO IT YOURSELF!!!

The references are included.

Demands for spoon-fed knowledge only show that you are unwilling to do any thinking for yourself, and that you require an analysis that is exactly how you would do it.

It is about the data, and whether or not it correlates to a measurable effect that requires action. It is not about the style of presentation.

Javier,

So are you claiming anthro-CO2 emissions raised the molar ratio of CO2 by 400ppm? Because that IS what Figure 2 is implying.

Forcing doesn’t care about anthro emissions.

Forcing doesn’t care whether the CO2 molecule came from petroleum combustion or natural organic matter decomposition.

And then a 6th-order polynomial fit? Seriously. Look at the HadCRUT data: clearly a linear downward trend (negative slope) from 1945 to 1976, but the polynomial slope stays positive, with the derivative staying above zero. Clearly wrong.
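One way to check a claim like this is to compute an ordinary least-squares slope over the window in question and compare its sign with the fitted curve's derivative. The sketch below uses a synthetic series built to rise, fall between 1945 and 1976, then rise again. It is not HadCRUT data, and the function name is made up for illustration.

```python
def slope_per_decade(years, values, start, end):
    """Least-squares slope of values against years within [start, end],
    expressed per decade."""
    points = [(y, v) for y, v in zip(years, values) if start <= y <= end]
    n = len(points)
    mean_year = sum(y for y, _ in points) / n
    mean_value = sum(v for _, v in points) / n
    sxx = sum((y - mean_year) ** 2 for y, _ in points)
    sxy = sum((y - mean_year) * (v - mean_value) for y, v in points)
    return 10.0 * sxy / sxx

def synthetic_anomaly(year):
    """Synthetic three-segment series, for illustration only."""
    if year <= 1945:
        return 0.010 * (year - 1900)
    if year <= 1976:
        return 0.450 - 0.005 * (year - 1945)
    return 0.295 + 0.018 * (year - 1976)

years = list(range(1900, 2021))
values = [synthetic_anomaly(y) for y in years]

early = slope_per_decade(years, values, 1900, 1945)
middle = slope_per_decade(years, values, 1945, 1976)
late = slope_per_decade(years, values, 1976, 2020)

print(f"{early:+.3f}, {middle:+.3f}, {late:+.3f} degrees C per decade")
```

A smooth high-degree polynomial fitted to the whole record can average over a short reversal like the middle segment, so comparing it against windowed slopes shows whether the fit has hidden a change of sign.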

Figure 2 is complete junk.

Then the author wrote this junk

“The peak surface warming during the ENSO was 0.211 degrees C per decade in September 2006. The highest global mean surface temperature ever recorded was 1.111 degrees C in February 2016; these occurrences are possibly related to the increased quality and density of ocean temperature data from the two, earth orbiting MSU satellites described previously. Earlier large intensity ENSO events may not have been recognized due to the absence of advanced satellite coverage over oceans.”

Well, he is now claiming the peak warming was in 2006, but that would put it in the middle of the Pause (hiatus). And the highest global mean surface temp, given to 3 decimal places???? And what previous discussion of “earth orbiting MSU satellites described previously”? Huh? Where previously? HadCRUT is the data presented.

Seriously, this whole manuscript is junk.

It’s all complete junk-gibberish.

I agree much of what is written here is very unclear.

Very badly written.

I had not even got to this part Joel, because so much of it at the very beginning was dubious and hard to understand.

Does he mean to say highest ever recorded ANOMALY?

He must mean that.

And so he should say that.

Re “The peak surface warming during the ENSO was 0.211 degrees C per decade in September 2006”, does this mean that a monthly rate of warming was extrapolated out to a decadal rate of change?

By ENSO does he mean during the (or an?) el nino?

ENSO is the name for the entire oscillation, which is continuous and ongoing.

Joel I am not defending all the article, just explaining that the emissions part of figure 2 is correct, when several people commented it was not. Emissions can be expressed in several units, normally Gigatons of carbon or CO2, but they can also be expressed as ppm equivalents which allows a comparison with CO2 levels in the atmosphere. That doesn’t mean that the figure implies anything about atmospheric levels as you say.
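For anyone who wants to check the units, here is a rough Python sketch of that conversion. The factor of about 2.13 gigatons of carbon per ppm of atmospheric CO2 is the commonly quoted approximation and is an assumption here, as are the function names and the sample figure of 10 GtC; none of it comes from the head post. The result is emissions in ppm-equivalent, which is not the same thing as the observed rise in concentration.

```python
# Assumed approximate conversion factor: gigatons of carbon per ppm of CO2.
GTC_PER_PPM = 2.13
# Mass of CO2 per unit mass of carbon (molar masses 44 and 12).
CO2_PER_C = 44.0 / 12.0


def gtc_to_ppm(gtc):
    """Convert emissions in gigatons of carbon to ppm-equivalent."""
    return gtc / GTC_PER_PPM


def gtco2_to_ppm(gtco2):
    """Convert emissions in gigatons of CO2 to ppm-equivalent."""
    return gtco2 / CO2_PER_C / GTC_PER_PPM


# Hypothetical annual emission figure, for illustration only.
print(round(gtc_to_ppm(10.0), 2), "ppm-equivalent per year")
```

Plotting emissions this way only puts them on the same axis as atmospheric levels; it says nothing by itself about how much of the emitted CO2 stays in the air.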

Javier,

The CO2 part of Fig 2 is just junk. Pure junk.

And the polynomial curve of the temperature fit is also worse than junk.

Admit it. Move on.

This is a bombshell moment for all those Extinction Rebellion protesters who demand that we trust the scientists. Well, here are the scientists telling us that the earth has not warmed since 1850 and, in any event, CO2 is not the control knob for catastrophic global warming. Game over.

Can’t wait to see the BBC’s coverage of this research. Oh….er…hang on….

John, that is not what the article above states. It clearly shows an ongoing increase in temperature, but at a moderate rate, and at a currently declining rate of increase.

Agreed. It is warming. That is good. There is no acceleration of warming, even though CO2 in the atmosphere is clearly rising. The models used to justify the climate change hysteria are broken.

Either CO2 is in and of itself a weaker forcer of atmosphere temperature than the propaganda claims, or the Earth’s negative feedback systems are powerful enough to counter most of that forcing from CO2 that does exist.

Either way, the case for catastrophic change fails.

And Paul we can easily see the Pause 🙂

Another point of error is Figure 3, where the author wrote, “3. September 2006 (Point C) marks a very strong El Nino …”

Not hardly. The El Nino that year (2006) was pretty weak, as ONI was only at/above +0.5 for five 3-month periods, with a max of +0.9.

ref: https://origin.cpc.ncep.noaa.gov/products/analysis_monitoring/ensostuff/ONI_v5.php
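The ONI check described above can be sketched in a few lines of Python. The +0.5 threshold held for five consecutive overlapping 3-month periods follows the usual NOAA convention; the strength bands (weak below 1.0, moderate, strong, very strong at 2.0 and above) are a commonly used informal scheme and are an assumption here. The sample values are hypothetical, chosen only to mimic "five periods, max +0.9", and are not copied from the ONI table.

```python
def longest_warm_run(oni, threshold=0.5):
    """Length of the longest run of consecutive values at or above threshold."""
    best = run = 0
    for value in oni:
        run = run + 1 if value >= threshold else 0
        best = max(best, run)
    return best


def classify(oni):
    """Label a stretch of ONI values using the assumed strength bands."""
    if longest_warm_run(oni) < 5:
        return "no El Nino episode"
    peak = max(oni)
    if peak >= 2.0:
        return "very strong"
    if peak >= 1.5:
        return "strong"
    if peak >= 1.0:
        return "moderate"
    return "weak"


# Hypothetical 3-month ONI values, for illustration only.
sample = [0.1, 0.3, 0.5, 0.7, 0.9, 0.9, 0.7, 0.2]
print(longest_warm_run(sample), classify(sample))
```

On numbers like these the episode only just qualifies as an El Nino at all, which is the point being made about calling it "very strong".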

All in all, very bad. This does not appear to me to be a manuscript that any knowledgeable skeptic should reference or cite. It is so bad, it looks intentionally bogus.

Doesn’t affect the main point which is that there was an El Niño and it shows up as a temperature spike.

If I make a gallon of chocolate ice cream, and you saw me mix in a spoonful of fresh dog crap with it, would you still buy that ice cream from me?

The entire credibility of this manuscript is shot.

Just like dog crap flavored ice cream…. don’t buy it. And most certainly do not ingest it.

Well I would say that the ice cream is the ENSO effect and the dog crap is human emissions of CO2.

That remains true regardless of the quality or otherwise of certain details of the head post.

There is no doubt that the rate of increase in global temperatures is out of sync with human emissions and one doesn’t need the details of the head post to appreciate that simple fact.

It appears to me that the author of the head post must have spent some time and put some effort into this essay and the included graphs.

So it makes no sense to not take the time to make the language clear and the descriptions concise.

I was just looking up one phrase from the article using Cortana, and the second item given was an article at Tamino trashing WUWT, using this article as an example of so-called shoddy work published here.

So I think it does matter, when warmistas are so quick to jump on any example to paint skeptics in a bad light.

BTW, I agree that one does not need the details of this essay to know that temps and human CO2 emissions are not correlated, regardless of whether one uses anomalies or not, rate of change of temp or not, annual or cumulative emissions, or total atmospheric concentration, or whatever metric one wants.

I’m inclined to agree with Joel. To me this paper looks like it might be a decoy: a paper so deliberately spiked with errors of methodology that it will attract copious criticism from alarmists, whereupon it will be “retracted” with a huge fanfare in the press. An early attack might be on the use of a high-order polynomial fit, given that such a fit is numerically unstable at the ends of the fit range. Even if it is not a deliberate decoy and is simply wrong, the errors will be jumped on and advertised as evidence that skeptics can’t do science, even if its conclusions turn out to be correct but for the wrong reasons. Treat with a long pair of tongs and put it in quarantine in a fume chamber with the hood down!

Desperation.

No, not desperation. I was about to compose and post my objections to use of a 6th order polynomial, for reasons such as the instability near the ends of the data set. You can try fits of order 5, 6 and 7 to see this effect. The mathematical derivation becomes uncoupled from the physical data. Geoff S
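For anyone who wants to try the order 5, 6 and 7 comparison, here is a minimal Python sketch. It is not the head post's method: the series is synthetic (a steady trend plus a 60-year wiggle, both assumed), the helper name fit_polynomial is made up, and the fit is plain least squares solved through the normal equations. It prints the three fitted values in the middle of the record and in the last year so the spread between orders can be compared.

```python
import math


def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit; returns a function that evaluates the fit.

    x is rescaled to [-1, 1] first, which keeps the normal equations
    usable for the modest orders tried here.
    """
    lo, hi = min(xs), max(xs)

    def scale(x):
        return 2.0 * (x - lo) / (hi - lo) - 1.0

    ts = [scale(x) for x in xs]
    n = degree + 1
    # Normal equations a * coeffs = b for the monomial basis 1, t, t^2, ...
    a = [[sum(t ** (i + j) for t in ts) for j in range(n)] for i in range(n)]
    b = [sum(y * t ** i for t, y in zip(ts, ys)) for i in range(n)]

    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]

    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(a[i][k] * coeffs[k] for k in range(i + 1, n))
        coeffs[i] = (b[i] - tail) / a[i][i]

    return lambda x: sum(c * scale(x) ** k for k, c in enumerate(coeffs))


# Synthetic series, for illustration only: not HadCRUT data.
years = list(range(1850, 2020))
series = [0.005 * (y - 1850) + 0.1 * math.sin(2 * math.pi * (y - 1850) / 60.0)
          for y in years]

fits = {order: fit_polynomial(years, series, order) for order in (5, 6, 7)}
for label, year in (("middle", 1935), ("last year", 2019)):
    values = [fits[order](year) for order in (5, 6, 7)]
    spread = max(values) - min(values)
    print(label, [round(v, 3) for v in values], "spread", round(spread, 3))
```

Swapping in a real temperature series for the synthetic one is the obvious next step; how far the orders disagree at the ends will depend on the data used.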

I have been looking at a website called temperature.global.

It claims real world, unadjusted temperatures, collated in real time.

There is not much info on the website. It claims to use mostly NOAA data.

The information that is presented, does seem to gel with this oped.

However, the current global temperature, and temperature trend, is certainly at odds with the current ‘wisdom’!

I would appreciate it, if anyone is familiar with this body of work and website, if the info contained therein, be trusted? It looks legit.

http://temperature.global/

Its Bogus.

Sorry. They use METAR data and what they do is a simple average of the values.

A simple average is guaranteed to give you the wrong answer.

Look folks it is warming.

There was an LIA
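A toy Python illustration of why a simple average goes wrong, assuming equal-angle latitude bands whose area shrinks roughly as the cosine of latitude. The two cells and their temperatures are hypothetical, and real products also have to deal with uneven station density, which this sketch ignores.

```python
import math


def simple_mean(cells):
    """Unweighted mean of the temperatures."""
    return sum(temp for _, temp in cells) / len(cells)


def area_weighted_mean(cells):
    """Mean weighted by cos(latitude), a stand-in for relative cell area."""
    weights = [math.cos(math.radians(lat)) for lat, _ in cells]
    total = sum(w * temp for w, (_, temp) in zip(weights, cells))
    return total / sum(weights)


# Hypothetical (latitude in degrees, temperature in C) pairs.
cells = [(0.0, 26.0), (60.0, 2.0)]
print(simple_mean(cells), round(area_weighted_mean(cells), 2))
```

The high-latitude cell covers about half the area of the equatorial one, so giving both the same vote drags the simple mean toward the cold value.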

Yes the English Lit adjusted data gives that nice comfy feeling. You have to slow baste your data while applying the Colonel’s 11 secret adjustments and then rest it for that perfect time and then you get that perfect finger lickin good data.

Steven,

In a few lines, what are the main objections to use of Metar for temperatures? I can surmise a few, but surely their trend over time since Metar started should agree with other measurements. If not, why not? Geoff S

I assume the METAR data you mention is the same data I get from the airport before I land. I sure as heck hope it is accurate or I might have an issue with density altitude or crosswinds.

All the new flight software gives you the “weather” at the airports along the way and the multiple airports around a city all are slightly different depending on the local conditions. So just what is the temperature of the area at any given moment? I can tell you the exact temperature at the measuring spot and can give you a general temperature of the area, but there is no way I can give you an exact answer to hundredths of a degree.

Then throw in the areas I fly over with no airports so I can estimate it from other airport data but again, I don’t know exactly. And from experience, there are a lot of areas that may be exposed or snow covered on winter nights that can be 10C deg colder than surrounding areas.

Sounds a lot like trying to measure the Earth. You can’t do it accurately so why don’t we all just stop trying to do it?

“Steven Mosher November 15, 2019 at 2:08 am

Its Bogus.

A simple average is guaranteed to give you the wrong answer.”

Thank you!

Mosher not even wrong as usual.

Steven,

How would you account for stations with 30-year cooling trends thoroughly mixed in with stations with 30-year warming trends? The only “global warming” that appears to be going on is on the average, because there are more stations warming than cooling.

How much of the surface temperature, I assume global, was recorded 170, 150, 130 or 110 years ago given ~70% of it is water?

This is precisely my objection to the whole idea of a “global temperature”. Even the coverage of terrestrial temperatures is sparse and unbalanced, especially from a century or more ago. Add to that the inherent uncertainties in the calibration of thermometers, time of measurements and so forth, and the data become deeply suspect. Of course, one cannot average an intensive property like temperature, so the whole exercise is futile.

I can measure 3 different temperatures in my back yard, with the same instrument. Global average is pseudoscience

I have 3 different brand thermometers in my yard, none in the sun and just now (2pm Pacific Standard time) they read on average 51.3 degrees F + or – 0.9, where 0.9 is the average deviation.

When trying to measure very small temperature differences, i.e., 1 degree C over 100 years, the uncertainties of the instruments (and any other uncertainties) will overwhelm establishing a true difference.

Most of the data are made up. There simply were not enough measurement points for at least half of the temperature record to be able to get an accurate determination. Scroll down to the interactive globe for an eye-opening view of how few temperature gauges there were in the world for most of recorded history.

https://data.giss.nasa.gov/gistemp/station_data_v3/

What on earth is the point of this? The results are actually not different from what is well known. HadCRUT has a rather lower trend than it should, because it doesn’t do the Arctic properly, as Cowtan and Way showed. A trend of about 0.15°C/decade for HadCRUT in recent times is what is generally found, and a trend of 0.07°C/decade since 1850 is also standard. To deal with the key points:

1. Yes, about 0.07 average trend since 1850. Most of that period has little GHG effect.

2. Yes, the rates don’t correlate. They shouldn’t. The rate of warming should correlate, if anything, with total GHG. That provides the flux.

3. Yes, there have been some large ENSO oscillations recently; more down than up. So?

Fitting a sixth order polynomial is not a sensible way of calculating trend. It has to be about right in the longer term, but direct regression is better. In the short term it shifts variation around, and so the dip at the end is probably spurious.
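Direct regression here just means an ordinary least-squares straight line through the anomalies. A minimal Python sketch follows; the series is synthetic, built to have exactly 0.07 degrees C per decade, and stands in for whatever dataset is actually being analysed. The function name is made up for the example.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Synthetic anomalies, for illustration only: 0.007 C per year from 1850.
years = list(range(1850, 2020))
anomalies = [0.007 * (y - 1850) - 0.4 for y in years]

# Slope is per year; multiply by 10 for degrees C per decade.
print(round(ols_slope(years, anomalies) * 10, 3))
```

Unlike a high-order polynomial, the straight line has one slope for the whole period, so nothing special happens at the ends of the record.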

The point is that the interpretation of the evidence differs from the alarmist meme.

For many other reasons the author’s interpretation looks more likely to be correct.

Quibbling about extraneous detail does not invalidate the central point.

Nick almost said something sensible and he hasn’t even realized it 🙂

Let’s correct a few things and see if Nick can get his head around it. The rate won’t equate directly to the total GHG either because you are dealing with radiative transfer and QM heat baths.

Let’s go out on a limb and assume you actually want to learn something. Nature magazine has a reasonable write-up on the quantum thermodynamic laws. It really isn’t hard; try reading

https://www.nature.com/articles/srep35568

The sections you need to pay attention to:

- Exchange of heat and work between two interacting systems

- Second law of thermodynamics

What will directly correspond is the thing they called “pseudo-temperature” (their term, not mine). I am not sure anyone has calculated it via direct methods for climate science, but let’s say the system isn’t in equilibrium (it’s always chasing): the value will be 2/3 the average energy. Now if you are puzzling why 2/3, it’s because you have a Maxwell–Boltzmann distribution with three degrees of freedom …… E = 3/2 KbT.

Google “Maxwell–Boltzmann distribution”

So QM actually predicts your classical answer will be wrong and by a rather large amount as all that other energy is in the QM domain 🙂

Now if you run the calculations with proper physics I suspect your graphs might line up at least for a while. Why they won’t remain locked is a story for another day.

We would expect to see greater rates of warming in lock-step with greater rates of forcing.

Positive and negative feedbacks complicate this over the short term, but on a multi-decade time scale each of those changes in forcing or feedbacks is either deterministic for changes in temperatures or offset by opposing feedbacks, which makes them non-deterministic for changes in temperature.

If increased CO2 due to our emissions created greater rates of warming, then there would be a correlation between the “cause” and the “effect”.

So increased emissions of CO2 are either not deterministic or sufficiently offset by opposing negative feedbacks.

The current rates of warming are similar to those that we know of in the past. It is reasonable to assume we are not on a “dangerous trajectory” that requires immediate action.

The intelligent course of action would be to monitor the situation for changes to the current understanding, but making substantial changes based on dubious claims is not warranted.

The difficulty with a presentation which involves extensive adjustments is that the non-expert like myself is left wondering whether the adjustments in any way reflected the bias of the author. I speak only for my ignorant self here, but I would be unhappy with that noise elimination if it produced a warmest result and I think I should try to be consistent.

Doubtless those of you who understand such things can explain.

I would love this to be correct and to be spread around but I fear the XR mob will just say he is an oil company person.

It took two minutes to find this article on the University of Houston website:

https://www.uh.edu/nsm/earth-atmospheric/people/faculty/tom-bjorklund/an-analysis-of-the-mean-global-temperature-in-2031_january-2019_revised.pdf

He admits his expertise is in the oil industry and only has a general knowledge of climate science. They will jump on that.