Guest Essay by Kip Hansen

Preview: In this essay I will discuss the efforts of various scientific bodies and individual scientists to regularize (to bring into line with correct scientific procedures) the budding field of science investigating the effects of increasing atmospheric concentrations of CO2 on the oceans: their chemical make-up, including pH; the atmosphere/ocean carbon cycle; and what those changes might mean for ocean organisms over the next 100 years. The subject is popularly known as Ocean Acidification (hereafter OA).

The 6 August 2015 issue of the journal Nature carried a highlight article under the subject heading Ocean Acidification entitled “Seawater studies come up short — Experiments fail to predict size of acidification’s impact.” (.pdf here)

The Nature highlight article, by Daniel Cressey (a full-time Nature reporter based in London), states:

“The United Nations has warned that ocean acidification could cost the global economy US$1 trillion per year by the end of the century, owing to losses in industries such as fisheries and tourism. Oyster fisheries in the United States are estimated to have already lost millions of dollars as a result of poor harvests, which can be partly blamed on ocean acidification.

The past decade has seen accelerated attempts to predict what these changes in pH will mean for the oceans’ denizens — in particular, through experiments that place organisms in water tanks that mimic future ocean-chemistry scenarios.

Yet according to a survey published last month by marine scientist Christopher Cornwall, who studies ocean acidification at the University of Western Australia in Crawley, and ecologist Catriona Hurd of the University of Tasmania in Hobart, Australia, most reports of such laboratory experiments either used inappropriate methods or did not report their methods properly.”

(All in reference to Cornwall and Hurd, ICES J. Mar. Sci., 2015; http://dx.doi.org/10.1093/icesjms/fsv118)

followed by:

“Cornwall says that the “overwhelming evidence” from such studies of the negative effects of ocean acidification still stands. For example, more-acidic waters slow the growth and worsen the health of many species that build structures such as shells from calcium carbonate. But the pair’s discovery that many of the experiments are problematic makes it difficult to assess accurately the magnitude of effects of ocean acidification, and to combine results from individual experiments to build overall predictions for how the ecosystem as a whole will behave, he says.”

(Just to be clear, the two quotes above are from the Cressey Nature highlight.)

The paper by Cornwall and Hurd is a masterful piece of science of a type rarely seen in academia today (with a few exceptions to be discussed later). It examined the experimental design of the current crop of papers in a scientific field and evaluated whether the study designs and the analyses of results were appropriate for returning scientifically meaningful results.

This paper was published in the International Council for the Exploration of the Sea (ICES) Journal of Marine Science. Here’s the abstract:

“Ocean acidification has been identified as a risk to marine ecosystems, and substantial scientific effort has been expended on investigating its effects, mostly in laboratory manipulation experiments. However, performing these manipulations correctly can be logistically difficult, and correctly designing experiments is complex, in part because of the rigorous requirements for manipulating and monitoring seawater carbonate chemistry.

To assess the use of appropriate experimental design in ocean acidification research, 465 studies published between 1993 and 2014 were surveyed, focusing on the methods used to replicate experimental units. The proportion of studies that had interdependent or non-randomly interspersed treatment replicates, or did not report sufficient methodological details was 95%. Furthermore, 21% of studies did not provide any details of experimental design, 17% of studies otherwise segregated all the replicates for one treatment in one space, 15% of studies replicated CO2 treatments in a way that made replicates more interdependent within treatments than between treatments, and 13% of studies did not report if replicates of all treatments were randomly interspersed. As a consequence, the number of experimental units used per treatment in studies was low (mean = 2.0).

In a comparable analysis, there was a significant decrease in the number of published studies that employed inappropriate chemical methods of manipulating seawater (i.e. acid–base only additions) from 21 to 3%, following the release of the “Guide to best practices for ocean acidification research and data reporting” in 2010; however, no such increase in the use of appropriate replication and experimental design was observed after 2010.

We provide guidelines on how to design ocean acidification laboratory experiments that incorporate the rigorous requirements for monitoring and measuring carbonate chemistry with a level of replication that increases the chances of accurate detection of biological responses to ocean acidification.”

(I have added paragraphing to the above for readability – kh)

Note: Despite heroic efforts, I have been unable to find a freely available full copy of C&H 2015 online. Chris Cornwall kindly supplied me with an Advance Access .pdf copy of the full study and the supplemental information file. Those wishing to read the full study should either email Dr. Cornwall requesting a copy or email me (my first name at the domain i4 dot net).

First, let me point out that Chris Cornwall and Catriona Hurd are OA research insiders. Unfortunately, the title of the Nature highlight makes their study sound like an indictment of OA research, which it is not.

Chris Cornwall tells me (in personal communication) that their study has been generally well received in the OA field and that “Many scientists have received the suggested solutions with open arms.” And while the Nature highlight will go a long ways towards making the points raised in C&H 2015 clear to scientists all across the OA research field — a good thing — he felt that the Nature piece had elements that were “overly dramatic or incorrect” which had been latched onto by the popular press. Further, Chris says “Debates between scientists about improving a field of research do not invalidate that field, contrary to that reported by the Daily Mail.”

(Late addition: Chris Cornwall responds to the Daily Mail here. There is some slight contradiction between his public statement and his published paper, but he does have to continue to work in the field. Cornwall and Hurd intentionally did not publish the details of their analyses of the 465 papers (which ones were appropriate, which inappropriate, and why); they published, as a supplement, only a list of the titles of the studies surveyed, for what I assume is the same reason.)

Just what have he and Catriona Hurd done? They have looked at 465 OA papers published from 1993 to 2014 (which must have been an incredibly time-consuming task), mostly reports of laboratory manipulation experiments (manipulating atmospheric CO2 concentrations associated with ocean water tanks, usually with oceanic organisms, and pH manipulation of the same). They evaluated each one for inappropriate experimental design and/or analysis of results. The main problem with the papers, though not the only one, concerned the replication (or lack of replication) of experimental units.

Definition: Experimental units for this discussion can be thought of as individual tanks of sea water + organisms to be studied + treatment (or lack of treatment, in the case of a control tank). In the following diagram, from Cornwall and Hurd (C&H 2015), only the experimental designs preceded by the letter A are acceptable; all those preceded by B are not (ref: Hurlbert 1984). (In 2013, Hurlbert used the definition “the smallest… unit of experimental material to which a single treatment (or treatment combination) is assigned by the experimenter and is dealt with independently …”).
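For readers who have never set up such an experiment, here is a minimal Python sketch of the difference between an interspersed and a segregated bench layout. It assumes one tank per bench position and treats each tank as one experimental unit; the function names and treatment labels are hypothetical and are not taken from C&H 2015. The randomized version is a completely randomized arrangement of the kind Hurlbert (1984) counted as acceptable; the segregated version, with all replicates of one treatment side by side, is the kind to avoid.

```python
import random

def randomized_layout(treatments, replicates_per_treatment, seed=None):
    """Assign treatment labels to bench positions completely at random.

    Each tank is one experimental unit (hypothetical setup); the returned
    list gives the treatment for positions 0, 1, 2, ... in order.
    """
    labels = [t for t in treatments for _ in range(replicates_per_treatment)]
    rng = random.Random(seed)
    rng.shuffle(labels)  # random interspersion instead of one block per treatment
    return labels

def segregated_layout(treatments, replicates_per_treatment):
    """The layout to avoid: every replicate of a treatment grouped together."""
    return [t for t in treatments for _ in range(replicates_per_treatment)]

layout = randomized_layout(["ambient", "elevated_CO2"], 6, seed=1)
for position, treatment in enumerate(layout):
    print(position, treatment)
```

Fixing the seed makes the layout reproducible, so the actual arrangement can be reported in a methods section.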

The point is that “Regardless of the degree of precision that the treatment is applied and its effects measured, if treatment effects are confused with the effects of other factors not under investigation, then an accurate assessment of the effects of the treatment cannot be made.” If experimental units are not independent, if they are not truly randomized, if confounding factors can be seen to exist, then the results are not scientifically reliable.

What did C&H find in this regard? Out of the 465 OA studies done between 1993 and 2014, “The proportion of studies that had interdependent or non-randomly interspersed treatment replicates, or did not report sufficient methodological details, was 95%.” That leaves just 5% of the studies judged to have appropriate experimental designs.

We all know that many things can go wrong in lab experiments such as these, which can run for months, require constant monitoring of finicky details, and can be sabotaged by a moment’s inattention on the part of a lab assistant. We understand these factors; they are part of the difficulty of all lab work. But when the experimental design is insufficient for its purpose from the outset, time, money, and effort are wasted, and the results become difficult or impossible to interpret, and very difficult or impossible to use in any sort of meta-analysis across studies.

Further, “the number of experimental units used per treatment in studies was low (mean = 2.0).” Think about that: imagine doing a medical study, a randomized controlled trial (RCT), using only 2 patients per cohort. Then consider that there are obvious confounders linking the two patients, such as their being siblings! No journal would touch the resulting paper; it would have no significance at all. Granted, one might get away with reporting it as a case study, but it would never be considered clinically important or predictive. And yet that is precisely the situation we find generally in OA research: very small numbers of poorly isolated experimental units, often with confounders that obscure or invalidate treatment effects.
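To put a number on how little two units per treatment can show, here is a small Python sketch. It assumes a plain two-sample t-test with equal variances and a two-sided 5% threshold, and uses standard table values for the critical t; it is an illustration, not a calculation from C&H 2015. It returns how large the observed difference between treatment means must be, in units of the pooled standard deviation, before the test calls it significant.

```python
import math

# Two-sided 5% critical values of Student's t (standard table values)
# for the degrees of freedom used below: df = 2 * n - 2.
T_CRIT_975 = {2: 4.303, 4: 2.776, 6: 2.447, 8: 2.306, 18: 2.101}

def smallest_significant_difference(n_per_group):
    """Smallest observed difference between two treatment means, in pooled
    standard deviations, that gives p < 0.05 in a two-sample t-test with
    n_per_group independent experimental units per treatment."""
    df = 2 * n_per_group - 2
    # t = diff / (sd * sqrt(2/n)) > t_crit  =>  diff / sd > t_crit * sqrt(2/n)
    return T_CRIT_975[df] * math.sqrt(2.0 / n_per_group)

for n in (2, 3, 5, 10):
    print(n, round(smallest_significant_difference(n), 2))
```

With 2 tanks per treatment, the observed difference has to exceed about 4.3 pooled standard deviations; with 10 tanks per treatment, it drops below 1.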

C&H report (at the head of the discussion section):

“This analysis identified that most laboratory manipulation experiments in ocean acidification research used either an inappropriate experimental design and/or data analysis, or did not report these details effectively. Many studies did not report important methods, such as how treatments were created and the number of replicates of each treatment. The tendency for the use of inappropriate experimental design also undermines our confidence in accurately predicting the effects of ocean acidification on the biological responses of marine organisms.”

The authors nonetheless maintain that even poorly designed studies contain useful information, even if getting at it requires a full re-analysis of the reported results. Some experiments, however, are hopelessly compromised by poor study design.

Having determined the biggest problem to be:

“Confusion regarding what constitutes an experimental unit is evident in ocean acidification research. This is demonstrated by a large proportion of studies that either treated the responses of individuals …. to treatments as experimental units, when multiple individuals were in each tank, or used tank designs where all experimental tanks of one treatment are more interconnected to each other than experimental tanks of other treatments (181 studies total).”
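Why does treating individuals in a shared tank as replicates matter? The following minimal Python simulation is built on made-up assumptions: two treatments with no real effect at all, 2 tanks per treatment, 5 animals per tank, and a random "tank effect" shared by every animal in a tank. It compares how often each of two analyses declares a "significant" difference. The variance values and function names are hypothetical. The point is only the logic of pseudo-replication.

```python
import random
import statistics

# Two-sided 5% Student's t critical values; this table covers only the
# default parameters below (df = 18 for animals, df = 2 for tank means).
T_CRIT = {2: 4.303, 18: 2.101}

def t_stat(a, b):
    """Pooled-variance two-sample t statistic."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * statistics.variance(a)
           + (nb - 1) * statistics.variance(b)) / (na + nb - 2)
    return (statistics.mean(a) - statistics.mean(b)) / (sp2 * (1 / na + 1 / nb)) ** 0.5

def simulate(n_sims=2000, tanks_per_treatment=2, animals_per_tank=5,
             tank_sd=1.0, animal_sd=1.0, seed=42):
    """Return false-positive rates when there is NO treatment effect, for
    (a) animals analysed as independent replicates (pseudo-replication) and
    (b) tank means analysed as the experimental units."""
    rng = random.Random(seed)
    pseudo_hits = tank_hits = 0
    for _ in range(n_sims):
        groups = []
        for _treatment in range(2):
            tanks = []
            for _tank in range(tanks_per_treatment):
                tank_effect = rng.gauss(0.0, tank_sd)  # shared by all animals in this tank
                tanks.append([tank_effect + rng.gauss(0.0, animal_sd)
                              for _ in range(animals_per_tank)])
            groups.append(tanks)
        # Wrong: every animal counted as a replicate.
        ind_a = [x for tank in groups[0] for x in tank]
        ind_b = [x for tank in groups[1] for x in tank]
        if abs(t_stat(ind_a, ind_b)) > T_CRIT[len(ind_a) + len(ind_b) - 2]:
            pseudo_hits += 1
        # Right: one value (the tank mean) per experimental unit.
        mean_a = [statistics.mean(t) for t in groups[0]]
        mean_b = [statistics.mean(t) for t in groups[1]]
        if abs(t_stat(mean_a, mean_b)) > T_CRIT[len(mean_a) + len(mean_b) - 2]:
            tank_hits += 1
    return pseudo_hits / n_sims, tank_hits / n_sims

pseudo_rate, tank_rate = simulate()
print(f"animals as units: {pseudo_rate:.3f}; tanks as units: {tank_rate:.3f}")
```

Because there is no true treatment effect, a sound analysis should come out "significant" only about 5% of the time. The tank-mean analysis stays near that level. Counting animals as replicates flags a spurious effect far more often, because it ignores the shared tank effect and inflates the degrees of freedom.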

C&H proceed to give suggestions on proper experimental design that will prevent the problems found in the majority of previous studies as well as a series of suggestions regarding statistical evaluation of results. They attempt to set a gold standard for OA research in which known problems are avoided to improve reliability, significance, and usefulness of results.

C&H recommend: 1) various approaches to be determined and adopted before the OA manipulation system is designed; 2) lab layout and randomization of the positions of experimental tanks; 3) measurement schemes to avoid pseudo-replication and the statistical confusion caused by mistreating measurements (interdependent measurements treated as independent, multiple measurements of the same unit treated as independent measurements, and other similar offenses); and 4) tips for reviewers (and for self-review by authors). Those interested in the details should read this section of the study. It is a valuable lesson in how complicated good experimental design can be, even for “simple” hypotheses (I give suggestions above on how to obtain a full copy of C&H 2015).

This study follows up on a major 2010 effort along the same lines by the European Project on OCean Acidification (EPOCA), which produced the booklet “Guide to best practices for ocean acidification research and data reporting” (mentioned in C&H 2015). The guide gave strict guidelines meant to correct the 21% of pH perturbation experiments that, as of 2010, had been found to use methods that did not properly replicate real ocean carbonate chemistry (see its sections on seawater carbonate chemistry). The good news from C&H 2015 is that the percentage of OA studies containing gross carbonate chemistry errors fell to just 3% after 2010 (down from 21% before 2010). The rest of C&H 2015 is the bad news: even though the “Guide to best practices…” contained an entire section on “Designing ocean acidification experiments to maximise inference” (Section 4 of the guide), 95% of the studies surveyed failed to meet minimal standards of experimental design. Some of these, of course, must have been carried out before the guide was published; nonetheless, C&H report no improvement in experimental design between 2010 and 2014.

This new field is to be congratulated on its internal attempts to set itself right – to correct endemic errors in its research and educate those involved in better ways to conduct that research so that results will be significant and meaningful in the real world – results that not only are correct and get published, but that add to the sum total of human knowledge.

And yes, it is a shame that so much effort and so many research dollars have been spent for results that, so far, cannot tell us very much that is reliably useful and almost nothing that can be considered accurately predictive. But the hopeful thing is that this field of endeavor is actively engaged in self-correction.

Try to imagine such a thing happening in some other field of Climate Science – insider scientists producing a survey of research that points out that the majority of those studies about some aspect of Climate Science are seriously flawed and will have to be redone with experimental designs and statistical approaches that will actually produce dependable, scientific results.

Back in the OA world, Chris Cornwall has expressed his hope that their new paper in ICES Journal of Marine Science (and the Nature editorial highlight, which significantly raised its profile) will bring improvements to OA experimental design over the next five years, similar to the improvements they found for the chemistry aspects of OA studies post-2010.

I hope so too – Chris Cornwall and Catriona Hurd have my congratulations and I wish the entire OA field success, looking forward to new research based on proper experimental design and correct oceanic carbonate chemistry.

* * * *

And elsewhere in Science?

Psychology has been rocked by this NY Times story, “Many Social Science Findings Not as Strong as Claimed”, which reports on the Reproducibility Project: Psychology. The original report summary is here: Estimating the Reproducibility of Psychological Science. The Times quotes Ioannidis (author of “Why Most Published Research Findings Are False”):

“Less than half [of 100 experiments were able to be replicated]— even lower than I thought,” said Dr. John Ioannidis, a director of Stanford University’s Meta-Research Innovation Center, who once estimated that about half of published results across medicine were inflated or wrong. Dr. Ioannidis said the problem was hardly confined to psychology and could be worse in other fields, including cell biology, economics, neuroscience, clinical medicine, and animal research.”

The Reproducibility Project (RP) was attempting to validate studies, not invalidate them; it involved the original authors in the design of replication attempts. Psychologists have long known that many of their journal articles reported experiments that were unlikely to be correct: results that exaggerated effect size and significance, or that did not reflect real effects at all. The RP is trying to help Psychology as a field of research regain some semblance of reliability and public confidence, especially after a series of high-profile exposés of falsified data and subsequent retractions.

Ioannidis gives a series of suggestions in his “Why Most Published….” paper on what could be done to improve this dismal record.

Reading Ioannidis will give you a lot of insight into what is wrong with CliSci research. Among the suggestions: “large studies with minimal bias should be performed on research findings that are considered relatively established, to see how often they are indeed confirmed. I suspect several established “classics” will fail the test.” For examples of this, see “Contradicted and initially stronger effects in highly cited clinical research” and, from the Mayo Clinic, “A Decade of Reversal: An Analysis of 146 Contradicted Medical Practices”.

Annemarie Zand Scholten and her team at the University of Amsterdam have produced an online course on Coursera (https://www.coursera.org), Solid Science: Research Methods, on the theory and practice of proper experimental design, aimed primarily at social science students and researchers.

In the field of forecasting, J. Scott Armstrong has been leading the way with “Standards and Practices for Forecasting.” There are several leaders in Statistics as well, battling against the dreaded “P-value hacking” (and here).
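One common form of p-value hacking is to measure many outcomes and report whichever one reaches p < 0.05. Here is a minimal Python sketch of why that misleads. It assumes the tests are independent and every null hypothesis is true, which is a simplification: real outcomes are usually correlated.

```python
def chance_of_false_positive(k_outcomes, alpha=0.05):
    """Probability that at least one of k independent tests of true null
    hypotheses comes out 'significant' at level alpha (assumes independence)."""
    return 1.0 - (1.0 - alpha) ** k_outcomes

for k in (1, 5, 10, 20):
    print(k, round(chance_of_false_positive(k), 3))
```

With 20 independent outcomes, the chance that at least one comes out "significant" by luck alone is about 64%.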

Those of us (if there is an “us” amongst readers) who believe we need better (not just more) and Feynmanian-honest (not just correct) science should applaud these efforts to improve various fields of research, to point out their flaws while avoiding the temptation to “throw the baby out with the bathwater”.

Much of the ongoing conversation about what to do with the peer-review system (which some believe to be broken) includes proposals such as advance registration of all proposed experiments, with their hypotheses, approvals, proposed methods and metrics, along with repositories for all research data and results (raw and processed), all findings, the resulting papers, and subsequent corrections. I believe all these efforts should be supported as well.

I encourage readers to share, in comments, other “self-correction of science” efforts that they are aware of.

It is long past time to end Climate Science’s standard approach which seems to be “Instead of Correction, Collusion.”

(and “Yes, you may quote me on that.”)

# # # # #

Author’s Comment Policy: I am happy to try to answer your questions about the topics I have brought up in this essay. I act here as a free-lance science journalist, and not a climate or oceanic scientist. Though somewhat knowledgeable, I am unable, and mostly unqualified, to answer questions regarding the science of AGW, CAGW, Global Warming, Global Cooling, Climate Change, Sunspot numbers, solar irradiation, ocean/CO2/carbonate chemistry or other related topics – and will not engage in conversations on those issues. It would be nice if comments here could be about the positive side of self-correcting science efforts and not on the “I knew those blank-ity-blank ocean acidification guys were full of it” side. Thank you for reading.

# # # # #

Ocean acidification: the latest in a chain of scare campaigns. Once again, the “science” is a sham. The buffering capacity of basalt in particular is ignored. A doubling of atmospheric CO2 does not cause a doubling in ocean CO2. How convenient to conveniently forget the oceans contain 48x more CO2 than the atmosphere. The effects are out by over a full order of magnitude. The figures I have seen are totally fraudulent: 0.4 pH points for a doubling of CO2 concentration suggests a very high percentage of the CO2 becomes carbonic acid (remember, pH is logarithmic). To add to the fraud, doubling the atmospheric CO2 does not mean doubling ocean CO2, far from it. Doubling atmospheric CO2, even if all the extra dissolves in the oceans, is only a 2% increase, hardly likely to do anything, certainly not dissolve the planet.
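Since the comment leans on pH being logarithmic, the arithmetic is worth spelling out. This minimal Python sketch treats pH as -log10 of the hydrogen ion concentration (strictly, activity). It only converts a pH change into a change in [H+] and says nothing about whether any particular projected pH change is right.

```python
def hydrogen_ion_ratio(delta_ph):
    """Factor by which [H+] rises when pH falls by delta_ph units.
    pH = -log10[H+], so a fall of delta_ph multiplies [H+] by 10**delta_ph."""
    return 10 ** delta_ph

print(round(hydrogen_ion_ratio(0.4), 2))
print(round(hydrogen_ion_ratio(0.1), 2))
```

A fall of 0.4 pH units means roughly 2.5 times the hydrogen ion concentration; a fall of 0.1 means roughly 1.26 times.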

Once again, scare-mongering LIES to dupe the science-illiterate into surrendering their freedoms. Really, how science-illiterate can anyone be to believe the ocean acidification scare-of-the-day?

Once again, the UN are the ones plugging the lies. Wake up: the UN is a massive fraud, one of the grandest if not the grandest in history. “Above all else, appear respectable”: one of the mottoes of the Fabian Society, one of the instigators of the UN.

Exactly, but when their temperature rise scare is a failure, where else can they go but into the deep oceans? They are grasping at straws.

Not straws, they need life jackets the size of Zeppelins.

High Treason,

You need to take into account the time frames involved. The deep oceans contain a lot more carbon derivatives than the atmosphere, but the exchange rate between the two is quite slow, with a half-life of about 40 years. As long as there is an increase in the atmosphere, the main increase is in the ocean surface layer (the “mixed layer”, a few hundred meters deep), at about 10% of the change in the atmosphere (due to buffer chemistry). It is there that the main influence (as far as there is an influence) may be seen.

The mixed layer contains about 1000 GtC as different inorganic carbon species (DIC, dissolved inorganic carbon); the atmosphere currently contains about 800 GtC as CO2. That is of the same order. The mixing between atmosphere and mixed layer is rapid, with a half-life of about 1 year.
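The difference between those two time frames can be sketched in Python. This assumes simple first-order (exponential) exchange and takes the half-lives quoted in this comment (about 1 year for atmosphere/mixed layer, about 40 years for exchange with the deep ocean) as given inputs rather than established values.

```python
def fraction_remaining(years, half_life_years):
    """Fraction of an initial imbalance still left after `years`, assuming
    simple first-order (exponential) exchange with the given half-life."""
    return 0.5 ** (years / half_life_years)

for years in (1, 10, 40):
    surface = fraction_remaining(years, 1.0)   # atmosphere <-> mixed layer (assumed 1 yr)
    deep = fraction_remaining(years, 40.0)     # exchange with deep ocean (assumed 40 yr)
    print(years, round(surface, 3), round(deep, 3))
```

Under these assumptions, after 10 years about 84% of an imbalance with the deep ocean would remain, while an imbalance with the mixed layer would be essentially gone.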

As a lot of sea life is in the mixed layer, it is important to know what the influence of a CO2 doubling is. So far there is no measurable influence on any sea life, so we need well-designed experiments to know what may happen with elevated CO2…

If (IF) Trenberth’s heat is being mixed into the deeper oceans faster than models predicted, then so is the CO2.

Michael,

An exchange of 0.001°C/decade at a level of 4-5°C may be possible (although highly unlikely), an exchange of 0.4% in DIC/decade (caused by a 4% change/decade in the atmosphere) is very unlikely to have been distributed over the deep oceans (certainly not by diffusion), or the increase in the mixed layer would have been (much) lower…
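The "0.4% in DIC for a 4% change in the atmosphere" figure follows from the buffer (Revelle) factor mentioned earlier in the thread. This minimal Python sketch assumes a buffer factor of 10 (the real value varies with seawater temperature and alkalinity) and small changes, where the relative change in DIC is roughly the relative change in pCO2 divided by the buffer factor.

```python
def relative_dic_change(relative_pco2_change, buffer_factor=10.0):
    """Approximate fractional change in mixed-layer DIC for a small fractional
    change in atmospheric CO2: (dDIC/DIC) ~= (dpCO2/pCO2) / buffer_factor.
    buffer_factor = 10 is an assumed, typical-order value."""
    return relative_pco2_change / buffer_factor

print(relative_dic_change(0.04))  # 4 % per decade in the air -> about 0.4 % in DIC
```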

People need to get a grip, the ocean is not acidifying.

Consider: the mixed layer in coastal regions, where most of the sea life concerned exists, has a massive alkalinity and buffering capacity due to the (essentially) unlimited amount of limestone etc. in those waters.

Until all of that physical material is dissolved, acidification cannot have any substantial effect.

This is a primary reason people who keep saltwater aquariums go to such lengths to include aragonite sand and limestone materials in their tanks (to stabilize the pH over time). In small aquariums, this can be a problem. In coastal waters? No way. The base material will immediately begin to dissolve until the acid is neutralized (this happens very quickly; try pouring vinegar on limestone. Really, you can do this at home: see how much acid you can afford to neutralize with a handful of limestone…).
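For anyone trying the vinegar experiment at home, the reaction CaCO3 + 2 CH3COOH -> Ca(CH3COO)2 + H2O + CO2 sets an upper bound on how much vinegar a piece of limestone can neutralize. This minimal Python sketch assumes pure calcium carbonate and vinegar that is 5% acetic acid by mass (both are adjustable parameters). It covers only the stoichiometric capacity, not how fast the reaction runs.

```python
CACO3_G_PER_MOL = 100.09   # molar mass of calcium carbonate
ACETIC_G_PER_MOL = 60.05   # molar mass of acetic acid, CH3COOH

def vinegar_neutralized_g(limestone_g, acetic_fraction=0.05, purity=1.0):
    """Grams of vinegar whose acetic acid a given mass of limestone can
    neutralize, from CaCO3 + 2 CH3COOH -> Ca(CH3COO)2 + H2O + CO2.
    acetic_fraction (acid mass fraction of the vinegar) and purity
    (CaCO3 fraction of the rock) are assumed values for illustration."""
    mol_caco3 = limestone_g * purity / CACO3_G_PER_MOL
    acid_g = 2 * mol_caco3 * ACETIC_G_PER_MOL  # two moles of acid per mole of CaCO3
    return acid_g / acetic_fraction

print(round(vinegar_neutralized_g(100)))  # roughly 2.4 kg of 5 % vinegar
```

By this estimate, 100 g of pure limestone can neutralize the acid in roughly 2.4 kg of 5% vinegar.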

So no, unless you postulate the entire Gulf Coast dissolving away, not going to happen.

I certainly am not against experimentation, but I have to wonder. Are there not clear geologic and paleontological records that would be a good guide for focusing current experiments? IOW, has it already been demonstrated that during times in the past when there were higher than present CO2 levels in the atmosphere, certain types of sea life, like those in the phylum Mollusca, declined? And that when there were lower levels of CO2 in the atmosphere than at present, those same types of sea life thrived?

Or is there some reason(s) why the known geologic and paleontological record should not be a guide for focusing current experiments?

Reply to Ferdinand Engelbeen ==> Your comments on DIC are spot on, of course. You would be well served by reading the EPOCA booklet “Guide to best practices for ocean acidification research and data reporting”, particularly the chapter on oceanic carbonate chemistry. The OA research field made a major push in 2010 to correct the worst mistakes being made in this regard in ongoing research, and has been pretty successful: C&H found that the percentage of OA studies using inappropriate chemistry fell from 21% to just 3% after publication of the guide.

A reasonable question. The truth, however, is that geology and paleontology are, for the most part, not very exact sciences. With the exception of the very short (in geological terms) ice core records, neither the age nor the CO2 concentration of most sedimentary deposits is known with much precision. And fossil deposits, even when they can be dated precisely and when CO2 can be estimated, rarely preserve the entire flora and fauna of a location in the proportions in which they were found in life. For the most part, it would be very hard (likely impossible) to come to meaningful conclusions about CO2 and marine invertebrate populations based on the fossil record, and when reasonable error limits were worked out, it would probably turn out that any answer was so inexact as to be unhelpful.

In plain English, applying shaky CO2 estimates to vaguely dated rocks that contain fossils that are probably selectively preserved is unlikely to yield useful results.

Not that people won’t try.

Absolutely, Ferdinand. It could only happen by mass transfer, for both heat and CO2. If Trenberth wished to genuinely look for his missing heat, he should also be looking for the missing carbon dioxide in the same place.

Reply to the thread started by High Treason ==> What this field of research, popularly called Ocean Acidification, is trying to find out is what will happen in the future as atmospheric CO2 levels rise over the next 100 years or so.

The basic science is pretty clear — increased atmospheric CO2 by itself — disregarding all other confounding mechanisms of oceanic carbonate chemistry — will change the pH of ocean water downwards — which scientifically means less alkaline and, by definition, more acidic. This fact is called “ocean acidification” not because it makes the ocean “acidic” but because it is the adding of carbonic acid to the ocean water mix through the dissolution of CO2.

The reason for the research is, of course, that one cannot, in the real world, disregard all other confounding mechanisms of oceanic carbonate chemistry. Researchers must take them into account and try to design experiments that will tell us what effects to expect from increasing atmospheric CO2. Getting the science of this right is what C&H 2015 and the work of the EU’s EPOCA are all about.

Knee-jerk reactions, conspiracy-theory slinging, intentionally misunderstanding the underlying theory, transmogrifying the claims of OA researchers into ridiculous mockery and other adolescent behavior do not add to the discussion.

This essay is about a CliSci-related research field and the attempts of insider scientists (C&H and the EPOCA project) to see that the science methodologies and experimental designs are up to the task of discovering data that will inform us and add to the sum total of human knowledge. These efforts are hugely positive and need to be instituted in other Climate Science fields of research.

Hi Kip Hansen,

Good for you to bring this forward and engage us. Anytime someone in science is trying to improve the process, make it reproducible, actually publish the method(s) and data- that’s a good thing. So no disagreement there. And you want to do science? God bless you.

But when you say:

“This fact is called “ocean acidification” not because it makes the ocean “acidic” but because it is the adding of carbonic acid to the ocean water mix through the dissolution of CO2. ”

that process is technically correct but the name is factually incorrect, and intended to mislead.

It is called ocean acidification to scare the uninformed. Many have watched while this particular theme has been played. That’s not a conspiracy theory- that’s a reality of the current science by news release. Skeptics did not coin that phrase, so please don’t accuse people of conspiracy theories when they call it BS.

I followed a PhD in Ocean Chemistry years ago who observed something applicable here. No aquarium (or Experimental Unit if you like that better) is anything like a model of the true ocean, but carbonate chemistry in a vessel is no mystery.

Yes, the problems of creating anything like a realistic model of the True Ocean are staggering (if you’ve never tried, you really have no idea). And if that’s how you choose to pursue your research dollars, that’s great. But if OA researchers want to be taken seriously, stop with the attempted scare-mongering.

Excellent point about the confounding effects, which combine to make the data uncertain. Anthony has detailed the measurement issues with surface temperature, there are similar and more profound issues with pH measurement. Even though we have had thermometers, of varying degrees of accuracy, for hundreds of years, the pH meter was not invented until 1934. And even though you can infer some information from wet chemistry data that came before, there are still accuracy, sampling and correlational issues with that, can you say proxy? And what about the influence of undersea volcanoes, they are putting some real sulfuric acid out there, along with saturated solutions of CO2 under pressure.

I did a calculation once to determine neutral pH under nuclear reactor (PWR) conditions. I found that at 650°F and 2250 psi, neutral pH is approximately 5, not 7, due to the self-ionization of water at those conditions. I surmise that some volcanic eruptions occur under similar conditions at depths greater than 4,000 feet.
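The calculation described here rests on one relation: neutral water has [H+] = [OH-], and since Kw = [H+][OH-], neutral pH = pKw/2. Here is a minimal Python sketch. The value of pKw at reactor (or deep-sea) temperature and pressure is not given in the comment and would have to come from reference data, so only the familiar 25 °C case is shown.

```python
def neutral_ph(pkw):
    """Neutral pH, where [H+] = [OH-]. Since Kw = [H+][OH-], that is pKw / 2.
    pkw must be looked up for the temperature and pressure of interest."""
    return pkw / 2.0

print(neutral_ph(14.0))  # 7.0 at 25 °C, where pKw is about 14.0
```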

Reply to Gary Parker ==> When you use language like this: “and intended to mislead” you assume something not in evidence, and not knowable to you — the intentions of another person.

It is quite proper and allowable to say “tends to mislead” — which it does, as most lay persons are almost entirely ignorant of even the basic principles and definitions of chemistry.

We can say it is unfortunately named, and tends to lend itself to propagandistic use; that is certainly true.

Kip, I have posted more below but after reading the EPOCA booklet, I said:

“That booklet is the most flagrantly political piece of propaganda dressed as science I have ever read. Whatever else it is, it isn’t science.”

And it does not back up or prove OA! It remains an unsupported assertion that is not dealt with in the whole document!! The acidification due to increasing levels of atmospheric CO2 is not verified and the document demonstrates that it is not currently verifiable. It is assumptions and solipsism and models all the way down!

Reply to Scott Wilmot Bennet ==> I hope you read past the obligatory introduction and executive summary, which by design and intent in these days of politicized science are flagrantly alarmist, and got down to the basic science and the chapters laying out how to correct the research that was being done to get results that will inform us more clearly about what is happening now and what might happen in the future.

It is worth the effort.

You are correct in all you say, especially about the UN fraud underlying it all. One reason these antisocial fraud plots get so much traction is the adaptation of words, not well-understood or well-defined, by the fraudsters, who massage the meaning to add to their urban myths. For example, physical chemists needed a means of describing the ratio of two common ions in aqueous systems, hydrogen (hydronium) and hydroxide. They did this in ingenious mathematical fashion with the pH scale, which puts numbers on the terms acid (acidic) and base (basic). The physical chemistry of pH, chemical equilibria and buffered solutions is well understood by university chemistry graduates.

Pseudo-scientists, ever seeking useful scientific terms to corrupt to their own pursuits, derived acidification and postulated the great myth of ocean acidification in support of the grand myth of global warming. The choice of two pseudo-scientific terms that can be neither defined nor measured directly, global temperature and acidification, has been pivotal to the whole scam.

“A doubling of atmospheric CO2 does not cause a doubling in ocean CO2. How convenient to conveniently forget the oceans contain 48x more CO2 than the atmosphere.”

So who said that it did? Quotes? Links?

What’s the change in pH between distilled water and carbonated distilled water? The oceans can’t get any worse than that, can they?

Via the Wikipedia reference, “Modern Food Microbiology” by James M. Jay, Martin J. Loessner, David A. Golden says, on page 210, “The pH of non-carbonated water should be around neutrality, whereas that of carbonated water is typically between 3 and 4.0 — ideally at or below pH 3.5.”

I believe there is a big difference in the pH between distilled water (approx. pH 7) and carbonated water (approx. pH 5.5).

The pH of distilled water is around 5.5. The CO2 in the atmosphere creates carbonic acid, and with zero buffering capacity (the huge variable in real-world conditions) the pH of pure water is definitely not 7.0. Now, in a controlled environment without CO2, sure, but that only happens in experimental models…

Say what? The pH of pure distilled water is 7.00. At 25°C, pH + pOH = 14. Since [H+] = [OH-] in pure distilled water, pH is exactly 7.0.

Also, what do you think the pH would be if you took 1 liter of distilled water, added 0.1 mole of finely powdered CaCO3 to it, and then bubbled in 0.1 mole of CO2?

Ans: 8.2, which is essentially the pH of the ocean… one does not need to wonder why. The ocean is exposed to gigatons of limestone, aka CaCO3, so it should be no surprise; that is precisely why the oceans will never be acidic at equilibrium.
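The reaction CaCO3 + CO2 + H2O → Ca2+ + 2 HCO3- turns equal amounts of CaCO3 and CO2 into a bicarbonate solution, and for a solution of an amphiprotic ion like HCO3- the usual textbook approximation is pH ≈ (pKa1 + pKa2) / 2, largely independent of concentration. The Python sketch below assumes freshwater carbonic acid constants at 25°C (pKa1 ≈ 6.35, pKa2 ≈ 10.33) and ignores activity corrections and whether all of the CaCO3 would actually dissolve; under those assumptions it lands near 8.3, in the neighborhood of the 8.2 quoted.

```python
def amphiprotic_pH(pKa1: float, pKa2: float) -> float:
    """Approximate pH of a bicarbonate solution: pH ~ (pKa1 + pKa2) / 2."""
    return (pKa1 + pKa2) / 2.0


# Assumed freshwater dissociation constants of carbonic acid at 25 °C.
PKA1 = 6.35
PKA2 = 10.33

print(round(amphiprotic_pH(PKA1, PKA2), 2))
```

Seawater constants differ from freshwater ones, so this is only an order-of-magnitude check on the answer above, not a model of ocean pH.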

I think you actually mean the pH of fresh, pure rain water is around 5.5, not pure distilled water.

Were you surprised to find that the distilled water did not have a neutral pH?

Pure distilled water would have tested neutral, but pure distilled water is not easily obtained because carbon dioxide in the air around us mixes, or dissolves, in the water, making it somewhat acidic. The pH of distilled water is between 5.6 and 7. To neutralize distilled water, add about 1/8 teaspoon baking soda, or a drop of ammonia, stir well, and check the pH of the water with a pH indicator. If the water is still acidic, repeat the process until pH 7 is reached. If you accidentally add too much baking soda or ammonia, either start over or add a drop or two of vinegar, stir, and recheck the pH.

http://www.epa.gov/acidrain/education/experiment1.html

He is saying distilled water equilibrated with atmospheric CO2 is around pH 5.5 and that is true. If you keep the CO2 out, then it will be 7.
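The ~5.6 figure for water in equilibrium with air can be checked from Henry's law and the first dissociation of carbonic acid. The Python sketch below assumes textbook 25°C values (KH ≈ 0.034 mol/(L·atm), Ka1 ≈ 4.45e-7, Kw = 1e-14), about 400 ppm CO2 at 1 atm, and neglects the second dissociation to carbonate, which is negligible at this pH.

```python
import math

# Assumed constants at 25 °C (illustrative textbook values):
KH = 0.034      # Henry's law constant for CO2, mol/(L*atm)
KA1 = 4.45e-7   # first dissociation constant of carbonic acid
KW = 1.0e-14    # ion product of water


def equilibrium_pH(p_co2_atm: float) -> float:
    """pH of pure water equilibrated with a given CO2 partial pressure.

    Charge balance [H+] = [HCO3-] + [OH-], neglecting carbonate, with
    [HCO3-] = KA1 * KH * pCO2 / [H+] and [OH-] = KW / [H+], gives
    [H+]^2 = KA1 * KH * pCO2 + KW.
    """
    h = math.sqrt(KA1 * KH * p_co2_atm + KW)
    return -math.log10(h)


print(round(equilibrium_pH(0.0), 2))     # CO2 kept out: neutral
print(round(equilibrium_pH(400e-6), 2))  # about 400 ppm CO2 at 1 atm
```

With no CO2 the expression reduces to [H+] = sqrt(Kw), i.e. pH 7; with atmospheric CO2 it gives roughly 5.6, which is the usual figure for unpolluted rain.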

People – distilled water is being mistaken for pure H2O which is neutral. It is difficult to achieve pure H2O – it must be de-ionized by mixed resin beds to approach purity. GK

alcheson wrote: “The ocean is exposed to Gigatons of limestone, aka CaCO3 so it should be no surprise, and precisely why the oceans will never be acidic at equilibrium.”

That is true but misses an important point. The CO2 enters the ocean from the air and the CaCO3 is on the bottom of the ocean. Since mixing is slow, it takes thousands of years to reach equilibrium. Because of that, a gradual increase in atmospheric CO2 (as has happened at times in the Earth’s past) is not a big problem. But a sudden increase in CO2 might be a big problem, at least until equilibrium is re-established.

So although ocean acidification, like all the other climate change stuff, is blown way out of proportion, there is a real issue there that deserves careful attention.

A few decades ago my copy of the American Water Works Association Standard Methods for the Examination of Water and Wastewater had on the inside cover instructions for preparing “reagent grade” water, “CO2 free” water and maybe a couple of others.

They all started with distilled water and distilled it again using equipment made of borosilicate glass.

That doesn’t happen in nature or in your kitchen.

Water is called “the universal solvent” because it can dissolve a little bit of just about everything it comes in contact with. Some of those things can affect pH.

“Pure water” in quantity pretty much only exists in a lab, and even then it won’t stay “pure” for long.

Reply to Mike M. (period) ==> You have this exactly right.

There might be a problem with rapidly increasing atmospheric CO2 as it affects oceanic carbonate chemistry at different depth levels and in different oceanic environmental niches — this is what all the research is about.

That said, there is no doubt that the “scare effect” has been used to attract research funds, a fact that biases both research topics and published results, something that is true for way too many fields of scientific endeavor in today’s world.

I make this stuff for a living. We add chlorine to precipitate Fe, Mn, and to a degree Al. Then we dechlorinate with granular carbon (1:1 molar). We run it through a highly efficient two-pass reverse osmosis unit, and here is the interesting part…

While the dissolved ions are concentrated in the feedwater reject, the dissolved gases pass through into the RO permeate (the good water). So while the RO feedwater pH was 6.4-6.6 to keep aluminum in solution, the permeate pH will drop to 5.5-5.8. This drop is caused by dissolved carbon dioxide passing through the RO while the bicarbonate is rejected. Prior to feeding this acidic permeate into the second-pass RO elements, we add a little sodium hydroxide (OH-) to form carbonate, which then becomes rejectable across the RO membranes.

After all this, the water is fed into a bed of mixed, highly charged resins. About half are positive and the other half negative. The effluent is devoid of any and all charged particles and has a perfect pH of 7.0. This doesn’t last long, because as soon as it is exposed to the open atmosphere we can actually watch the conductance and pH move rapidly until they come to equilibrium with the atmosphere.
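The permeate pH drop described in this comment follows from the Henderson-Hasselbalch relation for the CO2/bicarbonate pair: if the membrane rejects most of the bicarbonate but lets dissolved CO2 through, the ratio [HCO3-]/[CO2] falls and so does pH. The Python sketch below is illustrative only: pKa1 ≈ 6.35 is an assumed freshwater value at 25°C, and the feed concentrations and the 90 % bicarbonate rejection are hypothetical numbers chosen to land near the feed and permeate pH ranges quoted.

```python
import math

PKA1 = 6.35  # assumed pKa1 of carbonic acid at 25 °C (freshwater)


def carbonate_pH(bicarbonate: float, co2: float, pka1: float = PKA1) -> float:
    """Henderson-Hasselbalch: pH = pKa1 + log10([HCO3-] / [CO2(aq)])."""
    return pka1 + math.log10(bicarbonate / co2)


# Hypothetical feed water, mol/L, chosen so the pH is about 6.5.
feed_hco3, feed_co2 = 1.4e-3, 1.0e-3
print(round(carbonate_pH(feed_hco3, feed_co2), 2))

# Hypothetical permeate: 90 % of the HCO3- rejected, CO2 passes unchanged.
perm_hco3, perm_co2 = feed_hco3 * 0.10, feed_co2
print(round(carbonate_pH(perm_hco3, perm_co2), 2))
```

A tenfold drop in the bicarbonate-to-CO2 ratio lowers pH by one unit, which is why dosing hydroxide to convert the CO2 back into rejectable ions brings the pH up again.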

pH of tomatoes is 5, pH of orange juice is 4, and pH of lemon juice and vinegar is 3. MOL.

The pH of soda is listed as three, but as someone who has suffered from acid reflux and at one time had a gastric ulcer and another time a duodenal hematoma, I can tell you that pH is not the whole story.

Eating tomatoes, or lemon juice, or vinegar will cause a severe reaction in people who have any of these conditions. Drinking soda will not. The simple reason is that all acids are not created equal, and the effects of buffering change the situation even more.

When multiple acids and bases and buffering systems exist, the situation becomes extremely complicated.

In any case, we have had many conversations about the inappropriately named ocean acidification meme before.

As with much of what passes for climate related science these days, researchers seem obligated to ignore Earth history, and also either feign ignorance, or actually be ignorant of, whole bodies of knowledge that bear on the issues being studied.

One example which makes the point quite clearly is the idea that an increase in atmospheric CO2 will somehow cause the shells of mollusks, corals, and other organisms that depend on carbonate-based shells to dissolve, or leave those organisms unable to create the shells they need.

Anyone making this assertion, and there have been many, would have to be almost comically ignorant of the many situations where vast colonies of such creatures happily exist in acidic freshwater lakes, rivers and ponds, or the legions of such creatures that are living quite happily right next to hydrothermal vents and other undersea environments, that are highly acidic, not just less basic.

Here was one recent such article. The article and the ensuing discussion were quite interesting, illuminating, and not a little bit hysterically funny at times.

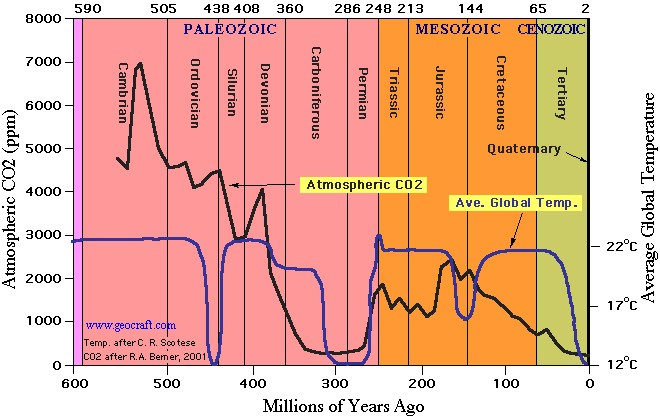

CO2 has been far higher, by an order of magnitude, than at present for most of Earth’s history, including the periods of time when many, if not most or all, of these organisms first evolved and spread throughout the world ocean:

As for the title, I believe the only one who was the slightest bit astonished was the “scientist” performing the original investigation:

http://wattsupwiththat.com/2015/06/10/astonishing-finding-coral-reef-thriving-amid-ocean-acidification/

Menicholas wrote : “CO2 has been far higher, by an order of magnitude, than at present for most of Earth’s history, including the periods of time when many, if not most or all, of these organisms first evolved and spread throughout the world ocean”

True, but irrelevant, as indicated by Menicholas: “When multiple acids and bases and buffering systems exist, the situation becomes extremely complicated.”

See my comment, Sept. 5, 8:24 a.m.:

http://wattsupwiththat.com/2015/09/04/ocean-acidification-trying-to-get-the-science-right/#comment-2021660

Not the way to look at it. CO2 dissolved in the ocean forms bicarbonate, which is utilized by biologic organisms to lay up shell. Living organisms are hydrogen ion pumps which make their own acidic microenvironment.

After the “anthropogenic climate change” lie, even stupid people won’t buy into this lie, surely?

Said by a guy who really, really thought that the emergence of the internet would help uninformed people know the actual science-based truth ……

It’s hard to admit to being so abjectly wrong.

(That’s not a flippant comment. I really did think that)

Pull funding. What a waste of space. That this junk is even considered science is disgraceful. The sheer audacity to even suggest ocean acidification from tiny increases in the trace amount of CO2 in the atmosphere beggars belief.

Reply to wickedwenchfan ==> You would benefit from a review of the basic chemistry — it can be found in simple form in “Part 1: Seawater carbonate chemistry — Basic chemistry of carbon dioxide in seawater” in the EPOCA publication with a good general explanation starting on page 20.

If by chance the oceans were becoming more acidic or less alkaline, doesn’t that just mean we’d eat more of certain fish and less of others?

Any impact from altered pH would be undetectable within the “noise” from the impacts of over-fishing, habitat degradation, pollution, etc.

Supporting links for your statement?

Are scientists actually taking seawater and measuring not only the pH of the water, but also using mass spectrometry to investigate the nature of the acids in question? Something tells me dissolved CO2 isn’t the villain here, particularly since atmospheric CO2 levels were about 7k ppm back in the Cambrian period, which people will remember as the period when everything lived in the ocean!

How can learned people, calling themselves scientists, in full seriousness believe that they can solve a chaotic problem such as ocean acidification in a laboratory over a few weeks or months? You would need a mighty big laboratory tank, throw in masses of basaltic rocks, gravel and clay, some layers of photosynthetic life floating and thriving on top. Also a self-sustaining system of three-dimensional water currents, thank you, with ever changing pockets of varying salinity, temperatures, rates of evaporation, etc.

And, not to forget, a huge source of radiating energy over it all appearing every 24 hours just to keep things going in the tank and winds blowing above it.

No wonder you will be unable to reproduce any laboratory results.

To my mind you will need at least one hundred years of just plain recordings of observations to, perhaps, stand a reasonable chance of presenting a plausible theory for ocean acidification caused by increased atmospheric CO2.

Reply to AndyE ==> Teasing out the effects of one perturbed element in a complicated system is what most science is about…that’s why we do it — we want to know “What would happen if…?” or “I wonder if this is caused by that?”

Strictly speaking, Ocean Acidification itself is not a chaotic problem (in the Chaos Theory sense). It is simply a very very complicated and complex problem.

It should be possible to discover the proximate, generalized effects of increased atmospheric CO2 on the ocean carbonate system under certain conditions, and how those effects might affect various ocean organisms. As in medicine, the laboratory findings might not translate to real-world effects; that is another scientific step altogether. There are ongoing attempts at in situ experimentation (see the EPOCA booklet, page 223).

Kip Hansen – I agree that changes in ocean acidification cannot, strictly speaking, end in “chaos” – I used that word in a general way.

I am not against laboratory experiments investigating the effect of pH changes on organisms or whatever – but I think it ludicrous that results from such research should be translated into meaning that similar changes in atmospheric CO2 will cause similar results to oceanic CO2 levels.

The oceans have been alkaline for over half a billion years, and levels of CO2 have at times been many times higher than today. In fact, it has hardly ever been any lower than today (it can’t be, because life would not then be possible). How can anyone then deduce from a laboratory experiment that a brief period of slightly increasing atmospheric CO2 (just 200 years so far) justifies hugely expensive emergency measures to decrease CO2 emissions?

It is the alarmism I object to – with each scientific paper written on the subject it should be spelled out that the results in no way can be compared to what increasing CO2 will do to our oceans.

Reply to AndyE ==> And I agree that you are quite right to object to the “alarmism”, in this and many other fields. Some of it is intentional, some of it political, some of it self-aggrandizement, some of it ill-intended.

One must fight alarmism, in my mind, with solid sensible science and responsible science journalism.

I try, in my vanishingly small way, to do that.

After looking into this subject a little, it is clear that many of the earlier experiments used crude methods of adjusting pH, simply dumping acid in the water to instantly and dramatically achieve an effect predicted to occur over 100 years, without adjusting the other complex factors of carbon chemistry and buffering that affect marine calcification. Well, duh! Is it not just possible that the biota might adapt and acclimatise a little bit across 100+ generations, just as their ancestors did over the hundreds of millions of years they’ve been around?

In any case, it appears that, when you actually look at a real situation and do not immediately leap to the lazy, well-funded QED explanation “It’s the CO2 and fossil fuels what done it!”, other possibilities emerge:

http://www.climatecentral.org/news/saturation-state-ocean-acidification-18644

In this case, the cause of larval oyster die-off has been shown to be ocean carbonate saturation state, with no observable effect of pH in an 8-year study. Upwelling events bring deep water up to the surface, which is much less saturated in Ca²⁺ and CO₃²⁻ ions than shallower water.

There’s always an explanation if you don’t immediately take the easy way out and jump on the bandwagon. It’s nice to see these Australian researchers calling their colleagues to account for their sub-optimal science.
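The saturation state mentioned above is conventionally defined as Ω = [Ca²⁺][CO₃²⁻] / K′sp, with Ω < 1 favoring dissolution of the mineral. The Python sketch below only illustrates that definition: the calcium concentration (~0.0103 mol/kg) is a typical open-ocean value, the aragonite K′sp of 6.5e-7 mol²/kg² is an assumed approximate value for 25°C and salinity 35, and the three carbonate ion levels are hypothetical.

```python
def saturation_state(ca: float, co3: float, ksp: float) -> float:
    """Omega = [Ca2+][CO3 2-] / Ksp'. Omega < 1 favors dissolution."""
    return ca * co3 / ksp


CA_SEAWATER = 0.0103    # mol/kg, typical open-ocean calcium (assumed)
KSP_ARAGONITE = 6.5e-7  # mol^2/kg^2, approximate Ksp' at 25 °C, S = 35 (assumed)

# Hypothetical carbonate ion levels, mol/kg: surface-like down to upwelled-like.
for co3 in (200e-6, 100e-6, 60e-6):
    omega = saturation_state(CA_SEAWATER, co3, KSP_ARAGONITE)
    print(f"[CO3 2-] = {co3 * 1e6:.0f} umol/kg -> Omega_aragonite = {omega:.2f}")
```

Because calcium is nearly constant in seawater, Ω tracks the carbonate ion concentration, which is why water with little carbonate can be corrosive to larval shells even without a large change in pH.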

Reply to Bob Highland ==> Thank you for reminding us of the Whiskey Creek oyster farm story.

There is a tendency in all alarmist science (CliSci, nutrition, alternative health issues, etc) to point blame at some basic piece of science (such as OA’s “increased atmospheric CO2 will naturally lower oceanic pH” — which, by itself, is true) and then blaming all sorts of problems seen in the real world on that bit of science — most often not even measuring or reporting whether the posited causative agent actually exists or took place in the case being discussed — did the pH of the sea water there and then change, when and to what, and what was the cause of the change?

The ClimateCentral article implies the saturation state is lowered due to CO2 increase. How did the deep ocean upwelling become more carbon-dioxide enriched than the surface water?

First of all, the oceans were NOT acidic even when the atmosphere was several thousand PPM CO2. The oceans were teeming with life. Number two… ALL “ocean simulating experiments” should be done with finely powdered CaCO3 and limestone rocks/pebbles in the tank. As long as there is CaCO3 available for dissolution, the pH will NEVER be acidic at equilibrium if the only acid added to the system is CO2. The BIGGEST mistake made in these so-called ocean experiments is the lack of a CaCO3 buffering system in the water. The reason they generally do NOT use CaCO3 in the studies is that it PREVENTS the water from becoming acidic. In addition, with CaCO3 readily available, as CO2 is added, the CO₃²⁻ concentration actually increases, contrary to common misconception.

Reply to Alcheson ==> You might read the suggestions in this regard in the EPOCA booklet, starting with the basic chemistry on page 20.

The concern is not for the equilibrium state (at some hugely distant time in the future, assuming atmospheric CO2 quits changing) but in the interim period with expected “rapidly” increasing levels over the next century or so.

And again, no one is expecting the oceans to become acidic — that is a red herring/strawman position foisted off on the world by the anti-AGW side of the Climate Wars to counter the scare-mongering position regarding OA put out by the pro-AGW forces — political propaganda all the way down.

Kip, you are NOT correct; it is the CAGW scientists and their supporters who are spreading the acidic oceans scare. For example, from http://www.pmel.noaa.gov/co2/story/What+is+Ocean+Acidification%

“Since the beginning of the Industrial Revolution, the pH of surface ocean waters has fallen by 0.1 pH units. Since the pH scale, like the Richter scale, is logarithmic, this change represents approximately a 30 percent increase in acidity. Future predictions indicate that the oceans will continue to absorb carbon dioxide and become even more acidic. Estimates of future carbon dioxide levels, based on business as usual emission scenarios, indicate that by the end of this century the surface waters of the ocean could be nearly 150 percent more acidic, resulting in a pH that the oceans haven’t experienced for more than 20 million years.”

Clearly the official position is to lead the casual observer to believe the oceans will become a corrosive acid bath. Nowhere do they mention that the oceans may become just a little bit less basic.
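The percentages in the NOAA passage are statements about hydrogen ion concentration: since pH = -log10[H+], a pH drop of d multiplies [H+] by 10^d. The Python sketch below just does that arithmetic; it says nothing about whether seawater would ever drop below pH 7. A 0.1 unit drop works out to roughly a 26 % increase in [H+] (rounded to "approximately 30 percent" in the quote), and "150 percent more acidic" corresponds to a drop of about 0.4 pH units.

```python
import math


def percent_increase_in_h(delta_pH: float) -> float:
    """Percent increase in [H+] for a pH drop of delta_pH (pH = -log10[H+])."""
    return (10 ** delta_pH - 1.0) * 100.0


def pH_drop_for_percent(percent: float) -> float:
    """Inverse: pH drop that yields a given percent increase in [H+]."""
    return math.log10(1.0 + percent / 100.0)


print(f"{percent_increase_in_h(0.1):.1f} %")
print(f"{pH_drop_for_percent(150):.2f} pH units")
```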

Reply to Alcheson ==> You are agreeing with me….as I said above “to counter the scare-mongering position regarding OA put out by the pro-AGW forces” (your version is “CAGW scientists and their supporters that are spreading the acidic oceans scare.”). Or, we can say that I agree with you.

In the end, both the OA scare stories and the craziness of the distorted claims are “political propaganda all the way down.”

Mr. Hansen,

In the past I have found it necessary to quote the definition of the word “acidification” from numerous chemistry texts, and also from a collection of regular dictionaries, in order to counter the sophistry of the CAGW alarmists, who claim that acidification is a common term for a situation in which a basic solution is becoming more neutral.

It is not, and it never was.

It is only used in that context in the alarmist memes of impending disaster.

BTW, I shall repost if you wish, or you may wish to prove me wrong by finding such a definition in a chemistry text, or a scientific, medical or regular dictionary, from any time period you may find convenient, unless it is one which makes direct reference to “ocean acidification”.

Reply to Menicholas ==> No amount of being right will change the actuality of how the word is being used in this modern context.

For better or for worse, we appear to be stuck with the name for now.

I’m puzzled. CO2 is meant to cause a lot of warming. Warming means a warmer sea. Warmer sea holds less CO2 than a colder sea (or is the sea not CO2 saturated yet?).

So the sea should exhale CO2 as it warms, and so become more basic, or alkaline? They want it both ways. Again.

I always asked that question, but I believe there is a 200-year lag, so if the oceans are taking in all this carbon dioxide, expect it to be released in 2200.

https://www.newscientist.com/article/dn20413-warmer-oceans-release-co2-faster-than-thought/

I’ve always wondered if the models of atmospheric carbon dioxide take account of the amount going into the oceans, or being a magical compound, it is in both places at the same time? The oceans seem to be the ultimate carbon capture system!

David Schofield wrote: “I’ve always wondered if the models of atmospheric carbon dioxide take account of the amount going into the oceans, ”

Wonder no more. The models do indeed take that into account. If you want a source for my claim, see IPCC AR5, Chapter 6.

If there were no human emissions, warmer temperatures would mean less dissolved CO2 and derivatives (DIC: CO2 + bicarbonates + carbonates) in the oceans and thus a higher pH; the difference would end up in the atmosphere due to the higher temperatures.

Per Henry’s law, that gives about 16 ppmv/°C (4-17 ppmv/°C in the literature) extra in the atmosphere. Currently we are at 110 ppmv extra CO2 in the atmosphere, far beyond the (dynamic) equilibrium for CO2 between oceans and atmosphere for the current temperature (around 290 ppmv). Thus CO2 is driven from the atmosphere into the oceans. That is measured over time at several ocean stations as an increase in DIC and a slightly lower pH:

http://www.tos.org/oceanography/archive/27-1_bates.pdf
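The direction of the temperature effect discussed above comes from Henry's law: at a fixed CO2 partial pressure, the solubility of CO2 falls as water warms. The Python sketch below uses a van't Hoff temperature dependence with assumed freshwater values (KH ≈ 0.034 mol/(L·atm) at 25°C and d(ln KH)/d(1/T) ≈ 2400 K). In seawater the carbonate system changes the sensitivity, so this sketch alone does not reproduce the ppmv/°C figures quoted in this exchange; it only shows the sign and rough size of the solubility change.

```python
import math

# Assumed values for CO2 in fresh water (from standard compilations):
KH_298 = 0.034        # mol/(L*atm) at 298.15 K
VANT_HOFF_C = 2400.0  # K, d(ln KH)/d(1/T)


def henry_constant(temp_k: float) -> float:
    """Henry's law solubility constant with van't Hoff temperature dependence."""
    return KH_298 * math.exp(VANT_HOFF_C * (1.0 / temp_k - 1.0 / 298.15))


def dissolved_co2(p_co2_atm: float, temp_k: float) -> float:
    """Dissolved CO2 (mol/L) in equilibrium with a given partial pressure."""
    return henry_constant(temp_k) * p_co2_atm


t0, t1 = 298.15, 299.15  # 25 °C and 26 °C
change = dissolved_co2(400e-6, t1) / dissolved_co2(400e-6, t0) - 1.0
print(f"Solubility change per +1 °C: {change * 100:.1f} %")
```

Roughly a 2.7 % drop in solubility per degree in this freshwater approximation: warming alone pushes CO2 out of the water, so a simultaneous rise in dissolved CO2 requires the atmospheric partial pressure to be rising faster than temperature can explain.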

Ferdinand Engelbeen

September 5, 2015 at 4:56 am

hello Ferdinand.

“Currently we are at 110 ppmv extra CO2 in the atmosphere, far beyond the (dynamic) equilibrium for CO2 between oceans and atmosphere for the current temperature (around 290 ppmv).”

—————————

Are you claiming that the “110 ppmv extra CO2 in the atmosphere” is human?

cheers

Whiten,

Are you claiming that the “110 ppmv extra CO2 in the atmosphere” is human?

Human-caused, indeed, but not of human origin anymore, as most of the original emissions have been replaced by CO2 from other reservoirs, mainly the deep oceans. About one third of the CO2 from the original human use of fossil fuels still remains in the atmosphere, as can be seen in the decreasing δ13C level in the atmosphere and in the mixed layer of the oceans:

http://www.ferdinand-engelbeen.be/klimaat/klim_img/sponges.jpg

Ferdinand Engelbeen

September 5, 2015 at 4:56 am

Hello again Ferdinand.

Thank you for the reply.

Hopefully you will not mind my arguing further with you.

You actually say:

“Per Henry’s law, that gives about 16 ppmv/°C (4-17 ppmv/°C in the literature) extra in the atmosphere. Currently we are at 110 ppmv extra CO2 in the atmosphere, far beyond the (dynamic) equilibrium for CO2 between oceans and atmosphere for the current temperature (around 290 ppmv).”

—————-

Your argument above makes that “extra” 110 ppmv in the atmosphere look as if it could not possibly be natural.

First, from my point of view and my understanding, it does not really matter what method or physical law is used to get to a conclusion or a number supporting your argument.

What matters is that the number and the conclusion are actually supported by reality.

Just saying “Per Henry’s law” does not make the “16 ppmv/°C (4-17 ppmv/°C in the literature)” true or acceptable as the truth by default.

It must also stand up to being tested against the data, in this case the climate data we have.

As far as I can tell, it fails very badly on that account.

Looking carefully, and taking into account the paleo climate data we have, anything below ~55 ppmv/°C fails very badly, to the point that it makes the last interglacial look neither natural nor anthropogenic, but something more like alien.

You see, the latest and most problematic issue with climatology, or climate science, is the same.

The scientific projections of climate have an assessment of ~25 ppmv/°C: a 4-7°C temperature swing associated with a ~120 ppmv CO2 swing (a climatic projection of ~20-25 ppmv/°C).

Even ~25 ppmv/°C, which is higher than yours, fails very badly, making that scientific assessment look very dubious and wrong.

You see, with ~20-25 ppmv/°C we end up with a climatic period, the last 6K years, the full period of human civilization, being a period of ACC-AGW, from Noah to Napoleon: a climate ~2°C warmer than it was naturally supposed to be, with CO2 ~60 ppmv higher than it was supposed to be.

If you have any doubt about this just read the AR5.

You will find there a lot of “work” and struggle to show and convince the rest that there has always been an anthropogenic effect on climate since the very beginning of our civilization.

That is actually the main force in the AR5.

Also look at how many studies and research efforts have lately focused on the “reconstruction” of the last 10K years of climate, because that period contradicts the “scientific” assessments and projections we have about climate. And the results of such attempts are too many “hockey sticks”.

Actually the extra 110ppmv/C, where in reality the last ~40ppmv (of the 110ppmv) are above the 360-380ppmv mark, is not that strange or unnatural.

60-70 ppmv/°C is considered wrong and unacceptable only by AGW and the AGWers… CLIMATE seems not to have a problem at all with it.

Even GCM projections, if considered for what these projections really are, will give you 60-70 ppmv/°C: a ~2.8°C increase for a 200 ppm increase.

So from where I stand, your attempt to portray the 110 ppmv increase as clearly not natural is faulty and wrong.

The climate data does not support the ~16 ppmv/°C… it actually contradicts it. That is not hard to see or realise if you want to; otherwise you have to follow in the steps of the other AGW “scientists” and support their ridiculous and useless attempt at “killing” the paleo climate and its data through the claim of a better “reconstruction” of it.

Actually, in climate terms, ~16 ppmv/°C is wholly unnatural…

cheers

Whiten,

CO2 levels over the last 1,000 years:

http://www.ferdinand-engelbeen.be/klimaat/klim_img/antarctic_cores_001kyr.jpg

The best-resolution ice cores have a resolution of ~20 years over the full time span and a repeatability of measurements for one part of the core of 1.2 ppmv (1 sigma). Differences between ice cores are at most 5 ppmv, despite huge differences in temperature and accumulation rates.

The same small variability over the past 10,000 years after the start of Holocene for CO2, δ13C, CH4 and N2O levels, until about 1850. Not by coincidence the start of the increasing use of fossil fuels. CH4 levels somewhat earlier, maybe the result of rice cultivation for an increasing population.

Temperature shows some 8 ppmv/°C over the past 800,000 years in the Vostok and Dome C ice cores, but that is for local temperatures, that makes about 16 ppmv/°C for the whole SH (and extended for the globe, as NH proxies show similar changes). The Law Dome ice core shows a small (~6 ppmv) change from the MWP to the LIA which may have been ~0.8°C colder globally. That is ~8 ppmv/°C.

The warming from the LIA into the current warm period may be at most 1°C, which thus gives about 8 ppmv extra, far from the 110 ppmv above steady state:

http://www.ferdinand-engelbeen.be/klimaat/klim_img/law_dome_1000yr.jpg

Those are observations, where temperature changes did drive CO2 changes up to ~1850. The 110 ppmv extra since then is not caused by temperature but by human emissions. Whether the extra CO2 will have an influence on temperature, and how much, is an entirely different discussion…
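The argument above is simple ratio arithmetic, and a short Python sketch makes it explicit. Every number in it is taken from this comment (about 6 ppmv for about 0.8°C between the MWP and the LIA, at most ~1°C of warming since the LIA, 8 and 16 ppmv/°C, 110 ppmv above steady state); the helper names are hypothetical, and the sketch only restates the arithmetic rather than settling the dispute over which ppmv/°C value is right.

```python
def sensitivity(delta_ppmv: float, delta_temp_c: float) -> float:
    """Ratio of a CO2 change (ppmv) to a temperature change (°C)."""
    return delta_ppmv / delta_temp_c


def implied_ppmv(ppmv_per_c: float, delta_temp_c: float) -> float:
    """CO2 change implied by a given sensitivity and temperature change."""
    return ppmv_per_c * delta_temp_c


# Law Dome MWP-to-LIA figures quoted above: ~6 ppmv for ~0.8 °C.
print(round(sensitivity(6.0, 0.8), 1))

# At most ~1 °C of warming since the LIA, at 8 or 16 ppmv/°C:
for s in (8.0, 16.0):
    print(s, implied_ppmv(s, 1.0), "ppmv vs ~110 ppmv above steady state")
```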

Ferdinand Engelbeen

September 6, 2015 at 2:55 am

thank you again Ferdinand for your reply.

You still miss the point.

Reality in the modern era shows a clear 110 ppmv/°C, far too high compared with your ~16 ppmv/°C, and still high compared with the 60-70 ppmv/°C claimed as correct in natural terms.

While there can be reasoning to bring that down to ~80 ppmv/°C, by arguing that any increase in CO2 concentration above the 360-380 ppmv mark is not consistent with warming or an increase in temps, and therefore bringing it very close to the 60-70 ppmv/°C, that cannot be claimed under any circumstances for 16 ppmv/°C, or even for ~20-25 ppmv/°C.

YOU SAY also:

“The same small variability over the past 10,000 years after the start of Holocene for CO2, δ13C, CH4 and N2O levels, until about 1850. Not by coincidence the start of the increasing use of fossil fuels. CH4 levels somewhat earlier, maybe the result of rice cultivation for an increasing population.”

————————

That is what I tried to explain to you.

Through the scientific estimate of the climate projections, which is ~20-25 ppmv/°C, we end up with the last ~7K years of a cooling trend being neither natural nor anthropogenic.

There is only a drop of ~20 ppm in CO2 concentration and a drop of ~1.2°C through that whole 7K cooling trend, when actually, according to the paleoclimate data, there should have been an 80 ppm drop and a ~3-3.5°C drop in temps, always according to the orthodox scientific climate projections mentioned in my other reply to you.

There seems to be a 60ppm block in an expected 80ppm drop and a ~2C block in an expected ~3-3.5C drop, ACCORDING TO THE agw “science” climate projections of a 20-25ppmv/C.

The numbers do not add up, Ferdinand, for anything around ~20-25 ppmv/°C, let alone for anything lower than that, in climate terms that is.

That cannot be explained (no matter how hard it has been tried in the AR5) as either a natural or an anthropogenic climate period; that is why I call it “alien”. That is what the whole AGW “rationale” ends up being, a cuckooland “alien” rationale.

Besides, as I said, the GCM projections fare much better and still show ~60-70 ppmv/°C, which, when compared to paleoclimate data, puts the last 7K years of the cooling trend inside natural variation, with only a 0.4-0.6°C divergence or anomaly in a 1.8°C drop, and a ~20-40 ppm divergence or anomaly in a 120-140 ppm drop.

According to the GCM projections, the last 7K years of climate have been a cooling trend 0.4-0.6°C warmer than supposed to be, with CO2 ~20-40 ppm higher than supposed to be, since the actual drop is ~100 ppm instead of the expected 120-140 ppm, and the actual drop in temps is ~1.2°C when it was expected to have been ~1.8°C.

This is much easier to accept as real and definitely inside the natural variation than your estimate or that of the AGW “science”.

Hopefully you understand this.

Your AGW numbers must be tested and validated before taken for granted.

Neither reality, nor paleoclimate data, nor GCMs validate such numbers.

Such numbers do not add up as to make any sense or accepted rationale, really, unless in the AGW cuckoo land.

cheers

Whiten,

I don’t know where you have your “paleo” data from, but Henry’s law is confirmed by over three million seawater measurements since Henry established the laws of solubility of any gas in any liquid in 1803.

Thus, regardless of what AGW makes of it or what climate models show, 16 ppmv/°C is what ocean chemistry and real paleo data in ice cores show over the past 800,000 years.

Anything beyond that is caused neither by the oceans nor by vegetation, as vegetation is an increasing sink for CO2: thanks to increased temperatures and CO2 levels, the earth is greening…

As humans have released about twice the increase found in the atmosphere, that is a very likely cause, and it fits all known observations…

Ferdinand Engelbeen

September 6, 2015 at 10:58 am

“I don’t know where you have your “paleo” data from, but Henry’s law is confirmed by over three million seawater measurements since Henry established the laws of solubility of any gas in any liquid in 1803.”

——-

Are you claiming that paleo data does not support my claim that an interglacial is a period of ~15K years that starts at the end of one glacial period and ends at the beginning of the next, with not much difference in either ppm or temps between the beginning and the end of a glacial period?

As much as temps and ppm increase during the first ~7.5K years of the interglacial, roughly that much will temps and ppm come down again; otherwise there would be no glacial starting point to speak of… that is what the paleoclimate data basically show.

According to our scientific projections and the assessment of 25 ppmv/C, 15K years after the end of the last glacial period we were still 2C short of the next glacial period.

When Napoleon was fighting all over Europe, ~15K years after the end of the last glacial period, the climate happened to be ~2C away from the BEGINNING OF THE NEXT GLACIAL PERIOD.

And if it actually took ~7K years for a ~1.2C drop, how many thousands of years do you think it would take to drop a further 2C and reasonably reach the beginning of the next glacial?
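Taking the figures in this comment at face value, the question can be answered with a simple linear extrapolation. The sketch below assumes, purely for illustration, that the past average cooling rate (~1.2C over ~7K years) just continues unchanged; the function name is hypothetical and this is not a climate model.

```python
def years_to_further_drop(past_drop_c, past_years, further_drop_c):
    """Years needed for a further temperature drop, assuming (hypothetically)
    that the past average cooling rate stays constant."""
    rate_c_per_year = past_drop_c / past_years  # average cooling rate, C per year
    return further_drop_c / rate_c_per_year


# Figures quoted in the comment: ~1.2C over ~7K years, and a further 2C to go.
years = years_to_further_drop(past_drop_c=1.2, past_years=7_000, further_drop_c=2.0)
print(f"~{years:,.0f} years")  # ~11,667 years
```

Under that constant-rate assumption, the further 2C would take roughly 11.7K years.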

That is how badly 25 ppmv/C fares against paleo data, and it is higher than your estimate.

According to our orthodox estimation and projection, during the first part of this current interglacial the temps went up by ~ 3-3.5C and the ppm of CO2 went up by ~80ppm…..and in the second part of the interglacial the ppm have gone down by only ~20ppm and the temps only ~1.2C.

A huge discrepancy… one not seen in any earlier interglacial period in the paleo data, at least not to this extent. A discrepancy of this extent cannot in any way be considered natural.

Hope this helps you at least get my point, whether or not it is acceptable to you.

Nevertheless, your claim of 16 ppmv/C, no matter how you arrived at it, still fails badly against the GCMs and against the reality of 110 ppmv/C in our modern-era records. It is too far from both of these other validations.

cheers

Whiten,

You have it upside down: the paleo data over the past 800,000 years, that is, 8 glacial/interglacial cycles, show 8 ppmv/°C for local temperatures in central Antarctica, which is about 16 ppmv/°C on a Southern Hemisphere or roughly global scale. That is all.

There is no reason to attribute the current extra 110 ppmv to temperature, as neither the oceans nor the biosphere can produce that.

during the first part of this current interglacial the temps went up by ~ 3-3.5C and the ppm of CO2 went up by ~80ppm

What paleo record shows such a ratio? Certainly not Vostok over the past 420,000 years:

http://www.ferdinand-engelbeen.be/klimaat/klim_img/Vostok_trends.gif

Ferdinand Engelbeen

September 6, 2015 at 3:14 pm

Hello again, Ferdinand.

From where I stand, your last comment to me looks more like a straw man argument.

You see, you cherry-picked one line of my argument, gave it a different meaning, and tried to make it hard for me to answer your follow-up question.

When you selected this from my argument:

“during the first part of this current interglacial the temps went up by ~ 3-3.5C and the ppm of CO2 went up by ~80ppm”

You missed, intentionally or not, the preceding part of the argument:

“According to our orthodox estimation and projection….”

You also left out the part that follows it, which tells me you were not actually trying to argue and show where I might have been wrong, in the normal manner of argument or debate.

As for your follow-up question:

“What paleo record shows such a ratio? ”

The simple answer I was trying to give you is:

“No paleo record actually does, but nevertheless that is the ‘scientific’ climatology estimate and projection for climate and climate change, not actually mine, you see!”

Let me remind you again of these estimates… a climate swing is estimated and projected (“scientifically”) to be a ~4-7C swing, associated with a 120 ppm swing in CO2 concentration.

That is the official orthodox position in climatology, which also clearly shows you that the 16 ppmv/C of the Vostok ice core data is not considered accurate or correct for global climate projections.

The Vostok data also show a 12C swing in temps… and that is not considered accurate or correct either.

Again, the estimate is 4-7C, roughly half of the 12C given by the Vostok data… which moves the 16 ppmv/C of Vostok to 20-25 ppmv/C globally, always according to climate “science”, not me.
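At its simplest, the conversion being argued about is the CO2 swing divided by the temperature swing. A minimal sketch, using only numbers quoted in this exchange (a 120 ppm swing against 12C at Vostok and 4-7C globally), shows what that naive ratio gives. It is a hypothetical helper, not the regression method behind the 8 and 16 ppmv/°C figures Ferdinand cites.

```python
def ppmv_per_degree(co2_swing_ppmv, temp_swing_c):
    """Naive swing ratio: CO2 change divided by temperature change.
    This is a simple quotient, not a regression slope."""
    return co2_swing_ppmv / temp_swing_c


CO2_SWING = 120.0  # ppmv, glacial-to-interglacial swing quoted in the thread

for label, dt in [("Vostok local, 12 C", 12.0), ("global, 7 C", 7.0), ("global, 4 C", 4.0)]:
    print(f"{label}: {ppmv_per_degree(CO2_SWING, dt):.1f} ppmv/C")
```

This prints 10.0, 17.1 and 30.0 ppmv/C; working backwards, the 20-25 ppmv/C range corresponds to a temperature swing of roughly 4.8-6C for a 120 ppm swing.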

One thing about the ice-core data that really cannot be argued against is the interglacial periods and the basic meaning and shape of the interglacials.

No matter what numbers the climate projection or estimate uses, 3/4 of the swing (whatever the numbers), in either ppm or temps, belongs to the interglacial period, in either the upswing or the downswing of climate, more or less; otherwise nothing makes sense in climate…

3/4 of a 120 ppm and 4-7C swing comes to roughly 90 ppm and 3-4.5C for the interglacial share of the swing… and I was generous enough to use 80 ppm and 3-3.5C.
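The three-quarters share can be checked with a few lines of arithmetic. The 0.75 factor is the commenter's own assumption and the helper name is hypothetical. Taken literally, 3/4 of a 7C swing is 5.25C; the 4.5C upper figure in the comment corresponds to 3/4 of a 6C swing.

```python
INTERGLACIAL_SHARE = 0.75  # the commenter's "3/4 of the swing" assumption


def interglacial_share(swing):
    """Portion of a full glacial/interglacial swing attributed to the interglacial."""
    return INTERGLACIAL_SHARE * swing


co2 = interglacial_share(120.0)  # ppm
t_low, t_high = interglacial_share(4.0), interglacial_share(7.0)  # C
print(f"CO2: {co2:.0f} ppm, temps: {t_low:.2f}-{t_high:.2f} C")
# CO2: 90 ppm, temps: 3.00-5.25 C
```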

It basically means that the swing during an interglacial should be inside that range; for temps, inside 3-4.5C. THE WARMING AND COOLING SHOULD NOT BE CONSIDERABLY OUTSIDE THIS RANGE.

According to the official scientific assessment and projections, our current interglacial cooling trend is about 2C outside the lower bound of 3C, a huge discrepancy… as I said previously, nowhere near explainable as a natural anomaly… well outside any possible natural variation.

If you read this and want to show me how wrong I am, please try by showing me that my understanding of what the paleoclimate data show about the interglacials is wrong… that is the only long-term paleo data feature I am relying on in my argument; the rest is basically “scientific” estimation and projection.

Or try to show me that my understanding of the cooling trend of the last interglacial is wrong, and that there has actually been a larger drop in temps in that trend… considerably more than ~1.2C, enough to make my argument wrong… or that the orthodox “scientific” estimation and projection does not consist of a climate swing of the values I have given.

Thank you for your reply…

cheers

Precisely, but since when has alarmism been based on REAL science and actual logic? It is all about a political aim: the “science” is made to fit the rhetoric. Take note, the Nazis had declared the science of Jewish inferiority to be settled. We must all smell a rat here in how political forces have steamrolled over elementary scientific principles.

Nothing so sullies the integrity of humanity as the subversion of science for the servitude of politics.

Why wasn’t acidification a problem a million years ago, when atmospheric CO2 was 2X – 3X higher than it is now? Hmmmm?

Reply to Kurt Hanke ==> To be honest, we don’t know if there was, or was not, a “problem” a million years ago. There is a research effort going on along those lines, but I doubt that it will give us much of a clue.

We don’t really know yet what a problem would look like at a distance of a million years. We do know something about the oceans a million years ago: they were not dead, lifeless bodies of water, but full of life of different kinds, some more or less the same as now, some different.

The questions being asked are along the lines of: “If we continue to undergo a fairly rapid increase in atmospheric CO2, what will the differences be?”

How about the magnitude of masses involved?

Ocean: 1 400 000 teratonnes

Atmosphere: 500 teratonnes

Atmospheric CO2: 0.3 teratonnes

Est. anthropogenic atmospheric CO2: 0.01 teratonnes

If this 1:140 000 000 ratio runs the Earth, why not harness it for engineering? Shouldn’t it be rather handy in battery acid production, central heating systems, etc.? Or are the deñalists blocking progress again with their scientific principles and other annoying stuff?
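The 1:140 000 000 figure is the ocean mass divided by the estimated anthropogenic atmospheric CO2, both as listed in the comment. A short sketch reproduces that arithmetic; the masses are the commenter's figures taken at face value, not independently verified values.

```python
# Masses as listed in the comment, in teratonnes (1 Tt = 1e12 tonnes).
# These are the commenter's figures, used here only to reproduce the ratio.
masses_tt = {
    "ocean": 1_400_000.0,
    "atmosphere": 500.0,
    "atmospheric CO2": 0.3,
    "anthropogenic CO2 (est.)": 0.01,
}

ratio = masses_tt["ocean"] / masses_tt["anthropogenic CO2 (est.)"]
print(f"1:{ratio:,.0f}")  # 1:140,000,000
```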

Two problems here:

Amounts tell nothing about effect, although in this case the water thermostat certainly has more effect than the CO2 stove… For climate, only the CO2 that is in the atmosphere has some effect, but it remains to be seen whether that effect is measurable against the effects of water in all its forms…

Anthro CO2 caused (nearly) the full rise, thus 0.1 teratonnes of the current atmosphere, even though the original “human” CO2 molecules are long gone, exchanged with CO2 from the other reservoirs, mainly the oceans.

Right. Homeopathic dilutions are about the same. Nothing can be done about that either. Points to homeopathy for trying to make others feel better.

LOL!

Note the key finding: 95% of Ocean Acidification studies that got published were inadequately designed.

It is probable that the papers that didn’t get published (except in university PhD files) were also inadequately designed.

Therefore this is a field with almost no technical expertise at all. It is worthless without supervision from competent experimental scientists.

And seemingly there are very few competent experimental scientists in the field.

Is this a general problem for new fields?

If so it would apply to most environmental research as most environmental research is looking at new issues.

Reply to MCourtney ==> There are really two questions here:

1) Is this a field with almost no technical expertise at all, and is it worthless? I would answer a fairly certain “No.” As with climate science, there were no specific university departments teaching OA research, and no degrees issued in Oceanic Chemistry. Like many other CliSci-related research fields, it is handicapped by relying on IPCC-approved viewpoints and “projections” about the future. The fact that the EPOCA project took place, trying to turn the field around to get the science right, and the fact that C&H 2015 has been published and its reception in the field has been “mostly good”, are very encouraging.

2) Is this a problem for new fields? Again, I would answer a fairly certain “No.” It is, to me, a problem for all of Science as a human endeavor. This is what the second section of the essay, “And elsewhere in Science?”, is about. Some fields are seriously working on the problems … some, like Climate Science, are ignoring the problem for what I believe to be reasons of science politics: they tend to collude instead of correcting, inspired by their perceived need to “save the world”.

You need to understand that geochemists have been studying ocean chemistry, including carbonate reactions, for many decades prior to the recent buzz regarding OA. As with many subjects, a well-developed discipline is pushed to the side and ignored as a strident group of newcomers emerges, flush with climate alarmism money and a sense that they are breaking new ground.

Steve R:

I was compiling my reply when yours was being posted.

I agree with you that

There is a difference between “a new field” and newcomers flooding into an established field.

Richard

Kip Hansen:

MCourtney wrote and asked

And you have made two responses.

Your first response ignores the point that “95% of Ocean Acidification studies that got published were inadequately designed” and says

Well, despite your “No”, your response is a clear listing of faults with the field resulting from it having so little “expertise” that it relies on “IPCC-approved viewpoints and “projections” about the future” instead of real research. Indeed, the field is so aware that it is lacking in “expertise” that you say it is “encouraging” that C&H 2015 has been welcomed as a method to correct errors in the field.

Frankly, I cannot equate your “No” with your explanation of it which is a clear explanation of ‘Yes’.

And your second response says

People with significant expertise (e.g. as competent experimental scientists) are in demand in established research fields. So, whether or not “new fields” are distorted by “perceived need to “save the world” “, it is clearly a problem for “new fields” that they have difficulty obtaining adequate expertise. And oversight needs to be greatest for “new fields” where there is little past experience to provide guidance.

Hence, it is hard to see how any new field is likely to have adequate expertise initially.

The work of C&H 2015 has been welcomed BECAUSE workers recognise their field is new but is starting to obtain an ability to identify its lack of expertise.

Richard

Reply to Richard ==> My answers are clearly my opinions on the two questions asked.

I appreciate your input, but you offer no information that might change my answers.

The second question deals with whether the problems experienced in OA research are “new field” problems, which I do not believe they are: the greater problems are those currently affecting most areas of “hot item” scientific research.

Ocean Acidification is a label that is designed and used to dissemble. At best it could be described as “a term of art”, I prefer to call this ruse “a term of artifice”.