Guest Post by Bob Tisdale

The topic of discussion is NOAA’s new sea surface temperature dataset, ERSST.v4. Based on a breakpoint analysis recently promoted by RealClimate, NOAA appears to have reduced the early 20th Century warming rate to agree with the climate models used by the IPCC.

PRELIMINARY NOTES

NOAA introduced its new and improved sea surface temperature reconstruction ERSST.v4 with the papers (both are paywalled):

- Huang et al. (2014) Extended Reconstructed Sea Surface Temperature version 4 (ERSST.v4), Part I. Upgrades and Intercomparisons, and

- Liu et al. (2014) Extended Reconstructed Sea Surface Temperature version 4 (ERSST.v4): Part II. Parametric and Structural Uncertainty Estimations.

We provided an initial look at the new NOAA ERSST.v4 data, primarily during the satellite era, in the post Quick Look at the DATA for the New NOAA Sea Surface Temperature Dataset. An error was discovered in the November 2014 update of the ERSST.v4 sea surface temperature data supplied by NOAA…a teething problem with the update of a new dataset at NOAA. Subsequent to the correction, KNMI added the new ERSST.v4 dataset to the Monthly observations webpage at their Climate Explorer. After NOAA corrected the error, the new ERSST.v4 data fell back in line with their predecessor ERSST.v3b during the satellite era (November 1981 to present), which means they have a slightly higher warming rate than the NOAA Reynolds OI.v2 satellite-enhanced data.

Regarding the breakpoint years that divide the data into warming versus hiatus/cooling periods in this post, I’ve initially used 1912, 1940 and 1970 from the RealClimate post Recent global warming trends: significant or paused or what? Yes, I realize those breakpoints are controversial. See the BishopHill post Significance doing the rounds. And I also understand (1) those breakpoints are based on GISS land-ocean temperature index (LOTI) data, which includes the ERSST.v3b data, (2) that the breakpoint years are not based on the individual sea surface temperature datasets, and (3) that the breakpoints might change if GISS used the ERSST.v4 data. My use of these breakpoints does not mean I agree with them. I’m simply using them to avoid claims that I’ve cherry-picked them, and to show the impacts of breakpoint years on model-data comparisons.

The headline and initial discussions in this post are based on those 1912, 1940 and 1970 breakpoints. If we were to revise those changepoints to 1914, 1945 and 1975, also determined through breakpoint analysis of GISS LOTI data, then the warming rate of the new ERSST.v4 data during the early warming period of 1914 to 1945 falls back in line with the other sea surface temperature datasets, well above the trend simulated by climate models. We’ll illustrate this also.

I’ve excluded the polar oceans from the data presented in this post. That is, the data are for the latitudes of 60S-60N. This is commonly done in scientific studies because the data suppliers (NOAA and UKMO) account for sea ice differently. See Figure 8 from Huang et al. (2014) for an example.
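For readers who want to see what a 60S-60N average involves, here is a minimal sketch. It assumes zonal-mean anomalies on a regular latitude grid and weights each band by the cosine of its latitude, which is a common approximation for grid-cell area. The function name, the grid and the numbers are hypothetical, and this is not necessarily how the KNMI Climate Explorer computes its averages.

```python
import math

def area_weighted_mean(lats, zonal_values, lat_min=-60.0, lat_max=60.0):
    """Average zonal-mean values between lat_min and lat_max, weighted by cos(latitude)."""
    num = 0.0
    den = 0.0
    for lat, val in zip(lats, zonal_values):
        if val is None or not (lat_min <= lat <= lat_max):
            continue  # skip missing bands and anything poleward of the limits
        w = math.cos(math.radians(lat))
        num += w * val
        den += w
    if den == 0.0:
        raise ValueError("no valid data in the latitude band")
    return num / den

# Hypothetical zonal-mean anomalies on a coarse 10-degree grid (made-up numbers)
lats = [-85 + 10 * i for i in range(18)]   # band centers at -85, -75, ..., 85
vals = [0.2] * 18
vals[0] = vals[-1] = 5.0                   # large polar values, to show they are excluded
band_mean = area_weighted_mean(lats, vals)
print(round(band_mean, 3))
```

Because the bands centered poleward of 60 degrees are dropped, the large values assigned to the polar bands have no effect on the result.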

Unless otherwise noted, anomalies are referenced to the WMO-preferred base years of 1981-2010.

The data and climate model outputs are available through the KNMI Climate Explorer.

For many of the illustrations, as opposed to adding the climate model outputs to the graphs, I’ve simply listed the simulated warming (or cooling) rate of the global sea surface temperatures, excluding the polar oceans, as represented by the multi-model ensemble-member mean of the climate models stored in the CMIP5 archive (historic and RCP8.5 forcings). The worst-case RCP8.5 forcings only impact the last few years and have little impact on the results for the recent warming period. For simulations of sea surface temperatures, there are also more ensemble members (model runs) using the RCP8.5 scenario (73 members) than there are with the RCP6.0 scenario (43 members). Additionally, see the post On the Use of the Multi-Model Mean.
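To illustrate what an ensemble-member mean implies, the sketch below contrasts two ways of averaging model runs. It assumes that "ensemble-member mean" means every run gets equal weight, so a model with many runs counts for more than a model with one. The model names and values are made up, and the function names are hypothetical.

```python
def ensemble_member_mean(runs_by_model):
    """Average all runs with equal weight, regardless of which model produced them."""
    all_runs = [run for runs in runs_by_model.values() for run in runs]
    n_steps = len(all_runs[0])
    return [sum(run[t] for run in all_runs) / len(all_runs) for t in range(n_steps)]

def one_model_one_vote_mean(runs_by_model):
    """Average each model's runs first, then average those model means."""
    model_means = []
    for runs in runs_by_model.values():
        n_steps = len(runs[0])
        model_means.append([sum(r[t] for r in runs) / len(runs) for t in range(n_steps)])
    n_models = len(model_means)
    return [sum(m[t] for m in model_means) / n_models for t in range(len(model_means[0]))]

# Made-up example: model_A supplied three runs, model_B only one
runs = {
    "model_A": [[0.0, 0.1], [0.2, 0.3], [0.4, 0.5]],
    "model_B": [[1.0, 1.0]],
}
member_mean = ensemble_member_mean(runs)
model_mean = one_model_one_vote_mean(runs)
print(member_mean, model_mean)
```

With three runs from one model and one from the other, the member mean sits closer to the model with more runs. That is the trade-off to keep in mind when comparing a 73-member average with a 43-member one.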

THE EARLY 20th CENTURY WARMING PERIOD (1912-1940)

For years, we’ve been illustrating and discussing how the climate models used by the IPCC do not properly simulate the warming of the surface of the global oceans during the early 20th Century warming period, from the 1910s to the 1940s. They underestimate it by a wide margin. As a reference, Figure 1 illustrates the modeled and observed global sea surface temperature anomalies (60S-60N), without the polar oceans, for the early warming period of 1912 through 1940. Again, those are changepoint years promoted in the recent post at RealClimate. The data are represented by HADSST3 data from the UKMO and by the ERSST.v3b data currently in use by NOAA and GISS. The models are represented by the average of the outputs of the simulations of sea surface temperatures (based on multi-model ensemble-member mean) of the climate models stored in the CMIP5 archive. Those models were used by the IPCC for their 5th Assessment Report.

Figure 1

The observed warming based on the HADSST3 and ERSST.v3b data, from 1912 to 1940, was more than twice the rate simulated by climate models. Because the mean of the climate model outputs basically represents the forced component of the climate models, logic dictates that the additional observed warming was caused by naturally occurring, coupled ocean-atmosphere processes.

With the new ERSST.v4 data, the warming rate has been lowered almost to the modeled rate for the period of 1912 to 1940. See Figure 2 for a comparison of the ERSST.v4 data with the ERSST.v3b and HADSST3 data.

Figure 2

The ERSST.v4 data warming rate for the period of 1912 to 1940 is +0.056 deg C/decade, which is only slightly higher than the modeled rate of +0.048 deg C/decade.
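The warming rates in this post are linear trends in deg C/decade. A minimal sketch of that calculation follows, assuming an ordinary least-squares fit to monthly anomalies between two breakpoint years. The series is synthetic, built to rise at exactly 0.0056 deg C/year, and the function name is hypothetical.

```python
def trend_per_decade(times, values, start, end):
    """Least-squares slope of values against time (in years) for start <= t < end, times 10."""
    pts = [(t, v) for t, v in zip(times, values) if start <= t < end]
    n = len(pts)
    if n < 2:
        raise ValueError("need at least two points")
    t_mean = sum(t for t, _ in pts) / n
    v_mean = sum(v for _, v in pts) / n
    sxy = sum((t - t_mean) * (v - v_mean) for t, v in pts)
    sxx = sum((t - t_mean) ** 2 for t, _ in pts)
    return 10.0 * sxy / sxx

# Synthetic monthly series, January 1912 through December 1940 (not real data)
times = [1912 + m / 12.0 for m in range(29 * 12)]
values = [0.0056 * (t - 1912) - 0.4 for t in times]
rate = trend_per_decade(times, values, 1912, 1941)
print(round(rate, 3))  # 0.056
```

Dividing one rate by another gives the ratios quoted in the text. For example, 0.056 divided by 0.048 is about 1.17, so "only slightly higher" here means roughly 17% faster.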

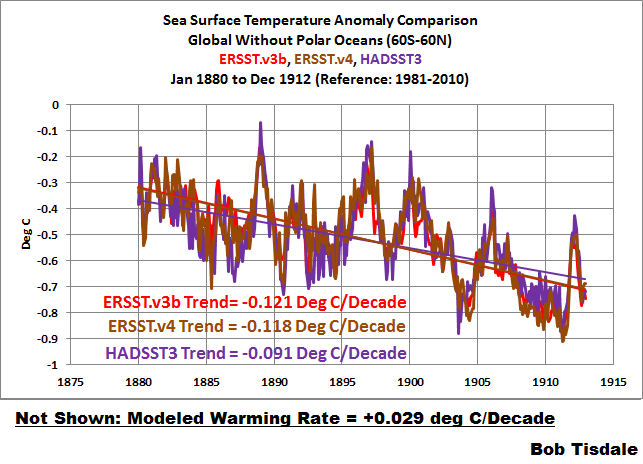

EARLY COOLING PERIOD (1880-1912)

We’ll define the early cooling period as extending from 1880 to 1912. As we can see in Figure 3, the cooling rate of the new ERSST.v4 data is comparable to the ERSST.v3b data currently used by NOAA…both of which are slightly faster than the cooling rate shown by the HADSST3 data. Of course, the models show a slight warming during this period.

Figure 3

The models don’t simulate the warming from 1912 to 1940 shown by the ERSST.v3b and HADSST3 data because they don’t simulate the cooling from 1880 to 1912. But that doesn’t help to explain the slower warming rate of the ERSST.v4 data during the early-20th Century warming period. We’ll return to that discussion in a little while.

MID-20th CENTURY HIATUS (1940-1970)

The new ERSST.v4 data for the global oceans (without the polar oceans), for the period of 1940 to 1970, are compared to the ERSST.v3b and HADSST3 data in Figure 4. Where the ERSST.v3b data showed a very slight warming during this period, the ERSST.v4 data now show cooling…agreeing better with the models and the HADSST3 data.

Figure 4

LATE WARMING PERIOD (1970-2014)

As shown in Figure 5, the revisions to the ERSST.v4 data have increased the sea surface warming to a rate that is slightly higher than the ERSST.v3b data during the period of 1970 to present, but the ERSST.v4 data still have a slightly slower warming rate than the HADSST3 data. And, of course, the models show a slightly higher warming rate than the observations. We would expect the models to perform best during this period, because some of the models are tuned to it.

Figure 5

LONG-TERM WARMING RATES

Figures 6, 7 and 8 present the long-term global sea surface warming rates (without the polar oceans) of the new ERSST.v4 data and the ERSST.v3b and HADSST3 data, starting in 1854 (full term of the data), 1880 (full term of the GISS and NCDC combined land+ocean data) and 1900 (start of the 20th Century, give or take a year, depending on how you define it). The data in all have been smoothed with 12-month running-mean filters. The trends shown are based on the raw data.

Figure 6

# # #

Figure 7

# # #

Figure 8

As an afterthought, I’ve included a comparison starting in 1915 (the last 100 years). See Figure 8.5. The models, of course, underestimate the warming because they can’t simulate the cooling that took place from the 1880s to the 1910s.

Figure 8.5

YOU MAY BE WONDERING…

How did NOAA manage to decrease the warming rate of its ERSST.v4 data during the early warming period, while maintaining long-term trends that are comparable to the other datasets?

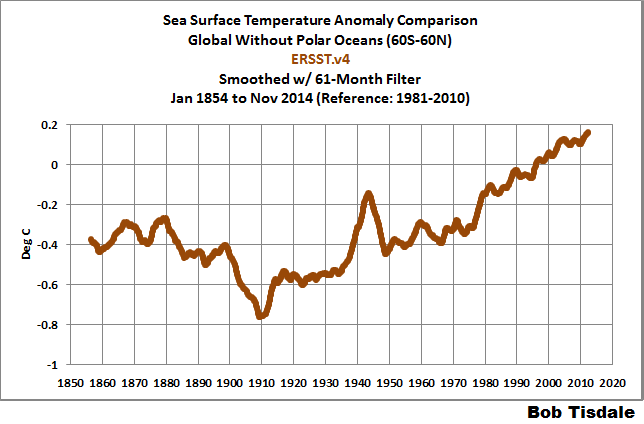

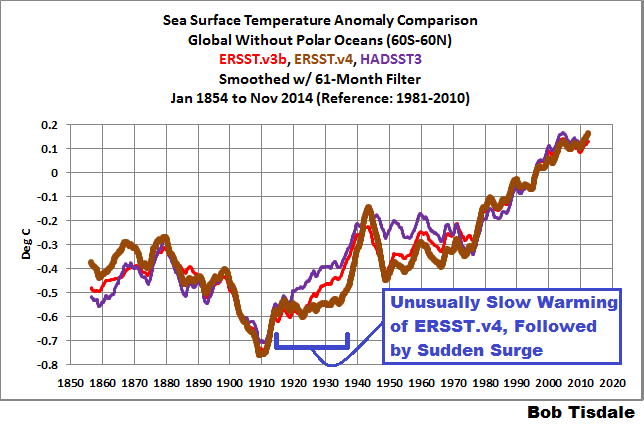

NOAA resurrected the spike in the late-1930s and early-1940s. See Figure 9. The data have been smoothed with a 61-month running-mean filter to minimize the ENSO- and volcano-related volatility. The 61-month filter also helps to emphasize that spike in sea surface temperatures.
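For anyone unfamiliar with running-mean filters, here is a minimal sketch of a centered running mean with an odd window such as 61 months. It assumes the ends of the series, where a full window is not available, are left empty. The function name and the test series are hypothetical.

```python
def running_mean(values, window):
    """Centered running mean for an odd window; points without a full window are None."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd number")
    half = window // 2
    out = [None] * len(values)
    for i in range(half, len(values) - half):
        out[i] = sum(values[i - half:i + half + 1]) / window
    return out

# Made-up month-to-month noise that flips between +0.1 and -0.1 deg C
noisy = [0.1 if i % 2 == 0 else -0.1 for i in range(121)]
smoothed = running_mean(noisy, 61)
print(smoothed[60])
```

The alternating +0.1/-0.1 noise is almost entirely averaged out by the 61-month window, which is why short-lived ENSO and volcanic wiggles fade while a multi-year feature like the spike remains visible. An even window, such as 12 months, needs a convention for where to center the result, which this sketch does not cover.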

Figure 9

Figure 10 presents the three sea surface temperature datasets, for the period of 1854 to present. Again, all data are smoothed with 61-month filters. For the new ERSST.v4 data, NOAA severely limited the warming from the early-1910s until the mid-1930s and then added an unusual sudden warming.

Figure 10

I’ve added the ICOADS source data to the graph in Figure 11. Compared to the ERSST.v3b and HADSST3 data, NOAA appears to have suppressed the pre-1940 “Folland correction” in its ERSST.v4 data. See Folland and Parker (1995) Correction of instrumental biases in historical sea surface temperature data.

Figure 11

Animation 1 compares the new ERSST.v4 and HADSST3 data, for the period of 1854 to present, with both datasets smoothed with 61-month filters. The UKMO Hadley Centre has worked for decades to eliminate the spike in the late-1930s and early-1940s. NOAA, on the other hand, has enhanced it. (Note: If the animations don’t show on your browser, please click the link. Recently, there has been a problem with gif animations on my WordPress posts.)

Animation 1

THE SPIKE LIKELY COMES FROM A NEWER MARINE AIR TEMPERATURE DATASET THAT IS USED AS A REFERENCE FOR THE NEW ERSST.v4 DATA

Sea surface temperature source data for the older ERSST.v3b data are adjusted before 1941 using an older version of nighttime marine air temperature data, like the one shown in Figure 12. This would help to explain the agreement between those two datasets during the period of 1912 to 1940.

Figure 12

One of the features of the new ERSST.v4 data is the use of a newer and improved UKMO nighttime marine air temperature dataset. (It’s not available through the KNMI Climate Explorer, so I can’t include it in a comparison graph.) The source sea surface temperatures for the new ERSST.v4 data are adjusted for the full term by the new nighttime marine air temperature data, not just prior to 1941 like ERSST.v3b.

Huang et al. (2014) includes:

Firstly, ERSST.v3b does not provide SST bias adjustment after 1941 whereas subsequent analyses (e.g. Thompson et al. 2008) have highlighted potential post-1941 data issues and some newer datasets have addressed these issues (Kennedy et al. 2011; Hirahara et al. 2014). The latest release of Hadley NMAT version 2 (HadNMAT2) from 1856 to 2010 (Kent et al. 2013) provided better quality controlled NMAT, which includes adjustments for increased ship deck height, removal of artifacts, and increased spatial coverage due to added records. These NMAT data are better suited to identifying SST biases in ERSST, and therefore the bias adjustments in ERSST version 4 (ERSST.v4) have been estimated throughout the period of record instead of exclusively to account for pre-1941 biases as in v3b.

I suspect the newer nighttime marine air temperature data and its use as a reference for the full term are the reasons for the delayed warming from the early 1910s to the late 1930s and the trailing sudden upsurge in the ERSST.v4 data in the late 1930s.

SUPPOSE WE USED DIFFERENT BREAKPOINT YEARS

It is pretty obvious that the late-1930s to mid-1940s spike in the ERSST.v4 sea surface temperature data would impact the trends of the early-20th Century warming period and the mid-20th Century hiatus period, depending on the years chosen for analysis.

For my book Climate Models Fail, I used the breakpoints of 1914, 1945 and 1975. The changepoints of 1914 and 1945 were determined through breakpoint analysis by Dr. Leif Svalgaard. See his April 20, 2013 at 2:20 pm and April 20, 2013 at 4:21 pm comments on a WattsUpWithThat post here. And for 1975, I referred to the breakpoint analysis performed by statistician Tamino (a.k.a. Grant Foster).

As you might have suspected, because of that spike in the new ERSST.v4 data, using the 1914, 1945 and 1975 breakpoint years does have a noticeable impact on some of the warming and cooling rates.

For the early cooling period, nothing’s going to help the climate models. The models cannot simulate the cooling if we define that period by the years 1880 to 1914. See Figure 13.

Figure 13

The revised breakpoints have a noticeable impact on the early warming period. See Figure 14. Using 1914 to 1945, the warming rate of the new ERSST.v4 data is slightly lower than that of its predecessor and in line with the HADSST3 data…and more than 3 times faster than modeled.

Figure 14

NOTE: With that spike, the warming rates of the new ERSST.v4 data during the early-20th Century warming period depend very much on the choice of end year…while the changes in trends are not as great with the ERSST.v3b and HADSST3 data. Refer again to Figures 2 and 14.
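The end-year sensitivity can be shown with a toy example. The sketch below assumes two made-up annual series starting in 1912: one that warms steadily, and one that warms slowly and then jumps by 0.2 deg C in 1938. Neither is real data, and the helper names are hypothetical.

```python
def slope_per_decade(years, values):
    """Ordinary least-squares slope, converted from per-year to per-decade."""
    n = len(years)
    y_mean = sum(years) / n
    v_mean = sum(values) / n
    sxy = sum((y - y_mean) * (v - v_mean) for y, v in zip(years, values))
    sxx = sum((y - y_mean) ** 2 for y in years)
    return 10.0 * sxy / sxx

years = list(range(1912, 1946))
steady = [0.01 * (y - 1912) for y in years]                                    # no spike
spiky = [0.004 * (y - 1912) + (0.2 if y >= 1938 else 0.0) for y in years]      # late jump

def trend_to(end_year, series):
    """Trend from 1912 through end_year (inclusive)."""
    pairs = [(y, v) for y, v in zip(years, series) if y <= end_year]
    return slope_per_decade([p[0] for p in pairs], [p[1] for p in pairs])

for end in (1937, 1940, 1945):
    print(end, round(trend_to(end, steady), 3), round(trend_to(end, spiky), 3))
```

The steady series gives the same trend whatever end year is chosen. The series with the late jump gives a larger trend the further the end year extends past the jump.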

With the breakpoint years of 1945 to 1975, Figure 15, the warming rate of the new ERSST.v4 data is considerably lower than the ERSST.v3b data, almost flat, during the mid-20th Century hiatus, but not negative (cooling) as shown by the HADSST3 data and the models.

Figure 15

Last but not least, with the 1975 breakpoint, Figure 16, the warming rates of the ERSST.v3b and ERSST.v4 data are basically the same for the recent warming period, which are less than the HADSST3 data, and in turn, less than the modeled rate.

Figure 16

MODEL-DATA DIFFERENCE

Animation 2 includes 2 graphs that show the differences between the modeled and observed sea surface temperature anomalies for the period of January 1880 to November 2014. The model outputs and data are referenced to the period of 1880 to 2013 so that the results are not skewed by the base years. The models are again represented by the multi-model ensemble-member mean of the models stored in the CMIP5 archive (historic/RCP8.5 forcings). And the differences are created by subtracting the data (HADSST3 and ERSST.v4) from the model outputs. The rises from a negative difference (data warmer than models) in 1880 to the substantial positive difference (data cooler than models) in 1910 are caused by the observed cooling that can’t be explained by the models. NOAA tries to recover from that dip in global sea surface temperatures with a sudden upsurge in the late 1930s to early 1940s, which creates the odd looking spike in the ERSST.v4 data.
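The difference calculation described above can be sketched as follows. The sketch assumes annual values for simplicity, re-references both series to a common 1880-2013 base period and then subtracts the data from the model output. The two series are made up, and the function names are hypothetical.

```python
def rebaseline(years, series, base_start, base_end):
    """Shift a series so its mean over base_start..base_end (inclusive) is zero."""
    base = [v for y, v in zip(years, series) if base_start <= y <= base_end]
    if not base:
        raise ValueError("no data inside the base period")
    offset = sum(base) / len(base)
    return [v - offset for v in series]

def model_minus_data(years, model, data, base_start=1880, base_end=2013):
    """Model minus data, after putting both on the same base period."""
    m = rebaseline(years, model, base_start, base_end)
    d = rebaseline(years, data, base_start, base_end)
    return [mv - dv for mv, dv in zip(m, d)]

years = list(range(1880, 2015))
# Made-up model output: steady warming
model = [0.005 * (y - 1880) for y in years]
# Made-up data: same warming on a different absolute scale, with a 0.2 deg C dip in 1900-1920
data = [0.005 * (y - 1880) + 3.0 - (0.2 if 1900 <= y <= 1920 else 0.0) for y in years]
diff = model_minus_data(years, model, data)
print(round(diff[years.index(1880)], 3), round(diff[years.index(1910)], 3))
```

Using one base period for both series removes the arbitrary offset between them, so what remains is the part of the data the model does not follow. A positive difference means the data are cooler than the model.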

Animation 2

That spike in the new ERSST.v4 data cannot be explained by the models and stands out like a sore thumb.

CLOSING

Quite remarkably, if the breakpoint years of 1912, 1940 and 1970 are used, the warming and cooling rates of the CMIP5 models and the new NOAA ERSST.v4 sea surface temperature dataset agree reasonably well for the early and late warming periods and for the mid-20th Century hiatus period. That’s three out of four periods. See Figures 2, 4 and 5. On the other hand, if the breakpoint years of 1914, 1945 and 1975 are used, the models can only simulate the warming after 1975 shown in the new ERSST.v4 data. See Figures 13 through 16. During the period of 1945 to 1975, the ERSST.v4 data are closer to the models, which show a slight cooling. Prior to that, with the ERSST.v4 data, the models fail miserably at simulating the cooling of global sea surfaces from 1880 to 1914 and the rebound from 1914 to 1945.

When NCDC starts to include the new ERSST.v4 data in its combined land+ocean surface temperature data, and if GISS uses it, we’ll have to keep an eye on the breakpoints used by climate scientists in their model-data comparisons. In an effort to make models appear as though they can simulate global surface temperatures, I suspect we’ll see breakpoints that flatter the models…and all sorts of arguments about those breakpoints.

Then again, they can argue all they want, but the models still can’t explain that curious spike in the ERSST.v4 data. See Animation 2.

Happy New Year!!!

Many Happy returns to you and yours, Bob, and thanks so much for all the hard work. I look forward to 2015 and reading more of yours, and the others, who post here on WUWT. I hope it’s a good year for you.

Best regards, Eamon.

Nice piece of work! There does appear to be a typo in the text following Fig. 2… v3b vs .v4 data ref. (Should the word tuned be in ” ” or handled with tongs ? )

I fully expected NBC News to declare 2014 “the hottest year on record” two days early because of the mass of frigid Arctic air due to cover the country on the last two days. I figured it would ruin their mantra. Sure enough, on Monday night, Lester Holt trumpeted the news and of course it was due to “climate change.” I am curious if the cold wave was enough to disqualify 2014 in their own records, prompting the early call.

Hello Mr. Tisdale, Happy New Year.

Why not use some simple moving average (100 day, 200 day etc..) method (commonly used for stocks) to reduce the influence of the 1914, 1945 and 1975 breakpoints?

Thanks Bob.

We may soon see Congressional inquiries into these “adjusted” data sets. Let the rationalizations (and the whining) begin.

Happy New Year, everyone.

Has NOAA Once Again Tried to Adjust Data to Match Climate Models?

I seem to remember Albert Einstein having something to say about that.

Thanks, and “Happy New Year” to you as well.

Interesting to read this:

…the models still can’t explain…

I personally doubt that they ever will, due to the chaotic nature of these matters and scientists more interested in their sinecures than in science.

So the takeaway from this is that once again they’re changing the data to fit the models so that they can then claim the models fit the data? And nobody is meant to notice or ask questions? It’s amazing the faith that the world puts in those who call themselves scientists!

That’s because doing many runs on super computers is very expensive. While data adjustments can be done on PCs

I wonder what happens to inconvenient runs of models.

If the underlying signal (if present) critically depends upon user selected start and end dates then a wise person should conclude the signal is too small to be of concern!

following on from the above comment, in this case, observing the last animated graphic, the temperature ‘spike’ is shown as -0.15 and -0.2 a difference of 0.05, a very small signal.

I think you’re just looking at the bottom of the spike. Look at the top as well. It starts at about +0.15, spikes down to -0.2 and rebounds to about +0.12.

A spike on a graph is called such because it looks like a big nail (spike). It is a rapid change of amplitude followed immediately by an equally rapid return to the previous level.

The models don’t replicate (or explain) the rapid change down and then back up again.

Scientists who win Nobel prizes see anomalous data, look for an explanation, and discover something great. Hacks decide the data must be bogus, adjust it, discover nothing, and are generally a waste of lab space.

Al Gore will be hard at work to discover the true cause of the spike. Nobel prize in hand, jetting around the world, telling us all to reduce the size of our carbon footprint to match his example. Super-glutton to the rescue.

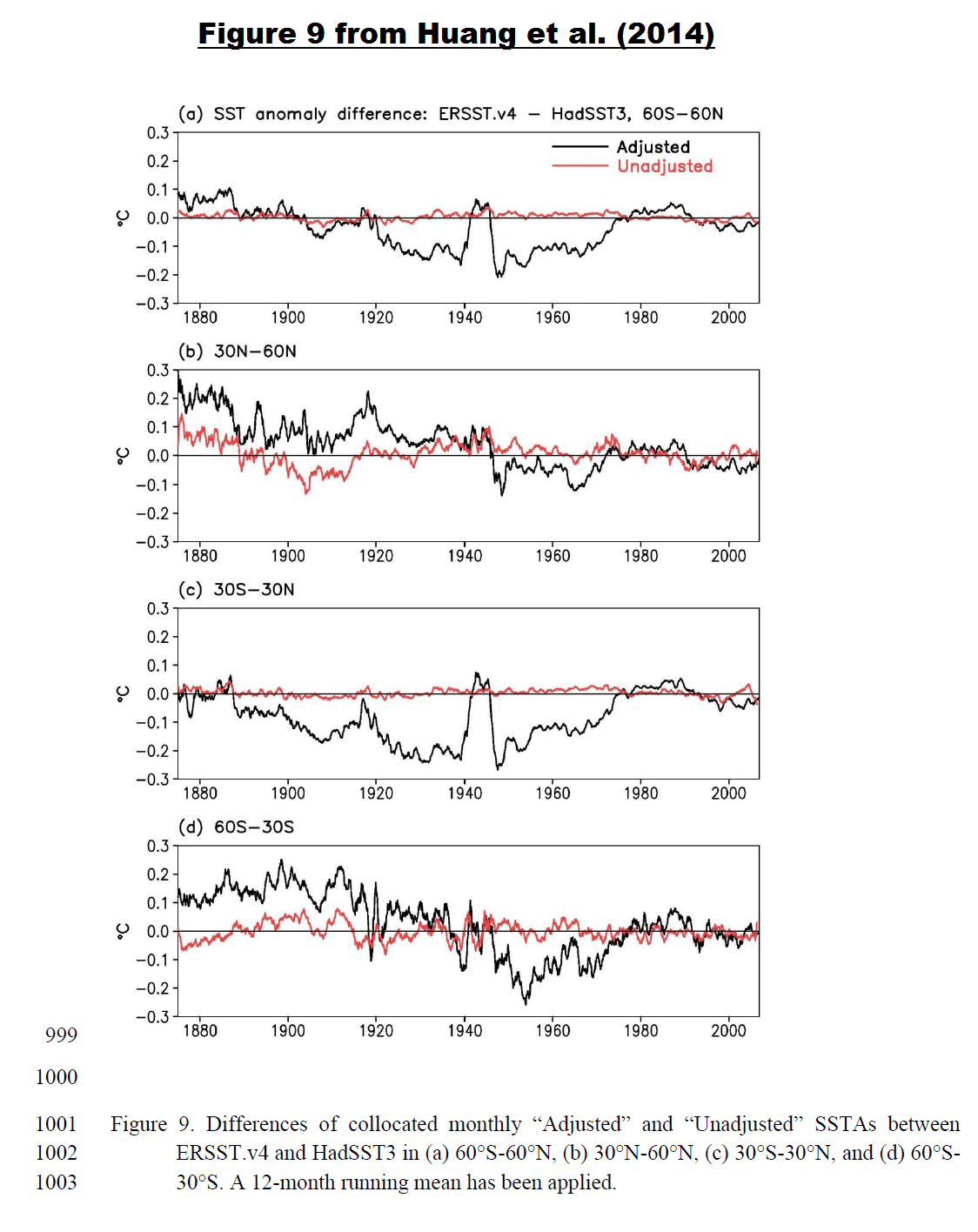

steverichards1984: Not sure where the 0.05 deg C comes from. The difference between HADSST3 and ERSST.v4 that’s associated with the spike is much greater than 0.05 deg C. See Figure 9 from Huang et al (2014)

They have to “fudge” the data so that the models “work.” Otherwise they couldn’t write the IPCC reports, which are almost completely dependent on the models. It’s not the first time they’ve done this, and unfortunately it probably won’t be the last time.

Vigilance is the price we must pay in order for science to survive. Otherwise we are going to end up back in the dark ages.

I think that it has gone too far to reverse now. I imagine that the data is adjusted because that is how they are taught in the post modern world. The narrative always explains the “Right thing to do”, and all things must conform to this, even original raw data. Anybody who does not conform finds themselves on the outside and out of the gravy train. The politicians will do nothing because they are now all career politicians and know nothing of the real world, let alone anything about science. Politicians are also now at the mercy of the bureaucracies and only imagine that they are the decision makers. Any of the elected representatives of the people who start to go outside the narrative are hounded by the bureaucrats.

The next problem is around the too big to fail concept and all of the vested interests that are now plugged into the taxpayer funded gravy train. The rent seekers in all sorts of green industries and the grant grabbers in universities, government funded science organisations and ngos must now run into the 10s of millions of people around the planet. In the CSIRO in Australia, for example, it is my understanding that over half of their staff are now involved some way or another in climate research.

Add to this the greed that is driving the third world dictators and thugs who, along with the socialists in the green NGOs, now control the UN. All of these people and groups still feel that they have a chance to direct billions of dollars from western taxpayers into their pockets for so called climate debt. Take all of this together and you start to see the scope of the problem. Who would be game to take all of this on?

Forecast for North America is still unfavorable. Polar vortex will remain shifted, allowing the flow of air from the Arctic to the south.

http://www.cpc.ncep.noaa.gov/products/stratosphere/strat_a_f/gif_files/gfs_z50_nh_f240.gif

Climate ‘fudge’ anyone?

Or Climate Flocker “sausage” ?

First up happy new year everybody and thanks Anthony for all your great work over the years. Love visiting your site and enjoy reading your work thank you.

I’ve always wondered what it would look like if the graphs like the ones on this page had an extra line, a + or – one whole degree line, a comparison line, like what we see on the weather reports. We don’t see weather reports giving tomorrow’s weather forecast to one hundredth of a degree now, do we? So we’re used to seeing the temperature in whole degrees, so what does the temperature record show us rounded to the nearest whole degree, and what does it look like compared right alongside what the climate scientists show us? Just as a comparison. I’m just taking a guess here, but if someone like Anthony rounded the temperature record to the nearest whole degree for as long as we have it, I’m guessing an almost perfect straight line for over a hundred years or so. Just wondering, what do you think? A one hundred year straight comparison line on their temperature graph, now that would put it up the Climate Scientists, now wouldn’t it. Hope everyone has a great 2015, bye

+1 Ozzie !

I think if you look at the data with less smoothing, it looks a little different.

I’m not sure what they were trying to do. There has always been this “mission” to remove the 1944-45 downblip in the Ocean SST series (or smooth it out somehow – reduce 1940-1944, raise 1945-50 for example). But they didn’t really do that. The peak adjustment down is 1939 and the peak adjustment up is 1945 but they still left the downblip in there and, in fact, they made it an even bigger downspike. I’m glad to see the 1877-1878 Super El Nino impacts put back in after the V3b changes removed some of that.

Monthly SSTs V4 and V3b.

http://s12.postimg.org/g6xt5gby5/ERSST_V4_and_V3b.png

And then, just the monthly changes between each version. Certainly looks unusual. 1935-1947 is weird. Overall, the change is for less total warming back to 1854 but the most recent period from 2005 is warmed and the 1997-98 Super El Nino period impacts are cooled (all the better for claiming a record year kind-of-thing).

http://s15.postimg.org/m0zh47y6z/ERSST_V4_Changes_from_V3b.png

Thanks, Bill.

Large data-sets representing cash accounts always have to balance to the penny. No one will accept their bank saying we just re-worked the data and you have less money in your account than we thought 1 year earlier. Even in stock market investments where the value of a share varies by the minute, at any particular point in time a share is worth a specific amount that cannot be re-jiggered years later. A share at closing on 12/31/2010 can never be a different value than it was at closing on 12/31/2010.

With climate data it is the opposite, there is a continual effort figuring out what the values of a particular environment are at any particular point in time. The reason is the data is inconsistent, enormously imprecise due to continually changing methods of capturing data (which makes models calculating to a hundredths of a degree silly), and often missing (proxy data or extrapolation used instead). There are tons of this inconsistent, imprecise data which is massaged based on the needs and fancy of the day. When the data is so loosey-goosey almost any outcome desired can be had and often is.

So given that,

does the data generally show a global warming trend? Depends on when you choose your start date.

Is a warming trend good or bad? Depends on where you live.

Is there any practical purpose or application from this research. Not yet, unless having an excuse to raise taxes is now considered applied science.

Conjuring up something (a signal) out of nothing (noise) always requires a sojourn through the unreal

but think of all the skill required for 97% of the climate models to get the wrong answer. if you or I tried this, we would have a very hard time being so consistently wrong. to screw up to the degree the climate models have, well that takes years of training and experience.

The average person on the street only manages to be wrong 50% of the time, and to be wrong year after year, the odds are so astronomically small as to defeat even the most gifted of numbskulls.

Every year climate science sets new records in numbskullery. 2014 was without a doubt the highest level ever recorded. And with increased forcings set to continue, 2015 is likely to set new records as well. It will take decades, if not centuries, for anyone outside of climate science to recreate their accomplishments.

A very relevant analogy.

How can NOAA, a publicly funded governmental organization, paywall research that WE paid for? I can understand if a private college or a business contracted NOAA to perform research, the resulting research would be proprietary (minus any public data sets), but to have to pay for something that we already paid for? I’m a bit confused and a bit outraged.

BTW.. A Happy and joyous New Year to all…

The UK Met Office do the same, Mike

paywall research that WE paid for?

============

because only climate scientists are qualified to read what they produce. to the rest of us it would only seem like self-serving nonsense, so we are not encouraged to read it.

Yeh, that’s right.

You people here would only find error in the data.

Happy new year all.

Because if everybody could read it for free the odds of one finding something wrong with it goes up exponentially. We’re “messing with their paychecks” so by charging us for something we’ve already been taxed to pay for they figure they can get a few more bucks, over and above those taxes, before they get shoved out of the trough by truth. Hopefully sooner rather than later.

What I wonder about is the nature of the peak in both Ersst v3b and v4 in 2014. In 1998 we had, everyone agrees a very strong el niño and it had an effect on global sea temperatures. In 2014 we have had a subdued el niño, and according to these curves, it has had an even stronger effect on global sea temperatures. Does that agree with actual Argos data for example? (as opposed to the final NOAA product). Given the meme on 2014 being the warmest year, that 2014 peak looks strange

Keith says: “In 2014 we have had a subdued el niño, and according to these curves, it has had an even stronger effect on global sea temperatures. Does that agree with actual Argos data for example?”

ARGO data are not used to determine sea surface temperatures. ARGO data are used for subsurface ocean temperature-based datasets.

The 2014/15 El Nino only accounts for part of the 2014 spike in sea surface temperatures. The primary cause was the unusual warming event in the eastern extratropical North Pacific…

https://bobtisdale.wordpress.com/2014/12/04/did-enso-and-the-monster-kelvin-wave-contribute-to-the-record-high-global-surface-temperatures-in-2014/

…which is linked to the “ridiculously resilient ridge” of high pressure there, which is also linked to the California drought.

[Snip. Sockpuppetry not allowed. ~mod.]

Rooter: Check the Niño 3.4 index. There has been a weak El Niño for the last few months and still is, though it seems to be winding down now.

“Why should increased heat loss during ninjo-years lead to global warming?”

Because the heat is lost from the oceans to the atmosphere, and it is the atmospheric temperature we’re measuring.

tty:

The ONI-index has not reached ninjo in 2014.

http://www.cpc.ncep.noaa.gov/products/analysis_monitoring/ensostuff/ensoyears.shtml

“By historical standards, to be classified as a full – fledged El Niño or La Niña episode, these thresholds must be exceeded for a period of at least 5 consecutive overlapping 3 – month seasons.”

Quite a stretch to call 2014 a ninjo year. If it is not ninjo in the beginning of the year, then it is not a ninjo-year.

It seems a lot depends on the new Met Office night-time marine air temperatures dataset. Ideally, I’d like to know more about that.

Where does it come from?

How was it made?

Why wasn’t this data incorporated before?

The take home for me is that we need to know more about this new dataset before we can understand how it has been used.

What we need is better governance of the data sets ideally by someone totally uninvolved and therefore disinterested in politics, global warming, climate change, etc, with guaranteed funding regardless of the output from the users of the data. The governance should be fully reported and open to public view and meet all the international standards requirements for maintenance of high value datasets. The original data should be preserved completely unaltered, any adjustments or modifications would be held as different datasets and could/should be compared with the original with documented reasons for each change. Neutral statisticians could then be used to identify the impacts of changes and document the researchers’ justifications for the changes.

At the moment the researchers who want a particular result are allowed to ‘adjust’ the input data and worse lose or hide the original datasets and the methods that they used to massage the data. Considering the importance and implications of this research this cannot be allowed to continue.

This was exactly why the data program at East Anglia University was created. Problem is the inmates are in charge of the asylum.

Ian W,

Absolutely spot on.

If the tax department adjusted my record in similar fashion, I would be taking direct action for fr**d.

Imagine that the adjusters got a temperature wrong by say 0.2 deg C. When you convert the before and after to absolute and raise to the 4th power as in Stefan Boltzmann, it makes quite a difference.

Thanks Bob, once again, for the interesting info.

So, what exactly does adjusting for increased ship deck height look like?

Did they adjust for additional heads in the bigger ships too?

Heck, the additional friction from larger hulls could account for the warming over a century.

Happy day 1 of 2015 to all!

Are you sure these guys aren’t just practicing for presenting $5,000 a head “get rich quick by trading the stock market” seminars?

All these graphs and trend lines. I’m just waiting to hear the words “double top” and “margin calls”.

Parlour tricksters at best, malevolent fraudsters at worst. Oink, oink oink..

Happy and prosperous 2015 (2558 in the Buddhist calendar) to you all.

“When the President does it, that means that it’s not illegal.” – Richard M. Nixon

All the best in 2015, y’all!

You sure that was not Bubba talking to Monica, while re-zipping himself, just outside the Oval Office?

Let us assume that the keepers of the keys are honest and genuinely trying to get the dataset as accurate as they possibly can. No fiddling. No bias. No ulterior motives. Under that scenario the fact that they change it every week, every month, every year ad infinitum simply shows that the data is inadequate for any purpose other than curiosity. Is it not time to stop the stupid before everyone is contaminated by it?

Nineteen charts this time Bob.

Actually 23, he managed to add 4 more in a comment. Illis also added some. Charts galore!!! It all comes down to what kind of canvas was the bucket made from, or how many inches above the sea the deck is, or whether the ship had intakes above or below the thermohaline cline. Dizzying, it is, science, it is not…

Indeed! I appreciate the work of Bob T and many others, and the alarmist answer is to make ever more complicated arm-waving adjustments that consistently require ever more work to debunk. It’s like they tell skeptics like Bob, “Here, now do 2000 jumping jacks.” Fortunately Bob is in very good shape.

And 2700 words!

Bob

Over on Bishop Hill I had a discussion post about the temperature record, with my first gut feeling being that having an uncertainty of ±0.1 °C was in contrast to my own experience as a scientist and engineer.

Anyway, John Kennedy of the Met Office was very helpful in showing me the papers, methods and metadata that were used to create and adjust the temperature anomalies. This included Folland et al 1995 for the bucket correction. It turns out the actual data is from Folland et al 1991 where the bucket experiments on ships were performed and then matched to an uninsulated canvas bucket model.

Now, having read through the data and the papers, what struck me was that an initial scientific experiment had been carried out that appeared to match the model. The temperature uncertainty model (measurement error + bias + bias error) appeared to be compatible with the findings. But at this point, theory was used in place of more extensive characterisation: for example, using different types of bucket, different measurement processes (drawing methods, pre-thermalisation of thermometers, etc.), and controlled lab experiments that allow unnatural conditions to be created (much in the same way that any hardware gets qualified).

This theoretical correction, with only cursory experimental backing, has been used for over 20 years and forms the spine of all subsequent anomaly uncertainty studies and updates. Steve Mc addressed something in a similar vein a while back.

Of course if the temperature anomaly data was purely a scientific data set then it wouldn’t matter as much that the anomaly correction be characterised to a basic degree but it’s not just a scientific data set – it is being used as if it’s engineering level and fit for policy. Not good.

In particular, one of the things I spotted was that if the pre-thermalisation process (which the UK Admiralty recommended) was used, the actual bucket adjustment may have to be larger, as in it needs to be adjusted up. Pre-thermalisation involves getting the thermometer to a temperature similar to the water temperature, so you take an initial sample, let it sit for a minute or two, then throw out the water and resample.

According to the model, the water temperature will cool when sampled, due mostly to relative humidity effects. But the thermometer should also do the same (maybe it doesn’t, I don’t know). So when you resample, is the thermometer even colder? Again, I don’t know.

But if there is a compounding effect the actual SST may be higher still – maybe another 0.5 degrees. It would come more into line with the models BUT more importantly it would mean that the 1910 to 1940s would be approaching today’s temperature – hence much less AGW.

Anyway, that is just pure speculation, but I hope you see how concerning it is that something as important as the bucket bias corrections doesn’t seem to have been given a thorough going-over before being applied. Or they were applied with a very sunny-day type of error analysis.

The whole AGW story hinges on the start of the 20th century and the 1940s as you point out.

Interesting that the spike seems confined to 30n to 30s.