Guest Post by Bob Tisdale

The topic of discussion is NOAA’s new sea surface temperature dataset, ERSST.v4. Based on a breakpoint analysis recently promoted by RealClimate, NOAA appears to have reduced the early 20th Century warming rate to agree with the climate models used by the IPCC.

PRELIMINARY NOTES

NOAA introduced its new and improved sea surface temperature reconstruction ERSST.v4 with the papers (both are paywalled):

- Huang et al. (2014) Extended Reconstructed Sea Surface Temperature version 4 (ERSST.v4), Part I. Upgrades and Intercomparisons, and

- Liu et al. (2014) Extended Reconstructed Sea Surface Temperature version 4 (ERSST.v4): Part II. Parametric and Structural Uncertainty Estimations.

We provided an initial look at the new NOAA ERSST.v4 data, primarily during the satellite era, in the post Quick Look at the DATA for the New NOAA Sea Surface Temperature Dataset. An error was discovered in the November 2014 update of the ERSST.v4 sea surface temperature data supplied by NOAA…a teething problem with the update of a new dataset at NOAA. Subsequent to the correction, KNMI added the new ERSST.v4 dataset to the Monthly observations webpage at their Climate Explorer. After NOAA corrected the error, the new ERSST.v4 data fell back in line with their predecessor ERSST.v3b during the satellite era (November 1981 to present), which means they have a slightly higher warming rate than the NOAA Reynolds OI.v2 satellite-enhanced data.

Regarding the breakpoint years that divide the data into warming versus hiatus/cooling periods in this post, I’ve initially used 1912, 1940 and 1970 from the RealClimate post Recent global warming trends: significant or paused or what? Yes, I realize those breakpoints are controversial. See the BishopHill post Significance doing the rounds. And I also understand (1) those breakpoints are based on GISS land-ocean temperature index (LOTI) data, which includes the ERSST.v3b data, (2) that the breakpoint years are not based on the individual sea surface temperature datasets, and (3) that the breakpoints might change if GISS used the ERSST.v4 data. My use of these breakpoints does not mean I agree with them. I’m simply using them to avoid claims that I’ve cherry-picked them, and to show the impacts of breakpoint years on model-data comparisons.

The headline and initial discussions in this post are based on those 1912, 1940 and 1970 breakpoints. If we were to revise those changepoints to 1914, 1945 and 1975, also determined through breakpoint analysis of GISS LOTI data, then the warming rate of the new ERSST.v4 data during the early warming period of 1914 to 1945 falls back in line with the other sea surface temperature datasets, well above the trend simulated by climate models. We’ll illustrate this also.

I’ve excluded the polar oceans from the data presented in this post. That is, the data are for the latitudes of 60S-60N. This is commonly done in scientific studies because the data suppliers (NOAA and UKMO) account for sea ice differently. See Figure 8 from Huang et al. (2014) for an example.

Unless otherwise noted, anomalies are referenced to the WMO-preferred base years of 1981-2010.
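As a rough illustration of what referencing anomalies to base years means, the sketch below subtracts a 1981-2010 monthly climatology from a temperature series. The function name `to_anomalies` and the synthetic input are assumptions made for the example; this is not the KNMI Climate Explorer's code, and the numbers are not real data.

```python
import math

def to_anomalies(records, base_start=1981, base_end=2010):
    """Convert (year, month, temperature) records to anomalies relative to
    the mean of each calendar month over the base years (inclusive)."""
    sums = [0.0] * 13    # index 1..12 = calendar month, index 0 unused
    counts = [0] * 13
    for year, month, temp in records:
        if base_start <= year <= base_end:
            sums[month] += temp
            counts[month] += 1
    # Monthly climatology over the base period (each month must be present)
    clim = [sums[m] / counts[m] if counts[m] else None for m in range(13)]
    return [(y, m, t - clim[m]) for y, m, t in records]

# Synthetic series (NOT real data): seasonal cycle plus a slow linear warming
records = [
    (y, m, 18.0 + 2.0 * math.cos(2 * math.pi * (m - 1) / 12) + 0.01 * (y - 1950))
    for y in range(1950, 2015)
    for m in range(1, 13)
]
anoms = to_anomalies(records)

# By construction, the anomalies average to zero over the base years
base_mean = sum(a for y, m, a in anoms if 1981 <= y <= 2010) / (30 * 12)
```

Changing the base years shifts every anomaly by a constant, so it moves the curves up or down on a graph but leaves the trends unchanged.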

The data and climate model outputs are available through the KNMI Climate Explorer.

For many of the illustrations, as opposed to adding the climate model outputs to the graphs, I’ve simply listed the simulated warming (or cooling) rate of the global sea surface temperatures, excluding the polar oceans, as represented by the multi-model ensemble-member mean of the climate models stored in the CMIP5 archive (historic and RCP8.5 forcings). The worst-case RCP8.5 forcings only impact the last few years and have little impact on the results for the recent warming period. For simulations of sea surface temperatures, there are also more ensemble members (model runs) using the RCP8.5 scenario (73 members) than there are with the RCP6.0 scenario (43 members). Additionally, see the post On the Use of the Multi-Model Mean.

THE EARLY 20th CENTURY WARMING PERIOD (1912-1940)

For years, we’ve been illustrating and discussing how the climate models used by the IPCC do not properly simulate the warming of the surface of the global oceans during the early 20th Century warming period, from the 1910s to the 1940s. They underestimate it by a wide margin. As a reference, Figure 1 illustrates the modeled and observed global sea surface temperature anomalies (60S-60N), without the polar oceans, for the early warming period of 1912 through 1940. Again, those are changepoint years promoted in the recent post at RealClimate. The data are represented by HADSST3 data from the UKMO and by the ERSST.v3b data currently in use by NOAA and GISS. The models are represented by the average of the outputs of the simulations of sea surface temperatures (based on multi-model ensemble-member mean) of the climate models stored in the CMIP5 archive. Those models were used by the IPCC for their 5th Assessment Report.

Figure 1

The observed warming based on the HADSST3 and ERSST.v3b data, from 1912 to 1940, was more than twice the rate simulated by climate models. Because the mean of the climate model outputs basically represents the forced component of the climate models, logic dictates that the additional observed warming was caused by naturally occurring, coupled ocean-atmosphere processes.

With the new ERSST.v4 data, the warming rate has been lowered almost to the modeled rate for the period of 1912 to 1940. See Figure 2 for a comparison of the ERSST.v4 data with the ERSST.v3b and HADSST3 data.

Figure 2

The ERSST.v4 data warming rate for the period of 1912 to 1940 is +0.056 deg C/decade, which is only slightly higher than the modeled rate of +0.048 deg C/decade.
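For readers who want to reproduce warming rates like these, here is a minimal sketch of a linear trend in deg C/decade, assuming an ordinary least-squares fit (the post does not state which fitting method was used). The helper name `trend_per_decade` and the synthetic series are illustrative only; the two rates in the final line are the ones quoted above.

```python
def trend_per_decade(times, values):
    """Ordinary least-squares slope of values against time (in years),
    scaled from per-year to per-decade."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    var = sum((t - mean_t) ** 2 for t in times)
    return 10.0 * cov / var

# Synthetic monthly series (NOT real data): a pure ramp of
# 0.056 deg C/decade from January 1912 through December 1940
times = [1912 + month / 12.0 for month in range(29 * 12)]
anoms = [-0.4 + 0.0056 * (t - 1912) for t in times]
rate = trend_per_decade(times, anoms)

# Ratio of the quoted data rate to the quoted modeled rate
ratio = 0.056 / 0.048
```

With the rates quoted in the text, the data warm only about one-sixth faster than the models over 1912 to 1940.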

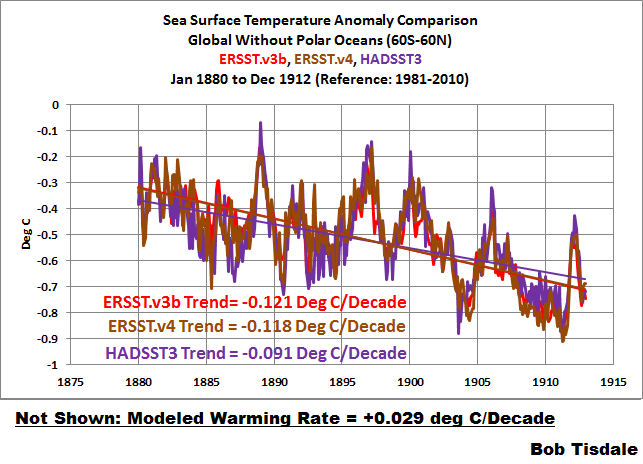

EARLY COOLING PERIOD (1880-1912)

We’ll define the early cooling period as extending from 1880 to 1912. As we can see in Figure 3, the cooling rate of the new ERSST.v4 data is comparable to the ERSST.v3b data currently used by NOAA…both of which are slightly faster than the cooling rate shown by the HADSST3 data. Of course, the models show a slight warming during this period.

Figure 3

The models don’t simulate the warming from 1912 to 1940 shown by the ERSST.v3b and HADSST3 data because they don’t simulate the cooling from 1880 to 1912. But that doesn’t help to explain the slower warming rate of the ERSST.v4 data during the early-20th Century warming period. We’ll return to that discussion in a little while.

MID-20th CENTURY HIATUS (1940-1970)

The new ERSST.v4 data for the global oceans (without the polar oceans), for the period of 1940 to 1970, are compared to the ERSST.v3b and HADSST3 data in Figure 4. Where the ERSST.v3b data showed a very slight warming during this period, the ERSST.v4 data now show cooling…agreeing better with the models and the HADSST3 data.

Figure 4

LATE WARMING PERIOD (1970-2014)

As shown in Figure 5, the revisions to the ERSST.v4 data have increased the sea surface warming to a rate that is slightly higher than the ERSST.v3b data during the period of 1970 to present, but the ERSST.v4 data still have a slightly slower warming rate than the HADSST3 data. And, of course, the models show a slightly higher warming rate than the observations. We would expect the models to perform best during this period, because some of the models are tuned to it.

Figure 5

LONG-TERM WARMING RATES

Figures 6, 7 and 8 present the long-term global sea surface warming rates (without the polar oceans) of the new ERSST.v4 data and the ERSST.v3b and HADSST3 data, starting in 1854 (full term of the data), 1880 (full term of the GISS and NCDC combined land+ocean data) and 1900 (start of the 20th Century, give or take a year, depending on how you define it). The data in all have been smoothed with 12-month running-mean filters. The trends shown are based on the raw data.

Figure 6

# # #

Figure 7

# # #

Figure 8

As an afterthought, I’ve included a comparison starting in 1915 (the last 100 years). See Figure 8.5. The models, of course, underestimate the warming because they can’t simulate the cooling that took place from the 1880s to the 1910s.

Figure 8.5

YOU MAY BE WONDERING…

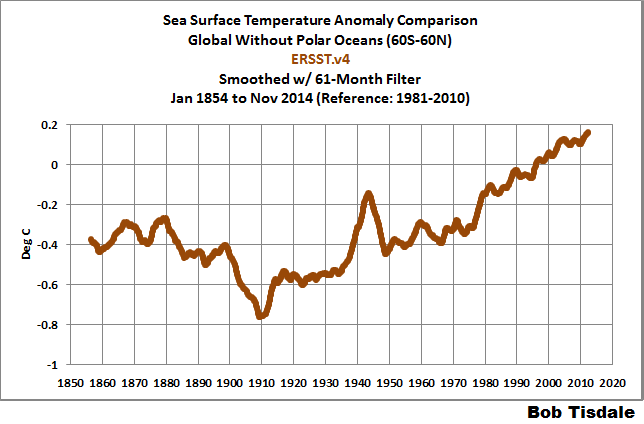

How did NOAA manage to decrease the warming rate of its ERSST.v4 data during the early warming period, while maintaining long-term trends that are comparable to the other datasets?

NOAA resurrected the spike in the late-1930s and early-1940s. See Figure 9. The data have been smoothed with a 61-month running-mean filter to minimize the ENSO- and volcano-related volatility. The 61-month filter also helps to emphasize that spike in sea surface temperatures.
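A minimal sketch of a running-mean filter follows, to show how a longer window damps short-lived excursions. The function name `running_mean` and the synthetic series are assumptions for illustration; the filter used for the graphs may treat the ends of the record differently.

```python
def running_mean(values, window):
    """Running mean over `window` consecutive points. Each output value is
    the mean of one window, so the result has len(values) - window + 1
    points (for an odd window, conventionally plotted at the midpoint)."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]

# Synthetic monthly series (NOT real data): flat, with one 12-month
# excursion of 0.61 deg C, loosely standing in for an ENSO-like event
series = [0.0] * 240
for i in range(114, 126):
    series[i] = 0.61

smooth_12 = running_mean(series, 12)   # 12-month filter
smooth_61 = running_mean(series, 61)   # 61-month filter
```

In this synthetic case the 12-month filter still shows the excursion at its full height, while the 61-month filter cuts its peak to roughly a fifth. A feature that persists for several years survives the 61-month filter far better, which is why that filter makes a multi-year spike stand out from the shorter-term noise.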

Figure 9

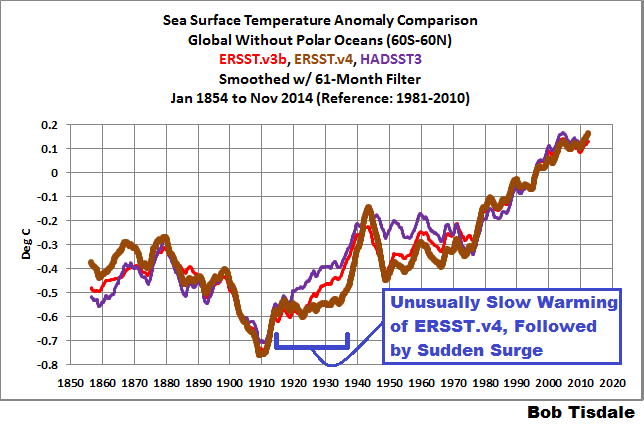

Figure 10 presents the three sea surface temperature datasets, for the period of 1854 to present. Again, all data are smoothed with 61-month filters. For the new ERSST.v4 data, NOAA severely limited the warming from the early-1910s until the mid-1930s and then added an unusual sudden warming.

Figure 10

I’ve included the ICOADS source data to the graph in Figure 11. Compared to the ERSST.v3b and HADSST3 data, NOAA appears to have suppressed the pre-1940 “Folland correction” in its ERSST.v4 data. See Folland and Parker (1995) Correction of instrumental biases in historical sea surface temperature data.

Figure 11

Animation 1 compares the new ERSST.v4 and HADSST3 data, for the period of 1854 to present, with both datasets smoothed with 61-month filters. The UKMO Hadley Centre has worked for decades to eliminate the spike in the late-1930s and early-1940s. NOAA, on the other hand, has enhanced it. (Note: If the animations don’t show on your browser, please click the link. Recently, there has been a problem with gif animations on my WordPress posts.)

Animation 1

THE SPIKE LIKELY COMES FROM A NEWER MARINE AIR TEMPERATURE DATASET THAT IS USED AS A REFERENCE FOR THE NEW ERSST.v4 DATA

Sea surface temperature source data for the older ERSST.v3b data are adjusted before 1941 using an older version of nighttime marine air temperature data, like the one shown in Figure 12. This would help to explain the agreement between those two datasets during the period of 1912 to 1940.

Figure 12

One of the features of the new ERSST.v4 data is the use of a newer and improved UKMO nighttime marine air temperature dataset. (It’s not available through the KNMI Climate Explorer, so I can’t include it in a comparison graph.) The source sea surface temperatures for the new ERSST.v4 data are adjusted for the full term by the new nighttime marine air temperature data, not just prior to 1941 like ERSST.v3b.

Huang et al. (2014) includes:

Firstly, ERSST.v3b does not provide SST bias adjustment after 1941 whereas subsequent analyses (e.g. Thompson et al. 2008) have highlighted potential post-1941 data issues and some newer datasets have addressed these issues (Kennedy et al. 2011; Hirahara et al. 2014). The latest release of Hadley NMAT version 2 (HadNMAT2) from 1856 to 2010 (Kent et al. 2013) provided better quality controlled NMAT, which includes adjustments for increased ship deck height, removal of artifacts, and increased spatial coverage due to added records. These NMAT data are better suited to identifying SST biases in ERSST, and therefore the bias adjustments in ERSST version 4 (ERSST.v4) have been estimated throughout the period of record instead of exclusively to account for pre-1941 biases as in v3b.

I suspect the newer nighttime marine air temperature data and its use as a reference for the full term are the reasons for the delayed warming from the early 1910s to the late 1930s and the trailing sudden upsurge in the ERSST.v4 data in the late 1930s.

SUPPOSE WE USED DIFFERENT BREAKPOINT YEARS

It is pretty obvious that the late-1930s to mid-1940s spike in the ERSST.v4 sea surface temperature data would impact the trends of the early-20th Century warming period and the mid-20th Century hiatus period, depending on the years chosen for analysis.

For my book Climate Models Fail, I used the breakpoints of 1914, 1945 and 1975. The changepoints of 1914 and 1945 were determined through breakpoint analysis by Dr. Leif Svalgaard. See his April 20, 2013 at 2:20 pm and April 20, 2013 at 4:21 pm comments on a WattsUpWithThat post here. And for 1975, I referred to the breakpoint analysis performed by statistician Tamino (a.k.a. Grant Foster).

As you might have suspected, because of that spike in the new ERSST.v4 data, using the 1914, 1945 and 1975 breakpoint years does have a noticeable impact on some of the warming and cooling rates.

For the early cooling period, nothing’s going to help the climate models. The models cannot simulate the cooling if we define that period by the years 1880 to 1914. See Figure 13.

Figure 13

The revised breakpoints have a noticeable impact on the early warming period. See Figure 14. Using 1914 to 1945, the warming rate of the new ERSST.v4 data is slightly lower than that of its predecessor and in line with the HADSST3 data…and more than 3 times faster than modeled.

Figure 14

NOTE: With that spike, the warming rates of the new ERSST.v4 data during the early-20th Century warming period depend very much on the choice of end year…while the changes in trends are not as great with the ERSST.v3b and HADSST3 data. Refer again to Figures 2 and 14.
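The end-year sensitivity described in the note can be sketched with two synthetic series: one that warms steadily, and one with a late spike added. Everything here (the helper names, the 0.3 deg C spike, the years it spans) is an assumption chosen for illustration, not a reconstruction of any of the datasets.

```python
def trend_per_decade(years, values):
    """Ordinary least-squares slope, scaled to deg C/decade."""
    n = len(years)
    mean_t = sum(years) / n
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(years, values))
    var = sum((t - mean_t) ** 2 for t in years)
    return 10.0 * cov / var

def window_trend(series, start, end):
    """Trend of a {year: anomaly} series over start..end inclusive."""
    years = [y for y in sorted(series) if start <= y <= end]
    return trend_per_decade(years, [series[y] for y in years])

# Synthetic annual series (NOT real data)
steady = {y: 0.005 * (y - 1900) for y in range(1900, 1951)}
spiky = {y: v + (0.3 if 1938 <= y <= 1945 else 0.0)
         for y, v in steady.items()}

# Change in trend when moving from 1912-1940 to 1914-1945
steady_change = (window_trend(steady, 1914, 1945)
                 - window_trend(steady, 1912, 1940))
spiky_change = (window_trend(spiky, 1914, 1945)
                - window_trend(spiky, 1912, 1940))
```

For the steady series the change is zero to within rounding, because a straight line has the same slope over any window. For the spiky series the trend rises noticeably when the window is extended to take in more of the spike.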

With the breakpoint years of 1945 to 1975, Figure 15, the warming rate of the new ERSST.v4 data is considerably lower than the ERSST.v3b data, almost flat, during the mid-20th Century hiatus, but not negative (cooling) as shown by the HADSST3 data and the models.

Figure 15

Last but not least, with the 1975 breakpoint, Figure 16, the warming rates of the ERSST.v3b and ERSST.v4 data are basically the same for the recent warming period. Both are lower than the warming rate of the HADSST3 data, which in turn is lower than the modeled rate.

Figure 16

MODEL-DATA DIFFERENCE

Animation 2 includes 2 graphs that show the differences between the modeled and observed sea surface temperature anomalies for the period of January 1880 to November 2014. The model outputs and data are referenced to the period of 1880 to 2013 so that the results are not skewed by the base years. The models are again represented by the multi-model ensemble-member mean of the models stored in the CMIP5 archive (historic/RCP8.5 forcings). And the differences are created by subtracting the data (HADSST3 and ERSST.v4) from the model outputs. The rises from a negative difference (data warmer than models) in 1880 to the substantial positive difference (data cooler than models) in 1910 are caused by the observed cooling that can’t be explained by the models. NOAA tries to recover from that dip in global sea surface temperatures with a sudden upsurge in the late 1930s to early 1940s, which creates the odd looking spike in the ERSST.v4 data.
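The difference calculation described above can be sketched as follows: rebase both series to the same reference period, then subtract the data from the model output. The function names and the synthetic series (including the 0.1 deg C dip in the "data") are assumptions for illustration, and annual values are used for brevity where the graphs use monthly values.

```python
def rebase(series, start, end):
    """Shift a {year: value} series so its mean over start..end is zero."""
    ref = [v for y, v in series.items() if start <= y <= end]
    offset = sum(ref) / len(ref)
    return {y: v - offset for y, v in series.items()}

def model_minus_data(model, data, start=1880, end=2013):
    """Model minus data, with both referenced to the same period.
    Positive values mean the data are cooler than the model."""
    m = rebase(model, start, end)
    d = rebase(data, start, end)
    return {y: m[y] - d[y] for y in sorted(m) if y in d}

# Synthetic annual series (NOT real data): the "data" carry a different
# absolute offset than the "model", plus a dip from 1900 to 1920
model = {y: 14.0 + 0.005 * (y - 1880) for y in range(1880, 2014)}
data = {y: v + 0.3 - (0.1 if 1900 <= y <= 1920 else 0.0)
        for y, v in model.items()}

diff = model_minus_data(model, data)
mean_diff = sum(diff.values()) / len(diff)
```

Because both series are rebased to the full 1880 to 2013 period, the 0.3 deg C offset between them drops out and the differences average to zero. Only the shape of the disagreement remains, here a positive difference during the dip.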

Animation 2

That spike in the new ERSST.v4 data cannot be explained by the models and stands out like a sore thumb.

CLOSING

Quite remarkably, if the breakpoint years of 1912, 1940 and 1970 are used, the warming and cooling rates of the CMIP5 models and the new NOAA ERSST.v4 sea surface temperature dataset agree reasonably well for the early and late warming periods and for the mid-20th Century hiatus period. That’s three out of four periods. See Figures 2, 4 and 5. On the other hand, if the breakpoint years of 1914, 1945 and 1975 are used, the models can only simulate the warming after 1975 shown in the new ERSST.v4 data. See Figures 13 through 16. During the period of 1945 to 1975, the ERSST.v4 data are closer to the models, which show a slight cooling. Prior to that, with the ERSST.v4 data, the models fail miserably at simulating the cooling of global sea surfaces from 1880 to 1914 and the rebound from 1914 to 1945.

When NCDC starts to include the new ERSST.v4 data in its combined land+ocean surface temperature data, and if GISS uses it, we’ll have to keep an eye on the breakpoints used by climate scientists in their model-data comparisons. In an effort to make models appear as though they can simulate global surface temperatures, I suspect we’ll see breakpoints that flatter the models…and all sorts of arguments about those breakpoints.

Then again, they can argue all they want, but the models still can’t explain that curious spike in the ERSST.v4 data. See Animation 2.

Back in 1981, James Hansen wrote this:

—————————

http://www.atmos.washington.edu/~davidc/ATMS211/articles_optional/Hansen81_CO2_Impact.pdf

“The most sophisticated models suggest a mean warming of 2 to 3.5 C for doubling the CO2 concentration from 300 to 600 ppm. The major difficulty in accepting this theory has been the absence of observed warming coincident with the historic CO2 increase. In fact, the temperature in the Northern Hemisphere decreased by about 0.5 C between 1940 and 1970, a time of rapid CO2 buildup. The time history of the warming obviously does not follow the course of the CO2 increase (Fig. 1), indicating that other factors must affect global mean temperature.”

“[R]ecent claims that climate models overestimate the impact of radiative perturbations by an order of magnitude have raised the issue of whether the greenhouse effect is well understood.”

“Northern latitudes warmed ~ 0.8 C between the 1880s and 1940, then cooled ~ 0.5 C between 1940 and 1970, in agreement with other analyses. Low latitudes warmed ~ 0.3 C between 1880 and 1930, with little change thereafter. The global mean temperature increased ~ 0.5 C between 1885 and 1940, with slight cooling thereafter.”

“A remarkable conclusion from Fig. 3 is that the global mean temperature is almost as high today [1980] as it was in 1940.”

—————————

Graph from the paper showing the global trends (notice 1940 was still about 0.05 C to 0.1 C warmer than 1980):

http://www.giss.nasa.gov/research/features/200711_temptracker/1981_Hansen_etal_page5_340.gif

—————————

Today, NASA’s graph of NH temperature shows only -0.2 C of cooling in the NH between 1940 and 1970 (instead of the -0.5 C NASA’s Hansen identified in 1981), only +0.4 C of warming between 1880 and 1940 (instead of the +0.8 C identified by NASA’s Hansen in 1981):

http://data.giss.nasa.gov/gistemp/graphs_v3/Fig.A3.gif

—————————

And NASA’s graph of global temperatures now shows 1980 as 0.2 C warmer than 1940, rather than 0.05 C to 0.1 C colder as on the graph in Hansen’s 1981 paper:

http://data.giss.nasa.gov/gistemp/graphs_v3/Fig.A.gif

—————————-

So

“Has NOAA Once Again Tried to Adjust Data to Match Climate Models?”

(A). Yes

(B). No.

(C). We’re doomed.

(D). All of the above.

The small furry animals want to know.

@Julian Flood 1/1/15 11:00am

Is your meaning that the oil slicks from sunken ships in WWII have a measured effect on sea surface temperatures on a global scale where they are measured by later ships? An interesting theory. I still prefer the explanation that during the wars, the sampling methodology, frequency, and sampling locations were very different from those at other times. But leaking oil from sunken ships might be a real effect on surface temperatures and possibly on evaporation.

I’m skeptical that the volumes of leaking oil would be enough evenly spread to make a difference. From the numbers below, there was enough oil that, if spread thin enough, it would cover a sizable fraction of the Pacific. According to this: measuring thickness of an oil slick, some oils can spread to a thickness of 10^-9 meters. The USS Missouri held 2.5 million gallons of fuel oil, and while it never sank, many other battleships did, such as HMS Prince of Wales.

According to this Nat Geo article, there are 3700 known sinkings in the Pacific. If the average sinking was 5% of the Missouri (and remember, some of them were tankers), keeping to round numbers, call it 100,000 gallons of fuel oil per ship sunk, 100 ships per month. 40 million liters / month of spilled oil. One liter spread to 10^-9 meters will cover 1 km^2. So in theory, 40 million liters could spread as much as 6000 km by 6000 km, or one fifth of the Pacific Ocean. How many days (or hours) would that thin layer last before being eaten by bugs?

Any link to experimental data to what an oil slick does to surface temperatures?

How thick does a fuel oil slick need to be to interfere significantly with evaporation and surface temperatures in the open ocean?

Is the effect the same in the cold North Atlantic vs the hot SW Pacific?

Fixing the links above:

According to this Nat Geo article there are 3700 known sinkings in the Pacific. If the average sinking was 5% of the Missouri (and remember, some of them were tankers), keeping to round numbers, call it 100,000 gallons of fuel oil per ship sunk, 100 ships per month. So 40 million liters / month of spilled fuel oil. If one liter of oil spreads to 10^-9 meters thickness (about one molecule), it will cover 1 km^2. So in theory, 40 million liters of oil could spread as much as 6000 km by 6000 km, or about 1/5 of the Pacific Ocean.

How many days (or hours) would that thin layer last before being eaten by bugs? What fraction of the month does that slick last? This fraction can translate to what fraction of the 40 million sq km of theoretical ocean area is permanently covered by oil slick during the Pacific War?

Any link to experimental data to what an oil slick does to surface temperatures?

Is the effect the same in the cold North Atlantic vs the hot SW Pacific?

How thick does a fuel oil slick need to be to interfere significantly with evaporation and surface temperatures in the open ocean? The numbers above are based upon a thickness of 10^-9 meters.
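The back-of-envelope numbers above can be checked with a few lines of arithmetic. The inputs are the comment's own round figures (100,000 gallons per ship, 100 ships per month, a 10^-9 meter film); the Pacific Ocean area of roughly 165 million km^2 is an approximate figure added here as an assumption.

```python
LITERS_PER_US_GALLON = 3.785411784
PACIFIC_AREA_KM2 = 165e6          # approximate, assumed for comparison

gallons_per_ship = 100_000        # round figure from the comment
ships_per_month = 100             # round figure from the comment
thickness_m = 1e-9                # film thickness from the comment

# One liter is 1e-3 cubic meters; area = volume / thickness
area_per_liter_km2 = 1e-3 / thickness_m / 1e6

liters_per_month = gallons_per_ship * ships_per_month * LITERS_PER_US_GALLON
area_km2 = liters_per_month * area_per_liter_km2
side_km = area_km2 ** 0.5
pacific_fraction = area_km2 / PACIFIC_AREA_KM2

print(f"{liters_per_month / 1e6:.1f} million liters per month")
print(f"{area_km2 / 1e6:.1f} million km^2, a square {side_km:.0f} km on a side")
print(f"{pacific_fraction:.0%} of the Pacific")
```

The unrounded spill is a little under 40 million liters, so the theoretical coverage comes out slightly below 40 million km^2, still between one fifth and one quarter of the Pacific under these assumptions.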

Any effect would be much more pronounced off the Eastern and Gulf Coasts of the US in the spring-summer of 1942 during the Unternehmen Paukenschlag/Neuland massacre of tankers (at least 63 tankers were sunk from January 14 to June 10, i.e. roughly one every 2.3 days).

[Snip. You use a lot more sockpuppets than just this one. ~ mod.]

Nope. I have only posted with “rooter” or “wollie” here.

What are you afraid of? Some common sense in the commentaries?

Adjusting data is okay if you can Prove that the data you are adjusting are wrong. But Proof is difficult. It would have to be something like determining that a sensor was bad. Even then, you should still keep the old data and make a note of what you changed. There must be a history log of changes kept, with the reasons for each change and who signed off on it.

The dead last thing they want is to keep a record of all their meddling changes. This is typically held as if it is top secret!

Re Stephen Rasey,

I’ve been banging on for years about what I call the Kriegesmarine effect — there are various warming mechanisms in an oil polluted ocean. As for how long a smooth (not a slick, it’s really thin) will last I don’t know, but I’ve seen one covering tens of thousands of miles in the Atlantic north of Madeira — it had formed after a prolonged Azores high — and must have been there some time, weeks probably. I’m trying to persuade rgb to take his boat out with a bottle of oil and carry out an experiment in the sound off his campus, but he’s very reluctant. Lower albedo, smaller waves, less breaking wave droplets, less stirring and nutrient elevation, less air turbulence to engender stratus…. etc etc. I put the whole thing together on Judith Curry’s site. The redoubtable Judith tried to get a friend of hers to check aerosol effects above the big Gulf oil spill, but the aircraft was busy elsewhere. I’m sure that I can see the slick eroding low level cloud cover in the satellite pictures, but that’s just the fond eye of parenthood. Maybe.

If you’re an American you’ll be proud of a prescient experiment carried out by the great Benjamin Franklin — google Franklin Clapham Common pond.

JF

The fact of the matter is that no one is in a position to specify what the global average SST was prior to the satellite era. Prior to that, global coverage is simply not there in shipboard observations, which invariably are instantaneous readings made via a wide variety of methods at regular times at random locations, rather than daily averages at fixed locations. Numerical manipulations of manufactured indices simply reconfigure the shape of conceptual sandcastles built upon inadequate foundation. The academic pretenses of “climate science” notwithstanding, it should be recognized that fiction remains fiction.

Is there anyone with a REAL data/historical background that believes the data used, or alleged or whatever, prior to the Argo floats has ANY merit at all? If you do, I have a bridge to sell you in NYC…cheap.

Completely irrelevant, my good man. Only after adjustment by the climate elites does the data gain merit and become unassailable. And no, you can’t see it, either!

I go by what the satellite data shows which is no added global warming. End of story.

Kinda like the crooked accountant. Someone asks him, “How much is two plus two?” The accountant answers: “What do you want it to be?”

Cheers,

Jim

On 27 March 2006, Prof Phil Jones of Uni of East Anglia sent me an email containing this explanation: “This shows that it is necessary to adjust the marine data (SSTs) for the change from buckets to engine intakes. If models are forced by SSTs (which is one way climate models can be run) then they estimate land temperatures which are too cool if the original bucket temps are used.”

You can therefore see that the adjustment process, justified to fit the model, has been going on for some years.

There is a backstory around this detailed at

http://climateaudit.org/2007/05/09/more-phil-jones-correspondence/