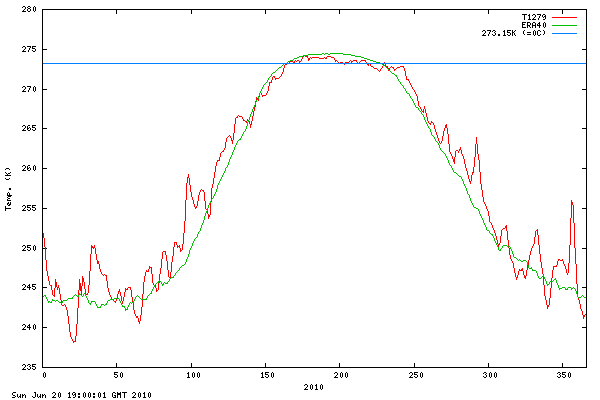

We’ve all seen this graph below of Arctic Temperature above 80°N from DMI. But, there’s something surprising about how it is created.

In this guest post, Harold Ambler finds that DMI actually goes to the trouble of feeding as many data sources as it can into its numerical weather prediction model, rather than just extrapolating from the nearest ground-based stations as GISS does. – Anthony

Guest post by Harold Ambler

As has been well covered by Steve Goddard on WUWT, the “interpretation” of Arctic conditions by NASA/GISS is based on astonishingly little data north of 80 degrees latitude, which is to say no data at all.

As the Danish Meteorological Institute (DMI) has been offered as a source of actual data and information, rather than imaginary data and imaginary information, and as the word “model” has been bandied around on WUWT as a problematic aspect of DMI’s temperature product, I thought now might be a good time to share an e-mail exchange I had several months ago with the DMI’s Gorm Dybkjær. Below is a lightly edited version of our exchange. Many of Dybkjær’s statements are very interesting.

Dear DMI:

I am an American journalist completing a book about climate change and have been studying your Arctic temperature graph for some time. The graph says that the data are obtained by the use of a model.

I wonder if you can tell me how many temperature stations the average represents, and why the word model is used. (I would anticipate the word “model” to be a predictive computer analysis, as opposed to descriptive.)

Would it be possible to clear this up?

Thank you in advance.

Sincerely yours,

Harold Ambler

To which Dybkjær responded:

Dear Harold

Concerning your question about the number of in situ temperature observations (direct measurements) that are available in the Arctic – the brief answer is – there are not many! My guess is that the number of buoys in the Arctic Ocean that provide near-real-time temperature observations for e.g. numerical weather prediction (NWP) models is around 50. The number of land-based weather stations on the rim of the Arctic Ocean is probably even smaller. You must contact the WMO (World Meteorological Organization) for more accurate numbers. So by dividing the area that the ‘mean temperature’ graph represents by 100 temperature observations, you will of course find that each observation must represent an enormous area. That is exactly why you want to use NWP models to estimate distributed temperatures in the Arctic.

The NWP models used for the ‘mean plus 80N temperature’-graph on ocean.dmi.dk are, as you mention, predictive numerical models. However, before you let the model ‘go’ to do the weather forecast calculations, you must estimate the initial state of the atmosphere. The initial state of the atmosphere is the best guess, based on all the observations you have available and the coupled physical constraints of the model. The approximately 100 in situ surface temperature observations are only a very limited part of ‘all available observations’ you feed into the model. You have measurements from airplanes, atmospheric profiling instruments mounted on balloons and then of course by far the most valuable input to NWP models today – a huge amount of observations from satellites.

From these data sources all kinds of atmospheric variables are measured/estimated and assimilated into the NWP models. From a ‘bargain’ between the coupled model physics and all the applied observations the model calculates the best initial state of the entire atmosphere. That initial state – the model analysis – is the best guess of e.g. distributed surface temperatures in the Arctic you get.

Hope you can use this clarification.

Best Regards

Gorm /Center for Ocean and Ice, DMI
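To put a rough number on Dybkjær's remark that each observation "must represent an enormous area," here is a back-of-envelope sketch. It assumes a spherical Earth with a mean radius of 6,371 km, treats the region behind the graph as the polar cap north of 80°N, and takes his round figure of 100 observations at face value. The function name is made up for illustration, and none of this comes from DMI.

```python
import math

# Assumption: spherical Earth, mean radius in kilometres.
EARTH_RADIUS_KM = 6371.0


def cap_area_km2(lat_deg, radius_km=EARTH_RADIUS_KM):
    """Area of the spherical cap poleward of latitude lat_deg (degrees north)."""
    return 2.0 * math.pi * radius_km ** 2 * (1.0 - math.sin(math.radians(lat_deg)))


# Region covered by the 'plus 80 North' graph, under the assumptions above.
area_north_of_80 = cap_area_km2(80.0)

# Dybkjaer's round figure: roughly 100 in situ temperature observations.
area_per_observation = area_north_of_80 / 100

print(f"Area north of 80N: {area_north_of_80:,.0f} km^2")
print(f"Area per observation: {area_per_observation:,.0f} km^2")
```

Under these assumptions the cap comes to a little under 4 million square kilometres, so each of the 100 observations would stand in for tens of thousands of square kilometres. Note that the buoys and rim stations he counts are spread across the whole Arctic, not just the cap, so the real coverage north of 80°N is presumably thinner still.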

I found Dybkjær’s response helpful and also confusing. Below are follow-up questions I sent him paired with his responses:

Hi Gorm,

Thank you for your response.

I think I am understanding you somewhat and have a few follow-up questions:

1. Does DMI’s ‘mean plus 80N temperature’-graph use measurements from airplanes?

All available observations, including measurements from airplanes, are used by the models to calculate the best guess of the atmospheric condition. This ‘best guess’ (or ‘analysis’) is calculated 4 times per day of which the 00z and 12z are the basis for the ‘plus 80North’ temperature graph. I must recommend you to contact “European Centre for Medium-Range Weather Forecasts” (ecmwf.int) for details on the amount of observation they use for any of their model analysis.

2. Do you use measurements from satellites?

Dybkjær: A huge amount of satellite data are also used to produce the ‘best guess’… (see above)

3. Do you use measurements from balloons?

see above

4. Does the number of data-sources change on a daily basis?

Yes – but I do not believe this has a significant effect on the day to day quality. Contact the ECMWF!

5. Do you adjust for this?

No

6. If you do use the sources listed in 1-3, who provides you with the data?

At DMI we get most of our ground-based measurements through the WMO – satellite data we either retrieve ourselves or get through various data networks. I guess the same is the case at ECMWF, who run the models used for the temperature graph we are talking about here – so for more details on this please contact ECMWF.

7. Some of the spikes in the record look extraordinarily sharp, and I had previously understood such moments to be cases where sub-polar air overran the Arctic basin. But I wonder if, to some extent, they represent the model over-reacting to a single spike in data from just a few sources? For instance, when I eyeball the temperatures around the Arctic basin, they don’t in every case appear to correspond to the spikes on your graph?

I believe – in general terms – that the spikes of the graph are realistic, but to discuss this further we have to look at specific cases. As I mentioned in an earlier mail, the ‘plus 80 North mean temperature’-values are the mean of all model grid points in a regular 0.5 degree grid – meaning that along each half degree parallel North of 82N, you have 720 temperature values! That means that the ‘plus 80 North mean temperature’ is strongly biased towards the temperatures in the very central Arctic and therefore less affected by temperatures along the rim of the Arctic Ocean. Therefore – you can use all the plotted ‘plus 80 North mean temperature’ graphs to compare one year to another or to the climate line, but you should NOT compare the mean temperatures to a specific temperature measurement.

8. The word model is still confounding here: Basically, the graph represents initial conditions for you to run the model predictions. But the initial conditions are not generated by the model. They are generated by you and the staff at DMI, correct?

The initial conditions are generated by the model using state-of-the-art atmosphere physical knowledge.
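Dybkjær's answer to question 7 is worth a small illustration. On a regular 0.5 degree grid, every parallel carries the same 720 points (360 / 0.5) no matter how short that parallel is, so a plain average over grid points gives the small circles near the pole as much say as the much longer circle at 80°N. The sketch below compares that plain average with an area-weighted one (each parallel weighted by the cosine of its latitude). The temperature field is entirely made up, falling linearly from 270 K at 80°N to 260 K at the pole, and the function names are hypothetical; this is not DMI's or ECMWF's code.

```python
import math


def grid_latitudes(lat_min=80.0, lat_max=90.0, step=0.5):
    """Latitudes of the parallels on a regular grid, both ends included."""
    n = int(round((lat_max - lat_min) / step))
    return [lat_min + i * step for i in range(n + 1)]


def synthetic_temperature(lat):
    """Made-up field for illustration: 270 K at 80N, 1 K colder per degree north."""
    return 270.0 - (lat - 80.0)


def unweighted_mean(lats, points_per_parallel=720):
    """Plain mean over all grid points; every parallel contributes 720 values."""
    total = sum(synthetic_temperature(lat) * points_per_parallel for lat in lats)
    return total / (points_per_parallel * len(lats))


def area_weighted_mean(lats):
    """Mean with each parallel weighted by cos(latitude), a proxy for its area."""
    weights = [math.cos(math.radians(lat)) for lat in lats]
    weighted = sum(w * synthetic_temperature(lat) for w, lat in zip(weights, lats))
    return weighted / sum(weights)


lats = grid_latitudes()
plain = unweighted_mean(lats)
weighted = area_weighted_mean(lats)

print(f"Unweighted grid-point mean: {plain:.2f} K")
print(f"Area-weighted mean:         {weighted:.2f} K")
```

With this invented field the plain grid-point mean comes out colder than the area-weighted one, because the cold central points are over-represented. That is the bias toward the central Arctic he describes, and it is why the graph is better suited to year-to-year comparison than to comparison with any single station.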

Although our interchange left some questions unanswered, I had learned what I wanted to by this point: DMI’s data for the topmost portion of the globe, north of 80 degrees latitude, while a hodge-podge, and plagued with its own set of issues, was far, far more reality-based than the Arctic data published by NASA/GISS and, thus, the lesser of two evils. I will be in the Sierras and away from my computer for the next 10 days.

=================================================

About Harold Ambler

I was obsessed with weather and climate as a young boy and have studied both ever since. I have English degrees from Dartmouth and Columbia and got my start in journalism at The New Yorker magazine, where I worked from 1993 to 1999. My work has appeared in The Wall Street Journal, The Huffington Post, The Atlantic Monthly online, Watts Up With That?, The Providence Journal, Rhode Island Monthly, Brown Alumni Monthly, and other publications.

Visit Harold’s website: Talking About the Weather

And hit the tip jar if so inclined. -Anthony

Name of book, please.

And I’d like to salute your courage for the post at the Puffington Host.

================

At last DMI is recognized. About time…..

And THIS is why this is the #1 science blog….

1200 KM smoothing vs. actual Arctic temperature measurements. It is unfortunate that our tax dollars fund GISS and NOAA when a published metric for the Arctic is superior.

Well said, MattN!

Huh? Using real observed temps in the Arctic to determine the temps? Unheard of!! Do you think if we asked pretty please, Mr. Dybkjær could share this new found methodology with Hansen? I think he’s on to something!

Since Big Oil pumps mega millions into the sceptics activities, the real warmists are stuck with no funding. They must resort to arm chair science. It is warm inside and cold up there. Nobody would want to actually go to the arctic. It is cold and dangerous. We have models that work very well.

After all this WUWT Northern Exposure, I wonder if DMI is “hotting”?

Has anyone else noticed that, while there is great variability throughout the data range, the ‘actual’ measurement almost always seems to intersect with the average curve on the day when the average crosses 273.15? It seems to do this in 6 out of 7 years.

Why would NASA/GISS want real data that removes their justification for claiming that this is the warmest day/month/year/decade since the last ice age? As a US taxpayer, I am wondering if anyone from the Congress or the Executive branch will ever call for open, accurate collection and reporting of weather and climate information.

This is fantastic and so nice to finally know how things are actually recorded. So why do we pay tax dollars to NOAA to give us estimated biased results, when the DMI can give us more accurate information??

A significant question remains: If a “missing M” (metadata) is found where a -20.0 C is read as a +20.0 C, who can catch the error, if anyone at all, and is that the cause of the rougher winter and fall temp curves?

MattN says:

July 28, 2010 at 6:27 pm

And THIS is why this is the #1 science blog….

___________________________________________

I will second that. The education you get at this site is amazing.

i apologize for the off topic comment but i thought you might find this interesting…

http://www.american.com/archive/2010/july/science-turns-authoritarian

Winter has arrived

GISS paints the Arctic blood red

But the snow falls white

Can we compare how well the GISS interpolation method agrees with the DMI data?

It seems to me that it could possibly falsify the claims that they can accurately interpolate over 1200 km.

Excellent sleuthwork, Harold.

Note the DMI official’s comments, more than once, about DMI’s specific reliance on the ECMWF, which it seems, many meteorologists hint, is the most superior general circulation model in the world.

Figures DMI is so realistic. They rely on a GCM whose head is usually not in the clouds…plus more direct observations.

They seem to be more on track than our own GISS-based “GI**.”

But that’s not saying much. Everybody is more on track than GI**.

Chris

Norfolk, VA, USA

Sounds like a good process, despite the lack of data. Of course, with 100 measurements for the region, it’s probably a higher density than most areas covered by GISS worldwide.

I wonder if an observation-coupled model has ever been tried world-wide? I’d be curious how it differed from the major temperature indices……

Can we get DMI to do the rest of the globe?

kim says:

July 28, 2010 at 6:07 pm

Name of book, please.

Book is called Don’t Sell Your Coat and should be out on Kindle by October.

Some of the spikes in the record look extraordinarily sharp…..

The spikes are normal. They are from eddies. Richard Lindzen talks a little about that in this video

It is a relief to know they are trying to use as much data as they can unlike GISS and some others that are trying to use as little data as they can.

Ed Caryl says:

July 28, 2010 at 7:59 pm

Better yet, can we fire Hansen GISS and hire DMI?

The Cheshire Sun grins;

The oceans oscillate cool.

Don’t sell your coat, please.

===============

Geez… using observations? How novel… 😉