by Roy W. Spencer, Ph. D.

INTRODUCTION

My last few posts have described a new method for quantifying the average Urban Heat Island (UHI) warming effect as a function of population density, using thousands of pairs of temperature measuring stations within 150 km of each other. The results supported previous work which had shown that UHI warming increases logarithmically with population, with the greatest rate of warming occurring at the lowest population densities as population density increases.

But how does this help us determine whether global warming trends have been spuriously inflated by such effects remaining in the leading surface temperature datasets, like those produced by Phil Jones (CRU) and Jim Hansen (NASA/GISS)?

While my quantifying the UHI effect is an interesting exercise, the existence of such an effect spatially (with distance between stations) does not necessarily prove that there has been a spurious warming in the thermometer measurements at those stations over time. The reason why it doesn’t is that, to the extent that the population density of each thermometer site does not change over time, then various levels of UHI contamination at different thermometer sites would probably have little influence on long-term temperature trends. Urbanized locations would indeed be warmer on average, but “global warming” would affect them in about the same way as the more rural locations.

This hypothetical situation seems unlikely, though, since population does indeed increase over time. If we had sufficient truly-rural stations to rely on, we could just throw all the other UHI-contaminated data away. Unfortunately, there are very few long-term records from thermometers that have not experienced some sort of change in their exposure…usually the addition of manmade structures and surfaces that lead to spurious warming.

Thus, we are forced to use data from sites with at least some level of UHI contamination. So the question becomes, how does one adjust for such effects?

As the provider of the officially-blessed GHCN temperature dataset that both Hansen and Jones depend upon, NOAA has chosen a rather painstaking approach where the long-term temperature records from individual thermometer sites have undergone homogeneity “corrections” to their data, mainly based upon (presumably spurious) abrupt temperature changes over time. The coming and going of some stations over the years further complicates the construction of temperature records back 100 years or more.

All of these problems (among others) have led to a hodgepodge of complex adjustments.

A SIMPLER TECHNIQUE TO LOOK FOR SPURIOUS WARMING

I like simplicity of analysis, whenever possible anyway. Complexity in data analysis should only be added when it is required to elucidate something that is not obvious from a simpler analysis. And it turns out that a simple analysis of publicly available raw (not adjusted) temperature data from NOAA/NCDC, combined with high-resolution population density data for those temperature monitoring sites, shows clear evidence of UHI warming contaminating the GHCN data for the United States.

I will restrict the analysis to 1973 and later since (1) this is the primary period of warming allegedly due to anthropogenic greenhouse gas emissions; (2) the period having the largest number of monitoring sites has been since 1973; and (3) a relatively short 37-year record maximizes the number of continuously operating stations, avoiding the need to handle transitions as older stations stop operating and newer ones are added.

Similar to my previous posts, for each U.S. station I average together four temperature measurements per day (00, 06, 12, and 18 UTC) to get a daily average temperature (GHCN uses daily max/min data). There must be at least 20 days of such data for a monthly average to be computed. I then include only those stations having at least 90% complete monthly data from 1973 through 2009. Annual cycles in temperature and anomalies are computed from each station separately.
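The screening steps in the paragraph above can be sketched roughly as follows. This is only an illustrative sketch, not the code behind these results: the function names are hypothetical, and requiring all four synoptic readings before a daily mean is computed is an assumption that the text does not state.

```python
from statistics import mean

SYNOPTIC_HOURS = (0, 6, 12, 18)  # UTC observation times named in the post


def daily_mean(obs_by_hour):
    """Average the four synoptic readings for one day.

    Assumption: a day counts only if all four readings are present.
    """
    if any(obs_by_hour.get(h) is None for h in SYNOPTIC_HOURS):
        return None
    return mean(obs_by_hour[h] for h in SYNOPTIC_HOURS)


def monthly_mean(daily_values, min_days=20):
    """Average the available daily means; None if fewer than min_days exist."""
    valid = [v for v in daily_values if v is not None]
    if len(valid) < min_days:
        return None
    return mean(valid)


def is_complete_enough(monthly_values, min_fraction=0.90):
    """True if at least min_fraction of the months have a value."""
    if not monthly_values:
        return False
    have = sum(1 for v in monthly_values if v is not None)
    return have / len(monthly_values) >= min_fraction
```

For the 1973 through 2009 record there are 444 possible months, so under these assumptions a station would need at least 400 valid monthly averages to pass the 90% test.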

I then compute multi-station average anomalies in 5×5 deg. latitude/longitude boxes, and then compare the temperature trends for the represented regions to those in the CRUTem3 (Phil Jones’) dataset for the same regions. But to determine whether the CRUTem3 dataset has any spurious trends, I further divide my averages into 4 population density classes: 0 to 25; 25 to 100; 100 to 400; and greater than 400 persons per sq. km. The population density data is at a nominal 1 km resolution, available for 1990 and 2000…I use the 2000 data.
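A minimal sketch of the grouping just described might look like the code below. The names are hypothetical, the grid boxes are assumed to be aligned on multiples of 5 degrees, and the treatment of stations sitting exactly on a class boundary (25, 100, or 400 persons per sq. km) is an assumption, since the text does not say which class they fall into.

```python
import math
from collections import defaultdict

# Upper bounds of the first three classes, in persons per sq. km (from the post).
CLASS_UPPER_BOUNDS = (25.0, 100.0, 400.0)


def population_class(density):
    """Return 1..4; class 4 is 'greater than 400'.

    Assumption: a boundary value belongs to the lower class.
    """
    for index, upper in enumerate(CLASS_UPPER_BOUNDS, start=1):
        if density <= upper:
            return index
    return 4


def grid_box(lat, lon, size=5.0):
    """Southwest corner of the size-degree box containing the point."""
    return (math.floor(lat / size) * size, math.floor(lon / size) * size)


def box_class_means(stations):
    """Average anomalies over stations sharing a grid box and density class.

    stations: iterable of (lat, lon, density, anomaly) tuples.
    """
    groups = defaultdict(list)
    for lat, lon, density, anomaly in stations:
        groups[(grid_box(lat, lon), population_class(density))].append(anomaly)
    return {key: sum(vals) / len(vals) for key, vals in groups.items()}
```

A grid box would then be kept only if all four classes appear among its keys, which is the restriction that limits the number of usable boxes.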

All of these restrictions then result in 24 to 26 5-deg grid boxes over the U.S. having all population classes represented over the 37-year period of record. In comparison, the entire U.S. covers about 40 grid boxes in the CRUTem3 dataset. While the following results are therefore for a regional subset (at least 60%) of the U.S., we will see that the CRUTem3 temperature variations for the entire U.S. do not change substantially when all 40 grids are included in the CRUTem3 averaging.

EVIDENCE OF A LARGE SPURIOUS WARMING TREND IN THE U.S. GHCN DATA

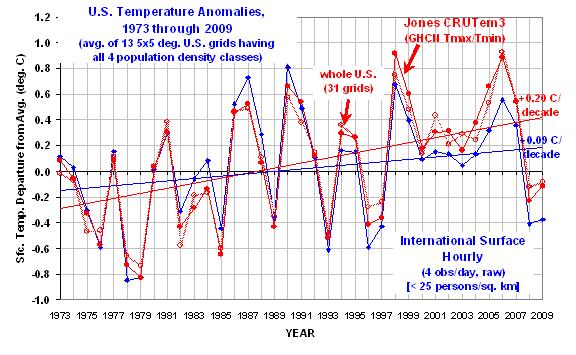

The following chart shows yearly area-averaged temperature anomalies from 1973 through 2009 for the 24 to 26 5-deg. grid squares over the U.S. having all four population classes represented (as well as a CRUTem3 average temperature measurement). All anomalies have been recomputed relative to the 30-year period, 1973-2002.
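Recomputing anomalies relative to a common base period amounts to subtracting each series' own 1973-2002 mean. A small sketch, with a hypothetical function name and a yearly series stored as a dict keyed by year:

```python
def rebaseline(anomalies_by_year, start=1973, end=2002):
    """Shift a yearly series so its mean over start..end (inclusive) is zero."""
    base = [v for year, v in anomalies_by_year.items() if start <= year <= end]
    if not base:
        raise ValueError("no data inside the base period")
    offset = sum(base) / len(base)
    return {year: v - offset for year, v in anomalies_by_year.items()}
```

Doing this to both series before plotting puts them on the same footing, so differences in the later years reflect differences in trend rather than differences in reference level.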

The heavy red line is from the CRUTem3 dataset, and so might be considered one of the “official” estimates. The heavy blue curve is the lowest population class. (The other 3 population classes clutter the figure too much to show, but we will soon see those results in a more useful form.)

Significantly, the warming trend in the lowest population class is only 47% of the CRUTem3 trend, a factor of two difference.
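The trend comparison can be reproduced with an ordinary least-squares slope on each yearly series. The sketch below assumes simple OLS on yearly values (the post does not state how its trends were fitted), and the function names are hypothetical.

```python
def linear_trend(years, values):
    """Ordinary least-squares slope of values against years (units per year)."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    return sxy / sxx


def trend_ratio(years, series, reference):
    """Trend of series expressed as a fraction of the reference trend."""
    return linear_trend(years, series) / linear_trend(years, reference)
```

A ratio of 0.47 from `trend_ratio` would correspond to the 47% figure quoted above, with the lowest population class as `series` and CRUTem3 as `reference`.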

Also interesting is that in the CRUTem3 data, 1998 and 2006 would be the two warmest years during this period of record. But in the lowest population class data, the two warmest years are 1987 and 1990. When the CRUTem3 data for the whole U.S. are analyzed (the lighter red line), the two warmest years are swapped: 2006 is first and 1998 second.

From looking at the warmest years in the CRUTem3 data, one gets the impression that each new high-temperature year supersedes the previous one in intensity. But the low-population stations show just the opposite: the intensity of the warmest years is actually decreasing over time.

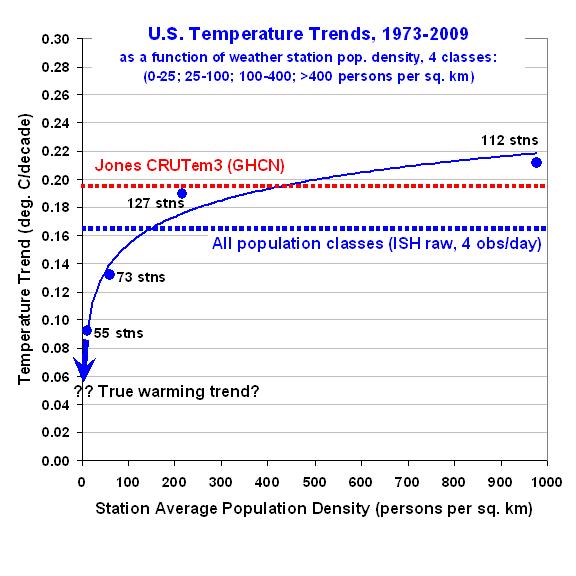

To get a better idea of how the calculated warming trend depends upon population density for all 4 classes, the following graph shows (just like the spatial UHI effect on temperatures I have previously reported on) that the warming trend goes down nonlinearly as the population density of the stations decreases. In fact, extrapolation of these results to zero population density might produce little warming at all!
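One way to make the extrapolation to zero population density concrete is to fit the class trends against the logarithm of density. The sketch below assumes a model of the form trend = a + b·ln(1 + density), which is an illustrative choice and not necessarily the curve drawn in the graph; the representative density assigned to each class is also an assumption. With this form, the intercept a is the fitted trend at zero density.

```python
import math


def fit_log_density(densities, trends):
    """Least-squares fit of trend = a + b * ln(1 + density).

    Returns (a, b); a is the extrapolated trend at zero population density.
    """
    xs = [math.log1p(d) for d in densities]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(trends) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, trends))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope
```

With only four class averages the fit has just two degrees of freedom, so the extrapolated intercept should be read as suggestive rather than precise.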

This is a very significant result. It suggests the possibility that there has been essentially no warming in the U.S. since the 1970s.

Also, note that the highest population class actually exhibits slightly more warming than that seen in the CRUTem3 dataset. This provides additional confidence that the effects demonstrated here are real.

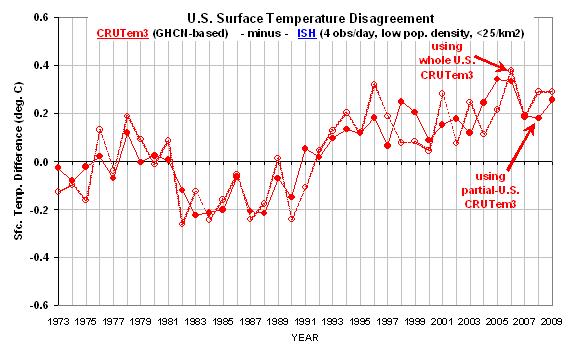

Finally, the next graph shows the difference between the CRUTem3 results and the lowest population density class results seen in the first graph above. This provides a better idea of which years contribute to the large difference in warming trends.

Taken together, I believe these results provide powerful and direct evidence that the GHCN data still has a substantial spurious warming component, at least for the period (since 1973) and region (U.S.) addressed here.

There is a clear need for new, independent analyses of the global temperature data…the raw data, that is. As I have mentioned before, we need independent groups doing new and independent global temperature analyses — not international committees of Nobel laureates passing down opinions on tablets of stone.

But, as always, the analysis presented above is meant more for stimulating thought and discussion, and does not equal a peer-reviewed paper. Caveat emptor.

Asking some more obvious questions: Dr. Spencer:

How long do you believe that CONUS won’t show the heating evidenced across the planet?

And to keep from being too US centric, what about our neighbors to the north? Is there also only spurious warming in Canada? Or for that matter, Alaska? Or Mexico? Or even the Western CONUS?

I guess I just don’t understand the significance of this work.

“OT. I do not understand statistics. But there’s a commentator (VS) who is leaving Tamino crazy. He even forgot you Anthony.”

Tamino’s fury and the technical complexity of his posts are in direct proportion to the weakness of his case. He basically invokes all this irrelevant technicality in order to try to bludgeon his less informed readers into belief. It’s very funny stuff.

For another classic example, go through his posts on PCA. All is fine until the last, when he tries to justify using the mean of a subset of the series instead of the mean of the series. He does not explain why, if this method is so good, it’s not generally used in, for instance, medicine.

It would be funny were it not sad.

Dr Spencer,

Thank you for posting on Anthony’s WUWT.

Congratulations on writing [once again] science in clear language.

Your article provides great leverage for a cultural shift about how science can do its business.

Anthony – Thank you for providing a vehicle for transmitting the antidote against compromised science/scientists that form the root of the CAGW agenda.

John

NickB. (12:33:26) :

Wren (09:52:04) :

As I recall, average global temperature starts rising sharply after the 1970’s. So if increases in population density drive increases in temperature, why is there a lag?

Hey, nice post!

I think there are a number of corollaries for UHI (some which are corollaries for CO2, strangely enough) that should imply that the per capita UHI effect should also change around that time. Take, for example, number of cars on the road. For the life of me I cannot find a good source of historical information on the number of cars globally, but I would propose that starting after WWII this is at best a linear growth rate if not mildly exponential. There is also a point in time somewhere (probably related to economic/GDP growth) where a given country starts moving towards cement or asphalt roads vs. dirt/gravel for an “average” road. Air travel, as has been mentioned here before, really started to take off in the 60’s. Air conditioning and centralized HVAC adoption has had exponential adoption starting in the 60’s I think. Cement/masonry building construction in areas where it was wood prior definitely another post World War II trend.

Were it not for the political/economic consequences of attribution of causation, the chicken-egg relationship here would almost be humorous. Cement production, for example, is a significant driver of CO2, but it’s also a significant driver of UHI. How much of its temperature impact is really one vs. the other?

====

True, it’s kind of hard to completely separate the effects of CO2 and UHI on temperature increases.

I’m not aware of a series on world motor vehicle registrations. A proxy of sorts might be annual global crude oil production (1960-2008) available from DOE.

http://www.eia.doe.gov/aer/txt/ptb1105.html

However, I don’t know if this series is a reliable indicator of trend.

michel (20:35:03) :

DirkH (12:19:12) :

NickB. (08:42:12) :

paulo arruda (07:56:49):

I went VS-Comment searching at your suggestion. I learned some ideas of statistics in short order. He/She comes across as an objective observer.

Yes, VS is providing Tamino-san [I am in Japan today, so going with the -san honorific] with some challenges.

VS-san, where are you?

John

I have to pretty much agree with VS that dhogaza is actually Tamino/Foster.

If you subtract all the dhogaza posts from that thread, and the VS responses, there are not many other posts left.

Since “tamino” is silent, it could well be that “dhogaza” is tamino’s sock puppet.

That’s the hypothesis. Falsify it, if anyone can.

Yeah this is a great read. It makes more sense than many I’ve read.

Dr. Spencer don’t bother responding to “Paul K2”.

He’s not interested in trying to understand anything or learn anything new.

kwik (10:54:37) :

David44 (09:44:36) :

Here is a 6th grader looking at some rural stations;

Did I hear Nobel Prize for kids?

=====

The findings conflict with an article by Long previously featured on this site. Perhaps picking different stations gives different results.

http://wattsupwiththat.com/2010/02/26/a-new-paper-comparing-ncdc-rural-and-urban-us-surface-temperature-data/#m

TERRY BEAR (17:52:58) :

GREAT WORK DOC!

This research plus what Dr. Jones admitted in his most recent BBC interview:

NO statistically significant warming from 1995-2010 while CO2 increased by 30+%. In my book that is enough to reject the NULL….

======

Type II Error ?

Why station drops matter – Creation of a warming trend from 3 easy pieces:

http://boballab.wordpress.com/2010/03/16/costa-rican-warming-a-step-artifact/

A very nice example of merging 3 fairly flat stations in one grid box in Costa Rica and “finding warming” as a result.

I think it’s becoming pretty clear that “The Splice” of rural to urban, of high altitude to low altitude, etc. is the real cause of “global warming” in the anomaly products…

The population density data is at a nominal 1 km resolution, available for 1990 and 2000…I use the 2000 data.

Which naturally leads to a question about comparing 1990 data to 2000 data.

Paul K2 (19:33:28) : And to keep from being too US centric, what about our neighbors to the north? Is there also only spurious warming in Canada? Or for that matter, Alaska? Or Mexico?

Well, Canada is substantially flat from 1930 until a “Splice” in 1990 or so glues together two different “Duplicate Number” series (to use the NCDC term – I thought they were called “Mod Flags” in GIStemp):

http://chiefio.files.wordpress.com/2010/03/canada_hair_1880.png

So if there is any “global warming” in Canada, it all showed up as a step function in 1990.

(BTW, that graph is “all anomalies all the time”. And every anomaly is only “self to self” in that each thermometer series is compared only to itself. The purest kind of anomaly possible. Computed month over same month, then averaged for a year, so seasonal dropouts don’t impact it.)

You can see some examples of “The Splice” for specific stations here:

http://chiefio.wordpress.com/2010/02/25/canadian-concatenation-conundrum/

For Mexico, we have a more interesting case. It took a big cooling in the beginning (but hasn’t recovered yet so is still net cooler) and then was basically flat, up until The Splice. But the splice happens in two pieces, one in about 1980, another in 1990 (where you see the volatility of the monthly anomaly ranges go to zero… a very odd splice indeed…)

http://chiefio.files.wordpress.com/2010/03/mexico_hair.png

Don’t have a graph for Alaska yet, but here is a nice little Caribbean Island that is dead flat (so was Bermuda, until it was cut off in 1995) :

http://chiefio.files.wordpress.com/2010/03/stpierremiquelon_hair.png

(Anyone wanting an explanation of the details on those graphs can hit the “north american” link above – that I lifted them from, and where they are explained – or just leave me a note over at my place…)

If that Island isn’t enough for you, here are a couple of other places not warming either. One very warm, the other very cold:

http://chiefio.wordpress.com/2010/03/15/didnt-get-the-memo-korea-french-polynesia/

If that’s not enough, I’ve identified dozens more. Don’t have graphs done for all of them yet (just tabular reports), but I presume you can read tabular reports… and I’ll make graphs out of them all eventually. (I do have a graph for Germany, but I’m kind of pushing it on links in one comment, so to see it you will need to look around at my place in the right margin.)

The world is divided into Hockey Stick countries (usually pivoting in 1990, sometimes 1980) and Step Function countries (where things go jump / flat / jump / flat as in Japan) and FLAT countries. These get averaged together to “create global warming”…

http://chiefio.wordpress.com/2010/03/01/japan-poster-child-for-the-smith-effect/

AGW is just an artifact of “over artful” data splicing and screwing around with the instrumentation while the experiment is in progress.

Paul K2 (19:24:47) : … the UAH record is confirming the GISS record and near-record highs set in the last five years. …

Isn’t this the important message?

Yes, it certainly is. Since GISS is horridly broken, it is a very important message that there is something very wrong with the UAH record.

Dr Spencer – I suggest you look at maximum and minimum temperatures, separately.

I am slowly working through the Australian BOM database.

I am finding that individual locations have enduring linear temperature trends, some rising quite rapidly, others flat or even falling slightly.

But the Max and Min charts are quite surprising.

In some, max rises faster than Min, in others, the reverse.

In some, either Max or Min is flat while the other rises sharply.

The key things are enduring multi, multi, decade long linear trends and divergence between Max and Min.

So far, I have found little evidence of a post 1975 acceleration and no sign of the very pronounced 65 year zigzag cycle, so obvious in global NCDC data.

I will report in more detail eventually, but I am very slow.

E.M.Smith (23:48:15) :

Paul K2 (19:24:47) : … the UAH record is confirming the GISS record and near-record highs set in the last five years. …

Isn’t this the important message?

Yes, it certainly is. Since GISS is horridly broken, it is a very important message that there is something very wrong with the UAH record

Last June/July I remember wondering how long it would be before posters on here started to question the satellite record in the event of a decent El Nino. The answer seems to be about 6 months.

“Bear in mind that surface temp records in use today (NOAA USHCN, GISS, CRU) only use two data points (max/min) to create a mean temperature. -A”

Maybe, but I’m just criticizing this curve. 4 points, to try to get a trend feels like not enough. And the fact it validates what’s expected is the exact reason you’d need something more precise.

Imagine if the last one is just an error and should be 0.1 higher ? Or 0.05 less ? Your logarithm would be changed a lot.

Wren (09:52:04) : Cement production, for example, is a significant driver of CO2, but it’s also a significant driver of UHI. How much of its temperature impact is really one vs. the other?

What’s the difference between a few days and 100+ years?

Steve Keohane (07:28:14) :

Wren (09:52:04) : Cement production, for example, is a significant driver of CO2, but it’s also a significant driver of UHI. How much of its temperature impact is really one vs. the other?

What’s the difference between a few days and 100+ years?

——-

That was NickB’s comment. I know little about cement production.

“Peerless.”

Arkh (05:39:31) :

“Bear in mind that surface temp records in use today (NOAA USHCN, GISS, CRU) only use two data points (max/min) to create a mean temperature. -A”

Maybe, but I’m just criticizing this curve. 4 points, to try to get a trend feels like not enough. And the fact it validates what’s expected is the exact reason you’d need something more precise.

Imagine if the last one is just an error and should be 0.1 higher ? Or 0.05 less ? Your logarithm would be changed a lot.

I see your point now. When you originally posted it looked like your concern was Dr. Spencer’s 4 daily temp averaging method vs. max/min – which I have commented on as well.

I think the critique on the second graph (i.e. only 4 points to derive the curve) is well taken, but keep in mind that the underlying data uses (per the graph) 367 stations categorized into the 4 population categories, and these groups of stations (55 in Group 1, 73 in Group 2, 127 in Group 3, and 112 in Group 4) are what then create the 4 data points in question.

This could be an oversimplification, but I would caution against thinking that every single station should fit exactly on the curve – a (significant?) amount of noise on a station by station basis should be demonstrated due to microclimate influences – the important part here is the general underlying relationship. Having all 367 stations individually plotted should allow us to generate a similar curve, but it might not be quite as clean and intuitive for presentation purposes.
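The sensitivity question raised above (what if the last point were 0.1 higher, or 0.05 lower?) can be checked directly by refitting with one point nudged. The sketch below assumes a least-squares fit of trend = a + b·ln(1 + density); that functional form, the function names, and the input numbers are all placeholders for illustration, not values read from the graph.

```python
import math


def fit_intercept_slope(xs, ys):
    """Ordinary least-squares intercept and slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def intercept_shift(densities, trends, index, delta):
    """Change in the zero-density intercept when trends[index] moves by delta."""
    xs = [math.log1p(d) for d in densities]
    base, _ = fit_intercept_slope(xs, trends)
    bumped = list(trends)
    bumped[index] += delta
    moved, _ = fit_intercept_slope(xs, bumped)
    return moved - base


# Placeholder inputs, NOT values read from the post's graph.
densities = [10.0, 50.0, 200.0, 1000.0]
trends = [0.10, 0.15, 0.20, 0.25]
print(intercept_shift(densities, trends, -1, +0.10))
print(intercept_shift(densities, trends, -1, -0.05))
```

Because the fit is linear in the trend values, the shift in the intercept scales in direct proportion to the size of the nudge, so a single run is enough to see how much leverage the end point has.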

And probably a 1000-fold increase in air freight, which is something not captured by passenger-related statistics.

5-deg x 5-deg boxes seem awfully big. I would think most would exhibit a high degree of spatial heterogeneity in the population density. (Apologies if this has already been mentioned. Haven’t read through all the comments yet.)

Steve Keohane (07:28:14) :

What’s the difference between a few days and 100+ years?

Wren was quoting me in his reply, so I hope I’m not speaking out of turn for responding… but is the “few days” a ref to CO2? The IPCC claims a persistence of ~100 years for CO2. I don’t agree with that necessarily, but I’m not sure if it can really be proven or disproven.

This might be the worst possible example, but this is really just a thought experiment, so let’s say you want to put in a 500 sq. ft. concrete patio. This will require ~6 cubic yards of concrete with a “carbon footprint” of 537 lbs/yard (ref), for a total of 3,222 lbs of CO2.

Let’s say half of that CO2 stays in the atmosphere after a year and that portion is essentially permanent (ref). We’re talking an effective permanent atmospheric footprint for said patio of 1,611 lbs, or 0.73 metric tons. I think this is a fair back-of-the-envelope assessment, but again, just doing a thought experiment here.
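For anyone who wants to check the back-of-the-envelope numbers, the arithmetic is short enough to write out. The inputs are the figures quoted in this comment (6 cubic yards, 537 lbs per yard, half retained); the only added constant is the pounds-to-metric-ton conversion, and the variable names are just for illustration.

```python
LB_PER_METRIC_TON = 2204.62  # pounds in one metric ton

cubic_yards = 6            # concrete for the patio (figure from the comment)
lbs_co2_per_yard = 537     # quoted "carbon footprint" per cubic yard
retained_fraction = 0.5    # assumed share remaining in the atmosphere

total_lbs = cubic_yards * lbs_co2_per_yard
retained_lbs = total_lbs * retained_fraction
retained_tons = retained_lbs / LB_PER_METRIC_TON

print(total_lbs, retained_lbs, round(retained_tons, 2))
```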

Now this is when the wheels start to come off. For a straight albedo analysis (the usual standard for climate land use analysis) “traditional” concrete ranges from an albedo of .2 (old) to .5 (new) – ref. Compare that to the albedo of the well manicured lawn it replaces (estimated somewhere between meadow and savanna – ref) that should have an albedo somewhere between .1 and .2. I think what this means is that per a normal “climate” analysis, your concrete patio should be cooler than your lawn. Put another way, standing in the middle of a concrete parking lot you should be cooler than standing in the middle of your lawn!

I’m not sure if I’m the only one who finds that counterintuitive… so I went digging for another way to quantify the thermal effects of surfaces and came across a measure called SRI which is a derivation of albedo and emittance (“also known as emissivity of a surface, is a measure of how well a surface emits or releases heat”). Trouble is, it’s relatively easy to find emittance and SRI measures for manmade surfaces, but the natural surfaces they replace is a big question mark (especially considering that “roughness” of the surface in question seems to be an issue, and most natural surfaces are rough vs. smooth man made surfaces). I keep hitting paywalls when I try and dig any deeper on it.

So what can we conclude from this failed attempt to compare the heat island effect of concrete vs. its CO2 contribution? I think it’s safe to say that from a standard “climate” analysis of concrete, despite what common logic might tell you, replacing grass (or other natural surfaces for that matter) with concrete will have a cooling effect due to increased albedo.

I’m not sure if anyone else is still reading this thread but… hmmm… that’s a little odd don’t you think?

…An additional note:

On one of the prior threads someone commented about a common sense analysis of the albedo measurements and predictions, I think after someone (might have been me not sure) mentioned that land use was a net negative per the IPCC due to “cooling” caused by deforestation. Which is the logical follow-on to grassland/farmland having a higher albedo (10-25%) than forest (5-15%) – ref.

He said, again from a practical experience standpoint, that walking in a wooded area was cooler than walking out into a field. Now that could be due to you feeling cooler in the shade vs. when you have the sun beating on your head, but if you think about it from a surface temp standpoint (2m/6ft off the ground) I don’t think it’s *such* a stretch to imagine that a temperature station surrounded by trees would exhibit a lower avg. temp vs. one in the middle of a field (or for that matter, going back to my prior post, one in the middle of a concrete parking lot).

I need to go dig into some of Pielke Sr.’s research, I think this is his area… but for the life of me I cannot see how the albedo analysis that is used in mainstream (“consensus”) climate science makes sense here(?)

So I hate to exhibit posting diarrhea but I did a little more digging as to what the “consensus” opinion is on land use changes. No mention of the effects of cement, but an update regarding their view of replacing forests with grasslands/crops, which could then be extended to cement I guess. From Stephen Schneider’s page (yeah, that guy):

“…it is well known that clearing land raises albedo but lowers evapotranspiration. The first process cools the Earth’s surface, and the second warms it, but together they still cool the planet unless feedbacks negate that. This is why the role of land use change is still largely speculative — there are many interactions and feedbacks to be considered. Also, the dramatic rise in temperatures in the past thirty years would be much harder to explain using a land use change explanation than the overall rise over the past hundred years would be, since land use has not changed dramatically over the last few decades compared to century-long trends

…

[ Scale and importance of the effect:] Largely regional: net global climatic importance still speculative.”

http://stephenschneider.stanford.edu/Climate/Climate_Science/CliSciFrameset.html?http://stephenschneider.stanford.edu/Climate/Climate_Science/Science.html

It’s logical to conclude that replacing your lawn with cement would cut down on evapotranspiration, but does that really explain it?

Another thought – if you look at green roofs – allegedly they can cut down on building cooling loads by 50-90%. I’m not sure what that means from a W/m2 or C perspective. I’m not sure if that really all is due to evaporative effect (as claimed) vs. diffusion of a “rough” surface vs. differences in emissivity… but regardless, it does appear that mainstream/consensus analysis has not given appropriate attention to this issue.