New Work on the Recent Warming of Northern Hemispheric Land Areas

by Roy W. Spencer, Ph.D.

INTRODUCTION

Arguably the most important data used for documenting global warming are surface station observations of temperature, with some stations providing records back 100 years or more. By far the most complete data available are for Northern Hemisphere land areas; the Southern Hemisphere is chronically short of data since it is mostly oceans.

But few stations around the world have complete records extending back more than a century, and even some remote land areas are devoid of measurements. For these and other reasons, analysis of “global” temperatures has required some creative data massaging. Some of the necessary adjustments include: switching from one station to another as old stations are phased out and new ones come online; adjusting for station moves or changes in equipment types; and adjusting for the Urban Heat Island (UHI) effect. The last problem is particularly difficult since virtually all thermometer locations have experienced an increase in manmade structures replacing natural vegetation, which inevitably introduces a spurious warming trend over time of an unknown magnitude.

There has been a lot of criticism lately of the two most publicized surface temperature datasets: those from Phil Jones (CRU) and Jim Hansen (GISS). One summary of these criticisms can be found here. These two datasets are based upon station weather data included in the Global Historical Climatology Network (GHCN) database archived at NOAA’s National Climatic Data Center (NCDC), a reduced-volume and quality-controlled dataset officially blessed by your government for climate work.

One of the most disturbing changes over time in the GHCN database is a rapid decrease in the number of stations over the last 30 years or so, after a peak in station number around 1973. This is shown in the following plot which I pilfered from this blog.

Given all of the uncertainties raised about these data, there is increasing concern that the magnitude of observed ‘global warming’ might have been overstated.

TOWARD A NEW SATELLITE-BASED SURFACE TEMPERATURE DATASET

We have started working on a new land surface temperature retrieval method based upon the Aqua satellite AMSU window channels and “dirty-window” channels. These passive microwave estimates of land surface temperature, unlike our deep-layer temperature products, will be empirically calibrated with several years of global surface thermometer data.

The satellite has the benefit of providing global coverage nearly every day. The primary disadvantages are (1) the best (Aqua) satellite data have been available only since mid-2002; and (2) the retrieval of surface temperature requires an accurate adjustment for the variable microwave emissivity of various land surfaces. Our method will be calibrated once, with no time-dependent changes, using all satellite-surface station data matchups during 2003 through 2007. Using this method, if there is any spurious drift in the surface station temperatures over time (say due to urbanization) this will not cause a drift in the satellite measurements.

Despite the shortcomings, such a dataset should provide some interesting insights into the ability of the surface thermometer network to monitor global land temperature variations. (Sea surface temperatures are already accurately monitored with the Aqua satellite, using data from AMSR-E.)

THE INTERNATIONAL SURFACE HOURLY (ISH) DATASET

Our new satellite method requires hourly temperature data from surface stations to provide +/- 15 minute time matching between the station and the satellite observations. We are using the NOAA-merged International Surface Hourly (ISH) dataset for this purpose. While these data have not had the same level of climate quality tests the GHCN dataset has undergone, they include many more stations in recent years. And since I like to work from the original data, I can do my own quality control to see how my answers differ from the analyses performed by other groups using the GHCN data.
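The +/- 15 minute matching described above can be illustrated with a short Python sketch. This is not the code used for the satellite product; the function name `match_overpasses` and the choice to keep only the single nearest station report are assumptions made for illustration.

```python
from bisect import bisect_left
from datetime import datetime, timedelta

MAX_OFFSET = timedelta(minutes=15)  # matching window stated in the post


def match_overpasses(station_times, overpass_times, max_offset=MAX_OFFSET):
    """Pair each satellite overpass with the nearest station report.

    station_times must be sorted ascending. Overpasses with no station
    report within max_offset are dropped. Returns (overpass, report) pairs.
    """
    matches = []
    for overpass in overpass_times:
        i = bisect_left(station_times, overpass)
        # Only the reports just before and just after can be nearest.
        candidates = station_times[max(i - 1, 0):i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda t: abs(t - overpass))
        if abs(nearest - overpass) <= max_offset:
            matches.append((overpass, nearest))
    return matches
```

With hourly station reports, an overpass at 01:10 would pair with the 01:00 report, while an overpass at 02:40 would be discarded because the closest report is 20 minutes away.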

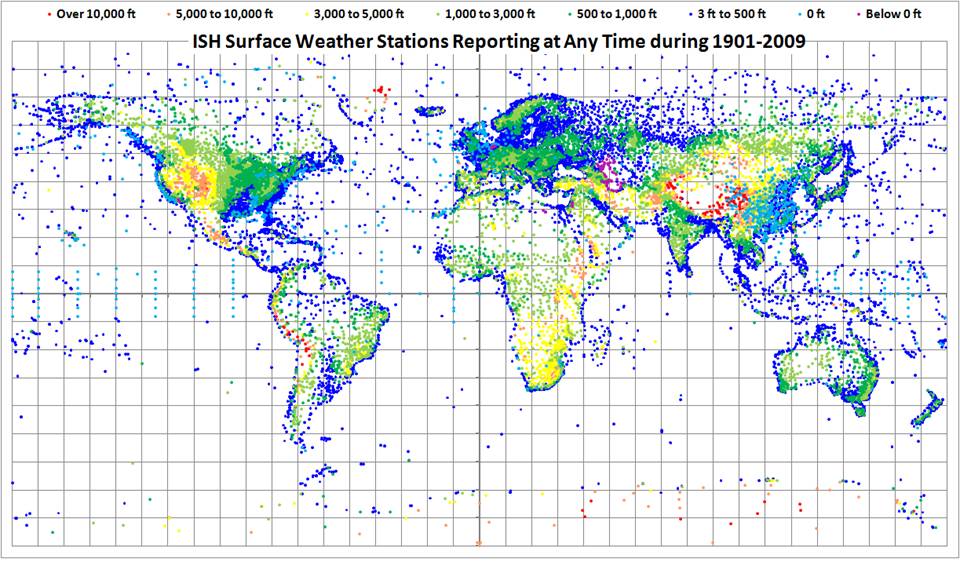

The ISH data include globally distributed surface weather stations since 1901, and are updated and archived at NCDC in near-real time. The data are available for free to .gov and .edu domains. (NOTE: You might get an error when you click on that link if you do not have free access. For instance, I cannot access the data from home.)

The following map shows all stations included in the ISH dataset. Note that many of these are no longer operating, so the current coverage is not nearly this complete. I have color-coded the stations by elevation (click on image for full version).

WARMING OF NORTHERN HEMISPHERIC LAND AREAS SINCE 1986

Since it is always good to immerse yourself in a dataset to get a feeling for its strengths and weaknesses, I decided I might as well do a Jones-style analysis of the Northern Hemisphere land area (where most of the stations are located). Jones’ version of this dataset, called “CRUTem3NH”, is available here.

I am used to analyzing large quantities of global satellite data, so writing a program to do the same with the surface station data was not that difficult. (I know it’s a little obscure and old-fashioned, but I always program in Fortran). I was particularly interested to see whether the ISH stations that have been available for the entire period of record would show a warming trend in recent years like that seen in the Jones dataset. Since the first graph (above) shows that the number of GHCN stations available has decreased rapidly in recent years, would a new analysis using the same number of stations throughout the record show the same level of warming?

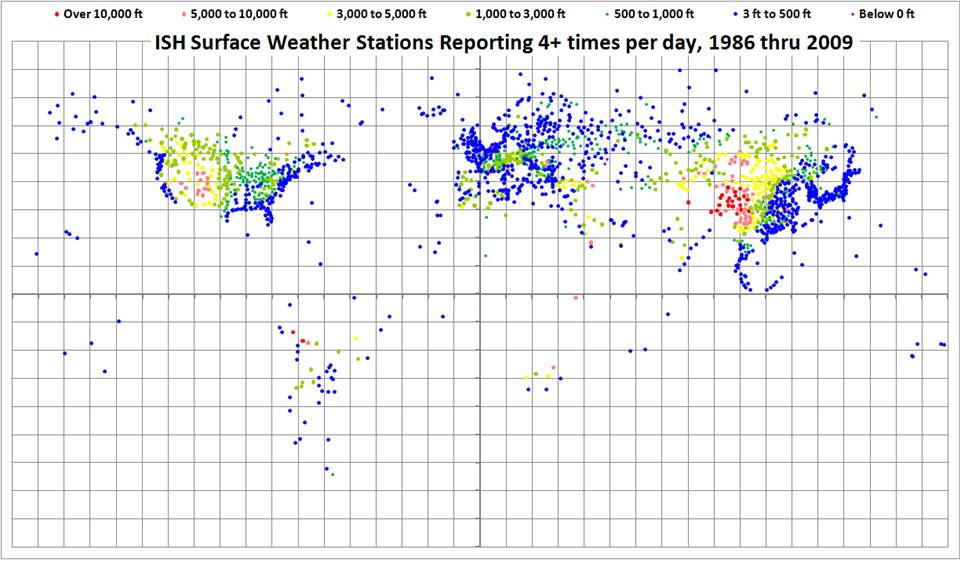

The ISH database is fairly large, organized in yearly files, and I have been downloading the most recent years first. So far, I have obtained data for the last 24 years, since 1986. The distribution of all stations providing fairly complete time coverage since 1986, having observations at least 4 times per day, is shown in the following map.

I computed daily average temperatures at each station from the observations at 00, 06, 12, and 18 UTC. For stations with at least 20 days of such averages per month, I then computed monthly averages throughout the 24 year period of record. I then computed an average annual cycle at each station separately, and then monthly anomalies (departures from the average annual cycle).
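The processing chain in the previous paragraph can be sketched in a few lines of Python. This is only an illustration of the steps as described, not the Fortran actually used; the function names and the input layout are hypothetical, and requiring all four synoptic observations for a valid day is an assumption of the sketch.

```python
from collections import defaultdict
from statistics import mean

SYNOPTIC_HOURS = (0, 6, 12, 18)  # UTC hours used for the daily average
MIN_DAYS_PER_MONTH = 20          # threshold stated in the post


def daily_means(obs):
    """obs: iterable of (year, month, day, hour_utc, temp) for one station.

    Returns {(year, month, day): daily mean}. Days missing any of the
    four synoptic hours are skipped (an assumption of this sketch).
    """
    by_day = defaultdict(dict)
    for year, month, day, hour, temp in obs:
        if hour in SYNOPTIC_HOURS:
            by_day[(year, month, day)][hour] = temp
    return {d: mean(h.values()) for d, h in by_day.items()
            if len(h) == len(SYNOPTIC_HOURS)}


def monthly_means(daily):
    """Average daily means by month, keeping months with enough days."""
    by_month = defaultdict(list)
    for (year, month, _day), temp in daily.items():
        by_month[(year, month)].append(temp)
    return {ym: mean(v) for ym, v in by_month.items()
            if len(v) >= MIN_DAYS_PER_MONTH}


def monthly_anomalies(monthly):
    """Subtract the station's own average annual cycle from each month."""
    by_calendar_month = defaultdict(list)
    for (_year, month), temp in monthly.items():
        by_calendar_month[month].append(temp)
    cycle = {m: mean(v) for m, v in by_calendar_month.items()}
    return {(y, m): t - cycle[m] for (y, m), t in monthly.items()}
```

Because the annual cycle is computed separately for each station, a station that is systematically warmer or colder than its neighbors still contributes anomalies centered on zero.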

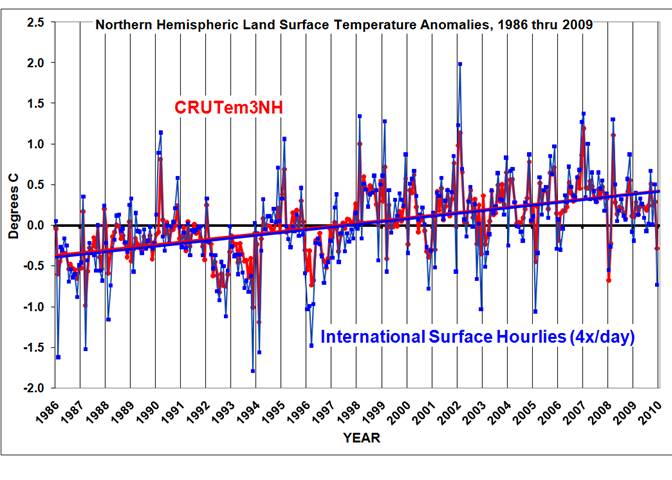

Similar to the Jones methodology, I then averaged all monthly station anomalies in 5 deg. grid squares, and then area-weighted those grids having good data over the Northern Hemisphere. I also recomputed the Jones NH anomalies for the same base period for a more apples-to-apples comparison. The results are shown in the following graph.
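A rough Python sketch of the gridding and area-weighting step follows. It is an illustration only: weighting each grid square by the cosine of its central latitude is a common approximation to its area and is an assumption here, as are the function names.

```python
import math
from collections import defaultdict

CELL_DEG = 5.0  # grid square size stated in the post


def grid_cell(lat, lon):
    """Index of the 5-degree grid square containing a station."""
    return (math.floor(lat / CELL_DEG), math.floor(lon / CELL_DEG))


def nh_average(stations):
    """stations: iterable of (lat_deg, lon_deg, anomaly) for one month.

    Averages stations within each grid square, then combines the
    Northern Hemisphere squares with cos(latitude) area weights.
    """
    cells = defaultdict(list)
    for lat, lon, anomaly in stations:
        if 0.0 <= lat < 90.0:
            cells[grid_cell(lat, lon)].append(anomaly)
    weighted_sum = 0.0
    weight_total = 0.0
    for (ilat, _ilon), values in cells.items():
        center_lat = (ilat + 0.5) * CELL_DEG
        weight = math.cos(math.radians(center_lat))
        weighted_sum += weight * sum(values) / len(values)
        weight_total += weight
    if weight_total == 0.0:
        raise ValueError("no Northern Hemisphere data")
    return weighted_sum / weight_total
```

Averaging within each square first keeps a cluster of closely spaced stations from counting more than a single isolated station in a neighboring square.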

I’ll have to admit I was a little astounded at the agreement between Jones’ and my analyses, especially since I chose a rather ad-hoc method of data screening that was not optimized in any way. Note that the linear temperature trends are essentially identical; the correlation between the monthly anomalies is 0.91.

One significant difference is that my temperature anomalies are, on average, magnified by 1.36 compared to Jones. My first suspicion is that Jones has relatively more tropical than high-latitude area in his averages, which would mute the signal. I did not have time to verify this.

Of course, an increasing urban heat island effect could still be contaminating both datasets, resulting in a spurious warming trend. Also, when I include years before 1986 in the analysis, the warming trends might start to diverge. But at face value, this plot seems to indicate that the rapid decrease in the number of stations included in the GHCN database in recent years has not caused a spurious warming trend in the Jones dataset — at least not since 1986. Also note that December 2009 was, indeed, a cool month in my analysis.

FUTURE PLANS

We are still in the early stages of development of the satellite-based land surface temperature product, which is where this post started.

Regarding my analysis of the ISH surface thermometer dataset, I expect to extend the above analysis back to 1973 at least, the year when a maximum number of stations were available. I’ll post results when I’m done.

In the spirit of openness, I hope to post some form of my derived dataset — the monthly station average temperatures, by UTC hour — so others can analyze it. The data volume will be too large to post at this website, which is hosted commercially; I will find someplace on our UAH computer system so others can access it through ftp.

While there are many ways to slice and dice the thermometer data, I do not have a lot of time to devote to this side effort. I can’t respond to all the questions and suggestions you e-mail me on this subject, but I promise I will read them.

carrot eater (04:12:06),

Is this a result of “dodgy homogenization, or somesuch”: click [these are blink gifs, they take a few seconds to load]. Same question for this GISS/USHCN series collated by Mike McMillan: click

Or are these upward “adjustments” always the result of legitimate changes to the raw temperature data record – which always seems to result in more artificially rising temperatures than the actual raw data itself shows?

John Hooper (03:34:37),

“So the world is warming just like everyone said?

Guess ClimateGate was a red herring after all.”

That is not the question, and skeptics know it. But since you are not an AGW skeptic, I will explain it for you:

The planet has been following a gradual warming trend since the LIA, and from the last great Ice Age before that. Multi-decadal cycles of warming and cooling ride on top of that natural long term warming trend.

The key point is the fact that the long term warming of the planet began well before the Industrial Revolution. Therefore, the planet’s gradual warming has been entirely natural. Despite a large increase in atmospheric CO2, the current warming is in line with the long term trend. Therefore, according to Occam’s Razor, CO2 is an extraneous entity and should be eliminated from the likely causes: Never increase, beyond what is necessary, the number of entities required to explain anything.

In fact, postal rate increases have a stronger correlation to temperature than CO2 does: click

And your conflating Climategate with entirely natural global warming is the real red herring argument.

“PJB (02:17:58) :

[…]

Really, poking around in the datasets that the alarmists have put out onto the web is the easiest sort of check to do, and it is almost guaranteed to back up their claims.”

Not really if you use a constant set.

Here’s a guy who did a very simple analysis of raw data who comes to the conclusion that there is no discernible trend:

http://crapstats.wordpress.com/2010/01/21/global-warming-%e2%80%93-who-knows-we-all-care/

Dr. Spencer

Since there is going to be a lot more money in the NASA budget for Earth Sciences there should be a way of improving your dataset in the future in the following manner.

A new land based temperature sensor, based upon the latest technology in temperature sensing (usually accurate to less than .001 degree), could be built. These sensors would be solar/battery powered in order to have them independent of any power grid. Additionally, they would have a satellite transponder that would use a satellite system like OrbComm, Iridium, or Globalstar (all low earth orbit constellations) that could provide real time temperature data on pretty much a global basis. This data could be integrated into your satellite dataset in real time via ground processing of the two datasets.

This would provide a high quality data product that is independent of the UHI effect, and could be sited anywhere, including on long lived floating buoys in the Pacific and the southern oceans, and on land masses.

We do a lot of remote sensing work via satellite on other planets, why not here?

Interesting comments all.

Steve Goddard: the January 1998 warm spike in the satellite data compared to surface data is because peak El Nino warmth is in the eastern tropical and subtropical Pacific, which is poorly sampled by thermometers. The same is true during most if not all El Nino years.

Clearly, I need to do the ISH comparison to just those grids that Jones also has data for, which will be a much more meaningful and informative comparison.

Smokey (05:06:09) :

Smokey (05:27:44) :

So first Hargreaves is unsurprised that Spencer’s totally unhomogenised data set, based on only continuous stations, matches CRU so well because we know the Earth is warming and this isn’t in doubt; it’s just natural.

Then it’s implied (Smokey (05:06:09), DirkH (05:47:11)) that recent warming is only observed because of homogenisation, station drop-offs, and whatever else.

Then we’re back to warming being evident and undoubted; it’s just natural. [Smokey (05:27:44) ]

Can you please clarify?

It’s going to take a month and an extra hard drive to download this whole data set.

” pft (22:42:32) :

Wish I lived at 14,000 ft where all the warmth is (what is it, about -25 deg F there)”

The ICAO standard atmosphere has 5.5°F at 15,000 ft or -17.5°C at 5000 m.
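Those two figures can be checked with a short Python sketch of the standard-atmosphere troposphere, which assumes 15°C at sea level and a lapse rate of 6.5°C per kilometer up to 11 km. The function names are chosen for illustration.

```python
SEA_LEVEL_TEMP_C = 15.0      # standard sea-level temperature
LAPSE_RATE_C_PER_M = 0.0065  # 6.5 degrees C per kilometer
TROPOPAUSE_M = 11_000.0      # linear lapse rate applies up to here
FT_TO_M = 0.3048


def isa_temperature_c(altitude_m):
    """Standard-atmosphere temperature in the troposphere, in deg C."""
    if not 0.0 <= altitude_m <= TROPOPAUSE_M:
        raise ValueError("altitude outside the troposphere model")
    return SEA_LEVEL_TEMP_C - LAPSE_RATE_C_PER_M * altitude_m


def c_to_f(temp_c):
    """Convert degrees Celsius to degrees Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


temp_5000_m_c = isa_temperature_c(5000.0)
temp_15000_ft_f = c_to_f(isa_temperature_c(15_000.0 * FT_TO_M))
```

Here `temp_5000_m_c` comes out at about -17.5°C and `temp_15000_ft_f` at about 5.5°F, matching the values quoted above.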

Dr Spencer

In the hope that you will return to this thread and read this, I would suggest that the most important thing to do in pursuit of the observation of the trend in these global aggregates is to assess the dynamics of the global temperature field. This will enable us to determine what is required to meet the requirements of the Sampling Theorem.

This takes us into the realm of discrete signal processing, and is not a question of statistics or statistical “error”. It is quite easy to show how the statistics of discrete data cannot be relied upon to measure the statistics of the underlying analogue signal if the requirements of the Sampling Theorem have not been met (i.e. if the discrete data is aliased due to inadequate sampling).
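A minimal Python illustration of aliasing, using a made-up cosine signal rather than any temperature data: sampled 10 times per unit time, a cosine with 9 cycles per unit time yields exactly the same samples as one with 1 cycle per unit time, and a cosine sampled once per cycle yields a constant whose mean is nowhere near the true mean of zero.

```python
import math


def sample_cosine(freq, rate, n_samples):
    """Sample cos(2*pi*freq*t) at times t = n / rate."""
    return [math.cos(2.0 * math.pi * freq * n / rate)
            for n in range(n_samples)]


def max_abs_difference(a, b):
    """Largest pointwise difference between two equal-length series."""
    return max(abs(x - y) for x, y in zip(a, b))


# Undersampled fast signal versus a genuinely slow one.
fast = sample_cosine(9.0, 10.0, 50)
slow = sample_cosine(1.0, 10.0, 50)
alias_gap = max_abs_difference(fast, slow)

# One sample per cycle: every sample lands on the same phase.
once_per_cycle = sample_cosine(1.0, 1.0, 30)
sample_mean = sum(once_per_cycle) / len(once_per_cycle)
```

`alias_gap` is zero to within rounding error, so the samples alone cannot distinguish the two signals, and `sample_mean` is close to 1 even though the continuous signal averages to 0.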

I’m not aware of any assessment which addresses this question with respect to the global temperature field. That’s not to say it doesn’t exist – but I would have thought that such an important step in the analysis would have been widely referred to in the literature.

As discussed in an earlier thread, Jones tried to use correlation to justify certain steps in his analysis of the 150 year temperature trend. As statistical aggregates are not valid if discrete data is suffering from aliasing, his tests could not demonstrate that the data is free from the effects of aliasing. And he made no reference to the mathematical literature to support his method.

To carry out an identification of the spatial and temporal dynamics of the global temperature field is no small task. And one of the things to be established at the outset would be “which temperature field” (e.g. surface, top of troposphere, etc).

Such a study would add to the sum total of knowledge, and is a logical step.

Until this is done, these aggregate global temperature series should be viewed with the greatest of caution. Interpretation of their trends could be meaningless.

Here are two videos I posted in that earlier thread. The first shows how even a very simple signal can be completely misrepresented if the discrete data is suffering from aliasing. The second shows how it can be completely meaningless to read observations if we are unaware that our data is aliased.

Regards

http://www.youtube.com/watch?v=LVwmtwZLG88

The graph of GHCN Active Temperature Stations looks a bit dubious.

Looking at the source http://savecapitalism.wordpress.com/2009/12/09/american-thinker-shows-the-code-meddling/ and then comparing it with http://savecapitalism.wordpress.com/2009/12/13/on-request-ghcn-measurements-per-country/ there appears to be a chunk missing.

In this thread http://wattsupwiththat.com/2010/02/12/noaa-drops-another-13-of-stations-from-ghcn-database/ there are 1113 stations.

I think it would be very interesting if Dr Spencer could use all available data back to 1901 and do that analysis as well. Since the recent years seem to correlate, checking the correlation over the entire available data period may or may not show something else.

My bet? A large divergence with Jones data before 1960.

Nick Stokes (02:51:09) :

“It’s not clear to me how you achieve that by fitting low order harmonics. The LS fit would still be over-influenced by regions of high station density.”

Not if the density function has some degree of smoothness. An analogy would be where you have a complex waveform limited to a specific interval and wrapping around at the endpoints. Something like this (hope the spacing works out when I post it)

Y

|*

| * *

| * *

| * *

——————–X

| o o

| o o

| *

Your measurement samples are the “*” points. The function continues as the “o” points but you haven’t measured them – you mostly only have data points in the positive “Y” direction, i.e., in the Northern hemisphere. You can try filling in the “o” points via ad hoc linear interpolation, but you will get the wrong answer for the bias level. But, information on the low order orthogonal basis functions in an expansion is contained in your measured data – you just have to do a proper interpolation based on a proper set of orthogonal basis functions. If you do an appropriately normed fit to the coefficients of the lower order basis functions and integrate that, you will markedly reduce the error. I would start with an L2 norm since it is easiest, and see how much the result is changed, then perhaps pursue an L1 normed result to get the best possible performance (see exception to Nyquist-Shannon sampling theorem discussed below).

This sort of thing is well established in numerical theory. If you want to integrate a function, the worst way would be to pick points, arbitrarily interpolate across the blank areas, and rectangularly integrate what you get. The best way is to strategically choose your abscissae, interpolate with an orthogonal basis, and integrate the resulting functional approximation. See, for example, the chapter on Integration of Functions, section on Gaussian Quadratures and Orthogonal Polynomials here.
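As a toy illustration of the L2 idea, the Python sketch below fits a constant plus the first harmonic to unevenly spaced samples of a made-up periodic function and compares the fitted constant with the plain average of the samples. The test function, the sample locations, and all names are assumptions chosen for the example; real station data would be noisy and would not match the basis exactly.

```python
import math


def solve(a, b):
    """Solve a small linear system by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_first_harmonic(xs, ys):
    """Least-squares fit of y = a0 + a1*cos(x) + b1*sin(x)."""
    basis = [(1.0, math.cos(x), math.sin(x)) for x in xs]
    ata = [[sum(row[i] * row[j] for row in basis) for j in range(3)]
           for i in range(3)]
    aty = [sum(row[i] * y for row, y in zip(basis, ys)) for i in range(3)]
    return solve(ata, aty)


def signal(x):
    """Made-up field whose true mean over a full period is 2."""
    return 2.0 + 3.0 * math.cos(x) + math.sin(x)


# 40 samples crowded into the first quarter, 5 spread over the rest.
xs = [i * (math.pi / 2) / 40 for i in range(40)]
xs += [math.pi / 2 + k * math.pi / 4 for k in range(1, 6)]
ys = [signal(x) for x in xs]

naive_mean = sum(ys) / len(ys)
fitted_mean = fit_first_harmonic(xs, ys)[0]  # a0 is the period average
```

In this noise-free case `fitted_mean` recovers the true mean of 2, while `naive_mean` is pulled toward the densely sampled region.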

carrot eater (04:12:06) :

Bart (02:09:03) :

“Just to be clear, are you suggesting this method for satellite data, or these surface data?”

You could do it with both, but the greatest advantage would be for the sparsely sampled surface data. There likely would be little advantage at all with finely sampled satellite data.

“Anyway, I agree with Nick’s reservations.”

There can be pathological cases, but as I say, this kind of operation is well established in numerical theory, and 99 times out of 100 will give significantly enhanced results.

Jordan (09:56:20) :

“It is quite easy to show how the statistics of discrete data cannot be relied upon to measure the statistics of the underlying analogue signal if the requirements of the Sampling Theorem have not been met (i.e. if the discrete data is aliased due to inadequate sampling).”

Keep in mind, the Nyquist-Shannon sampling theory is sufficient, but not necessary to reconstruct a signal from sampled data. There’s a pretty good write-up at Wikipedia.

Anyway, the data are what they are. We go to war with the army we have, using every available resource to our advantage.

“hope the spacing works out when I post it”

It didn’t. Hopefully, you get the gist.

I’m surprised no one asked the following question:

If the monthly temperature anomalies are, on average, 36% larger than Jones got…why isn’t the warming trend 36% greater, too? Maybe the agreement isn’t as close as it seems at first.

Well, to my uncalibrated eyeballs it appears that your data is not only 1.36 times higher than CRUTem3NH’s anomalies but 1.36 times lower as well, so minimal net effect.

Bart (12:55:06) :

“Keep in mind, the Nyquist-Shannon sampling theory is sufficient, but not necessary to reconstruct a signal from sampled data.”

I don’t think that alters the point Bart – we need knowledge of the temperature field in order to know how to sample it. Until we have surveyed and understood what it is we are seeking to faithfully reconstruct using discrete data samples, we have insufficient knowledge about how to sample it.

The point of an initial survey is to gain enough information to determine what sampling strategy is both sufficient and necessary.

Until then, who can say whether any particular trend over a defined space or time period is or is not distorted by aliasing?

An average 36% difference on the anomalies is pretty huge, could this be a calibration problem?

I’ve found that plotting the delta of the two traces can be very informative and might lead the direction to the next step.

Anyway, great first step.

Should the thermal mass of a volume measurement be taken into account? For example, presumably a humid space contains more heat energy than an arid volume of the same temperature.

re Robert (12:32:21) & later about measuring radiation in/out, can we ignore the biosphere?

I know growth (absorbs energy) is followed by decay (releases energy) but think how much was trapped to make all the coal, oil, & gas we have been using.

Obviously, better to have the measures of radiation than not, but careful interpretation needed…

Steve Koch,

I have been wondering the same thing. Isn’t temperature, by itself, just an arbitrary and incomplete way to look at it?

Someone posted on a thread here that the GCMs assumed constant humidity levels, when they had actually dropped somewhat (1% I think is what they said).

I always thought you’d need to analyze both temperature and humidity to really understand the atmospheric heat content.. but then again, what do I know.

Cheers!

NickB:

You have to wonder about the validity of assuming a constant humidity.

I looked up “thermal mass” and found this interesting article:

http://wattsupwiththat.com/2009/05/06/the-global-warming-hypothesis-and-ocean-heat/

Have not finished it yet but it is saying, so far as I have read, that energy is more important than temperature and that the ocean heat content (OHC) is orders of magnitude more important than atmospheric heat content as a measure of global heat content.

I guess that using infrared measurement as Miskolczi did would be out of the question? I mean, if there were a spot with direct visibility to the surface, and this spot were located in a rural area based on map data, could that serve as a reference point for calibration?

How many of these automated weather stations, providing real time or hourly measurements, are located in places where real time information is needed, like at the airports for aviation weather?

In my country the goal is to increase the usage of aviation weather (at the airports) to replace the older ground stations and automate the data gathering process.

I presume there is no need to “manipulate” the temperature data when “calibrating” the satellites.

Rather, merely do a point by point (station by station) matchup of satellite “signal” (from the restricted area of each temperature station) to measured temperature — day by day — or hour by hour if the data allows. Or, is the satellite coverage “pixel size” too coarse to allow a per station comparison?

Hence, I presume you will not be using the average temperatures (as shown in this post) for calibration. Rather, you will compare the “calibrated”-satellite plot to the thermometer plot shown above.

Not really expecting an answer. Just questions I’d have about the final calibrated satellite product.

Steve,

Thanks for the link – great (complex) reading! As much time as everyone spends on the surface temps, the divergence between projected and observed heat content really does raise some significant questions.

Re: Allen63 (Feb 21 18:11),

Allen63 – Here is your answer about calibration for UAH AMSU:

Spencer, Ph.D., Roy W. “How the UAH Global Temperatures Are Produced.” Scientific. Global Warming (drroyspencer.com), January 6, 2010.

http://www.drroyspencer.com/2010/01/how-the-uah-global-temperatures-are-produced/

“Microwave temperature sounders like AMSU measure the very low levels of thermal microwave radiation emitted by molecular oxygen in the 50 to 60 GHz oxygen absorption complex. This is somewhat analogous to infrared temperature sounders (for instance, the Atmospheric InfraRed Sounder, AIRS, also on Aqua) which measure thermal emission by carbon dioxide in the atmosphere.”

“Once every Earth scan, the radiometer antenna looks at a “warm calibration target” inside the instrument whose temperature is continuously monitored with several platinum resistance thermometers (PRTs). ”

“A second calibration point is needed, at the cold end of the temperature scale. For that, the radiometer antenna is pointed at the cosmic background, which is assumed to radiate at 2.7 Kelvin degrees.”
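The two calibration points quoted above amount to a straight-line conversion from raw radiometer counts to temperature. The Python sketch below shows that idea only; the count values and function name are hypothetical, and operational processing may apply further corrections that this sketch ignores.

```python
COSMIC_BACKGROUND_K = 2.7  # cold-space value given in the quoted text


def two_point_calibration(scene_counts, cold_counts, warm_counts,
                          warm_target_k,
                          cold_target_k=COSMIC_BACKGROUND_K):
    """Convert raw counts to temperature using two reference views.

    cold_counts and warm_counts are the radiometer readings when viewing
    cold space and the warm target; warm_target_k is the warm target
    temperature reported by its thermometers. Assumes a linear response.
    """
    if warm_counts == cold_counts:
        raise ValueError("calibration views must differ")
    gain = (warm_target_k - cold_target_k) / (warm_counts - cold_counts)
    return cold_target_k + gain * (scene_counts - cold_counts)
```

For example, with hypothetical readings of 1000 counts on cold space and 21000 counts on a 302.7 K warm target, a scene reading of 11000 counts would convert to about 152.7 K.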

An if the CO2 levels are changing?

http://www.agu.org/pubs/crossref/1976/GL003i002p00077.shtml

“The results also show that at mid to high northern (winter) latitudes the O2 concentration is about a factor of two higher than at low southern (summer) latitudes, thus revealing an apparent reversal of the winter to summer increase in O2 deduced from optical and incoherent scatter measurements during higher levels of solar activity.”

Hmmm

Should be:

“And if the O2 levels are changing?”

“re Robert (12:32:21) & later about measuring radiation in/out, can we ignore the biosphere?

I know growth (absorbs energy) is followed by decay (releases energy) but think how much was trapped to make all the coal, oil, & gas we have been using.

Obviously, better to have the measures of radiation than not, but careful interpretation needed…”

You can turn energy from the biosphere into heat by burning stuff, but the amount of heating is extremely small relative to the sun. This is also true of geothermal activity. So if you measure the energy entering and leaving the atmosphere, you have an account of 99.9% of any energy imbalance (http://en.wikipedia.org/wiki/Radiation_budget).

We would still care about where the heat was in the biosphere: how much in the oceans, in the air, expended in melting ice, etc. But if we could track the radiation budget accurately through direct measurements, it’d be a great advance in our knowledge.