New Work on the Recent Warming of Northern Hemispheric Land Areas

by Roy W. Spencer, Ph. D.

INTRODUCTION

Arguably the most important data used for documenting global warming are surface station observations of temperature, with some stations providing records back 100 years or more. By far the most complete data available are for Northern Hemisphere land areas; the Southern Hemisphere is chronically short of data since it is mostly oceans.

But few stations around the world have complete records extending back more than a century, and even some remote land areas are devoid of measurements. For these and other reasons, analysis of “global” temperatures has required some creative data massaging. Some of the necessary adjustments include: switching from one station to another as old stations are phased out and new ones come online; adjusting for station moves or changes in equipment types; and adjusting for the Urban Heat Island (UHI) effect. The last problem is particularly difficult since virtually all thermometer locations have experienced an increase in manmade structures replacing natural vegetation, which inevitably introduces a spurious warming trend over time of an unknown magnitude.

There has been a lot of criticism lately of the two most publicized surface temperature datasets: those from Phil Jones (CRU) and Jim Hansen (GISS). One summary of these criticisms can be found here. These two datasets are based upon station weather data included in the Global Historical Climate Network (GHCN) database archived at NOAA’s National Climatic Data Center (NCDC), a reduced-volume and quality-controlled dataset officially blessed by your government for climate work.

One of the most disturbing changes over time in the GHCN database is a rapid decrease in the number of stations over the last 30 years or so, after a peak in station number around 1973. This is shown in the following plot which I pilfered from this blog.

Given all of the uncertainties raised about these data, there is increasing concern that the magnitude of observed ‘global warming’ might have been overstated.

TOWARD A NEW SATELLITE-BASED SURFACE TEMPERATURE DATASET

We have started working on a new land surface temperature retrieval method based upon the Aqua satellite AMSU window channels and “dirty-window” channels. These passive microwave estimates of land surface temperature, unlike our deep-layer temperature products, will be empirically calibrated with several years of global surface thermometer data.

The satellite has the benefit of providing global coverage nearly every day. The primary disadvantages are (1) the best (Aqua) satellite data have been available only since mid-2002; and (2) the retrieval of surface temperature requires an accurate adjustment for the variable microwave emissivity of various land surfaces. Our method will be calibrated once, with no time-dependent changes, using all satellite-surface station data matchups during 2003 through 2007. Using this method, if there is any spurious drift in the surface station temperatures over time (say due to urbanization) this will not cause a drift in the satellite measurements.

Despite the shortcomings, such a dataset should provide some interesting insights into the ability of the surface thermometer network to monitor global land temperature variations. (Sea surface temperatures are already accurately monitored with the Aqua satellite, using data from AMSR-E.)

THE INTERNATIONAL SURFACE HOURLY (ISH) DATASET

Our new satellite method requires hourly temperature data from surface stations to provide +/- 15 minute time matching between the station and the satellite observations. We are using the NOAA-merged International Surface Hourly (ISH) dataset for this purpose. While these data have not had the same level of climate quality tests the GHCN dataset has undergone, they include many more stations in recent years. And since I like to work from the original data, I can do my own quality control to see how my answers differ from the analyses performed by other groups using the GHCN data.
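To make the time-matching requirement concrete, here is a minimal Python sketch of pairing satellite overpasses with the nearest station reading inside a +/- 15 minute window. It is only an illustration under stated assumptions: the function name `match_overpasses`, the input layout, and the use of Python itself are hypothetical, and it is not the procedure actually used for the retrieval.

```python
from bisect import bisect_left
from datetime import datetime, timedelta

MAX_OFFSET = timedelta(minutes=15)  # matching window stated in the text


def match_overpasses(station_obs, overpass_times):
    """Pair each overpass with the closest station reading within the window.

    station_obs: list of (datetime, temperature), sorted by time (assumed layout).
    overpass_times: list of datetime for the satellite overpasses.
    Returns a list of (overpass_time, station_time, temperature).
    """
    times = [t for t, _ in station_obs]
    matches = []
    for overpass in overpass_times:
        i = bisect_left(times, overpass)
        # Only the readings just before and just after can be the closest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(times)]
        if not candidates:
            continue
        best = min(candidates, key=lambda j: abs(times[j] - overpass))
        if abs(times[best] - overpass) <= MAX_OFFSET:
            matches.append((overpass, times[best], station_obs[best][1]))
    return matches
```

With hourly station reports, an overpass falling near the half hour finds no reading inside the window and is simply dropped, which is why hourly (rather than six-hourly) data are needed to get a useful number of matchups.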

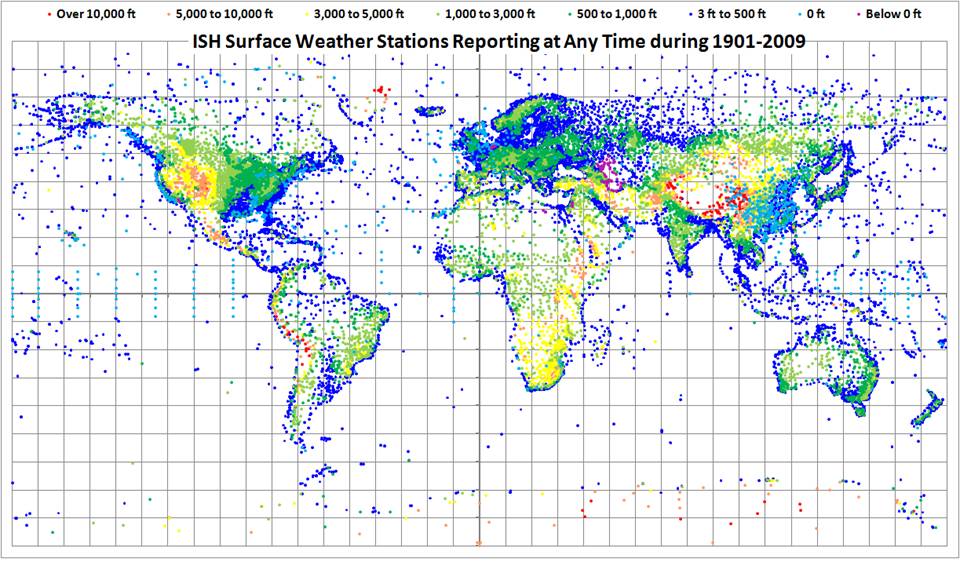

The ISH data include globally distributed surface weather stations since 1901, and are updated and archived at NCDC in near-real time. The data are available for free to .gov and .edu domains. (NOTE: You might get an error when you click on that link if you do not have free access. For instance, I cannot access the data from home.)

The following map shows all stations included in the ISH dataset. Note that many of these are no longer operating, so the current coverage is not nearly this complete. I have color-coded the stations by elevation (click on image for full version).

WARMING OF NORTHERN HEMISPHERIC LAND AREAS SINCE 1986

Since it is always good to immerse yourself into a dataset to get a feeling for its strengths and weaknesses, I decided I might as well do a Jones-style analysis of the Northern Hemisphere land area (where most of the stations are located). Jones’ version of this dataset, called “CRUTem3NH”, is available here.

I am used to analyzing large quantities of global satellite data, so writing a program to do the same with the surface station data was not that difficult. (I know it’s a little obscure and old-fashioned, but I always program in Fortran). I was particularly interested to see whether the ISH stations that have been available for the entire period of record would show a warming trend in recent years like that seen in the Jones dataset. Since the first graph (above) shows that the number of GHCN stations available has decreased rapidly in recent years, would a new analysis using the same number of stations throughout the record show the same level of warming?

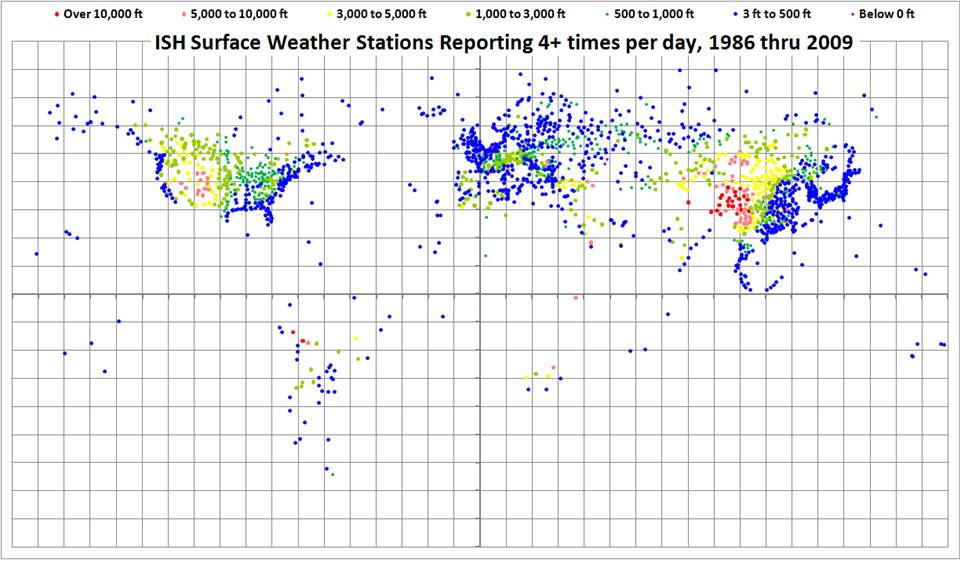

The ISH database is fairly large, organized in yearly files, and I have been downloading the most recent years first. So far, I have obtained data for the last 24 years, since 1986. The distribution of all stations providing fairly complete time coverage since 1986, having observations at least 4 times per day, is shown in the following map.

I computed daily average temperatures at each station from the observations at 00, 06, 12, and 18 UTC. For stations with at least 20 days of such averages per month, I then computed monthly averages throughout the 24 year period of record. I then computed an average annual cycle at each station separately, and then monthly anomalies (departures from the average annual cycle).
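The per-station steps just described can be sketched roughly as follows. This is a Python illustration, not the Fortran program used for the analysis; the function name `monthly_anomalies`, the tuple layout of the input, and the requirement that a day have all four synoptic readings before it is averaged are assumptions made for the sketch.

```python
from collections import defaultdict
from statistics import mean

SYNOPTIC_HOURS = (0, 6, 12, 18)  # UTC hours used for the daily average
MIN_DAYS_PER_MONTH = 20          # threshold stated in the text


def monthly_anomalies(obs):
    """obs: iterable of (year, month, day, hour_utc, temp) for ONE station.

    Returns {(year, month): anomaly}, where the anomaly is the departure
    from that station's own average annual cycle.
    """
    # 1. Collect the synoptic-hour readings for each calendar day.
    by_day = defaultdict(dict)
    for year, month, day, hour, temp in obs:
        if hour in SYNOPTIC_HOURS:
            by_day[(year, month, day)][hour] = temp

    # 2. Daily average, only for days with all four readings (assumption).
    daily = defaultdict(list)
    for (year, month, _day), readings in by_day.items():
        if len(readings) == len(SYNOPTIC_HOURS):
            daily[(year, month)].append(mean(readings.values()))

    # 3. Monthly average, only for months with enough daily averages.
    monthly = {
        ym: mean(values)
        for ym, values in daily.items()
        if len(values) >= MIN_DAYS_PER_MONTH
    }

    # 4. Average annual cycle: mean of each calendar month over all years.
    by_calendar_month = defaultdict(list)
    for (_year, month), value in monthly.items():
        by_calendar_month[month].append(value)
    annual_cycle = {m: mean(v) for m, v in by_calendar_month.items()}

    # 5. Anomaly: monthly average minus that calendar month's cycle value.
    return {(y, m): value - annual_cycle[m] for (y, m), value in monthly.items()}
```

Because each station is compared only against its own annual cycle, stations at very different elevations or latitudes can later be averaged together without their absolute temperatures dominating the result.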

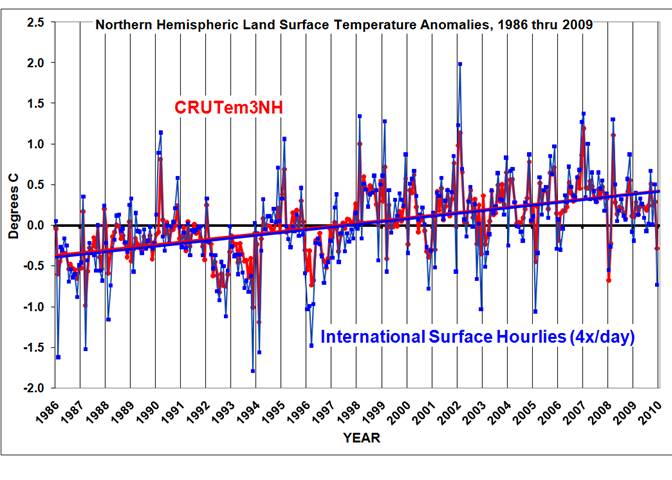

Similar to the Jones methodology, I then averaged all station month anomalies in 5 deg. grid squares, and then area-weighted those grids having good data over the Northern Hemisphere. I also recomputed the Jones NH anomalies for the same base period for a more apples-to-apples comparison. The results are shown in the following graph.
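For readers who want to see the gridding step spelled out, here is a hedged Python sketch of averaging station anomalies into 5 deg. squares and area-weighting the squares that contain data. The function name `hemispheric_mean` is hypothetical, and weighting each square by the cosine of its central latitude is an assumption; it is a common approximation to grid-square area, not necessarily the exact weighting used here or by Jones.

```python
import math
from collections import defaultdict
from statistics import mean

CELL_DEG = 5.0  # grid square size in degrees


def hemispheric_mean(station_anomalies):
    """station_anomalies: iterable of (lat_deg, lon_deg, anomaly) for one month.

    Returns the area-weighted mean over Northern Hemisphere grid squares
    that contain at least one station, or None if there are none.
    """
    cells = defaultdict(list)
    for lat, lon, anomaly in station_anomalies:
        if 0.0 <= lat <= 90.0:  # Northern Hemisphere only
            row = min(int(lat // CELL_DEG), 17)             # 18 latitude bands
            col = min(int((lon % 360.0) // CELL_DEG), 71)   # 72 longitude bands
            cells[(row, col)].append(anomaly)

    weighted_sum = 0.0
    total_weight = 0.0
    for (row, _col), values in cells.items():
        # Cosine of the central latitude approximates relative cell area.
        centre_lat = (row + 0.5) * CELL_DEG
        weight = math.cos(math.radians(centre_lat))
        weighted_sum += weight * mean(values)
        total_weight += weight
    return weighted_sum / total_weight if total_weight else None
```

Empty squares contribute nothing in this sketch: no values are interpolated into them, so the hemispheric average reflects only squares with at least one reporting station.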

I’ll have to admit I was a little astounded at the agreement between Jones’ and my analyses, especially since I chose a rather ad-hoc method of data screening that was not optimized in any way. Note that the linear temperature trends are essentially identical; the correlation between the monthly anomalies is 0.91.

One significant difference is that my temperature anomalies are, on average, magnified by 1.36 compared to Jones. My first suspicion is that Jones has relatively more tropical than high-latitude area in his averages, which would mute the signal. I did not have time to verify this.
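The three comparison statistics mentioned above (linear trend, correlation, and an amplitude factor) could be computed along the following lines. This Python sketch is illustrative only: the function names are hypothetical, and defining the amplitude factor as the regression slope of one anomaly series on the other is an assumption, since the post does not say how the 1.36 figure was obtained.

```python
import math


def _centered(values):
    """Return the values with their mean removed."""
    m = sum(values) / len(values)
    return [v - m for v in values]


def ols_slope(x, y):
    """Least-squares slope of y regressed on x."""
    xc, yc = _centered(x), _centered(y)
    return sum(a * b for a, b in zip(xc, yc)) / sum(a * a for a in xc)


def correlation(x, y):
    """Pearson correlation coefficient of x and y."""
    xc, yc = _centered(x), _centered(y)
    return sum(a * b for a, b in zip(xc, yc)) / math.sqrt(
        sum(a * a for a in xc) * sum(b * b for b in yc)
    )


def compare_series(reference, candidate):
    """Compare two equal-length lists of consecutive monthly anomalies."""
    months = list(range(len(reference)))
    return {
        # Slope per month times 120 months gives degrees per decade.
        "trend_reference": ols_slope(months, reference) * 120,
        "trend_candidate": ols_slope(months, candidate) * 120,
        "correlation": correlation(reference, candidate),
        # Assumed definition: slope of candidate regressed on reference.
        "amplitude_factor": ols_slope(reference, candidate),
    }
```

Under this definition, a candidate series that is an exact multiple of the reference has a correlation of 1 and a trend scaled by the same multiple, so comparing the amplitude factor with the ratio of the two trends is one way to see how far two real datasets depart from a simple rescaling.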

Of course, an increasing urban heat island effect could still be contaminating both datasets, resulting in a spurious warming trend. Also, when I include years before 1986 in the analysis, the warming trends might start to diverge. But at face value, this plot seems to indicate that the rapid decrease in the number of stations included in the GHCN database in recent years has not caused a spurious warming trend in the Jones dataset — at least not since 1986. Also note that December 2009 was, indeed, a cool month in my analysis.

FUTURE PLANS

We are still in the early stages of development of the satellite-based land surface temperature product, which is where this post started.

Regarding my analysis of the ISH surface thermometer dataset, I expect to extend the above analysis back to 1973 at least, the year when a maximum number of stations were available. I’ll post results when I’m done.

In the spirit of openness, I hope to post some form of my derived dataset — the monthly station average temperatures, by UTC hour — so others can analyze it. The data volume will be too large to post at this website, which is hosted commercially; I will find someplace on our UAH computer system so others can access it through ftp.

While there are many ways to slice and dice the thermometer data, I do not have a lot of time to devote to this side effort. I can’t respond to all the questions and suggestions you e-mail me on this subject, but I promise I will read them.

Folks – let’s get one thing straight for the analysis portion of the post:

The hypothesis being tested was that, for the northern hemisphere at least, the massive GHCN station count drop-off in the last twenty years introduced or exacerbated the warming trend.

It was not to test instrumentation accuracy, UHI, or even the accuracy of CRU vs. an independent analysis on a historical timeframe.

I do appreciate that, for good measure, he used raw data as well, but given the nature of the analysis it wasn’t actually necessary. I’d also be curious to see GISS and UAH on the graph, since I have heard rumors about divergences between the three over the last few years.

Bart, there’s no interpolation. I’m not a big fan of creating data where there are none. As it is, spreading a single thermometer over a 5×5 deg grid square (90,000 sq. nautical miles at the equator) is a bit of a stretch already. That’s one advantage of the satellite data…every square mile of the Earth’s surface is sampled (except near the poles).

NH Land temps today are the same as 1990, or twenty years ago. I thought global warming was a runaway train.

So the world is warming just like everyone said?

Guess ClimateGate was a red herring after all.

“40 Shades of Green (13:23:19) :

[…]

This looks to be bang in the middle of AR4 projections, does it not.”

For which scenario[s], 40 Shades of Green? You do know that they made different assumptions re the CO2 emissions, do you?

I’m not at all surprised by the close agreement between Spencer’s and Jones’ analysis of essentially similar station data for recent decades. The devil lies in the patching together of anomalies from different stations in the early decades of the past century. That’s where offsets and adjustments in the name of “homogenizing” the records introduce dubious “trends.”

Although this is a step in the right direction, my concerns about data sampling remain.

Until we have analysed the temperature field (in space and time), how do we know how to sample it to avoid aliasing?

Until we understand the dynamics of the temperature field and determined a sampling regime which meets the requirements of Shannon’s Sampling Theorem, any such series will have a great deal of uncertainty hanging over it.

Those sharp steps in the series are a cause for concern. Do they indicate high frequency components in the signal (“frequency” meaning for time or space) and the possibility of aliasing of the underlying (analogue) temperature signal?

Remember that faithful reconstruction of a signal from discrete samples is a much more onerous task than being able to calculate statistical aggregates. But even then, if inadequate sampling results in aliased data, we cannot rely on the calculation of statistical aggregates of the samples to give an accurate measure of the statistical aggregates of the analogue signal.

Sorry if this is repetitive as I debated these points at length on an earlier thread. There is possibly little to add by debating them again here, so I propose not to do so.

I’d be interested to hear about any analysis of the dynamics of the temperature field (specifically, identification of the temporal or spatial bandwidths) which could help to address the question of whether the requirements of the Sampling Theorem have been met.

Cheers.

nc (13:08:48) :

Maybe it’s the raw adjusted data?

Is the data you’re using adjusted in any way before you get it?

I can’t access the noaa data ftp directory (no surprise) but couldn’t stop myself from digging around on that ftp server… some data is publicly accessible. I found all time snow record files. Compare 19 Feb 2010 to 19 Feb 2007:

ftp://ftp.ncdc.noaa.gov/pub/data/extremes/all-time/snow/20070219.txt

Number of Broken Records: 0

Number of Tied Records: 1

ftp://ftp.ncdc.noaa.gov/pub/data/extremes/all-time/snow/20100219.txt

Number of Broken Records: 229

Number of Tied Records: 117

“Robert (13:35:26) :

[…]

Makes perfect sense. You have a problem with the concept of a radiation budget?”

So you say we model/measure (like Hansen+Schmidt) a radiation imbalance to an absurd precision (or at least purport that we do), deduce that the earth is storing energy and stop looking for where it might be? That’s nothing new, Robert, Hansen and Schmidt have done exactly that. It was nonsense back then and it’s nonsense now. Google for radiation imbalance 0.85 Watt/m^2 or something like that but you (or your telephone) probably know that already.

If the earth purportedly stores energy (you say yourself “stored heat”) it would have to be, well, warm somewhere, wouldn’t it? You’d rather stop measuring altogether? And that sounds like an intelligent way to go about things to you? A smart thing to do, smart on an Archimedes-type smartness level? Really? You’re a joker.

John Hooper,

I must have missed the part about how all the various issues raised in those e-mails hinged on GHCN station dropout for the Northern Hemisphere in the last 24 years.

In fairness, this does seem to show that for the past 24 years the CRU results for the Northern Hemisphere pass the smell test around station dropout and adjustments.

@DirkH

Wow, Dirk, you’ve got a lot of fear there. You have no rational case, of course. Measuring the radiation budget more accurately is good for everybody who cares about the science. But given that you put “stored heat” in quotation marks, that apparently doesn’t include you.

I have to think that a good way to guess which side of a debate is full of crap would be to pick the side that’s afraid of better measurements.

NH extratropics are a good measure of warming/cooling, since the Nino effect is not much visible there. It looks like the NH peaked in 2006-2007. This Dec-Jan has an anomaly equal to the early 80s 😮

I accept that cold winter in one part is not the whole globe, but where is all that warmth from the whole hemisphere hidden? It is a travesty!

http://climexp.knmi.nl/data/icrutem3_hadsst2_0-360E_23.5-90N_n_1980:2011a.png

Robert, I don’t understand your reference to Archimedes. As far as I know he was only interested in buoyancy/gravity:

http://en.wikipedia.org/wiki/Eureka_(word)

My understanding is that there is some quality testing with quality flags included with each ISH observation. I doubt that any of the temperatures have been altered in any way.

NOW…in retrospect, I’m surprised no one asked the following question:

If the monthly temperature anomalies are, on average, 36% larger than Jones got…why isn’t the warming trend 36% greater, too? Maybe the agreement isn’t as close as it seems at first.

I would be careful about infilling empty grids. You could be crossing climate zones and thus ascribing a temperature that would be false, IE it may be true for the climate zone it came from, but cannot be accurate for the climate zone it is infilling next to it if that climate zone has no data.

UAH showed a huge spike in January which was much smaller in GISS. Same thing in 1998. It raises questions about the accuracy of TLT measurements.

A major complication is gridding/interpolation, as Bart (12:57:41) : notes above at 20/2. For years I have seen reporting of 5 x 5 grid cells with area weighted averages. There are many different ways to arrive at composite figures from sparse observations and the method above is only one. I’d be inclined to bring in some mining expertise if this had not been done already. One way miners approach this differently is to delete or assign low grade values to blocks with insufficient information. It costs money to process barren rock that looks like paydirt because the math was unsuited.

You note that “I’ll have to admit I was a little astounded at the agreement between Jones’ and my analyses”. I do not think that the analysis is particularly close. Most extremes are in blue, for a start, possibly meaning that there is some disconnect between measuring 1.5 m above the surface and at the higher altitude satellite region; or that CRU has more severe smoothing or whatever.

There is reasonable agreement at this scale on the time axis, but that needs a bit more mechanism discussion. I’ve been working on the 1998 hot year and can find no explanation for it, particularly one involving GHG. So until the reasons for the annual excursions can be explained, they remain as lines and points on a graph.

Since the core of the earth is hotter than the surface (by a considerable extent) it is not enough just to form an energy budget by simply looking at the amount of radiant energy arriving at or leaving the surface. You’ve got to also make some accounting for the heat rising to the surface from the interior.

Furthermore heat energy will not manifest directly as a temperature rise. Much will be absorbed in state transitions – ice to water and water to steam being the main two. And some will be absorbed in driving chemical reactions – for example the chlorophyll mediated reaction converting CO_2 into sugar.

I don’t see that accounting for all this is going to be any simpler than trying to keep accurate temperature records. In particular a heat budget isn’t going to end ambiguity since a lot of assumptions will have to be made about these things.

Dr. Roy,

I had caught the comment about anomalies in your post, and I guess for a rusty economics student that does IT for a living I’m sure I don’t grasp the consequences of it. I was thinking, incorrectly I suspect, that it meant the amplitude of your analysis was greater but, due to the graph, the resulting averages were consistent. Maybe not…

Any insight to what this tells us about CRU and what it doesn’t?

It will be good to have a new surface temperature data set, and I congratulate Dr Spencer on the initiative.

But that GHCN plot suggests that there has been a large recent drop to about 400 stations. You see this sometimes, and it’s always the last month. It’s an elementary error – we saw it a little while ago with the Langoliers. The fact is that GHCN updates with new monthly data as it comes in, a few days at a time. If you look at the file early in the month, you’ll see only a few hundred reporting the latest month. But there has been a base of 1200+ stations reporting consistently (if sometimes a few days later) for many years now. I’ve documented this here. I don’t believe you’ll find a single month in the last fifty years that, by the end of the following month, did not have reports from at least 1100 stations.

It wouldn’t be too hard to compute a GISS or CRU record for the same area that was used here. Both provide gridded data, after all. Apples to apples.

That’d test the idea of whether a lack of tropical coverage increased the variability in the Spencer set.

“I computed daily average temperatures at each station from the observations at 00, 06, 12, and 18 UTC.”

This really is UTC, and not local time for each station? This in itself is somewhat interesting, if correct. It basically says that you can measure the temp every 6 hours, and no matter what the phase, you’ll still get a trend that correlates really well with the trend in (Tmax+Tmin)/2.

I can’t quite decide whether I’m surprised by that, or not.

Dr. Roy.

It really is nice to see your participation in the thread that arose from your post.

That way we all get feedback. What’s more, it’s ‘interactive’. 🙂

Did you ever ride a motorcycle? I did (in my youth). One thing you realise when you ride a motorcycle is that your ‘spatial awareness’ is increased. Riding through the countryside in summer you can feel the air temperatures alter as you ride through different temperature regions, and these can alter three, or even four, times for each kilometre of your travel. Autumn, winter and spring aren’t so significant because it just feels ‘cold’ here in the UK when biking, but hey, that’s just a sensual human thing.

This brings me to the point that ‘Jordan’ made (Jordan (14:07:31)). Signal resolution!

Steve_M once asked me what my preference for resolution of surface temperature stations would be and I replied ‘a 1 km grid’, to which he replied ‘we wish’! I’m sure you realise the ramifications of this, if networking nodes are altered the overall signal reception is also altered and especially when the ‘resolution’ is well outside of the ‘1/3 of the signal frequency’ required for signal definition.

I wish you luck with your project, but I’m apprehensive of its outcome.

Best regards, suricat.

I’d been thinking about doing something like this using SYNOPs, but didn’t realise these data were all stored here. Your map shows that Africa is still sparse, but I wonder if you’ll get more if you relax the data completeness standards a bit.

Get out your automatic downloaders, gentlemen, it’s a lot of files.