Jeff Id emailed me today to ask if I wanted to post this with the caveat “it’s very technical, but I think you’ll like it”. Indeed I do, because it represents a significant step forward in the puzzle that is the Steig et al. paper published in Nature this year (Nature, Jan 22, 2009), which claims to have reversed the previously accepted idea that Antarctica is cooling. From the “consensus” point of view, it is very important for “the Team” to make Antarctica start warming. But then there’s that pesky problem of all that above-normal ice in Antarctica. Plus, there are other problems, such as buried weather stations, which will tend to read warmer when covered with snow. And the majority of the weather stations (and thus data points) are on the Antarctic Peninsula, which weights the results toward the Peninsula. The Antarctic Peninsula could even be classified under a different climate zone given its separation from the mainland and strong maritime influence.

A central point of background here is that Steig has flatly refused requests to provide all of the code needed to fully replicate his work in MATLAB and RegEM. Without the code, replication would be difficult, and without replication, there could be no significant challenge to the validity of the Steig et al. paper.

Steig’s claim that there has been “published code” is only partially true. What he has published is akin to being handed a set of spark plugs and a manual on using a spark plug wrench when the task is rebuilding an entire V-8 engine.

In a previous Air Vent post, Jeff C points out the percentage of code provided by Steig:

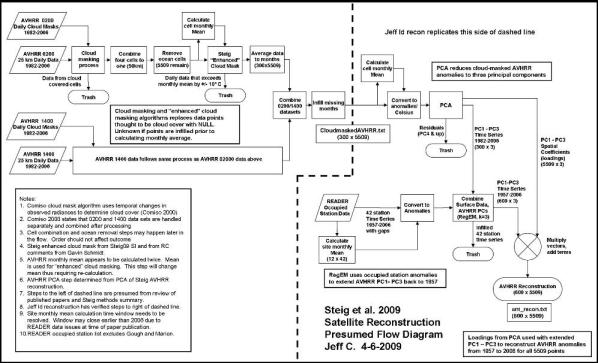

“Here is an excellent flow chart done by JeffC on the methods used in the satellite reconstruction. If you see the little rectangle which says RegEM at the bottom right of the screen, that’s the part of the code which was released, the thousands of lines I and others have written for the rest of the little blocks had to be guessed at, some of it still isn’t figured out yet.”

With that, I give you Jeff and Ryan’s post below. – Anthony

Posted by Jeff Id on May 20, 2009

I was going to hold off on this post because Dr. Weinstein’s post is getting a lot of attention right now (it has been picked up on several blogs and even translated into different languages), but this is too good not to post.

Ryan has done something amazing here, no joking. He has recalibrated the satellite data used in Steig’s Antarctic paper, correcting offsets and trends; determined a reasonable number of PCs for the reconstruction; and actually calculated a reasonable trend for the Antarctic with proper cooling and warming distributions. He basically fixed Steig et al. by addressing the very concern I had: that in the Steig paper, AVHRR vs. surface station temperature (SST) trends and AVHRR station vs. SST correlations were not well related.

Not only that, he dealt a substantial blow to the ‘robustness’ of the Steig/Mann method at the same time.

If you’ve followed this discussion at all, you’ve got to read this post.

RegEM for this post was originally ported to R by Steve McIntyre; certain versions used here are Steve M’s truncated-PC version, as well as code modified by Ryan.

Ryan O – Guest post on the Air Vent

I’m certain that all of the discussion about the Steig paper will eventually become stale unless we begin drawing some concrete conclusions. Does the Steig reconstruction accurately (or even semi-accurately) reflect the 50-year temperature history of Antarctica?

Probably not – and this time, I would like to present proof.

I: SATELLITE CALIBRATION

As some of you may recall, one of the things I had been working on for a while was attempting to properly calibrate the AVHRR data to the ground data. In doing so, I noted some major problems with NOAA-11 and NOAA-14. I also noted a minor linear decay of NOAA-7, while NOAA-9 just had a simple offset.

But before I was willing to say that there were actually real problems with how Comiso strung the satellites together, I wanted to verify that there was published literature that confirmed the issues I had noted. Some references:

(NOAA-11)

i1520-0469-59-3-262.pdf

(Drift)

(Ground/Satellite Temperature Comparisons)

p26_cihlar_rse60.pdf

The references generally confirmed what I had noted by comparing the satellite data to the ground station data: NOAA-7 had a temperature decrease with time, NOAA-9 was fairly linear, and NOAA-11 had a major unexplained offset in 1993.

Let us see what this means in terms of differences in trends.

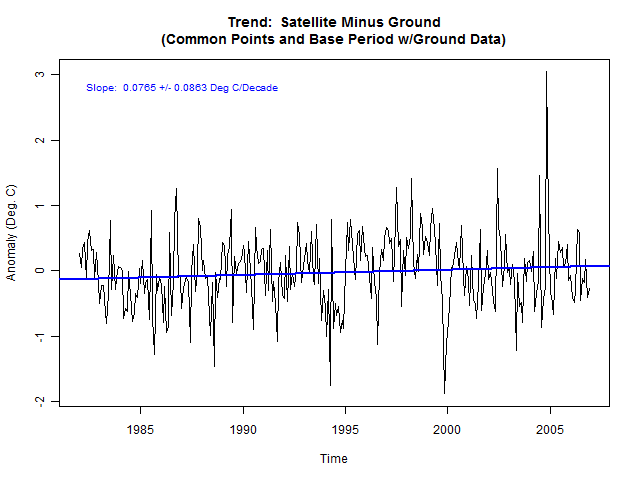

The satellite trend (using only the points common to the AVHRR data and the ground data) is double the ground trend. While zero is still within the 95% confidence intervals, remember that the satellite record is stitched together from 6 different satellites. So even though the confidence intervals for the record as a whole overlap zero, the offsets for the individual satellites may not.
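A minimal Python sketch of a trend comparison on common points, assuming monthly anomalies held in aligned lists (decimal years for time) with `None` marking missing months. The helper names `common_points` and `trend_per_decade` are illustrative, not taken from the post's R code, and the interval uses the normal quantile and ignores autocorrelation, so it will be too narrow when the residuals are autocorrelated.

```python
import math
from statistics import NormalDist

def common_points(times, sat, ground):
    """Keep only the time steps where both series have a value (None = missing)."""
    keep = [i for i in range(len(times)) if sat[i] is not None and ground[i] is not None]
    return [times[i] for i in keep], [sat[i] for i in keep], [ground[i] for i in keep]

def trend_per_decade(times_yr, temps):
    """OLS slope in deg C/decade and a naive 95% CI half-width.

    Assumes uncorrelated, roughly normal residuals and uses the normal
    quantile (about 1.96) instead of Student's t.
    """
    n = len(times_yr)
    tbar = sum(times_yr) / n
    ybar = sum(temps) / n
    sxx = sum((t - tbar) ** 2 for t in times_yr)
    slope = sum((t - tbar) * (y - ybar) for t, y in zip(times_yr, temps)) / sxx
    intercept = ybar - slope * tbar
    sse = sum((y - intercept - slope * t) ** 2 for t, y in zip(times_yr, temps))
    se = math.sqrt(sse / (n - 2) / sxx)
    z = NormalDist().inv_cdf(0.975)
    return 10 * slope, 10 * z * se  # per year -> per decade
```

In use, one would call `common_points` first, then `trend_per_decade` on the satellite series, the ground series, and their difference, and check whether the interval for the difference includes zero.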

To check the individual offsets, I performed running Wilcoxon and t-tests on the difference between the satellite and ground data using a ±12-month window. Each point is normalized to the 95% confidence interval, so if any point exceeds ±1.0, there is a statistically significant difference between the two data sets at that point.

Note that there are two distinct peaks well beyond the confidence intervals and that both lines spend much more than 5% of the time outside the limits. There is, without a doubt, a statistically significant difference between the satellite data and the ground data.
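The running tests can be sketched as below. This is an illustration, not the code used for the post: it assumes the satellite-minus-ground differences are a monthly list with `None` for gaps, uses the normal approximation for the Wilcoxon signed-rank statistic without a tie correction to the variance, and uses 1.96 rather than a Student's t critical value. Dividing each statistic by its 95% critical value gives the normalized scale described above, where |value| > 1 flags a significant difference. The function names are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev

# Two-sided 95% critical value; the normal quantile stands in for Student's t.
Z95 = NormalDist().inv_cdf(0.975)

def t_normalized(d):
    """Window mean divided by its 95% CI half-width; |value| > 1 means significant."""
    m = mean(d)
    half_width = Z95 * stdev(d) / math.sqrt(len(d))
    if half_width == 0:
        return 0.0 if m == 0 else math.copysign(math.inf, m)
    return m / half_width

def wilcoxon_normalized(d):
    """Signed-rank z score (normal approximation, no tie correction) divided by Z95."""
    nz = [x for x in d if x != 0]  # zero differences are dropped
    n = len(nz)
    if n == 0:
        return 0.0
    order = sorted(range(n), key=lambda i: abs(nz[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:  # tied |d| values share their average (1-based) rank
        j = i
        while j + 1 < n and abs(nz[order[j + 1]]) == abs(nz[order[i]]):
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    w_plus = sum(r for r, x in zip(ranks, nz) if x > 0)
    mu = n * (n + 1) / 4
    sigma = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    return (w_plus - mu) / sigma / Z95

def running_tests(diff, half_window=12, min_points=6):
    """Both tests on a +/-half_window month window around each point; None = missing."""
    results = []
    for i in range(len(diff)):
        lo = max(0, i - half_window)
        window = [x for x in diff[lo:i + half_window + 1] if x is not None]
        if len(window) < min_points:
            results.append((None, None))
        else:
            results.append((t_normalized(window), wilcoxon_normalized(window)))
    return results
```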

As a sidebar, the Wilcoxon test is a non-parametric test. It does not require correction for autocorrelation of the residuals when calculating confidence intervals. The fact that it differs from the t-test results indicates that the residuals are not normally distributed and/or the residuals are not free from correlation. This is why it is important to correct for autocorrelation when using tests that rely on assumptions of normality and uncorrelated residuals. Alternatively, you could simply use non-parametric tests, and though they often have less statistical power, I’ve found the Wilcoxon test to be pretty good for most temperature analyses.
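One common way to correct the t-test for autocorrelated residuals is to shrink the sample size to an effective value based on the lag-1 autocorrelation, n_eff = n(1 - r1)/(1 + r1). The sketch below assumes that AR(1)-style adjustment; it is not necessarily the correction used in the post, and the function names are illustrative.

```python
import math
from statistics import NormalDist, mean

def lag1_autocorrelation(x):
    """Sample lag-1 autocorrelation of a series."""
    m = mean(x)
    num = sum((a - m) * (b - m) for a, b in zip(x[:-1], x[1:]))
    den = sum((a - m) ** 2 for a in x)
    return num / den

def effective_sample_size(x):
    """AR(1)-style adjustment n_eff = n (1 - r1) / (1 + r1).

    Negative r1 is clipped to 0 so n_eff never exceeds n (a conservative choice).
    """
    r1 = max(lag1_autocorrelation(x), 0.0)
    if r1 >= 1:
        raise ValueError("lag-1 autocorrelation of 1: no independent information")
    return len(x) * (1 - r1) / (1 + r1)

def mean_ci_halfwidth(x, adjust=True):
    """95% CI half-width for the mean, optionally using n_eff in the standard error."""
    m = mean(x)
    var = sum((a - m) ** 2 for a in x) / (len(x) - 1)
    n_used = effective_sample_size(x) if adjust else len(x)
    return NormalDist().inv_cdf(0.975) * math.sqrt(var / n_used)
```

For a persistent series, the adjusted interval is wider than the naive one, which is the point of the correction.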

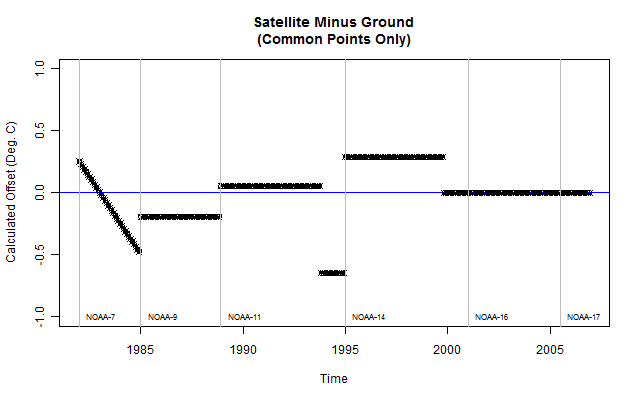

Here’s what the difference plot looks like with the satellite periods shown:

The downward trend during NOAA-7 is apparent, as is the strange drop in NOAA-11. NOAA-14 is visibly too high, and NOAA-16 and -17 display some strange upward spikes. Overall, though, NOAA-16 and -17 do not show a statistically significant difference from the ground data, so no correction was applied to them.

After confirming that other researchers had noted similar issues, I felt comfortable performing a calibration of the AVHRR data to the ground data. The calculated offsets and the resulting Wilcoxon and t-test plot are next:

To make sure that I did not “over-modify” the data, I ran a Steig (3 PC, regpar=3, 42 ground stations) reconstruction. The resulting trend was 0.1079 deg C/decade and the trend maps looked nearly identical to the Steig reconstructions. Therefore, the satellite offsets, while they do produce a greater trend when not corrected, do not seem to have a major impact on the Steig result. This should not be surprising, as most of the temperature rise in Antarctica occurred between 1957 and 1970, well before the satellite record begins in 1982.
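The per-satellite calibration described above can be sketched as follows, assuming aligned monthly lists and a `platform` label per month. The function names and the `modes` mapping are hypothetical: a constant offset (as for NOAA-9) or a fitted linear drift (as for NOAA-7) is estimated from the common points and removed, and platforms left out of `modes` (as NOAA-16 and -17 were here) pass through unchanged. This is a sketch, not the calibration code used for the post.

```python
from statistics import mean

def fit_line(ts, ys):
    """Least-squares intercept and slope of ys against ts."""
    tbar, ybar = mean(ts), mean(ys)
    sxx = sum((t - tbar) ** 2 for t in ts)
    slope = sum((t - tbar) * (y - ybar) for t, y in zip(ts, ys)) / sxx if sxx else 0.0
    return ybar - slope * tbar, slope

def calibrate(times, sat, ground, platform, modes):
    """Return a corrected copy of `sat` (None = missing).

    modes: hypothetical mapping platform -> "offset" or "linear". Corrections
    are estimated only where both sat and ground exist; platforms not listed
    are returned unchanged.
    """
    corrected = list(sat)
    for name, mode in modes.items():
        idx = [i for i, p in enumerate(platform) if p == name]
        common = [i for i in idx if sat[i] is not None and ground[i] is not None]
        diffs = [sat[i] - ground[i] for i in common]
        if mode == "offset":
            intercept, slope = mean(diffs), 0.0
        elif mode == "linear":
            intercept, slope = fit_line([times[i] for i in common], diffs)
        else:
            raise ValueError(f"unknown mode: {mode}")
        for i in idx:
            if sat[i] is not None:
                corrected[i] = sat[i] - (intercept + slope * times[i])
    return corrected
```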

II: PCA

One of the items on which we’ve spent a lot of time doing sensitivity analysis is the PCA of the AVHRR data. Between Jeff Id, Jeff C, and myself, we’ve performed somewhere north of 200 reconstructions using different methods and different numbers of retained PCs. Based on that, I believe that we have a pretty good feel for the ranges of values that the reconstructions produce, and we all feel that the 3 PC, regpar=3 solution does not accurately reproduce Antarctic temperatures. Unfortunately, our opinions count for very little. We must have a solid basis for concluding that Steig’s choices were less than optimal, not just opinions.

How many PCs to retain for an analysis has been the subject of much debate in many fields. I will quickly summarize some of the major stopping rules:

1. Kaiser-Guttman: Include all PCs with eigenvalues greater than the average eigenvalue. In this case, this would require retention of 73 PCs.

2. Scree analysis: Plot the eigenvalues from largest to smallest and retain all PCs before the point where the curve visibly flattens out (the “elbow”). This is subjective, and in this case it would require the retention of 25–50 PCs.

3. Minimum explained variance: Retain PCs until some preset amount of variance has been explained. This preset amount is arbitrary, and different people have selected anywhere from 80-95%. This would justify including as few as 14 PCs and as many as 100.

4. Broken stick analysis: Retain PCs that exceed the theoretical scree plot of random, uncorrelated noise. This yields precisely 11 PCs.

5. Bootstrapped eigenvalue and eigenvalue/eigenvector: Through iterative random sampling of either the PCA matrix or the original data matrix, retain PCs that are statistically different from PCs containing only noise. I have not yet done this for the AVHRR data, though the bootstrap analysis typically yields about the same number (or a slightly greater number) of significant PCs as broken stick.
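The three rules that work directly from the eigenvalues (1, 3, and 4) are easy to compute. This sketch assumes a list of eigenvalues from the PCA; the bootstrap rule is omitted because it needs the underlying data, and the function names are illustrative. The broken-stick expectation for the k-th of p components is b_k = (1/p)(1/k + 1/(k+1) + ... + 1/p), and the sketch retains the leading run of PCs whose share of the variance exceeds it.

```python
def kaiser_guttman(eigs):
    """Rule 1: count PCs whose eigenvalue exceeds the average eigenvalue."""
    avg = sum(eigs) / len(eigs)
    return sum(1 for e in eigs if e > avg)

def explained_variance(eigs, threshold):
    """Rule 3: smallest number of leading PCs whose cumulative share reaches `threshold`."""
    total = sum(eigs)
    cumulative = 0.0
    for k, e in enumerate(sorted(eigs, reverse=True), start=1):
        cumulative += e
        if cumulative / total >= threshold:
            return k
    return len(eigs)

def broken_stick_expected(p):
    """Rule 4: expected variance share of the k-th of p PCs for random, uncorrelated data."""
    return [sum(1.0 / i for i in range(k, p + 1)) / p for k in range(1, p + 1)]

def broken_stick(eigs):
    """Count the leading PCs whose variance share beats the broken-stick expectation."""
    ordered = sorted(eigs, reverse=True)
    total = sum(ordered)
    count = 0
    for observed, expected in zip(ordered, broken_stick_expected(len(ordered))):
        if observed / total <= expected:
            break
        count += 1
    return count
```

The threshold passed to `explained_variance` is the arbitrary preset amount mentioned in rule 3 (for example 0.8 or 0.95).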

The first 3 rules are widely criticized for being either subjective or retaining too many PCs. In the Jackson article below, a comparison is made showing that 1, 2, and 3 will select “significant” PCs out of matrices populated entirely with uncorrelated noise. There is no reason to retain noise, and the more PCs you retain, the more difficult and cumbersome the analysis becomes.

The last 2 rules have statistical justification and, not surprisingly, they are much more effective at distinguishing truly significant PCs from noise. The broken stick analysis typically yields the fewest significant PCs, but is normally very comparable to the more robust bootstrap method.

Note that all of these rules would indicate retaining far more than simply 3 PCs. I have included some references:

North_et_al_1982_EOF_error_MWR.pdf

I have not yet had time to modify a bootstrapping algorithm I found (it was written for a much older version of R), but when I finish that, I will show the bootstrap results. For now, I will simply present the broken stick analysis results.

The broken stick analysis finds 11 significant PCs. PCs 12 and 13 also come very close to the broken-stick threshold, and I suspect the bootstrap test will find that they are significant. I chose to retain 13 PCs for the reconstruction that follows.

I won’t present plots for the moment, but retaining more than 11 PCs does not end up affecting the results much at all. The trend does drop slightly, but this is due to better resolution of the Peninsula warming. The rest of the continent does not change as additional PCs are added; the only thing that changes is the time it takes to do the reconstruction.

Remember that the purpose of the PCA on the AVHRR data is not to perform factor analysis. The purpose is simply to reduce the size of the data to something that can be computed. The penalty for retaining “too many”, in this case, is simply computational time or the inability of RegEM to converge. The penalty for retaining too few, on the other hand, is a faulty analysis.

I do not see how the choice of 3 PCs can be justified on either practical or theoretical grounds. On the practical side, RegEM works just fine with as many as 25 PCs. On the theoretical side, none of the stopping criteria yield anything close to 3. Not only that, but these are empirical functions. They have no direct physical meaning. Despite claims in Steig et al. to the contrary, they do not relate to physical processes in Antarctica, at least not directly. Therefore, there is no justification for excluding PCs that show significance simply because the other ones “look” like physical processes. This latter bit is a whole other discussion that’s probably post-worthy at some point, but I’ll leave it there for now.

III: RegEM

We’ve also spent a great deal of time on RegEM. Steig & Co. used a regpar setting of 3. Was that the “right” setting? They do not present any justification, but that does not necessarily mean the choice is wrong. Fortunately, there is a way to decide.

RegEM works by approximating the actual data with a certain number of principal components and estimating a covariance from which missing data is predicted. Each iteration improves the prediction. In this case (unlike the AVHRR data), selecting too many can be detrimental to the analysis as it can result in over-fitting, spurious correlations between stations and PCs that only represent noise, and retention of the initial infill of zeros. On the other hand, just like the AVHRR data, too few will result in throwing away important information about station and PC covariance.
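To make the iteration concrete, here is a toy iterative imputation with a rank-k principal-component model, in pure Python. It is a simplified stand-in, assumed for illustration: `k` plays the role of regpar, missing values start from the column means of the observed values (the post mentions RegEM's initial infill of zeros), and there is no ridge or TTLS regularization, so it is closer in spirit to the IPCA variant than to PTTLS and is neither Schneider's RegEM nor Steig's code. `top_eigvecs` and `iterative_pca_impute` are hypothetical names.

```python
import random

def top_eigvecs(cov, k, iters=200, seed=0):
    """Leading k eigenvectors of a symmetric PSD matrix (power iteration + deflation)."""
    p = len(cov)
    a = [row[:] for row in cov]
    rng = random.Random(seed)
    vecs = []
    for _ in range(k):
        v = [rng.uniform(-1.0, 1.0) for _ in range(p)]
        for _ in range(iters):
            w = [sum(a[i][j] * v[j] for j in range(p)) for i in range(p)]
            norm = sum(x * x for x in w) ** 0.5
            if norm == 0.0:  # nothing left to extract (rank < k)
                v = [0.0] * p
                break
            v = [x / norm for x in w]
        lam = sum(v[i] * sum(a[i][j] * v[j] for j in range(p)) for i in range(p))
        vecs.append(v)
        for i in range(p):  # deflate so the next pass finds the next component
            for j in range(p):
                a[i][j] -= lam * v[i] * v[j]
    return vecs

def iterative_pca_impute(data, k, n_iter=300):
    """Fill None cells of an n x p table using a rank-k PCA fit, iterated."""
    n, p = len(data), len(data[0])
    missing = [(i, j) for i in range(n) for j in range(p) if data[i][j] is None]
    x = [row[:] for row in data]
    for j in range(p):  # initial infill: mean of the observed values in each column
        obs = [data[i][j] for i in range(n) if data[i][j] is not None]
        col_mean = sum(obs) / len(obs)
        for i in range(n):
            if x[i][j] is None:
                x[i][j] = col_mean
    for _ in range(n_iter):
        mu = [sum(x[i][j] for i in range(n)) / n for j in range(p)]
        c = [[x[i][j] - mu[j] for j in range(p)] for i in range(n)]
        cov = [[sum(c[i][a] * c[i][b] for i in range(n)) / (n - 1) for b in range(p)]
               for a in range(p)]
        vecs = top_eigvecs(cov, k)
        for i, j in missing:  # replace only the missing cells with the rank-k fit
            scores = [sum(c[i][q] * v[q] for q in range(p)) for v in vecs]
            x[i][j] = mu[j] + sum(s * v[j] for s, v in zip(scores, vecs))
    return x
```

On a small table that is exactly rank 1 after centering, with one blanked cell, the iteration recovers the blanked value; observed cells are never modified.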

Figuring out how many PCs to use (i.e., what regpar setting) is a bit trickier because most of the data is missing. Like RegEM itself, this problem needs to be approached iteratively.

The first step was to substitute AVHRR data for station data, calculate the PCs, and perform the broken stick analysis. This yielded 4 or 5 significant PCs. After that, I performed reconstructions with steadily increasing numbers of PCs and performed a broken stick analysis on each one. Once the regpar setting is high enough to begin including insignificant PCs, the broken stick analysis yields the same result every time. The extra PCs show up in the analysis as noise. I first did this using all the AWS and manned stations (minus the open ocean stations).

I ran this all the way up to regpar=20 and the broken stick analysis indicates that 9 PCs are required to properly describe the station covariance. Hence the appropriate regpar setting is 9 if all the manned and AWS stations are used. It is certainly not 3, which is what Steig used for the AWS recon.

I also performed this for the 42 manned stations Steig selected for the main reconstruction. That analysis yielded a regpar setting of 6 – again, not 3.

The conclusion, then, is similar to the AVHRR PC analysis. The selection of regpar=3 does not appear to be justifiable. Additional PCs are necessary to properly describe the covariance.
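The iterative regpar search described in this section can be sketched as a loop: raise regpar, compute the broken-stick count on each result, and stop once the count has stopped changing while regpar exceeds it. `eigs_for_regpar` is a hypothetical callable standing in for "run the reconstruction at this regpar and return the eigenvalues", and the `patience` of 3 consecutive identical counts is an assumed stopping criterion, not one stated in the post.

```python
def broken_stick_expected(p):
    """Expected variance share of the k-th of p PCs for random, uncorrelated data."""
    return [sum(1.0 / i for i in range(k, p + 1)) / p for k in range(1, p + 1)]

def broken_stick_count(eigs):
    """Number of leading PCs whose variance share beats the broken stick."""
    ordered = sorted(eigs, reverse=True)
    total = sum(ordered)
    count = 0
    for observed, expected in zip(ordered, broken_stick_expected(len(ordered))):
        if observed / total <= expected:
            break
        count += 1
    return count

def choose_regpar(eigs_for_regpar, max_regpar=20, patience=3):
    """Increase regpar until the broken-stick count settles below regpar.

    Returns the settled count, or None if it never settles by max_regpar.
    """
    history = []
    for regpar in range(1, max_regpar + 1):
        history.append(broken_stick_count(eigs_for_regpar(regpar)))
        settled = len(history) >= patience and len(set(history[-patience:])) == 1
        if settled and history[-1] < regpar:
            return history[-1]
    return None
```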

IV: THE RECONSTRUCTION

So what happens if the satellite offsets are properly accounted for, the correct number of PCs is retained, and the right regpar settings are used? I present the following panel:

(Right side) Reconstruction trends using just the model frame.

RegEM PTTLS does not return the entire best-fit solution (the model frame, or surface). It only returns what the best-fit solution says the missing points are. It retains the original points. When imputing small amounts of data, this is fine. When imputing large amounts of data, it can be argued that the surface is what is important.

RegEM IPCA returns the surface (along with the spliced solution). This allows you to see the entire solution. In my opinion, in this particular case, the reconstruction should be based on the solution, not a partial solution with data tacked on the end. That is akin to doing a linear regression, throwing away the last half of the regression, adding the data back in, and then doing another linear regression on the result to get the trend. The discontinuity between the model and the data causes errors in the computed trend.
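The splicing issue can be shown with a toy example. `splice` mimics a spliced output (observed values kept, model used only where data are missing), and the numbers are made up for illustration: when the observations sit at an offset from the model, the step at the splice point changes the fitted trend even though the model itself has not changed. The direction of the bias in real data depends on the sign of that offset.

```python
def ols_slope(ts, ys):
    """Ordinary least-squares slope of ys against ts."""
    n = len(ts)
    tbar = sum(ts) / n
    ybar = sum(ys) / n
    sxx = sum((t - tbar) ** 2 for t in ts)
    return sum((t - tbar) * (y - ybar) for t, y in zip(ts, ys)) / sxx

def splice(observed, model):
    """Keep observed values; use the model only where observations are missing (None)."""
    return [m if o is None else o for o, m in zip(observed, model)]
```

With a model of `0.01 * t` over 20 steps and observations equal to the model plus 0.5 over the second half only, `ols_slope` on the spliced series is larger than on the model frame.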

Regardless, the verification statistics are computed vs. the model – not the spliced data – and though Steig did not do this for his paper, we can do it ourselves. (I will do this in a later post.) Besides, the trends between the model and the spliced reconstructions are not that different.

Overall trends are 0.071 deg C/decade for the spliced reconstruction and 0.060 deg C/decade for the model frame. This is comparable to Jeff’s reconstructions using just the ground data, and as you can see, the temperature distribution of the model frame is closer to that of the ground stations. This is another indication that the satellites and the ground stations are not measuring exactly the same thing. It is close, but not exact, and splicing PCs derived solely from satellite data onto a reconstruction where the only actual temperatures come from ground data is conceptually suspect.

When I ran the same settings in RegEM PTTLS – which only returns a spliced version – I got 0.077 deg C/decade, which checks nicely with RegEM IPCA.

I also did 11 PC, 15 PC, and 20 PC reconstructions. Trends were 0.081, 0.071, and 0.069 deg C/decade for the spliced reconstructions and 0.072, 0.059, and 0.055 deg C/decade for the model frame. The reason for the reduction in trend was simply better resolution (less smearing) of the Peninsula warming.

Additionally, I ran reconstructions using just Steig’s station selection. With 13 PCs, this yielded a spliced trend of 0.080 deg C/decade and a model trend of 0.065 deg C/decade. I then ran one after removing the open-ocean stations, which yielded 0.080 and 0.064 deg C/decade, respectively.

Note how when the PCs and regpar are properly selected, the inclusion and exclusion of individual stations does not significantly affect the result. The answers are nearly identical whether 98 AWS/manned stations are used, or only 37 manned stations are used. One might be tempted to call this “robust”.

V: THE COUP DE GRACE

Let us assume for a moment that the reconstruction presented above represents the real 50-year temperature history of Antarctica. Whether this is true is immaterial. We will assume it to be true for the moment. If Steig’s method has validity, then, if we substitute the above reconstruction for the raw ground and AVHRR data, his method should return a result that looks similar to the above reconstruction.

Let’s see if that happens.

For the substitution, I took the ground station model frame (which does not have any actual ground data spliced back in) and removed the same exact points that are missing from the real data.

I then took the post-1982 model frame (i.e., the version with the lowest trend) and substituted that for the AVHRR data.

I set the number of PCs equal to 3.

I set regpar equal to 3 in PTTLS.

I let it rip.

Look familiar?

Overall trend: 0.102 deg C/decade.

Remember that the input data had a trend of 0.060 deg C/decade, showed cooling on the Ross and Weddell ice shelves, showed cooling near the pole, and showed a maximum trend on the Peninsula.

If “robust” means the same answer pops out of a fancy computer algorithm regardless of what the input data is, then I guess Antarctic warming is, indeed, “robust”.
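The pseudo-data test in this section follows a simple pattern that can be sketched generically: mask a known field with the real data's missing-value pattern, run the method, and compare the trend it returns with the known trend. In this sketch `method` is a hypothetical callable standing in for the full PCA + RegEM pipeline (including the substitution of the model frame for the AVHRR data), fields are time × station lists, and the trend is taken from the station-average series; none of these names come from the post's code.

```python
def apply_missing_pattern(truth, real):
    """Blank out (None) every cell of `truth` that is missing in `real` (time x station)."""
    return [[t if r is not None else None for t, r in zip(trow, rrow)]
            for trow, rrow in zip(truth, real)]

def spatial_mean_trend(field, times_yr):
    """OLS slope, in units per decade, of the station-average series (no gaps allowed)."""
    series = [sum(row) / len(row) for row in field]
    n = len(times_yr)
    tbar = sum(times_yr) / n
    ybar = sum(series) / n
    sxx = sum((t - tbar) ** 2 for t in times_yr)
    slope = sum((t - tbar) * (y - ybar) for t, y in zip(times_yr, series)) / sxx
    return 10 * slope

def pseudo_data_test(truth, real, times_yr, method):
    """Run `method` (masked field -> complete reconstruction) on masked pseudo-data.

    Returns (trend of the known field, trend of the reconstruction).
    """
    masked = apply_missing_pattern(truth, real)
    recon = method(masked)
    return spatial_mean_trend(truth, times_yr), spatial_mean_trend(recon, times_yr)
```

A method that is faithful to its input should return a trend close to the first value; a large gap between the two is the kind of result described above.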

———————————————

Code for the above post is HERE.

I thought I had a vague idea of what was being said until I read “Bootstrapped eigenvalue and eigenvalue/eigenvector” and realised the whole thing is the world’s most complex ever anagram competition.

Wow – I am thoroughly impressed!

Ryan, I’m sure that the critics will be all over this, but after reading all the comments on Steig et al. on CA: well done, sir! As esoteric as some of this is, I can still follow it.

Keep this up and WUWT will be the best science blog for a second year.

It would be interesting to hear Eric Steig’s comments on all this. He must surely be back from his 3 months in Antarctica now. Perhaps you could ask him.

Great work! I hope you plan on submitting this for publication to the same folks that so eagerly offered Steig et al. a soapbox to trumpet their obviously less worthy work. Of course, if they actually do publish it, I’ll owe you a six-pack of Guinness, ’cause I’m betting their bias won’t allow them to do it.

It’ll be interesting to see if Steig can mount a defense of using only 3 PCs. I’m betting he won’t even bother — either ignore you or reply ad hominem. The intellectual community will, despite their biases, step up (I think/hope).

When this paper by Steig et al. was first being discussed I think I wrote something to the effect that in some types of analyses (socio/economic) where the first few components do have a meaningful interpretation one should go beyond those with caution, but in this case it shouldn’t matter. That was meant to imply that one should use all that helped in the reconstruction. Many years have passed since I’ve worked with such techniques (punched cards on an IBM 360) but I’ve retained just enough to appreciate what you have done. You have done an amazing amount of work. Also, I’m amazed that I can follow along. Must be your excellent writing skills. Good job, John.

Excellent job on a herculean task!!! What we need is a brave journalist to risk his livelihood to hand this to the editor/producer. I happen to know the planet is cooling. Last night I awoke to find 2 feet of ice in my bed. Both of them belonged to my wife. 8^]

OT, Just on Icecap

http://icecap.us/images/uploads/GlobalWarmingMyth.pdf

Somewhere in all this there must be a joke about how many PhDs it takes to read a thermometer.

Why is measuring temperature such a pseudoscience? If an accurate answer is so important, let’s develop some new temp stations, recalibrate them once or twice a year, and take an average temp. Remove the outliers and voilà, we have the answer to whether it is getting colder or hotter over some arbitrary timespan. Let’s find a way to calibrate the satellite data with high-altitude balloons or something.

To me, if you have to apply a multitude of algorithms to the data, it is not even worth using. We are ultimately talking about measuring temps, not tweaking an atom smasher to recreate the big bang. Why is this so hard?

Steig had a reputation of being a nice guy but hanging around Michael Mann has tarnished his reputation.

I think it should be added that there is cooling instead of warming, if the starting date is shifted a few years forward, falsifying the CO2 thesis even more drastically.

@FatBigot (21:19:30):

I believe the Jackson article referenced in the text is: Stopping Rules in Principal Components Analysis: A Comparison of Heuristical and Statistical Approaches, Donald A. Jackson (1993) which corresponds to the referenced url: http://labs.eeb.utoronto.ca/jackson/pca.pdf.

FatBigot (21:19:30) :

I thought I had a vague idea of what was being said until I read “Bootstrapped eigenvalue and eigenvalue/eigenvector” and realised the whole thing is the world’s most complex ever anagram competition.

I’ve always had trouble with eigenvalues, too. I do remember that in “No Way Out” while looking for who killed Gene Hackman’s mistress, the CIA took a throwaway Polaroid backpaper, kicked up the eigenvalue, and came up with a studio quality photo of Kevin Costner. Impressive technology.

This is similarly a great piece of crime scene investigation.

It’s nice to know we have a few folks who aren’t gee-whizzed by the “real” scientists’ techspeak. Excellent work, Jeff, Jeff, and Ryan.

After the Seattle Times ran a story giving coverage to this fiasco (Steig et al.), I emailed both the paper and the “scientist”, protesting the very unscientific methods and conclusion of this “piece of work”. I received no response from the individual, and the Times editor ignored my email.

This man gives computer science and the state of Washington no service.

Perhaps readers here would like to drop a postal mail to the individual and invite him to come here and defend his “slop”?

Eric J. Steig

Department of Earth and Space Sciences and Quaternary Research Center, University of Washington, Seattle, Washington 98195

Saw a report yesterday in the UK about climate protestors in Washington, angry about coal as a fuel, who were trying to close down a power generation plant. James Hansen was amongst them.

I noticed that there was snow in Washington. Was this recent? Is snow in mid-May usual in this part of the world?

If an accurate answer is so important lets develop some new temp stations recalibrate them once or twice a year and take an ave temp.

No, it’s far more important to spend that huge pile of money they just got to spruce up the home office in Asheville.

Great post. Of course what was done at the end should have been the very first step performed by the Steig team. “If we create a plausible data set, then run our algorithms on it, do we more or less get a summary of the original data set back out”. That is science. Instead it went through the meat grinder and came out with the answer they wanted and that of course was enough to get it published.

While looking at previous posts on this, a key issue identified was that 35% of the weather stations were in the peninsula which is 5% of the land mass. We know that the peninsula is warming for other (including Urban Heat Island) effects. Another issue was that four weather stations on offshore islands were thrown in for good measure. As I understood it, the approach of Steig was to essentially pretend that the weather stations were evenly spread.

This of course seems like a nonsensical approach to the average layman. Many posters here likened it to trying to map the climate history of the United States based on temperatures in Florida.

So this gets me to the question. This article would seem to imply that the underlying methodology is sound, but that the statistical manipulation is wrong.

… or are both the underlying methodology and the statistical manipulation wrong?

In any case, incredible work.

As suspected it is the Steig methodology that is flawed.

Well done to everyone for a great effort.

Anthony should consider giving you guys a medal for this work.

Impressive on all fronts – I hope this work can find its way to publication.

So is Steig’s work/publication in Nature now falsified? This is really the crucial question.

I haven’t a clue what most of that means and my eyes began to glaze over after the first couple of graphs. However, I was able to conclude that the warmists have been caught cooking the books yet again.

Well done!

Fantastic work! Now if only the Establishment would admit this work even exists. I guess no chance of getting Nature to retract Steig’s paper….

@VG

IMHO it puts Steig’s work in nearly as bad light as Mann’s infamous hockey stick. Which is to say it’s a badly done analysis, which tends to give the Alarmists the answer they want (i.e. more warming than is likely to have actually occurred).

Whether or not this was intentional, one can only speculate. I guess Steig’s response (or lack thereof) will determine that.