A guest post by Jeff Id

Well, John Christy gave me a lot to think about in satellite temp trends as far as an improved correction over my last post. Steve McIntyre pitched in some comments as well. It is going to take me a bit to work out the details, but I think I can produce a more accurate slope than in my last posts. In the meantime, I downloaded sunspot numbers from NASA.

Cycles are interesting things. There are endless cycles in nature: orbits, ocean temp shifts, solar cycles, magnetic cycles. The examples are everywhere. What makes a cycle unusual is also an interesting topic. Some solar scientists have claimed that our current solar cycle is not unusual by the record. They are certainly the experts, but recently the experts have been forced to update their predictions for the next solar cycle.

Well, I’m no expert on the sun but I do find the data regarding sunspots interesting, particularly the fact that we are again in at least a short term cooling at the same time sunspots and solar magnetic levels have plunged.

Here’s an article from our all-understanding US government.

What’s Wrong with the Sun? (Nothing)

And a few beginning lines.

July 11, 2008: Stop the presses! The sun is behaving normally.

So says NASA solar physicist David Hathaway. “There have been some reports lately that Solar Minimum is lasting longer than it should. That’s not true. The ongoing lull in sunspot number is well within historic norms for the solar cycle.”

Cool picture …….

See where the tiny little 2009 tick is? We should be increasing now and well on our way by 2010. By the way, this is an updated graph from the original prediction.

Hathaway said, well within historic norms. Forecasting is the most dangerous sport, but I am as curious about this claim as any; he is the expert after all. Here’s a plot of the sunspot data from NASA NOAA numbers.

I did a sliding slope fit to the data to find where the slope shifted from negative to positive in each cycle, and placed a red line above each point identified. These points are not intended to mark the beginning of a cycle (that is for the experts) but rather to be a consistent, software-identified point between each pair of cycles.

The red lines represent solar minima. The only line which may not be a minimum is the most recent, in Jan 09, which we need as a reference for how unusual current solar activity is.
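For anyone who wants to try the idea, here is a minimal sketch of a sliding slope fit. It is not the code used for the plot above: the 25-month window, the function names and the synthetic 11-year cosine standing in for the monthly sunspot series are all assumptions made for illustration.

```python
import numpy as np

def sliding_slopes(t, y, window):
    """Least-squares slope of y against t in a centred window around each sample."""
    half = window // 2
    slopes = np.full(len(y), np.nan)  # edges stay NaN: no full window there
    for i in range(half, len(y) - half):
        sl = slice(i - half, i + half + 1)
        # Fit a straight line; subtracting t[i] keeps the fit well conditioned.
        slopes[i] = np.polyfit(t[sl] - t[i], y[sl], 1)[0]
    return slopes

def slope_minima(t, y, window):
    """Times where the windowed slope flips from negative to positive."""
    s = sliding_slopes(t, y, window)
    with np.errstate(invalid="ignore"):  # NaN edges simply compare as False
        flips = np.where((s[:-1] < 0) & (s[1:] >= 0))[0] + 1
    return t[flips]

# Synthetic stand-in for the monthly sunspot record (assumed, not real data):
# an 11-year cycle with minima at 1900, 1911, 1922, ...
t = 1900 + np.arange(0, 50 * 12) / 12.0
y = 80 * (1 - np.cos(2 * np.pi * (t - 1900) / 11.0))

minima = slope_minima(t, y, window=25)
print(np.round(minima, 2))
```

On real, noisy sunspot numbers the window would need to be much wider than 25 months (or the data smoothed first), otherwise every wiggle produces a sign change.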

Below is a list of the years the red lines are centered on.

1755.667, 1766.250, 1775.583, 1784.500, 1798.167, 1810.583, 1823.167, 1833.833, 1843.833, 1856.167, 1867.167, 1878.750,

1889.500, 1901.750, 1913.167, 1923.417, 1933.750, 1944.167, 1954.250, 1964.833, 1976.250, 1986.250, 1996.417, 2009.041

The years between successive minima are currently

10.583, 9.333, 8.916, 13.666, 12.416, 12.583, 10.666, 10.000, 12.333, 11.000, 11.583, 10.750, 12.250, 11.416, 10.250, 10.333,

10.416, 10.083, 10.583, 11.416, 10.000, 10.166, 12.625

So far only one solar cycle has exceeded the length of the current one. That cycle ran extra long (13.67 years), from 1784 to 1798, and was the last cycle leading into the Dalton Minimum.
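The interval list is just the difference between neighbouring entries in the list of minima, so it is easy to check. A short sketch using the dates listed above (the variable names are mine):

```python
import numpy as np

# Slope-identified minima, copied from the list above (decimal years).
minima = np.array([
    1755.667, 1766.250, 1775.583, 1784.500, 1798.167, 1810.583,
    1823.167, 1833.833, 1843.833, 1856.167, 1867.167, 1878.750,
    1889.500, 1901.750, 1913.167, 1923.417, 1933.750, 1944.167,
    1954.250, 1964.833, 1976.250, 1986.250, 1996.417, 2009.041,
])

lengths = np.diff(minima)  # years between successive minima

# Show the three longest intervals, longest first.
for i in np.argsort(lengths)[::-1][:3]:
    print(f"{minima[i]:.3f} -> {minima[i + 1]:.3f}: {lengths[i]:.3f} years")

# How many completed intervals are longer than the current (last) one?
longer_than_current = int(np.sum(lengths[:-1] > lengths[-1]))
print("intervals longer than the current one:", longer_than_current)
```

The last digit can differ by one from the list in the post because the post appears to truncate rather than round.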

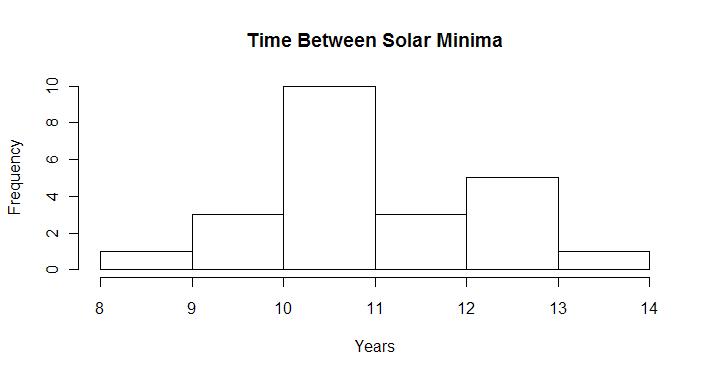

A histogram of the distribution of the time between solar cycles looks like this.

The standard deviation of the total record is 1.18 years and the mean is 11.01. Well, there’s the eleven year solar cycle we hear about.

A two sigma (two standard deviation) difference from the mean corresponds to roughly 95% certainty that something unusual is going on in our current situation. The numbers this year at mid Jan correspond to about 1.37 sigma against the full record, which is getting close. But that’s not the end of the story. After all, I just included the Dalton Minimum cycles in the data, right after we identified the solar cycle prior to the Dalton Minimum as the one with the longest time span on record. That means I treated it as though it were a normal event. Well, I do believe (on faith in nature) this length is normal; the sun isn’t doing anything different from before. But there is only one of these long events on record, and were we to look for a similar event it would be stupid to include it in the standard deviation dataset. We should only look at data which is not related to another potential Dalton Minimum. From Figure 2 this would be after the Dalton Minimum and before the present day (from 1833 – 1996).

The standard deviation of the cycle lengths after the Dalton Minimum (1833) and before 2009 was only 0.79 years. The average Jeff Id solar cycle in the same period is 10.83 years. This puts the two sigma limits of the solar cycle at 9.26 years on the short side and 12.42 years on the long side.
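The numbers above can be reproduced from the list of minima. The sketch below rests on two assumptions of mine: that the full-record figures include the still-open current interval, and that the sample standard deviation (`ddof=1`) is the one quoted. Both choices land on the figures in the text to within rounding.

```python
import numpy as np

# Slope-identified minima from the list earlier in the post (decimal years).
minima = np.array([
    1755.667, 1766.250, 1775.583, 1784.500, 1798.167, 1810.583,
    1823.167, 1833.833, 1843.833, 1856.167, 1867.167, 1878.750,
    1889.500, 1901.750, 1913.167, 1923.417, 1933.750, 1944.167,
    1954.250, 1964.833, 1976.250, 1986.250, 1996.417, 2009.041,
])
lengths = np.diff(minima)
current = lengths[-1]  # 1996.417 -> 2009.041, still running

# Full record, current interval included (assumption, see lead-in).
full_mean = lengths.mean()
full_sd = lengths.std(ddof=1)
z_full = (current - full_mean) / full_sd

# Reference period: intervals that start after 1833 and end before 2009.
in_ref = (minima[:-1] >= 1833) & (minima[1:] < 2009)
ref = lengths[in_ref]
ref_mean = ref.mean()
ref_sd = ref.std(ddof=1)
lower, upper = ref_mean - 2 * ref_sd, ref_mean + 2 * ref_sd

print(f"full record: mean {full_mean:.2f}, sd {full_sd:.2f}, current at {z_full:.2f} sigma")
print(f"1833-1996:   mean {ref_mean:.2f}, sd {ref_sd:.2f}")
print(f"two sigma limits: {lower:.2f} to {upper:.2f} years, current {current:.2f}")
```

With only 15 intervals in the reference period, the 95% figure attached to two sigma is a rough guide rather than a precise probability.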

Of course, this puts the current solar cycle, by my reasonable analysis, outside the normal range of the last 176 years at the two sigma (95%) level: 12.6 years has crossed the limit. With little sign of the next cycle beginning yet, this might get worse. I tell you what, I prefer the taxes from global warming to the cost of glaciers in my yard; it seems like a balance of evils to me. I hope this solar cycle changes soon, but we can no more affect the sun with a dance than we can affect global warming with a tax, so what choice do we have?

In Dr. David Hathaway’s defense, he made his statement above in July which put the current minimum at 2008.583 which comes to 12.166 years and just inside the 95% two sigma certainty of 12.42.

Now that we’re at 12.6, I wonder if they’ll extend the predictions for the beginning of the next cycle again.

matt v. (08:39:23) : I like your analysis / prediction [or should that be “projection” as per IPCC?]. Regarding your “in my opinion it is not the sun alone or the oceans alone that drive the weather. it is their interaction together” – yes I agree, but what is really crucial for climate is the total heat content of the oceans. If the IPCC is correct, then atmospheric CO2 is continually causing heat to be retained by the planet, and the only realistic place that the heat can be stored is in the oceans. Stuff can slosh around all over the planet, changing the weather, the “global surface temperature”, sea surface temperature, atmospheric temperatures, regional temperatures, etc, etc, but the total heat content of the oceans MUST increase.

We now have peer-reviewed scientific papers (AGWers please note : peer-reviewed, so they must be true) that show (a) oceans cooling (in total not just surface) and (b) albedo increasing to match. IMHO the inference is very clear :

1. Something drives albedo.

2. Albedo drives ocean heat content.

3. Ocean heat content is a driver of climate.

In 2. there is no timelag. In 3 there can be.

Links to the two papers are :

albedo : http://start.org/journals/pip/jd/2008JD010734-pip.pdf

oceans : http://sciences.blogs.liberation.fr/home/files/Cazenave_et_al_GPC_2008.pdf

But arising from the papers alone (ie, not relying on my analysis) is the inescapable conclusion that the use of “cloud feedback” by the IPCC [IPCC Report AR4 8.6.2.3] to provide 40% of global warming via ECS (Equilibrium Climate Sensitivity) is just plain wrong.

Jeff Id (13:32:54) : “I’d like to try and reproduce those greenhouse calculations for my own understanding.”

Please do, the sooner the better. The more ways we can find of getting away from fake science the better. But to my mind, the recent findings re albedo and oceans (as above) already destroy the AGW argument. Not that they should have been needed, as many scientists have already demolished AGW from all sorts of different angles – but for some reason no-one will listen.

George E. Smith,

I want to find the wavelength absorption data in a series for gaseous CO2, methane, air and water vapor. I am familiar with the vibration mode analysis for absorption as you described but am hoping for measured data. I like to get a feel for the magnitude of numbers and without these curves it is difficult for me to understand the true magnitude of energy increase by CO2 (I’m not trusting other peoples numbers). I think the calcs will be a little tricky but I have some ideas on how I would like to handle it. Only trouble is, finding a source is difficult.

Some other posters left links which I looked at but haven’t thoroughly gone through (I will, my thanks to them). I didn’t see any data series jump out, only graphs. If you have an idea, it would be appreciated.

Leif said “Yes, this is well-understood and monitored. The total power input [includes flares and CMEs] is of the order of tens of GigaWatt, which is about a million times less than what TSI gives.”

Leif,

My original question for calculations was not for solar wind input but for induced earth currents, telluric currents, and how those currents are impacted by changes in solar output.

I agree that, relative to TSI, the power input may be considered negligible, but only if you are modeling that input in the same way you model radiative heating. However, a small input of energy can be amplified, much like pushing a rock over a ledge. Small changes in solar input could greatly magnify the energy of telluric currents, because the energy due to telluric currents would include not just solar input effects on magnetic fields but would couple that change to the mechanical energy from the earth’s rotation, ocean currents, etc. It is then transformed to electrical energy, analogous to a cold mountain stream being able to burn your toast by transforming mechanical energy into electrical.

From everything I can read there is great difficulty in measuring telluric currents on a global scale due to the vast variations in topography and conductance as well as impacts of drought, etc. It seems that after a spurt of inquiry into telluric currents in the late 19th and early 20th century, the difficulty of modeling these currents has relegated research to engineering problems of protecting cables, pipelines and grids.

I was hoping that your interest in solar impacts on climate would have examined the effect of such external source variations on telluric currents and they might have been recently modeled. But judging from your answer I assume that has not been done. However if I assume incorrectly I would greatly appreciate any links to that research.

“” Jeff Id (13:39:08) :

George E. Smith,

I want to find the wavelength absorption data in a series for gaseous CO2, methane, air and water vapor. I am familiar with the vibration mode analysis for absorption as you described but am hoping for measured data. I like to get a feel for the magnitude of numbers and without these curves it is difficult for me to understand the true magnitude of energy increase by CO2 (I’m not trusting other peoples numbers). I think the calcs will be a little tricky but I have some ideas on how I would like to handle it. Only trouble is, finding a source is difficult.

Some other posters left links which I looked at but haven’t thoroughly gone through (I will, my thanks to them). I didn’t see any data series jump out, only graphs. If you have an idea, it would be appreciated. “”

Well Jeff, that is the $64 question; I have frustrated myself over the very same considerations. The problem is that it is difficult to calculate such things at a fundamental level, and actual measurements have to be made under some specific experimental conditions.

If I were designing an RF amplifier for the front end of a new HD television to receive broadcast signals, I know that I can obtain a signal generator that is capable of producing controlled monochromatic signals at any and every frequency in the range that I need for my amplifier, so bench testing the performance is relatively straightforward.

The trouble is we can’t very easily make a “signal generator” that we can tune over the range of from say 0.1 micron wavelength to 100 micron wavelength (3000-3 THz).

And I can buy an oscilloscope that is capable of viewing the signals over the whole TV range, but there is no such thing as a detector for that whole range, that is of interest in climate physics.

Nuclear physicists are used to specifying the probabilities of nuclear “reactions” in terms of “cross sections”. In the case of a nitrogen atom’s response to an incoming high energy proton or maybe a neutron, they paint a target of a certain size centered on the atom; if the incoming particle hits that target, the reaction happens, but if it misses, the reaction doesn’t happen. They specify the area of those cross section targets in “barns”; one barn is 10^-24 sq cm. Yes, literally it derives from hitting the side of a barn.

Now in principle, the question of an IR thermal energy photon being captured by a CO2 molecule and exciting one of the known modes of vibration can also be specified as a cross section. Then from the density of CO2 molecules in the atmosphere, and the size of the bullseye, you can calculate the probability of absorption of that energy in a certain path length. But those things are usually measured as an absorption coefficient, in an exponential absorption equation, where the flux decays as e^(-alpha x), where alpha is the absorption coefficient.

That simple equation works in things like optical color filter glasses, where energy once absorbed is turned completely into local heating, and the transmission can be calculated easily for any thickness x.
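As a rough sketch of how the cross section picture connects to the exponential equation: the absorption coefficient is the number density of absorbers times the cross section, and the transmitted fraction over a path x is e^(-alpha x). The density and cross section below are made-up round numbers for illustration, not measured CO2 values, and the function names are hypothetical.

```python
import math

BARN_CM2 = 1e-24  # one barn in square centimetres

def absorption_coefficient(number_density_cm3, cross_section_cm2):
    """alpha = n * sigma, in units of 1/cm."""
    return number_density_cm3 * cross_section_cm2

def transmission(alpha_per_cm, path_cm):
    """Fraction of the flux that survives a path of length x: exp(-alpha * x)."""
    return math.exp(-alpha_per_cm * path_cm)

# Hypothetical illustrative inputs (assumed, not measured).
n = 1.0e16               # absorbing molecules per cubic cm
sigma = 1.0e4 * BARN_CM2 # cross section of 10,000 barns = 1e-20 sq cm
alpha = absorption_coefficient(n, sigma)

for x_cm in (1.0e2, 1.0e4, 1.0e5):
    print(f"path {x_cm:9.0f} cm: transmitted fraction {transmission(alpha, x_cm):.4f}")
```

This only covers the pure-absorption case; it says nothing about re-emission or line broadening, which is where the real difficulty lies.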

It gets complicated though if the glass happens to be fluorescent, so even though it absorbs the original photon, it subsequently emits a lower energy longer wave photon, instead of making nothing but heating effects.

Same thing happens in gases, where the excited gas molecule can re-emit virtually the same photon it absorbed and return to the original state. Sometimes, particularly in atomic spectra, that re-emission may be prohibited by various rules, so the probability of it happening may be very low. But the excited molecule will usually collide with some ordinary molecule of the air, and some sort of maybe elastic collision will occur, with an energy re-distribution that depends on the species, and also the collision geometry.

These collisions result in a distribution of molecular velocities, which end up shifting the wavelength of photons that can be absorbed or emitted, and these result in broadening of the intrinsically narrow molecular absorption line.

The first atomic clock that was successful used the ammonia molecule, which has a triangle of hydrogens with a nitrogen at the top of the tetrahedron. The nitrogen is capable of popping through the H triangle and ending up at the bottom of an inverted tetrahedron, and that mode of vibration is excited by a microwave frequency which is known very accurately. But such events are performed in a very low density of ammonia molecules, or better yet in a controlled molecular beam, where molecular collisions are virtually eliminated. Then the intrinsic width of the ammonia line is extremely narrow, making for a very accurate atomic clock. Of course modern cesium or rubidium oscillators have replaced ammonia, and hydrogen and various laser sources promise even more accurate clocks.

In the hustle and bustle of the atmosphere, the CO2 line is broadened out to something like 13.5 to 16.5 microns, due to the effects of collisions (pressure) and temperature, which affects both collision rates and molecular velocities, which Doppler modulate the range of wavelengths that can be absorbed or emitted from the CO2.

Running outside to your barbecue patio to read the official thermometer is one thing, and as Anthony has shown us, it is difficult to get right.

But developing fundamental reaction parameters is a very tedious laboratory process, and you don’t do it unless you really want to know the answer in a big way.

The Military is reasonably happy with the results that can be measured out in an actual environment, along a horizontal path, because mostly what they want to know is signal propagation ranges in infrared sensing systems.

Anyhow, I too have looked for fundamental absorption parameters and such, for CO2 or even water vapor, and it is out there somewhere but hard to locate. As I said, Jim Peden sent me what are the best graphs I currently have, which are themselves very illuminating when you compare the water and the CO2 spectra.

George

gary gulrud (12:33:08) :

Our luminary so effectively argued […]

So much for refusing to accommodate compromise.

My discussion was about the physics and natural phenomena. Yours seems to have taken on an odious ad-hom tone. You should be ashamed of yourself.

vukcevic (12:28:57) :

Despite a strong disagreement on the matter with Dr. Svalgaard I believe that Hale cycle is only a surface (top layers) effect.

First, let’s stop talking about the ‘Hale’ cycle. There is no Hale cycle. You can arbitrarily consider cycle N and N+1 to be two parts of a 22-year cycle, but so is also the other arbitrary pairing N and N-1. The 22-year cycle is not a ‘physical’ cycle.

Second, the Babcock dynamo that is the basis for my prediction is a shallow dynamo. There are two shear zones [where dynamos can operate] in the interior: one at the bottom of the convection zone [deep dynamo, long magnetic memory] and one just below the surface [shallow dynamo, short magnetic memory]. ‘Mine’ is the shallow one.

Reversal of the polar fields is a surface process in any case [irrespective of where the dynamo is].

Jim Steele (13:39:41) :

induced earth currents, telluric currents, and how those currents are impacted by changes in solar output.

The telluric currents are induced in the Earth by the ionospheric currents via an electromagnetic field propagated in the space between the ionosphere and the Earth’s surface, like a guided wave between two conducting plates. As such the telluric currents ‘follow’ the ionospheric ones [their magnetic effect being about half of that from above]. Since the subsurface conductivity varies a lot more than the ionospheric conductance, the telluric currents are more irregular [can actually be used to probe the conductivity and are therefore useful prospecting tools]. As far as the impact of changes in the solar output, the telluric currents just follow the external currents. They do not ‘live their own lives’, so to speak.

Robert Bateman (09:27:47) :

any word on the Gauss strength of 11010?

From the man himself: 1969 G, umbral intensity 0.85

Leif Svalgaard (10:29:37) :

Even if we count from previous maximum, SC22 produced spots 19 years later, SC21 produced spots 20 years later, SC20 produced spots 20 years later, SC19 produced spots 20 years later, etc. Being factually correct beats creative thinking every time.

I might wait for Dr. Archibald’s response.

Jim Steele (13:39:41) :

But judging from your answer I assume that has not been done. However if I assume incorrectly I would greatly appreciate any links to that research.

They have. The easiest for me is simply to ask you to do a Google search on: mantle conductivity magnetic

George E. Smith

“But those things are usually measured as an absorption coefficient, in an exponential absorption equation, where the flux decays as e^-alpha x, where alpha is the absorption coefficient.”

This is what I want to find

“That simple equation works in things like optical color filter glasses, where energy once absorbed is turned completley into local heating, and the transmission can be calculated easily for any thickness x .”

As I said, I work in optics and have designed dielectric optical coatings, ray trace software, non-imaging lenses, vision systems, worked with multiple forms of interferometry and dozens of other optics related works. I am familiar with the “signal generator and detector requirements”. I am also intimately familiar with phosphors and the absorption/re-emission problem.

The re-emission is something I haven’t solved in the puzzle (besides finding the data). If I can treat it as a bulk material, a greybody emission might be enough due to the thermal transfer between molecules (there are references which indicate the vast majority of the re-emission from CO2 is through collisions), but I need to do more work on that.

I have seen several absorption curves in other articles as generated by tunable sources, I just can’t find the raw data assembled into a continuous curve. If it isn’t available I can use the calculated curves (no data there either).

I don’t plan to make a full climate model but my thought is, I can make a 3D finite element version of the atmosphere to perform a monte-carlo raytrace analysis which will give an idea of the actual improvement in energy absorption by CO2. A basic version would be able to take into account atmospheric density and moisture level, then I could add in some feedback and other things just to explore the net energy difference.

If nothing else, it would make an interesting blog post.
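To give a flavour of the Monte Carlo idea, here is a drastically reduced stand-in: one vertical column instead of a 3D mesh, a single wavelength, absorption only, and an exponential density profile. Every number in it (surface absorption coefficient, scale height, top of atmosphere) is an assumed placeholder, and re-emission is deliberately left out.

```python
import numpy as np

def column_optical_depth(alpha0_per_km, scale_height_km, top_km):
    """Vertical optical depth when alpha(z) = alpha0 * exp(-z / H), integrated 0..top."""
    return alpha0_per_km * scale_height_km * (1.0 - np.exp(-top_km / scale_height_km))

def mc_absorbed_fraction(tau_total, n_photons, rng):
    """Each photon travels an optical depth drawn from Exp(1) before being absorbed.

    It is absorbed inside the column if that depth is less than the column total;
    otherwise it escapes out of the top.
    """
    tau_travelled = rng.exponential(1.0, size=n_photons)
    return float(np.mean(tau_travelled < tau_total))

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

# Placeholder inputs (assumed for illustration only).
tau = column_optical_depth(alpha0_per_km=0.05, scale_height_km=8.0, top_km=100.0)

mc = mc_absorbed_fraction(tau, 200_000, rng)
exact = 1.0 - np.exp(-tau)  # closed-form answer for the same setup

print(f"optical depth {tau:.3f}: Monte Carlo {mc:.4f}, analytic {exact:.4f}")
```

In this toy case the closed-form answer makes the random sampling unnecessary; the sampling only earns its keep once angles, moisture, wavelength dependence and re-emission are added.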

Thank you, Leif. That’s getting close to 1500, and I’ll assume that was on the best day or somewhere near it.

1969 G and .85 Umbral are right on track with Livingston & Penn Fig.3 & 4.

Robert Bateman (19:54:58) :

I’ll assume that was on the best day or somewhere near it.

The average of the first two days. The third day was very difficult to get a good measurement.

E.M.Smith

Re burning a steak. I hope you were joking since steak is primarily made up of hydrocarbons which, if heated sufficiently in the presence of oxygen, will definitely burn.

George E. Smith

I don’t suppose you’re the same George E. Smith who invented the CCD cameras I have used so many of?

“have taken on an odious ad-hom tone. You should be ashamed of yourself.”

Au contraire, good Dr. The issue is whether the solar minimum is indeed a deterministic identification. Here at WUWT, on or about July 27 of last year, at the halfway point of your local minimum in SS count and radio flux, both you and Janssens argued the continued viability of the March ’08 minimum.

If indeed the smoothed SS count for succeeding months had risen and stayed above 5, March would have been ‘the’ minimum. But this was by then a very long shot and the gambit relied, in your expert opinions, on ancillary tangibles.

Second, the geomagnetic indices were inarguably of no use whatever at that point in establishing ‘the’ minimum because they are likewise dependent on another cause. Near zero they and SS counts are not meaningfully related.

actuator (21:14:11) :

E.M.Smith

Re burning a steak. I hope you were joking since steak is primarily made up of hydrocarbons which, if heated sufficiently in the presence of oxygen, will definitely burn.

I was joking but … the lean portions are mostly water… 75% or so. See:

http://www.fsis.usda.gov/Factsheets/Water_in_Meats/index.asp

Maybe the steaks you buy are marbled enough to catch fire and burn, but mine just char and sneer at me even on the BBQ 😉

Leif Svalgaard (05:08:38) :

E.M.Smith (02:00:30) :

My best guess so far is a composite of the Svalgaard GCR thesis

Svensmark, not me, please.

Sorry… brain thought Svensmark, fingers typed what they had seen most of recently… wish I knew why that happens. (The form of the error is called “Proximity Capture” and is why you sometimes find yourself headed somewhere familiar like home or work, when you really were headed somewhere near there.)

Mike J (12:44:59) :

We now have peer-reviewed scientific papers (AGWers please note : peer-reviewed, so they must be true) that show (a) oceans cooling (in total not just surface) and (b) albedo increasing to match. IMHO the inference is very clear :

1. Something drives albedo.

2. Albedo drives ocean heat content.

3. Ocean heat content is a driver of climate.

In 2. there is no timelag. In 3 there can be.

To which I would add that we have cold polar air (like, oh -50 F falling on the central USA) that can not be from a cold water pool in the ocean making the air cold. That argues for heat leaving the air at the poles to somewhere other than water, which argues for an IR window left open over the poles. GCRs have been shown to reduce O3 and O3 is the only gas blocking the 9-10 micron window. (And potentially lower UV from the sleepy sun might also be causal in the lower O3 levels observed lately).

gary gulrud (06:15:07) :

“have taken on an odious ad-hom tone. You should be ashamed of yourself.”

Au contraire, good Dr.

Some people never are, even when they should.

actuator (21:14:11) :

E.M.Smith

Re burning a steak. I hope you were joking since steak is primarily made up of hydrocarbons which, if heated sufficiently in the presence of oxygen, will definitely burn.

As I have demonstrated unintentionally with my ‘cordon noir’ cooking technique on several occasions. 🙁

E.M.Smith (15:13:51) : I read of a paper recently, that linked increased GCRs to reduced O3. I don’t have the paper itself but here are 2 references to it. I have no idea whether it is credible.

http://www.cgfi.org/2008/10/03/record-south-pole-ozone-hole-predicted-by-dennis-t-avery/

http://www.theozonehole.com/waterloo.htm

@Mike J

Thanks!

“You should be ashamed of yourself…Some people never are”

The final shabby refuge of the helpless, assigning shame.

gary gulrud (04:32:48) :

“You should be ashamed of yourself…Some people never are”

The final shabby refuge of the helpless, assigning shame.

Sounds like the final shabby refuge of the clueless and shameless

A third argument against using the geomagnetic method to establish SS minimum is that it provides no advantage over direct count, e.g., the butterfly diagrams, which stretch back into the 1800’s, to solve an issue known to investigators for that duration.

In any event, this determination of cycle length (where the counts of adjacent cycle spots are equal) overestimates the length of 23 and underestimates the length of 24, at most half as prolific as its predecessor.

A fourth, and final argument, is that one establishes a local solar parameter by a distant, weakly related effect. This is the very error snaring Hathaway in his original 24 Rmax estimate: using a measure of geomagnetic storms following Rmax to predict the SS count of the subsequent cycle, distant in space and time.

To recap, 1.) The method is no help in prediction of the minimum, 2.) It is no help in identifying a minimum at hand, 3.) It is not as accurate as existing methods already considered in minima definition, 4.) The method relies on a correlation of weak causal relation.

Clueless?