If you have been following the rapid expansion of AI infrastructure, you have probably seen the latest claims that data centers are now creating their own “heat islands” large enough to affect hundreds of millions of people. The narrative comes courtesy of a recent working paper, quickly picked up and amplified by Fortune in “Data centers are so hot their ‘heat island’ effect is raising temperatures up to 6 miles away,” which presents the findings in a way that suggests a new and potentially significant environmental driver emerging from the digital economy.

According to the article, researchers examined more than 6,000 data centers worldwide and found that surrounding areas experienced an average land surface temperature increase of about 2°C over the period from 2004 to 2024, with some locations showing increases as high as 9°C. The reported influence extends outward roughly six miles from facilities, and when combined with population maps, the authors estimate that as many as 343 million people could be affected. These are large numbers, and presented without context they give the impression of a widespread and growing climate signal tied directly to AI infrastructure.

The underlying paper, however, tells a more nuanced story. The key variable being analyzed is not air temperature in the meteorological sense, but land surface temperature derived from satellite observations. That distinction matters because land surface temperature is extremely sensitive to local surface characteristics. Replace vegetation with buildings, pavement, and industrial equipment, and the measured surface temperature will rise, regardless of whether the underlying atmospheric conditions have changed in any meaningful way.

The authors attempt to address this by focusing on data centers located outside dense urban areas, presumably to isolate the effect of the facilities themselves. They use NASA MODIS data at roughly 500 meter resolution, aggregate it over time, and compute temperature differences before and after the start of operations at each site. The result is what they describe as a “data heat island effect,” characterized by an average increase of about 2.07°C at the site level, with a range from roughly 0.3°C to over 9°C.

What stands out immediately is that the magnitude of the reported effect overlaps with well-known land use change signals. The paper itself notes that classic urban heat island effects typically fall in the range of 4 to 6°C, driven by factors such as reduced vegetation, altered albedo, and concentrated human activity. In that context, the observed signal around data centers begins to look less like a novel phenomenon and more like a subset of the same broader category of land transformation effects that have been studied for decades.

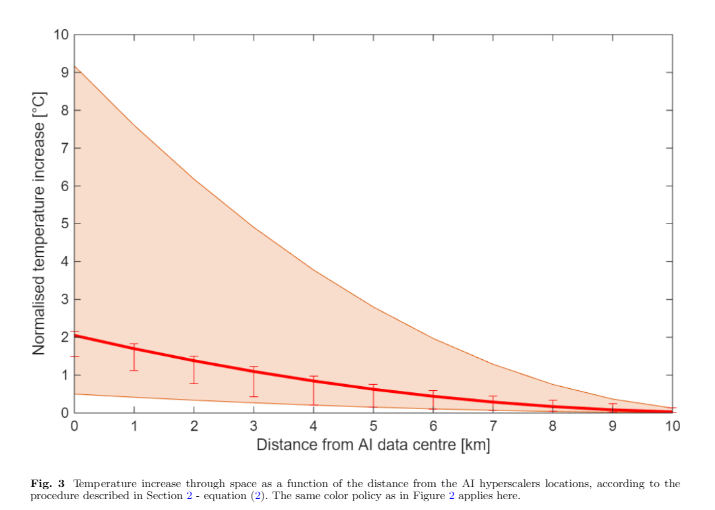

The spatial analysis reinforces this interpretation. The paper shows that the temperature signal diminishes with distance, dropping to about 30 percent of its peak within roughly 7 kilometers, and falling to around 1°C at distances of about 4.5 kilometers. This gradient is consistent with localized surface effects rather than a large-scale atmospheric influence. In other words, what is being observed behaves exactly like a localized heat retention and dissipation pattern tied to the physical footprint of infrastructure.
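As a rough consistency check on the two distance figures above, one can assume that the signal decays exponentially with distance from a peak equal to the 2.07°C site-level average. This is an illustration only, not the paper's stated model: the exponential form and the function name are assumptions, and only the 30 percent, 7 km, 4.5 km, and 2.07°C values come from the text.

```python
import math

# Values quoted in the article.
PEAK_C = 2.07            # average site-level increase, deg C
FRACTION_AT_7KM = 0.30   # signal is about 30% of peak at about 7 km
REF_DISTANCE_KM = 7.0

# Assumption: simple exponential decay, signal(d) = PEAK_C * exp(-d / scale).
# Solve for the e-folding distance from the single 30%-at-7-km data point.
decay_scale_km = -REF_DISTANCE_KM / math.log(FRACTION_AT_7KM)


def signal_at(distance_km: float) -> float:
    """Hypothetical decay curve anchored to the 30%-at-7-km figure."""
    return PEAK_C * math.exp(-distance_km / decay_scale_km)


signal_4_5_km = signal_at(4.5)
print(f"e-folding distance: {decay_scale_km:.2f} km")
print(f"signal at 4.5 km:   {signal_4_5_km:.2f} deg C")
```

Under that assumed curve the signal at 4.5 km comes out just under 1°C, so the two quoted figures are at least mutually consistent with a single short-range decay.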

There is also the question of attribution, which the authors themselves acknowledge is fraught with uncertainty. Even after excluding dense urban areas, it is difficult to completely isolate data centers from other nearby activities, including industrial development, transportation infrastructure, and general land use changes. Satellite-derived surface temperatures integrate all of these influences, making it challenging to assign causality with high confidence.

Another point worth noting is that the study focuses on the period following the construction and operation of facilities, but construction itself is a major driver of land surface change. Clearing land, altering soil composition, and installing large-scale structures all modify the thermal properties of the surface. Some critics cited in the Fortune article point out that a significant portion of the observed temperature increase may simply reflect this transition from natural or semi-natural land cover to built environment.

The paper also ventures into broader claims about societal impact, suggesting that the data heat island effect could influence welfare, healthcare, and energy systems, drawing parallels with urban heat islands. While that is plausible in a general sense, it rests on the assumption that the measured land surface temperature changes translate directly into meaningful impacts on human environments. That link is not demonstrated in the study and remains an open question.

None of this is to say that data centers are thermodynamically insignificant. They consume large amounts of energy, generate waste heat, and require extensive cooling systems. The Fortune article highlights that some facilities can consume power on the order of a gigawatt and involve substantial water use and noise pollution. These are real engineering and infrastructure considerations, particularly at local scales.

But moving from those practical realities to claims of widespread climate-relevant heat islands requires a careful handling of definitions and measurements. Land surface temperature is not the same as air temperature, localized heat retention is not the same as regional climate forcing, and correlation with facility locations does not establish causation without ruling out confounding factors.

In the end, what this paper appears to document is a familiar phenomenon under a new label. When you build large, energy-intensive facilities on previously undeveloped land, you change the thermal characteristics of that land. Satellites detect that change. Calling it a “data heat island” may be useful shorthand, but it does not transform it into a new category of climate driver.

As AI infrastructure continues to expand, there will no doubt be more studies attempting to quantify its environmental footprint. The challenge will be separating measurable local effects from broader claims that extend well beyond what the data can support. That distinction tends to get lost once headlines take over, but it remains central if the goal is to understand what is actually happening rather than what makes for the most compelling narrative.

The above article invites this question:

Has any AI bot ever described AI data centers as being a contributing cause of “global warming”?

Looks like it’s recognised by Duck’s search assist, but the focus is power and water use. Thermal output or environmental change aren’t mentioned.

The paper never implied that data centers were a significant contributor to planetary warming. Of course they cannot be, since the incremental power generation for data centers is trivial compared to the net of solar energy absorption minus infrared heat rejection by the Earth. This second factor, the net absorption of energy into Earth’s system, is a result of an increasing greenhouse effect due to the accumulation of CO2 in the atmosphere. Data centers, however, can cause meaningful local temperature rise, as the paper documents.

Yes, the exact reason that I asked my question . . . duhhh.

I’ll invite you to provide one—just one will suffice—scientifically credible document that proves your assertion beyond reasonable doubt. On the other hand, there are numerous, credible, published, scientific papers that rebut that assertion from renowned scientists such as:

Dr. Will Happer,

Dr. William van Wijngaarden,

Dr. Richard Lindzen,

Dr. John Clauser,

Dr. Judith Curry,

Dr. Fred Singer,

Dr. Willie Soon, and

Dr. Patrick Michaels.

All noted climate deniers, paid by fossil fuel companies to write articles, not peer reviewed research; their articles are contradicted by the enormous body of scientific peer reviewed research published over the last 60 years — because no reliable scientific journal would accept the unsupported unscientific nonsense your cited authors have written.

If you want science, go to America’s premier scientific institution, the National Academy of Sciences, the IPCC Assessments, or any major research University.

Hmmm . . . I observe that you provide no evidence whatsoever to support that spurious, outrageous and libelous statement.

Your statement is so easy to falsify using a Web search, taking me less than 10 minutes total to find the following:

— Dr. Will Happer has authored or co-authored over 200 peer-reviewed scientific papers,

— Dr. William van Wijngaarden has authored over 75 to 80 peer-reviewed publications,

— Dr. Richard Lindzen has authored or co-authored more than 200 to 230 peer-reviewed papers and books,

— A scientometric analysis of Dr. John Clauser’s work published in the Journal of Data Science, Informetrics, and Citation Studies identified 55 peer-reviewed articles authored or co-authored by him between 1966 and 2023,

— Dr. Judith Curry has authored or co-authored over 180 peer-reviewed scientific papers and journal articles,

— Dr. Fred Singer was a prolific researcher who authored or co-authored more than 200 technical papers in peer-reviewed scientific journals,

— Dr. Willie Soon has published a significant body of work, with sources indicating over 90 to 150+ refereed (peer-reviewed) papers and publications,

— Dr. Patrick Michaels published over 100 papers in peer-reviewed journals throughout his career.

Besides these facts, I note that you failed to address my challenge:

“I’ll invite you to provide one—just one will suffice—scientifically credible document that proves your assertion beyond reasonable doubt.”

Lastly, there is this humor that you provided . . . thank you:

Ummm . . . wouldn’t that be the same NAS that elected “Mr. Hockey Stick” Michael Mann as a fellow of the academy in 2020?

Then too, for many years, PNAS allowed National Academy of Sciences (NAS) members to “contribute” or “communicate” papers, bypassing traditional peer review. Data from 2013 showed an extraordinarily high acceptance rate for these papers, leading to accusations of cronyism.

PNAS has had to retract articles, including cases involving falsified data and cases involving lapses in handling conflicts-of-interest by high-profile scientists, such as a 2021 case involving White House official Jane Lubchenco.

Highly-respected scientist Richard Feynman resigned from the National Academy of Sciences because he disliked the organization’s focus on honors, titles, and exclusivity over scientific work.

I need not comment about “science” being done by the IPCC, nor by undefined “research” universities. Hah!

Your web search would not find any papers by those frauds, but you can find spurious claims by those FRAUDS. No one with any creds believes the nonsense they are paid to write in return for their survival income.

Someone making intentionally false claims, such as accusing other more competent persons of fraud without presenting any objective supporting evidence, is de facto guilty of . . . guess what . . . FRAUD.

Prove me wrong.

You’ll have to do better than that. None of the Deniers you cited have published any arguments in scientific peer reviewed papers that dispute the scientific conclusion that man’s activities are responsible for all the warming seen since 1970.

Hopeless. Deny Web search results if you wish.

Good luck with that!

Of course, you dare not offer an objective response to my listing of peer-reviewed publications from each of those “climate deniers” (your term).

Typical, and as expected!

ROTFLMAO!

You didn’t list any peer reviewed papers that debunked the universal conclusion of science that rapid warming after 1970 is entirely caused by human activities.

If wind and solar are the cheapest why aren’t these data centers built unplugged from the grid?

About 80-90% of new generating capacity added in recent years (about half of which is to accommodate new data centers) is solar and wind, which indeed are the cheapest forms of generation.

throughout the 24 hour cycle 7 days a week?

Wind and solar add a HUGE amount to the cost of grid infrastructure.

They are also parasitic to the grid, sucking the reliability and adding massively to costs.

They are also the most environmentally damaging form of electricity there is, over their short life time.

Capacity added means absolutely nothing when wind maybe can only average 20% of capacity and very often far less, and solar averages less than 12% of its installed capacity and only when the sun is shining.

Here are figures for German wind and solar capacities for 2015, and 2016 based on 5 minute data provided by a renewable zealot

Funny how reality and renewable energy fantasies never manage to meet.
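The capacity-versus-output arithmetic in the comment above can be sketched as follows. The capacity factors are the commenter's claimed figures, not verified values, and the 1000 MW installation and the function names are hypothetical choices made for illustration.

```python
# Capacity factors as claimed in the comment above (not verified figures).
CLAIMED_CAPACITY_FACTOR = {"wind": 0.20, "solar": 0.12}

NAMEPLATE_MW = 1000.0      # hypothetical installation size
TARGET_AVERAGE_MW = 100.0  # hypothetical average demand to be met


def average_output_mw(nameplate_mw: float, capacity_factor: float) -> float:
    """Long-run average output implied by a nameplate rating."""
    return nameplate_mw * capacity_factor


def nameplate_needed_mw(target_average_mw: float, capacity_factor: float) -> float:
    """Nameplate capacity needed to average a target output.

    Ignores storage, transmission, and the timing of generation.
    """
    return target_average_mw / capacity_factor


for source, cf in CLAIMED_CAPACITY_FACTOR.items():
    avg = average_output_mw(NAMEPLATE_MW, cf)
    need = nameplate_needed_mw(TARGET_AVERAGE_MW, cf)
    print(f"{source}: {avg:.0f} MW average from {NAMEPLATE_MW:.0f} MW nameplate; "
          f"{need:.0f} MW nameplate to average {TARGET_AVERAGE_MW:.0f} MW")
```

This only converts between nameplate and average output. It says nothing about cost per unit of energy delivered, which is the point the other side of the exchange is arguing.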

Yes. They are a faith based answer where theology overrules electrical engineering.

Actually they are a demonstration of science and engineering overruling science denial.

Of course, that “overruling”, continues to require government grants and subsidies for wind and solar “renewable energies” to continue to exist in today’s practical reality. Funny that.

Nope. Without subsidies, central solar and onshore wind have the lowest capital and operating costs over their lifetimes vs any other power generation technology.

Which, of course, explains exactly why an extraordinary number of corporate and individual investors are “rushing in” to invest in solar and onshore wind projects that don’t have governmental subsidies.

/sarc

From Google’s AI bot:

“Global spending on clean energy, including solar and wind . . . the year-on-year growth rate slowed from 17% in 2022 to 6% in 2025.”

Which, of course, doesn’t dispute my post.

I can guess where the new weather monitoring stations are going.

Ha ha ha ha ha ha ha ha ha ha ha ha ha!!

First chuckle of my day (-:

Have they exhausted all the runways and industrial parks?

Yep, don’t tell the UK Met Office.

Satellites report air temperatures “near the surface.” Surface temperature stations generally report temperatures about six feet above the surface. What is the difference?

With the now-obvious realization that “surface temperature stations”, en masse, are likely prone to report air temperatures that have been corrupted (generally to the upside by several degrees-C or more) by UHI-effects . . . whereas the averaging of the much larger areal coverage by satellites, including mostly rural instead of urban areas, tends to minimize UHI distortion of near-surface temperatures . . . that’s the major difference, not the variation in “near-surface” temperature due to altitude.

It’s a bit more complicated than this. Using wider areas, UHI to rural, also increases the variance of the collected data because of increased variability. Since variance is a direct metric for measurement uncertainty, an increased variance also means the average value has a higher measurement uncertainty – i.e. what *is* the actual average temperature? Since the variance of the temperature data will be different at different distances from the UHI, the data from different distances shouldn’t even be jammed together willy-nilly to create an “average”. Weighting should be used to equalize the contribution across the temperature gradient in order to create a more accurate “average”.

Someday perhaps climate science will learn to include variance (standard deviation) statistical descriptors for its data components instead of just looking at the “mean”. Without the variance there is really no way to objectively judge the actual impact the data is representing.
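One common way to do the kind of weighting described above is an inverse-variance weighted mean, where noisier groups of readings count for less. Whether that is the scheme the commenter has in mind is an assumption, and the zone values below are made up purely for illustration.

```python
# Hypothetical zones at increasing distance from an urban core.
# Each entry: (zone mean temperature in deg C, variance of readings in that zone).
zones = [(25.0, 4.0), (23.0, 1.0), (22.0, 0.25)]


def plain_mean(data):
    """Unweighted average of the zone means."""
    return sum(mean for mean, _ in data) / len(data)


def inverse_variance_mean(data):
    """Weight each zone mean by 1/variance so noisier zones count for less."""
    weights = [1.0 / var for _, var in data]
    weighted_sum = sum(w * mean for w, (mean, _) in zip(weights, data))
    return weighted_sum / sum(weights)


unweighted = plain_mean(zones)
weighted = inverse_variance_mean(zones)
print(f"plain mean:            {unweighted:.2f} deg C")
print(f"inverse-variance mean: {weighted:.2f} deg C")
```

With these made-up numbers the two averages differ by about a degree, which illustrates why a mean reported without its variance, or without the weighting scheme used, is hard to interpret.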

Sorry Tim, but I did specifically mention “averaging” and made no mention of “variability”.

Also, data variance is NOT a “direct metric of measurement uncertainty”. A clear example of this:

Take a fixed location where I measure air temperature with a suitable thermometer (say a PRT calibrated to be accurate to +/- 0.1 deg-F) and find it to peak at, say, 87.4 deg-F during one day and at 82.6 deg-F the following day, with corresponding minimum temperatures of, say, 51.8 deg-F and 47.7 deg-F during the successive nights. The variances about the means of both the daytime peak temperatures and the nighttime minimum temperatures are not due so much to instrumental (aka measurement) uncertainty as they are due to other independent, time-varying parameters, such as weather (cloud coverage, winds, fronts, temperature inversions, etc.).

Variance is a statistical measure of how far data points spread out from their mean (average) and can be essentially independent of measurement uncertainty.
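The numbers in the hypothetical example above can be checked directly. This is a minimal sketch using the four readings and the stated instrument accuracy; the function name is illustrative, and two readings per group is far too few for a serious estimate.

```python
import statistics

INSTRUMENT_ACCURACY_F = 0.1          # stated PRT accuracy, +/- deg F
daytime_peaks_f = [87.4, 82.6]       # readings from the example above
nighttime_minimums_f = [51.8, 47.7]  # readings from the example above


def spread_vs_instrument(readings):
    """Return (sample standard deviation, ratio to instrument accuracy)."""
    spread = statistics.stdev(readings)
    return spread, spread / INSTRUMENT_ACCURACY_F


peak_spread, peak_ratio = spread_vs_instrument(daytime_peaks_f)
min_spread, min_ratio = spread_vs_instrument(nighttime_minimums_f)
print(f"daytime peaks:  s = {peak_spread:.2f} deg F ({peak_ratio:.0f}x instrument accuracy)")
print(f"nighttime mins: s = {min_spread:.2f} deg F ({min_ratio:.0f}x instrument accuracy)")
```

In both groups the day-to-day spread is more than ten times the instrument accuracy. The sketch only quantifies that spread; whether it should be counted as measurement uncertainty is a question of definition.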

“tends to minimize UHI distortion of near-surface temperatures”

Averaging masks variability. Variance (standard deviation) *is* a statistical descriptor necessary to understand the data.

“Also, data variance is NOT a “direct metric of measurement uncertainty””

From the GUM:

Typically a higher variance means a smaller peak in the distribution at the location of the average (assuming an approximately Gaussian distribution). In histogram terms that means that the bins surrounding the location of the average have approximately the same number of entries. That, in turn, means that the actual value of the average becomes less certain. Shift a few observations between the bins and you change the average value. Since measurement uncertainty affects the assignment of values to bins it affects the measurement uncertainty associated with the value designated as the average.

“The variances about the means of both the daytime peak temperatures and the nighttime minimum temperatures are not due so much to instrumental (aka measurement) uncertainty as they are due to other independent, time-varying parameters, such as weather (cloud coverage, winds, fronts, temperature inversions, etc.)”

From the GUM:

Instrument effects are not the only impacts on measurement uncertainty. Each and every thing you list has an impact on measurement uncertainty – and each and every item in your list contributes to the variation of the data. Which Section 3.3.5 defines as the measurement uncertainty.

“Variance is a statistical measure of how far data points spread out from their mean (average) and can be essentially independent of measurement uncertainty.”

Your definition of “measurement uncertainty” seems to be restricted to only instrument effects. That is *not* the definition of measurement uncertainty according to the GUM. Your definition is a corollary to the typical climate science meme of: “all measurement uncertainty is random, Gaussian, and cancels”. Assumption 1: random effects from instrument readings cancel overall. Assumption 2: And then climate science ignores all the other impacts that affect measurement uncertainty – such as the color of the grass below the measuring station or whether it is on the east or west side of a mountain.

Interesting, but not surprising, that you did not address the variances I cited in my two simple, hypothetical examples of two peak daytime temperature measurements and two minimum nighttime temperature measurements, where clearly the data variance in both cases was an order of magnitude or more greater than the measurement uncertainty based on using a calibrated thermometer.

Oh well, you did provide the service to other readers of pointing out what is said (and what is left unsaid) in The Guide to the Expression of Uncertainty in Measurement (GUM) document.

Actually I *did* address those variances.

tpg: “Instrument effects are not the only impacts on measurement uncertainty.”

This is instrument uncertainty. It is *not* the only contribution to the measurement uncertainty.

Data variance *is* a metric for measurement uncertainty, the GUM confirms that. In fact, as you now admit, it is an order of magnitude greater than the instrument contribution to measurement uncertainty.

An “order of magnitude or more greater” means it is the primary contribution to measurement uncertainty – just as I said. That contribution propagates onto any calculation of the average – making it impossible for averaging to minimize UHI distortion. That UHI distortion will remain as part of the measurement uncertainty that goes along with the mean of the data. Averaging doesn’t reduce measurement uncertainty. Climate science just ignores this by assuming that all measurement uncertainty, including the variance of the data, is random, Gaussian, and cancels.

Fossil fuels are consumed to produce energy and heat is a byproduct. It has nothing to do with “capturing” heat and preventing it from escaping to the atmosphere. More energy consumed, more heat needed to replace it. UHI is a result of man adapting to his environment.

. . . whereas UHI causing errors in reporting and compiling “surface air temperature” measurements is the result of man ignoring basic physics or, perhaps, pursuing a hidden agenda.

I went and read the Fortune article about the University of Cambridge study. The UK study is just more ‘climate obsessed’ handwringing. The Fortune.com article about it is best charitably described as clickbait.

Data centers are not new. UHI is not new. The only thing ‘new’ is AI driven data center expansion.

Which is causing significant heat island effects. Ie, the entire point of the new paper.

thanks. now they know where to put their next thermometers

Tennis court surface raises temperature by 5.341C°. All games in the future must be played on grass surface. Film at 11:00.

It’s all just hot air. 😉

Mr Watts misses the point entirely. Data centers are intense consumers of electrical energy, about 80% for the servers, plus another 20% for the data center cooling systems. Data centers that are cooled by chillers (that is, most of them) reject all that energy to the ambient air. That the measured temperature rise is as high as it is will be no surprise to anyone who understands conservation of energy, which apparently does not include Mr Watts.

“all that energy to the ambient air”

Air temperature wasn’t the thing that was measured.

They measured surface temperature. The rejected energy of which you write will surprise me if it can warm the ground 5 miles away. I’m told I give off energy equivalent to a 100 W lightbulb. I just texted a friend a few miles away and asked her to report when my energy got to her back yard.

LOL... that is EXACTLY what our host was saying!!

That data centres heat the surrounding area to quite a substantial degree.

I warrant that our host has several times more understanding than you do.

I don’t see you actually contradicting anything that was listed in the article.

Not that it matters to you.

Data centres heat their surroundings – duh!

Just like the homes and buildings in my town in mid winter when they are drawing a couple of MW of grid power. Of course the sun is also heating each sq metre during the day: 1000 W per m² on a clear day at noon.

Oh woe, it’s worse than we thought.
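For a sense of scale, the comparison with sunlight can be put in numbers. This is a back-of-envelope sketch that assumes a facility at the gigawatt level mentioned in the article, with all of its power ending up as heat spread evenly over a disc of six miles radius. The even spreading is a deliberate simplification, and the variable names are illustrative.

```python
import math

DATA_CENTER_POWER_W = 1.0e9     # "on the order of a gigawatt", per the article
RADIUS_MILES = 6.0              # reported reach of the effect
PEAK_SOLAR_W_PER_M2 = 1000.0    # clear-day noon figure from the comment above
METERS_PER_MILE = 1609.344

radius_m = RADIUS_MILES * METERS_PER_MILE
area_m2 = math.pi * radius_m ** 2

# Assumption: all electrical power becomes heat, spread evenly over the disc.
waste_heat_flux = DATA_CENTER_POWER_W / area_m2
share_of_peak_solar = waste_heat_flux / PEAK_SOLAR_W_PER_M2

print(f"disc area:           {area_m2 / 1e6:.0f} km^2")
print(f"waste heat flux:     {waste_heat_flux:.1f} W/m^2")
print(f"share of peak solar: {share_of_peak_solar:.2%}")
```

Averaged this way the waste heat is a few watts per square metre, well under one percent of peak sunlight. In reality the heat is concentrated near the facility, so the flux would be higher close in and lower toward the edge of the disc.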

Decades ago NASA said that a single building can affect temperatures up to 600 feet away. No surprise that huge buildings would also do so.

How does the expected warming from these data/computation centers compare to the warming that has been going on in places like New York City, Miami, Florida, Sao Paulo, Tokyo and Beijing for a century or two? And let us not forget the runways, taxiways, ramps and parking lots that are part of the world’s modern airports.

Oh, the concrete. Oh, the asphalt.

No, Fortune author Rogelberg, data centers are not becoming “epicenters” for UHI without earthquakes. Simply, “centres” is the correct word.

Moving on, any place with more energy consumption like more electricity use will produce more heat.

Also, the purpose of electrical air conditioners at data centers is to create cold air. The topic of climate change is rife with talk of high temperatures and their dangers. Low temperatures get little discussion.

As Anthony correctly notes, much depends on where the temperature is measured. For this study, the temperature of the top of the soil layer is measured via its IR emission to satellites. The shallow soil, to its outer limits of the claimed soil heating, several km from the machinery, is more likely to be heated by conduction from adjacent warm moving air and warmed buildings than by lateral conduction through soil. Air temperatures should have been studied as well. Soil profile temperatures down to say 10 cm would also have helped, to clarify diurnal changes.

The study that Anthony discussed might be overall correct in the sense that more electricity use over the years created more overall energy. However, the paths by which the energy dissipates are far from clear. The article is paywalled, hence unread, so I decline to get into more detail. Geoff S

100%

The variance of the temperature profiles will increase meaning any averages calculated from the temperature data will see an increased measurement uncertainty. What *is* the average temperature?

The water vapor that data centers emit can be used to heat greenhouses. The water vapor is good for the plants in greenhouses.

https://www.ri.se/en/how-much-greenhouse-space-can-one-data-center-heat

Not just greenhouses. I know of at least one power plant in Kansas that’s not far away, where the “cooling” water is recycled through a large man-made lake at the site. Best fishing year-round I’ve ever seen. It’s like fishing in the Florida Everglades for largemouth bass. While it’s never been commercialized at this location there isn’t any reason why the process couldn’t be used for productive fish farms!

[“The water vapor is good for the plants in greenhouses.“]

Depending on the plant, the range is between 40-90% RH.

Too much humidity can result in Mould, Mildew, & Fungal Infection.

But

The waste heat from a data centre can heat greenhouses via wet pipe heating & also provide heat to power a brewery ( CO2 from there is pumped into the greenhouses), (some brewery waste can be composted). A % of water vapour can be used directly.