Jennifer Marohasy

I’ve been assured over the last few years, including by the director of the Australian Bureau of Meteorology Andrew Johnson, that the change from mercury thermometer to platinum resistance probe is not the cause of, nor a contribution to, global warming as reported on the nightly television news.

If it were, this would be evident as an increase in the number of hot days and their average temperature – just the same as what we are told has been caused by increasing levels of atmospheric carbon dioxide.

The most straightforward way to know the effect of the change to probes – and to distinguish this from the potential effects of warming from carbon dioxide – would be to compare the automatic readings from the probes with the manual readings from mercury thermometers at many weather stations over many years.

The bureau has been collecting this data as handwritten recordings on A8 forms. There is no official list but, piecing together information, I am confident that parallel data – measurements from probes versus mercury – exists for 38 weather stations and from many of these there should be more than 20 years of daily data available to enable comparisons. Access to all this information, and its analysis, would enable some assessment of the consequence of the equipment change. The issue is doubly complicated by the bureau using more than one type of probe, changing the type of probe used, and the type of data transmitted electronically – initially averaging values and then changing to the recording of instantaneous values. I detailed some of my initial concerns in a letter to the Chief Scientist Alan Finkel back in 2014, nearly ten years ago.

More recently, I have been pondering the issue with Chris Gillham – from http://www.waclimate.net. We have discussed the application of our method for understanding trends in extreme rainfall to understanding extreme temperature, or at least how the bureau might be hyping maximum daily temperatures through equipment changes and how to quantify this even if we don’t have access to the parallel data.

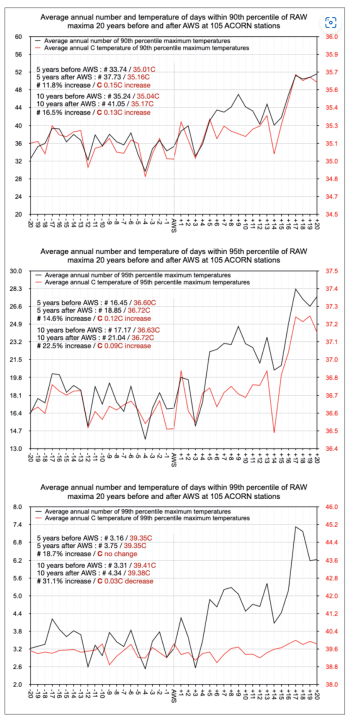

While I have been somewhat distracted with the Administrative Appeals Tribunal mediation, Chris Gillham has just got on with the job of downloading the unadjusted temperature data and calculating the 99th percentile (hottest 1%), 95th percentile (hottest 5%), and 90th percentile (hottest 10%) for all the sites with automatic weather stations that are used by the Australian Bureau of Meteorology to calculate climate variability and change. These are what are known as the ACORN-SAT (Australian Climate Observations Reference Network – Surface Air Temperatures) locations. There are 112 weather stations that make up this network, and 105 of them are automated with platinum resistance probes as the primary instrument recording official temperatures.

Chris Gillham has downloaded the data and sorted it so that the start date for the switch to automatic weather stations (AWS) with platinum resistance probes is synchronised across stations. To be clear, the installation year differs by location: at Bridgetown, for example, the AWS was installed in 1998, while at Birdsville it was installed in 2001. To calculate, say, the hottest 1% of days annually from 20 years before an AWS was installed and then for as many years afterwards as are available, as we do in a soon-to-be-published report, the start years need to match.
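The synchronisation step can be sketched roughly as follows. This is a minimal illustration, not Chris Gillham's actual spreadsheet method: the function name `align_to_install` and the annual counts are invented for the example, and only the two installation years come from the text.

```python
from collections import defaultdict
from statistics import mean

def align_to_install(annual_by_station, install_year, years_before=20):
    """Re-index each station's annual values by years since AWS installation.

    annual_by_station: {station: {calendar_year: value}}
    install_year:      {station: calendar year the AWS was installed}
    Returns {offset: mean value across stations}, where offset 0 is the
    installation year and negative offsets are years before it.
    """
    pooled = defaultdict(list)
    for station, series in annual_by_station.items():
        for year, value in series.items():
            offset = year - install_year[station]
            if offset >= -years_before:
                pooled[offset].append(value)
    return {offset: mean(values) for offset, values in sorted(pooled.items())}

# Installation years are from the text; the annual counts are invented.
install_year = {"Bridgetown": 1998, "Birdsville": 2001}
annual_counts = {
    "Bridgetown": {1997: 10, 1998: 12, 1999: 14},
    "Birdsville": {2000: 20, 2001: 22, 2002: 24},
}
aligned = align_to_install(annual_counts, install_year)
print(aligned)
```

Averaging by offset rather than by calendar year is what lets a 1998 installation and a 2001 installation contribute to the same "year 0".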

It has been a huge job, with the data analysis now complete. It will all be published as a report in due course, with results for many of the individual stations available eventually via spreadsheets to be hosted at http://www.waclimate.net. Chris calculated the three different classes (99th percentile, 95th percentile and 90th percentile) based on all daily observations since 1910 (yes, a truly mammoth undertaking).
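For readers who want to try something similar on a single station, here is a minimal sketch of the percentile step. It assumes the threshold is taken over the whole daily record and that a day counts when it is at or above that threshold; the interpolation rule (`method="inclusive"`) and the function names are my assumptions, and the report's own spreadsheets may define the percentile slightly differently.

```python
from collections import defaultdict
from statistics import mean, quantiles

def percentile_threshold(values, pct):
    """Return the pct-th percentile (pct in 1..99) of values."""
    cuts = quantiles(values, n=100, method="inclusive")  # 99 cut points
    return cuts[pct - 1]

def annual_extremes(records, pct):
    """Count and average the days at or above a whole-record percentile.

    records: list of (year, daily_max) pairs covering the whole record.
    Returns (threshold, {year: (count, mean_temp or None)}).
    """
    threshold = percentile_threshold([t for _, t in records], pct)
    hot_days = defaultdict(list)
    for year, tmax in records:
        temps = hot_days[year]  # creates the year even if it has no hot days
        if tmax >= threshold:
            temps.append(tmax)
    summary = {
        year: (len(temps), mean(temps) if temps else None)
        for year, temps in sorted(hot_days.items())
    }
    return threshold, summary

# Toy record: two "years" of invented daily maxima.
records = [(2000, t) for t in range(1, 51)] + [(2001, t) for t in range(51, 101)]
threshold, summary = annual_extremes(records, 90)
print(threshold, summary)
```

Calling the same function with `pct` set to 95 or 99 gives the other two classes.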

The question is: did platinum resistance probes in automatic weather stations increase the frequency of extreme temperature observations, most likely because they have faster response times than mercury thermometers?

Let me summarise some of the findings here:

At a majority of stations there was a rapid increase in the average annual frequency and temperature of 90th percentile (hot) and 95th percentile (very hot) days when their first platinum resistance probes were installed, with 99th percentile (extremely hot) days showing a frequency increase but little influence on average temperatures.

When the frequency of extreme maximum percentiles increases, average temperatures within those percentiles usually also increase.

There are inconsistent results among many stations. For example, at many stations the frequency increases sharply in the 1st, 5th, 10th, 90th, 95th or 99th percentile range when an AWS is installed, as does the average temperature within those percentiles. However, at some stations the frequency increases but there is no change or a cooling of temperatures within the percentile. Alternatively, the frequency might drop while the temperature also drops, remains the same or increases.

Similarly, a station might show an increase in 90th percentile frequency or temperature averages, but a decrease in 99th percentile frequency or temperature averages.

This may be indicative of different platinum resistor brands being installed and/or each probe of whichever brand having different reaction characteristics in how frequency or temperature is logged.

Also, the AWS installation might involve another environmental variable such as a slight change in location or the commensurate installation of a small Stevenson screen which alters exposure to hot or extremely hot air in different ways.

Only through the collective averaging of all synchronised observations can the influence of AWS installation be established.

At most stations there is a rapid shift, whether higher or lower, in frequency, average temperature or both within the different extreme percentiles when an AWS is installed, suggesting platinum resistors do not log these extremely hot and cold temperatures in the same way as preceding manual thermometers.

A majority of ACORN stations (69 v 36) experienced an increase in the frequency of 90th, 95th and 99th percentile days, with the collective averages showing a sharp increase coinciding with AWS installation. Conversion from manual readings from mercury thermometers to AWS observations caused a frequency and temperature increase at some stations and a decrease at others, but the AWS extreme temperature influence is biased toward heating rather than cooling.

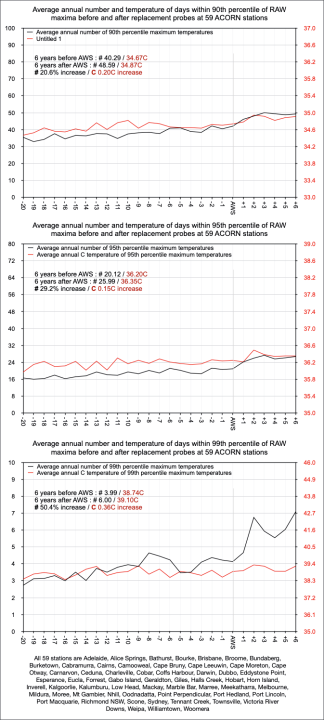

Maxima extreme percentiles didn’t plateau after their initial spike and there were stages of frequency and/or temperature increase in the years following original AWS installation. One possible reason for the gradual or sporadic increase in maxima is that among the 105 ACORN automatic weather stations, 59 have had their platinum resistance probes replaced at different times since the original installation of an AWS.

Chris further sorted the data to consider the effect of the replacement probes. Only six years are compared after probe replacement because this ensures all 59 stations are compared, with the most recent replacement being six years before, and inclusive of, 2022.

Replacement probes at the 59 ACORN stations had an influence on extreme maximum percentile frequency and average temperatures, particularly on extremely hot 99th percentile days. Considering the hottest 1% of days, after the installation of the replacement probes temperatures increased on average by 0.36 degrees Celsius, while the number of these hottest days increased by 50 percent.

Consider Alice Springs as an example of replacement probe influence on extreme percentile frequencies and temperatures. In the five years before and after 2011 AWS probe replacement, Alice Springs had a 27.7% increase (44.8 v 57.2 pa) in the annual frequency of temperatures at or above the 90th percentile (10 years before and after 45.3 v 60.7 pa = 34.0% increase).

In the five years before and after 2011 AWS probe replacement, Alice Springs had a 0.1C increase in temperatures at or above the 90th percentile (10 years before and after 0.3C increase).

In the five years before and after 2011 AWS probe replacement, Alice Springs had a 23.3% increase (24.0 v 29.6 pa) in the annual frequency of temperatures at or above the 95th percentile (10 years before and after 23.5 v 33.6 pa = 43.0% increase).

In the five years before and after 2011 AWS probe replacement, Alice Springs had a 0.3C increase in temperatures at or above the 95th percentile (10 years before and after 0.4C increase).

In the five years before and after 2011 AWS probe replacement, Alice Springs had a 48.0% increase (5.0 v 7.4 pa) in the annual frequency of temperatures at or above the 99th percentile (10 years before and after 4.4 v 11.7 pa = 165.9% increase).

In the five years before and after 2011 AWS probe replacement, Alice Springs had a 0.4C increase in temperatures at or above the 99th percentile (10 years before and after 0.3C increase).
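The percentage increases quoted for Alice Springs follow directly from the before and after annual frequencies. A short check, using only the figures given above (the function name is mine):

```python
def percent_increase(before, after):
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100.0

# (before, after) annual frequencies for Alice Springs, as quoted in the text.
alice_springs = {
    ("90th", "5 yr"): (44.8, 57.2),
    ("90th", "10 yr"): (45.3, 60.7),
    ("95th", "5 yr"): (24.0, 29.6),
    ("95th", "10 yr"): (23.5, 33.6),
    ("99th", "5 yr"): (5.0, 7.4),
    ("99th", "10 yr"): (4.4, 11.7),
}
for (percentile, window), (before, after) in alice_springs.items():
    change = percent_increase(before, after)
    print(f"{percentile} percentile, {window}: {change:.1f}% increase")
```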

It’s worth noting that, for example, Alice Springs had only 76.8mm of rainfall in 2009, with 51 days in the 90th percentile (38.1C+), 23 days in the 95th percentile (39.6C+) and three days in the 99th percentile (41.8C+). 2012 had a much wetter 210.4mm so you would expect fewer hot, very hot and extremely hot days, but instead had 74 days in the 90th percentile, 41 days in the 95th percentile, and eight days in the 99th percentile.

In summary, the available 90th, 95th and 99th percentile data provides compelling evidence that platinum resistance probes in automatic weather stations increased the frequency of hot, very hot and extremely hot days in Australia since 1996, with a further change in the pattern of increase since installation of the replacement probes to as recently as 2016.

Back before I found WUWT and Al Gore’s Inconvenient “Truth”, I looked into the record highs and lows for my little spot on the globe (Central Ohio).

Most of the record highs were before 1950 and most of the record lows were after 1950.

I continued for a while and compared past records to present “record” highs and lows.

About 10% of the daily records have been “adjusted”.

Even Nick admitted that.

I did a search for Nick’s reply. 0 hits.

I did a search for just “Gunga Din”. Still 0 hits.

What’s going on?

Thanks Gunga. A lot more than 10% of the daily records have now been adjusted/remodelled/homogenised.

The 1930s were particularly hot in parts of the US.

The data we are presenting here is about something quite different, it is about the new probes recording hotter for the same weather. So even the raw data/the unadjusted temperatures become useless for any proper analysis of climate variability and change.

… the available 90th, 95th and 99th percentile data provides compelling evidence that shifts the burden of proof to the data-keepers to prove that the data is accurate.

First you have to demonstrate some statistical significance.

That has already been done by Marohasy, et al.

Nothing in this post. And “Marohasy et al” has not even been written yet.

1. So what. It’s been done.

2. “Marohasy, et. al” are people. Not a document.

Thanks Janice.

I have certainly done a lot of statistical analysis over the years.

With the particular statistical test that I apply always changing depending on what I am analysing/the nature of the data, and also the question I am asking.

To understand whether there were any discontinuities in the temperature series from Rutherglen and also neighbouring stations I got into Control Charts in a big way. I still think they have so much application for climate science. You can read a little bit about this starting from about page 33 in this report, https://climatelab.com.au/wp-content/uploads/NW2016.001.PP_.Marohasy.pdf

When it comes to comparing paired values (e.g. probe versus mercury daily maximums), then there is a place for paired t-tests. As with any statistical test the underlying assumptions have to be met and this can be a problem when some of the data is withheld, and/or not collected. So I could prove a statistically significant difference between temperature measurements from the first probe at Mildura and the mercury, but not between the most recent probe and the mercury.
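For anyone unfamiliar with the paired t-test, a minimal sketch of the statistic follows. The readings are invented, not Mildura data, and the test assumes the daily differences are independent and roughly normal, which would need checking for real parallel data.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(probe, mercury):
    """Return (t statistic, degrees of freedom) for paired readings."""
    if len(probe) != len(mercury):
        raise ValueError("paired samples must have the same length")
    diffs = [p - m for p, m in zip(probe, mercury)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two pairs")
    sd = stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    return mean(diffs) / (sd / sqrt(n)), n - 1

# Invented same-day maxima (degrees C), for illustration only.
probe = [30.4, 31.0, 29.9, 33.2, 35.1]
mercury = [30.0, 30.8, 29.7, 32.6, 34.9]
t_stat, dof = paired_t(probe, mercury)
print(round(t_stat, 2), dof)
```

The t statistic is then compared against Student's t distribution with `dof` degrees of freedom to get a p-value.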

I have nevertheless shown that the most recent probe/the current probe often records 0.4C warmer on the same day, that is 0.4C warmer than the mercury. That Nick, Bill and the Bureau (I’ve sent all this analysis to Andrew Johnson at the Bureau) dismiss the result as irrelevant is extraordinary. They have their heads firmly in the sand. That conservative commentators aren’t waving these findings about and calling for a proper inquiry is also somewhat puzzling. I’m not sure if they don’t understand the relevance, or simply don’t want to.

I was chatting with a retired climate scientist a couple of weekends ago; he likes playing around with the available temperature series. He said the problem with my findings is that they cast doubt over all the temperatures series, taking some of the pleasure away from his own research. He said that if the problems are as fundamental as I suggest, then it becomes very difficult to conclude anything.

As regards this new analysis that Chris Gillham has just completed, we need to provide a statistical overlay. And I’m wondering what Nick, Bill and/or others with more expertise might suggest by way of specific test/tests. :-).

Thank you, so much, for taking the time to respond to me with such a thoughtful, thorough, answer. MUCH appreciated.

Nick doesn’t care 2 figs about statistical overlays. He is simplemindedly just throwing out the window of his madly swerving jalopy one roadblock after another. So far, they are all merely straw and tissue paper and you are running right over them in FINE FORM.

Way to go!

With admiration,

Janice

For a start, I have been doing stats testing one station at a time, looking finally for a set of baseline pristine Australian stations free of effects like probe changes.

Currently have Alice Springs on screen.

Ten years before and after November 1996, when the probe type changed.

Preliminary look shows no pattern in shape of annual distributions of daily T max. Mean, standard deviation, C of v, skew, kurtosis of the 21 distributions show no pattern I can find useful. Now looking at correlation matrices, nothing of interest at first blush.

I started each year in November, made each year 366 days to deal with leap years, so my numbers might not be exactly the same as Chris got. Also, my software has percentile default values of 99%, 97.5% and 90% which are not the same as Chris uses. I get very similar counts of about 37 temperatures each year above 90% coldest, but have not yet averaged them. Also I have infilled imputed values where missing, might not be the same as Chris used.

Early days. My work needs checking, could be wrong.

How many readers here want to see the detailed stats?

Who can describe the Mahalanobis d-squared method without Dr Google?

Geoff S

I have started the same analysis and am using the NIST TN-1900 Example 2 as a standard for determining averages each month. My brother is doing a similar analysis on his personal recordings. So far my biggest problem is missing months and years of daily temperatures. I have just cleared cells missing daily data and it appears not to make much of a difference. The years, well so be it.

My first problem was realizing that Tmax and Tmin are very correlated (~0.9). In other words, they are not independent and that should follow into their average. If the averages of daily temperatures are not independent, then neither can any further averages be considered independent. That kinda ruins any inferences from the Law of Large Numbers or the Central Limit Theorem. That leads into using Tmax and Tmin separately rather than Tavg.
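To see what a correlation of about 0.9 does to the daily average, the standard identity for the variance of a sum can be applied to Tavg = (Tmax + Tmin)/2. This is textbook algebra rather than anything taken from the commenter's own workings, and the equal-variance case is an assumption made only to get a round number.

```latex
\operatorname{Var}(T_{avg})
  = \tfrac{1}{4}\left[\operatorname{Var}(T_{max}) + \operatorname{Var}(T_{min})
    + 2\operatorname{Cov}(T_{max}, T_{min})\right]

% Assuming equal variances \sigma^2 and correlation \rho:
\operatorname{Var}(T_{avg}) = \tfrac{1}{2}\,\sigma^2\,(1 + \rho)

% \rho = 0   (independent): \operatorname{Var}(T_{avg}) = 0.50\,\sigma^2
% \rho = 0.9 (as reported): \operatorname{Var}(T_{avg}) = 0.95\,\sigma^2
```

Under that assumption, averaging two readings that are correlated at 0.9 removes very little of the variance compared with averaging two independent readings.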

Using the TN-1900 example, the expanded uncertainty of monthly averages of Tmax and Tmin is in the units digits. Even baselines of 30 years end up with an expanded uncertainty in the high tenths of a degree. That makes a difference, i.e., anomalies carry a very large uncertainty, which obviates any anomaly calculation to hundredths or thousandths of a degree.
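As a rough sketch of the calculation being described, assuming the approach is the usual one of taking the standard uncertainty of the mean as s/√n and multiplying by a Student-t coverage factor: the readings below are invented, the function name is mine, and 2.776 is the 97.5% t quantile for 4 degrees of freedom.

```python
from math import sqrt
from statistics import mean, stdev

def expanded_uncertainty(readings, coverage_factor):
    """Return (mean, standard uncertainty u, expanded uncertainty U = k * u)."""
    n = len(readings)
    if n < 2:
        raise ValueError("need at least two readings")
    u = stdev(readings) / sqrt(n)  # standard uncertainty of the mean
    return mean(readings), u, coverage_factor * u

# Invented daily maxima (degrees C); a real month would have up to 31 values
# and a correspondingly smaller coverage factor.
daily_tmax = [20.0, 22.0, 24.0, 26.0, 28.0]
avg, u, U = expanded_uncertainty(daily_tmax, coverage_factor=2.776)
print(f"{avg:.1f} +/- {U:.1f} C (95% coverage, assuming independent readings)")
```

The result scales with the day-to-day spread of the readings, which is why a month of variable weather produces an interval measured in whole degrees.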

By the way, I am using Kelvin temperatures to eliminate any possibility of bias in the other scales.

I should point out that Nick never ever quotes any variance associated with the means being used to calculate the GAT. Put a Tmax of 70 and a Tmin of 50 into a standard deviation calculator and see what you get. Then propagate those uncertainties throughout a month’s worth of daily averages and see what you get! Or, take a month’s worth of Tmax values and find the variance. Then do the same for Tmin. What are those individual variances?
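The standard deviation calculator exercise takes two lines with Python's standard library. Whether the sample or population form is the appropriate one here is a judgment call, so both are shown.

```python
from statistics import mean, pstdev, stdev

readings = [70, 50]  # the Tmax and Tmin from the example above

daily_mean = mean(readings)   # midpoint of the two readings
sample_sd = stdev(readings)   # divides by n - 1
population_sd = pstdev(readings)  # divides by n

print(daily_mean, round(sample_sd, 2), population_sd)
```

With only two readings the sample standard deviation is about 14.1 and the population form is 10.0, both large relative to the hundredths of a degree under discussion.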

I was very surprised to learn that Tmax and Tmin have such a high degree of correlation. While that may be true of rural stations, I seem to remember reading papers showing that, because of UHI, long term graphs of Tmax and Tmin show small but quite distinct deviation.

I did not mean to imply that they move up and down the same amount in lock step. However they are very much correlated. Think about the seasons. As Tmax gets warmer so does Tmin. Cooling is the same. The covariance is also positive and a large value over a month’s time.

Either way, they are not independent, nor are they close to a normal distribution.

“He said the problem with my findings is that they cast doubt over all the temperatures series,”

That’s why they don’t want to address the issue. They don’t want this can of worms opened up. Then they would look like fools or criminals, whichever applies.

“It’s been done.” (Statistical testing)

So what was the result?

Jennifer says they are still thinking about what test to use.

You asked for “statistical significance,” which has been shown, on the face of her analysis, passim.

Your switch to “statistical testing” is only a mirroring of what Dr. Marohasy proposed doing.

Nonsense!

You do “statistical testing”.

If you do, you may or may not establish statistical significance.

But if you don’t, you won’t ever.

So where did she claim to show statistical significance? (I’m sure she isn’t claiming that).

Dr. Marohasy doesn’t need to “claim” anything. Statistical significance is demonstrated by her results, obvious on the face of her analysis.

Enough already. Time to snatch from your hands all the red scarves (hat tip, Kip Hansen 🙂 ) you are waving about so we can focus on the issue at hand.

Bottom line: the issue here is –> unreasonable withholding of data.

Don’t mess with Janice, Nick. 🙂

So tell us the microclimate difference with the 20 trees removed from Alice Springs site since 2011.

Of course, part of this is just warming over time.

But another may be this. In the featured graph, at least, the frequency goes up after changeover even if the measured temperature doesn’t. That may simply mean that the probes, being automatic, measure a greater fraction of the days in the year. Fewer missed days.
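One way to check the missed-days explanation is to express the count as a fraction of the days actually observed. A minimal sketch with invented data, assuming missing days are marked as `None` and are not systematically hotter or cooler than observed days:

```python
def hot_day_rate(daily_max, threshold):
    """Return (count, fraction of observed days) at or above threshold.

    daily_max: one year of readings, with None for days that were missed.
    """
    observed = [t for t in daily_max if t is not None]
    if not observed:
        raise ValueError("no observations in this year")
    count = sum(1 for t in observed if t >= threshold)
    return count, count / len(observed)

# Invented years: same underlying climate, different numbers of missed days.
manual_year = [40.0] * 30 + [30.0] * 270 + [None] * 65
probe_year = [40.0] * 36 + [30.0] * 324 + [None] * 5

manual_count, manual_rate = hot_day_rate(manual_year, 38.0)
probe_count, probe_rate = hot_day_rate(probe_year, 38.0)
print(manual_count, probe_count, manual_rate, probe_rate)
```

In this toy case the raw count rises from 30 to 36 purely because fewer days were missed, while the rate stays at 10%. If the rate also rises in the real data, missing days alone cannot explain the increase.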

Hi Nick

Jennifer said […I was so often surprised at the extent to which as the decades go by the rainfall and temperature records for any particular location tend to deteriorate in quality with many more missing days in the later part of every record.]

Why would you be surprised Jen? I blogged on recent BoM rainfall records deteriorating in 2008.

Deterioration in BoM rainfall data quality this decade 19Oct2008

http://www.warwickhughes.com/blog/?p=178

and

Deterioration in recent BoM rainfall data 4Nov2019

http://www.warwickhughes.com/blog/?p=6342

Jennifer,

I’m not opposed to the release of the parallel data, if it exists. I think it is a decision for the BoM. If they think it is reliable and valuable, they should publish it. But they need to prioritise their time too.

I think probes for ACORN stations will have very few missing values. But they are high quality stations, so this may be true even before probes. But it is the sort of basic thing you need to test.

A basic stat test would be a t-test for whether the mean before change is different from the mean after change (of annual frequency or temperature). But the after change data doesn’t look very stationary.
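A sketch of such a test, using Welch's unequal-variance form of the two-sample t statistic (my choice, not specified above). The annual frequencies are hypothetical, and the test assumes the years within each period are independent draws around a stable mean, which is exactly what the non-stationarity caveat calls into question.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(before, after):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    n1, n2 = len(before), len(after)
    if n1 < 2 or n2 < 2:
        raise ValueError("need at least two values in each period")
    q1 = variance(before) / n1  # squared standard error, before period
    q2 = variance(after) / n2   # squared standard error, after period
    t = (mean(after) - mean(before)) / sqrt(q1 + q2)
    dof = (q1 + q2) ** 2 / (q1 ** 2 / (n1 - 1) + q2 ** 2 / (n2 - 1))
    return t, dof

# Hypothetical annual counts of hot days before and after a changeover.
before = [44, 45, 46, 44, 45]
after = [57, 58, 56, 57, 58]
t_stat, dof = welch_t(before, after)
print(round(t_stat, 2), round(dof, 1))
```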

But they need to prioritise their time too.

The fate of the world rests on this, does it not? If the alarmists are right, this would help bolster their case for immediate action? If they are wrong, the world is in a panic and bent on sending almost all of us back to the misery of the stone age for no reason?

What could possibly be higher priority Nick?

The fate of the world does not rest on whether it was -10C or -10.4C in the NSW country town of Goulburn on Sunday 2 July 2017. Yet a huge amount of BoM time was taken up pondering this question, pushed by Jennifer.

That I queried why -10.4 was converted to -10.0 resulted in the lifting of the limits on cold days at Goulburn and also Thredbo. So lower temperatures can be, and have since been, recorded. :-). Limits that had been in place for 15 and 10 years respectively were lifted within days of me doing media. :-).

Seriously Nick? Inaccurate data that goes against your narrative may be dismissed out of hand? In that one claim you have encapsulated the entire problem.

You claimed that the fate of the world rests on it. It doesn’t.

So Nick, you’re saying that global warming is not an existential threat?

“What could possibly be higher priority Nick?”

Excellent question!

We are on the path of economic and societal destruction based almost entirely on a bastardized global surface temperature record.

If not for this, there would be no need to destroy ourselves trying to control CO2.

Of course, that is why we rely more on the UAH satellite records regionally and globally for general trends. But to have reliable surface records would be excellent; pity that the BoM don’t appear to want to share their systems, so we can check they are doing it right. After all, who are they working for? Us, the public. None of their algorithms should be secret, especially when major policies are at risk if they are wrong. This is bureaucracy at its worst.

Nick

black box thinking time

if they think its reliable and valuable they should publish, agreed.

if its NOT reliable and/or NOT valuable they should have even more reason to publish

To ensure that people do not continue to shout malfeasance and to hope that improvements are forthcoming and that people then ignore the possibly bad data and start a different line of research. The sooner we work together and stop banging heads we will all be in a better place to understand more about the climate and how it changes.

“if its NOT reliable and/or NOT valuable they should have even more reason to publish”

Totally irresponsible. They may know that the thermometer calibration was not checked. They may know that the numbers were from a student training exercise. I can just imagine what people here would be saying if the BoM published data they did not know to be reliable.

Just because you have found a piece of paper with numbers doesn’t mean they are “data”.

Following your line of reasoning, then why publish anything unless it is PROVEN reliable? If calibration was not checked, the world SHOULD know that, and for how long it went uncalibrated. If students were gathering readings, they should have been supervised so that correct data was gathered!

Your excuses are not convincing. They are more of a damnation of the expertise of the responsible organization. I’ll bet you are making them grimace with these excuses.

Totally irresponsible. They may know that the thermometer calibration was not checked. They may know that the numbers were from a student training exercise.

Then they could publish those facts also, could they not? Really, your excuse-making wears thin.

Hello Jono1066,

There is seriously no point in putting raw, field-collected data in the hands of people who do not understand the data or the need for quality control, prior to release.

For instance, the data in an A8 field book was collected by an employee of the BoM, or some other organisation that contributed the data, and I filled in many of those forms over many years. That is a fact – data are collected by workers, not “The Bureau”. Data does not become Bureau data (in the sense that the BoM should stand by the data) until it has undergone a Q/A or a verification process. Which is a fact also.

As you would expect they have internal procedures that do error-trapping and various other routines to flag spurious data, which are outlined in various BoM publications. As a research scientist in my previous life, I spent a lot of time checking data, raising queries, detecting outliers etc. At http://www.bomwatch.com.au, I have a multi-attribute protocol that does that, and also for analysing trend and change.

So to put this in another context, as a long-term paid-up member of Australia’s Institute of Public Affairs, I make a contribution to activities carried out by Jennifer Marohasy. Because she is paid to speak for members, I expect the IPA to have a QA process that prevents Marohasy from undertaking campaigns under their umbrella. It is simply reasonable that presenting on our behalf, that her science-methods are sound, not vocal, but sound in their approach, the methods she uses, and that her outputs are therefore entirely credible. If she makes mistakes I expect her to fix them. But she ignores them.

I would say for instance, that her failure to justify the statistical tests she uses is a form of test-shopping. Claiming something is significant by virtue of the wrong test, is misleading and unethical. For example, a way to both test and data-shop is to use linear regression on data that is not homogeneous – that word again, which means that no other factor except for the hypothesized causal one, affects the trend.

For time series data, if another factor, such as a site or instrument change impacts on the temperature being measured, the hypothesized causal relationship with time is spurious. Surely a simple concept.
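A toy illustration of the point: a series with no trend at all on either side of a step change still yields a positive least-squares slope when fitted as a whole. The data are invented and the step size is arbitrary.

```python
from statistics import mean

def ols_slope(y):
    """Least-squares slope of y against time index 0, 1, 2, ..."""
    x = range(len(y))
    x_bar, y_bar = mean(x), mean(y)
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    return s_xy / s_xx

# Ten flat years, then a 1-degree step (say, a site move), then ten flat years.
series = [0.0] * 10 + [1.0] * 10

whole = ols_slope(series)
first_half = ols_slope(series[:10])
second_half = ols_slope(series[10:])
print(round(whole, 3), first_half, second_half)
```

Fitted as one series, the slope is about 0.075 degrees per year; fitted either side of the step it is zero. The apparent trend is entirely the step.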

For instance, if a site like at the post office and Aeradio office at Meekatharra was watered and the new site was not (https://www.bomwatch.com.au/climate-data/part-3-meekatharra-western-australia/). Or someone analysing data for Marble Bar were unaware of the move to the new post office in Francis Street in the 1940s (https://www.bomwatch.com.au/data-quality/part-2-marble-bar-the-warmest-place-in-australia-2/). All Australian weather station sites have changed. Thus, trend estimated using Excel is invariably misleading.

It takes a great deal of careful research to uncover these things and therefore to be confident that trend and change in data truly reflect the climate.

Anyway, I don’t intend getting too bogged-down, so I’ll leave you to mull over this.

All the best,

Dr Bill Johnston

http://www.bomwatch.com.au

Troll.

Dear Jennifer,

You put these posts up; you should stand behind them.

I am no troll; however, as a scientist you must defend your work.

What I said was: There is seriously no point in putting raw, field-collected data in the hands of people who do not understand the data or the need for quality control, prior to release.

As it is central to the issue of flogging the BoM into submission, why not focus on that.

I know that like the Australian Broadcasting Corporation and CSIRO (and the Liberals, the Nationals and the loony ALP & Greens), since this whole malarkey started in 1990, BoM has become agenda-driven. Also, that global warming in Australia has increased mainly due to site moves and the change from 230-litre to 60-litre Stevenson screens. I therefore believe that you and the IPA are marketing a weak narrative.

Also, by arguing about the thin-end of big-things we are continually behind the 8-ball. It was never going to be 0.22 degC at Mildura that made a difference and Chris’ graphs show that.

I personally think it is a dead horse.

While your frustration and rage may know no limits, and be assured that so long as you remain in Queensland, I do NOT dislike you as a person, woman, mother or scuba diver, it is a simple fact that no matter how many A8 sheets Moi digitises, a difference of 0.22 degC cannot be regarded as statistically significant.

That is the central issue. So let’s leave all the other sludge aside and stick with that.

Why not put Moi’s data online.

Yours sincerely,

Dr Bill Johnston

(27.353 negative brownie points in anticipation)

All you have done is to make the case for stopping the temperature record when a change is made and starting an entirely new temperature record for the new “station”.

Modifying data in order to CREATE a new LONG record is deceitful. It is also not science. If I buy a new meter that has a different calibration than the old one, do I get to go back and redo past radiation data from a nuclear plant? If I am measuring concrete strength for a building base, do I get to go back and change previous readings to obtain an homogenous stream of data?

Science relies on proper treatment of measurements. Changing past data eliminates what came before and no one can justify that for any reason. If an organization believes they know how to create better information from the past data, then they should publish information about their recommended change algorithms and let individual researchers make the decision on how to proceed.

Bill Johnston: “Claiming something is significant by virtue of the wrong test, is misleading and unethical.”

Personal attacks? I find your attitude towards Jennifer to be very offensive.

You can’t argue your points without making personal attacks? I guess not. I haven’t seen one of your arguments that didn’t contain a personal attack on Jennifer. It’s pathetic, is what it is.

“if they think its reliable and valuable they should publish, agreed. if its NOT reliable and/or NOT valuable they should have even more reason to publish”

Exactly right. There is no good reason not to provide the temperature data.

The only reason not to provide the data is they have something they want to hide.

And claiming that the BoM doesn’t have time to process the paper data is a strawman. Jennifer has volunteered to do the work for them, if they can’t get it done themselves.

There’s no good reason to withhold this data.

Careful Nick,

Jennifer does not understand stationary.

She has also left a considerable amount of wreckage behind her in the previous posts of this series that she has not tidied up or explained.

Then there is the use of paired-tests on autocorrelated difference data to prove significance, and her use of I-MR-R/S control charts on data that are demonstrably not homogeneous, neither of which she understands, and none of which she can justify, mainly because the weight of statistical evidence is against her.

Furthermore, if you disagree with her on technical grounds, or even try to reason with her, she will resort to calling you a liar.

I could never work with such a person, woman or not.

All the best,

Dr Bill Johnston

http://www.bomwatch.com.au

Hi Bill,

Here is your opportunity… Since the very beginning of this series, seven whole parts ago, you have told us that you know a lot about statistics. That you have a PhD and you know a lot about statistics.

So, which statistical test should we now apply to this data that Chris Gillham has so brilliantly sorted and analysed.

Dear Jennifer,

You know I approach the issue of extremes and trends in extremes differently and that I calculate those from raw data as part of my BomWatch QA routine. I also wrote in one of the previous posts in your current series (you can look it up), that Blair Trewin’s latest homogenisation methods result in increased upper-range extremes, without greatly affecting the mean or median.

I take no sides. I’ve also given a proper dressing-down to the Bureau that Nick Stokes would be proud of, all irrefutably backed by data.

I’ve also published a series of reports on the Bureau’s automatic weather stations, focusing particularly on aspects relating to AWS and extremes in the 30-page report on Charleville (https://www.bomwatch.com.au/bureau-of-meteorology/charleville-queensland/). Armchair reading really!

One of my conclusions with respect to Charleville (where they actually made data up), was:

It is also no warmer in Central Queensland than it has been in the past. Conflating the use of rapid-sampling automatic weather station (AWS) probes housed in infrequently serviced 60-litre Stevenson screens under adverse conditions with “the climate” is misleading in the extreme.

You and Chris also know that I have done several posts on https://joannenova.com.au/ that explored the effect of site and instrument changes on maximum temperature trends and extremes, including at Bourke.

Also, the post on their new plastic screens which by their design, increase high extremes at the expense of low extremes (picture). You also know that when everyone spat the dummy over the Bill-issue (you colluding with the late BobFJ behind my back), due to the back-stabbing standard of interaction within the ‘group’, I was out of there. I don’t know why you don’t just zip-up your obsession with Rutherglen.

I also suggested in one of my replies to your recent posts that you get hold of, and use PAST from the University of Oslo (https://www.nhm.uio.no/english/research/resources/past/). That way, you will learn yourself and you won’t accuse me of lying. In another reply I even suggested a range of tests that may be useful.

As an overview of this post, my advice is to continue with what you are doing, but just use graphs and don’t use words like significant.

Why?

While you are using pooled data aligned with when probes replaced thermometers, and there are lots of numbers and graphs, all my research, which I am still working on, points to the change to 60-litre Stevenson screens and site changes as being much more influential on trends at individual sites (see Marble Bar, for instance: https://www.bomwatch.com.au/data-quality/part-2-marble-bar-the-warmest-place-in-australia-2/, pictures near the end of the Report).

My view of the frequency problem

In my view, frequencies are best analysed (or graphed) as frequencies within classes over time and expressed as percentages of viable observations/year; not as counts which can vary depending on the number of observations/year.
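As a rough illustration of that approach, here is a minimal Python sketch. The function name `class_percentages`, the record layout and the class boundaries are assumptions made for the example; they are not taken from BomWatch or the Bureau.

```python
from collections import Counter, defaultdict

def class_percentages(records, edges):
    """Percentage of valid daily observations falling in each class, per year.

    records: iterable of (year, tmax) pairs; tmax is None for a missing day.
    edges:   ascending class boundaries, e.g. [35.0, 40.0] gives three classes:
             below 35, 35 to just under 40, and 40 or above.
    """
    counts = defaultdict(Counter)
    valid = Counter()
    for year, tmax in records:
        if tmax is None:
            continue  # missing days do not count towards the denominator
        # class index = number of boundaries the value reaches or exceeds
        idx = sum(tmax >= edge for edge in edges)
        counts[year][idx] += 1
        valid[year] += 1
    return {
        year: [100.0 * counts[year][i] / valid[year] for i in range(len(edges) + 1)]
        for year in valid
    }
```

Because each year is divided by its own number of valid observations, a year with many missing days is not automatically shown as having fewer hot days.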

The thing with Goulburn

The issue with Goulburn is that since I first circulated the picture of the missing 60-litre screen to the ‘group’, I was trying to work out if its sudden appearance between the time I was there and you were there affected trend, the frequency of extremes and trend in extremes. So you see the exercise was not really about all the other frustrating, emotionally-charged spill-over toxic girlie stuff.

However, the question is now resolved. (You and Lance still have sludge to clean up, though.)

The attached picture shows a peek inside one of the new plastic screens installed out in central western New South Wales. The temperature probe is on the left, the humidity probe is on the right.

Although not obvious due to the lateness of the day and reflection, the inside of the screen was matt black. The larger number of louvers (30 or so vs. 20 or so on a 60-litre wooden screen) would reduce the airflow, increase retention time and therefore would probably result in elevated temperatures on calm warm days, which I consider to be global-warming-cheating.

(Go boys and girls, I expect 20 negative brownie points.)

All the best,

Dr Bill Johnston

http://www.bomwatch.com.au

Troll.

No troll. I expect better than this and I’m on no one’s side.

Surely it is time that you (and Nick Stokes and the BoM’s marketing branch in particular) took stock of where this is going.

Also, it is time that Nazi-sympathizers like ‘97-percent consensus‘ Reichsfuehrer-SS Dr John Cook and other grubby dummkopfs at the Climate Change Communication Research Hub at Monash University, including useless climate scientist David Holmes, were sacked.

Intent on scarring and scaring children and mothers as a mission statement, the whole caboodle should be sent to re-open the meteorological office at Halls Creek or preferably Oodnadatta.

Then the BoM can cut-off the power, issue crickets and candles so they are sustainable, build a dingo-proof fence and save all of us!

Those are the big issues.

Why are taxpayers putting up with this and why is the IPA silent?

Kind regards,

Bill Johnston

Why do you have an obsession with “time series” based on temperature? That generally occurs when someone believes the time series can prove or offer some non-obvious glimpse of the future, such as CO2 is the control knob.

To “prove” that assumption requires long, long temperature records that reveal the apparent connection and allows one to forecast the future. Thus the need to “homogenize” the records so they appear to be continuous.

You are barking up the wrong tree. Pauses already show that multiple things cause temperature changes. Using 30 years to classify climate is a joke. Do deserts spring up in 30 years? Do they green in 30 years? Will the ice at the poles disappear in 30 years? Forecasts of temperature have failed for the last 50 years. They won’t get better.

You should be addressing the need to combine temperature with humidity and wind readings from the automated stations to see what a combined metric might show.

Dear Jim,

I don’t have an obsession with time series. However, if you want to measure trend over time you need a homogeneous time series – one not affected by another factor (Yes/No). If you don’t want to do that it does not matter (pass GO and don’t collect $200).

As the screen has stayed the same, is the increasing trend in Tmax at Goulburn of 0.61 degC/decade over 29 years (1991 to 2019), due to the site move in 2016 (now confirmed), low rainfall from 2001 to 2009 and 2016 to 2019, the change from the Rosemont to the Wika probe on 23 February 2012 that everyone is sweaty about, something else, or a gradual change in the climate (Yes/No)?

Or is there actually no ‘real’ trend (Yes/No).

How are you going to find-out if you wanted to?

Options are, we could have an insane argument and pile-on about: (i), the couple of instances when T fell below 10 degC; (ii), that the BoM is not NATA-certified, when it actually is; (iii), the WMO requirement that isn’t; (iv), that I don’t know what I was looking for in 2016 because light no longer traveled in straight lines or something, therefore I must be lying, and (v), anyway there is a local unnamed expert who simply “knows” without a shadow of doubt that the screen could not be seen from the hangar door …. You pick Jim, or Lance, Jennifer, Richard Greene, Janice Moore … anyone!

I did a detailed summary/reply here, which actually took hours to write:

https://wattsupwiththat.com/2023/02/15/hyping-maximum-temperatures-part-6/#comment-3682117

Was there any point? (Yes/No)

Don’t you see how absurd all this gets? Lucky there is no scanning issue (or, Tim Gorman, did that https://wattsupwiththat.com/2023/02/15/hyping-maximum-temperatures-part-6/#comment-3682581 just quietly go down the gurgler?)

It’s a time-waster. Ridiculous claims and counter-claims, insults and degrading commentary do nothing but stir frustration.

However, Jennifer, Lance and Chris MUST get their story right. Jennifer owes that as a scientist and as an IPA spokesperson.

I have also given them the advice I believe they wanted, given the data and graphs they have. Consulting a statistician (not me) at the outset may have optimised the considerable effort Chris has put into the project.

Use of a comprehensive framework such as R or Python would have also saved a heap of time/work. I think, looking again at the graphs, it may have been useful to mean-centre all the datasets (deduct the grand-mean of each series from all values in the series) and use anomalies from the mean as the transformed dataset.

(Centering may remove inter-site differences, which are a major source of contamination. Also, if you are interested in inhomogeneities, you could try comparing a few detrended datasets: detrending takes away the trend and centers the dataset, leaving just the inhomogeneities in the residuals.)
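For anyone wanting to try this, the two transformations can be sketched in a few lines of plain Python. The function names `mean_centre` and `detrend` are made up for the example, and the detrending here assumes a simple straight-line trend fitted by ordinary least squares, which may not suit every series.

```python
def mean_centre(values):
    """Deduct the grand mean of the series from every value (anomalies from the mean)."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]

def detrend(times, values):
    """Fit a straight line by ordinary least squares and return (slope, residuals).

    The residuals have both the mean and the linear trend removed, so any
    remaining steps or bumps are easier to see.
    """
    n = len(values)
    t_bar = sum(times) / n
    v_bar = sum(values) / n
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (v - v_bar) for t, v in zip(times, values))
    slope = sxy / sxx
    residuals = [v - v_bar - slope * (t - t_bar) for t, v in zip(times, values)]
    return slope, residuals
```

Mean-centering each site before pooling stops a naturally hot site from dominating the pooled picture; detrending goes one step further and removes the slope as well.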

To be clear, this is not my project and I do not even know what the hypothesis is. You need to do a lot of reading and thinking about it for yourselves, and less yelling at me.

Jim, if “You want to be addressing the need to combine temperature with humidity and wind readings from the automated stations to see what a combined metric might show”, buy the data from the Bureau and go for it I say. Should be easy.

To reduce the overall level of aggression and BoM-hate, you all could also get a comfort dog and some little black plastic bags; or a rainbow squishy for A$14.95 (https://sensoryspace.com.au), or tell a few jokes.

All the best,

Dr Bill Johnston

http://www.bomwatch.com.au

You are obsessed with time series and trying to decipher a way to have a LONG record with no disjointed sections. Along the way, changing previously recorded data is just a good way to do so.

Nothing in your response indicates that you have done the very basic statistical work to determine the distributions you are dealing with.

Your own statements tell me that you would be very dissatisfied with time series pieces that are 10, 20, or even 30 years long. They won’t allow you to correlate CO2 increase in the atmosphere with increasing temperature for 100 years into the past.

Those are the consequences of updating technology and should be dealt with in an ethical manner. Changing data is not a scientific endeavor.

Thanks Jim,

I am not obsessed with anything at all. There is no correlation between CO2 and Tmax at any of the 300 or so weather station datasets that I have analysed.

For Alice Springs, I have not changed any data; however, some data may be problematic (possibly in-filled etc.; these show up as outliers).

Here are some preliminary results for data up to February 2022. I hope you understand it.

Alice Springs maxima. 1878 to 2002 (Daily data to 15 February 2023). Also cursory examination of daily airport data from 1974.

There is evidence that the post-1942 site at the airport was watered [aerial photographs, and comparing means of overlap data between the PO, which was warmer, and the AP, which was cooler (28.51 °C vs 28.13 °C; Psame = 0.013, t-test for equal means)].

Tests for probe and screen effects (raw daily data i.e., inclusive of rainfall effects) were evaluated for the post-1974 dataset (i.e., the met-office site only).

Based on dates, provided in metadata, five groups were compared. These were:

(1) 1 August 1974 to 20 March 1991 (thermometer only; Rosemont deployed 21 March 1991)

(2) 21 March 1991 to 31 October 1996 (thermometer/Rosemont, to probe becoming primary instrument)

(3) 1 November 1996 to 29 August 1998 (Rosemont, until 60-litre screen deployed)

(4) 30 August 1998 to 10 November 2011 (Sml screen, Rosemont)

(5) 11 November 2011 to 15 February 2023 (Sml screen, Temp control (probe 2))

So there are five treatments (1 to 5), each being a combination of screen and probe, with variable numbers of daily observations per treatment. Total number of cases = 17,732.

I used R and the Rcmdr package and the routine lm, with treatments specified as factors, and means and differences determined by the emmeans package with Tukey P-value adjustment.

This is the output comparing all treatments simultaneously:

ScreenProbe  emmean  SE      df     lower.CL  upper.CL  .group
1            28.7    0.0948  17717  28.5      28.9      a
2            28.8    0.1632  17717  28.5      29.2      ab
3            29.2    0.2861  17717  28.7      29.8      abc
4            29.3    0.1064  17717  29.1      29.5      b
5            30.0    0.1153  17717  29.8      30.3      c

The first three treatment means are the same (they share the same group ‘letter’). However, each group is slightly warmer than the one before. Treatments 3 and 4 also could not be regarded as different, but the mean for Treatment 5 (the TempControl probe, small screen from 11 November 2011), may be warmer overall, by 0.77 degC (0.157se).

Cutting and dicing, there is no difference between the means for Treatments 1 and 2 (i.e., up to Nov 1996), which was ostensibly thermometer data, but there was between probes 1 and probes 2 after 11 Nov 1996 (same means as in the Table above).

Considering the screens alone, the difference was 0.82 degC (0.111se). [In the above Table, Treatments 1 to 3 were in the 230-litre screen; 4 and 5 were in the 60-litre screen.]
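For readers without R, the core of that calculation (group means and standard errors from a one-way linear model with a pooled residual variance) can be sketched in plain Python. This is only an illustration under stated assumptions: the function names are invented for the example, the data are not the Alice Springs data, and the sketch does not apply the Tukey adjustment or produce the group letters that emmeans reports.

```python
import math

def one_way_summary(groups):
    """groups: {label: [values]}.

    Returns ({label: (mean, standard error)}, pooled residual variance, residual df),
    which is what a one-way linear model with the label as a factor would give.
    """
    means = {g: sum(v) / len(v) for g, v in groups.items()}
    sse = sum((x - means[g]) ** 2 for g, v in groups.items() for x in v)
    df = sum(len(v) for v in groups.values()) - len(groups)
    mse = sse / df
    summary = {g: (means[g], math.sqrt(mse / len(v))) for g, v in groups.items()}
    return summary, mse, df

def difference(groups, a, b):
    """Estimated difference in means (b minus a) and its standard error (unadjusted)."""
    summary, mse, _ = one_way_summary(groups)
    estimate = summary[b][0] - summary[a][0]
    se = math.sqrt(mse * (1 / len(groups[a]) + 1 / len(groups[b])))
    return estimate, se
```

Note that the standard errors assume independent observations within each group, so they are likely to be too small if the daily values are autocorrelated.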

In interpreting the results, rainfall was low from 2002 to 2009 and 2016 to 2020, so as Tmax depends on rainfall, those years would have been warmer. Google Earth Pro also shows the site was sprayed-out sometime before 2011, which would reduce transpiration, and increase apparent warming. As rainfall reduces annual Tmax by 0.427 degC/100 mm, it does not take much of a reduction in rainfall/transpiration to impact Tmax.

Rainfall is both episodic (comes in episodes) and stochastic – there is no predicting wet or dry years, or years that are particularly wet/dry, or when episodes start or end. Thus, the relationship with Maximum temperature is also quite variable.

It is also not possible to work with rainfall as a factor affecting daily Tmax, because rain has a variable residence time in the landscape (depending on how much and when, in relation to evaporation), and lags for a single event may run out to months.

Briefly examining step-changes in annual Tmax ~ rainfall residuals (i.e., with the effect of rainfall statistically removed), there is a persistent Tmax up-step in 2013, which is unrelated to any notable site change, except perhaps the effect of herbicide or some other site-related factor.

Frequency (% within daily Tmax classes/year) stepped up in 1979 and 2012, for daily Tmax greater than 39.8 degC, and greater than 40 degC, at the expense of values less than 12 to 16 degC. [Rough analysis of daily data inclusive of rainfall effects.]

I have not examined probability density functions, or percentile differences for the raw-data Tmax step-change in 2013. But eventually I will.

There is at least several more days’ work in completing and verifying the findings and fully writing them up, so the results and interpretation given here are preliminary and subject to change. As of the last time I analysed Alice Springs Tmax, there was no residual trend or change that could be attributed to the climate, CO2, coalmining or anything else.

Dr Bill Johnston

19 February 2023

http://www.bomwatch.com.au

What are the assumptions for a t test?

Something about independent, normal distribution, and similar variance. Have you shown that the necessary assumptions are being met before recommending a t test?

Jennifer is asking how to test her results for statistical significance. Do you have any useful suggestions?

Go and look them up for yourself. Type in “t test assumptions”.

Cheers,

Bill

I don’t need to look them up! Perhaps you noticed the examples I quoted. The question was something you need to answer.

Do the temperature data meet the assumptions for a t test?

Are they independent?

Are they normal distributions?

Do they have similar variance?

Can you answer yes to each of these in all cases?
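Those three questions can each be given a rough numerical answer. The sketch below is one hedged way to do it in plain Python; the function names are invented for the example, and the statistics chosen (lag-1 autocorrelation for independence, skewness for departure from a normal shape, a variance ratio for similar variance) are simple screening measures, not formal tests.

```python
import statistics

def lag1_autocorrelation(x):
    """Correlation between each value and the next one; near 0 suggests independence."""
    n = len(x)
    mean = sum(x) / n
    num = sum((x[i] - mean) * (x[i + 1] - mean) for i in range(n - 1))
    den = sum((v - mean) ** 2 for v in x)
    return num / den

def skewness(x):
    """Moment-based skewness; 0 for a symmetric distribution such as the normal."""
    n = len(x)
    mean = sum(x) / n
    m2 = sum((v - mean) ** 2 for v in x) / n
    m3 = sum((v - mean) ** 3 for v in x) / n
    return m3 / m2 ** 1.5

def variance_ratio(a, b):
    """Larger sample variance divided by the smaller; near 1 suggests similar variance."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return max(va, vb) / min(va, vb)
```

Daily temperatures usually carry over from one day to the next, so a lag-1 autocorrelation well above zero would be no surprise, and that alone would call the independence assumption into question.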

Why don’t you check yourself? I don’t need to answer any of your questions, unless you have a point to debate, and anyway who would use a t-test, and for what?

Cheers,

Bill

Nick Stokes would (per his comment above on February 17th at 4:58pm).

And by the way, Jim Gorman, I routinely check residuals statistically and graphically and I use influence plots to check outliers. Often I have to go back to the original dataset to work out what is going on; most outliers are either missing data within a year, infilled rainfall values that I had to estimate from a neighboring site, or something that can’t be explained, such as a faulty thermometer or a change in observer.

I can also sometimes run a statistical test for particular outliers.

My advice to you is to be thorough. Know your test assumptions, and don’t use dodgy methods.

My BomWatch protocols have been explained here: https://www.bomwatch.com.au/data-quality/part-1-methods-case-study-parafield-south-australia-2/ and there is a downloadable report, and data for the study, in case you want to check anything.

All the best,

Dr Bill Johnston

“Why don’t you check yourself?”

I have!

Your lack of answers indicates that you have not done the checks for proper assumptions or you could answer the questions immediately with definite values.

Funny isn’t it that you have come to the same conclusion as I have, why do a t test! The only difference is that I have examined the assumptions closely to see if they are met while you can’t convince me that you have done the same.

Jennifer,

In a study of heatwaves at 8 main Australian cities, I can find little evidence that the warming historic temperature record at a city relates to warming heatwaves. The average or maximum official temperature for a city might show a rise of about 1 deg C from 1910 to now, but the hottest heatwaves each year do not systematically mimic that increase.

Mechanism might be that the heatwave temperature arises firstly in the hot centre before being blown to east coast capitals and Adelaide.

Support comes from observations that absolute hottest heatwaves are in Adelaide and Melbourne, then Sydney then Brisbane. Counter-intuitive, getting cooler closer to Equator.

The whole matter of historical temperature analysis is clouded by data quality and failure to correct T for rainfall.

Therefore, I have little idea what to expect of patterns of extreme high temperatures over the years. It makes it hard to interpret whether hotter extremes after probe changes are natural or from the probe differences.

It follows that moves to improve data quality, such as getting data for parallel observations, can be helpful and should be encouraged.

Geoff S

Thanks Sherro01.

First thing I would do is see if the Tmax and Tmin variances are similar between the thermometers. Then I would do a correlation test between Tmax and Tmin for each of the thermometers and see if the diurnal temperatures correlate the same. If they don’t, something is funky.

Something I have been doing is looking at Tmax and Tmin temperature frequency distributions. They should also be similar between thermometers. Your control charts should show something similar.

Lastly, don’t rely on Tavg. Use the separate Tmax and Tmin temps. They each come from a different distribution and the average hides so much variance. Tmax is from a sine wave pattern during most of the daytime and Tmin is from an exponential decay pattern at nighttime. A lot of statistical tests assume normal distributions and these are not. At least, using them separately keeps the two distributions together.
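A minimal sketch of that comparison in Python follows. It assumes you have paired daily Tmax and Tmin lists for each instrument; the function names and the dictionary keys are made up for the example.

```python
import math
import statistics

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def instrument_summary(tmax, tmin):
    """Variance of Tmax, variance of Tmin, and the Tmax/Tmin correlation for one instrument."""
    return {
        "var_tmax": statistics.variance(tmax),
        "var_tmin": statistics.variance(tmin),
        "corr_tmax_tmin": pearson(tmax, tmin),
    }
```

Running `instrument_summary` once for the thermometer and once for the probe over the same days puts the three numbers side by side; a marked difference in any of them would be a reason to look more closely.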

Dear Jim,

What tests do you use for Tmax and Tmin temperature frequency distributions; and do you mean-center them first?

Cheers,

Bill

Excel makes it pretty easy.

Use the min/max functions to get a range.

Decide on the bins you need between the min/max values.

Enter the bin values into a column.

Use the frequency function to get a distribution.
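The same steps can be sketched outside Excel. The Python function below is an illustration only (the name `frequency` is chosen for the example); it follows the convention of Excel’s FREQUENCY function, where each bin counts values up to and including its upper bound and one extra count holds everything above the last bin.

```python
from bisect import bisect_left

def frequency(values, bins):
    """Count values per bin.

    bins: ascending upper bounds. A value v goes in the first bin whose
    bound is >= v; values above the last bound go in a final overflow count,
    so the result has len(bins) + 1 entries.
    """
    counts = [0] * (len(bins) + 1)
    for v in values:
        counts[bisect_left(bins, v)] += 1
    return counts

# Example: bounds at 10, 20 and 30 degrees
print(frequency([5, 10, 10.5, 20, 25, 35], [10, 20, 30]))  # [2, 2, 1, 1]
```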

You can perform this on 5-minute, daily, weekly, monthly, or even annual data if you wish.

The 5 minute data will quickly show that daytime temps pretty much follow a sine function while at some point in the late afternoon an exponential decay begins.

None of this should come as a surprise to anyone. Insolation from the sun should ideally follow a perfect sine function from sunrise to sunset. Temperature doesn’t exactly do this for many reasons such as clouds, winds, evaporative effects, etc. At some point in the afternoon, insolation falls below what heat the earth is radiating and conducting away. This begins an exponential cooling function. The shape of the decay looks similar to capacitive discharge.

It is my understanding that average temperatures are calculated by averaging the daily maximum and minimum temperatures for each location. It is also my understanding that average temperatures are driven more by raised minimum temperatures due for example to the urban heat island effect. So perhaps it is not surprising that small increases in maximum temperatures due to a different measurement method may be observed which may have little effect on the averages. Is it your intention to also evaluate the minimum temperatures in the same way?

Having asked the question, I agree that there should be consistency in reporting the temperatures and that automated instrumental methods should be calibrated against the old analog thermometers and their measurement methods adjusted accordingly.

I assume that the reason for using automated instrument methods is to reduce human error and to reduce the labour required to maintain the database. If this is the case then how is it possible to maintain a 20 year comparison of the analog thermometers with the newer automated devices without daily intervention by humans? I guess that it may be possible to set up a camera to read the thermometers and a mechanism to reset the Max/Min indicators, in which case why would you bother to include a device that could give you a continuous record? All seems a little strange to me.

I also wonder what procedure is used to ensure consistent calibration of the electronic thermometers on a daily basis and what is done at replacement of the temperature probes.

Thanks Tmatsci.

The reason for automating is to reduce the need to manually read the thermometers (max and min) every day. There are now about 600 automated stations, with parallel readings potentially available from just 38.

The parallel manual readings were taken at 9am and written on to an A8 Form. A maximum thermometer will ‘hold’ the highest temperature from the previous afternoon until it is reset i.e. the mercury will continue to rise until it is reset. Same with the minimum, the alcohol will fall until the thermometer is reset. So it is possible to read the maximum that the thermometer reached and the minimum that the thermometer reached without actually being present. As long as the thermometers are manually reset at the same time every day.
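To make the reset convention concrete, here is a small Python sketch. It assumes a list of timestamped temperature readings (which a max/min thermometer does not itself record) and simply shows which readings fall into each reset-to-reset window; the function name and the choice to key each window by the date on which it is read are assumptions for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def reset_window_extremes(readings, reset_hour=9):
    """Minimum and maximum within each 24-hour window ending at the reset hour.

    readings: iterable of (datetime, temperature).
    Returns {date the thermometers are read and reset: (min, max)}.
    A reading taken exactly at the reset hour is placed in the next window.
    """
    windows = defaultdict(list)
    for timestamp, temp in readings:
        # Shift the clock so each window lines up with one calendar day,
        # then label it with the day on which it is read.
        shifted = timestamp - timedelta(hours=reset_hour)
        read_date = shifted.date() + timedelta(days=1)
        windows[read_date].append(temp)
    return {d: (min(t), max(t)) for d, t in sorted(windows.items())}
```

With a 9am reset, a hot reading at 3pm on one day shows up as the maximum in the window read at 9am the next morning.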

So the 20 years of parallel data required a human presence every day at each of those 38 weather stations for all of the 20 years. Recording all of this was a huge job.

It is a disservice to all the men and women who went to the trouble to continue to take the manual readings, including on weekends, to not now make this information public … to not now analyse it. It would be an appalling waste to now shred this valuable information, not only from the perspective of its scientific value, but considering all the effort already expended.

Chris Gillham has also undertaken analysis of the minimum temperatures. I have only presented a tiny summary of some of the analysis that he has done in this blog post. :-).

The parallel readings are important only from the standpoint of developing a recommended algorithm for researchers to use in normalizing readings between two devices in order to compare them.

Actually using that algorithm to officially change readings that have been previously recorded is deceitful. It also destroys the ability to research historical periods with accurate information.

If stations are changed for whatever reason, the data stream from that station id should be stopped and a new id should be created. Let individual researchers deal with it in any manner they so wish.

“It’s worth noting that, for example, Alice Springs had only 76.8mm of rainfall in 2009, with 51 days in the 90th percentile (38.1C+), 23 days in the 95th percentile (39.6C+) and three days in the 99th percentile (41.8C+). 2012 had a much wetter 210.4mm so you would expect fewer hot, very hot and extremely hot days, but instead had 74 days in the 90th percentile, 41 days in the 95th percentile, and eight days in the 99th percentile.”

This is such a nutty argument that I didn’t believe we’d hear it again. There may be a slight tendency for wet years to be cooler, but you can’t argue that getting some rain proves it couldn’t have been hot. You can easily fit hot days and wet days into the same year. So I did a plot of the 83 years of Alice data, showing annual rainfall versus number of days over 40C. There is a general tendency for hot weather to come in dry years, but there are plenty of years as wet as 2012 (which was below average rain). I’ve marked the years since 2011 in red. The most recent summers were hot and dry, but they aren’t outside the pattern relating to rain.

What is happening with these electronic thermometers is quite obvious, and there are two explanations possible:

Yep.

Their modus operandi looks familiar:

From: Phil J*nes

To: Gil C*mpo

Subject: Re: Twentieth Century Reanalysis preliminary version 2 data – One other thing!

Date: Tue Nov 10 12:40:26 2009

Gil,

One other good plot to do is this. Plot land minus ocean. as a time series.

This should stay relatively close until the 1970s. Then the land should start moving away from the ocean

….

One final thing – don’t worry too much about the 1940-60 period, as I think we’ll be changing the SSTs there for 1945-60 and with more digitized data for 1940-45. There is also a tendency for the last 10 years (1996-2005) to drift slightly low – all 3 lines. This may be down to SST issues.

Once again thanks for these! Hoping you’ll send me a Christmas Present of the draft!

Cheers

Phil

(https://wattsupwiththat.com/2009/11/19/breaking-news-story-hadley-cru-has-apparently-been-hacked-hundreds-of-files-released/#comment-207259 )

A potential problem here is that the BoM may have used PRTD data prior to 1 November 1996. You really do need those A8 forms from before the switch over date to see what they did use.

“Since 1 November 1996, the PRT temperatures from AWS were deemed the primary SAT measurement, and no overwriting of the PRT value with the manual in-glass measurement was allowed. Prior to 1 November 1996, and post the introduction of PRTs in 1986, the observer could replace the temperature observations from the PRT with manual measurements.”

So prior to November 1996 the values could be from either.

Quote from page 10 here

It is interesting that, while attempting to defend the BoM, the Nokes/Johnston tag team have been effectively claiming they lied about this and used the mercury value from the A8 form raw data example from Mildura that Jennifer shows matches the post 1996 ADAM data available from the BoM’s post Q.A. “climate data online”. Jennifer is claiming the BoM told the truth while its would-be defenders claim it did not.

Oh what a tangled web we weave…

Dear Lance,

You have never filled in an A8 form and the A8 is actually the page size for a page within a Field Book, not a magic number. The A8 form contains no details about the type of probe. There is also no “Nokes/Johnston tag team”, whoever Nokes is. You are simply talking like an EXPERT through your nether regions … again.

The 0.22 degC difference actually came from Table 3 p.20 of “THE AUSTRALIAN CLIMATE OBSERVATIONS REFERENCE NETWORK – SURFACE AIR TEMPERATURE (ACORN-SAT) VERSION 2” prepared by Blair Trewin, which is simply the tiny difference between two averages compared over years.

Furthermore, I checked real data for a site in Melbourne (as in, I went and sat in their office), and on any day, the difference between Tmax and Tmin reset values, and 9am dry-bulb is frequently more than 0.22 degC. Manually observed T-observations are not nearly as precise as you think. What is it about having never observed the weather that makes you an expert?

All the best,

Bill

Troll.

Thanks Lance. Attached is an A8 Form from before the 1 November 1996 change over.

The probe (see heading ‘remote sensors’ bottom left) was read at 9am and 3pm. Because the probe (unlike the liquid-in-glass thermometers/manual in glass/mercury and alcohol) does not ‘hold’ the TMax or TMin, the values recorded on A8 Forms from the probe before the change over are not very useful insofar as they would not represent the TMax or TMin.

While it is noteworthy that the report you quote from states that the observer ‘could replace’ an observation from a PRT reading with a manual observation before 1 November 1996, realistically when would this ever occur?

Reading from the report (page 10) it does not say that the observer could replace a manual observation with a PRT observation. To be sure, Chris used the observations as entered into the ADAM/CDO database before 1996.

We know, however, that for Cape Otway they were recording from a PRT before 1 November 1996, and entering that value into the ADAM/CDO database. How many other locations had only PRT before 1996 in direct contravention of what they mostly claim?

So many questions. Thanks for getting us to think through the issues that do potentially matter.

“The probe (see heading ‘remote sensors’ bottom left) was read at 9am and 3pm.”

The probes can only be read by the AWS. They can’t be read by a human at the Stevenson screen. Values can only be seen at a MetConsole. Values are retained for 72 hours by the AWS. From that same page 10: “At sites with MetConsoles (essentially a computer system with a direct link to the local AWS and communications network), observers are prompted by the console…” It seems the A8 forms would have been filled out at both the Stevenson screen and the MetConsole within minutes. With the primary instrument value going into the primary instrument position on the form. Thus they swapped position on the form at November 1 1996 but wrote numbers in the primary instrument position taken from the MetConsole. The A8 form being the only record if there was a telemetry failure lasting longer than 72 hours. I suspect that A8 forms from places with no MetConsole only have glass thermometer values. Mildura would have had a MetConsole.

“With the primary instrument value going into the primary instrument position on the form. Thus they swapped position on the form at November 1 1996 but wrote numbers in the primary instrument position taken from the MetConsole.”

Is this the source of Jennifer’s nonsensical misreading? Why would anyone be handwriting probe results at all? They are all accessible to computer systems. They have posted them immediately on the internet for many years. They could easily copy them to disc storage, send them to a central office repository (even using Kermit).

What on Earth would it mean to write in a probe value “before touching” and “after setting”?

In fact the usage of the form is quite clear. They filled the thermometer readings as described by the very explicit headings (referring to “thermometer”, not “primary instrument”), and they wrote in single values for the probe maxima and minima (presumably from MetConsole) in the column headed “Remarks”.

“Why would anyone be handwriting probe results at all?”

Because there are still glass thermometers.

Why don’t you attempt to use kermit or telnet etc with no telemetry and let us know how well it works.

“Because there are still glass thermometers.”

Yes, they wrote thermometer results on the A8 form, just as they always had.

“Why don’t you attempt to use kermit or telnet etc with no telemetry”

I did it from home. But if you can put the results on a MetConsole, surely they can be written to a floppy disc, at least.

Why they did it does not matter. What matters is they have said they did it.

“Since 1996, the A8 data collection, where both inglass and PRT measurements are co-located in the same instrument shelter, has been maintained but not digitised.”

Page 10

http://www.bom.gov.au/climate/data/acorn-sat/documents/ACORN-SAT_Report_No_4_WEB.pdf

That just says the A8 collection has been maintained, which we know. It doesn’t say that they changed it to record PRT. In fact, it strongly suggests that where they don’t have both LiG and PRT, i.e. PRT only, they don’t use A8 forms. Of course.

“What on Earth would it mean to write in a probe value “before touching” and “after setting”?”

Exactly what it means for the glass thermometer. The AWS records the maximum since the last reset and the minimum since the last reset. It also records the min, the max and the last instantaneous sample for each minute. The “before touching” value would be the last instantaneous sample of the 60 at 9:00 am and 3PM etc. You are attempting to create problems that do not exist.

Correction: other way around.

The Before touching value would be the accumulated extreme. The after setting value would be the last instantaneous sample of the 60 at 9:00 am and 3PM etc.
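To make the distinction concrete, here is a minimal sketch in Python of a maximum register that behaves like a max thermometer: it accumulates the highest sample since the last reset, and reading it at observation time returns both the accumulated extreme (“before touching”) and the latest instantaneous sample (“after setting”). This is an illustration only. The class and method names are hypothetical, the sample values are made up, and nothing here is taken from the Bureau’s actual AWS software.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class MaxRegister:
    """Hypothetical running-maximum register (not the Bureau's AWS logic)."""
    running_max: Optional[float] = None   # highest sample since last reset
    last_sample: Optional[float] = None   # most recent instantaneous sample

    def add_sample(self, temp_c: float) -> None:
        """Record one instantaneous sample and update the running maximum."""
        self.last_sample = temp_c
        if self.running_max is None or temp_c > self.running_max:
            self.running_max = temp_c

    def read_and_reset(self) -> Tuple[float, float]:
        """Return (before_touching, after_setting) and restart accumulation.

        before_touching: accumulated extreme since the last reset.
        after_setting:   latest sample, which seeds the next period.
        """
        if self.running_max is None or self.last_sample is None:
            raise ValueError("no samples recorded since start")
        before_touching = self.running_max
        after_setting = self.last_sample
        self.running_max = self.last_sample  # reset to the current temperature
        return before_touching, after_setting


# Illustrative samples only: a warm afternoon followed by a cool morning.
register = MaxRegister()
for sample in [15.0, 24.1, 32.3, 20.4, 15.3]:
    register.add_sample(sample)

before, after = register.read_and_reset()
print(before, after)
```

On this model the two numbers can differ widely, because one is the extreme of the whole period and the other is simply the temperature at the moment of reading.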

Could you please explain what “last reset” for a PRT means?

And “before touching”? If it is the last sample before 9am, why is it so different from the 9am sample? In Jennifer’s example, they are 32.3C and 15.3C. Two consecutive samples?

And why “touching”?

It is the daily min or max and the current temp. Just like the inglass. You are inventing the difficulty.

No. With inglass, you actually reset at 9am, not at the time of the daily max (which you don’t actually know). And you still haven’t explained “before touching” for a PRT.

The Tech Report you cited above explains what really happened to PRT data:

“By the early 1990s, automated data collection of hourly data from automated monitoring systems were common, and with the gradual development of better communications and storage capacity, hourly statistical data were being stored in the climate data archive. With the advent of computing systems, punched cards were used to store temperature dataset, and prior to the advent of the ADAM database in 1991, archived data were stored on magnetic tapes.”

Nothing there about handwritten A8 forms for PRT.

I will simply quote myself where I did explain these things, again.

“Values are retained for 72 hours by the AWS.”

The AWS holds the recorded minimums, maximums and other statistics for 72 hours. Your claim about the times these were written is typical of you and Johnston. Just deliberate confusion.

I quote myself again: “It seems the A8 forms would have been filled out at both the Stevenson and the MetConsole within minutes.” This is at 3PM and 9AM. The “without touching” for the inglass prior to Nov 1996 was the recorded extreme from the inglass thermometer. After Nov 1996 it is the recorded extreme retained by the AWS and read at the MetConsole at 9AM and 3PM. I quote myself again: “they wrote thermometer results on the A8 form, just as they always had.” Both the AWS min and max are reset daily by clock, whereas the inglass min and max were reset by “touching”.

Anyone can see the AWS min and max reset online at 6AM and 6PM.

Watch these numbers and/or the equivalent for any other state.

http://www.bom.gov.au/nsw/observations/nswall.shtml

There are three current readings on the left and the recorded “low” and “high” on the right.

“Values are retained for 72 hours by the AWS.”

Yes, by the AWS. But as the section I quoted above shows, there was an automated data collection, constantly recording data from the AWS on a central system. So the notion that scribes are handwriting the numbers off a MetConsole screen into a field log as a record is just ludicrous.

“Anyone can see the AWS min and max reset online at 6AM and 6PM.”

Well, that isn’t the 9am and 3pm mentioned on the forms. But it is just resetting the daily numbers on an internet display system. Nothing to do with “resetting” a PRT. And no “touching” involved.

The basic issue here is that neither you nor Jennifer has given any actual evidence for your claim that in 1994 the BoM switched from writing thermometer readings to writing PRT readings into the same slots of the A8 forms, in clear mismatch to the form headings. It didn’t happen. The only thing you have quoted in support is from the Tech Note:

“Since 1996, the A8 data collection, where both inglass and PRT measurements are co-located in the same instrument shelter, has been maintained but not digitised.”

But that isn’t saying they entered PRT readings into the forms. It is just saying that the same practice of entering thermometer data in the A8 continued, whether or not there was a PRT sharing the shelter.

“The basic issue here is that neither you nor Jennifer has given any actual evidence for your claim that in 1994 the BoM switched from writing thermometer readings to writing PRT readings into the same slots of the A8 forms, in clear mismatch to the form headings. It didn’t happen.”

A deliberate confusion of the date. This is not one of Bill’s time-shifting photos, Nick.

The correct switch-over date is November 1 1996. It is the BoM that claims that date, not us, and clear evidence was given. It is the photo of the max temp for February 27 recorded in the primary instrument position on the morning of February 28 as 32.3. This temperature matches the BoM online temperature given for that date. The form also shows the title “sensor” with a line through it in the observer’s pen and the title word having been replaced with “therm”.

https://wattsupwiththat.com/2023/01/24/hyping-maximum-daily-temperatures-part-2/

It is the Bill and Nick team who have given no evidence to the contrary.

“It is the photo of the max temp for February 27 recorded in the primary instrument position on the morning of February 28 as 32.3.”

It is recorded into the cell clearly labelled max thermometer. If that is all the evidence for this unbelievable story, it proves only that they entered a thermometer value rather than a PRT value on that day. I don’t know why, but Trewin does say in his tech note that the discrepancy of 0.22C for Mildura was thought to be due to poor calibration of the PRT. It may be that PRT values were replaced with thermometer values for some time.

Of course it is also just possible that the PRT also measured 32.3, although that is not the value entered into the remarks column.

“It may be that PRT values were replaced with thermometer values for some time.”

And there we have it: the would-be BoM defender claims the opposite of:

“Since 1 November 1996, the PRT temperatures from AWS were deemed the primary SAT measurement, and no overwriting of the PRT value with the manual in-glass measurement was allowed.”

From the BoM on page 10 here

Why are you attacking the BoM, Nick?

There may have been no valid PRT to overwrite.

The statement you cite is in the section on “Reading manual thermometers”. It says that observers may not overwrite PRT with manual data. This has not happened. They can’t. Someone downstream determined what would go into the ADAM database.

The fact is that the A8 material must be from thermometers; the headings on the form say so, and it would be impossible to find AWS numbers to fit the cells. Plus the absurdity of filling out by hand thousands of forms with data that has already been automatically collected and stored in computer systems.

To settle this even further, here is another quote from that Tech Report, p 20, describing situations like that of Mildura:

“While these devices [in-glass] provided the means for the primary SAT measurements up until the 1 November 1996 (Bureau of Meteorology 1997), a significant number of sites still maintain manual in-glass SAT measurements (previously shown in Figure 5) and input into the Bureau’s data collection system via the A8 form.”

They even give Table 8 showing what ACORN stations still (in 2018) have LiG thermometers; Mildura is one. But that last cite explicitly says that that LiG data goes into the A8 forms. Elsewhere it specifies what that data should be, which is the whole before touching, after resetting business. There is only one lot of those in the A8 form, and the BoM says that there must be one from the LiG. Therefore those numbers must be from the LiG.

The BoM says: “Prior to 1 November 1996, and post the introduction of PRTs in 1986, the observer could replace the temperature observations from the PRT with manual measurements.”

Nick says:

“It says that observers may not overwrite PRT with manual data. This has not happened. They can’t.”

and he says:

“It may be that PRT values were replaced with thermometer values for some time.”

You appear to be losing your war on reality, Nick.

If there is an automated data collection system, observers can’t overwrite.

But again, there it is. An explicit statement that the numbers on the A8 form are from thermometers.

“But again, there it is. An explicit statement that the numbers on the A8 form are from thermometers.”

Platinum resistance thermometers no less. “PRT.”

“a significant number of sites still maintain manual in-glass SAT measurements (previously shown in Figure 5) and input into the Bureau’s data collection system via the A8 form.”

“So many questions. Thanks for getting us to think through the issues that do potentially matter.”

Thanks to you for this mammoth effort and for pushing on for us all despite the determined heckler.

Dear Jennifer,

Parks Victoria, who ran Cape Otway, apparently decided to no longer continue met observations in April 1994. It was not a “Bureau” site and never was. The Bureau for their part installed probes on the day Parks Victoria ceased manual observations, which was 15 April 1994. Why can’t you accommodate that information and cease recycling it as something “The Bureau” did? The big bad Bureau had no hand in it.

Why, what blocks you from understanding that is what happened? Why is it so hard for you? Why do you hate so much?

Then you confused a dry-bulb thermometer that the people at the café installed on 30 October 2002 with overlap Tmax and Tmin data that never existed. Then you wanted that overlap data that did not exist. You have effectively driven people at the BoM mindless with your insistent demands. There was all the hassle you made about Wilsons Promontory also.

While I’m not on their side and I have never worked for the BoM, how much of this crap do you expect people to take before you are isolated and yelling at the sky?

You are asked simple questions you should be able to answer, and you scream “troll”.

The BoM is giving you the same “troll” message in response to your incessant demands. Have you actually analysed all the available overlap data that you can access for every site (Yes/No)?

Just get a grip and take stock!

Yours sincerely,

Dr Bill Johnston

Jennifer said: “We know, however, that for Cape Otway they were recording from a PRT before 1 November 1996, and entering that value into the ADAM/CDO database. How many other locations had only PRT before 1996 in direct contravention of what they mostly claim?”

But they were not.

“They” were Parks Victoria not the BoM. Metadata shows “they” decided to cease manual observations on the day Tmax and Tmin thermometers were removed and replaced with electronic probes by the BoM on 14 April 1994. “They” did not break any rules, “they” were their own bosses and that is what “they” decided to do. Wet bulb thermometers were also removed and replaced by a humidity probe on the same day. End of story. There was never an obligation or an overlap in respect of Cape Otway data.

A wet-bulb PRT-probe installed on 15 April 1994, was removed on 15 January 2015, which is the day the composite humidity probe was installed. So for 10-years “They” changed the muslin and wick on the PRT-probe but did not record any manual data.

Someone at the café at Cape Otway decided to install a dry-bulb mercury thermometer on 30 October 2002. There is no record of that instrument having been removed. However, while Jennifer Marohasy thought it did in her earlier post featuring Cape Otway, a dry-bulb mercury thermometer does NOT measure maximum or minimum temperature.

There is therefore no overlap temperature for thermometers and PRT-probes at Cape Otway, and the decision by Parks Victoria to cease manual observations had nothing to do with the BoM. There is no obligation on third parties to abide by rules or protocols set by the Bureau of Meteorology. Similarly at Wilsons Promontory. Despite all the fuss she made as a fellow of the Australian Institute of Public Affairs, the data she insisted be provided by the Bureau did not exist.

Like the missing Stevenson screen at Goulburn and all that followed in that post, these are major missteps by a scientist and a person who represents the IPA, of which I am a long-term supporter and member. The Bureau of Meteorology should not be hassled by someone who, with the IPA behind her, happens to be a loud voice. In any sense her behaviour is unreasonable and unconscionable; it has been very costly and has to stop. That she is a driven woman is no excuse.

As a scientist, Jennifer Marohasy must also openly and authoritatively deal with the statistical issues that underpin her claims. It is no longer fun-and-games and shouting; her credibility depends on it. At the very least she should place her comparative data for Mildura in the public domain, as I have done on www.bomwatch.com.au.

Yours sincerely,

Dr Bill Johnston

Correct the record:

I said:

A wet-bulb PRT-probe installed on 15 April 1994, was removed on 15 January 2015, which is the day the composite humidity probe was installed. So for 10-years “They” changed the muslin and wick on the PRT-probe but did not record any manual data.