Brief Note by Kip Hansen – 17 August 2022

I have been doing research for other people’s book projects (I do not write books). One of the topics I looked at recently was the USCRN, the U.S. Surface Climate Observing Reference Networks (noaa.gov), which describes itself this way: “The U.S. Climate Reference Network (USCRN) is a systematic and sustained network of climate monitoring stations with sites across the conterminous U.S., Alaska, and Hawaii. These stations use high-quality instruments to measure temperature, precipitation, wind speed, soil conditions, and more.”

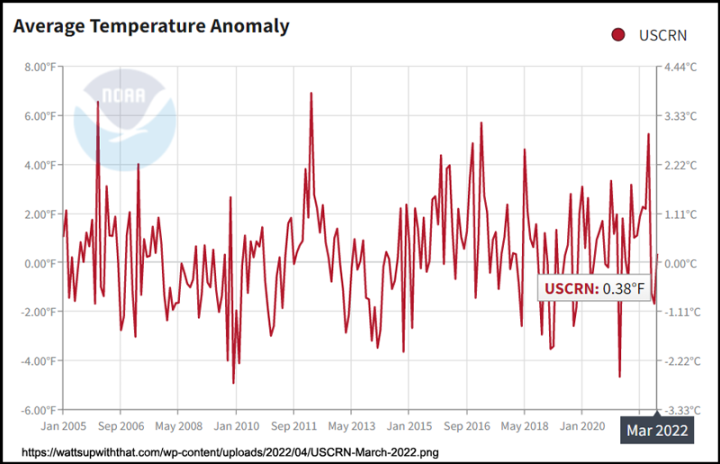

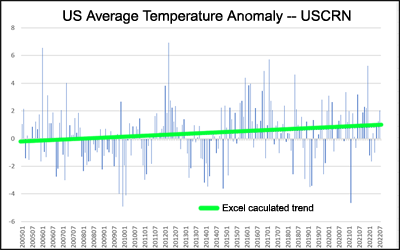

A main temperature data product produced by USCRN is Average Temperature Anomaly for the entire network over its full length of about 17 years. It is shown up-to-date here at WUWT in the Reference Pages section as “Surface Temperature, US. Climate Reference Network, 2005 to present” where it looks like this:

Now, a lot of people would like to jump in and start figuring out trend lines and telling us that the US Average Temperature Anomaly is either “going up” or “going down” and how quickly it is doing so.

But let’s start with a more pragmatic approach and ask first: “What do we see here?”

I suggest the following:

1. What is the range over the time period presented (2005-2022)?

Highest to lowest, the range is about 11 °F or 6 °C. This range represents not a rise or fall of the metric but rather the variability (natural or forced). Look at the difference between the high in late 2005 and the low in early 2021. If this graph had been unlabeled, I would have identified it as semi-chaotic.

2. Is the anomaly visually going up or down?

Well, for me, it was hard to say. Oddly, the anomaly seems to run a bit above “0” – which tells us that the base period for the anomaly must be from some other time period. And it is: USCRN uses a 1981-2010 base period for “0” when figuring these anomalies, so the base period is not inside the time range of this particular time-series data set.

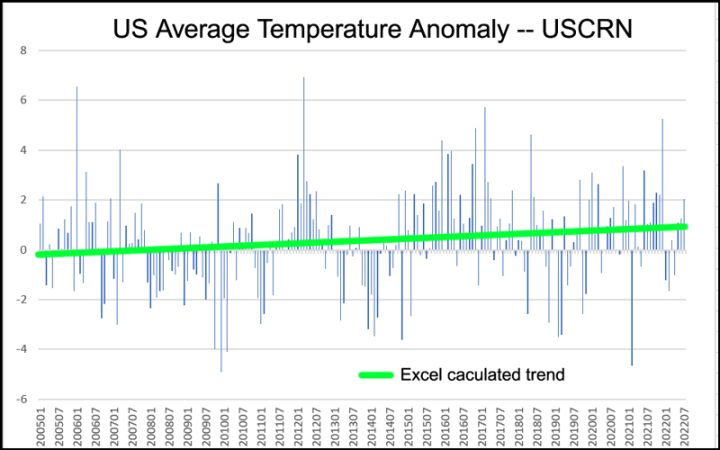

We can, however, ask Excel to tell us mathematically, what the trend is over the whole time period.

There, now you know. Or do you? MS Excel says that USCRN Average Temperature Anomaly is trending up, quite a bit, about 1 °F (0.6 °C) over 17 years.

~ ~ ~

Now comes the FUN!

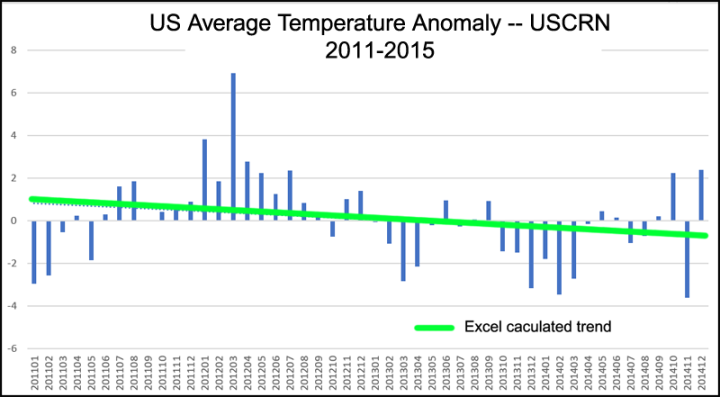

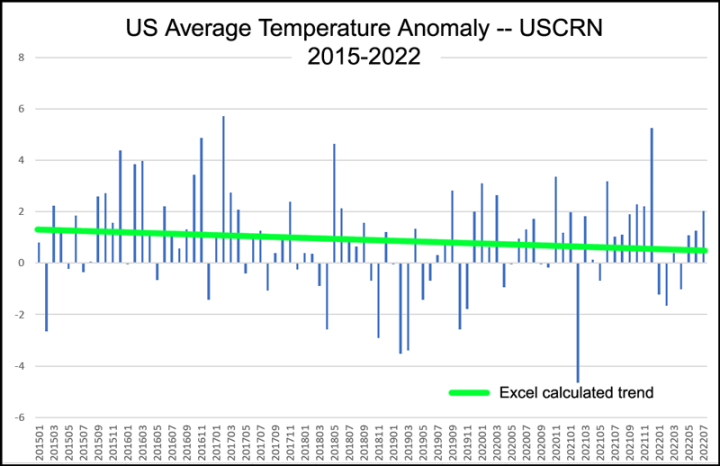

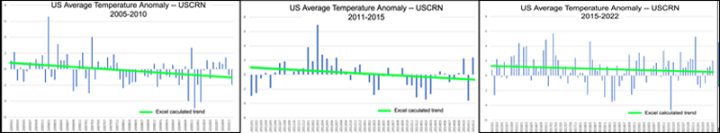

I’ve arbitrarily picked five-year time increments as they are about 1/3 of the whole period. Three five-year trends (the last one, slightly longer) which are all down-trending, add up to one up-trending graph when placed end to end in date order.
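The arithmetic behind this effect can be reproduced with made-up numbers. The sketch below does not use USCRN data; it builds a hypothetical sawtooth series (the names `ols_slope`, `months` and `values` are illustrative only) in which each 60-month segment falls while each segment starts higher than the last one, and then fits a least-squares line to the parts and to the whole.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

# Synthetic (made-up) monthly series: three 60-month segments.
# Within each segment the value falls by 1.0; each new segment starts 2.0 higher.
months, values = [], []
for seg in range(3):
    for i in range(60):
        months.append(seg * 60 + i)
        values.append(2.0 * seg - i / 59)

segment_slopes = [
    ols_slope(months[s * 60:(s + 1) * 60], values[s * 60:(s + 1) * 60])
    for s in range(3)
]
overall_slope = ols_slope(months, values)

print("segment slopes:", segment_slopes)  # each one is negative
print("overall slope:", overall_slope)    # positive
```

In this toy case the upward steps between segments outweigh the declines within them, so the parts and the whole disagree in sign. Whether something similar explains the USCRN graphs is a separate question that the sketch does not answer.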

Lessons We Might Learn:

a. Don’t use short time periods when determining trends in a time series. Trends are always sensitive to start and end dates.

b. This phenomenon is somewhat akin to Simpson’s Paradox, which “is a phenomenon in probability and statistics in which a trend appears in several groups of data but disappears or reverses when the groups are combined.”

“In his 2022 book Shape: The Hidden Geometry of Information, Biology, Strategy, Democracy and Everything Else, Jordan Ellenberg argues that Simpson’s paradox is misnamed:”

“Paradox” isn’t the right name for it, really, because there’s no contradiction involved, just two different ways to think about the same data. … The lesson of Simpson’s paradox isn’t really to tell us which viewpoint to take but to insist that we keep both the parts and the whole in mind at once.” [ source ]

c. It does bring to mind other data sets that change trend (or even trend sign) when looked at in differing time lengths — sea level rise comes to mind, with the short satellite record claiming to be double the century-long tide-gauge SLR rate.

d. Why look at trends that are obviously not reliable over different time scales? This is a philosophical question. Can a longer trend be real if all the shorter components of the trend have the opposite sign? Can three shorter down-trends add up to a longer up-trend that has applicability in the Real World? Or is it just an artifact of the time scale chosen? Or is the opposite true? Are three shorter down-trends real if they add up to an up-trend? (When I say “real” I do not mean just mathematically correct – but physically correct.)

e. Are we dealing with a Simpson’s-like aberration here? Is there something important to learn from this? Both views are valid but seem improbable.

f. Or, is what we see here just a matter of attempting to force a short highly variable data set to have a real world trend? Are we fooling ourselves with the interpretation of the USCRN Average Temperature Anomaly as having an upward trend – when the physical reality is that this rather short data set is better described as simply “highly variable”?

# # # # #

Author’s Comment:

I hope some readers will find this Brief Note interesting and that it might lead to some deeper thought than “the average and its trend have to be correct – they are simply maths”.

Many metrics of CliSci are viewed at an artificially assigned time scale of “since the beginning of the modern Industrial Era” usually interpreted as the late 19th century, roughly 1860 to 1890. Judith Curry, in her recent interview at Mind and Matter suggests that this is literally “short sighted” and that for many metrics, a much longer time period should be considered.

I hope I have time to keep up with your comments; I try to answer all that are addressed expressly to me.

Thanks for reading.

# # # # #

It seems fair to include all data in the trend unless there is a discussed reason not to.

The only graph that matters, if any do, is all of the data.

We can always find other things to discuss if we ignore some data for some unstated reason. But we shouldn’t do that.

M Courtney ==> If you mean we should always look at the mathematical trend of the whole data set then “maybe”.

But “the whole data set” is not the whole set of the physical reality we wish to interrogate — in the note above, we are addressing US National Average Temperature [as Anomaly]. That reality (if national temperature is a reality and not just an imaginary number) did not start in 2005 and will not end in 2022.

Thus “the whole data set” is an artificial time period, chosen because “that’s the data we have”. But that excuse does not make the trend any less a trend of partial data than the five-year sets used in the Note.

In the same way, looking at data sets arbitrarily starting in 1890 may very well be giving us a non-physical idea about climate metrics.

The start date is not really arbitrary. It is supposedly the start of significant fossil fuel use, the idea being to correlate (previously buried) CO2 emissions with temperature. So there is a good reason why the start date is where it is.

Except that start date is also during the coldest period of the last 10,000 years.

Of course the world has warmed up since then, and most, perhaps all of that warming would have occurred even if man hadn’t burned any fossil fuels.

Heck, the world still hasn’t returned to the average temperature for the last 10,000 years.

MarkW ==> Nor has the Earth reached the “hoped for global temperature of an Earth-like planet” according to NASA.

kzb ==> Well, that is the standard consensus line, but you know that atmospheric CO2 began to rise well before then… and up until 1970 (yes, 19 seventy) land use and forestry accounted for more than 50% of human “emissions”, and burning fossil fuels was not really appreciable until after WWII.

See: https://wattsupwiththat.com/2018/08/25/why-i-dont-deny-confessions-of-a-climate-skeptic-part-1/

Can you tell us about anything LESS ARBITRARY than the statement of (my stress) “significant fossil fuel use”? … and that statement implying that CO2 emissions are the sole cause of temperature changes?

According to some sources, the Industrial Revolution began in 1760.

Fossil fuel use in 1860 was not “significant”.

It didn’t become “significant” until sometime after 1950.

In SE Australia, most rural temperature readings show a cooling from about 1900 (the Federation drought) to the late 1970s rather than a warming trend. Sydney shows a warming from 1950, but I put that down to the immigration growth in the cities after WWII and the associated UHI effect. (All capital cities attracted migrants after WWII, and this would explain a lot of the warming in the cities.) The cool PDO (~1944-1978) may have had an effect on the cooling in the 1970s. The recent La Nina most likely affected last year’s summer, which dropped up to 3 degrees C from the year before. I also think that from the 50s in the country, weather stations were moved to the airports, also contributing to an increasing trend.

Also, as an exercise, I graphed the mean maximum summer (JJA) temperatures of 3 central England stations (CET (comp), Sheffield & Oxford) and compared them to the same measure for 3 remote Scotland stations (Lerwick, Stornoway & Tiree). The mean of the 3 England stations trended at 1.1C per century, whereas the Scotland temperature trended at only 0.2C per century. From what I have seen through my research, the UHI effect is not adequately accounted for in any adjustment.

Make of it what you will.

Very interesting to see.

No matter how well rationalized, one has to be concerned about spurious correlations. Correlations may have predictive value, but they may have little or no value for establishing causation, particularly when time is the independent variable.

They may also have *no* predictive value. In a time series, giving equal weight to past history as you give to current history is a recipe for forecasting disaster. Unless you can define a functional causal relationship for the values of the time series you really don’t have an idea of what is going to happen in the future.

Ask anyone that has tried to forecast where a new telephone central office should be located or where a new grocery store should be located. If you just use population growth data over time with no weighting as a predictor your prediction is quite likely to be wrong. Your long term trend may indicate growth while the more recent data indicates movement away from the long term trend (e.g. conversion from landlines to cell phones, population movement away from high density areas).

Focusing solely on CO2 as the main driver for the functional relationship of temperature and time is missing the forest for the trees!

Yes, implicit in the typical auto-correlation in time-series, the correlation tends to break down the farther one goes back in time. Note that I said, “may have predictive value.”

Of course, the start date is arbitrary.

Why not start with the MWP, the Roman Warm Period or the Minoan Warm Period?

Or would using the Holocene Climate Optimum as the starting point show the current warming period is nothing new?

Thanks for this posting and reply. As a geologist that specializes in detecting gold deposit trends, I am certain that not only should all data be studied, but that the investigator must update the trend interpretation every time new and relevant data appears. However, after studying all data not all needs to be included in updated thinking, some data needs to be set aside (but not destroyed).

Ron ==> I certainly agree that original data must never be destroyed for any scientific enterprise. Even if the data is determined or believed to have been faulty — it should be preserved and annotated as such… The future may have a differing opinion and need that original data again.

Gold Deposits are not expected to be time series, which require a slightly different approach.

Kip: as a geologist, it is ingrained to consider larger time frames. A favorite one in the climate sphere concerns a single dead rooted tree at Tuktoyaktuk on the NW Canada Arctic coast that was dated at 5000 yrs ago:

This is a white spruce, currently about 100 km north of the modern tree line, and another couple of hundred km north of today’s living white spruce (Yes! the very same species) of this size, where the average temperature would be some 6 to 8°C warmer than Tuk. Reckoning Arctic amplification at double the global avg T at the time suggests it was 3 to 4°C warmer globally 5000 yrs ago, with no runaway anything or Crisis at all. It would have been a nice quiet forest with pleasant bird noises and occasional soughing wind through the treetops.

Gary ==> Thanks for that — an interesting physical example.

The problem with that is that the end points could contain anomalies.

And, the presented slope(s) should always be presented with uncertainties and statistical significance, which is a rarity in climatology.
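As a rough illustration of what reporting a slope with its uncertainty could look like, here is a minimal sketch of the textbook standard error of a least-squares slope. The function name `ols_with_stderr` and both data series are made up for the example. The formula assumes independent residuals, which autocorrelated temperature series generally do not satisfy, so a real analysis would likely need a wider interval than this gives.

```python
import math

def ols_with_stderr(xs, ys):
    """Return (slope, standard error of the slope) for a least-squares line.

    Uses the classical formula se = sqrt(SSR / (n - 2) / Sxx), which
    assumes independent, equal-variance residuals.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ssr = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    return slope, math.sqrt(ssr / (n - 2) / sxx)

xs = list(range(24))
# Made-up data: an exact line, then the same line with alternating +/-0.8 deviations.
clean = [0.05 * x + 1.0 for x in xs]
noisy = [c + (0.8 if i % 2 == 0 else -0.8) for i, c in enumerate(clean)]

slope_clean, se_clean = ols_with_stderr(xs, clean)
slope_noisy, se_noisy = ols_with_stderr(xs, noisy)

print(f"clean: {slope_clean:.4f} +/- {se_clean:.4f}")
print(f"noisy: {slope_noisy:.4f} +/- {se_noisy:.4f}")
```

For the noisy series the fitted slope is smaller than twice its standard error, so on this simple criterion it could not be distinguished from zero even though the underlying line really does rise.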

You just nailed the problem with *all* temperature data graphs. No uncertainties presented – meaning you don’t know if the data lies within the uncertainty interval or not, thus you simply don’t know what the true trend is.

All temperatures should be given as “stated value +/- uncertainty” and then the uncertainty should be propagated into the results using standard procedures.

I disagree. Sometimes you already know “all the data” will be influenced by a known factor. All you will see is the effect of that factor. Hence, it would be much more informative to try and eliminate the influence of that factor.

In this case we know the PDO went negative in 2006 and turned positive in 2014. Hence, we would expect a positive trend based only on the influence of the PDO. In this case the only really meaningful data is what happened prior to and after the PDO switch. We were shown the 2015-2022 data. It’s a start. The 2006-2014 data would also be informative.

That is the “science” part of it! They manipulate the data to brainwash idiots!

If changing the endpoints changes the trend, then what you have is not a trend.

What you have is a curve fit of degree 1 with no predictive power.

From one month to the next, global T commonly jumps by a third of a degree. A handful more jumps up than down over a decade or even more so over a century is probably insignificant from a statistical point of view.

Scissor ==> I agree, but what about a more reality-based view? From a physical point of view (meaning the view of physics as a proxy for Reality)?

Yes, that’s a key question.

I know at least one physicist having the opinion that a number of contributors to the global climate system behave as nonlinear chaotic subsystems, which are difficult to model.

Scissor ==> Even the IPCC acknowledges that “The climate system is a coupled non-linear chaotic system, and therefore the long-term prediction of future climate states is not possible.”

– IPCC TAR WG1, Working Group I: The Scientific Basis

There you go.

Haven’t they re-defined that already? To fit their agenda, like everything else?

Jeff ==> No, the IPCC still acknowledges the fact — it is undeniable according to physics.

Some CliSci researchers try to say that they can predict probabilities of chaotic attractors — but it is nonsense.

Jeff Alberts said: “Haven’t they re-defined that already? To fit their agenda, like everything else?”

No. They’ve always maintained that predictions of exact states (meaning exact properties at exact times at exact locations) are nearly impossible in the long range. In fact, the data suggests that useful skill of predictions of exact states will always be limited to about 15 days regardless of the advancements in modeling. After that, predictions tend to focus on the movement of the attractors of the chaotic system, and so only a prediction of the average state (meaning the average of the properties over large spatial and temporal domains) is possible.

This is from a book Confessions of a climate scientist by Mototaka Nakamura.

This from a researcher who worked with and did research using GCM’s.

There are endless ways to have fun–or maybe it’s make mischief–with trends. One that I always point out is the way in which climate-textbook author Raymond Pierrehumbert used sea-level trends to accuse Unsettled author Steven Koonin of cherry picking.

Looks like very improperly done low-passing to me! There are so many better algorithms to do this kind of thing – one might look into A/V processing techniques. If you mutilated an audio or video signal (which in its digital form is just the same thing as any other time series of values; it doesn’t matter if what you sample are sea levels, temperatures, movements of a microphone diaphragm, or brightnesses of a row of pixels) in the way I regularly see done to climate-related “signals”, your eyes and ears would tell you in no time that THIS DOESN’T WORK, because the resulting sound and image would be distorted beyond recognition. Lowpassing without ringing, rippling and phase/time-distortion isn’t easy and certainly not doable with sophomore mathematics like fitting trend lines or trailing averages. No wonder that people see everything and nothing in the resulting graphs (just like you might, with enough imagination, hear anything from Beethoven to the noise of Niagara Falls in audio “processed” in such crude ways….)

Dr. Pierrehumbert based his argument on trends, so in this case plotting trend values was the appropriate approach.

Yes, I often use Gaussian or binomial filters when I’m looking at temperature time series, but temperature series aren’t audio signals, and, esthetics aside, I haven’t been able to come up with a satisfactory argument for the proposition that in the case of temperature series such filters are necessarily superior to, say, rectangular filters.

Joe, the problem with using rectangular filters is exemplified by this graph from my post “Sunny Spots Along The Parana River”.

It shows the normalized sunspot anomaly, along with the 11-year rectangular “boxcar” filter of the same data. The correlation is horrible, R^2 is 0.01, and many peaks are converted into troughs and vice versa.

So yes, almost ANY filter would be preferable to a boxcar filter in this case.

w.

Yes, I understand that the filtered signal doesn’t look like the original signal. But preferable implies some criterion; what do you want the filtered signal to tell you? If the question were what the eleven-year average is, then the rectangular filter would be precisely what you want.

True, such a filter is usually undesirable in signal processing because it leaves what the guys I’ve dealt with seem to call “side lobes” in the resultant spectrum. I’m told that this is bad for, e.g. frequency-division multiplexing.

But I’m also told that the zeroes in such a filter’s Fourier transform (a sinc function) may enable you to suppress unwanted periodicity in the original signal.

Hey, you probably see something I’m missing; I don’t profess to be a filter expert. I made my living as a lawyer, not an engineer. But different horses for different courses is the impression I took from guys who seemed to know this stuff.
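Both points in this exchange (peaks turning into troughs, and the zeroes of the boxcar’s transform) can be checked with a few lines of arithmetic. The sketch below is only an illustration with idealized cosine inputs, not the sunspot data; `boxcar_at_center` is a hypothetical helper that averages a cosine over an 11-point centered window at the moment the input is at a peak.

```python
import math

def boxcar_at_center(period, width=11):
    """Output at t = 0 of a centered `width`-point moving average applied
    to cos(2*pi*t/period). The input equals +1 (a peak) at t = 0."""
    half = width // 2
    total = sum(math.cos(2 * math.pi * k / period)
                for k in range(-half, half + 1))
    return total / width

gain_11 = boxcar_at_center(11)  # cycle length equals the window: cancels out
gain_8 = boxcar_at_center(8)    # somewhat shorter cycle: the sign flips
gain_30 = boxcar_at_center(30)  # much longer cycle: passes mostly intact

print(gain_11, gain_8, gain_30)
```

With these idealized inputs, a cycle exactly as long as the window is removed, a cycle somewhat shorter than the window comes out inverted (an input peak becomes an output trough), and a much slower cycle passes through with modest attenuation. A real cycle whose length wanders around 11 years would drift between these regimes, which may be part of what the graph shows.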

The only useful information conveyed by the analysis is that the sunspot cycle is approximately 11 years long, so you get an extreme moire effect through the filter. Think of filming a propeller at a frame rate that is almost an exact multiple of its rotation frequency.

Filters are primarily used to eliminate real “noise”. What is the noise you are hoping to eliminate and what is the signal you are hoping to isolate?

Is there something other than temperature you are hoping to see?

OMG, how appropriate. Someone who knows more than simple averaging. Looking for a “signal” in data that IS THE SIGNAL is confirmation bias of the worst kind.

Joe ==> Absolutely, using trends as propaganda tools is an old old practice.

I am not generally a fan of trends — as they obscure as much as they inform.

As I keep pointing out to those who think Monckton’s pauses and rapid cooling periods are relevant. Even Monckton points out that short term trends are bogus.

But just because some trends are cherry-picked to make a point doesn’t mean that all trends are meaningless, and there are ways of telling one from another. Is it significant? Does it represent a significant change? Does the short-term trend meet up with the end of the previous change, or does it create an unrealistic discontinuity?

The trend is not bogus, the value of the trend is. But as you say Monckton points that out meaning that you agree with him. But then you keep arguing with him about showing the trend, which can only mean you dislike Monckton and not the trend.

The trend Monckton is using is to show that CO2 does not control temperature. That is all. The longer the pause the less likely that growing CO2 is even a factor!

Could you point to a single example where Monckton says that?

I don’t know that Monckton is saying that. But I find it ironic that several of us on here who have actually attempted to model the UAH temperature using CO2 as a parameter expect the pause to continue for several more months.

The alarmist thesis is that CO2 is the dominant driver of temperature; therefore, one would expect that there would be a high correlation between the measured CO2 increase and the temperature. “Monckton’s Pauses” clearly demonstrate the ineffectiveness of monotonic annual increases of CO2 and that it is easily over-powered by other factors.

During the July 1991 total solar eclipse that I observed at Cabo San Lucas (Baja), during the 6+ minutes of totality, I measured a drop in air temperature of about 1 deg F per minute. Obviously, there are things that affect global temperature (such as clouds and aerosols) that have more influence than CO2.

The Monckton Pause appears to be inline with expectations to me.

We just need one more model and then the science will be completely settled.

Meanwhile China doesn’t give 2 runny sh1ts and uses fossil fuels like their lives depend on it.

Which it does and so do ours.

So you think CO2 is THE CONTROL KNOB along with whoever wrote the model? Look at the model difference with wo/CO2 and w/CO2!

Looks like tuning to me. How does the projection from 5 years ago compare to what it shows now? What did they change to show cooling?

No. I don’t think CO2 is THE control knob. The data is not consistent with that hypothesis. However, the data is consistent with it being A control knob.

A control knob 😉

That was funny

How do you know it isn’t an “effect” from other control knobs?

That model does not tell you if CO2 is driven by other control knobs. What the model does is show that increasing CO2 is not inconsistent with the Monckton Pause.

It shows that the models are wrong.

bgwxyz has a big audio soundboard of control knobs…

Can you post the objective criteria by which you claim the model I posted above is wrong?

Like playing an electronic synthesizer.

If CO2 isn’t THE control knob, why is there so much effort and money being spent on controlling it?

Note that CO2 is just one of 4 explicit parameters. The other parameters are composites of several different physical measurements, chief among them being temperature.

The divergence supposedly due to CO2 doesn’t show up until about 1994. Mauna Loa measurements have been taken since 1958, and CO2 has supposedly been impacting temperature since the late 18th century. It is weak support for your claim.

My claim is that the Monckton Pause is not inconsistent with CO2 being a control knob. It is certainly inconsistent with CO2 being the control knob though.

Sorry, I don’t engage in policy discussions. I’m only interested in the science.

Lol the science 😉

Kinda like the gene therapy shot science?

I didn’t bring up any issues of policy. It looks like an attempt at deflection on your part instead of responding to the facts of science I pointed out that you had wrong.

CS said: I didn’t bring up any issues of policy.

You asked “If CO2 isn’t THE control knob, why is there so much effort and money being spent on controlling it?” That is a question that directly relates to public policy. I don’t participate in those kinds of discussions.

“The alarmist thesis is that CO2 is the dominant driver of temperature; therefore, one would expect that there would be a high correlation between the measured CO2 increase and the temperature”

If true, it’s the dominant driver of temperature on the scale of the next century or so. That doesn’t mean over a few years other factors, such as ENSO don’t have a bigger effect.

The correlation between CO2 and temperature has not changed over this “pause”. If anything it’s gotten stronger.

“Obviously, there are things that affect global temperature (such as clouds and aerosols) that have more influence than CO2.”

Well, yes, turning off the sun will have a bigger effect than CO2. But what happened after the eclipse? Did temperatures remain 6°F lower or did they eventually return to equilibrium?

After the roosters quit crowing, the sun decided to grant their plea, and returned the air temperature to what it had been.

The point of the story is how quickly air temperatures respond to insolation. It doesn’t take 7 years to restore a perturbation.

This statement from CS is very important:

“the ineffectiveness of monotonic annual increases of CO2 and that it is easily over-powered by other factors.”

We are trying to see if one thing co-varies with another. If one data set has a monotonic function, you cannot theoretically carry out a covariance analysis (“covariance” with a small “c”).

We are trying to examine whether “planet temp” covaries with “co2.” A more complete way to say this is: if co2 goes up, does planet temp go up, and if co2 goes down, does planet temp go down?

We can ask that. But to answer it, we have to have both ups and down in “planet temp,” and also in “co2.” –This is an “assumption” that must be met.

For our examinations of “does planet temp go up or down as co2 goes up or down,” we NEVER have co2 going down!

So, these time-based analyses of the relation between co2 and planet temp are not valid.

Mauna Loa goes back to 1960. Has been monotonic the whole time.

If we trust ice core estimates of co2 back to the last Ice Age, then we have a chance at data to suit the analysis. And, (proxy-based) temps and co2 trends do not really co-vary at all. So, the temp-co2 relation hypothesis is dead before it starts. co2 influence upon planet temp is so modest, if it exists, it cannot be discerned out of the other much greater influences – not even with PCA as was done in MBH98.

https://gml.noaa.gov/ccgg/trends/

+100

Actually, we do every Summer and Fall. It did during 2020, with the April decline being -18% of anthro’ emissions compared to 2019. However, the 2020 seasonal variations in CO2 were indistinguishable from 2019 and 2021. All of those years include the “Monckton Pause.”

One can unequivocally say that a decline in CO2 does not result in the immediate response seen with either an eclipse, thick clouds passing overhead, or the sometime effect of dense contrails.

https://wattsupwiththat.com/2021/06/11/contribution-of-anthropogenic-co2-emissions-to-changes-in-atmospheric-concentrations/

https://wattsupwiththat.com/2022/03/22/anthropogenic-co2-and-the-expected-results-from-eliminating-it/

No kidding, we had the 4th coldest April ever.

I often think of the “pre industrial” start point for the ‘climate’ nonsense as the ultimate cherry pick, one which allows continual “trend” obfuscation.

Just think of a roller coaster rising vs. falling measured relative to its start point. As it descends the first hill, at any point one could argue that the “long term trend” is “still up,” even as the coaster is going down.

This will be the climate fascist playbook until the inevitable decline of “average temperature,” as meaningless as that is, can no longer be hidden by “adjustments.”

A trend is a trend, is a trend, but the question is, will it bend? Will it alter its course, through some unforeseen force and come to a premature end?

~Sir Alec Cairncross, economist

Trends have only 2 ways to go – up or down. They might be totally flat, but the chances for this are infinitely small with any random walk. Predicting that the climate should be warming because of CO2 naturally has a 50% chance of materializing. It is not the most compelling evidence.
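The coin-flip intuition for a driftless random walk can be checked by simulation. The sketch below is an assumption-laden toy (steps of exactly +1 or -1, 200 steps per walk, 2000 walks, a fixed seed, and helper names invented for the example); it says nothing about whether temperature actually behaves like a random walk.

```python
import random

def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

def random_walk(n_steps, rng):
    """Driftless walk: each step is +1 or -1 with equal probability."""
    level, path = 0.0, []
    for _ in range(n_steps):
        level += rng.choice((-1.0, 1.0))
        path.append(level)
    return path

rng = random.Random(2022)  # fixed seed so the run is repeatable
trials = 2000
xs = list(range(200))
upward = sum(1 for _ in range(trials)
             if ols_slope(xs, random_walk(200, rng)) > 0)
fraction_up = upward / trials

print("fraction of walks with a positive fitted trend:", fraction_up)
```

The fraction should land near one half, and an exactly flat fit is rare, which is the point being made above about how little a correct guess of the sign alone demonstrates.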

And then you have this largely ignored issue. We have something that explains the 5 decadal warming trend, and it is NOT CO2. Moreover, if we take a closer look at the CO2 hypothesis, it is easy to see it is not even working, as overlaps were not considered in climate sensitivity estimates. Including overlaps, ECS simply collapses and turns negligible. Something even MODTRAN shows.

I can only recommend paying more attention to the contrail issue.

https://greenhousedefect.com/contrails-a-forcing-to-be-reckoned-with

Applying linear trend lines to cyclical data only works if the start and end points are at the same point in the cycle. When the system has multiple or irregular cycles, as in this case, or unknown cycles, an applied linear trend line can never be correct.
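A small numerical experiment shows how strongly the fitted line depends on where a window falls within a cycle. This is a sketch with a single idealized sine wave and an assumed 11-year period; the names `PERIOD` and `window_slope` are hypothetical. The underlying signal has no long-term trend at all.

```python
import math

def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

PERIOD = 11.0  # assumed cycle length in years, for illustration only

def window_slope(start, end, steps=200):
    """Fit a line to sin(2*pi*t/PERIOD) sampled evenly on [start, end]."""
    ts = [start + (end - start) * i / steps for i in range(steps + 1)]
    ys = [math.sin(2 * math.pi * t / PERIOD) for t in ts]
    return ols_slope(ts, ys)

rising = window_slope(-PERIOD / 4, PERIOD / 4)      # trough to peak
falling = window_slope(PERIOD / 4, 3 * PERIOD / 4)  # peak to trough
ten_cycles = window_slope(0.0, 10 * PERIOD)         # ten whole cycles

print(rising, falling, ten_cycles)
```

The two half-cycle windows give slopes of opposite sign from the same trendless signal. The ten-cycle window gives a slope that is far smaller, though in this discrete fit still not exactly zero, so even whole-cycle windows leave a small residual that depends on the phase at which the window starts.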

This is my big gripe. The parts are pretty much cyclical. From orbital, sun, currents, precession, etc. They all combine into a complex of what is called climate.

Trying to do a linear regression on a cyclical phenomenon leads to HUGE uncertainty levels.

Sandwood ==> Quite right “as in this case, or unknown cycles” or even simply chaotic around a long-term level.

And, with multiple cyclical drivers with different periods and phases, one ends up with constructive and destructive interference, meaning that only by Fourier decomposition can anything be deduced about long-term trends.

Clyde ==> But if trends are less than 10% or Range, why would one think it was Physics Real?

It is not real. From the other thread on averaging intensive variables, the global average temperature just doesn’t relate well when you look at enthalpy. I’ve seen too many projections from people I trust for places like Iceland, Greenland, Japan, U.S., South Africa where individual stations show no warming. If CO2 is well mixed then everywhere should be warming at least at a constant rate, even if the rates differ.

For every place like the image I took from another thread, there must be another place that has a lot of warming. Why do none of the CAGW folks here ever post any local graphs that show really high warming? They would need one, isolated from UHI, with what, at least 3 degrees of warming? Where does that exist using raw station data?

You typed an “r” for an “f” again. 🙂

Ten-percent is a relatively small change, but can be measured and be meaningful with high precision data. The problem is that it is not uncommon to observe an uncertainty of +/-50% in climatology measurements — when they even bother to cite the uncertainty.

Just do average temperature in Kelvin plotted for 100 years. You won’t even see a trend.

Go back and read what his purpose is. When you find a deterministic functional relationship for CO2 vs temp, post it.

Like this SST one?

Substitute U.S. Postal rates for sea surface temperature…

Macha ==> No idea what you are getting at here, but this looks to be a sad case of severe curve fitting/matching of arbitrary lengths of two disparate data sets.

Macha – the vertical axis is not CO2; it is “time.”

Prove me wrong.

Plotting this way does not get rid of the confounder of time.

Plot Mauna Loa x time. Same graph.

As Del Monte Carlo says: you can also substitute postal rate.

There is no up-and-down in time; time just goes up and up. Also, no up-and-down in postal rate; it just goes up, and pretty monotonically.
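The confounding role of time can be illustrated with two invented series that have nothing in common except that both rise steadily. In the sketch below (all names and numbers are hypothetical, and neither series is CO2, temperature or postal rates), the levels correlate almost perfectly, while the period-to-period changes do not correlate at all.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def diffs(values):
    """Changes from one period to the next."""
    return [b - a for a, b in zip(values, values[1:])]

N = 41
wiggle_a = [0, 1, 0, -1]  # made-up fluctuation for series A
wiggle_b = [1, 0, -1, 0]  # made-up fluctuation for series B, a quarter cycle off
series_a = [t + wiggle_a[t % 4] for t in range(N)]
series_b = [t + wiggle_b[t % 4] for t in range(N)]

corr_levels = pearson(series_a, series_b)
corr_changes = pearson(diffs(series_a), diffs(series_b))

print("correlation of levels: ", corr_levels)
print("correlation of changes:", corr_changes)
```

The high correlation of the levels comes entirely from the shared upward march with time. Comparing changes, or detrending both series first, is one common way to ask whether the fluctuations themselves move together; it is a different question from the one the level-versus-level plot answers.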

Lit ==> Ah, but your 200 years is just as arbitrary as the 17-year data set or its 5-year sections…..

Lord Monckton, take note!

TheFinalNail ==> Are you referring to his “The Pause is now X years long”? You might be right, but he is calculating the trend backwards (present to past) and telling us exactly how many years the trend has been flat….. He is not even implying that the whole is the same as the part….

Not much different from any statement about a time period of a similar nature — like “It hasn’t rained in three months and four days.” This type of statement ends when the rains come (or the trend changes).
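For readers who want to see the backwards calculation concretely, here is a minimal Python sketch. It assumes the "pause" is the longest stretch ending at the latest data point whose ordinary least-squares slope is zero or negative. That is one reading of the description above, not a confirmed statement of Lord Monckton's exact procedure. The names `ols_slope` and `pause_length` and the toy series are hypothetical.

```python
def ols_slope(values):
    """Least-squares slope of values against their index 0..n-1."""
    n = len(values)
    sum_t = sum(range(n))
    sum_y = sum(values)
    sum_ty = sum(t * y for t, y in enumerate(values))
    sum_tt = sum(t * t for t in range(n))
    return (n * sum_ty - sum_t * sum_y) / (n * sum_tt - sum_t ** 2)


def pause_length(anomalies):
    """Length of the longest window ending at the last point with slope <= 0.

    Assumption: this is the 'count backwards from the present' reading of
    the pause. Returns 0 if every trailing window trends upward.
    """
    n = len(anomalies)
    for start in range(n - 1):  # earliest start first, so longest window first
        if ols_slope(anomalies[start:]) <= 0:
            return n - start
    return 0


# Toy data (not real anomalies): rises for four steps, then falls back.
print(pause_length([0, 1, 2, 3, 2, 1]))  # 5
```

Like the "no rain for three months" statement, the result is tied to the end date: append one warm value and the reported length can drop to zero.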

Actually, he has it down to the month, at the moment. A trend is still a trend, whether you count backwards or forwards from or to a particular point. So your advice still applies to Lord M’s monthly UAH ‘pause’ updates: too short a period and highly dependent on start and end dates.

TFN,

I use this method because it helps detect a change in trend.

Like, Australia UAH LT shows no linear least squares fit warming for the last 10 years.

If we are in a transition from warming to cooling, we would expect this.

It indicates when the transition started, so we can examine what causative variables might have changed then.

There is no suggestion that coming temperatures will rise, fall or stay flat – the data are incapable of predicting the future, they merely observe the past. Geoff S

Time-series are often auto-correlated, so at least short-term predictions are more reliable than random guesses.

The Final ==> No, really, a PAUSE is a temporary phenomenon — thus it must have a start and end (even if it is ‘to-date’) and is expressly only the part of some greater whole (in time). A pause, by definition, is a short and temporary cessation of something.

You would be quite right if Lord Monckton had claimed that “Global Warming stopped 7 years ago” (or whatever). His claim is only that rise in temperature (UAH) has temporarily ceased rising since such-and-such a date.

Quite right. I have had no reluctance to criticize Lord Monckton’s ravings on other subjects, but his pauses are what they are, and he (largely) leaves us to make of them what we will.

To me they militate against the proposition that natural variation is minor.

He uses their math to show the folly of their theory.

“No, really, a PAUSE is a temporary phenomenon — thus it must have a start and end (even if it is ‘to-date’) and is expressly only the part of some greater whole (in time).”

Except he still keeps talking about the old pause, and that ended sometime after the new one began.

“His claim is only that rise in temperature (UAH) has temporarily ceased rising since such-and-such a date.”

UAH warming rate from 1979 to the start of pause was 0.11°C / decade.

UAH warming rate from 1979 to current date is 0.13°C / decade.

I’m not sure what the evidence is that warming has temporarily ceased.

We were told that if a pause occurred and lasted over 15 years then all the models were wrong. Because models never predicted a pause that long.

When the pause lasted longer than required we got excuses. The models were still claimed to be valid even after alarmists said they should not be.

If your car engine has suddenly stopped on more than one occasion, would you conclude that there was some problem that needed to be discovered and fixed to increase the reliability?

The hiatus is an indicator that because there is not a close coupling between CO2 and temperature, the models are defective and are unreliable for periods of several years running.

Several months ago I tested the hypothesis that models do not predict long pauses like the current one.

Using the CMIP5 model data from the KNMI Explorer and the Monckton method the data suggests that about 21% of the months should be included in a pause lasting at least 90 months. The UAH observations showed 26%. That’s not a bad prediction.

Using my trivial 3 component model above and the Monckton method the data suggests that about 26% of the months should be included in a pause lasting at least 90 months. Again, the observation is 26%. The model is in excellent agreement with the observations.

The problem is that the models turn into y = mx + b equations after a few years. Run’em for 100 years, 200 years, or 10000 years – the models will predict that the earth *will* turn into a cinder, no more ice ages. No pauses, no bending of the projection, no nothing. *That* is not a good model.

This is true, and at least partly because relations between “forcings” have to include geometric functions – tipping points. Water heats and heats and heats – and then turns into steam.

If you do not model at least one “tipping point” correctly, one influence is going to be exaggerated, and it is only a matter of time until it runs crazy.

So, you add bounds to at least one of the relations. Well, then you either run off the chart another way, or are limited to an upper and lower bound forever, always to be hit and never exceeded.

Good luck.

Which one of the CMIP5 members shows Earth turning into a cinder in 100, 200, or 10000 years? Can you post a link to the data you are looking at so that I can download and verify it?

How about this graph: (see attached)

Do you see any bending in the 8.5 or 6.0 scenarios? Even the 4.5 scenario shows continual growth although it does show the slope changing in the out years. The only one that shows growth stopping is the 2.6 scenario, the one no one believes will ever happen (see India and China for examples).

He lives in De Nile.

None of those show the Earth turning into a cinder.

I don’t really have much choice at this point but to dismiss the claim that CMIP5 shows the Earth turning into a cinder.

When they continue going up with no bound then the only conclusion is that the earth *WILL* turn into a cinder. That’s what the “consensus” climate scientists are predicting. We will soon reach the tipping point where we can’t stop the warming and it will kill us all! Just ask John Kerry!

TG said: “When they continue going up with no bound then the only conclusion is that the earth *WILL* turn into a cinder.”

Where do you see that any of the CMIP5 models show the global average temperature continuing to go up indefinitely to the point that Earth turns into a cinder? All I’m asking for is a link or some kind of reference here. I’ll give you one last chance here. After that I don’t have any other option but to dismiss the claim.

BTW…how would you even be able to assess whether the Earth turns into a cinder using the global average temperature anyway? According to Essex et al. it does not actually exist.

I gave you a graph. In the out years both RCP 8.5 and 4.5 are nothing more than y = mx + b linear trends. The termination of the runs is determined by computing limits more than by the trend ending.

“After that I don’t have any other option but to dismiss the claim.”

That’s one of your main tactics. Argument by Dismissal, an argumentative fallacy. You simply can’t show where the 8.5 and 4.5 runs bend down in any way, shape, or form. So you just dismiss any argument that says the trend lines from the models will continue indefinitely until the oceans boil!

“BTW…how would you even be able to assess whether the Earth turns into a cinder using the global average temperature anyway? According to Essex et al. it does not actually exist.”

It doesn’t exist. That doesn’t keep the climate models from saying it does and using it to forecast the end of the world.

I count 4 variables plus a constant.

Not knowing the details of how you did your analysis, I can’t provide an informed comment.

Yes. Typo. 4.

I performed the analysis using the Monckton method.

+100!

At those rates, in ten decades, you still wouldn’t notice a change in temperature.

The pauses continue to be a big problem for the climate cult. It’s now pretty obvious the breakpoint was due to the PDO phase change in 2014. When you look before and after that obvious warming influence you see nothing. The only possible conclusion is we’ve gone 25 years with no evidence for man made warming.

https://woodfortrees.org/plot/uah6/from:1997.5/to/plot/uah6/from:1997.5/to:2014.5/trend/plot/uah6/from:2015.5/to/trend/plot/uah6/from:2014.5/to:2015.5/trend

You mean if we leave out the periods of natural warming but keep in the periods of natural cooling we can get a cooler temperature series? Never thought of that.

Why don’t you post the reason for examining this short trend so everyone can see you are in error!

CO2 is not a control knob for temperature if temps don’t increase along with increasing CO2.

Why do you always want to ignore the uncertainty in these trend lines?

Funny that you would accuse Jim of that! The uncertainty is taken into account when determining the statistical significance.
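As a point of reference for this exchange, here is a minimal Python sketch of how a least-squares trend and its textbook standard error are computed. It assumes independent residuals, which is a simplification: temperature series are usually autocorrelated, so the real uncertainty of a short trend is typically larger than this naive figure. The function name `ols_trend` and the toy numbers are hypothetical, and the sketch covers only the statistical fit, not instrument measurement uncertainty.

```python
import math


def ols_trend(t, y):
    """Return (slope, naive standard error of the slope) for y against t."""
    n = len(t)
    t_mean = sum(t) / n
    y_mean = sum(y) / n
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    # Sum of squared residuals about the fitted line.
    sse = sum((yi - (intercept + slope * ti)) ** 2 for ti, yi in zip(t, y))
    # Assumes independent residuals; autocorrelation would widen this.
    stderr = math.sqrt(sse / (n - 2) / sxx)
    return slope, stderr


# Toy data only: four noisy points.
slope, stderr = ols_trend([0, 1, 2, 3], [0, 2, 1, 3])
print(f"slope = {slope:.2f} +/- {2 * stderr:.2f} (roughly 2 sigma)")
```

With few points and noisy data the interval is wide relative to the slope, which is the usual objection to drawing conclusions from short windows in either direction.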

No kidding, this is a troll comment if there ever was one.

Yes, ironic. Jim, Tim and Carlo have spent years trying to claim that UAH data is completely unreliable because uncertainty increases the more observations you make, that it’s impossible to know what any trend is, and now won’t even accept that it’s possible to average temperature.

Yet will then insist that a carefully selected flat trend line covering a few years of highly variable data, is somehow so certain you can use it to prove anything you want. Last discussion I was being told that there is zero uncertainty in the trend, whilst at the same time being told the true trend over the 7 year period could be at least ±3°C / decade.

You’re insane.

Yep!

In other words you get pissed when someone else uses the data you look at as religious dogma in order to disprove the CAGW theory!

ROFL!!

I don’t know how many times I have to tell you this before it penetrates your understanding, but I do not regard UAH data as perfect. I don’t regard any data as perfect but UAH least of all. The only reason I go along with UAH data here is because up to recently it was the only data set regarded as acceptable.

Just because I don’t accept the ±1.5°C uncertainty you want to pluck out of the air, does not mean I regard Dr Spencer’s work as religious dogma.

“Just because I don’t accept the ±1.5°C uncertainty you want to pluck out of the air, does not mean I regard Dr Spencer’s work as religious dogma.”

The only data I accept as minimally usable is degree-day data for cooling and heating. That data is actually in use by professionals to size HVAC systems and by agricultural scientists to advise farmers. The users of those HVAC systems and of the ag advice would quickly abandon the professionals and the ag scientists if they were continuously wrong. Climate scientists have no real users except governments who don’t care if the climate scientists are wrong as long as their predictions lead to increased power in the hands of the politicians.

Even HDD/CDD has problems. It doesn’t tell you the enthalpy. It’s why you could have the same HDD/CDD values in Phoenix and Miami on the same day. Humidity and pressure aren’t considered. Until the climate scientists are forced to use actual physical attributes that can be compared I will remain doubtful of their conclusions. That doesn’t mean I won’t use their own data against them.

TG said: “The only data I accept as minimally usable is degree-day data for cooling and heating.”

Back in Kip’s previous article you said the average temperature is meaningless and useless and even advocated for the position of them being non-existent. Yet you find HDD and CDD usable despite them being dependent on a meaningless, useless, and non-existent metric?

“The only data I accept as minimally usable is degree-day data for cooling and heating.”

But they are based on the same temperature readings as any of the land based global sets. Do you use CDDs and HDDs as a global average or just for specific locations?

“But they are based on the same temperature readings as any of the land based global sets. Do you use CDDs and HDDs as a global average or just for specific locations?”

HDD and CDD are degree-days, not degrees. Still, I do *NOT* add them up to use them as a global average. Have you *EVER* seen me do that? Be honest!

What I do is to use them to evaluate if HDD/CDD is going up or down at a location. I can then assign them a cooling/warming metric for both minimum and maximum temps and can compare those metrics over a region. I try to use stations that are not located at an airport or in the middle of an urban heat complex.

If I find a region (e.g. central Peru) that shows mostly cooling/heating then I can ask “where is the offsetting region that would cause global warming?”. If I find a 0.5C cooling slope then there needs to be a 1.0C warming slope somewhere to offset it if we are to believe in “global” warming.

There is absolutely no reason why climate scientists could not do exactly the same. More complicated? Sure! So what? More believable result? Sure!

Doing minimums (HDD) and maximums (CDD) gives FAR more knowledge than the hokey, unphysical, global temperature mid-range average value. I suspect that is one reason why climate science refuses to abandon their current method. It would make it easier to hold their feet to the fire over predictions of the future!

Can you imagine trying to make a model to spit out these numbers instead of a single global average?

“Can you imagine trying to make a model to spit out these numbers instead of a single global average?”

Actually I can. But it wouldn’t fit the agenda!

TG said: “What I do is to use them to evaluate if HDD/CDD is going up or down at a location.”

According to you HDD/CDD are dependent on a useless and meaningless quantity. So how exactly are you finding meaning and use out of them?

A lie disguising a strawman.

CM said: “A lie disguising a strawman.”

If I’m mistaken then so be it. I assure you it is not intentional. My statement is based on the comments in Kip’s previous article in which TG advocated for the position that the average of temperature observations is meaningless and useless. Is that not TG’s position?

TG, what say you? Is your position that the average of temperature observations is meaningless and useless or not?

“TG, what say you? Is your position that the average of temperature observations is meaningless and useless or not?”

You don’t even know what the dimensions of HDD and CDD are, do you?

They are *NOT* averages. They are integration of the temperature curve – i.e. they are the area between the temperature curve and a base set line. They are an AREA, not a “degree”.

TG said: “They are *NOT* averages.”

I never said they were averages. I said they depend on temperature averages. Specifically the formula is HDD = 65 F – (Tmin + Tmax) / 2 or CDD = (Tmin + Tmax) / 2 – 65 F. [1]
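The formula quoted above can be written out as a short Python sketch. It uses the 65 °F base from the comment and clamps negative results to zero, which is how degree days are conventionally reported. The function name `degree_days_mean_method` is hypothetical.

```python
def degree_days_mean_method(tmin_f, tmax_f, base_f=65.0):
    """Return (HDD, CDD) for one day from the daily min and max in °F.

    Uses the mid-range value (Tmin + Tmax) / 2 as the daily figure.
    Negative differences are clamped to zero (a conventional assumption).
    """
    mid_range_f = (tmin_f + tmax_f) / 2.0
    hdd = max(base_f - mid_range_f, 0.0)
    cdd = max(mid_range_f - base_f, 0.0)
    return hdd, cdd


print(degree_days_mean_method(40.0, 60.0))  # (15.0, 0.0)
```

Only two readings per day enter the calculation, so two days with very different temperature curves but the same minimum and maximum get the same value.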

bgwxyz throws another wad at the wall, hoping it will stick.

You think if you can find just a single example of averaging temperatures then the entire Essex paper must be wrong, so you go around throwing up nonsense like this hoping it will stick.

Yep.

“According to you HDD/CDD are dependent on a useless and meaningless quantity. So how exactly are you finding meaning and use out of them?”

You *still* haven’t learned what an HDD/CDD is, have you? You *really* need to go learn about them. If they were meaningless then HVAC professionals would not be using them to size installations.

TG said: “You *still* haven’t learned what an HDD/CDD is, have you?”

I learned about degree days long ago. According to the National Weather Service degree days are “the difference between the daily temperature mean, (high temperature plus low temperature divided by two) and 65°F.”

“According to the National Weather Service degree days are “the difference between the daily temperature mean, (high temperature plus low temperature divided by two) and 65°F.””

That is the *old* way of doing it — and it is wrong, wrong, wrong. I’m not surprised to see the government still using an old, wrong definition.

(Tmax + Tmin)/2 is *NOT* the daily temperature mean. It never has been. It’s just a convenient relationship to use with no regard to its physical accuracy.

The mean of the daytime temp is .63Tmax. The mean of the nighttime temp is ln(2)/k where k is the decay factor. So what is the actual mean of the daily temperature profile? It is *NOT* (Tmax + Tmin)/2!

The process used today is to integrate the temperature curve since most useful measurement stations record in minutes if not more often. This finds the actual area under the temperature curve in degree-days.

That is where the .63 factor comes from for the day and the ln(2)/k comes from for the night.

If you don’t have a quasi-smooth curve for either the day or night you can still do a numeric integration.
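A numeric integration of this kind can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the procedure of any particular data provider: it takes timestamped readings, computes how far each reading sits below the base temperature (zero if above), and applies the trapezoidal rule to get the area in degree-days. The function name `hdd_by_integration`, the 65 °F base, and the sample readings are hypothetical.

```python
def hdd_by_integration(times_h, temps_f, base_f=65.0):
    """Heating degree-days by trapezoidal integration.

    times_h: sample times in hours, ascending.
    temps_f: temperature readings in °F at those times.
    """
    degree_hours = 0.0
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        # Deficit below the base at each end of the interval, never negative.
        d0 = max(base_f - temps_f[i - 1], 0.0)
        d1 = max(base_f - temps_f[i], 0.0)
        degree_hours += 0.5 * (d0 + d1) * dt
    return degree_hours / 24.0  # degree-hours to degree-days


# Three coarse readings over one day: cold, warm, cold.
print(hdd_by_integration([0, 12, 24], [45.0, 75.0, 45.0]))  # 10.0
```

For the same day the mid-range calculation gives 65 − (45 + 75)/2 = 5 degree-days, so the two approaches can differ by a wide margin. With readings every few minutes instead of every twelve hours the integral tracks the actual curve far more closely.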

In essence you are using the argumentative fallacy of Appeal to Tradition. Stop it.

Can you post a link to the literature 1) showing the new method and 2) showing that NWS retrospectively analyzed the data and replaced the old values with the new values in the official reporting? I’m very interested in reviewing the materials you can provide.

go here: https://www.degreedays.net/calculation

“Some other sources (particularly generalist weather-data providers for whom degree days are not a focus) are still using outdated approximation methods that either simplistically pretend that within-day temperature variations don’t matter, or they try to estimate them from daily average/maximum/minimum temperatures. We discuss these approximation methods in more detail further below, but, in a nutshell: the Integration Method is best because it uses detailed within-day temperature readings to account for within-day temperature variations accurately.”

Like I told you, the government still uses the old method of finding the mid-range temps (Tmax+Tmin)/2 and comparing that with the set point temperature. I don’t know of any reputable engineer that still does that.

go here: https://www.researchgate.net/publication/299495512_An_integral_model_to_calculate_the_growing_degree-days_and_heat_units_a_spreadsheet_application

go here (this site only offers the integration method): https://energy-data.io/energy-tools/degree-day-calculator-free/

go here: https://www.researchgate.net/publication/262557717_Comparison_of_methodologies_for_degree-day_estimation_using_numerical_methods

I shouldn’t have to do this research for you. Having to do so only indicates to me that all you are interested in on this subject is being a troll.

TG said: “go here: https://www.degreedays.net/calculation”

Yep. That’s what I thought you were going to reference.

The integration technique still uses average temperatures.

Nevermind that each temperature observation is itself an average over a 5 minute period. See the ASOS User Guide for details. In other words, all methods use an average temperature in some way shape or form whether you understood that or not.

So why did you not just come right out and say what you found on the internet, and instead tried to lay what you think is a trap, mr. disingenuous?

WOOHOO! bgwxyz takes another victory lap!

As usual, you are about as genuine as a three-dollar bill.

And your “nevermind”? This is averaging data from ONE instrument at ONE location. Does your hallowed ASOS guide tell you how to propagate the measurement uncertainty in this procedure?

Try again to Keep the Rise Alive.

The point is this. I don’t think you, Jim, Tim, and Kip are as convinced of the proclamation that averaging temperatures is meaningless and useless as you let on. After all, we’re being told that HDD/CDD are meaningful and useful despite them being dependent on averaging temperatures. And it’s not just HDD/CDD. We’re being told that the particular soil moisture product used in the comments here is meaningful and useful enough to determine droughts despite it being dependent on averaging temperatures. It is a contradictory position. I’ll even argue that it is a hypocritical position since we are all being told that we shouldn’t do it yet the contrarians here not only do it themselves but defend their use vigorously.

You label anything you please “averaging”! Go lecture someone else.

CM said: “You label anything you please “averaging”! Go lecture someone else.”

Not at all. I only label the operation Σ[x_i, 1, n] / n as “averaging” and nothing more.

On the contrary, it is Essex et al. that were discussing how there are an infinite number of ways to average a set of values only one of which fits the canonical formula above. So you tell me…who’s labeling anything they please as “averaging”?

Liar, go read the GUM H3 again. YOU!

bdgwx said: “I only label the operation Σ[x_i, 1, n] / n as “averaging” and nothing more.”

CM said: “Liar, go read the GUM H3 again. YOU!”

I did as you requested. It still says t_bar = Σ[t_k, 1, n] / n which is the same as the last time I read it and the time before that.

Went to see the captain, strangest I could find

Laid my proposition down, laid it on the line

I won’t slave for beggar’s pay, likewise gold and jewels

But I would slave to learn the way to sink your ship of fools

Ship of fools sail away from me

It was later than I thought when I first believed you

But now I cannot share your laughter, ship of fools

The bottles stand as empty now, as they were filled before

Time there was and plenty, but from that cup no more

Though I could not caution all, I still might warn a few

Don’t lend your hand to raise no flag atop no ship of fools

Ship of fools sail away from me

It was later than I thought when I first believed you

But now I cannot share your laughter, ship of fools

Here is the deal. Many of us have been calling GAT meaningless for a long, long time. Do you think we made this decision out of a clear blue sky? Most of us have scientific educations and have dealt with applying these in our careers to solve problems. We don’t just jump when someone pontificates without having some mathematics to back up their assertions.

My first recognition was when no one was ever quoting a standard deviation to go along with the distributions they were using to calculate a mean. Why? Still don’t have an answer, not even from you! Why? I would never have passed statistics if I had done that!

My second recognition was that only temperatures were being averaged. Temperatures NEVER tell you about the amount of heat in a substance, i.e., enthalpy. The amount of HEAT tells you the energy held by a substance. Mechanical and electrical engineers spend many hours in class learning about this. How do you think a steam boiler in a power plant or a heating plant is evaluated to determine how much energy can be extracted when turning a turbine or driving a process? Trial and error? Guess and by golly? Dude, this is all calculated before physical design is started. These are not a joke. Very, very high temperatures and pressures are involved. Personal and equipment safety is paramount.

My third recognition was that unreasonable uncertainties were being quoted based upon measurements that were AT BEST taken to an uncertainty of ±0.5 degrees. The predominant figure quoted was actually the SEM divided by √N. This computation is not defined in statistics. What is divided by the √N is the POPULATION standard deviation and not the standard deviation of the sample mean.

My fourth was that sampling theory was not being applied correctly. Review the requirements of the different sampling theories and see if they are being met.

Wise up and take some calculus based physics and thermodynamic classes and follow up with metrology classes.

“After all, we’re being told that HDD/CDD are meaningful and useful despite them being dependent on averaging temperatures.”

That is the OLD method, called the Mean Temperature Method, not the new method called the Integration method. If you had bothered to read the entire link I gave you rather than trying to cherry pick the first thing you came across you would realize that!

The Integration Method DOES NOT AVERAGE ANYTHING. I don’t know why you and bellman are so intent on defining integration as averaging. IT IS NOT!

“droughts despite it being dependent on averaging temperatures.”

Droughts are dependent on temperature? Droughts are typically defined by the amount of water in the soil (an extensive property) at specified depths plus the amount of surface water in lakes, ponds, streams, rivers, etc, again an extensive property, as well as precipitation, i.e. the volume of rain, again an extensive property.

Do you and bellman live in the land beyond the looking glass?

“yet the contrarians here not only do it themselves but defend their use vigorously.”

You are looking through a fun house mirror! You can’t even define reality (e.g. droughts) correctly!

You nailed it!

I cannot for the life of me understand how they can willy-nilly ignore non-trivial details and context. This is the domain of religion and pseudoscience, not science and engineering.

You do realize that “at a distance” is vastly different than “an average over 5 minutes at the same place and with the same device”, right?

Go find an actual physics or thermodynamic reference for averaging intensive measurements of different objects at a distance to obtain a real physical measurement.

The GUM does not deal with this separation of physical quantities and qualities. It was never intended to. It was written for people trying to convey uncertainty in MEASUREMENTS, not for what appropriate measurements are and how they should be combined.

I know you are trying to destroy the belief that intensive values can not generally be averaged to obtain a real physical quantity. To do so you need to deal with physics, chemistry, and thermodynamic subjects to find references that deal with these issues. Uncertainty in MEASUREMENTS is not the appropriate choice of subject matter.

He relies on sophistry instead.

JG said: “Go find an actual physics or thermodynamic reference for averaging intensive measurements of different objects at a distance to obtain a real physical measurement.”

I did as you suggested. I pulled out my Mesoscale Meteorology book by Markowski and Richardson and Dynamic Meteorology by Holton and Hakim. Both refer to average temperature and averages of various other intensive properties. It’s been awhile since I’ve opened these up. I hadn’t realized just how prolifically average temperatures are used in them until now. BTW…these are great references if you want to learn more about the kinematic and thermodynamic nature of the atmosphere.

My guess is that those books speak to averaging the temperature in a single object that is in equilibrium. E.g. in a wall between the inside and outside. They are finding the average value of the gradient between two points.

This was part of an entire piece of Essex. Averaging two different, independent objects to find a value for a third independent object. I.e. the average of the gradient in one of your house walls, the gradient in one of your neighbor’s house walls, and the surface temperature of the asphalt on your nearest street!

None of those temperatures can act at a distance to determine either of the other two. So the average of the three is meaningless.

Yet you, for some reason, want to keep claiming that such an average *is* meaningful while never actually trying to explain what it is useful for! You can’t explain it because the average you find doesn’t exist!

Or a triple-point bath inside a laboratory controlled to less than ±0.1°C.

Without these meaningless global air temperature averages, they are totally and completely bankrupt.

TG said: “My guess is that those books speak to averaging the temperature in a single object that is in equilibrium.”

They are in reference to volumes of the atmosphere. And I’m sure you already know that no volume of the atmosphere is ever in equilibrium which is why we have weather. And it’s not just temperature these books are referring to. Average vorticity, average density, average pressure, average divergence, and various other intensive properties are used throughout.

I’ll give you just one instance. Vorticity is a calculated value based on the mean angular displacement and center of mass. In other words it is *exactly* what you continue to do – conflate a calculated intensive value derived from extensive properties with a measured intensive property!

In none of the examples you give do you measure the intensive value and then average it. You measure extensive values and then calculate an intensive property. Density requires averaging mass and volume, two extensive values that can be measured! You don’t measure density and then average the values! What kind of probe would you use to measure density anyway!

You are just stomping on his belief that a GAT has some meaning. Temperature is not the appropriate measure to be using for determining the energy contained in the atmosphere. It only measures translational energy and totally ignores latent energy.

JG said: “Temperature is not the appropriate measure to be using for determining the energy contained in the atmosphere.”

Deflection and diversion. The question is whether averages of intensive properties, regardless of context, are meaningless and useless (up to and including the claim that they do not even exist), and whether trends based on those values have any meaning or usefulness. Nobody is challenging the idea that temperature only provides limited insights into the energy content of a body or even discussing the relation between temperature and heat/energy at all.

Do you think meteorology texts have good thought out math justifications for averaging temps at a distance? Do your books talk about averaging Sacramento and Rochester temps for a purpose?

I did reference physics and chemistry and thermodynamics on purpose. These subjects have detailed math justification for what is stated.

You are basically trying to justify averaging temps for something like an average temp at a location or small region. It is OK to average temps if all you are trying to find is average temperature. However, it is NOT OK to use that average temp as a proxy for the amount of heat (enthalpy) in two different locations or objects! If you are dealing with the sun’s ENERGY, you must use enthalpy to track the total energy in the system.

Have you had any calculus based science like physics, chemistry, or thermodynamics?

JG said: “Do you think meteorology texts have good thought out math justifications for averaging temps at a distance?”

Yes. They have good thought out math justification for averaging other intensive properties as well.

Show us the math. You can surely scan the pages that have the mathematical derivations of how temperature averages can determine the enthalpy in the atmosphere.

“The integration technique still uses average temperatures.”

You are as bad as bellman! Integration is not AVERAGING!

“In this simple example this is always the base temperature minus the average of the two recorded temperatures.”

This is *NOT* the integration method. It is the older method known as the Mean Temperature Method, not the Integration Method!

If you would read on down the page it shows using the integration method.

As usual with you and bellman, you are cherry picking something you think will prove a point you have made but you fail to understand the entire context of what you are cherry picking from!

“Nevermind that each temperature observation is itself an average over a 5 minute period.”

Not from my weather station. Nor does it need to be from any newer, digital weather station. You are still trying to use the Argument to Tradition fallacy.

from your link:

“Once each minute the ACU calculates the 5-minute average ambient temperature and dew point temperature from the 1-minute average observations (provided at least 4 valid 1-minute averages are available). These 5-minute averages are rounded to the nearest degree Fahrenheit, converted to the nearest 0.1 degree Celsius, and reported once each minute as the 5-minute average ambient and dew point temperatures” (bolding mine, tg)

Please note that the readings are rounded to the nearest Fahrenheit degree and then converted to the nearest 0.1C. That’s truly a violation of stating values! 1F is about 0.5C not 0.1C. This algorithm severely overstates the precision of the data!
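The reporting step quoted from the ASOS guide can be sketched in Python to show its effect on the reported values. This is a minimal illustration of the quoted text only: the function name `asos_five_minute_c` is hypothetical, missing readings are represented as `None` by assumption, and the quote does not say how exact halves are rounded, so Python's built-in `round` is used as a stand-in.

```python
def asos_five_minute_c(one_minute_f):
    """Reported 5-minute temperature in °C from 1-minute averages in °F.

    Returns None unless at least 4 valid 1-minute averages are available.
    """
    valid = [t for t in one_minute_f if t is not None]
    if len(valid) < 4:
        return None
    average_f = sum(valid) / len(valid)
    whole_f = round(average_f)                 # nearest whole °F
    return round((whole_f - 32) * 5 / 9, 1)    # then nearest 0.1 °C


print(asos_five_minute_c([71.2, 71.4, 71.9, 72.3, 72.1]))  # 22.2
```

Because the value passes through whole Fahrenheit degrees first, adjacent reported values are about 0.56 °C apart (72 °F gives 22.2 °C, 73 °F gives 22.8 °C), even though each is printed to one decimal place.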

“In other words, all methods use an average temperature in some way shape or form whether you understood that or not.”

I would also note what the uncertainty of the measurements is. For the range of -58F to +122F the uncertainty is +/- 1.8F (+/- 1.0C)

With that kind of an uncertainty any error from averaging will be totally masked by the measurement uncertainty itself.

The one-minute average is obtained from 10 sec measurements (6 per minute). Each measurement is from approximately the same measurand. This simply isn’t like trying to find an average between two different locations. While not perfect, this is about 30 measurements per 5 minute average. This is as close to 30 measurements of the same measurand as one can get.

Stop trying to cherry pick things without getting the full context. You only lower further your already low credibility!

TG said: “You are as bad as bellman! Integration is not AVERAGING!”

I didn’t say it was. I said their integration technique uses temperature averages. BTW…don’t conflate their integration technique with proper calculus integrals.

TG said: “This is *NOT* the integration method.”

That is what they call the “Integration Method”. It is documented under the heading “How do you calculate degree days using the Integration Method?” It definitely involves averaging temperatures. Note that it is different from what they call the Mean Temperature Method which also involves averaging temperatures.

TG said: “While not perfect, this is about 30 measurements per 5 minute average. This is as close to 30 measurements of the same measurand as one can get.”

It’s still an average.

“BTW…don’t conflate their integration technique with proper calculus integrals.”

Your knowledge of calculus is just as bad as bellman’s. You’ve never heard of numerical integration I guess. I’m not surprised!

“That is what they call the “Integration Method”. It is documented under the heading How do you calculate degree days using the Integration Method?”

You STILL haven’t bothered to read the link I gave you! The link has another link to a page that explains how they calculate their degree-day value. It is *NOT* an average!

From the link:

———————————————————–

Although the Integration Method is the most accurate degree-day-calculation method, and the one that we use, many other sources (especially non-specialist sources) calculate degree days using an approximation method based on daily summary data (some or all of daily average, maximum, and minimum temperatures).

There are three popular methods for approximating degree days from daily temperature data:

The first two are both known as the “Mean Temperature Method”. They approximate the degree days for each day using the average temperature for the day. For HDD they subtract the average temperature from the base temperature (taking zero if the average temperature is greater than the base temperature on that day). For CDD they subtract the base temperature from the average temperature (taking zero if the base temperature is greater than the average temperature).

This sounds like one method, but it’s actually two because there are two commonly-used ways to calculate the average temperature for each day, both of which give slightly different results:

The third is the Met Office Method – a set of equations that aim to approximate the Integration Method using daily max/min temperatures only. They work on the assumption that temperatures follow a partial sine-curve pattern between their daily maximum and minimum values.

Another approximation method deserves mentioning: The Enhanced Met Office Method. This is based on the original Met Office Method, but, whilst the original Met Office Method approximates the daily average temperature by taking the midpoint between the daily maximum and minimum temperatures, the Enhanced Met Office Method uses the real daily average temperature (calculated from temperature readings taken throughout the day). The daily maximum and minimum temperatures also play a role in approximating within-day temperature variations, as they do for the Met Office Method.

————————————————————
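The Mean Temperature Method arithmetic in the excerpt above can be written out as a short sketch. The function names are hypothetical, and how the daily average is obtained is deliberately left to the caller, because the excerpt as quoted here does not list the two averaging variants it mentions.

```python
def hdd_mean_method(daily_avg, base):
    """Heating degree days for one day: base minus average, floored at zero."""
    return max(base - daily_avg, 0.0)

def cdd_mean_method(daily_avg, base):
    """Cooling degree days for one day: average minus base, floored at zero."""
    return max(daily_avg - base, 0.0)

# Hypothetical day with an average of 10.0 degrees and a base of 15.5 degrees.
print(hdd_mean_method(10.0, 15.5))
print(cdd_mean_method(10.0, 15.5))
```

Both functions return a single number per day, so any within-day variation is invisible to this method, which is why the excerpt treats it as an approximation.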

“It’s still an average.”

OF THE SAME MEASURAND, or at least as close as you can get!

They just pile the lies higher and higher.

You’ll notice that the question about what calculus based physics, chemistry, and thermodynamic classes they have goes unanswered.

Oh yes, this is quite telling. Might as well be a physical geography background, which back in the day counted toward arts and humanities credits.

Just like how he avoids the issue of how a laboratory triple-point bath can be identical to averaging Timbuktu and Kalamazoo air temperatures.

TG said: “You STILL haven’t bothered to read the link I gave you!”

Yes I read it. That’s how I know their method uses an average temperature. They state it explicitly and even give examples. They not only use an average once or even twice but three separate times! First, they rely on the observations from the automated stations which are themselves averages. Second, they take those detailed observations and group them into “consecutive pairs” and take the average of all the pairs. Third, they multiply the difference of that average and the reference by the time representing the pairs which as Bellman astutely recognized is effectively averaging; just a slightly different order than the canonical formula.
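The three steps listed in this comment can be sketched as follows. This follows the description given here, not a verified copy of the Degree Days.net procedure; the function name, the hours-based time axis, and the choice to floor each pair's contribution at zero are all assumptions made for illustration.

```python
def heating_degree_days(times_h, temps, base):
    """Sum (base - pair average) * (pair duration in days) over consecutive pairs."""
    total = 0.0
    for i in range(len(temps) - 1):
        pair_avg = (temps[i] + temps[i + 1]) / 2     # average of a consecutive pair
        deficit = max(base - pair_avg, 0.0)          # degrees below the base (assumed floor)
        duration_days = (times_h[i + 1] - times_h[i]) / 24.0
        total += deficit * duration_days             # units: degree x day
    return total

# Hypothetical readings at 0 h, 12 h and 24 h, all 10.0 degrees, base 15.5.
print(heating_degree_days([0, 12, 24], [10.0, 10.0, 10.0], 15.5))
```

The result carries units of degree-days; dividing it by the length of the period in days gives a figure in degrees.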

TG said: “From the link:”

What follows here is the alternative methods to their “Integration Method”. You did not post their method.

The link to their method appears on the link I gave you. This is just proof that you did not *READ* the link. You just cherry picked something with a glance and, as usual, it was wrong.

“First, they rely on the observations from the automated stations which are themselves averages.”

Multiple observations of the SAME THING, or at least as close as you can get! I’ve given you the answer on this after you referred to NWS standards. Of course you blew that off!

“Second, they take those detailed observations and group them into “consecutive pairs” and take the average of all the pairs.”

They actually INTERPOLATE a middle value. And, as I pointed out in another message, when doing interpolation/averaging between points when doing numerical integration, you get the *MOST ACCURATE* calculation of the area under the curve. And I am positive you have no clue as to why that is!

“Third, they multiply the difference of that average and the reference by the time representing the pairs which as Bellman astutely recognized is effectively averaging; just a slightly different order than the canonical formula.”

It is *NOT* averaging! Neither you nor he understands calculus at all! Averaging gives you degrees/time, not degree-time! You can’t even do simple dimensional analysis that 8th graders can do! You split the time period into intervals, be they minute, hours, seconds, or whatever.

∫sin(x) dx

sin(x) in degrees multiplied by dx in hours, minutes, seconds, etc.

This way you wind up with degree-time units. If your period is 24 hours then your intervals could be in hours, or minutes, or seconds. NOT 1/24hours. Again, that would give you degree/hour!

You and bellman like to portray yourselves as very good mathematicians. You are nothing of the sort! Nor do either of you have any relationship to the real world! Neither of you understand that when calibrating a thermometer in a thermal bath that you don’t average the water temp across a number of thermal baths to determine the average temperature to be used as the calibration temperature of the thermal bath you are using in the calibration routine. You take multiple measurements of the SAME thermal bath and use the average of those!

“You are as bad as bellman! Integration is not AVERAGING!”

Have you still not understood that taking 24 temperature readings through the day, multiplying them each by 1/24 of a day, and adding them together is no different to adding the 24 values and then dividing them by 24?
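The arithmetic in this question can be checked with a small sketch. The 24 hourly readings are hypothetical (a made-up sinusoid around 10 degrees), and the function names are illustrative only. The sketch keeps track of units in the comments, since that is the point in dispute.

```python
import math

def weighted_sum_degree_days(readings):
    """Multiply each reading by 1/24 of a day and add: units are degree x day."""
    dt_days = 1.0 / len(readings)
    return sum(t * dt_days for t in readings)

def plain_mean(readings):
    """Add the readings and divide by the count: units are degrees."""
    return sum(readings) / len(readings)

# Hypothetical hourly readings for one day.
readings = [10 + 5 * math.sin(2 * math.pi * (h - 9) / 24) for h in range(24)]

degree_days = weighted_sum_degree_days(readings)
mean_temp = plain_mean(readings)
print(degree_days, mean_temp)
```

The two numbers agree to floating-point precision. The first is in degree-days over a one-day period and the second is in degrees; dividing the first by the period length of 1 day converts it to the second.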

“Have you still not understood that taking 24 temperature readings through the day, multiplying them each by 1/24 of a day, and adding them together is no different to adding the 24 values and then dividing them by 24?”

This is *NOT* how you do integration!

Sampling the temperatures is to create a temperature signal, just like sampling in a software defined radio (SDR) does for radio signals or a digital signal generator does for creating a sine wave.

That curve is then integrated. The intervals are *NOT* 1/24. Each interval is an interval of 1. You have 24 samples, that’s all. You are trying to build the averaging into the calculation. By doing so you wind up with a dimension of degree instead of degree-day or degree-hour (or whatever) for the final value! Learn some basic dimensional analysis for what you try to do. If the dimensions don’t match then you have done something wrong!

Interval 1 (of size 1/24) is a width of one. Interval 2 is a width of 1. Interval 3 is a width of one. Etc. The height of Interval 1 is sin(π/24). The height of Interval 2 is sin(2π/24). Etc.

That’s for numerical integration. If the temperature profile is at least quasi-sinusoidal then just do the standard integration:

2∫Asin(x)dx from 0 to π/2 => 2A where A is the peak value of the sine wave.

Please note carefully that is *NOT* the same as the sum of the values of the sine wave over an interval of pi.

2Σsin(x) from 0 to π/2 = 3.5. Divide that by the interval of π and you get 0.56, much different than the value of .637 you get when dividing the area under the curve by the π.

The dimensions don’t even match with the two. The sum gives you a dimension of “degree”. Divide that by radians and you get degree/rad. The integration gives you degree-rad and when you divide by radians you get a dimension of degree. Degree/rad is not the same thing as degree!
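For reference, the mean height of a half sine wave can be computed numerically. The comment does not say what sample spacing its sum uses, so the sketch below assumes n equal intervals of width π/n across 0 to π and uses the trapezoid rule; the function names are hypothetical.

```python
import math

def trapezoid_area_sin(n):
    """Trapezoid-rule area under sin(x) on [0, pi] using n equal intervals."""
    dx = math.pi / n
    heights = [math.sin(k * dx) for k in range(n + 1)]
    # Each strip: average of its two edge heights times its width dx.
    return sum((heights[k] + heights[k + 1]) / 2 * dx for k in range(n))

def mean_height(n):
    """Area divided by the interval length pi, giving the mean of sin on [0, pi]."""
    return trapezoid_area_sin(n) / math.pi

for n in (24, 1000):
    print(n, trapezoid_area_sin(n), mean_height(n))
```

With these assumptions the area approaches 2 and the mean approaches 2/π (about 0.637) as n grows, matching the analytic integral given above. Each term in the sum is a height times a width, so the sum carries the units of both axes until it is divided by the interval length.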

I keep telling you that you *need* to take a first year engineering calculus course. But for some reason you just want to continue down the path of trying to prove something that is wrong.

“This is *NOT* how you do integration!”

Correct. To do integration you have to take the sample width to the limit of zero. But it’s an approximation, and it is what your preferred source calls the “integration method”. I think they add a few bells and whistles which don’t make much difference.

“Learn some basic dimensional analysis for what you try to do. If the dimensions don’t match then you have done something wrong!”

And what happens to your dimensional analysis when you take your sum of degree days and divide by days?

“Interval 1 (of size 1/24) is a width of one.”

One what?

“The height of Interval 1 is sin(π/24). The height of Interval 2 is sin(2π/24). Etc.”

You need to take the proverbial remedial class in basic trigonometry.

“That’s for numerical integration. If the temperature profile is at least quasi-sinusoidal then just do the standard integration”

We keep going through this. You complain that multiple samples can never be exactly correct, but then are happy to approximate values based on just two readings and an assumption of a sine wave.

Which you then screw up because you still cannot see why daily temperatures are not defined just by the maximum temperature, and daily temperatures do not have a mid-point of zero.

“2Σsin(x) from 0 to π/2 = 3.5.”

What do you mean by sum here? What are you summing?

“The dimensions don’t even match with the two. The sum gives you a dimension of “degree”. Divide that by radians and you get degree/rad.”

If you are just adding temperatures then you are not going to divide by radians. You are just dividing by a dimensionless count. If you are doing an approximate integral you are not just adding degrees, you are adding degree * whatever the x axis is, in this case you are adding degree*radians. Then you divide by radians to get degrees.

“I keep telling you that you *need* to take a first year engineering calculus course. But for some reason you just want to continue down the path of trying to prove something that is wrong. ”

Sorry, but if your understanding is what comes from “engineering calculus” I think I’ll stick to my degree courses in analysis.

Just like bdgwx you have not *READ* the link I gave you. You glanced at it and left with no actual understanding of what they do.

Just like bdgwx, you have not heard of numerical integration either. Let alone doing piecewise numerical integration.

You get degrees. So what? Degrees is not what you are looking for!

One INTERVAL. Just what I said in my post!

We’ve been through this! You propagate the uncertainty through to the result. I tutored you earlier this year on how to calculate the uncertainty of a sine wave. The method is right there in Taylor’s book and examples. But you *STILL* have not actually studied Taylor or done the examples. You just keep on trying to cherry pick pieces without understanding what you are cherry picking.

Do we need to go over how to calculate the uncertainty associated with a sine wave again?

What link? What bit do you want me to read? Why do you think I don’t understand integration, yet you do?

The uncertainty of a sine wave depends on the assumption that what you are measuring is a sine wave.

“What link? What bit do you want me to read? Why do you think I don’t understand integration, yet you do?”

The link to degreeday.net. But you won’t go read it for meaning. I know you won’t.

“The uncertainty of a sine wave depends on the assumption that what you are measuring is a sine wave.”