By Dr Fabio Capezzuoli

Recent posts and discussions on WUWT regarding air temperature sampling frequencies and their influence on the daily average – propagating to monthly and yearly trends – demonstrated that the classic sampling method of Tmax and Tmin is not adequate to correctly represent daily averages (Tav); to produce a representative value at least 24 samples (hourly readings) should be used.

The discussions also produced the idea that it might be possible to construct a “Standard Day” temperature curve and use it to correct older data sampled by Tmax and Tmin only, in order to obtain a more representative value of Tav.

Because I’d like to contribute to knowledge, and also because I want to hone my Python coding skills, I decided to process some data and see whether it can help move towards the goal of a “Standard Day”.

The series I used for my study are hourly air temperature measurements:

PRM – Parma-Urban, PR, Italy, weather station (44.808 N, 10.330 E, Elev. 79 m), covering 2015-2016. Despite its latitude, Parma’s climate is classified as Humid Subtropical (Koppen: Cfa).

EVG – Everglades City 5 NE CRN Station – 92826 (25.90 N, 81.32 W, Elev. NA), Florida, USA, covering 2015-2016. The climate of Everglades City is classified as Tropical Savannah (Koppen: Aw).

Parma station location:

https://goo.gl/maps/8EESqED2Thq

Everglades City station location:

https://goo.gl/maps/TqtGhkTDtN62

Data were downloaded from the access systems provided by the stations’ managing organizations (ARPAE-ER, Italy and NOAA, US) and not preprocessed in any way except for removal of empty and invalid records.

I chose these stations as representative of the temperate latitudes situation (Parma), and of the tropical situation (Everglades City); my choice of tropical stations was limited, and in the end only the CRN produces data with the necessary frequency and quality.

Before diving head first into curve-fitting, I decided to do some grouping and visualization, so that I can get an idea of the parameters of a typical day. What I chose are:

– H(Tmin), H(Tmax): Hour of the day (local time) at which min and max temperatures are recorded.

– DTR: (Tmax – Tmin) – Diurnal Temperature Range

– Delta-H: (H(Tmax) – H(Tmin)) – Time interval (in hours) between Tmax and Tmin

Using a Python script, I calculated the parameters defined above for each day of the temperature series.
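The source code is only available on request, so what follows is a minimal sketch of how such a calculation could look, not the script actually used for this post. It assumes the hourly readings come as (timestamp, temperature) pairs in local time; the function name `daily_parameters`, the dictionary keys and the sample day are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime


def daily_parameters(records):
    """Group hourly (datetime, temperature) pairs by calendar day and
    return {date: {"h_tmin", "h_tmax", "dtr", "delta_h"}}."""
    by_day = defaultdict(list)
    for stamp, temp in records:
        by_day[stamp.date()].append((stamp.hour, temp))

    result = {}
    for day, readings in sorted(by_day.items()):
        # min/max return the first occurrence when values are tied
        h_tmin, tmin = min(readings, key=lambda r: r[1])
        h_tmax, tmax = max(readings, key=lambda r: r[1])
        result[day] = {
            "h_tmin": h_tmin,             # hour of the daily minimum
            "h_tmax": h_tmax,             # hour of the daily maximum
            "dtr": tmax - tmin,           # diurnal temperature range
            "delta_h": h_tmax - h_tmin,   # signed interval in hours
        }
    return result


# Made-up hourly temperatures for one day, hours 0..23.
sample = [8, 7, 6, 5, 5.5, 4, 6, 8, 10, 12, 14, 16,
          17, 18, 19, 20, 19, 17, 15, 13, 12, 11, 10, 9]
records = [(datetime(2015, 7, 1, h), t) for h, t in enumerate(sample)]
params = daily_parameters(records)
```

Note that Delta-H comes out negative in this sketch whenever the maximum is recorded earlier in the day than the minimum; how the original script treated such days is not stated.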

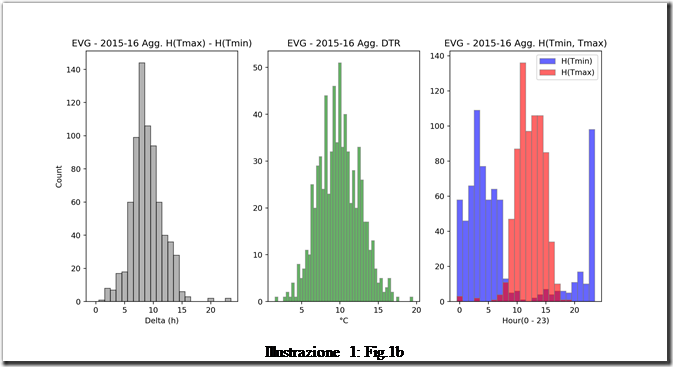

The aggregate parameters’ values over the whole time period (2015-2016) are shown in Fig.1a for Parma and Fig.1b for Everglades City.

For Parma (Fig.1a), the aggregate data show that – over the study period – Tmax and Tmin occur usually 9 – 11 hours apart; DTR has a very non-normal distribution spanning 0 – 15 °C but with higher count around 10 °C; finally Tmin is recorded most frequently around 07:00 and Tmax around 16:00 (all times are local).

For Everglades City (Fig.1b), Delta-H is most frequently 8 hours; the DTR distribution is more bell-shaped and centered around 10 °C, while Tmin is most frequently recorded around 23:00 and 03:00 and Tmax around 12:00.

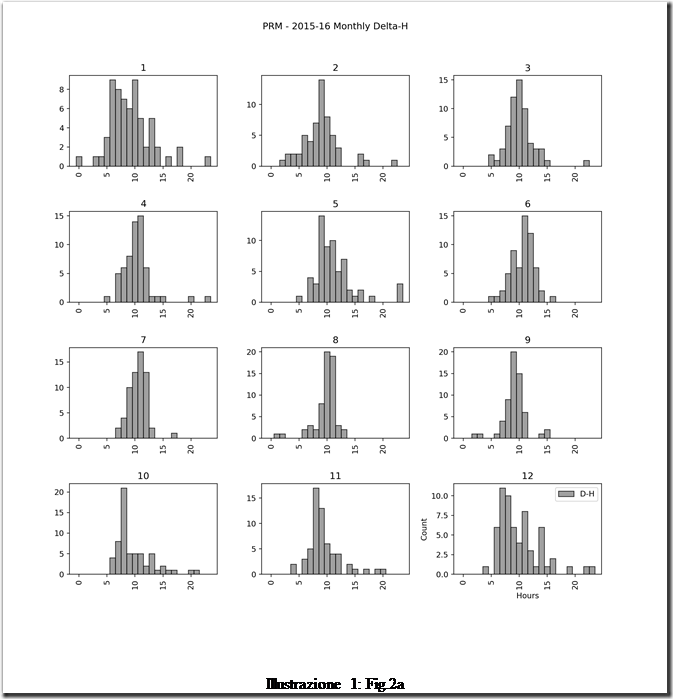

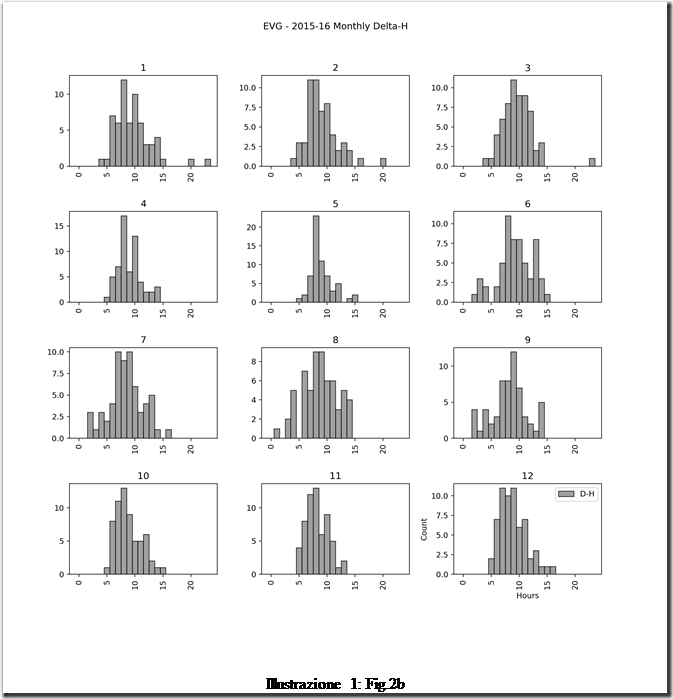

Then, I also plotted the same parameters month by month for the whole study period. The first quantity is Delta-H, for both locations (Fig.2).

The Delta-H distribution for Parma (Fig.2a) is narrower and shifted towards longer timespans in summer, and wider and shifted towards shorter timespans in winter: this is consistent with the varying length of day in the temperate region.

Delta-H distribution at Everglades City (Fig.2b) is rather uniform throughout the year.

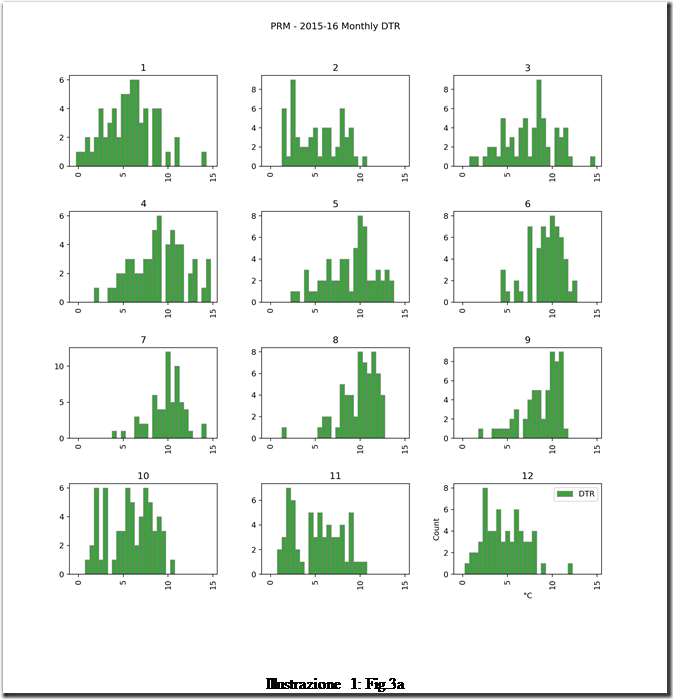

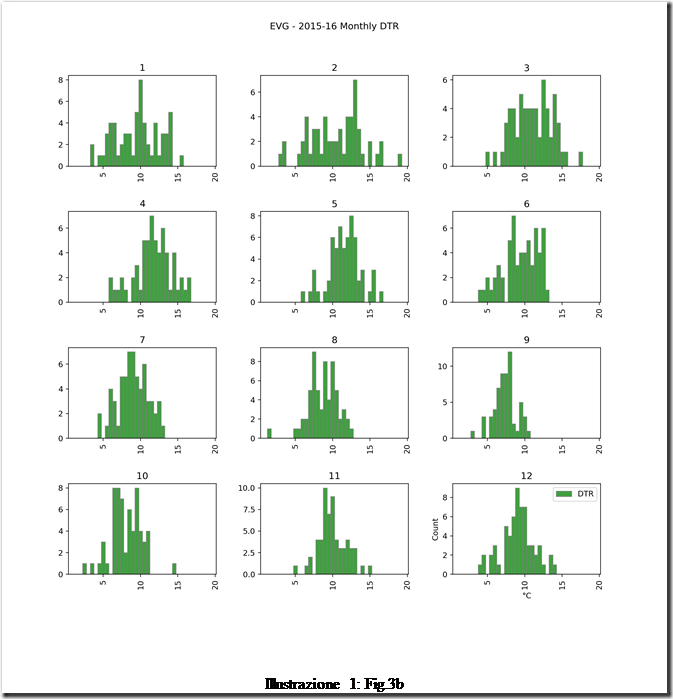

Then, DTR for both locations (Fig.3)

Parma shows DTR distributions (Fig.3a) that are wide all over the year, but with larger mean DTR in summer months than winter months.

For Everglades City, DTR distribution (Fig.3b) is somewhat narrower and shifted to smaller values in the summer months, becoming wider and shifting to larger values in winter.

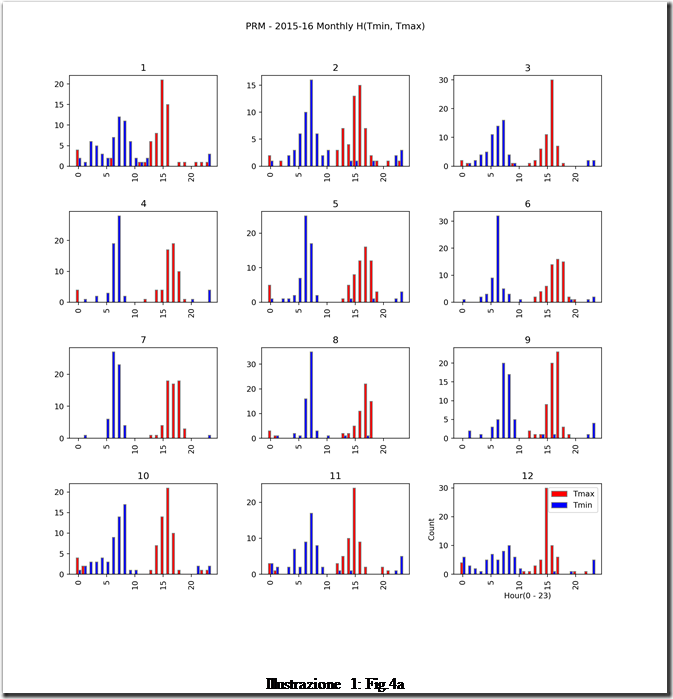

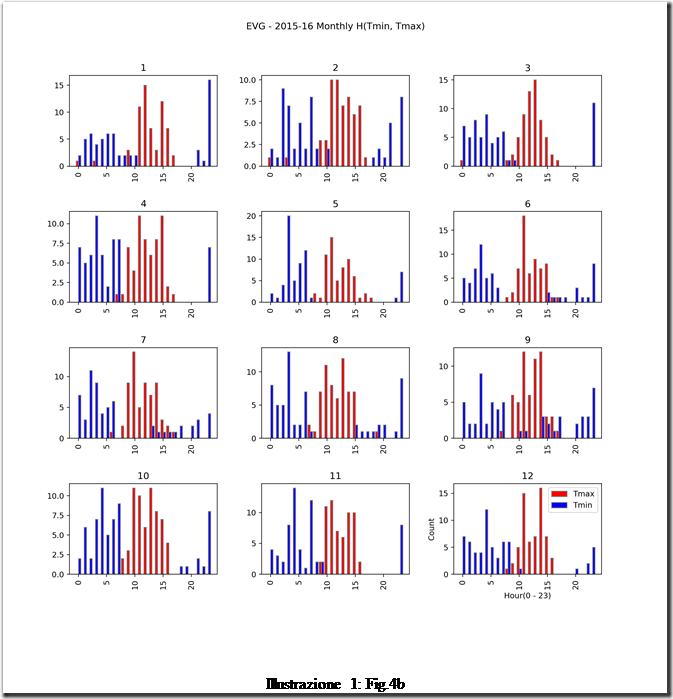

Fig.4 shows H(Tmin, Tmax).

In Parma (Fig.4a), Tmin is recorded most often between 05:00 and 10:00 while Tmax is recorded most often between 15:00 and 20:00, with a shift towards later hours in summer (this could be a consequence of the station being in an urban environment rich with heatsinks); the separation between Tmin and Tmax distributions is clear in summer but less so in winter.

In Everglades City (Fig.4b), Tmin occurs mostly at night (20:00 – 05:00) and Tmax around noontime (10:00 – 15:00); the distributions are wider and show an appreciable level of superimposition throughout the year.

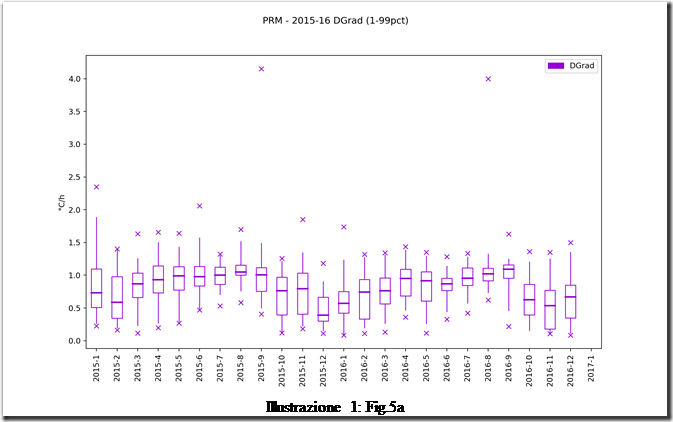

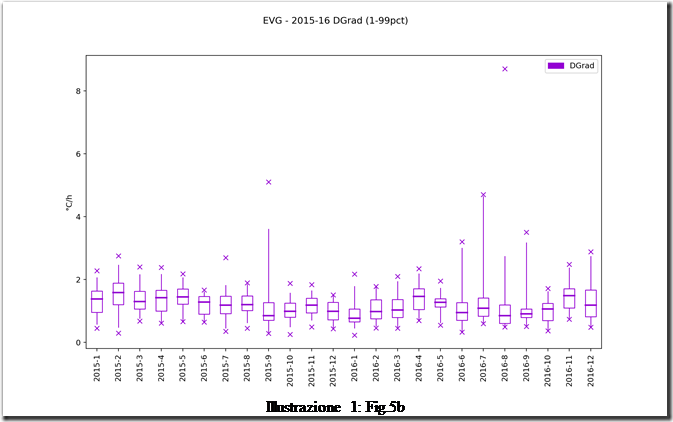

I computed also another parameter, which can be seen as a synthetic indicator for DTR and Delta-H together: the daily gradient, or Dgrad.

It is defined as (Tmax-Tmin)/Delta-H; it is by definition positive and gives a measure of how fast temperature changes over the course of one day. I also decided to visualize Dgrad to show its monthly variation over the whole study period, in Fig.5.
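As a rough illustration of the definition, a sketch might look like the following. The text does not say how days were handled when Tmax is recorded before Tmin, or when both fall in the same hour, so the use of the absolute interval and the `None` return value are assumptions of mine, and `daily_gradient` is a hypothetical name.

```python
def daily_gradient(tmax, tmin, h_tmax, h_tmin):
    """Return Dgrad in degrees per hour, or None when it is undefined.

    Assumption: the magnitude of the interval is used, so the result
    stays positive even when Tmax occurs earlier in the day than Tmin.
    """
    delta_h = abs(h_tmax - h_tmin)
    if delta_h == 0:
        return None  # Tmax and Tmin in the same hour: gradient undefined
    return (tmax - tmin) / delta_h


# Made-up example: a 10-degree range with Tmin at 07:00 and Tmax at 16:00
dgrad = daily_gradient(25.0, 15.0, 16, 7)
```

With these made-up numbers the gradient is 10 degrees over 9 hours, a little over 1.1 degrees per hour.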

Dgrad for both stations varies in a rather narrow interval, but while for Everglades City it appears to reach a maximum in spring months and a minimum in summer, Parma shows a slowly rising trend from the winter minimum to September, followed by an abrupt fall in October / November.

Both series are also highly noisy and present a number of outliers. Curiously, the highest outliers occur in Sept-15 and Aug-16 for both stations.

Conclusions.

I never imagined this study would bring dramatic revelations, and indeed it did not.

There still are, however, some conclusions that can be drawn even from a dataset limited in space and time:

– Insofar as defining a “standard day” is desirable, it should be limited to month-by-month and station-by-station. The variation of daily parameters in time and space is, at a first look, too large to warrant wider generalizations.

– The long-term trend of the daily parameters can be investigated in order to gain more information about variation of climate than just daily min, max and average temperatures.

– The long-term trend of the daily gradient can give information about whether climate is becoming more unstable and extreme phenomena are on the increase.

Afterword

Datasets and source code (Python 3) are available upon request.

Constructive criticism and observations are welcome. Please cite the words you are responding to, so as to avoid confusion and misunderstandings.

OT, someone will blame coal;

https://www.smh.com.au/environment/weather/sydney-skies-graced-with-sun-halo-20190216-p50yaw.html

Yes I read that. In Australia, any unusual “weather” event is blamed on climate change. In Australia, climate change is blamed on coal mining and burning.

Ice in the atmosphere??!

A statistical average from which to determine a mean and modal values and standard deviation has been accepted for over 100 years as a minimum of 32, though some argue it should be 50. 24 samples in a day will not achieve this.

Donald’s comment needs its own comment.

Averages can be calculated for two or more samples. There isn’t a “statistical average”. The comment about standard deviations refers to the minimum number of data points for a certain standard type of analysis and a certain way of calculating it, and it is 29 samples. There is a mathematical foundation for this.

As for the statistics of small data sets, there are multiple tools available. Some are useful for sets of five numbers, provided it is established by testing for the type of distribution of that data. Such tests cannot be performed on a set of three numbers so the results are ” there” for three but are not indicative of much. Wonky distribution of five samples renders the standard deviation values pretty meaningless. Good distribution, not.

As for the 24 samples, calculating the average is totally legitimate. The arithmetic mean is the arithmetic mean, nothing statistical about it. Reporting the Mode, also. Reporting the Standard Deviation of hourly temperature is meaningless because that reflects a misunderstanding of what a SD represents.

What is usually forgotten (omitted) is that these are measurements, and have an uncertainty which must be propagated and reported together with the average. More samples, greater uncertainty band.

Most relevant of course, is that temperature on its own is a substitute, a representation of the quantity in which we have the greatest interest: the total heat energy content. Temperature is a proxy for energy; without the density and humidity, it is helpful but not definitive.

The total heat energy content is called the enthalpy. This is distinct from total energy which considers the altitude above a surface to which it potentially, might fall. That portion is a red herring when it comes to surface temperature.

If the temperature plots showed the heat energy (a function of the air density, water vapour and temperature) the Delta-H would change. Instead of Delta T we would plot delta H (the symbol for enthalpy), for which delta T is a proxy. The “warming of the Earth” is shown by a change in enthalpy, not only a change in T.
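To make the point concrete, here is a rough sketch using the common psychrometric approximation for the specific enthalpy of moist air, h ≈ 1.006·T + w·(2501 + 1.86·T) kJ per kg of dry air, with T in °C and w the humidity ratio in kg of water vapour per kg of dry air. The coefficients are rounded textbook values, the function name is hypothetical, and the two air states are made up for illustration; none of this comes from the station data in the post.

```python
def moist_air_enthalpy(temp_c, humidity_ratio):
    """Approximate specific enthalpy of moist air in kJ per kg of dry air.

    temp_c: dry-bulb temperature in degrees Celsius
    humidity_ratio: kg of water vapour per kg of dry air
    """
    sensible = 1.006 * temp_c                            # dry-air term
    latent = humidity_ratio * (2501.0 + 1.86 * temp_c)   # water-vapour term
    return sensible + latent


# Two made-up air states: cooler and humid versus warmer and dry.
h_humid = moist_air_enthalpy(25.0, 0.010)
h_dry = moist_air_enthalpy(30.0, 0.005)
```

In this example the 30 °C air holds less heat per kilogram than the 25 °C air, because it carries half the water vapour. That is the sense in which temperature alone is only a proxy.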

The “highest” and “lowest ever” for some location and date is a game for children, because they don’t understand the science. The “Humidex” is a step in the right direction, as is “wind chill”, but they still fall short.

Interestingly, the oceans are often discussed as “warming” and in that case, the enthalpy is reported, with great care taken to consider the salinity and thermal mass of the water being temperature-sampled. But for air temperature, it is not.

Why not?

If it gives the wrong answer anyway, why fuss about a change in processing the wrong metric?

CiW –> “What is usually forgotten (omitted) is that these are measurements, and have an uncertainty which must be propagated and reported together with the average. More samples, greater uncertainty band. ”

Don’t forget that adding significant digits to averages should be a no-no but it seems de rigueur practice among climate scientists.

I am uncertain whether climate scientists do not understand the propagation of measurement error and significant figures, or are willfully ignoring error and significant figures.

I have seen numerous examples of people failing to understand that the nice steady digital reading that they see is derived electronically from a highly fluctuating analog signal. Likewise, the advent of digital calculators did not suddenly increase the number of significant figures in a result.

Worse than not understanding is the deliberate misuse of statistics.

I’ve seen some climate “scientists” declare that if they take 12 readings, of 12 different samples of air, using 12 different thermometers, they can take the average of these 12 readings and the average will have more significant digits than any of the original readings.

I sincerely believe that most ‘climate scientists’ took a course in statistics once — years ago — almost passed it, and then forgot everything about it except that it was named “statistics” and they didn’t care much for it because it required them to think! In addition, I don’t believe it ever occurred to any professional publication editor to run the ‘paper’ by a qualified statistician before publication. It never occurred to them that it was important!

Donald

I have variously heard Rules of Thumb for minimum numbers of samples from 20 to 30. Certainly, 12 (as in months) does not meet the criteria. Twenty-four is marginal even, with the relaxed criteria. I suspect that the reason the minimum varies is that it might be different for different data sets, and for different purposes.

25-30 samples is the level at which the central limit theorem is considered to ‘kick in’ for non-normal distributions, i.e. the sampling distribution of the mean becomes normal. The more normal the original distribution is, the fewer samples are required for the mean’s distribution to become normal. Normality is a prerequisite for many statistical operations, primarily for calculating the accuracy of the mean we’ve sampled. Of course, the larger the sample, the more accurate the mean will be.

In this case, there is some skew in the original distribution, but not a lot. 24 samples is more than enough to average. Also we’re mostly interested in the monthly means anyway, so there are 24×30 = 720 samples available for that mean calculation. That’s more than enough to get a mean within a fraction of a degree.

Statistical sampling to get a sinusoidal function to fit data points to works if there are no clouds, no fronts, no rain, and no adiabatic winds. Otherwise the function goes to a state called kaka. Essentially, all sharp spike transitions in air temperature caused by pesky things like fronts and adiabatic winds and squalls will not fit such a function and means that all aberration from modeled data will not exist. The timeframe of sampling is roughly related to the scale of the atmospheric phenomena you want to study, so 24 daily samples means you want to study solar cycles and that is it.

Thanks Donald. Good points on which we can elaborate.

Back on William Ward’s “A Condensed . . . .“ thread on this site (January 1, 2019), Willis, with his typical masterful crushing of available data, showed that while very “noisy” (basically what you look at as weather), typical days’ temperature-histories were day-length and sinusoidal-like (1/15/2019 at 9:40 PM) with a STRONG fundamental and roughly two weaker harmonics (12 hours and 8 hours), as seen at 1/16/2019 at 11:02 PM. So why not just use this mathematical shape as a model to fit to data? That is, neglecting harmonics:

T(t) = Ao + A Sin(2*pi*f*t + phase)

This has four unknowns (Ao, A, f, and phase). We just have to find four actual data points (4 equations in 4 unknowns) and solve! Oooops! This gets hard if they are not linear equations. Even worse, we have NO data! We need T(t1), T(t2), T(t3), and T(t4). We have only two NUMBERS: Tmax(t=?) and Tmin(t=?). Since the corresponding times are not recorded, these are of little use.

So punt! First recognize that f = 1/day (1/(24 hours)) so there are really 3 unknowns, not 4. It’s a Fourier SERIES. Secondly we can guess possible trial times for Tmax and Tmin. Suppose we try Tmin(t=7AM) and Tmax(t=4PM). We are still one data point short and have the non-linear equations in our way. Computers to the rescue.

The questions are: (1) can we search values for Ao, A, and phase and look exhaustively (enough) so that the resulting T(t) goes through Tmin(t=7AM) and Tmax(t=4PM)? And (2) will this found curve (an offset sinusoid) have its max/min values at the input times guessed?

Please see the figure here:

http://electronotes.netfirms.com/StandDay.jpg

Here we chose Tmin(7) = 50 (50 degrees F at 7 AM) and Tmax(16) = 80 (80 degrees F at 4 PM). We chose a reasonable mean (DC value) of 65. We then searched a grid of phase from -pi to +pi, and of A from 0 to 60, in small increments, looking for the smallest total error on BOTH points (red dots) simultaneously. This works, the blue sinusoid goes through both red points. A different choice of Ao gives a different sinusoid, although it too goes through both red dots. No choice of Ao puts both red dots at the max/min values of the blue sinusoid. It (of course) can’t happen UNLESS the chosen times are exactly 12 hours apart. [Be kind enough not to ask if I recognized this before or after the computer showed me it WAS true!]
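A grid search of this kind can be sketched as below. This is a reconstruction from the description in the paragraph above, not the code behind the linked figure: the grid resolutions, the squared-error measure and the names `fit_two_points` and `peak_hour` are all assumptions.

```python
import math

OMEGA = 2 * math.pi / 24.0  # f = 1/day, with t in hours


def fit_two_points(t1, y1, t2, y2, a0, a_max=60.0, n_amp=601, n_phase=721):
    """Search amplitude A in [0, a_max] and phase in [-pi, pi] for the
    offset sinusoid a0 + A*sin(OMEGA*t + phase) with the smallest total
    squared error at the two given points. Returns (error, A, phase)."""
    best = (float("inf"), 0.0, 0.0)
    for i in range(n_phase):
        phase = -math.pi + 2 * math.pi * i / (n_phase - 1)
        s1 = math.sin(OMEGA * t1 + phase)
        s2 = math.sin(OMEGA * t2 + phase)
        for j in range(n_amp):
            amp = a_max * j / (n_amp - 1)
            err = (a0 + amp * s1 - y1) ** 2 + (a0 + amp * s2 - y2) ** 2
            if err < best[0]:
                best = (err, amp, phase)
    return best


def peak_hour(phase):
    """Hour of day at which the fitted sinusoid reaches its maximum."""
    return ((math.pi / 2 - phase) / OMEGA) % 24.0


# The values quoted above: 50 F at 07:00, 80 F at 16:00, mean of 65 F.
err, amp, phase = fit_two_points(7, 50.0, 16, 80.0, a0=65.0)
print(f"A = {amp:.1f}, phase = {phase:.3f} rad, squared error = {err:.4f}")
print(f"curve maximum at {peak_hour(phase):.2f} h")
```

With these inputs the search settles on a curve that passes very close to both points but peaks near 17:30 rather than at 16:00. A pure 24-hour sinusoid always has its maximum and minimum exactly 12 hours apart, so two extremes only 9 hours apart cannot both sit on its turning points.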

There are other approaches to fitting the data here (non-uniform sampling, FFT interpolation, Prony’s method), but it is always a good idea to let the “toy” examples teach you something first.

Bernie

sorry check the literature. this has been done before.

the problem is you guys are trying to solve a problem that doesnt exist.

1. we have tmax and tmin. those are perfectly adequate diagnostic variables to test models.

we can combine them into tavg. again, adequate to evaluate models.

2 we are also interested in the change of temperature over time. for this we want to know that delta tavg is an unbiased estimator of delta tmean. it is.

bottom line. we care about two things. testing models and estimating the change in average temperature.

tavg, (tmin + tmax)/2, is adequate for both of those. It’s not tmean. never will be, and it does not matter.

1. there was an LIA tavg is greater now than then.

2. co2 is a ghg, regardless of your choice of diagnostic temperature metric.

3. more co2 means a warmer world, whether you use tavg or tmean.

4. how much warmer is a function of ECS, again use the metric for temperature of your choice.

using tmean or tavg changes nothing material in the fundamental questions.

your time is better spent fighting the policies of AOC, and pushing for more nuclear. the public wont get or wont care about polishing the temperature bowling ball. focus on the fight that matters.

or lose the war.

Well, Mr Mosher, I don’t know what problem that doesn’t exist I am trying to solve, because I didn’t present any solution to any problem. I’d be happy tho to see some literature reference to see what has been done before along these lines.

Do we know much more about a non-issue now?

Dr. Capezzuoli

Your analysis is quite interesting from the standpoint of statistics, but as regards climate please consider comments by Crispin in Waterloo and mosher, that global warming is really about enthalpy, and that any statistic is as good as any other for assessing climate models.

It is elementary thermodynamics that temperature is not an intensive property, so the sum of multiple temperatures may not be a temperature but a statistic. Sort of like dividing by zero, sometimes it works, sometimes it doesn’t.

Using temperature extremes has the merit that at least at these points the thermal and radiative fields are as close to equilibrium as they can be, and equilibrium is another required but often overlooked attribute before measuring temperature.

Please note that almost half of all heat arriving at the surface gets converted to water vapor, the heat of which is not made available until the water condenses. Typically this will be far away and much higher than the 2 meter height of the climate network thermometers. Small changes in the phasing of morning or afternoon clouds can change local temperatures by multiple degrees as the conversion of heat to water vapor is influenced. Some of this heat of condensation will be observed at ground level, but much of it will only get to space from cloud radiation.

https://aea26.wordpress.com/2017/02/23/atmospheric-energy/

4Kx3 ==> Neither side of this issue is really adding anything to our knowledge.

Mosher seems not to care what the temperature was anywhere at any time — he just needs the “trend” to appear to be getting warmer — even though he knows full well (see his comment above) that these surface temperature records with their tiny changes over time do not inform us as to how much “extra” energy is being retained in the Earth system.

So far, climate science tells us, with its CO2 hypothesis, that the system should be gaining energy — which, if true, and it does appear to be true, is a value neutral concept. It is neither a very bad thing or a very good thing — until we really know what that “extra” energy is going to do.

The models do not and cannot inform us about that in the medium and far distant climate future because we do not yet have a handle on the climate sensitivity issue.

Those fussing about on the temperature record (Nyquist, temperature profile, etc) are basically interested in the fact that the old (Tmin+Tmax)/2 “daily average” doesn’t represent the energy-as-temperature properly or precisely. In my opinion, it is a fool’s errand — the surface temperature record will never tell us that.

After all the Mannian-tomfoolery, adjusting and kriging and infilling and averaging averages of averages, all we can know from it is Mosher’s famous saying;

“The global temperature exists. It has a precise physical meaning. It’s this meaning that allows us to say…The LIA was cooler than today”.

But nothing more precise and almost nothing about the CO2 Climate Hypothesis.

4kx3: “It is elementary thermodynamics that temperature is not an intensive property…” Huh? Of course temperature is an intensive property! It is not summed if various sub-pieces of a constant temperature system are aggregated (total enthalpy, however is)…

Joe Campbell

You are of course correct. Thank you. Please delete the “not”. But it is still true that in general temperature cannot be averaged.

4kx3: I agree 100% that “in general temperature cannot [maybe “should not” is better] be averaged” except as a statistical device (where maybe the term “mean” should be better used)…

the public wont get or wont care about polishing the temperature bowling ball.

Public doesn’t care much about trends bowling ball precisely because it doesn’t trust its value. Here we’re exploring a little bit deeper why this general feeling is justified.

1. Yes it is warmer now than during LIA, thank goodness.

2. Yes but an inconsequential ghg compared to good old H2O.

3. Possibly, but not proven.

4. OK, so CO2 increased from 290 to 400ppm while Temperatures have increased 0.6C (ie total less the increase caused by adjustments). Say 50% due to CO2, so 0.3C over a period of 150 years.

Not enough to even give a second thought to.

100%

Years ago I was taught that, for a continuous function, Tav was not equal to (Tmax+Tmin)/2 but could readily be assessed, using some long-forgotten math, from the area below the line Tmax.

Five or six years ago, Mr. Mosher pointed out to me that we still used (Tmax+T min)/2 for comparative purposes because, in the old days, there was no way of continuously recording temperature. He even provided a link to a record of a single site which showed that (Tmax+T min)/2 did indeed provide a very close approximation to Tmean.

Now that we seem to have switched to discussing Tav as opposed to Tmean, would it not be a good idea to measure the area under the curve?
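For readers who want to see what “area under the curve” means in practice, here is a small sketch that integrates a sampled temperature record with the trapezoid rule and divides by the elapsed time. The function names and the made-up record (a flat 10-degree day with a single one-hour spike to 20) are illustrative assumptions only.

```python
def trapezoid_mean(times_h, temps):
    """Time-weighted mean temperature: area under the sampled curve
    (trapezoid rule) divided by the length of the record in hours."""
    area = 0.0
    for k in range(len(times_h) - 1):
        width = times_h[k + 1] - times_h[k]
        area += 0.5 * (temps[k] + temps[k + 1]) * width
    return area / (times_h[-1] - times_h[0])


def midrange(temps):
    """The classic (Tmax + Tmin) / 2 value."""
    return (max(temps) + min(temps)) / 2.0


# Made-up record: hourly readings from 00:00 to 24:00, flat at 10 degrees
# except for one reading of 20 degrees at 14:00.
times = list(range(25))
temps = [10.0] * 25
temps[14] = 20.0

area_mean = trapezoid_mean(times, temps)
mid = midrange(temps)
```

For this record the area-based mean is about 10.4 degrees while (Tmax+Tmin)/2 gives 15, which shows how far the two can diverge when the extreme is short-lived.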

There is no area under any curve when all you have is a single TMax reading from a Mercury filled Thermometer for the day.

“There is no area under any curve when all you have is a single TMax reading from a Mercury filled Thermometer for the day.”

But Tmax at any instant is the temperature at that moment in time. Hence Tmax =Tmin = Tt. In other words measure T continuously as they have been doing in some labs for years.

One of the things WUWT does best is educate us all. Anything that helps us upgrade our math, data analysis, and science skills is good. The other thing I push as much as possible is a knowledge of history.

By and large, the alarmist community is a bit less knowledgeable than the skeptic community at all levels. They are prone to say things that don’t pass the sniff test. Dr. Mann’s attempt to erase the MWP, in the face of overwhelming historical and scientific evidence that it happened and was global, comes to mind. By educating ourselves, we’re in much better shape to call BS when the alarmists say stupid things.

I agree, CB. Reading the posts and their comment section here at WUWT is like having a polymath as your sensei. Regardless of the subject of the post there are always experts in practically every even remotely associated field that quickly join the discussion. These are usually people who have worked or taught in the area for many years and have experience with and detailed knowledge of all the pitfalls common to most of the uninitiated.

I would add Steve and Nick into that group as well. Just because we don’t always agree with them doesn’t mean they aren’t extremely knowledgeable in their fields, and bring a lot to the conversation.

+1 on that :<)

What’s really revealing (sometimes) in these comments is the motives behind their position on this very complicated topic of catastrophic anthropogenic global warming (CAGW). The comment that Steve Mosher made, “your time is better spent fighting the policies of AOC, and pushing for more nuclear. the public wont get or wont care about polishing the temperature bowling ball. focus on the fight that matters.” is a good example. Steven Mosher’s motive for supporting CAGW, that is “more nuclear power”, is a good one but is not working as intended and instead has run amuck with windmills and solar panels. If we continue with this CAGW insanity we won’t need to worry about our future generations’ energy supply because they will be fighting each other with sticks and rocks.

Bob, I am a student of history. I was surprised growing up how many people hated history and never wanted to even discuss history. For them it was ‘that was the past, dead and gone, why should they care.’

I once believed that the axiom, “Those that do not learn from history are doomed to repeat it.” was just said by Santayana but I have learned since it was said many times in many ways throughout history. Our problem is many, especially today, refuse to accept the truth of that statement. Not only do they not wish to learn from history they prefer to not just ignore it but to reject history totally.

We are today faced with the best example in my lifetime of the failure to learn from history. After a century of failed attempts to create socialist paradises, all of which led to catastrophe and extreme violence, we have a large segment of the population that believes socialism is the answer to all the world’s problems. They are still convinced it would work fine if just “the right people” were in charge. Saul Alinsky preached that belief.

Which brings me to Albert Einstein’s definition of insanity.

But this time it’s different.

Mr. Mosher, I’d like to see the literature references to compare my work with what has been done before.

Then there is the political part, which is important if not dominant, that’s for sure. But it is not something that I want to discuss, at least not here, not now.

And what is the ECS, calculated from first principles? Oh, you don’t know, and no one else does either?

All reported ECS assumes:

All warming since 1850, or 1880, or some date is due to CO2, a very un-scientific pronouncement

And, there are almost always Feedbacks included, almost always positive, an even more un-scientific proposition.

100+

SM –> “2 we are also interested in the change of temperature over time. for this we want to know that delta tavg is an unbiased estimator of delta tmean. it is.”

Please explain how the averages being used end up with more precision than the original temperature recordings used to calculate them.

If you use standard scientific procedure in maintaining significant digits of measurements can you even detect most of the change in temperature being quoted as fact?

steven mosher – February 16, 2019 at 3:02 am

“YUP”, …… and a colder world means less CO2.

And as you well know, it is the “temperature” of the ocean surface water, ….. of the near-surface air …… and/or the near surface land …… that are the “drivers” of atmospheric CO2 quantities.

Anyway, to wit:

Quoting Dr Fabio Capezzuoli

A study in search of a daily average temperature.

It is MLO that any “search” or ”study” that was initiated for the purpose of determining a daily average near-surface temperature is little more than an act of futility simply because said “average” has no scientific or commercial “weather forecasting” value for more than 4 to 5 days, ……. other than being historical reference data, ….. but does have a smidgen of cultural value as appeasement of the curiosity of the public wanting to know a “comparison” of yesterday’s high/low average temperature ……. to the high/low average daily temperature of previous years.

Mosh – model testing?

So when we test the models on the temperature metric they fail. They are terrible at predicting the temperature of future atmospheres.

Your two intentions for temperature fail on some fundamental grounds. First, temperature is a proxy for heat gain from GHGs or direct radiation and convective heat transfer and has no direct meaning of its own. Second, unless accompanied by an assumed constant water vapour level, a higher temperature doesn’t reflect global warming. No one it seems expects the water vapour to remain constant, agreed? Third, the way the ECS is calculated by the IPCC is fundamentally flawed and not derived from first principles. Fourth, the convective heat transfer from the surface to the atmosphere with and without GHGs is omitted from the ECS calculation, and which if included, shows that the GHGs influence is not just non-logarithmic, it is positive or negative depending on the concentration! Good grief!

I am beginning to conclude no climate energy methods will get through review by a third year Mech Eng student. Do you think FLIR in Santa Barbara could sell a single product if they relied on mathematics as flawed as “climate math”? One is repeatedly struck by the technical incompetence in the Cli Sci community. Why should we believe any of it?

Tmax, Tmin and Tavg is only good for teaching arithmetic to grade fives.

“your time is better spent fighting the policies of AOC, and pushing for more nuclear. the public wont get or wont care about polishing the temperature bowling ball. focus on the fight that matters.”

I agree with you. There is so much lunacy written out in AOC’s GND that it is an enormous house of cards. Our time would be well spent in developing the counter arguments.

Mosher with his English lit degree fails at physics again. Climate change is about global energy balance; it doesn’t care about tmax and tmin or an average of the two, because that does not tell you a damn thing about the energy in the system. If Mosher understood basic physics he would know we can have a tmax for 10 min while for the rest of the day it hovers near tmin. The average temperature is nothing like the energy balance of the rest of the day. So currently his pretty average temperature graphs getting warmer could just be because short periods of the day are getting warmer, or a hundred other reasons.

What you have is a superficial trend match. As most of the politics is done, what needs to happen with the field is for the science to be done properly and some of these controls studied and put in place.

Could you please supply the source of your statement “… delta tavg is an unbiased estimator of delta tmean.” for us?

“…sorry check the literature. this has been done before…”

That didn’t stop you when it came to working on BEST.

Test/evaluate them for what, pray?

There’s plenty of things we know they are useless for. Something they are useful for would be more, well, useful.

Standard average daily temperature can be determined by the +/- increment in the amount of energy stored in a standard mass during preceding 24 hours, represented by a single reading of its temperature at internationally agreed time interval relative to the sun’s elevation above the horizon.

(i.e. Stevenson’s box with a sealed mass content container with a single liquid or solid state phase in range -100 to +100 Centigrade )

Would that not be the same as RMS, like you calculate for AC current or voltage, as this calculates the equivalent effect of the wave? Just asking.

Yes, it is energy content available that matters, not an instant value however accurately measured.

Yes, the problem is that Mosher doesn’t get that the way they are doing it is prone to spike errors, and it is far from convincing that it represents the average energy of the system.

Is that some kind of standard instrument? It sounds like some kind of calorimeter but when I google for stevenson screen calorimeter, nothing turns up.

Darn. It should be:

… when I google for stevenson screen calorimeter, nothing turns up.

… sort of.

Of course it’s not in google, it’s my recommendation to dummies who think that the (max-min)/2 has any physical meaning.

… this morning I had 10 quid in my wallet (daily max), now I have none (daily min).

Today’s monetary content of my wallet was £5 ?!

typo (max+min)/2

I think I heard this joke first on WUWT.

From my file of tag lines and smart remarks:

If you want to find out how hot it got, measure how hot it got.

In other words the TMAX and TMIN mean something. Why that’s an important concept can be explained:

First we find the average station temperature for the day. Then we find the average station temperature for the year. Then we take all the annual station averages from the equator to the poles and compute the annual global temperature. Finally all the annual temperatures are plotted out to see if there’s a trend or not, and if there is, it is declared an artifact of human activity and a problem. The solution to the “problem” requires that we all eat tofu, ride the bus and then on our 65th birthday in order to save the planet, we show up at the local Gaia Center to be euthanized.

TMAX and TMIN don’t give you the average temperature for the day. All they give you is the average between TMAX and TMIN.

Then why not do two calculations for the day, on TMAX and TMIN? That would show if the highs are getting higher or if the lows are getting lower, or if they’re meeting in the twain. At any rate, we would have two annual trends to examine.

Then why not do two calculations for the day, on TMAX and TMIN?

Doesn’t yield the desired answer.

Not as easy to manipulate, fudge, dry lab, gun deck, etc.

As a complete layman, I nevertheless believe that the reliability of temperature averages, as well as the question of their meaningfulness, are important questions. When graphs are given to support rising or falling temperatures with no error bars, any uncertainty or error is excluded. Simple answers are great, but I wonder if the answers in the climate discussion are not often simplistic and hence misleading.

… and perhaps adjusted to fit agendas.

Why compute tavg? The closer you stay to actual measurements the better your analysis will be.

https://tambonthongchai.com/2018/04/05/agw-trends-in-daily-station-data/

https://tambonthongchai.com/2019/02/12/australia-climate-change-daily-station-data/

“These results are anomalous. No theoretical framework exists for anthropogenic global warming acting through the greenhouse effect of atmospheric CO2 by way of fossil fuel emissions to cause warming in nighttime temperatures without affecting the maximum daytime temperature”

In the daytime, turbulence governs, and at night, when the air is still, humidity and CO2 govern.

Also water vapor is low when temperature is low, so CO2 can have a greater effect. But the urban heat island effect also causes more warming when temperature is low, so the greater rate of warming of T-min as compared to T-max could be due to, or partly due to, the urban heat island effect. In the U.S. T-min is warming twice as fast as T-max.

They are trying to proxy global energy, so they basically take a wild guess that average temperature is a good proxy. The problem is that there are lots of site situations where it won’t be; anywhere on the coast that gets an afternoon sea breeze is a classic example. The standard operation of climate science is to blend and average everything on a wing and a prayer that it all smooths out.

Climate Science has too many other field refugees with little real physics background and until that changes the field will continue to struggle because most in the field can’t even understand the issues they have in their data.

It might be of interest that the Swedish Meteorological Service (SMHI) uses a kind of “standard day” concept to calculate average temperatures. It is based on the temperature at 0700, 1300, 1900 plus max and min temperatures:

Tav = (a*T07 + b*T13 + c*T19 + d*Tmax + e*Tmin) / 100

Where “a” through “e” are a set of parameters which vary depending on month and longitude (since local solar time and Swedish standard time differ a bit).

It is known as the “Ekholm-Modén formula” and has been used since 1914.

The parameter values are here:

https://www.smhi.se/kunskapsbanken/meteorologi/koefficienterna-i-ekholm-modens-formel-1.18371

However, this formula is only used “in-country”, which means that SMHI’s average temperatures won’t be quite the same as e.g. GHCN “raw” averages for the same Swedish stations.
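For anyone who wants to play with the idea, here is a minimal Python sketch of a weighted daily mean of the form given above. The function name and the coefficient values are made up for illustration; they are not SMHI’s published values, which vary by month and longitude and should be taken from the SMHI table linked above. The only property assumed is that the five weights sum to 100.

```python
def ekholm_moden_mean(t07, t13, t19, tmax, tmin, coeffs):
    """Weighted daily mean: (a*T07 + b*T13 + c*T19 + d*Tmax + e*Tmin) / 100."""
    a, b, c, d, e = coeffs
    return (a * t07 + b * t13 + c * t19 + d * tmax + e * tmin) / 100.0


# Hypothetical coefficients for illustration only (NOT SMHI's published values).
# They are chosen to sum to 100, so a constant temperature is returned unchanged.
EXAMPLE_COEFFS = (30, 15, 35, 10, 10)

# Made-up readings in degrees C for a single day.
tav = ekholm_moden_mean(t07=2.0, t13=8.0, t19=5.0, tmax=9.0, tmin=1.0,
                        coeffs=EXAMPLE_COEFFS)
midrange = (9.0 + 1.0) / 2

print(f"weighted mean: {tav:.2f}  (Tmax+Tmin)/2: {midrange:.2f}")
```

With real coefficients one would look up the row for the month and the station’s longitude before calling the function.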

I loathe the “Drought Monitor” reporting and maps. I don’t believe their “snapshot” daily data analysis presents an accurate representation of drought. The Drought Monitor is a politically motivated organization.

It’s the same thing with the CA Department of Water Resources DWR. Their “snapshot” snow level measurements are ridiculous. For example …

https://abc30.com/weather/snow-survey-shows-water-content-is-below-average/5010528/

Does the CA DWR … not … know that EACH winter season in CA is different? Each year deposits rain and water in different patterns and time? ALL that matters is the season end TOTALS of precipitation, reservoir storage levels, and the amount of water flowing in the streams and rivers. This constant navel-examination of meaningless DAILY snapshots … or worse … the random, meaningless, “snow surveys” which NEVER appear to take place AFTER significant storms. The vast number of DWR snow surveys show “below normal” snowpack … burning into the public’s brains that we are in a constant state of “drought” … despite the FINAL year end summary which indicates the total year was NORMAL. I NEVER hear the DWR give a widely publicized year end TOTAL or SUMMARY of CA precipitation … *crickets*. Because NORMAL wet season totals … don’t fit the Narrative.

https://fox5sandiego.com/2019/02/15/atmospheric-rivers-are-pulling-california-out-of-drought-and-piling-on-the-snow/

“Atmospheric River” … “Polar Vortex” … all “scary-sounding” (intended to be presented as “natural disaster”) terms to describe EXTREME weather. It’s nothing of the sort. It’s all NORMAL.

They are make-work/job creation exercises. Either work has to be found for under-employed staff on the payroll or have fewer staff (and that is a bureaucrat’s worst nightmare).

“your time is better spent fighting the policies of AOC”

Probably true. If the voters can ignore the historical evidence condemning communal theory and still install a person like AOC into the halls of power, what chance do we have trying to explain to the voter some of the deficiencies and magical thinking behind climate science?

At the moment, there is no DST correction. There is the Dgrad metric, which is independent of DST, though.

Thank you, often wondered about it.

I would like to see the results of looking at all of the monitoring stations on a particular day and finding the median of the Tmins and the median of the Tmaxes. I am curious how that fluctuates. Is it going up, going down or staying steady. I think that the median temperature of the earth would be better than trying to calculate an average temperature. Since the North and South Hemispheres heat and cool opposite to each other it would be nice to know how they are balancing.
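A rough sketch of that calculation in Python, assuming each record is a (date, station, Tmin, Tmax) tuple. The records and the function name below are invented for illustration; real station files would need parsing and quality checks first.

```python
from statistics import median

# Hypothetical records: (date, station_id, tmin, tmax) in degrees C.
records = [
    ("2016-01-01", "A", -2.0, 5.0),
    ("2016-01-01", "B", 10.0, 21.0),
    ("2016-01-01", "C", 3.0, 12.0),
    ("2016-01-02", "A", -1.0, 6.0),
    ("2016-01-02", "B", 11.0, 20.0),
    ("2016-01-02", "C", 2.0, 14.0),
]


def daily_medians(rows):
    """Return {date: (median of Tmin, median of Tmax)} across all stations."""
    by_day = {}
    for date, _station, tmin, tmax in rows:
        mins, maxes = by_day.setdefault(date, ([], []))
        mins.append(tmin)
        maxes.append(tmax)
    return {d: (median(mins), median(maxes))
            for d, (mins, maxes) in sorted(by_day.items())}


result = daily_medians(records)
for date, (med_min, med_max) in result.items():
    print(date, med_min, med_max)
```

Plotting the two medians against date would then show whether either one drifts over time.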

The overall average tells you nothing. The overall average can go up from the maximum values in the data set going up. The overall average can go up from the minimum values in the data set going up. The overall average can go up from the mid-range values in the data set going up. Or the overall average can go up from a combination of all three values in the data set going up. Therefore the overall average tells you exactly zero.

So your idea of finding the median of the minimums and the median of the maximums would actually tell you something useful. Since many AGW predictions are based on the expectation of maximum temperatures going up, eg. crop failures, droughts, hurricanes/tornado intensity, etc; it would be interesting to see if the median of the maximum temperatures is actually going up. Since a widespread global sampling of cooling-days shows a downward trend over the past three years it would be a nice data point to have for a comparison.

If climate monitoring is important, why are not many more automated monitoring sites being deployed worldwide? The opposite is happening.

For the cost of some (worthless) climate model runs we could blanket the planet with decent automated data acquisition.

An automated system could easily integrate temperatures over time continuously (24/7/365) which would be far more informative than tMin/tMax (a mediocre proxy for heat content).
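To illustrate the difference, here is a small Python sketch that integrates temperature over time with the trapezoidal rule and compares the result with (Tmax+Tmin)/2. The sample day is made up: it sits at 5 C all day except for a brief spike to 15 C, and the function name is only for this example.

```python
def time_weighted_mean(times_h, temps):
    """Trapezoidal integral of temperature over time, divided by elapsed time."""
    area = 0.0
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        area += 0.5 * (temps[i] + temps[i - 1]) * dt
    return area / (times_h[-1] - times_h[0])


# Made-up day: flat at 5 C with a short spike to 15 C around 14:00.
times_h = [0, 13, 14, 15, 24]      # hours since midnight (uneven spacing is fine)
temps = [5.0, 5.0, 15.0, 5.0, 5.0]  # degrees C

integrated = time_weighted_mean(times_h, temps)
midrange = (max(temps) + min(temps)) / 2

print(f"time-integrated mean: {integrated:.2f} C")
print(f"(Tmax+Tmin)/2:        {midrange:.2f} C")
```

On this invented day the midrange lands halfway up the spike, while the integrated mean stays close to the temperature that held for most of the 24 hours.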

Continuous relative humidity and barometric pressures would provide for tracking actual heat content which is what we really want to know.

Elementary things that good science requires aren’t being done or even considered.

Better data isn’t being sought because the data doesn’t help the AGW cause. Besides, data massaging is more fun !!

Nice exercise

But I would wish the same job done on say 1,000 stations over a period of 50 years up to 2018, all having of course TMIN, TAVG and TMAX info for the period.

Please generate two monthly time series out of absolute temperatures, one averaging TMAX and TMIN, the other one TAVG, and compare their differences.

Then generate two monthly time series as above, but out of departures from the mean of 1981-2010, where the departures are computed station by station (by eliminating stations lacking sufficient data in the period), and again compare their differences.
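The two steps could be sketched roughly as follows in Python, for a single station. Everything here is an assumption for illustration: the record layout, the function names and the four made-up daily rows. A real run would loop over stations, drop those lacking sufficient data in 1981-2010, and then average the station anomalies.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical daily records for one station: (year, month, tmin, tmax, tavg).
daily = [
    (1981, 1, 0.0, 8.0, 3.0),
    (1981, 1, 2.0, 10.0, 5.0),
    (2018, 1, 1.0, 11.0, 5.0),
    (2018, 1, 3.0, 13.0, 7.0),
]


def monthly_series(rows):
    """Return {(year, month): (mean of (Tmax+Tmin)/2, mean of TAVG)}."""
    mid, avg = defaultdict(list), defaultdict(list)
    for year, month, tmin, tmax, tavg in rows:
        mid[(year, month)].append((tmax + tmin) / 2)
        avg[(year, month)].append(tavg)
    return {key: (mean(mid[key]), mean(avg[key])) for key in sorted(mid)}


def anomalies(series, base_years):
    """Subtract each calendar month's baseline mean, separately for each method."""
    base = defaultdict(list)
    for (year, month), values in series.items():
        if year in base_years:
            base[month].append(values)
    out = {}
    for (year, month), (m, a) in series.items():
        if month not in base:
            continue  # no baseline data for this calendar month
        base_mid = mean(v[0] for v in base[month])
        base_avg = mean(v[1] for v in base[month])
        out[(year, month)] = (m - base_mid, a - base_avg)
    return out


absolute = monthly_series(daily)
departures = anomalies(absolute, base_years=range(1981, 2011))

for key in absolute:
    print(key, "absolute:", absolute[key], "departure:", departures[key])
```

The toy rows are constructed so that the two methods differ by a constant offset, which is why the offset cancels in the departures; whether real stations behave that way is exactly what the proposed comparison would test.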

That would be in my opinion a really interesting job.

Late, but I’m back. I’ve been enjoying some real cold in Hokkaido.

Bindidon:

“But I would wish the same job done on say 1,000 stations over a period of 50 years up to 2018, all having of course TMIN, TAVG and TMAX info for the period.

Please generate two monthly time series out of absolute temperatures, one averaging TMAX and TMIN, the other one TAVG, and compare their differences.”

Hourly data are not available 50 years back, so the exercise you propose cannot be done, at least not for that timespan. I have in the works a similar analysis over a timespan of about 10 years, comparing rural to urban stations. Be patient and stay tuned.