Richard Betts heads the Climate Impacts area of the UK Met Office. The first bullet point on his webpage under areas of expertise describes his work as a climate modeler. He was one of the lead authors of the IPCC’s 5th Assessment Report (WG2). On a recent thread at Andrew Montford’s BishopHill blog, Dr. Betts left a remarkable comment that downplayed the importance of climate models.

Dr. Betts originally left the Aug 22, 2014 at 5:38 PM comment on the It’s the Atlantic wot dunnit thread. Andrew found the comment so noteworthy he wrote a post about it. See the BishopHill post GCMs and public policy. In response to Andrew’s statement, “Once again this brings us back to the thorny question of whether a GCM is a suitable tool to inform public policy,” Richard Betts wrote:

Bish, as always I am slightly bemused over why you think GCMs are so central to climate policy.

Everyone* agrees that the greenhouse effect is real, and that CO2 is a greenhouse gas. Everyone* agrees that CO2 rise is anthropogenic. Everyone** agrees that we can’t predict the long-term response of the climate to ongoing CO2 rise with great accuracy. It could be large, it could be small. We don’t know. The old-style energy balance models got us this far. We can’t be certain of large changes in future, but can’t rule them out either. So climate mitigation policy is a political judgement based on what policymakers think carries the greater risk in the future – decarbonising or not decarbonising.

A primary aim of developing GCMs these days is to improve forecasts of regional climate on nearer-term timescales (seasons, year and a couple of decades) in order to inform contingency planning and adaptation (and also simply to increase understanding of the climate system by seeing how well forecasts based on current understanding stack up against observations, and then further refining the models). Clearly, contingency planning and adaptation need to be done in the face of large uncertainty.

*OK so not quite everyone, but everyone who has thought about it to any reasonable extent

**Apart from a few who think that observations of a decade or three of small forcing can be extrapolated to indicate the response to long-term larger forcing with confidence

As noted earlier, it appears extremely odd that a climate modeler is downplaying the role of—the need for—his products.

“…WE CAN’T PREDICT LONG-TERM RESPONSE OF THE CLIMATE TO ONGOING CO2 RISE WITH GREAT ACCURACY”

Unfortunately, policy decisions by politicians around the globe have been and are being based on the predictions of assumed future catastrophes generated within the number-crunched worlds of climate models. Without those climate models, there are no foundations for policy decisions.

“…CLIMATE MITIGATION POLICY IS A POLITICAL JUDGEMENT BASED ON WHAT POLICYMAKERS THINK CARRIES THE GREATER RISK IN THE FUTURE – DECARBONISING OR NOT DECARBONISING”

But policymakers—and more importantly the public who elect the policymakers—have not been truly made aware that there is great uncertainty in the computer-created assumptions of future risk. Remarkably, we now find a lead author of the IPCC stating (my boldface):

… we can’t predict the long-term response of the climate to ongoing CO2 rise with great accuracy. It could be large, it could be small. We don’t know.

I don’t recall seeing the simple statement “We don’t know” anywhere in any IPCC report. Should “we don’t know” become the new theme of climate science, their mantra?

“THE OLD-STYLE ENERGY BALANCE MODELS GOT US THIS FAR”

Yet the latest and greatest climate models used by the IPCC for their 5th Assessment Report show no skill at being able to simulate past climate…even during the recent warming period since the mid-1970s. So the policymakers—and, more importantly, the public—have been misled or misinformed about the capabilities of climate models.

For much of the year 2013, we presented those model failings in dozens of blog posts, including as examples:

- Will their Failure to Properly Simulate Multidecadal Variations In Surface Temperatures Be the Downfall of the IPCC?

- Models Fail: Land versus Sea Surface Warming Rates

- Polar Amplification: Observations versus IPCC Climate Models

- Model-Data Comparison: Hemispheric Sea Ice Area

- Model-Data Precipitation Comparison: CMIP5 (IPCC AR5) Model Simulations versus Satellite-Era Observations

- Model-Data Comparison with Trend Maps: CMIP5 (IPCC AR5) Models vs New GISS Land-Ocean Temperature Index

In other words, the climate models presented in the IPCC’s 5th Assessment Report cannot simulate what many persons would consider the basics: surface temperatures, sea ice area and precipitation.

Shameless Plug: These and other model failings were presented in my ebook Climate Models Fail.

“APART FROM A FEW WHO THINK THAT OBSERVATIONS OF A DECADE OR THREE OF SMALL FORCING CAN BE EXTRAPOLATED TO INDICATE THE RESPONSE TO LONG-TERM LARGER FORCING WITH CONFIDENCE”

A few? In effect, that’s all the climate models used by the IPCC do with respect to surface temperatures. Figure 1 shows the annual GISS Land-Ocean Temperature Index data and linear trend (warming rate), for the Northern Hemisphere, from 1975 to 2000, a period to which climate models are tuned. The linear trend of the data has also been extrapolated until 2100. Also shown in the graph is the multi-model ensemble member mean (the average of all of the individual climate model runs) of the simulations of Northern Hemisphere surface temperature anomalies for the climate models stored in the CMIP5 archive. The CMIP5 archive was used by the IPCC for their 5th Assessment Report.

Figure 1

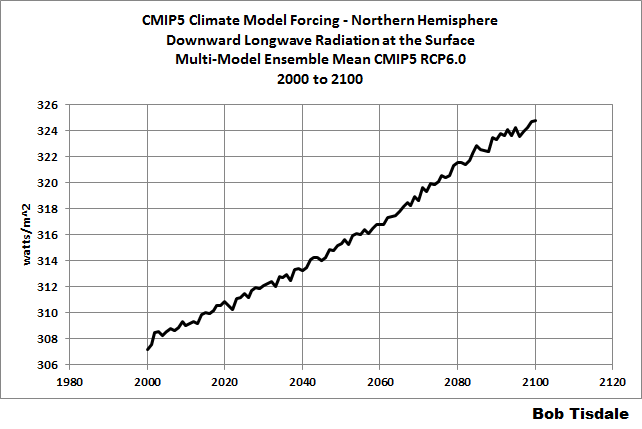
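For readers who want to reproduce the kind of trend line described for Figure 1, here is a minimal sketch of an ordinary least-squares trend fitted to 1975-2000 annual anomalies and extended to 2100. It is not the code behind the figure. The function names `linear_trend` and `extrapolate` are hypothetical, and the anomaly values are synthetic placeholders on an assumed 0.02 deg C/year line, not GISS LOTI data.

```python
from typing import Sequence, Tuple


def linear_trend(years: Sequence[float], values: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit; returns (slope per year, intercept)."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def extrapolate(slope: float, intercept: float, year: float) -> float:
    """Value of the fitted straight line at an arbitrary year."""
    return intercept + slope * year


# Synthetic placeholder series (an assumption, NOT observed data):
# a straight line rising 0.02 deg C per year from 1975 through 2000.
years = list(range(1975, 2001))
anomalies = [0.02 * (y - 1975) for y in years]

slope, intercept = linear_trend(years, anomalies)
projected_2100 = extrapolate(slope, intercept, 2100)
print(f"trend: {slope * 10:.2f} deg C/decade, extended to 2100: {projected_2100:.2f} deg C")
```

To use real data, replace `years` and `anomalies` with the annual Northern Hemisphere values downloaded from the KNMI Climate Explorer.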

The model simulations of 21st Century surface temperature anomalies and their trends have been broken down into thirds to show that there was little increase in the expected warming rate through two-thirds of the 21st Century with the constantly increasing forcings. In other words, the models simply follow the extrapolated data trend through about 2066, in response to the increased forcings. See Figure 2 for the forcings.

Figure 2
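Breaking a simulated 21st-century series into thirds and comparing the trends can be sketched as follows. This is an illustration under stated assumptions, not the method used for the figures: the period boundaries (2001-2033, 2034-2066, 2067-2100) are my reading of "thirds", the helper names are hypothetical, and the series is synthetic, built so the slope changes after 2066.

```python
from typing import Dict, List, Sequence, Tuple


def slope_per_year(years: Sequence[float], values: Sequence[float]) -> float:
    """Ordinary least-squares slope of values against years."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, values))
    return sxy / sxx


def trends_by_period(
    years: Sequence[int],
    values: Sequence[float],
    periods: List[Tuple[int, int]],
) -> Dict[Tuple[int, int], float]:
    """Fit a separate trend over each inclusive (start, end) period."""
    result = {}
    for start, end in periods:
        pairs = [(y, v) for y, v in zip(years, values) if start <= y <= end]
        result[(start, end)] = slope_per_year(
            [y for y, _ in pairs], [v for _, v in pairs]
        )
    return result


# Assumed split of the 21st century into thirds.
PERIODS = [(2001, 2033), (2034, 2066), (2067, 2100)]

# Synthetic placeholder series (NOT model output): 0.03 deg C/yr through
# 2066, then 0.05 deg C/yr afterwards.
years = list(range(2001, 2101))
values = [
    0.03 * (y - 2001) if y <= 2066 else 0.03 * (2066 - 2001) + 0.05 * (y - 2066)
    for y in years
]

trends = trends_by_period(years, values, PERIODS)
for (start, end), slope in trends.items():
    print(f"{start}-{end}: {slope * 10:.2f} deg C/decade")
```

With real multi-model mean output in place of the synthetic series, similar slopes in the first two periods would correspond to the behavior described above.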

So, Dr. Betts’s “a few” appears to, in reality, be the consensus of the climate science community…the central tendency of mainstream thinking about climate dynamics…the groupthink.

And the problem with the groupthink was that the climate science community tuned their models to a naturally occurring upswing in surface temperatures. See Figure 3.

Figure 3

Should the modelers have anticipated another cycle or two when making their pre-programmed prognostications of the future? Of course they should have. The models are out of phase with reality.

But why didn’t they tune their models to the long-term trend? If they had tuned their models to the long-term trend, there’s nothing alarming about a 0.07 deg C warming rate in Northern Hemisphere surface temperatures. Nothing alarming at all.

A NOTE

You may be wondering why I focused on Northern Hemisphere surface temperatures. It’s well known that climate models can’t simulate the warming that took place in the Southern Hemisphere during the recent warming period. See Figure 4. The models almost double the warming that took place there since 1975.

Figure 4

CLOSING

Dr. Betts noted:

A primary aim of developing GCMs these days is to improve forecasts of regional climate on nearer-term timescales (seasons, year and a couple of decades) in order to inform contingency planning and adaptation (and also simply to increase understanding of the climate system by seeing how well forecasts based on current understanding stack up against observations, and then further refining the models).

In order for the climate science community to create forecasts of regional climate on decadal timescales, the models will first have to be able to simulate coupled ocean-atmosphere processes. Unfortunately, with their politically driven focus on CO2, they are no closer now to being able to simulate those processes than they were two decades ago.

SOURCE

The GISS LOTI data and the climate model outputs are available through the KNMI Climate Explorer.

Really? Somebody really ought to let the alarmists know that, I think they missed the memo.

The hypocrisy in the assertion about GCMs is that if they were working, the climate crisis community would be promoting their accuracy on a continuous loop.

Looks like blatantly disingenuous blameshifting on Betts’ part.

It’s his job to just churn out numbers that his boss wants. He’s just following orders, he’s not responsible for what those numbers are used for. It’s crappy data, but it’s none of his business what happens after he prepares his reports.

AnonyMoose

August 26, 2014 at 9:53 am It’s crappy data, but it’s none of his business what happens after he prepares his reports.

“Once the rockets are up

who cares where they come down

that’s not my department,”

says Wernher von Braun.

“We don’t know what we’re doing... but give us a couple of trillion dollars and let us wreck the world economies anyway!!”

“**Apart from a few who think that observations of a decade or three of small forcing can be extrapolated to indicate the response to long-term larger forcing with confidence”

Wait. Isn’t that exactly what is done by those hundreds of peer-reviewed papers that predict extinctions, sea ice free North pole, plagues, droughts, flood, famines, super storms, etc., etc.? Don’t all the peer reviewers agree? So they are all wrong?

About time a modeler admitted it. I look forward to a wave of retracted papers.

I met Dr Betts at a recent climate conference at Exeter University. It included many of the great and the good of the climate world, including Thomas Stocker.

I was impressed by Dr Betts himself and his openness and his uncertainty of the past climate as well as the future climate.

I wrote at the time that he was more sceptical than I had expected and I would class him much more as a lukewarmer than an alarmist. Of the two or three scientists I know at the Met Office there are none I would class as an out-and-out alarmist, although the head of the Met Office and Julia Slingo I would put in a different and more politically motivated category.

Tonyb

Despite advertising himself foremost as a modeler, Betts was a lead author on AR5 WG2, not 1. Maybe he did not read WG1 chapter 9, wherein is said that “there continues to be very high confidence that models reproduce observed large scale mean surface temperature patterns.”

Except they didn’t, as the ‘pause’ continues to illustrate.

The bigger stunner is the admission that most of this is politics rather than ‘science’.

Dr. Betts must be one of the 3% of scientists that don’t agree with the consensus – I wondered where they were hiding.

The SS CAGW is sinking. Betts is getting cold, wet feet and looking for a reputational lifeboat. This post clearly shows he has no intention of participating in any more IPCC activities.

The socio-political drive, coupled with a PC interpretation of the Uncertainty Principle, is beautifully expressed in Betts’ comments. There is nothing per se wrong with what he says or supports – as long as you are as upfront with your social engineering purpose as he was. The problem is that the regulating class and the eco-green are not upfront with what they push. They want us to do what we would not want to do if we understood what was going on.

But ever since the WMD “cause” of the Iraq-Coalition War, I get the story: our Governors decide, and the rest of us better get on board or be marginalized.

America was created by those who wished to live their lives and make their decisions as made sense. Not just to them, but on a basic level. We have abandoned the founding concepts of not simply doing as we are told, and why? Because when power and money speak, the interests of those others are more important than the interests of those of us who simply work and support families.

On the basis of many arguments on Bishop Hill, Richard knows full well that not everybody agrees that the Greenhouse effect is real, the operative word being ‘IS’.

Some of us believe that the effect is already played out and Richard knows that.

Technically this rationalization could qualify for a place on the list of ‘where is the missing heat?’. Dr. Betts’ excuse is basically that the models don’t count anyhow.

“Bish, as always I am slightly bemused over why you think GCMs are so central to climate policy.”

If the GCMs are not to be taken seriously, what’s left of AR5? It had no new physical evidence of any significance. And the first two sentences of his second paragraph are pure drivel. The earth is NOT a greenhouse, and CO2 is a trace gas essentially at saturation effect.

“The old-style energy balance models got us this far.”

These would be the ones with all of Trenberth’s “missing heat”, that somehow in defiance of thermodynamics transfers itself to the bottom of the oceans without being detected on the way?

Dr. Betts frequently appears on Bishop Hill and is mostly welcomed there for his willingness to engage in a civilized manner. He’s really in a class by himself and shouldn’t be compared to run-of-the-mill AGW advocates. I think he may be closer to Judith Curry in outlook than anyone else who quickly comes to mind, though he operates under a different set of constraints.

“Everyone [who has thought about it to any reasonable extent] agrees that CO2 rise is anthropogenic.”

Define reasonable. I’ve thought about it considerably and don’t agree that all or even most CO2 rise is necessarily anthropogenic. The oceans’ ability to hold CO2 drops with increasing temperature, and those temperatures have risen since the end of the Little Ice Age. Furthermore, I believe volcanic CO2 emissions have been underestimated by at least one order of magnitude, possibly two.

No.

If you cannot be certain of large changes in the future, nor rule them out, if as you say, “It could be large, it could be small. We don’t know”, then you are in the state known as “ignorance” about climate. So, climate mitigation policy is a political judgement based on what policy makers want, irrespective of anything to do with climate whatsoever. Climate mitigation policy is just a surrogate, an excuse, a propaganda tool, for advancing the pre-existing left wing political agenda.

It has always been thus.

Sorry, far too late. They spent years telling us that GCMs are unquestionable gods who can never be wrong and who have amazing powers of prediction. And anyone who said otherwise has been attacked and smeared.

Now we are just supposed to accept that GCMs do not matter. Well, given that is virtually all they’ve got, what are their actual claims based on then?

“Once the rockets are up, who cares where they come down? That’s not my department,” says Wernher von Braun.

h/t Tom Lehrer

I did not see that you had posted that when I posted essentially the same comment somewhere above. Great minds think alike?

Martin

I hear tell that the 40-year trend in Antarctic sea ice increase is not very important too. I know this because a person who firmly believes in AGW told me so. So there!

This really ticks me off.

The missing part.

OK so Betts says,

“Everyone agrees that the greenhouse effect is real,

that CO2 is a greenhouse gas.

that CO2 rise is anthropogenic

that we can’t predict the long-term response of the climate to ongoing CO2 rise

We don’t know.”

He left out the most important part. Which leads me to believe they do not want to talk about it.

What matters and what’s missing in Betts’ little sermon is the proportion or percentage of the greenhouse effect (and climate) that can be attributed to human influence.

Betts must have some idea. Does he agree with this?

http://www.geocraft.com/WVFossils/greenhouse_data.html

“Water vapor, responsible for 95% of Earth’s greenhouse effect, is 99.999% natural (some argue, 100%). Even if we wanted to we can do nothing to change this.

Anthropogenic (man-made) CO2 contributions cause only about 0.117% of Earth’s greenhouse effect, (factoring in water vapor). This is insignificant!

Adding up all anthropogenic greenhouse sources, the total human contribution to the greenhouse effect is around 0.28% (factoring in water vapor).”

Is that why Betts makes no mention of what proportion he attributes to anthropogenic influence?

Does everyone now (quietly) agree that the human contribution to the greenhouse effect is really around .28%?

Or is the Team conveniently uninterested?

There must be some reason Betts et al. never talk about the human percentage and proportionate role in the greenhouse effect. What is the answer?

It seems to me that the ONLY aim of the current/developing GCMs these days is to gin up hypothetical climate scenarios to justify funding busy work for the massive government planning sector that involves a greater arena of academia, NGOs, private consulting firms, every eco missions and alternative energy/tax schemes for all.

IPCC AR5 TS.6 is loaded down with “We don’t knows.”

As predicted, this will be a slow retreat. The retreat is definitely occurring now but it will take perhaps another 2 years for its complete demise. Sorry guys, it will just fade away. There will be no great stories such as “DR Mxxx has been arrested in his office caught with his hands in da cookie jar”. LOL

You don’t need lots of computer models to extrapolate the data (but it helps to convince the politicians if you have used high tech computer models).

In Australia, after the BOM fiasco, the people responsible for the fraud in BOM will just be moved sideways or retired.

Rud Istvan August 26, 2014 at 10:05 am

The bigger stunner is the admission that most of this is politics rather than ‘science’

====================================================================

Not even politics. CAGW and the politics thereof are simply one of – perhaps the major one – a number of battlegrounds within a larger war of culture and ideology. Free thinking versus group think might be ONE way to characterise this war.

If you have time, this article, and (102 page, but worth it) PDF are worth reading with regard to what I write above – they look at CAGW as a meme.

http://wearenarrative.wordpress.com/2013/10/27/the-cagw-memeplex-a-cultural-creature/

http://wearenarrative.files.wordpress.com/2013/10/cagw-memeplex-us-rev11.pdf