UPDATE: for those visiting here via links, see my recent letter regarding Dr. Richard Muller and BEST.

I have some quiet time this Sunday morning in my hotel room after a hectic week on the road, so it seems like a good time and place to bring up statistician William Briggs’ recent essay and to add some thoughts of my own. Briggs has taken a look at what he thinks will be going on with the Berkeley Earth Surface Temperature project (BEST). He points out the work of David Brillinger, whom I met with for about an hour during my visit. Briggs isn’t far off.

Brillinger, another affable Canadian from Toronto, with an office covered in posters to remind him of his roots, has not even a hint of the arrogance and advance certainty that we’ve seen from people like Dr. Kevin Trenberth. He’s much more like Steve McIntyre in his demeanor and approach. In fact, the entire team seems dedicated to providing an open source, fully transparent, and replicable method no matter whether their new metric shows a trend of warming, cooling, or no trend at all, which is how it should be. I’ve seen some of the methodology, and I’m pleased to say that their design handles many of the issues skeptics have raised and has done so in ways that are unique to the problem.

Mind you, these scientists at LBNL (Lawrence Berkeley National Labs) are used to working with huge particle accelerator datasets to find minute signals in the midst of seas of noise. Another person on the team, Dr. Robert Jacobsen, is an expert in analysis of large data sets. His expertise in managing reams of noisy data is being applied to the problem of the very noisy and very sporadic station data. The approaches that I’ve seen during my visit give me far more confidence than the “homogenization solves all” claims from NOAA and NASA GISS, and more confidence that the BEST result will be closer to the ground truth than anything we’ve seen.

But as the famous saying goes, “there’s more than one way to skin a cat”. Different methods yield different results. In science, sometimes methods are tried, published, and then discarded when superior methods become known and accepted. I think, based on what I’ve seen, that BEST has a superior method. Of course that is just my opinion, with all of its baggage; it remains to be seen how the rest of the scientific community will react when they publish.

In the meantime, never mind the yipping from climate chihuahuas like Joe Romm over at Climate Progress, who are trying to destroy the credibility of the project before it even produces a result (hmmm, where have we seen that before?). It is simply the modus operandi of the fearful, who don’t want anything to compete with the “certainty” of climate change they have been pushing courtesy of NOAA and GISS results.

One thing Romm won’t tell you, but I will, is that one of the team members is a serious AGW proponent, one who wields some very great influence because his work has been seen by millions. Yet many people don’t know of him, so I’ll introduce him by his work.

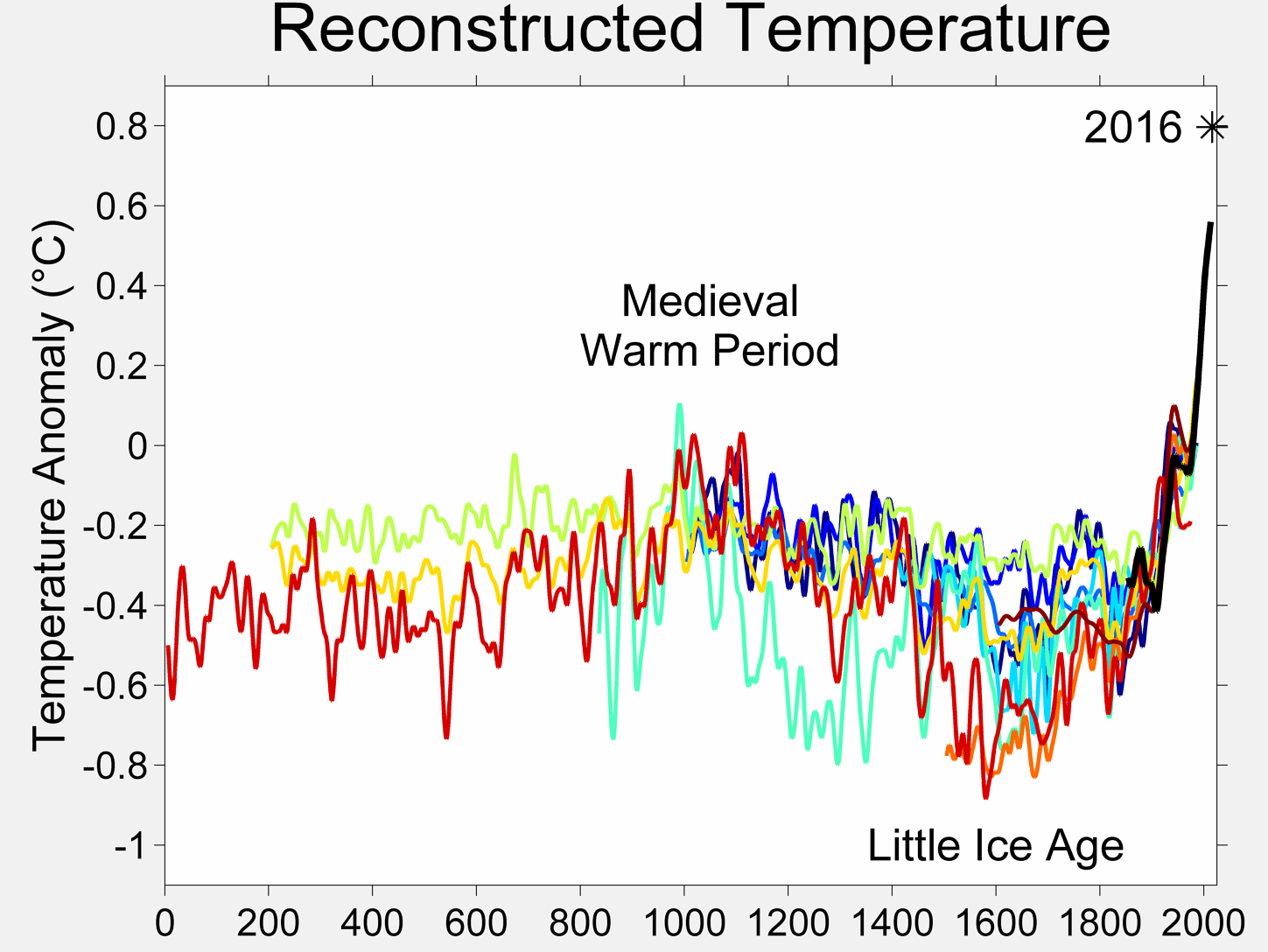

We’ve all seen this:

It’s one of the many works of global warming art that pervade Wikipedia. In the description page for this graph we have this:

The original version of this figure was prepared by Robert A. Rohde from publicly available data, and is incorporated into the Global Warming Art project.

And who is the lead scientist for BEST? One and the same. Now contrast Rohde with Dr. Muller who has gone on record as saying that he disagrees with some of the methods seen in previous science related to the issue. We have what some would call a “warmist” and a “skeptic” both leading a project. When has that ever happened in Climate Science?

Other than making a lot of graphical art that represents the data at hand, Rohde hasn’t been very outspoken, which is why few people have heard of him. I met with him and I can say that Mann, Hansen, Jones, or Trenberth he isn’t. What struck me most about Rohde, besides his quiet demeanor, was the fact that it was he who came up with a method to deal with one of the greatest problems in the surface temperature record that skeptics have been discussing. His method, which I’ve been given in confidence and agreed not to discuss, gave me one of those “Gee whiz, why didn’t I think of that?” moments. So, the fact that he was willing to look at the problem fresh, and come up with a solution that speaks to skeptical concerns, gives me greater confidence that he isn’t just another Hansen and Jones re-run.

But here’s the thing: I have no certainty nor expectations in the results. Like them, I have no idea whether it will show more warming, about the same, no change, or cooling in the land surface temperature record they are analyzing. Neither do they, as they have not run the full data set, only small test runs on certain areas to evaluate the code. However, I can say that having examined the method, on the surface it seems to be a novel approach that handles many of the issues that have been raised.

As a reflection of my increased confidence, I have provided them with my surfacestations.org dataset to allow them to use it to run comparisons against their data. The only caveat being that they won’t release my data publicly until our upcoming paper and the supplemental info (SI) has been published. Unlike NCDC and Menne et al, they respect my right to first publication of my own data and have agreed.

And, I’m prepared to accept whatever result they produce, even if it proves my premise wrong. I’m taking this bold step because the method has promise. So let’s not pay attention to the little yippers who want to tear it down before they even see the results. I haven’t seen the global result, nobody has, not even the home team, but the method isn’t the madness that we’ve seen from NOAA, NCDC, GISS, and CRU, and, there aren’t any monetary strings attached to the result that I can tell. If the project was terminated tomorrow, nobody loses jobs, no large government programs get shut down, and no dependent programs crash either. That lack of strings attached to funding, plus the broad mix of people involved especially those who have previous experience in handling large data sets gives me greater confidence in the result being closer to a bona fide ground truth than anything we’ve seen yet. Dr. Fred Singer also gives a tentative endorsement of the methods.

My gut feeling? The possibility that we may get the elusive “grand unified temperature” for the planet is higher than ever before. Let’s give it a chance.

I’ve already said way too much, but it was finally a moment of peace where I could put my thoughts about BEST to print. Climate related website owners, I give you carte blanche to repost this.

I’ll let William Briggs have a say now, excerpts from his article:

=============================================================

Word is going round that Richard Muller is leading a group of physicists, statisticians, and climatologists to re-estimate the yearly global average temperature, from which we can say such things like this year was warmer than last but not warmer than three years ago. Muller’s project is a good idea, and his named team are certainly up to it.

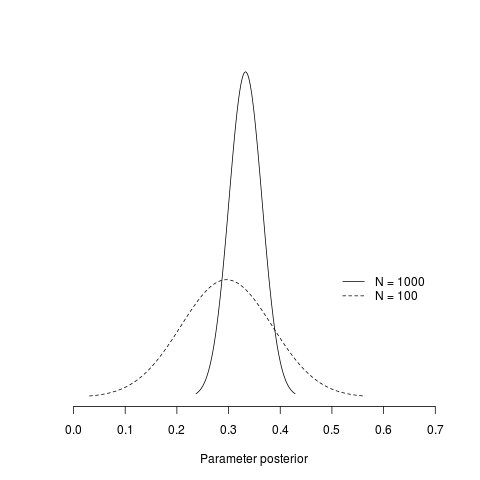

The statistician on Muller’s team is David Brillinger, an expert in time series, which is just the right genre to attack the global-temperature-average problem. Dr Brillinger certainly knows what I am about to show, but many of the climatologists who have used statistics before do not. It is for their benefit that I present this brief primer on how not to display the eventual estimate. I only want to make one major point here: that the common statistical methods produce estimates that are too certain.

…

We are much more certain of where the parameter lies: the peak is in about the same spot, but the variability is much smaller. Obviously, if we were to continue increasing the number of stations the uncertainty in the parameter would disappear. That is, we would have a picture which looked like a spike over the true value (here 0.3). We could then confidently announce to the world that we know the parameter which estimates global average temperature with near certainty.
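The shrinking uncertainty described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not the method used in the essay or by BEST: the station readings are assumed to be independent Gaussian draws around the true value 0.3 quoted above, and the function name `parameter_uncertainty`, the spread of 1.0, and the station counts are all hypothetical choices made for illustration.

```python
import random
import statistics


def parameter_uncertainty(n_stations, true_value=0.3, spread=1.0, seed=0):
    """Simulate n_stations readings and summarize them.

    Assumption: readings are independent Gaussian draws around true_value.
    Returns (sample mean, standard error of the mean, sample std deviation).
    """
    rng = random.Random(seed)
    readings = [rng.gauss(true_value, spread) for _ in range(n_stations)]
    mean = statistics.fmean(readings)
    sd = statistics.stdev(readings)
    # Standard error of the mean: shrinks like 1 / sqrt(n_stations).
    sem = sd / n_stations ** 0.5
    return mean, sem, sd


for n in (20, 200, 2000, 20000):
    mean, sem, sd = parameter_uncertainty(n)
    print(f"stations={n:6d}  mean={mean:.3f}  sem={sem:.4f}  sd={sd:.3f}")
```

As the station count grows, the standard error of the estimated parameter collapses toward zero, which is the "spike" in the picture described above. The spread of the individual readings, by contrast, stays near its assumed value no matter how many stations are added.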

Are we done? Not hardly.

Read the full article here

Anthony, this sounds positive. Let’s hope for the best and wait for the results.

Anthony, thanks for the info about the BEST project. I have been waiting for an update from you on it. One thing for sure is that having so many different disiplens(sic) of scientist, it will bring about a result, good or bad, that will be ROBUST in every respect. Joe Romm and the team will no doubt have a field day with anything that is produced by the BEST team, a good name for them and it will piss off Romm and company (who cares). Looking forward to more on the project.

When I was searching for a signal in noisy data, I knew that I was causing it. The system was given a rapidly firing regular signal at particular frequencies. By mathematically removing random brain noise, I did indeed find the signal as it coursed through the auditory pathway and it carried with it the signature of that particular frequency. The input was artificial, and I knew what it would look like. It was not like finding a needle in a haystack, it was more like finding a neon-bright pebble I put in a haystack.
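The comment does not say which technique was used to remove the noise. One common approach for a known, repeated stimulus is time-locked averaging: record many trials aligned to the stimulus and average them, so the random noise cancels while the repeated signal remains. The sketch below assumes a sine-wave signal and Gaussian noise; the function names and all parameter values are hypothetical.

```python
import math
import random


def average_trials(n_trials, n_samples=200, cycles=5, noise_sd=5.0, seed=1):
    """Average n_trials noisy recordings of the same known signal.

    Assumptions: the signal is a sine wave with `cycles` periods per trial,
    and each sample carries independent Gaussian noise of size noise_sd.
    Returns (true signal, averaged recording).
    """
    rng = random.Random(seed)
    signal = [math.sin(2 * math.pi * cycles * i / n_samples)
              for i in range(n_samples)]
    total = [0.0] * n_samples
    for _ in range(n_trials):
        for i in range(n_samples):
            total[i] += signal[i] + rng.gauss(0.0, noise_sd)
    averaged = [t / n_trials for t in total]
    return signal, averaged


def rms_error(a, b):
    """Root-mean-square difference between two equal-length sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


for n in (1, 25, 400):
    signal, averaged = average_trials(n)
    print(f"trials={n:4d}  rms error={rms_error(signal, averaged):.3f}")
```

The averaging works here only because the signal is identical in every trial and its timing is known in advance, which is exactly the advantage the commenter describes having.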

The cause of warming and cooling signals in weather noise is not so easy to determine. Does the climate hold onto natural warming events and dissipate it slowly? Does it do this in spurts or drips? Or is the warming caused by some artificial additive? Or both? It is like seed plots allowed to just seed themselves from whatever seed or weed blows onto the plot from nearby fields. If you get a nice crop, you will not be able to say much about it. If you get a poor crop, again, you won’t have much of a conclusion piece to your paper. And forget about statistics. You might indeed find some kind of a signal in noise, but I dare you to speak of it.

This is my issue with pronouncements of warming or cooling trends. Without fully understanding the weather pattern variation input system, we still have no insight into the theoretical cause of trends, be they natural or anthropogenic. We have only correlations, and those aren’t very good.

So just because someone is cleaning up the process, doesn’t mean that they can make pronouncements as to the cause of the trend they find. What goes in is weather temperature. The weather inputs may be various mixes of natural and anthropogenic signals and there is no way to comb it all out via the temperature data alone before putting it through the “machine”.

In essence, weather temperature is, by its nature, a mixed bag of garbage in. And you will surely get a mixed bag of garbage out.

Anthony Watts said “……until our upcoming paper and the supplemental info (SI) has been published. ”

When ? Where?

REPLY: It is in late stage peer review, I’ll announce more when I get the word from the journal. I suspect there will be another round of comments we have to deal with, but we may get lucky. Bear in mind all the trouble Jeff Condon and Ryan O’Donnell had with hostile reviewers getting their work out. – Anthony

I hope they collect sunshine hours. Global brightening/dimming is certainly capable enough of changing the temperature.

People attack what they fear.

So very happy to see that WUWT (the Website) is not resting on its laurels and is moving out with great dispatch as ever. The BEST project sounds very promising indeed. Your endorsement counts mucho. Take care of yourself Big Guy; don’t push your own envelope too hard, or too far, too fast.

I am greatly intrigued by this data. It has been frustrating that there has not been a good standard yet for combining the large data in a consistent manner. Curiosity is building.

Hope you enjoyed your trip Anthony.

The layout of the post seems to have got mangled though:

The fifth paragraph below the wikipedia graph ends in mid-sentence.

and

Briggs’ first graph and the three paragraphs above it are missing.

If done right this is the best development in a long time.

Here’s hoping they can find data sources uncontaminated by Hansen and friends…

Since many tried to collate existing records and came up with something similar to HadCRUT, the point is more which stations to select than how to make an average of them. I would prefer one thousand rural stations over 3,000 of whatever quality.

I still think the SST satellite record like the Reynolds OI.v2 is pretty good for the modern era, just too short.

I am truly thankful that someone other than me does all this number crunching. Though I have no doubts I could, in time, wade through it, it is not my cup of tea. The big question for me, coming from an instrument background, is whether or not the original data is a valid measure of temperature for that location.

I too seem to have become persona non grata over at Climate Progress (is it funded by Colonel Gaddafi I wonder, for all its diatribe about being nonpartisan it certainly acts in a partisan manner?) I only asked if they could stick to the science and try to leave the playground banter to the more juvenile blogs. I know, how naive of me! Apparently they think that blocking someone is the same as making them disappear, how wrong they are.

Ah, my proofreading skills are terrible, I see the “excerpts” and “…” now =)

I’ll eat my hat if the BEST result isn’t essentially in agreement with NOAA and GISS.

There’s too much at stake for too many scientists to back-pedal at this point in time. This is an establishment project headed by an AGW proponent at one of the most famously leftwing universities in the world. It’s like having cops check the work of other cops – they all stick together through thick and thin – brotherhoods are like that.

Plus the data isn’t that damn hard to analyse. It is almost certainly warmer now than it was in 1880. Reanalysis won’t change much of anything. If it did it already would have been done. So between a basic distrust of any team of establishment scientists rocking the boat and a basic belief that it’s about 0.7C warmer now than 130 years ago there’s hardly a chance that anything will change.

Moreover, those who don’t believe it’s warmer now will still not believe it’s warmer now and those who do believe will continue to believe. Contrary to popular belief in skeptic blogs there are a few basic things that stand out as credible

– it’s warmer now by a half degree or so than it was 130 years ago

– CO2 is a greenhouse gas that has the physical potential to raise surface temperatures

– anthropogenic activities have raised atmospheric CO2 from 280ppm to 380ppm

Most everything else is arguable except for the beneficial effects of a warmer planet and higher CO2 on the primary producers (green plants) in the food chain.

“And, I’m prepared to accept whatever result they produce, even if it proves my premise wrong. I’m taking this bold step because the method has promise. So let’s not pay attention to the little yippers who want to tear it down before they even see the results. I haven’t seen the result, nobody has, not even the home team, but the method isn’t the madness that we’ve seen from NOAA, NCDC, GISS, and CRU, and, …”

… and it still has not been tested, vetted, examined, and otherwise validated by the world.

It is premature for you to assign unyielding faith to this method, whatever it is. Do not be lulled into investing yourself in this process, and its result. Your bold step should not be made on promise, but on delivery.

Perhaps there is an Anthony Watts out there who will point out the deficiencies of the new method, once that method is made known. If so, it would be best if that Anthony Watts were you …

OK, sounds great. But what about the ARGO ocean buoy data, which is not often mentioned?

Also, there would be a simple way to get data from any point on the land by use of modified ARGO devices and circuitry, presumably with solar power panels. They could be placed anywhere in remote locations, thus obviating the urban heat island effects. And, the transmission of data to satellites would be automatic, so there would be no observer problems.

Good stuff, and very much what I prefer to read about: attempts at actual science and less about the bickering.

Will we get answers? Probably not, but a reduction in the wrong questions might help us grope a few steps closer to the exits in the darkened room of climate science.

Any predictions on BEST results? I’ve just created a post dealing with predictions:

http://climatequotes.com/2011/03/05/prediction-on-best-temperature/

I guess that the warming will be less than the other three, but still substantial.

If it’s really science, then let the chips fall where they may. However, I distrust temperature analysis as a means of determining whether the Earth as a whole is heating, cooling, or remaining the same. High temperatures can often be more an indicator of heat shedding mechanisms than of global warming. It’s the net energy flux that counts.

The challenge we face is measuring a very tiny putative drift in a widely-ranging signal with a high degree of chaos, superimposed on an ice-age rebound trend along with multidecadal swings and random volcanic spurts of largely unknown effect. Nor will the presence of this drift, if established with any statistical certainty, prove the AGW case. Correlation is not causation, and no amount of hand-waving will make it such.

In other words, it’s the Watts.

“in the land surface temperature record they are analyzing”

Is BEST just land surface based? There has been considerable concern expressed regarding the handling of the SST/Ocean data, does BEST cover this very, very important area?

REPLY: Land first, sea later. – Anthony

Thanks looking forward to it all.

I have to agree with Brad above, if they’re using data filtered from Hansen et al. their results will be questionable; however, I’m getting the impression from Anthony that he’s pretty sure of their method so I’m hoping he also covered this concern when he talked to his source. I’m looking forward to seeing what the BEST team come up with, but I’m not going to accept anything coming out of academia without a thorough review of their methods and sources. I just don’t trust them anymore.

I’m intrigued by the promise that you see in BEST and look forwards to seeing what they generate.

I’ll let the Science speak for itself as usual

If you want a number that tells you the air temperature of a location with the noise smoothed out just measure the ground temperature at some appropriate depth, but if you want to know the global SURFACE temperature you better take into account the vast pool of polar water in the deep ocean. Temperature of South African mine at 4000 feet = approx 65 C; temperature of vast areas of the ocean at 4000 feet = approx 4 C. How did that happen?

Quote from: http://welldrillingschool.com/courses/pdf/geothermal.pdf

“Low grade geothermal energy [in contrast to high temperature geothermal energy of hot springs] is the heat within the earth’s crust. This heat is actually stored solar energy.” This interesting document also points out that in 1838 a careful study by the Royal Observatory of Edinburgh “showed that temperature variation at a 25 foot depth to be about 1/20 of the surface variation.” It would seem that the deeper you go the closer you come to the average annual temperature.
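The 1/20 figure quoted above can be turned into a rough rule of thumb. The sketch below assumes the textbook model of heat conduction in uniform ground, in which a periodic surface temperature swing decays exponentially with depth; that model, the function names, and the 50-foot extrapolation are assumptions added for illustration and do not come from the quoted document.

```python
import math


def damping_depth(depth, amplitude_ratio):
    """Depth scale d such that exp(-depth / d) equals amplitude_ratio.

    Assumption: the swing at depth z is the surface swing times exp(-z / d).
    """
    return depth / math.log(1.0 / amplitude_ratio)


def amplitude_ratio_at(depth, d):
    """Fraction of the surface temperature swing remaining at a given depth."""
    return math.exp(-depth / d)


# Quoted observation: at 25 feet the variation is about 1/20 of the surface.
d_feet = damping_depth(25.0, 1.0 / 20.0)
print(f"implied damping depth: {d_feet:.2f} feet")

# Under the same assumption, the fraction remaining at twice that depth.
print(f"fraction remaining at 50 feet: {amplitude_ratio_at(50.0, d_feet):.4f}")
```

Under this assumption every additional 25 feet cuts the remaining variation by another factor of 20, which is consistent with the commenter's remark that deeper readings approach the annual average.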