Originally tweeted by Stephen McIntyre (@ClimateAudit) on April 30, 2023.

Note from Anthony: For those of you that remember the yeoman’s work that Steve McIntyre did at Climate Audit debunking Michael Mann’s hockey stick and flawed methodology this should come as no surprise. Once again, hockey sticks get generated where the original data doesn’t show it. The only conclusion you can make is that the data and the method have been adjusted to fit a preconceived and desired result. This series of Tweets has been compiled here for easier access and readability. – Anthony

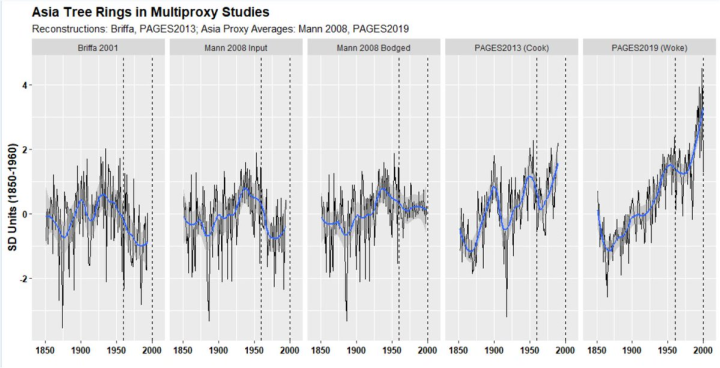

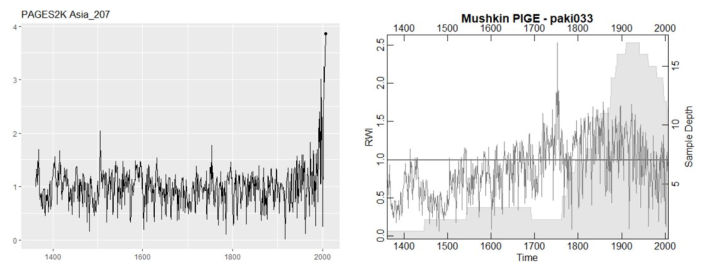

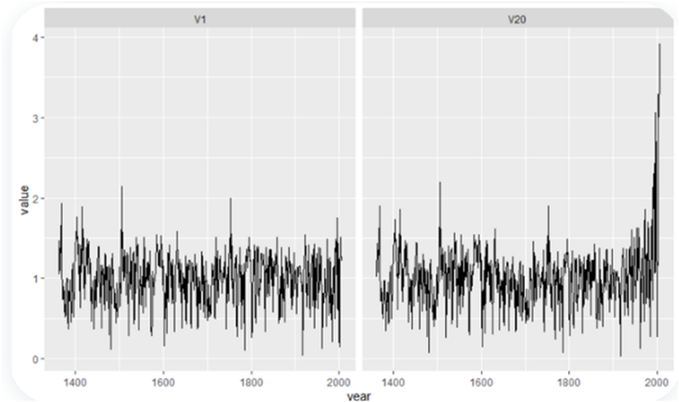

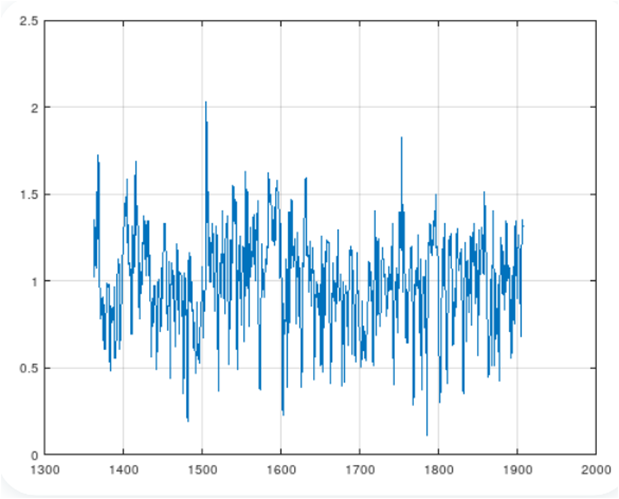

[M]ost readers are familiar with famous “hide the decline” from Climategate. Below are 1850-2000 parts of 5 series calculated from Asian tree ring data, explained below. I recently received some fantastic PAGES2k reverse engineering from @detgodehab and am re-visiting.

The data illustrated below comes from

(1) original Briffa 2001 Asian series with late 20th century decline (chopped off in Mann’s IPCC diagram);

(2) average of Asian series in gridded MXD series sent by Briffa/Osborn to Rutherford and Mann, ostensible input in Mann 2008;

(3) average of (the 45) gridded MXD as used in Mann 2008. As discussed long ago at Climate Audit, Mann chopped off the offending declines and replaced them with temperature data. This was a different incident to the IPCC diagram or the 1999 WMO “hide the decline” diagram.

(4) PAGES2K (2013) introduced a novel Asia reconstruction (Cook et al) from tree rings in which late 20th C decline observed in Schweingruber data did not exist. Closing values were similar to high values at mid-20th century. The difference was not reconciled by PAGES2K.

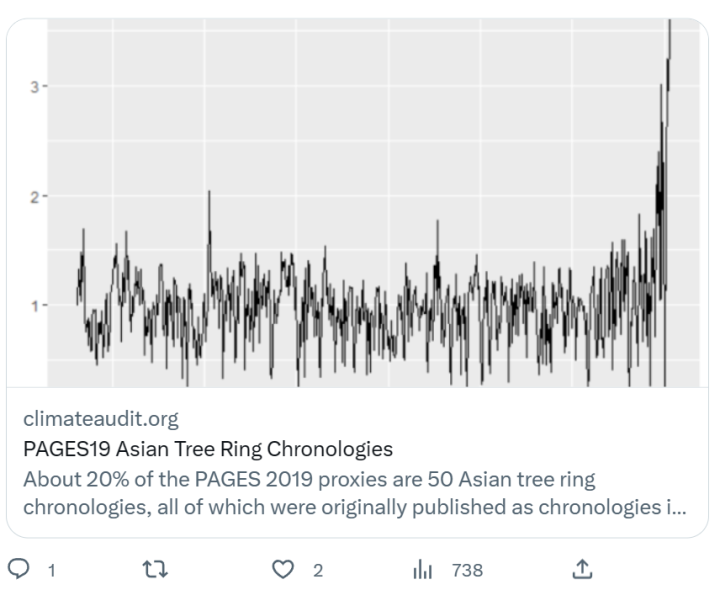

(5) the current PAGES reconstruction – the “Woke Reconstruction” for short and used in IPCC AR6 – contained a subset of the PAGES2K Asia dset, the average of which yields a monster blade. The “decline” is in the Woke rear-view mirror.

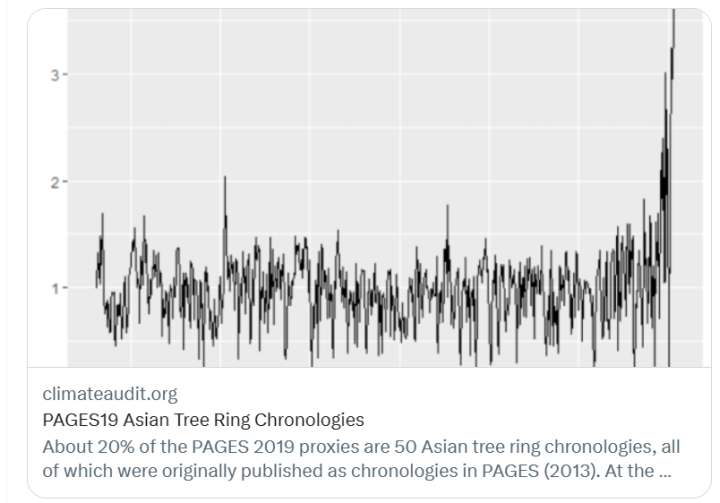

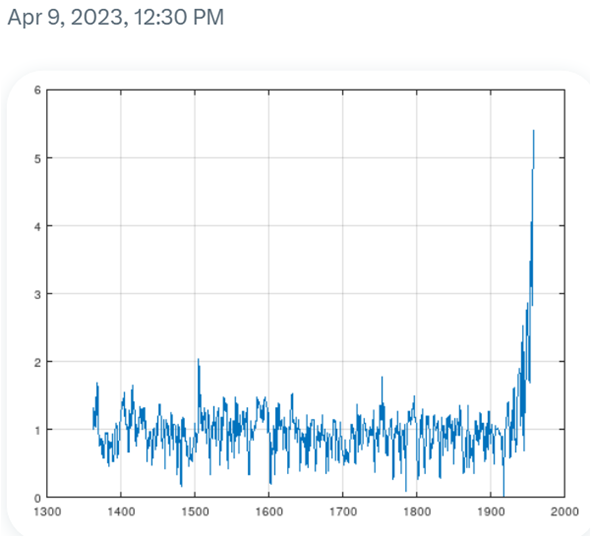

A few years ago, I had noticed that some of the tree ring chronologies underlying the Woke Reconstruction had enormous closing blades that did not appear possible to replicate using standard methodologies.

I did a couple of Twitter threads as well:

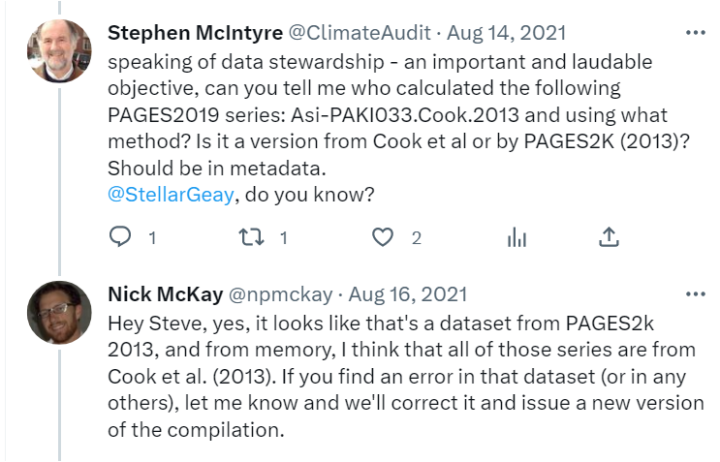

I asked two lead authors of PAGES 2019 about the provenance of the Asian tree ring series, but got nowhere. They didn’t consider that they had any responsibility as lead authors of a Nature article to answer questions about their data.

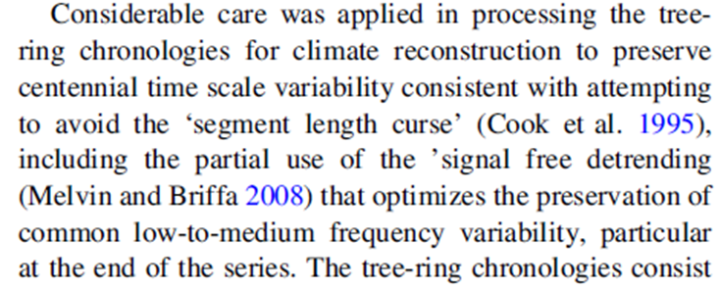

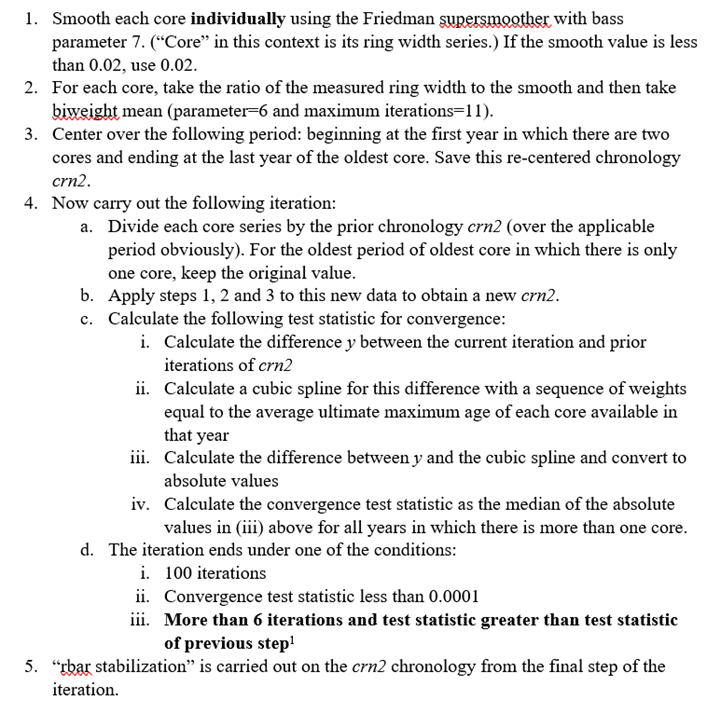

The underlying reference (Cook et al 2013) contained only a single sentence as purported description of chronology methodology: that they took “considerable care” to avoid ‘segment length curse’, with “partial use” of a novel technique then recently introduced by UEA’s Tom Melvin

the keepers of these chronologies were at Columbia U which resolutely refused data when I was trying to figure out Hockey Stick mysteries. Jacoby: “Fifteen years is not a delay. It is a time for poorer quality data to be neglected and not archived.”

anyway, reader @detgodehab got intrigued with the puzzling Asian tree ring chronologies and reverse engineered their calculation. He replicated the results to every detail. No one could have possibly imagined the actual calculation from details in PAGES2K or Cook et al 2013.

It’s hard for a statistical methodology to be so bad as to be “wrong”. Mann’s principal components methodology was one seemingly unique example. PAGES2K’s Asian tree ring chronologies are another. It’s worse than anyone can imagine.

unfortunately, exposition of the defective calculation is technical and will take some time. But for now, the monster blade of the “Woke” PAGES 2019 Asian tree ring data is bogus. PAGES2019 selectively chose the biggest blades, nearly all of which come from bogus chronologies.

I have a question for readers on order of exposition. Which should come first:

1) narrative of detective work by which calculation was reverse engineered

2) pathologies of PAGES2K Asia tree ring methodology

Not all Asia chronologies are pathological, but biggest blades are.

on the left is description of PAGES Asia2K chronologies and on right is my brief description of their actual algorithm as deduced by @detgodehab and verified by me. I’ll return later to how he figured this out. For now, the pathologies of the method.

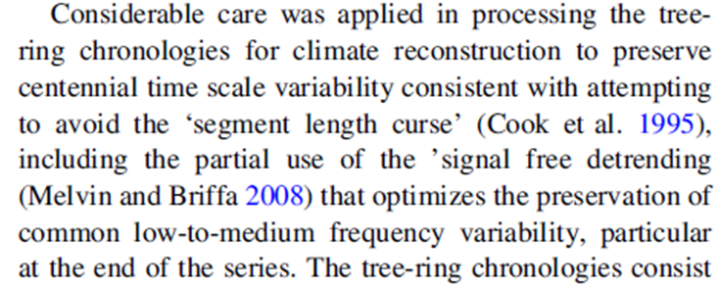

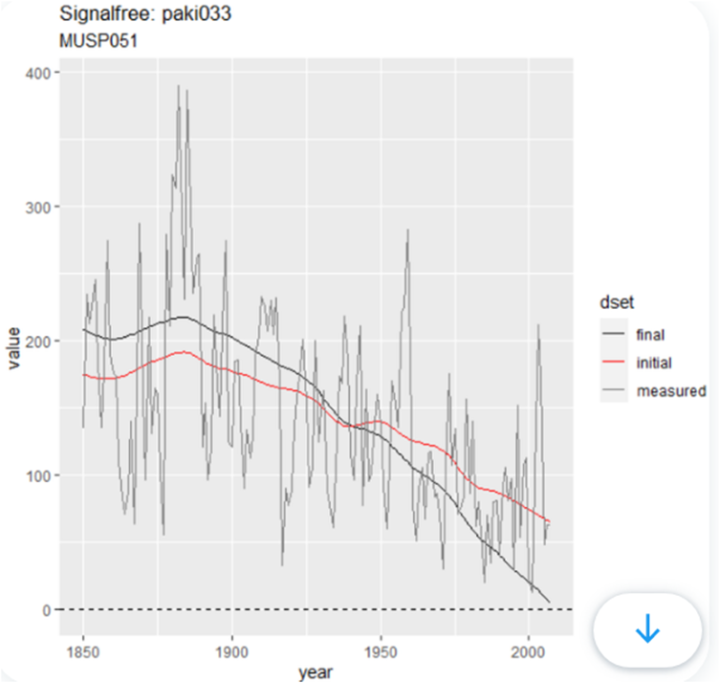

I’ll describe the pathologies of the PAGES2K Asia algorithm more or less as we discussed them in DMs over past few weeks. @detgodehab had begun with analysis of paki033, the series that I had featured in a 2021 thread and blog article.

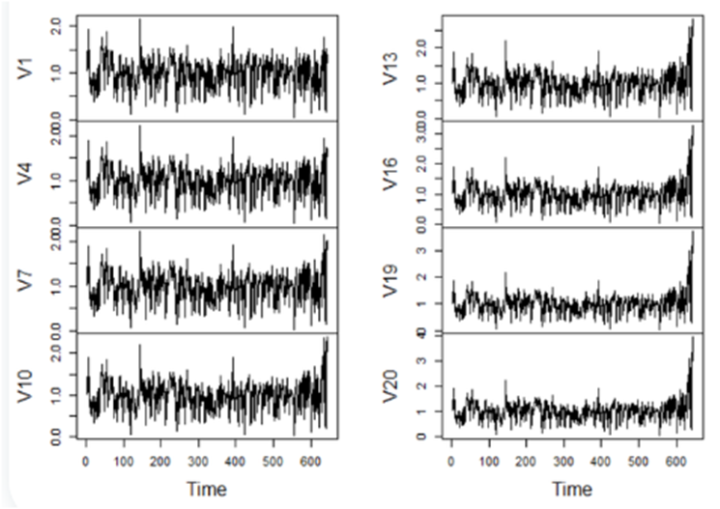

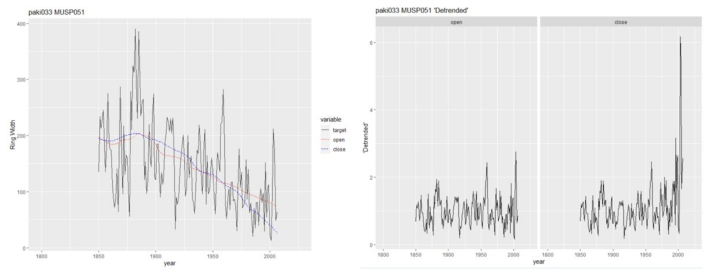

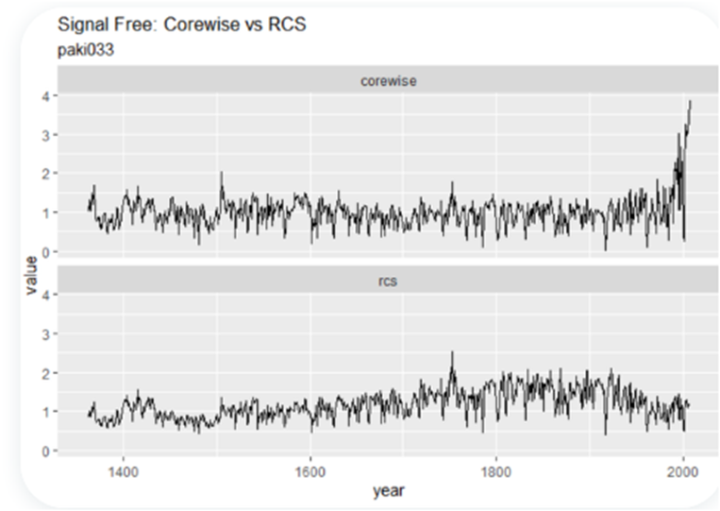

@detgodehab had ported the algorithm to Octave. The paki033 iteration stopped after 20 iterations. So let’s look at the evolution of the chronology. It opened as nondescript series on left and closed with big blade. On right is sequence of steps showing emergence of closing blade

what happened to individual cores? Tree ring “chronologies” are calculated as the difference between measurements and smooth (pseudo-model). Between start and close, the ‘model’ moved closer to zero at the close, so that contribution to chronology (shown on right) increased bigly

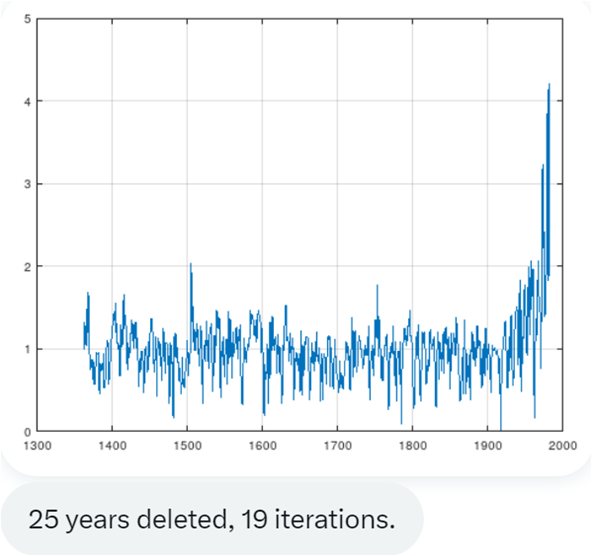

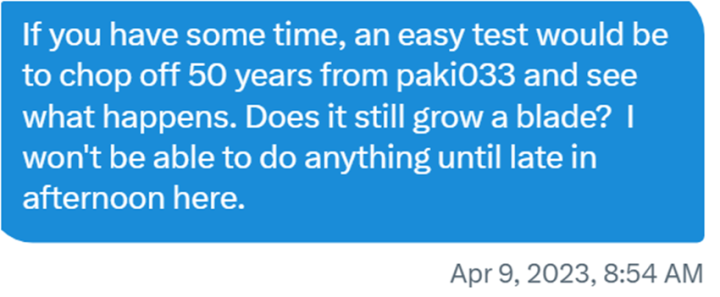

this looked very suspicious as a procedure. An obvious question was whether the monster blades in certain PAGES2K chronologies were some sort of artefact, as opposed to “climate”. So I suggested that @detgodehab see what happens when last 50 years of data not used? As a test.

bingo. Excluding the last 50 years of data, paki033 had an even bigger blade *50 years earlier*. For good measure, @detgodehab did test excluding 25 years and got same big blade *25 years earlier*.

so it was very clear that the big blade being produced by the PAGES2K Asia chronologies was bogus and some sort of artefact of their methodology and NOT due to climate. This doesn’t disprove global warming. It is only relevant to PAGES2K.
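The logic of the truncation test can be sketched in a few lines of Python. This is only an illustration: `toy_chronology` is a hypothetical stand-in with an end effect built in by hand, not the PAGES2K Asia algorithm, and the ring widths are made-up trendless numbers. The point is the check itself: if the peak moves with the cut-off, it belongs to the method rather than to any calendar year.

```python
import math

def toy_chronology(widths, taper_years=10):
    """Hypothetical stand-in with a built-in end effect (NOT the PAGES2K code).

    The index is measurement minus 'model', and the model is dragged toward
    zero over the final taper_years of whatever data it is given.
    """
    n = len(widths)
    mean = sum(widths) / n
    index = []
    for t, w in enumerate(widths):
        years_from_end = n - 1 - t
        shrink = min(1.0, 0.2 + 0.8 * years_from_end / taper_years)
        index.append(w - shrink * mean)
    return index

def blade_year(years, widths, algorithm):
    """Return (year, value) of the largest index value."""
    index = algorithm(widths)
    peak = max(range(len(index)), key=index.__getitem__)
    return years[peak], index[peak]

years = list(range(1800, 2000))
widths = [1.0 + 0.1 * math.sin(0.3 * y) for y in years]  # trendless toy data

peaks = {}
for cut in (0, 25, 50):
    keep = len(years) - cut
    peaks[cut] = blade_year(years[:keep], widths[:keep], toy_chronology)
    print(f"drop last {cut:2d} years -> peak at {peaks[cut][0]}")
```

With this toy, the peak lands on the last retained year each time (1999, 1974, 1949), which is the signature of an end-effect artefact. A real climate signal would stay at the same calendar year no matter where the data were cut.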

Also, PAGES2K uses much other data. Nor are all Asia 2K chronologies calculated with this bogus methodology. But PAGES2019 selected the worst and most bogus chronologies (claiming they were the best) and that’s why there’s the monster blade shown in opening tweet.

while it’s evident that the PAGES2K Asia methodology was pathological, when @detgodehab chopped off 100 years, it didn’t produce a blade. I haven’t parsed this to see why. There are many mysteries in the algorithm which I’ll continue to describe.

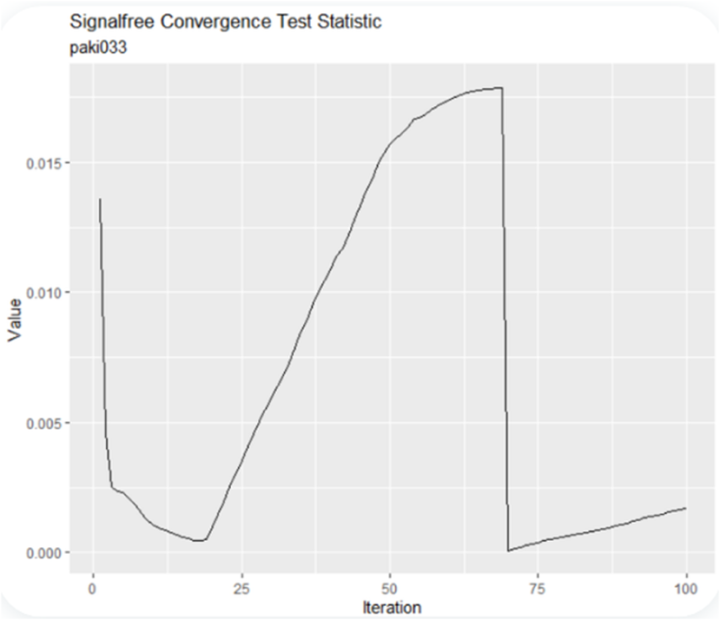

In an iteration, convergence is presumed. But PAGES2K algorithm did NOT converge for paki033. It was stopped at iteration 21. I ran D’s algorithm for 100 iterations and found that the Melvin “test statistic” (a weird one) increased up to iter ~68, dropped suddenly, then rose

this is NOT acceptable behaviour in a valid algorithm purporting to converge
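For readers who want to see what checking convergence looks like in practice, here is a minimal sketch. It assumes nothing about the Melvin statistic: the quantity tracked is simply the absolute change between successive iterates, and the two update rules are textbook toys chosen for illustration (one contracts toward a fixed point, the other is the chaotic logistic map).

```python
def iterate_with_trace(update, x0, tol=1e-6, max_iter=100):
    """Apply update repeatedly and record the change at every step."""
    x, trace = x0, []
    for _ in range(max_iter):
        new_x = update(x)
        trace.append(abs(new_x - x))
        x = new_x
        if trace[-1] < tol:
            return x, trace, True    # tolerance met
    return x, trace, False           # stopped only because the cap was hit

def is_non_increasing(trace):
    """True if the change statistic never goes back up."""
    return all(later <= earlier for earlier, later in zip(trace, trace[1:]))

good = iterate_with_trace(lambda x: 0.5 * x + 1.0, 0.0)        # heads to 2.0
bad = iterate_with_trace(lambda x: 3.9 * x * (1.0 - x), 0.2)   # logistic map

for name, (x, trace, converged) in (("contraction", good), ("logistic map", bad)):
    print(name, "| converged:", converged, "| steps:", len(trace),
          "| statistic never rises:", is_non_increasing(trace))
```

The design choice is to keep the whole trace rather than only the final value. An iteration that stops at a cap, or whose statistic rises and falls along the way, is then visible at a glance instead of being hidden behind a single "final" chronology.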

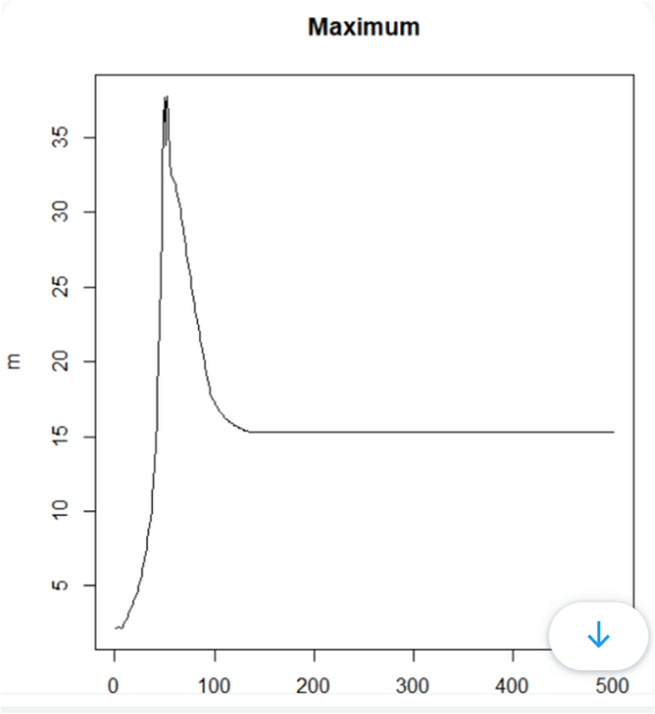

what was happening to the chronology during these wild changes in “convergence” statistic? The blade (which had stopped at ~4) continued to grow reaching ~37 at iteration 50, then declined to ~15.3 by iteration 100. Obviously not climatic

the maximum of the blade by iteration for 500 iterations is shown below. It did actually converge under Melvin statistic – but to an implausibly large blade of 15.338.

@detgodehab observed “so clearly convergence doesn’t mean that a chronology is valid”. Clearly.

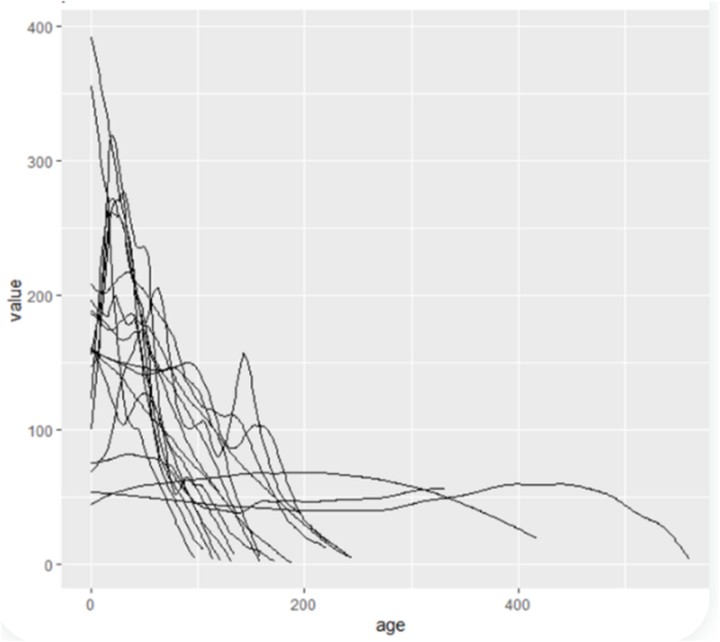

recall the diagram shown previously in which I had extracted the smooth ‘model’ for an individual core. At right are the ‘models’ for each core at convergence: they all approach zero at end.

a technical point: there are two main approaches to “detrending” each core to allow for juvenile growth: 1) a separate curve for each tree/core; 2) one curve (typically negative exponential plus constant) for site.

I did an experiment applying Melvin’s iterative method and supsmu smoothing as follows: 1) to individual cores as done in PAGES2K Asia; 2) one curve for entire site (“RCS”). The monster blade only appeared with PAGES combination of Melvin iteration and supsmu corewise smoothing.
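The two detrending approaches can be contrasted with a small sketch. Everything here is an assumption made for illustration: the cores are synthetic, the per-core "curve" is a plain straight line (not supsmu), there is no Melvin iteration, and indices are differences rather than ratios. It does not reproduce the blade. It only shows how differently the two approaches treat a slow common signal.

```python
import math

def ols_line(xs, ys):
    """Least-squares intercept and slope of ys on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return my - slope * mx, slope

CORE_LEN = 100
STARTS = range(0, 201, 25)        # nine overlapping synthetic cores, years 0..299
AGES = list(range(CORE_LEN))

def width(start, age):
    growth = math.exp(-0.05 * age) + 0.5   # juvenile growth decline with age
    climate = 0.002 * (start + age)        # slow common signal by calendar year
    return growth + climate

cores = {s: [width(s, a) for a in AGES] for s in STARTS}

def corewise_indices(cores):
    """Approach 1: a separate fitted curve (here a line) for each core."""
    out = {}
    for s, w in cores.items():
        b0, b1 = ols_line(AGES, w)
        out[s] = [wi - (b0 + b1 * a) for a, wi in zip(AGES, w)]
    return out

def rcs_indices(cores):
    """Approach 2: one age-aligned curve for the whole site ('RCS')."""
    curve = [sum(w[a] for w in cores.values()) / len(cores) for a in AGES]
    return {s: [wi - c for wi, c in zip(w, curve)] for s, w in cores.items()}

def chronology(indices):
    """Average the indices of all cores covering each calendar year."""
    by_year = {}
    for s, idx in indices.items():
        for a, v in enumerate(idx):
            by_year.setdefault(s + a, []).append(v)
    years = sorted(by_year)
    return years, [sum(by_year[y]) / len(by_year[y]) for y in years]

slopes = {}
for name, method in (("corewise", corewise_indices), ("RCS", rcs_indices)):
    years, chron = chronology(method(cores))
    slopes[name] = ols_line(years, chron)[1]
    print(f"{name:8s} long-term slope: {slopes[name]:.5f} (true signal 0.00200)")
```

In this toy, the site-wide curve keeps most of the slow rise while corewise fitting removes nearly all of it, which is the "segment length curse" that Cook et al said they took care to avoid. The two approaches are not interchangeable, so it matters which one an iterative scheme is bolted onto.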

thus far, I’ve discussed one site paki033.

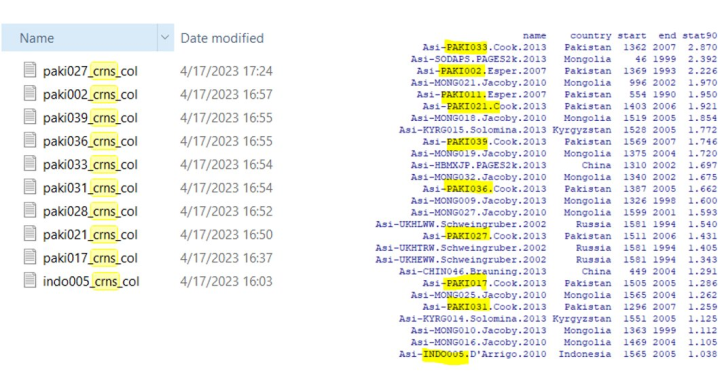

@detgodehab has verified that same flawed algorithm was used for at least 8 other Pakistan sites. Note that these sites (together with Columbia U’s Mongolia chronologies) dominate PAGES19 list of heavy contributors to closing blade.

Also note that all these sites were preferentially selected by the PAGES2019 screening procedures (which I’ve criticized elsewhere.)


Mann’s original work was shown, I believe by McKitrick, to produce a “hockey stick” from red noise. It does not appear that Mann has devised a more valid approach.

Safe and effective.

It was Steve McIntyre, but all he ever showed was a hockey stick in the first principal component P1 (but gave the impression he was talking about the result). In the full reconstruction (ie what is published) M&M agreed with Mann.

In MBH98 they used a non-centered mean, probably by mistake. It hasn’t been done since. In PCA, you subtract the mean because it is a sure principal component, reflecting differences in mean of the different proxies. That is just one less component to calculate. If you subtract a decentered mean, you don’t get the full value of that, and the first principal component P1 aligns with the decentering (hockey stick). But that is compensated by the other components, so when you have the final result, that had no ultimate effect.
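The effect of the centering choice on the first principal component can be tried on pure noise. The sketch below is a toy under stated assumptions, not the MBH98 code: the series are AR(1) red noise with no signal, the sizes and the 0.9 persistence are arbitrary, PC1 is found by simple power iteration, and the "hockey stick index" is the mean of the closing period minus the overall mean, in standard deviation units. It looks only at PC1 and says nothing either way about a full reconstruction.

```python
import random

def red_noise(n, rho, rng):
    """AR(1) series containing no signal at all."""
    x, out = 0.0, []
    for _ in range(n):
        x = rho * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out

def first_pc(series, center_rows, n_iter=300):
    """PC1 time series after subtracting each series' mean over center_rows."""
    centered = []
    for s in series:
        m = sum(s[t] for t in center_rows) / len(center_rows)
        centered.append([v - m for v in s])
    cross = [[sum(a * b for a, b in zip(ci, cj)) for cj in centered]
             for ci in centered]
    w = [1.0] * len(centered)
    for _ in range(n_iter):              # power iteration for the top eigenvector
        w = [sum(c * v for c, v in zip(row, w)) for row in cross]
        norm = sum(v * v for v in w) ** 0.5
        w = [v / norm for v in w]
    return [sum(wi * ci[t] for wi, ci in zip(w, centered))
            for t in range(len(series[0]))]

def hockey_stick_index(pc, blade_len):
    n = len(pc)
    mean = sum(pc) / n
    sd = (sum((v - mean) ** 2 for v in pc) / n) ** 0.5
    return (sum(pc[-blade_len:]) / blade_len - mean) / sd

rng = random.Random(0)
N_YEARS, N_SERIES, BLADE, TRIALS = 200, 20, 40, 5
hsi_full = hsi_short = 0.0
for _ in range(TRIALS):
    series = [red_noise(N_YEARS, 0.9, rng) for _ in range(N_SERIES)]
    pc_full = first_pc(series, range(N_YEARS))                    # full centering
    pc_short = first_pc(series, range(N_YEARS - BLADE, N_YEARS))  # closing period only
    hsi_full += abs(hockey_stick_index(pc_full, BLADE)) / TRIALS
    hsi_short += abs(hockey_stick_index(pc_short, BLADE)) / TRIALS

print(f"mean |HSI| of PC1, full centering:  {hsi_full:.2f}")
print(f"mean |HSI| of PC1, short centering: {hsi_short:.2f}")
```

Subtracting the closing-period mean adds each series' offset from that mean to its sum of squares, so PC1 is pulled toward series whose closing period sits away from their long-term level. Whether that carries through to the final result is exactly what the commenters here disagree about, and this sketch does not settle it.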

I did read a commentary by McIntyre and McKitrick, in the middle 2010’s, and they emphatically did not agree with Mann.

Stephen McIntyre and Ross McKitrick wrote a retrospective in 2019 including comments on the ‘hockey stick’ particularly concerning the effect of ex post screening of samples that apparently is common practice in paleo-climate studies and obviously biases the results: “While screening on the basis of correlation to temperature superficially seems to make sense, the error is easily understood if you hypothesize a pharmaceutical scientist using ex post screening: imagine a drug study which only reported results for patients who got better”.
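The screening argument is easy to try numerically. The sketch below is a toy built on assumptions, not anyone's published procedure: the "proxies" are AR(1) red noise with no temperature signal by construction, the "instrumental record" is a bare linear ramp over the last 50 years, and the 0.5 correlation cut-off is arbitrary.

```python
import random

def red_noise(n, rho, rng):
    """AR(1) series containing no signal at all."""
    x, out = 0.0, []
    for _ in range(n):
        x = rho * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out

def correlation(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5

rng = random.Random(1)
N_YEARS, CAL, N_PROXIES, R_MIN = 300, 50, 200, 0.5
ramp = list(range(CAL))                       # stand-in 'instrumental' trend
proxies = [red_noise(N_YEARS, 0.9, rng) for _ in range(N_PROXIES)]

# ex post screening: keep only series that correlate with the ramp
passed = [p for p in proxies if correlation(p[-CAL:], ramp) > R_MIN]

def composite(group):
    return [sum(p[t] for p in group) / len(group) for t in range(N_YEARS)]

def rise(series):
    """Final-decade mean minus first-decade mean of the calibration window."""
    return sum(series[-10:]) / 10 - sum(series[-CAL:-CAL + 10]) / 10

rise_all = rise(composite(proxies))
rise_screened = rise(composite(passed))
print(len(passed), "of", N_PROXIES, "noise series pass screening")
print(f"rise over calibration window, all series:      {rise_all:.2f}")
print(f"rise over calibration window, screened series: {rise_screened:.2f}")
```

Because every retained series rises over the calibration window by selection, their average rises too, even though none of them contains any signal. Before the window the selected series are unconstrained and tend to average toward a flat shaft.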

“While screening on the basis of correlation to temperature superficially seems to make sense”

Of course it makes sense and the attempted counter example actually explains why. Our entire knowledge of pharmaceuticals is based on ex post screening. You test some drugs for a condition, discard the ones that don’t work, and test further the ones that do. The talk of biased reporting of results is not the analogue. All results of each proxy are reported, as are all results of each trial. The selection occurs at a level above. Proxies are selected on the basis of correlation with temperature, as drugs are selected on correlation with health.

It is nonsense to screen according to correlation with the supposed thermometer record as if, for instance in the case of tree core samples, some trees during their entire long life are trustworthy proxy ‘thermometers’ and others are not based on less than ten percent of that lifespan.

To do so is artless circular reasoning or fraud.

That is why blind, double blind and triple blind experimentation is used in medical research.

“some trees during their entire long life are trustworthy proxy ‘thermometers’”

I doubt that any are.

C’mon everybody knows mercury thermometer equals platinum probe equals certain select tree rings equals we’re all doomed unless we go back to the age before steam. You just have to contextualize these things.

But in this analogy the tree is not the drug, but the patient. The analogy does indeed demonstrate M&Ms point in that a pharmaceutical trial would quickly be dismissed if they removed patients with no or adverse results to the drug only basing their results on the patients that responded positively.

And that is why the famous hookey shtick shows the MWP and the LIA, because the selected proxies so perfectly represent the “temperatures” of those periods?

Correct Nick?

Sarc/off

But of course it does NOT.

Nick writes “Of course it makes sense “

Oh Nick 🙁

RE: **Our entire knowledge of pharmaceuticals is based on ex post screening. You test some drugs for a condition, discard the ones that don’t work, and test further the ones that do**

Now nick is pretending to be a pharmacy expert.

Looks like he has missed the past three years. The Cheaper and effective life-saving treatments Ivermectin and Hydroxychloroquine have been denigrated by the corrupt Fauci and the drug lords in favor of more expensive and even dangerous drugs like Remdesivir. Ever wonder why over six million people have died due to lack of treatment?

Nick,

“used a non-centered mean, probably by mistake”

You really believe this was an honest mistake?

You really should stop playing Igor to Mann’s Doctor Frankenstein.

Of course. It made no difference to the final result, so there is no motive. And it was never done again.

“Of course. It made no difference to the final result,..”

The Figure below, left, compares MBH98 PC1 and the 1993 Graybill-Idso White Mountain strip bark Bristlecone pine tree-ring core. They’re nearly identical visually and 0.8 correlated.

On the right is Figure 5 from 1993 Graybill & Idso, comparing White Mountain strip bark and full bark Bristlecone pine tree ring cores.

The extreme hockey stick rise is clearly a strip bark artifact. But MBH used it anyway and bugled it as proof of unprecedented warming.

Graybill and Idso ascribed the rise to CO₂ fertilization.

Shameless doesn’t cover any of it, Nick, including your dissimulation.

Mr. Frank: Thank you for demonstrating his more-than-shameless deflection.

Thanks for your consideration, Paul.

Pls. call me Pat.

Forget it Nick. Mann’s place of prominence in the pantheon of junk science was forever secured when he covered up the inconvenient decline of his proxy with the modern temperature ‘record’.

He didn’t do that at all. Not even McI claims that.

Oh, please!!

https://climateaudit.org/2011/03/29/keiths-science-trick-mikes-nature-trick-and-phils-combo/

OK, did you read it?

“It consists of the following elements:

1. A digital splice of proxy data up to 1980 with instrumental data to 1995 (MBH98), lengthened to 1998 (MBH99).

2. Smoothing with a Butterworth filter of 50 years in MBH98 (MBH99- 40 years) after padding with the mean instrumental value in the calibration period (0) for 100 years.

3. Discarding all values of the smooth after the end of the proxy period.”

There is nothing about covering inconvenient decline, or decline at all. He is making the esoteric claim that at the end of the proxy period, where smoothing is difficult because of lack of knowledge of future values, he uses instrumental values for that. I don’t know if he really did, but if he did, it is a very reasonable thing to do.

McIntyre often complained that no-one but he understood what the complaint is. Well, I do.

“There is nothing about covering inconvenient decline, or decline at all. He is making the esoteric claim that at the end of the proxy period, where smoothing is difficult because of lack of knowledge of future values, he uses instrumental values for that. I don’t know if he really did, but if he did, it is a very reasonable thing to do.”

Mann denied it, and said no one would ever do that. Because Proxy data is not temperature.

I did read it, Nick. What you conveniently ignore is that a newly minted PhD was selected to be a ‘lead author’ by the IPCC because his graphic supposedly did away with the troublesome (to them) Medieval Warm Period and the Little Ice Age.

The real deception wasn’t in how the proxy data was ‘filtered’ – it was the ignoring of many better proxies that showed that the above events were real, while including the thermometer record to give the impression that ‘dangerous’ warming had commenced with the onset of industrialization.

While we’re on the subject of climate proxies, doesn’t it strike you as a bit ironic that the defenders of using tree rings to ‘document’ climate change usually ignore the actual remains of ancient forests revealed by the current retreat of glaciers.

There’s also this at Steve McI’s analysis:

“Keith’s Science Trick

““Keith’s Science Trick” is first used in May 1999 in Briffa and Osborn (Science 1999) and Jones et al (Rev Geophys 1999). It is nothing more than the deletion of data to hide the decline. (my emphasis)

“The deletion of adverse data to hide the decline was first reported at CA in 2005 here in connection with IPCC TAR spaghetti graph.

“In the wake of Climategate, prior incidents of hiding the decline were discussed, including recent analyses of Briffa and Osborn (Science 1999) e.g. here. Following is a graphic showing Keith’s Science Trick – the deletion of adverse data – a practice continued in subsequent spaghetti graphs, including those in IPCC TAR and IPCC AR4.”

The graphic below from Steve McI’s site shows the castrated decline. Clearly the tree-ring core identified as a warming trend and elected for trend-affirming surgery.

The Figure Legend is: “Figure 2. Annotated version of Briffa and Osborn Science 1999 Figure 1 – see recent posts for derivation.”

Nick claims “There is nothing about covering inconvenient decline, or decline at all. He is making the esoteric claim that at the end of the proxy period, where smoothing is difficult because of lack of knowledge of future values, he uses instrumental values for that. I don’t know if he really did, but if he did, it is a very reasonable thing to do.”

Step away from the train wreck. Normally your analysis is reasonable. Sometimes pedantic. In this case shameful.

Never, ever, trust Nick Stokes. He’s just an operative tool.

https://climateaudit.org/2014/10/01/sliming-by-stokes/

Frank, I think that the “Mike” of the infamous “Mike’s Nature Trick” wasn’t M. Mann, but some other nefarious “Mike” who also found it easy to put one over the peer reviewers and editors of Nature.

“probably by mistake”

Hahahahahaha!!! HAAAHAHAHAHAHAHAHA!!!

This is what Ian Jolliffe said:

https://climateaudit.org/2008/09/08/ian-jolliffe-comments-at-tamino/

I can’t believe you are still defending the Hockey Stick!

Nick can never admit that he might be wrong – look how he steadfastly defends unreliables despite all the evidence given to him.

“I have just read the M&M stuff criticizing MBH. A lot of it seems valid to me. At the very least MBH is a very sloppy piece of work – an opinion I have held for some time.”

E mail from Tom Wigley quoted in Climategate The Crutape Letters

Mann, by bloviance of his own inflated ego, says he never makes mistakes. Therefore “In MBH98 they used a non-centered mean, probably by mistake” cannot be right: Mann must have done it deliberately to achieve a desired result.

If there was any mistake, he had plenty of time to issue a correction…

Mr. Stokes: Others have debunked the content of this misleading post (M&M did not agree, citing to yourself is bad form, mate). I post to note the technique used (again) by Mr. Stokes to avoid ever addressing the takedown of this Pages misconduct. He picks a fight on a non issue (was it McKitrick or McIntyre? Both, I say, but what matter?) and follows with his citation to himself, where he invented the “agreement” that never was.

Mr. Stokes, what do you make of the article above? Need more time to find something else to deflect with?

Courtesy demands that activist scientists are asked about their more sensational papers. But they never respond to that courtesy with a reply. So their work must be replicated to be understood.

To save time there is a simple procedure to replicate their science. At every point in the creation of the model ask yourself, “What makes for the most sensational finding?” And incorporate that in the model.

Do not be distracted by physical evidence, historical plausibility or the laws of physics. Replicate their models by following their teleology.

For others dumb like me:

tel·e·ol·o·gy noun

Mr. M: Those Courtneys! Stretching vocabularies at comment #2!

“Those Courtneys!”

Both, excellent commenters here at WUWT! 🙂

Did this inspire the VW emissions cheating software designers?

It may have, but we can’t know for sure.

VW origins go back to the mid-1930’s, but the alleged cheating would occur following the tighter emission standards, much more recent. We can use old German tree ring chronologies (derived from furniture and cuckoo clock wood) to set a ‘pristine’ baseline for comparison, but a current chronology is unavailable because all recent wood has gone into Drax.

The whole process of reducing the dimensionality to one from a host of individual time series is just ignorant to address the question of climate change. Mann and company are flat out charlatans.

They don’t reduce to one. They reduce to about 5. That is the point of principal components analysis.

No shit Nick. Why even use PCA. What is the hockey stick graph of? The 1st PC. The question to ask is are the data generated by a stationary time series process? PCA analysis isn’t the right tool. This is especially true if you believe CO2 introduced the non stationarity.

“What is the hockey stick graph of? The 1st PC.”

No, it is not. It is of the reconstruction. You reduce to five dimensions, because that keeps most of what is meaningful and discards the noise. Then you put it all back together.

Showing the 1st PC just shows a component of a vector in one set of axes. Change the axes, and it will be different. And that is all McIntyre does, mostly. But he has slipped up and shown a reconstruction which is what Mann and everyone else shows. And wouldn’t you know? He gets just the same as they do.

“because that keeps most of what is meaningful and discards the noise”

And the grossly overweighted Bristlecones weren’t noise, of course.

Nick, how much of the reconstruction depends on the PC1? What PC gives the hockey stick? Is the hockey stick shown a function of age-modeling growth gone wrong?

The whole PCA effort is misguided, which you never address.

Why reduce the dimensionality of the data? It is an archaic exercise. You lose data in the process. The whole approach is a complete waste of time. You have individual core samples. No need to smear the data into a single series. Core sample by core sample, turn the data into temperature proxies. One can then ask the relevant questions about the time series properties of the individual series that are hypothesized to show a structural relationship to CO2 concentrations.

Think of the CRN series. Why would you ever aggregate them? The right approach is to deseasonalize and then test for stationarity series by series. There isn’t a single CRN series in CA that shows an increasing trend. My guess is that the overwhelming majority of CRN stations show no significant trend.

Climate science veered off into an unneeded statistical swamp. The question is why?

Principal components are numerical constructs. They have no discrete physical meaning. There’s no physical theory that will convert a proxy into a physical temperature.

The whole method is a fraud.

And “they used a non-centered mean, probably by mistake.” That’s rich, even for Nick.

Pat, I am very familiar with both PCA and Factor Analysis. The way PCA is used in climate science makes no sense. Factor analysis was used widely in the finance literature to understand the number of independent factors needed to explain the covariance matrix of stock returns. Of course, there is a complete lack of theory to map calculated factors into any economic variables to understand the covariance structure. Factor analysis worked great in developing index strategies using a subset of companies in an index.

Back in the day when high dimensionality was a problem in computer use, maybe PCA made sense. I’ll never forget when I first started to write Fortran code to deal with financial data only available on magnetic tapes. Without thinking much about it, I wrote a program that would dump the data for 12k companies, with 120 quarters of financial data, and about 360 data points per quarter into a structure I could then manipulate. In the mid-80s, this wasn’t possible on the UVA mainframe.

If you have 50 core samples from an area, there is no reason to smear them into a single series.

Interesting story, Nelson. I’ve used PCA once, to recover individual spectra from a mixture. One needs to combine PCs as indicated by a physical theory to extract something meaningful.

In climate science (so-called) they assign meaning to PC1 by fiat.

The theory about combining tree-ring cores is that they’re purportedly mostly random noise with a weak signal. Combining them cancels the noise and enlarges the signal. That’s the idea, and it’s a crock.

More hockey stick cheating. It never stops, despite being continuously and easily caught out as here, yet again.

A true story. I deconstructed Marcott’s 2013 Science hockeystick over at Judith’s at the time. Was a simple comparison of his PhD thesis to the Science paper supposedly based on his thesis, to prove indisputably that Marcott committed gross academic misconduct in the Science paper. (Repeated evidence with slight rewrites in essay ‘A High Stick Foul’ in ebook Blowing Smoke.) I offered the draft book essay two months pre-publication to the then chief editor of Science, Marsha McNutt, suggesting immediate retraction. Her assistant acknowledged receipt. Then nothing. These people think they can continue such dishonest behavior with impunity, because nothing has ever happened. Am personally committed to changing things so there ARE adverse consequences.

Rud, I like your use of ‘easily’. Best of luck with the difficult bit that comes next.

How may people help you in making sure there are adverse consequences to the scientific fraudsters like Marcott, Mann, McNutt & etc.?

Can someone decrypt this very important post and turn it into comprehensible English for us schmucks?

That’s my thinking also.

Or especially, why is it necessary to have ‘an algorithm‘ when you’re trying to show the world how the width of tree rings has changed over time?

For me, the very fact that ‘an algorithm’ is required to elucidate something soooo simple means somebody is lying.

iow: Algorithms = Data Adjustment

period

When I used to hear people talk about “algorithms” when discussing climate models, I thought they were referring to “Al Gore-isms”

You know, boiling oceans and all that.

I’ve since concluded that climate “science” does in fact make extensive use of “Al Gore-isms”.

algorithm

ăl′gə-rĭᴛʜ″əm

noun

1.A finite set of unambiguous instructions that, given some set of initial conditions, can be performed in a prescribed sequence to achieve a certain goal and that has a recognizable set of end conditions.

2.An erroneous form of algorism.

3.a precise rule (or set of rules) specifying how to solve some problem; a set of procedures guaranteed to find the solution to a problem.

I like #2, but I think #1 is more appropriate. So, no, algorithms do not automatically equal data adjustment.

It’s the passage from tree rings to temps that needs an algorithm.

Sure, but you are not a shmuck. Look again at this post’s illustrated iterations of the ‘data’ algorithm used for processing the paleo ‘data’. The more you iterate it, the stronger the hockey stick becomes out for many decades. Simple explanation: proof that the algorithm rather than the paleodata produced the hockey stick. No different than Mann 1999. Just a different method.

Rud, what is iterations, what is data algorithm and what is paleo data.

@Bob iterations – doing the same calculation (+, –, *, / ) over and over again.

data algorithm – the precise functional way that you do a particular data (number) calculation (+, –, *, / ) e.g. A + B = C

paleo data – paleo (old or past) data (numbers) e.g. the measured width of tree rings in old wood from the historic years 1000 thru 1200

Philip’s answers are more enlightening than mine

Thank you.

“paleo data – paleo (old or past) data (numbers) e.g. the measured width of tree rings in old wood from the historic years 1000 thru 1200”

I’m still wondering how anyone could conclude that tree rings are a proxy for temperature. As a forester for 50 years I’ve looked at a lot of tree rings on stumps and say for certain that the size of the rings had little to do with the temperature- at least here in New England. Maybe to some degree in some very dry areas but I doubt the correlation is a good one- at least not to draw such important conclusions.

Eversource has been doing a lot of veggie management in my neck of the woods. Based on a cursory view of any random stump, I seriously doubt one could find a meaningful correlation of the ring widths along any random radius, let alone those obtained from different trees.

Dendrochronology may be useful in determining the relative ages of the lumber in King Tut’s tomb, but it’s useless as a temperature proxy.

right, it’s very good for dating- terrible for temperature proxy- there have been many discussions of this on WUWT- apparently unread by the climatistas 🙂

The very trees that are tested are anomalies … lived long for a reason unknown to the tester, in a forest of which few survived to be tested, in terrain inconvenient to human firewood collection, in soil of unknown chemistry. Testing of dozens of trees from the same location would be required to establish a tree ring width trend and usually we don’t have that. The growing season day-to-night temperature range in tree growth areas probably means that 10% of temperature range and 30% of the rainfall range is going to result in inconsistent ring widths because it is WHEN those occur during growing season that is just as important.

Net result, tree ring proxy temperatures have error bars larger than the climate change numbers we’re trying to assess, and is a great environment for performing meaningless averages and trendlines and declarations of statistical significance that don’t really exist.

So why do so few “climate scientists” understand this problem? It seems like a big deal to me. No doubt Mann and others have heard these complaints- I wonder how they defend themselves?

Confirmation bias? If it provides the “right” answers, probably doesn’t pay to ask too many difficult questions….

that’s why I have more respect for the engineering profession than science (replication crisis)- if an engineer builds a bridge and it fails the engineer is in trouble- engineers had better ask tough questions before building anything

Why do you never see variance/standard deviation numbers for anything in climate science? Answer – it would mess up the assumption that climate scientists know what they are doing in claiming accurate numbers. Someone needs to convince them that every average has a variance and in climate science most have covariance also due to correlation.

How many times have you seen Simpson’s paradox mentioned?

Here is a paper that discusses the problem.

https://agupubs.onlinelibrary.wiley.com/doi/full/10.1002/jgra.50461

Here is a statement from the paper.

“As mentioned above, the situation with LEO O2 temperature looks like a strong case of Simpson’s paradox, when conclusions drawn from part of the data change sign if the complete data set is analyzed.”

Think about temperature data and what may occur with various trend lengths. It is one reason for desiring all long trends. Even though long trends invariably are broken into smaller periods due to calibration, shelter changes, probe changes, etc.

Also, when one looks at various stratifications that disappear with averages of averages of averages, one should investigate the possible paradoxes and one must wonder where statistics is leading us. Examples are decreasing temperatures throughout fall and winter versus rising temperatures in spring and summer creating unique stratifications. These can cause problems, especially when it is necessary to unscientifically tease a change of one thousandth of a degree from data measured to no better than one tenth of a degree.

As I recall, the basic speculation is that if your tree is in a near-treeline location (e.g. just warm enough to survive the winter), the major constraint to growth is the ambient temperature. Warm periods yield more growth, cold periods less. Of course, any major changes to the tree’s circumstances could alter that correlation. The climate warms, a herd of reindeer fertilize the site, a volcano blankets the forest in ash, torrential rain, major subsidence, the tree gets bigger and has a greater ability to withstand cold, collect water, get sunlight, etc.

“the major constraint to growth is the ambient temperature”

So the hockey stick is based on extremely flimsy evidence. That’s a poor excuse for “science”.

Bob, I put a reply to your questions in for review. At the time I did not know how to attach it directly. Perhaps the moderator will do so. Good questions; you have to break this stuff down into pieces.

That, and the point that @detgodehab made: delete 25 years from the source data series, run it through the same algorithms, and they still produce hockey sticks.

McIntyre and @detgodehab also deleted 50 years and got the same hockeystick endings.

Proving that the methodology produced the hockey sticks, not the source data.

That’s quite simple, Crisp. The whole thing boils down to three letters.

WTF!

Mr. Crisp: The math is way over my head, but I’ve read at Climate Audit and here for over ten years, and here’s my take- Mr. Mann’s 7th grade science fair project was his theory that green apples are the sweetest. He chose three red, four red and yellow, and three green. He took a bite out of each one, and recorded that the three red and four mixed apples were…..non-conforming samples! The cores from those (they really tasted good!) were buried in the backyard. He reported his results to the fair (the three greens) as proof of the theory, all three tree stumps–I mean, green apples were the sweetest! What we see in this article above is that Pages simply took in tree ring samples that spiked up; the rest were non-conforming. Maybe Mr. Stokes can show us where Mr. Mann disclosed all the non-conforming samples in that seminal work.

Here is a very rough attempt to explain what it is saying.

Proxy measurements are taken. In this case, measurements of tree ring width. These are converted into temperatures by applying some arithmetic to them. This is known as an algorithm.

In the case of the original Hockey Stick something called PCA, or Principal Components Analysis, was used. To explain this, you have some quantity of interest. For instance, age at death of a set of people. You also have some variables which you suspect will account for it or be a causal factor. Years smoking. Weight. Years of exposure to air pollution. Occupation.

PCA tries to get at the factors (which will mostly not be just one of the variables) which account for most of the variation in the thing that interests you.

When you do PCA, you have to standardize the variables. If you don’t, high scores will produce a mathematical artifact and give some of them an excessively high weighting. So you do this by ‘centering’ them, which is a treatment which expresses them as scores of difference from the mean. A bit like what is done with temperatures in climate.

What Mann et al did was to ‘center’ the scores by something other than the mean. Who knows why. No explanation was ever given, and it became the subject of controversy. Predictably the warmists defended it and the skeptics attacked it. The skeptics were right in terms of statistics, and Ian Jolliffe, who Tamino describes as the world authority on PCA, said so very clearly.
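Why the centering choice matters can be shown with a toy calculation. This is a sketch, not Mann’s code: it assumes, as McIntyre and McKitrick argued, that the series were centered on the mean of a closing “calibration” window rather than on the mean of the whole record. The two ten-point series, the two-point window and all names are invented. PCA favours whatever has the largest sum of squares after centering, so the sketch simply compares each series’ share of the total sum of squares under the two centering rules.

```python
def mean(values):
    return sum(values) / len(values)

def sum_of_squares_about(series, centre):
    """Sum of squared deviations of a series from a chosen centre value."""
    return sum((v - centre) ** 2 for v in series)

CAL = 2  # hypothetical calibration window: the last 2 points

# Two made-up series: one oscillates around zero, one has a closing "blade".
flat = [1, -1, 1, -1, 1, -1, 1, -1, 1, -1]
blade = [0, 0, 0, 0, 0, 0, 0, 0, 2, 2]

def blade_share(centre_fn):
    """Fraction of the total sum of squares contributed by the blade series."""
    ss_flat = sum_of_squares_about(flat, centre_fn(flat))
    ss_blade = sum_of_squares_about(blade, centre_fn(blade))
    return ss_blade / (ss_flat + ss_blade)

share_full = blade_share(mean)                        # centre on whole-record mean
share_short = blade_share(lambda s: mean(s[-CAL:]))   # centre on closing window only

print(f"blade share with full centering:  {share_full:.0%}")
print(f"blade share with short centering: {share_short:.0%}")
```

Under ordinary centering the blade series carries about 39% of the total sum of squares; centered on the closing window it carries about 76%. Any series whose closing mean departs from its long-term mean is inflated this way, which is how such a step can steer the leading component toward a hockey-stick shape.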

Steve McIntyre entered the dispute by showing that if you applied Mann’s method to random noise, you got a hockey stick. This showed the Hockey Stick was an artifact of the method of processing the data, not in the data itself.

The present post says the same thing applies to the Pages reconstruction of global temperatures. The decisive point it makes is that if you knock off the last 50 years and apply the algorithm to the previous readings up to 50 years ago, you still get a final hockey stick, which we know did not exist.

It’s impossible to tell at this point whether this is correct. Steve has a strong track record. The same thing, or something similar, did happen with the first Hockey Stick. We don’t know what the algorithm is that is being used, how it was reconstructed, or what the proof is that it’s the one Pages used.

If you had to bet at this point, Steve is a good bet. But we have to wait for the rest of the posts to explain more, to be sure.

Parsing this into one paragraph that 80% of the dumb population could understand would be better.

Torture can be used to extract a false confession. The data was tortured. The torture took the form of many repeats of “ex post screening”, which in this case means repeatedly manipulating the data nearer to the result you want. M&M’s quote in Chris Hanley’s comment puts it well: “While screening on the basis of correlation to temperature superficially seems to make sense, the error is easily understood if you hypothesize a pharmaceutical scientist using ex post screening: imagine a drug study which only reported results for patients who got better”. Only in this case, it wasn’t that simple, it took a lot of torture.
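The screening effect described above can be reproduced on pure noise. The following is a rough sketch, not the PAGES2K procedure: the red-noise model, the seed, the 50-step closing window, the 0.3 correlation threshold and the straight-line “instrumental” target are all assumptions picked for illustration.

```python
import random

def ar1_series(rng, length, phi=0.9):
    """Red noise: each value is phi times the previous one plus white noise."""
    values, x = [], 0.0
    for _ in range(length):
        x = phi * x + rng.gauss(0.0, 1.0)
        values.append(x)
    return values

def correlation(a, b):
    """Pearson correlation of two equal-length sequences."""
    n = len(a)
    a_bar, b_bar = sum(a) / n, sum(b) / n
    sab = sum((p - a_bar) * (q - b_bar) for p, q in zip(a, b))
    saa = sum((p - a_bar) ** 2 for p in a)
    sbb = sum((q - b_bar) ** 2 for q in b)
    return sab / (saa * sbb) ** 0.5

def ols_slope(y):
    """Least-squares slope of y against its index 0, 1, 2, ..."""
    n = len(y)
    t_bar, y_bar = (n - 1) / 2, sum(y) / n
    sty = sum((t - t_bar) * (v - y_bar) for t, v in enumerate(y))
    stt = sum((t - t_bar) ** 2 for t in range(n))
    return sty / stt

def composite(series_list):
    """Simple average across series at each time step."""
    return [sum(col) / len(col) for col in zip(*series_list)]

rng = random.Random(2023)              # arbitrary seed, for repeatability
LENGTH, WINDOW, THRESHOLD = 300, 50, 0.3
target = list(range(WINDOW))           # stand-in rising "instrumental" record

noise = [ar1_series(rng, LENGTH) for _ in range(500)]
# Ex post screening: keep only series that correlate with the target.
kept = [s for s in noise if correlation(s[-WINDOW:], target) > THRESHOLD]

all_mean = composite(noise)
kept_mean = composite(kept)
print(f"kept {len(kept)} of {len(noise)} pure-noise series")
print(f"closing slope, all series:  {ols_slope(all_mean[-WINDOW:]):+.4f}")
print(f"closing slope, kept series: {ols_slope(kept_mean[-WINDOW:]):+.4f}")
```

The average of the kept series has to rise in the closing window, because every kept series was chosen for exactly that property, even though none of the input contains any temperature signal. This is the drug-study analogy in numbers: reporting only the patients who got better.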

The tree ring data represents the supposed “real” temperature over some time period. The scientists attempt to come up with a “curve fitting polynomial” – a function which, when plotted, “fits” the real data or close enough statistically speaking. This is called “the model”. The reasoning is that if the polynomial fits well enough (called convergence in the article) then you can use that function to calculate what the temps will be in any year in the future (years not covered by the real data). This is called extrapolation. This discussion concerns Mann’s crazy methodologies to produce a model that fits the real data but in the end always goes UP when extrapolated out far enough. Or something to that effect.

As I like to snark: It’s a likelihood of a probability of a likelihood of a probability…

I wish I were surprised by the deceit of the fearmongers.

Experimental science relies upon repeatability. A single experimental result is meaningless, series or individual.

Failure to share the raw data and methods used is a clear sign it’s not science being presented but activism.

“Failure to share the raw data and methods used”

This is just lazy boilerplate. Of course they share the raw data. NOAA maintains an extensive database. Every one of the many graphs in this post is showing raw data from such a database.

And the whole scientific literature that has built up is there to explain the methods.

Nick, A.W. Montford’s “The Hockey Stick Illusion” will dispel any notion that “The Movement” (as M. Mann described his cabal of climate conspirators) paid little conscience to contributing to “the whole scientific literature that has built up is there to explain the methods”

Good book! Highly Recommended.

Sure, Racehorse, sure.

“This is just lazy boilerplate. Of course they share the raw data. NOAA maintains an extensive database. Every one of the many graphs in this post is showing raw data from such a database.

And the whole scientific literature that has built up is there to explain the methods.”

Riight, which is why McIntyre has extensively documented repeated failures to archive data and methods, and refusals from researchers and research institutions to provide such things.

“Riight, which is why McIntyre has extensively documented repeated failures”

Just nonsense. This very article is based on archived data from a systematic database.

What McIntyre complained about was archiving of ice core results. He picked out Lonnie Thompson in particular. But in fact Thompson extensively archived his results.

It’s Nick that practices lazy boilerplate as I saw that same response from him in several other places. 🙂

So show us the “scientific literature” proving that tree rings are a good proxy for temperature.

Bob, paleo data is information such as the collected width of tree rings over time. An algorithm is a computer program. An iteration is an instance, for example you use your algorithm to calculate the average width of your tree rings. Your program compares them to something like temperature. Each time you run your program is an iteration. If you make your program calculate using a variable that changes for each iteration, your calculation might help you understand the interaction of the variables.

Without changing a variable, how do “reiterations” result in an amplification of a wrong result? Also, how do McIntyre and @detgodehab confirm that the “reverse engineered” algorithm they came up with was the same one used in the original Asian study?

It’s an interesting post. Thanks to McIntyre and Watts for still working on this.

Tree rings can NOT be a proxy for temperature. They are a very rough proxy for soil moisture, the health of the tree, the degree of competition with nearby trees, the age of the tree, etc.

I know nothing about ice core data as a proxy for temperature, but I do know about tree rings after 50 years as a forester.

Seems like beating a dead horse unless it is being claimed that it was out and out fraud and not just bad science.

There’s a good video everyone should watch, google it up yourself, but the gist is “CIA Officer teaches how to detect if someone is lying.” The sum of the video is, if someone is accused of a crime, and doesn’t straight up deny it, but instead tells you how they’re a good person … this is a pretty good tell to know they’re lying.

Now use that information to examine the statements in the messages, especially “Considerable care was exercised in the approach …”. This is a straight up tell, that the author knows there’s misleading data analysis going on.

In the absolute very best tradition of decision based evidence making.

Lysenko still working hard out there

Lies, damned lies and paleoclimatology.

Michael Mann’s defining contribution to ‘Climate Science’ is the Hockey Stick graph.

That simple truth tells the world all it needs to know about how contrived Climate Science is. Climate science is simply the corruption of Climate and Science. Many of the so-called Climate Scientists and its advocates have now adopted the more public persona of Climate Alarmism. It does nothing other than reveal them and show the scale of their malfeasance.

This is despite the data and the Hockey Stick graph methodology being scientifically dismissed and described as simply junk, and despite the grafting of actual data onto proxy data, which was done to introduce the uptick shape rather than reveal the series progressing downward. Despite these revelations of incompetence Mann is inducted into The NAS?

Go figure, as you guys say over there.

A few years ago, when I started studying the climate issue, I contacted a few dendrochronologists at major universities. I asked them about this supposed theory that tree rings could be temperature proxies. I got no replies, except that one suggested a textbook on the subject. I ordered the book and found nothing in it about tree rings being temperature proxies, so I can only wonder how this theory became a key part of “climate science”. As a forester for 50 years who has looked at a lot of tree rings on stumps, I saw no evidence that the rings had much to do with temperature. There are so many factors contributing to the size of the rings, and temperature is probably one, but to draw important conclusions such as the hockey stick seems crazy to me. I’m sure of one thing: if the weather is very hot and the soil is dry, the rings will be small. The most important factor is clearly soil moisture.

As an engineer and a woodsman born and bred on a wooded smallholding back in the day, I agree with you.

“Despite these revelations of incompetence Mann is inducted into The NAS?”

It just goes to show how corrupt our professional organizations have become over the human-caused climate change issue.

It’s an edge effect which means either a valid method in incompetent hands or simply a flawed method. The fact that truncating the sequence by 50 years produces the same is the proof of it.

From the article: “so it was very clear that the big blade being produced by the PAGES2K Asia chronologies was bogus and some sort of artefact of their methodology and NOT due to climate. This doesn’t disprove global warming.”

But it does disprove the “evidence” for unprecedented warming.

The only “evidence” the climate alarmists have of anything unusual going on with the Earth’s temperatures is the bogus, bastardized Hockey Stick chart. Without that, they have Nothing to talk about.

Thank you for all your wonderful, essential work on this subject over the years. Real Science is still alive and kicking! And is kicking climate alarmist butt, in this case.

I haven’t seen a paleo recon yet, which shows anomalous 20thC warming, that wasn’t laced with shenanigans.

The smoking gun against the Hockey Stick is the 100% inability to reproduce it blindly. There is 0.00% chance that 100 unbiased researchers would ever have developed “Mike’s Nature Trick to Hide the Decline.” Also, the Hockey Stick is defined by the altering of the construction method. In 1902 the Instrumental data is added and the trend immediately changes. There is absolutely nothing in the quantum mechanics or trend in CO2 that would justify a “dog leg” in the chart. If Climate Science was a true science they would look for what could have caused a dramatic change in 1902, because CO2’s quantum mechanics didn’t change, nor did the trend in Atmospheric CO2. Temperature changed dramatically…but nothing about CO2 did. That is a smoking gun, and no one even bothers to discuss it. No group of unbiased scientists would ever reproduce the hockeystick, and if they did, it would rule out CO2 as the cause. CO2 shows a log decay in W/M^2, so it would never cause an abrupt upward shift in temperature; it would actually work to slow the increase. That is defined in the quantum mechanics, and those haven’t changed.

If climate science was a real science there would be a 100% Consensus that CO2 can’t cause a dog leg in the temperature chart. The quantum mechanics rules out CO2 as the cause of the Hockeystick. The Physics of the CO2 molecule and trend in atmospheric CO2 simply do not support a dog leg in the Hockeystick. Something else must be causing that rapid warming.

The Greenhouse Gas theory states that Temperature is a function of W/M^2. From that they claim that Temperature is a function of CO2, through the GHG Effect. That means that Temperature is a function of CO2, but in reality Temperature is a function of Log CO2, which relates CO2 to W/M^2. Atmospheric CO2 increases at a near linear rate, nowhere near the rate needed to compensate for the log decay in W/M^2. CO2 would need to increase at a log rate to compensate for the log decay in W/M^2. That is why a linear increase in CO2 won’t cause a linear increase in temperatures…yet that is what we have. The linear increase in temperatures rules out CO2 as the cause of the temperature change. The dog leg in 1902 or the hockeystick simply demonstrates a change in construction, not an actual change in global temperatures. Nothing in the trend of CO2 or quantum mechanics of CO2 will ever support the rapid change in temperatures highlighted in the Hockeystick. Any real science would know that and there would be a consensus that the Hockeystick is a clear fraud.
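For readers who want to see the logarithmic relationship in numbers, here is a small sketch using the widely quoted simplified expression for CO2 forcing, 5.35 × ln(C/C0) W/m^2 (Myhre et al., 1998). The 280 ppm baseline and the sample concentrations are chosen only for illustration, and the function name is made up.

```python
import math

def co2_forcing(c_ppm, c0_ppm=280.0):
    """Simplified forcing change in W/m^2 relative to a baseline concentration."""
    return 5.35 * math.log(c_ppm / c0_ppm)

step_1 = co2_forcing(380) - co2_forcing(280)   # first extra 100 ppm
step_2 = co2_forcing(480) - co2_forcing(380)   # next extra 100 ppm
doubling = co2_forcing(560)                    # 280 ppm -> 560 ppm

print(f"280 -> 380 ppm: {step_1:.2f} W/m^2")
print(f"380 -> 480 ppm: {step_2:.2f} W/m^2")
print(f"doubling from 280 ppm: {doubling:.2f} W/m^2")
```

Each additional 100 ppm adds less forcing than the one before (about 1.63 and then 1.25 W/m^2 here), and a doubling from the baseline comes to about 3.71 W/m^2.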

Story Tip: We are making this way way way too complicated. The Hockeystick is created not by a change in global temperatures but by the inclusion of instrumental data with the Proxy Data in 1902, and then proxy data is dropped in 1980. The chart dramatically alters exactly at those dates. There is absolutely nothing magical about 1902 and 1980, and instrumental data goes back to 1650 with Central England. To prove the Hockeystick is a complete fraud, simply alter the dates at which the Instrumental data is included in the reconstruction. The Hockeystick will follow those dates. If you add instrumental data in 1880, the warming will start in 1880; if you add the instrumental data in 1920, the warming will start in 1920. If, however, you control for the UHI and Water Vapor and only use instrumental data specifically chosen to isolate the impact of CO2 on temperatures (cold and dry deserts), you will get no warming at all since 1902. The hockey stick simply represents the wishful thinking of an activist masquerading as a scientist who wanted a graphic that supported his political agenda.