11:19 AM 08/03/2017

A new study using an expensive climate supercomputer to predict the risk of record-breaking rainfall in southeast England is no better than “using a piece of paper,” according to critics.

“The Met Office’s model-based rainfall forecasts have not stood up to empirical tests and do not seem to give better advice than observational records,” Dr. David Whitehouse argued in a video put together by the Global Warming Policy Forum.

Whitehouse, a former BBC science editor, criticized a July 2017 Met Office study that claimed a one-in-three chance of parts of England and Wales seeing record rainfall each winter, largely due to man-made climate change.

Using its $127 million supercomputer, the Met Office found in “south east England there is a 7 percent chance of exceeding the current rainfall record in at least one month in any given winter” and “a 34 percent chance of breaking a regional record somewhere each winter” when other parts of Britain were considered.

“We have used the new Met Office supercomputer to run many simulations of the climate, using a global climate model,” Met Office scientist Vikki Thompson said of the study.

The Met Office commissioned the study in response to a series of devastating floods that ravaged Britain during the 2013-2014 winter. Heavy winter rains caused $1.3 billion in damage in the Thames River Valley.

Scientists said supercomputer modeling could have predicted the flooding. Thompson said the supercomputer “simulations provided one hundred times more data than is available from observed records.”

But Whitehouse said the supercomputer’s models did “not give any better information than what could be obtained using a piece of paper.”

Using observational records, Whitehouse argued the 7 percent “chance of a month between October and March exceeding the record for that month in any year is equivalent to a new record being set every 86 months.”

“New monthly records were set twice in the 216 October-March months between 1980 and 2015,” he said. “Therefore the ‘risk’ of a new record for monthly rainfall is 5.5% per year, according to the record.”
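The conversions in the two quotes above are simple ratios, and a short sketch can reproduce them. It assumes, as the quote states, that a "winter" is the six months October to March, and it additionally assumes at most one new monthly record is counted per winter; the function names are illustrative only. Note that 2 records in 36 winters is about 5.56%, which the quote gives as 5.5%.

```python
# Assumption: a "winter" is the six months October-March, as in the quote,
# and at most one new monthly record is counted per winter.
WINTER_MONTHS = 6

def months_between_records(annual_chance: float) -> float:
    """Mean number of winter months between new records, given the
    chance per winter that some month breaks its record."""
    return WINTER_MONTHS / annual_chance

def observed_annual_rate(records: int, winter_months: int) -> float:
    """Observed fraction of winters in which a new monthly record was set."""
    winters = winter_months // WINTER_MONTHS  # 216 months -> 36 winters
    return records / winters

print(round(months_between_records(0.07)))   # 86
print(f"{observed_annual_rate(2, 216):.1%}")  # 5.6%
```

A 7% chance per winter means one record every 1/0.07, or about 14.3, winters on average, and multiplying by six winter months gives the quoted 86 months.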

“Between 1944 and 1979, there were three new record monthly rainfalls – an 8.7 per cent chance of any month in a year exceeding the existing record,” Whitehouse continued, adding that “between 1908 and 1943, there were 4 record events – a risk of 14.5%.”

“The risk of monthly rainfall exceeding the monthly record in the Southeast of England has not risen, contrary to many claims,” he argued based on the observational data, adding the “Met Office computer models do not give any more reliable insight than the historical data.”


Follow Michael on Facebook and Twitter

Content created by The Daily Caller News Foundation is available without charge to any eligible news publisher that can provide a large audience. For licensing opportunities of our original content, please contact licensing@dailycallernewsfoundation.org.

Can it play tic tac toe?

Just put in the answer you want, wait and wallah it will give you the answer you want via 10 billion phony calculations. Isn’t the state of science just grand!

“wallah” ?? You mean “voila”…

If you program a super-duper computer that costs 130 million USD with the politically established UNFCCC view, the result will still be the politically established UNFCCC view.

Shirley you jest, Grant. It’s “Viola.”

Do you want to play a game? (WarGames)

It’s entertaining to watch postmodern neo-Marxists, who believe nothing is true, claim that their policy-based science (actually a part of the new Marxism) is the truth.

Pong or Missile Command?

Garbage in, garbage out. 127 million dollars’ worth. 🙂

Roger

Why people believe more computer power will give you better answers is a mystery.

“Why people believe more computer power will give you better answers is a mystery.”

No mystery. IF YOU KNOW WHAT YOU ARE DOING, there are situations where you can use computing power to overpower problems that are otherwise intractable. For example, in the early days of military satellites, the USAF used programs based on solving the equations for an ellipse to predict satellite positions. But as their mission requirements became more demanding, the equations got out of hand. They switched to step-by-step numerical integration. The latter requires more computing power, but it scales far more easily.

And the numerical integration software was easily validated. If it predicted that a particular satellite would rise above the horizon at Kodiak Island at 0534Z at an azimuth of 343 degrees, successful acquisition of the satellite at 0534Z went a long way toward validating the software. (In practice, they did run a lot of additional tests.)
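The contrast between a closed-form ellipse and step-by-step numerical integration can be shown with a minimal sketch. This is not the USAF software: the velocity Verlet integrator, the normalized units (GM = 1) and the circular test orbit are all assumptions, chosen so that the closed-form answer is known exactly. Checking that the integrated satellite comes back to its starting point after one period is a toy version of the acquisition test described above.

```python
import math

GM = 1.0  # gravitational parameter in normalized units (assumption)

def acceleration(x: float, y: float) -> tuple[float, float]:
    """Inverse-square gravitational acceleration toward the origin."""
    r3 = (x * x + y * y) ** 1.5
    return -GM * x / r3, -GM * y / r3

def integrate(x: float, y: float, vx: float, vy: float,
              dt: float, steps: int) -> tuple[float, float, float, float]:
    """Advance the orbit by `steps` velocity Verlet steps of size `dt`."""
    ax, ay = acceleration(x, y)
    for _ in range(steps):
        vx += 0.5 * dt * ax          # half-step velocity update
        vy += 0.5 * dt * ay
        x += dt * vx                 # full-step position update
        y += dt * vy
        ax, ay = acceleration(x, y)  # force at the new position
        vx += 0.5 * dt * ax          # second half-step velocity update
        vy += 0.5 * dt * ay
    return x, y, vx, vy

# A circular orbit of radius 1 has speed sqrt(GM / r) = 1 and period 2*pi,
# so the closed-form answer after one period is the starting point (1, 0).
STEPS = 10_000
PERIOD = 2 * math.pi
x, y, vx, vy = integrate(1.0, 0.0, 0.0, 1.0, PERIOD / STEPS, STEPS)
error = math.hypot(x - 1.0, y)
print(f"position error after one orbit: {error:.1e}")
```

Each step only needs the force at the current position, so adding perturbations (drag, an oblate Earth) means changing `acceleration` rather than re-deriving the orbit equations, which is the scaling advantage the comment describes.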

The problem in climate modeling is that there is no evidence whatsoever that the modelers know what they are doing. The models are basically unvalidated. It’s all faith based.

Getting an answer quicker doesn’t make it a better answer.

The leading climate models did not agree with each other 30 years ago and have not converged. This is because the modeling groups use different parameterizations for clouds and water vapour, the dominant greenhouse gas.

There is a link between solar activity, clouds and climate that the models ignore. (See Nir Shaviv’s lectures on Youtube and his published papers.) The IPCC rightly discounts TSI (solar irradiance) but ignores

So, if the models omit the Sun, we should expect them to fail to reflect reality.

Why is this so difficult for everyone?

There is no known way to uniquely solve a system of partial differential equations. It’s not a matter of computational power; the solution doesn’t exist.

You can throw all the gigaflops and teraflops at it you want; the problem can’t be solved using existing mathematics.

You could reduce the grid size, but obviously if your model is fundamentally flawed you will just get more of the same flawed output, possibly just quicker. Interesting that a ‘scientist’ doesn’t know that a model does not produce data, but then she does work for the Met Office.

I think you actually have to write a computer program to realize just how DUMB computers are. People who have never programmed a computer just don’t realize that it will do just what you tell it to do, nothing more, nothing less. It can’t think for itself, it can’t make independent judgements, it can only spew out what you told it to spew out.

No better than just following historical averages? For a climate computer model, that would be very good performance./sarc

Can it sing “Daisy, Daisy” like HAL did in the movie “2001: A Space Odyssey”?

No. Unless you load an MP3 of the song. Garbage needs to be programmed in before it can be output.

HAL was not connected to the interweb back then. Now there’s no need for programming: just google it, it’s all there! Or ask Siri! LOL

As the Hon. C. Monckton might say, ‘This is just argument from authority (of a bloody great big computer)!’

The producers of CSI: Miami rent it to play their “scientific” laboratory animations and therefore find its accuracy very useful.

They’re using two different definitions of data in one statement. That’s wrong to the point of dishonesty.

Measurements can provide data in the computing sense, but simulations can’t produce data in the climate science sense. That’s “cloud castling.” You can’t use assumptions as evidence for future assumptions.

Well, not honestly.

I actually think many scientists and the horde of pseudo-scientists that infest Climate Science do not understand the difference. It’s not dishonesty so much as plain ignorance and a belief in the conclusion, which means the “right” answer must mean the “right” assumptions.

The main weakness in rainfall forecasting lies in the parameters used in the model. In the majority of cases, these parameters rarely relate to rainfall. Because of this, even to date the long-range rainfall forecasts in India, both by IMD and by other scientific institutes and private forecasters, remain weak. When I was in IMD/Pune, we used to prepare the forecast based on the parameters defined by the then British IMD chief. The best regression parameters were used in the regression equation. Most of the computations were made with a very cumbersome hand-driven number meter, not a calculator. At that time IMD had no computer and all the work was carried out with manual calculations [thus no data manipulation, as these were carried out by lower-rank assistants]. Now sophisticated computers are used for forecasting in India. The forecasts rarely predict rainfall above 110% or below 90% of average rainfall; the averages vary among institutions, as they use different data sets.

We used to have a forecaster [daily weather] who gave very accurate predictions based on ground-based data maps. With sophisticated computers and satellite data, forecasting has not improved much, except for tracking the movement of cyclones at sea.

Dr. S. Jeevananda Reddy

In my book “Dry-land Agriculture in India: An Agrometeorological and Agroclimatological Perspective”, BS Publications, Hyderabad, India, 2002, 429p, on page 126:

“It appears that sometimes low tech is more powerful than high tech. The 1998 Hurricane Georges in Central America can be cited as an example, where it was proved that human experience is more vital than sophisticated computer estimates using ultra-modern data sets. Hurricane Georges raged through the Gulf of Mexico and lumbered toward New Orleans/USA after ravaging the Caribbean and Florida/USA. There, local television meteorologists stood in front of computerized screens and grimly echoed the National Hurricane Center Official Forecast that New Orleans would be the hurricane’s next ground zero. But on WWL-TV, an 80-year-old weather forecaster gripped a black marker pen, pointed to his plastic weather board and calmly assured viewers that the hurricane would veer off toward Biloxi, Miss. As usual, Nash Charles Roberts Jr., an 80-year-old private meteorological consultant, was right. The India Meteorological Department also used to have one such person at its central forecasting centre in Pune, namely George. But, unfortunately, such people never get any recognition, while those who work on sophisticated computers get all the attention. In situ knowledge and thumb rules are more important than sophisticated technology in short-term weather forecasts under monsoon conditions. They are vital for accurate forecasts! Yet who cares!”

When I was a scientist at ICRISAT, other scientists used to wait early in the morning for my bus to arrive to find out the rainfall for the day. For Hyderabad, the rule of thumb is that if there is a low-pressure system around Kolkata/West Bengal, Hyderabad in AP will be dry. Based on the morning weather map, I used to tell them, and the forecast rarely failed.

Dr. S. Jeevananda Reddy

I was in New Orleans for Hurricane Andrew and when Hurricane Gilbert entered the Gulf of Mexico.

Bob Breck accurately forecast that Andrew would jog west, missing New Orleans, but pummel the bayous to our west, including Baton Rouge.

Bob Breck also accurately forecast that Gilbert would hammer the Yucatan.

Nor was Bob Breck shy about asking Professor Bill Gray for Gray’s opinion regarding approaching storms and conditions.

It appears you are cherry picking subjective views regarding selected events and then stating that one event as if it is universal or pervasive.

There are many meteorologists who combine experience, history and technology to develop quality forecasts; many visit this website, comment here or even run the website.

Nevertheless, when a storm with the power of Katrina, Camille, Gilbert or Andrew enters or develops in the Gulf, everyone prepares for a major storm. Only fools or, as is common around the Gulf, Yankees and West Coast visitors ignore the danger.

Hurricanes used to visit the East Coast regularly; there are cities full of people who treat hurricanes as trivial events. Since the 1960s, I’ve heard experienced meteorologists trying to warn East Coast residents of the danger.

Conflating your poor opinion regarding television meteorologists as applying to all professional broadcast meteorologists is improper.

ATheok — I doubt you understand what I presented!

At the time of the hurricane, I was in the USA and saw this in media reports. The TV meteorologists presented what the official report said and did not present their own opinions.

Dr. S. Jeevananda Reddy

Interesting, thanks.

Without you providing names of meteorologists, links to videos or verified transcripts, I’ll weigh what I observed while living in New Orleans against your claims.

Nor is your ad hominem regarding my understanding useful to your claim.

Beating humans at Go was done by creating a learning program that ran through huge numbers of games to learn what works and what doesn’t. It would be interesting to use a similar method to analyze the historical weather and climate data and see what an artificial intelligence program would predict for future weather and climate, without gaming it with BS assumptions like we do now with GCMs.

Let’s give the boys a fidget to play with next time.

“simulations provided one hundred times more data than is available from observed records.” Ponder that.

I did. Beat me to the punch.

Yes, it’s the first thing that caught my eye. Part of me wants to believe it is just a typo, but the other part is worried that it is not.

That is like saying a crystal ball or a set of tarot cards provides data!!

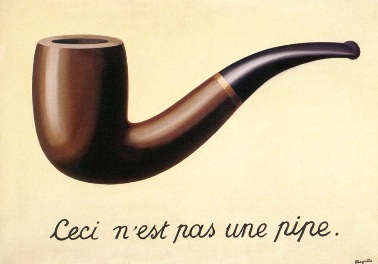

This picture should be at the entrance of any supercomputing facility:

Urederra, I doubt that they’d get it – the scientists not the programmers.

Forget using that computer for climate models. Hook it up to synthesizers and program it to produce some killer beats. It could usher in a new sub-genre of electronic music called “synthetic data”, but use the French translation to make it sound cool — données synthétiques

It’s funny how climate “scientists”, global warming enthusiasts and alarmists have all forgotten the golden rule of computer output:

Garbage in, Garbage out.

Since no one else seems to have pointed it out: if rainfall were a random process, new records would be common in the early years of record keeping and would become less common as the length of the record increases. If 5 cm of rain falls on the rainiest day of the first year of record keeping (and if things are random), there’s a 50% chance that the second year will set a new record, a 33% chance of a new record in the third year, and so on.
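The 50% and 33% figures are 1/2 and 1/3: if every year's value is an independent draw from the same continuous distribution, each of the first n years is equally likely to hold the maximum, so year n sets a record with probability 1/n. A minimal simulation sketch can check this; treating each year's rainiest-day total as an independent uniform random number is an assumption for illustration, not a claim about real rainfall, and the function names are hypothetical.

```python
import random

def record_years(values: list[float]) -> list[int]:
    """Return the 1-based indices of years that set a new record."""
    records = []
    best = float("-inf")
    for year, v in enumerate(values, start=1):
        if v > best:
            best = v
            records.append(year)
    return records

def record_frequency(n_years: int, trials: int, seed: int = 1) -> list[float]:
    """Fraction of simulated i.i.d. series in which each year is a record."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    counts = [0] * n_years
    for _ in range(trials):
        series = [rng.random() for _ in range(n_years)]
        for year in record_years(series):
            counts[year - 1] += 1
    return [c / trials for c in counts]

freq = record_frequency(n_years=10, trials=50_000)
for year, f in enumerate(freq, start=1):
    print(f"year {year:2d}: simulated {f:.3f}, theory {1 / year:.3f}")
```

Summing 1/n over the years means the expected number of records in n years is the harmonic number, which grows only like ln n, so under this assumption a long record naturally shows fewer new records per decade.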

Something of the sort may well be happening here.

Touché

But wouldn’t extreme (3+ sigma) events become more probable with time? What happens early becomes part of the ‘normal’ history (it defines the mean and SD), and the ‘un-normal’ events will show up with enough sampling.

Mods –

…Whitehouse, a former BBC science editor, criticized a July 2017 Met Office study that claimed a one-in-three of parts of England and Wales see record rainfall each winter, largely due to man-made climate change….

Should read

” Whitehouse, a former BBC science editor, criticized a July 2017 Met Office study that claimed a one-in-three CHANCE of parts of England and Wales seeING record rainfall each winter, largely due to man-made climate change. “?

[It is incorrect in the source Daily Caller article as well… -mod]

If these computers were that good, they would be picking Tattslotto numbers or horse race winners.

TABCorp in Australia, with which I still have a “gaming license”, had a system called “HALO”. It was worth AU$1bil. Could not touch it. “Monitored” at that time by Microsoft MOM2000. Yes, really! No changes around Melbourne Cup Day. Betting is big business in Aus, so much so that “luxbet.com.au” (I set up the systems management for that server farm) was “hosted” out of the Northern Territory to get around regulatory rules. Not sure about today.

That is of absolutely no use unless they can tell which region will be affected.

Before the event happens, of course.

GIGO: a supercomputer merely means you can use a lot more GI and get your GO faster.

Has the MET bought body and soul into climate ‘doom’, an action which has brought it considerable funding? What other results would you expect?

“Has the MET bought body and soul into climate ‘doom’ ”

Sadly …YES

Their forecasting is poor; they rarely get the weather correct beyond 3 days, so they resort to lucrative long-term scaremongering.

Exactly a month ago the press and various forecasting agencies were predicting the UK was about to enter a six week heatwave of record temperatures as a Spanish plume was due to head north and get stuck. Since then it has largely been a wetter and less settled summer than average.

And yet they still create press releases predicting decades and centuries out.

Decades of failed climate models.

Whined excuse: MetO needs a supercomputer.

Supercomputers require brilliant, highly trained people to use them properly. Otherwise, a supercomputer is a big, power-hungry paperweight that can execute more instructions per day, thus providing more calculations per day, but not one bit better than the slower computers.

Sadly, MetO lacks brilliant people and highly trained technicians.

British citizens should demand that MetO only use wind and solar to run their supercomputer. It will not change the quality of their forecasts.

Here’s one. Don’t even need any paper.

(Having a semi-working brain does help tho)

It’s like a computer prog but not really. More of a science hypothesis.

1. CO2 is actually an atmospheric coolant

2. This cooling causes water vapour in the air to condense faster than it otherwise might. (Making clouds)

3. Clouds being clouds, especially over the UK, tend to make it rain

4. The rain disperses said cloud(s) and makes it sunnier than it was.

5. As it happens, sunshine is as equally important as CO2 is for plants.

6. Hence the extra sunshine makes the plants grow more than they did and we get Global Greening

And everyone thought CO2 made them grow faster.

(Indirectly it did. You really must be careful to get cause and effect the right way round, and therein lies the problem with current (digital) computers: they all have a clock controlling them.)

One thing *has* to happen before any other.

Another problem with digital computers is sampling.

Don’t sample often enough and you effectively create negative time/frequencies and, lo and behold, positive feedback becomes a reality.

Yes?

You *did* realise I meant that positive feedback is real only to the computer and also you spotted how I’ve perfectly described a ‘climate thermostat’?
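The sampling remark is an instance of aliasing: a signal sampled below twice its frequency (the Nyquist rate) becomes indistinguishable from a lower, possibly negative, frequency. A minimal sketch, with an arbitrarily chosen 9 Hz sine sampled at 10 Hz, shows the samples matching those of a sine at 9 − 10 = −1 Hz. It demonstrates the sampling effect only and says nothing about climate models or feedback.

```python
import math

SIGNAL_HZ = 9.0   # true frequency (arbitrary choice for illustration)
SAMPLE_HZ = 10.0  # sampling rate, below the Nyquist rate of 18 Hz

def sample_sine(freq_hz: float, rate_hz: float, n: int) -> list[float]:
    """Take n samples of sin(2*pi*f*t) at the given sampling rate."""
    return [math.sin(2 * math.pi * freq_hz * k / rate_hz) for k in range(n)]

undersampled = sample_sine(SIGNAL_HZ, SAMPLE_HZ, 20)
alias = sample_sine(SIGNAL_HZ - SAMPLE_HZ, SAMPLE_HZ, 20)  # the -1 Hz alias

# Identical up to floating-point rounding: the sampler cannot tell them apart.
max_diff = max(abs(a - b) for a, b in zip(undersampled, alias))
print(f"largest difference between the two sample sets: {max_diff:.1e}")
```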

good

Computer is fine, it is a great machine.

“Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?”

GIGO !

Met office translation:

“Garbage In, Gospel Out”

Good one!

As a Brit I have given up taking any notice of the weather forecast and just look out of the window (you can see that the MET office building in Exeter has no windows). If I do hear what the forecast is, I expect the opposite, even when they’re issuing storm warnings. There’s all this shouting and arm waving and then very little happens, and if anything does happen, the BBC etc. are all over it, trying to hype it up as “climate change”.

James Bull

‘you can see the MET office building in Exeter it has no windows’

Brilliant! That explains a lot 🙂

JB,

I’m afraid that I’m getting equally cynical about US weather forecasts. We now have Doppler radar, geostationary weather satellites, computers, and supposedly a better understanding of meteorology than when I was a young man. Yet, subjectively, it doesn’t seem to me that forecasts are any better than formerly, except maybe in California, which only has two seasons — sunny and rainy.

Put the computer to better use, like playing video games.

“simulations provided one hundred times more data”

Stop right there. What the heck are they saying??

Since when is a simulation capable of providing ‘data’?!

Their simulations provided 100 times more guesses than there is data. So what? The ‘model’ should have started with an analysis of historical trends, which would have highlighted the downward trend in the number of new records with rising temperatures.

As always with model averages, they provide no information.

If there are 100 model runs, one of them is the most accurate of the 100 (and we can never know which one it is). The other 99 dilute the most accurate run.

What is produced at end are not probabilities. It’s merely net casting, trying to catch the real number in your net of forecast number range.

Any accuracy is complete luck.

What “unprecedented” rainfall in England looks like!

https://notalotofpeopleknowthat.wordpress.com/2017/07/26/met-office-unprecedented-rainfall-nonsense/