NASA Aqua Sea Surface Temperatures Support a Very Warm January, 2010

by Roy W. Spencer, Ph. D.

When I saw the “record” warmth of our UAH global-average lower tropospheric temperature (LT) product (warmest January in the 32-year satellite record), I figured I was in for a flurry of e-mails: “But this is the coldest winter I’ve seen since there were only 3 TV channels! How can it be a record warm January?”

Sorry, folks, we don’t make the climate…we just report it.

But, I will admit I was surprised. So, I decided to look at the AMSR-E sea surface temperatures (SSTs) that Remote Sensing Systems has been producing from NASA’s Aqua satellite since June of 2002. Even though the SST data record is short, and an average for the global ice-free oceans is not the same as global, the two do tend to vary together on monthly or longer time scales.

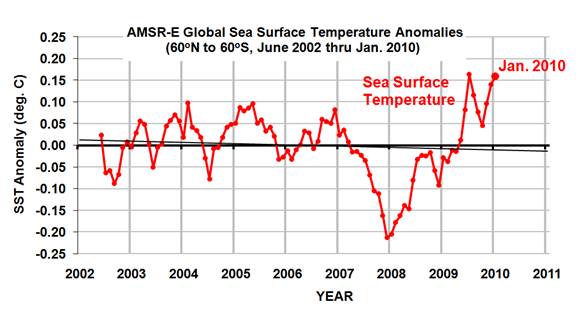

The following graph shows that January, 2010, was indeed warm in the sea surface temperature data:

But it is difficult to compare the SST product directly with the tropospheric temperature anomalies because (1) they are each relative to different base periods, and (2) tropospheric temperature variations are usually larger than SST variations.

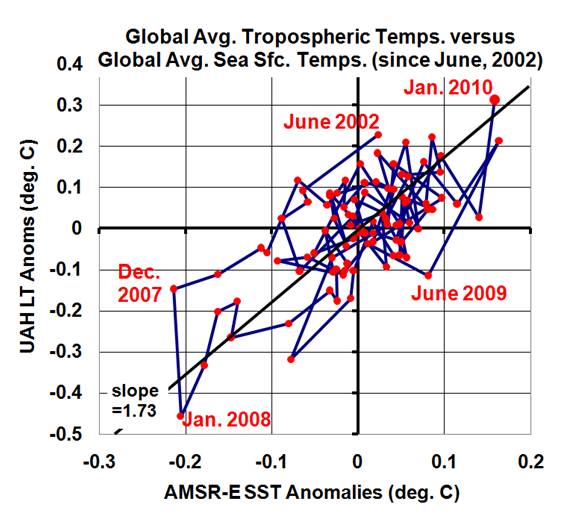

So, I recomputed the UAH LT anomalies relative to the SST period of record (since June, 2002), and plotted the variations in the two against each other in a scatterplot (below). I also connected the successive monthly data points with lines so you can see the time-evolution of the tropospheric and sea surface temperature variations:

As can be seen, January, 2010 (in the upper-right portion of the graph) is quite consistent with the average relationship between these two temperature measures over the last 7+ years.
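The re-baselining step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `rebaseline`, the dictionary layout, and the sample values are hypothetical, and a single mean offset is subtracted, whereas an operational product might instead subtract a separate mean for each calendar month.

```python
from statistics import mean

def rebaseline(anomalies, base_start, base_end):
    """Re-express anomalies relative to their mean over a new base period.

    `anomalies` maps (year, month) -> anomaly in deg C on the original base.
    `base_start` and `base_end` are inclusive (year, month) tuples.
    """
    base_values = [v for k, v in anomalies.items() if base_start <= k <= base_end]
    offset = mean(base_values)  # mean anomaly over the new base period
    return {k: v - offset for k, v in anomalies.items()}

# Made-up illustrative values, NOT real UAH data.
lt = {(2002, 5): 0.10, (2002, 6): 0.30, (2002, 7): 0.20, (2002, 8): 0.40}

# Use June-August 2002 as the (toy) new base period.
lt_rebased = rebaseline(lt, (2002, 6), (2002, 8))
```

After this step the re-based series averages zero over the chosen period, so two products re-based to the same period can be compared on a common footing.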

[NOTE: While the tropospheric temperatures we compute come from the AMSU instrument that also flies on the NASA Aqua satellite, along with the AMSR-E, there is no connection between the calibrations of these two instruments.]

Jordan (14:32:48) :

“No demonstration of how a statistical analysis can provide a test of aliasing in the above aliased sinusoid? No statistical analysis of an aliased series which points us to the required sampling rate then?”

Your sinusoid was purely in the time domain – it has limited relevance to the spatial sampling of temporal signals. The Hansen analysis looks at temporal correlations as a function of sample spacing. If there was a problem of aliasing you would see the correlations falling off and then increasing as the spacing was increased. In fact the correlations in temperature anomalies fall off gently, and the decrease is comparable to the scatter in the data out to about 1000 km. This confirms there is not an aliasing problem.

2009 12 +0.288 +0.329 +0.246 +0.510

2010 01 +0.724 +0.841 +0.607 +0.757

So in ONE month the difference exceeds the variability: a jump of 0.436 over the December 2009 value of +0.288. What is the significance of these temperature measurements from a climatic viewpoint? Zip.

I think this data should be looked at very closely. How has it been corrected or manipulated? The lesson of the previous few months is, do not take ANYTHING at face value.

My gut feeling is, the anomalously high snow coverage of the northern land areas is biasing the satellite data somehow.

kzb (10:20:25) :

I think this data should be looked at very closely. How has it been corrected or manipulated? The lesson of the previous few months is, do not take ANYTHING at face value.

My gut feeling is, the anomalously high snow coverage of the northern land areas is biasing the satellite data somehow.

However the tropics and SH are both high too, which is unlikely to be due to snow cover.

| YR | MON | GLOBE | NH | SH | TROPICS |
|---|---|---|---|---|---|
| 2009 | 01 | +0.304 | +0.443 | +0.165 | -0.036 |
| 2009 | 02 | +0.347 | +0.678 | +0.016 | +0.051 |
| 2009 | 03 | +0.206 | +0.310 | +0.103 | -0.149 |
| 2009 | 04 | +0.090 | +0.124 | +0.056 | -0.014 |
| 2009 | 05 | +0.045 | +0.046 | +0.044 | -0.166 |
| 2009 | 06 | +0.003 | +0.031 | -0.025 | -0.003 |
| 2009 | 07 | +0.411 | +0.212 | +0.610 | +0.427 |
| 2009 | 08 | +0.229 | +0.282 | +0.177 | +0.456 |
| 2009 | 09 | +0.422 | +0.549 | +0.294 | +0.511 |
| 2009 | 10 | +0.286 | +0.274 | +0.297 | +0.326 |
| 2009 | 11 | +0.497 | +0.422 | +0.572 | +0.495 |
| 2009 | 12 | +0.288 | +0.329 | +0.246 | +0.510 |
| 2010 | 01 | +0.724 | +0.841 | +0.607 | +0.757 |
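As a quick check on the point that the tropics and SH rose too, the December-to-January change in each column can be computed from the last two rows listed above. This is a minimal sketch: the helper name `parse` and the variable names are illustrative, and only the two rows quoted in the thread are used.

```python
# Last two rows of the table above: YR MON GLOBE NH SH TROPICS
rows = """\
2009 12 +0.288 +0.329 +0.246 +0.510
2010 01 +0.724 +0.841 +0.607 +0.757
"""
REGIONS = ("GLOBE", "NH", "SH", "TROPICS")

def parse(text):
    """Map (year, month) -> {region: anomaly in deg C}."""
    table = {}
    for line in text.splitlines():
        parts = line.split()
        year, month = int(parts[0]), int(parts[1])
        table[(year, month)] = dict(zip(REGIONS, map(float, parts[2:])))
    return table

table = parse(rows)
dec, jan = table[(2009, 12)], table[(2010, 1)]

# Month-to-month change per region, rounded to the precision of the data.
change = {region: round(jan[region] - dec[region], 3) for region in REGIONS}
print(change)
```

Every region shows a positive change, with the global figure matching the 0.436 jump mentioned earlier in the thread.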

Tom P: “Your sinusoid was purely in the time domain – it has limited relevance the spatial sampling of temporal signals.”

OK, but I did suggest the sinusoid could be in space or in time (just a change of units on the oscilloscope). That said, perhaps you’re saying you believe there is a method based on correlation when there are multiple dimensions.

I have my doubts, and here are some reasons…

There is no way to separate the components of the samples into those labelled “genuine” and those labelled “folded” (aliased). As George Smith correctly says, the central limit theorem does nothing to help get us out of aliasing (statistics are also unable to separate genuine from aliased). I’d be extremely reluctant to rely on any statistical aggregate without assurance that aliasing is out of the question.

Hansen and Lebedeff do not even address the issue of aliasing. It is not the subject of their paper – they don’t mention the sampling theorem or aliasing. The only person who links this paper to the sampling theorem appears to be you, Tom.

If Hansen and Lebedeff had addressed the question of aliasing, they ought to have referred to the relevant mathematics and literature, and then explained why a different method was required. They make no attempt to do so.

The method you appear to be suggesting is completely silent when it comes to suggesting a required sampling rate (time or space). Or any criterion for determining that a required sampling rate has been achieved. Odd that the method would not even come up with an answer.

The Hansen and Lebedeff paper is concerned with gridding the surface, carrying out grid averages and, where there is no data, in-filling using data from distant sources. The correlation calculation is a method seeking to justify the use of data from “neighbouring” sites. There is no theoretical basis to suggest whether a given correlation is acceptable or not:

“The 1200-km limit is the distance at which the average correlation coefficient of temperature variations falls to 0.5 at middle and high latitudes and 0.33 at low latitudes. Note that the global coverage defined in this way does not reach 50% until about 1900; the northern hemisphere obtains 50% coverage in about 1880 and the southern hemisphere in about 1940. Although the number of stations doubled in about 1950, this increased the area coverage by only about 10%, because the principal deficiency is ocean areas which remain uncovered even with the greater number of stations. For the same reason, the decrease in the number of stations in the early 1960s, (due to the shift from Smithsonian to Weather Bureau records), does not decrease the area coverage very much. If the 1200-km limit described above, which is somewhat arbitrary, is reduced to 800 km, the global area coverage by the stations in recent decades is reduced from about 80% to about 65%.”

I could have some sympathy with this analysis – the authors are trying to make the best of a bad lot (past temperature methods that just happen to have been gathered). But that doesn’t make it correct.

There is a straightforward answer to all of this, Tom: a detailed survey of the global temperature field and a design specification for sampled data acquisition systems.

We expect no less from the digital control systems in a chocolate factory – this gives you the assurance of consistent quality when you buy a bar of chocolate. Why not the same for climate data?

If the design tells us that past data is of inferior quality, at least we will know whether or not to “buy” those temperature reconstructions.

Jordan (12:09:41) :

Hansen’s paper is specifically justifying the grid spacing required to accurately determine the Earth’s temperature anomaly variation. It might not frame the problem in a way you like, but that does not make its analysis any less valid.

But I’m relieved you think there’s no Nyquist problem for chocolate bars – I certainly don’t support any undersampling in that case.

Bob Tisdale (18:28:53):

It’s precisely the great intensity of the now-fading South Pacific warm pool (~4K at its peak development in January) that makes me suspect a genesis quite apart from ENSO. It’s certainly far greater than anything seen in the equatorial zone during the same time period. Do you have your own speculations why this is so?

anna v (22:40:13):

You’re quite correct! Aliasing does not make the data series “meaningless,” any more than the individual snapshots in the videos here are devoid of information. It’s just that the information can be highly misleading when strung together in a series. Aliasing can change the entire spectral structure of the underlying analog signal, but only in an analytically prescribed way. With adequately long series the average value and the total variance of a random or chaotic signal are not affected in the general case, as shown by the Parseval Theorem. Only in highly contrived examples, such as sampling a pure sinusoid at exactly its own frequency instead of twice that, does it produce quite meaningless results.

sky: “Aliasing can change the entire spectral structure of the underlying analog signal”

True. And it can introduce a systematic error which means we do not have the comfort that the “error” signal meets the requirements of the central limit theorem.

An example: when a signal is aliased, any frequency component at the sampling rate (or multiples of the sampling rate) will appear as a standing offset. We always “hit” those components at the same point in their cycle, although we do not know which point. Aliasing therefore introduces a bias into the calculation of mean value, and we can only calculate the maximum size of the bias (if we know the signal’s spectral density).

Setting that aside for a moment (but not to forget it), where frequency components are quite close to the sampling rate (or multiples), we get those “modulated waveforms” seen in the above video. These are systematic errors which will eventually tend to zero, but it requires a much longer series for them to do so.
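Both effects described above can be reproduced with a toy example. The sketch below is purely illustrative: the sampling rate, phase, and frequencies are arbitrary assumptions chosen for the demonstration, not properties of any temperature record. A zero-mean sinusoid at exactly the sampling rate shows up as a constant offset, while one just off the sampling rate shows up as a slow oscillation whose average only settles once whole alias cycles have been sampled.

```python
import math

def sample_sinusoid(freq_hz, phase_rad, sample_rate_hz, n_samples):
    """Sample sin(2*pi*f*t + phase) at t = n / sample_rate for n = 0..n_samples-1."""
    return [
        math.sin(2 * math.pi * freq_hz * (n / sample_rate_hz) + phase_rad)
        for n in range(n_samples)
    ]

fs = 1.0             # sampling rate (arbitrary units, an assumption)
phase = math.pi / 6  # unknown in practice; sin(phase) = 0.5 here

# Component exactly at the sampling rate: every sample hits the same point
# in the cycle, so the zero-mean sinusoid appears as a standing offset.
at_fs = sample_sinusoid(1.0, phase, fs, 200)
offset = sum(at_fs) / len(at_fs)

# Component 1% above the sampling rate: it aliases to a slow 0.01-cycle-per-
# sample oscillation. Over 200 samples (two full alias cycles) it averages out...
near_fs = sample_sinusoid(1.01, phase, fs, 200)
long_mean = sum(near_fs) / len(near_fs)

# ...but over a short record (a quarter of an alias cycle) it does not.
short_mean = sum(near_fs[:25]) / 25

print(offset, long_mean, short_mean)
```

The offset equals the sine of the (unknown) phase, which is why only its maximum size can be bounded in advance.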

Does a chaotic system mitigate this in any way? I don’t know – we’d need to have the spectrum of the chaotic system to assess that question.

I appreciate your point about taking a series of snapshots. However, if those were analogous to each snapshot of the global temperature field, my concern is that the snapshots are aliased and there are large gaps in the image being used to construct the putative climate signals.

Tom P: Basically, I think the H&L paper is trying to make too much of inadequate data. You’ve got to have doubts when they consider use of “neighbouring” data on the grounds of correlation coefficient of only 0.3 (although 0.95 would not address my concerns about aliasing). And when they are forced to either infer data from up to 1200 km distance, or accept only 65% coverage of the surface. A paper to pop in the wastebasket, methinks.

Jordan (00:07:52) :

You don’t understand a paper, so you throw it in the bin. I suppose it’s one way to avoid having to consider your own faulty analysis.

Phil - the single biggest temperature anomaly on your list is +0.841 degrees for the NORTHERN HEMISPHERE (2010-01). This is so much in conflict with the weather we have experienced all over Europe, China and the US for this month that we have got to wonder about it.

Tom P: “It might not frame the problem in a way you like, but that does not make its analysis any less valid.”

There is an established methodology for helping to deal with the issues of data sampling and discrete signal processing. It actually does give us theoretically grounded criteria for determining when data is corrupted by inadequate sampling.

The H&L paper fails to discuss why it doesn’t use those techniques. It doesn’t even acknowledge that they exist or that aliasing could be an issue. And you want to talk about faulty analysis?

So the paper plunges into a new technique without referring its techniques back to relevant theory and/or literature. Perhaps you could provide the missing references to the mathematical theory which supports your assertions with respect to correlation of the sampled data as a mechanism to alert us to aliasing, and to produce criteria for sampling rate. So far you have failed to do so.

If not, then we could all agree that this would be another example of climatology venturing into the realms of inventing new techniques without interacting with the relevant specialist communities (ref Wegman).

Besides, Tom, I did not want to get into this debate. All I have said is that there appears to be no evidence of the survey and sampling design study which would have determined the necessary sampling strategy for the global temperature field. Such a study would have become the “handbook” for all attempts to produce these temperature reconstructions.

Sorry Tom, but you are simply defending an unhelpful distraction in a paper which pretty well confirms the issue I raised. Unless you can deliver a convincing reference to those missing mathematics, I see no further reason to entertain you.

Jordan ((5:07:32):

It’s only with strictly periodic signals (i.e., line spectra) that aliasing affects the mean value, because the phase of such signals–no matter how complex–is permanently fixed, thus leading to systematic bias. With chaotic or stochastic signals, such as encountered in nature, the phase takes on random values in the long run, which results in asymptotically unbiased estimates of the mean.
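The contrast between a fixed phase and a wandering phase can be illustrated with a toy model. This is a sketch under loud assumptions: the function name, the Gaussian random-walk model of the phase, and the step size are all hypothetical choices made for the demonstration, and nothing here is fitted to real temperature data. The component sits exactly at the sampling rate, so each sample sees only the current phase.

```python
import math
import random

def mean_of_component_at_sampling_rate(n_samples, phase0, phase_step_sd, seed=0):
    """Mean of samples of a unit sinusoid whose frequency equals the sampling rate.

    Each sample lands at the same point of the carrier cycle, so its value is
    sin(current phase). The phase performs a Gaussian random walk with standard
    deviation `phase_step_sd` per sample (0.0 means a strictly periodic signal).
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    phase = phase0
    total = 0.0
    for _ in range(n_samples):
        total += math.sin(phase)
        phase += rng.gauss(0.0, phase_step_sd)
    return total / n_samples

# Strictly periodic: the phase never changes, so the mean is biased by sin(phase0).
fixed = mean_of_component_at_sampling_rate(20000, math.pi / 6, 0.0)

# Wandering phase (assumed model): the bias averages away over a long record.
wandering = mean_of_component_at_sampling_rate(20000, math.pi / 6, 1.0)

print(fixed, wandering)
```

How quickly the wandering case converges depends on how fast the phase decorrelates, which is exactly the property that would need to be known for a real signal.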

There’s no way that the very high January SST anomaly is due to aliasing. The satellite anomalies are much too coherent with the surface record. Although we all can speculate on the spectacular warm pool in the Southern Ocean, what produced it is not clearly known. The fact that it remained quite stationary for more than a month, rather than drifting with the strong winds in the “roaring forties,” argues for a stationary source of heat, unrelated to ENSO-induced current changes.

sky: These are good points.

I’m not very familiar with arguments about chaos. I’m more familiar with signal components we might refer to as “noise” or perhaps “error” in statistics.

There may well be an issue with stationarity and ergodicity of the climate. That might cause problems when we assess the frequency spectra with a finite data sample. But surely the same problem would apply to statistical analysis over finite intervals?

It is appealing to say that climate processes are not stationary ergodic processes, and I can see where you are coming from. But surely that means that statistical analysis of the data we have collected over finite sample intervals would have no particular significance?

Returning to the topic of noise – this can be characterised by a frequency spectrum. Red noise is concentrated at the lower frequencies – each has a finite component – we could sample red noise and get an aliased signal. For example zero mean noise could be sampled to produce a bias (offset) due to the coincidence between certain frequency components and the sample rate.

I understand a stochastic process as being one with a random component, but also with a deterministic element. If we don’t have the latter, then I would struggle to see any scope for making predictions. And if we don’t have the latter, would there not be an issue in trying to give meaning to past observations?

If we were to conclude that climate signals are basically unobservable, there could well be a lack of logic in seeking to explain changes like January 2010.

“The satellite anomalies are much too coherent with the surface record.”

I’m not too sure that this can be used to argue that aliasing does not exist in any one or all of the temperature series. Bear in mind how aliasing can produce plausible signals and statistical analysis (which is invalidated by aliasing) could well latch onto those appealing similarities.

Is there any chance that the +0.841 degrees anomaly for the northern hemisphere in January 2010 is actually a transcription error? I wonder if it should be MINUS 0.841 degrees?

Frankly I just can’t believe this is the largest positive anomaly on the list.