By Jim Steele

A year ago, when passing through Huntsville, Alabama, I had the great good fortune to sit down with Roy Spencer and John Christy for a pleasant extended lunch. As we were leaving, John gifted me his new book, “Is It Getting Hotter in Fresno…or Not?” The city of Fresno might not resonate with most people, but having studied the effects of climate on ecosystems in California for 30 years, I knew it would be an invaluable contribution to my understanding. On the cover is a young boy staring to the heavens, so I also hoped that meant John would explain climate change at the recommended level of a 6th grader to better reach the general public.

But climate change is complex, and can’t be explained by any simplistic, single magical control knob. A better title for his book would have been “How Honest Scientists Adjust Temperature Data and Evaluate Trends.” The young boy on the cover represented John’s life as a “weather geek” with an unwavering desire to understand climate and weather since middle school. Throughout the book, Christy’s uncompromising integrity is obvious as he devotes several chapters to explaining how and why temperature data MUST be adjusted before any comparisons between weather stations can be meaningfully evaluated. He readily admits, however, that those adjustments are part science and part art form, requiring a thorough knowledge of the landscapes surrounding each weather station. Without understanding those landscape effects, Christy warns, “the adjustment procedure is heavily influenced by what the scientist expects. So rather than objective information, we have pre-determined information.”

You might need to read the chapters on why temperature adjustments are needed a second time, but I suggest you do. They point out how different landscapes, natural or altered, affect the weather. That will help you separate good adjustments from bad adjustments. Christy saw firsthand how each change in the location of Fresno’s weather station brought it to a new landscape. He witnessed the effects of growing urbanization on various areas of the region. Too many climate skeptics readily assume all adjustments are driven by a political bias. Indeed, without a thorough knowledge of the surrounding landscape changes, applying a one-size-fits-all algorithm and the expectation that any warming must be driven by rising CO2 will certainly bias temperature adjustments. But good skeptical climate scientists, like John Christy, understand there are several important variables affecting weather, and good adjustments must be made.

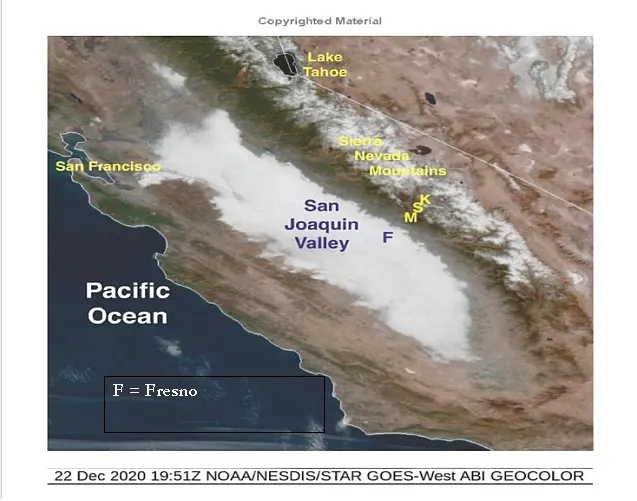

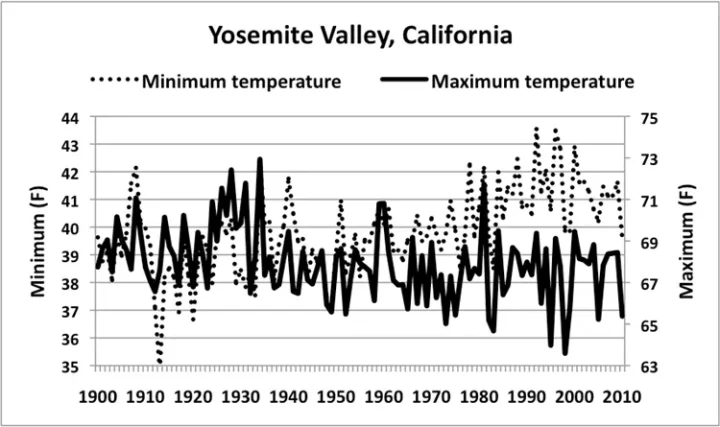

While Fresno’s weather holds little interest for most, especially if they have never been there, I was eager to read John’s analyses. Fresno is just 60 miles southwest of Yosemite National Park, whose climate I had studied as part of my research on northern California’s ecosystems and wildfires. Fresno is a growing city surrounded by the grasslands of the San Joaquin Valley, 300 feet above sea level, where lost wetlands and irrigation affect temperatures. In winter Fresno is affected by tule fogs, while Yosemite’s forests are buried in snow at 4000 feet. Nonetheless, like Christy’s analysis of Fresno’s temperature trends, the US Historical Climatology Network determined that the maximum temperatures of Yosemite have not been warming, in contrast to significantly rising minimum temperatures. A similar lack of warmer maximum temperatures since 1930 has been reported for all of northern California.

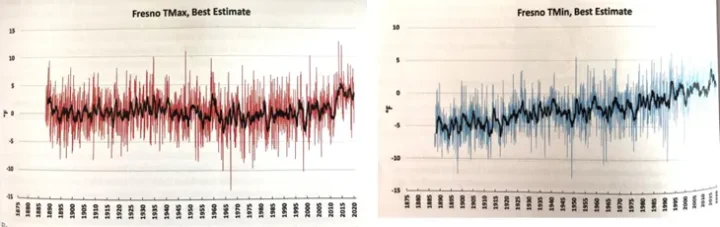

Using Christy’s adjustment methodologies, maximum Fresno temperatures declined as did Yosemite’s between 1930 and 2010, while minimum temperatures behaved very differently, steadily warming. After accounting for urbanization effects, he concluded, “I have more confidence that the natural climate of Fresno has not warmed over the past 130 years and the upper bound of the natural TMAX (maximum temperatures) would be about +0.05 °F/decade.” And despite the uptick since 2010, there has been no statistically significant daytime warming in Fresno.

In contrast, there has been a significant rise of 0.51 °F/decade in the minimum temperature. As illustrated by the lights in the satellite photo, the current location of Fresno’s weather station (KFAT) is the Fresno airport, located in the heart of Fresno’s increasing urban heat island. Due to human alteration of that landscape, KFAT is now 6°F warmer than in the late 1880s. Clearly maximum and minimum temperatures are being driven by different dynamics. Thus, they should be analyzed separately, as Christy has done. Averaging maximum and minimum temperatures creates a misleading average, as would averaging apples and oranges.

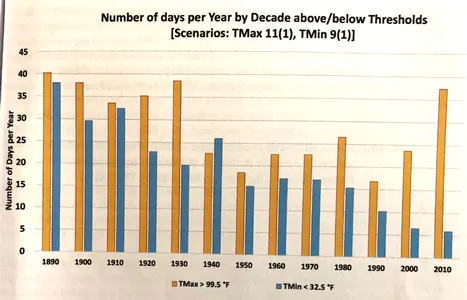

Finally, Christy examines trends in extreme temperatures using various thresholds. One such analysis examined the number of days per decade when maximum temperatures exceeded 99.5°F (orange bars). As would be expected from the trend analysis, extreme hot days have been increasingly less common since 1880. There has been a recent uptick, but there were still more hot days in the 1930s. And as a growing urban heat island would also predict, the number of days when minimum temperatures dipped below 32.5°F has been steadily decreasing (blue bars).
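A threshold count of this kind is easy to sketch. The following is an illustration only, assuming daily records arrive as (year, Tmax) pairs. The function name and the sample values are hypothetical and are not taken from the book.

```python
from collections import Counter

def days_over_threshold_by_decade(records, threshold_f=99.5):
    """Count days per decade whose daily maximum exceeds threshold_f.

    records: iterable of (year, tmax_f) pairs, one per day.
    """
    counts = Counter()
    for year, tmax_f in records:
        if tmax_f > threshold_f:
            counts[(year // 10) * 10] += 1
    return dict(sorted(counts.items()))

# Hypothetical daily maxima (°F); not taken from the Fresno record.
sample = [(1931, 101.0), (1934, 100.2), (1936, 99.0), (1975, 103.5), (2012, 99.6)]
hot_days = days_over_threshold_by_decade(sample)
print(hot_days)  # {1930: 2, 1970: 1, 2010: 1}
```

The same loop with the comparison flipped (daily minimum below 32.5°F) would give the frost-day counts.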

For everyone interested in how temperature trends are constructed, I highly recommend Christy’s book. His thorough examination of Fresno’s temperatures suggests there is no climate crisis. But for people living within Fresno’s city limits, efforts to reduce the stifling heat caused by urban heat islands would be money well spent.

Great part of the USA, working in Grass Valley, living in Nevada City and spending weekends at Lake Tahoe, regretfully lasted only a month or so.

(for some reason my comment got sent to moderating bucket)

Seattle has experienced this same weather pattern since at least March 2022. Our official weather station is at SeaTac airport. Our temperature equipment had to be completely replaced several years ago because of erroneous data.

In the last 3.5 months, we have had at least 20 days when the high temperature was at least 10 degrees F below normal. The opposite has been true for our low temperatures, which are consistently normal or modestly above normal.

It would be very interesting to find out if this Seattle trend goes back many decades like the trend in Fresno.

People have normal temperatures. Places don’t.

Portland has had two official thermometer locations. From the 1850’s to 1941, it was in Portland. There was a slow increase shown over time. Of course, Portland grew during that time.

In 1941, the station was moved east to the area of the Portland Airport, right on the Columbia River, but sparsely populated at that time. Now, the same rise in temperature readings is seen, just as the area grew up around it.

I live west of Portland, so am seldom impacted by the urban heat island of Portland. My weather station often is 10 degrees different than the airport, which is downwind of Portland. Almost always, it is warmer at the airport than it is here, especially if there is any wind blowing toward the airport.

Land (city) based thermometers are better at showing urban growth than climate change.

Although I worked in something other than climatology I think my experience with changing the way something is measured/tested is relevant.

When new test/measurement equipment or software was commissioned, there was a period of running new and old in parallel for a comparison study. Any differences in results were investigated, and the new tests were adjusted if necessary or the differences were attributed to the better resolution and accuracy of the new equipment.

For a change in weather station location, surely running old and new for a long period, a year or more, would give some indication of the differences between the old location and equipment and the new. Slightly better than picking numbers out of the air. Changing the equipment in a weather station would preclude parallel running, but comparisons to nearby stations shouldn’t be beyond the wit of man and computer.
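As a rough sketch of what such an overlap study yields, the snippet below takes paired readings from the old and new setups and reports the mean difference with its standard error. The readings are invented for illustration, and `parallel_offset` is a hypothetical helper, not any agency’s procedure.

```python
from math import sqrt
from statistics import mean, stdev

def parallel_offset(old_readings, new_readings):
    """Mean new-minus-old difference and its standard error from paired readings."""
    diffs = [new - old for old, new in zip(old_readings, new_readings)]
    offset = mean(diffs)
    std_err = stdev(diffs) / sqrt(len(diffs))
    return offset, std_err

# Hypothetical paired daily maxima (°F) from an overlap period.
old_site = [88.1, 90.4, 85.7, 92.0, 89.3, 87.6]
new_site = [88.9, 91.0, 86.8, 92.7, 90.1, 88.5]
offset, std_err = parallel_offset(old_site, new_site)
print(f"offset = {offset:+.2f} °F, standard error = {std_err:.2f} °F")
```

The result only describes the difference during the overlap itself. It says nothing about which of the two readings is closer to the truth.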

The only problem with running old/new in parallel is that you still can’t tell what the calibration was in the past. You can only find the difference in calibration for old/new beginning in the present. The calibration of field instruments drifts, that’s just a given. The amount of drift at any point in time in the past simply can’t be determined by the amount of drift that is present today.

This is why it is so important to end the old record and start a new one when such a change is made. And it doesn’t even have to be old/new; it can happen whenever an existing instrument is calibrated against a laboratory standard.

The only way around this is for the field instrument to be calibrated on a regular basis, perhaps even quarterly. Then the difference between the field instrument and the laboratory standard should be included in the uncertainty interval associated with readings between the calibration periods and not as adjustments to the raw data.

I should point out that making adjustments in data for the purpose of analysis as Christy has done is fine – as long as the raw data is not “adjusted” and the adjustments made for analysis purposes are laid out in detail and explained – as Christy has apparently done.

When you are combining data from multiple stations it is almost impossible to do what Christy has done. In this case, including calibration drift as part of the uncertainty interval for each station is imperative, and it is also imperative that the uncertainty interval be propagated into the final result and not just ignored as many climate scientists do today by just assuming all error cancels out.

“What’s in that MMTS Beehive Anyway?

MMTS is “Maximum/Minimum Temperature Sensor”

Page 2.

Equipment is swapped regularly without running in parallel to verify sensor readings. Nor is there any consideration for sensor reading temperatures affected by close quarters with local wildlife.

When I was looking into sensor details, there were reports of frogs or wasps taking up residence in the MMTS shield. How the impact of a frog or a wasp nest gets adjusted out is anyone’s guess.

One amusing story was of a guy who had demounted an MMTS and was taking it away in his car for servicing. Angry wasps came out and attacked him as he was driving. The experience was instructive but unpleasant. 🙂

So it really was “the bees and the spiders?”

Prepare for a period of simulated exhilaration.

When I was involved with metrology service and analysis, recall-period out-of-tolerance notices were routinely sent to engineers when their instruments failed to meet manufacturers’ specifications during calibration cycles. When a group of instruments failed to meet specifications at a 2 sigma level, the entire family was derated in accuracy. It was then up to the engineers to decide whether to continue to use or discard those instruments. Continued use required review and justification.

In my experience, I can tell you with a straight face that the majority of out of tolerance notices issued and the families discarded most often were temperature measuring devices.

I believe it! Even blowing dust from a nearby rural road can enter an aspirated temperature measuring device and affect its accuracy. If there are any electronic or mechanical components in the measuring device they will devolve over time as far as accuracy is concerned. No amount of calibration can fix a circuit board whose components have deteriorated too far. All you can do is scrap it and any of its relatives that might suffer from the same fate.

But what of a sealed liquid mercury thermometer? How often would it need to be recalibrated?

I am just thinking about the adjustments made to old manually read thermometers in days before all this electronic stuff.

It would seem to me that the fire chief or captain who took down the temperature readings 100 years ago could be relied upon for better consistency and accuracy than all these “newfangled” electronic devices.

The issue with something like a mercury thermometer is not calibration per se but the uncertainty of the reading. Is the meniscus read consistently? Is the temp going up or down at the time of reading (affects the wetting of the tube). Is the tube diameter actually consistent? All of these affect the uncertainty but not the calibration.

Part of the problem with calibration also has to do with the resolution. Ask yourself why were temperatures rounded to the nearest integer temperature? Answer, because they were marked in one degree increments. The reader had to decide, was the meniscus above or below the “1/2” point between marks. You couldn’t really calibrate these in the newest sense of the word, i.e., turning a screw.

Remember too how glass tubes were made in the late 19th and early 20th century. The glass was not hard and the mandrel was not uniform. Lots of uncertainty in the readings regardless of the ultimate accuracy. It is why using averages of integer numbers with high uncertainty to match up with current temp readings is a joke. All graphs doing this should show the uncertainty intervals. They would dwarf the uncertainty in current recordings.

Tim,

Quite correct.

In some fields, drift can be corrected by measurements in parallel using instruments of different design. For temperatures, there have been changes from mercury in glass style to electric resistance sensors, so it makes sense to continue with widespread mercury sensors for many decades to help pin down instrumental drift. Sadly, even if this is being done, there seems to be very little public reporting of results. Geoff S

Even using multiple measuring devices doesn’t eliminate uncertainty. Different devices can have different drift rates but who knows how they interact, especially when installed in the field? You might get cancellation if they drift in different directions at the same rate but what if they drift in the same direction?

Station changes from thermometer changes to housing changes, to land use changes such as windbreaks, grass changes, to even 10 foot change in location, etc., all cause changes in the microclimate measured at the device. Running stations in parallel really only shows that there are differences in the microclimate being measured. It does NOT show that either thermometer is incorrect and should have its readings adjusted. The very first choice to make in “adjustments” is which microclimate is more correct, the warmer one or the cooler one. Too many automatically assume that the “newest” is the most accurate and therefore “cool” previous data.

Dr. Christy has obviously shown how UHI can work its way through a system. One question I have is why UHI is not included in warming causes. This is heat in the atmosphere caused by converting insolation to IR rather than reflecting insolation and is actually being attributed to CO2 rather than what actually causes it.

Changing recorded data on a permanent basis just goes against everything I was trained in. Recorded data should be discarded if not fit for purpose, not massaged to meet one’s “requirements”.

The primary purpose for creating new information for the public’s consumption (I will not call it new data) is to create a long record for statistical purposes in trending. This is not an ethical thing to do. The data records should remain as recorded, and individual scientists may recommend changes when performing specific studies. I have no problem with “normalizing” a data series so it connects with another series in particular studies, but that is up to the authors to justify within each study.

Compare Christy’s integrity to the lack thereof of Phil Jones and his fellow alarmists as exposed in the Climategate emails.

Jones hid the fact that a paper he wrote about warming in China did not account for any station moves or increasing urbanization around weather stations once deemed rural.

Climate emails suggest Phil Jones may have attempted to cover up flawed temperature data

https://www.theguardian.com/environment/2010/feb/09/weather-stations-china

Jones then admitted important raw data had been “lost”.

“In an interview with the science journal Nature, Phil Jones, the head of the Climate Research Unit (CRU) at the University of East Anglia, admitted it was “not acceptable” that records underpinning a 1990 global warming study have been lost.

The missing records make it impossible to verify claims that rural weather stations in developing China were not significantly moved, as it states in the 1990 paper, which was published in Nature. “It’s not acceptable … [it’s] not best practice,” Jones said.”

https://www.theguardian.com/environment/2010/feb/15/phil-jones-lost-weather-data

Isn’t it time to get weather stations out of airports?

No, they are critical to aircraft safety, particularly for calculating air density.

The use of airport weather stations for climate purposes should never have started and clearly they should be dropped from climate analyses.

Ok, let’s try that again, shall we…

“Isn’t it time to get weather stations [used for global climate etc etc etc] out of airports”?

OK. You have (or anyone) some suggestions where? Not so easy.

I live about 17 miles outside a good size city in Southwest Pennsylvania.

Driving downtown in the morning for work, the difference from my house to downtown in the summer will be between 5 and 8 degrees F, just using my thermometer for fun. The difference is probably higher in the afternoon.

In the winter, when we have more than 3 inches of snow in all area around my house as well as within the city, I see something interesting, no need for a thermometer. Within a few days, driving back home, all the snow is melted from downtown up to less than a mile from the small area where my house is.

I have lived here 52 years; it is a heavily wooded area. Even in the winter, with no leaves, we have so many trees, and many are evergreens. Enough to cut sunlight in comparison. No wonder the snow does not melt so fast.

Also, as Jim Steele mentioned how the daily average temperature is calculated, what is the significance of this number! I have absolutely no idea what this number means.

The mid-range value is truly meaningless. It simply doesn’t describe the climate. The climate is based on the total temperature profile. Two different locations can have vastly different climates yet have the exact same mid-range value. That means that even anomalies calculated using the mid-range values are meaningless as well. Averages of meaningless mid-range values are also meaningless.

If you assume that the daytime temp profile is usually close to being a sine wave, the mid-range value doesn’t even represent an “average” value. The average value of a half sine wave is 0.637 x T_max (2/π times the peak, measured from the curve’s baseline). While nighttime temps are more of an exponential than a sine wave, the average of an exponential curve is not the mid-range value either. A true daytime/nighttime average temp would give you some indication of the climate at a point on the globe at any specific point in time. But it would require keeping daytime and nighttime analyses separate, which wouldn’t lend itself to the CAGW crowd wanting to trumpet the earth is going to turn into a cinder.
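The half-sine claim can be checked numerically. This sketch assumes an idealized daytime curve that rises from Tmin to Tmax and back as half a sine wave. The two locations and their temperatures are hypothetical, chosen only so that they share a mid-range of 75.

```python
import math

def half_sine_profile(t_min, t_max, n=10_000):
    """Sample a daytime curve that rises from t_min to t_max and back as a half sine."""
    return [t_min + (t_max - t_min) * math.sin(math.pi * (i + 0.5) / n)
            for i in range(n)]

def describe(t_min, t_max):
    """Return (mid-range, mean of the sampled half-sine profile)."""
    temps = half_sine_profile(t_min, t_max)
    return (t_min + t_max) / 2, sum(temps) / len(temps)

# Two hypothetical locations (°F) that share the same mid-range value.
mid_a, mean_a = describe(65, 85)
mid_b, mean_b = describe(50, 100)

print(f"A: mid-range {mid_a:.1f}, profile mean {mean_a:.2f}")
print(f"B: mid-range {mid_b:.1f}, profile mean {mean_b:.2f}")
print(f"2/pi = {2 / math.pi:.3f}")
```

Under this assumption each profile’s mean sits at Tmin plus 2/π (about 0.637) of the daily range, so the two locations have identical mid-range values but different means.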

When you look at the National Data Buoy Center Gulf of Mexico area, there are a number of offshore FAA sites only showing atmospheric conditions. While there are a number of shore stations from different sources that are useful, they are too scattered. Too many are “No recent reports.” https://www.ndbc.noaa.gov/

That’s what the US Climate Reference Network has done. WUWT posts its results continuously in the upper right column of this site.

USCRN is showing a warming rate of 0.34°C / decade since 2005. But probably too short a period to draw firm conclusions.

and too much variability to detect a trend

You can detect a trend. I just did.

I doubt it’s significant once autocorrelation is taken into account, it’s only 17 years of a small portion of the globe, but there’s nothing to contradict any of the other data sets.

Tell you what. What is the variance of NH summer vs winter (not annual but actual season) Tmax and Tmin, then the variance of the SH summer vs winter (again not annual but season) Tmax and Tmin?

For time series, changing variance can cause spurious trends.

Or maybe you could do the work yourself. If you think it’s possible that the rise in US temperatures was caused by changing variance over just 17 years, show how. Just asking me to do the work is futile, as you will just claim I don’t know what I’m doing, or I’m looking at the wrong sort of variance or whatever.

For what it’s worth, looking at just the summer months shows a rate of increase of 0.35°C / decade, and winter months show a rate of increase of 0.38°C / decade.

Neither showing any significance at this point as there are only 17 years, and lots of year to year variance.

It is not up to me to do your work. If you are going to make claims it is up to you to qualify them as to accuracy.

I pointed out one thing that can happen with variance that affects time series trends. If you don’t believe this is a problem, then state that the variances are constant and present no spurious trends.

Read the following:

Modeling The Variance of a Time Series (umd.edu)

Here is another good reference for time series.

1.1 Overview of Time Series Characteristics | STAT 510 (psu.edu)

I’ve done the work. I’ve said what the warming rate is, and said it’s unlikely to be statistically significant at this point due to autocorrelation.

If you think there’s some way that changing variances contrived to produce a spurious warming, as opposed to simple chance, you have to show how.

You haven’t done the work at all.

As has been pointed out to you over and over and over and over again – winter temps have a larger variance than summer temps. This is totally lost when you create daily mid-range values to use in calculating weekly, monthly, and annual averages for local, regional, and global areas. In fact, you lose the variance of the daily temperature profiles as well. That gets subsumed into the calculated mid-range values and disappears. Two different locations can have wide variance differences between minimum and maximum temps but you lose that relationship when they both have the same mid-range value!

Variances are totally ignored as is the propagation of uncertainty.

You keep arguing that the variance is not lost, that we still have the raw data but that argument is a total non sequitur. It simply doesn’t address the fact that climate analyses ignore variance.

Variance is a measure of the uncertainty associated with a data distribution. The wider the variance the less certain you are about the values that can be expected in a distribution – i.e. as the variance goes up so does the standard deviation. If you ignore variance then exactly what do you truly know about the temperature profile you are attempting to portray?

All the USCRN data is available. It was Jim Steele who showed the graph of monthly mean anomalies. That is the data I’m using. If you don’t like it complain to him.

There’s little point debating variance with someone who still doesn’t understand that the variance of a mean of random variables is not the same as the variance of the sum.

Something smells disingenuous about your answer Bellman

I brought up the USCRN data in a reply to someone saying it’s time to move weather stations away from airports and pointed out the graph is always posted in WUWT’s sidebar. I did not argue about the significance of the .34F trend since starting in 2005. You injected that opinion, and I agreed with you that the time span was too short and there is too much variability to determine significance. Some have disingenuously tried to push a significant trend by using the annual average to remove the variability seen in the monthly data.

I’m not sure what the problem is here. We both agree that there is a positive but not statistically significant warming trend in the USCRN data.

I find it interesting, as some here seem to think that USCRN discredits other data sets, whereas it seems to me that so far it suggests other US data is not completely wrong.

Your argument that using annual averages pushes the significance is puzzling. I would expect trends based on annual averages to show less significance. It decreases the variance but reduces the sample size. They should cancel out leaving the confidence more or less the same, but annual averages also reduces the effects of autocorrelation, which should mean larger confidence intervals.

I’d check this out for myself, but I’m going to be away for the next few days, and don’t have time at the moment.

The problem is that if there is no statistically significant trend, then there is no knowledge of what trend truly is, positive or negative.

That’s not a problem. It’s a statement of fact. The USCRN is the best measure of US temperature, but it only goes back to 2005, so it’s difficult to draw firm conclusions from it.

All I can really say is that so far there is little evidence that it is contradicting the other land based US temperature records.

“ I would expect trends based on annual averages to show less significance. It decreases the variance but reduces the sample size. They should cancel out leaving the confidence more or less the same, but annual averages also reduces the effects of autocorrelation, which should mean larger confidence intervals.”

You don’t know the variance associated with the daily averages used to come up with the monthly averages. Therefore the variance of the monthly averages is useless for judging anything.

This follows through to the annual averages as well.

Daily, monthly, and annual averages based on mid-range temperatures tell you *nothing* about the climate anywhere, let alone a global average. Since temperature profiles that vary widely can generate the same mid-range value you have lost the very data you need to look at in order to judge the climate!

Somebody needs to stop WUWT publishing any more of these monthly averages before even more data is lost.

Nope! All that needs to be done is to calculate the variance of the population elements and carry that forward!

Then everyone can make an informed decision concerning the uncertainty of the averages of averages of averages!

That’s called SCIENCE, not statistics.

And they use daily averages to remove the variability seen in daily data. And then they use the daily averages to create monthly averages. And then they use monthly averages to create annual averages. And then they use annual averages to create global averages. It’s averages all the way up the line with nary a mention of the variance of the daily temperature profile – which is what *actually* determines climate!

“All the USCRN data is available.”

As I said, this is a non sequitur. The variance of the USCRN data is *NOT* used in climate science analyses or models. *THAT* is the issue you simply refuse to admit to.

Averages with no variances attached are meaningless. Nothing can be concluded about what the averages mean.

You can’t even admit that mid-range temperatures hide the variance of the actual temperature profiles unless the variance is specifically provided for temperature profile as well as for their combination into a mid-range temperature.

I totally understand why variance is so unused in climate science. Variance is a measure of uncertainty. As variance goes up, uncertainty goes up as well. Since variances add when combining random variables such as temperature, the uncertainty of the resulting data goes up with each addition of a random variable into the data set. This soon creates so much uncertainty in what the data is telling you that it becomes useless. It’s no wonder then that climate scientists want to always ignore the variances of their data. It’s the same logic as assuming that all uncertainty in your data cancels so stated values suddenly become 100% accurate!

“Since variances add when combining random variables such as temperature, the uncertainty of the resulting data goes up with each addition of a random variable into the data set. This soon creates so much uncertainty in what the data is telling you that it becomes useless.”

Thanks for confirming you don’t understand how variances work. I’ve no time to explain yet again why the variance of the mean of random variables goes down as you add more variables, and you’ll just ignore it over and over and over again. But on the remote chance that you are prepared to learn something I’d suggest checking with Jim. He keeps posting links that show what happens to variance when you add weighted random variables.

“Thanks for confirming you don’t understand how variances work. I’ve no time to explain yet again why the variance of the mean of random variables goes down as you add more variables, and you’ll just ignore it over and over and over again. “

I’ve given you quotes from FOUR DIFFERENT STATISTICS TEXTBOOKS that tell you how variances add when combining random variables.

And you *still* say those textbooks are wrong with your only proof of that being that *you* say they are wrong.

The variance of the mean is *NOT* the variance of the combined populations. For physical measurements the average is useless unless it is assumed that the measurements are made of the same thing using the same device.

This is the main reason that the mid-range value of daily temperatures is so useless. You lose the statistical description of the temperature profile itself. The variance of the mean doesn’t tell you anything useful.

Assume you have two independent random variables X1 and X2 which both have the same variance, σ^2.

let Y1 = X1 + X2

Var(Y1) = Var(X1) + Var(X2) = 2σ^2

The variance of the average value (X1+X2)/2 is σ^2/2

Again, however, the variance of the average LOSES the variance of the population which is what the uncertainty of the population should be based on, not on the average of the population.

It’s exactly like uncertainty. The uncertainty of the mean is *NOT* the same thing as the average uncertainty. The uncertainty of the mean is the uncertainty of the population. It is *not* the average uncertainty. The average uncertainty only spreads the uncertainty of the population evenly across all members of the population, a useless exercise as far as physical reality is concerned.

I know this will never sink into your brain. That’s sad.

“The variance of the average value (X1+X2)/2 is σ^2/2”

Finally. That’s what I’ve been trying to tell you. More generally if you take the mean of N values from the same distribution the standard deviation is σ/√N.

“Again, however, the variance of the average LOSES the variance of the population which is what the uncertainty of the population should be based on, not on the average of the population.”

Now you are just trying to redefine your terms. If you want the variance of the population then say that. It’s not the same as the variance of a sample mean. And it doesn’t matter how many samples you take, the variance of the population remains the same.

You keep rowing back on your claims, and then claiming victory. First you insist that the uncertainty of the mean is the same as the uncertainty of the sum. Now you are saying the uncertainty of the mean is the same as the uncertainty of the population.

To be clear, are you now admitting that uncertainty does not increase with sample size?
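The three formulas quoted in this exchange (Var(X1 + X2) = 2σ^2, the variance of the average = σ^2/2, and σ/√N for the mean of N values) can be checked with a quick simulation. This is a sketch under the stated assumption of independent, identically distributed normal draws. The seed, σ = 2 and the sample sizes are arbitrary choices.

```python
import random
from statistics import mean, pstdev, pvariance

rng = random.Random(0)
sigma = 2.0
trials = 50_000

x1 = [rng.gauss(0, sigma) for _ in range(trials)]
x2 = [rng.gauss(0, sigma) for _ in range(trials)]

# Variance of the sum versus variance of the average of two independent draws.
var_sum = pvariance([a + b for a, b in zip(x1, x2)])
var_avg = pvariance([(a + b) / 2 for a, b in zip(x1, x2)])

# Spread of the mean of N independent draws.
n = 25
sample_means = [mean(rng.gauss(0, sigma) for _ in range(n)) for _ in range(4_000)]
sd_of_mean = pstdev(sample_means)

print(f"Var(X1 + X2)     ~ {var_sum:.2f}  (theory {2 * sigma**2:.2f})")
print(f"Var((X1 + X2)/2) ~ {var_avg:.2f}  (theory {sigma**2 / 2:.2f})")
print(f"SD of mean, N={n} ~ {sd_of_mean:.3f} (theory {sigma / n**0.5:.3f})")
```

The sum and the average are different quantities with different variances. Dividing by 2 divides the variance by 4, so both statements hold at once, while the variance of the underlying population stays at σ^2 throughout.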

To make the point.

I used the following calculator.

Standard Deviation Calculator with sample distribution

Day 1 — 72, 97

Day 2 — 74, 94

Day 3 — 71, 95

Day 4 — 69, 96

Day 5 — 75, 100

Day 1 — var = 312.5

Day 2 — var = 200

Day 3 — var = 288

Day 4 — var = 364.5

Day 5 — var = 312.5

avg var = 1477.5 / 5 = 295.5

var of all data points combined = 167.6

daily avg — 84.5, 84, 83, 82.5, 87.5; mean = 84.3

var of daily avgs = 3.8

Look at what you lose in variance when averaging mean values. You go from 295.5 down to 3.8. This is why propagating variance is so important and why you don’t see it in climate science. No one wants to quote a figure like +0.15 anomaly with a variance of even 3.8 let alone a variance of 100+.
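For readers who want to check the arithmetic in this example, here is a minimal Python sketch. It assumes the calculator reported the sample variance (n − 1 in the denominator), which is what reproduces the quoted figures; the variable names are illustrative and not from the original comment.

```python
from statistics import mean, variance

# Daily (low, high) pairs as listed in the comment.
days = [(72, 97), (74, 94), (71, 95), (69, 96), (75, 100)]

# Sample variance (n - 1 denominator) within each day.
daily_vars = [variance(d) for d in days]
avg_of_vars = mean(daily_vars)

# Sample variance of all ten readings pooled together.
all_points = [t for d in days for t in d]
var_all = variance(all_points)

# Daily mid-range values and the sample variance between them.
daily_avgs = [mean(d) for d in days]
var_of_avgs = variance(daily_avgs)

print("daily variances:", daily_vars)
print("average of daily variances:", avg_of_vars)
print("variance of all points:", round(var_all, 1))
print("daily averages:", daily_avgs)
print("variance of daily averages:", round(var_of_avgs, 1))
```

Under that assumption the pooled variance comes out near 167.6 (quoted as 167.5), the day 5 mid-range is 87.5, and the variance of the daily averages is about 3.8.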

Now just imagine what the variance is when you start averaging NH and SH temperatures which are real independent random variables with no covariance.

The very basic thing learned in statistics is that the variance describes how well the mean describes the range of data.

“The very basic thing learned in statistics is that the variance describes how well the mean describes the range of data.”

It doesn’t really do that. The standard deviation is a better descriptor. Variance is more a means to an end.

The fact that the variance of your daily averages is much smaller than the overall daily variance is the point. The averages are consistent – they are not random. You might want to examine them for lots of reasons, but they are not necessary to know how certain the daily averages are.

I can show you a hundred different references that show you are incorrect.

Here is one: Variance is Statistics – Simple Definition, Formula, How to Calculate (byjus.com)

It says:

The Standard Deviation provides an interval around the mean and is used to describe the shape of the distribution.

the SD is calculated based upon the variance.

±σ = √variance

They are both statistical descriptors of importance. Do not try to minimize one below the other.

I tried to show you an example of why variance is important. I’ll try one more time. Example: I run four groups of a similar experiment and have four values in each group.

Group 1 –> 5, 6, 6, 7 avg = 6, variance = 0.7

Group 2 –> 6, 8, 9, 10 avg = 8.25, variance = 2.9

Group 3 –> 6, 8, 10, 12 avg = 9, variance = 6.7

Group 4 –> 5, 7, 9, 9 avg = 7.5, variance = 3.7

average of averages = 7.7

average of variances = 3.5

Variance of averages = 1.64

So if I report my findings as 7.7 ±1.64 what do you think people trying to duplicate my findings will think?

This is exactly what happens with anomaly reports of temperatures. The daily variance is HIDDEN behind multiple averages. Just averaging summer and winter temps will hide daily variance between the seasons. Why do you think people have no faith in a Global Average Temperature? It is basically a metric with no variance to describe the range of the data used to calculate it.

You can find all sorts of nonsense on the internet. And a lot of what I see about variance is confused. But your quote is correct, variance is a measure of how far a set of points is spread out from the mean. Standard deviation is also a measure of how far a set of points is spread out from the mean. Both do the same job, and if you know one you know the other. It’s just that standard deviation is a more human readable measure as it has the same scale and dimensions as the data, rather than being the square of the spread.

“Both do the same job”

No, they don’t. Variance is a measure of the entire population, even those data points far from the mean. Standard deviation is a measure of those data points close to the mean.

“if you know one you know the other.”

Sure you do. That doesn’t mean they do the same job or tell you the same thing!

Really no idea what you are trying to say here. SD is literally just the square root of variance. It cannot ignore points far from the mean or emphasise points closer to the mean.

If you are claiming that the variance value is closer to the entire range, that is not necessarily true at all. Consider a normal distribution with SD of 10. The variance is 100, but very few points will be anywhere near 100. And then consider a distribution with an SD of 0.1. Variance is now 0.01. How does that measure points far from the mean compared with SD?
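A rough numerical sketch of that normal-distribution example, using only the Python standard library. The helper name `tail_beyond` is made up for illustration; it relies on the standard identity P(|Z| > z) = erfc(z/√2) for a standard normal Z.

```python
import math

def tail_beyond(distance, sd):
    """P(|X - mean| > distance) for a normal distribution with the given SD."""
    return math.erfc(distance / (sd * math.sqrt(2)))

sd = 10.0
var = sd ** 2  # 100.0

within_one_sd = 1 - tail_beyond(sd, sd)   # fraction within one SD of the mean
beyond_variance = tail_beyond(var, sd)    # a distance of 100 is ten SDs out

print(f"SD = {sd:g}, variance = {var:g}")
print(f"fraction within one SD of the mean: {within_one_sd:.4f}")
print(f"fraction farther than {var:g} from the mean: {beyond_variance:.2e}")
```

Roughly 68% of the points fall within one SD of the mean, while the fraction lying farther than 100 from the mean is vanishingly small, so the numerical value of the variance says nothing extra about where the far points sit.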

“So if I report my findings as 7.7 ±1.64 what do you think people trying to duplicate my findings will think?”

Depends on what the point of your experiment is. Are you trying to show a statistical difference between your different groups, or are you only interested in the average? I don’t think it’s a good idea to just give a figure of ±1.64 without explaining what it is, especially if you are using variance rather than an extended uncertainty.

It’s the same with global, or in this case US anomalies or temperatures. What data is useful depends on what questions you are investigating. If you want to know how much temperatures vary across the country you need the deviation in temperatures across the country. If you want to know if winter is colder than summer you need to know the average values for those two seasons and the uncertainty on those averages. If you want to know if one year was warmer than another year you need the annual averages and a value for the uncertainty of that average. If you want to know if there’s been a significant trend over a period of time, you need the monthly or annual anomaly averages.

Again, I’ll ask, if you think it is meaningless to have just the monthly anomaly with no indication of the variance of all the data that went into it, why do you never object to the constant reporting of UAH data, or to the USCRN monthly average graph on these pages? How do you think a pause could be detected using just these useless monthly averages?

“If you want to know if winter is colder than summer you need to know the average values for those two seasons and the uncertainty on those averages.”

Averages simply don’t tell you about climate. Two entirely different climates can have the same average value.

Climate is the WHOLE temperature profile, not just some mid-point value whose variance is unknown.

“Finally. That’s what I’ve been trying to tell you. More generally if you take the mean of N values from the same distribution the standard deviation is σ/√N.”

Why do you keep refusing to explain what the average variance or average uncertainty means in the real world?

You are like a record stuck on a scratch that is unable to advance.

“Now you are just trying to redefine your terms. “

Malarky! *I* am the one that has been continually trying to point out to you that average uncertainty is *NOT* the uncertainty of the mean! The same thing applies to variance. The average variance is *NOT* the same thing as the variance of the population!

It is the uncertainty of the mean that is important. It is the variance of the population that is important.

And yet you keep arguing about average uncertainty and average variance as if they have some kind of importance in understanding reality!

“If you want the variance of the population then say that. It’s not the same as the variance of a sample mean. And it doesn’t matter how many samples you take, the variance of the population remains the same.”

Again, ONE MORE TIME, the average uncertainty/variance is *NOT* the uncertainty of the mean or the variance of the population!

That is what *I* have been trying to tell you. And now you are confirming what I’ve been telling you. My guess is that you will, once again, return to arguing about the average uncertainty and average variance having meaning in the real world!

NO ONE IN THE REAL WORLD CARES WHAT THE AVERAGE UNCERTAINTY OR AVERAGE VARIANCE IS! NO ONE!

An average is a statistical descriptor – and that is *ALL*. It is supposed to tell you something about the POPULATION! But it can *only* tell you something about the population if it includes that variance/uncertainty of the population! The average variance and average uncertainty tells you *nothing* about the population. Your continued focus on average uncertainty/variance is nothing more than an obsession with a delusion!

CLIMATE IS BASED ON THE POPULATION OF TEMPERATURES, i.e. the entire temperature profile.

Just take two profiles, P1 = (60,40), P2 = (55,45)

Var1 = 200, Var2 = 50

combine the two Vtotal = 250

V1_avg = 200/2 = 100, V2_avg = 50/2 = 25

V_avg_total = 125

V_avg_total is half the variance of the population. What in Pete’s name does it do to carry V_avg_total forward into any further calculations? It has hidden the variance of the population! So it makes any judgement about the uncertainty of the combined population questionable!

You’d save yourself a lot of wasted effort if you would only read what I keep saying. I’m not interested in the average uncertainty or the average variance. Average variance is *not* the variance of the average.

Take a random variable X with variance var(X). Take an independent random sample of size N from X and take their mean, mean_X. Then

Var(mean_X) = var(X) / N

That is the variance of the average. It is not the average variance. The average variance of X is not surprisingly var(X).
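One way to test this without relying on random sampling is to enumerate every possible outcome. The sketch below does that for a fair six-sided die (an illustrative choice of X, not something specified above) and compares the exact variance of the mean of N rolls with var(X) / N. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import product

FACES = range(1, 7)  # a fair six-sided die (illustrative choice of X)

def exact_variance(values):
    """Population variance of equally likely outcomes, as an exact fraction."""
    values = list(values)
    n = len(values)
    mu = Fraction(sum(values), n)
    return sum((v - mu) ** 2 for v in values) / n

def var_of_mean(n_dice):
    """Exact variance of the mean of n_dice independent rolls."""
    means = [Fraction(sum(roll), n_dice) for roll in product(FACES, repeat=n_dice)]
    return exact_variance(means)

var_x = exact_variance(FACES)  # variance of a single roll

for n in (1, 2, 3, 4):
    print(n, var_of_mean(n), var_x / n)
```

For each N the two printed fractions agree exactly, while the variance of a single roll stays the same whatever N is.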

For some reason *you* want to claim the real variance of the mean is the variance of the population, which *is* the average variance of the population.

ROFL!!! Var(X)/N *is* the average variance!

Average = Total/N What do you think your equation is? The Var(X) divided by N *is* the average variance!

Judas H. Priest! How do you think you calculate an average?

This is just getting silly.

Var(X) is the variance of the random variable X, or the variance of the population represented by X. It is not the variance of any total. Dividing it by 2 does not give you the average of its variance because the average of its variance is, by definition, var(X).

As I keep saying you could easily test this for yourself.

“Var(X) is the variance of the random variable X, or the variance of the population represented by X.”

WOW! Talk about getting silly!

Dividing the variance of the population by the size of the population is somehow not calculating the average variance!

Var(X) = Σ(X – X_mean)^2 /N

And *YOU* want to divide by N again? WHAT FOR? The only possible reason is to try and find an average variance!

Variance is a measure of the dispersion of the population. And you somehow want to find an average of that? WHAT FOR?

“And *YOU* want to divide by N again? WHAT FOR?”

Try to concentrate. You have two different N’s. Your first one is the population size, which might be infinite. The second N is the sample size. I want to divide the sum of the variances by the square of the sample size because I want to determine the variance of the mean. As all the samples are the same random variable this is the same as dividing the variance of the random variable, or population, by N.

“The only possible reason is to try and find an average variance!”

An average variance of what? All the random variables have the same variance because they are the same variable, hence the average variance is the same as any individual variance.

I think you’ve confused yourself by switching from talking about random variables to the idea of a specific sampling, but it’s the same maths. If you know the variance of a random variable you know the variance of the mean of N variables is equal to the variance of the single variable divided by N. And from that you can say that if you take a random independent sample from a population with a known variance you can divide that by sample size to get the variance of the sample mean. And more practically, this means the standard error of the mean is the standard deviation of the population divided by the square root of the sample size.

“Try to concentrate. You have two different N’s. Your first one is the population size, which might be infinite. The second N is the sample size.”

Try to concentrate! If you already have the population variance then why are you taking samples in order to find it?

” I want to divide the sum of the variances by the square of the sample size because I want to determine the variance of the mean.”

There is no “sum of the variances”. The variance is a sum of differences squared for all elements divided by the population size.

And, again, when you divide by N again you *are* finding an average variance!

Why are you so hung up on finding average variance and average uncertainty? They are USELESS for anything! Why can’t you answer that simple question?

“All the random variables have the same variance because they are the same variable, hence the average variance is the same as any individual variance.”

Huh? Var(Y) = Var(X1) + Var(X2)

Who says X1 and X2 have the same variance? Why would you want to divide Var(Y) by N, the total number of elements if not to find the average variance? And what use is the average variance?

“If you know the variance of a random variable you know the variance of the mean of N variables is equal to the variance of the single variable divided by N.”

Word salad that is meaningless. You are *still* trying to find an average variance!

“And from that you can say that if you take a random independent sample from a population with a known variance ”

Again, if you know the population variance THEN WHY ARE YOU TAKING SAMPLES and trying to find the variance of the sample? If it isn’t the same as the population variance THEN SOMETHING IS WRONG with your sampling!

“And more practically, this means the standard error of the mean is the standard deviation of the population divided by the square root of the sample size”

And now we’ve circled back around again! The standard deviation of the sample means, what you call the standard error of the mean, is *NOT* the accuracy of the mean. Dividing the standard deviation of the sample means by the square root of the sample size TELLS you nothing about the accuracy of the mean you calculated from the sample means! It *ONLY* tells you how precisely you have calculated the mean of the sample means!

Each measurement element consists of two parts: Stated Value +/- Uncertainty.

The final uncertainty is the sum of the uncertainties of the individual measurements. That uncertainty *is* the uncertainty of the total population and is also the uncertainty that gets propagated to the mean.

*YOU* want to throw that uncertainty away (thus you always assume that all uncertainty cancels) so you can call the standard deviation of the sample means the uncertainty of the mean you calculate from the sample means. The uncertainties of the measurement elements just get ignored.

*EXACTLY* what all the so-called CAGW climate scientists do!

And now you’ve dragged me back into your same old delusion. I’ll not answer you in this thread any longer. You simply refuse to learn how uncertainty is propagated!

” If you already have the population variance then why are you taking samples in order to find it?”

You’re not, and this constant jumping between similar sounding terms is why I suggest you need to concentrate on what’s being discussed. In this case I’m saying that *if* you know the variance of the population you can calculate what the variance of the mean of a random sample of a specific size will be.

This would be the case in the example of rolling dice I suggested you test for yourself.

Of course, in most cases where you are taking samples you don’t know the population mean or variance, so it has to be estimated from the sample.

“There is no “sum of the variances”. The variance is a sum of differences squared for all elements divided by the population size.”

You spent ages trying to convince me that the correct way to calculate the variance of the mean of random variables was to sum the variances. I’m just saying the correct way is to sum the variances and then divide by the square of the sample size.

“And, again, when you divide by N again you *are* finding an average variance!”

And again, I am *not* dividing by N, but by N^2.

“Why are you so hung up on finding average variance and average uncertainty? ”

Why do you keep projecting your own misunderstandings on to me? You seem to be so triggered by the concept of dividing anything by N that you assume it must be an average. If you add the variances of N values and then divide by N^2, you are not finding the average variance. If all the variances are the same, this becomes var / N. You are not finding the average variance, you are dividing the average variance by N.
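Written out as a short sketch, and assuming the X_i are independent (the assumption behind adding variances in the first place), the two quantities being argued over are:

```latex
\operatorname{Var}\!\left(\frac{1}{N}\sum_{i=1}^{N} X_i\right)
  = \frac{1}{N^{2}}\sum_{i=1}^{N}\operatorname{Var}(X_i)
\qquad\text{versus}\qquad
\overline{\operatorname{Var}}
  = \frac{1}{N}\sum_{i=1}^{N}\operatorname{Var}(X_i).
```

If every Var(X_i) equals the same σ^2, the left-hand quantity reduces to σ^2/N and the right-hand one to σ^2, so the variance of the mean is the average variance divided by N.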

“Again, if you know the population variance THEN WHY ARE YOU TAKING SAMPLES and trying to find the variance of the sample? If it isn’t the same as the population variance THEN SOMETHING IS WRONG with your sampling!”

Again, I am not trying to find the variance of the sample. I am trying to find the variance of the sample mean. I specifically say that in the sentence you were quoting.

“And now we’ve circled back around again! The standard deviation of the sample means, what you call the standard error of the mean, is *NOT* the accuracy of the mean. ”

And you’ve changed the subject and gone off on another rant about uncertainty. I said nothing about the accuracy of the mean, I was simply pointing out that calculating the variance of the mean of random variables is how you get to the formula for the standard error of the mean.

“There’s little point debating variance with someone who still doesn’t understand that the variance of a mean of random variables is not the same as the variance of the sum.”

Another non sequitur! You *still* can’t address the fact that a mid-range temperature masks the variance of the entire temperature profile! You *still* can’t address that any averaging involving those mid-range temperatures does not accurately describe anything, since there is no associated variance provided for the averages!

Without knowing the variance that goes along with those averages there is no way to judge the uncertainty associated with those averages.

As usual for a statistician and/or climate scientist you just want to ignore anything that might question whether the data is usable or not. You and your buddies just want to assume that all variances are zero and all uncertainty cancels.

That’s not science at all! It is ideology and religious dogma!

I went to Fresno once, meth-head hell. I won’t be going back.

As a fan of Ansel Adams and John Muir since I was a boy, I did have the privilege though of visiting and hiking in the Sierra Nevada mountains, one of the most beautiful places on earth.

I learned to avoid Fresno also. There is a story about original Spanish Conquistadores surveying the area. The Capitan sent out two search parties to collect representative samples of fruits. The first group came back with bunches of grapes from the coastal area. The Capitan tasted them and judged them delicious. The second group came back with bunches of grapes from further inland. Upon tasting them, the Capitan spat them out and exclaimed: “Fresno!”

Actually, this is a scene out of the movie “Fresno.”

As someone who moved to Fresno in the 1980s and still visits regularly, I can attest that it is as hot (maximum) as it ever was. And that the temperatures at night are qualitatively different: it used to be that the scorching daytime temperatures (in Spring at least) would drop fairly rapidly after dark, reaching comfort by 10 pm or so.

But that is no longer true. It takes until midnight or later for even moderately hot temperatures (90s is moderate by Fresno standards) to drop to the 60s.

“[T]he adjustment procedure is heavily influenced by what the scientist expects…”

______________________________________________________________

So far in 2022 the number of adjustments that GISTEMP makes to their Land Ocean Temperature Index looks like this:

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec

291 243 252 401 346

The 346 represents what GISTEMP said in April 2022 compared to May 2022.

These several hundred changes all the way back to the 19th century go on every month.

And those temperature adjustments over time form a pattern (see the image that’s supposed to appear somewhere). Here’s a LINK

Jim ==> To be clear, when Christy is speaking of adjusting temperature records, he means adjusting what appears to the world as a single location record — Fresno, California — but is in reality many differing records strung together as the weather station for Fresno has moved over time.

Understanding the record depends on understanding the changing landscape surrounding the weather station.

Fresno is a hot, dry place in the hot, dry Central Valley of California. Half a million people live there.

A note on the Tmin record: Tmin for an agricultural region like Fresno is only really significant if it crosses the 32°F/0°C line. In other words, above or below freezing. A shift from about 39°F to 42°F looks like “3 whole degrees!” but has almost no effect on anything important in Fresno. May save on heating bills…..

May I suggest that those who have a link for their own location’s temperature records copy that link into TheWayBackMachine’s search function? Then compare the results?

PS Over time the “link” address may have changed. (ie going from “.com” to “.gov”.)

Gunga ==> You can give it a try….not always successful when the page runs a script to create a graph or text file in present time.

Starting in 2007 I started to copy/paste the tables of record highs and lows for Columbus Ohio into Excel. (Sometimes it took a bit of editing to get the dates, values and years into columns.)

But I did have the web address and added the oldest (2002) I could find on TheWayBackMachine.

Now they show a PDF rather than a table of numbers.

The last time I compared the different lists, about 10% of both record highs and lows had been changed. Not new records set, but old values changed.

PS Here’s an example of what you might find. (In 2016 they changed the web address.)

https://web.archive.org/web/2016*/http:/www.erh.noaa.gov/iln/cmhrec.htm

An EPA graphic shows the trend in the contiguous US has been higher low temperatures rather than higher highs. The graph of Unusually Hot Summer Temperatures shows that “hot daily highs” (smoothed) for 1910-2020 have just returned to the percent of land area of the 1930’s. From the perspective of the 20th Century there is nothing unusual about today’s daily high summer temperatures. The orange line shows that the percent of the US land area with warmer low temperatures has more than doubled since the 1930’s, from about 20% to almost 45% of the country, another indication that it is warmer lows rather than hotter highs.

Climate Change Indicators: High and Low Temperatures

https://www.epa.gov/climate-indicators/climate-change-indicators-high-and-low-temperatures

This indicator describes trends in unusually hot and cold temperatures across the United States.

Figure 1. Area of the Contiguous 48 States with Unusually Hot Summer Temperatures, 1910–2020

https://www.nature.com/articles/s41598-018-25212 (Web view)

See this agricultural study for confirmation of what you just said. Sooner or later climate science is going to need to explain some of these studies from other disciplines.

Link says “not found” at Nature.com.

Try this link.

U.S. Agro-Climate in 20th Century: Growing Degree Days, First and Last Frost, Growing Season Length, and Impacts on Crop Yields | Scientific Reports (nature.com)

Could maybe an editor update the link on my 1st post?

Thanks. Yep, modestly warmer weather is better, all things considered. So GDD is a negative, but nearly everything else is better for agriculture. What is happening is warmer lows, mostly, and not significantly higher highs.

And how does this unscientific guesswork affect the associated uncertainty? Surely it is increased by at least the adjustment amount.

A better conclusion to all this adjustment is that such data is unfit for climate analysis purposes and should never be used in climate analysis.

I’m baffled that someone trained in real science and math would happily casually adjust data and then think they are performing science.

Patrick ==> The “adjusting” here is an effort to make sense of a record that is not truly continuous. For 20 years, they measured temperature (hypothetically) in an orange grove. Then the weather station moved to the local fire station, made of fine red brick, on the edge of town, which was soon surrounded by ten thousand houses built for returning WWII vets. Then it moved to the little regional airport, once surrounded by a few cement runways, which now has a huge air-conditioned terminal (pumping all that heat back outside) and a staggering array of new runways and roadways, creating a massive heat island inside a heat island.

How do we interpret the patched-together records which measured temperature in different places under different, constantly changing conditions?

You can’t piece them together. There is no time machine that lets you go back and determine the microclimates that existing thermometers were measuring. Adjusting “official” temperature data in order to manufacture “long” records is a farcical attempt at science. At best, it should be done on individual studies where the reasons and algorithms are shown to all so that reasonableness can be seen.

Jim ==> Quite right — it is a fool’s errand. The best we can do is simply show the real un-fudged data and annotate it.

In the Real World®, the little squeeny bit of rise in temps, a degree here or there is not worth worrying about.

For a record of measurements to be scientific and used to do Science, it must be the same place, the same time, the same conditions, the same tools, etc etc etc over a long enough period of time to be important.

It’s not clear to me what the criticisms are against “good adjustments”?

If urbanization has created a warming trend, then how do we attribute that warming to urbanization when others attribute it to rising greenhouse gases?? That attribution determines major policies from local to global.

By comparing rural stations with urbanized stations, it can be better argued, even with a given necessary level of uncertainty, that the warming was due to urbanization.

Again, the rationale behind a lot of the adjustments is to eliminate UHI temperature increases. Again, why? It seems to me the overriding expectation is to create a “long record” with no obvious warming that can’t be blamed on CO2.

Think about it. Short length records that appear and disappear can cause lots of variation and introduce spurious trends. A “long record” with smooth changes looks to be free of any untoward variations and makes for a nice smooth trend.

I agree with you about including UHI. From my point of view UHI generates IR while rural has a substantial direct reflection of the sun’s insolation. Why would the warmists not want this? Because it displaces CO2 as a warming factor and further minimizes its effects. Can’t have that!

Jim ==> I quite agree — in a more general sense, way too much of modern science involves results which are then “corrected” for things not measured.

Adjustments to past data are subjective at best, reflecting the biases of those doing the adjustments.

It is perfectly fine to do these kinds of adjustments in a study of temperature data but the adjustments *must* be justified and published as part of the study if the study is to be considered credible.

It is my opinion that the adjustments should actually be shown as increases in the uncertainty of the data and that the resulting uncertainty be properly propagated into the final results. I suspect that this isn’t being done with climate studies/models because the final uncertainty would overwhelm the tiny differences the studies are attempting to identify.

How do you homogenize temperatures from different stations, some of which are located over sand/clay/bermuda grass/fescue grass/ kentucky bluegrass/cement/etc, some of which change color because of changing seasons, in order to estimate the temperature at a location with no measuring station?

No. You throw the data out. Or you attach large error bars which makes the data useless in most cases.

If you approach this as a science, which the warmers don’t, you have to treat the data as you would in any science – strictly and honestly. And if you screwed up your data collection you are honest and say “we don’t know because we don’t have the data.”

Patrick ==> I once did a thought experiment essay here: “Mind over Math: Throwing Out the Numbers” https://wattsupwiththat.com/2021/03/07/mind-over-math-throwing-out-the-numbers/ which offered a glimpse at what can be done.

It is unfortunate, and it makes things more complicated and harder to grasp, but let us suppose we have some good reason to want to know how the cost of something in particular (natural gas? tea? caviar? bricks?) has changed over the past several decades in Poland vs change in the USA.

Would we get a truer picture by sticking to the exact reported prices in whatever exchange medium Poland has used over that time and the exact reported prices in $ the USA has seen

or

might we want to adjust for inflation in Poland and the USA over that time period (assuming reasonable data on that!!) and also adjust for the changing exchange rates over that time period?

I want to make an edit. BUT, and this happens frequently, when I invoke Edit, I get nothing, just a blank text box so it is impossible to make any change? Is there some way around this difficulty?

You are mixing a “counting” function over time with a “measurement” function which is not a time function. They are two different things.

“might we want to adjust for inflation in Poland and the USA over that time period (assuming reasonable data on that!!) and also adjust for the changing exchange rates over that time period?”

The operative phrase in this is “assuming reasonable data”.

Inflation and exchange rates really have no uncertainty associated with them, or at least the uncertainty is far smaller than the actual values themselves. They are considered to be “known constants”. Those constants may change over time but that only requires doing a piecewise analysis of the values. I.e. inflation = i_1 at time t_1 and i_2 at time t_2. i_1 and i_2 are still known constants with no uncertainty.

That’s not the case for temperature measurements, especially measurements in the past. They are *not* known constants. Temp measurements are variables with uncertain values, typically written as “stated value +/- uncertainty”.

When you adjust that “stated value +/- uncertainty” what are you actually adjusting? The “stated value” or the uncertainty interval surrounding that “stated value”?

Since you simply cannot know the calibration of that measuring instrument at any specific point in time (outside of a calibration lab anyway) it doesn’t make any sense to me as an engineer to adjust the “stated value”. All you can really do is adjust the “uncertainty interval” to allow for not knowing the actual calibration. That is exactly what the uncertainty interval is to be used for!

Just confirms how absurd it is for climate ‘science’ to quote results to ridiculous levels of accuracy while making claims about the future based on models, which will be 100% wrong.

What a tragedy that science has been reduced to nonsensical measures such as ‘consensus’. If only Feynman were alive now — I cannot believe he’d have stooped to the unbelievably low standards set by so-called ‘scientists’ today.

Books such as this should be part of the curriculum, but of course they’ll just be rubbished by those who think “they know better”.

“The Science is Settled”.

The Data isn’t.

None of the historical USHCN meteorological stations have sensors accurate enough to use for climatological studies. The USCRN aspirated PRT sensors are good enough, but they were only installed beginning about 2003 and only cover the US.

The historical readings can be adjusted as carefully as one likes. But the results will not produce a climatologically useful record. I haven’t read John’s book, but expect that problem does not appear in its pages.

Pat ==> Back in the day of the original Surface Stations Project, I evaluated the Santo Domingo, Dominican Republic station. It was actually pretty good, in the middle of an open field behind the National Weather Bureau. One oddity was the field would be planted in corn in the spring, which would grow to six feet surrounding the fenced station. The other oddity was the concrete block set to one side of the Stevenson Screen containing the thermometer.

I asked the retired Chief Meteorologist (who still reported to work every day) about the block. He explained that it was there for the shorter weathermen to stand on when they checked the thermometer, so their eyes would be level with the top of the mercury column.

He lamented that most of the short men were too proud to stand on it, resulting in temperature readings that were often a whole degree too high.

“resulting in temperature readings that were often a whole degree too high”

This is quite interesting, and maybe just a little bit humorous.

What made them suspect short, proud weathermen were taking incorrect readings in the first place?

Did they take some ‘proud’ (not the adjective I’d use) short weatherman and make him take measurements when on a block and when not, to see how far out his measurements were?

How did they separate the proud short weathermen from the others? If they were too proud to stand on the block, maybe they were also too proud to admit they didn’t stand on it.

How did they fix the problem?

And what about weathermen who were tall, so had to stoop to get their eyes level with the thermometer?

Now, you are discussing both uncertainty and error in measurement. Not an easy subject.

I know of someone who has all the answers.

Chris ==> This is a true anecdote — the Chief Meteorologist had been supervising this weather station for decades, all the weathermen worked for him. They were his employees. He knew his people, he watched them work every day.

They didn’t fix the problem. But he did tell me the story by way of explanation for some of the defects of his temperature records.

Hispanic cultures trap even the bosses. For decades he did nothing about it. But he had a reasonable excuse for the Gringos.

Is that called systematic error? Did the minimum temperatures have less error?

Jim ==> It is a perfect example why these older human-recorded records, even when long and complete, have to be taken with a shaker of salt.

I agree completely, although the doctor limits my intake. It is one more reason to consider the uncertainty of past records to be fairly large. An NWS employee once told me that before 1900 the uncertainty should be ±4, and before WWII ±2. It just floors me that the folks dealing in anomalies believe they can “adjust” data with this kind of uncertainty and then calculate anomalies to 1/1000th of a degree. Graphs without shading to indicate the uncertainty range of these temps (not the Standard Error of the Mean) are just creating propaganda.

Kip, even perfectly sited and maintained sensors will produce temperature errors due to irradiation and wind speed effects. Every calibration study shows this.

The GISS fix for the short weatherman problem might just be to assume consistent methodology and subtract 1 C from all the temps. Et voilà! Accuracy! 🙂

Pat ==> Yeah — but what about the tall guys? How many short? How short were those short guys?

Anyway, true story!

Pat,

Respectfully, your comment might be misleading to some unknowledgeable people. Even USCRN aspirated PRT sensors can have uncertainty wider than the differences climate scientists want to identify.

It’s not just the capability of the sensor that determines total uncertainty but the entire measurement device. Even a USCRN aspirated device can have buildup in the airflow path affecting the airflow across the sensor. Things like snow, ice, insect infestation, etc. can affect the uncertainty. Component drift in the electronic circuits associated with the sensor can be unknown over time and affect the total uncertainty at any specific point in time.

It is sad that most climate studies/models simply don’t take into account uncertainty at all. They just assume that all uncertainty cancels and disappears into the averages they take.
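To make the "cancels in the average" assumption concrete, here is a small simulation sketch. It is not taken from any climate study. It assumes Gaussian random error of 0.5 degrees per reading and, in the second case, a shared 1.0 degree bias; both figures and the function name `mean_error` are hypothetical. The random part shrinks as readings are averaged, but a bias common to every reading does not.

```python
import random
import statistics

def mean_error(n, sigma, bias, seed=0):
    """Average error of n readings, each with random noise plus a shared bias."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    errors = [rng.gauss(0.0, sigma) + bias for _ in range(n)]
    return statistics.fmean(errors)

# Hypothetical numbers: 10,000 readings, 0.5 deg random scatter per reading.
random_only = mean_error(n=10_000, sigma=0.5, bias=0.0)
with_bias = mean_error(n=10_000, sigma=0.5, bias=1.0)

print(f"random error only, mean error: {random_only:+.3f}")
print(f"with shared 1.0 deg bias, mean error: {with_bias:+.3f}")
```

The first figure lands close to zero and the second stays close to the full bias, which is why averaging alone cannot justify treating all uncertainty as cancelled.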

Didn’t Christy put much of this down to the increase in irrigation, and hence water vapour increase locally?

There are so many factors that apply to temperatures that it is impossible to even know them let alone quantify them. How many rural measuring stations are affected by evapotranspiration of crops surrounding the measuring station (i.e. higher humidity)? How should this be included in the uncertainty interval of the data from the station?

Yet we are expected to believe that the CAGW crowd can measure temperature differences out to the thousandths digit?

This isn’t the first time I’ve been informed that it isn’t so much the maximum temperatures that are increasing as it is the minimums.

Hands up though who has seen stories by MSM telling us this. Anyone?

If you believed MSM you’d think that it was the maximum temperatures that were increasing the most.

The San Joaquin Valley is a rift valley, an extension of the rifting that also includes the Salton Sea further to the south. The Salton Sea hosts an active volcanic system, so are we sure that no such system exists (at depth) in the San Joaquin Valley?

So now there are “good adjustments” and “bad adjustments”?

I don’t buy it.

Available on Amazon for Kindle for $6.95

https://www.amazon.com/getting-hotter-Fresno-not-hometowns-ebook/dp/B091JJJW8R/ref=sr_1_1?crid=2LH9KT2AH7QUK&keywords=is+it+getting+hotter+in+fresno&qid=1655492956&sprefix=%2Caps%2C78&sr=8-1

Thanks for the link. I just ordered the Kindle version!

If you have to adjust, you haven’t chosen your site, the equipment, or both from a good science point of view. USCRN has shown how it’s done. It’s not a matter of re-inventing the wheel but of getting matters right from the get-go. There is no justification for adjusting PROPERLY collated data.