The pause continues…

Dr. Roy Spencer writes:

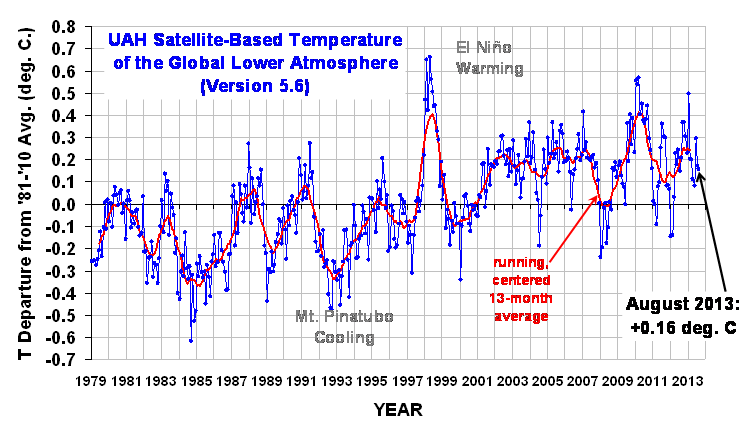

The Version 5.6 global average lower tropospheric temperature (LT) anomaly for August, 2013 is +0.16 deg. C (click for large version):

The global, hemispheric, and tropical LT anomalies from the 30-year (1981-2010) average for the last 20 months are:

| YR | MON | GLOBAL | NH | SH | TROPICS |
|---|---|---|---|---|---|
| 2012 | 1 | -0.145 | -0.088 | -0.203 | -0.245 |
| 2012 | 2 | -0.140 | -0.016 | -0.263 | -0.326 |
| 2012 | 3 | +0.033 | +0.064 | +0.002 | -0.238 |
| 2012 | 4 | +0.230 | +0.346 | +0.114 | -0.251 |
| 2012 | 5 | +0.178 | +0.338 | +0.018 | -0.102 |
| 2012 | 6 | +0.244 | +0.378 | +0.111 | -0.016 |
| 2012 | 7 | +0.149 | +0.263 | +0.035 | +0.146 |
| 2012 | 8 | +0.210 | +0.195 | +0.225 | +0.069 |
| 2012 | 9 | +0.369 | +0.376 | +0.361 | +0.174 |
| 2012 | 10 | +0.367 | +0.326 | +0.409 | +0.155 |
| 2012 | 11 | +0.305 | +0.319 | +0.292 | +0.209 |
| 2012 | 12 | +0.229 | +0.153 | +0.305 | +0.199 |
| 2013 | 1 | +0.496 | +0.512 | +0.481 | +0.387 |
| 2013 | 2 | +0.203 | +0.372 | +0.033 | +0.195 |
| 2013 | 3 | +0.200 | +0.333 | +0.067 | +0.243 |
| 2013 | 4 | +0.114 | +0.128 | +0.101 | +0.165 |
| 2013 | 5 | +0.083 | +0.180 | -0.015 | +0.112 |
| 2013 | 6 | +0.295 | +0.335 | +0.255 | +0.220 |
| 2013 | 7 | +0.173 | +0.134 | +0.212 | +0.074 |
| 2013 | 8 | +0.158 | +0.107 | +0.208 | +0.009 |

Note: In the previous version (v5.5, still provided to NOAA due to contract with NCDC) the temps are slightly cooler, probably due to the uncorrected diurnal drift of NOAA-18. Recall that in v5.6, we include METOP-A and NOAA-19, and since June they are the only two satellites in the v5.6 dataset whereas v5.5 does not include METOP-A and NOAA-19.

Names of popular data files:

From the UAH online press release by Dr. Phillip Gentry:

Global Temperature Report: August 2013

- Global climate trend since Nov. 16, 1978: +0.14 C per decade

August temperatures (preliminary):

- Global composite temp.: +0.16 C (about 0.29 degrees Fahrenheit) above 30-year average for August.

- Northern Hemisphere: +0.11 C (about 0.20 degrees Fahrenheit) above 30-year average for August.

- Southern Hemisphere: +0.21 C (about 0.39 degrees Fahrenheit) above 30-year average for August.

- Tropics: +0.01 C (about 0.02 degrees Fahrenheit) above 30-year average for August.

July temperatures (revised):

Global Composite: +0.17 C above 30-year average

Northern Hemisphere: +0.13 C above 30-year average

Southern Hemisphere: +0.21 C above 30-year average

Tropics: +0.07 C above 30-year average

(All temperature anomalies are based on a 30-year average (1981-2010) for the month reported.)

Notes on data released Sept. 10, 2013:

Compared to seasonal norms, in August the coolest area on the globe was southern Greenland, where temperatures in the troposphere were about 1.97 C (about 3.55 degrees F) cooler than normal, said Dr. John Christy, a professor of atmospheric science and director of the Earth System Science Center (ESSC) at The University of Alabama in Huntsville. The warmest area was south of New Zealand in the South Pacific, where tropospheric temperatures were 2.82 C (about 5.1 degrees F) warmer than seasonal norms.

Archived color maps of local temperature anomalies are available on-line at:

As part of an ongoing joint project between UAHuntsville, NOAA and NASA, Christy and Dr. Roy Spencer, an ESSC principal scientist, use data gathered by advanced microwave sounding units on NOAA and NASA satellites to get accurate temperature readings for almost all regions of the Earth. This includes remote desert, ocean and rain forest areas where reliable climate data are not otherwise available.

The satellite-based instruments measure the temperature of the atmosphere from the surface up to an altitude of about eight kilometers above sea level. Once the monthly temperature data is collected and processed, it is placed in a “public” computer file for immediate access by atmospheric scientists in the U.S. and abroad.

Neither Christy nor Spencer receives any research support or funding from oil, coal or industrial companies or organizations, or from any private or special interest groups. All of their climate research funding comes from federal and state grants or contracts.

— 30 —

So the lack of climate change is worse than we thought.

I was wondering if someone would break the chart down to just the current month, i.e., show nothing but the month of August for all of the years in the chart. I think that would be useful to graph-challenged readers like me.
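For the request above, here is a minimal sketch in Python that keeps only one calendar month from a monthly series. The rows are the GLOBAL column of the 20-month table in the post, so only two Augusts are available here; the full UAH data file would be needed to cover every year in the chart. The name `same_month_series` is made up for illustration.

```python
# (year, month, global LT anomaly in deg C), copied from the table in the post.
ROWS = [
    (2012, 1, -0.145), (2012, 2, -0.140), (2012, 3, 0.033), (2012, 4, 0.230),
    (2012, 5, 0.178), (2012, 6, 0.244), (2012, 7, 0.149), (2012, 8, 0.210),
    (2012, 9, 0.369), (2012, 10, 0.367), (2012, 11, 0.305), (2012, 12, 0.229),
    (2013, 1, 0.496), (2013, 2, 0.203), (2013, 3, 0.200), (2013, 4, 0.114),
    (2013, 5, 0.083), (2013, 6, 0.295), (2013, 7, 0.173), (2013, 8, 0.158),
]


def same_month_series(rows, month):
    """Return (year, anomaly) pairs for one calendar month, oldest first."""
    return sorted((year, value) for year, mon, value in rows if mon == month)


if __name__ == "__main__":
    # Print every August in the data, one line per year.
    for year, value in same_month_series(ROWS, 8):
        print(f"{year}  Aug  {value:+.3f}")
```

Feeding the resulting pairs to any plotting tool gives the one-month-per-year view.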

And as I always try to provide on this thread, for those interested, here’s a link to the full sea surface temperature update for August 2013 (Reynolds OI.v2 data). I posted it yesterday:

http://bobtisdale.wordpress.com/2013/09/09/august-2013-sea-surface-temperature-sst-anomaly-update/

That unusual warming in the extratropical North Pacific was still underway in August.

Regards

So now we are at 201 months of no warming?

RSS numbers also out.

Down slightly by 0.05C

http://notalotofpeopleknowthat.wordpress.com/2013/09/10/uah-rss-numbers-for-august/

Bob Tisdale: Measuring sea levels is problematic. How reliable is measuring sea temp? More or less confidence than say land?

All those trillions spent stopping climate change have worked a treat.

God I hate graphs with running averages or regression superimposed on them, as if our naked eye is incapable of picking out trends. All it does is obscure the data.

The underlying warming trend (after removing the ENSO, AMO, solar cycle, and volcano influences) of the UAH/RSS average and the lower troposphere provided by the radiosonde balloons/HadAT going back to 1958 is a pretty steady 0.056C per decade.

http://s22.postimg.org/jyr62xv35/UAH_RSS_Had_At_Warming_Trend.png

Bill,

Right. It would be warming except for the cooling. (heavy sigh)

For a solar/climate connection to show up, solar conditions have to vary by a certain magnitude over a certain duration of time; anything short of that WILL NOT BE ENOUGH to show a solar/climate connection.

This is why it is hard to show solar/climate connections from the end of the Dalton Minimum until very recently.

However, the sun has gone into a prolonged solar minimum state which is turning out much WEAKER than the conventional forecast thus far, and IS going to have an impact on the climate going forward if the prolonged solar minimum reaches the many solar parameters I have talked about:

solar flux sub 90 sustained.

solar wind sub 350 km/sec. sustained.

UV light off upwards of 50% sustained.

cosmic ray count 6500 or more sustained.

solar irradiance off .015% or more sustained

ap index 5.0 or lower 98+% of the time sustained.

These solar values following several years of sub-solar activity in general, which we have had since 2005.

Salvatore Del Prete says:

September 10, 2013 at 10:47 am

That is the basic flaw in the reasoning of those who keep trying to say there are no solar/climate relationships.

Salvatore Del Prete says:

September 10, 2013 at 10:50 am

Many are in denial of the climatic response to the last two prolonged solar minimum periods (Maunder Minimum/Dalton Minimum) and do not accept the concept of thresholds, which require a certain magnitude of change and duration of change in the state of solar activity in order for it to exert an influence on the climate.

The period from 1844-2005 should have shown weak to no solar/climate correlations, because solar activity throughout that time was in a steady, regular, strong 11-year sunspot cycle with peaks and lulls which would have masked any potential solar/climate correlations.

To clarify, there is not one prolonged solar minimum period during that time frame, following several years of sub-solar activity in general, to refer to in order to see whether prolonged solar minimum conditions do or do not exert an influence on the climate, directly and through secondary means.

Salvatore Del Prete says:

September 10, 2013 at 10:53 am

The low solar activity associated with solar cycle 14 does not meet my criteria for a prolonged solar minimum period following many years of sub-solar activity in general, and thus for a definitive solar/climate correlation.

Mike Silver says:

September 10, 2013 at 11:40 am

rgbatduke says:

“As I said, if the sun does enter a prolonged period of comparative inactivity with extended, weak solar cycles, then no matter what the climate does it will be very useful data for those seeking not to assert certain knowledge that they do not, in fact, possess but to determine what the correct theory is by building constructive, physics-based theories that explain the data as the climate moves through something more than a monotonic behavior of irregular warming, which is pretty much all that has persisted for the last 30 to 40 years (as it did for the similar length period at the beginning of the 20th century from roughly 1910 to 1950).”

The weak solar cycle started late 2008, and we have had highly negative AO/NAO conditions and very low land temperature episodes already. It helps to keep up to date.

“As far as I know, there is no convincing evidence that the climate over the last 2000 years was modulated by solar magnetic activity, and we lack direct observational evidence in the form of sunspot counts to extend the Maunder Minimum assertion back to earlier periods of cooling.”

There are Aurora records, and reconstructions:

http://www.aanda.org/articles/aa/full/2007/19/aa6725-06/img81.gif

http://davidpratt.info/climate/clim9-10.jpg

“There is no convincing evidence I’m aware of that solar magnetic activity had anything to do with the Younger Dryas itself,”

Did you check google scholar?

http://www.sciencedirect.com/science/article/pii/S0012821X03007015

“I especially doubt that the climate is a one-trick pony, slaved to solar magnetic activity to the exclusion of all else,”

There are always negative AO/NAO conditions when the solar plasma is slow:

http://www.leif.org/research/Ap-1844-now.png

[snip . . misposting . . mod]

Bill Illis says:

September 10, 2013 at 11:39 am

So, in round numbers, there has been a 0.16 degree change since the baseline temperature of the mid-1970’s, or 0.16 degree in 45+ years, right? Makes it just under 0.4 degrees per century for near on the last 1/2 century? 8<)

It went out before I had a chance to edit it, all that additional stuff.. Sorry

It’s all on the scale and range of graphs selected. We are on the 6th upswing since the end of the last ice age and this upswing is cooler than the preceding 5 upswings as we continue our downward slide to a return to the normal temps for this epoch which is what we term the ice age.

In the nearer term view we are continuing our exit from the little ice age.

In the even nearer term we might, as Bill Illis has pointed out with no context, think we have some minor CO2-based warming going on.

Or we can look at an even nearer term and see we are in a cooling phase.

All of this makes for interesting discussion points, while the smart money is buying up condo land on the eastern edge of the future American Atlantic shoreline at the edge of our current continental shelf 🙂 (and speculating on where the population of Canada is going to migrate)

The MET and others have already suggested some cooling as we continue through the cool phase of the PDO. The wild card is the solar cycle. Today we have one, tiny little sunspot that some think would not have been counted in past centuries. In any case, we’re that tiny spot away from a sun spotless day with many likely to follow. If we have the grand solar minimum many or most astronomers now expect, we’ll get a better handle on whether or not it’s just TSI or other solar radiation wavelength that forces global temperature change. Exciting times!

Is there a plan to move back to the replacement AMSU-2?

Maybe we could convince Roy to supply the data in kelvin or supply a climatology in addition to anomalies

Wow, so Bob Tisdale’s global sea surface temps graph is similar to the AMSU satellite data from Roy Spencer. The correlation is probably r² = 0.99 or something similar, just from looking at both graphs. This confirms Roy Spencer’s and RSS data as a very reliable indicator of global temperature trends. It shows that HadCRUT, GISS, BEST, etc. land-based thermometer Stevenson boxes are probably way off due to UHI etc.

John Mason says: September 10, 2013 at 11:57 am

All of this makes for interesting discussion points, while the smart money is buying up condo land on the eastern edge of the future American Atlantic shoreline at the edge of our current continental shelf 🙂 (and speculating on where the population of Canada is going to migrate)

>>>>>>>>>>>>>>>>>>>>>>>

Just make sure you have an ironclad trust passing on to your distant heirs : >)

The only surprise will be the speed of the Solar effect.

Jeff in Calgary says:

September 10, 2013 at 11:19 am

So now we are at 201 months of no warming

Well, Yes and No, Jeff!

The RSS figures just released can be shown to have a tiny negative slope all the way back to November 1996, which is indeed 201 months.

However, for this UAH V5.6 dataset, you really have to cherry-pick your dates to find any cooling (as opposed to not much warming). The furthest back you can go with a negative slope is July 2008, or only 61 months, but within these 61 months there are several shorter periods ending in August 2013 which have a positive slope, so I think the statisticians among us will pour cold water on the idea that we can call it “cooling”.

To illustrate how confusion can be spread with the misuse of statistics, the latest 61-month period is the warmest such period in the dataset, even though it has a negative slope.

You can do a couple of other analyses on this data which also don’t show any cooling – just a deceleration in the warming. If you work out the 34 annual temperatures from 1979, and fit a least squares trend line through those for each year up to the end of 2012, you can go all the way back to 2005 and find a negative trend, though now the trends from 2006, 2007 and 2008 are all positive. But before you say, “Aha 7 years cooling”, repeat the exercise using “years” which run from September to August, in order to include the very latest data, and your 7-year cooling disappears, leaving only the 2 recent annual periods from Sept 2009 and 2010 with negative trends.

Confusing? And that’s without even mentioning statistical significance, or wondering whether each month can be considered to be independent of the previous one.
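The "how far back can you go with a negative slope" exercise described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it fits an ordinary least-squares line against the month index, the helper names `ols_slope` and `earliest_negative_start` are invented here, and the data are just the 20 GLOBAL values from the table in the post rather than the full record since 1979. It says nothing about statistical significance or autocorrelation.

```python
# GLOBAL anomalies (deg C), Jan 2012 to Aug 2013, from the table in the post.
GLOBAL = [
    -0.145, -0.140, 0.033, 0.230, 0.178, 0.244, 0.149, 0.210, 0.369, 0.367,
    0.305, 0.229, 0.496, 0.203, 0.200, 0.114, 0.083, 0.295, 0.173, 0.158,
]


def ols_slope(values):
    """Least-squares slope of values against their index (units per step)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return sxy / sxx


def earliest_negative_start(values, min_len=3):
    """Earliest start index whose trend to the final point is negative, else None."""
    for start in range(len(values) - min_len + 1):
        if ols_slope(values[start:]) < 0:
            return start
    return None


if __name__ == "__main__":
    start = earliest_negative_start(GLOBAL)
    print("earliest start index with a negative trend to Aug 2013:", start)
    # Later start points can still give positive slopes, which is the
    # cherry-picking caveat raised in the comment.
    for s in range(len(GLOBAL) - 2):
        print(s, f"{ols_slope(GLOBAL[s:]):+.4f} C/month")
```

Printing the slope for every start point, as the loop at the end does, makes it easy to see how the sign can flip from one start month to the next.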

Many thanks to the providers of these data sets, without whom we would not be able to look forward to the 10th of each month and pick around these few hundredths of a degree….

Phillip Bratby says:

September 10, 2013 at 11:12 am

So the lack of climate change is worse than we thought.

————————————————————

It will be for some …………

“There is no convincing evidence I’m aware of that solar magnetic activity had anything to do with the Younger Dryas itself,”

Did you check google scholar?

http://www.sciencedirect.com/science/article/pii/S0012821X03007015

A paper is not “convincing evidence”, not when there are at least two or three competing theories, NONE of them particularly compelling. Perhaps it was a small asteroid impact; there is evidence to support it. Perhaps it was the melting of an ice berm, and sudden draining of a meltwater “ocean” that flooded the arctic, interrupted the thermohaline circulation which re-established on a more southerly track and caused a thousand-year hiatus in the warming. Perhaps it was the sun. Perhaps it was whatever the hell caused the Ordovician-Silurian glacial era (my favorite has to be cosmological clouds of leftover darkons or magnetic monopoles or maybe just plain old dust that the sun drifted into, although there are a few issues with the solar wind). There might even be more hypotheses out there; these are all ones I’ve read. It could even be pure chaos, and not even have a “proximate cause” in the form of an actual controlling driver. It could be combinations of several things happening in coincidence.

I’d work through the rest of your quotes (of me), but it doesn’t matter. You included the first quote which is the only one that really matters. One way or another, we’ll improve our scientific understanding of something that we have never before seen with modern instrumentation in orbit, and perhaps learn something about the climate even if what we learn is a null result (that the solar cycle has NO discernible effect on the climate, where “discernible” means outside of the unexplained natural variability and noise, which is actually a rather huge range making it not that unlikely an outcome).

rgb

Many thanks to the providers of these data sets, without whom we would not be able to look forward to the 10th of each month and pick around these few hundredths of a degree….

Ya mon. It becomes even amusinger if you estimate any sort of reasonable statistical/experimental error and associate it with the data. Throw in a [...] C error bar, increasing as you work backwards in time, and THEN consider the p-value of the null hypothesis comparing a zero-trend least squares fit to the best trended fit via e.g. Pearson’s χ². You could drive a truck through there, and if you let the error bars grow to 0.5 C or better in the early 20th century (as is pretty reasonable) you could drive the truck back into the 19th century and barely be able to assert that it has probably warmed. And yeah, that doesn’t even begin to account for autocorrelation in what is most certainly non-Markovian, nonlinear, chaotic time evolution with multiple autocorrelation timescales ranging from seconds to centuries.

Sigh. Now, give me a CENTURY’S worth of high quality instrumental data obtained with nobody’s thumb on the damn scales, spanning something other than a monotonic (supposed) rise, and maybe we’ll get somewhere.

rgb
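One way to make the zero-trend versus trended-fit comparison concrete is sketched below in Python. It is not necessarily the calculation rgb had in mind. The assumptions are loud ones: every point gets the same independent Gaussian error bar `sigma` (the 0.1 C and 0.2 C used in the usage lines are illustrative guesses, not published uncertainties), the error bar does not grow backwards in time, autocorrelation is ignored, and the data are only the 20 GLOBAL values from the table in the post. Under those assumptions the drop in chi-squared from adding a slope has one degree of freedom, so its p-value can be written with `math.erfc`.

```python
import math

# GLOBAL anomalies (deg C), Jan 2012 to Aug 2013, from the table in the post.
GLOBAL = [
    -0.145, -0.140, 0.033, 0.230, 0.178, 0.244, 0.149, 0.210, 0.369, 0.367,
    0.305, 0.229, 0.496, 0.203, 0.200, 0.114, 0.083, 0.295, 0.173, 0.158,
]


def chi2_flat_vs_trend(values, sigma):
    """Chi-squared of the best constant fit and of the best straight-line fit,
    assuming independent Gaussian errors of equal size sigma on every point."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    slope = sxy / sxx
    chi2_flat = sum((y - y_mean) ** 2 for y in values) / sigma ** 2
    chi2_trend = sum(
        (y - (y_mean + slope * (i - x_mean))) ** 2 for i, y in enumerate(values)
    ) / sigma ** 2
    return chi2_flat, chi2_trend


def p_value_zero_trend(values, sigma):
    """p-value for 'no trend': under that null, the chi-squared drop from
    adding a slope follows a chi-squared distribution with 1 degree of freedom."""
    chi2_flat, chi2_trend = chi2_flat_vs_trend(values, sigma)
    delta = max(chi2_flat - chi2_trend, 0.0)  # guard against rounding below zero
    return math.erfc(math.sqrt(delta / 2))


if __name__ == "__main__":
    for sigma in (0.1, 0.2):  # assumed error bars, for illustration only
        print(f"sigma={sigma} C  p(no trend)={p_value_zero_trend(GLOBAL, sigma):.3f}")
```

Doubling the assumed error bar divides the chi-squared drop by four, which is why wider error bars make a zero trend so much harder to reject.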

Jeff in Calgary says:

September 10, 2013 at 11:19 am

So now we are at 201 months of no warming?

With RSS, it is actually 202 months since it went 1 month forward and 1 month back from last month. So it goes from November 1996 to August 2013. See:

http://www.woodfortrees.org/plot/rss/last:202/plot/rss/last:202/trend
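The 201 versus 202 difference comes down to how the months are counted. A small Python check, assuming both endpoint months are included in the count (the helper name `months_inclusive` is made up for this sketch):

```python
def months_inclusive(start_year, start_month, end_year, end_month):
    """Number of calendar months from start to end, counting both endpoints."""
    return (end_year - start_year) * 12 + (end_month - start_month) + 1


print(months_inclusive(1996, 11, 2013, 8))  # 202
print(months_inclusive(1996, 12, 2013, 8))  # 201
```

So November 1996 through August 2013 is 202 months when both ends are counted, and 201 only if one endpoint is left out or the start is December 1996.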

As for UAH, I cannot dispute what Richard Barraclough says about 5.6, however version 5.5 went back to January 2005 last month and the new value should push it back a bit more. I can be more specific once the new numbers come out on WFT.