NOTE: This post is important, so I’m going to sticky it at the top for quite a while. To free up the top post sections of WUWT, I’ve created a page for all Spencer and Braswell/Dessler related posts, since they are becoming numerous and popular.

UPDATE: Dr. Spencer writes: I have been contacted by Andy Dessler, who is now examining my calculations, and we are working to resolve a remaining difference there. Also, apparently his paper has not been officially published, and so he says he will change the galley proofs as a result of my blog post; here is his message:

“I’m happy to change the introductory paragraph of my paper when I get the galley proofs to better represent your views. My apologies for any misunderstanding. Also, I’ll be changing the sentence “over the decades or centuries relevant for long-term climate change, on the other hand, clouds can indeed cause significant warming” to make it clear that I’m talking about cloud feedbacks doing the action here, not cloud forcing.”

[Dessler may need to make other changes; it appears Steve McIntyre has found some flaws in how the CERES data were combined: http://climateaudit.org/2011/09/08/more-on-dessler-2010/

As I said before in my first post on Dessler’s paper, it remains to be seen if “haste makes waste”. It appears it does. -Anthony]

Update #2 (Sept. 8, 2011): Spencer adds: I have made several updates as a result of correspondence with Dessler, which will appear underlined, below. I will leave it to the reader to decide whether it was our Remote Sensing paper that should not have passed peer review (as Trenberth has alleged), or Dessler’s paper meant to refute our paper.

by Roy W. Spencer, Ph. D.

While we have had only one day to examine Andy Dessler’s new paper in GRL, I do have some initial reaction and calculations to share. At this point, it looks quite likely we will be responding to it with our own journal submission… although I doubt we will get the fast-track, red carpet treatment he got.

There are a few positive things in this new paper which make me feel like we are at least beginning to talk the same language in this debate (part of The Good). But, I believe I can already demonstrate some of The Bad, for example, showing Dessler is off by about a factor of 10 in one of his central calculations.

Finally, Dessler must be called out on The Ugly things he put in the paper (which he has now agreed to change).

1. THE GOOD

Estimating the Errors in Climate Feedback Diagnosis from Satellite Data

We are pleased that Dessler now accepts that there is at least the *potential* of a problem in diagnosing radiative feedbacks in the climate system *if* non-feedback cloud variations were to cause temperature variations. It looks like he understands the simple forcing-feedback equation we used to address the issue (some quibbles over the equation terms aside), as well as the ratio we introduced to estimate the level of contamination of feedback estimates. This is indeed progress.

He adds a new way to estimate that ratio, and gets a number which, if accurate, would indeed suggest little contamination of feedback estimates from satellite data. This is very useful, because we can now talk about numbers and how good various estimates are, rather than responding to hand waving arguments over whether “clouds cause El Nino” or other red herrings. I have what I believe to be good evidence, though, that his calculation is off by a factor of 10 or so. More on that under THE BAD, below.

Comparisons of Satellite Measurements to Climate Models

Figure 2 in his paper, we believe, helps make our point for us: there is a substantial difference between the satellite measurements and the climate models. He tries to minimize the discrepancy by putting 2-sigma error bounds on the plots and claiming the satellite data are not necessarily inconsistent with the models.

But this is NOT the same as saying the satellite data SUPPORT the models. After all, the IPCC’s best estimate projection of future warming from a doubling of CO2 (3 deg. C) is almost exactly the average of all of the models’ sensitivities! So, when the satellite observations do depart substantially from the average behavior of the models, this raises an obvious red flag.

Massive changes in the global economy based upon energy policy are not going to happen, if the best the modelers can do is claim that our observations of the climate system are not necessarily inconsistent with the models.

(BTW, a plot of all of the models, which so many people have been clamoring for, will be provided in The Ugly, below.)

2. THE BAD

The Energy Budget Estimate of How Much Clouds Cause Temperature Change

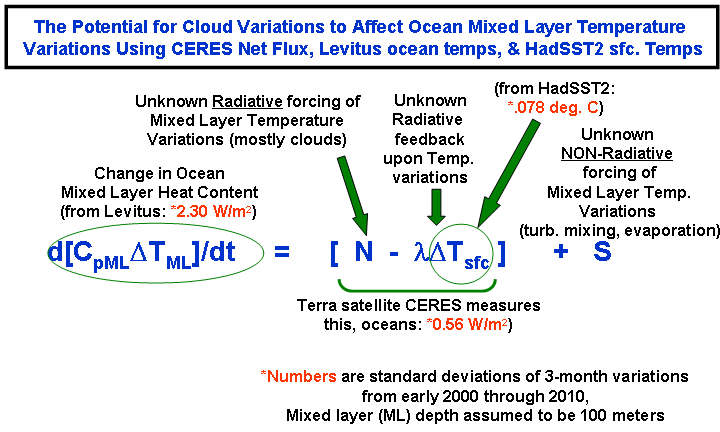

While I believe he gets a “bad” number, this is the most interesting and most useful part of Dessler’s paper. He basically uses the terms in the forcing-feedback equation we use (which is based upon basic energy budget considerations) to claim that the energy required to cause changes in the global-average ocean mixed layer temperature is far too large to have been supplied by variations in the radiative input into the ocean brought about by cloud variations (my wording).

He gets a ratio of about 20:1 for non-radiatively forced (i.e. non-cloud) temperature changes versus radiatively (mostly cloud) forced variations. If that 20:1 number is indeed good, then we would have to agree this is strong evidence against our view that a significant part of temperature variations are radiatively forced. (It looks like Andy will be revising this downward, although it’s not clear by how much because his paper is ambiguous about how he computed and then combined the radiative terms in the equation, below.)

The numbers he uses to do this, however, are quite suspect. Dessler uses NONE of the 3 most direct estimates that most researchers would use for the various terms. (A clarification on this appears below.) Why? I know we won’t be so crass as to claim in our next peer-reviewed publication (as he did in his, see The Ugly, below) that he picked certain datasets because they best supported his hypothesis.

The following graphic shows the relevant equation, and the numbers he should have used since they are the best and most direct observational estimates we have of the pertinent quantities. I invite the more technically inclined to examine this. For those geeks with calculators following along at home, you can run the numbers yourself:
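The graphic itself did not survive in this copy. As a hedged reconstruction, assuming it shows the same forcing-feedback balance written in Dessler's notation (the symbol names below are an assumption, not transcribed from the graphic), the equation and the ratio in question read:

$$
C\,\frac{dT}{dt} = \Delta R_{cld} + \Delta F_{ocean} - \lambda\,T,
\qquad
\text{ratio} = \frac{\sigma(\Delta F_{ocean})}{\sigma(\Delta R_{cld})}
$$

Here $C$ is the heat capacity per unit area of the ocean mixed layer, $T$ the temperature anomaly, $\Delta R_{cld}$ the radiatively (mostly cloud) forced term, $\Delta F_{ocean}$ the non-radiative term, $\lambda$ the feedback parameter, and $\sigma$ the standard deviation over time. Because CERES net flux measures the combined radiative terms $\Delta R_{cld} - \lambda T$, the non-radiative term follows as $C\,dT/dt$ minus that measured flux.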

Here I went ahead and used Dessler’s assumed 100 meter depth for the ocean mixed layer, rather than the 25 meter depth we used in our last paper. (It now appears that Dessler will be using a 700 m depth, a number which was not mentioned in his preprint. I invite you to read his preprint and decide whether he is now changing from 100 m to 700 m as a result of issues I have raised here. It really is not obvious from his paper what he used).
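To see why the choice of depth matters so much, here is a back-of-the-envelope sketch of the mixed layer's heat capacity per unit area, C = ρ c_p h, for the 25 m, 100 m and 700 m depths mentioned above. The seawater density (1025 kg/m³) and specific heat (3990 J/(kg K)) are typical round values assumed here, not numbers from either paper. Because C scales linearly with depth, the same temperature change implies a non-radiative energy flux seven times larger for a 700 m layer than for a 100 m layer.

```python
# Heat capacity per unit area of an ocean mixed layer, C = rho * c_p * h.
# RHO_SEAWATER and CP_SEAWATER are typical round values (assumed, not from the post).
RHO_SEAWATER = 1025.0                    # kg / m^3
CP_SEAWATER = 3990.0                     # J / (kg K)
SECONDS_PER_MONTH = 365.25 * 86400 / 12  # mean month length in seconds


def mixed_layer_heat_capacity(depth_m: float) -> float:
    """Heat capacity per unit area, J / (m^2 K), of a well-mixed layer depth_m thick."""
    return RHO_SEAWATER * CP_SEAWATER * depth_m


def flux_for_warming(depth_m: float, dT_per_month: float) -> float:
    """Average flux (W/m^2) needed to change the layer temperature by dT_per_month K in one month."""
    return mixed_layer_heat_capacity(depth_m) * dT_per_month / SECONDS_PER_MONTH


if __name__ == "__main__":
    for depth in (25.0, 100.0, 700.0):
        print(f"{depth:5.0f} m: C = {mixed_layer_heat_capacity(depth):.3e} J/(m^2 K), "
              f"0.1 K/month needs {flux_for_warming(depth, 0.1):.1f} W/m^2")
```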

Using the above equation, if I assumed a feedback parameter λ=3 Watts per sq. meter per degree, that 20:1 ratio Dessler gets becomes 2.2:1. If I use a feedback parameter of λ=6, then the ratio becomes 1.7:1. This is basically an order of magnitude difference from his calculation.
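The arithmetic behind ratios like these can be sketched in Python. This is a minimal illustration of the forcing-feedback bookkeeping, not Spencer's or Dessler's actual code: the net flux series is assumed to be the sum of the two radiative terms (as with CERES net flux), C dT/dt is estimated with a forward difference, and `simulate_layer` is a hypothetical helper that builds synthetic series with a known answer so the function can be checked. No observational data are used.

```python
import math
import statistics


def nonradiative_to_radiative_ratio(temps, net_flux, heat_capacity, lam, dt):
    """Estimate std(non-radiative forcing) / std(radiative forcing).

    temps         -- temperature anomalies (K), one value per time step
    net_flux      -- net radiative flux anomalies (W/m^2), assumed to equal the
                     sum of the two radiative terms, R_cld - lam * T
    heat_capacity -- mixed-layer heat capacity C in J/(m^2 K)
    lam           -- feedback parameter in W/(m^2 K)
    dt            -- time step in seconds
    """
    nonradiative, radiative = [], []
    for k in range(len(temps) - 1):  # forward difference drops the last sample
        storage = heat_capacity * (temps[k + 1] - temps[k]) / dt  # C dT/dt in W/m^2
        nonradiative.append(storage - net_flux[k])       # F_ocean = C dT/dt - net flux
        radiative.append(net_flux[k] + lam * temps[k])   # R_cld = net flux + lam * T
    return statistics.pstdev(nonradiative) / statistics.pstdev(radiative)


def simulate_layer(r_cld, f_ocean, heat_capacity, lam, dt):
    """Hypothetical helper: forward-Euler integration of C dT/dt = R_cld + F_ocean - lam T.

    Returns (temps, net_flux) with net_flux = R_cld - lam * T; for testing only.
    """
    temps = [0.0]
    for k in range(len(r_cld) - 1):
        tendency = (r_cld[k] + f_ocean[k] - lam * temps[k]) / heat_capacity
        temps.append(temps[k] + dt * tendency)
    net_flux = [r - lam * t for r, t in zip(r_cld, temps)]
    return temps, net_flux


if __name__ == "__main__":
    C = 1025.0 * 3990.0 * 100.0   # 100 m layer, typical seawater values (assumed)
    DT = 365.25 * 86400 / 12      # one month in seconds
    n = 240
    # Synthetic forcings with a known std ratio (illustrative, not observations)
    r_cld = [math.sin(2 * math.pi * k / 37) for k in range(n)]
    f_ocean = [2.0 * math.cos(2 * math.pi * k / 23) for k in range(n)]
    for lam in (3.0, 6.0):
        temps, net = simulate_layer(r_cld, f_ocean, C, lam, DT)
        print(lam, round(nonradiative_to_radiative_ratio(temps, net, C, lam, DT), 3))
```

With real data, `temps` would come from an ocean temperature record and `net_flux` from a satellite net flux record; as the post stresses, the answer then depends heavily on which datasets and which depth (through C) are used.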

Again I ask: why did Dessler choose NOT to use the 3 most obvious and best sources of data to evaluate the terms in the above equation?

(1) Levitus for observed changes in the ocean mixed layer temperature; (it now appears he will be using a number consistent with the Levitus 0-700 m layer).

(2) CERES net radiative flux for the total of the 2 radiative terms in the above equation (this looks like it could be a minor source of difference, except that it appears he put all of his Rcld variability in the radiative forcing term, which he claims helps our position; running the numbers, however, will reveal the opposite is true, since his Rcld actually contains both forcing and feedback components which partially offset each other); and

(3) HadSST for sea surface temperature variations (this will likely be the smallest source of difference).

The Use of AMIP Models to Claim our Lag Correlations Were Spurious

I will admit, this was pretty clever…but at this early stage I believe it is a red herring.

Dessler’s Fig. 1 shows lag correlation coefficients that, I admit, do look kind of like the ones we got from satellite (and CMIP climate model) data. The claim is that since the AMIP model runs do not allow clouds to cause surface temperature changes, this means the lag correlation structures we published are not evidence of clouds causing temperature change.

Following are the first two objections which immediately come to my mind:

1) Imagine (I’m again talking mostly to you geeks out there) a time series of temperature represented by a sine wave, and then a lagged feedback response represented by another sine wave. If you then calculate regression coefficients between those 2 time series at different time leads and lags (try this in Excel if you want), you will indeed get the kind of lag correlation structure we see in the satellite data.

But look at what Dessler has done: he has used models which DO NOT ALLOW cloud changes to affect temperature, in order to support his case that cloud changes do not affect temperature! While I will have to think about this some more, it smacks of circular reasoning. He could have more easily demonstrated it with my 2 sine waves example.

Assuming there is causation in only one direction to produce evidence there is causation in only one direction seems, at best, a little weak.
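The two-sine-wave thought experiment is easy to reproduce outside Excel. In this minimal sketch the 48-month period and 4-month delay are arbitrary illustrative choices (assumptions, not values from any paper); the "feedback" series is simply the temperature series shifted in time, so causation runs only one way, yet the lead/lag correlations still show a structured pattern peaking at the imposed delay.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def lag_correlations(x, y, max_lag):
    """corr(x[t], y[t + lag]) for lag in -max_lag..max_lag (positive lag: y lags x)."""
    n = len(x)
    result = {}
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            xs, ys = x[:n - lag], y[lag:]
        else:
            xs, ys = x[-lag:], y[:n + lag]
        result[lag] = pearson(xs, ys)
    return result


PERIOD = 48    # hypothetical period, in months
TRUE_LAG = 4   # hypothetical delay of the feedback response, in months
temperature = [math.sin(2 * math.pi * k / PERIOD) for k in range(600)]
feedback = [math.sin(2 * math.pi * (k - TRUE_LAG) / PERIOD) for k in range(600)]

corrs = lag_correlations(temperature, feedback, 12)
peak_lag = max(corrs, key=corrs.get)

if __name__ == "__main__":
    for lag in sorted(corrs):
        print(f"{lag:+3d}  {corrs[lag]:+.3f}")
    print("peak at lag", peak_lag)
```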

2) In the process, though, what does his Fig. 1 show that is significant to feedback diagnosis, if we accept that all of the radiative variations are, as Dessler claims, feedback-induced? Exactly what the new paper by Lindzen and Choi (2011) explores: that there is some evidence of a lagged response of radiative feedback to a temperature change.

And, if this is the case, then why isn’t Dr. Dessler doing his regression-based estimates of feedback at the time lag of maximum response? Steve McIntyre, to whom I have provided the data to explore, is also examining this as one of several statistical issues. So, Dessler’s Fig. 1 actually raises a critical issue in feedback diagnosis that he has yet to address.
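The alternative raised here, estimating feedback at the lag of maximum response rather than at zero lag, can be sketched as follows. This is a hypothetical illustration, not Dessler's, Spencer's or McIntyre's code: it regresses a flux series on temperature at each non-negative lag, picks the lag with the largest absolute correlation, and reports the regression slope there. The synthetic data assume an outgoing-flux response of 3 W/m² per K delayed by 3 months, so the zero-lag slope understates the imposed value while the slope at the peak lag recovers it.

```python
import math


def lagged_regression(temp, flux, lag):
    """OLS slope and Pearson correlation of flux[t + lag] on temp[t], for lag >= 0."""
    n = len(temp)
    x, y = temp[:n - lag], flux[lag:]
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sxx, sxy / math.sqrt(sxx * syy)


def feedback_at_max_response(temp, flux, max_lag):
    """Return (lag, slope) at the lag in 0..max_lag with the largest |correlation|."""
    best = max(range(max_lag + 1),
               key=lambda lag: abs(lagged_regression(temp, flux, lag)[1]))
    return best, lagged_regression(temp, flux, best)[0]


def synthetic_temp(k):
    """Made-up temperature anomaly at month k (illustrative only)."""
    return math.sin(2 * math.pi * k / 48) + 0.3 * math.sin(2 * math.pi * k / 17)


LAM, TRUE_LAG, N = 3.0, 3, 600   # hypothetical feedback (W/m^2/K), delay (months), length
temp = [synthetic_temp(k) for k in range(N)]
flux = [LAM * synthetic_temp(k - TRUE_LAG) for k in range(N)]  # delayed outgoing-flux response

if __name__ == "__main__":
    print("zero-lag slope:", round(lagged_regression(temp, flux, 0)[0], 2))
    print("(lag, slope) at max response:", feedback_at_max_response(temp, flux, 12))
```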

3. THE UGLY

(MOST, IF NOT ALL, OF THESE OBJECTIONS WILL BE ADDRESSED IN DESSLER’S UPDATE OF HIS PAPER BEFORE PUBLICATION)

The new paper contains a few statements which the reviewers should not have allowed to be published because they either completely misrepresent our position, or accuse us of cherry picking (which is easy to disprove).

Misrepresentation of Our Position

Quoting Dessler’s paper, from the Introduction:

“Introduction

The usual way to think about clouds in the climate system is that they are a feedback… …In recent papers, Lindzen and Choi [2011] and Spencer and Braswell [2011] have argued that reality is reversed: clouds are the cause of, and not a feedback on, changes in surface temperature. If this claim is correct, then significant revisions to climate science may be required.”

But we have never claimed anything like “clouds are the cause of, and not a feedback on, changes in surface temperature”! We claim causation works in BOTH directions, not just one direction (feedback) as he claims. Dr. Dessler knows this very well, and I would like to know

1) what he was trying to accomplish by such a blatant misrepresentation of our position, and

2) how did all of the peer reviewers of the paper, who (if they are competent) should be familiar with our work, allow such a statement to stand?

Cherry picking of the Climate Models We Used for Comparison

This claim has been floating around the blogosphere ever since our paper was published. To quote Dessler:

“SB11 analyzed 14 models, but they plotted only six models and the particular observational data set that provided maximum support for their hypothesis. “

How is picking the 3 most sensitive models AND the 3 least sensitive models going to “provide maximum support for (our) hypothesis”? If I had picked ONLY the 3 most sensitive, or ONLY the 3 least sensitive, that might be cherry picking…depending upon what was being demonstrated. And where is the evidence those 6 models produce the best support for our hypothesis?

I would have had to run hundreds of combinations of the 14 models to accomplish that. Is that what Dr. Dessler is accusing us of?
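A quick count shows the scale of such a search: the number of distinct 6-model subsets of 14 models is the binomial coefficient C(14, 6) = 3003. The snippet below only computes that count; it says nothing about how any particular subset behaves.

```python
from math import comb

N_MODELS = 14   # models analyzed in SB11, per the quote above
N_PLOTTED = 6   # models shown in the SB11 comparison

n_subsets = comb(N_MODELS, N_PLOTTED)
print(f"{n_subsets} distinct ways to choose {N_PLOTTED} of {N_MODELS} models")
```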

Instead, the point was to show that the full range of climate sensitivities represented by the least and most sensitive of the 14 models show average behavior that is inconsistent with the observations. Remember, the IPCC’s best estimate of 3 deg. C warming is almost exactly the warming produced by averaging the full range of its models’ sensitivities together. The satellite data depart substantially from that. I think inspection of Dessler’s Fig. 2 supports my point.

But, since so many people are wondering about the 8 models I left out, here are all 14 of the models’ separate results, in their full, individual glory:

I STILL claim there is a large discrepancy between the satellite observations and the behavior of the models.

CONCLUSION

These are my comments and views after having only 1 day since we received the new paper. It will take weeks, at a minimum, to further explore all of the issues raised by Dessler (2011).

Based upon the evidence above, I would say we are indeed going to respond with a journal submission to answer Dessler’s claims. I hope that GRL will offer us as rapid a turnaround as Dessler got in the peer review process. Feel free to take bets on that.

And, to end on a little lighter note, we were quite surprised to see this statement in Dessler’s paper in the Conclusions (italics are mine):

“These calculations show that clouds did not cause significant climate change over the last decade (over the decades or centuries relevant for long-term climate change, on the other hand, clouds can indeed cause significant warming).”

Long term climate change can be caused by clouds??! Well, maybe Andy is finally seeing the light! (Nope. It turns out he meant “*RADIATIVE FEEDBACK DUE TO* clouds can indeed cause significant warming”. An obvious, minor typo. My bad.)

Dave Springer says:

September 9, 2011 at 4:34 pm

I believe that reasons for going that deep have been given, especially by Spencer. If it is not in his papers under discussion, check his website. They are not debating the definition of “mixed layer.”

KR says:

September 9, 2011 at 3:44 pm

“Climate models, on the other hand, are boundary condition models, where you look at how the average values will change based on boundary conditions such as conservation of energy. They are generally run repeatedly with small variations in their initial conditions, simulate how the weather _might_ evolve over time, and based upon limits such as how fast energy moves in/out of the atmosphere, oceans, etc., try and predict the _average_ conditions over time. Variations like the ENSO, which are +/- shifts in where energy is located in the climate, average out over time, so even simple zero dimensional models that don’t attempt to replicate ENSO can provide some predictive power (if the boundary conditions are correct) over longer terms. But climate models are lousy at predicting whether it’s going to rain in Topeka next week – that’s a different question.”

This is a great beginning. You are doing the work of explicating Dessler’s paper. But you stop with the generalities. If you want us to take a different view of Dessler’s paper, restate the important arguments in it using a full explication of his major points instead of an introduction as you have here.

I see a lot of comments about how you cannot give models confidence on longer time scales when they fail the short time scales. In short, this point says that since you are modeling the average of weather, you cannot be successful if you do not successfully model weather processes.

I see a lot of comments talking about forcings and energy flows and how you cannot ignore forcings and energy flows when you are analyzing climate.

I happen to agree with both assessments, but has anyone really examined how the radiative energy known as ‘forcings’ is used and put to work by the climate system? That seems like the very fundamental idea this whole exchange is all about.

Friends, if KR’s numerous statements and imputations have any remaining credibility in your mind – his shotgun defence of the indefensible:

KR repeatedly says (among many things):

September 9, 2011 at 11:20 am

“….The three models among those best known for reproducing ENSO are the closest to the observational data, particularly at the 3-5 month lag range. And the short (10 year) time frame used emphasizes ENSO variations, which makes this more a test of ENSO match than climate sensitivity.”

The three best models produce crap info about ENSO. For heaven’s sake, Landscheidt did a far better job of predicting using only solar motion! Weren’t all the models predicting an El Nino this year? And to any of the other parties: please know that KR presented the same arguments and imputations over at Climate Audit and was even more thoroughly picked apart. KR seems to be here at WUWT trying to see if the more general audience will swallow at least some of it. It is CAGW rapid reaction distraction.

The main technique seems to be to make defences against things not said, to prove untrue that which was not claimed and to toss a dripping basket of red herrings over the conversation in the hope that someone will think colour makes a meal.

I encourage anyone who has time to read through the fascinating and thoroughgoing discussion at CA. It still continues and provides a good analysis of the whole of both papers and indeed the 2010’s as well. One comes away feeling informed and prepared to follow the conversation in papers that will undoubtedly follow.

KR, I read all that you are posting at CA. You are not going to convince the sentient that Dessler’s paper refutes S&B. It is too obviously flawed and even self-contradictory. Nor that Spencer’s use of 6 of the available 14 data sets was a deliberate selection of data that would make a point that is otherwise not determined. This was all patiently set out for you on CA (in spades). Perhaps you missed their cogent responses to your comments and accusations.

Clearly Dessler wrote in a rush and it was given to pals for the rubber stamp. Probably, in retrospect, unwise. It should be retracted for a re-write. Alternatively if you think S&B’s data analysis is flawed, you get something into print showing it and we will all read that too. No problem.

KR;

Have you noticed how all alone you are? Where is R Gates? Nick Stokes? Any of the regular trolls? Just one anonymous guy throwing up straw men because he (she?) doesn’t have the guts to put their real name behind their “science”.

Here’s some science for you. CO2 is logarithmic. Ergo:

All the CO2 emissions from 1920 until now have raised the concentration in the atmosphere from 280 PPM to 400 PPM. The result has been about the same amount of warming in the century since significant emissions began as the century before. The last 15 years have been FLAT.

So the obvious model would suggest that CO2 going from 280 to 400 means diddly squat.

Being logarithmic, the same increase again, from 400 PPM to 520 PPM, means… only about three-quarters of diddly squat.
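For readers who want to check the logarithmic arithmetic, here is a quick sketch using the widely cited simplified expression for CO2 forcing, ΔF = 5.35 ln(C/C0) W/m² (Myhre et al., 1998). It assumes warming scales with forcing and ignores any lags; the 5.35 coefficient cancels in the ratio anyway. Under those assumptions the 400 to 520 ppm step yields roughly 74% of the forcing of the 280 to 400 ppm step, and halving it would instead take a rise to only about 478 ppm.

```python
import math


def co2_forcing(c_ppm: float, c0_ppm: float) -> float:
    """Simplified CO2 radiative forcing in W/m^2: 5.35 * ln(C / C0)."""
    return 5.35 * math.log(c_ppm / c0_ppm)


first_step = co2_forcing(400.0, 280.0)    # 280 -> 400 ppm
second_step = co2_forcing(520.0, 400.0)   # 400 -> 520 ppm, the "same increase again"
ratio = second_step / first_step

# Concentration at which the second step would give exactly half the first step's forcing
half_point = 400.0 * math.sqrt(400.0 / 280.0)

print(f"second/first forcing ratio: {ratio:.3f}")
print(f"half the first step is reached at about {half_point:.0f} ppm")
```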

Monkey with boundary conditions and average values and variations and zero dimensional models all you want. Those are the numbers, in exact opposition to everything the “models” said would be happening by now. Argue methodology all you want, those are the facts.

Countdown to anonymous bleating from the shadows about phase delay, pipelines, it’s more complicated than that…. three…. two…. cue the violins…

KR:

I see you continue to ignore points put to you and you continue to parrot warmist excuses.

At September 9, 2011 at 2:44 pm you responded to Nuke Nemesis, who had asked:

“How long a period does something happen to occur before it’s climate change and not weather? What’s the generally accepted time frame?”

By saying:

“The generally accepted time frame in the climate field appears to be 30 years.”

No! Absolutely not! That is a falsehood.

And your comments that follow are also wrong (which is not surprising because you imply they derive from Tamino who only posts the rubbish he is throwing away on his blog).

Any period of any length can be used for a climate assessment provided the length is specified. For example, HadCRUT and GISS publish climate data for individual months and years. And the 1994 IPCC Report compared 5-year periods as a method to discern changes to hurricane frequency.

30 years is the standard period used as a base for a comparison datum. It was chosen in 1958 – as part of the International Geophysical Year – as a purely arbitrary period because it was then thought that 30 years was the maximum time since reliable climate data had started to be collected. So, for example, HadCRUT and GISS determine their anomalies as differences from a 30 year period (but each uses a different 30 year period).

30 years would be a completely inappropriate time for distinction between ‘climate’ and ‘weather’. For example, 30 years is not a multiple of the 11 year solar cycle or the 22 year Hale cycle.

Richard

Reader troyca explained at Climate Audit why Spencer’s computation using CERES-only data is better than Dessler’s combination of 2 datasets:

“…But based on the differences we see here (particularly with the SW fluxes), obviously it isn’t the case. Since we’re calculating CRF as the difference between clear-sky and all-sky fluxes, ANY difference in those two datasets is going to show up in the estimated cloud forcing, including their different estimates of solar insolation (which has nothing to do with clouds). The magnitude of the changes in flux is far smaller than the magnitude of the total flux, so you would expect using two different datasets to have a lot more noise unrelated to the CRF. Note that if there is ANY flux calculation bias in either of the two datasets unrelated to clear-sky vs. all-sky, it WILL show up in the CRF, whereas if you use the same dataset, even if a flux calculation bias is present, it will NOT show up in CRF unless it is related to clear-sky vs. all-sky.

Do I think Dessler should have had better reasons for switching to ERA-interim? Honestly, it’s not that interesting to me…what interests me is that clearly the CERES-only result is 1) important and 2) probably a better estimate.”
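troyca's argument can be illustrated with a toy calculation. Assuming (as a simplification) that each dataset carries a flux-calculation bias that is the same for its clear-sky and all-sky fluxes, the bias cancels when both fluxes come from one dataset, but the difference between the two datasets' biases leaks into the CRF when clear-sky and all-sky fluxes are taken from different datasets. All numbers below are made up for illustration; they are not CERES or ERA-Interim values.

```python
def cloud_radiative_forcing(clear_sky, all_sky):
    """CRF per time step as clear-sky flux minus all-sky flux (W/m^2)."""
    return [c - a for c, a in zip(clear_sky, all_sky)]


def with_bias(values, bias):
    """Add a (possibly time-varying) calculation bias to a flux series."""
    return [v + b for v, b in zip(values, bias)]


# Illustrative "true" outgoing fluxes (made-up numbers)
true_clear = [265.0, 266.5, 264.0, 267.0]
true_all = [240.0, 239.0, 241.5, 238.0]

# Hypothetical time-varying biases of two datasets, A and B
bias_a = [1.0, 1.2, 0.8, 1.1]
bias_b = [-0.5, 0.3, -0.2, 0.6]

true_crf = cloud_radiative_forcing(true_clear, true_all)
# Both fluxes from dataset A: A's bias appears in both terms and cancels
same_source = cloud_radiative_forcing(with_bias(true_clear, bias_a),
                                      with_bias(true_all, bias_a))
# Clear-sky from dataset B, all-sky from dataset A: the bias difference shows up in the CRF
mixed_source = cloud_radiative_forcing(with_bias(true_clear, bias_b),
                                       with_bias(true_all, bias_a))

if __name__ == "__main__":
    print("true CRF:   ", true_crf)
    print("same source:", same_source)
    print("mixed:      ", mixed_source)
```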

KR says:

September 9, 2011 at 3:44 pm

More of the same hand-waving blathering.

===============================

KR, I gotta give you points for persistence! Man, I don’t know if I could sit there and type like you do, all the while getting your ass handed to you. In all of your comments, have you made one point that didn’t get shot out of the sky? What I want to know, though, is what is wrong with you?

Dr. Spencer published a paper and you warmista go nuts! But you don’t argue the science; you argue inane points that, even if correct, don’t even amount to minutiae. Dessler’s response was inexcusable and indefensible. So were the Wagner publicity stunts and the three idjiots’ op-ed piece (though, in retrospect, it seems to have worked out quite well for the skeptic side 🙂). Kinda makes you wonder, don’t it? I mean, think about it. 5 PhDs (not counting reviewers and editors of GRL) kicking up such a fuss as to make more news than when SB11 came out. All eyes were on Dessler’s paper, which was so flawed that the skeptics had it shredded before 8 A.M. CST! I didn’t even get a chance to comment here before it was smoked! Was it a set up? Is there a closet skeptic on the inside? Or were all those mental giants so vapid as to not even know Dr. Spencer’s position on cloud feedback/forcing?

But all of this comes about because of you guys and your idiotic models. THEY DON’T WORK! Instead of assuming the data are wrong when they don’t fit, address the models. I’d go into some details about why, but I know I’d be wasting my time with you. If you want a working model, forget CO2. Our knowledge base isn’t sufficient for a model to detect a signal change of one Kelvin amongst all of the noisy events. CO2 simply isn’t that important. Get the basics down first and then add the trace gases and tweak. Oh, but that would mean we’d have to address the hydrology aspect first.

KR, there are many of us here who like the fact that alternative views are presented here. It is good when this occurs. However, if you come here trying to defend the baseless attacks on Dr. Spencer, you simply won’t be received well. If you want to argue the science, please do, by all means. But don’t come here telling us crap we know isn’t true. It’s insulting, and as you can probably discern, it pisses people off. And KR, quit letting Tamino fill your head full of garbage; he doesn’t know squat. Keep reading here. There are some very sharp guys and gals around here. CA is also a great place to go and learn and debate.

@John Whitman,

Yes, eternal vigilance. And sadly, no, it won’t ever end. Power-seeking despots exploit people’s beliefs. They find a niche that conforms with some people’s beliefs, and it is easily accepted by the people with those beliefs. In this case, the belief that Industry = bad and harmful, coupled with the idea that we’ve too much population to be sustained, made this bit of lunacy accepted by many. And you were spot on in calling out the overtones and undertones sneering at Dr. Spencer’s theology. No one asks Dessler about his views, so why should they worry about Spencer’s?

Dave Springer says:

September 9, 2011 at 4:34 pm

An Optimal Definition for Ocean Mixed Layer Depth

It’s about 50 meters average across seasons. At least according to a published study.

Dessler’s using 300m as I recall. Sounds like he’s making up his own definitions for things to suit his agenda. FAIL

In an earlier iteration of Spencer’s simple model he used a depth of 1000 metres. He was criticised by Ray Pierrehumbug for this and told he should have used 35m.

So in SB11 he uses a shallow depth, and gets criticised by Dessler who says he should be using 700m.

The goalposts seem to have quite a variance range…

“September 9, 2011 at 12:22 pm

700m for the mixed layer? Wow! My own personal experience (from deep diving) is, you start to get into the realm of 10 deg C water at something between 20 and 30m, and into the 6 deg C realm down around 50m, and after that you have the transition into true abyssal uniformity.”

Yes, but doesn’t 700m give a lot more space to hide the “missing heat” in?

Concerning Dessler’s modifying his paper per Spencer’s comments, let me see if I have got this straight: in the peer review process, nobody caught the inadequacies and the numerical shortcomings of the Dessler paper. But when someone whose scientific abilities the journal disses sees the paper for a few hours, Dessler finds that he must rewrite and recalculate his paper.

@KR says:

September 9, 2011 at 3:44 pm

“Climate models, on the other hand, are boundary condition models, where you look at how the average values will change based on boundary conditions such as conservation of energy. …Variations like the ENSO, which are +/- shifts in where energy is located in the climate, average out over time, so even simple zero dimensional models that don’t attempt to replicate ENSO can provide some predictive power (if the boundary conditions are correct) over longer terms. ”

//////////////////////////////////////////////////////

The key point here is that (i) there is an assumption that these variables average out over time, but there is no hard evidence that this is actually true; and (ii) it is unclear what amount of time is required for these variables to average out.

The problem we have is that we are only looking at a snapshot window of climate and its variability. It may well be the case that variables such as ENSO do average out, but that averaging out may, in fact, take place over centuries if not millennia.

Personally, I consider this assumption, which oversimplifies matters, to be of concern, and it is assumptions like this which explain why model projections are so flawed and depart/disagree with empirical observational data.

@KR says: September 9, 2011 at 3:44 pm

As an analogy:

…………………………………….

* You could also model traffic based on boundary conditions such as population levels, employment conditions, lane sizes, and planned changes to on/off ramps over the next few years, and from that predict _average_ traffic a decade out. But that model won’t tell you about the traffic next Tuesday.

Different models, different predictive ranges. “

////////////////////////////////////////////////////////////////////////////////////////////////////////////////

I wonder whether such a model run made in, say, 2002 for the year 2012 for traffic in and around the Higashi Nihon Daishinsai area would be on target given the 2011 earthquake and tsunami. Models are simply hopeless since we have insufficient knowledge of all variables at play, and how those variables work and interplay. Until we have a better and more extensive knowledge and understanding of all variables that can affect climate, models will continue to be next to worthless. Any unattached objective observer knows that is the case. It is only modellers, and those who rely on them rather than conducting empirical science, who are deluded and blinded to reality.

We have seen the model runs. We know they depart from reality. Rather than wasting time fudging the models, we should concentrate on empirical science and observation and seek to obtain a better understanding of what is going on in the real world and how it works and why it behaves as it does.

Once (and only once) we have a significant improvement in our knowledge and understanding, we can seek to rewrite the models from scratch. Perhaps in 10 or 20 years we can look again at models, and maybe by then we will be able to write a worthwhile model that will have some reliable predictive powers. Until then, all funding of models should be withdrawn and that funding should be redirected elsewhere. That is the only way climate science will be taken forward.

Dave Springer says:

September 9, 2011 at 4:47 pm

SteveSadlov says:

September 9, 2011 at 12:22 pm

I agree with both of you. I’ve dived both the GOM and the Atlantic and seen very little variance at depth below 30m. Unless there is something wrong with my dive computer…

tallbloke says:

September 10, 2011 at 2:19 am

“In an earlier iteration of Spencer’s simple model he used a depth of 1000 metres. he was criticised by Ray Pierrehumbug for this and told he should have used 35m. So in SB11 he uses a shallow depth, and gets criticised by Dessler who says he should be using 700m.”

The mixed layer ends where the thermocline begins. The depth is variable and depends on the definition used, but in no case does the mixed layer extend hundreds of meters deep; OTOH, 35 meters is too shallow. Too deep is better than too shallow, so I have to agree with Dessler on that point, and Spencer should have stayed with his first instinct of 1000 meters, but anything over 150 meters is just overkill. My only real objection to the deeper depths is that they are well beyond the mixed layer boundary and shouldn’t be called the mixed layer.

35 meters is too shallow. 1000 meters is overkill.
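Where the mixed layer ends does depend on the definition used. One common family of definitions is a temperature-threshold criterion: the bottom of the mixed layer is the shallowest depth where temperature departs from a near-surface reference by more than a fixed amount. The sketch below assumes the shallowest level as the reference and a 0.2 °C default threshold (both illustrative choices, not taken from the study cited above), and uses a made-up profile to show how the answer shifts with the threshold.

```python
def mixed_layer_depth(depths_m, temps_c, threshold_c=0.2):
    """Depth (m) where temperature first departs from the top value by more than threshold_c.

    Uses the shallowest level as the reference and interpolates linearly between
    levels; returns the deepest depth if the threshold is never exceeded.
    """
    t_ref = temps_c[0]
    for i in range(1, len(depths_m)):
        if abs(temps_c[i] - t_ref) > threshold_c:
            d0, d1 = depths_m[i - 1], depths_m[i]
            t0, t1 = temps_c[i - 1], temps_c[i]
            # temperature at which the threshold is crossed, on the side of t1
            target = t_ref - threshold_c if t1 < t_ref else t_ref + threshold_c
            return d0 + (target - t0) * (d1 - d0) / (t1 - t0)
    return depths_m[-1]


# Made-up illustrative profile (not observations): depth in m, temperature in deg C
depths = [0, 10, 20, 30, 40, 50, 75, 100]
temps = [20.0, 20.0, 19.95, 19.9, 19.5, 18.0, 15.0, 12.0]

if __name__ == "__main__":
    for thr in (0.2, 0.5, 1.0):
        print(f"threshold {thr} C -> mixed layer depth {mixed_layer_depth(depths, temps, thr):.1f} m")
```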


Roy,

Obviously the clouds issue is a big one. I noticed you cited a 2005 paper by GL Stephens, but you did not cite his 2010 paper in GEWEX found at http://tallbloke.files.wordpress.com/2011/09/gewex-feb2010.pdf

Of course, the second paper may not be peer-reviewed and so you may not have seen it and would not be criticized for not citing it. However, I think it is possible this paper makes a contribution to our understanding. Stephens is basically claiming that clouds with rain or drizzle decrease albedo – certainly a reasonable guess and worth investigating. Do satellites have the capability to categorize cloud cover based on a whiteness scale? Does that kind of dataset exist now?

Thanks for responding!

You’re wasted in your present career, Lucy Skywalker. I don’t know the hourly rate, but you would have to be amongst the world’s best mixers of metaphors (“So yes, the tide may have turned, but the war is not yet won.”)…

Some of you might find these two papers quite interesting. The first deals with the role of different cloud types in the Arctic during the critical transition period of August to September:

http://epic.awi.de/Publications/Sed2010a.pdf

And the second deals with looking at the total energy content of the atmosphere and enthalpy as opposed to only sensible heat. This paper is something Dr. Spencer in particular might be interested in reading if he hasn’t already:

http://www.agu.org/journals/gl/gl1116/2011GL048442/2011GL048442.pdf

Dessler, Real Climate, and friends must be living in a fantasy world.

Who is writing this script?

Lindzen and Choi publish a magnum opus third paper on the subject of feedbacks that acknowledges deficiencies in their second paper and addresses all past criticisms scientifically. The mathematical analysis of radiation data from two different satellites in the third paper shows unequivocally that the planetary feedback response to a change in forcing is negative. Spencer publishes a paper showing that changes in planetary cloud cover cause cyclic warming and cooling.

The PDO is negative. In the past there has been cooling when the PDO was negative, along with more frequent La Niñas. A second La Niña is predicted (back-to-back La Niñas). The cause of negative PDOs is unknown; however, in the past there has been a correlation between low solar magnetic cycles and the occurrence of a negative PDO.

The solar magnetic cycle during the last thirty years of the 20th century was at its highest level in 10,000 years.

Three independent solar parameters indicate the sun is rapidly moving to a Maunder minimum.

The paleorecord contains cycles of warming followed by cooling that correlate with changes in the solar magnetic cycle.

Sea level fell 6 mm in 2010, which is twice the yearly rise.

Dessler issues a very public, rushed draft paper stating that planetary cloud feedbacks are positive rather than negative (in response to a forcing change) and that changes in planetary cloud cover do not cause cyclic climate change. Dessler includes derogatory name-calling in the rushed draft paper. Real Climate and friends have very publicly stated that cloud feedbacks are positive rather than negative, with similar derogatory name-calling and sarcasm.

Dessler, Real Climate, and the IPCC have painted themselves into a corner. Statements that the science is settled, that those questioning the science are “deniers” or idiots, and that Western governments must immediately spend trillions of dollars to prevent the end of civilization and the planet reverberate in the mass media.

Facts are facts. Propaganda does not change facts. The general public will know if the planet is cooling.

Richard Verney says:

“Perhaps in 10 or 20 years we can look again at models, and maybe by then we will be able to write a worthwhile model that will have some reliable predictive powers. Until then, all funding of models should be withdrawn and that funding should be redirected elsewhere. That is the only way climate science will be taken forward.”

—–

The predictive skill, or lack thereof, of models is not the only criterion by which their usefulness should be judged. Since we don’t have a separate, control Earth on which to conduct experiments, models allow us to test individual parameter changes and identify dynamical relationships. Because the climate is a system existing on the edge of chaos, it is taken as a given that no model will be able to predict those inherently unpredictable tipping points exactly. What we can do, though, and this gets to the usefulness of models, is look at past periods in Earth’s history when climate parameters were similar to today’s, see how well the climate models simulated that climate, and use that as a general guide to what we might expect during our current period.

Crispin in Waterloo says:

September 9, 2011 at 9:00 pm

Thanks. Very helpful.

If I find this whole blog-trumps-paper battle the main Saturday popcorn-hour feature, does that mean I’m truly a nerd, or that my brain cells seriously lack exciting input?

David Falkner says:

September 9, 2011 at 8:25 pm

“I happen to agree with both assessments, but has anyone really examined how the radiative energy known as ‘forcings’ is used and put to work by the climate system? That seems to be the fundamental idea this whole exchange is about.”

Superb question. The answer is no. The reason is that climate science is in its infancy. Unfortunately, it is frozen at that developmental stage because the Warmista refuse to get up from their supercomputers and do some empirical research. The natural processes that make up the climate system, La Niña for example, have not been described in any way that can be called scientific. Sadly, there is no plan to redirect some of the big bucks to such studies.

Dr. Roger Pielke, Sr., has done many “small” studies that are pristine examples of good science. Once climate science overcomes its developmental roadblock it will look very much like Pielke’s work. Check out his website.