Spurious Warming in the Jones U.S. Temperatures Since 1973

by Roy W. Spencer, Ph.D.

INTRODUCTION

As I discussed in my last post, I’m exploring the International Surface Hourly (ISH) weather data archived by NOAA to see how a simple reanalysis of original weather station temperature data compares to the Jones CRUTem3 land-based temperature dataset.

While the Jones temperature analysis relies upon the GHCN network of ‘climate-approved’ stations, whose number has been rapidly dwindling in recent years, I’m using original data from stations whose number has actually been growing over time. I use only stations operating over the entire period of record, so there are no spurious temperature trends caused by stations coming and going over time. Also, while the Jones dataset is based upon daily maximum and minimum temperatures, I am computing an average of the 4 temperature measurements at the standard synoptic reporting times of 06, 12, 18, and 00 UTC.
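A minimal Python sketch of the two selection rules described above: a daily value averaged from the four synoptic reports, and a station list restricted to stations that report in every year of the period. The dictionary layouts, the station ids, and the rule that a day with any missing synoptic report is skipped are illustrative assumptions, not the author's actual code or completeness criteria.

```python
from statistics import mean

# Standard synoptic reporting times named in the text (UTC hours).
SYNOPTIC_HOURS = (0, 6, 12, 18)


def synoptic_daily_mean(obs_by_hour):
    """Average of the four synoptic observations for one station-day.

    obs_by_hour: dict mapping UTC hour -> temperature (deg C).
    Returns None when any synoptic report is missing (an assumed rule).
    """
    if any(h not in obs_by_hour for h in SYNOPTIC_HOURS):
        return None
    return mean(obs_by_hour[h] for h in SYNOPTIC_HOURS)


def full_record_stations(years_reported, first_year, last_year):
    """Station ids that report in every year from first_year to last_year.

    years_reported: dict mapping station id -> set of years with data.
    Dropping stations that start or stop mid-period keeps station
    turnover from creating spurious trends.
    """
    needed = set(range(first_year, last_year + 1))
    return sorted(sid for sid, years in years_reported.items() if needed <= years)
```

For the period in this post the call would be `full_record_stations(years_reported, 1973, 2009)`, where `years_reported` is a hypothetical per-station inventory built from the ISH files.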

U.S. TEMPERATURE TRENDS, 1973-2009

I compute average monthly temperatures in 5 deg. lat/lon grid squares, as Jones does, and then compare the two different versions over a selected geographic area. Here I will show results for the 5 deg. grids covering the United States for the period 1973 through 2009.
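The gridding step can be sketched as follows, assuming cells aligned on multiples of 5 degrees starting at 90S/180W and a regional average weighted by the cosine of each cell's center latitude. Both the alignment convention and the cosine weighting are assumptions for illustration; the text does not say how the 23 U.S. cells are combined.

```python
import math
from collections import defaultdict

CELL_DEG = 5.0  # 5 deg. lat/lon grid squares, as in the text


def grid_cell(lat, lon, cell_deg=CELL_DEG):
    """(row, col) index of the grid square containing a station.

    Assumed convention: rows count northward from 90S, columns eastward
    from 180W; a point on a boundary falls in the cell to its north/east.
    """
    n_rows = int(round(180.0 / cell_deg))
    row = min(int(math.floor((lat + 90.0) / cell_deg)), n_rows - 1)
    col = int(math.floor(((lon + 180.0) % 360.0) / cell_deg))
    return row, col


def grid_average(stations, cell_deg=CELL_DEG):
    """Average one month's station values within each grid square.

    stations: iterable of (lat, lon, value) tuples.
    Returns a dict mapping (row, col) -> mean of the stations in that cell.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for lat, lon, value in stations:
        cell = grid_cell(lat, lon, cell_deg)
        sums[cell] += value
        counts[cell] += 1
    return {cell: sums[cell] / counts[cell] for cell in sums}


def regional_mean(cell_values, cell_deg=CELL_DEG):
    """Mean over the selected cells, weighted by cos(center latitude)."""
    num = den = 0.0
    for (row, _col), value in cell_values.items():
        center_lat = -90.0 + (row + 0.5) * cell_deg
        weight = math.cos(math.radians(center_lat))
        num += weight * value
        den += weight
    return num / den
```

Averaging stations within a cell first keeps a cluster of nearby stations from dominating the regional mean; the cosine weight accounts for grid squares shrinking in area toward the poles.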

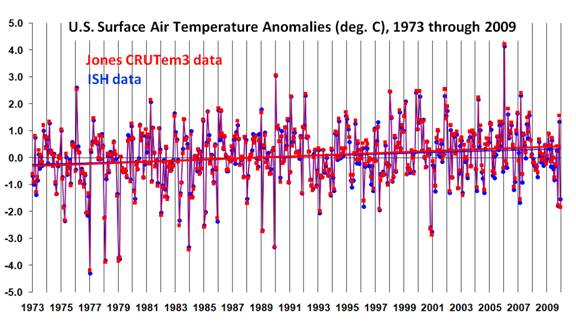

The first plot shows that the monthly U.S. temperature anomalies from the two datasets are very similar (anomalies in both datasets are relative to the 30-year base period from 1973 through 2002). But while the month-to-month variations agree closely, the warming trend in the Jones dataset is about 20% greater than that in my ISH data analysis.

This is a little curious, since I have made no adjustments for the increasing urban heat island (UHI) effect over time, which is likely causing spurious warming, and yet the Jones dataset, which IS (I believe) adjusted for UHI effects, actually shows somewhat greater warming than the ISH data.
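To make the anomaly and trend comparison concrete, here is a hedged sketch: anomalies are taken relative to each calendar month's 1973-2002 mean, and the trend is an ordinary least-squares fit in deg C per decade. A ratio of about 1.2 between two such trends corresponds to the “about 20% greater” warming mentioned above. The function names and the least-squares choice are assumptions; the post does not state how its trends were fitted.

```python
def monthly_anomalies(series, base_start=1973, base_end=2002):
    """Convert monthly temperatures to anomalies.

    series: dict mapping (year, month) -> temperature (deg C).
    Each value is expressed relative to the mean of the same calendar
    month over base_start..base_end inclusive.
    """
    climatology = {}
    for m in range(1, 13):
        base = [t for (y, mm), t in series.items()
                if mm == m and base_start <= y <= base_end]
        climatology[m] = sum(base) / len(base)
    return {(y, m): t - climatology[m] for (y, m), t in series.items()}


def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    return sxy / sxx


def decadal_trend(anomalies):
    """Linear trend of a monthly anomaly series, in deg C per decade."""
    keys = sorted(anomalies)
    # Place each month at its mid-point in fractional years.
    t = [y + (m - 0.5) / 12.0 for y, m in keys]
    return 10.0 * ols_slope(t, [anomalies[k] for k in keys])
```

Comparing two datasets is then `decadal_trend(jones_anoms) / decadal_trend(ish_anoms)`, where both inputs are hypothetical U.S.-average anomaly series.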

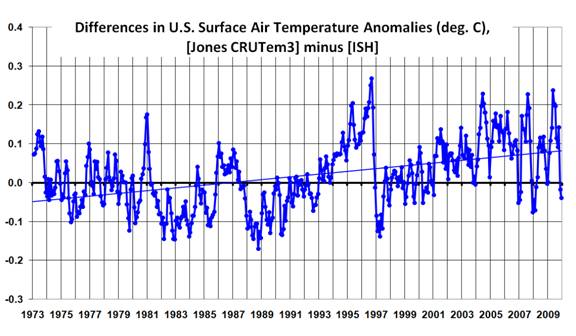

The second plot shows the difference between the two datasets, which reveals some abrupt transitions. Most noteworthy is what appears to be a rather rapid spurious warming in the Jones dataset between 1988 and 1996, with an abrupt “reset” downward in 1997 and then another spurious warming trend after that.
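One simple, hypothetical way to locate an abrupt transition in the difference series is a single-step changepoint fit: try every split point and keep the one where two constant segments fit best. This is a generic technique swapped in for illustration, not the method behind the plot, and it finds only one step, whereas the text describes a ramp from 1988 to 1996 followed by a downward reset in 1997.

```python
def difference_series(a, b):
    """a minus b over the (year, month) keys both series share, in time order."""
    keys = sorted(set(a) & set(b))
    return keys, [a[k] - b[k] for k in keys]


def best_single_step(x, min_seg=12):
    """Locate the single step change that best fits x.

    Splits x at every index k that leaves at least min_seg points on each
    side, fits a constant to each segment, and keeps the split with the
    smallest total squared error. Returns (k, shift), where shift is the
    right-segment mean minus the left-segment mean.
    """
    best = None
    for k in range(min_seg, len(x) - min_seg + 1):
        left, right = x[:k], x[k:]
        m_left = sum(left) / len(left)
        m_right = sum(right) / len(right)
        sse = (sum((v - m_left) ** 2 for v in left)
               + sum((v - m_right) ** 2 for v in right))
        if best is None or sse < best[0]:
            best = (sse, k, m_right - m_left)
    if best is None:
        raise ValueError("series too short for the requested min_seg")
    return best[1], best[2]
```

A negative `shift` at the returned index would correspond to a downward “reset” of the kind described for 1997; the 12-month minimum segment length is an arbitrary choice.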

It might be a little premature to blame these spurious transitions on the Jones dataset. But since I use only those stations operating over the entire period of record, which Jones does not do, it is difficult to see how these effects could have arisen in my analysis. Also, the number of 5 deg. grid squares used in this comparison remained the same (23 grids) throughout the 37-year period of record.
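The constant-coverage argument can be checked mechanically. A minimal sketch, assuming gridded data stored as a dict from (year, month) to the cells with data that month; the layout and function names are hypothetical. Comparing the sets of cells, rather than only their count, also catches one cell dropping out while another enters.

```python
def coverage_is_constant(gridded):
    """True if every month uses exactly the same set of grid squares.

    gridded: dict mapping (year, month) -> {cell: value}.
    """
    cell_sets = {frozenset(cells) for cells in gridded.values()}
    return len(cell_sets) == 1


def cell_count_range(gridded):
    """(min, max) number of grid squares with data in any month."""
    counts = [len(cells) for cells in gridded.values()]
    return min(counts), max(counts)
```

For the comparison in this post, a constant coverage check would be expected to pass with `cell_count_range` returning `(23, 23)`.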

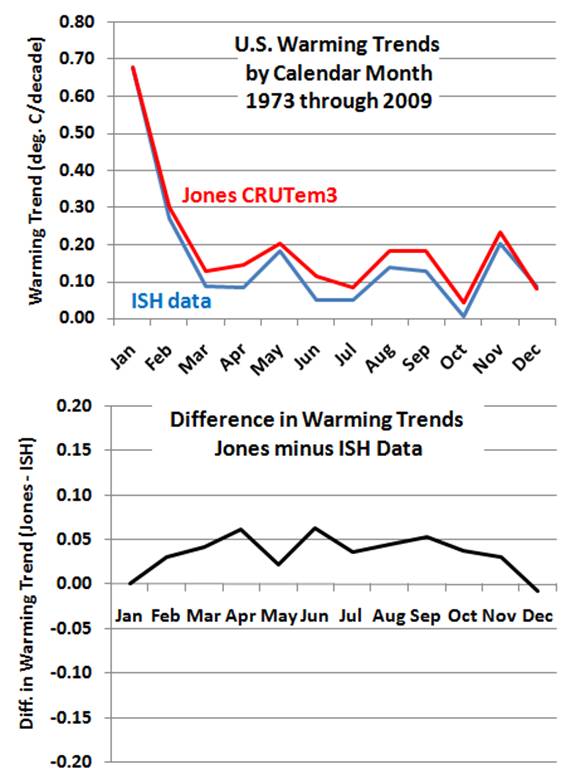

The third plot shows the decadal temperature trends by calendar month. The top panel shows that the greatest warming since 1973 has been in January and February in both datasets. But the bottom panel suggests that the stronger warming in the Jones dataset is a warm-season, not winter, phenomenon.
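The per-month trends described above can be approximated by regressing each calendar month's anomalies on year separately. The sketch below assumes the bottom panel is a Jones-minus-ISH trend difference; the data layout and function names are hypothetical.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    return sxy / sxx


def trends_by_calendar_month(anomalies):
    """Per-calendar-month linear trend, in deg C per decade.

    anomalies: dict mapping (year, month) -> anomaly.
    All Januaries form one series, all Februaries another, and so on.
    """
    trends = {}
    for m in range(1, 13):
        years = sorted(y for (y, mm) in anomalies if mm == m)
        values = [anomalies[(y, m)] for y in years]
        trends[m] = 10.0 * ols_slope(years, values)
    return trends


def trend_differences(a, b):
    """Month-by-month trend of a minus trend of b, in deg C per decade."""
    ta, tb = trends_by_calendar_month(a), trends_by_calendar_month(b)
    return {m: ta[m] - tb[m] for m in range(1, 13)}
```

With only 37 values per calendar month, each monthly trend is noisier than the all-months trend, so differences between neighboring months should be read with some caution.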

THE NEED FOR NEW TEMPERATURE REANALYSES

I suspect it would be difficult to track down the precise reasons why the differences in the above datasets exist. The data used in the Jones analysis has undergone many changes over time, and the more complex and subjective the analysis methodology, the more difficult it is to ferret out the reasons for specific behaviors.

I am increasingly convinced that a much simpler, objective analysis of original weather station temperature data is necessary to better understand how spurious influences might have impacted global temperature trends computed by groups such as CRU and NASA/GISS. It seems to me that a simple and easily repeatable methodology should be the starting point. Then, if one can demonstrate that the simple temperature analysis has spurious temperature trends, an objective and easily repeatable adjustment methodology should be the first choice for an improved version of the analysis.

In my opinion, simplicity, objectivity, and repeatability should be of paramount importance. Once one starts making subjective adjustments of individual stations’ data, the ability to replicate work becomes almost impossible.

Therefore, more important than the recently reported “do-over” of a global temperature reanalysis proposed by the UK’s Met Office would be for other, independent researchers to do their own global temperature analyses. In my experience, better methods of data analysis come from the ideas of individuals, not from the majority rule of a committee.

Of particular interest to me at this point is a simple and objective method for quantifying and removing the spurious warming arising from the urban heat island (UHI) effect. The recent paper by McKitrick and Michaels suggests that a substantial UHI influence continues to infect the GISS and CRU temperature datasets.

In fact, the results for the U.S. I have presented above almost seem to suggest that the Jones CRUTem3 dataset has a UHI adjustment that is in the wrong direction. Coincidentally, this is also the conclusion of a recent post on Anthony Watts’ blog, discussing a new paper published by SPPI.

It is increasingly apparent that we do not even know how much the world has warmed in recent decades, let alone the reason(s) why. It seems to me we are back to square one.

If anyone were to question the need for data to be freely available, work like this makes it clear.

“In my opinion, simplicity, objectivity, and repeatability should be of paramount importance.”

Isn’t this the case in most things in life?

“Once one starts making subjective adjustments of individual stations’ data, the ability to replicate work becomes almost impossible.”

This is probably the motive.

Or even whether the world has warmed in recent decades?

That’s the real ‘square one’.

/Mr Lynn

Well done, nice work, falling eyelids, see you soon.

“It is increasingly apparent that we do not even know how much the world has warmed in recent decades, let alone the reason(s) why. It seems to me we are back to square one.”

I wholeheartedly concur with you Roy.

Not only do we have a lack of station resolution; we can’t tell whether the effects of clouds (and rain) are captured with only four samples per day. We also have network resolution problems: any station node within a network can only accurately report temperature within a ~500 m radius.

Needless to say, without full resolution it is only too easy to end up with a false result! Your adherence to only ‘surviving stations’ shows this.

Best regards, suricat.

Well I think we all know what this means. Scientific fraud. How many other disciplines have been contaminated by spurious, agenda driven, analysis and data alteration?

The climate warming onion is being peeled and the closer to the core it is peeled, the more rotten that core seems to be.

The first layer was the principal advocates of the CO2 based climate warming, the CRU scientists who by their own words were shown to have massaged and corrupted and possibly deleted relevant data to achieve their personal agendas.

Then the single most important supposedly science-based climate organisation in the whole climate warming scam, the IPCC, was shown, again by its own writings, to be rotten and corrupt and to have deliberately taken on an advocacy role in advising and attempting to influence the world’s governments to alter the very social structure of the way most of the world’s peoples live.

Now right down near the onion’s increasingly rotten core, it seems that the very data that the supposed catastrophic rising of global temperatures is based on, and from which all the claims of global warming emanate, is being shown to have been either accidentally distorted and compromised due to complete incompetence on the part of the advocate climate “scientists” or deliberately and wantonly twisted, massaged and altered by those same “scientists” to achieve a preordained result.

From this it appears that nearly all of the papers, articles and opinions which relied entirely on the veracity of the supposedly science based data supporting the concept of catastrophic global warming are no longer worth the paper they are printed on or the gigabytes of electrons that flowed from their publication.

Why should we ever trust in any way these “scientists” ever again?

If these are the standards of honesty and integrity that so many of the world’s scientists apparently accepted of climate science for nearly two decades, why should we, the public, who pay the salaries and the often lavish grants that fund science, ever again place any trust in any science until science itself is openly seen to be cleaning its filthy Augean stables?

Thank you Roy.

Once again it would seem that Prof Jones reached his conclusion and then selected data to support it.

If this is allowed to stand, the Enlightenment and the Age of Reason may as well never have happened, and we may as well go back to using Aristotle as the font of all wisdom and pigs’ bladders as a means of predicting earthquakes.

Unless the word “spurious” has a specific meaning in climate research, I found its use here to indicate a strong bias of “I’m right and the other is wrong”. I would personally prefer a more neutral term, such as anomalous, which indicates that there is a strange difference without specifying what it means or which dataset is correct. After all, it is stated that the figures being compared are not the same measures. It could be that the difference is due to that fact alone.

Having said that, I found it very interesting. I’ve wondered several times recently what temperature anomaly graphs would look like purely off raw data without any modification.

“It is increasingly apparent that we do not even know how much the world has warmed in recent decades, let alone the reason(s) why. It seems to me we are back to square one.”

It’s been painfully apparent for quite some time that the elitist Climate Scientists themselves did not care about their “science” enough to start from the beginning in deciding what they were measuring and how best to measure it.

It is perhaps even more astonishing that many other people who think they are Climate Scientists and also feed off “Climate Science” didn’t care enough about their all important basic claim – that “it” is warming “globally” – to personally look into what “it” is and how “it” is measured.

They’ve only had over 20 years to find out.

Looks like a straightforward approach to me, as it follows the KISS principle.

And the findings are interesting, to say the least.

It would be great if it could be the start of a genuine discussion with the people that created the CRUT data. And by discussion I mean a focus on content, not on trying to discredit the other party.

As a newcomer to the climate discussion, I am surprised how the two camps talk (badly) about each other instead of with each other.

Dr Spencer,

No, the achievement of your article above is not that you developed a dataset yourself and compared it to the CRU dataset, thereby opening questions about their adjustments . . . and thank you for that.

The truly significant achievement of your article is your contribution to the art of scientific communication. The clearness of your writing strongly illuminates the topic.

I am most grateful for your clear professional style, secondarily grateful for your contribution to the knowledge of the US Surface Temperature Records.

Let there be light . . . . on the temperature dataset.

John

Oh, excellent post, Roy, on several counts. Thank you.

I would like to see a century of global mean temperature changes estimated from individual stations which all have long and checkable track records, with individual corrections for UHI and other site factors, rather than the highly contaminated gridded soup made from hugely varying numbers of stations.

The January spike in warming trends suggests UHI to me. Exactly the same is seen in the Salekhard (Yamal) record over recent years.

Well, ask and ye shall receive. I wrote about using first-order stations to have a look at temperature trends on a thread earlier today, and now find Dr. Spencer has already done something similar.

It is important to know what kind of research is being done on temperature data. And since we cannot yet replicate Jones’ research, we are left to verify the null hypothesis, or in this case, not verify it. This is a good example of verification research (done a different way, with a different analysis, a different data set, etc.) supporting the null hypothesis (i.e., that the CO2 increase is not greatly warming the atmosphere), in contrast to Jones’ work, which rejects it. The design is simple, straightforward, and transparent, and it leaves the ball in the other court to replicate your work and attempt to verify it or not.

And by the way, the paper Leif cited re: forcing left 25% of the warming unexplained. Could a calculation error in Jones’ temperature enhancements be that 25%?

Wouldn’t it be much easier and more fruitful for Dr Spencer to compile the rural stations in the USA and calculate the trend from that data set, without trying to correct Jones’s mistakes in constructing his temperature index? And then perhaps to compare this rural trend with his own UAH trend? For the USA 48, UAH finds a decadal trend of 0.22 degrees C. A preliminary analysis by Dr Long, based on just rural stations in the USA, shows approximately 0.07 or 0.08 per decade. Isn’t that a peculiar inconsistency worth exploring a little bit more (especially when coupled with an even more peculiar CONSISTENCY between Spencer’s data and the NOAA urban, adjusted trend)???

Very insightful explorations.

Good to have US “anthropogenic warming” confirmed!

Could any of the January/February “warming” be due to a “daylight saving time” impact on temperature reading times?

Or could changes in the heating season affect the UHI?

Other examples of “anthropogenic” influence on temperatures are shown in:

Fabricating Temperatures on the DEW Line

The more you read about land-based temperature measurements the more confused you get (well I do).

What was interesting reading the 2009 paper about UHI in Japan (which was reposted on this blog) was that there were a large number of high quality stations with hourly measurement and yet the “correlation” between temperature rise and population density was relatively low (0.4).

At the same time, there was a clear trend showing that increasing population density caused higher temperature measurements, significant at the 99% level.

What that means is that there is definitely a UHI effect in Japan. And also that the variation is huge – microclimate effects probably.
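The apparent paradox of a weak correlation (0.4) that is nevertheless significant at the 99% level comes down to sample size: r = 0.4 means population density accounts for only about 16% (r squared) of the variance, yet with enough stations even that is unlikely to arise by chance. A hedged sketch using the Fisher z-transformation, a standard approximate test; the station counts below are illustrative, not the Japanese paper's.

```python
import math
from statistics import NormalDist


def correlation_significant(r, n, level=0.99):
    """Two-sided test of zero correlation via the Fisher z-transformation.

    Under H0 (rho = 0), atanh(r) * sqrt(n - 3) is approximately standard
    normal, so a weak r can still be highly significant when n is large.
    """
    z = math.atanh(r) * math.sqrt(n - 3)
    critical = NormalDist().inv_cdf(0.5 + level / 2.0)  # about 2.576 at 99%
    return abs(z) > critical
```

With r = 0.4, 100 stations clear the 99% threshold (z is about 4.2), while 30 stations do not (z is about 2.2).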

(The paper also showed that there had definitely been a significant real warming in Japan over three decades).

Perhaps as Roger Pielke Sr says we should really focus on ocean heat content and not on measuring the temperature 6ft off the ground in random locations around the world.

Anthony,

Thank you for posting Dr Spencer’s article.

Anthony, are you (and Dr Spencer) thinking what I am thinking?

You did it with thermometers. It is time to go one level up: this time, a SURVEY OF THE 23 GRIDS OF THE US SURFACE TEMPERATURE DATASET. Do it by assigning each of the 23 grids (5 deg. grid squares) to a volunteer, who would survey the dataset grid cell by grid cell. I can help, though I am no statistician.

John

OK, new contest; find something correct in An Inconvenient Truth.

=================================

“I am computing an average of the 4 temperature measurements at the standard synoptic reporting times of 06, 12, 18, and 00 UTC.”

Are these reported manually, by reading bulb thermometers, and are maxima and minima calculated from these readings? These reporting times are presumably convenient and standard, and would not necessarily coincide with the daily maxima/minima.

This has always puzzled me: even the old bulb thermometers had maximum/minimum markers that could be read and reset once each day, and this information would be even more readily available from the newer thermistors. So why have ‘reporting times’?
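The question can be illustrated with a made-up diurnal cycle. The sketch below compares three daily means: the average of the four synoptic readings, (max + min)/2, and the full 24-hour average. Treating the hourly maximum and minimum as stand-ins for a max/min thermometer, and indexing hours in UTC, are simplifying assumptions; for a U.S. station, 00 UTC falls in the local late afternoon or evening.

```python
def daily_means(hourly, synoptic_hours=(0, 6, 12, 18)):
    """Three ways to summarize one day of hourly temperatures.

    hourly: list of 24 temperatures (deg C) indexed by UTC hour.
    The hourly max and min stand in for a max/min thermometer, which
    would also catch extremes between the hourly readings.
    """
    if len(hourly) != 24:
        raise ValueError("expected 24 hourly values")
    synoptic = sum(hourly[h] for h in synoptic_hours) / len(synoptic_hours)
    return {
        "synoptic4": synoptic,                        # four-report average
        "maxmin": (max(hourly) + min(hourly)) / 2.0,  # (Tmax + Tmin) / 2
        "hourly24": sum(hourly) / 24.0,               # full-day average
    }
```

For a day that stays at 10 deg C except for a short 22 deg C peak at 13-15 UTC, which none of the synoptic times sample, the synoptic mean is 10.0, (max + min)/2 is 16.0, and the 24-hour mean is 11.5. The two methods need not agree on a given day, so their long-term trends can also differ.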

Good thoughtful article, Dr Spencer.

Slightly OT….Have you seen Al Gore’s article in the New York Times where he calls us a “criminal generation” if we ignore AGW? This was published today.

http://www.nytimes.com/2010/02/28/opinion/28gore.html?hp

I’d like to propose a new standard for climate research. I propose that every paper include a zip file containing all data and a script, initiated by a single command, that will automatically carry out all calculations of every number AND graph in the paper. Any needed manual modification of raw data should be carried out by explicit lines in the script, along with an explanation. All needed software should be included in the zip file if possible; therefore, open source software should be strongly encouraged. In order to save download bandwidth, it may be permissible for the script to specify separate data packages or software packages by cryptographic hash, if those packages would be frequently used in many papers. This way we would not only have the data and the code, we would know that both the data and the code were the ones used in the calculations, and we could easily check that the calculations were repeatable. This procedure would add a small burden, especially to the plotting of graphs, which would have to be scripted, but it would dramatically increase the credibility of the research. Skeptics may be able to almost force climate researchers to use this method by using it themselves, and thus establishing it as a required best practice.
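The hash-pinning part of this proposal can be sketched with Python's standard hashlib module: compute the SHA-256 digest of a data package and refuse to proceed if it does not match the digest recorded in the paper's script. SHA-256 and the function names are choices made for illustration; the proposal does not specify a hash algorithm.

```python
import hashlib
import io


def sha256_of_stream(stream, chunk_size=1 << 20):
    """Hex SHA-256 digest of a binary stream, read in chunks to bound memory."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def sha256_of_bytes(data):
    """Convenience wrapper for data already held in memory."""
    return sha256_of_stream(io.BytesIO(data))


def verify_package(path, expected_hex):
    """Raise ValueError unless the file at path has the pinned SHA-256 digest."""
    with open(path, "rb") as f:
        actual = sha256_of_stream(f)
    if actual != expected_hex.lower():
        raise ValueError(f"{path}: expected sha256 {expected_hex}, got {actual}")
    return actual
```

A reproduction script would call `verify_package` on each downloaded package (the path and digest would come from the paper) before running any calculation, so a silently altered data file stops the run instead of changing the results.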

Hey David. Mercury freezes solid at -40 F