Physicist Luboš Motl of The Reference Frame demonstrates how easy it is to show that there is: No statistically significant warming since 1995

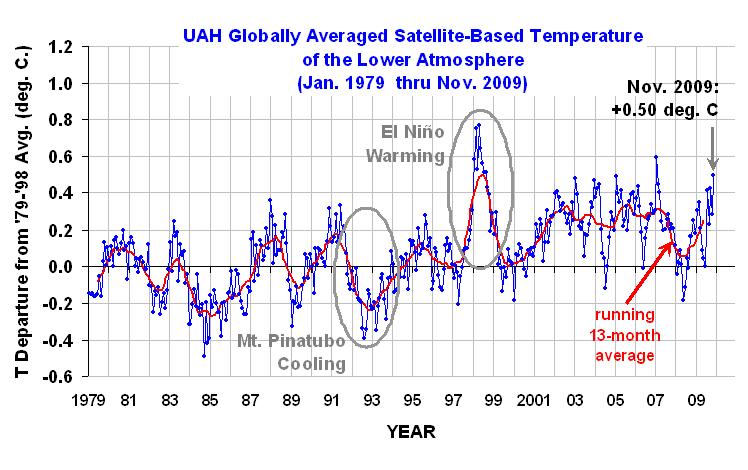

First, since it wasn’t in his original post, here is the UAH data plotted:

By: Luboš Motl

Because there has been some confusion – and maybe deliberate confusion – among some (alarmist) commenters about the non-existence of a statistically significant warming trend since 1995, i.e. in the last fifteen years, let me dedicate a full article to this issue.

I will use the UAH temperatures whose final 2009 figures are de facto known by now (with a sufficient accuracy) because UAH publishes the daily temperatures, too:

Mathematica can calculate the confidence intervals for the slope (warming trend) with concise commands, but I will first calculate the standard error of the slope manually.

x = Table[i, {i, 1995, 2009}]

y = {0.11, 0.02, 0.05, 0.51, 0.04, 0.04, 0.2, 0.31, 0.28, 0.19, 0.34, 0.26, 0.28, 0.05, 0.26};

data = Transpose[{x, y}]

(* *)

n = 15

xAV = Total[x]/n

yAV = Total[y]/n

xmav = x - xAV;

ymav = y - yAV;

lmf = LinearModelFit[data, xvar, xvar];

Normal[lmf]

(* *)

(* http://stattrek.com/AP-Statistics-4/Estimate-Slope.aspx?Tutorial=AP *)

slopeError = Sqrt[Total[lmf["FitResiduals"]^2]/(n - 2)]/Sqrt[Total[xmav^2]]

The UAH 1995-2009 slope was calculated to be 0.95 °C per century. The standard error of this figure, calculated from the fit residuals via the standard formula linked in the comment above, is 0.84 °C/century. So this suggests that the positivity of the slope is just a 1-sigma result, i.e. noise. Can we be more rigorous about it? You bet.
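As a cross-check, the slope and its standard error can be recomputed outside Mathematica. The following is a minimal Python sketch, not the author's code; the function and variable names are illustrative assumptions. It uses the residual-based formula, which gives about 0.84 °C/century and is consistent with the confidence intervals reported by `LinearModelFit`. Using the deviations of y from its mean in place of the residuals gives roughly 0.88 instead.

```python
# UAH annual anomalies (deg C) for 1995-2009, copied from the post.
years = list(range(1995, 2010))
anoms = [0.11, 0.02, 0.05, 0.51, 0.04, 0.04, 0.2, 0.31, 0.28, 0.19,
         0.34, 0.26, 0.28, 0.05, 0.26]


def ols_slope_and_errors(x, y):
    """Return (slope, SE from fit residuals, SE from y deviations about the mean)."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    syy = sum((yi - y_mean) ** 2 for yi in y)
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    # Sum of squared residuals about the fitted line.
    ssr = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    se_resid = (ssr / (n - 2)) ** 0.5 / sxx ** 0.5
    se_total = (syy / (n - 2)) ** 0.5 / sxx ** 0.5
    return slope, se_resid, se_total


slope, se_resid, se_total = ols_slope_and_errors(years, anoms)

# Convert deg C per year to deg C per century before printing.
print(round(100 * slope, 2), round(100 * se_resid, 2), round(100 * se_total, 2))
```

Either way the slope is only about one standard error away from zero.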

Mathematica actually has compact functions that can tell you the confidence intervals for the slope:

lmf = LinearModelFit[data, xvar, xvar, ConfidenceLevel -> .95]; lmf["ParameterConfidenceIntervals"]

The 99% confidence interval is (-1.59, +3.49) in °C/century. Similarly, the 95% confidence interval for the slope is (-0.87, 2.8) in °C/century, and the 90% confidence interval is (-0.54, 2.44) in °C/century. All these intervals contain both negative and positive numbers. No conclusion about the sign of the slope can be drawn at the 99%, 95%, or even the 90% confidence level.
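The intervals above can be reproduced by hand as slope ± t × standard error. Here is a minimal Python sketch under stated assumptions: the critical values are two-sided Student-t values for n − 2 = 13 degrees of freedom read from a standard t-table (inputs, not computed here), and all names are illustrative.

```python
# UAH annual anomalies (deg C) for 1995-2009, copied from the post.
years = list(range(1995, 2010))
anoms = [0.11, 0.02, 0.05, 0.51, 0.04, 0.04, 0.2, 0.31, 0.28, 0.19,
         0.34, 0.26, 0.28, 0.05, 0.26]

n = len(years)
x_mean = sum(years) / n
y_mean = sum(anoms) / n
sxx = sum((x - x_mean) ** 2 for x in years)
slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(years, anoms)) / sxx
intercept = y_mean - slope * x_mean
ssr = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(years, anoms))
std_err = (ssr / (n - 2)) ** 0.5 / sxx ** 0.5

# Two-sided t critical values for 13 degrees of freedom (table values, assumed).
T_CRIT_13 = {0.90: 1.771, 0.95: 2.160, 0.99: 3.012}

# Intervals in deg C per century.
intervals = {
    level: (100 * (slope - t * std_err), 100 * (slope + t * std_err))
    for level, t in T_CRIT_13.items()
}

for level, (low, high) in sorted(intervals.items()):
    print(f"{level:.0%}: ({low:+.2f}, {high:+.2f}) deg C/century")
```

All three intervals straddle zero, which is the point the paragraph makes.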

Only the 72% confidence interval for the slope touches zero. It means that the probability that the underlying slope is negative equals half of the remaining 28%, i.e. a substantial 14%.
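The 72% figure can be checked by asking how much of a Student-t distribution with 13 degrees of freedom lies within ±t, where t is the slope divided by its standard error. The sketch below is a hedged illustration, not the author's calculation: it uses the rounded slope of 0.95 °C/century and the residual-based standard error of about 0.84 °C/century (the value consistent with the intervals quoted above), and integrates the t density numerically with Simpson's rule. The function names are assumptions.

```python
import math


def t_pdf(t, dof):
    """Density of Student's t distribution with `dof` degrees of freedom."""
    coeff = math.gamma((dof + 1) / 2) / (math.sqrt(dof * math.pi) * math.gamma(dof / 2))
    return coeff * (1 + t * t / dof) ** (-(dof + 1) / 2)


def central_coverage(t_stat, dof, steps=2000):
    """P(-t_stat < T < t_stat) via Simpson's rule on [0, t_stat]; steps must be even."""
    h = t_stat / steps
    total = t_pdf(0.0, dof) + t_pdf(t_stat, dof)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, dof)
    return 2 * total * h / 3


slope = 0.95    # deg C/century, rounded value from the post
std_err = 0.84  # deg C/century, residual-based standard error (rounded)
dof = 15 - 2    # 15 annual values, two fitted parameters

coverage = central_coverage(slope / std_err, dof)
p_negative = (1 - coverage) / 2  # one tail, following the post's reading

print(f"interval touching zero: {coverage:.0%}, one-tail share: {p_negative:.0%}")
```

Reading the one-tail share as the probability of a negative underlying slope follows the post's own interpretation.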

We can only say that it is “somewhat more likely than not” that the underlying trend in 1995-2009 was a warming trend rather than a cooling trend. Saying that the warming since 1995 was “very likely” is already too ambitious a claim, one the data don’t support.

kdkd (01:10:30) :

“We predict that co2 will cause warming from scientific information derived from both classical mechanics and quantum theory.”

True. But the relevance of the AGW hypothesis has more to do with predictions of catastrophe. And that requires amplification of the basic physical responses.

There is no physical basis or evidence for amplification whatsoever.

mspelto (04:23:25) :

Thanks for pointing this out. Tamino’s post is indeed very good:

http://tamino.wordpress.com/2009/12/15/how-long/#more-2124

It shows very clearly the serious statistical shortcomings of Luboš Motl’s post and comes to a very different conclusion regarding the significance of recent temperature trends.

Keep in mind. The reason the climate scientists state 15 years is because they didn’t believe the AGW signal could be hidden for that long. It has nothing to do with longer term warming or cooling. It is precisely the AGW signal itself that is important here.

While I’m not sure that was the point of this article, the analysis does make the following question valid … “where is the AGW signal?”.

mspelto (04:23:25) :

Tamino makes two choices: one is taking a 30 year period matching the warming half of a PDO cycle, and the other is choosing GISS instead of satellite data.

While taking only the warming half cycle is about as misleading as you can get, GISS is only the second worst data set after Petersen/Karl’s NOAA data.

Among various issues, this alone is more than enough reason not to rely on GISS:

“…In the ROW (rest of the world), there are almost the same number of negative (urban) adjustments as positive adjustments…”

http://climateaudit.org/2008/03/01/positive-and-negative-urban-adjustments/

mspelto

Here is the original document concerning Helm Glacier:

http://www.sfu.ca/~jkoch/gpc_2009.pdf

Much of its retreat was during the very warm first half of the 20th Century (especially around the 1930’s). It then advanced at various times.

To compare today to the LIA is meaningless. It was much colder then. If the glacier had been observed in the 1730’s or during the MWP it would have been very small.

Tonyb

kdkd (01:10:30) :

“We predict that co2 will cause warming from scientific information derived from both classical mechanics and quantum theory.”

Please explain the justification from quantum theory. Show your workings.

(btw, you forgot to include string theory, and/or M-theory plus 11-dimensional analysis.)

“Kevin Kilty (19:12:54) :

I agree with DocMartyn’s main point – you need a hypothesis to test against. Just plotting a graph and fitting a linear trend is a pointless exercise.

Isn’t the implicit null hypothesis a zero trend in this case? You will find even less significance testing against an assumed positive trend.”

Not at all, the implicit Null Hypothesis is that DeltaTemp=Function[CO2]. The data MUST be plotted with reference to [CO2], which we do know to be rising, in a non-linear manner.

Satellites were adjusted according to cherry picked warmer surface stations. We do not really know now what the actual data was.

_Jim (14:43:02 – 12/26/09), here is something to compare with Tamino’s “famous” E. English early data post of last year.

Here is a plot of the English 1659-2008 data, showing the downturn over the last few years.

http://www.imagenerd.com/uploads/t_est_28-bGGxs.gif

This used a 40 year Fourier convolution filter. The figure below shows the same 1659 data filtered with three different filters. These include 40 year Fourier, MOV and a “filtfilt” 2 pole recursive Chebyshev (runs forward in time, then reverses and runs backward in time; defined better in the MATLAB signal Cond. toolbox). The “filtfilt” and MOV have end point problems the Fourier avoids.

http://www.imagenerd.com/uploads/ave1-raw-smoothed-5rhsb.gif

The next figure compares the Fourier and Empirical Mode Decomposition (EMD) filters with the CRU 1855-2003 data set. The EMD has the ability to use non-stationary and non-linear data sets.

http://www.imagenerd.com/uploads/cru-fig-6-NMyC0.gif

Note the comparison between the two, especially at the end points.

The last figure overlays the data with a Climate4you (http://www.climate4you.com/) global temp composite. This compares the standard data sources and HadCET, E. England & Ave14. Ave14 is a composite of the 14 longest running temperature records, starting before 1800. These include E. England, Uppsala, De Bilt, Berlin, Paris, Geneva, etc., as found on the rimfrost site (http://www.rimfrost.no/).

http://www.imagenerd.com/uploads/climate4u-lt-temps-Ljbug.gif

Seems all have a tendency to bend down over the past 8-9 years.

If you take the blackbody radiation spectrum of the earth and overlay on it the absorption spectrum of CO2 you can see that if CO2 absorbs all the radiation in the bands of its absorption spectrum it will only be a very small part of the total radiation from the earth. This is the reason that the modelers need some “pushing” or amplification mechanism to achieve dramatic predictions such as Hansen’s “tipping”. Since for the last 10 years the CO2 has gone up but the temperature has remained about constant, perhaps the effect of CO2 on greenhouse warming has saturated and increasing concentrations will have no effect. Comments?

Put it this way: if the climate did not change, wouldn’t that be the time to worry?

cohenite (22:06:54) :

99[7] is NOT a cherry pick because it is supported by prominent climate events

It was 1995, not 1997. And it is still a cherry pick as the trend itself [or ‘climate events’] is used to select the starting point.

I did the same thing about a month and a half ago, when I looked at four datasets (UAH, RSS, HadCRUT and GISS) and got similar results.

http://noconsensus.wordpress.com/2009/11/12/no-warming-for-fifteen-years/

Jeff L (the first post)

Have you ever been to the GISS website?

The data and methods are all published right on their website. Your comment is more an indictment of your making a claim on faith rather than checking the facts before making an accusation.

Thanks

Edward

Galen Haugh (23:25:31) : (and others) seem to me to have the correct response to all the AGW claims:

Where is your original source data ?

What is your methodology ?

Where is your source programming (fully documented) ?

– what; you won’t release it ? then GO AWAY and don’t come back until you can provide the basic science behind your claims.

We should spend a considerable amount of time educating our peers & government officials in what this all means & why it is so important.

How dare they claim to be doing science when they fail to meet the most basic premise behind the Scientific Method ?

Another question – based on the stats :

The daily average temperature can NOT be understood by supplying Min/Max values as an illustration of the underlying tendency of the temperature over 24 hours. This is because the ‘signal’ is definitely not sinusoidal; and even if it were, Nyquist requires AT LEAST two samples per cycle; taking only two samples runs a major risk of aliasing the data.

I doubt you could get a decent reconstruction of the ‘signal’ even with four samples; since the actual ‘signal’ is a very skew sine (skew in time) modulated by all sorts of chaotic influences.

Consider a cloudy temperate summer’s day; the sky clears about 13h00 and gives a peak temp before clouding over at 14h00. The sky clears with sunset, causing a protracted depression of the temperature overnight; the overnight temperature spends most of the night hovering around the Min. Clearly the temperature will be lower than the simple Max/Min average for a considerably greater period than it is higher.

– example running (10 minute sample rate) average temperature over the last 24hours at my house (UHI) 4.4C;

Max 6.4 Min 2.9

but the Min/Max average = 4.6 (rounding down to 1 decimal place) – and that’s on an overcast winter’s day/night…

error is 0.2C (4.5%)

So the so called daily ‘average’ ((Min + max)/2) gives a false measure. And that false measure is then used to generate the monthly average (sum of daily average divided by days in the month).
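The gap between the Min/Max midpoint and the time-averaged temperature can be illustrated with a synthetic day. The Python sketch below is only an illustration: the diurnal profile is a made-up assumption (a flat baseline with a brief early-afternoon peak, sampled every 10 minutes), not a measurement, and the names are hypothetical.

```python
import math


def temperature(hour):
    """Hypothetical skewed day: 2.9 C baseline with a short peak near 13:30."""
    return 2.9 + 3.5 * math.exp(-((hour - 13.5) / 2.0) ** 2)


# 144 samples per day, i.e. one every 10 minutes.
samples = [temperature(i / 6) for i in range(144)]

true_mean = sum(samples) / len(samples)         # time-averaged temperature
midrange = (max(samples) + min(samples)) / 2    # the (Min + Max) / 2 "daily average"
bias = midrange - true_mean

print(f"mean of samples: {true_mean:.2f} C")
print(f"(Min + Max) / 2: {midrange:.2f} C")
print(f"difference:      {bias:+.2f} C")
```

For a profile that spends most of the day near its minimum, the midpoint sits well above the sampled mean; for a symmetric profile the two would agree, so the size of the bias depends entirely on the assumed shape.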

Am I missing something ? Or is this GIGO ?

And that’s before we get into the whole adjustment and falsification of ‘missing’ data – sorry I believe that’s called ‘reconstruction’ – but if there was no data; how can you make it up – err reconstruct it ?

Leif Svalgaard (10:24:18) :

I think Motl’s explanation was acceptable. If I read it correctly, he is saying that he was trying to see how far back one had to go to find a statistically significant trend.

If you missed it, it was here:

Luboš Motl (01:24:45) :

Sounded like Motl just went as far back as he could in an attempt to see how long it would take to find statistically significant warming.

” Eve (14:08:45) :

My heating fuel usage shows that there has been cooling since 1997, which is the oldest data I have. I will show fuel usage in Litres per year. I do not heat the house when it is warm therefore each year after 1997 must have been cooler. The furnace has a scheduled maintenance each Nov, the same two people live in the same house and the thermostat settings have not changed.

1997-2767.20 Litres

1998-3057.50 Litres

1999-4009.30 Litres

2000-3874.70 Litres

2001-3586.70 Litres

2002-3752.20 Litres

2003-3634.50 Litres

2004-4072.50 Litres

2005-3293.50 Litres

2006-4276.70 Litres

2007-3700 Litres

2008-4476.20 Litres”

The Swiss Homeowners association has published “Heating-Degree-Days” since 1981. I used the numbers for December/January (in Zurich) and got a very slight positive linear trend (+1 HDD/year for 62 days). That would indicate it got a little bit colder.

According to the official numbers Zurich-winters are 1°C warmer today than in 1980. These are homogenized data, of course.
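The fuel figures quoted above are not monotonic from year to year, so a least-squares line is one way to summarise them. This is a minimal Python sketch using the twelve quoted values; the names are illustrative, and a rising fuel trend is at best a rough proxy for temperature, since it also depends on factors the comment does not quantify.

```python
# Annual heating fuel use in litres, 1997-2008, as quoted in the comment.
years = list(range(1997, 2009))
litres = [2767.20, 3057.50, 4009.30, 3874.70, 3586.70, 3752.20,
          3634.50, 4072.50, 3293.50, 4276.70, 3700.0, 4476.20]


def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    return sxy / sxx


fuel_trend = ols_slope(years, litres)  # litres per year
print(f"fuel use trend: {fuel_trend:+.1f} litres/year")
```

The fitted trend comes out at roughly +87 litres per year over the twelve years.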

Dave F (11:45:32) :

I think Motl’s explanation was acceptable. If I read it correctly, he is saying that he was trying to see how far back one had to go to find a statistically significant trend.

If that were the case, one could quantify that [Motl didn’t] in this way.

Going x years back produces a trend with significance S, then vary x from 1 through N [where N is perhaps 30 as is normally assumed to define ‘climate’] and plot S as a function of x to show that x = 15 was a ‘good’ choice.
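The procedure described above can be sketched in Python. This is an illustration under stated assumptions, not something from the post: it uses only the 15 UAH values listed in the article (so N is 15 rather than 30), it takes the t-statistic (slope divided by its standard error) as the significance measure S, and it ignores autocorrelation. All names are hypothetical.

```python
# UAH annual anomalies (deg C) for 1995-2009, copied from the post.
years = list(range(1995, 2010))
anoms = [0.11, 0.02, 0.05, 0.51, 0.04, 0.04, 0.2, 0.31, 0.28, 0.19,
         0.34, 0.26, 0.28, 0.05, 0.26]


def trend_t_statistic(x, y):
    """Return slope / standard error for an ordinary least-squares line."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    slope = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y)) / sxx
    intercept = y_mean - slope * x_mean
    ssr = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    std_err = (ssr / (n - 2)) ** 0.5 / sxx ** 0.5
    return slope / std_err


# Window lengths from 3 years (the shortest with n - 2 > 0) up to all 15,
# each window ending in 2009.
t_by_window = {
    length: trend_t_statistic(years[-length:], anoms[-length:])
    for length in range(3, len(years) + 1)
}

for length, t_stat in t_by_window.items():
    print(f"{length:2d} years back: t = {t_stat:+.2f}")
```

Because the degrees of freedom change with the window length, each t value would need to be compared against its own critical value before calling a trend significant.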

@ Bob Tisdale (17:23:49) :

Thanks for the replies.

What’s worse than not knowing the answers?

Not searching for them.

I like the idea of “going back far enough to find a significant trend” either positive or negative. This is a great way of finding significant temperature trends to then discover if oceanic trends correlate. My hypothesis is that significant temperature trends (of course one would need to define what makes the temperature trend significant) are nearly always (correlation co-efficient?) correlated with significant oceanic trends. I believe this has been done.

To: kdkd

quoting crosspatch:

[ Any linkage of climate warming and CO2 is pretty much a guess and nothing more. ]

“No, this is incorrect. We predict that co2 will cause warming from scientific information derived from both classical mechanics and quantum theory. We can then do a range of lab experiments, and planetary observations to see whether the theory fits the data. The correspondence is reasonably good. There’s a bunch of things in the rest of your post that are plain incorrect as well (plenty of warming since the 1930s etc).”

No, this is incorrect

“You” are predicting (man-caused) Global Warming based on:

1) Assuming no technological or engineering advances for 400 years in energy production, conversion, or efficiency. (No fusion, no nuclear power production changes, no fast-flux or breeder reactors, no pebble bed or recycled waste used as fuel, no change in uranium, thorium, or plutonium sources, no slow breeder development, no change in nuclear waste disposal methods (that is, continuing our present problem (er, solution) of using (er, ignoring or exaggerating) the issue to stop further nuclear power production), no additional hydro or pumped storage application, ….)

2) Assuming all CO2 production is from man-released causes, then accelerating that assumed increase and extrapolating that accelerated increase for 400 years into the future.

3) Ignoring increased plant life and productivity from the increased CO2 in the atmosphere, but then predicting increased famine because (somehow ????) more plants will die from the assumed heat increase and assumed lack of water caused by the assumed global warming, while also ignoring the longer growing season from this assumed global warming “threat”.

4) Assuming the assumed increase in CO2 concentration will cause an increased atmospheric heat retention, and then multiplying that assumed effect by 10 (sometimes 15) times arbitrarily, because otherwise there is no effect of CO2 on atmospheric heat retention.

5) THEN assuming that EVERYTHING ELSE in our current climate remains exactly the same by allowing NO feedbacks: Assuming that the increase in temperature has no other effect on water vapor, clouds, net flows of air in the upper, middle or lower atmosphere, the PDO, the AMO, polar winds, net reflectivity, heat conditions or humidity, air flow from the jet streams, etc. (This is because the current crude finite element GCM’s you are exclusively relying on for 400 year-long predictions can’t model day or night, winters and summers, jet streams, and any region smaller than a single good sized US state or average European country.)

6) Then you take these simplified extrapolations and merely multiply the effects by factors of hundreds and thousands: if temperatures increase (by 1.5 degrees) then somehow the polar ice caps and all of Greenland will melt (even though average temperatures are colder than -30 degrees!), or a 1.5 degree increase in temperatures will kill 30,000 species (even though an actual 0.5 degree increase in temperatures has killed exactly …. NONE. Somehow, a 1.0 degree change will kill 29,999 more species across the world.)

You predict droughts and floods and hurricanes – with no evidence and no rational justification of ANY of your numbers.

That sir, is what you are predicting. Somehow.

You predict the climate 100, 200, and 400 years into the future, but fail at the first 15 years by multitudes.

And with the deliberate aid of the propagandist (er, press) you are only allowing one side to speak.

Solent:

The theory of chemical bonds is based on quantum theory. It’s rather well understood, and the basis of much of modern civilisation’s infrastructure. Increased co2 levels failing to warm the atmosphere (once short term variability has been accounted for) would be a major blow against this theory. However, there is no evidence for this.

RACookPE1978:

Classic “sceptic” technique of taking a bunch of information, taking bits out of context then extrapolating into areas totally irrelevant to the original premise, and a gish gallop of statements with scientifically dubious provenance.

But Pamela, that is part of the problem:

These “linear” predictions are dead wrong. All of them. From any point back in time.

You have got to begin (as noted in part above) with a gut-level understanding of this world’s climate as a cycle: a series of summed sine and cosine waves of various amplitudes ADDING to each other sometimes and SUBTRACTING from each other at other times.

A volcano throws a single wave (a trough actually) into the mix of from 1/2 year to 1-1/4 years long.

An El Nino throws a single spike into the mix: 3/4 year to 1-1/2 years long.

The AMO/PDO winds add two separate 30 year cycles on top of three sunspot cycles of about 11 years each to a 65 year solar oscillation (the 1870’s peak, dip in 1905-1910, the 1935-40 peak, the dip in the early 70’s and back up to today’s peak).

All of these short term cycles are on top of an independent (?) 800-900 year Optimum Period Cycle. (Roman Warming Period-Medieval Warming Period-Modern Warming Period)

Every one of these pushes the temp’s up or down by a little bit.

Drawing any single line from any two points in the overall curve is simply dead wrong. Today’s temps have leveled off from a local 60 year peak.
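The point about windows on a cyclic signal can be illustrated with a toy example. The Python sketch below is purely hypothetical: it fits straight lines to two different stretches of a single 60-year sine wave (an assumed stand-in for the cycles described above, with no real data) and shows that the fitted slope changes sign with the choice of window.

```python
import math

PERIOD_YEARS = 60.0  # hypothetical cycle length, chosen only for illustration


def cycle(t):
    """Toy temperature signal: one pure sine wave, peaking at t = 15."""
    return math.sin(2 * math.pi * t / PERIOD_YEARS)


def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    return sxy / sxx


rising_window = list(range(0, 15))     # trough-to-peak quarter of the cycle
falling_window = list(range(15, 45))   # peak-to-trough half of the cycle

slope_rising = ols_slope(rising_window, [cycle(t) for t in rising_window])
slope_falling = ols_slope(falling_window, [cycle(t) for t in falling_window])

print(f"slope over years 0-14:  {slope_rising:+.4f} per year")
print(f"slope over years 15-44: {slope_falling:+.4f} per year")
```

Neither slope says anything about a long-term trend in this toy signal, which has none by construction.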

RACookPE1978:

“Classic “sceptic” technique of taking a bunch of information, taking bits out of context then extrapolating into areas totally irrelevant to the original premise, and a gish gallop of statements with scientifically dubious provenance.”

Show me that any of those statements are wrong.

RACookPE1978:

You’ve committed the classic error of assuming that all the feedback mechanisms will go in the direction that you expect them to (i.e. error of wishful thinking). Based on no evidence we’d have to expect that feedback mechanisms will be neutral through random chance. However, the available evidence and underlying theory suggest that there are quite a few positive feedback mechanisms starting to operate.