Source: Mantua, 2000

The essay below has been part of a back-and-forth email exchange for about a week. Bill has done some yeoman’s work here coaxing new information from existing data. Both HadCRUT and GISS data were used for the comparisons to a doubling of CO2, and what I find most interesting is that both Hadley and GISS data come out higher for a doubling of CO2 than NCDC data, implying that the adjustments to the data used in GISS and HadCRUT add something that really isn’t there.

The logarithmic plots of CO2 doubling help demonstrate why CO2 won’t cause a runaway greenhouse effect: the IR returns diminish as CO2 concentrations (ppm) increase. This is something many people don’t get to see visualized.

Another interesting item in the essay is the El Nino event of 1877-78. Bill writes:

The 1877-78 El Nino was the biggest event on record. The anomaly peaked at +3.4C in Nov, 1877 and by Feb, 1878, global temperatures had spiked to +0.364C or nearly 0.7C above the background temperature trend of the time.

Clearly the oceans ruled the climate, and it appears they still do.

Let’s all give this a good examination, point out weaknesses, and give encouragement for Bill’s work. This is a must read. – Anthony

Adjusting Temperatures for the ENSO and the AMO

A guest post by: Bill Illis

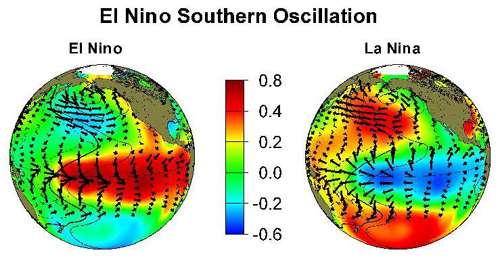

People have noted for a long time that the effect of the El Nino Southern Oscillation (ENSO) should be accounted for when analyzing temperature trends. The same point has been raised for the Atlantic Multidecadal Oscillation (AMO). Until now, there has not been a robust method of doing so.

This post will outline a simple least squares regression solution to adjusting monthly temperatures for the impact of the ENSO and the AMO. There is no smoothing of the data, no plugging of the data; this is a simple mathematical calculation.

Some basic points before we continue.

– The ENSO and the AMO both affect temperatures and, hence, any reconstruction needs to use both ocean temperature indices. The AMO actually provides a greater impact on temperatures than the ENSO.

– The ENSO and the AMO impact temperatures directly and continuously on a monthly basis. Any smoothing of the data or even using annual temperature data just reduces the information which can be extracted.

– The ENSO’s impact on temperatures is lagged by 3 months while the AMO seems to be more immediate. This model uses the Nino 3.4 region anomaly since it seems to be the most indicative of the underlying El Nino and La Nina trends.

– When the ENSO and the AMO impacts are adjusted for, all that is left is the global warming signal and a white noise error.

– The ENSO and the AMO are capable of explaining almost all of the natural variation in the climate.

– We can finally answer the question of how much global warming there has been to date and how much has occurred since 1979, for example. And, yes, there has been global warming, but the amount is much less than the global warming models predict, and the effect even seems to have been slowing down since 1979.

– Unfortunately, there is currently no good forecast model for the ENSO or the AMO, so this method has to focus on current and past temperatures rather than on providing forecasts for the future.

And now to the good part: here is what the reconstruction looks like for the Hadley Centre’s HadCRUT3 global monthly temperature series going back to 1871 (1,652 data points).

I will walk you through how this method was developed since it will help with understanding some of its components.

Let’s first look at the Nino 3.4 region anomaly going back to 1871, as developed by Trenberth (this index is actually smoothed, but it is the least smoothed data available).

– The 1877-78 El Nino was the biggest event on record. The anomaly peaked at +3.4C in Nov, 1877 and by Feb, 1878, global temperatures had spiked to +0.364C or nearly 0.7C above the background temperature trend of the time.

– The 1997-98 El Nino produced similar results and still holds the record for the highest monthly temperature of +0.749C in Feb, 1998.

– There is a lag of about 3 months in the impact of the ENSO on temperatures. Sometimes it is only 2 months, sometimes 4 months; this reconstruction uses a 3-month lag.

– Going back to 1871, there is no real trend in the Nino 3.4 anomaly which indicates it is a natural climate cycle and is not related to global warming in the sense that more El Ninos are occurring as a result of warming. This point becomes important because we need to separate the natural variation in the climate from the global warming influence.

The AMO anomaly has longer cycles than the ENSO.

– While the Nino 3.4 region can spike up to +3.4C, the AMO index rarely gets above +0.6C anomaly.

– The long cycles of the AMO match the major climate shifts that have occurred over the last 130 years: the downswing in temperatures from 1890 to 1915, the upswing from 1915 to 1945, the decline from 1946 to 1975 and the upswing from 1975 to 2005.

– The AMO also has spikes during the major El Nino events of 1877-78 and 1997-98, and other spikes at different times.

– It is apparent that the major increase in temperatures during the 1997-98 El Nino was also caused by the AMO anomaly. I think this has led some to believe the impact of the ENSO is bigger than it really is, and has caused people to focus too much on the ENSO.

– There is some correlation between the ENSO and the AMO given these simultaneous spikes, but the longer cycles of the AMO versus the short, sharp swings in the ENSO mean they are relatively independent.

– As well, the AMO appears to be a natural climate cycle unrelated to global warming.

When these two ocean indices are regressed against the monthly temperature record, we have a very good match.

– The coefficient for the Nino 3.4 region of 0.058 means it is capable of explaining changes in temperatures of as much as +/- 0.2C (0.058 times a peak anomaly of about 3.4C).

– The coefficient for the AMO index of 0.51 to 0.75 indicates it is capable of explaining changes in temperatures of as much as +/- 0.3C to +/- 0.4C.

– The F-statistic for this regression of 222.5 means it is significant at the 99.9% confidence level.
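The regression described above can be sketched in a few lines of Python with NumPy. This is a minimal illustration, not Bill’s spreadsheet: it assumes equal-length monthly arrays for the temperature anomaly, the Nino 3.4 anomaly and the AMO index, applies the 3-month Nino lag by shifting the series, and fits by ordinary least squares. The function name `enso_amo_regression` is hypothetical, and the demo uses synthetic data built from the coefficients quoted above, not real observations.

```python
import numpy as np


def enso_amo_regression(temp, nino34, amo, nino_lag=3):
    """Hypothetical helper: OLS fit of
    temp[t] = a + b * nino34[t - nino_lag] + c * amo[t] + error.

    Returns (coefs, r2, f_stat, resid) with coefs = [a, b, c].
    The first nino_lag months are dropped so every row has a lagged Nino value.
    """
    temp, nino34, amo = (np.asarray(v, dtype=float) for v in (temp, nino34, amo))
    n = len(temp)
    y = temp[nino_lag:]
    X = np.column_stack([
        np.ones(n - nino_lag),   # intercept
        nino34[: n - nino_lag],  # Nino 3.4, shifted nino_lag months earlier
        amo[nino_lag:],          # AMO, no lag
    ])
    coefs, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ coefs
    r2 = 1.0 - np.sum(resid**2) / np.sum((y - y.mean()) ** 2)
    k = X.shape[1] - 1           # number of predictors, excluding the intercept
    dof = len(y) - X.shape[1]    # residual degrees of freedom
    f_stat = (r2 / k) / ((1.0 - r2) / dof)
    return coefs, r2, f_stat, resid


# Synthetic demo only: 1,652 "months" built from the coefficients quoted above.
rng = np.random.default_rng(0)
months = 1652
nino = rng.normal(0.0, 1.0, months)
amo = 0.2 * np.sin(2 * np.pi * np.arange(months) / 780) + rng.normal(0.0, 0.05, months)
temp = rng.normal(0.0, 0.1, months)
temp[3:] += 0.058 * nino[:-3] + 0.51 * amo[3:]

coefs, r2, f_stat, resid = enso_amo_regression(temp, nino, amo)
print(coefs, r2, f_stat)
```

With real data, the inputs would be a temperature series such as HadCRUT3 plus the AMO and Nino 3.4 files linked at the end of the post, trimmed to a common date range. The F-statistic formula used here is the standard overall-regression test, which assumes independent errors.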

But there is a divergence between the actual temperature record and the regression model based solely on the Nino and the AMO. This is the real global warming signal.

The global warming signal (which also includes an error term, UHI, poor siting and adjustments in the temperature record, as demonstrated by Anthony Watts) can now be modeled against the rise in CO2 over the period.

– Warming occurs in a logarithmic relationship to CO2 and, consequently, any model of warming should be done on the natural log of CO2.

– CO2 in this case is just a proxy for all the GHGs, but since it is the biggest one and nitrous oxide is rising at the same rate, it can be used as the basis for the warming model.

This regression produces a global warming signal that is about half of that predicted by the global warming models. The F-statistic of 4,308 is significant at the 99.9% confidence level.

– Using the HadCRUT3 temperature series, warming works out to only 1.85C per doubling of CO2.

– The GISS reconstruction also produces 1.85C per doubling while the NCDC temperature record only produces 1.6C per doubling.

– Global warming theorists now explain the lack of warming to date as due to the deep oceans absorbing some of the increase (not the surface, since this is already included in the temperature data). This means the global warming model prediction line should be pushed out 35 years, or 75 years, or even hundreds of years.

Here is a depiction of how logarithmic warming works. I’ve included these log charts because they are fundamental to how to regress for CO2, and they give a view of global warming that I believe many have not seen before.
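Regressing the leftover warming signal on the natural log of CO2 reduces to a one-variable fit, and the warming per doubling is the slope times ln 2. This sketch assumes the warming signal is simply the observed temperature minus the ENSO/AMO fit; the helper name `warming_per_doubling` is hypothetical, and the demo data are synthetic, generated with 1.85C per doubling.

```python
import numpy as np


def warming_per_doubling(warming_signal, co2_ppm):
    """Hypothetical helper: fit signal = a + b * ln(CO2) by least squares.

    Returns (b * ln 2, a, b); b * ln 2 is the warming per doubling of CO2.
    """
    x = np.log(np.asarray(co2_ppm, dtype=float))
    y = np.asarray(warming_signal, dtype=float)
    b, a = np.polyfit(x, y, 1)  # polyfit returns the highest power first
    return b * np.log(2.0), a, b


# Synthetic demo: CO2 rising from 285 to 385 ppm, 1.85C per doubling plus noise.
rng = np.random.default_rng(1)
co2 = np.linspace(285.0, 385.0, 1652)
signal = 1.85 * np.log2(co2 / 285.0) + rng.normal(0.0, 0.1, co2.size)

per_doubling, intercept, slope = warming_per_doubling(signal, co2)
print(round(per_doubling, 2))
```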

I constructed the formula for the global warming models myself (I’m not even sure the modelers have this perspective on the issue), but it is the only formula that passes through the temperature figures at the start of the record (285 ppm or 280 ppm) and gives the 3.25C increase in temperatures for a doubling of CO2. It is curious that the global warming models are also based on CO2 or GHGs being responsible for nearly all of the 33C greenhouse effect, through its impact on water vapour as well.
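The logarithmic relationship can be written as ΔT = S · log2(C / C0), where S is the warming per doubling and C0 the starting concentration. This is the textbook form, assumed here for illustration; the model formula in the post was constructed by the author and may differ in detail. The sketch shows that each doubling adds the same increment, so each extra 100 ppm adds less than the previous 100 ppm.

```python
import math


def log_warming(c_ppm, c0_ppm=280.0, per_doubling=3.25):
    """Warming (C) relative to c0_ppm under a pure logarithmic CO2 response."""
    return per_doubling * math.log2(c_ppm / c0_ppm)


# Each doubling adds the same amount, for either sensitivity.
for s in (3.25, 1.85):
    first = log_warming(560, per_doubling=s) - log_warming(280, per_doubling=s)
    second = log_warming(1120, per_doubling=s) - log_warming(560, per_doubling=s)
    print(s, round(first, 2), round(second, 2))

# Equal 100 ppm steps give shrinking increments (diminishing returns).
steps = [log_warming(c + 100) - log_warming(c) for c in (280, 380, 480, 580)]
print([round(x, 2) for x in steps])
```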

The divergence, however, is going to be harder to explain in just a few years since the ENSO and AMO-adjusted warming observations are tracking farther and farther away from the global warming model’s track. As the RSS satellite log warming chart will show later, temperatures have in fact moved even farther away from the models since 1979.

The global warming models formula produces temperatures which would be +10C in geologic time periods when CO2 was 3,000 ppm, for example, while this model’s log warming would result in temperatures about +5C at 3,000 ppm. This is much closer to the estimated temperature history of the planet.

This method is not perfect. The overall reconstruction leaves an error that is larger than one would want. The error term is roughly +/- 0.2C, but it does appear to be strictly white noise. It would be better if the resulting error were less than +/- 0.2C, but this appears unavoidable in something as complicated as the climate, given the measurement errors that exist for temperature, the ENSO and the AMO.
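One way to check whether the residual error is white noise is to look at its lag-1 autocorrelation and the Durbin-Watson statistic: white noise gives values near 0 and 2 respectively, while persistent month-to-month errors push the autocorrelation up and the Durbin-Watson value down. This is a standard diagnostic suggested here, not a step from the original spreadsheet; the helper name `residual_diagnostics` and the demo series are hypothetical.

```python
import numpy as np


def residual_diagnostics(resid):
    """Return (lag-1 autocorrelation, Durbin-Watson statistic) of a residual series."""
    e = np.asarray(resid, dtype=float)
    e = e - e.mean()
    denom = np.sum(e * e)
    lag1 = np.sum(e[1:] * e[:-1]) / denom
    dw = np.sum(np.diff(e) ** 2) / denom  # roughly 2 * (1 - lag1)
    return lag1, dw


rng = np.random.default_rng(2)
white = rng.normal(0.0, 0.1, 1652)

# AR(1) series with coefficient 0.6, for contrast with white noise.
ar1 = np.zeros(1652)
for t in range(1, ar1.size):
    ar1[t] = 0.6 * ar1[t - 1] + rng.normal(0.0, 0.1)

print(residual_diagnostics(white))
print(residual_diagnostics(ar1))
```

If the residuals from the ENSO/AMO/CO2 fit showed a lag-1 autocorrelation well above zero, the errors would not be strictly white noise, and the usual F-test significance levels would tend to be overstated.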

This is the error for the reconstruction of GISS monthly data going back to 1880.

There does not appear to be any signal remaining in the errors from another natural climate variable that would affect the reconstruction. In reviewing this model, I have also reviewed the impact of the major volcanoes. All of them appear to have been captured by the ENSO and AMO indices, which I imagine are influenced by volcanoes. There appears to be some room to look at a solar influence, but it would be quite small. Everyone is welcome to improve on this reconstruction method by examining other variables and other indices.

Overall, this reconstruction produces an r^2 of 0.783 which is pretty good for a monthly climate model based on just three simple variables. Here is the scatterplot of the HadCRUT3 reconstruction.

This method works for all the major monthly temperature series I have tried it on.

Here is the model for the RSS satellite-based temperature series.

Since 1979, warming appears to be slowing down (after it is adjusted for the ENSO and the AMO influence.)

The model produces warming for the RSS data of just 0.046C per decade which would also imply an increase in temperature of just 0.7C for a doubling of CO2 (and there is only 0.4C more to go to that doubling level.)
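The step from 0.046C per decade to roughly 0.7C per doubling, and the “only 0.4C more to go”, can be checked by dividing the warming over the period by the number of CO2 doublings over the same period. The CO2 values below are assumptions, not figures from the post: approximate Mauna Loa annual means of about 337 ppm in 1979 and 385 ppm in 2008, and a pre-industrial baseline of 280 ppm. The helper name is hypothetical.

```python
import math


def per_doubling_from_trend(trend_per_decade, co2_start, co2_end, years):
    """Warming per CO2 doubling implied by a linear trend over a period."""
    warming = trend_per_decade * years / 10.0
    doublings = math.log2(co2_end / co2_start)
    return warming / doublings


# RSS trend from the post; the CO2 values are approximate assumptions.
s = per_doubling_from_trend(0.046, co2_start=337.0, co2_end=385.0, years=29)

# Fraction of the first doubling (280 -> 560 ppm) already reached at 385 ppm.
reached = math.log2(385.0 / 280.0)
remaining = s * (1.0 - reached)
print(round(s, 2), round(remaining, 2))
```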

How far this warming trend is from the models can be seen in this zoom-in of the log warming chart. If you apply the same method to GISS data since 1979, it lands in the same circle as the satellite observations, so the different agencies do not produce much different results.

There may be some explanations for this even wider divergence since 1979.

– The regression coefficient for the AMO increases from about 0.51 in the reconstructions starting in 1880 to about 0.75 when the reconstruction starts in 1979. This is not an expected result in regression modelling.

– Since the AMO has been cycling upward since 1975, the increased coefficient might just be catching a ride on that increasing trend.

– I believe a regression is a regression and we should just accept this coefficient. The F-statistic for this model is 267, which is significant at the 99.9% confidence level.

– On the other hand, the warming for RSS is really at the very lowest possible end for temperatures which might be expected from increased GHGs. I would not use a formula which is lower than this for example.

– The other explanation would be that the adjustments of old temperature records by GISS, the Hadley Centre and others have artificially increased the temperature trend prior to 1979, when the satellites became available to keep them honest. The post-1979 warming formulae (not just RSS but all of them) indicate old records might have been increased by 0.3C above where they really were.

– I think these explanations are both partially correct.

This temperature reconstruction method works for all of the major temperature series over any time period chosen and for the smaller zonal components as well. There is a really nice fit to the RSS Tropics zone, for example, where the Nino coefficient increases to 0.21 as would be expected.

Unfortunately, the method does not work for smaller regional temperature series such as the US lower 48 and the Arctic where there is too much variation to produce a reasonable result.

I have included my spreadsheets which have been set up so that anyone can use them. All of the data for HadCRUT3, GISS, UAH, RSS and NCDC is included if you want to try out other series. All of the base data on a monthly basis including CO2 back to 1850, the AMO back to 1856 and the Nino 3.4 region going back to 1871 is included in the spreadsheet.

The model for monthly temperatures is “here” and for annual temperatures is “here” (note the annual reconstruction is a little less accurate than the monthly reconstruction but still works).

I have set up a Photobucket site where anyone can review these charts and others that I have constructed.

http://s463.photobucket.com/albums/qq360/Bill-illis/

So, we can now adjust temperatures for the natural variation in the climate caused by the ENSO and the AMO and this has provided a better insight into global warming. The method is not perfect, however, as the remaining error term is higher than one would want to see but it might be unavoidable in something as complicated as the climate.

I encourage everyone to try to improve on this method and/or find any errors. I expect this will have to be taken into account from now on in global warming research. It is a simple regression.

UPDATED: Zip files should download OK now.

SUPPLEMENTAL INFO NOTE: Bill has made the Excel spreadsheets with data and graphs used for this essay available to me, and for those interested in replication and further investigation, I’m making them available here on my office webserver as a single ZIP file.

Downloads:

Annual Temp Anomaly Model 171K Zip file

Monthly Temp Anomaly Model 1.1M Zip file

Just click the download link above, save as zip file, then unzip to your local drive work folder.

Here is the AMO data which is updated monthly a few days after month end.

http://www.cdc.noaa.gov/Correlation/amon.us.long.data

Here is the Nino 3.4 anomaly from Trenberth from 1871 to 2007.

ftp://ftp.cgd.ucar.edu/pub/CAS/TNI_N34/Nino34.1871.2007.txt

And here is Nino 3.4 data updated from 2007 on.

http://www.cpc.ncep.noaa.gov:80/data/indices/sstoi.indices

– Anthony

Jeff Alberts: “There are probably thousands of ways one could theoretically match the known temperature record, but you’d never know which was the “right” way without fully understanding, and being able to perfectly model, ALL the parameters.”

I think you’re overstating the case, especially in demanding perfection, which is not possible. That said, climate models incorporate well-understood physics, the number of parameters is finite and modellers have ways of testing for individual effects.

There’s a major disconnect here, though, between the sceptic claim that the climate has strong negative feedbacks that drive the climate towards equilibrium, and the implication that the climate is random to the point that anything could happen.

Nor do sceptics always find fault with modelled science. Earlier in the year there was a good deal of celebration across the sceptical blogosphere in the wake of the Keenlyside forecasts, which were based on models.

Richard Courtney: “At best, all that can be said is the model seems to provide outputs that compare to ‘what the climate does’.”

Yes. Our point of difference is over the status of the outputs of the models. You appear to think that they do little but mirror the theory. I think they can provide information to illustrate the way the climate works.

Running a model is similar to running a laboratory experiment. A laboratory experiment is not non-human reality, but it provides evidence that can be used to understand the real world.

“In other words, models do not provide evidence of reality…”

Scientific evidence also includes experiment, and experiments are not “reality” in the sense of pristine nature. They are human devices, intended to test the theory.

OK, phil, so the mean transmittance goes from 0.6466… to 0.6006… for one doubling. What happens for the next doubling?

You could also accurately say, “That’s the point, you’re not guessing, you’re making predictions.”

Mike

I suppose a non-scientist who didn’t have much command of the English language could.

A usual definition of ‘guess’ is ‘to suppose something without sufficient information to be sure of being correct’.

Brendan H:

This matter is only loosely related to assessment and improvement of the Illis Analysis (which is the subject of this debate). However, I make this final reply to you because your comments suggest ideas that may be typical of others who have been influenced by a certain propaganda web site run by some modellers.

You say to me:

“Yes. Our point of difference is over the status of the outputs of the models. You appear to think that they do little but mirror the theory. I think they can provide information to illustrate the way the climate works.”

What you say you think is delusional. Any model (of climate or anything else) does what its constructors have told it to do. Therefore, a model is a construct from the opinions and understandings of its constructors. Hence, outputs of a model are – and can only be – evidence of the opinions and understandings of its constructors.

The “way the climate works” may or may not be similar to the opinions and understandings of a climate model’s constructors. And, therefore, it is not possible for the outputs of climate models to “provide information to illustrate the way the climate works” except in so far as the opinions and understandings of the model’s constructors have been proven to be an accurate description of “the way the climate works”.

But no human knows “the way the climate works”. For example, the Illis Analysis assesses effects of AMO and ENSO but nobody knows what causes AMO and ENSO.

A claim that climate models “can provide information to illustrate the way the climate works” is an assertion that the climate models are constructed by people with deific omniscience (so I suppose you are talking about models of James Hansen and Gavin Schmidt; joke).

Richard

Phil. wrote :

It’s not that it never radiates, just that it’s far below equilibrating with the absorbed radiation. Tom’s atmosphere would never warm up. Also, he forgets about the other gases present; for example, if water has a shorter emission lifetime than CO2, then it will be the preferential emitter (check out the HeNe or CO2 lasers). The IR is absorbed by CO2 (and H2O) and the gas heats up until either it gets hot enough that emission (by either or both species) equals absorption, or convection occurs, the gas expands and the temperature drops. Either way you get a lapse rate; on our planet the convective lapse rate predominates in the troposphere.

Sorry, but I am not sure that you know how the CO2 laser works.

The population inversion is achieved by injecting hot N2 OUT OF EQUILIBRIUM.

The hot N2 transfers energy to CO2 by collision, and the CO2 then releases coherent IR radiation by stimulated emission.

This has nothing to do with what’s happening in the atmosphere (stimulated emission is negligible and there is no population inversion), unless it is to show that translational and vibrational energies interact both ways, which is what I have been saying since the beginning.

You can’t get out of LTE.

Either you now say that it doesn’t exist, and are not only wrong but also contradict yourself, because you wrote earlier that LTE is the fundamental assumption.

Or you say that LTE exists, and then both the constraint of Planck’s energy distribution (which is really synonymous with LTE) and energy conservation say that emission = absorption AND that the temperature at a given pressure is constant, i.e. independent of time.

You are confusing “warming up”, which is a transitory time-dependent process, with the “equilibrium temperature”, which is a stationary time-independent value for given boundary conditions.

If you don’t see that yet, write here your energy conservation for a small volume in LTE and show us that emission is “far below equilibrating with the absorbed radiation”.

I think that you are about to discover a new perpetuum mobile 🙂

Eli Rabbet:

Of course, the result emission = absorption at LTE doesn’t mean that the temperature is constant whatever happens.

Quite trivially, if some GHG concentration varies, the equilibrium temperature will vary too.

Clearly, if one considers the first slice of the atmosphere at the surface boundary, this surface boundary will reach different equilibrium temperatures when the GHG concentration varies (and in turn its conducting, convecting and radiating behaviour will change).

John Finn (02:08:56) Says,

“Apologies to all for pursuing this, but Eric says

“You might want to revise your belief that the surface skin mechanism is not working on the basis of real data.”

Eric

This from the links you provide

“The slope of the relationship is 0.002ºK (W/m2)-1. Of course the range of net infrared forcing caused by changing cloud conditions (~100W/m2) is much greater than that caused by increasing levels of greenhouse gases (e.g. doubling pre-industrial CO2 levels will increase the net forcing by ~4W/m2), but the objective of this exercise was to demonstrate a relationship.”

Note the “object of the exercise was to demonstrate a relationship” because it certainly did not demonstrate that the forcing ( ~1.6 w/m2 ) from CO2 increases to date had resulted in a warming of the world’s oceans. Although, of course, the ocean “skin” temperature might be 0.003 K warmer than it was in 1850.

So No, Eric, I don’t wish to revise my belief. In fact, I’m staggered that someone is getting away with this nonsense.”

Your arguments are contradictory. If the skin is present, it is evidence that the surface has absorbed radiation. The evidence shows that the skin does exist. The measurement is difficult to make, as Richard Courtney said in a previous post.

If the skin is absent, it is evidence that the downwelling radiation has been absorbed in part by the oceans and the region that is warmed has been mixed with the waters below. You have to account for the disposition of the downwelling radiation flux somehow. It can’t just disappear and have no effect. The energy must go somewhere. As you point out, there are upward fluxes of energy from the ocean involved in IR emission, evaporation and convection, but if the downwelling did not exist the net upward flux of energy would be enormously higher.

It doesn’t make sense to claim that absorption of the downwelling radiation from the atmosphere by the ocean is impossible, because the skin layer is so thin. After all, the layer that emits the upwelling radiation is just as thin.

The layer from which the H2O molecules escape by evaporation is even thinner, just a few molecules thick at most.

All this talk of a skin on the ocean, makes it sound like it is some actual physically different kind of water.

The only extent to which the water surface is different is that the absence of water above the surface means that the very surface molecules experience a downward attractive force from the molecules below that is not balanced by molecules above (there being none). That, of course, results in surface tension, which causes the surface to seek the smallest-area, lowest-energy state.

As far as the effect on incoming electromagnetic radiation goes, there isn’t any “mm thick” surface skin that behaves any differently from the rest of the ocean.

However, water is the most opaque known liquid for long wavelengths (IR radiation), such as the thermal radiation from the atmosphere. I suspect that it is somehow related to the fact that water has a very high dielectric constant (81) at radio wavelengths, which results in very rapid extinction of radio waves in water. Whether that is the same mechanism at optical wavelengths, I can’t say for sure, but the downward IR is absorbed by ordinary optical absorption processes in the top 10 microns of the ocean surface.

The top few molecular layers of course, are the source for the ocean’s emitted thermal radiation, and also for the evaporation into the atmosphere.

The water molecules follow something like a Maxwell-Boltzmann distribution of kinetic energies, and the high energy tail of that distribution supplies the energetic molecules that leave the surface, and don’t return (in an open environment). A direct result of the loss of those higher energy molecules is that the surface temperature is depressed.

In addition, you have about 545 calories per gram of latent heat of evaporation transported from the water into the atmosphere.

It is well known that hurricanes leave a cold surface-water track behind them because of the astronomical amounts of energy removed through evaporation. Some people think it is the stirring of the ocean bringing up cooler waters from the deep, but if that happened to any extent, it would simply quench the hurricane and shut it down.

So downward IR radiation in the 5-50 micron range, from the atmosphere (and/or GHG) results in prompt evaporation from the surface layer, but you would hardly call it a skin.

As for the incoming solar radiation, it is well known that most of that energy propagates deep into the ocean. In the wavelength range 450-550 nm, which is where the solar spectrum peaks, water absorption is at a minimum, with an attenuation coefficient for oceanic waters of about 0.001 cm^-1. For mean coastal waters it is more like 0.003 cm^-1, and it doesn’t get as high as 0.004 cm^-1 even for turbid coastal waters. For wavelengths above 550 nm (yellow, orange, red and IR) the absorption is much stronger, and the same thing happens for the shorter wavelengths.

About 3% of the incident solar spectrum is reflected, due to ordinary Fresnel reflection given a refractive index of about 1.33 for water.

The rest penetrates in a manner that is almost like the inverse of the solar spectral peak.

Some of that radiation gets taken up by phytoplankton, and much of it is converted to heating of the water. The warmer water expands, of course, so convection tends to carry that energy back to the surface, in addition to some conduction to surrounding (or deeper) waters. In the usual scheme of things, convection trumps conduction in the transport of thermal energy. Radiation from those deeper waters is irrelevant, because it would be at long-wave IR and would be reabsorbed before going very far.
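The attenuation coefficients quoted above can be turned into penetration depths with the Beer-Lambert law, I(z) = I0 · exp(-αz). This sketch assumes simple exponential attenuation at a single wavelength with the comment’s values of α; real ocean optics vary with wavelength, water type and scattering. The helper name is hypothetical.

```python
import math


def depth_for_fraction(fraction, alpha_per_cm):
    """Depth (cm) at which I/I0 has fallen to `fraction`, given I = I0*exp(-alpha*z)."""
    return -math.log(fraction) / alpha_per_cm


for label, alpha in (("oceanic", 0.001), ("mean coastal", 0.003), ("turbid coastal", 0.004)):
    e_fold_m = depth_for_fraction(math.exp(-1), alpha) / 100.0  # 1/e depth in metres
    one_pct_m = depth_for_fraction(0.01, alpha) / 100.0         # 1% depth in metres
    print(f"{label}: 1/e at {e_fold_m:.1f} m, 1% at {one_pct_m:.1f} m")
```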
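The reflection figure can be compared with the Fresnel equations for an air-water interface with n ≈ 1.33. This sketch assumes unpolarized light and a flat, calm surface: at normal incidence it gives about 2%, and reflectance grows as the sun gets lower, so values averaged over sun angles come out higher than the normal-incidence figure. The helper name is hypothetical.

```python
import math


def fresnel_reflectance(theta_deg, n=1.33):
    """Unpolarized reflectance of a flat air-to-water surface (n1 = 1)."""
    ti = math.radians(theta_deg)
    tt = math.asin(math.sin(ti) / n)    # Snell's law: refracted angle
    ci, ct = math.cos(ti), math.cos(tt)
    rs = (ci - n * ct) / (ci + n * ct)  # s-polarized amplitude coefficient
    rp = (n * ci - ct) / (n * ci + ct)  # p-polarized amplitude coefficient
    return 0.5 * (rs**2 + rp**2)


for angle in (0, 30, 60, 80):
    print(angle, round(100 * fresnel_reflectance(angle), 1), "%")
```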

So the IR radiation emitted from the ocean is a property of the surface layer only, and that would normally be the warmest water.

Anything the returned IR from the atmosphere does to the surface, couldn’t affect the incoming solar radiation in any meaningful way. It is going to be largely returned to the surface, but it isn’t going to generate any significant amount of prompt evaporation, like the IR does.

Of course, the preceding presumes somewhat quiet conditions; the effects of storm-induced turbulence will greatly complicate the issue.

But I don’t see any great thermal engine pumping solar energy into the abyssal depths of the ocean.

I am sure someone with academia’s access to the numerical data for ocean conductivity and expansion and other thermal properties, can do the calculations for conduction, convection, and evaporation, if they want to quantify the different processes.

I read above some very interesting comments about science; observation, experiment, models and theory; and there seems to be some creative explanations; which don’t jibe with anything I ever learned.

The lay person typically thinks that scientific theories explain how the universe works.

Actually, the only thing that explains how the universe works, the reality, is that which is observed and possibly measured. As for laboratory experiments not being reality, how could that be? They are observations and measurements that are every bit as real as going outside and holding up a moist finger to see which way the wind is blowing. Lab experiments are simply reality in a controlled environment, so that extraneous influences that may hide the reality can be minimised.

There is a limit even to what we can observe or measure. Heisenberg, in his principle of “Unbestimmtheit” (indeterminacy), tells us that in the very act of observing, we alter the conditions so that what we seek to measure changes in an unpredictable manner. So we cannot even observe the present state of a single particle, let alone the whole universe, and that makes any prediction of the future state an impossibility.

At best we can gather statistical information about what mostly happens in repeated circumstances.

So the universe is simply far too complex to ever describe how it works; and science theories don’t attempt to do that.

A scientific theory is a rigorous description of the properties which we assign to a completely fictional model that we create out of whole cloth. So the model and the theory are one and the same. As a result of our assignment of certain properties to our theoretical model, we are able, using mathematics, to exactly describe the behavior of the model in any defined circumstance (experiment).

The mathematics, of course, is no more real than the model; we made all that stuff up in our heads also, and we created our mathematics for the purpose of analysing the behavior of our fictional models.

Some people think that mathematics is some universal language, that must exist anywhere in the universe.

If you believe that, why don’t you write down here a short list of the objects and elements of mathematics that you are sure exist somewhere in the real universe.

Don’t waste your time; there are none. The real universe contains no points, no lines, no circles, no spheres, no conic sections; it is all pure fiction that we made up.

The simple Cartesian coordinate equation x^2 + y^2 + z^2 = r^2 describes a mathematical sphere.

No amount of prestidigitation can cause that equation to explain the presence of 8 km high mountains on the surface of the earth.

So our models/theories of science are entities unto themselves, and we can describe in great detail how they work.

Now, we constructed our models in the first place with the idea of building something that we believe behaves in much the same way that the real universe does. We can run experiments (simulations) with our fictional models that are not analogous to any real experiment that anybody actually did in the real universe. If our model does something interesting, we can then perform the analogous experiment to see if we can observe a similar behavior in the real universe.

We are very intolerant of nonconformity between the calculated behavior of our fictional models and the real observed behavior of the universe.

When that happens, and we eliminate experimental error sources, and mathematical calculation errors, our model becomes suspect, and we seek to reconstruct it, and assign it different properties, in such a way that we eliminate the discrepancy between real universe observation, and fictional model simulation.

We were quite happy with Newtonian Gravitation, until we observed a discrepancy in the rate of precession of the perihelion of Mercury, amounting to 43 seconds of arc error per century. Einstein’s general theory of relativity, gave us a new model of gravity, that eliminated that lousy 43 seconds of arc discrepancy.

So just how happy are we supposed to be, with a climate model of planet earth, that depicts the earth as an isothermal sphere at a constant temperature of about +15 deg C, bathed all over its entire surface, with solar radiation at 342 Watts per square meter; even at the south pole in the winter midnight darkness. Then the surface radiates at 390 Watts/m^2 at all points in the infrared, while green house gases apply an unbalancing “forcing”, to cause the planet to warm up or cool down.

Frankly; such a model, as enshrined in the official NOAA global energy budget; doesn’t meet the necessary test of behaving similarly to the real universe.

Urban Heat Islands (UHI) are real entities in the real universe; so they should not screw up the description of how the planet warms or cools.

The only reason they are a proble, is that the information (measurments) obtained from UHIs is not properly accounted for in the models that represent the behavior of the planet and its climate.

Maybe it is time to actually model a real planet; like the one we are most interested in knowing about.

After an intelligent and erudite discourse on how the progress of science proceeds,

George Smith says,

“So just how happy are we supposed to be with a climate model of planet earth that depicts the earth as an isothermal sphere at a constant temperature of about +15 deg C, bathed over its entire surface in solar radiation at 342 Watts per square meter, even at the South Pole in the winter midnight darkness? The surface then radiates 390 Watts/m^2 in the infrared at all points, while greenhouse gases apply an unbalancing “forcing” to cause the planet to warm up or cool down.

Frankly, such a model, as enshrined in the official NOAA global energy budget, doesn’t meet the necessary test of behaving similarly to the real universe.

Urban Heat Islands (UHI) are real entities in the real universe, so they should not screw up the description of how the planet warms or cools.

The only reason they are a problem is that the information (measurements) obtained from UHIs is not properly accounted for in the models that represent the behavior of the planet and its climate.

Maybe it is time to actually model a real planet, like the one we are most interested in knowing about.”

The above diatribe is a straw man argument, and the author should know better. The climatology models do not assert that the planet is an isothermal sphere etc. This is just a gross calculation of averages of measured values and does not constitute a real model. The models are termed “General Circulation Models” because they examine how the absorbed energy is circulated around a globe that contains a real atmosphere, oceans and land masses.

So what is the model? The IPCC report does not tell you the variables or the state conditions. What flux does the model contain for heat emissions from the earth itself? The ground does have a heat capacity, and it is warmer the deeper you get.

I have pored over the IPCC documents trying to get even an inkling of what they model and what input states they use, and I can’t get it.

Eric,

Actually, the author does know better, and he knows that any model that does not model the real-time workings of the planet, and its radiant energy inputs and outputs, is not likely to come up with believable results.

The NOAA model of the earth energy budget assumes a radiant emission that depends on an assumed average temperature, whereas in the real world the radiant emission from the earth’s surface ranges over more than an order of magnitude from the coldest regions to the warmest. And the total emission computed for an “average temperature” model always underestimates the true emission, because emission depends on the fourth power of the temperature, not on the temperature itself. So just what is the point of computing a global average temperature? It has no more scientific validity than computing an average global telephone number or enumerating the average number of animals per square km on earth.
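The underestimate described above is an instance of Jensen’s inequality: because T^4 is convex, the average of σT^4 over a set of temperatures can never be smaller than σ times the fourth power of their average temperature. A minimal Python sketch, assuming unit emissivity and four made-up regional temperatures (not measurements), compares the two quantities:

```python
# Stefan-Boltzmann constant in W m^-2 K^-4
SIGMA = 5.670374419e-8


def blackbody_flux(t_kelvin: float) -> float:
    """Ideal blackbody emission; emissivity is assumed to be 1."""
    return SIGMA * t_kelvin ** 4


# Hypothetical surface temperatures (K) for a few regions; illustrative only.
temps = [220.0, 260.0, 288.0, 305.0]

# Emission computed from the average temperature...
mean_t = sum(temps) / len(temps)
flux_of_mean = blackbody_flux(mean_t)

# ...versus the average of the emission computed region by region.
mean_of_flux = sum(blackbody_flux(t) for t in temps) / len(temps)

print(f"mean temperature     : {mean_t:.2f} K")
print(f"flux at mean temp    : {flux_of_mean:.1f} W/m^2")
print(f"mean of local fluxes : {mean_of_flux:.1f} W/m^2")
```

The two numbers coincide only when every temperature is identical; the wider the spread of temperatures, the larger the gap.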

Besides, GISStemp doesn’t measure anything except GISStemp; it certainly doesn’t measure any average temperature for the earth, or even for the earth’s surface, or even for the air five feet above the earth’s surface. It doesn’t even cite the results in any standard temperature scale, but refers to a baseline that is itself unknown.

How do you use a baseline period from 1961 to 1990, or any other recent epoch, as a scale to plot “Data” from a thousand years ago, when there was no information about what would happen from 1961 to 1990?

How the atmosphere and the oceans circulate around the planet and the continents is certainly something I am not schooled in, so I just assume that there are those who do know about that stuff; but as far as the overall question of whether the planet is gaining or losing energy on net, I’m quite confident I have a good understanding of how that works, and you can’t figure that out by taking the averages of anything, because the energy transport mechanisms are highly nonlinear.

Besides, as I have stated before, the global sampling regimen, such as goes into Dr. James Hansen’s ritual, falls far short of complying with the well-understood laws for sampled-data systems.
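The comment does not name the laws it has in mind; assuming it means the Nyquist-Shannon sampling theorem, the core hazard is aliasing: a signal that varies faster than half the sampling rate produces exactly the same samples as a slower, fictitious signal. A minimal Python sketch with made-up frequencies (in cycles per sampling step) shows this:

```python
import math


def sample_cosine(freq_cycles_per_step: float, n_samples: int) -> list[float]:
    """Sample cos(2*pi*f*n) at integer steps n = 0, 1, ..., n_samples - 1."""
    return [math.cos(2 * math.pi * freq_cycles_per_step * n) for n in range(n_samples)]


# Sampling once per step puts the Nyquist limit at 0.5 cycles per step.
fast = sample_cosine(0.9, 12)  # above the Nyquist limit: undersampled
slow = sample_cosine(0.1, 12)  # a genuinely slow signal

# The undersampled fast signal cannot be told apart from the slow one.
max_diff = max(abs(a - b) for a, b in zip(fast, slow))
print(f"largest sample-by-sample difference: {max_diff:.2e}")
```

Because cos(2π·0.9n) = cos(2πn − 2π·0.1n) = cos(2π·0.1n) for every integer n, no processing of the samples alone can recover which signal was actually present.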

The thermal processes that go on in different global terrains bear no simple relationship to the local temperatures, so even if you could measure the true global mean temperature (which you can’t), it tells you exactly nothing at all about the energy flows.

With a summertime daily temperature range spanning almost 150 deg C from the hottest surface locations to the coldest, no average temperature or GISStemp machinations are going to tell you anything useful about whether the planet is warming up or cooling down.

So, since you used the term, why don’t you give your standard definition of what a “straw man” argument is? I notice people like to throw that term around when they are engaged in what passes for debate.

You will notice, Eric, that I made no mention whatsoever of the so-called GCMs; I never said a word about circulation models.

I did specifically refer to a CLIMATE model; not a CIRCULATION model (different C-word), and I referred in that to a picture of the earth energy balance (budget) as published on the official NOAA weather site. The “climate model” I referred to in that statement is the model that yields that precise energy budget picture.

If that model upsets you, then maybe you could take that up with NOAA.

I am also willing to entertain anybody else’s description of a model that they believe will yield the same energy fluxes that the NOAA diagram depicts.

I actually made one that places the earth at the center of the universe (well, at least the local universe), surrounded by a hollow spherical sun with a radius of 93 million miles. Every point on the inside surface of that hollow sun emits electromagnetic radiation that is confined to an emission solid angle of about 0.5 degrees angular diameter, and is essentially constant over that angle and zero elsewhere.

Such a model will produce a half-degree angular diameter “sun” that is directly overhead at any point on earth at all times. Well, I won’t pursue the construction of that model any further, because clearly it is nothing like anything we have ever experienced. Of course, neither is the NOAA picture which it models.

So, Eric, don’t create your own straw man if you think you see another. As I said, I made no mention of GCMs whatsoever.

George,

What you call a model is not a model in any sense. It simply is a representation of an annual average energy budget, to give an idea of the energy fluxes in the atmosphere over a period of a year. It is based on measurements.

I consider such an average informative and not the least upsetting.

There is no way that the total picture of the earth’s climate as modelled can be put on a website that can be absorbed by the average person who is interested in climate.

If you want an accurate daily model calculated for each minute or hour for years, you are asking for something that is unrealizable until computers become very much larger and faster, and even then it is unlikely that you will be satisfied. Meanwhile the scientists will make do with what they have and the answers will be imperfect and improve with time.

TomVonk (03:53:05) :

Until you drop your strange theory that the presence of a GHG in the atmosphere doesn’t cause the atmosphere to warm up, there’s really nothing to discuss; you’re obviously a disciple of the Jack Barrett school of physics.

I’m well aware how the CO2 laser works, the one I built works rather well!

The reference to the He/Ne and CO2 lasers was to point out that a gas which has a long radiative lifetime can give up its energy to a shorter lived species which preferentially radiates, in response to one of your earlier comments.

Richard: “Hence, outputs of a model are – and can only be – evidence of the opinions and understandings of its constructors.”

If the creators of the models have some understanding of the earth’s climate, then the models will also incorporate those understandings. And the models do incorporate much that is known about the climate, for example in the form of well-established physical laws.

As for the accuracy of climate models, the IPCC third report concluded that models provided a reasonable agreement with observations, although models and observations differ in a number of areas.

Interestingly, models had predicted that tropospheric warming should be greater than surface warming, although the data seemed to show otherwise. But the data were shown to be faulty and corrections have brought them closer to the models. This boosts our confidence in the models.

“A claim that climate models “can provide information to illustrate the way the climate works” is an assertion that the climate models are constructed by people with deific omniscience…”.

No it doesn’t. Omniscient beings would not need models. One can gain sufficient understanding of part of the climate system, and/or a general understanding of climate trends, without requiring omniscience.

It seems that serious debate of the Illis Analysis has ended here and has been replaced by promotion of pseudo-science.

Brendan H, your comments concerning models are plain silly.

It does not matter if the climate models “incorporate much that is known about the climate, for example in the form of well-established physical laws.” What matters is whether the climate models are an adequate emulation of climate, as demonstrated by their emulation of reality and their demonstrated forecasting skill.

The indications of any model can only be trusted to the degree that those indications are observed to agree with reality. And the predictions of a model can only be trusted to the degree that the model has demonstrated forecasting skill. These facts are true of all models including climate models.

Clearly, the climate models do not adequately emulate reality because they fail to emulate important climate behaviours such as AMO, ENSO, etc.

And the ability of a computer model to appear to represent existing reality is no guide to the model’s predictive ability. For example, the computer model called ‘F1 Racing’ is commercially available. It “incorporates” “well-established physical laws” (if it did not, then the racing cars would not behave realistically), and ‘F1 Racing’ is a much more accurate representation of motor racing than any GCM is of global climate. But the ability of a person to win a race as demonstrated by ‘F1 Racing’ is not an indication that the person could or would win the Monaco Grand Prix if put in a real racing car. Similarly, an appearance of reality provided by a GCM cannot be taken as an indication of the GCM’s predictive ability in the absence of the GCM having any demonstrated forecasting skill.

It is extremely improbable that – within the foreseeable future – the climate models could be developed to a state whereby they could provide reliable predictions of global climate over any time scale. This is because the global climate system is extremely complex. Indeed, the global climate system is more complex than the human brain (the climate system has more interacting components – e.g. biological organisms – than the human brain has interacting components – e.g. neurones), and nobody claims to be able to construct a reliable predictive model of the human brain. It is pure hubris to assume that the climate models are sufficient emulations for them to be used as reliable predictors of future global climate over any time scale when the models have no demonstrated forecasting skill.

And the climate models cannot have demonstrated forecasting skill over the medium and long terms. None of the existing climate models has existed for 20, 50 or 100 years, so it is not possible to assess their predictive capability on the basis of their demonstrated forecasting skill over such time scales; i.e. the models have no demonstrated forecasting skill over such time scales. This is why their indications of future climate change are said to be “projections” and not “predictions”.

No model’s predictions should be trusted unless the model has demonstrated forecasting skill. But, as stated above, it is not possible to assess the predictive capability of the climate models on the basis of their demonstrated forecasting skill, because none of them has existed for sufficient time for them to have demonstrated any forecasting skill for 20, 50 or 100 years ahead.

Put bluntly, predictions of the future provided by existing climate models have the same degree of demonstrated reliability as has the casting of chicken bones for predicting the future.

You make a laughable appeal to authority when you say;

“As for the accuracy of climate models, the IPCC third report concluded that models provided a reasonable agreement with observations, although models and observations differ in a number of areas.”

Well, use that IPCC report as religious scripture if you want, but those of us who are interested in the science of climate change look at facts and evidence. You cite the IPCC report that included the now discredited ‘Hockey Stick’ of Mann, Bradley and Hughes (i.e. the most discredited graph in the history of statistics) before it had been published elsewhere. And that IPCC report included the ‘Hockey Stick’ 8 times, but the most recent IPCC report dropped it and made no mention of it.

Then you make a direct attack on the scientific method when you say:

“Interestingly, models had predicted that tropospheric warming should be greater than surface warming, although the data seemed to show otherwise. But the data were shown to be faulty and corrections have brought them closer to the models. This boosts our confidence in the models.”

The data were not “shown to be faulty”. And if they had been “shown to be faulty” then that could not mean “This boosts our confidence in the models” because scientists always place observation of reality before any model of assumed reality.

The most recent US Climate Change Science Program (CCSP) report attempted to hide the inconvenient truth that the ‘fingerprint’ of AGW is absent and, therefore, observed warming is not a result of the AGW the climate models project. But it is obvious to anybody who compares the two relevant Figures in Chapters 1 and 5 of that CCSP report that

(i) the climate models predict AGW will cause most warming to occur in the lower troposphere at altitude in the tropics,

and

(ii) this predicted warming is not happening according to measurements of the lower troposphere.

But the same CCSP report that included the two cited figures asserts that there is no significant difference between them! The report justifies this strange assertion by using the fact that outlying data points of the temperature measurements overlap with the indications of the computer models. This justification is nonsense according to the practices of both science and statistics: it is a claim that the bulk of the measurements should be ignored and trust should be placed in a few outlying data points that fit a preconceived notion. But that claim is ‘double edged’: if it is accepted then it has to be agreed that there was no global warming in the twentieth century.

I again repeat that a theory is an idea, a model is a representation of the idea, and reality is something else.

You can have your superstitious belief in climate models but I will continue my scientific view of all models (including climate models) so I will make no further responses to your proclamations of your superstition.

Richard

How would one expect to accurately model a complex chaotic system with poor knowledge of any of the parameters? What is the LOSU (level of scientific understanding) of clouds? Aerosols? We’re still learning about this stuff, so I hardly see how we can expect to accurately model such a thing. Best guesses which seem to correspond to what we see are just chance, really. We can see this with weather models that CONSTANTLY get things wrong even just the next day. Sometimes they get it right, but the fact that they get it wrong probably just as often shows that there’s no real skill there.

There’s also a major disconnect with the AGW claim that positive feedbacks will overwhelm any “natural” signal. I don’t believe climate is totally random, but we certainly don’t understand the major drivers, much less any secondary or tertiary drivers.

As someone else said, even a broken clock is right twice a day. My beef with models is the use of the output as evidence. They’re not evidence, they’re supposed to be used to test theories/parameters. But without practically perfect knowledge of the complex chaotic system being modelled, any results which replicate reality are most likely accidental. And even a percent or two of error can cause things to spiral away from what the reality might be, but there’s simply no way to know until the reality in time arrives.

Phil.

“Until you drop your strange theory that the presence of a GHG in the atmosphere doesn’t cause the atmosphere to warm up, there’s really nothing to discuss; you’re obviously a disciple of the Jack Barrett school of physics.”

I observe that you only hand-wave, make irrelevant statements and avoid under any circumstances saying something that would have scientific value.

But you are on the hook.

Everybody on this thread has seen that you wrote that CO2 radiates (much) less than it absorbs because “it transfers its energy to N2 by collisions”.

In mathematics, less is not the same thing as equal or more.

So I have already asked you twice to write up energy conservation for a small volume in LTE (local thermodynamic equilibrium) and to SHOW that CO2 radiates much less than it absorbs.

That should not be too hard for somebody who claims to know how a laser works.

I’ll make it even easier for you: suppose that there is only CO2 and N2 in the volume.

Not surprisingly, you have so far been unable to do even this trivial physics.

As long as you don’t, there is indeed nothing to discuss in your meaningless posts.

“” Eric (19:42:08) :

George,

What you call a model is not a model in any sense. It simply is a representation of an annual average energy budget, to give an idea of the energy fluxes in the atmosphere over a period of a year. It is based on measurements. “”

Well, I don’t want to consume much more of Anthony’s space on this, but I do think the NOAA energy budget drawing creates an illusion that is not even close to reality, so it tends to mislead rather than instruct.

Just the simple change of replacing the solar constant at 1368 W/m^2 with an “average” insolation 1/4 of that value illustrates the point. The only way that 342 number has any validity is if you DO assume that it falls on the entire surface of the globe at that level. The fact that the sun really strikes the daylight side of the earth at up to 4 times the rate in the chart (directly beneath the sun), and that very little of the incoming radiation ever strikes anywhere near the poles, gives an immediate explanation of exactly why the earth is not an isothermal sphere. In addition, the higher real rate in the tropics results in much quicker warming to a much higher temperature. As a consequence, the earth cools at a much higher rate than the chart’s numbers depict.
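The factor of 4 is pure geometry: the earth intercepts sunlight over its cross-sectional disk, πR², but the budget diagram spreads that power over the whole sphere, 4πR². A short Python sketch, using the 1368 W/m^2 solar constant quoted above and ignoring albedo, shows where the averaged figure comes from:

```python
import math

SOLAR_CONSTANT = 1368.0  # W/m^2, the figure quoted in the comment


def mean_insolation(solar_constant: float, radius: float = 1.0) -> float:
    """Spread the power intercepted by the disk (pi R^2) over the whole sphere (4 pi R^2)."""
    intercepted_power = solar_constant * math.pi * radius ** 2
    sphere_area = 4.0 * math.pi * radius ** 2
    return intercepted_power / sphere_area


print(f"{mean_insolation(SOLAR_CONSTANT):.1f} W/m^2")  # 1368 / 4
```

The radius cancels out, which is why the averaged figure is the same for any planet at the earth’s distance from the sun; the geometry, not the planet’s size, sets the factor of 4.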

It might appear that the earth heats up in the daytime and cools down at night, unless it is cloudy, in which case it warms up at night; every TV weather man can tell you that.

Actually, the only part of that which is true is that the earth heats up during the daytime. It also COOLS during the daytime, and at a much higher rate than it cools at night, clouds or no clouds. And if it is cloudy at night, it never heats up; it still cools, but at a slower rate. All of that is masked by the static picture NOAA portrays.

The huge imbalance between equatorial insolation and polar insolation is what makes the global circulation mechanisms mandatory. The roughly blackbody radiation rate from the coldest to the hottest surface regions (on any midsummer day) ranges over a factor of about 12. As a result, the polar regions are very poor at cooling the planet; they don’t radiate nearly fast enough.
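The spread comes from the fourth-power dependence in the Stefan-Boltzmann law. As a rough check, a Python sketch assuming unit emissivity and two illustrative surface temperatures about 150 deg C apart (−90 deg C and +60 deg C, chosen here for illustration, not taken from any dataset) gives a ratio of roughly 11, the same order as the factor quoted:

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def celsius_to_kelvin(t_c: float) -> float:
    return t_c + 273.15


# Assumed endpoints roughly 150 deg C apart; illustrative, not measured values.
coldest_c = -90.0
hottest_c = 60.0

# Blackbody emission at each endpoint (emissivity assumed to be 1).
coldest_flux = SIGMA * celsius_to_kelvin(coldest_c) ** 4
hottest_flux = SIGMA * celsius_to_kelvin(hottest_c) ** 4
ratio = hottest_flux / coldest_flux

print(f"{coldest_flux:.0f} W/m^2 vs {hottest_flux:.0f} W/m^2, ratio {ratio:.1f}")
```

Pushing the hot endpoint up by another 10 deg C raises the ratio to about 12, which shows how sensitive the figure is to the assumed extremes.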

The whole idea of a “mean global temperature”, such as GISS, RSS, UAH and HadCRUT imply (at least to the lay public that is aware of any of them), is not of any real scientific value, because there is no link between such a number and any evaluation of the net flow of energy into and out of the planet.

To be talking about changes of tenths or even thousandths of a degree on a temperature map that actually has about a 150 deg C peak-to-peak range is totally absurd.

As to the analysis work that started this thread, which, as Richard Courtney has said, has about petered out (or been hijacked), I am quite sure that those ENSOs, AMOs and PDOs are of great interest to those who study the spatial properties of climate, as distinct from its global total aspects. But I wonder what, if anything, can be said about whether any of those cyclic events influence the climate at the earth’s poles.

What surprises me most about climate discussions, at least to the extent that Anthony has been able to generate them on this site, is how seldom there is any mention of the sun as having anything at all to do with the climate. Well, we have had a very interesting sunspot-free year, but the IPCC supporters have gone out of their way to dismiss the sun and its changes as being involved in the earth’s climate. They seem to focus only on the effect of GHGs (except water) and are quite unfazed by the observable fact (from ice cores at least) that atmospheric CO2 changes have never ever led to subsequent global surface temperature changes, although the converse is clearly true.

Feedback systems always have a propagation delay, so it is never possible for the output signal (effect) to occur before the input signal (cause).

George

It looks like this thread is wrapping up now, so I just wanted to say thanks to everyone for their comments and questions, and special thanks to Anthony Watts for allowing me to put forward this method/technique for adjusting temperatures for the natural variation caused by various ocean indices/patterns.

I’m still working on the reconstruction. One thing I noticed in trying to model southern hemisphere temperatures is that the SH has a great many unusual up-and-down swings in temperatures (which are not evident in the northern or tropical series). The same swings occur in the raw southern Atlantic ocean temperatures I had downloaded, and when I tried to employ the same smoothing techniques that are applied to the ENSO and AMO, the explanatory power of the swings in the raw data disappeared. So I am trying to use the unadjusted, unsmoothed raw data for the Nino 3.4 region and the AMO regions (there is not as much of an upward trend in the raw unsmoothed AMO data, BTW). The raw unsmoothed ocean temperature data provide a more faithful reconstruction of global temperatures, but there is a little too much “squiggle” afterwards. I haven’t decided whether to just use the raw data or to employ a 3-month smooth instead of, for example, the 5-month smooth employed on the Nino 3.4 region index.
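The trade-off between a 3-month and a 5-month smooth can be seen with a simple centered moving average. A minimal Python sketch, assuming a plain equal-weight (boxcar) window, which may differ from the smoothing actually applied to the published indices, shows how a one-month swing in a made-up series is damped more by the wider window:

```python
def centered_moving_average(values, window):
    """Centered moving average with an odd window length.

    The first and last window // 2 positions are left as None,
    because a full window is not available there.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    out = [None] * len(values)
    for i in range(half, len(values) - half):
        out[i] = sum(values[i - half:i + half + 1]) / window
    return out


# Hypothetical monthly index values with a one-month swing at position 4.
series = [0.0, 0.0, 0.0, 0.0, 1.5, 0.0, 0.0, 0.0, 0.0]

smooth3 = centered_moving_average(series, 3)
smooth5 = centered_moving_average(series, 5)
print(smooth3[4], smooth5[4])  # peak height after each smooth
```

A 3-month window keeps a third of the spike’s height and a 5-month window only a fifth, so any explanatory power carried by short swings fades as the window widens.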

We should draw some conclusions, however, as a result of this thread.

1. Temperatures can be adjusted for the ENSO, the AMO and the southern Atlantic natural variations.

2. Temperatures should, in fact, be adjusted for these variations (I mean when you are analyzing them, not the actual temperatures as there has been too much “adjustment” in the record already). These variations are just masking the real global warming signal underneath and some incorrect conclusions have been drawn because of that. This lack of recognition about natural variation has caused some to draw incorrect conclusions about temperatures in the 1980s and 1990s and also contributed to the global cooling scare of the 1970s. The downswing in temperatures in the 50s, 60s and up until 1975 was really just caused by the downswing in the AMO. This reconstruction says the underlying warming trend continued throughout this period.

3. Actual global warming to date has been much, much less than the theory originally predicted. The actual track we are on now would produce warming of just 1.3C to 1.6C per doubling (I haven’t confirmed that final number yet.)

4. The latest proposition from the theory says that the deep oceans are absorbing some of the increase expected and we will still get to the 3.0C or 3.25C per doubling number, it will just take longer. I agree there has been warming of the deep oceans and I have no reason to say the theory is not correct BUT …

5. The analysis says if there is not an uptick in temperatures in the next 5 years or so, we will have moved so far off the global warming trend expected that it will, in fact, likely take well over 100 years to get there (I’m going out on a limb here and saying that my trendline indicates 500 years.)

6. The global warming researchers need to be clear about the actual trendline for temperatures that they expect now. We deserve to know. (I think they have projected a timeline but they do not want to be clear at this time since it may create less urgency in the issue.)

7. We need to create a new Southern Atlantic Multidecadal Oscillation. It has as big an impact on temperatures as the AMO (in my newest analysis), and it does a very good job of explaining the unusual southern hemisphere temperature trends. The original AMO was discovered by accident when someone noted that these north Atlantic sea surface temperatures were correlated with rainfall events in far-off places, even Brazil.

8. We need to reduce the smoothing in these indices since explanatory information is being lost.
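Conclusions 1 and 3 rest on a least squares fit. A minimal Python sketch of that general kind of adjustment, assuming numpy and entirely synthetic data, regresses a temperature series on stand-in ENSO and AMO indices plus log2(CO2/280), so that the CO2 coefficient reads directly as degrees per doubling. The predictor set, the 280 ppm reference and every coefficient below are assumptions for illustration; this is not Bill’s actual model or data.

```python
import numpy as np

# Synthetic inputs only; NOT real ENSO, AMO, CO2 or temperature data.
rng = np.random.default_rng(0)
n_months = 360
enso = rng.normal(0.0, 1.0, n_months)      # stand-in for a Nino 3.4 anomaly index
amo = rng.normal(0.0, 0.2, n_months)       # stand-in for an AMO index
co2 = np.linspace(320.0, 385.0, n_months)  # stand-in CO2 concentration, ppm
co2_doublings = np.log2(co2 / 280.0)       # log2 so the slope is "deg C per doubling"

# Build a temperature series from invented coefficients plus small noise:
# ENSO, AMO, per-doubling sensitivity, intercept.
true_coefs = np.array([0.07, 0.5, 1.5, -0.4])
X = np.column_stack([enso, amo, co2_doublings, np.ones(n_months)])
temps = X @ true_coefs + rng.normal(0.0, 0.05, n_months)

# Ordinary least squares fit of temperature on the predictors plus an intercept.
coefs, *_ = np.linalg.lstsq(X, temps, rcond=None)
enso_coef, amo_coef, per_doubling, intercept = coefs

# "Adjusted" series: subtract only the fitted ENSO and AMO contributions.
adjusted = temps - enso_coef * enso - amo_coef * amo

print(f"fitted ENSO coefficient: {enso_coef:.3f}")
print(f"fitted AMO coefficient:  {amo_coef:.3f}")
print(f"fitted sensitivity:      {per_doubling:.2f} C per doubling")
```

Because the CO2 term enters as the log2 of a concentration ratio, the choice of reference concentration only shifts the intercept; the per-doubling coefficient is unaffected.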

Once again, thanks to everyone who participated and special thanks to Anthony.

Jeff Alberts: “We can see this with weather models that CONSTANTLY get things wrong even just the next day.”

Yes, but weather is not climate. Using the analogy of a boiling pot of water, a weather forecast is like attempting to predict where the next bubble is going to rise, whereas a climate statement would be that the average temperature of the boiling water is 100 deg C. So we can make accurate statements about a total situation without necessarily knowing what is happening at the local level.

“As someone else said, even a broken clock is right twice a day.”

The Keenlyside forecasts were for the ‘next decade’, i.e. 2005-2015, so most of that period is in the future, but sceptics were happy to accept these forecasts without much by way of critical analysis.

“But without practically perfect knowledge of the complex chaotic system being modelled, any results which replicate reality are most likely accidental.”

The models don’t attempt to “replicate reality” so much as to understand the interactions between the various climate factors. Over time, the models have improved, and as my boiling water analogy shows, it is not necessary to possess perfect knowledge of all the features of a climate system to understand the main features. And importantly, there are a number of modellers, using different strategies, arriving at generally similar results.

Richard Courtney: “It is extremely improbable that – within the foreseeable future – the climate models could be developed to a state whereby they could provide reliable predictions of global climate over any time scale.”

Depends what you mean by “reliable predictions”. Besides the warming of the troposphere mentioned previously, climate models have also predicted features such as cooling of the stratosphere and amplification of warming at the poles, both of which have occurred.

“…nobody claims to be able to construct a reliable predictive model of the human brain.”

No, but we can make reasonably reliable predictions about human behaviour. We can predict that through their lifespan people in developed countries will most likely attend school, change into surly teenagers, rebel a bit, get a job, get hitched, drop a sprog or two, gain some assets etc.

We can also predict individual behaviour if we know someone well enough. The assumption you are making is that climate is random to the point where anything at all could happen. That’s a pre-scientific point of view. The climate might be chaotic, but it’s chaotic within limits.

“And that IPCC report included the ‘Hockey Stick’ 8 times, but the most recent IPCC report dropped it and made no mention of it.”

Chapter 6 of the Working Group I report, The Physical Science Basis, has an extensive discussion of the “hockey stick” reconstruction of recent temperatures and shows a hockey-stick-type graph on p. 467.

“The data were not “shown to be faulty”. And if they had been “shown to be faulty” then that could not mean “This boosts our confidence in the models” because scientists always place observation of reality before any model of assumed reality.”

The models predicted a certain outcome. The initial data failed to support this outcome. Faults were discovered with the data, and the corrected data more closely matched the models. Therefore, it follows that this justification of the models’ outputs can increase our confidence in the efficacy of the models.

Furthermore, we have a case of both “adequate emulation” and “reliable prediction”, your two requirements for validating trust in the models.

Bill Illis:

Thank you for your excellent summary.

But your summary does not say if you intend to publish. I again say that your work deserves publication and is much too important to remain outside the ‘mainstream’ literature.

And I again thank you for the insights your work has provided.

Richard

George,

“So the IR radiation emitted from the ocean is a property of the surface layer only, and that would normally be the warmest water.

Anything the returned IR from the atmosphere does to the surface, couldn’t affect the incoming solar radiation in any meaningful way. It is going to be largely returned to the surface, but it isn’t going to generate any significant amount of prompt evaporation, like the IR does.”

Actually, the surface of the ocean emits more energy flux upward than the 324 W/m^2 it receives, on average, from downwelling atmospheric IR.

It emits 390 W/m^2 as upward IR, 78 W/m^2 by evaporation and 24 W/m^2 by convection, for a total of 492 W/m^2. As a result, the surface of the ocean is on average cooler than the bulk below it. The difference in flux is supplied by the bulk, which sends upward toward the surface the amount it receives from the sun, 168 W/m^2.

If the 324 W/m^2 of downwelling radiation were not absorbed by the ocean surface, the bulk would have to supply more than 168 W/m^2 and the system would get cooler.
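The bookkeeping in these paragraphs can be checked directly. A short Python sketch using only the annual-average figures quoted in this comment (W/m^2) reproduces the 492 W/m^2 total and the 168 W/m^2 residual, and shows what the bulk would have to supply in the hypothetical case where the back radiation is not absorbed:

```python
# Annual-average surface fluxes in W/m^2, as quoted in the comment.
upward_ir = 390.0       # surface infrared emission
evaporation = 78.0      # latent heat flux
convection = 24.0       # sensible heat flux
downwelling_ir = 324.0  # back radiation absorbed at the surface

# Total energy leaving the surface.
surface_loss = upward_ir + evaporation + convection

# Net amount the bulk must deliver when the back radiation is absorbed.
needed_from_bulk = surface_loss - downwelling_ir

# Hypothetical case: the same losses with no back radiation absorbed.
needed_without_back_radiation = surface_loss

print(f"total surface loss:     {surface_loss:.0f} W/m^2")
print(f"supplied by the bulk:   {needed_from_bulk:.0f} W/m^2")
print(f"without back radiation: {needed_without_back_radiation:.0f} W/m^2")
```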

Your argument that the downwelling radiation can’t affect anything is all wet.