Guest Post by Willis Eschenbach

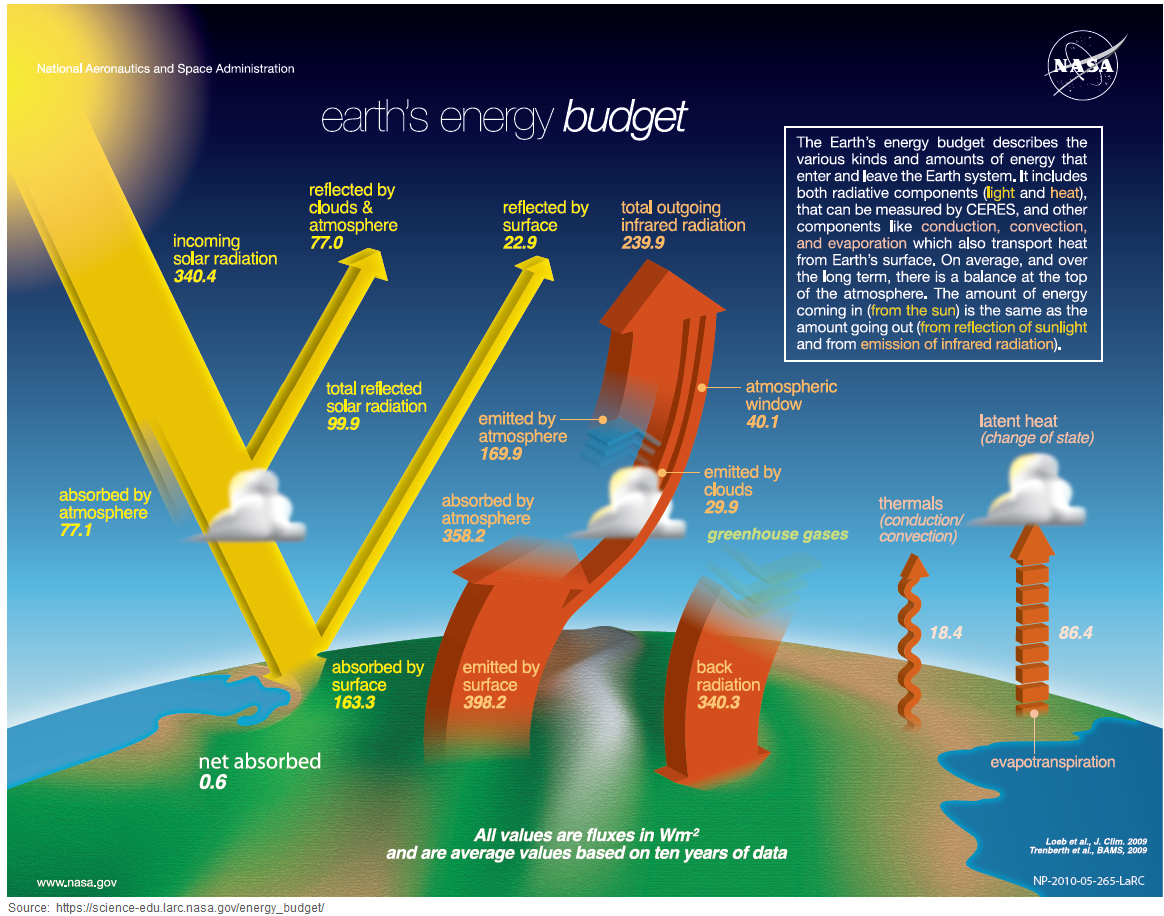

Let me invite you to wander with me through some research I’ve been doing. I got to thinking about the surface radiation balance. The earth’s surface absorbs radiation in the form of sunlight plus thermal radiation from the atmosphere. On a global 24/7 basis, the surface absorbs a “downwelling” flow of about half a kilowatt of radiative energy per square meter.

I investigate science in pictures. The raw numbers mean little to me. So I made a global map of variations in the absorption of total energy. Figure 1 shows that result.

As you might imagine, the most absorption is in the tropics. The least is up on the Antarctic Plateau. And you can see the light line above the equator in the Pacific. That’s the location of the ITCZ, the “intertropical convergence zone” where the atmospheric circulations of the northern and southern hemisphere meet. It’s an area that gets thunderstorms most days, which reflect lots of sunlight back out to space.

But that’s not what first caught my eye …

I often start researching my way down one road and then I get sidetractored into a different path … in this case I eventually noticed an oddity of that graphic. Most curiously, the total energy absorbed by the surface in the two hemispheres is exactly the same …

… moments like this are when I’m happy that I’m an independent unfunded researcher, free to follow my monkey mind. So now, as I’m in the process of writing this up, I want to know if this exact equality between the total downwelling surface radiation in the northern and southern hemispheres is a coincidence, or whether it is stable over time. So hang on while I go take the annual averages … OK, Figure 2 shows the annual surface radiation absorption by hemisphere.

Now, this is most fascinating … despite the fact that the southern hemisphere has 30% more ocean than the northern, despite different cloud patterns and weather formations, despite one pole being a seasonally frozen ocean and the other a 5,000 foot (1,500 m) ice-covered stony plateau, despite the annual average amount absorbed in different places ranging from 120 to 670 W/m2, despite the hugely changing absorption due to the seasons, despite all of that, every single year the amount of energy absorbed by the surface is split evenly, to within half a percent, between the two hemispheres. Cries out for an explanation …
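For readers who want to try a hemispheric comparison like this themselves, here is a minimal sketch. It is not the code behind Figure 2. It assumes a regular 1° grid, weights each gridcell by the cosine of its latitude (an approximation to gridcell area), and runs on a made-up symmetric field rather than CERES data. The function names are hypothetical.

```python
import math

def hemisphere_means(field, lats):
    """Area-weighted mean of a lat-by-lon field for each hemisphere.

    field: list of rows, one row per latitude band.
    lats:  gridcell-center latitudes in degrees, one per row.
    """
    sums = {"north": 0.0, "south": 0.0}
    weights = {"north": 0.0, "south": 0.0}
    for row, lat in zip(field, lats):
        w = math.cos(math.radians(lat))  # gridcells shrink toward the poles
        key = "north" if lat > 0 else "south"
        for value in row:
            sums[key] += w * value
            weights[key] += w
    return {k: sums[k] / weights[k] for k in sums}

def percent_difference(a, b):
    """Difference between two values as a percentage of their mean."""
    return 100.0 * abs(a - b) / ((a + b) / 2.0)

# Synthetic field in W/m2 (NOT CERES data): high in the tropics, low at the poles.
lats = [-89.5 + i for i in range(180)]
lons = [0.5 + j for j in range(360)]
field = [
    [300.0 + 350.0 * math.cos(math.radians(lat)) ** 2
     + 5.0 * math.sin(math.radians(lon)) for lon in lons]
    for lat in lats
]

means = hemisphere_means(field, lats)
split = percent_difference(means["north"], means["south"])
print(means, split)
```

Because the synthetic field is symmetric about the equator, the two means agree to within rounding. With real data, the same two functions would give the hemispheric split for each year.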

… my guess is that it’s the result of thermoregulatory emergent phenomena, but that’s a topic for another time. See, this is what my research is like. No straight lines. I prefer to wander side-trails I’ve never trodden. So I’m as surprised as you are that the absorption is almost exactly 50/50 north and south and that that is so stable … but I digress, that’s a topic for another day.

To return to the theme of the post, at the same time that the surface is absorbing half a kilowatt per square meter of downwelling radiation, the earth (like all solid objects and most gases) is also constantly emitting a certain amount of thermal “upwelling” longwave radiation. The amount that gets radiated is a function of temperature. So you can calculate the temperature from the amount of emitted radiation using something called the Stefan-Boltzmann equation. It’s how IR stand-off thermometers work.
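As a rough illustration of that conversion, here is a small sketch of the Stefan-Boltzmann relation, flux = emissivity × σ × T⁴, solved for temperature. The emissivity of 1.0 is an assumption for illustration only, and the function names are mine.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def temperature_from_emission(flux_wm2, emissivity=1.0):
    """Temperature in kelvin of a surface emitting flux_wm2 (W/m2)."""
    return (flux_wm2 / (emissivity * SIGMA)) ** 0.25

def emission_from_temperature(temp_k, emissivity=1.0):
    """Emitted flux in W/m2 of a surface at temp_k (kelvin)."""
    return emissivity * SIGMA * temp_k ** 4

# About 400 W/m2 of upwelling radiation corresponds to roughly 290 K
# when the emissivity is taken as 1.0 (an assumption).
temp = temperature_from_emission(400.0)
print(round(temp, 1))
```

A lower assumed emissivity gives a higher temperature for the same emitted flux, which is why the emissivity choice matters when converting in either direction.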

The amount of radiation that is emitted is almost always smaller than the amount absorbed, because:

• some energy is lost from the surface as “sensible” (feel-able) energy

• some energy is lost to evaporation as “latent” energy in the form of water vapor, which releases energy when it condenses.

• some energy is “advected”, meaning moved horizontally from one point to another.

Figure 3 shows another global map, this time of upwelling thermal energy emitted from the surface.

So in rough terms, the surface receives 500 W/m2 of downwelling radiation, and only emits about 80% of that, 400 W/m2, in the form of upwelling radiation. The rest leaves the surface as sensible and latent heat.

From this, an interesting question arises—if the absorbed radiation goes up or down by one W/m2, how much does the emitted radiation change? Naively we might assume that since the amount emitted is 80% of the amount absorbed, for each additional watt per square meter of absorbed radiation, we would expect an increase in emitted radiation of 0.8 W/m2 … but does it work like that?

There are several ways that we can look at this question. Let me start with a gridcell by gridcell time series analysis. Here are the 1° latitude by 1° longitude gridcell short-term (monthly) trends in 21 years of records of upwelling vs. downwelling radiation at the surface. This is looking at monthly changes in both variables after the removal of seasonal variations. Let me call this the “Monthly Analysis”.
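For a single gridcell, an analysis of this kind might be sketched as follows. This is an assumption-laden illustration, not the code used for Figure 4: it removes the seasonal cycle by subtracting each calendar month's long-term mean (one common choice, and the text does not say which method was used), then takes the ordinary least-squares slope of emitted against absorbed anomalies. The series are synthetic, and the function names are hypothetical.

```python
import math
import random

def remove_seasonal_cycle(series):
    """Subtract each calendar month's mean from a monthly series starting in January."""
    monthly_means = [sum(series[m::12]) / len(series[m::12]) for m in range(12)]
    return [value - monthly_means[i % 12] for i, value in enumerate(series)]

def ols_slope(x, y):
    """Ordinary least-squares slope of y regressed on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sxx

# Synthetic 21-year monthly record for one gridcell (NOT CERES data).
# The emitted series is built to respond to 0.25 of each absorbed anomaly.
rng = random.Random(0)
months = 21 * 12
noise = [rng.gauss(0.0, 5.0) for _ in range(months)]
absorbed = [500.0 + 60.0 * math.sin(2 * math.pi * i / 12) + noise[i] for i in range(months)]
emitted = [400.0 + 30.0 * math.sin(2 * math.pi * i / 12) + 0.25 * noise[i] for i in range(months)]

slope = ols_slope(remove_seasonal_cycle(absorbed), remove_seasonal_cycle(emitted))
print(round(slope, 3))
```

Without the seasonal removal step, the regression would mostly measure how the two seasonal cycles line up rather than the month-to-month response.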

The most unexpected part of Figure 4 is that unlike the rest of the world, in the warmest areas of the ocean, when radiation absorbed at the surface goes up … emitted surface radiation goes down.

This is quite odd. If we take say a block of steel at steady-state, the more radiation it is absorbing, the more radiation it is emitting. And as we would expect, over most of the earth’s surface, and over all of the land, the absorbed radiation controls the emitted radiation. As is true for a block of steel, the more radiative energy that is absorbed, the more energy is radiated away. It’s just “Simple physics”, as mainstream climate scientists like to say.

But in the blue zones in Figure 4, the reverse is true—above a certain threshold, the more radiative energy that is absorbed, the less is radiated. “Complex physics”, as I like to say …

I say that this is because of the combined action of tropical cumulus fields and thunderstorms. These cool and remove energy from the surface in a host of ways, and in their wake leave lowered temperatures and less energy to be radiated. Here’s a movie about how thunderstorms chase the hot spots.

Thunderstorms are unique in that they are not simple feedback—particularly over the ocean they are able to drive surface temperatures down to below the thunderstorm initiation temperature. This accounts for the fact that as absorbed surface radiation increases in those tropical areas, the amount of radiation emitted decreases … but again I digress …

The reality is clear from Figure 4. We are looking at a very complex system. There is no one single simple linear relationship between emitted radiation and absorbed radiation. Depending on the location and the nature of the surface (land vs. ocean vs. ice vs. …), it ranges from an additional 0.8 W/m2 of surface emissions per one additional W/m2 absorbed, down to a decrease of 0.2 W/m2 of emissions per one W/m2 of additional absorption. Not only are the responses not of even approximately the same size, they’re not even of the same sign.

As Figure 4 shows, the short-term area-weighted global average trend is only an increase of about a quarter of an additional watt per square meter of emitted radiation for each one W/m2 of additional radiation absorbed.

The various areas do act as we might expect. For example, there’s a greater response in land radiation emitted than in the ocean, due to the ocean’s temperature changing more slowly because of its greater thermal mass. And the tropics show the least change from a one W/m2 change in absorbed radiation.

But Figure 4 only shows short-term, month-by-month changes. And because it takes time for earth and ocean to heat up and cool down, these short-term changes will be smaller than the long-term changes. For longer-term changes, we have to look elsewhere.

My insight on this was that we have some 64,800 gridcells in each of the maps above. Over the centuries, they’ve settled into a slow-changing steady state. Each of these has a long-term average absorption of total radiation, and a long-term average emission of thermal radiation. After many, many years, these absorption and radiation levels have incorporated and encompass all feedbacks and delayed responses. We know this because the average of the first half of the CERES data is almost indistinguishable from the average of the full dataset. Figure 1a below shows the same analysis as Figure 1, except for the first half of the data.

So … consider several adjacent gridcells in mid-Pacific or somewhere. Each one has slightly different long-term averages of absorption and emission of radiation. This lets us know what we can expect to happen in that area of the world if the absorption changes by say a watt per square meter. We can know that because in some adjacent gridcell, this is actually happening.

For Figure 5, for every individual gridcell, I looked at a 5° latitude by 5° longitude square area, with the individual gridcell located at the center. Utilizing two of these 5×5 patches of cells, one patch showing emission and one showing absorption, I used standard linear regression to calculate the local change in emission from a one W/m2 increase in absorbed radiation for that small area. Since this is using local data from adjacent gridcells, let me call this the “Local Analysis”.

Figure 5. Local Analysis. Two views (Pacific and Atlantic centered) of a different way to measure the longer-term change in surface upwelling radiation as a function of the absorbed radiation.
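The 5×5 patch regression described above can be sketched in a few lines. This is an illustration under stated assumptions, not the code behind Figure 5: the grids are small synthetic arrays, longitude is assumed to wrap around, patches are simply clipped at the poles, and the function names are hypothetical.

```python
def ols_slope(x, y):
    """Ordinary least-squares slope of y regressed on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sxx

def local_slope(absorbed, emitted, i, j, half_width=2):
    """Slope of emitted vs. absorbed over the patch centered on gridcell (i, j).

    half_width=2 gives a 5x5 patch. Rows are latitude, columns are longitude.
    """
    nlat, nlon = len(absorbed), len(absorbed[0])
    xs, ys = [], []
    for ii in range(max(0, i - half_width), min(nlat, i + half_width + 1)):
        for dj in range(-half_width, half_width + 1):
            jj = (j + dj) % nlon  # longitude wraps around the globe
            xs.append(absorbed[ii][jj])
            ys.append(emitted[ii][jj])
    return ols_slope(xs, ys)

# Synthetic long-term averages (NOT CERES data), built so that emission
# rises by 0.4 W/m2 for each additional W/m2 absorbed.
nlat, nlon = 20, 40
absorbed = [[300.0 + 3.0 * i + 0.5 * j for j in range(nlon)] for i in range(nlat)]
emitted = [[150.0 + 0.4 * a for a in row] for row in absorbed]

center = local_slope(absorbed, emitted, 10, 20)
corner = local_slope(absorbed, emitted, 0, 0)
print(round(center, 3), round(corner, 3))
```

Looping `local_slope` over every gridcell would give a map of local slopes, one value per cell.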

There are some interesting things about Figure 5. First, overall, as we’d expect, over the longer term the surface responds more to absorbed radiation than is shown in Figure 4, which is looking at month-to-month variations. In Figure 5 it averages about 0.4 watts emitted per watt absorbed, almost double the value shown in Figure 4.

However, the areas of negative correlation (blue areas) are all in the same location, in the tropical ocean above and below the equator. And the ocean still is changing less than the land, and the tropics is still changing the least.

Moving forwards, there’s a third and separate way to calculate the long-term response of the surface to absorbed radiation. This depends on a scatterplot of the amount emitted by the surface as a function of the amount absorbed, as shown in Figures 7 to 9.

To begin with, Figure 7 shows the scatterplot for the entire globe. Note that this uses exactly the same data as in the two previous analyses, the “monthly” and “local” analyses. In all cases, I’m using the CERES 21-year gridcell-by-gridcell average values for the surface radiation absorbed and emitted.

Now, the general trend that we’ve been looking at in the two analyses above, the change in emission for a one watt per square meter increase in absorbed radiation, is given by the slope of the yellow line above. And it shows something quite curious …

Most of the data shows a pretty linear relationship between absorption and emission. From ~ 100 W/m2 to ~ 275 W/m2 of absorption, it’s pretty much a straight line. And the same is true, although with a somewhat lesser slope, from ~ 275 W/m2 to ~ 600 W/m2 of absorption.

But once the average absorption (longwave plus shortwave) goes above ~ 600 W/m2, there is no further increase in emission. In other words, all of the additional incoming energy simply is lost as sensible, latent, and advected heat, and the emission doesn’t increase … and of course, “no increase in emission” means no increase in temperature.

Remembering that in the monthly and local analyses above, negative trends almost entirely occurred over the ocean, I split the data into land and ocean gridcells and looked at the two responses. Figure 8 shows the response over the land.

Now, this is much more what one would expect to find. As in the aforementioned steel block, as absorption increases, emission increases. Everywhere we look on the land, when absorption goes up, emission (and thus temperature) goes up. “Simple physics”.

But this is just the land … what about the ocean?

Here we can see what we saw in Figure 4—there are areas of the ocean where, when the absorbed radiation increases, the emitted radiation decreases … which also means that the temperature is decreasing.

How much does this affect the trend worldwide? We can use the data above to get the trends for each gridcell with a given amount of absorbed radiation. Figure 10 shows that result. Let me call it the “Global Analysis”. Of course, since it is looking at global averages it doesn’t have the fine detail of the other methods.
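One plausible way to turn a scatterplot into a trend for each level of absorbed radiation is to bin the gridcells by absorption, average each bin, and take the slope from one bin to the next. The text does not spell out the exact method, so the sketch below is an assumption, with hypothetical function names and a 25 W/m2 bin width chosen arbitrarily. The synthetic data are made up to mimic a response that flattens above ~ 600 W/m2.

```python
def binned_curve(absorbed, emitted, bin_width=25.0):
    """Mean absorbed and mean emitted for each bin of absorbed radiation."""
    bins = {}
    for a, e in zip(absorbed, emitted):
        key = int(a // bin_width)
        sum_a, sum_e, count = bins.get(key, (0.0, 0.0, 0))
        bins[key] = (sum_a + a, sum_e + e, count + 1)
    return [(sa / n, se / n) for _, (sa, se, n) in sorted(bins.items())]

def slopes_along_curve(curve):
    """Slope between consecutive bin means, reported at the midpoint."""
    return [
        ((x0 + x1) / 2.0, (y1 - y0) / (x1 - x0))
        for (x0, y0), (x1, y1) in zip(curve, curve[1:])
    ]

# Synthetic gridcell averages in W/m2 (NOT CERES data): emission rises
# with absorption up to 600 W/m2 absorbed, then stops rising.
absorbed = [float(a) for a in range(100, 700)]
emitted = [min(0.8 * a, 480.0) for a in absorbed]

slopes = slopes_along_curve(binned_curve(absorbed, emitted))
for midpoint, slope in slopes:
    print(round(midpoint), round(slope, 2))
```

Each gridcell can then be assigned the slope of the bin its absorbed radiation falls in, which is why a method like this follows absorbed radiation rather than location.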

But it does give the same general pattern, with land emissions all increasing with increased absorption, and with large areas with tropical Pacific emissions moving in the opposite direction from the absorption.

So we have three different estimates of the changes in surface emission resulting from a 1 W/m2 increase in surface absorption. The first one, the “Monthly Analysis”, is short-term so it doesn’t include any feedbacks or slow changes. Thus it gives smaller results than the other two methods.

The other two include all of those slow changes, because they are based on two-decade averages showing the long-term steady-state conditions. Here is a comparison of the three methods.

As you can see, because it’s showing short-term variations, the monthly analysis (blue) gives smaller answers across the board. However, it is closer regarding the land trend, because the land changes temperature faster. The other two methods are long-term and are in reasonable agreement. I would say that the local analysis method is the more accurate of the two longer-term methods. It is location-specific as opposed to absorbed radiation-specific, and so it captures finer detail.

Steady-State

“Steady-state” describes a condition where variables don’t change much. For example, over the entire 20th century the globe warmed by something less than one kelvin. The average temperature of the planet is about 288 kelvin. So over the 20th century, the total change in temperature was about a third of one percent. This is steady-state, where on all levels energy absorbed is generally equal to energy out.

Suppose we took a cold world, dropped it into orbit around a nice warm sun, and watched what happened.

It would warm up … but it won’t warm up forever. The warmer it gets, the more loss from the surface occurs in the form of sensible, latent, and advected heat. Eventually, it will hit a balance point where the surface will neither heat nor cool appreciably.

For the earth, this occurs at the point where on average, for every watt per square meter absorbed by the earth’s surface, about 0.8 watts are emitted.

However, and this is the important point, for excursions around the steady-state, the surface emits much less radiation for every watt per square meter absorbed. After the effect of all short- and long-term losses and feedbacks, the surface only emits an additional ~ 0.4 watt/m2 for every additional watt/m2 absorbed.
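The difference between the average ratio (~ 0.8) and the marginal response (~ 0.4) is easy to miss, so here is a tiny worked example. It assumes, purely for illustration, that emission is a straight line near the steady state, passing through 500 W/m2 absorbed and 400 W/m2 emitted with a slope of 0.4. The function name is hypothetical.

```python
def emitted_near_steady_state(absorbed, slope=0.4, ref_absorbed=500.0, ref_emitted=400.0):
    """Emitted radiation (W/m2) along an assumed straight line through the steady state."""
    return ref_emitted + slope * (absorbed - ref_absorbed)

# Average ratio at the steady state: total emitted divided by total absorbed.
average_ratio = emitted_near_steady_state(500.0) / 500.0

# Marginal response: extra emission from one extra W/m2 absorbed.
marginal = emitted_near_steady_state(501.0) - emitted_near_steady_state(500.0)

print(average_ratio, round(marginal, 3))
```

The two numbers differ because a line with a slope of 0.4 through that point does not pass through the origin, so the ratio of totals says little about the response to a small change.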

Discussion

Why is all of this important? It’s the lowest-level, simplest, and most straightforward part of a larger question. That question relates to the central paradigm of mainstream climate science, which says that the change in temperature (∆T) is a linear function of the change in top-of-atmosphere (TOA) downwelling radiation (“radiative forcing”, ∆F). Mathematically, this is expressed as:

∆T (change in temperature) = lambda (“climate sensitivity” constant) times ∆Ftoa (change in downwelling radiation at top-of-atmosphere), or

∆T = λ ∆Ftoa

Me, I think this equation is fatally flawed, in part for the reason visible in the graphs above—even within just the surface itself, there is no constant lambda “λ” that relates radiation emitted (a measure of temperature) to radiation absorbed. Instead, it varies widely by location and surface type, in both the short- and long-term trends.

In fact, to the exact contrary of the idea of a linear relationship, in large parts of the tropical ocean when absorbed radiation goes up, emitted surface radiation (which is to say surface temperature) goes down … the climate, my friends, she is very complex, and not “simple physics” in the slightest.

More energy is absorbed by an object and in response, it cools down? Say what? “Complex physics” at its very finest.

My best to all,

w.

No one who understood the GHE would ever say this. Rather downwelling radiation IS a function of temperature!!!

That is aside from the question of which surface emissivity is assumed in Fig. 3. Surely something close to 1, rather than a more accurate 0.91.

https://greenhousedefect.com/what-is-the-surface-emissivity-of-earth

All this “data” is rigged because none of it is actual measured data.

Firstly, how do you measure “surface emissions” from space when you have an entire ficking climate system getting in the way?

I always enjoy Willis’ digging and speculation but when the initial “data” is already a derivative dataset based on a metric tonne of models and assumptions, I don’t see the point of even starting until you have taken apart how the surface data is constructed.

GIGO.

That is the merit of my work. While satellites are indeed pretty useless in this regard, there are other sources we can use. And I think it is embarrassing to say, in effect, that because the satellite does not tell us, we are free to guess. That is not a legitimate approach in science.

The satellite sensors DO only see the energy that falls upon them up there above TOA. So the upwelling and downwelling numbers are back-calculated. The “team” thinks they are accurate to about 0.6 W/m^2….possibly a highly optimistic number, plus they continuously modify the calculated output so that what they think should balance out to zero…is “zeroed”.

https://mdpi-res.com/d_attachment/remotesensing/remotesensing-12-01280/article_deploy/remotesensing-12-01280.pdf

Well, you may be right but, then the analysis shows, at the very least, that the formula created from that data set is flawed. If you can show that the data as presented and represented by the climate science community does NOT support this: ∆T = λ ∆Ftoa

then you have done something that is a very big deal.

Good point Daniel. It’s a shame Willis did not present his argument in that way.

[spam comment removed- Anthony]

Mods

I think that the “Matty” comment should be removed as spam. Lately, SciTechDaily has been dealing with some enterprising idiot that actually appears to be peddling malicious SW.

So Matty, does your online pimp job make your Mom proud?

E. Schaffer, I think you misread “TOA downwelling radiation” as “downwelling longwave IR from gases in the atmosphere.”

“Downwelling radiation” at the surface is composed of incoming solar radiation, radiation emitted from radiatively active gases within the atmosphere, and a bit of reflected radiation (from clouds, dust, etc.). “Downwelling radiation at TOA” is just a funny way of saying solar insolation.

I think what Willis meant to say was that it is assumed by mainstream climate scientists that surface temperature is an approximately linear function of irradiance at the surface, which consists of solar irradiance, downwelling LW IR from radiatively active gases, and radiation emitted & reflected from clouds & dust, and that those latter sources of surface radiation are often specified in terms of the change in TOA solar insolation which would have an equivalent effect on surface temperature.

Is that about right, Willis?

The “approximately linear” aspect is not because Stefan-Boltzmann response / Planck Feedback is linear, but because almost any curve looks approximately linear if you examine a small enough section of it.

The CMIP 5 models mostly assume that an average global temperature increase of 1°C would increase radiant heat loss from the surface of the Earth by 3.1 to 3.3 W/m².

One small complication is that ≈22.6% of incoming solar radiation is reflected back into space, without either reaching the surface or being absorbed in the atmosphere.

Right, I have to make this concession. “Back radiation” is a function of temperature, not “downwelling radiation” as a whole, including solar radiation. Yet the point I am making is on the common fallacy of assuming “back radiation” could cause any GHE. As it is a function of temperature, it will not explain why a certain temperature / “back radiation” relation does exist in the first place.

Which is another fallacy. If emissions TOA are 240W/m2 and surface temperature is 288K, then (289/288)^4 x 240 – 240 = 3.35, and “Lambda” then is 1/3.35 = 0.3. The problem here is, that “climate science” wants to squeeze out as much warming by any forcing as possible and thus tries to maximize Lambda.

The above approach is obviously wrong, as emissions TOA do not depend on surface temperature, but on the emission temperature. Rather it should be (256/255)^4 x 240 – 240 = 3.79, and Lambda = 0.264. Accordingly a range of 3.1 to 3.3W/m2 for 1K is fudge factor producing some 15-20% more warming in the end. And it is one of many fudge factors.

In reality 2xCO2, allowing for overlaps and real surface emissivity, will only produce a forcing of 2W/m2 (NOT 3.7), translating into 0.53K of warming.
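The arithmetic in the comment above can be reproduced in a few lines. This only checks the numbers as stated. It assumes, as the comment does, that outgoing flux scales with the fourth power of a single reference temperature, and it takes the commenter's 2 W/m2 forcing at face value. The function name is hypothetical.

```python
def flux_change_per_kelvin(base_temp_k, base_flux_wm2=240.0):
    """Change in flux (W/m2) for a 1 K rise, scaling the base flux by T^4."""
    return base_flux_wm2 * (((base_temp_k + 1.0) / base_temp_k) ** 4 - 1.0)

surface_based = flux_change_per_kelvin(288.0)   # referenced to surface temperature
emission_based = flux_change_per_kelvin(255.0)  # referenced to emission temperature

lambda_surface = 1.0 / surface_based    # K per W/m2
lambda_emission = 1.0 / emission_based  # K per W/m2

# Warming implied by the commenter's 2 W/m2 forcing and the second lambda.
warming = 2.0 * lambda_emission

print(round(surface_based, 2), round(lambda_surface, 2))
print(round(emission_based, 2), round(lambda_emission, 3))
print(round(warming, 2))
```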

1. As for the 3.7 to 3.8 vs. 3.1 to 3.3 W/m² figure for radiative emission increase from a 1°C temperature increase, to quote everyone’s favorite Facebook relationship status, “it’s complicated.” Here’s a paper about that:

https://web.mit.edu/~twcronin/www/document/Cronin2020_PlanckQJ.pdf

I don’t have enough confidence in my understanding to rule out either figure, which is why I included both in my Stefan-Boltzmann response / Planck Feedback discussion.

2. It is not a “fallacy” that so-called “back radiation” warms whatever absorbs it. I.e., it has a so-called “greenhouse effect.” (I say “so-called” because that’s not how actual greenhouses work, but it does cause warming.)

3. As for how much radiative forcing you get from a doubling of atmospheric CO2 concentration, estimates vary quite a bit. I have a couple of web pages about that topic. Here’s the shorter of them (and it also links to the longer):

https://sealevel.info/Radiative_Forcing_synopsis.html

Your estimate of 2 W/m² is possible, but it is near the low end of the confidence intervals for the lowest estimates. Myhre 1998 & the IPCC (TAR & later) estimate 3.7 ±0.4 W/m² per doubling of CO2. AR5 reports that RF estimates for a doubling of CO2 assumed in 23 CMIP5 GCMs vary from 2.6 to 4.3 W/m² per doubling. van Wijngaarden & Happer 2021 (preprint) (see also 2020) report calculated CO2’s ERF at the mesopause (similar to TOA) to be 2.97 W/m² per doubling. Feldman et al 2015 measured downwelling longwave IR “back radiation” from CO2, at ground level, under clear sky conditions, for a decade. They reported that a 22 ppmv (+5.953%) increase in atmospheric CO2 level resulted in a 0.2 ±0.06 W/m² increase in downwelling LW IR from CO2, which is +2.40 ±0.72 W/m² per doubling of CO2. However, ≈22.6% of incoming solar radiation is reflected back into space, without either reaching the surface or being absorbed in the atmosphere, so, adjusting for having measured at the surface, rather than TOA, gives ≈1.29 × (2.40 ±0.72) = 3.10 ±0.93 W/m² per doubling at TOA. (There are also a few more estimates from other sources on that web page.)
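For anyone who wants to check the scaling in the comment above, here is a short sketch. It assumes that forcing grows with the logarithm of CO2 concentration, which is what converting to a "per doubling" figure implies, and it uses only the figures quoted in the comment. The function name is hypothetical.

```python
import math

def forcing_per_doubling(delta_flux_wm2, fractional_increase):
    """Scale an observed flux change to a full doubling, assuming logarithmic forcing."""
    doublings = math.log2(1.0 + fractional_increase)
    return delta_flux_wm2 / doublings

# Figures quoted in the comment: +0.2 W/m2 for a 5.953% rise in CO2.
per_doubling_surface = forcing_per_doubling(0.2, 0.05953)

# Surface-to-TOA adjustment for the ~22.6% of sunlight reflected to space.
toa_factor = 1.0 / (1.0 - 0.226)
per_doubling_toa = per_doubling_surface * toa_factor

print(round(per_doubling_surface, 2), round(toa_factor, 2), round(per_doubling_toa, 2))
```

The ± ranges scale by the same factors, so they are left out of the sketch.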

1. Certainly one can make it complicated, by enhancing the base assumptions. Yet that will not compensate for erroneous base assumptions. Cess et al 1990:

So that would describe the origin, and it is wrong. It is well possible, given the mistake, that some arbitrary “justifications” were introduced later on. But it goes in the wrong direction anyhow. Other than a constant lapse rate, we would expect more latent heat with any warming, reducing the lapse rate (think of the “hot spot”), so that surface temperature would go up less than the emission temperature.

2. No, definitely not. Again, “back radiation”, like any radiation, is a function of temperature, not vice versa. What causes the GHE is an elevated emission altitude combined with the atmospheric lapse rate. “Back radiation” has nothing to do with it. Rather, the belief in “back radiation” producing radiative energy without energy input is the equivalent of the belief in a perpetuum mobile.

3. That is why it is important to understand the circumstances for the respective estimates. I have named it all in the article below, and the table summarizes it nicely.

https://greenhousedefect.com/the-holy-grail-of-ecs/the-2xco2-forcing-disaster

OK, so the down-welling versus radiative energy balance is different for different areas of the earth, due to “complex physics”, or something similar. Now add in ocean and atmospheric currents, Milankovitch (and other similar) cycles, set the continents adrift, pop off a few volcanos, and try to model climate change. Not going to happen.

You dummy. It’s simple physics, Ron. CO2 is the control knob for all climates everywhere, especially the Global Average Climate**.

Ignore all that extraneous detail. Trust The Science. Just ask Greta.

–

–

**Me’n Diogenes have been out looking for someone who actually lives in the Global Average Climate. No luck so far, and Biden’s policies are making the search d@mned expensive with the rising price of oil. The search for “that guy” continues. Some average person has to be living in the average climate somewhere… on average. Maybe we should check out the average location on the Earth next, ya think?

Maybe you haven’t found the right lamp, the one that illuminates the face of an honest person.

Zeus knows you won’t find that honest face amongst certain government agencies, alarmists and democrats.

That will leave Diogenes bereft of hope that he could find that honest face.

I don’t think that Diogenes was married!

It’s clouds! Clouds are one thing that global circulation models do not derive from basic conditions, and have to be parameterized, I.e. made up to fit what the model is tuned to.

It is time to start humming that old Joni Mitchell song.

Cloud formation is up in the air.

I knew cows are responsible for greenhouse gases- but(t) Horses??

3 of those and we can end the drought in the Sahara.

Now you’ve got me humming “Big Yellow Taxi”.

What?!? I’m not getting that at all. I’m whistling “The Blonde In The Bleachers.”

It’s not just large scale phenomena. Both observation and inferred parameters are affected by limited events (e.g. blocking, confluence) on human and geological scales, in local and regional spaces.

You bring up an interesting point about human and geological scales, n.n.

On a geological scale, people live on mountains that were once at the bottom of a sea and wear mukluks where alligators used to swim.

In terms of human scale…

My grandfather was born in 1886 and started life in horse and buggy days (my great-grandfather was a wagon and carriage maker). My grandfather bought a 1910 Baker Electric, so was an early EV adopter. As roads improved and travel expanded, he switched to Ford ICEs (Model A), and then a 1953 Chevy, the last car he owned. He watched aviation from its infancy to men landing on the moon. His son, my dad, worked on the engineering of the lunar landing module. I think he may have flown once when a barnstormer came to town and gave rides for a dollar or so. I’m not sure of that. But air travel was fairly cheap and ubiquitous at the time he died.

But climate change during his lifetime… the human scale?

Eh… some winters were bitterly cold and others not so much. Some summers were cool and wet and others were hot and dry and crops were darned poor. He saw the heat of the ’30s and the floods of 1913 and 1939 as well as others.

That’s an important point about the difference in time scales, n.n.

When I was a kid, the snow was near waist high. Now, after a major, severe snowstorm it maybe, maybe comes up near to my knees. Climate change? Perhaps, or perhaps it’s the fact I’m no longer 3’2″, but I still remember trudging through the deep, deep snow back then.

The current period of glaciation began 400 years ago. 1585 was the last time that perihelion occurred before the austral summer solstice. Since then the North Atlantic has been getting more sunlight on average. Boreal summers are getting more sunlight but boreal winters slightly less.

Atmospheric water is peaking later in the year and that will continue for the next 12,000 years as perihelion moves closer to the boreal summer solstice.

The water recently flooding North America and Northern Europe will be peaking later in the year and dropping out faster ahead of cooler winters as snow. The snow will accumulate.

I appreciate where you’re coming from, Rick, but 400 years is longer than human scale and shorter than geological scale.

However, conditions 200, 400, 1,000, 10,000 and 100,000 years ago can perhaps inform us as to what we might expect, as best as we can figure out what the local weather and the various regional climate regimes were like at those times.

The point is that climate is changing and orbital mechanics play an important role. The seasons are obvious on an annual time frame and significant.

Orbit also has longer term predictable changes that humans should be aware of and already planning for. Within this millennium humans will be confronted with the next period of glaciation and falling sea levels – it began 400 years ago. That makes most of the existing port infrastructure across the globe unsuitable. This is not a trivial problem. And unlike CO2, it is real. There may be ways to arrest ice accumulation by large scale dosing to reduce reflectivity, but no process has been developed for that.

Cities at latitudes like Montreal will have much greater levels of snowfall than now being experienced; even New York. Snow clearing will require increasing effort. The Great Lakes could be iced up year round.

The last 4000 years in Earth’s history have been balmy with very low snowfall over the northern land masses – just 400 years past their lowest average level. That is now changing. There will be more water in the atmosphere over the North Atlantic ahead of cooler winters. Tropical storms WILL occur more frequently in the North Atlantic. Flooding will be more severe. These changes are barely perceptible now but the changes should be getting incorporated into infrastructure planning now.

As a specific example, the design of high-consequence dams is based on the probable maximum flood event, with time horizons beyond a 10,000-year average return interval. These are statistical values based on historical data collected over maybe 200 years. A real consequence could be the failure of the Hoover Dam.

Humans must adapt to climate change. There is little possibility of arresting these certain changes.

If mankind stops emitting ghgs, the ghe stops increasing. Doesn’t that arrest global temperatures rise?

Well, the mechanics are interesting, Rick, but I was commenting on n.n.’s point about humans and their perception of scale.

Most people don’t appreciate geological time and operate on human scale, and many operate on even shorter scales, such as what happened last week.

The majority of people who frequent this blog seem to have an appreciation of the various time scales as they relate to climate.

The point of my story about my grandfather is that some things change rapidly on human scale – there are millions who have never seen an 8-track tape – but climate? Not so much.

… are one of MANY things …..

To be precise, it is atmospheric water. A fatal flaw in climate models is treating the phases of water, gas, liquid and solid, as separate entities. Clouds start out as water vapour. They can be formed from both liquid and gas phases. The amount of water vapour in the atmosphere is a function of the surface temperature. All linked to provide surface temperature upper limit over open oceans.

The critical level of atmospheric water is 45mm. Above this level the atmospheric water cools the surface while below this level, the atmospheric water warms the surface.

None of those changes affects heat transfer to space. So it’s irrelevant to Earth’s heat balance.

Yes. Climate is a complex system, not just ∆T = λ ∆Ftoa.

Contributing factors overlap with each other. Their relationships are non-linear, need time to occur and have feedback loops. I would not even try to create a computer model that calculates that.

Simple sensitivity model assumes that all difficulties average out. After several decades of work we have got a number from 0 – 7 C, which does not look useful.

I up-ticked.

Then had 2nd thoughts. The problem is… it does look useful.

Sure, it doesn’t look accurate enough to be physically useful.

But science is about getting funding to do science; the physical world is not necessarily involved.

Hey, the average of 0-7 is 3.5 deg C. You just need to put that in a report with lots of pseudo-scientific fluff and then use it to redesign the world economy !

Don’t you have the first idea how climatology works ?

Overlaps are a huge issue! And believe it or not, the whole “global warming” narrative is based on ignoring them all. Because if you do, CO2 can not do a lot of climate change anymore.

No, until the range of uncertainty is at least an order of magnitude smaller than warming expected to have undesirable effects, we don’t really have any useful information.

Willis, here is another aspect of the Northern/Southern hemisphere similarity that is worth consideration: albedo reflection of sunlight is apparently within 0.2 W/m^2

https://agupubs.onlinelibrary.wiley.com/doi/full/10.1002/2014RG000449

One of the authors is Peter Webster, Judith Curry’s husband.

The paper seems to be basically arguing Willis’ self regulation.

More experts that don’t seem to understand the difference between diffuse and specular reflections.

They cite early work done on estimating albedo by measuring Earth-shine on the moon. What none of them apparently realize is that only retroreflectance can be measured with such a geometric configuration, which implies that primarily diffuse reflectance (clouds, vegetation, regolith, and snow) is being measured. Actually, whatever flux is measured from a diffuse reflector, such as clouds, has to be integrated over a hemisphere to get the total reflectance. To do the integration, one has to either assume Lambertian distribution, or have an empirical hemispherical bi-directional reflectance distribution function for the reflector(s).

All specular reflectance (with the exception of the sun’s rays normal to the surface) reflects to the side or away from Earth. Thus, only a very narrow cone of rays can be intercepted from a specular reflector such as water, which is not representative of all the reflected light. Furthermore, it is the rays beyond 60 degrees that represent higher reflectivity, and are sent off into space in the same direction as rays from the sun.

Very interesting, we need more “monkeying around.” Bell Laboratories had a funding source and wise management that allowed such. NSF may have started out that way, but evolved into the problem bureaucracy it now is. Excuse was always accountability, suspect that got more money wasted which increased over the years. Science communicators need to examine this before they pontificate.

As to the physics have long wondered what goes on in the ocean as under the mass of clouds now in the western Gulf of Mexico, quickly called Nicholas. Imagine being Halobates, the surface waterstrider!

Bureaucracy is created to eliminate, or at least minimize, error. It grows until it overwhelms the original purpose of the entity. The Soviet Union’s demise is the finest example of the resultant. The U.S. Federal Deep State is one of the current manifestations. Pick your own local example.

Bureaucracy is created to a) avoid risk to any individual in that bureaucracy and b) avoid responsibility falling on any individual in that bureaucracy. They are not concerned with error – otherwise they would all have to close down post haste.

The largest error one can make in a bureaucracy is to make one’s boss look bad. Bureaucracies designate scapegoats all the time, so individuals truly are singled out. The problem is that it is usually management’s fault but has to be blamed on someone in the lower levels.

Individuals are simply cogs in the great machine and will be punished for unorthodox behavior. Punishment often involves moving the individual to another job (sometimes with more pay) and starting rumors about how bad that individual is.

Rules in a bureaucracy do not resemble the rules one normally assumes in life and commerce. They have no rational standards so one can never assume rational behavior by a bureaucracy. Evidence the current institutionalized racism and wild misspending on “green.”

It is just convection.

Clouds and thunderstorms can visually display where upward convection is occurring but don’t display all of it.

Just consider the Polar, Ferrel and Hadley cells.

They change size, intensity and speed of overturning in order to neutralise any radiative imbalances.

The amount of such changes from CO2 variations would be indiscernible from short term natural variability caused by sun and oceans let alone from orbital variations and/or Milankovitch cycles.

Earth is a free-wheeling heat engine? Constructal Theory Predicts Global Climate Patterns In Simple Way

Thanks, Ian. The Constructal Law is one of the most under-utilized Laws of Thermodynamics. I discussed some applications to climate here, here, and here. You’re one of the very few people who seem to get the importance of the Constructal Law.

w.

There is no “extra” downwelling radiation because there is no “extra” upwelling radiation.

IR instruments do not measure power flux directly, they measure comparative/referenced/calibrated temperatures and INFER power flux by ASSUMING emissivity.

And as is often the case with assuming, assuming 1.0 surface emissivity is assuming wrong.

No RGHE, No GHG warming, no CAGW.

Ideation somehow always slips in.

Science isn’t ideation. Ideation is … something like … say …. believing some BS about back radiation being a real forcing without the slightest scrap of evidence to back it up.

I think they call this group lukewarmists. That’s ideation at its most destructive because it gives credibility to climate change alarmists they don’t deserve.

“…. believing some BS about back radiation being a real forcing without the slightest scrap of evidence to back it up.” So, the atmosphere, including clouds, doesn’t radiate infrared?

What is it about the word “forcing” that you don’t understand?

“Forcing,” according to the UN IPCC CliSciFi material, is an external force applied to the existing climate processes, or some such verbiage.

You seem to confuse “forcing” with the downward IR of the atmosphere. It is no more of a “forcing” than that of the upward IR at TOA.

Regretfully, people have been calling the downward IR of the atmosphere to the Earth’s surface as “back radiation.” Upward and downward IR are simply the results of the energy added to the atmospheric system. It can be envisioned as the radiation from both sides of a flat sheet of material, although the “sides” of the atmosphere (including clouds) radiate at different rates.

I think that is called a u-turn .. read the conversation again

Knowing the boundaries of the systems everyone is talking about would be nice.

Dave said using IPCC that forcing is “an external force“. External to what. Only thing external to TOA is the sun. Everything is external to the surface of the earth.

I’m not going to try to explain nor justify UN IPCC CliSciFi practices.

IPCC Science is written by actual scientists. Are you having trouble understanding them?

Having a scientific and engineering background, I understand them well. It is the unscientific political interference in the preparation and conclusions of the reports that I have “trouble” with. Unbiased scientists have been frozen out of the UN IPCC CliSciFi reports. They read like poorly prepared soviet-era propaganda.

Of course the atmosphere radiates infrared; as does the surface. How do you think the earth cools?

It only cools if the solar energy entering Earth’s system is less than what is leaving. Since about 1900, it’s been the reverse.

So somehow every climate scientist, every atmospheric physicist, and every scientific institution in the world are wrong. How do you explain that? Could it be that instead you’ve made a fundamental error?

I was criticizing the “no back radiation” comment. I support the scientific consensus of GHG effects. I disagree with the clearly-disproven CliSciFi assertion that water vapor and clouds provide for positive feedback of 3-to-whatever times.

Nick is completely confused, as usual.

He confuses issues of the emissivity of the sensor and the emissivity of the object being measured.

He thinks that sensors measuring radiative flux density (in W/m2) need to make assumptions about the emissivity of the object being measured. (If you then want to derive the temperature of the object from this flux density, as with your kitchen IR thermometer, this is true, but that’s not what these sensors are doing — rookie mistake!)

He confuses gross and net radiative transfers, and mistakenly thinks emissivity is a function of net transfer.

These are all things that a semi-competent student would understand from their first undergraduate heat transfer course. But it’s very clear that Nick does not belong to that group!
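The distinction drawn above, between reporting a flux and deriving a temperature from it, can be illustrated with the gray-body Stefan-Boltzmann relation. This is a minimal sketch only: it does not model how any particular instrument works, the 400 W/m2 reading is an illustrative value, and the function names are hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def flux_from_temperature(temp_k: float, emissivity: float = 1.0) -> float:
    """Gross radiative output of a gray body, in W/m^2."""
    return emissivity * SIGMA * temp_k ** 4


def temperature_from_flux(flux_w_m2: float, emissivity: float = 1.0) -> float:
    """Temperature (K) of a gray body that emits the given flux."""
    return (flux_w_m2 / (emissivity * SIGMA)) ** 0.25


measured_flux = 400.0  # W/m^2, illustrative value only
for eps in (1.0, 0.95):
    # The flux is the same number either way; only the inferred
    # temperature depends on the emissivity assumed for the object.
    print(eps, round(temperature_from_flux(measured_flux, eps), 1))
```

In this sketch the emissivity assumption enters only when going from flux to temperature (or back), shifting the inferred temperature by a few kelvin.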

Ed, what emissivity should be used when calculating the temperature of the CO2 that is radiating all that IR at 15 microns?

mkelly: The emissivity of gases is a complicated subject. As Kevin Kilty says below:

“The effective emissivity for gasses at one atmosphere total pressure is a function of the path length the gas presents times partial pressure of the IR active gas and the gas temperature.”

I typically use graphs and tables that spring from the engineering work of Hoyt Hottel.

But regardless, the emissivity value used for gases is a factor in calculating the gross radiative output as a function of temperature, regardless of the radiative input.

It’s a simple concept, explained in any introductory heat transfer class. But no matter how many times it’s explained to Nick, he can’t get it!

I am surprised that Willis has not condemned this. As his education proceeds, he might begin to appreciate the religious nature of downwelling radiation.

He would find working with top of atmosphere fluxes so much more meaningful using physical reality rather than concepts embedded in the GW religion.

There is zero infrared radiation leaving the surface of a tropical ocean. ALL heat transport from the surface is latent heat with a tiny amount of conduction. There is actually zero infrared released directly to space from the atmosphere below 8,000m. Too much water in all its phases to permit transmission.

“There is zero infrared radiation leaving the surface of a tropical ocean.” I didn’t know that the tropical ocean was at zero K. Thanks for the heads-up.

Additionally, thanks for informing me that there is no small “window” in the atmosphere that allows a small portion of Earth’s IR to go directly to space. Everybody seems to have gotten that one wrong.

You did not read or understand what I wrote. Specifically no IR goes directly to space over a tropical ocean. The IR from the surface is absorbed before it gets into space.

In locations that have less atmospheric water, the IR is not fully absorbed. Some wavelengths get directly to space.

This link shows how corrections are made for determining SST from space.

https://www.ssec.wisc.edu/meetings/ciw/Workshop_Presentations/Wednesday_6_20_2012/2_Algorithm_Approaches/3_Wilson_TempAlgorithmOverview.pdf Note the difference between the low and high humidity spectra on the 8th slide. The high humidity spectrum is lower than the low humidity spectrum over the full wavelength.

RickWill: “Specifically no IR goes directly to space over a tropical ocean.”

WR: Do you mean no IR that can be absorbed by H2O or do you mean no IR that leaves the ocean – whatever the wavelength?

I would be interested to know for oceans around the equator how much IR energy (W/m2 and percentage) is able to reach space without being absorbed by whatever GHG molecule, both for clear sky and cloudy sky conditions. And I am also interested in the numbers for the present Arctic and the Antarctic.

There is none that goes direct to space over a tropical ocean at any wavelength. But the absorption for different wavelengths varies with the TPW, cloud cover and type of cloud.

In the paper I previously linked to on the other thread and above on this thread, a tropical ocean at 30 to 32C cycles water in the evaporation/precipitation cycle at 7.5mm per day. The IR radiant heat loss above the level of free convection is equivalent to the latent heat to evaporate 7.5mm per day. So the only heat transport from the surface is latent heat.

http://www.bomwatch.com.au/wp-content/uploads/2021/08/Bomwatch-Willoughby-Main-article-FINAL.pdf

See Table 1 for the surface measured insolation and the column OLR.

The average rate of precipitation is 7.5mm when the system is in temperature limiting mode at 30C. That means the average rate of evaporation is also 7.5mm. Most of the condensate is produced above the level of free convection. That means all OLR exits at high altitude.

Determining the sea surface temperature in the tropics from space is not trivial because there is no wavelength coming direct from the surface or anywhere near it. The NOAA/Reynolds SST data set combines the temperature measurements at the tropical moored buoys interpolated with satellite data to give quite accurate SST over the tropical oceans.
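As a rough check on the size of the 7.5 mm per day figure, here is a minimal sketch converting an evaporation rate into an equivalent latent heat flux. The latent heat of vaporization (about 2.43 MJ/kg near 30 C) and the fresh-water density are textbook values assumed here, not taken from the linked paper, and the function name is hypothetical.

```python
LATENT_HEAT_VAPORIZATION = 2.43e6  # J/kg near 30 C (assumed textbook value)
WATER_DENSITY = 1000.0             # kg/m^3 (fresh-water approximation)
SECONDS_PER_DAY = 86_400


def latent_heat_flux(evaporation_mm_per_day: float) -> float:
    """Average latent heat flux (W/m^2) for a given evaporation rate."""
    # 1 mm of water over 1 m^2 is 1 kg under the density assumed above.
    mass_per_m2_per_day = evaporation_mm_per_day / 1000.0 * WATER_DENSITY
    return mass_per_m2_per_day * LATENT_HEAT_VAPORIZATION / SECONDS_PER_DAY


print(round(latent_heat_flux(7.5)))  # about 211 W/m^2
```

On those assumptions, 7.5 mm per day works out to a bit over 200 W/m2, which can be set against the measured values in Table 1 of the linked paper.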

RickWill: “There is none that goes direct to space over a tropical ocean at any wavelength. But the absorption for different wavelengths varies with the TPW, cloud cover and type of cloud.”

WR:

*See for actual cloud cover:

https://climatereanalyzer.org/wx/DailySummary/#prcp-tcld-topo

Go find a different hobby

He did. His last one was a unicorn hunter.

Willis, as much as I admire your research, the lucid clarity of your writing on these subjects is the real tribute to your insight and character.

An old prof once admonished me with the words “true genius is the ability to take the most esoteric idea and lay it out clearly and simply for whoever might seek to understand.”

Thankyou

Willis says “thanks son”.

leitmotif, did you have to study to be as trivially and nastily snide as you are, or is it a God-given skill?

w.

Sorry Willis, I only have my maths and physics degree to fall back on. Maybe I should have gone for psychology like you did.

Too late now.

And despite that, I have a half-dozen journal articles that have garnered over 150 citations… and you? …

… Oh, wait, you don’t even have the albondigas to sign your own name, so we have no idea of your credentials or publication.

But in fact, none of that matters. It’s not about me. It’s not about you. It’s about the scientific claims … and I note that you haven’t refuted a single claim of mine in the above post.

w.

I thought your speciality was massage therapy, Willis?

Refutations of your nonsense are everywhere in the scientific literature, Willis.

Yet you didn’t address THIS posted article at all, your refutation claims are useless here.

Your assertion that peer reviewed science is irrelevant is, of course, irrelevant

Now you LIE about what I wrote, which was about what YOU wrote: in NOT challenging the blog post at all, you made vague claims of refutations without a shred of posted evidence offered.

You are running on nothing here.

Willis talks in circles, while missing the fundamental mechanism of planetary warming that dominates today: an increasing GHE which increasingly restricts the flow of infrared thermal radiation attempting to leave earth’s system for space. An education in Massage Therapy didn’t prepare him to teach laymen about physics or thermodynamics; he doesn’t understand either.

And what, pray tell, is the exact impact of this “restriction” on radiation to space? Don’t tell me that the Global Average Temperature goes up. Tell me *exactly* what causes the GAT to go up. What happens to maximum temps during the day and to minimum temps at night?

You really don’t know? What do you think happens when thermal infrared radiation trying to leave earth is prevented from doing so and returns to earth? Try doing a heat balance on earth’s system.

If it returns to earth doesn’t the earth just turn around and re-radiate it back? And a little more is lost to space with each re-radiation?

Nor did you answer my question.

“Tell me *exactly* what causes the GAT to go up. What happens to maximum temps during the day and to minimum temps at night?”

First the basics. Do you understand and agree that the solar energy entering earth’s system must be numerically equal to the energy leaving?

Sam Best, you posted:

Hmmm . . . did you ever consider that GHGs have the same average temperature as non-GHGs (mainly N2 and O2) at any given altitude DESPITE the fact that they are absorbing about 90% of all LWIR energy radiated off Earth’s surface?

There is not a “standard atmosphere” temperature profile for CO2 and another for N2.

The reason for this is “thermal equalization” (aka “thermalization”) caused by the extremely rapid, on the order of nanoseconds timescale, collisions occurring between all atmospheric molecules at the typical temperatures and pressures within the 10 km altitude characteristic of the troposphere. Energy is exchanged in these collisions such that the energized GHGs warm the relatively lower-energy N2 and O2 molecules, thus driving the ensemble to an average temperature.

The N2 and O2 molecules, being non-GHGs, radiate energy directly to space due to the simple fact that their temperature is above absolute zero. Increasing their temperature in turn increases the amount of thermal radiation they emit directly to space.

Thus, although GHGs block LWIR coming off Earth’s surface from going directly to space, they serve the extremely important function of re-distributing that absorbed LWIR energy to N2 and O2 (comprising 99% of Earth’s atmosphere) that otherwise are incapable of being “warmed” directly by absorbing LWIR.

And the ad hominem attack in your last sentence was uncalled for, besides being just wrong.

Some of that radiation is returned to earth warming it. Here: https://news.climate.columbia.edu/2021/02/25/carbon-dioxide-cause-global-warming/

and Willis really does not understand basic thermodynamics or physics.

So, Sam Best, exactly how many peer-reviewed scientific papers or even just general science-based articles, let alone books, have you had published on the subject of “basic thermodynamics or physics”?

I’ll be laughing out loud until you can cite some verifiable publications of your work.

The question is how many has Willis published? Answer: ZERO.

Sam Best September 17, 2021 5:38 pm

The only valid scientific question is “Are willis’s claims valid and true?”. Anything else is just a pathetic attempt to discredit me without touching the substance of my ideas.

And in any case, you’re wrong about my publications. Here’s my google scholar profile.

And I say again, this is just a vain meaningless attempt to sidetrack us from the fact that you can’t find anything wrong with my post. It’s not about my publications. It’s about my ideas

w.

Sorry, Willis. those are all pay to publish scams. No legit peer reviewed scientific journals. Yo

Sam Best September 17, 2021 6:28 pm

That is a damn lie from a damned liar. I never spent one dime to publish any of those. This is just more of your scumball attempt to blacken my name without attacking my ideas. Give it up. You couldn’t bite my ankles if you stood on tiptoe. Come back when you want to discuss the science.

w.

Sam Best posted:

Sam Best: your ignorance . . . it burns.

You assert that a publication in Proceedings of the National Academy of Sciences, cited by Willis as one of his publication venues (second from the bottom in his above listing), is a “pay to publish scam”?

ROTFLMAO!

Your insult was unwarranted! You are not contributing anything but puffing up your own ego.

Your empty reply was the best you can do. That is what a child does a lot; a grown man would have tried to post a real counterpoint to the article. In the end you came up with an education fallacy. You must like bragging now……..

You must be from Harvard!

Extremely interesting, Willis. You say at one point…

But there is short-term feedback here in the form of the Stefan-Boltzmann feedback. For each unit of surface absorption there is a very quick increase in outgoing LWIR.

Figure 7 looks just about like a proportional controller with a hard limit at 30C or thereabouts. Two questions: 1) What is the source of the absorbed radiation estimates? 2) is there a way to extend Figure 7 to the left to see a hard limit at very low values of absorption? Polar night, for example?

I see in the caption the source of the data, and I have read how NASA obtains surface temperatures from GOES, and so the radiant fluxes are available from views at various angles and over different bands. I also see one of the graphs has a temperature scale and the lowest radiances appear to be from polar night. So, there is apparently not a symmetry to the “limiter” here…
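The Stefan-Boltzmann feedback mentioned in this comment can be put in rough numbers by differentiating the gray-body law, which gives a slope of 4εσT³. A minimal sketch, assuming a surface emissivity of 1.0 and a few illustrative temperatures that are not taken from the post's data; the function name is hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def sb_feedback_slope(temp_k: float, emissivity: float = 1.0) -> float:
    """d(flux)/dT for a gray body: 4 * eps * sigma * T^3, in W/m^2 per K."""
    return 4.0 * emissivity * SIGMA * temp_k ** 3


# Illustrative temperatures only: a polar-night-like value, a mid value,
# and one near the 30 C level discussed above.
for temp_c in (-40.0, 15.0, 30.0):
    temp_k = temp_c + 273.15
    print(temp_c, round(sb_feedback_slope(temp_k), 2))
```

Because the slope grows with the cube of temperature, the radiative response per degree is noticeably weaker at the cold end than at the warm end, which is one simple reason not to expect symmetric behaviour at the two extremes.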

Certainly, the problem set is complex, and the solution space cannot be reduced to a simple scalar, and can only be estimated with accuracy and reproducibility through stochastic methods in a limited frame of reference.

The climates are changing, which can be completely accounted for with natural, large scale perturbations.

What scalar were you thinking of? Can the solution space be reduced to a set of scalars? Or through stochastic methods applied to a set of scalars in a limited frame of reference?

On a somewhat related note (to thermo regulatory systems), I was struck while wandering the sandy riverbank by the wave forms left by the flowing water – now receded – in the sand/silt.

They were identical in size and shape to the wave forms I had seen hiking the snow and icefields of our Pacific NW.

Which would seem to me to indicate that the driving force for snow and ice melt is wind, not albedo. Are there any databases that might help tease out the effect of wind as a thermo regulatory system?

Thanks

Generally, the role played by wind with snow and ice is to cause sublimation.

Can the Stefan-Boltzmann equation even be used for gases?

That’s the basis of modern scientific climatology. Use the Stefan-Boltzmann equation freely for black bodies, gray bodies, green bodies, gases, clouds, water, or a single spectral line 🙂

Yes. The effective emissivity for gasses at one atmosphere total pressure is a function of the path length the gas presents times partial pressure of the IR active gas and the gas temperature. These engineering correlations are from experimental data and are a fundamental input for estimates of radiative transfer from hot combustion gasses to an enclosure.

Why are you asking such a question when you have your “maths and physics degree to fall back on?”

When I see a 50/50 split in such data, my hackles go up. We know that the satellite data show an actual imbalance of about 5W/m^2, which is nowhere near credible, so they rig it to make it out of balance by what they consider “reasonable”. IOW, they impose their expectations on what they are obliged to admit are flaky data.

My first guess at attribution of this crazy 50/50 split is that someone thought that is what it should be so forced it to be nearly identical. They know they can’t make it exactly equal without giving the game away.

Saying that 5W/m^2 is ‘nowhere near credible’ doesn’t cut it, especially when it’s stated in the peer reviewed science. You have to show why it’s not credible.

And all due to that amazing substance called water that “climate science” ignores because CO2.😃

Not quite true.

Water as a gas is the super “Greenhouse Gas”.

Water as clouds is a parameterised factor.

Surface temperature is a function of an energy imbalance caused by CO2 increasing that creates a positive feedback with water vapour.

Coupled models bring the atmosphere and oceans together but the atmospheric phases of water remain uncoupled.

Models do not even have a mass balance with atmospheric water over an annual cycle. Integrating the precipitation less evaporation over a few years can result in negative atmospheric water. Where would you find such unphysical nonsense anywhere but climate models.

The atmospheric water and cloud formation are unlinked to the surface temperature. Again unphysical absurdities.

–The earth’s surface absorbs radiation in the form of sunlight plus thermal radiation from the atmosphere.–

The surface {ocean and ground surface} does not absorb much energy other than the energy of the sunlight. Earth is not like your house, in which one heats air and the convectional heat of the air warms the surface. A house is also heated by sunlight passing through windows, or by sunlight heating the sides of the house and mostly the roof, which also convectionally heats the house.

Also, if a house is well insulated and you open a door, the outside warmed surface air will warm up the house air.

But like a house, thermal radiation [unless it’s from fire or electric heating elements, which are intense heat sources like the sun] doesn’t heat the surfaces of your house.

The main thing about Earth is the temperature of entire ocean, which has average temperature of 3.5 C.

And this cold ocean is why we are in an Ice Age.

The reason this is the case is that most of the surface heating of Earth is done in the tropics, and the tropics are 40% of the surface area of the world. The other 60% of Earth is more affected by the 3.5 C average temperature of the ocean.

So our cold average ocean temperature has near zero effect upon the tropical zone, and is “everything” about the polar region {though the tropical ocean heat engine does add some atmospheric warmth to the polar region, which is more noticeable when the ocean is not adding much heating to the polar region, or in regard to a particular part of a polar region, i.e. the large isolated land mass of the Antarctic continent}.

So an ocean temperature of 3.5 C has little effect on the tropics, and a large effect near liquid ocean surface waters in polar regions and oceans closer to polar regions: Europe, Russia, Canada, and lesser amounts of land in the southern hemisphere nearer to the polar region {i.e. South Africa}.

Though global air temperature is about ocean surface temperature, and there is lots of that in southern hemisphere closer to southern polar region.

Our Late Cenozoic Ice Age or the 34 million years of icehouse climate has little effect upon the tropical temperature.

I couldn’t invest the time in reading this whole thing. Maybe tomorrow. Anyone want to summarize like “clouds regulate the climate?”

Sure. Here you go. “Clouds regulate the climate … except when they don’t”.

Or you could just, you know … read the post?

w.

I appreciate the effort you made, but it’s terrible writing. Give the punchline. Then explain the thought process after the fact. Very few people want to read a play-by-play of your exploratory data analysis, especially if you came to no actual conclusion or they literally stumble over it.

Here is the gold standard book on how to make an argument readable.

https://www.amazon.com/Pyramid-Principle-Logic-Writing-Thinking/dp/0273710516

This sentence could literally have been stated up front and then you do your exploratory data analysis piece.

It’s okay to go down side trails, but when you’re writing or speaking it’s called perambulation, and it’s not a good thing.

Captain Climate September 15, 2021 9:42 am

Let’s start with the fact that I’m far and away the most popular guest author on this site, with over a million page views per year, so I must be doing something right.

And you, on the other hand, don’t even have the albondigas to sign your own name.

Next, regarding this very piece, the British science writer Viscount Matt Ridley tweeted (w/15 retweets, 64 likes):

So I can only conclude that you are confusing your own preferences, which seem to be for bland, boring, suspense-free writing, with the preferences of most of my readership.

Sorry, but I fear you have vastly overestimated the popularity of your own preferences. However, please feel free to write up a post using your own style, and send it to the moderators to consider for publication.

Or you could start by just signing your real name to your comments and linking to some things you’ve written so we can all view some samples of your writing, which is no doubt conversational, fluent, clear, polite, witty, numerate, factual, humble, surprising, novel, and unafraid of tackling complex issues.

w.

Willis, you talk in circles, and often never come to a conclusion. As the Cheshire Cat said, “If you don’t know where you’re going, any road will get you there”.

Sam, I suppose it’s possible to be more vague about your objections, but you’d have to work at it.

w.

As usual, Sam, you reach no conclusion, but wander aimlessly with spontaneous thoughts and lack of purpose.

This is an interesting analysis but where does the data for a 1W/m2 absorbed radiation difference, and the resulting emissions changes, come from?

While numbers are neat and tidy, measurements are much less so. It seems likely that the uncertainty in radiation measurements over the surface of the earth is much larger than these results, rendering any conclusion moot.

–This is quite odd. If we take say a block of steel at steady-state, the more radiation it is absorbing, the more radiation it is emitting. And as we would expect, over most of the earth’s surface, and over all of the land, the absorbed radiation controls the emitted radiation. As is true for a block of steel, the more radiative energy that is absorbed, the more energy is radiated away. It’s just “Simple physics”, as mainstream climate scientists like to say.–

Earth surface is 70% ocean. Ocean water is not vaguely like a block of steel.

Sunlight would heat the surface of a block of steel, and since steel is not as good a conductor as some other metals, it would conduct only some heat into the block. For the same reason, the steel block would not conduct the heat back out to its surface as quickly, to be lost by convectional and radiant heat loss.

If Earth was a block of steel, it would be a cold Earth.

Ocean water surface absorbs very little of the sunlight, because it’s transparent to sunlight.

More than 1/2 of sunlight is near infrared {shortwave IR}, and about 90% of that radiant energy is absorbed in the top meter of ocean water. The more intense sunlight goes much deeper into the ocean. Ocean water also absorbs indirect sunlight. So at or near zenith with clear skies one gets 1050 watts per square meter of direct sunlight and 70 watts of indirect sunlight. So the ocean absorbs 1120 watts of sunlight and a block of steel absorbs 1050 watts per square meter of sunlight.

[It should be kept in mind that the tropics get the most zenith or near-zenith sunlight compared to anywhere else in the world {same day hours but more intense sunlight reaching the surface}, which is why the tropics absorb more than 1/2 of the energy of the sun, though they are only 40% of the surface of the planet.]
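The 40% figure for the tropics can be checked from geometry alone. A minimal sketch, assuming a spherical Earth and taking the tropics as the band between the Tropics of Cancer and Capricorn (about 23.44 degrees latitude); the function name is hypothetical. On a sphere, the area between latitudes -φ and +φ is a fraction sin(φ) of the total surface.

```python
import math


def band_area_fraction(lat_deg: float) -> float:
    """Fraction of a sphere's surface lying between -lat and +lat."""
    return math.sin(math.radians(lat_deg))


print(round(band_area_fraction(23.44), 3))  # close to 0.40
```

This only confirms the area share; the claim about the share of absorbed solar energy would need the actual absorption data.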

So within top 1/2 cm of steel, all the sunlight energy is absorbed, and with ocean water, less than 1% is absorbed in top 1/2 cm of ocean surface.

As an engineer, I am gobsmacked to be informed that sunlight can penetrate 0.5 cm into steel, an electrically conductive material.

Perhaps I need to go back and revisit Maxwell’s equations for electromagnetism . . . but wait, no.

Well, the very thin surface of steel is transparent, and one might add a thicker layer of paint to make steel absorb more sunlight. But all that should be within 1/2 cm depth if talking about steel. And it’s a comparison to water: any kind of deep ocean water should absorb less than 1% within 0.5 cm. A muddy stream could be different.

One might say, in terms of difference, steel is more than 99% different from open ocean seawater.

There are other differences I did not mention, such as having 20-foot ocean waves, which steel normally fails to do. Nor does unmagnified sunlight at 1 AU distance cause steel to evaporate.

The most important element of the Tropical ocean Heat engine, which warms the entire world, is that sea water evaporates and holds a massive amount of heat energy, which it can dump into the rest of the world on a constant 24-hour, 365-day basis.

That is also why the tropical land is not the world’s heat engine.

The average temperature of the total mass of the world’s oceans is about 3.5 °C.

(ref: https://economictimes.indiatimes.com/news/science/oceans-average-temperature-is-3-5-degree-celsius/articleshow/62363696.cms?from=mdr )

This can be compared to the average Earth surface temperature (land and sea surfaces) that is reported, variously, to be between 15 and 16 °C.

Thus, since thermal energy cannot flow from cold to hot, overall the Earth’s oceans currently cool the surface . . . since the beginning of the Holocene some 12,000 years ago, the oceans DO NOT “warm the entire world”.

Gordon A. Dressler September 12, 2021 3:32 pm

The average temperature of the earth is hot enough to melt solid rock … and yet despite that, very little of that heat makes it to the surface to affect us.

The same is true of the ocean being cold. Since the ocean surface isn’t cold, it makes little difference.

Regards,

w.

Willis,

Ok, let’s go there.

If by “ocean surface” you mean the mixed layer that averages to be about the first 150 m depth, it has an average temperature of about 17 °C at mid-latitudes, both NH and SH. However, global average annual sea surface temperatures vary from a low of about -2 °C near both poles to a high of about +30 °C near the equator. (Ref: https://rwu.pressbooks.pub/webboceanography/chapter/6-2-temperature/ )

However, that mixed layer of the oceans is included in the calculation of “the average Earth surface temperature (land and sea surfaces) that is reported, variously, to be between 15 and 16 °C”, as I specifically stated in my previous post. Comparing this to the data in the preceding paragraph, it is very problematic to state, as gbaikie did in his post above, that the “Tropical ocean Heat engine” “warms the entire world” while disregarding the non-Tropical heat sinks that simultaneously “cool the entire world”.

More specific to your last paragraph, I would consider any “ocean surface” temperatures below 15 °C as being relatively “cold” in discussing this matter.

I believe the best one can conclude is that the thermoregulatory effect of ocean water evaporation, followed by cloud formation and the associated dumping of latent heat directly into Earth’s atmosphere upon water condensation, generally at significant altitude and NOT directly onto land surfaces, is what keeps ocean and land temperatures in close proximity as latitude varies.

In other words, Earth’s atmosphere is the most important energy control mechanism in the exchange of energy between “ocean surfaces” and land surfaces.

BTW, the presence of a fairly generous gradient in the ocean thermocline, where it can develop freely in deep waters, typically about 15 °C over 500 m depth (see aforementioned reference), shows that the ocean surface is heating the ocean depth, not just fueling water evaporation.

— Comparing this to the data in the preceding paragraph, it is very problematic to state, as gbaikie did in his post above, that the “Tropical ocean Heat engine” “warms the entire world” while disregarding the non-Tropical heat sinks that simultaneously “cool the entire world”–

Does “non-Tropical heat sinks” refer to falling cold water being replaced by less cold water? Because that, like the tropical ocean heat engine, is also a global engine. And I would call it the refrigerator of our icebox climate. Or the why of why we are here.

But it also has a warming effect {by consuming oceanic heat}: if the average ocean temperature is high enough, its warming effect prevents polar sea ice from forming. If the ocean average were 5 C rather than 3.5 C, there would be enough oceanic heat to make the Arctic free of polar sea ice in summer, easily, and maybe also in winter.

And having a liquid ocean rather than a frozen ocean is a warming effect for the land area within the Arctic Circle.

Though “non-Tropical heat sinks” might be referring to cold Arctic air going southward and, since that air mass must be replaced, drawing warmer air into the Arctic {which would be reduced if one had less Arctic polar sea ice, or warmer Arctic Ocean surface waters}.

“The same is true of the ocean being cold. Since the ocean surface isn’t cold, it makes little difference.”

It makes little difference to the business of claiming Earth will be like Venus; it’s not a money maker.

But the temperature of the ocean determines that we are in an Ice Age, and aren’t going to leave it.

If the temperature of the ocean were 15 C, that would mean we are in a greenhouse climate.

A greenhouse climate doesn’t have 1/3 of the world covered by deserts, does not have permanent ice caps, and has a warm ocean, and a 15 C ocean is around the threshold of a warm ocean.

Some claim the ocean’s volume-average temperature has been as high as +25 C, and there is no climate state warmer than a greenhouse {hothouse} climate. And no one has bothered to say what the ocean temperature would be if we were in a Snowball global climate {the coldest classification of an Earth climate}.

Some people say Earth’s ocean has never been as high as 25 C, and I say Earth has never been in a snowball climate; instead it seems Earth is currently about as cold as it has ever been.

{I am aware there is some evidence of past snowball Earths; I don’t find any of it compelling. Such things as glaciers flowing in the tropical ocean mean quite little in terms of evidence of anything.}

“This can be compared to the average Earth surface temperature (land and sea surfaces) that is reported, variously, to be between 15 and 16 °C.”

The global average land surface air temperature is about 10 C.

The global average ocean surface air temperature is about 17 C.

Averaged together, these give a global average surface air temperature of about 15 C.
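A quick check of that averaging, as a minimal sketch: it assumes the 70% ocean share stated earlier in this thread is the right weight, and that the land and ocean figures are simple area means. The function name `weighted_mean` is just an illustrative helper.

```python
# Area-weighted mean of the land and ocean surface air temperatures.
# ASSUMPTION: weights come from the "70% ocean" figure given earlier in the thread.
OCEAN_FRACTION = 0.70
LAND_FRACTION = 1.0 - OCEAN_FRACTION

LAND_TEMP_C = 10.0   # global average land surface air temperature (from the comment)
OCEAN_TEMP_C = 17.0  # global average ocean surface air temperature (from the comment)


def weighted_mean(values, weights):
    """Return the weighted mean of `values` using `weights`."""
    total_weight = sum(weights)
    return sum(v * w for v, w in zip(values, weights)) / total_weight


global_mean_c = weighted_mean(
    [LAND_TEMP_C, OCEAN_TEMP_C],
    [LAND_FRACTION, OCEAN_FRACTION],
)
print(f"Area-weighted global mean: {global_mean_c:.1f} C")
```

With those weights the result is 14.9 C, which rounds to the "about 15 C" figure.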

The average air temperature of China is about 8 C

The average air temperature of India is about 24 C

Russia and Canada, the two countries with the largest surface area, average around minus 4 C.

Northern Hemisphere average land temperature is about 12 C

{largely due to the African countries that are in the Northern Hemisphere, plus India and a lot of small countries; Mexico is about 20 C, and the US is around 12 C. Europe, at 9 C, is dragging down the average}

And Southern Hemisphere land is about 8 C, largely because of Antarctica; Australia, with an average of 20 C, helps a lot in bringing the average up towards 8 C, as do the 1/3 of Africa in that hemisphere and the South American countries.

The tropical ocean, which is about 80% of the tropical zone and 40% of the global ocean surface, is about 26 C {dragging up the global ocean temperature to 17 C}; the remaining 60% of the ocean averages around 11 C.

As is known, the North Atlantic Ocean warms Europe by about 10 C. Without such ocean warming, Europe’s average would be about as cold as Canada’s.
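The tropical and non-tropical ocean figures can be checked against the 17 C global ocean average with a few lines of arithmetic. This is only a sketch using the percentages and temperatures given in the comment; the variable names are illustrative.

```python
# Consistency check: 40% of the ocean at 26 C plus 60% at 11 C vs. a 17 C global mean.
TROPICAL_SHARE = 0.40        # tropical share of global ocean surface (from the comment)
TROPICAL_TEMP_C = 26.0       # tropical ocean surface air temperature (from the comment)
GLOBAL_OCEAN_TEMP_C = 17.0   # global ocean surface air temperature (from the comment)

REST_SHARE = 1.0 - TROPICAL_SHARE

# Solve the weighted-mean equation for the temperature of the remaining ocean:
#   GLOBAL = TROPICAL_SHARE * TROPICAL_TEMP + REST_SHARE * rest_temp
rest_temp_c = (GLOBAL_OCEAN_TEMP_C - TROPICAL_SHARE * TROPICAL_TEMP_C) / REST_SHARE

# Forward check using the 11 C figure quoted in the comment.
recomputed_global_c = TROPICAL_SHARE * TROPICAL_TEMP_C + REST_SHARE * 11.0

print(f"Implied non-tropical ocean mean: {rest_temp_c:.1f} C")
print(f"Global mean from 26 C and 11 C:  {recomputed_global_c:.1f} C")
```

Both directions agree: the stated shares and temperatures imply a non-tropical ocean mean of 11 C and a global ocean mean of 17 C.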

The Gulf Stream was first noted by Benjamin Franklin, a notable politician and amateur scientist, helped by his access to the press, his travels to France, his celebrity status in France, and the fact that science was a very popular topic.

{Or it may have been known long before this; probably anyone navigating between the US and Europe would have been aware of it.}

“The global average land surface air temperature is about 10 C

And global average ocean surface air temperature is about 17″

Interesting. So the ocean is a net heat source and the land areas are a net heat sink.

…from above

“Thus, since thermal energy cannot flow from cold to hot, overall the Earth’s oceans currently cool the surface . . . since the beginning of the Holocene some 12,000 years ago, the oceans DO NOT “warm the entire world”.

…so the opposite is true.

Hah! In reply there is this:

“Generally, the optical penetration depth is given by the reciprocal value of the (frequency- or wavelength-dependent) absorption coefficient alpha. For instance, this value amounts to 8.35e+5 1/cm for silver at 588 nm (see webpage “refractiveindex.info”), the optical penetration depth is thus 1.2e-6 cm.” (source: https://www.researchgate.net/post/What_is_the_formula_used_for_calculating_the_penetration_depth_skin_depth_of_light_in_a_material_How_does_it_related_to_dependent_of_the_frequency ; comment by Christoph Gerhard)

So with the reasonable assumption that silver, an electrically conductive metal, is not that unlike steel in terms of being electrically conductive, I can confidently assert that the (sunlight) optical penetration depth into steel will be on the order of 1e-4 cm or less.

Indeed, that is a very thin surface layer . . . one not worth further discussion in the context of this comment thread.
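For anyone who wants to reproduce the quoted number, here is a minimal sketch of the reciprocal rule. The only input is the absorption coefficient for silver at 588 nm given in the quote; the function name is a hypothetical helper, and nothing here is a measured value for steel.

```python
# Optical penetration depth as the reciprocal of the absorption coefficient.

def penetration_depth_cm(alpha_per_cm: float) -> float:
    """Return the 1/e penetration depth in cm for an absorption coefficient in 1/cm."""
    if alpha_per_cm <= 0:
        raise ValueError("absorption coefficient must be positive")
    return 1.0 / alpha_per_cm


# Value quoted above for silver at 588 nm.
ALPHA_SILVER_588NM = 8.35e5  # 1/cm

depth_cm = penetration_depth_cm(ALPHA_SILVER_588NM)
depth_nm = depth_cm * 1e7  # 1 cm = 1e7 nm

# The 1e-4 cm figure is the upper bound asserted for steel in the comment above.
within_stated_bound = depth_cm <= 1e-4

print(f"Penetration depth: {depth_cm:.2e} cm (about {depth_nm:.0f} nm)")
print(f"Within the 1e-4 cm bound: {within_stated_bound}")
```

That works out to roughly 1.2e-6 cm, about 12 nanometers, which is well inside the 1e-4 cm bound.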

Not sure why you mention electrically conductive metal.

Metals are indeed generally more electrically and thermally conductive.

Wiki:

“Heat transfer occurs at a lower rate in materials of low thermal conductivity than in materials of high thermal conductivity. For instance, metals typically have high thermal conductivity and are very efficient at conducting heat, while the opposite is true for insulating materials like Styrofoam. “

Water {fresh or salty} has low thermal conductivity.

Steel has high thermal conductivity compared to water, and lower compared to silver.

Salt water is more electrically conductive than pure water.

Steel is more electrically conductive than salt water, but less so than silver.

Or:

“Unlike most electrical insulators, diamond is a good conductor of heat because of the strong covalent bonding and low phonon scattering. Thermal conductivity of natural diamond was measured to be about 2200 W/(m·K), which is five times more than silver, the most thermally conductive metal. “

And silver also conducts electricity better than copper, though copper is cheaper; aluminum is even cheaper and a much lighter material, and can be and is used {if used properly} to conduct electricity.

Both water and air are poor conductors of heat, and transfer heat by convection: dense air falls and dense water falls, while warm water rises and warm air rises. So the atmosphere is well mixed due to convection. The ocean surface down to, say, 100 meters is well mixed. And one could say the entire ocean has a fairly uniform temperature but has a thermal gradient near the surface. The atmosphere is fairly uniform if you allow for the lapse rate. If you ignore the lapse rate, it might appear not to have a uniform temperature.

Or, because of convective heat transfer, both ocean and atmosphere have a uniform temperature vertically, if you include the effect of gravity, which allows the heavier to fall and the lighter to rise.

Though globally we don’t have anything close to a uniform air temperature of 15 C. It’s merely an average of an extreme range of temperatures, which is inflated to a higher number by the high, more uniform tropical ocean surface air temperature.

Or if we had a more uniform global surface air temperature, we would not be in an Ice Age. {The very cold ocean makes it an Ice Age}

Gordon mentions electrical conductivity because light is an electromagnetic radiation, and highly conductive materials strongly dampen the penetration of EM radiation.

In general, all dielectrics tend to be transparent (or translucent), while conductors tend to be opaque and have much higher reflectance.

— September 12, 2021 8:16 pm

Gordon mentions electrical conductivity because light is an electromagnetic radiation, and highly conductive materials strongly dampen the penetration of EM radiation.–

I don’t imagine steel as a highly electrically conductive material.

I don’t know enough to tell you what a highly electrically conductive material is. I tend to imagine a high-pressure state, or maybe something involving plasma, or other stuff I am not familiar with.

But I don’t need the joke explained.

I still don’t get it.

But I am ok with it.

In contrast to mica or quartz, which are good insulators, all metals are good conductors. One of the defining characteristics of metals is that they have metallic bonding (in contrast to covalent bonding of insulators) which means there are free electrons available to carry a current.

And in between are the semiconductors, which can be made to be anything from insulating to conducting.

gbaikie,

There is no “joke” regarding the inability of light to penetrate beyond a few microns into the surface of an electrically-conductive material. Such is established based on the scientific equations comprising Maxwell’s Laws of electromagnetism.

You could have recognized this if you had bothered to access the link I provided above in my post of September 12, 2021 4:01 pm.

I cannot guide you further. Good luck.

There is no way that light will pass through a 3/16″ thick piece of steel!

I wanted to use metric.

Are you certain no sunlight goes through 4 mm of steel?

Actually, since it is on topic, I was thinking of a spacecraft that would use 4 mm thick aluminum: what depth of aluminum, say 7068 aluminium alloy, would block 1,413 watts per square meter of sunlight?

And if it were instead at Venus orbit, would there be any difference in the required thickness if it got 2,647 watts per square meter of sunlight?
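A rough way to put numbers on that question is simple exponential (Beer-Lambert) attenuation. This is only a sketch under loud assumptions: it borrows the absorption coefficient quoted earlier in the thread for silver at 588 nm as a stand-in for an aluminum alloy, treats sunlight as a single wavelength, and ignores reflection at the surface. The function names are hypothetical helpers.

```python
import math

# ASSUMPTION: silver at 588 nm (value quoted earlier in the thread), used as a
# stand-in for an aluminum alloy; not a measured coefficient for 7068 alloy.
ALPHA_PER_CM = 8.35e5

WALL_THICKNESS_CM = 0.4   # the 4 mm wall from the comment
FLUX_NEAR_EARTH = 1413.0  # W/m^2, from the comment
FLUX_AT_VENUS = 2647.0    # W/m^2, from the comment


def transmitted_fraction(alpha_per_cm: float, thickness_cm: float) -> float:
    """Fraction of light remaining after `thickness_cm`, assuming exp(-alpha * d)."""
    return math.exp(-alpha_per_cm * thickness_cm)


def thickness_to_reduce(incident: float, target: float, alpha_per_cm: float) -> float:
    """Thickness in cm that attenuates `incident` flux down to `target` flux."""
    return math.log(incident / target) / alpha_per_cm


# Fraction of light that would survive the full 4 mm wall.
fraction_through_wall = transmitted_fraction(ALPHA_PER_CM, WALL_THICKNESS_CM)

# Extra metal needed at Venus to bring 2,647 W/m^2 down to the 1,413 W/m^2 level.
extra_thickness_cm = thickness_to_reduce(FLUX_AT_VENUS, FLUX_NEAR_EARTH, ALPHA_PER_CM)

print(f"Fraction transmitted through 4 mm: {fraction_through_wall}")
print(f"Extra thickness needed at Venus: {extra_thickness_cm:.2e} cm")
```

Under these assumptions the transmitted fraction through 4 mm underflows to zero in floating point, and the higher flux at Venus adds well under a millionth of a centimeter to the required thickness, because the attenuation is exponential while the flux does not even double.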